Abstract

Assembly optimisation activities that involve assembly sequence planning and assembly line balancing have been extensively studied because of the importance of optimal assembly efficiency to manufacturing competitiveness. Numerous research works in assembly sequence planning and assembly line balancing mainly focus on developing algorithms to solve problems and to optimise assembly sequence planning and assembly line balancing. However, there is a scarcity in works that focus on developing problems to test these algorithms. In optimisation algorithm development, testing algorithms by a broad range of test problems is crucial to identify their strengths and weaknesses. This article proposes a generator of assembly sequence planning and assembly line balancing test problems with tuneable complexity levels. Experiments confirm that the selected combination of input attributes does control the generated assembly sequence planning and assembly line balancing problem complexity, and also that the generated problems can be used to identify the suitability of a given algorithm to problem types.

Introduction

In manufacturing, assembly optimisation involves bringing and joining parts and/or subassemblies together to make the process as efficient as possible. Assembly sequence planning (ASP) and assembly line balancing (ALB) are classified among major topics in assembly optimisation because both are directly related to assembly efficiency.1,2 Recently, researchers have discovered benefits of solving and optimising ASP and ALB problems together,3,4 leading to increased research focus on testing new or improved algorithms that operate on these combined problems. In order to assess the performance of new or improved algorithms and to compare them with existing algorithms, a wide range of test problems are required. In ASP and ALB optimisation works that focus on algorithm development or improvement, researchers have used two approaches to test algorithm performance. One approach is to test the algorithms using specific case studies.5,6 Another acknowledged approach is to adopt the test problems that are frequently used in literature.7,8 These approaches lack generality because there has been no investigation into the fit of algorithms to problem types. Algorithms have not been tested with a wide range of problem types.

The most frequently used test problem in ASP is an assembly of a transmission-type part with eleven components, presented by De Fazio and Whitney. 9 This problem has been presented in many articles, such as Chen and Liu, 10 Smith and Liu 11 and Wang et al., 12 to evaluate algorithm performance. Other than this widely used problem example, most ASP test problems found in literature have only been used within the same research group. There is thus no accepted standard ASP test problem for evaluating algorithm performance. On the other hand, in ALB optimisation, development of test problems was started in 1960s, resulting in many that have been developed and collected by different researchers. These problems vary in task size from eight to 297 tasks. The famous ALB problems, such as the eight-tasks by Bowman, 45 tasks by Kilbridge and Wester, 70 tasks by Tonge, 111 tasks by Arcus and 297 tasks by Scholl, are still being used until today to evaluate algorithm performance for line balancing problems. 13

Although these few benchmark ASP and ALB problems are available for comparing algorithm performance, there is no standard test problem set that covers a wide variety of problem difficulties, especially to test the combined ASP and ALB optimisation. Not only is this important for enhancing the researchers’ understanding of their algorithm, it will also help users in selecting which algorithm is more appropriate to their requirements. In order to facilitate such experimentation, a set of problems with a controllable complexity level is needed. One way to address this is to devise a test problem generator (TPG) with tuneable difficulty level that can systematically generate a set of test problems with a desired mix of complexity levels.

This article proposes a TPG with a tuneable complexity level for combined ASP and ALB problems. ‘TPG for ASP and ALB’ explains the requirements and specifications for the proposed TPG. ‘TPG development’ will explain the methodology of the TPG development, which is divided into graph and data generation methodology. Then, ‘Experimental design’ describes the experimental design to test the proposed TPG for ASP and ALB. ‘Results and discussion’ presents and discusses the experimental results in this work. Finally, we present the authors’ conclusions on the proposed TPG according to experimental results.

TPG for ASP and ALB

In the mathematical optimisation community, the importance of TPGs is widely appreciated. Although algorithm development is important, any new algorithm should ideally be tested with a wide range of problem types before making any conclusion on their usefulness. 14 Most of ASP and ALB works focus on proposing and demonstrating algorithm performance on specific ASP and/or ALB problems. There is a lack of investigation into testing and validating the performance of algorithms on wider classes of problems.

A TPG will be useful to provide a wide range of ASP and ALB problems with differing characteristics and difficulties. In many cases, the problem difficulty is only determined by the size of the problem. While this is correct in certain cases, this overlooks the influence of many other attributes on problem difficulty. Additionally, TPGs will also be useful to identify which algorithm may be more suitable for a given type of problem. This knowledge is very important to help users to choose the right algorithm, and also for researchers to identify opportunities for further improvement in a particular algorithm.

To provide the mentioned benefits, the TPG must satisfy the following requirements.

Representation. The problems are generated on the basis of assembly task and represented using a precedence graph. This was the common way to represent task-based assembly problems in earlier works. 2

Output. The TPG is expected to produce precedence graphs that represent task-based assembly problems. Besides that, the TPG must also be able to generate assembly data, which consists of assembly direction and tool for ASP and assembly time for ALB. These types of data are selected based on popularity from a literature survey. 2

Tuneable difficulty level. One of the important features expected in a TPG is a tuneable difficulty level. This feature will ensure that test problems are generated within known difficulty ranges as required.

There are not many proposals in literature on methods for generating test problems in this domain. Furthermore, existing proposals are limited to generating test problems for ALB. Bhattacharjee and Sahu’s proposal is to generate a random precedence graph to represent an ALB problem. 15 In this approach, the assembly problem is generated randomly, and then the problem difficulty is measured to determine its complexity level. Later, a systematic data generator for assembly line balancing was proposed by Otto et al. 16 Besides presenting a systematic method for generating precedence graphs, this work also demonstrates that common graph structures in real-world assembly problems, i.e. chains, bottlenecks and modules, can be generated on a precedence graph. This approach is also able to generate problems at the desired difficulty levels. 16

Otto’s work is the one closest to our stated requirements because this work fulfils requirements (1) and (3). In Otto’s work, ALB problems are generated based on assembly tasks and represented using precedence graphs. It also gives users the ability to create test problem difficulty at the desired level of difficulty. However, since this work was specifically developed for ALB problems, it fails requirement (2). Therefore, in this article, the ALB-only systematic data generator proposed by Otto in 2011 will be expanded to incorporate both ASP and ALB test problems.

TPG development

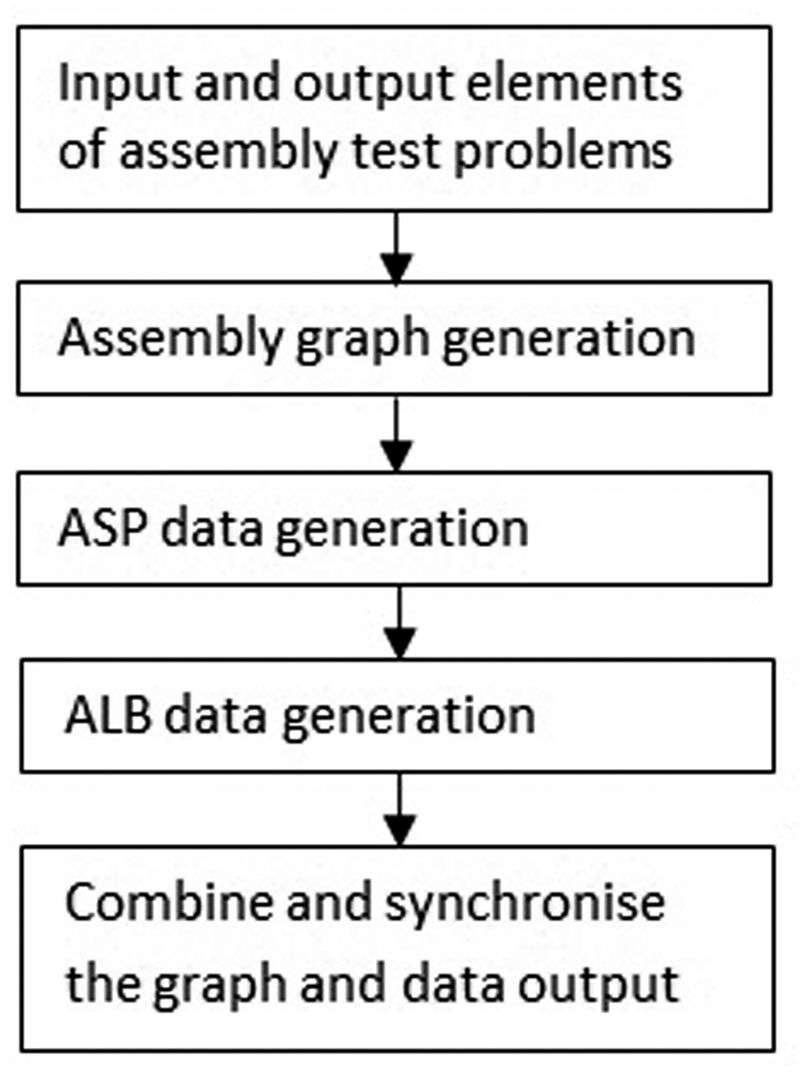

The TPG was developed using the methodology presented in Figure 1. The details of each stage are explained in this section.

Test problem generator development flow.

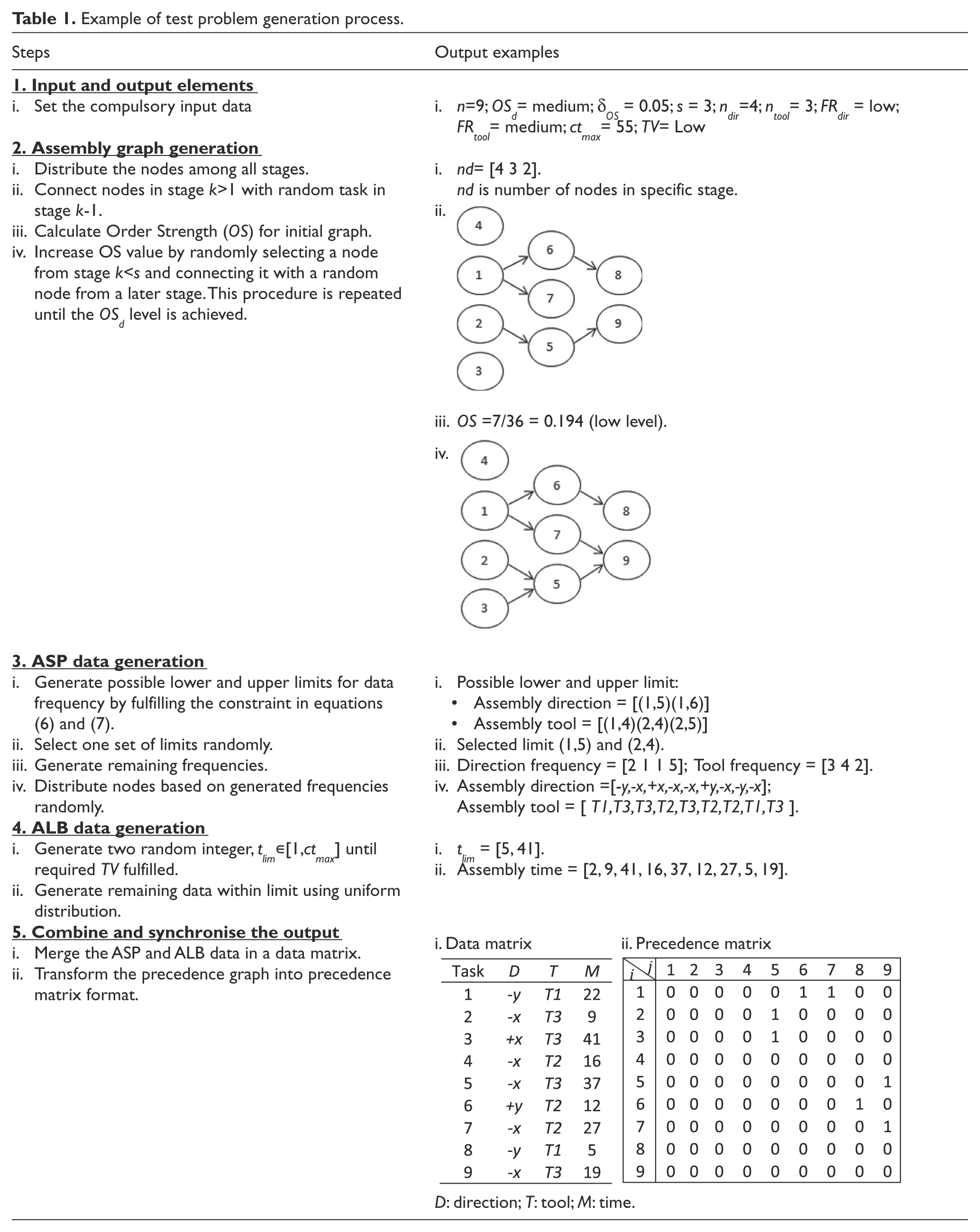

The first stage in developing the TPG is to identify the input and output elements. Next is the independent development of automated generators for assembly graph, ASP and ALB data. Finally, the outputs from graph and data generators are synchronised and combined to produce a complete test problem set. A worked example of the proposed TPG, with outputs for each step, is presented in Table 1.

Example of test problem generation process.

Input and output elements of assembly test problems

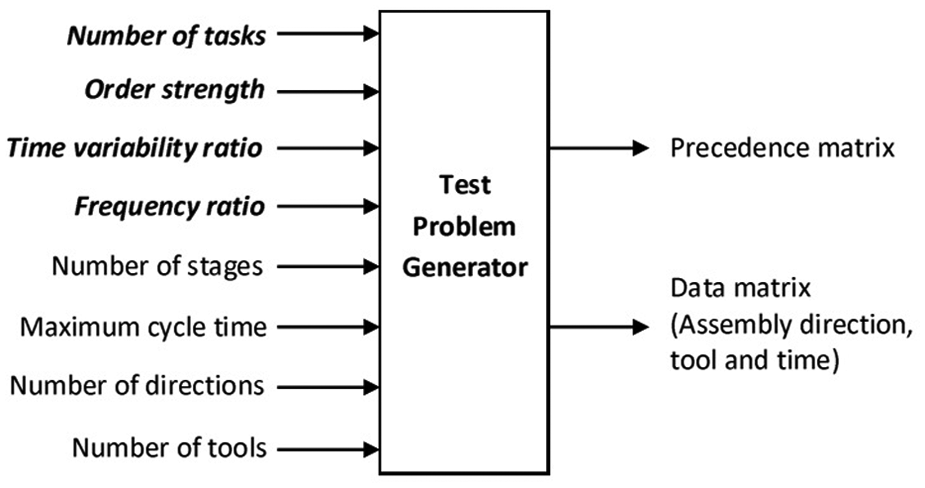

The mapping of input and output variables is shown in Figure 2. The tuneable inputs are presented in bold and italic font.

Problem generator input and output map.

Tuneable input elements

The tuneable input elements are variables that are used to control the problem difficulty generated by the TPG. In this work, one new tuning variable is proposed and the rest are adopted from previous works. The TPG is conceptually divided into two parts: the generation of assembly graphs and the generation of assembly data. The next section will discuss the tuneable input variables for each part. Although the tuneable input variables for ALB has been discussed in earlier works, no clear link has been suggested in literature between input and specific difficulty levels for ASP. 13

Tuneable input for assembly graph

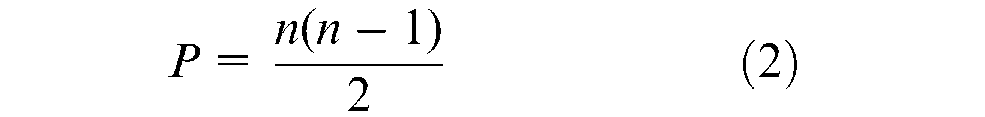

Two tuneable inputs will be used to generate precedence at a specific complexity level. The first input variable to measure graph complexity is

Another graph input variable conceptually linked to graph difficulty is order strength. Order strength (

where

where

The order strength value varies between [0, 1].

Tuneable input for assembly data

Previously, a number of time-related measures for ALB data have been proposed, such as ratio between maximum and minimum completion time between assembly lines, 17 standard deviation, 15 and time variability ratio. A time variability ratio (TV) has consistently been used in previous works and is selected for use in this work. A time variability ratio indicates the range of task time of all tasks dispersed between the assembly lines. A time variability ratio is calculated as

where

A smaller time variability ratio value indicates that existing task times are distributed in a smaller range, which leads to an increased level of problem complexity. The

Meanwhile, in the ASP problem domain, no variable for measuring data complexity has been established. In this work, the ASP data considered are assembly directions and assembly tools. This type of data can be measured by considering how many times (i.e. frequency) a similar direction or tool appears in the problem. A common optimisation objective is to minimise direction or tool changes in a sequence of tasks. Thus, the frequency ratio (

where

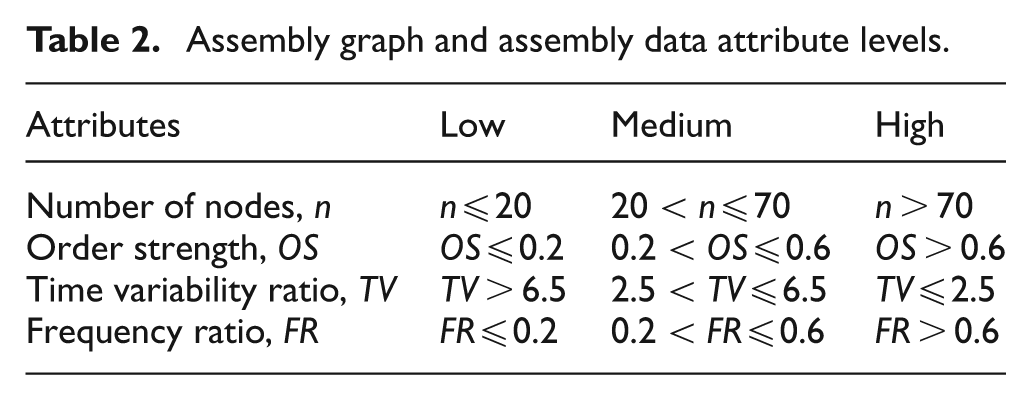

Data with a higher frequency ratio is harder to arrange to achieve the minimum number of changes because the choice and variability of data are high. This type of data will usually produce a higher number of changes compared with smaller frequency ratio data. The details of graph and assembly data attributes level are shown in Table 2. In this table, the attribute level for the ‘number of nodes’ is proposed based on a survey on problem sizes, as mentioned in ‘Tuneable input elements’, while the proposed classification of frequency ratio and time variability levels are based on a few initial tests. The proposed classification of order strength levels is adopted from literature review. 16

Assembly graph and assembly data attribute levels.

The tuneable input variables are classified into low, medium and high levels because of nonlinearity of the problem. Although the general trend of problem difficulty over tuneable variables can be predicted, when tuning for a targeted difficulty level, too small variable changes may lead to inconsistent difficulty levels. The classification of level difficulties, as in Table 2, can be used as a guideline for users in selecting appropriate difficulty levels for their use. To reduce the possibility of inconsistent difficulty levels, it is suggested to use the midpoint of the medium level to generate a medium difficulty problem.

Other input elements

Apart from the tuneable elements, there are other ‘compulsory’ inputs that are required for generating a complete problem. Although some of these variables have implications to the problem difficulty level, they are not used here as a means to control the problem difficulty because of a lack of agreement in literature. These inputs are: number of stages (

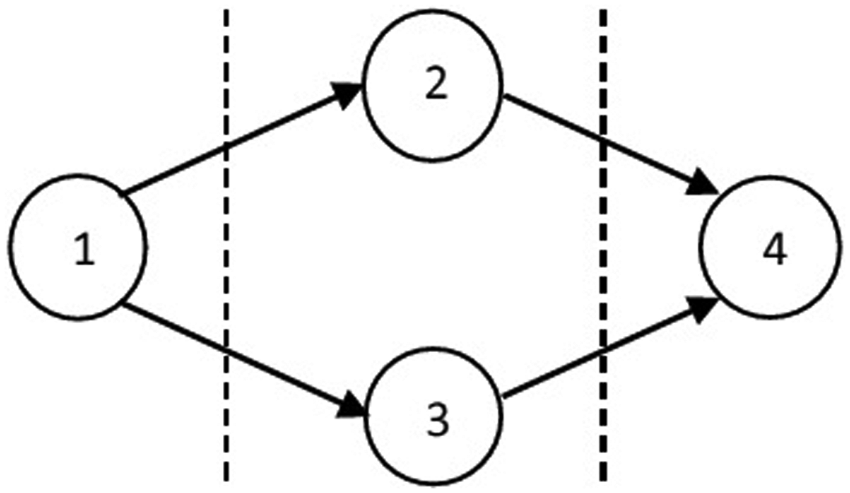

Example of precedence graph.

Another important element of the TPG is the pseudo-random number generator that underlies most of the data generation algorithm. In this work, the pseudo-random generator used is Mersenne Twister with the range between [0, 232−1] for a 32-bit integer. 18 Appropriate use of seed values ensures that all results are reproducible.

Output elements

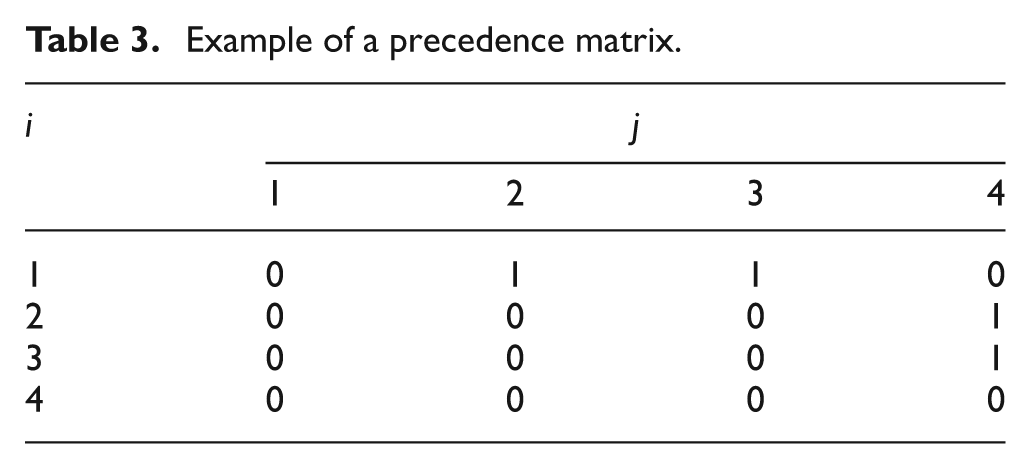

There are two sets of outputs generated by the proposed TPG. The first output is the assembly precedence graph (e.g. Figure 3), represented by a precedence matrix, which is an

Example of a precedence matrix.

The second output is a data matrix that consists of assembly directions, assembly tools and assembly time associated with every task. This data is generated according to the required difficulty level as determined in tuneable input variables.

Assembly graph generation

In this work, the systematic graph generation method is adopted from Otto’s work. 16 The five steps below are as proposed in that work.

Step 1. Provide all the compulsory inputs. The compulsory inputs are number of nodes (

Step 2. Generate and distribute the nodes in all stages using uniform distribution.

Step 3. Connect every node in stage

Step 4. Calculate the order strength using equation (1). If the order strength is within

Step 5. Select a node

Task

The order strength values have not exceeded the desired upper limit.

ASP data generation

In this work, the ASP data that are considered are ‘assembly direction’ and ‘assembly tool change’. The following steps are applied to generate these data. Besides number of tasks,

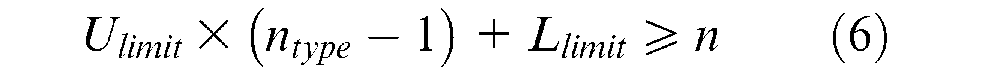

Step 1. Calculate all possible lower (

Equations (6) and (7) ensure that the summation of generated data within upper and lower limits matches the number of tasks,

Step 2. Randomly select a pair of lower and upper limits from the set of possible limits determined in Step 1. Generate remaining data frequencies using uniform distribution. The summation of data frequencies must be equal to

Step 3. Generate the ASP data based on frequencies (Step 2) in random order.

ALB data generation

The ALB data to be generated is the ‘task time’ for all nodes. The required inputs are ‘maximum cycle time’ (

Step 1. Calculate all possible limit of task time based on

Step 2. Generate the remaining task times between upper and lower limits using uniform distribution.

Combine and synchronise the graph and data output

Synchronisation of ASP-specific and ALB-specific outputs is straightforward because both ASP and ALB representations are both developed using the same assembly task basis. 19 Data generated in ‘ASP data generation’ and ‘ALB data generation’ are directly linked with assembly tasks and no further adjustment is needed. In this synchronisation step, the output data consisting of ASP data from ‘ASP data generation’ and ALB data from ‘ALB data generation’ are combined to establish a data matrix. In the data matrix, the assembly direction data is located on the first column, assembly tool data in the second column and assembly data for ALB in the third column.

The final process in this step is to transform the precedence graph into a precedence matrix as explained in ‘Output elements’. This is an important process to synchronise the format of the assembly graph into readable computer language.

Experimental design

This section describes the set-up of the experimental design to assess problems generated using the proposed TPG. The experiment is divided into two phases. In Phase 1, the experiment will focus on the ability of the TPG to generate problems at a desired complexity level by manipulating the tuneable input attributes. Then, in Phase 2, the generated problems from the TPG will be used to evaluate the performance of a set of selected algorithms. The purpose of the second phase experiment is to identify if the generated problems from the TPG can be used to characterise the best and worst performance of each algorithm.

Phase 1. Testing of tuneable input

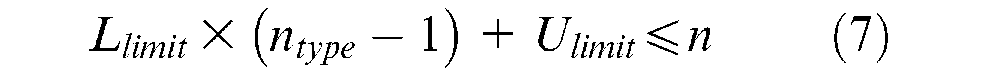

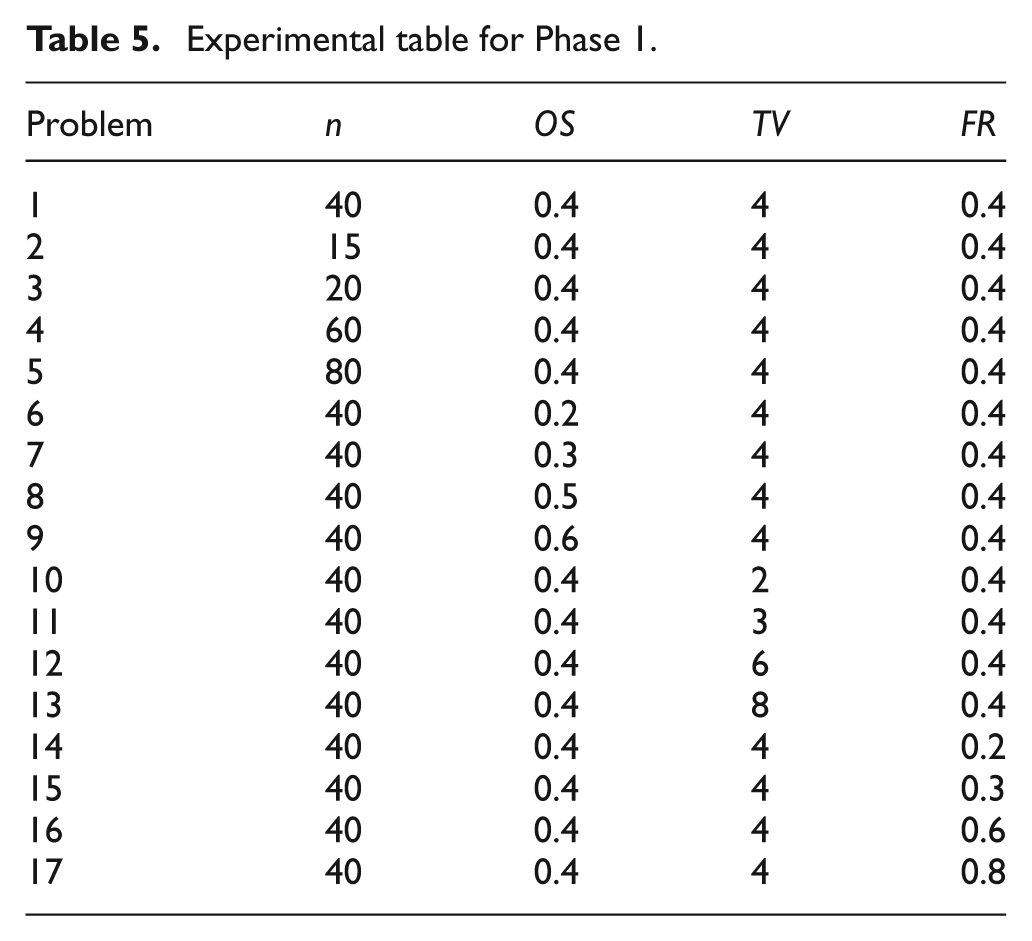

The experiment in this phase is conducted by dividing all the tuneable input variables into five levels, as presented in Table 4.

Tuneable input level setting.

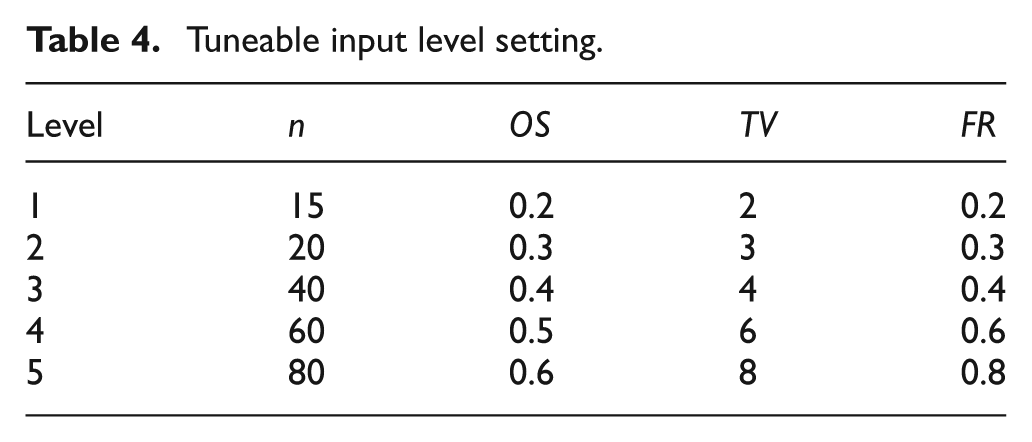

A reference variable setting (datum) is selected as a baseline, while the rest of the problem variable settings are generated by changing only one variable value at a time. In this case, level 3 is selected as the reference variable setting because it is in the middle between the minimum and maximum value. The complete experimental table for Phase 1 is shown in Table 5.

Experimental table for Phase 1.

From Table 5, 17 test problems are generated by changing one variable at a time. Problem 1 represents the reference variable setting, problems 2–5 examine the effect of

In order to solve precedence graphs, the topological sort algorithm is used to generate feasible assembly sequences. This approach will ensure that the generated sequences are always feasible by sorting the nodes into ‘available’ and ‘unavailable’ tasks, during the sequence generation process. 20

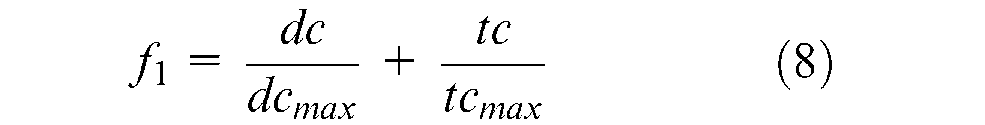

To test the generated problems, three different algorithms were selected for each problem type. For an ASP problem, a multi-objective genetic algorithm (MOGA) that was used in Choi et al. 21 is chosen. This algorithm is selected because, in common with this work, it used task-based representation in representing ASP problems. Additionally, a genetic algorithm is one of the most frequently used algorithms for solving and optimising ASP problems. 2 In this algorithm, the fitness function for ASP is

where

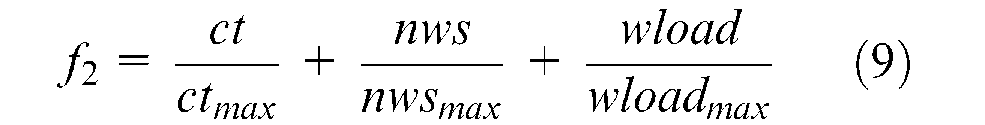

To test the ALB problem, an ant colony optimisation (ACO) algorithm that has been used for a simple assembly line balancing problem (SALBP) in Bautista and Pereira 22 is used. This algorithm is selected based on citation popularity. In addition, an ant colony algorithm is also one of the frequently used algorithms to solve and optimise the ALB problem. 2 In this algorithm, the fitness function is designed as

where

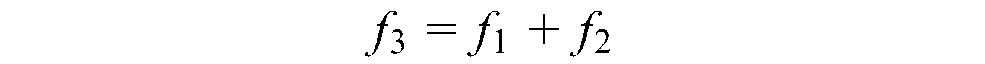

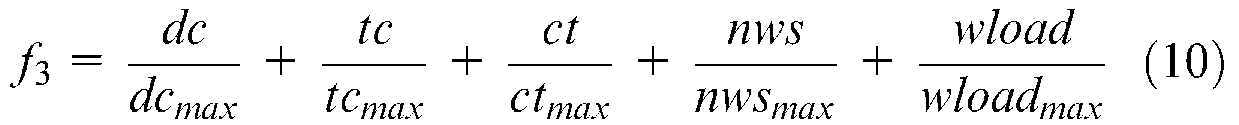

Finally, for an integrated ASP and ALB problem, a hybrid genetic algorithm (HGA) that used in Chen et al. 3 is selected. This algorithm is also selected based on the popularity of this work for integrated ASP and ALB. The fitness function for this problem is designed as

Phase 2. Algorithm testing using generated problems

In Phase 2, the algorithms’ performance to generate a Pareto optimal solution for a combined ASP and ALB problem are tested. The purpose of this test is to determine whether the problems generated by the TPG have sufficient variety that enables users to perceive differences in algorithm performance. To perform this test, the MOGA and ACO algorithm, previously used to optimise ASP and ALB independently, will be used to optimise a combined ASP and ALB problem alongside a HGA. The objective function set for this experiment is as follows.

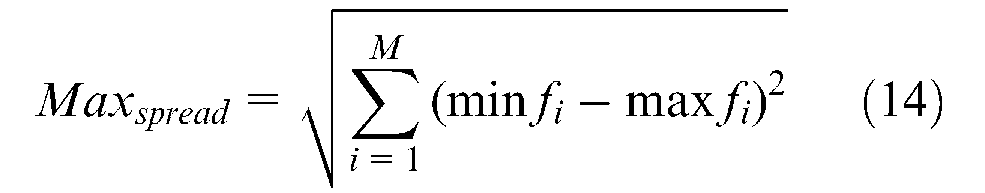

In order to evaluate the performance of each algorithm when dealing with different complexity problems, the following performance indicators are adopted from Deb 23 and Yoosefelahi et al. 24 are used.

Number of non-dominated solutions in Pareto optimal, : Show the number of non-dominated solutions generated by each algorithm in Pareto solution. Higher indicates better algorithm performance.

Error ratio,

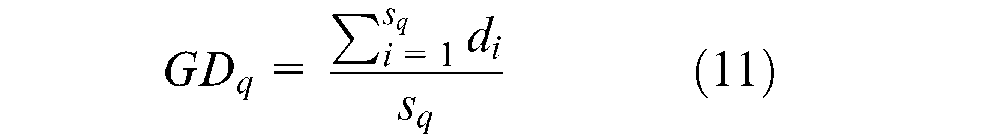

Generational distance,

where

where

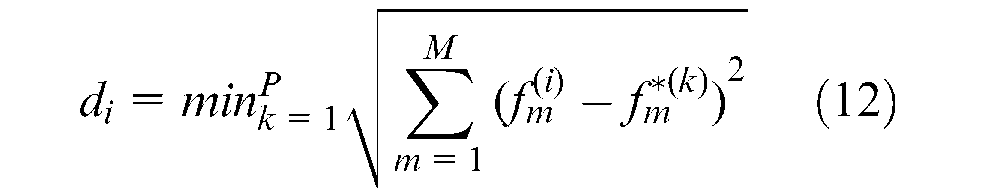

iv. Spacing: This indicator measures the relative distance between each solution.

where

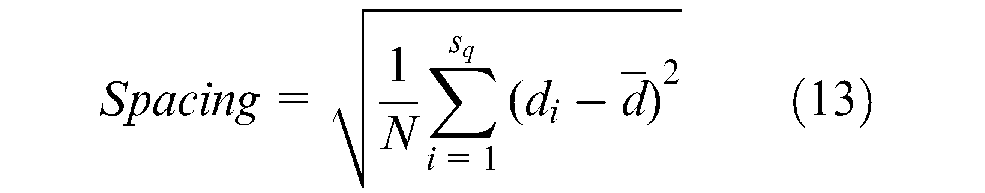

v. Maximum Spread,

Results and discussion

Phase 1: Results

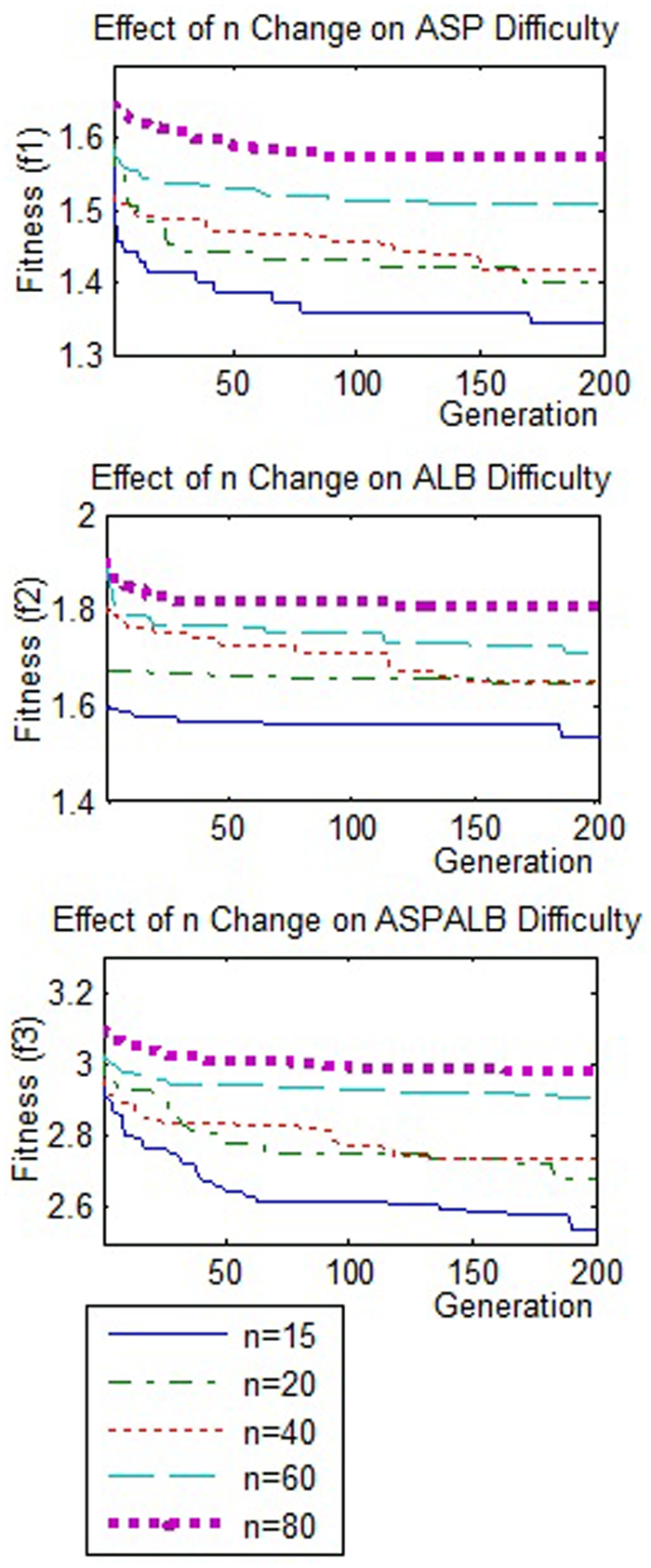

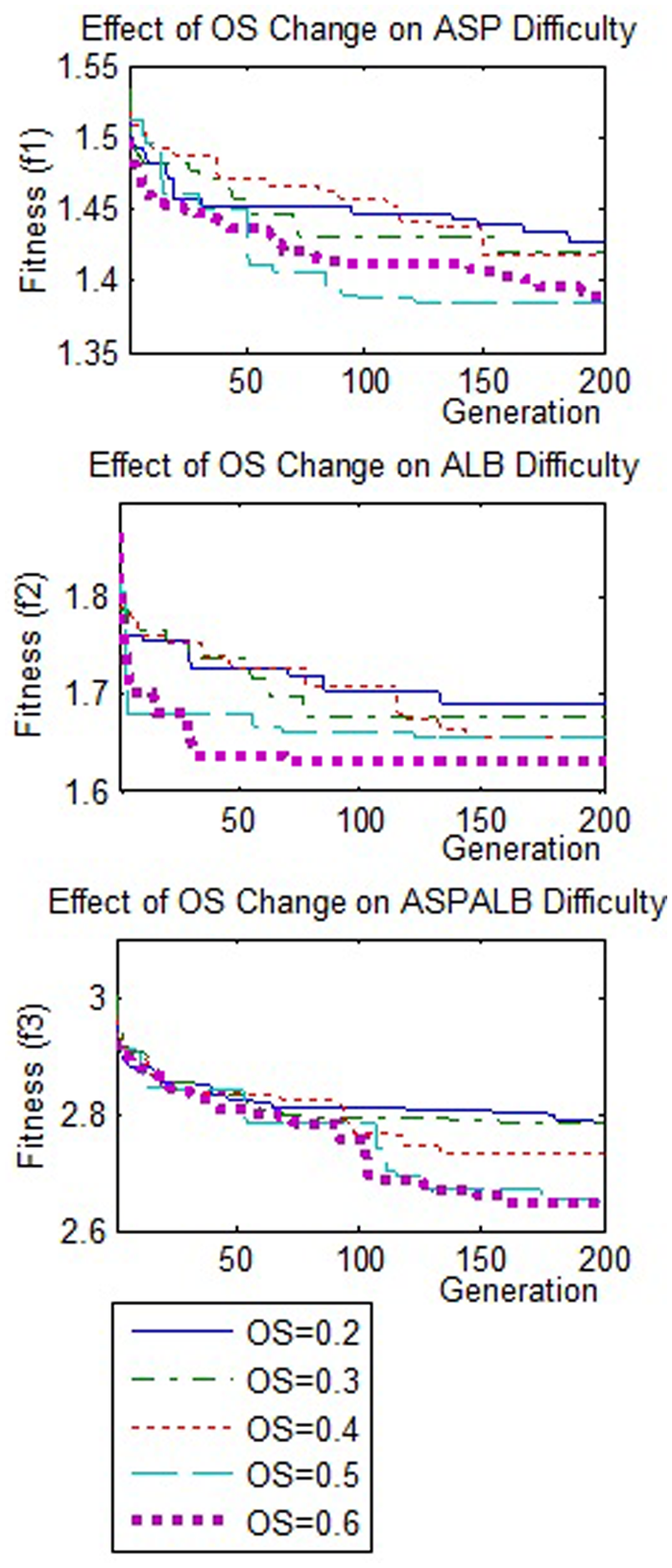

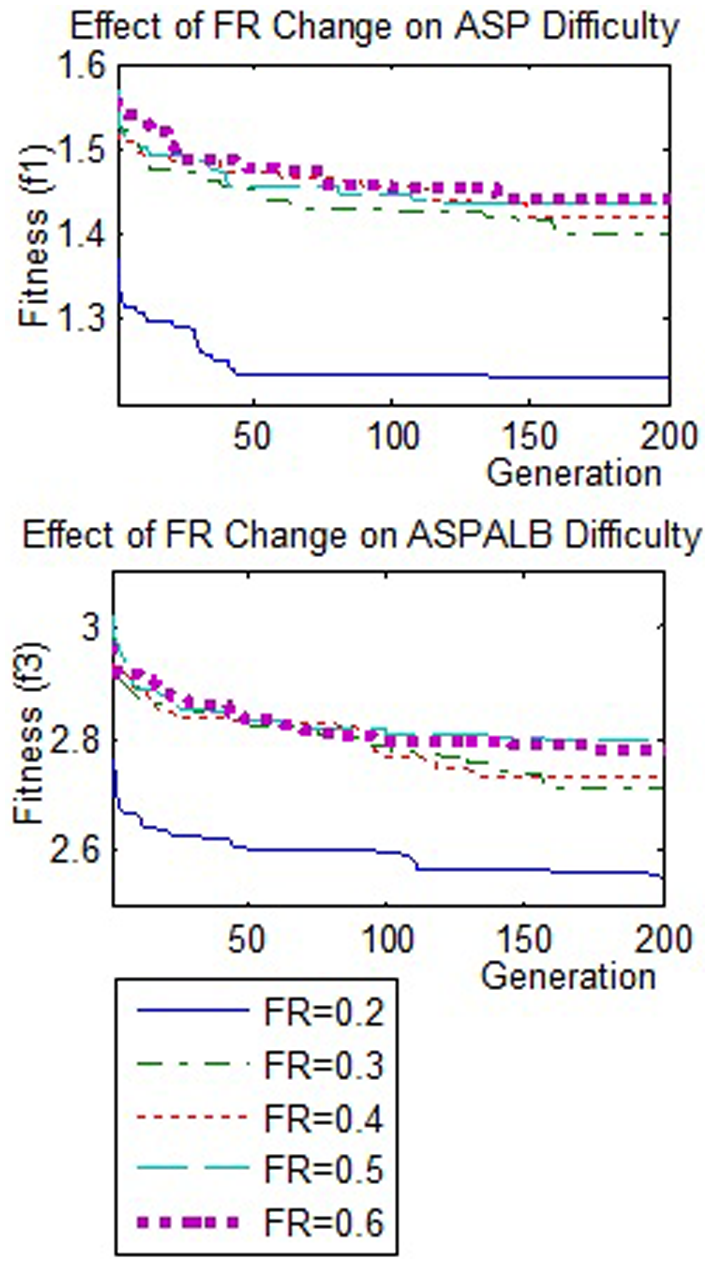

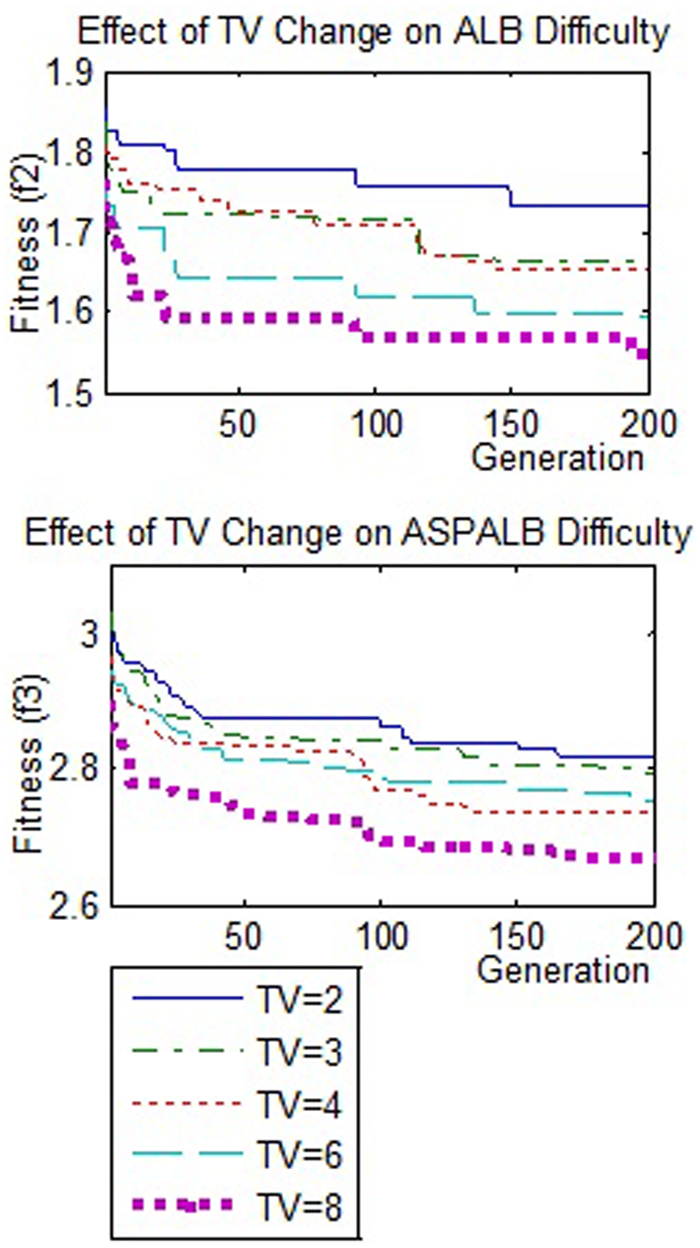

The output from Phase 1 experiments are presented in Figures 4 to 7, showing the average of best fitness value from ten runs.

Average of best fitness for a range of

Average of best fitness for different

Average of best fitness for different frequency ratio.

Average of best fitness for different time variability ratio.

Number of tasks (n)

Figure 4 shows the effect of

Order strength (OS)

Figure 5 shows the effect of

This mismatch is due to the dissimilar approaches used in solving the precedence graph. In the works that directly used generated permutation as an assembly sequence, precedence graphs with higher

On the other hand, in the works that ensures the feasibility of sequence, such as using topological sort, the precedence graph with higher

Nevertheless, there is inconsistency in outputs for ASP with

Frequency ratio (FR)

The output from the ASP problem in Figure 6 shows that the proposed complexity attributes of

ASP data with a high

Time variability ratio (TV)

ALB results in Figure 7 confirm that the time variability ratio adopted from previous works is effective to control the assembly time data complexity.13,16

The assembly times with higher

The results of the tuneable input test show that the ASP and ALB problem complexity can be controlled via the input attributes of the TPG. Although the early assumption that the precedence graph with higher

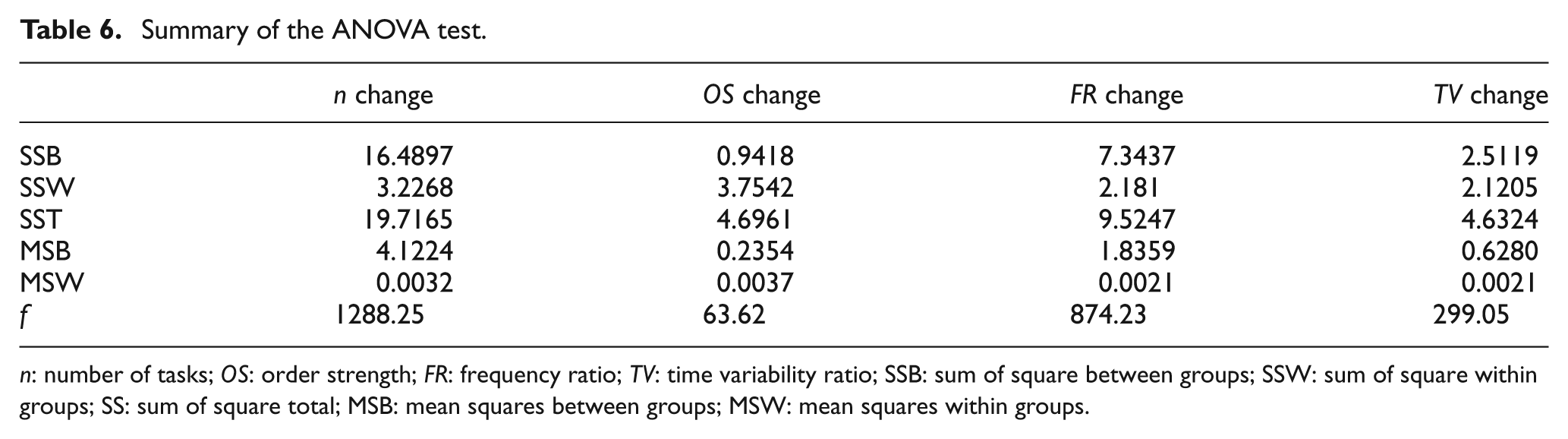

In order to test the significance of the results, statistical tests are performed. In this case, an ANOVA test is carried out to test if there are any significant differences between the results of one level with results from another level. The null hypothesis stated that there would be no difference among five tuneable input levels means. The summary of the ANOVA test is presented in Table 6.

Summary of the ANOVA test.

In this case, the critical

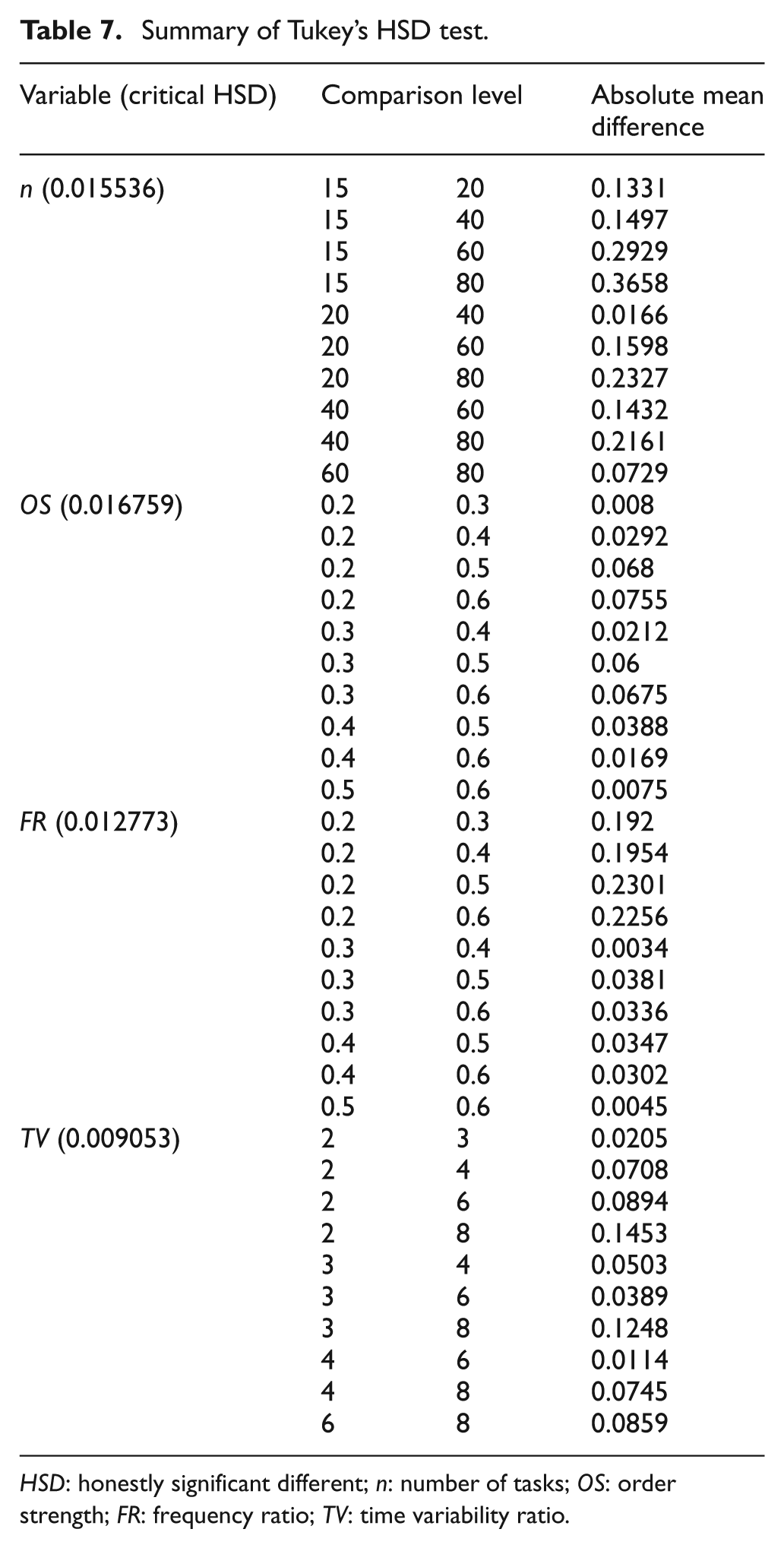

Tukey’s HSD test compares the mean of the rejected null hypothesis with the means of other groups to identify if there is any significant difference between the mean of one level with another. The value of the absolute difference between two means will be compared with a critical HSD as proposed in the Tukey’s table.

28

The summary of the Tukey’s HSD test at 0.05 confidence interval is presented in Table 7. From Table 7, the absolute mean difference between two levels for

Summary of Tukey’s HSD test.

Meanwhile, in the

Phase 2: Results

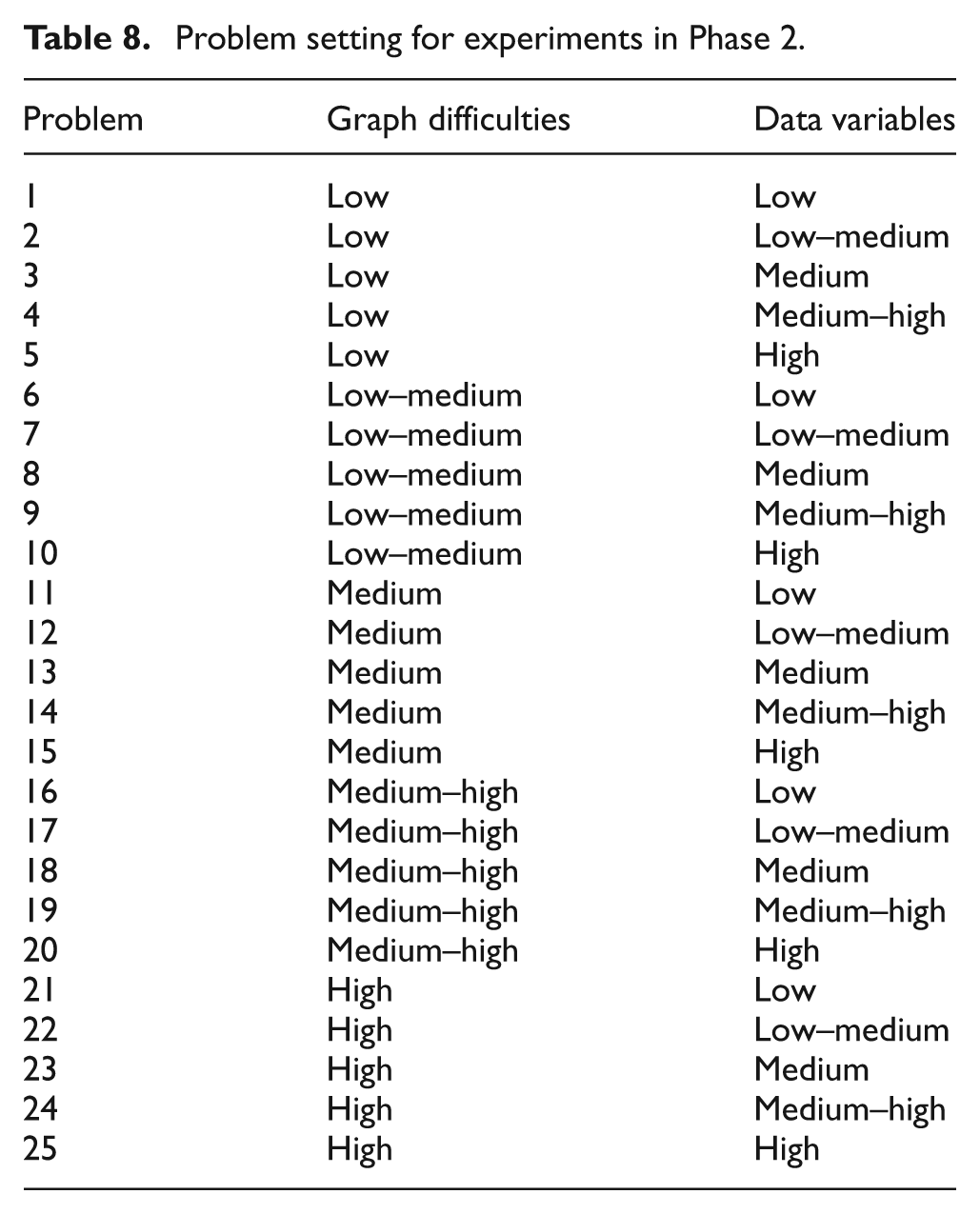

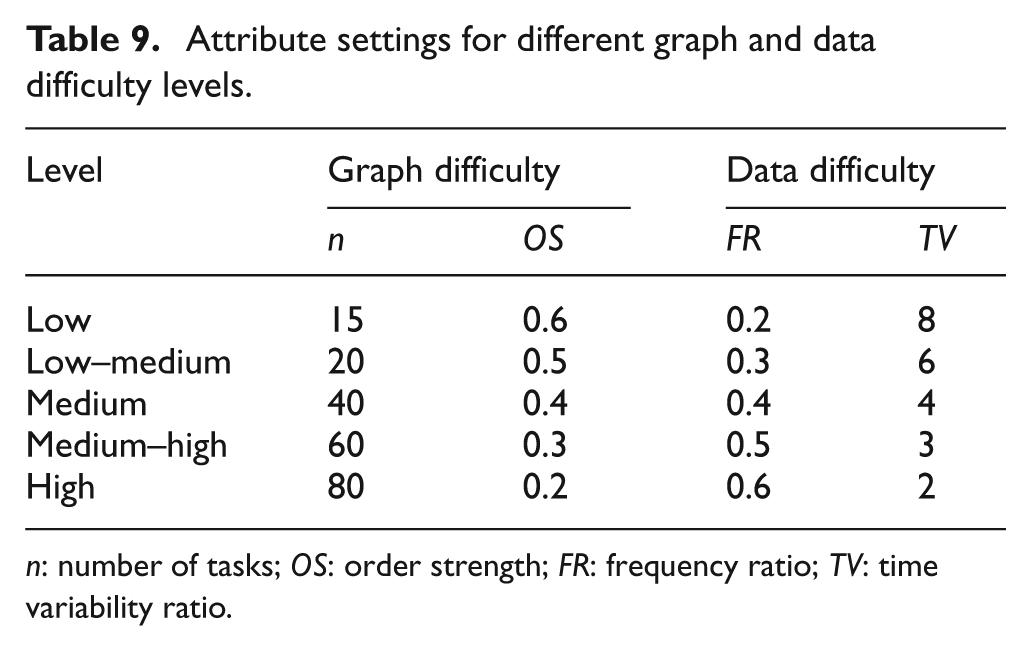

In this phase, 25 problems with different difficulty settings are used to demonstrate the usefulness of the TPG for testing the performance of algorithms. The set-up is for multi-objective optimisation of ASP, ALB, and ASPALB problems. The assembly problem for this experiment is set up as in Tables 8 and 9.

Problem setting for experiments in Phase 2.

Attribute settings for different graph and data difficulty levels.

The tuneable input for these test problems are grouped into graph difficulty (

The results from Phase 2 experiments are summarised in Table 10. Numbers in brackets are weighting values that are assigned to each algorithm based on its performance for the respective indicator. For every indicator in a given problem, the best result is assigned a weight value of 3, while the second and third positions are assigned weight values of 2 and 1, respectively. Then, the algorithm ranking is made through comparison of the weighted sums.

Summary of the result of experiments on selected multi-objective algorithms.

Numbers in brackets are weighting values from the best (weight = 3) to worst (weight = 1) performance.

Based on the results in Table 10, the HGA consistently shows the best performance in all problems having low and low–medium graph difficulties (problems 1–10). Meanwhile, the MOGA shows better performance compare with the ACO algorithm for problems 1–3, but then showed inconsistent performance for problems 4–10. In problems 4–10, the ACO algorithm starts to overcome the MOGA performance in some cases.

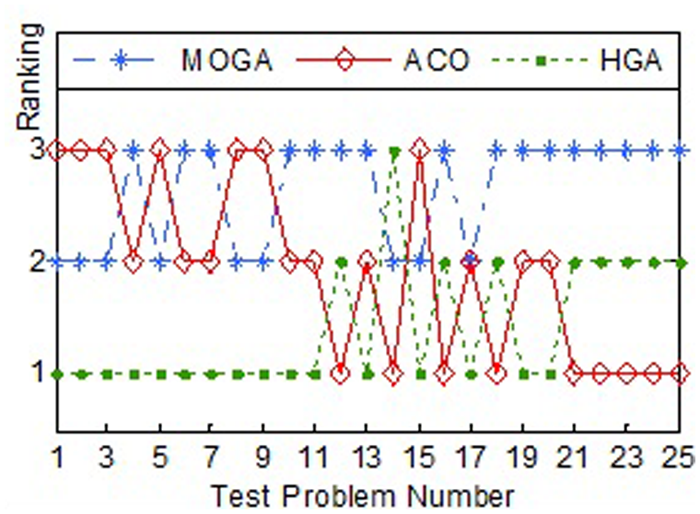

Meanwhile, for the problems with medium and medium–high graph difficulties (problems 11–20), the HGA and ACO algorithms alternately lead the algorithms in the first rank. However, when the graph difficulty is increased to a high difficulty (problems 21–25), ACO has consistently shown a better performance and followed by HGA and MOGA. The relative performance of each algorithm is presented graphically in Figure 8.

Algorithm’s ranking for the range of test problems.

Conclusions

In this work, a TPG with tuneable complexity for ASP and ALB problems has been proposed. A set of experiments has been conducted to assess the TPG. Experimental results confirm that problem complexities can be controlled by tuneable input variables.

The results from the Phase 1 experiments that test the effects of tuneable inputs confirm the ability of the TPG to generate problems with varying complexity levels. The problem difficulties will increase when using a larger number of tasks (

The results of the algorithm performance experiment in Phase 2 show that the HGA consistently performed well in optimising problems with low and medium difficulties. Meanwhile, the ACO showed good performance in problems with a high level of difficulty. Based on the performance of both algorithms, the HGA is recommended for integrated ASP and ALB problems with low and medium difficulties, while the ACO is for ASP and ALB problems at high difficulty. These findings confirm that the problems generated by the TPG offer a sufficient range of problem varieties to be used in algorithm testing. The generated problems were found to be useful to identify the strengths and weaknesses of the tested algorithms.

Although further experiments are needed to confirm these strengths and weaknesses, the TPG has provided an important path by supplying a variety of ASP and ALB problems for systematic testing. Therefore, it can be concluded that the proposed TPG is able to generate combined ASP and ALB problems in a wide range of difficulties.

Footnotes

Funding

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.