Abstract

Governments are increasingly using chatbots to facilitate public encounters. Using survey vignette experiments conducted in Estonia, we conclude that citizens’ perceived usefulness and trust in technology significantly correlate with the intended use of chatbots in public encounters. Privacy concerns are related to the intended uses of chatbots for service provision but not for information provision, while trust in government, explainability, and the amount of information are not related to the intended uses of chatbots. These findings contribute to a better understanding of how citizens’ attitudes and perceived risks are relevant to citizens’ intended use of artificial intelligence applications in citizen-state relations.

Keywords

Introduction

Estonian folklore offers an intriguing tale about Kratt, mystical rural creatures that, through their master’s pact with the devil, perform menial tasks tirelessly and obediently. In so doing, Kratt are instrumental in accumulating wealth effortlessly. When left idle, however, Kratt quickly turn against their masters, damage property, and engage in self-destructive behaviours (Valk, 2008). Originally a warning against moral compromises in the pursuit of effortless wealth, the tale of Kratt offers an imaginative way to reflect on the risks of using technological artefacts, including contemporary uses of artificial intelligence in our daily lives. One application of artificial intelligence that is now at the centre of discussions is the use of chatbots in interactions between governments and citizens (Alishani et al., 2025). Academic literature has described over a hundred instances of chatbots in the governments of more than 50 countries (Makasi et al., 2022; Senadheera et al., 2024), with specific applications assisting citizens looking for information on websites, engaging in rich conversations with citizens to clarify needs and explain services (Goodsell, 1981; Willems et al., 2022), or executing entire service processes.

While the disciplines of artificial intelligence and computer science focus on the design and development of chatbots, public administration scholarship has concentrated on chatbots’ impact on internal government processes (Chen and Gasco-Hernandez, 2024; Cortés-Cediel et al., 2023; Larsen and Følstad, 2024; Wang et al., 2024), paying limited attention thus far to why, or under what conditions, citizens would be willing to use chatbots in realistic public encounters and thus be served by technologies rather than by human public officials (Alishani et al., 2025; Criado et al., 2024; Miller et al., 2022; Roehl and Crompvoets, 2025). What makes chatbots in public encounters stand out as an adoption and diffusion topic is that, like Kratt, AI-propelled chatbots in realistic public encounters bring about specific privacy risks that, in citizens’ privacy calculus, may or may not be mitigated. It has been argued that explainability and transparency are adequate recipes for boosting trust in digital service delivery by chatbots, but, to date, the role of risk, risk calculus, and measures to mitigate risks has not been theorised or empirically studied (Criado et al., 2024; Kleizen et al., 2023; Willems et al., 2022). This paper addresses this gap by testing which factors relate to citizens’ intentions to use chatbots in public encounters, with a special focus on perceived risks and citizens’ risk calculus. A known criticism of chatbot research is that studies tend to focus on cases in which users are not yet very familiar with government chatbots (Miller et al., 2022; Wang et al., 2023; Willems et al., 2022). We address this criticism by using original survey data gathered in Estonia, a country in which citizens are familiar with digital transactions (Solvak et al., 2019) and government technologies have become part of the nation’s international branding and national identity (Kaun et al., 2024; Männiste and Masso, 2018). In this context, we assume that engaging with chatbots represents a far less revolutionary change in dealing with government than in many other countries.

Therefore, this study’s research question is formulated as follows: What factors are associated with citizens’ intended use of chatbots in public encounters in Estonia?

The research question is addressed through two vignette survey experiments (n = 1058), each with a 2 × 2 factorial design, in which we recorded and analysed respondents’ reactions to encounters with chatbots that have lower and higher information exchange requirements and lower and higher levels of explainability. This paper contributes to the public administration literature in at least three ways. First, we reconceptualise the classic theme of public encounters by empirically analysing why, and under what conditions, citizens consider chatbots accepted or acceptable participants in public encounters (Alishani et al., 2025; Wirtz et al., 2021) and how possible risks affect citizens’ use of chatbots, using original data gathered in Estonia. Second, we extend adoption and diffusion theories by considering variables drawn from privacy calculus theory, the public encounter literature, and trust theory (Grimmelikhuijsen, 2023; Kleizen et al., 2023; Wang et al., 2023; Willems et al., 2022). Third, we provide a better understanding of advanced digital public service delivery in Estonia, arguably one of the most digitally advanced societies in the world.

Conceptual foundation and theoretical framework

Definition and uses of chatbots

Chatbots can be defined as software applications that use machine learning and natural language processing to engage in personalised and responsive user interactions (Androutsopoulou et al., 2019; Cortés-Cediel et al., 2023; Makasi et al., 2022; Van Noordt and Misuraca, 2019). Users typically initiate interactions by asking questions or requesting services from chatbots and, in doing so, share personal information (Schomakers et al., 2022). Chatbots typically use algorithms that predict the next words in a conversation. When trained on large corpora of text, as large language models are, chatbots can generate reasonable answers to various prompts, to the point where their conversational behaviour can be indistinguishable from that of humans (Biever, 2023). In about a century, artefacts with human-like appearances and behaviours have moved from visualisations in science fiction movies (Fritz Lang’s depiction of the robot Maria in the 1927 movie Metropolis being a case in point; see Nardi and O’Day, 1999) to tangible realities (Androutsopoulou et al., 2019; Cortés-Cediel et al., 2023). Makasi et al.’s (2022) study of 92 real-world chatbot applications confirms that chatbots are capable of learning and improving the way they respond to users’ queries, and suggests that many chatbots have sufficient pattern recognition capabilities to replace humans in carrying out specific, even seemingly unstructured, information-processing tasks, including but not limited to public encounters.

Despite the intuitive appeal of convenience and efficiency, government deployments of chatbots in citizen-state interactions have lagged behind optimists’ expectations. Most implementations at this stage focus on information provision by automating responses to frequently asked questions or guiding users through predefined scripts (Androutsopoulou et al., 2019; Cortés-Cediel et al., 2023; Makasi et al., 2022). There are, however, more advanced systems that provide services by interpreting user needs, presenting service options, and facilitating transactions (Androutsopoulou et al., 2019; Van Noordt and Misuraca, 2019). This, in turn, changes the way citizens experience and respond to interactions in encounters with the government (Alishani et al., 2025). The Estonian Bürokratt initiative, for instance, aims to automate parts of the work of human public officials who traditionally answer citizens’ questions and respond to their requests for information, assistance, or public services more generally, through voice- and text-based conversations with Estonian residents (Sikkut et al., 2020).

Chatbots and public encounters

From a public administration perspective, the use of artificial intelligence raises several more specific questions. A first question concerns the concept of public encounters as a focal area of attention in the discipline of public administration. Traditionally, public encounters are defined as interactions between citizens and government representatives to conduct business that stretches beyond the mechanical application of formalised legal rules and procedures (Goodsell, 1981) and beyond answering factual questions (Følstad and Bjerkreim-Hanssen, 2024). In his foundational work, Lipsky (1969, 1980) emphasised how public officials who engage in conversations and interactions with citizens are typically confronted with citizens’ needs and concerns that do not neatly fit legal or administrative categories or formal realities (Hupe, 2022; Tummers and Bekkers, 2014), and/or inevitably have to work with open-textured, ambiguous, or even contradictory rules (Dworkin, 1977; Hart, 1961; Hupe and Hill, 2007; Jowell, 1973). The extant street-level bureaucracy literature has reported how street-level bureaucrats apply discretion and, at least occasionally, rule-bending as coping behaviours to align interpretations and professional judgment with legislators’ and policymakers’ intentions (Bayamlıoğlu and Leenes, 2018; Binns, 2022; Bovens and Zouridis, 2002; Hildebrandt, 2021; Johansson, 2024; Petersen et al., 2020). Bayamlıoğlu and Leenes (2018) argue that machine learning has fundamental difficulties interpreting open-textured or conflicting rules and the implicit meanings prescribed in law. Relatedly, Carlsson (2025) finds that, in algorithmic decision-making, fairness and justice are determined by group treatment rather than individual treatment, which differs from how public professionals typically operate.

The algorithmic governance literature suggests that with the emergence of chatbots, contradictions and open texture do not disappear (Alshallaqi, 2024; Giest and Grimmelikhuijsen, 2020; Jorna and Wagenaar, 2007; Lindgren et al., 2019; Petersen et al., 2020; Young et al., 2019); rather, discretion shifts from the street level to the system level. This implies that discretion moves from in-person, street-level interactions between frontline workers and citizens to back-office settings, in which information systems professionals work in tandem with domain experts to continuously update, refine, and tinker with language models and workflows to keep conversations with citizens going (Vogl et al., 2020). In real-world chatbot implementations, so-called prompt engineers or chat whisperers are required to train algorithms in the back office to answer citizens’ questions and handle unforeseen scenarios (Frederick, 2024; ISSA, 2022). All this raises the question of whether citizens will accept chatbots’ answers or services, knowing or suspecting that their original questions or requests require interpretation and/or rule-bending at arm’s length from where the conversation is taking place.

A second, more sociolinguistic or political question is whether the machine learning at the core of chatbot functionality may reinforce pre-existing biases and thus produce discriminatory outcomes against social groups (Alshallaqi, 2024; Coeckelbergh, 2022; Zabel and Otto, 2021). Hovy and Prabhumoye (2021) argue that the language annotation process, chatbot design, and the implementation process can all introduce biases. For example, Bolukbasi et al. (2016) demonstrate that word embeddings, a building block of the language models underlying chatbots, associate ‘man’ more closely with ‘computer programmer’ than ‘woman’, and ‘grandmother’ and ‘grandfather’ more closely with ‘wisdom’ than ‘gal’ and ‘guy’. Others have shown that natural language processing systems may also struggle to process text produced by users from certain ethnic backgrounds (Blodgett et al., 2016; Jurgens et al., 2017) and to understand dialects or word choices when models are trained on standard datasets (Bolukbasi et al., 2016). In addition, Zabel and Otto (2021) and Alshallaqi (2024) argue that chatbot developers working individually or in teams in back-office settings may amplify or reinforce the injustices, biases, and problematic ideologies that underlie their systems. All of this raises the question of whether, and if so how, perceived biases and injustices affect citizens’ acceptance of chatbots.

In this study, we do not examine the functioning of chatbots or the way they are developed, but focus on citizens’ intended use of chatbots in public encounters, that is, in situations where citizens are confronted with (1) uncertainties and risks associated with the very nature of public encounters and (2) the way artificial intelligence operates in public encounters. Academic literature suggests various lines of reasoning regarding citizens’ acceptance of chatbots and their willingness to absorb associated risks. Some authors hold a rather techno-optimistic view, suggesting that citizens generally have optimistic beliefs about the capabilities and objectivity of artificial intelligence and are likely to embrace chatbots (Helberger et al., 2020; König, 2023). Miller and Keiser (2021) take an even more optimistic position, reporting that citizens who feel marginalised rate decisions made through artificial intelligence higher than those made through human judgment. Other authors suggest that trust plays a crucial role in explaining citizens’ acceptance of chatbots, with trust in chatbots being more valuable than trust in human beings when mistakes occur (De Visser et al., 2017). Aoki (2020) argues that citizens are generally optimistic and enthusiastic about the use of chatbots in conversations about predictable issues, such as how to separate waste, but more sceptical about chatbots in conversations about more complex and contested issues that require judgment, such as parental support (see also Bitkina et al., 2020; Bullock, 2019; Ingrams et al., 2022).

Existing theoretical bodies of knowledge have been used to reconcile the diverging statements mentioned above. Diffusion theories, for instance, state that citizens’ attitudinal variables explain their intentions to use chatbots (Marangunić and Granić, 2015; Venkatesh et al., 2003, 2012), and privacy calculus theory (Culnan and Armstrong, 1999; Dinev and Hart, 2006) suggests that citizens who are confronted with chatbots typically weigh (1) convenience and value against (2) the risks that sharing personal information entails. In the context of chatbots, authors have suggested various variables that influence whether citizens are willing to share personal information with a chatbot, and thus accept the perceived risks, or refrain from doing so. These variables include the amount of information that has to be shared to trigger a specific desired outcome (Willems et al., 2022), the transparency and explainability a chatbot provides in conversations with citizens (Grimmelikhuijsen, 2023), citizens’ trust levels and privacy concerns (Aoki, 2020; Grimmelikhuijsen, 2023; Kleizen et al., 2023; Wenzelburger et al., 2022), and the perceived value of the information or services provided by chatbots (Choung et al., 2023; Wang et al., 2023; Willems et al., 2022). The empirical evidence is far from conclusive; therefore, in the next section, we present several hypotheses that identify relevant variables affecting this balancing act.
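As a stylised illustration only, and not a model estimated in this study, the balancing act described by privacy calculus theory can be written as a simple decision rule. The notation is our own assumption: for a hypothetical citizen $i$, $B_i$ denotes the perceived benefits of using a chatbot (convenience, value) and $R_i$ the perceived risks of sharing the personal information it requests.

$$
\text{intended use}_i =
\begin{cases}
1 & \text{if } B_i - R_i \geq 0 \quad \text{(benefits outweigh or at least balance risks)},\\
0 & \text{otherwise.}
\end{cases}
$$

Read this way, perceived usefulness feeds into $B_i$, privacy concerns and the amount of information requested feed into $R_i$, and explainability and trust can be read as factors that may reduce the perceived risk $R_i$.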

Hypothesis development

Explaining individuals’ intended and actual use of innovations (including, but not limited to, all kinds of technological devices and software applications) has been a core challenge for researchers working in the field of diffusion of innovation theory for decades. According to scholars working with more recent derivatives of diffusion of innovations theory, such as the Technology Acceptance Model, the Theory of Planned Behaviour, the Unified Theory of Acceptance and Use of Technology, and the Unified Model of Electronic Government Adoption, the strongest predictor of the intended use of a new technology is its users’ expectation about whether the technology serves its intended purpose (Choung et al., 2023). For chatbots as an innovative technology, this translates into an expected positive relationship between the perceived performance of chatbots as a channel of communication between citizens and government, and citizens’ use of chatbots. Based on this, we hypothesise:

The higher the perceived usefulness of a government chatbot used in public encounters, the higher citizens’ intentions to use it.

A relatively recent theoretical innovation in diffusion of innovation models is inspired by privacy calculus theory (Dinev and Hart, 2006; Valle-Cruz et al., 2023). Privacy calculus theory posits that disclosing personal information exposes users to uncertainties and risks, and suggests that individuals may sacrifice privacy, and potentially expose themselves to adverse consequences, when convenience, access, and other affordances outweigh the costs associated with data misuse, loss of control, and reputational harm (Baruh et al., 2017; Mutimukwe et al., 2020; Willems et al., 2022). In a study of the adoption of COVID-19 contact tracing apps, Hassandoust et al. (2021) hypothesised that privacy concerns, risk beliefs, and perceived benefits influence American users’ intention to install such apps, and found support for this hypothesis (for comparable results from a study of contact tracing apps conducted in Germany and Switzerland, see Abramova et al., 2022). Attié and Meyer-Waarden (2022) extended a diffusion of innovation model with privacy calculus logic to study the adoption of smart devices by French students, finding that privacy concerns are the primary obstacle to adoption. Beldad et al. (2012) reported comparable results in a study of government digitalisation initiatives. Applied to chatbots, privacy calculus theory implies that citizens who believe that the benefits of sharing personal information outweigh, or at least balance, the privacy risks are more likely to accept and intend to use chatbots (Dinev and Hart, 2006; Li, 2012; Willems et al., 2022). Conversely, citizens with stronger privacy concerns should be less inclined to use them. Based on this, we hypothesise:

The higher citizens’ privacy concerns, the lower their intention to use government chatbots in public encounters.

Research also suggests that privacy concerns limit users’ intentions to share information online (Mutimukwe et al., 2020) and can thereby hamper the uptake of digital services (Baruh et al., 2017). Castañeda and Montoro (2007) note that requesting more information from users reduces their intention to engage in online activities. The relevance of the amount of information exchanged through chatbots, especially when personal information is requested (Dinev and Hart, 2006), has been confirmed in other studies on chatbot use (Willems et al., 2022). Based on these findings, we hypothesise:

The larger the amount of information requested from citizens, the lower their intention to use government chatbots in public encounters.

Artificial intelligence explainability refers to a system’s ability to provide human-understandable explanations of how and why an outcome was produced (Grimmelikhuijsen, 2023; Miller, 2019). Given that it is unrealistic to expect all users to comprehend the underlying code, the literature discusses multiple approaches to constructing explanations (see Aoki et al., 2024; De Bruijn et al., 2022). For example, when an online ID renewal application is rejected, users want to understand the rationale behind the decision to improve their chances in future submissions. As in face-to-face public encounters, users expect chatbots to justify public decisions and to allow them to assess the fairness of the process or potentially contest the outcome (Grimmelikhuijsen, 2023; Janssen et al., 2022). We therefore adopt a bureaucratic approach to explainability that emphasises the internal logic underlying the output (Aoki et al., 2024). In this study, higher levels of explainability mean that the chatbot justifies its outcomes in clear language and explains how adjusting the input would lead to a particular service outcome (Aoki et al., 2024; Wachter et al., 2017). To date, artificial intelligence explainability is recognised as a key solution for tackling the opacity of black-box systems (De Bruijn et al., 2022) and stands out as a prominent theme in discussions about artificial intelligence transparency (Grimmelikhuijsen, 2023; Janssen et al., 2022). It has also been suggested as a means to improve user experience and satisfaction with applications, potentially affecting users’ willingness to use these technologies (Aoki et al., 2024; Ebermann et al., 2023). Pieters (2011) found that explainability positively affects users’ beliefs that the chatbot is effective and designed with good intentions. While users are more likely to follow explained recommendations (Giboney et al., 2015), researchers note that low-quality explanations may have the opposite effect (Ebermann et al., 2023; Giboney et al., 2015). Nevertheless, the impact of artificial intelligence explainability on user attitudes towards chatbots is a new research area, and the relationship between the two remains unclear. In this study, we investigate the impact of explained chatbot decisions on citizens’ intentions to use chatbots in public encounters and hypothesise:

The more the decisions made by a government chatbot are explained to citizens in public encounters, the higher their intentions to use it.

Trust is essential in relationships and becomes increasingly relevant when risk, uncertainty, or interdependence is involved (McKnight et al., 2002). Zand (1972) suggests that by placing trust in another party, individuals make themselves vulnerable to a party whose actions they cannot monitor or control. Trust rests on the belief that the trusted party will act as expected and perform a particular action that is important to the trustor, despite having the freedom to choose alternative actions that may harm the trustor (Koller, 1988; Mayer et al., 1995). By accepting vulnerability, the trusting party exhibits trust, whereas by refraining from exploiting that vulnerability, the trusted party proves trustworthy (Homburg et al., 2022). In the context of artificial intelligence, trust becomes crucial because individuals may feel at risk when disclosing personal information online or relying on outcomes produced by artificial intelligence. Decisions to accept artificial intelligence applications therefore demand a “leap of faith” in both the technology used in service delivery and the party providing the service (Beldad et al., 2012). This study focuses on two types of trust necessary to establish trust in artificial intelligence: trust in technology and trust in government as a service provider (Wang et al., 2023; Yang and Wibowo, 2022). Trust in technology refers to the degree to which citizens believe a chatbot is helpful and reliable in completing service tasks (McKnight et al., 2011). A higher degree of trust in technology implies greater citizen confidence in the ability of a chatbot to provide the necessary features and help to find information and access digital public services, and hence to perform effectively even in situations that involve some degree of risk (McKnight et al., 2011).
Government chatbots are promoted for their capacity to handle numerous service requests simultaneously and to benefit users by providing consistent and timely responses (Cortés-Cediel et al., 2023). However, in many situations where chatbots are beneficial, they also introduce risks for users (Cortés-Cediel et al., 2023; Zabel and Otto, 2021). Chatbots that are perceived or proven to be flawed may therefore reduce users’ intention to use these applications (Ribeiro et al., 2016). The empirical evidence, however, is contradictory: while a lack of public trust has led to the rejection of effective applications (Kostka et al., 2021), some technically flawed systems are used because people trust them (Hamacher et al., 2016). In line with studies suggesting that people use technologies they trust (Lee and See, 2004; Ribeiro et al., 2016), we hypothesise:

The more citizens trust technology, the higher their intention to use government chatbots in public encounters.

Trust in government, in turn, refers to the degree to which citizens believe their government acts competently and conducts itself with benevolence and integrity (Homburg et al., 2022; McKnight et al., 2002). Citizens’ intentions to use chatbots are affected not only by their trust in technology but also by their relationship with the organisation deploying the chatbot (Wang et al., 2023). A higher degree of trust in government implies that citizens are more likely to believe that their government is capable of addressing their service concerns through chatbots, acting in their best interest, and fulfilling its obligations (McKnight et al., 2002). It is therefore reasonable to expect that users are more likely to engage with chatbots deployed by trustworthy public entities, as shown in related domains (Männiste and Masso, 2018; Pérez-Morote et al., 2020). In particular, citizens who trust their government may be more likely to support the use of government chatbots simply because they hold a positive attitude towards their government. In line with this, we hypothesise:

The more citizens trust their government, the higher their intention to use government chatbots in public encounters.

Methodology

Experiment design and measurement

This study tests hypotheses about citizens’ intentions to use chatbots in public encounters using data gathered in nationwide survey-based experiments conducted in Estonia in 2024 (n = 1058). Vignettes are hypothetical stories featuring real-life events or scenarios; in experiments, they are used to prompt respondents to form judgments and to capture information about their personal beliefs (Atzmüller and Steiner, 2010). Vignettes are designed to incorporate the variables of interest, which are systematically manipulated across the vignette population to test their impact. Vignettes often depict third persons as characters, and respondents are invited to read a vignette and react to it. A third-person approach allows researchers to investigate topics that respondents may be hesitant to discuss openly, or about which they may refrain from sharing their beliefs or actions (Gourlay et al., 2014). In quantitative research, vignette experiments are embedded in surveys to test the impact of beliefs, norms, attitudes, and perceptions on behaviour across populations (Atzmüller and Steiner, 2010; Auspurg and Hinz, 2015), and to combine the internal validity of experiments with the external validity of surveys (Mee, 2009; Migchelbrink and Van de Walle, 2020). This makes survey vignette experiments suitable for testing our hypotheses.

This study uses a 2 × 2 factorial design in two survey experiments. The first experiment consists of four vignettes illustrating a scenario in which a citizen uses a chatbot to search for service information. The second experiment also consists of four vignettes and illustrates scenarios in which the character uses the chatbot to access a digital public service. Both experiments were embedded in the same survey, and each respondent participated in both.

Both survey experiments followed a between-subjects design, in which each respondent was randomly assigned to read one vignette per experiment and answer the survey questions. Each vignette in Experiments 1 and 2 received between 262 and 267 responses. The vignettes manipulated two variables at high and low levels (Mee, 2009). The first experimental variable concerned the amount of information requested from citizens during the chatbot interaction (see Appendix A). In Experiment 1, in line with Gupta et al. (2025), Tsap (2022), and Vrakas et al. (2010), the high-information condition was operationalised as requiring authentication in addition to the passport’s expiration date and place of residence, while the low-information condition was limited to the place of residence. In Experiment 2, a similar manipulation was adapted to the health service context: the high-information condition required age, symptoms, onset of symptoms, and place of residence, whereas the low-information condition was restricted to symptoms and place of residence. To ensure realism and clarity, we tailored the amount of requested information to the specific service context based on expert consultation, with an eye to comprehensiveness and understandability. The second variable manipulated the explainability of chatbot decisions. Following Aoki et al. (2024), the high explainability condition was operationalised as the chatbot justifying the outcome with a straightforward explanation in plain language suited to non-technical audiences, focusing on content clarity in the conversation. In the low explainability condition, the vignette depicted an otherwise similar situation in which the chatbot did not explain the outcome at all. The operationalisation of the high explainability condition thus focuses on content clarity from the perspective of the target audience, and to a lesser degree on explaining the reasoning of the algorithms that drive the conversation. Other variables were measured using established operationalisations wherever possible, with item wording sometimes adapted to the specifics of the vignettes. The main variables were measured using items on a 7-point Likert scale. The complete set of questionnaire items, including references to studies in which similar measurements were used, can be found in Appendix B.
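The between-subjects randomisation described above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: the paper does not report how the survey platform randomised respondents, so the permuted-block scheme, the level labels, the seed, and the function names `balanced_assignment` and `assign_both_experiments` are hypothetical. Each respondent is assigned, independently per experiment, to one of the four cells of the 2 × 2 design.

```python
import itertools
import random
from collections import Counter

# The two manipulated factors, each at a low and a high level (2 x 2 design).
# Labels are hypothetical shorthand for the conditions described in the text.
INFORMATION_LEVELS = ("low", "high")           # amount of information requested
EXPLAINABILITY_LEVELS = ("absent", "present")  # explanation of the outcome
CELLS = list(itertools.product(INFORMATION_LEVELS, EXPLAINABILITY_LEVELS))


def balanced_assignment(n_respondents, rng):
    """Assign respondents to the four vignette cells in shuffled blocks of four.

    Hypothetical permuted-block scheme: every block contains each cell once,
    so cell sizes differ by at most one before any exclusions.
    """
    assignments = []
    while len(assignments) < n_respondents:
        block = CELLS[:]
        rng.shuffle(block)
        assignments.extend(block)
    return assignments[:n_respondents]


def assign_both_experiments(respondent_ids, seed=2024):
    """Randomise separately for Experiment 1 (information) and 2 (service)."""
    rng = random.Random(seed)
    n = len(respondent_ids)
    exp1 = balanced_assignment(n, rng)
    exp2 = balanced_assignment(n, rng)
    return {
        rid: {"experiment_1": c1, "experiment_2": c2}
        for rid, c1, c2 in zip(respondent_ids, exp1, exp2)
    }


if __name__ == "__main__":
    design = assign_both_experiments(list(range(1, 1059)))
    for experiment in ("experiment_1", "experiment_2"):
        counts = Counter(cells[experiment] for cells in design.values())
        print(experiment, dict(counts))
```

With 1,058 respondents, permuted blocks of four would yield cells of 264 or 265. If randomisation happened before quality screening, later exclusions could produce small imbalances of the kind reported above; this is an illustration, not a reconstruction of the actual procedure.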

The vignette experiments were presented in text format, and respondents were asked to share their reactions by responding to the survey questions. Before the survey experiment, participants were provided with an overview of the survey, were required to provide consent, and began by answering items on descriptive variables. Each respondent then took part in the first experiment, in which they read one randomly assigned vignette illustrating a chatbot providing information and reacted by answering survey items on their “intention to use” it. Between the experiments, respondents completed a short distractor task to minimise potential carry-over effects between the two experiments (see Appendix B). In the second experiment, respondents again read one randomly assigned vignette, this time illustrating a chatbot providing services, and answered survey questions on their “intention to use” it. To prevent participants from responding based on pre-existing beliefs about artificial intelligence, the experiments referred to these technologies as “virtual assistants”.

Experiment scenarios and data collection

The survey experiment was conducted in Estonia, a country with a relatively well-established tradition of digital governance that has familiarised citizens with digital public encounters (Solvak et al., 2019). Since Estonia regained independence from the Soviet Union, government technologies have been actively integrated into governance and have become a defining aspect of its national identity and international branding (Kaun et al., 2024; Männiste and Masso, 2018). In 2019, the Ministry of Economic Affairs and Communications launched the Bürokratt program, whose name derives from the Kratt servants of Estonian folklore. The program sets out a national vision of a network of interoperable information- and service-providing chatbots, embedded in the already highly digital public service system, that offer personalised, one-stop services through voice- and text-based interactions (MKM, 2021). While the program is still under development, early versions of its chatbots are being piloted (Dreyling et al., 2024). Estonia was chosen for this study because it provides a context in which citizens are already accustomed to digital transactions and chatbots are viewed not as a transformative shift but as an extension of the country’s highly digitalised public service ecosystem. Citizens are also likely to be aware of the Bürokratt program given its public visibility: as of November 2024, 22 articles about it had been published in Estonia’s leading online news outlets (Delfi, ERR, Postimees). A review of these articles indicates that the program is mainly portrayed positively, with no significant controversies or public objections reported.

Vignettes were initially developed based on Bürokratt promotional materials and later validated through discussions with one policymaker and two service developers from the Bürokratt program. These discussions served to ensure that the depicted situations were realistic and to check whether the experimental manipulations (presence or absence of an explanation, and the amount of requested information) were depicted accurately and plausibly in the Estonian context, where web-based and mobile digital service delivery is widespread and part of daily practice. In the first experiment, vignettes show a character using a chatbot to find information on renewing a passport; in the second experiment, vignettes show a character using a chatbot to find medical help and book an appointment. These scenarios were selected for two main reasons. First, they are straightforward and easy for citizens to understand, even for those without direct experience with government chatbots. Second, they are feasible to implement and have been discussed as possible use cases in the Bürokratt program. Once the vignettes had been developed and the survey items finalised, the survey experiment was pilot-tested with five experienced e-government researchers for realism, understandability, and conciseness. The pilot tests led to various revisions before the survey experiments were carried out.
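The assignment procedure described above (one randomly assigned vignette per experiment, with a distractor task in between) can be illustrated with a minimal Python sketch. It assumes, for illustration only, that each experiment crosses the two manipulations in a 2 × 2 design with two levels for the amount of requested information, and that each respondent's condition is drawn independently and with equal probability in each experiment. The factor labels, the `assign_vignettes` helper, and the seed are hypothetical and do not describe the survey provider's actual software.

```python
import random
from collections import Counter
from itertools import product

# Hypothetical factor levels (assumption): the article names the manipulations
# (presence/absence of an explanation; amount of requested information), but the
# two-level coding of the information factor is assumed here for illustration.
EXPLANATION = ("explanation given", "no explanation")
INFO_AMOUNT = ("little information requested", "much information requested")

# Full factorial crossing of the two factors: 2 x 2 = 4 vignette conditions.
CONDITIONS = list(product(EXPLANATION, INFO_AMOUNT))


def assign_vignettes(respondent_ids, seed=2024):
    """Draw one vignette condition per respondent for each of the two experiments.

    Simple (unblocked) randomisation with equal probabilities is assumed;
    draws for Experiment 1 and Experiment 2 are independent of each other.
    """
    rng = random.Random(seed)  # seeded so the assignment is reproducible
    assignments = {}
    for rid in respondent_ids:
        first = rng.choice(CONDITIONS)   # Experiment 1: information-provision chatbot
        # (a distractor task would be shown here in the survey flow)
        second = rng.choice(CONDITIONS)  # Experiment 2: service-provision chatbot
        assignments[rid] = {
            "experiment_1_information": first,
            "experiment_2_service": second,
        }
    return assignments


if __name__ == "__main__":
    plan = assign_vignettes(range(1058))
    # Tally how many respondents saw each condition in Experiment 1.
    tally = Counter(v["experiment_1_information"] for v in plan.values())
    for condition, count in sorted(tally.items()):
        print(condition, count)
```

With simple randomisation the cell sizes are only approximately equal; a blocked or balanced allocation would be an alternative design choice if equal cells were required.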

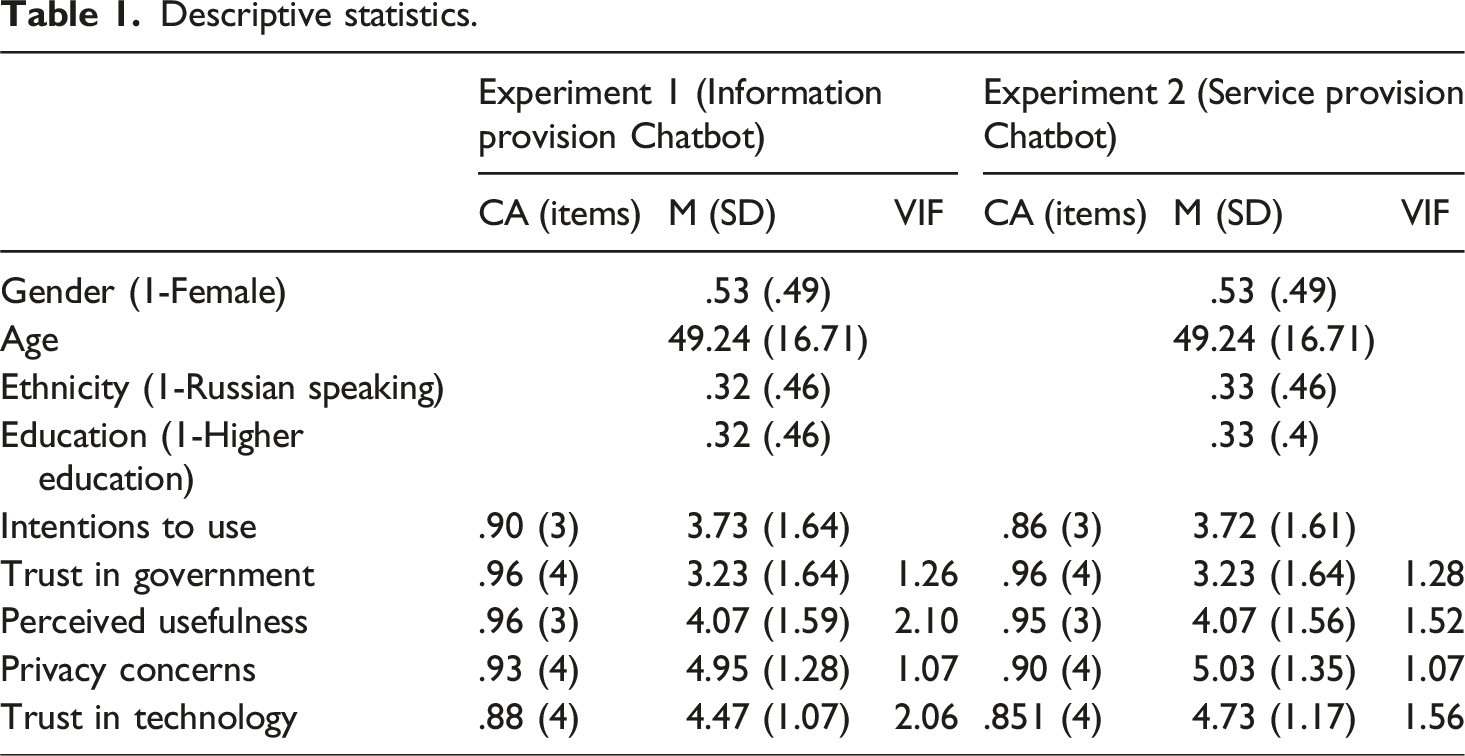

This study received ethics approval in May 2024. All respondents were informed that their participation was voluntary, responses were anonymous, and data would be used solely for scientific purposes. We engaged a market research service provider to collect the responses in Estonia, and panel members received bonus points through the provider’s financial reward system. A total of 1,083 complete responses were collected in the survey vignette experiment, conducted between July 26 and August 6, 2024. After screening the dataset, we excluded 22 responses because of possible speeding and three because of identical scoring across items (indicating inattentiveness). The final sample included 1,058 respondents. Of these, 53.0% were female (compared with 52.2% of the Estonian population; Statistics Estonia, 2025), and the average age was 49.26 years (range: 18–84), compared with a population mean age of 42.2 years (Statistics Estonia, 2025). In the sample, 33.7% of respondents reported speaking Russian at home (population level: 28.4%; Statistics Estonia, 2025), and 32.6% reported having completed higher education (population level: 37.1%; Statistics Estonia, 2025).

Results

Descriptive statistics.

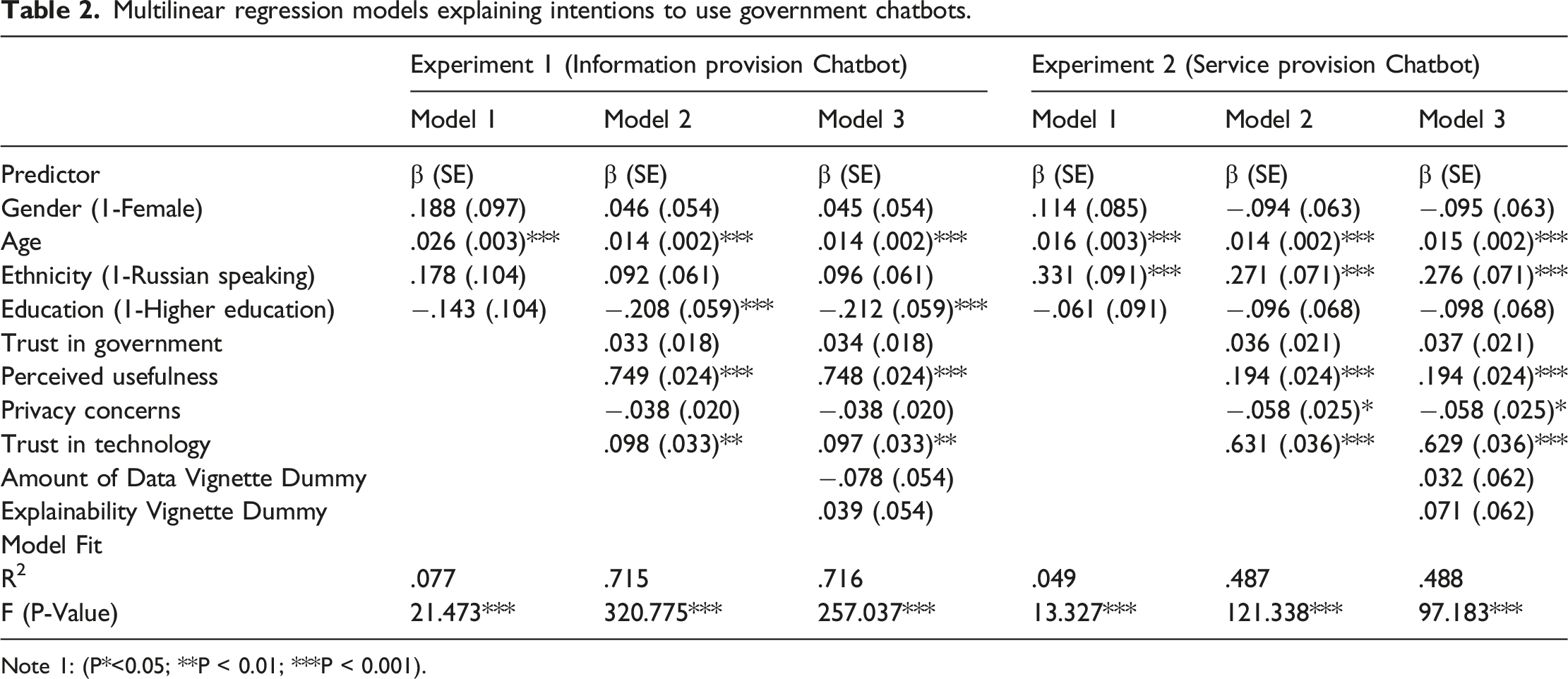

Multiple linear regression models explaining intentions to use government chatbots.

Note 1: *p < 0.05; **p < 0.01; ***p < 0.001.

The results from Experiment 1 show that the predictors significantly contribute to explaining the variance in the dependent variable, F(10, 1020) = 257.037, p < .001, and the model explains 71.6% of the variance in citizens’ intentions to use government chatbots (R² = .716). Hypothesis 1 proposed that the higher the perceived usefulness of a chatbot, the higher citizens’ intentions to use these technologies in public encounters. The results show a strong and highly significant association between perceived usefulness and citizens’ intentions to use chatbots (β = 0.748, p < .001), supporting this hypothesis. The results also support Hypothesis 5: trust in technology is significantly and positively associated with citizens’ intentions to use government chatbots in public encounters (β = 0.097, p < .003), although this association is considerably weaker than that of perceived usefulness. However, the results of Experiment 1 did not support the other hypotheses (see Table 2). The amount of requested information (Hypothesis 3) and the explainability of chatbot decisions (Hypothesis 4) had no statistically significant effect on citizens’ intentions to use chatbots.

The results from Experiment 2 show that the explanatory variables significantly contribute to explaining the variation in citizens’ intentions to use government chatbots in public encounters, F(10, 1020) = 97.183, p < .001, and the model explains 48.8% of the variance in these intentions (R² = .488). The analysis also supports Hypothesis 1, indicating that perceived usefulness has a significant effect on citizens’ intentions to use government chatbots (β = 0.194, p < .001). Hypothesis 5 proposed that higher trust in technology would increase citizens’ intentions to use chatbots, and the results indicate a strong and highly significant association between trust in technology and citizens’ intentions (β = 0.629, p < .001). Hypothesis 2 proposed that privacy concerns negatively affect citizens’ intentions, and the results show a significant negative effect of privacy concerns on intentions (β = −0.058, p < .021). The results did not support the other hypotheses (see Table 2). The amount of requested information (Hypothesis 3) and the explainability of chatbots (Hypothesis 4) had no significant effect on citizens’ intentions to use chatbots, and trust in government had no significant effect on citizens’ intentions to use chatbots during public encounters.
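The reported F statistics and explained variance can be cross-checked with the standard identity for the overall F test of an ordinary least squares model with an intercept, F = (R²/k) / ((1 − R²)/df), where k is the number of predictors and df the residual degrees of freedom, or equivalently R² = F·k / (F·k + df). The Python sketch below assumes k = 10 and df = 1020, as implied by the reported F(10, 1020), and recovers R² from each reported F; the helper names are illustrative.

```python
def f_from_r2(r2: float, k: int, df_resid: int) -> float:
    """Overall F statistic for an OLS model with an intercept and k predictors."""
    return (r2 / k) / ((1.0 - r2) / df_resid)


def r2_from_f(f: float, k: int, df_resid: int) -> float:
    """R-squared implied by a reported F(k, df_resid); inverse of f_from_r2."""
    return (f * k) / (f * k + df_resid)


if __name__ == "__main__":
    K, DF_RESID = 10, 1020  # from the reported F(10, 1020)
    reported = {"Experiment 1": 257.037, "Experiment 2": 97.183}
    for label, f in reported.items():
        # Prints "Experiment 1 0.716" and "Experiment 2 0.488"
        print(label, round(r2_from_f(f, K, DF_RESID), 3))
```

Both recovered values match the reported R² (.716 and .488), so the reported F statistics and explained variance are mutually consistent up to rounding.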

The analysis suggests that citizens’ intentions to use government chatbots in Estonia are most strongly associated with perceived usefulness (for information provision) and trust in technology (for service provision). Privacy concerns showed mixed results. When chatbots are used to facilitate citizens’ search for information, privacy concerns have little effect on citizens’ intentions to use these technologies; when chatbots are used for service provision, however, privacy concerns are negatively associated with intentions to use them. Interestingly, the amount of information requested from citizens does not appear to play a significant role in the calculus citizens engage in when deciding whether to use government chatbots. Additionally, whether the chatbot explains its decisions appears to have little impact on how citizens weigh the trade-offs described by privacy calculus theory.

Discussion and conclusions

This study tested hypotheses related to citizens’ intentions to use chatbots in public encounters, using unique nationwide data collected in Estonia. Estonia is a relevant case because chatbots there are a plausible and gradual extension of citizens’ already highly digitised experience with government. Moreover, chatbots feature in news coverage of service redesign and government innovation. Analyses of survey data gathered in an experimental vignette study revealed that perceived usefulness significantly predicts Estonian citizens’ intention to use chatbots in public encounters (more strongly for information provision than for service provision); trust in technology is a significant correlate of citizens’ intended use of chatbots in public encounters (more strongly for service provision than for information provision); trust in government does not predict chatbots’ intended use; and privacy concerns help explain Estonians’ intended use of chatbots for service provision but not for information provision. Explainability and the amount of information exchanged during conversations in public encounters were not correlated with the intended use of chatbots in public encounters.

These findings add to the literature at the intersection of public encounters, algorithmic governance, and artificial intelligence in several ways.

A first contribution is to the public administration literature on public encounters and street-level bureaucracy. Our findings indicate that, at least in an Estonian context, citizens’ willingness to use government chatbots for information and service provision is shaped by perceived usefulness and trust in technology, whereas trust in government is not correlated with intended use. The role of perceived usefulness is consistent with empirical studies on the diffusion and adoption of government chatbots (Choung et al., 2023; Willems et al., 2022), indicating that citizens are more likely to use technology if they view it as useful for completing specific tasks (Davis, 1989). Notably, perceived usefulness was a stronger predictor of intentions to use information-provision chatbots than of intentions to use service-provision chatbots. In addition, results suggest that trust in technology significantly correlates with Estonian citizens’ intention to use Bürokratt for both information provision and service provision. The general support for the role of trust in technology is consistent with the broader literature on technology adoption in other geographic contexts (Lee and See, 2004; McKnight et al., 2011), as well as with a study of Internet voting adoption in Estonia, a digital service unique to the country, whose only consistent and significant predictor over time was trust in Internet voting technology (Sindermann et al., 2023). There are, however, two novel findings that are less consistent with previous research in related fields of study. The first is the difference in the role of trust in technology across the two types of chatbot: trust in technology is a stronger predictor of intentions to use service-provision chatbots than of intentions to use information-provision chatbots.
A possible interpretation is that individuals with low trust in technology have little difficulty accepting information in response to their questions but hesitate to receive services from government chatbots, whereas individuals with high trust in technology also hold favourable attitudes towards receiving services. The second finding is that trust in government was not correlated with Estonians’ intention to use Bürokratt, which echoes patterns observed in other studies of government chatbots (Wang et al., 2023) but diverges from research that highlights the importance of trust in government in explaining technology acceptance in Estonia (Männiste and Masso, 2018; Stephany, 2020). Further research should examine the determinants of trust in technology and whether the relationship between trust in technology and chatbot-enabled public encounters is sensitive, if not fragile, over time. A relevant question in this context is whether trust in technology stems from an enlightened understanding of technology acquired through education or from trust in the media and experts.

A second contribution of this study is an improved understanding of how privacy calculus theory, adoption and diffusion theories, and the literature on trust can be integrated. Our findings indicate that privacy concerns matter for citizens’ acceptance of chatbots in service provision but do not explain chatbots’ uses in information provision, suggesting that the type of service matters for how citizens balance competing values and interests. Furthermore, and in our view ultimately relevant for theorising at the intersection of ethics, privacy calculus, and innovation, chatbots’ capabilities to explain their contributions to conversations in public encounters were not relevant for explaining citizens’ intentions to use chatbots in public encounters. This conclusion contradicts policy beliefs on artificial intelligence regulation, responsible innovation thinking, and the general artificial intelligence wisdom that emphasises explainability as a core concept, and it calls for a reconceptualisation of, and reflection on, the role of explainability at various levels of analysis. The conclusion also invites further research on how the concept of explainability is used rhetorically and pragmatically in discussions on responsible uses of artificial intelligence. Future research, inspired by the design of Aoki et al. (2024), could also treat explainability as a much more dynamic and interactive phenomenon and explore, and eventually test, which kinds of explainability emerging in specific parts of ongoing conversations in public encounters may or may not affect users (Miller, 2019).

A third contribution of this study concerns the role of privacy concerns. Results indicated that individuals’ general privacy concerns negatively affect intentions to use service-provision chatbots but have little impact on intentions to use information-provision chatbots in Estonia. In contrast to prior research showing that privacy concerns reduce trust when government chatbots request sensitive information (Wang et al., 2023), this study’s findings suggest that citizens are more sensitive to privacy risks for tasks involving transactional interactions than for general information retrieval. Interestingly, the amount of information that chatbots request during public encounters does not affect citizens’ intended use. This pattern aligns with findings from other geographic contexts, where privacy concerns were not always reflected in users’ actual behaviour (Willems et al., 2022). At the same time, the findings highlight the importance of trust in technology as a foundation of Estonia’s digital society, underscoring the need for further research into its components, determinants, and impacts as a critical driver of continued digitalisation.

In addition to its theoretical contributions, this study points to several practical lessons for policymakers and public managers working with government chatbots. Citizens already interact with governments through many channels, including offices, websites, apps, and email, so chatbots are best seen as one option in this mix rather than a replacement. A multichannel approach gives citizens flexibility and respects different preferences and levels of trust (Ebbers et al., 2008). Our findings also show that usefulness is a key driver of acceptance. Citizens are more willing to use chatbots if they can get things done, whether by solving service bottlenecks, saving effort, or providing the necessary information. For managers, this means delivering designs that offer tangible value for users, especially in information provision, where utility mattered most. We also found that trust in technology, not trust in government, shapes willingness to use chatbots. This points to a need for practitioners to build confidence in the systems themselves, through reliable performance, security, and clear demonstrations of competence, rather than relying on institutional trust alone. The explainability features we tested did not make citizens more willing to use chatbots, suggesting that abstract or static explanations may not be very effective. More dynamic and context-sensitive forms of explainability, those that feel relevant in the moment, may be more effective. Finally, privacy concerns remain especially strong in transactional contexts. Protecting data is not enough; public managers also need to make those protections visible and easy to understand. Clear, proactive communication about safeguards can help mitigate concerns before they become actual usage barriers.

As with any research, this study is subject to certain limitations. A first limitation concerns the operationalisation of two core constructs, explainability and the amount of personal information, in the vignette experiments. While the scenarios were designed to approximate realistic interactions, presenting them in a questionnaire arguably understates the dynamic and interactive qualities of actual chatbot interactions. As a result, both constructs were operationalised in a relatively linguistic and scenario-bound way, which may not fully capture the evolving process through which users make sense of chatbot communication in real time. Future research could address this limitation by implementing longitudinal designs that allow for the study of how explanations and chatbots’ requests for personal information in public encounters affect citizens’ attitudes.

A second limitation is that, for reasons of elegance and conciseness in the survey questionnaire, we only asked respondents whether they favoured or opposed using a chatbot in the scenarios presented. In practice, citizens have access to multiple channels for public encounters (Ebbers et al., 2008). Future research could explore citizens’ preferences for chatbots as opposed to alternative channels, such as face-to-face interactions, websites, mobile applications, or email.

A third and final limitation is that this study was conducted in Estonia, which was deliberately chosen for its advanced digital governance system; the findings may therefore be context-specific, which limits their generalisability. Existing studies have reported that Estonians have relatively high levels of enthusiasm for automated decision-making in general (more than Swedes and Germans; see Kaun et al., 2024), which they arguably associate with the identity building and branding of Estonia as a digital state since it regained its independence in 1991 (Homburg, 2025). Cross-country comparisons could address this limitation by testing whether the variables in this study hold explanatory power in other contexts and by providing insights into the administrative, economic, social, and political factors influencing chatbot acceptance and implementation across cultural settings (see Kaun et al., 2024).

Supplemental Material

Supplemental Material - When citizens meet the chatbot: Evidence from a survey vignette experiment in Estonia

Supplemental Material for When citizens meet the chatbot: Evidence from a survey vignette experiment in Estonia by Art Alishani, Vincent Homburg in Public Policy and Administration.

Footnotes

Acknowledgments

An earlier draft of this article was presented at the Skytte Institute of Political Studies colloquium at the University of Tartu, and we especially thank Mihkel Solvak for his valuable insights as a discussant. We also extend our gratitude to Colin, Gentrit, Logan, Robert, and Stefan for their support in testing the survey vignette experiments and providing constructive feedback. Finally, we thank Jurgen Willems for his insightful feedback during the development of this paper.

Ethical considerations

This study received approval from the Research Ethics Review Committee (RERC)/Internal Review Board (IRB) of Erasmus University Rotterdam in May 2024.

Consent to participate

Written informed consent to participate was obtained from all respondents.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by European Union’s Horizon 2020 research and innovation program under grant agreement no 857622 ERA Chair in E-Governance and Digital Public Services – ECePS. Views and opinions expressed are however those of the authors only and do not necessarily reflect those of the European Union or the European Commission. Neither the European Union nor the granting authority can be held responsible for them.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication.

Data Availability Statements

The dataset will be made available in the University of Tartu repository upon acceptance of the article.

References
