Abstract

Despite high expectations about the results of agencification and a legal obligation to evaluate executive agencies, ministers and MPs seem to have little interest in evaluating agencies’ results. Hood’s theory on blame avoidance is used to explain the lack of evaluation in the case of the Dutch ZBOs. Only one in seven ZBOs is evaluated as frequently as mandated. Findings show that ZBO evaluations are more an administrative than a political process. Reports do not offer hard evidence and are seldom used in parliamentary debates. There are no clear patterns as to which ZBOs are evaluated more, or less, often.

Introduction

Agencification came with high expectations. Compared to government bureaucracy, executive agencies were expected to operate in a more business-like manner, provide more value and higher-quality public services for less money, operate at a distance from politics and closer to citizens, ensure more transparency and accountability, and offer a more motivating work environment for employees (Overman, 2016). Little is known, however, about the actual results, mostly due to a general lack of formal evaluation studies on the results of these types of reforms (Pollitt and Dan, 2013).

A lack of formal evaluations of policy outcomes is not uncommon (McCubbins and Schwartz, 1984). Different explanations have been offered for the lack of interest among politicians (members of parliament and ministers alike) in evaluating policy implementation. McCubbins and Schwartz (1984) posit that politicians prefer incidental and informal evaluations (‘fire alarm oversight’) over formal and systematic evaluations (‘police patrol oversight’), as this is a more efficient strategy given opportunity costs, available technology and limited human cognitive abilities. Busuioc and Lodge (2017) point to reputation effects: evaluation is only undertaken when it contributes to the reputation of either the accountholder or the account-giver. Hood (2007, 2011) and Weaver (1986) identify the inclination of public officeholders to avoid blame as the main reason for not wanting to evaluate the outcomes of their decisions. Other authors show that even when evaluation information is available, many politicians are not interested in it or are cognitively unable to use it to properly evaluate their policies and their decisions regarding policy execution (Askim, 2007; Dubnick and Frederickson, 2010; Nielsen and Baekgaard, 2015; Schillemans and Busuioc, 2015; Ter Bogt, 2004).

This article will delve into the lack of evaluations in the case of Dutch ZBOs, a specific type of executive agency (explained in more detail below). Despite the high expectations and a legal obligation to evaluate these agencies every five years, only 14.4% of ZBOs are evaluated as often as they should be. The central research question is how this lack of evaluation, and the apparent disinterest of politicians in agency evaluation, can be explained.

Because one cannot study evaluations that did not happen, I will use the 102 evaluation reports published so far to examine which ZBOs have been evaluated, compared to the overall population. This will offer insight into which types of ZBOs have been evaluated, but also which have not. Furthermore, I will look into why, how and what is evaluated, and how evaluation reports are used in political decision-making, as this may also shed light on when politicians are interested in evaluations, and by implication when they are not.

The outline of this article is straightforward. First the theoretical framework is presented. I will use Hood’s theory as an integrative framework for a number of explanations and deduce six testable hypotheses. The method section is next, describing the data, mostly from public sources, and the methods used to test these hypotheses. Based on the results, conclusions are drawn and theoretical implications are discussed.

Blame games

Multiple authors have observed a lack of evaluation of policy implementation. McCubbins and Schwartz (1984) were among the first to discuss the lack of oversight by Congress. They concluded that this is not to be lamented, but rather understood as a very logical and efficient choice. Instead of spending a lot of time and energy on systematic forms of formal evaluation (‘police patrol’), politicians focus on incidental cases that attract their attention (‘fire alarms’) or are of direct concern to them and their voters. Following a comparable line of reasoning, Busuioc and Lodge (2017) posit that accountholders such as MPs and ministers will only call for information if it contributes to their reputation, or damages the reputation of opponents. Saliency and reputation are also important elements in Hood’s (2011) theory on blame avoidance. This theory departs from the observation that all public officeholders (politicians, managers, professionals and front-line bureaucrats) face risks of personal blame while carrying out their tasks. Examples are easy to find: ministers are held to account in parliament for certain incidents, and media report on public organizations’ managerial, financial or performance problems. According to Hood, evaluations are not undertaken because officeholders want to avoid blame for poor performance. Besides arguments of saliency and reputation, other authors point to the limited cognitive ability of politicians to process vast amounts of expert information (Askim, 2007; Dubnick and Frederickson, 2010; Nielsen and Baekgaard, 2015; Ter Bogt, 2004) or the limited time they can spend on such a task.

In sum, there are various explanations for a lack of evaluation of policy implementation and of the executive agencies in charge of policy implementation. In this article, I will put these explanations to the test in the case of Dutch ZBOs. To that end, I will make the explanations more concrete and pinpoint the exact conditions under which politicians will be more interested in evaluating ZBOs, and when they will not. As Hood’s theory offers a more comprehensive explanation, with multiple factors to explain a lack of interest in evaluation, I will use it as the overarching framework while integrating the other explanations mentioned above. This way I can pay attention to variables related to politicians and to ZBOs, as well as to characteristics of evaluations and the evaluation process.

Agency strategy

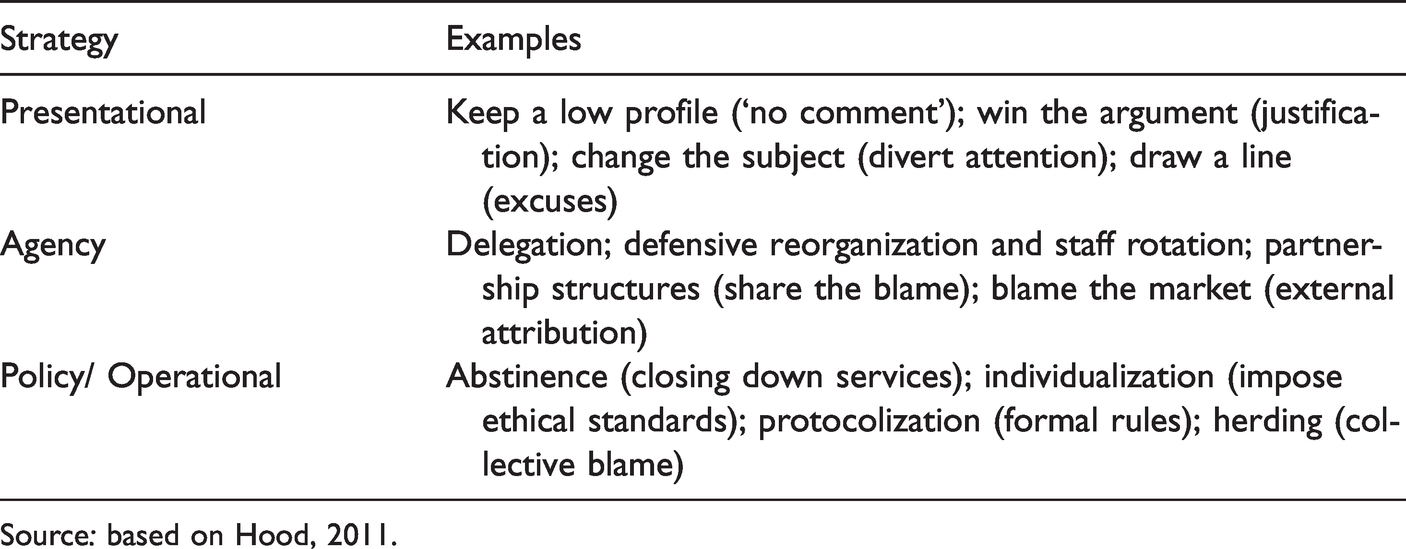

Hood (2007, 2011) posits that public officeholders can use three strategies to reduce or even avoid the risk of being blamed for certain policy outcomes and to prevent reputational damage (cf. Busuioc and Lodge, 2017): presentational, agency and policy strategies (cf. also Weaver, 1986).

Presentational strategies focus on limiting blame by making excuses or justifying decisions, to change the perception of what went wrong. Policy strategies, sometimes also referred to as operational strategies, aim to minimize risks by introducing new routines and protocols to limit discretion and non-compliance with rules and procedures during implementation. Agency strategies consist of delegating tasks, and hence redistributing blame, to others, for example to semi-autonomous agencies. See Table 1 for some examples of each of these strategies.

Table 1. Examples of blame avoidance strategies by public officeholders.

Source: based on Hood, 2011.

Agency strategies are directly linked to the object of study in this paper: ZBOs, a Dutch type of semi-autonomous agency in charge of policy implementation, regulation or public service delivery. (More explanation about ZBOs will be given in the method section below.) By delegating tasks to agencies, politicians can shift or avoid blame when things go wrong (Cohn, 1997; Hood, 2002). Politicians did not, however, mention this as an official motive (nor admit that it played a role) when agencies were created in large numbers in western countries from the 1980s onwards (Pollitt et al., 2004; Van Thiel, 2001). Instead, two other motives were given. First, it was expected, along the lines of New Public Management ideology, that agencies would operate in a more business-like and hence more efficient manner than the traditional government bureaucracy. Second, politicians can use agencies to assure voters that public tasks will be carried out by impartial and expert officeholders, forfeiting their own possibility to interfere with task execution for political or personal reasons. Moreover, delegating a task to an impartial and expert agency also ensures the continuity of task execution after elections and changes of government. Majone (2001) labels this the logic of political credibility. In light of Hood’s (2011) theory on blame, this motive could also be construed as an example of ‘credit claiming’: politicians can claim the success of expert, impartial and longstanding public services. However, Hood upholds a narrower definition of credit claiming; he argues that a decision to delegate will yield credit only if the agency is performing well. The decision to create an agency is not in itself sufficient to claim credit.

Given the expected benefits of agencification, the question can be raised why politicians would ever choose direct control over a public service rather than delegating all of them (cf. Hood, 2002). Hood (2011: 72–29) suggests that in some cases politicians may have a personal preference for direct control, while in other situations not taking control is simply not an option and is seen as failure, leading to blame. Blame cannot always be avoided (Novak, 2013; Weaver, 1986). However, there are different types of delegation with varying degrees of control and discretion, ranging from soft delegation with limited autonomy to hard delegation with high degrees of autonomy, which may allow for a combination of direct control and delegation, offering multiple opportunities to claim credit and avoid blame. In general, however, Hood’s theory predicts that tasks with higher risks of blame will be delegated to agencies more often than tasks with lower risks of blame, and that tasks with higher chances of claiming credit will be delegated to agencies less often than tasks with lower chances of credit.

If these predictions are correct, it is clear why politicians would not want to know how agencies are performing: the chances of credit claiming are small, while the risk of blame is high, which is why the agency was established in the first place. This effect should be stronger the more autonomy an agency has been granted (i.e. in the case of hard delegation). This leads to the following hypothesis:

Hypothesis 1: ZBOs with higher degrees of autonomy (hard delegation) are evaluated less often than ZBOs with lower degrees of autonomy (soft delegation).

whether or not privatization, agencification, and outsourcing of public service provisions really do cut costs, improve quality, or produce all the other effects that are so confidently and earnestly claimed for them (on the basis of so little hard evidence), what those arrangements can offer is the apparent prospect of shifting blame away from politicians and central bureaucrats to private or independent operators. (Hood, 2011: 68, original emphasis)

Hypothesis 2: ZBO evaluations will use more qualitative (soft) than quantitative (hard) information.

Hypothesis 3: ZBO evaluations are carried out more often at the request of opposition parties (or parliament) than of ruling parties (represented by the minister).

Hypothesis 4a: ZBOs in charge of tasks salient to voters are evaluated more often than ZBOs with non-salient tasks.

Hypothesis 4b: ZBOs in charge of tasks salient to voters are evaluated less often than ZBOs with non-salient tasks.

Hypothesis 5: ‘Younger’ ZBOs are evaluated more often than ‘older’ ones.

Similarly, it is relevant to ask what happens with the results of an evaluation report. Above it was argued that reports will be of use to politicians only if they can claim credit, but credit claiming is very difficult in the case of policy implementation. This would suggest that reports are not often used. Two other considerations point in the same direction. First, an evaluation may be used only to divert attention (a presentational strategy; Hood, 2011); second, it may be merely part of an administrative process (Busuioc and Lodge, 2017). In both instances I would not expect to see much use of a report by politicians:

Hypothesis 6: ZBO evaluation reports are not used by politicians.

Data and method

Dutch ZBOs (acronym of ‘

In 2007, the Charter Law on ZBOs became effective after a ten-year-long debate in parliament. Article 39 of the Charter Law stipulates that every ZBO has to be evaluated every five years, to assess its effectiveness and efficiency. The minister of the parent department of a ZBO has to send the report of this evaluation to parliament, which makes it a public document. Before the Charter Law came into effect in 2007, official guidelines on ZBOs had been in place (from 1996 onwards) that also included a formal obligation to evaluate each ZBO every five years. No format is prescribed for how to carry out this evaluation, there are no further instructions besides the two criteria (efficiency and effectiveness), and no authority is in charge of checking whether parent ministries comply with this article of the Charter Law. More recently, some (though not all) reports have been included in the official online ZBO register.

In this study, evaluation reports were included that were published up to and including 2015. Most reports were found in the official government document repositories (www.rijksoverheid.nl); others were found online, for example on ZBO websites. Starting from the list of ZBOs in 2015, we tracked for each ZBO which reports had been published, identified as a formal evaluation, and made public. Reports up to 2011 were collected as part of a parliamentary inquiry for the Dutch Senate (POC, 2012) by an information specialist from the Dutch parliament. Older reports were digitized, as they were only available on paper (in the parliamentary library). Reports from the period 2012–2015 were collected as part of five master's student projects (supervised by the author); the students first compiled a list of ZBOs and then searched for reports in the online government repository and, in some cases, on the websites of the ZBOs themselves for easier access. Appendix 1 lists all reports that were included in the analysis (these are available from the author upon request).

To enable the comparison with population data, information such as the task and date of establishment of ZBOs was taken from earlier surveys by the author (Van Thiel and Yesilkagit, 2014), although data from years before 2008 were not always available or complete (in which case they were left missing). All data were analysed in SPSS 25 using simple statistical techniques (e.g. descriptives and cross tabulations), because of the nominal/ordinal nature of most variables.

The analysis of the use of reports in parliamentary debate was carried out as part of the POC study, and its findings have only been summarized and translated for this article. Eighteen reports on 10 ZBOs were selected, varying in the type of report (by a consultancy or by the ministry) and across policy sectors. The minutes of parliamentary debates in which these reports were put on the agenda were analysed (content analysis), searching for references to the evaluation report. When such references were found, the text was further analysed to determine what was being said and by whom (POC, 2012). In addition, four interviews with parliamentary clerks were held to determine how evaluation reports are dealt with (see POC (2012) for more details).

Results

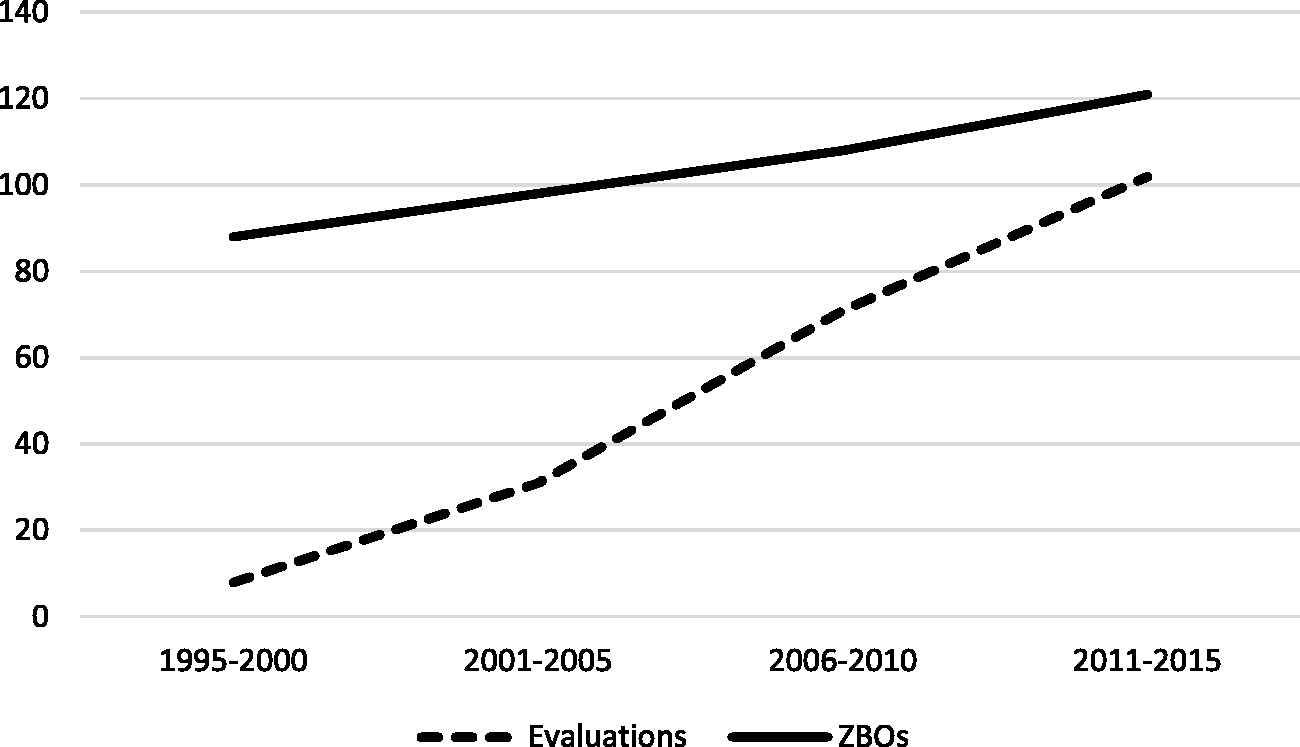

Based on the guidelines and the Charter Law, it is legally obligatory to evaluate each ZBO every five years. Figure 1 shows the increase, in five-year intervals, in the total number of reports (N = 102) published up to and including 2015. The first reports we found date back to the early 1990s, even before evaluation became legally obligatory. The number has since increased strongly. As most reports (62.7%) were published in or after 2007, we can assume that the Charter Law has spurred this growth. However, although the number of ZBOs that have been evaluated has increased, most of them have been evaluated only once (31, 53.4%) rather than every five years as legally mandated. The 102 evaluation reports cover 58 (out of 118) individual ZBOs; of those, 15 (25.9%) have been evaluated twice, 9 (15.5%) three times, and 3 (5.2%) four or five times. Non-compliance with the Charter Law is therefore substantial: 51% of the ZBOs have never been evaluated, and only 17 ZBOs (14.4% of all 118) have been evaluated every five years or even more frequently.
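As a check on the arithmetic in this paragraph, the following Python sketch recomputes the shares from the counts given in the text. It assumes nothing beyond those counts; the variable names are illustrative only. It makes explicit that the 14.4% compliance rate is a share of all 118 ZBOs (17 out of the 58 evaluated ZBOs would be 29.3%), and that the grouped counts are consistent with a total of 102 reports.

```python
# Counts as reported in the text (population of 118 ZBOs in 2015).
TOTAL_ZBOS = 118
COMPLIANT_ZBOS = 17  # evaluated every five years or more often

# Number of ZBOs by how many evaluation reports they received.
ONCE, TWICE, THRICE = 31, 15, 9
FOUR_OR_FIVE = 3  # the text groups "four or five times" together


def pct(part: int, whole: int) -> float:
    """Percentage rounded to one decimal, as reported in the article."""
    return round(100 * part / whole, 1)


evaluated = ONCE + TWICE + THRICE + FOUR_OR_FIVE
never_evaluated = TOTAL_ZBOS - evaluated

# Because of the grouped bucket, the report total can only be bounded.
min_reports = ONCE * 1 + TWICE * 2 + THRICE * 3 + FOUR_OR_FIVE * 4
max_reports = ONCE * 1 + TWICE * 2 + THRICE * 3 + FOUR_OR_FIVE * 5

print("evaluated at least once:", evaluated)
print("never evaluated (% of all):", pct(never_evaluated, TOTAL_ZBOS))
print("compliant (% of all):", pct(COMPLIANT_ZBOS, TOTAL_ZBOS))
print("compliant (% of evaluated):", pct(COMPLIANT_ZBOS, evaluated))
print("report count bounds:", min_reports, max_reports)
```

The bounds (100 to 103 reports) bracket the reported N = 102, so the distribution over ZBOs is internally consistent.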

Figure 1. Number of ZBOs and ZBO-evaluation reports 1995–2015.

Which ZBOs are evaluated?

When we compare the ZBOs that have been evaluated to the ones that have not, we find some noteworthy differences. Public law based ZBOs are evaluated more frequently than private law based ones, even when taking into account that public law based ZBOs outnumber private law based ones: 69% of the evaluated ZBOs have a public law basis (against 56.3% in the population), and 23.7% of the evaluated ZBOs have a private law basis (against 43.7% in the population). If we assume that public law based ZBOs are more similar to what Hood would call ‘soft’ delegation and private law based ZBOs are more exemplary of ‘hard’ delegation, this finding contradicts the theoretical expectation (H1) formulated above.
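The comparison above can be summarized with a simple representation ratio: the share of a group among evaluated ZBOs divided by its share in the population. This ratio is an illustrative measure introduced here, not one used in the article, and the function name below is hypothetical. The Python sketch computes it from the reported percentages only.

```python
# Shares (%) reported in the text: evaluated ZBOs vs. the 2015 population.
SHARES = {
    "public law": (69.0, 56.3),  # (evaluated, population)
    "private law": (23.7, 43.7),
}


def representation_ratio(evaluated_pct: float, population_pct: float) -> float:
    """Share among evaluated ZBOs divided by share in the population.

    A value above 1 means the group is over-represented among evaluated
    ZBOs; a value below 1 means it is under-represented.
    """
    return round(evaluated_pct / population_pct, 2)


for basis, (evaluated_pct, population_pct) in SHARES.items():
    print(basis, representation_ratio(evaluated_pct, population_pct))
```

This gives roughly 1.23 for public law based ZBOs and 0.54 for private law based ones. Because the evaluated shares do not add up to 100% (missing data), the ratios should be read as indicative only.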

Task effects

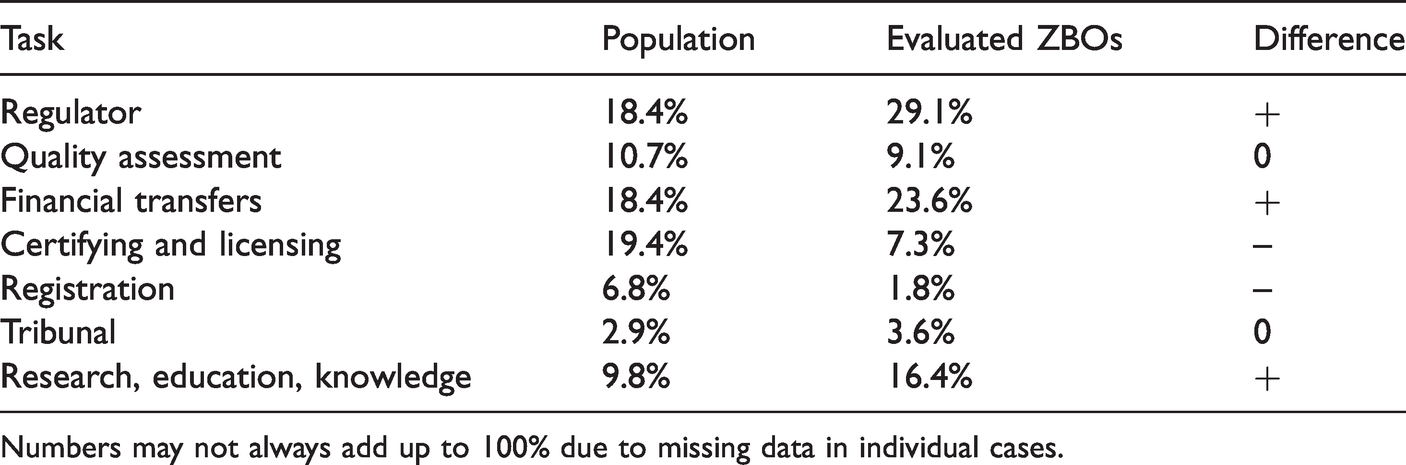

There are some task differences. ZBOs in charge of financial transfers (e.g. paying benefits), regulation and research/information related tasks have been evaluated more often than would be expected based on the proportion of ZBOs with such tasks in the population, see Table 2. ZBOs in charge of certification, licensing and quality assessment (e.g. as part of accreditations) are evaluated less often.

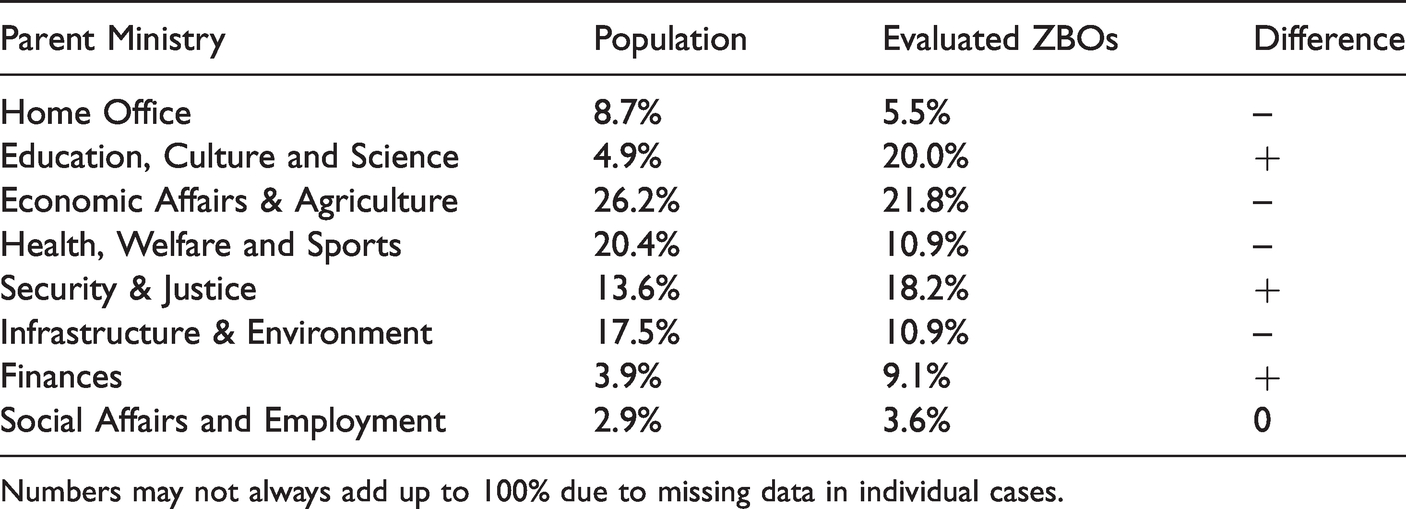

Table 3. Percentage of ZBOs being evaluated, per parent ministry.

Numbers may not always add up to 100% due to missing data in individual cases.

It was expected (H4a) that tasks that are more salient to voters would be evaluated more often. It is hard to say which, if any, of these tasks is more salient than the others, but it could be argued that financial transfers most directly affect voters, which would make them highly salient. Moreover, their output might be easier to measure and hence more suitable for evaluation. ZBOs with regulatory tasks are also evaluated more often than would be expected based on their proportion in the population. Regulatory tasks are often put at arm’s length to ensure impartiality, in line with Majone’s (2001) argument about political credibility. The higher frequency of evaluation could then be seen as part of an agency strategy (Hood, 2011) and of credit claiming. Finally, ZBOs in charge of research and knowledge-related tasks could be more open to evaluation as that fits with their own purpose, but that is pure speculation.

Certification and quality assessment could be considered a type of task for which specific expertise is crucial, creating higher risks of blame (or lower chances of credit claiming) and hence less interest among politicians in calling for an evaluation (H4b). Again, that is speculation, and it raises the question whether these tasks have an inherent quality that makes them more suitable for agencification than other tasks (cf. Pollitt et al., 2004, on the task-specific path dependency theory). Agencies in general, as well as ZBOs more specifically, have been charged with a large array of tasks, of which certification and quality assessment are important categories but not the most frequent ones (Van Thiel and Yesilkagit, 2014). An alternative explanation for the lack of attention to ZBOs with these tasks could be that their output is more difficult to measure, but that does not seem plausible: certificates and accreditations are quite tangible results. More research is therefore necessary to explain these findings better.

Sector differences might shed some light on this matter. The two parent ministries with the largest numbers of ZBOs (Economics and Health) both have proportionally fewer evaluations, see Table 3. This could in part be attributed to the fact that many of their ZBOs are private law based (see above on H1). Other ministries, like Education, Justice and Finance, have proportionally more ZBO evaluations than expected, which could be linked to the tasks of the majority of ZBOs in their policy sectors: education ZBOs do research, and financial ZBOs are often regulators. However, there is no clear explanation for ZBOs in the field of Justice, which will require more in-depth case study research.

Table 2. Percentage of ZBOs being evaluated, per task.

Numbers may not always add up to 100% due to missing data in individual cases.

All in all, task may matter, but it is not clear how. A case-by-case analysis would be necessary to gain more clarity on H4a and H4b.

Age effects

There is no statistically significant correlation between age (i.e. the year of a ZBO’s establishment) and being evaluated, which does not support the theoretical expectation that younger ZBOs are evaluated more frequently (H5).

Motives for evaluation

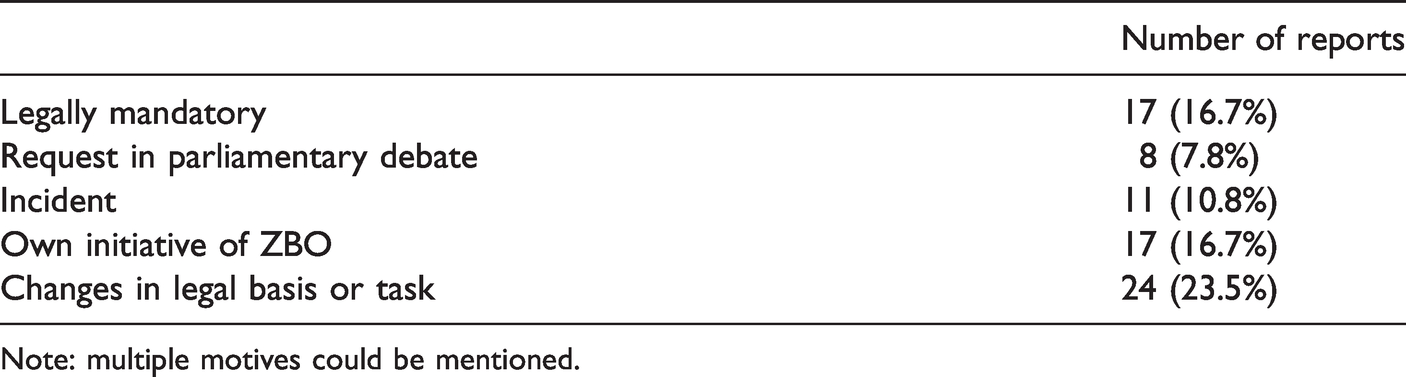

Table 4 shows the motives mentioned in the reports for carrying out the evaluation. Although 80% of the ZBOs that have been evaluated fall under the Charter Law, the legal obligation to evaluate is mentioned in only 17 cases. This makes sense given that the Charter Law became effective in 2007 and some reports predate it, but even among the 64 reports published since 2007 this motive is mentioned in only a minority of cases (15 out of 64, 23.4%). Changes in the legal basis or task of a ZBO are the most frequent motive (23.5%), while incidents (10.8%) and parliamentary requests (7.8%) are mentioned much less often, contrary to what was expected (H3). ZBOs also take the initiative to be evaluated themselves, sometimes as part of a peer review procedure. This fits with the idea that account-givers will actively seek accountability to improve their reputation (Busuioc and Lodge, 2017).

Table 4. Motives for carrying out an evaluation (N = 102).

Note: multiple motives could be mentioned.

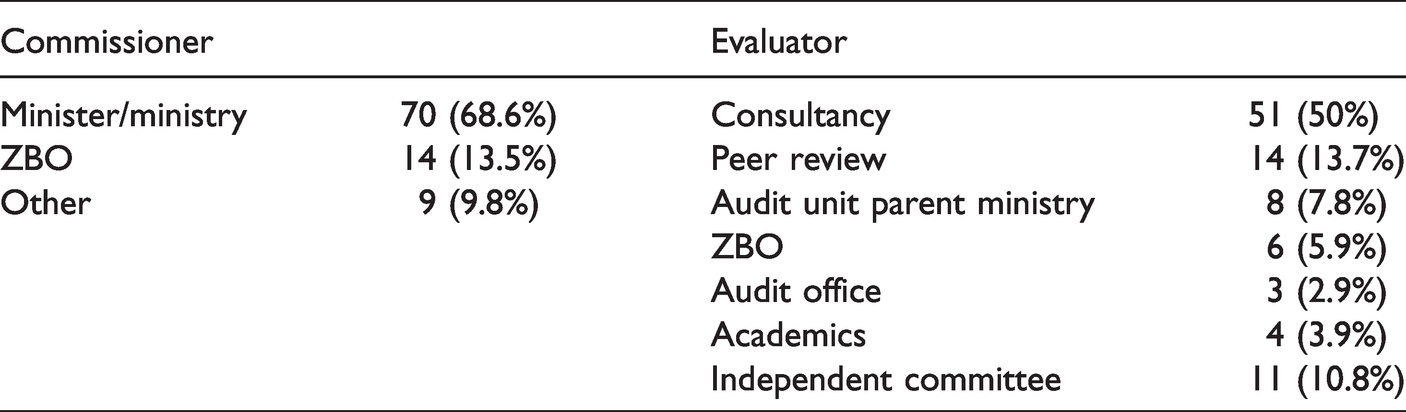

Commissioners and evaluators

Table 5 shows who commissioned the evaluation and who carried it out. In most cases (68.6%) the ministry, i.e. its civil servants, is the commissioner, and half of the evaluations (50%) are carried out by a consultancy firm. This pattern holds regardless of the motive mentioned for an evaluation. In the case of a peer review evaluation, the ZBO itself is usually the commissioner. ‘Other’ commissioners are, for example, an audit office or the board of supervisors of the ZBO. Independent committees that perform an evaluation have very mixed compositions; they may, for example, include civil servants (from the parent ministry or the Senior Civil Service), academic experts, or former/retired politicians. All in all, the findings in Tables 4 and 5 suggest that evaluating ZBOs is more an administrative process than a political one (Busuioc and Lodge, 2017), refuting H3. ZBO evaluations do not have a high priority for politicians.

Table 5. Who has commissioned and who has carried out a ZBO-evaluation? (N = 102).

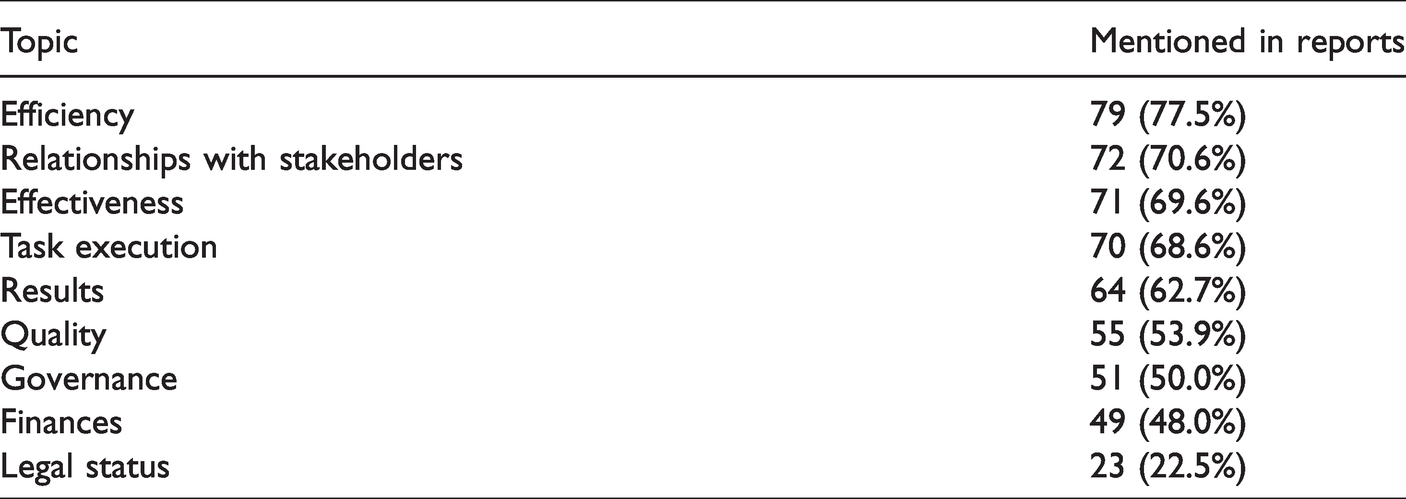

Topics of evaluation

The legal obligation to evaluate ZBOs specifies that the evaluation should focus on efficiency and effectiveness over the past five years. Table 6 shows which topics are covered in the reports (note that multiple topics can be covered in one report).

Table 6. Topics mentioned most frequently in ZBO evaluations (N = 102).

As prescribed in the Charter Law, efficiency and effectiveness are the most important topics (although they are not covered in all reports). However, the relationship with stakeholders scores equally high. To me, as a researcher who has studied ZBOs for over 20 years, this is not surprising, as the relationship between ZBOs and their parent ministries is complicated and often dysfunctional (Van Thiel, 2019). However, in most reports relationships with stakeholders are mentioned neither in the central question nor as a motive for the evaluation; in my experience, the topic comes up during the evaluation process as something that deserves attention and is therefore reported on, mostly in a negative tone. The attention paid to governance in half of the reports is connected to this.

Several other performance-related topics, such as task execution, results and quality, are reported on, fitting with the focus on efficiency and effectiveness (although these topics are not mentioned in the Charter Law). And even though the legal status is mentioned in the explanatory notes of the Charter Law as a topic for evaluation, it is discussed in only a minority (22.5%) of the reports. From the perspective of blame avoidance (Hood, 2011), this could be interpreted as fitting with the agency strategy of putting ZBOs at arm’s length and leaving them there.

Methodology

The lower coverage of financial matters (see above) fits with the qualitative nature of most reports. Most evaluators use a combination of (a) interviews (81.4%) with respondents from the ZBO, its parent ministry and stakeholders, and (b) content analysis of documents (85.3%), usually in a qualitative way. About 40% of the reports display figures, for example on personnel, budgets and results, but with limited analysis of them. A qualitative approach can be explained by problems in measuring policy results, but it could also be interpreted as avoidance of ‘hard’ results in order to avoid blame (in line with H2).

Use of evaluation reports

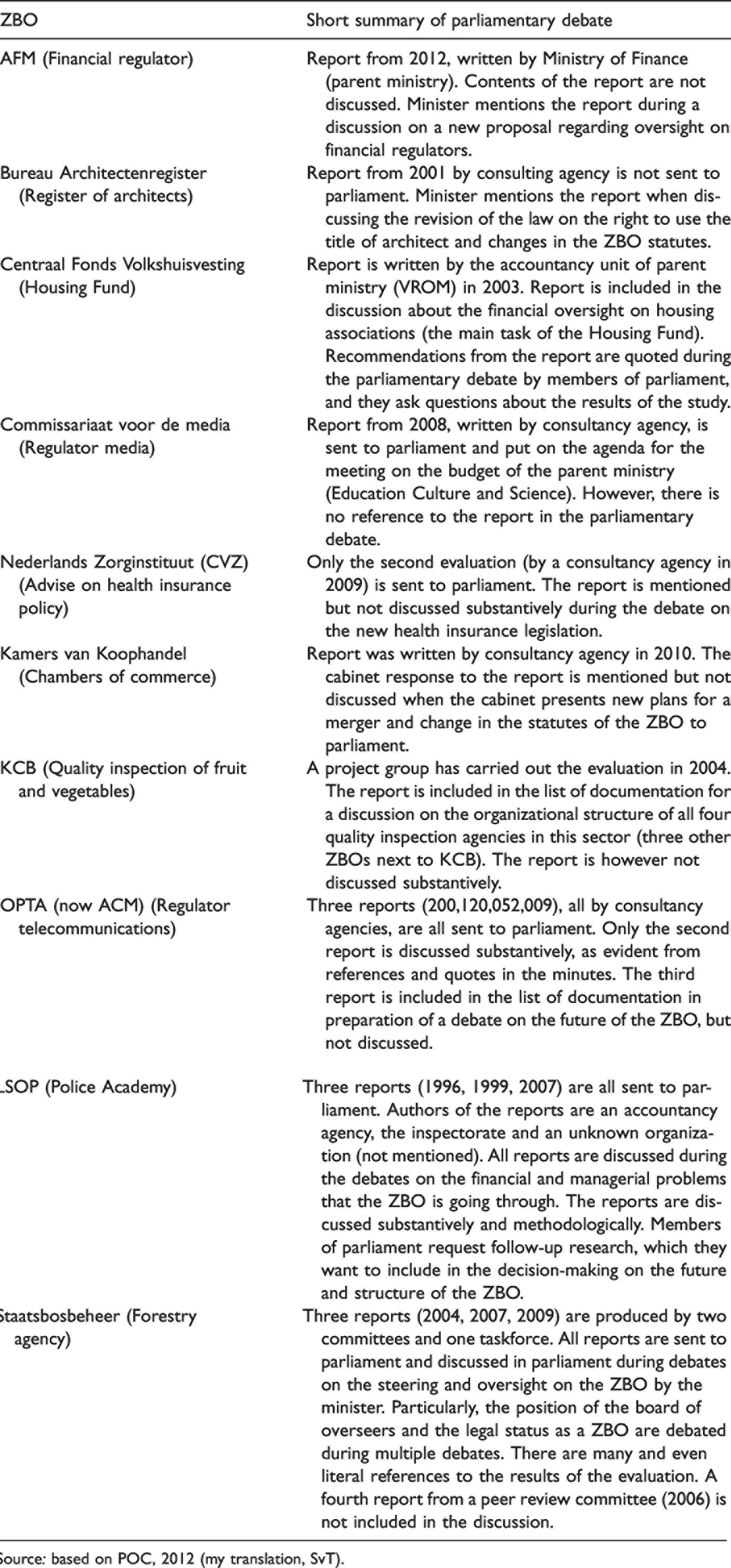

Finally, we turn to the use that politicians make, or do not make, of the evaluation reports. Most reports (73, 71.6%) are sent to parliament, with an accompanying letter in which the parent minister expresses agreement (62.2%) or disagreement with the conclusions. Most reports are however not discussed in parliament at all. Table 7 offers a few examples, taken from the POC (2012) study (note that this includes reports until 2012 only). These examples show how 18 reports on 10 selected ZBOs were dealt with in the parliamentary debates.

Table 7. Discussion of ten ZBO evaluation reports in Dutch parliament.

Source: based on POC, 2012 (my translation, SvT).

The analysis shows that reports are not always sent to parliament, and even when they are, most reports are not discussed in a substantive way. Substantive discussion occurs only in a minority of cases (8 out of the 18 reports in Table 7) and for an even smaller number of ZBOs (4 out of 10 ZBOs, marked in grey). Reports are generally part of a long list of documentation that members of parliament are given to read in preparation for a debate on new plans from the cabinet. This was confirmed by the interviews with four clerks of parliamentary committees (POC, 2012). The initiative to use evaluation reports thus lies in most cases with the parent minister, although this use should not be exaggerated, as most reports are only mentioned in passing and not discussed substantively.

Moreover, substantive discussion of reports does not imply that the evaluation studies were carried out for the topic of the debate in which the discussion takes place; reports are usually included in discussions of new legislative proposals (made by the minister) or of how to deal with certain problems or incidents (as in the cases of the police academy and the forestry agency). The reports have often been published before these debates occurred, before the proposals were developed, or before incidents arose. Most of the reports in Table 7 were commissioned by the parent ministry and carried out by a consulting agency, as in the full sample. Only in the case of the police academy did political parties explicitly request further investigation, although not through an official evaluation.

Based on these findings we can conclude that ZBOs are not only evaluated less frequently than they legally should be, but also that when they have been evaluated the reports are seldom used for further political decision-making and political debate (confirming H6).

Discussion and conclusion

Despite the high expectations underlying the creation of ZBOs (Van Thiel, 2001) and the legal obligation to evaluate their efficiency and effectiveness every five years, fewer than half of the ZBOs have been evaluated even once, and only one in seven is evaluated as frequently as legally required. This article set out to find an explanation for this lack of evaluation. By looking into the reports that were published, we can also establish in which cases evaluations have not been carried out. The findings show that ZBO evaluations are mostly an administrative process, commissioned by civil servants from the parent ministry and carried out by independent consultants. Political requests for evaluations are rare (H3-), and reports are generally not used in political debate (H6+). Most reports do not present hard evidence (H2+), which probably makes it difficult to pass hard judgements. There are no clear patterns as to which types of ZBOs are evaluated more or less often. Most hypotheses were not corroborated: ‘younger’ ZBOs are not evaluated more often than older ones (H5-), task differences are found but are not very clear (H4?), and private law based ZBOs are not evaluated more often than public law based ones (H1-).

The lack of evaluations and the low interest of politicians were expected, based on, among others, the theories on the lack of oversight by McCubbins and Schwartz (1984) and the inclination of politicians to avoid blame (Hood, 2011). However, it was also expected that under certain conditions politicians would be interested, for example in the case of incidents (‘fire alarms’ in the terminology of McCubbins and Schwartz, 1984), hard delegation (Hood, 2011) or tasks that are very salient to voters (McCubbins and Schwartz, 1984), but this was not found to be true. This shows that the lack of evaluation of executive agencies is very persistent and perhaps even generic, despite the legal obligations. This is in line with the agency strategy as defined by Hood (2007). The lack of reports is better explained by the desire of politicians to avoid blame than by the fire alarm theory: incidents only seldom lead to evaluations being undertaken, and not for a lack of incidents, as there were multiple incidents during the research period. However, when an incident occurs, existing reports are included in the parliamentary debate (see the example of the Police Academy in Table 7), whereas most reports otherwise remain undiscussed.

The implementation of the Charter Law did lead to an increase in the number of evaluation studies (see Figure 1). Reports are also made public more often, but they are not used very often in political debates. This lack of interest is in part due to the administrative, procedural character of the evaluations (as predicted by Busuioc and Lodge, 2017), but it could also be attributed to the methodological quality of the reports. The predominantly qualitative research makes it difficult to draw ‘hard’ conclusions, although this could also be done on purpose, to avoid blame or reputational damage (or to scapegoat the researchers). The latter interpretation would fit with the negativity bias that Hood (2011) mentions. But we should not forget that measuring policy outcomes is a difficult exercise, and the chances of credit claiming are small (Hood, 2007, 2011), which could be seen as a more neutral explanation. The lack of a format or clear instructions on how to carry out a ZBO evaluation does not help to overcome such measurement problems, which again could be intentional. Similarly, the absence of a regulatory authority to oversee whether ZBO evaluations are carried out makes it easy not to comply with the legal obligation. In the United Kingdom, by contrast, a dedicated unit has been established within the Cabinet Office to oversee policies regarding Non-Departmental Public Bodies (comparable to ZBOs) and the triennial reviews, which are carried out quite diligently.

All in all, ZBO evaluations have a low priority for Dutch politicians (Busuioc and Lodge, 2017; Dudley, 1994). The ZBOs themselves attach more importance to evaluating their performance, as reflected in the establishment of a peer review procedure. This could be interpreted as an attempt to improve the ZBOs’ reputation (Busuioc and Lodge, 2017; Schillemans and Busuioc, 2015) or to ‘spin’ the debate (a presentational strategy; Hood, 2011). Reports that result from peer review are, however, not presented to parliament by the parent minister as a formal evaluation; they are made publicly available by the ZBOs and have therefore only occasionally reached parliament (POC, 2012).

Finally, this study has a number of limitations that need to be mentioned. First, the findings are restricted to the Dutch case of ZBOs. Whether they also apply to other countries can only be established by replicating this study. The Dutch politico-administrative context is characterized by consensus, with political parties cooperating in changing coalitions over time. Not only would this reduce the motivation to seek out political conflict, for example over incidents related to ZBOs and policy outcomes (cf. Novak, 2013), it also creates a time lag in instigating or reporting ZBO evaluations: the average cabinet lasts four years, while ZBO evaluations cover a period of five years. Replication of this research in countries with a majoritarian system could lead to different results (McCubbins and Schwartz, 1984; Weaver, 1986). Second, the data did not allow for advanced statistical analysis. A more in-depth interpretation of the data, through qualitative research such as interviews and case studies, could give more insight. For example, additional data such as media reports could be used to determine whether incidents involving a particular ZBO have played a role in setting up an evaluation or in its report.

Supplemental Material

sj-pdf-1-ppa-10.1177_09520767211022490 - Supplemental material for Blame avoidance, scapegoats and spin: Why Dutch politicians won’t evaluate ZBO-outcomes

Supplemental material, sj-pdf-1-ppa-10.1177_09520767211022490 for Blame avoidance, scapegoats and spin: Why Dutch politicians won’t evaluate ZBO-outcomes by Sandra van Thiel in Public Policy and Administration

Footnotes

Acknowledgement

The author would like to thank Joep van der Spoel, Peter van Goch, Martijn Dresmé, Stefan Soetekouw, Niels van Beurden and Harm Bassa for their assistance in the collection and coding of the data.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Notes

References
