Abstract

In response to the need to strengthen university–industry collaboration (UIC), particularly with underrepresented micro and small enterprises (MSEs), this paper explores critical factors in university–MSE collaborations from both actors’ perspectives and assigns them to specific UIC phases. The study draws on a 3-year collaborative project with rich data from interviews and participant observation. Four phases—initiation, formation, execution, and evaluation—were empirically identified and delineated, contributing to the conceptualization of phases in the literature. Critical factors, factors facilitating or hindering UIC success, were assigned to the phases: for example, collaborative experience (initiation), establishing detailed work plans (formation), competent project management (execution), and what and when to evaluate (evaluation). MSE-specific factors include challenges in establishing economic reporting structures and reliance on peer-to-peer support to manage them. Presenting critical factors chronologically across UIC phases enhances practical understanding and may encourage university and MSE managers to engage in further collaborations.

Introduction

University–industry collaborations (UICs) stimulate innovation, promote knowledge acquisition, organizational learning, and problem-solving, and support symbiotic relationships between university research groups and manufacturing entities (Bui and Takuro, 2024; Fernandes et al., 2023). Universities contribute expertise across levels and provide access to advanced testing laboratories, resources less accessible to companies. UIC also allows companies to enhance their brand by partnering with successful academic institutions (Ankrah and AL-Tabbaa, 2015). The absence of internal research and development functions motivates companies to collaborate with universities (Ankrah and AL-Tabbaa, 2015) to access new knowledge and technologies. Simultaneously, university research groups face challenges due to limited access to manufacturing infrastructure, making industry collaboration crucial. UIC further creates opportunities for joint funding and supports regional growth, profitability, and innovation capability (Mura et al., 2025; Sjöö and Hellström, 2019).

Establishing effective interorganizational relationships is challenging, particularly when organizations belong to different sectors, as in UICs. Despite their recognized importance, few studies have explored the dynamics of collaborations between universities and small firms (Goel et al., 2017; Mura et al., 2025). Badillo et al. (2017) note that small firms seldom collaborate with universities, underutilizing them as sources of innovation. Rybnicek and Königsgruber (2019) highlight company size as a moderating variable for success factors in UIC, emphasizing the need to focus on small companies. While there are several studies on university collaboration with small and medium-sized enterprises (SMEs) (e.g., Johnston, 2022), SMEs are heterogeneous (up to 250 employees), which introduces nuances in their engagement with universities (Goel et al., 2017). Micro (<10 employees) and small (<50 employees) enterprises (MSEs), as defined by the European Commission (2020), are particularly unlikely to engage with universities (Silva Néto and Teixeira, 2014). Policymakers increasingly focus on SMEs, including MSEs, due to their role in employment and national income (Silva Néto and Teixeira, 2014). Accordingly, this study focuses on the under-researched MSEs.

To support successful UIC, it is essential to understand the influencing factors (Johnston, 2022; Mura et al., 2025) that create the necessary prerequisites for collaboration. Accordingly, this study examines both factors that facilitate UIC success (e.g., drivers, enablers, critical success factors) and those that hinder it (e.g., barriers, challenges, pitfalls), hereafter referred to collectively as critical factors. Critical factors can be viewed from the perspectives of different actors. Most studies adopt either the university perspective (e.g., Tseng et al., 2020) or that of SMEs (Johnston, 2022). Only a few, such as Mura et al. (2025) and Ankrah and AL-Tabbaa (2015), address both universities and firms more broadly, without specifically accounting for MSEs. Consequently, there is still limited empirical understanding of UICs from the perspectives of both universities and MSEs, particularly regarding which factors are critical for MSEs. Identifying these factors would provide actionable insights for universities and MSEs in designing and managing effective collaborations. UICs also evolve over time, progressing through phases such as formation and operation (Ankrah and AL-Tabbaa, 2015). However, prior research rarely assigns critical factors to these phases; exceptions include Barnes et al. (2006), who recognized this difficulty, and Rybnicek and Königsgruber (2019), who emphasized the need for future studies to help actors focus on relevant factors during different UIC phases. This study addresses these gaps and advances knowledge, particularly for MSEs, regarding how successful UIC can be achieved, thereby enabling further collaborations.

The purpose of this paper is to explore critical factors in university–MSE collaboration from the perspectives of both actors and to assign these factors to specific UIC phases.

Frame of reference

Critical factors for UIC

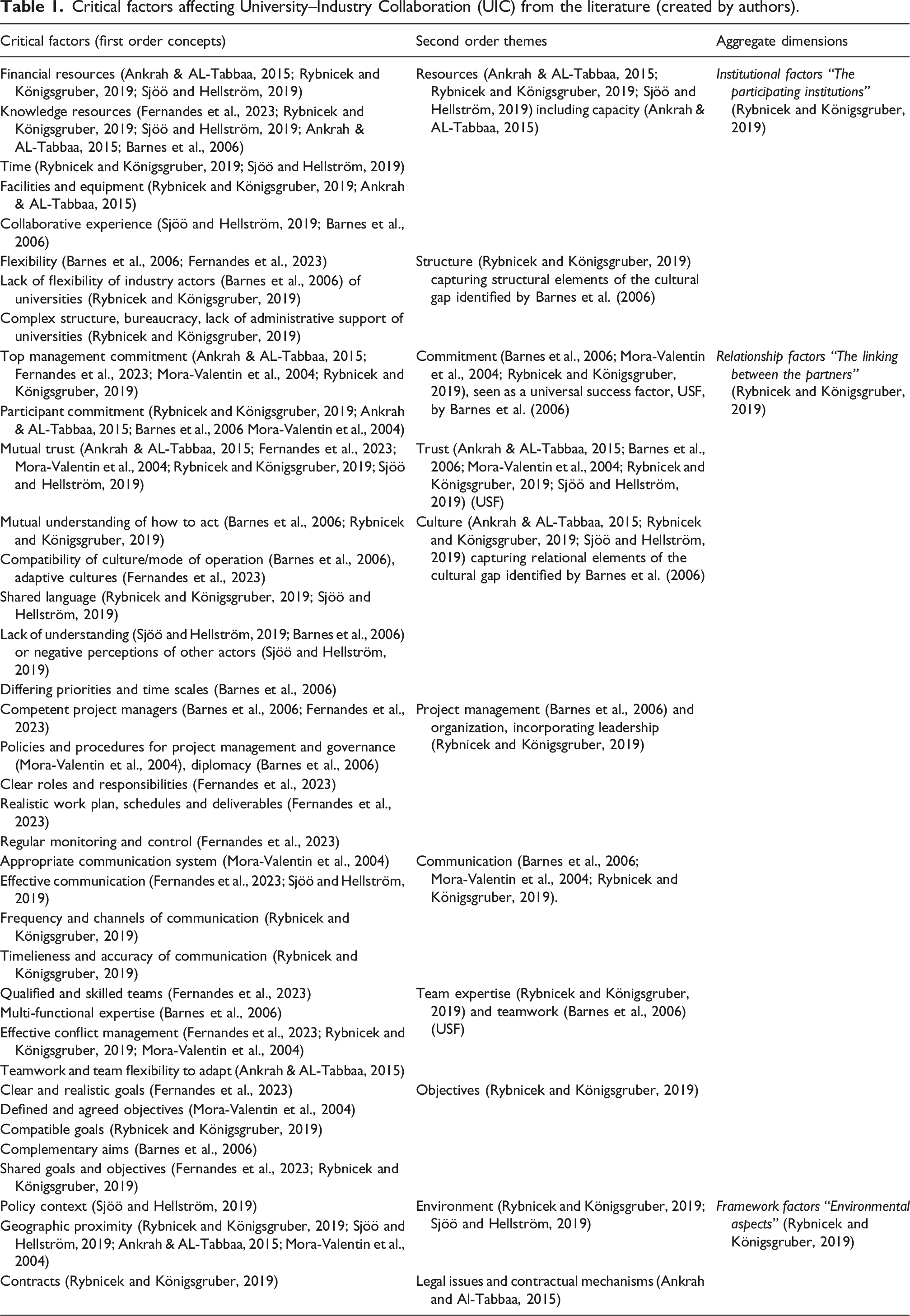

Table 1. Critical factors affecting university–industry collaboration (UIC) from the literature (created by authors).

The aggregate dimensions broadly align with the categories proposed by Rybnicek and Königsgruber (2019). Due to the conceptual ambiguity of how objectives—such as shared goals or complementary aims—differ from relationship factors, we have reclassified objectives (originally placed under “output factors”) within the aggregate dimension of relationship factors. The three aggregate dimensions are therefore: (1) institutional factors, reflecting the characteristics of the participating actors; (2) relationship factors, representing the interactional dynamics between actors; and (3) framework factors, covering external contextual conditions such as funding requirements, contractual arrangements, and policy environment influencing collaboration. Together, these dimensions capture critical factors for UIC.

Institutional factors

The first theme is resources, relating to institutional factors or organizational characteristics. Funding, whether internal or external, is crucial for initiating and advancing UICs and applies to both universities and small companies (Löfqvist, 2017; Sjöö and Hellström, 2019; Tseng et al., 2020). Scarce capital is often a constraint for SMEs (Löfqvist, 2017), and reallocating resources is challenging, since production and sales must be maintained to ensure stable cash flow (Rybnicek and Königsgruber, 2019). Knowledge resources include, for example, scientific and technological knowledge. SMEs often face challenges in collaborating with universities for innovation (Rybnicek and Königsgruber, 2019) due to limited highly skilled staff and generally lower education levels (Silva Néto and Teixeira, 2014), although exceptions exist among university spin-offs and high-tech companies. Universities differ in knowledge resources, expertise, and industry connections (Gibson et al., 2016). Time is a crucial resource in UICs, and financial resources are converted into dedicated time (Sjöö and Hellström, 2019). Facilities and equipment include both physical assets and access to testing facilities, often integral to UIC (Ankrah and AL-Tabbaa, 2015; Mura et al., 2025). SME capacity constraints can be a limiting factor, making awareness of them essential. While universities may offer advanced testing laboratories (Ankrah and AL-Tabbaa, 2015), companies can provide manufacturing infrastructure. Collaborative experience is another key resource; a track record of collaboration is a strong enabling factor for collaboration (Mura et al., 2025; Sjöö and Hellström, 2019), supporting trust between partners (Barnes et al., 2006). Extensive professional networks enhance faculty members’ ability to form partnerships, secure research funding, and engage with industry experts (Bui and Takuro, 2024).

Structure

Flexibility is a critical factor in UICs (Barnes et al., 2006; Fernandes et al., 2023), with Barnes et al. (2006) conceptualizing a lack of flexibility among industry actors as part of the broader cultural gap between industry and academia. In our structure (Table 1), however, we differentiate the elements discussed under the cultural gap label and map them onto our aggregate dimensions. Specifically, aspects related to structural rigidity are captured under institutional factors (Structure), while cultural and value-based differences are reflected under relationship factors (Culture). For small companies, organizational structures are often inherently flexible, enabling swift decision-making and effective coordination and control (Löfqvist, 2017). In contrast, universities’ complex structures can hinder collaboration through excessive bureaucracy, legal frameworks, unclear rules (Gibson et al., 2016; Sjöö and Hellström, 2019), and a lack of administrative support (Rybnicek and Königsgruber, 2019). A university’s attractiveness as a co-innovation partner depends partly on whether it is more research- or teaching-oriented (Sjöö and Hellström, 2019). Reputable and large universities, rather than geographically close ones, are more likely to collaborate with companies (Ankrah and AL-Tabbaa, 2015).

Relationship factors

Relationship factors or relational characteristics include “universal success factors” as identified by Barnes et al. (2006), such as trust and commitment. Commitment from both top management and participants is critical for UIC success (Ankrah and AL-Tabbaa, 2015; Rybnicek and Königsgruber, 2019) across all phases of collaboration (Barnes et al., 2006). The extent of a partner’s commitment reflects the resources invested and the motivation and engagement of participating staff (Mora-Valentin et al., 2004). Mutual trust underpins collaboration and innovation. Trust involves both confidence in the partner’s capabilities and the belief that they will act as promised (Dagbro, 2016). Further, culture and cultural similarities can support mutual understanding of how to act in certain situations (Rybnicek and Königsgruber, 2019). However, cultural distance, which Barnes et al. (2006) refer to as the cultural gap (e.g., in relation to differing time priorities), means that culture can act as both a facilitator and a barrier to collaboration. The impact depends on the extent to which actors share or differ in goals, interests, work routines, and timeframes (Ankrah and AL-Tabbaa, 2015; Sjöö and Hellström, 2019). The cultural gap may cause a lack of understanding or negative perceptions (Barnes et al., 2006; Sjöö and Hellström, 2019). Establishing a shared language (Rybnicek and Königsgruber, 2019; Sjöö and Hellström, 2021) is essential to reduce interpretive challenges between industry and university actors. Shared understanding helps harmonize interests and priorities and supports the development of a common vision (AL-Tabbaa and Ankrah, 2016). Differing time scales are particularly problematic (Sjöö and Hellström, 2019); universities focus on long-term research and publication goals, while industry seeks rapid, market-ready solutions (Goel et al., 2017; Johnston, 2022; Sjöö and Hellström, 2019, 2021). Allocating time to collaboration also limits time for regular tasks, a challenge acknowledged by both sides (Sjöö and Hellström, 2021).

Project management is among the most important enablers of UIC (Ankrah and AL-Tabbaa, 2015). Competent project managers require technical and administrative skills, boundary-spanning abilities (Fernandes et al., 2023), and diplomacy, especially as they often lack direct authority (Barnes et al., 2006). Clear roles and responsibilities are essential (Barnes et al., 2006; Fernandes et al., 2023); proper allocation supports both goal achievement and accountability when objectives are not met (Mora-Valentin et al., 2004). Realistic work plans, developed through joint planning and follow-up, are critical for success (Sand et al., 2021) and rely on collaborative focus on milestones and deliverables (Ankrah and AL-Tabbaa, 2015). Timely project initiation is equally crucial, as early delays typically cascade into later overruns caused by procrastination, planning complexities, and a lack of information (Lock, 2013). Continuous monitoring and control with feedback to stakeholders is vital (Barnes et al., 2006; Fernandes et al., 2023) but must be balanced with creative freedom (Fernandes et al., 2023). Ongoing monitoring can also reveal tensions between collaborators, stemming from different personalities or professional perspectives (Barnes et al., 2006). Effective communication involves multiple aspects, notably frequency and channel choice. High-frequency communication typically improves quality (Mohr and Nevin, 1990; Sand et al., 2021) and serves as a key driver of trust in UIC (AL-Tabbaa and Ankrah, 2016). Varied communication channels are often preferred (Rybnicek and Königsgruber, 2019), ranging from formal, planned, routinized, and structured to informal, personalized, irregular, and spontaneous (Mohr and Nevin, 1990). Formal exchange is often unidirectional, while informal and bidirectional communication amplify the voice of weaker actors and allow non-dominant participants to be heard (Spence and Bourlakis, 2009). Bidirectional flows, including feedback (Mohr and Nevin, 1990), are essential for aligning expectations and understanding capabilities.

Teamwork is a universal success factor (Barnes et al., 2006) vital for effective UIC. It requires technically qualified members who are competent in their roles (team expertise), with the soft skills needed for collaboration (Fernandes et al., 2023). Multifunctional expertise (Barnes et al., 2006) and effective conflict management (Mora-Valentin et al., 2004; Rybnicek and Königsgruber, 2019) are required to deliver strong teamwork and adaptability (Ankrah and AL-Tabbaa, 2015).

Shared objectives are critical in UICs (e.g., Sand et al., 2021), yet goals often diverge: industry prioritizes tangible outcomes such as business opportunities, skills, and networks, while academia focuses on knowledge generation (Sjöö and Hellström, 2021). Even if not complementary or shared, goals must be compatible. Successful UIC requires understanding each partner’s interests (Rybnicek and Königsgruber, 2019) and specifying clear, agreed objectives (Mora-Valentin et al., 2004; Rybnicek and Königsgruber, 2019).

Framework factors

Framework factors, or environmental characteristics, include the external context as well as legal issues and contractual mechanisms. The former covers the geographic and policy contexts of UIC. Geographic proximity facilitates collaboration by enabling face-to-face meetings, enhancing visibility, and improving partner evaluation (Ankrah and AL-Tabbaa, 2015; Sjöö and Hellström, 2019). The policy context includes government support, legal restrictions, and market conditions (Rybnicek and Königsgruber, 2019), with universities increasingly encouraged to engage in UIC through policy initiatives (Ankrah and AL-Tabbaa, 2015). Contracts are key facilitators, formalizing roles and responsibilities and mitigating perceived relational or technical risks when trust is limited (Dagbro, 2016; Sand et al., 2021), providing companies with the necessary control to commit resources to UICs (Dagbro, 2016). The contract can be seen as a hybrid that bridges institutional characteristics, relational governance, and environmental constraints. In UIC, contracts often coincide with funding agreements, serving not only as dyadic governance tools but also as externally imposed arrangements that shape actor inclusion, reporting requirements, economic conditions, and power distribution. For these reasons, we classify contracts as a framework factor.

UIC phases and assigning critical factors

The literature offers various conceptualizations of UIC phases, ranging from two to four distinct stages. Sand et al. (2021) outline four phases within a collaboration arena: initiation, implementation, evaluation (from project end to evaluated project), and either renewal or termination, while noting that the phases may not be strictly linear and that contracts should include clear evaluation structures. Sjöö and Hellström (2021) identify two phases: initiation (including idea generation and funding acquisition) and interaction. They examine collaboration conditions and outcomes within these phases. Similarly, Ankrah and AL-Tabbaa (2015) distinguish formation and operational phases, emphasizing collaboration outcomes. Barnes et al. (2006) likewise identify a formation phase linked to team establishment, followed by an execution phase. They also highlight the importance of evaluating outcomes toward project completion. Fernandes et al. (2023) separate pre-award (proposal) and post-award (execution) phases, adding a transitional phase before formalizing the contract. Johnston (2022) focuses on UIC formation and function, with formation following project initiation. A synthesis of these studies suggests that even those advocating fewer phases still incorporate aspects such as evaluation. A general evaluation decision is whether the evaluation should be formative and diagnostic (during the process), summative and evaluative (after completion), or a combination of both to provide holistic feedback (e.g., Segbenya et al., 2023). Across these studies, phase boundaries remain ambiguous. In contrast, Ankrah and AL-Tabbaa’s (2015) formation phase is clearly delineated, beginning with establishing the purpose and ending with contract signing. These observations indicate that while existing phase models acknowledge the importance of evaluation, the literature leaves open questions about the explicit treatment of evaluation and how phase boundaries should be structured.

Studies have linked critical factors to specific UIC phases (e.g., Fernandes et al., 2023; Johnston, 2022; Ankrah and AL-Tabbaa, 2015; Barnes et al., 2006), yet two limitations remain. First, there is ambiguity in how phases are defined and delineated; second, assigning critical factors to particular phases is challenging. Barnes et al. (2006), for example, note that while some factors can be seen as discrete elements, they rarely fit neatly into a single phase, while certain factors—such as project management or efforts to bridge cultural gaps—tend to be more prominent during execution. Ankrah and AL-Tabbaa (2015) link factors such as agreeing on a plan and drafting the contract with formation, while emphasizing communication during operation. We contend that university and small company practitioners often see UIC as a chronological sequence of phases, which limits the practical use of factor-by-factor tables, such as Table 1. Accordingly, this study draws on empirical data to assign critical factors to UIC phases and thereby enhance understanding of UIC.

Methodology

Research design

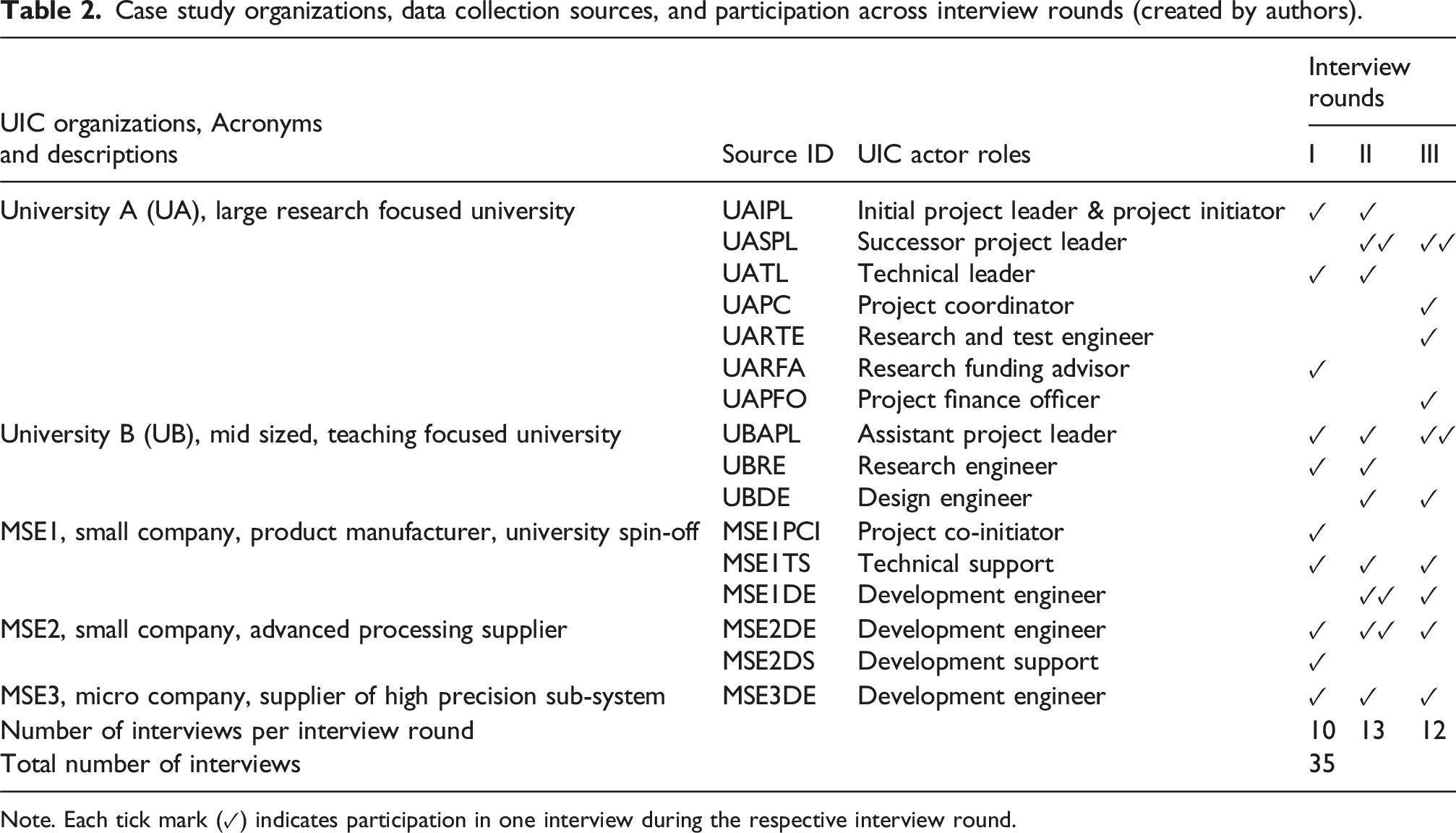

An illustrative case of UIC is the Cryogenic Magnet project, an innovative product development initiative involving two universities (A and B) and three MSEs (1–3). The project was initiated by University A in collaboration with MSE1, as they possessed an innovative idea. Together they selected the remaining actors based on their unique manufacturing competencies (the MSEs), their local presence (University B), consistent with one of the funding agencies’ criteria, and their willingness to participate in the project. The UIC was primarily funded to develop a prototype. A secondary objective was to increase understanding by evaluating university–industry collaboration including MSEs. This evaluation was conducted by the authors of this paper, referred to as the accompanying researchers. A qualitative research approach is suitable when the primary goal of a study is to gain deep insights and understanding (Bryman and Bell, 2022). The purpose was outlined in the UIC funding application and refined by the researchers to ensure theoretical relevance. Case studies are often suitable for understanding detailed, complex, contemporary, and unique phenomena (Yin, 2018), so a qualitative case study research design was chosen. With full 3-year access to the UIC, the researchers adopted an interactive research approach (e.g., Sandberg et al., 2022), well-suited to capturing collaboration dynamics while contributing to both research and practice. Selecting this single case, common in interactive research, is based on its potential to provide in-depth learning opportunities, in line with Yin (2018). Altogether, the research is considered well-designed for its purpose. The empirical material stems from the same longitudinal collaboration project as Ülgen and Forslund (2025), who examined the UIC from a supply chain perspective, using proximities as the theoretical lens and assessing delays as an outcome.

Data collection

Different types of data were collected throughout the UIC duration. The primary data collection methods were semi-structured interviews, participant observations, and interactive workshops. As the accompanying researchers joined after the UIC was initiated, initiation was captured retrospectively during the first round of interviews. The interviews with project participants were guided by the progressively developed frame of reference to ensure construct validity (e.g., Yin, 2018). Respondents were selected based on their significance during different UIC phases, either through active involvement or through a lack of involvement that was perceived to have affected the collaboration. According to Swedish research ethics guidelines, formal ethical review was not required for this study. All participants were informed about the study’s purpose and provided verbal informed consent. Confidentiality was maintained by using participant IDs and removing identifiable details. Three major rounds of interviews were conducted, with each interview lasting 40–75 minutes. The first round, 6 months after project launch, included 10 interviews; the second, after 1.5 years, comprised 13; and the third, after 3 years, added 12 more, totaling 35 interviews.

Case study organizations, data collection sources, and participation across interview rounds (created by authors).

Note. Each tick mark (✓) indicates participation in one interview during the respective interview round.

Analysis

As data were collected longitudinally, phases were identified through observations, interviews, and workshops. The identification and delineation of phases was a particular focus in several interactive workshop discussions, paying specific attention to how phase boundaries developed in practice. The understanding of critical factors emerged over the course of the analysis, and they were assigned and analyzed within the different UIC phases. Critical factors, as described in the frame of reference, refer to attributes, behaviors, or conditions related to the actors, their relationships, or the surrounding environment. These factors may be present or absent, or they may reflect actions that actors take or fail to take. They capture what the actors have or lack and what they do or fail to do, influencing the progression and outcome of the collaboration. If a critical factor affects the UIC in distinct ways across phases, it may be assigned to multiple phases. It is particularly important to establish enabling or positive critical factors early in a UIC, as they continue to guide and shape the collaboration in subsequent phases. For example, communication is a critical factor in both formation and execution. In formation, it is labeled “establishing communication structure,” while in execution, it is referred to as “maintaining communication structure.”

Both researchers participated in the data analysis by coding the data through note-taking and color-coding the documented statements, observations, and other materials, as recommended by Halldórsson and Aastrup (2003) to enhance confirmability. Any disagreements between the researchers were reconciled. Drawing on, for example, Rockmann and Vough (2024), quotations provide illustrative examples and evidence. A pattern-matching approach (Bryman and Bell, 2022) was applied to the data, and staying close to the frame of reference was essential to ensure dependability and confirmability (Halldórsson and Aastrup, 2003). Given our role as accompanying researchers conducting interactive research, it was essential to mitigate potential personal biases, enhance objectivity, and practice reflexivity. Reflexivity is an important mitigation strategy for insider bias in qualitative research, building an awareness of continuously balancing the insider researcher perspective with external perspectives (Finefter-Rosenbluh, 2017). Following the recommendations of Halldórsson and Aastrup (2003), we employed triangulation across multiple data sources and drew on discussions at university seminars and conferences. These external perspectives provided additional viewpoints for interpreting the findings and increasing the robustness of qualitative research (Finefter-Rosenbluh, 2017).

Empirical findings and results

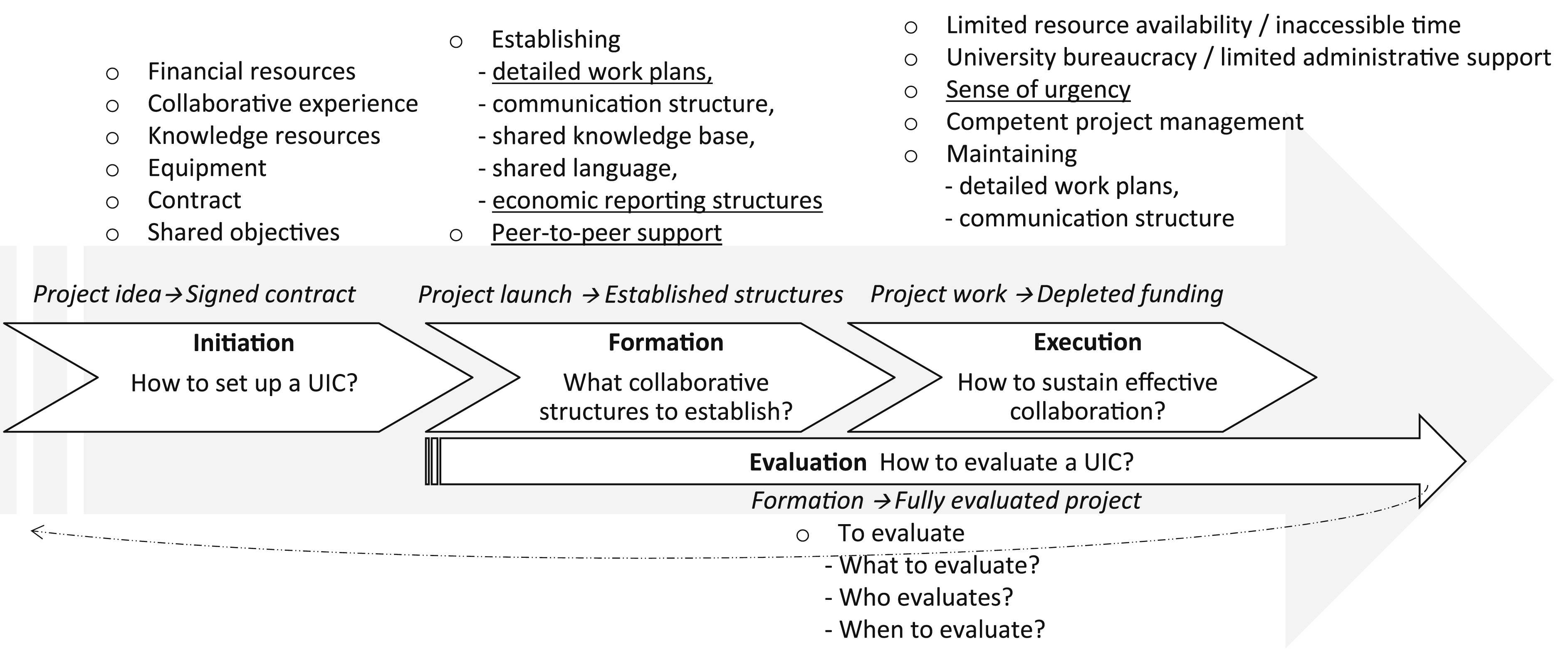

Building on the simple phases of formation and function (Johnston, 2022), our longitudinal data highlighted the need to distinguish additional phases at the start and end of UICs. Before the project formally begins, an initiation phase (Sjöö and Hellström, 2021) is important for defining objectives and identifying actors. Similarly, following project completion, an evaluation phase captures evaluation and reflection, corresponding to what Sand et al. (2021) call evaluation and termination. Taken together, these considerations support a four-phase model. This section provides an overview of each UIC phase and assigns critical factors to the initiation, formation, execution, and evaluation phases.

The initiation phase and its critical factors

Phase overview

The initiation phase (Sjöö and Hellström, 2021) began with a project idea and concluded with the signing of the contract. This empirical framing aligns closely with the explicit phase delineation proposed by Ankrah and AL-Tabbaa (2015), who describe a similar phase (referred to as the formation phase). During workshop discussions with project participants, this delineation resonated with their understanding of the project’s early development, and we therefore retained the suggested phase boundaries. The phase also corresponds to what Fernandes et al. (2023) refer to as the pre-award phase. The project idea originated with the initial project leader (UAIPL), a recognized authority in the subject area. It was primarily developed within UA, with MSE1 in mind as the lead manufacturer. In this phase, objectives were defined, participants needed to achieve them were identified, and funding applications were prepared, thereby establishing the project boundaries. One year later, the project received funding approval, and the contract was signed. The initiation phase, from idea to signed contract, spanned 12 months, making it a significant phase in the UIC lifecycle. Critical factors for this phase clarify how to set up a UIC.

Critical factors

Financial resources, commonly considered a prerequisite for UICs (e.g., Rybnicek and Königsgruber, 2019), were a crucial aspect of establishing this UIC: “We wouldn’t have been able to do the project without the external funding” (MSE1PCI). More importantly, the funding agencies’ requirements also shaped who could participate: “The participation of small companies was mandated by Funding Agency 1” (UAIPL). Similarly, Funding Agency 2 stipulated regional actors (UBAPL), aiming to ensure that results would stay within the region. This ambition ultimately led to the recruitment of the regional university (UB) and highlights the importance of sponsors, as noted by AL-Tabbaa and Ankrah (2016), as well as the policy context (Sjöö and Hellström, 2019). In addition, the initial project leader’s professional network played a pivotal role in accessing industry professionals and external funding, underscoring the influence of individual actors in initiating UIC. The recruitment of the participating MSEs was grounded in collaborative experience, consistent with the findings of Mura et al. (2025) and Barnes et al. (2006).

MSE1 contributed longstanding collaborative experience with UA, along with critical knowledge resources (e.g., Fernandes et al., 2023) and equipment (Ankrah and AL-Tabbaa, 2015; Rybnicek and Königsgruber, 2019). As the initial project leader and project initiator emphasized: “Based on our market knowledge, MSE1 was the only possible product manufacturer” (UAIPL). Similarly, MSE2 and MSE3, both introduced by MSE1, were well-known suppliers selected for their technical capabilities and equipment. MSE3 was particularly valued for its precision manufacturing expertise and advanced machinery. As noted about MSE2, “They have experience working with high-demand customers, such as those in the aviation industry” (MSE1PCI), and they are “completely unique” (UAIPL) with expertise unmatched elsewhere in Europe. Together, the MSEs complemented the universities’ simulation and design knowledge with hands-on manufacturing competence.

The contract, comprising the application and funding decision, reduced perceived risk (Dagbro, 2016), as reflected in this remark: “The contract was important, as we had no order” (MSE2DS). Although the MSEs already knew each other and did not perceive major risks, the formalized contract nonetheless enabled them to engage fully in the UIC. This finding extends Sand et al. (2021) to MSEs and reinforces Dagbro’s (2016) argument that formalized agreements can provide companies with the control needed to commit resources to UICs. Shared objectives among the actors were essential for UIC initiation, contrasting with the often-diverging objectives in UICs as described by Sjöö and Hellström (2019). The applied project’s main objective was to develop and manufacture a cryogenic magnet prototype, which all partners readily supported. This objective was complemented by broader aims to increase understanding of UIC and to strengthen the region, which allowed for risk spreading. As the research funding advisor (UARFA) noted, “Even if every magnet ends up short-circuiting, the project can still be successful.” The MSEs were primarily focused on creating a functioning magnet: “We [the MSEs] share a common technical goal focused on the product, the others are more interested in collaboration” (MSE2DE).

The formation phase and its critical factors

Phase overview

The formation phase (e.g., Johnston, 2022) was empirically delineated as beginning at project launch and concluding when the project was fully established. It corresponds closely to what Fernandes et al. (2023) call the transitional phase, following the pre-award phase. This phase involves partners getting to know each other while establishing working methods and communication structures. The formation phase lasted more than 6 months, corroborated by one of the participants: “It took 6 months for the actors to get to know each other and to establish the project” (UAIPL). From an empirical standpoint, the project was considered established once collaborative structures were in place. Critical factors during this phase clarify what collaborative structures should be established for effective project execution.

Critical factors

Establishing a detailed work plan is a critical factor for UIC (Sand et al., 2021) and requires focus on milestones (Ankrah and AL-Tabbaa, 2015). The work plan in the formation phase was empirically characterized by differing perspectives between universities and MSEs, consistent with findings by Sjöö and Hellström (2019, 2021). Overall, the work plan was perceived as realistic, as recommended by Fernandes et al. (2023). However, all MSEs agreed that it was too vague: “The plan has no milestones. We need to know if it’s slipping […] even if it is not exactly right” (MSE3DE). MSE1 pushed for a more detailed plan and eventually created one, but not until the execution phase. In contrast, the universities were less concerned. Establishing communication structure is considered critical during formation. A meeting structure was established for all 3 years, with fixed weekdays and times based on a 4-week cycle. Online meetings were a prerequisite for maintaining the recommended high meeting frequency (Mohr and Nevin, 1990). Project meetings were held every fourth week, technical development meetings biweekly, and the final week of each cycle was reserved for additional meetings. The structure relied on all participants attending all meetings relevant to them. The frequent meetings were intended to ensure the project’s progress, simplify scheduling, and help build confidence and trust among the actors, thereby facilitating collaboration beyond this structure. This was especially important for the MSEs: “The regularity of the meetings creates a strong sense of community, even though we don’t meet in person. It also makes it easier to follow up and make further contact” (MSE1DE). Even the agenda for each meeting was predefined, structured to cover all aspects of the project or innovation, giving each participant a dedicated slot.

Establishing a shared knowledge base and shared language among the project participants—individually and collectively—was supported through several lectures on the prototype technology, as recommended by, for example, Ankrah and AL-Tabbaa (2015). These lectures also contributed to the development of a shared language within the project. “Initially, we said that we come from different worlds […] the lectures were good for helping us speak the same language” (UBAPL). Establishing economic reporting structures: The funding agencies required detailed economic reporting, which proved particularly challenging for the MSEs. Despite a lecture provided by Funding Agency 1, the MSEs repeatedly turned to the project financial officer for assistance: “They were so unaccustomed. Universities are more used to working, say, 20% on a project while companies are used to charging exact hours to customers” (UAPFO). The micro company MSE3 could neither extract the required data from its accounting system nor afford a new one (as suggested by one of the funding agencies), and therefore had to hire an external accounting firm, an unbudgeted cost. “What a circus the financial reporting was—we barely have any minutes left in the week for things like this in a small company” (MSE3DE). The MSEs frequently turned to each other for support, which they found invaluable. Such peer-to-peer support among the MSEs has not been highlighted in previous literature.

The execution phase and its critical factors

Phase overview

The execution phase (Barnes et al., 2006) corresponds to what others have called the function (Johnston, 2022), implementation (Sand et al., 2021), interaction (Sjöö and Hellström, 2021), operation (Ankrah and AL-Tabbaa, 2015), and post-award phases (Fernandes et al., 2023). It is generally the longest phase of an innovation project; in the present study, it lasted just over 2 years. Due to limited literature, defining the end of this phase was discussed empirically. The question was whether it concludes when UIC objectives are achieved (e.g., prototype completion, even before the funding period ends), or when funding is depleted and the project terminates. The findings suggest that the former situation naturally leads to further development within the scope of the existing UIC. In the studied UIC, activities continued beyond the depletion of initial funding, sustained through faculty resources and self-financing from companies. Despite this, the agreed definition of the phase was that it begins with actual innovation work within the established structures and concludes with project termination when funding is depleted. Critical factors during this phase center on how effective collaboration can be sustained.

Critical factors

The execution phase is where collaboration boundaries and established structures are truly tested, revealing how well they support effective collaboration and project success. Resources were generally available, though several episodes of limited resource availability became critical. While the MSEs generally showed a high degree of flexibility, pushing forward to catch up on delays by working after hours on the project or running production overnight, there were times when machines became bottlenecks and were temporarily inaccessible. One MSE faced financial constraints and had to prioritize higher-paying customers, while another had a fully booked production schedule during a crucial stage, leaving no spare machinery, personnel, or space for project activities.

In exploring solutions, one participant half-jokingly asked: “Do you [UB] have larger facilities we can borrow?” (MSE1TS). Even if tentative, such suggestions highlight the seriousness of the problem, an issue particularly pronounced for MSEs. One participant from the research-intensive university (UA) observed: “Production time slots at the companies are fixed, whereas in academia we can shift between tasks more freely. We rarely face hard deadlines” (UAPC). These episodes illustrate the resource challenges small companies face when balancing cash flow with the need to allocate sufficient resources for project work (e.g., Ankrah and AL-Tabbaa, 2015; Löfqvist, 2017; Rybnicek and Königsgruber, 2019). In this context, production resources such as machinery, testing equipment, or staff were occupied by activities outside the UIC’s control, making them inaccessible when needed for project work, even if theoretically available. A different situation occurred at the teaching-oriented University B, where teaching staff had formally allocated project time but were unable to use it effectively. In contrast to the MSEs, whose resources were diverted to other urgent activities, the university case reflected a loss of time rather than a redirection. The design engineer’s (UBDE’s) project time was consumed while waiting for the required CAD software to be procured and installed: “By the time the software was available, my time window for the project was already over and my teaching period had begun.” We therefore use the term inaccessible time to describe time that could have been used for project work (available time) but was, in practice, lost due to scheduling conflicts or other external constraints.

Although a late identification of CAD software requirements triggered a delay, the problem was intensified by university bureaucracy and limited administrative support, as mentioned by Rybnicek and Königsgruber (2019), which slowed the acquisition. The procedures for obtaining licenses, installing software, and updating versions took considerable time, and were even affected by holidays, when key staff were not replaced. By the time the software was ready, the design engineer’s accessible time had been lost. This example illustrates how university bureaucracy can hinder collaboration (Sjöö and Hellström, 2019) and how critical factors are interdependent. At this stage of the project, we, as the accompanying researchers, questioned whether universities sometimes lack the sense of urgency required in industry, interpreting this as an illustration of a cultural gap (Barnes et al., 2006). The example also highlights the different time scales at play within universities: the flexibility expected in research projects contrasts with the rigidity of fixed teaching schedules, particularly at the teaching-intensive UB and to a lesser extent in the research-intensive UA. As a result, more critical factors are likely to influence UICs when teaching-intensive universities are involved. Collectively, these examples highlight a difference between MSEs and universities, where the MSEs display a generally higher sense of urgency and a focus on keeping deadlines. This was articulated by UASPL: “In academia, we have a different perception of time. These short time frames are new and unfamiliar to us.” Overall, we observed that MSEs focused on finding solutions, while the universities often preferred to extend the delayed project. Both strategies were needed and used. Awareness of other actors’ perceptions of time can help ease tensions when problems arise, allow time-related issues to be addressed early, and ensure that buffer measures are in place and that plans help account for the unplannable.

The project was initially led by a project leader, who handed over the role early in the execution phase to a successor, with both leaders supported by the same assistant project leader. Competent project management (Fernandes et al., 2023) was strengthened by recruiting a project coordinator after the need for stronger planning, coordination, and practical guidance was recognized. Rather than replacing existing leadership, this addition complemented the existing skills for more complete project management. Combining academic experience with hands-on industry collaboration, including technical management, the project coordinator provided the cross-boundary competence needed. The value of this addition was recognized: “His [UAPC’s] role was to bridge universities and companies” (UATL) and “The project coordinator, who had not been budgeted for, should have been included earlier, given his planning competence” (UASPL). Projects often compete with regular tasks, creating potential conflicts for project management. Dedicated management support that actively promotes the project is therefore a critical factor for UIC projects. Maintaining a detailed work plan: During execution, the universities began to voice concerns about the lack of a detailed work plan. “We are not very good at this type of planning” (UASPL). However, with the project coordinator’s inclusion, the situation began to change: “He coordinates and ensures communication and planning […] now this is clear” (UATL). Planning competency (Lock, 2013; Sand et al., 2021) may reside within companies or, as in this case, within universities, and should be budgeted as part of project management.

Maintaining communication (Fernandes et al., 2023; Sjöö and Hellström, 2019), implemented through a meeting structure, had a positive impact by placing the UIC in a shared context: “You know what everyone is doing” (MSE1DE). The formalized meeting structure and agenda required active participation from all actors, which encouraged maintaining the communication structure, balancing planned and routine interaction with room for spontaneous bidirectional exchange (Mohr and Nevin, 1990). This helped all voices be heard, strengthening mutual understanding of the task. The high meeting frequency and, by extension, high communication frequency kept the innovation work on track and supported trust development, in line with AL-Tabbaa and Ankrah (2016). It also spurred informal communication outside the meetings, further reinforcing collaboration. However, the communication structure was not without challenges. Certain participants were often absent due to inaccessible time, causing them to miss crucial context and opportunities for giving and receiving feedback, which in turn created communication gaps and design mistakes. This contrasts with Mohr and Nevin (1990), who emphasize the importance of bidirectional communication. When participants were absent, their voices were effectively silenced, leaving them unable to influence decisions or steer the project’s course, an outcome resembling the position of weaker actors in buyer-supplier relationships (Spence and Bourlakis, 2009). In this case, however, the weakness was not externally imposed but resulted from self-exclusion, a consequence of non-participation despite mechanisms designed to ensure that all actors remained involved.

The evaluation phase and its critical factors

Phase overview

Although evaluation is widely recognized as necessary in UIC (e.g., Barnes et al., 2006), Sand et al. (2021) is the only study explicitly mentioning it as a distinct phase. We argue that evaluation should not be viewed merely as a recurring factor across phases, but as an independent phase in its own right, addressing how a UIC is assessed and formally concluded. This delineation was empirically reinforced and shaped by funding agencies’ particular requirements for project evaluation. Given the limited conceptualization of an evaluation phase in existing literature, we draw on our empirical findings to provide one below.

Critical factors

The foremost task is to ensure that evaluation actually takes place and captures both positive and negative lessons (Sand et al., 2021). This phase involves documenting experiences, reflecting on outcomes, and considering whether to continue the collaboration or initiate new collaborations. Several practical questions for evaluation design arise, including what to evaluate. Project evaluations often emphasize outcomes such as goal fulfillment, timing, and budget adherence. When collaboration itself is evaluated, the process becomes more central, along with the knowledge generated and its practical value. However, individuals may gain significant knowledge without recognizing its practical relevance to current or future roles. This raises important questions about how such learning should be valued and whether the right individuals were involved or assigned the most suitable tasks.

In this case, procurement principles and the project’s funding structure led UB to carry out the CAD construction. This arrangement created several challenges and resulted in learning that was largely project-specific and of limited future value. MSE1, whose core competence lies in magnet construction, was arguably better positioned to develop CAD expertise and strengthen its long-term capabilities in cryogenic magnet design. One MSE1 representative critically noted: “With so much consultancy support involved, did the [UB] design engineer actually learn anything?” This illustrates how funding structures and role allocation can inadvertently constrain learning and capacity building. Project evaluations should therefore also consider whether the chosen constellation of actors supports sustainable learning and whether alternative task allocations could generate more enduring outcomes. Finally, evaluation should address the UIC project’s future trajectory: should it continue, and if so, with essentially the same constellation of partners?

Who evaluates the project? In this case, third-party evaluations were funded. Additionally, each partner can assess the project from their own perspective: “Naturally, we’ll do our own internal evaluation [as well]; that’s important” (MSE1PCI). However, for the smallest companies, the capacity to do so may be limited. When should evaluation occur? Insights from the accompanying researchers were regularly shared with and acknowledged by project management. As noted, “Evaluation has to be continuous […] don’t do it too late” (UASPL), and “Collaboration can be evaluated while the project is still running” (MSE1TS). Such an ongoing approach demonstrates that evaluation often overlaps with earlier phases rather than following a strictly sequential order, supporting Sand et al.’s (2021) argument and challenging linear phase models. It also aligns with Segbenya et al. (2023), who advocate a combination of formative and summative evaluations to provide holistic feedback. Consequently, we define the evaluation phase as a continuous process that begins diagnostically during formation and concludes with a summative assessment.

Conclusions, contributions, limitations, and further research

Conclusion

To explore critical factors in university–MSE collaboration from the perspectives of both actors and to assign these factors to UIC phases, a 3-year UIC was studied, characterized by extensive access and rich data collection. The critical factors identified were mainly institutional and relationship-related, and were assigned across four delineated UIC phases, illustrated in Figure 1. MSE-specific factors are underlined.

Figure 1. Delineated UIC phases with critical factors in university–MSE collaboration.

Contributions to literature

To realize the potential benefits of UIC (Bui and Takuro, 2024; Sjöö and Hellström, 2019), it is essential to understand its critical factors (Johnston, 2022). Although numerous critical factors have been identified in earlier research, most studies have neither (1) adopted the dual perspective of both MSEs and universities, (2) specifically highlighted critical factors for MSEs, nor (3) presented these factors in an accessible manner. This study advances our understanding of critical factors by empirically examining UIC between universities and seldom-researched MSEs from both actors’ perspectives, addressing gaps highlighted by Mura et al. (2025), Rybnicek and Königsgruber (2019), and Goel et al. (2017). MSE-specific factors include challenges in establishing economic reporting structures and reliance on peer-to-peer support to manage them (factors not previously noted in the literature). Earlier literature highlighted certain MSE limitations, such as a lack of R&D capacity (Ankrah and AL-Tabbaa, 2015) and lower education levels (Silva Néto and Teixeira, 2014). In contrast, this study shows that the MSEs contributed strongly to the UIC. Critical factors that differ between MSEs and universities include the MSEs’ stronger and earlier need for detailed work plans. The study also reinforces earlier findings on the risks of involving teaching-oriented universities in UIC (Gibson et al., 2016; Sjöö and Hellström, 2019), where available and accessible project time may diverge due to organizational rigidity arising from fixed teaching schedules. This shows how mismatched structures and timelines between universities and MSEs can shape collaborations.

Critical factors identified in the literature are typically aggregated at broad analytical levels or organized in other content-based ways. Rybnicek and Königsgruber (2019) emphasize the need for more accessible and comprehensible structures. Although previous studies have recognized that UICs evolve over time, phases are seldom delineated. In this study, we were able to delineate the beginning and end of four distinct UIC phases, complementing earlier work (e.g., Ankrah and AL-Tabbaa, 2015). This clarification improves the ability to link critical factors to specific UIC phases, addressing the challenge acknowledged by Barnes et al. (2006). We include a distinct evaluation phase to explicitly capture both formative (diagnostic, during the process) and summative (evaluative, after completion) assessments (e.g., Segbenya et al., 2023). This addition addresses a gap in prior phase models, where evaluation is often embedded but not explicitly delineated. As expected, relationship factors emerged as the most frequently observed. Several factors were found to interact, creating complex interdependencies that significantly affected project success, as exemplified by the CAD software acquisition.

Contributions to practice

Presenting critical factors chronologically across UIC phases enhances practical understanding of achieving successful collaboration and realizing its benefits for both universities and MSEs. Including a distinct evaluation phase also provides clearer guidance for practice.

This helps practitioners better anticipate challenges, proactively address hindering factors, and set realistic expectations for UIC. The study may also encourage managers in universities and MSEs to pursue additional collaborations. Deans and departmental heads in teaching-intensive universities should be particularly aware of the need for detailed staff planning during both the formation and execution phases to avoid delays. From a risk management perspective, MSEs and funding agencies could instead favor research-intensive universities where greater flexibility may reduce such risks. However, such a shift would risk creating a negative spiral, further weakening research engagement in teaching-intensive universities. Funding requirements were found to influence several critical factors, such as the inclusion of specific actor types and economic reporting. To better support future UICs between MSEs and universities, funding agencies could increase their awareness of how such requirements shape collaboration and consider revising them to enable rather than constrain collaboration. Concrete measures could include encouraging MSEs to explicitly budget for economic reporting when applying for UIC funding or reducing reporting burdens where possible. These findings therefore also have implications for policymakers, by highlighting how funding and governance frameworks can be designed to support collaboration-friendly environments for MSEs, which are central for income generation and employment.

Limitations and further research

Transferability refers to the extent to which qualitative research findings can be meaningfully applied across settings and over time (Halldórsson and Aastrup, 2003). Although this exploratory study is based on a single, illustrative case, it provides rich, in-depth insights into critical factors and actor dynamics, offering an empirically grounded understanding that is meaningful for informing both literature and practice. Transferability, however, is inherently limited, particularly given the study’s setting in the Swedish context and the advanced machinery industry. Future research could increase transferability by complementing this study with broader quantitative surveys to test and validate the proposed insights and relationships. Additional studies could also examine UIC in different geographic and industrial settings to assess the robustness of the identified critical factors. Moreover, future research could investigate critical factors in relation to UIC outcomes, such as patent applications, time-to-market, and financial performance. Finally, examining outcomes across different UIC phases, in line with a formative and diagnostic evaluation approach (Segbenya et al., 2023), represents another promising avenue for continued research.

Footnotes

Acknowledgements

The authors would like to thank the European Regional Development Fund through the Swedish Agency for Economic and Regional Growth (grant no. 20292704) and Region Kronoberg, Sweden (grant no. 20290751) for funding this project.

Ethical considerations

Consent to participate

All participants were informed about the study’s purpose and provided verbal informed consent to participation and publication. The accompanying researchers’ study, aimed at examining the collaboration process, was part of the larger collaborative innovation project and was funded by the same agencies that supported the UIC. Given the small number of participating organizations and the publicly funded nature of the project, full anonymity cannot be guaranteed. However, confidentiality was maintained by using participant IDs and omitting identifiable details.

Consent for publication

Not applicable (see above). No identifiable personal data are included in this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the European Regional Development Fund through the Swedish Agency for Economic and Regional Growth (grant no. 20292704) and Region Kronoberg, Sweden (grant no. 20290751).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The qualitative data collected (interview transcripts, observations, and researchers’ notes) from the university–industry collaboration (UIC) are not publicly available due to their context-specific nature. Sharing the data in full could reveal commercially or contextually sensitive information. Access is therefore restricted to the accompanying researchers. However, illustrative quotations that reflect participants’ perspectives are included throughout the article, and additional aggregated information may be provided upon reasonable request.

Writing assistance

The authors acknowledge the professional language editing services provided by Edit My English.