Abstract

This article examines how algorithmic management (re)shapes managerial understandings of risk within homecare. Engaging with the sociology of risk and care theory, we extend algorithmic management debates by examining its application in homebased disability and aged care. Drawing upon interviews with senior managers (n = 15) from Australian homecare organisations, we explore how algorithmic management influences how risk is recognised and responsibilised. A notable difference of algorithmic management in homecare compared with other sectors is that a primary use for these systems is to support compliance and reduce harm, with the management of risks framed as a key care practice for managers. Our findings reveal that in homecare these systems, from a care perspective, enable organisations to operationalise risk, yet at the same time, institutionalise new managerial rationalities that both recalibrate and complicate care oversight. Finally, our findings highlight the importance of regulatory pressures in shaping how algorithmic management is used.

Introduction

Algorithmic management describes the application of self-learning algorithms and associated institutional devices for the organisation and control of labour traditionally enacted by human managers (Lee et al., 2015). It is commonly associated with the on-demand ‘gig’ or ‘platform’ economy (Alacovska et al., 2022) and has most frequently been studied in the context of food delivery, rideshare driving and crowdwork (Möhlmann et al., 2021; Veen et al., 2020; Wood et al., 2019). Another emerging area where these systems are used is homebased disability and aged care (McDonald et al., 2023). Here the technology is used to match care recipients and support workers, to manage and oversee geographically dispersed service delivery (Naderi et al., 2023) and – as this study will focus upon – to manage risk.

To date, the use of algorithmic practices in the disability and aged homecare sectors has received limited attention (for a notable exception, see Glaser, 2021). Unlike the transactional, short-term encounters characterising archetypal industries using algorithmic management like rideshare, food delivery or crowdwork (e.g. Lee et al., 2015; Veen et al., 2020; Wood et al., 2019), homecare involves ongoing relational interactions in the private sphere of people’s homes (Macdonald, 2021). Identifying how the application of algorithmic management in homecare aligns with or diverges from existing assumptions may inform how these interventions are adapted to a highly interpersonal industry and what novel solutions and challenges they introduce. In Australia, homecare is a highly regulated activity. Owing to the social importance of the industry and the vulnerabilities of care recipients, successive Australian governments have introduced a range of rules and regulations to minimise ‘risk’ for care recipients. Providers must register and adhere to strict standards and codes of conduct to receive funding. Managing risk to meet compliance standards is thus central for policy makers, regulators and care organisations (Macdonald, 2021).

Focusing on algorithmic management and ‘risk’ in homecare requires understanding the broader link between technology and risk (Dean, 1998). Managerial motivations for the introduction of technology are often multifaceted but tend to be grounded in pursuit of organisational objectives such as innovation, productivity and/or control. This includes the use of technology to mitigate risks in production and/or labour processes. Yet the adoption of new technologies can itself produce new risks (Freudenburg and Pastor, 1992). In labour contexts, algorithmic management has mostly been studied from workers’ perspectives, highlighting physical risks (e.g. delivery injuries), economic precarity and privacy concerns (Adams-Prassl et al., 2023; Lefcoe et al., 2023; Veen et al., 2020). Although these insights are vital for grasping the lived impact of algorithmic systems (Alacovska et al., 2022), the perspectives of managers, who are responsible for implementing and overseeing algorithmic systems, remain understudied.

Responding to calls for greater visibility of the role of management and organisational perspectives in algorithmic management (Veen et al., 2020), this study sets out to examine how senior leaders (hereafter, managers) within homecare view their organisations’ adoption of algorithmic practices – either as an in-house developed technology or as third-party software (Renieris et al., 2023) – and what it means for ‘risk’. The research question that we set out to answer is: How is algorithmic management (re)shaping managerial perspectives of risk in the Australian homecare sector?

This study draws on interviews with managers from intermediary platforms, software-as-a-service (SaaS) firms, and aged and disability care providers using SaaS technologies in the Australian homecare sector. Under Australian law, directors and senior executives have fiduciary duties to manage organisational risk, with heightened responsibilities in homecare due to the sector’s distinct regulatory regimes. These include ensuring appropriate use of public resources and the safeguarding and wellbeing of care recipients (e.g. NDIS Quality and Safeguards Commission, 2024). Management perspectives further merit empirical focus as they inform how organisational processes take place, with managers having significant decision-making power (Richards, 1996). Focusing on managers in homecare extends current research by moving from the impacts of algorithms on workers’ experience to gaining direct insights into how managerial decisions shape the use and implementation of algorithmic technologies.

To understand how managers in homecare organisations using algorithmic management come to recognise risks and how risks identified through, and created by, algorithmic management are responsibilised within their organisations, the article draws upon the sociology of risk (Lupton, 2006) and theories of care (de La Bellacasa, 2017; Tronto, 1993). Our findings reveal how algorithmic management in homecare is used to mitigate clinical, behavioural and compliance risk associated with incident reporting and bring it in line with regulatory obligations. The article also highlights how algorithmic management in homecare creates new technological risks, which in turn are managed through solutions such as human-in-the-loop configurations, noisy algorithms and co-design principles.

Our research makes several contributions. First, we show how homecare managers’ perspectives of risk are influenced by how algorithmic management is becoming woven into the industry. Second, by bringing together the sociology of risk literature and relational care theories, we are better able to show and understand how risk becomes central to how managers practise care. Third, we contribute to algorithmic management debates by exploring the use of such technology in a less frequently studied industry context and highlight the importance of regulatory pressures in shaping how the technology is used.

The remainder of the article is structured as follows. First, we cover the relatively sparse existing literature on algorithmic management in care. This is followed by the theoretical background, in which we outline how we bring the sociology of risk and theories of care into dialogue. We then detail the research methods of our study. Next, we present our main findings, which highlight how algorithmic management is used to visibilise risk, how it is employed to responsibilise risk and how the technological risks of algorithmic management are managed. Finally, in our discussion, we unpack how algorithmic management is (re)shaping understandings of and approaches to risk in the Australian homecare sectors, discuss the study’s limitations and outline avenues for future research.

Algorithmic management and its use in homecare

Algorithmic management systems rest on a foundation of sophisticated data collection, monitoring and machine-learning to facilitate decision-making related to the management of labour (Lee et al., 2015; Möhlmann et al., 2021). The technology has been mainly studied as used by intermediary labour platforms, although there is a growing recognition that these systems are also increasingly spreading to other parts of the economy (Dupuis, 2025), including the disability and aged homecare sectors (Pulignano et al., 2023). Research has explored the technological risks that algorithmic management systems can produce, including the problems of biased and unfair decision-making (Wood et al., 2019), the increasingly omnipresent nature of worker surveillance (Newlands, 2020) and how the technology can deskill and erode working conditions (Schaupp, 2021). However, most of this research was conducted in the context of intermediary ‘gig’ work platforms across sectors where regulatory conditions are markedly different from government-funded homecare delivery – which in a country like Australia is subject to a series of regulatory pressures tied to funding.

In the context of homecare, algorithmic management has largely been a secondary consideration of studies on platform-mediated homecare delivery (Pulignano et al., 2023; Rodríguez-Modroño, 2024). Consequently, the main functions of these systems examined to date have been algorithmic performance management and matching (Khan et al., 2023; Pulignano et al., 2023). These studies have highlighted, for example, how care workers perform unpaid labour to reduce the likelihood of receiving a negative review, which would suppress their presence on the platform (Pulignano et al., 2023; Rodríguez-Modroño, 2024).

Internationally, algorithmic management in homecare has been associated with increased standardisation, improving organisational efficiencies but in doing so eroding relational aspects of care (Glaser, 2021). Simply stated, digital interventions tend to overlook aspects of care that are not easily measurable or standardised, raising concerns about the erosion of interpersonal care (Glaser, 2021). Similarly, research on predictive tools used to identify fall risks has highlighted tensions between data and information in facilitating decision-making. One assumption in this research is that ‘good quality care relies on the availability of good quality data’ (Ludlow et al., 2021: 1). However, the concept of quality care is contested, with varied interpretations and indicators across stakeholders (Aase et al., 2021). This creates challenges in applying algorithmic technologies to manage and standardise care-related practices. These tensions underscore the central role of algorithmic management in transforming risk into a quantifiable and governable object, one that promises operational clarity while simultaneously narrowing the scope of what constitutes meaningful care and according to whom.

To better understand how algorithmic management is (re)shaping managerial perspectives of risk, we first need to understand how risk is perceived, visibilised and responsibilised by managers in the Australian homecare sector who use these technologies. We also require theoretical tools to unpack how, in the context of highly relational work like care work, the introduction of these systems shapes and recasts existing relations. Therefore, in the next section, we outline our theoretical approach.

Theoretical background

Theoretically, we draw upon the sociology of risk (Lupton, 2006) and care theory as inspired by Tronto’s (1993) ethic-of-care and the nature of caring. Bringing together this literature enables us to unpack the complex relations between risk, responsibility and care, and provides a lens to explore how algorithmic management (re)shapes managerial perspectives of risk across Australian homecare.

The sociology of risk literature highlights how risk is concerned with the management and diminishment of uncertainty (Dean, 1998). This literature theorises the increasing social and political focus on identifying and controlling risk, and how institutions foreground its management. The sociology of risk literature makes two assumptions about the nature of risk: first, what counts as a ‘risk’ is rendered knowable through sociocultural understandings of uncertainty; second, risk co-constitutes responsibility (Giddens, 1999). Such a perspective is not without precedent in the context of the platform economy, with the sociology of risk used, for instance, to show how algorithmic management systems in food-delivery work generate a range of physical and financial risks for workers (Gregory, 2020).

Historically, the management of risk has increasingly become individualised (Petersen, 1997), including in homecare, where life-course ‘risks’ associated with disability and ageing are increasingly managed at the level of individuals (and their families) as they assume responsibility for managing personal budgets and organising services on the private market. The sociology of risk literature describes processes by which experts assess and categorise risks to streamline their management at an individual case-based level as ‘case management’ risks (Dean, 1998). Here, risk is made sense of as a socio-political process regarding how it is recognised and classified. That is, risks and how they are interpreted are assumed to be socially and culturally constructed. In Australian homecare, guidelines on incident reporting across the disability and aged care sectors resemble this case-management approach, which requires that risks to care recipients are assessed, categorised and reported at the level of individuals (Aged Care Quality and Safety Commission, n.d.).

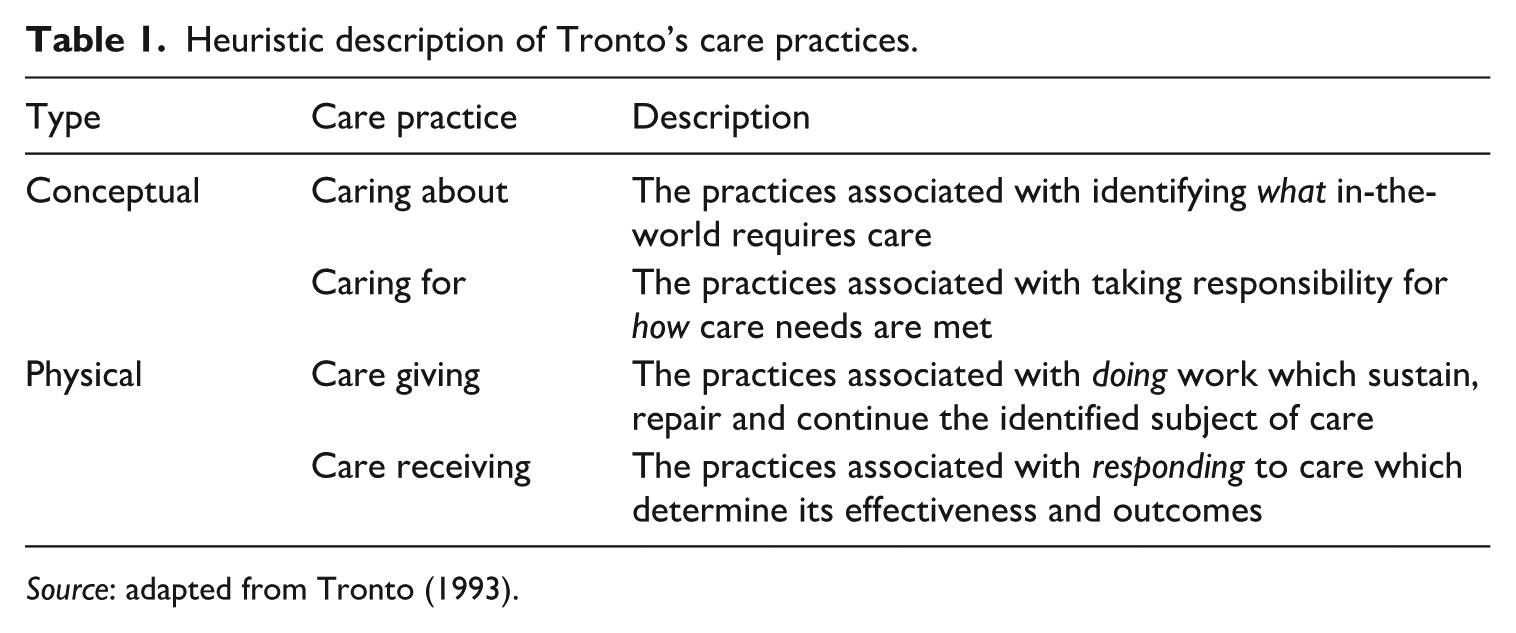

Within the risk literature, risk tends to be viewed as discrete and calculable. To extend this into how risk and risk categories are embedded in interpersonal and highly relational practices, we augment the sociology of risk with a care theory perspective. Tronto’s ethic-of-care presents the challenge of making the values of caring, which are ‘attentiveness, responsibility, nurturance, compassion, [and] meeting others’ needs’ (Tronto, 1993: 3), central to political and economic decision-making. Tronto distils this broad description of care into a four-part conceptualisation of analytically distinct but practically overlapping ‘care practices’ (Table 1): caring about, caring for, care giving and care receiving (Tronto, 1993: 106–108).

Table 1. Heuristic description of Tronto’s care practices.

Source: adapted from Tronto (1993).

Before we can study care giving and receiving processes involving risk, we must first know how risk is rendered knowable and who is made responsible for its management. We therefore concentrate on the conceptualisation of risk, using caring about and caring for to frame how risk in the context of homecare is identified and responsibilised. Caring about extends beyond interpersonal care to include attention to technologies and environments, identifying not just ‘who’ requires care, but ‘what’ (de La Bellacasa, 2017). Caring for, on the other hand, involves assuming responsibility for practices that maintain and repair that which is cared about, whether human or non-human (de La Bellacasa, 2017; Tronto, 1993). In essence, the combination of the sociology of risk and care theory allows for a close examination of how risk is attended to and responsibilised as a matter of care.

In this article, we focus on how managers in homecare organisations make risk recognisable as something that can be cared about and who becomes responsible for the risks revealed, and the role of algorithmic systems in surfacing and taking responsibility for risk and its management. Next, we outline the study’s methods.

Methods

Research design

This article employs a qualitative inquiry using ‘elite’ interviews (Richards, 1996). Elite interviewing is suited for research where participants are in positions reflecting a small portion of the population, typically inaccessible, and with a strong influence over their organisation and industry (Richards, 1996). As ‘elite’ individuals typically wield influence over organisational and industry practices (Collett, 2023), their perspectives are often significant in understanding how an industry or organisation evolves. In the case of this study, we explored the perspectives of senior managers from organisations active in the Australian homecare sector that deployed and/or developed algorithmic management systems.

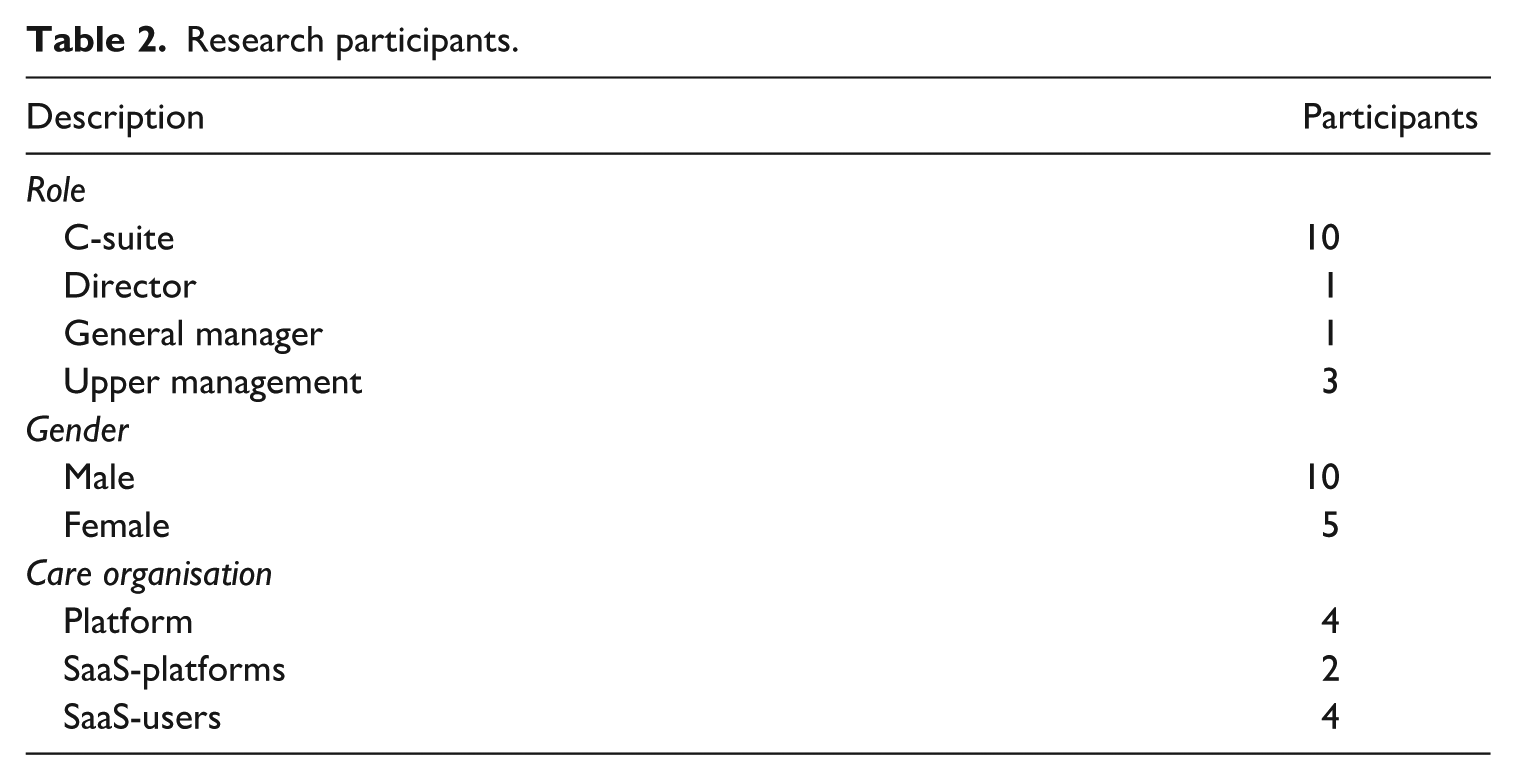

Our participants typically had executive decision-making power and sign-off capabilities about how algorithmic technologies were used and for what purpose within their organisations. Importantly, in the context of homecare, they also had organisational responsibilities around and/or understanding about risk management and therefore were uniquely placed to comment on their organisations’ engagement with algorithmic practices. Semi-structured interviews were conducted from mid-2023 to mid-2024 by the first and third authors. A total of 16 interviews with 15 managers across 10 care organisations were conducted online and in-person. During and after interviews, some organisations further provided demonstrations of their technologies, while others sent reports and additional information in follow-up communications. Demo-observation notes and reports alongside regulatory and policy documents were used as secondary sources for triangulation during data analysis. Participants were recruited through a multichannel approach including online recruiting, snowballing and cold calling. Table 2 provides descriptive information about the sample.

Table 2. Research participants.

Our sample included managers from three types of organisations: intermediary care platforms, SaaS-developers, and aged and disability care providers using SaaS-platforms (SaaS-users). This reflects the organisational diversity of Australian homecare organisations using algorithmic management. Intermediary platforms operate as digital marketplaces, matching care recipients and workers through in-house algorithmic management systems (McDonald et al., 2021). Although some care platforms used independent contracting arrangements and others used casual employment arrangements – the Australian equivalent of zero-hour contracts – a characteristic of these firms was their digital-first approach, meaning that they started with the technology as their product rather than a brick-and-mortar operation while also seeing themselves as marketplaces rather than employers. What unifies these organisations is that algorithmic management systems were designed and developed in-house. SaaS-developers similarly design and develop technologies in-house, which are part of the enterprise resource planning software services that they provide. Unlike intermediary platforms, they do not actively facilitate the engagement of homecare. Instead, their products, which have algorithmic management capabilities, are used by traditional care organisations seeking digital solutions to aid in up-scaling and modernising their business. Hence, SaaS-users did not design the algorithmic technologies they used to manage their workforce. These firms, which can be for-profit or non-profit, use the technology to manage workforces engaged through an employment relationship (including casual employment). As part of our study, we only spoke with for-profit providers.

Interview questions examined how managers perceive algorithmic management practices and the intended uses for such technology, how these technologies affect the industry and how algorithmic management technologies are used by care workers. Echoing wider research on small-sample studies (Tsoukas, 2019) and ‘elite’ interviewing (Collett, 2023), the objective was analytical refinement and empirical richness over generalisability.

Interviews were transcribed and imported into NVivo 15 for data organisation. The process of data analysis entailed open and theoretical coding through an iterative approach for abductive thematic analysis (Timmermans and Tavory, 2012). An initial round of preliminary flexible open coding was conducted by the first author for data-sensemaking and organisation. This round focused on general patterns across the data involving where risk algorithms were used and for what purpose, and coding for the types of risks being discussed by managers (e.g. clinical, compliance, behavioural). This was followed by a round of structured and thematic coding by the third author (Auerbach and Silverstein, 2003). Themes were based on managerial decision-making, its linkages to regulatory frameworks and technological pitfalls. For example, this included coding for risks around disintermediation, incident reporting, human oversight and co-design practices.

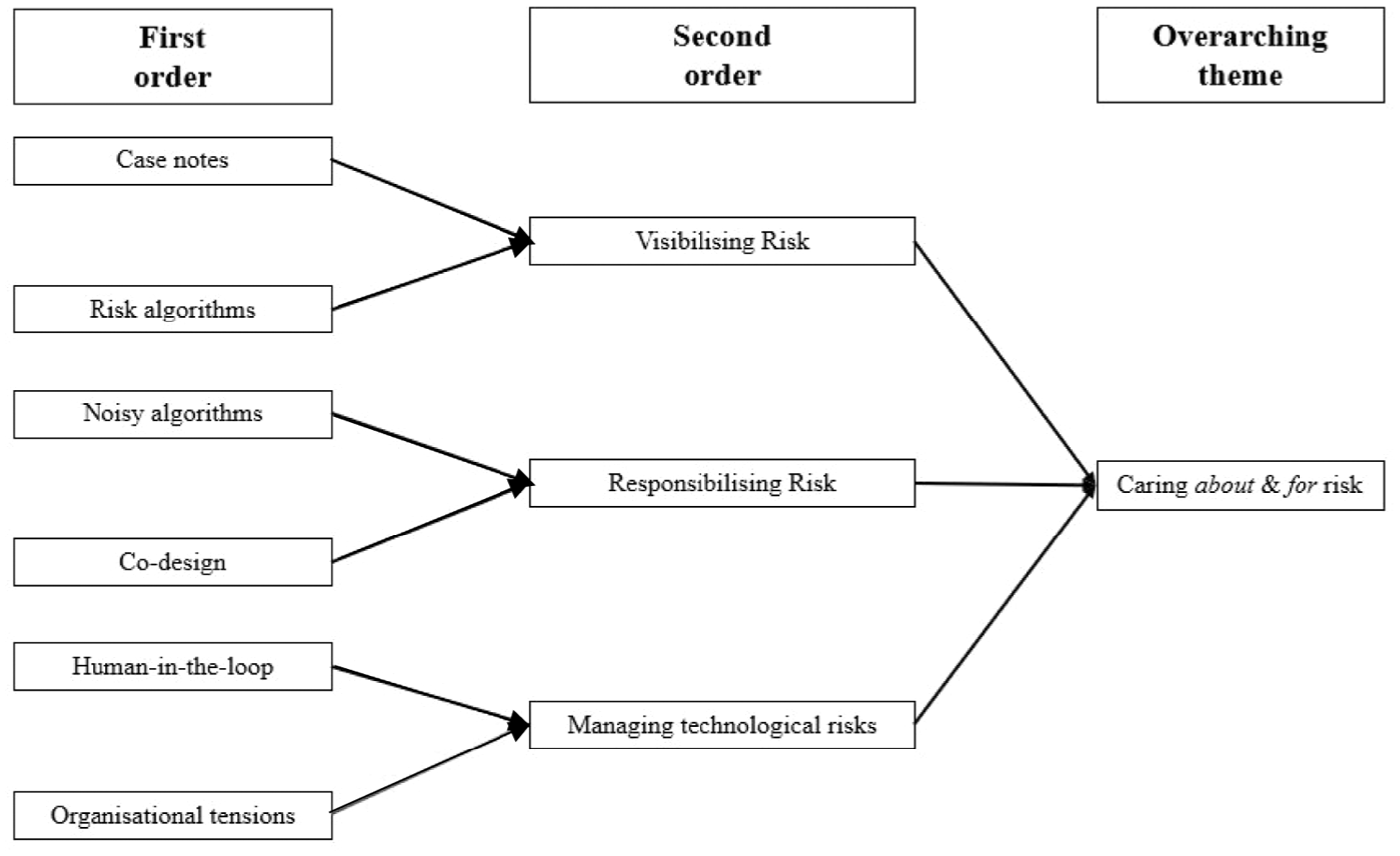

A third round of coding was undertaken by the first author, focusing on sensitivities around caring about and for (de La Bellacasa, 2017; Tronto, 1993) and the sociology of risk (Lupton, 2006), bringing the empirical material and theory into dialogue. This round focused on theoretical coding for how risk is recognised by managers and how responsibility is defined and directed. Following this iterative process, we thematised the data by aggregating and connecting codes and ideas. Figure 1 provides a general overview of how themes were aggregated.

Figure 1. Data structure of caring about and for risk.

Industry context

To understand the attitude of management in the Australian homecare sectors towards risks, as well as how risk is visibilised and responsibilised using algorithmic management, we need to foreground the nature of homecare work as well as the industry’s broader regulatory context in Australia. Homecare work is interpersonal, often insecure and gendered (McDonald et al., 2021). Homecare organisations provide care to individuals in their homes, with the work ranging from tasks such as gardening and domestic support to interpersonal needs, such as showering (Macdonald, 2021). We focus on homecare organisations operating in the regulated Australian disability and aged care sectors – that is, providers operating in the space of the National Disability Insurance Scheme (NDIS) and, in aged care, the Commonwealth Home Support Programme (CHSP) and Home Care Packages (HCP). Homecare is among the fastest-growing industries in Australia, growing at three times the national average (Macdonald, 2021).

Homecare in Australia is delivered in a marketised, neo-liberal context (Macdonald, 2021). Successive policy reforms by Australian governments of all political persuasions have moved the sectors towards individualised models, which is reflected in funding arrangements and has been justified on the grounds of consumer choice and responsibility (Macdonald, 2021). This has encouraged ‘marketised’ business models (Macdonald and Charlesworth, 2021), which have created the conditions for increased competition between care providers and provided fertile ground for intermediary care platforms. In 2021–2022, for example, around 16,000 NDIS participants used such platform providers (NDIS Review, 2023).

To receive funding, Australian homecare providers, including platforms, must adhere to rules and regulations, which include requirements for them to (pro-actively) monitor for, and report, risks. Following several controversies in recent years, including the abuse and neglect of care recipients, there has been a growing emphasis on compliance and oversight (e.g. NDIS Quality and Safeguards Commission, 2022). There is, for instance, a regulatory compliance obligation for organisations to report actual or alleged incidents (NDIS Quality and Safeguards Commission, 2019), that is, events where harm or potential harm is caused to or by the care recipient. Incident reporting obligations are therefore concerned with identifying risks of harm, making risk management central to ensuring care providers adhere to regulatory standards.

Findings

In comparing the similarities and differences across the types of organisations and their engagement with algorithmic systems, the data revealed three main themes around how algorithmic management is reshaping how managers across Australian homecare attend to and become responsible for risk. First, we reveal how management attended to risks through algorithmic management, making risks visible to meet compliance needs. Second, algorithmically produced risks entailed a process of responsibilisation. This included co-design processes where organisations included a range of stakeholders in the design of algorithmic systems. Lastly, managers noted the difficulties in managing the technological risks produced by using algorithmic management. Their responses took the form of human-in-the-loop configurations to oversee algorithmic outputs and attempts to overcome organisational cultures in which transparency is viewed as threatening.

Visibilising risk through algorithmic management in homecare

Across Australian homecare, managers highlighted that risk management was a core motivator for the design and use of algorithmic systems. This was driven by external compliance risks as part of regulatory obligations, such as incident reporting. For intermediary platforms it also involved managing internal compliance pressures to ensure care recipients and support workers adhered to these platforms’ terms of service. Although the systems and functions of the different types of organisations – platforms, SaaS-developers and SaaS-users – somewhat varied, they universally relied on the NDIS and My Aged Care codes of conduct as a source of quality assurance and foundation for their incident reporting systems. This is perhaps unsurprising considering this is a necessary commitment if an organisation wishes to receive funding under these schemes.

Algorithmically visibilising risk

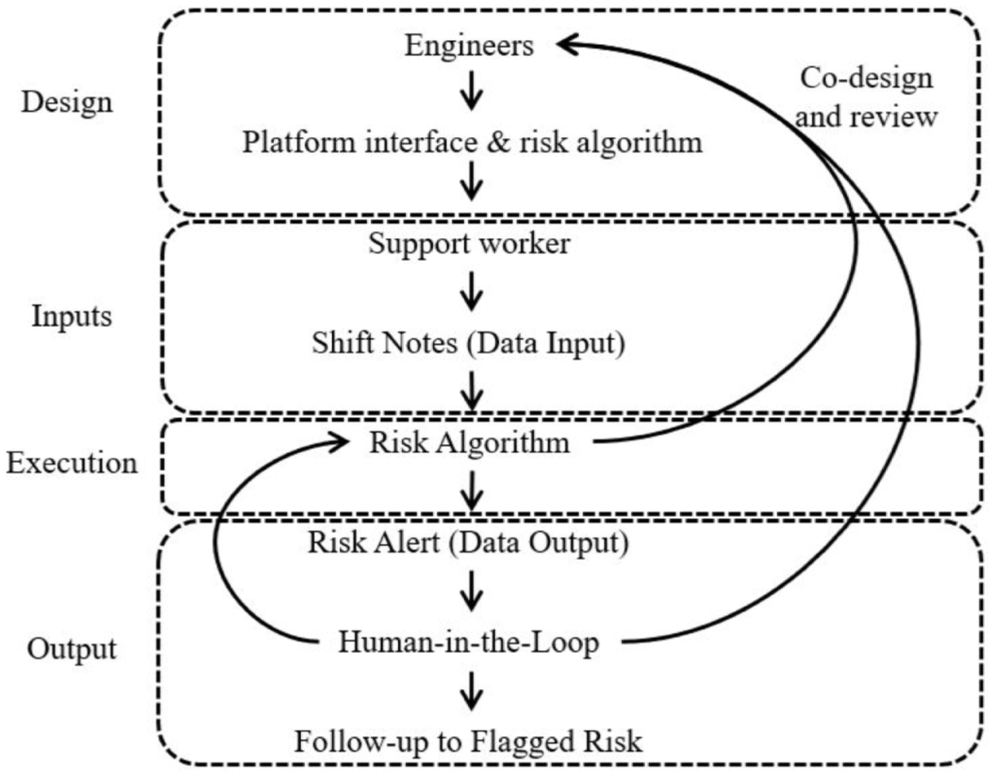

The algorithmic surfacing of risks was viewed as necessary to make risks knowable and to become responsible for their management. Participants highlighted how ‘risk algorithms’ were used across homecare organisations to manage risk. These algorithms identify and facilitate mitigating various risks. A key input for these algorithms is so-called ‘case’ or ‘shift’ notes, which managers explained played an important role in risk management and incident reporting. These notes are prepared by support workers at the end of their care visits and detail day-to-day care activities and the conditions of care recipients. These notes are written on mobile applications. On some intermediary platforms the notes had to be submitted before a visit could be finalised and a worker could be compensated – with workers further algorithmically nudged, prompting them to provide this critical input data.

A dominant type of ‘risk’ algorithm found in homecare was the Natural Language Processing algorithm trained on case notes. These algorithms were used to identify whether care recipients were presenting either directly (through the use of particular terms) or indirectly (through word association) with clinical risks that would require organisational follow-up. They enabled homecare organisations to visibilise risks more quickly and systematically, a task which previously would have required significant human review and analysis. From a design perspective, these algorithms differ from those commonly found in sectors like rideshare and food delivery (e.g. Veen et al., 2020) since the data input for these algorithms is qualitative rather than quantitative in nature.
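The distinction between direct and indirect identification can be illustrated with a deliberately simplified sketch. It is not the participants' implementation: the systems described were Natural Language Processing models trained on case notes, whereas the sketch below substitutes fixed word lists for a trained model. The vocabularies, the threshold and all names (`DIRECT_TERMS`, `ASSOCIATED_TERMS`, `flag_note`) are hypothetical and serve only to show the two routes by which a note might be flagged.

```python
import re
from dataclasses import dataclass
from typing import List

# Hypothetical vocabularies, for illustration only. A production system as
# described by participants would learn such associations from case notes.
DIRECT_TERMS = {"fall", "fell", "bruise", "wound"}
ASSOCIATED_TERMS = {
    "possible fall": {"floor", "unsteady", "dizzy"},
    "possible decline": {"confused", "refused", "meal"},
}
MIN_ASSOCIATED_HITS = 2  # assumed threshold for an indirect flag


@dataclass
class Flag:
    kind: str            # "direct" or "indirect"
    label: str           # the risk the flag points to
    evidence: List[str]  # the words in the note that triggered the flag


def tokenise(note: str) -> List[str]:
    """Lower-case the note and split it into simple word tokens."""
    return re.findall(r"[a-z']+", note.lower())


def flag_note(note: str) -> List[Flag]:
    """Return direct and indirect risk flags for one case note."""
    tokens = set(tokenise(note))
    flags = []
    # Direct route: the note uses a particular risk term.
    direct_hits = sorted(tokens & DIRECT_TERMS)
    if direct_hits:
        flags.append(Flag("direct", "clinical risk", direct_hits))
    # Indirect route: several associated words appear together.
    for label, vocabulary in ASSOCIATED_TERMS.items():
        hits = sorted(tokens & vocabulary)
        if len(hits) >= MIN_ASSOCIATED_HITS:
            flags.append(Flag("indirect", label, hits))
    return flags


if __name__ == "__main__":
    for text in [
        "Client fell in the bathroom this morning.",
        "Client seemed dizzy and unsteady after lunch.",
        "Helped with gardening and shopping.",
    ]:
        print(text, "->", flag_note(text))
```

In this sketch a flag only records why a note was surfaced; what happens next, and who becomes responsible for it, remains an organisational decision.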

Interviewees from most intermediary platforms and SaaS-developers explained that the types of risks these algorithms would flag – and thus the types of risks visibilised – were shaped by compliance pressures and identified through a process of co-design. Participants from across the homecare sectors strongly argued that the processes making risk ‘knowable’ required human involvement. Therefore, for their design, they employed co-design principles. In practice, this meant that software engineers would work closely with other organisational stakeholders such as clinical experts, support workers, care coordinators (who act as humans-in-the-loop of algorithmic outputs, discussed below) and/or legal experts as part of the development and maintenance of risk algorithms:

We do have a very active and involved legal team [. . .] they’re involved, because of the regulation. So, we have an age care package and NDIS, and so they [legal] will typically be involved, I’d say probably at the design phase, when we’re working through [we ask], what is the data that we’re going to be using? What is the way in which we’re going to be using that information? And legally, what are the impacts of us doing that? (Participant 5, C-suite, Platform organisation)

As participants further explained, co-design helped these organisations to sensitise their engineers to the risks that needed to be surfaced:

[T]hey [the algorithmic technologies] are almost all co-designed with the people that use them, is probably the more shorthand way of saying that. And so, on face value, ‘why is it called this’, and then you dive into it. (Participant 1, C-suite, Platform organisation)

Figure 2 provides a heuristic overview of the logics described by participants that were used for the development and operation of ‘risk’ algorithms in homecare. As a senior executive of an intermediary care platform explained, their ‘risk’ algorithm was designed to flag different clinical and behavioural risks, which then could be responsibilised:

[W]e have a regulatory obligation to make sure that the health of clients that are using platforms like [platform name] is not deteriorating, and so we use the shift notes [. . .] And secondly, we use the data in the shift notes to identify potential incidents or inappropriate behaviour on the platform. So, we have a number of rules that we run across those shifts to determine whether or not any inappropriate or like unacceptable behaviour has happened on the platform, and then that will trigger an investigation by our trust and safety team. (Participant 1, C-suite, Platform organisation)

Figure 2. Heuristic illustration of the logic-flow of algorithmically managing risk.
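The logic-flow that participants described (rules run across shift notes, with a match triggering follow-up by a human team) can be sketched as follows. This is an illustration under stated assumptions rather than any organisation's actual system: the rule names, the trigger words and the routing table (`ROUTING`, `RULES`, `route`) are hypothetical. The sketch only places matched notes in a queue for human review and takes no automated decision, reflecting the human-in-the-loop configurations participants emphasised.

```python
import re
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class ShiftNote:
    note_id: str
    text: str


@dataclass
class Rule:
    name: str
    category: str  # "clinical" or "behavioural"
    matches: Callable[[ShiftNote], bool]


def has_word(note: ShiftNote, word: str) -> bool:
    """True if `word` occurs as a whole word in the note (case-insensitive)."""
    return re.search(rf"\b{re.escape(word)}\b", note.text, re.IGNORECASE) is not None


# Hypothetical routing: which human team reviews which category of flag.
ROUTING: Dict[str, str] = {
    "clinical": "care_coordinator",
    "behavioural": "trust_and_safety",
}

# Hypothetical rules; real rule sets would be co-designed and far richer.
RULES: List[Rule] = [
    Rule("mentions_fall", "clinical", lambda n: has_word(n, "fell")),
    Rule("mentions_cash_payment", "behavioural", lambda n: has_word(n, "cash")),
]


def route(notes: List[ShiftNote], rules: List[Rule] = RULES) -> Dict[str, List[Tuple[str, str]]]:
    """Run every rule across every note and queue matches for human review."""
    queues: Dict[str, List[Tuple[str, str]]] = {team: [] for team in ROUTING.values()}
    for note in notes:
        for rule in rules:
            if rule.matches(note):
                # The algorithm only surfaces the note; a person investigates.
                queues[ROUTING[rule.category]].append((note.note_id, rule.name))
    return queues


if __name__ == "__main__":
    notes = [
        ShiftNote("n1", "Client fell near the stairs."),
        ShiftNote("n2", "Client offered cash to book outside the platform."),
        ShiftNote("n3", "Routine visit."),
    ]
    print(route(notes))
```

Separating the rules from the routing table mirrors the distinction drawn in the findings between making a risk visible and assigning responsibility for it.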

The drivers for risk identification

The use of risk algorithms across the homecare sector must be viewed in light of the regulatory obligations placed upon these organisations and their directors. Participants explained that, for certain care activities and for incident reporting purposes, care providers were legally required to capture and collate case notes (NDIS, 2024), with algorithms thus used to automate previously manual compliance processes. As the senior executive of an intermediary care platform emphasised, regulatory pressures were a key driver for risk algorithms, which enabled homecare organisations to pro-actively manage risk:

[W]e are a highly regulated industry, we have the Aged Care Quality and Safety Commission coming, [and] the NDIS Quality and Safeguards Commission coming in. And if they can see . . . this type of not just oversight which is generated through the algorithm and through the use of AI but then processes and actions off the back of that, that lead to outcomes, that’s a great thing. (Participant 2, C-suite, Platform organisation)

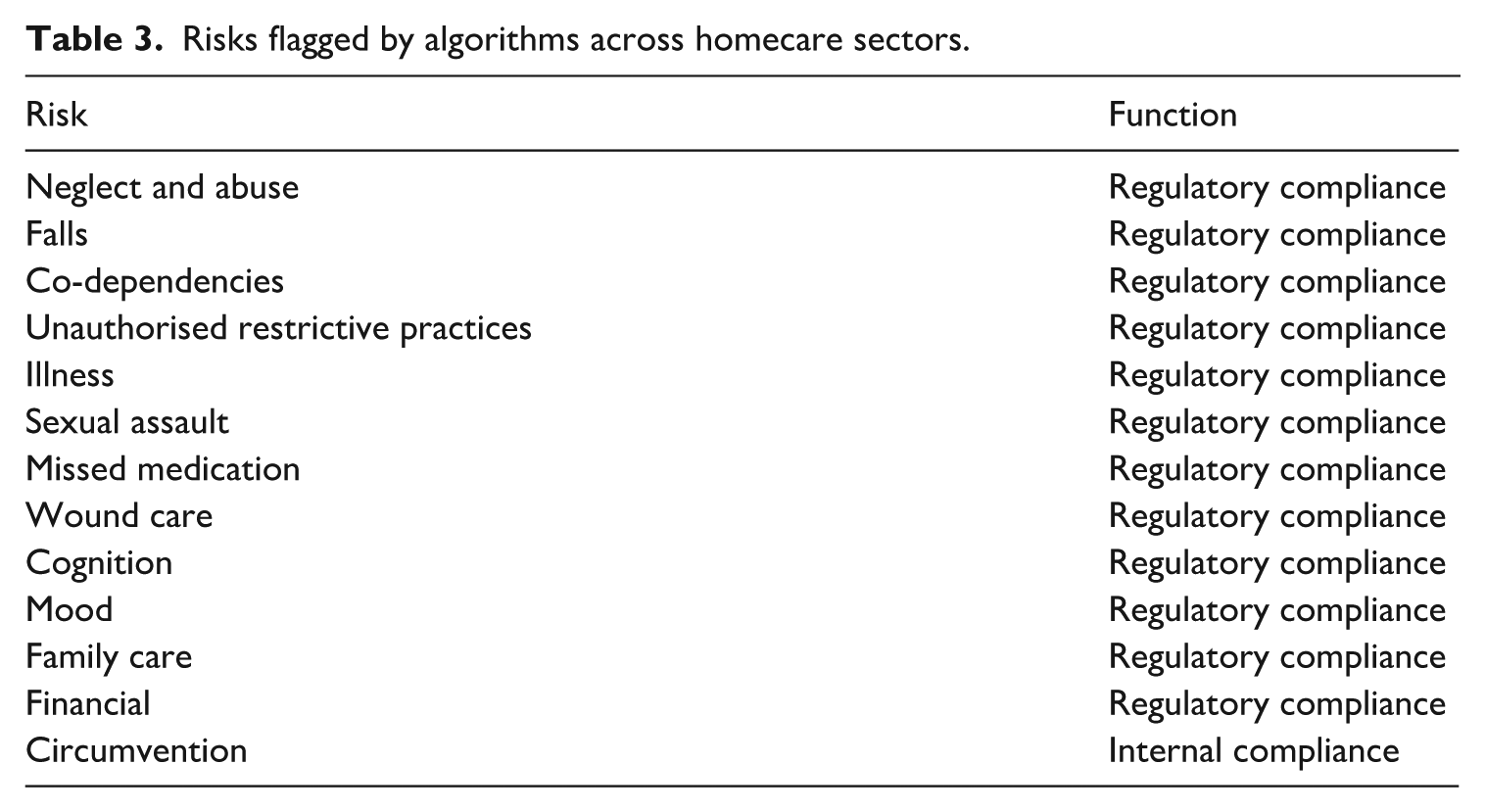

Across the studied organisations, risk algorithms were used to identify a range of clinical, environmental and/or workplace safety risks. Table 3 provides an overview of the different risks that organisations surfaced using case notes and other data, such as the chats between support workers and care recipients on the intermediary platform. As Table 3 reflects, most risks directly relate to external, regulatory compliance expectations, chiefly around incident reporting (e.g. NDIS Quality and Safeguards Commission, 2019). However, as discussed below, for the intermediary platforms, at times, internal organisational risks were also identified using these systems.

Table 3. Risks flagged by algorithms across homecare sectors.

Interviewees argued that the automated identification of risk had two main advantages. First, organisations were able to optimise workflows, generating automated notifications for middle-management which otherwise would have required significant manual handling, including the reading of hundreds of case notes. Second, the systems helped organisations identify risks that are overlooked due to human error or are difficult for humans to recognise: [T]he clinical risk one [algorithm] for me was always really exciting . . . I used to be an exec[utive] in aged care. And one of the things that always worried me as an exec in aged care was that we were doing a lot of notes, but we don’t generally have a lot of time to actually review them. And there could be some important stuff that is getting, you know, noted that nobody is picking up. (Participant 3, Senior Manager, SaaS-developer)

Managers of intermediary platforms and SaaS-developers were well-versed in the risk algorithms embedded in their digital ecosystems; perhaps unsurprisingly, the managers of care organisations that used SaaS-platforms as third-party software were less aware of the software’s algorithmic architecture. Some even questioned whether algorithmic management was present in their organisations at all: ‘At the moment, I don’t think we use any [algorithmic technology]’ (Participant 11, Senior Manager, SaaS-user). Interviews with the SaaS-developers confirmed that such technologies were indeed part of these products. This highlights a potential information asymmetry, extending beyond the established asymmetry between front-line workers and management (Veen et al., 2020), to include how managers themselves may not be cognisant of the operation of algorithmic management in their organisation. Traditional homecare providers relying on third-party software may lack the capabilities and/or necessary fluencies to assess the risks of these systems, making them potentially ill-equipped to meet future compliance regulations and regulatory changes around algorithmic management.

Participants from some intermediary platforms explained that their risk algorithms were also used to monitor care recipient and support worker communications for internal organisational risks: [O]bviously, [we use risk algorithms to detect] circumvention, so where people are caught talking about taking work off the platform, in order to avoid the [platform name] fee, or for some other reason, then we’ll look at those kinds of things, too. (Participant 5, C-suite, Platform organisation)

Although the adherence to external compliance pressures was often framed as socially responsible, given the duty-of-care responsibilities of these organisations and potential liabilities if incidents go unreported, it also reflects a level of organisational self-interest. In this sense, caring about and for risk as a way to enable compliance became an alternative configuration of balancing care-for-others and self-care (Khan et al., 2023).

Responsibilising algorithmically produced risks

Using algorithms to identify risks raises the question of who becomes responsible for these risks (Lupton, 2006). As noted above, algorithmic ‘risk’ management enables homecare organisations to identify potential risks to the physical health and wellbeing of care recipients. Algorithmic technologies were a way to computationally identify care recipients ‘at risk’, with the technology enabling organisations to instigate interventions that could lead to harm reduction: [I]t [the algorithm] would alert the clinician who would just review and determine whether or not there is anything that needed to be followed up. Because sometimes it can be as simple as a care worker making a note that a particular client was wobbly on their feet today. Now, if nobody pays attention to that, there could be something substantially wrong going on in the background. So, it starts as the wobbly walk and then, you know, before you know it, she is passed out on the ground, because there was some other things going on. Or sometimes things like UTIs [urinary tract infections] can get picked up just from some key words that are entered. So that is kind of the idea that it is [the algorithm] just utilising the data that is there. (Participant 3, General Manager, SaaS-developer)

At times, there were also conscious reflections from managers around how to use algorithmic systems responsibly. One executive of an intermediary platform was, for example, particularly concerned about the risks associated with profile ranking and how to oversee algorithmic technologies responsibly: Algorithmic control, I think, is something that we’re starting to look at more and more, [. . .] There’s a line you cross somewhere, and I don’t know where it is, [it] starts with just two humble little profiles on a really crappy website. There’s not much algorithmic control to the data manipulation going on. Then when you’ve got 1000s of users [. . .] What about if we have a mother of three that’s working two jobs and is working as a support worker, and she’s not very responsive in terms of messaging. If you use that [information], in terms of ranking that worker, i.e. someone with a high message response time is highly desired [. . .] If you’ve got that person that’s got a very, very complex life, they’re a great worker, and all that sort of thing. But you’re using then an algorithmic kind of hierarchy to then . . . suggest and recommend good workers, that person might be offered less work in a platform environment, which is, you know, there’s real ethical questions around that. (Participant 6, C-suite, Platform organisation)

One sectoral challenge that managers highlighted with algorithmically assessing risk was the issue of potential underreporting, which happens when algorithms are under-sensitive to reportable risks. Given their regulatory obligations, some managers explained that their organisations resorted to a combination of human-in-the-loop configurations with what they described as ‘noisy’ algorithms. These algorithms deliberately over-captured risks (creating more ‘false’-flags) and therefore required considerable manual handling. They explained that the upside of this approach was that it reduced the potential risk of missing a reportable incident which could cause serious harm to a client, but also to the organisation (from a compliance, funding and reputational perspective). It also meant that humans remained ultimately responsible for managing risk: [W]e took a conservative stance [when designing the algorithm] and said: ‘Let’s be a little bit more noisier’. And then, the get-out-of-jail card for us was, we put the ability to discard in there as well. So that the flow is that observation comes in, the nurse looks at it. And then they can basically say, they can basically qualify, or they can discard it. And we felt that was a good compromise. (Participant 8, C-suite, SaaS-developer)
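The ‘noisy’ flag-then-review flow that participants described can be illustrated with a minimal sketch. It is not drawn from any participant organisation’s system: the keyword lists, category names and the `flag_note` and `review` helpers are hypothetical, and simple substring matching is assumed only because it over-captures in the way managers described.

```python
from dataclasses import dataclass
from enum import Enum

# Hypothetical keyword lists, chosen for illustration only. Substring matching
# is deliberately broad ("noisy"), so it will over-capture.
RISK_KEYWORDS = {
    "clinical": ["wobbly", "fall", "confused", "pain"],
    "behavioural": ["shouted", "aggressive", "refused"],
}


class Decision(Enum):
    PENDING = "pending"      # awaiting human review
    QUALIFIED = "qualified"  # reviewer confirmed the risk
    DISCARDED = "discarded"  # reviewer judged it a false flag


@dataclass
class Flag:
    note: str
    category: str
    keyword: str
    decision: Decision = Decision.PENDING


def flag_note(note: str) -> list[Flag]:
    """Return at most one pending flag per risk category found in a shift note."""
    text = note.lower()
    flags = []
    for category, keywords in RISK_KEYWORDS.items():
        for keyword in keywords:
            if keyword in text:
                flags.append(Flag(note, category, keyword))
                break  # one flag per category is enough to trigger review
    return flags


def review(flag: Flag, is_genuine: bool) -> Flag:
    """Record the human reviewer's decision: qualify or discard the flag."""
    flag.decision = Decision.QUALIFIED if is_genuine else Decision.DISCARDED
    return flag


# A genuine observation and a false flag both reach the reviewer.
genuine = flag_note("Client was wobbly on her feet today.")
false_flag = flag_note("Client said the warm weather made her fall asleep early.")
review(genuine[0], is_genuine=True)
review(false_flag[0], is_genuine=False)
```

In this sketch every flag starts as pending and only a human decision changes its status, which mirrors the point that responsibility for the final judgement stays with the reviewer rather than the algorithm.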

Co-design was also an important feature in the sector around the responsibilisation of risk. Rather than merely having engineers exposed to clinical and other experts in the development stage, most organisations (intermediary platforms and SaaS-developers) that developed ‘risk’ algorithms had systematic co-design processes for ongoing review in place: So, we’re really committed to keeping our engineering team close to our care business, and to understand the problems that they’re really solving. (Participant 3, C-suite, Platform organisation)

Managers viewed caring about and for risk through algorithmic technologies as a way to reduce perceived harms. While algorithms are used to visibilise risks across the homecare sectors, their identification subsequently requires the responsibilisation of individuals to respond to them. Participants framed this as being responsible for reducing harms towards care recipients, and to a lesser extent, the support workers. In this sense, caring about and for risk through algorithmic practices was a surrogate avenue to care about and for organisational stakeholders.

Managing the technological risks of algorithmic management

Participants explained that a consequence of using ‘risk’ algorithms was that novel and unexpected risks became visible when these technologies were used (i.e. the ‘risk’ algorithms produced unique technological risks). This reflects how technological progress reshapes what becomes recognisable as ‘a risk’ (Giddens, 1999). On the one hand, the technological risks were a byproduct of the algorithmisation of risk management. On the other, they were a consequence of the potential of these systems to surface risks previously invisible, creating new organisational liabilities. As a result, participants expressed unease about both the volume and the types of risks that were rendered knowable through the introduction of algorithmic practices and the tensions this caused within their organisations.

To minimise the technological risks of algorithmic management, interviewees stressed the importance of human oversight to ensure that contextual nuance was not rendered invisible or lost due to technological limitations. Managers pointed towards the various internal safeguards they had in place – which were often more geared towards care recipients than workers. Human-in-the-loop configurations were, moreover, the default in homecare, with humans overseeing and correcting algorithmic outputs (Adams-Prassl et al., 2023). Interviewees explained that human-in-the-loop configurations were essential due to the imperfections of the input data and the risks these imperfections entail: [I]t’s 100% human-in-the-loop process . . . there may be false-positives. And a lot of the time what will happen is that there needs to be a conversation with the client or the support worker to understand what’s actually occurred. Because if the shift notes [are] not robust enough, then we’re not able to tell that it’s fake from the shift note. (Participant 5, C-suite, Platform organisation)

To correct for the technological risk associated with ‘noisy’ algorithms, humans were delegated the responsibility (caring for) to oversee and correct the algorithms. For example, false-positives – situations where the algorithm misidentifies or miscategorises a risk – were a major concern. To mitigate these errors, most organisations had processes in place which required staff to undertake investigations to verify and corroborate algorithmically identified risks: ‘So, we are using automated features, but essentially, we are the ones double checking at the end, you know, making sure that the selection [of risks] is correct’ (Participant 7, Senior Manager, SaaS-user). ‘Double checking’ also involved a risk team member changing the flagged risk to a more appropriate category and identifying ‘false-positives’ (Participant 4, C-suite, Platform organisation) or ‘noise’ (Participant 8, C-suite, SaaS-developer).

The tensions around surfacing ‘new’ risks

One concern managers had with algorithmic risk recognition was algorithmic opacity (Lefcoe et al., 2023; Veen et al., 2020). Depending on how algorithms were used, algorithmic practices generated tensions as transparency was increased, both intentionally and unintentionally, across the organisation. This resulted in tensions between the increased knowability of algorithmically surfaced risks and associated liabilities, echoing Giddens’ (1999) work on how technological changes generate new uncertainties. Put simply, the use of algorithms can surface risks beyond their intended scope.

Some participants explained how algorithmically monitoring risks created tensions between responsibility and discomfort. For instance, the increased disclosure of risk enabled by algorithmic systems was viewed as a source of anxiety among some organisational stakeholders. The ability to identify risks, managers explained, came with an increased duty of care due to an increased awareness of these added risks. These changes in both perceived and actual responsibility were considered avoidable if the technology was not used. This at times resulted in managers observing a culture where not using the technology meant that ‘you can cover your eyes legally’ (Participant 8, C-suite, SaaS-developer). One intermediary platform’s manager highlighted the internal tensions within their organisation around their willingness to render risk knowable and the discomfort brought about by seeing risk: We have been capturing those [case] notes for four years and stuff about the star ratings. And [that was] before we even had the algorithm reading the notes. So even controlling in here at the time the idea of absolutely properly surfacing the risk that is out there was really frightening to people. Because you have worked in an industry that has never been able to do it [detect risk] before [. . .] And, you know, they would say, ‘Oh, yeah, I believe in transparency, I believe in this’. And then we’d show them [. . .], and they go, ‘Oh, I didn’t want to be that transparent’. (Participant 1, C-suite, Platform organisation)

The tensions around surfacing previously invisible risks stemmed from the relationship between technology, regulatory regimes and the nature of risks themselves. Having increased awareness of clinical and environmental risks increased organisational responsibility – creating new, potential liabilities if not acted on. Across those organisations using risk algorithms, we found that there were conflicting orientations. One manager explained how this revealed an implicit culture of blissful ignorance across their organisation that had to be addressed for the responsible use of algorithms: It took us a long time to figure out how to use it [the risk algorithm]. There was definitely a reticence in our business because there’s also sometimes, as much as people wouldn’t want to say it, there’s at times a culture of ‘I don’t really want to know too much’. And these types of technologies give you the ability to see more. (Participant 9, C-suite, Platform organisation)

Some managers, however, saw these increased uncertainties and changes as an opportunity to de-stigmatise aspects of organisational culture such as negative connotations around incident reporting, framing the use of algorithmic technologies optimistically: The stigma is ‘incidents’ as a negative thing. We’re seeing it not as a negative thing. This is a positive thing. Yes, generally a positive thing that people, especially employees, are open to communicating to an employer about something that might have gone wrong, potential to have gone wrong and hopefully push it towards the proactive state of all employees here go ‘I can actually ask them and talk about this rather than feel that there’s going to be negative implications if I raise anything with them’. (Participant 10, C-suite, Platform organisation)

The quote above reflects the idea that risks produced through technological change can prompt reconfigurations of values (Giddens, 1999). Although participants from other organisations faced similar emerging risks stemming from algorithmic technologies, there were slight differences in how and what values and responsibilities became negotiated across their organisations. Some participants placed emphasis on how emergent risks produce an opportunity to renegotiate an organisation’s responsibilities around incident reporting obligations. Others, instead, emphasised the tensions produced when blissful ignorance becomes displaced, and how to negotiate these tensions. Together, this highlights that algorithmically enabled risk identifications bring about tensions between external liability risks for falling out of compliance, and organisational risks associated with the discomfort produced by algorithms making the volume of incidents more recognisable.

From the lens of care theory, the processes that enable and constrain what becomes knowable in day-to-day experiences shape what actors can become responsible for (de La Bellacasa, 2017), which extends beyond human-to-human care. An unintended consequence of algorithmic management in homecare was the discomfort produced by making ‘new’ risks knowable. Most managers interviewed approached the increased responsibility optimistically and viewed dealing with these risks as necessary to meet the increased compliance needs of incident reporting and the changing nature of the industry. This was driven in part by the growing demand for homecare, which requires the delivery of care at scale, leading managers to reach towards algorithmic solutions. From a more critical stance, the interpersonal aspects of how care is traditionally understood are backgrounded in service of reaching organisational goals and duty-of-care obligations.

Discussion

This article set out to examine how algorithmic management is (re)shaping understandings of risk across Australian homecare. Focusing on senior managers, we showed how they come to recognise (care about) and responsibilise (care for) algorithmically identified risks – with our focus mainly on the use of so-called ‘risk’ algorithms. As de La Bellacasa (2017) reminds us, caring consists of practices that repair and sustain worlds that are not solely reserved for interpersonal care. In sustaining risk as a central matter of care, managers contribute to a hardening of individualised values due to processes foregrounding the knowability and management of risk (Petersen, 1997). Our work makes three contributions. First, we revealed how managers’ views of risk are influenced by how algorithmic management is increasingly woven into the industry. Second, by integrating the sociology of risk and care theories, we showed how risk is increasingly foregrounded as a matter of care influencing what managers care about and for. Third, by examining how algorithmic management practices proliferate and affect the homecare industry, we extend empirical insights beyond the dominant food-delivery and rideshare contexts and highlight the importance of regulatory pressures in shaping how this technology is used.

By exploring managerial perspectives in homecare organisations, we were able to generate insights into how algorithmic technology reshapes managerial perspectives of how risk is visibilised and responsibilised in homecare. Our findings highlight the importance of regulatory context around the nature and deployment of algorithmic management. Where earlier research has highlighted the limited regulatory enforcement with regards to improving the conditions of care workers (Pulignano et al., 2023), our exploration of the regulated government-funded segments of homecare in Australia shows that attendance to risk was a key managerial concern due to the sector’s regulatory apparatus. Here, the existing regulatory framework lends itself to using algorithmic management technologies to optimise the recognition and management of risk at the level of individual cases (Dean, 1998; Lupton, 2006). Yet, we also highlight how this attention to risk was framed as a harm-reduction tool, caring about risk through algorithmic management practices to attend to care recipients and to a lesser extent care workers. This is not to say that algorithmic technologies reduce harm or pose no technological risk; rather, harm reduction was the motivation espoused by managers. Future care recipient and support worker-focused research will be needed to determine whether these technologies actually reduce harm from the perspective of these critical stakeholders and to what extent the algorithmic management of risk is conducive to interpersonal care.

By bringing the sociology of risk and contemporary care theory together, we have shown how risk is not only made visible but actively managed within homecare through algorithmic management, foregrounding risk as something to be cared about and for. Traditionally, the sociology of risk focuses on how risk is made knowable and who bears responsibility for its management (Giddens, 1999). Recent work has shown how workers in the platform economy make sense of work-related risks (Gregory, 2020). We extend this debate, showing that when using algorithmic processes to optimise how risk itself is detected, predicted and reduced, organisations redefine how care is being practised, and how managers make sense of organisational responsibilities. Rather than centring on interpersonal relations, care becomes oriented towards the management and control of uncertainty, a shift that relocates what is cared about and for in more-than-human terms (de La Bellacasa, 2017). This transformation is deeply tied to market logics, where risk is not just managed but sustained and amplified through algorithmic processes (Macdonald, 2021). Consequently, risk becomes entrenched as a matter of care, reinforced through managerial assumptions, technological infrastructure and regulatory frameworks. While previous research has shown that algorithmic management reshapes organisational and worker identities (Sullivan et al., 2024), our findings suggest a deeper sociological shift in homecare organisations, one where algorithmic management influences what individuals recognise and become responsible for when providing care. This opens further avenues to theorise risk as a matter of care, inviting reflection on whether algorithmic logics harmonise or come into tension with an ethos of human-to-human interpersonal care that has long defined the homecare sector.

Previous research has provided much-needed theoretical insights into how digitally mediated platforms affect the relationalities of care underpinning care worker and recipient engagements (Khan et al., 2023). We extend this theoretical debate by situating care within the more-than-human assumption that ideas and concepts can become a subject of care through how they are made recognisable and materially attenable in the world (de La Bellacasa, 2017). In connecting the sociology of risk with this more-than-human approach to care, we demonstrate how the use of algorithmic technologies facilitates making risk recognisable and subject to care. Hence, in bringing together the sociology of risk with more-than-human care, we provide a theoretical lens to examine how this risk-centred care is accomplished.

Algorithmic management across homecare organisations is shaped by internal organisational and external environmental pressures, including compliance and social expectations which shape what algorithmic management functions become possible in homecare. This was evidenced by organisations adapting algorithmic practices to fit the compliance needs around incident reporting expectations (NDIS Quality and Safeguards Commission, 2019). Considering the ongoing regulatory debates in Australia and other countries (Franke et al., 2023; Macdonald, 2021), our findings are a timely reminder for policymakers and regulators that regulations can and do shape the nature and purpose of these systems.

In homecare, due to the nature of the work performed, the vulnerabilities of care recipients and the technological risks associated with algorithmic management, managers argued that there was a need for proper human oversight, which in practice was enacted using co-design processes and human-in-the-loop configurations. Co-design is considered central to best practice in the design of new technologies for risk-related decision-making dashboards (Ludlow et al., 2021). Concerning human-in-the-loop configurations, it could be reasoned that these are necessary for the use of algorithmic technologies to maintain at least some level of human oversight in an increasingly mechanised process and avoid rendering interpersonal care and care work further invisible through technological practices (Glaser, 2021). More critically, with concerns of technological erasure (Glaser, 2021) and the erosion of interpersonal care in the service of care that can be algorithmically assessed, there are opportunities to further explore the appropriateness of algorithmic practices in homecare, and what these mean for interpersonal care in an increasingly digitalised world. For instance, with multiple meanings and interpretations of ‘care quality’ being a feature of care-related industries (Renieris et al., 2023), and assumptions involving the importance of data in achieving quality care (Ludlow et al., 2021), there is an opportunity for future research to examine what vision of quality-of-care is represented in data, whose perspectives are amplified and backgrounded, and to what degree different stakeholder views of quality care are reconcilable as data objects.

We note that while managers framed their perspectives as well-intentioned and driven by improvements to the wellbeing of stakeholders, the use of technology was not altruistic and reflects a level of self-interest. The algorithmic screening of case notes, for instance, reduces manual handling and labour costs. The technology helps homecare organisations to operate more efficiently and meet their compliance obligations. While co-design and human-in-the-loop practices were framed as reducing harms associated with algorithmic practices, the use of these safeguards also enabled homecare organisations, including their directors, to comply with their duty-of-care responsibilities – an obligation placed upon them by the relevant regulatory frameworks. This somewhat highlights the challenges in existing best practices associated with multiple safeguards to technological pitfalls (Renieris et al., 2023) but equally shows that lessons for other sectors can be learned from homecare.

Limitations and future research

Our work is not without limitations. First, this was a ‘small-n’ study, meaning that analytical refinement was the goal of this research, backgrounding generalisability as a result (Tsoukas, 2019). Second, our research foregrounded the understudied perspective of managers to advance algorithmic management scholarship (Veen et al., 2020). This focus has rendered other important voices, including care recipients and support workers, beyond the scope of this article. This omission is particularly important given the gendered nature of care work. Future research could explore how algorithmic management is affecting the gendered nature of care work, including how gender dynamics affect the technological design and oversight of these systems. We are also aware that a single theory of care does not wholly represent the complexities at play in how care is enacted and embodied (de La Bellacasa, 2017). Thus, we only provide one angle describing how care is enacted and located by managers.

Future research may also wish to interrogate humans-in-the-loop as a proposed solution to the problems of algorithmic practices, since the effectiveness of this fix to unchecked algorithms remains subject to debate (Sullivan et al., 2024). In homecare, this solution raises questions about how much influence algorithms have over human overseers; what role support workers play in these systems as producers of input data, and how this affects workers’ emotional labour (Baines et al., 2021); and how processes of human-in-the-loop may transform or distort the concept of person-centred care that dominates social care policymaking (Baines et al., 2021).

Alternatively, closer examination of humans-in-the-loop, drawing on theoretical literature that looks beyond rational, instrumental strategies for managing risk and uncertainty to the experiential and intuitive (e.g. Zinn, 2008), could reveal avenues to ensure relational aspects of care are not diminished alongside digitalisation. In the case of making risk quantifiable and communicable to an overseer, what data are included or excluded for risk categorisations may shape what decisions become available to humans-in-the-loop, what becomes recognisable as ‘a risk’ and who is seen as ‘at risk’. Hence, future research could focus more closely on what becomes reinforced or atrophied across increasingly digitalised homecare sectors and how algorithmic management is transforming where care is located. Looking ahead, the broader proliferation of artificial intelligence, robotics and emergent digital technologies will continue to transform how care and risk are understood, operationalised and responsibilised, offering fertile ground for future inquiry into the evolving intersection of automation and relational care practices.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project received funding from the Australian Research Council’s Discovery Early Career Researcher Award (DECRA) scheme, project number DE210100368. Dr Veen’s contribution was also partially supported by a University of Sydney Business School Emerging Scholar Research Fellowship.