Abstract

While research on platform work has grown exponentially in recent years, the power dynamics between creators and algorithms on digital platforms, as well as their role in shaping online visibility, are yet to be fully understood. Against this backdrop, we ask: How does algorithmic power maintain its dominance and shape the nature of work for content creators? Through a systematic review of the literature on the relationship between algorithms and content creators, this article identified four core themes, namely: (i) market rationality underpinning visibility, (ii) potential power dislocation caused by folk theories, (iii) neo-normative control of creators through algorithms and (iv) subversion of beatific fantasies. Drawing from Tirapani and Willmott’s framework to theorise the power relations framing interactions between algorithms and creators, we argue that the fantasies fabricated by neoliberalism justify, endorse and ultimately support the dominance and dynamic power of algorithms over creators in content creative platforms.

Introduction

Algorithms have emerged as a critical component of management on digital platforms, implicated in the exploitation of workers (Liang et al., 2022; Vallas and Schor, 2020) and the control of economic transactions between workers and customers (Rosenblat and Stark, 2016). Initially portrayed as offering a high degree of flexibility (Wood et al., 2019), platform work is increasingly framed by highly segregated work situations (Wood et al., 2019), unstable working hours (Liang et al., 2022), competitive environments (Murillo et al., 2017) and unequal power distribution (Shanahan and Smith, 2021). These negative features undermine the open employment relationship and flexible working conditions promoted by digital platforms (Kalleberg, 2011), affecting a broad range of individuals – including content creators.

‘Content creator’ is an umbrella term encompassing a diversity of users, from prominent influencers to individuals with ‘low-key’ channels. Content creators are individuals participating in digital cultural production, generating economic value and involved in labour relations within platforms (van Dijck, 2009). Platforms, such as X, YouTube or Facebook, enable users to distribute and potentially monetise content as a service or product (Marwick, 2013). Compared with workers in more widely recognised gig work, typically referring to platform-mediated, short-term and unstable work (Liang et al., 2022), content creators are required to invest significantly more creativity in their work. They must take responsibility for every aspect of their job to gain visibility, from content production to managing business-related matters (Hancock et al., 2021); creators thus operate like independent business owners with significant autonomy over their work (Kost et al., 2020). Conversely, gig workers typically perform narrowly defined tasks, with their work content and pace largely dictated by the demands of clients and under strict algorithmic control (Wood et al., 2019).

While content creation is regarded as flexible, liberating and ‘cool’, content creators are comparable to gig workers in terms of non-standard employment, app or platform-based engagement and algorithmic control (Veen et al., 2020). Unlike many gig jobs where tasks are set through algorithms, creativity – at the core of content creation – incurs a high level of uncertainty (Duffy et al., 2021). Content creators rely on quantified algorithmic metrics (views, likes and shares) to anticipate demand and predict potential success (Napoli, 2014), making visibility a critical yet contingent factor in content creation. If algorithmic control in organisations helps managers fortify the control they hold over the labour process (Kellogg et al., 2020), in the context of platforms, it is both a means of supervision and management through customers (Duggan et al., 2020; Wood et al., 2019), with visibility serving as a form of governance. In response, creators have developed counter-narratives questioning the fairness of algorithms (Bishop, 2019) and tried to ‘reverse engineer’ them (Haenlein et al., 2020). Yet, these acts of resistance fail to challenge the hegemony of platforms and materialise real change, hinting at complex power dynamics between creators and algorithms. Importantly, we still lack a clear understanding of the process of content creation in the context of digital platforms, the power dynamics between creators and algorithms and the impact of algorithms on the work of digital content creators, particularly in shaping visibility.

This article aims to address this gap through a comprehensive, systematic literature review on the relationship between algorithms and content creators. Examining research on content creators in an algorithmic environment, we ask: How does algorithmic power maintain its dominance and shape the nature of work for content creators? Power can be defined as the implied authority derived from the influence of ideas, economic strength, culture and so on. It encompasses the systematic manipulation of behaviours and the establishment of hegemony through both conflict and consent, ultimately conferring advantage to one group over another (Dowding, 2012). Our article has three main objectives: (i) to provide an overview of research on the development of content creation across different digital platforms over time; (ii) to conduct an in-depth analysis of the complex interplay between content creation and algorithmic management; and (iii) to gain an understanding of the power dynamics at play within this working environment, thus laying the foundation for future research into content creative platforms.

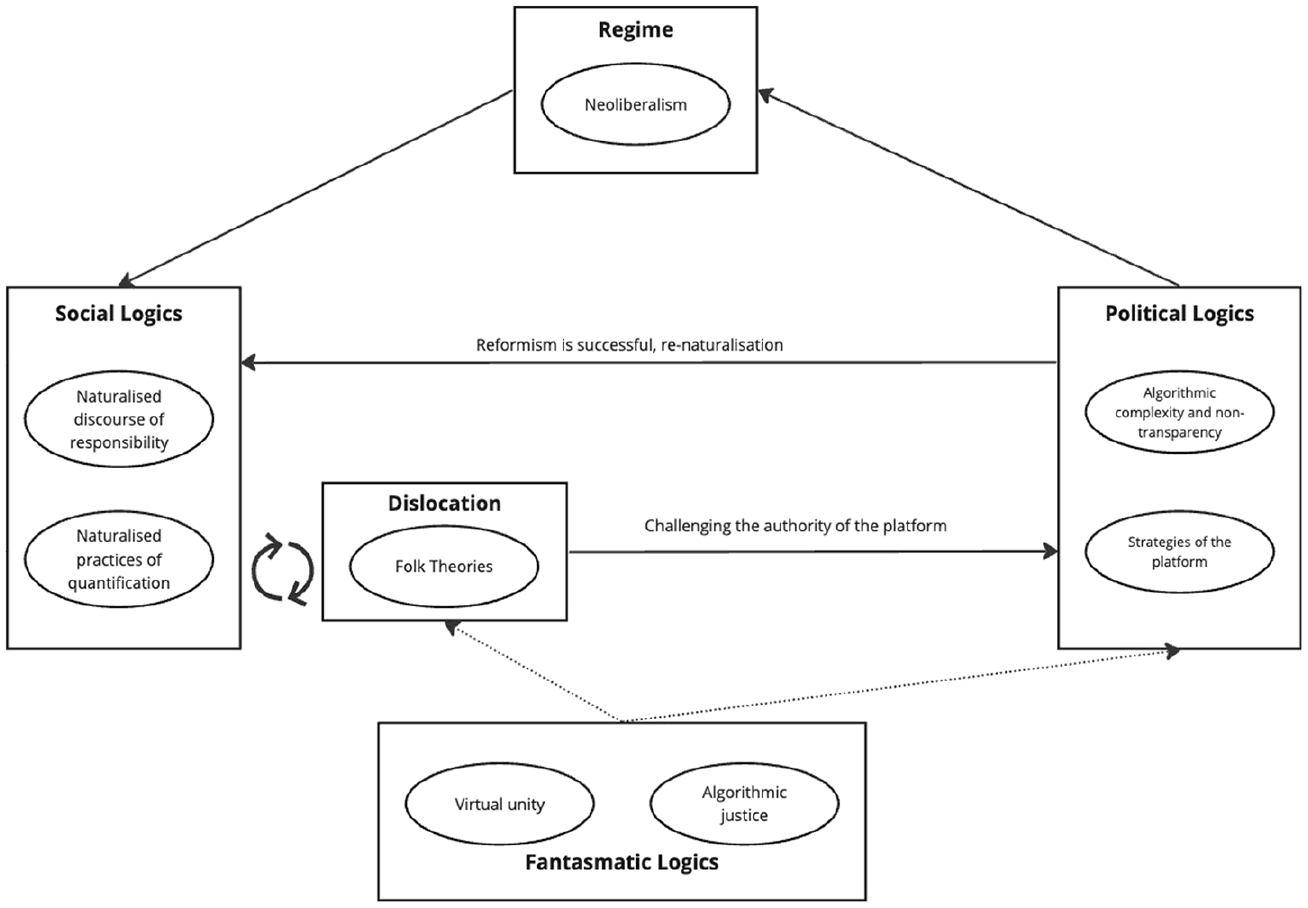

We identified four key themes that frame existing discussions on content creation in an algorithm-mediated digital environment. To further theorise the dynamic but asymmetrical power relationship framing interactions between algorithms and creators, this article draws from Tirapani and Willmott’s (2023) conflict Logics framework. This provides a dynamic understanding of work conflicts under neoliberalism (understood as a system favouring deregulation, free markets and competition), where the expression of discontent appears in conjunction with fantasies around work (Tirapani and Willmott, 2023). The framework acknowledges the ever-present tension between lived experiences of workers and the fantasies of self-entrepreneurialism, and the moderation of radical conflict by these fantasies. Mobilising this framework, we contend that neoliberalism promotes, advocates for, and rationalises the dominant and dynamic power of algorithms over content creators within content creative platforms. In this context, in pursuit of greater visibility, creators attempt to reverse-engineer algorithms and develop folk theories to manipulate them; this is part of a continuous process of dislocation where social logics are denaturalised. Most dislocations are mitigated through the influence of fantasmatic logics, which work to maintain platform stability by masking discontent and preserving ideological control. However, others resist such mitigation and instead pose challenges to the platform’s hegemonic ideological control, potentially destabilising its dominant narrative. Platforms, leveraging their authority, strategically neutralise and re-naturalise these conflicts, suturing them back into the prevailing social logics.

This article is structured as follows. The next section reviews the literature on algorithms, algorithmic management and content creation work in algorithmic environments. This is followed by a presentation of Tirapani and Willmott’s (2023) framework. The methodological approach underlying our systematic review is outlined in the fourth section. The fifth synthesises the existing literature through the four themes aforementioned. In the sixth section, we theorise the power relationship between creators and algorithms through Tirapani and Willmott’s (2023) framework, provide directions for future research and outline limitations. Finally, the conclusion reiterates the main contributions of the article.

Algorithms and content creation

Algorithms and algorithmic management

Algorithms are technological procedures driven by computer programs that utilise data inputs for targeted outcomes, primarily focusing on efficiency, profit generation and advancement (Kellogg et al., 2020). The concept of algorithms is traced to the emergence of computer science with step-by-step computer programming. Computer scientists, software designers and machine learning practitioners use ‘algorithm’ as an insiders’ term with specificities that elude non-specialists (Dourish, 2016) and which are outside this article’s scope. Algorithms are used for data mining (Boyd and Crawford, 2012; Dourish, 2016) and structuring (patterns of) information (Bucher, 2012). They also provide data for mechanical decision-making (Bishop, 2018; Dourish, 2016; Velkova and Kaun, 2021), task-achieving (Duggan et al., 2020; Kellogg et al., 2020) and problem-solving (Dourish, 2016; Neapolitan and Naimipour, 2010). Their efficiency is lauded, notwithstanding potential biases (see Kordzadeh and Ghasemaghaei, 2022).

With the rise of algorithms in the age of social media and the platform economy, their technical impacts on social realities are increasingly salient. Many articles document the socio-technical consequences of algorithms (Boyd and Crawford, 2012; Bucher, 2012; Velkova and Kaun, 2021), conceiving of them as technologies, processes or practices framing and shaping social structures. Citron and Pasquale (2014) conceptualise algorithms as scoring systems technically ranking individuals in numerous aspects of their existence in order to make predictions. In management research, scoring systems combining task distributions act as a form of managerial control in which remote workers respond to individual demands through algorithms (Dourish, 2016; Duggan et al., 2020; Kellogg et al., 2020). To achieve this, algorithms match workers and customers without recourse to (human) managerial involvement (Wood et al., 2019). The system alters the traditional control of customer-oriented management strategies via the ranking system (Wood et al., 2019), and techno-normative control through peer pressure and emotional labour (Gandini, 2019). Through the reputation systems that produce evaluation metrics, algorithmic management regulates the conduct and performance criteria for workers by collecting and monitoring their work data (Duggan et al., 2020).

Content creation in an algorithmic environment

A key aspect of algorithmic management in content creation is the use of visibility, but this is easily overlooked and rarely addressed explicitly in discussions about algorithmic control. While algorithms tend to be more flexible on content creative platforms, there are commonalities with those on ‘regular’ gig work platforms (Bishop, 2019; Velkova and Kaun, 2021). Here, attention is given not only to the algorithm itself (Duggan et al., 2020), but also to metrics, recommender systems and other representations of algorithms (Goldenberg et al., 2012). Algorithms have socio-technical impacts because they structure communications through which regimes of visibility become materialised (Bucher, 2012; Velkova and Kaun, 2021). Algorithms make decisions on who should see what (Bishop, 2019; Bucher, 2012; Haenlein et al., 2020; Velkova and Kaun, 2021), via complex calculations based on multiple metrics (Bucher, 2012). The dominance of certain types of content, in terms of popularity, has significant impacts on the avenues for success available to aspiring content creators (Bishop, 2018). The actual functioning of the algorithm is opaque and complex, constituting a ‘black box’ (Bishop, 2018, 2019; Bucher, 2012; Dourish, 2016), prompting creators to construct their own interpretations of it (Haenlein et al., 2020).

Like other forms of platform-mediated work, digital content creation is associated with hyperbolic and positive characterisations (Liang et al., 2022). Control over creators through algorithms occurs within the labour process, specifically in terms of expected outcomes before content creation and the metrics observed afterward (Napoli, 2014), helping to maintain the impression of creators as entrepreneurs with autonomy over their work (Kost et al., 2020; Wood et al., 2019). Research shows that content creators face significant pressures due to the unpredictability of creativity generation, of the emergence of ‘hot’ topics and the uncertainty of platforms’ policies, which may require them to work out-of-hours or engage in extensive unpaid meta work to ensure the professionalism and continuity of their content (Arriagada and Ibáñez, 2020; Liang et al., 2022; Nemkova et al., 2019; Wright, 2015). This creates a platform culture where creators are ‘always-on’.

Additionally, creators must undertake other professional activities to make ends meet due to the difficulty of obtaining returns in the early stages of content creation-related work, or the delay in distributing returns (Kost et al., 2020; Wright, 2015). These pressures are closely related to algorithms that achieve soft control over creators by managing the visibility of content (Bishop, 2019; Petre et al., 2019). From this perspective, visibility appears to act as a currency symbol or proxy, leading to the few best performing creators achieving elevated visibility levels (Frenken et al., 2015). To obtain higher visibility, creators attempt to manipulate the algorithm according to their own understanding, which inevitably influences their behaviour (Bishop, 2018). Control over their work remains elusive for most (Nemkova et al., 2019). Against this backdrop, we ask: How does algorithmic power maintain its dominance and shape the nature of work for content creators? Before presenting our methodological approach, we outline the article’s theoretical framework.

Theoretical framework

Tirapani and Willmott (2023) draw from Glynos and Howarth’s (2007) Logics of Critical Explanation to analyse ‘the relationship between social structure, human subjectivity and power dynamics’ (Howarth, 2013: 6–7), focusing on conflicts between workers and platforms in the gig economy. Tirapani and Willmott’s (2023) framework, which seeks to explain why, in practice, the structure of labour–capital relations is reinforced rather than altered, lends itself particularly well to exploring the complex and multifaceted power dynamics between creators and algorithms. First, the framework facilitates an analysis of how algorithms exert power over the work of creators through technological means that carry wider political and social resonance. Second, it captures how creators navigate, adapt to, and resist algorithmic power, rather than framing them as passive subjects. Finally, the framework’s extensibility allows for its application beyond ‘regular’ gig workers and, in our case, to content creators who encounter specific challenges related to visibility, audience engagement and unpredictability. This, in turn, allows us to account for the asymmetrical yet fluid power relations that characterise the algorithmic environment in content creation. The framework is articulated around five key concepts (regime, social logics, dislocation, political logics and fantasmatic logics), which we now explain.

According to Tirapani and Willmott (2023), a regime reflects social structures embedded in hegemonic political practices that mobilise sources of power, which, in the gig economy, manifest as neoliberalism. Social logics uphold norms and governance of regimes, serving to reduce chaos and maintain order through contextual self-interpretation. The gig economy perpetuates the order by promoting a self-perception among workers as independent entrepreneurs, thereby legitimising their exclusion from conventional employment rights. Dislocation is an ongoing process, which occurs when social practices or institutions are incomplete, creating a sense of instability. Successful dislocations can activate political logics, leading to ‘suturing’ (stitching/holding together) – when efforts are made to restore stability by highlighting the benefits of flexibility, or, potentially, radical transformation – when gig workers seek to challenge the instability of their contractor status. These political logics aim either to re-naturalise social logics after a dislocation or to transform them fundamentally. For instance, gig workers’ awareness of their precarious status may trigger efforts to restore stability by emphasising the advantages of flexibility or potentially ignite radical shifts as workers push for recognition as employees. Finally, fantasmatic logics represent the most innovative element within the framework, providing continuous emotional impetus for either sustaining or challenging established systems of practice. Some gig workers may hold onto the illusion of freedom and entrepreneurship, while others may push for more significant change in the light of injustice. Fantasmatic logics serve to mitigate the conflict brought about by dislocation, preventing it from disrupting political logics in most instances. However, unusual dislocations can challenge the status quo and question platform authority. 
Such challenges may be neutralised by established political logics, which re-naturalise the dislocation, allowing social logics to persist.

For Tirapani and Willmott (2023), econormativity is the way in which neoliberal economic systems reproduce the social order by normalising market-centred values and practices. This process is driven by individualisation and sustained by hegemonic ideology, leading workers to internalise competitive, market-driven behaviour as natural and beneficial, maintaining system stability. Individualisation operates as a social logic, whereby responsibility for success and failure is placed on individuals. For example, as gig workers internalise the belief that they are entrepreneurs, they become less likely to join collective actions or unions, perceiving their successes and setbacks as individual rather than systemic. Individualisation has two main manifestations: responsibilisation and quantification. Responsibilisation entails behaving as though content creative platforms operate within a fiercely competitive environment governed by economic principles (Shamir, 2008), while quantification helps to facilitate this shift by providing usable metrics (Tirapani and Willmott, 2023). This involves the widespread use of self-tracking technologies to meticulously document, assess and quantify the labour process. These are observable in the development of applications aimed at personalising work engagement (e.g. food delivery platforms tailor work to individual availability and location) while glossing over the reality of collective participation in the labour process (Tassinari and Maccarrone, 2020). In addition, these apparatuses operate as political logics that respond defensively (suture mode) to social dislocations to prevent subversion (Tirapani and Willmott, 2023). For example, food delivery platforms often respond to challenges with minor adjustments, like temporary pay increases or flexibility allowances, to restore stability without addressing deeper systemic issues.

Meanwhile, hegemonic ideology supports this process by portraying neoliberalism as the rational and effective way to organise work and society (Tirapani and Willmott, 2023), leading to a situation where alternatives are excluded. It is manifested through universalism and disembeddedness, which also serve as a political logic for suturing conflicts (Tirapani and Willmott, 2023). Universalism rests upon assumptions of boundaryless freedom, while disembeddedness reflects the illusion of an institutional separation of economic and social relations. As such, hegemonic ideology also manifests as fantasmatic logics that sustain the established order (Tirapani and Willmott, 2023). When disruptions arise within the algorithmic regime, these are obscured by beatific fantasies (idyllic visions of societal development), thereby averting radical conflicts (Tirapani and Willmott, 2023). For example, beatific fantasies on food delivery platforms emphasise autonomy and universal market principles, suppressing demands for change and thereby maintaining the neoliberal status quo.

Methodology

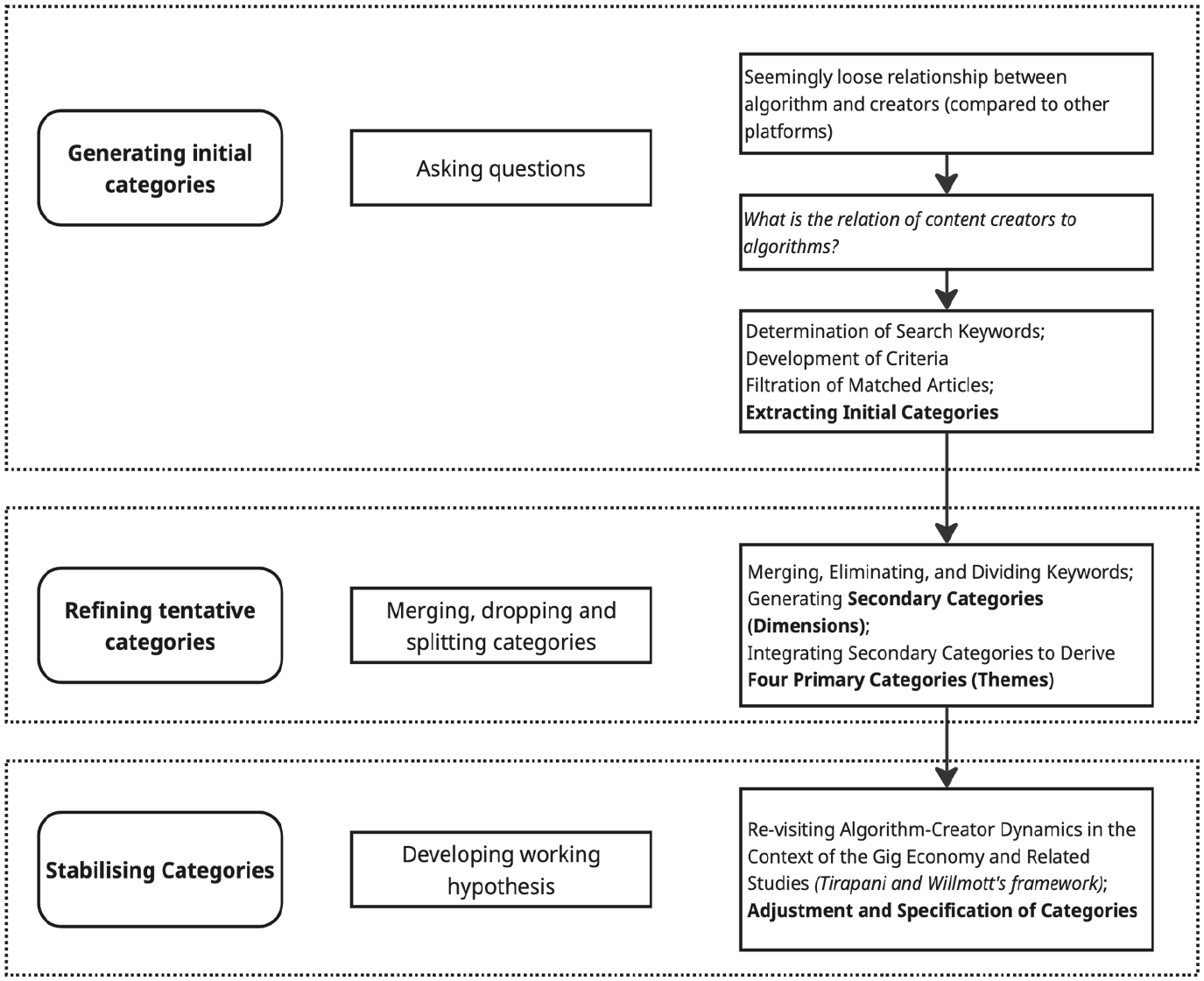

This article adopted a theory-building approach, extensively used in qualitative research (e.g. Cini, 2023), to explore algorithms and content creation on digital platforms. Inspiration was drawn from the three-step active categorisation framework of Grodal et al. (2021), understanding that the move from data to theory should be a dynamic process, subject to ongoing, active refinement by researchers. Figure 1 outlines this process.

Figure 1. Analytical process.

Generating initial categories

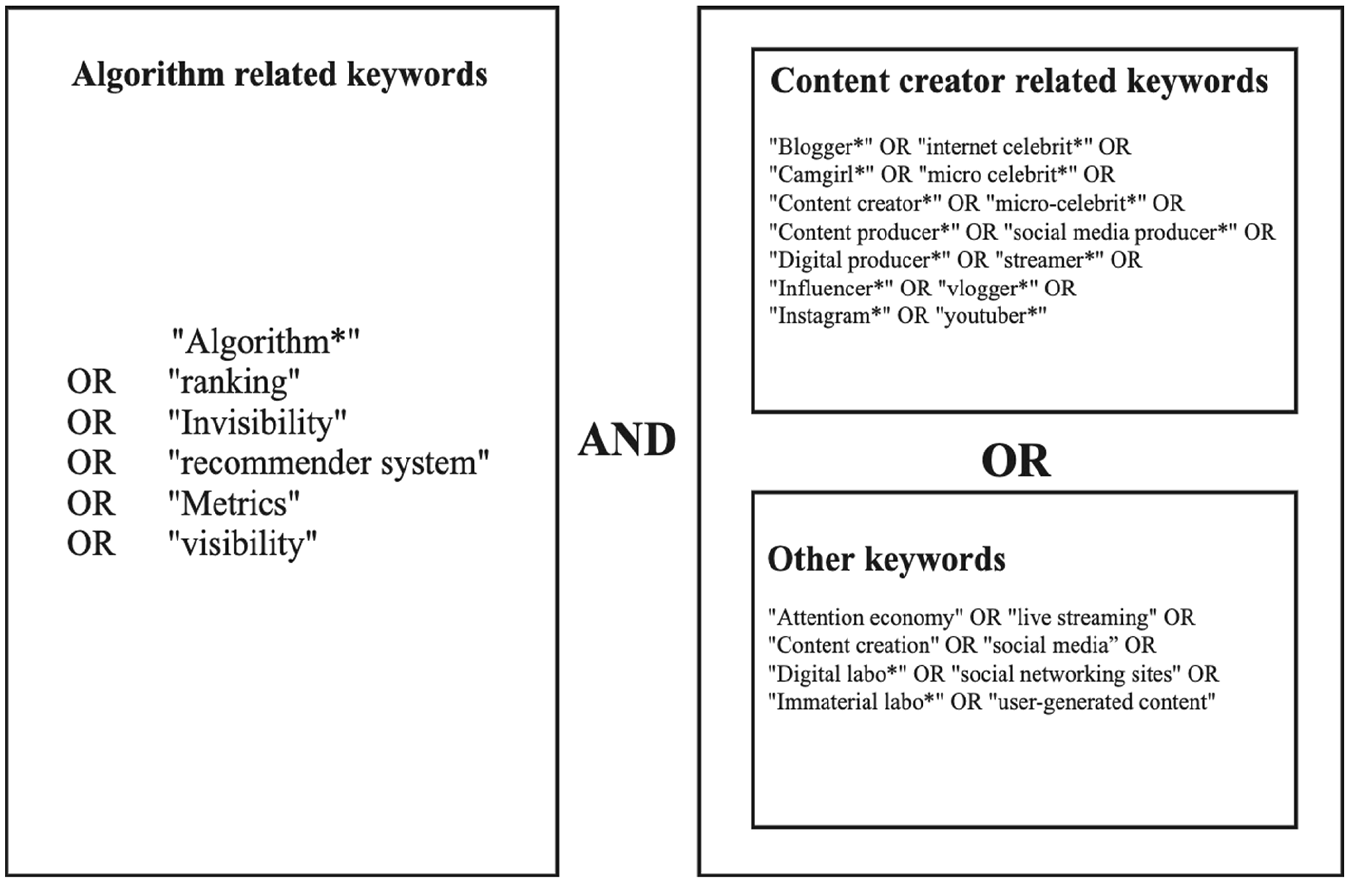

We started the research with some expertise in content creation in the gig economy and an interest in examining the relation between creation and algorithms. Compared with workers in other areas of the gig economy, content creators seemingly have a loose connection with algorithms. It appears that creators enjoy greater autonomy, encompassing not only the ability to choose when and where to work but also what work to undertake – the content they create. Thus, our inquiry began with a fundamental question: What is the relation of content creators to algorithms? Addressing this question, we performed a systematic literature review, using the most recognised databases in business and management, namely Scopus, Web of Science and EBSCO Business Source Ultimate. The key search terms are presented in Figure 2.

Figure 2. Search terms used in databases.

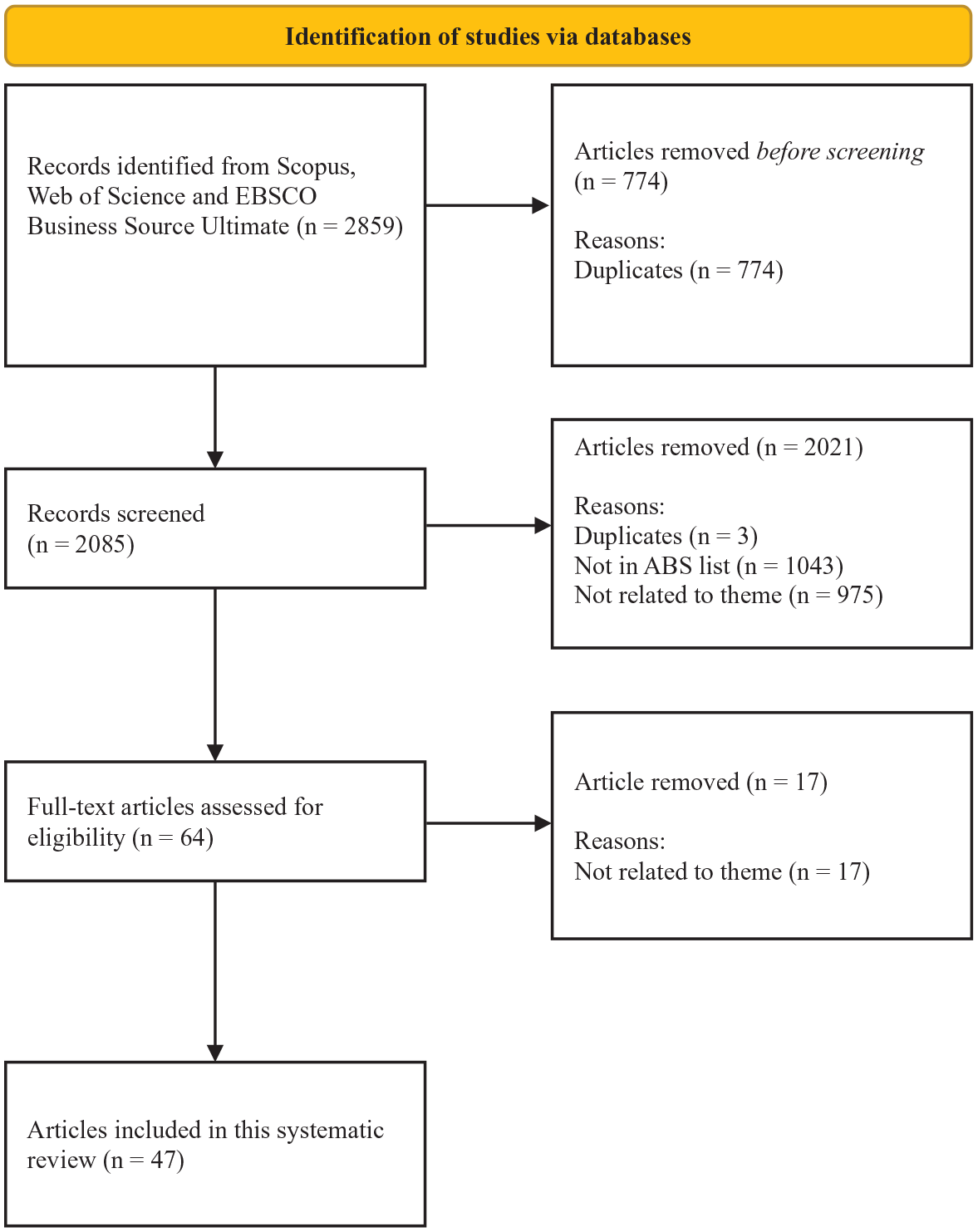

We searched for these terms in the keywords, abstracts, titles and texts of articles published in double-peer-reviewed journals. Formal exclusion criteria, including language (language diversity among the authors meant non-English articles were excluded), as well as article type (to ensure consistency and quality, working papers, announcements, proceedings, dissertations, books and book chapters were removed from the selection process), were employed, resulting in 2859 articles. After removing duplicates, the sample size was reduced to 2085. To ensure the consistency and relevance of our analysis, articles in journals not included in the Chartered Association of Business Schools 2021 list were excluded, in line with common practice for systematic reviews. Additionally, three further duplicates were identified and removed at this stage, reducing the sample to 1039 articles.

In the second step, the authors acted as independent coders to determine the thematic relevance of the articles based on the title, abstract and keywords. In this process, two key inclusion criteria were used: (i) algorithms used by the platform (e.g. ranking, etc.) are well-defined and constitute the core content of the article; (ii) content creators constitute the main actors in the article. In so doing, articles in which algorithms and content creators were not central features were excluded. The authors screened the articles simultaneously to ensure the accuracy of the results. Articles about which there were doubts were discussed by the authors.

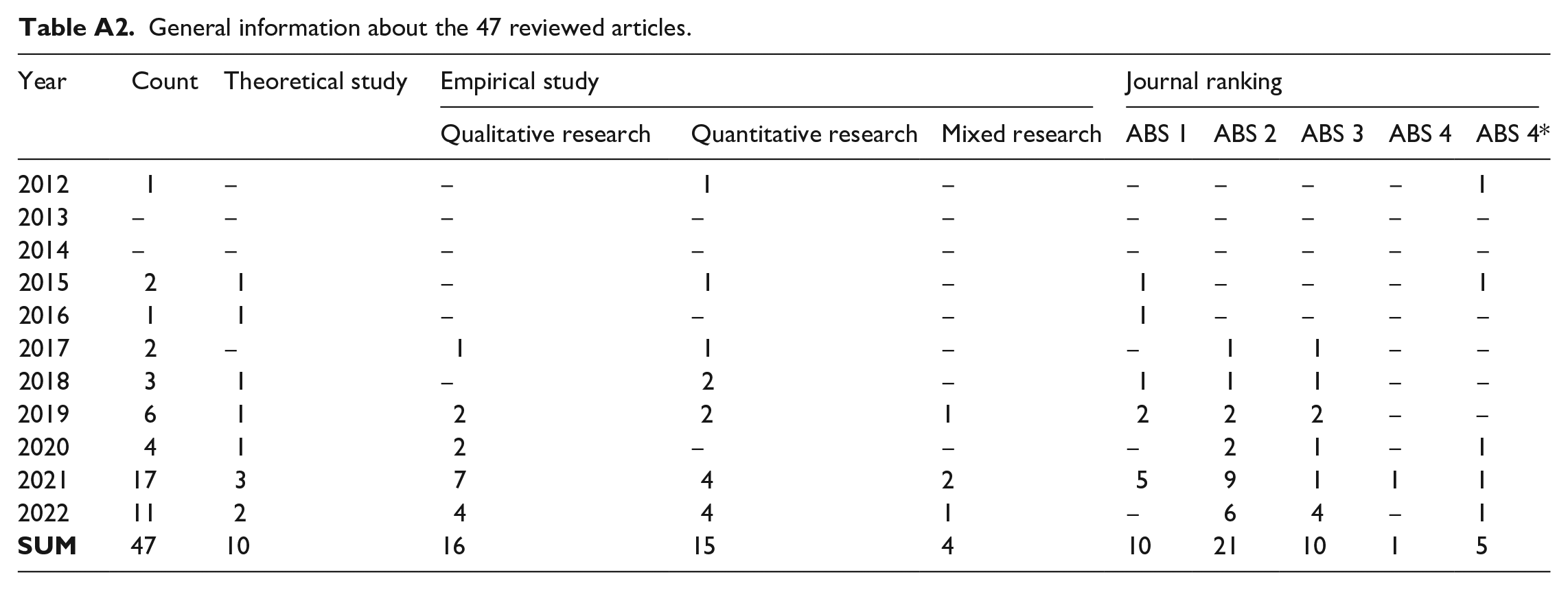

Through this process, 955 articles in which algorithms were not well-defined and 20 articles unrelated to content creators were excluded. A final round of screening was then performed on the remaining 64 articles. The authors read the full articles separately to further determine whether the articles met the two key criteria outlined above. We finally retained 47 articles as our sample after excluding 17 articles not sufficiently related to our core themes and one article identified as an essay. Figure 3 provides an overview of the searching and filtering processes. Further information about the articles is provided in the Appendix.
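The counts reported for the search and screening stages can be tallied in a short script. This is a minimal sketch for checking the arithmetic only, not part of the review protocol; the function and key names are illustrative, and it assumes that the article identified as an essay is counted among the 17 full-text exclusions, which the text does not state explicitly.

```python
# Tally of the screening funnel, using only the counts reported in the text.
# All names below are illustrative and not taken from the review protocol.

AFTER_FORMAL_CRITERIA = 2859   # after language and article-type exclusions
AFTER_DEDUPLICATION = 2085     # after removing duplicates
AFTER_JOURNAL_FILTER = 1039    # after journal-list filter and 3 further duplicates
FURTHER_DUPLICATES = 3

# Exclusions during title/abstract/keyword screening
ALGORITHMS_NOT_WELL_DEFINED = 955
UNRELATED_TO_CREATORS = 20

# Exclusions during full-text screening.
# Assumption: the one essay is counted within these 17 articles.
FULL_TEXT_EXCLUSIONS = 17


def check_funnel():
    """Return the counts implied by the reported figures at each stage."""
    duplicates_removed = AFTER_FORMAL_CRITERIA - AFTER_DEDUPLICATION
    removed_at_journal_stage = AFTER_DEDUPLICATION - AFTER_JOURNAL_FILTER
    removed_by_journal_list = removed_at_journal_stage - FURTHER_DUPLICATES
    after_abstract_screening = (
        AFTER_JOURNAL_FILTER - ALGORITHMS_NOT_WELL_DEFINED - UNRELATED_TO_CREATORS
    )
    final_sample = after_abstract_screening - FULL_TEXT_EXCLUSIONS
    return {
        "duplicates_removed": duplicates_removed,
        "removed_by_journal_list": removed_by_journal_list,
        "after_abstract_screening": after_abstract_screening,
        "final_sample": final_sample,
    }


if __name__ == "__main__":
    for stage, count in check_funnel().items():
        print(f"{stage}: {count}")
```

Under this assumption the tally reproduces the 64 articles carried into full-text screening and the final sample of 47. If the essay were instead counted in addition to the 17 exclusions, the same arithmetic would yield 46.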

Figure 3. Flowchart of the systematic search process.

Refining tentative categories

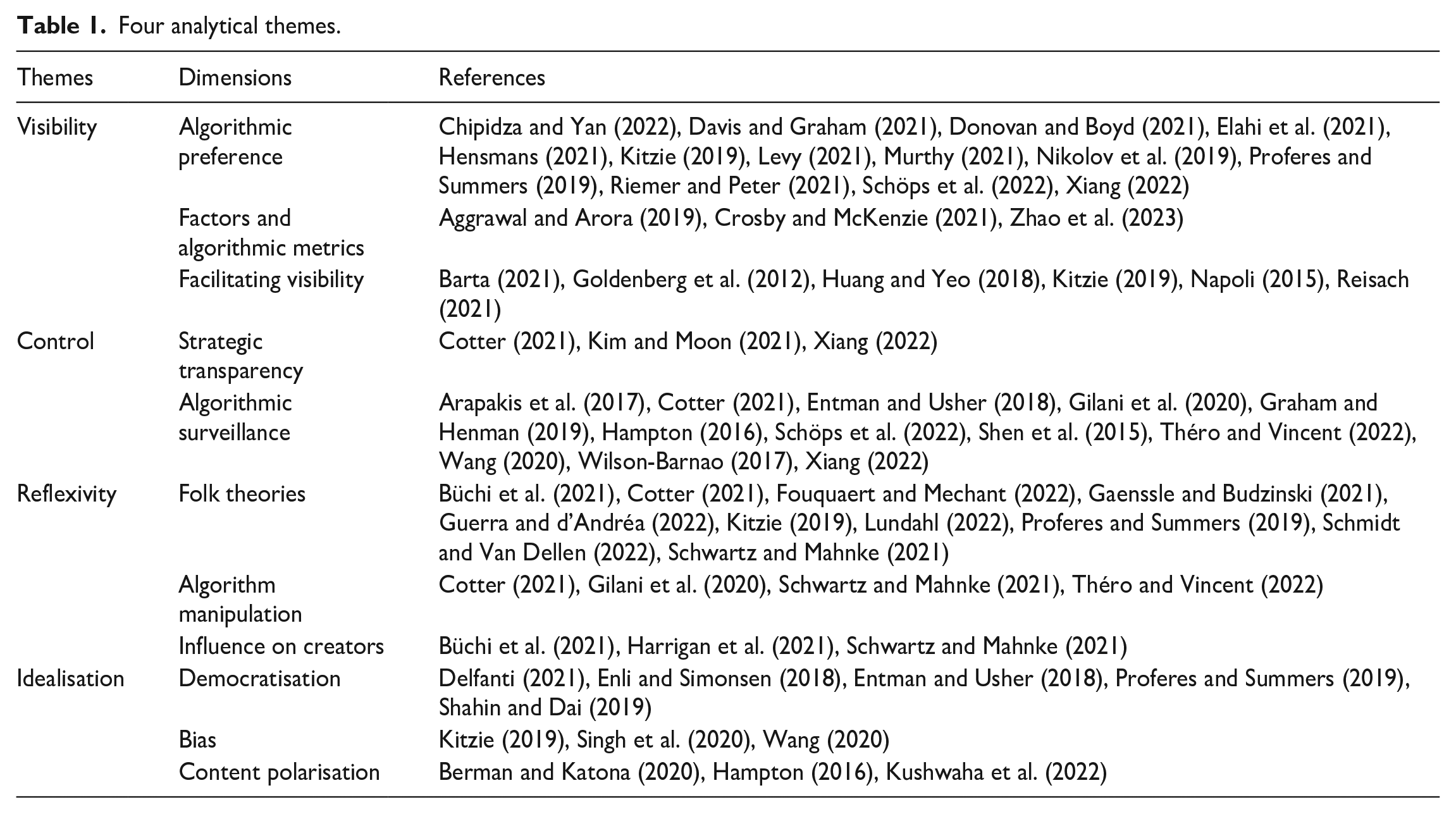

We analysed the content of the 47 articles selected, informed by our own readings and knowledge of the wider literature, with the objective of identifying key areas of interest characterising this set of articles. Initial categories were articulated in order to classify the articles – these were then discussed through meetings among the authors, thereby merging, dropping and splitting the initial categories contributed by each author, which resulted in the articulation of 11 secondary categories (Dimensions). Thereafter, the authors consolidated these 11 secondary categories into four broad primary categories (Themes), namely: visibility, control, reflexivity and idealisation. The dimensions and themes are shown in Table 1.

Table 1. Four analytical themes.

Stabilising categories

After data analysis, prior research and Tirapani and Willmott’s (2023) conflict framework were synthesised, with the dynamic and asymmetrical power relationship between content creators and algorithms revisited. This re-evaluation prompted adjustment and finalisation of our themes. Our final themes are the market rationality underpinning visibility, the potential power dislocation caused by folk theories, the neo-normative control of creators through algorithms and the subversion of beatific fantasies. We use these themes to structure our findings section.

Findings

Here, we integrate the analysis of our 47 articles with Tirapani and Willmott’s (2023) framework to explore the power dynamics between algorithms and creators through four main themes: the market rationality underpinning visibility, the potential power dislocation caused by folk theories, the neo-normative control of creators through algorithms and the subversion of beatific fantasies. These are matched to the themes outlined in Tirapani and Willmott’s (2023) framework.

The market rationality underpinning visibility

Algorithms shape how users interact with information by regulating visibility (Donovan and Boyd, 2021; Schöps et al., 2022). Algorithmic content distribution amplifies or suppresses the reach of particular messages, which determines the nature of content made available to us (Riemer and Peter, 2021). For example, platforms use algorithmic curation to show recommended content based on viewers’ previous behaviours, emphasising similarity and popularity (Murthy, 2021; Proferes and Summers, 2019). Decisions regarding what content should be visible are mainly made by consumers, favouring the polarisation of content (Chipidza and Yan, 2022; Levy, 2021; Riemer and Peter, 2021), the development of popularity bias (Elahi et al., 2021; Hensmans, 2021; Kitzie, 2019; Nikolov et al., 2019; Schöps et al., 2022) and ultimately the spread of radical content (Murthy, 2021). The more popular creators are, the greater their visibility (Proferes and Summers, 2019), with hashtags and algorithmic recommendations valorising content mainly generated by influencers and partisans (Hensmans, 2021; Schöps et al., 2022). It appears that content creative platforms endorse the unequal distribution of algorithmic visibility through their focus on increasing viewers’ engagement (Xiang, 2022) and financial profits (Elahi et al., 2021; Hensmans, 2021; Riemer and Peter, 2021; Schöps et al., 2022). In other words, visibility functions as the main currency within virtual communities, with its increase symbolising the accumulation of social capital by creators.

The underlying mechanisms of visibility are also rooted in complex algorithmic logics, manifesting through quantifiable outcomes. Popularity bias is not always consistent (Davis and Graham, 2021). Although users who are already popular are likely to become more popular through recommendations, unpopular tweets or content can also increase visibility (Elahi et al., 2021). Other factors, such as changes in the characteristics of the content or the behaviour of the creator, may also affect visibility; for instance, video age and length influence the viewership of YouTube videos (Aggrawal and Arora, 2019). Some unintentional actions of creators, such as disclosing income (Crosby and McKenzie, 2021) and switching between channels (Zhao et al., 2023), can negatively impact visibility. This phenomenon resonates with the social logics articulated by Tirapani and Willmott (2023), wherein content creators can assess the value of their work (visibility) using quantified metrics, and employ targeted strategies to enhance it.

Content creation is characterised by heterogeneity, where the intended purpose of the published content influences the strategies creators adopt to enhance visibility. Algorithms’ affordances help creators to foster ‘retweetability’ (Huang and Yeo, 2018) and the spread of user-generated product links (Goldenberg et al., 2012). Algorithms perform a type of disclosure, different from simply increasing visibility, enabling creators to enhance their visibility and reachability within certain groups. For example, the searchability of sexual assault disclosure is facilitated by hashtags (Barta, 2021) and that of information needed by LGBT groups by YouTube’s search engine (Kitzie, 2019). Other algorithmic scenarios, such as filtering and prediction of online behaviour, significantly affect audiences’ opinions and decision-making processes (Napoli, 2015; Reisach, 2021). This heterogeneity exacerbates the complexity of algorithms managing visibility.

The potential power dislocation caused by folk theories

The ‘black-box’ nature of algorithms makes it difficult for creators to formulate strategies (Büchi et al., 2021; Schwartz and Mahnke, 2021). Although how algorithms operate is difficult to understand, creators are keen to use the results of metrics related to visibility (view counts, content ratings, etc.) to ‘reverse engineer’ the algorithm (Büchi et al., 2021; Cotter, 2021; Kitzie, 2019). This may be achieved through a process of continuous attempts and iterative reflections (Proferes and Summers, 2019). Another practice that represents an active attempt by creators to understand algorithms (Büchi et al., 2021) is the crafting of folk theories, which correspond to an individual’s development of an intuitive and informal theory aimed at explaining the functioning of algorithms. Based on intuition, these theories tend to be vague but are nonetheless integral to the behaviours of creators (Lundahl, 2022). Some studies have attempted to design additional algorithms as a more standardised and professional means of clarifying these circulating folk theories to assist creators (Fouquaert and Mechant, 2022). Ambiguous folk theories can also be discussed and circulated with other creators in content creative platforms through reflective content (Guerra and d’Andréa, 2022; Schmidt and Van Dellen, 2022). For example, content creators hold various lay theories and justifications regarding Facebook’s profile analysis activities. These theories are often shaped by creators’ personal experiences rather than by scientific knowledge, making them less reliable or accurate (Büchi et al., 2021). This collaborative effort highlights the effectiveness of algorithmic management, which relies on the lack of transparency, leading creators to respond by actively anticipating compliance (Heiland, 2025). Folk theories also appear to be the product of collaboration among creators, embodying a form of beatific fantasy, or ‘fantasmatic logics’.
If more, and more robust, folk theories were articulated, creators would be more confident in gaining greater visibility (Gaenssle and Budzinski, 2021).

Resulting from collaboration among creators, folk theories seemingly endow creators with collective power, leading to the potential dislocation of power between creators and algorithms. Creators consciously manipulate algorithms based on their acceptance of folk theory regarding algorithmic functioning. This manipulation revolves around visibility, as creators attempt to alter the results of algorithmic metrics through purposeful content curation (Abidin, 2022; Barta, 2021; Gilani et al., 2020). Manipulating algorithms can also allow creators to escape punishments if the content violates the platform’s rules (Théro and Vincent, 2022). Although some creators claim to be ‘experts’ in algorithm manipulation, this is less effective than they think (Schwartz and Mahnke, 2021), perhaps because the folk theories to which they adhere are unstable. This instability may be the result of a combination of creators’ mistrust towards folk theory and their excessive trust in platforms, or, paradoxically, vice versa. In fact, content creators may be able to maintain both positions simultaneously. However, platforms are generally regarded as the authoritative interpreter of the algorithm, so once the platform issues a statement questioning the folk theory, the folk theory falls apart (Cotter, 2021). For instance, content creators were convinced of the existence of shadowbanning (i.e. restrictions placed on creators’ content without their knowledge) by observing and reflecting on visibility metrics. Yet, Instagram issued a public statement denying the existence of shadowbanning, which led to confusion and self-doubt among content creators. Ultimately, this undermined the folk theories that had developed around shadowbanning (Cotter, 2021). This shows how platforms can strategically dismantle carefully constructed folk theories, reasserting control through hegemonic ideology. This allows conflicts to be neutralised and re-naturalised, seamlessly suturing them back into the dominant social logics.

Yet, once creators realise that algorithms are not as powerful and useful as they think, algorithmic disillusionment occurs (Büchi et al., 2021). Algorithmic disillusionment results from a conjunction of folk-theory inaccuracies and excessive trust in algorithms. Although some of the negative effects of algorithms are widely perceived by creators, this is unlikely to deter creators from using the platform (Schwartz and Mahnke, 2021). Creators can delineate identity boundaries, such as between influencers and regular creators, based on the results of algorithmic metrics (Harrigan et al., 2021), although this boundary is not clearly defined in the broader context of content creative platforms.

Neo-normative control of creators through algorithms

Platforms leverage algorithms to control creators, thereby maintaining their dominant position. When confronted with the power dislocation caused by creators’ folk theories, platform control (or political logics) is activated. Although there is a lack of explanation regarding the inner workings of algorithmic ranking and moderation (Cotter, 2021; Xiang, 2022), platforms can appear to ‘open up’ the black box, offering creators supposed insight into the working of the algorithm. For example, Instagram leveraged its authority over the algorithm, employing narrative-based strategies to reinterpret the algorithm. Specifically, Instagram addressed accusations of shadowbanning by publicly denying its existence, describing it as a myth stemming from user misunderstandings or glitches in the system (Cotter, 2021). The strategy not only distracts creators from issues such as visibility manipulation (Cotter, 2021; Kim and Moon, 2021), but also serves to appease creators, thereby reducing scrutiny and criticism of the platform. Yet, this does not always work as influencers’ knowledge can help others to identify the algorithms’ shortcomings and reflect on the purpose of platforms (Cotter, 2021). Their opacity can inadvertently fuel conspiracy theories and suspicion about platforms (Kim and Moon, 2021).

Platforms occupy a privileged position regarding the manipulation of visibility (Schöps et al., 2022). Algorithms form the infrastructure and the protocol that separate sources from searchability (Xiang, 2022), so they control the visibility of information by modifying engagement for certain content (Théro and Vincent, 2022) – generating a mythology around ‘shadowbanning’ (Cotter, 2021). As such, algorithmic surveillance is characterised by a form of pervasive awareness, with platform users being simultaneously observer and observed (Hampton, 2016), which is reminiscent of the metaphorical panopticon of technology (Leclercq-Vandelannoitte et al., 2014). By extension, platforms can quantify users’ engagement and creators’ performance by mining data (Arapakis et al., 2017; Entman and Usher, 2018). This creates a recursive loop through which algorithms are fed by viewers to help those same viewers navigate platforms, offering a personalised experience (Graham and Henman, 2019; Wilson-Barnao, 2017). This surveillance environment thus creates a form of disciplinary power prompting creators to engage in different forms of self-management (Gilani et al., 2020).

Algorithmic metrics allow behavioural data to be combined, calculated and ranked (Schöps et al., 2022; Xiang, 2022), with rankings recognised by creators and audiences as useful indicators of future behaviour (Hampton, 2016). The metrics build a power relationship between content creators and their followers in the process of improving visibility (Gilani et al., 2020). The diversity of methods for making such calculations increases competition among creators (Shen et al., 2015; Wang, 2020). This form of neo-normative control appears to represent a ‘free control’, with creators willingly and actively engaging with distributed control (Leclercq-Vandelannoitte et al., 2014). Leclercq-Vandelannoitte et al. (2014) further suggest that the foundation of this ‘free control’ is trust, indicating that creators’ faith in the scientific rationale and fairness of algorithms diminishes their perception of such control. In other words, creators’ beatific fantasies continuously fertilise the ground for algorithms’ neo-normative control (Tirapani and Willmott, 2023).

Subversion of beatific fantasies

As previously mentioned, content creative platforms have crafted and propagated a series of beatific fantasies that hold immense allure for creators. These fantasies lead creators to believe in a (misleading) form of collective power, place strong faith in the justice of algorithms and actively participate in the platform’s neo-normative control. In parallel though, content creative platforms extend creators’ social networks through virtual communities. Fostering intra-group communication, and increasing the accessibility to other creators, seem to encourage a form of platform democratisation (Shahin and Dai, 2019), with creators contributing to the implementation of their own agenda (Proferes and Summers, 2019). Yet, gatekeepers are usually specified groups of users, and not any creator (Enli and Simonsen, 2018; Proferes and Summers, 2019), suggesting that the process of democratisation is fraught with limitations. It should be remembered that the monetisation of user data is the core business of platforms (Delfanti, 2021), highlighting the imperative for platforms to closely monitor activities while creating the impression of agency.

Perspectives of algorithmic justice encompass the absence of bias in the virtual world. Yet the digital environment is built by algorithms that reproduce the stigmatisation of marginalised groups, and platforms with a reputation for hostility towards these groups persist (Kitzie, 2019). To be accepted by algorithms and certain virtual communities, creators from marginalised groups have resorted to fake social network profiles to boost their visibility (Kitzie, 2019; Wang, 2020), or have quit altogether because of negative psychological impacts such as low self-esteem (Wang, 2020). Unsurprisingly, gender stereotypes are re-performed on platforms (Singh et al., 2020), with institutionalised inequalities simply enduring.

The persistent contact and pervasive awareness created by algorithms may fundamentally restructure virtual communities (Hampton, 2016), shaping a rhetorical space with its own shape, size and preponderant topics (Kushwaha et al., 2022). This process favours the emergence of content polarisation (Berman and Katona, 2020). Intentionally or not, algorithms may limit creators’ access to different viewpoints, leading to social fragmentation and political divisions (Berman and Katona, 2020). In turn, this means that virtual solidarity among creators on platforms, and the achievement of algorithmic justice, remain at the level of utopian fantasy.

Discussion

This section highlights how the four dimensions identified play out through the themes of individualisation and hegemonic ideology underlying Tirapani and Willmott’s (2023) framework. The findings illustrate how neoliberalism, operating within content creative platforms, fosters, promotes and legitimises the dynamic yet overwhelming power of algorithms over content creators. Figure 4 provides a graphical summary of our main argument.

Figure 4. Power relationships between content creators and algorithms.

Individualisation

Through the support of social logics, content creative platforms accentuate individualisation, attributing personal success to individual efforts. Most content creators possess a sense of ownership over their creative outputs, with a subset even perceiving themselves as micro-entrepreneurs (Duffy and Pruchniewska, 2017). Responsibilisation is particularly noticeable where the rise of autonomy is associated with decentralised systems and entrepreneurship, shaping a strong sense of independent creativity and marketability for creators (Ashman et al., 2018). Algorithms play a dominant role in the quantification of user engagement and creators’ performance (Arapakis et al., 2017; Entman and Usher, 2018), as transforming behavioural data into metrics makes creators’ performance evaluable by platforms. This emphasises the connection between metrics and the threat of invisibility – the withholding of the lifeblood of online content creation (Bucher, 2012). Content creators deliberately use algorithmic outcomes (metrics) to modify their content, enhancing its value through strategic adjustments.

Folk theories emerge organically through content creators’ interpretations of algorithms. While the essence of folk theories is to assist creators in understanding algorithms and enhancing the value of their content, the opacity of algorithms makes such endeavours complex, leading creators to deviate from their original intent (thus resulting in dislocation). They thus begin to experiment with the algorithm, using folk theories as a means to challenge platforms’ authority. Content creators seem to have the capacity to manipulate algorithmic metrics, but successful manipulations are rare and often fail to deliver the expected outcome. Within political logics, the opacity of algorithms effectively reformulates creators’ attempts to challenge algorithms, thereby preserving the algorithms’ overwhelming power.

Value is created by matching workers and customers through the use of algorithms. Platforms maintain a position of power through their knowledge of how algorithms are constructed and operationalised. Creator–audience relations are situated within the architecture of algorithmic metrics that monitor users’ online actions and transform them into data that can be evaluated for viewers’ engagement and platforms’ value realisation. Therefore, the process of content production remains under algorithmic control. In addition, how individuals and groups adapt to change their situation remains, ultimately, under the aegis of platforms’ power (Nafstad et al., 2007). The inner workings of algorithms still profoundly shape creators’ (capacity for) agency, with agency shaped through personal experiences and attempts at reverse engineering (Büchi et al., 2021). Content creators, though reflexively seeking entrepreneurial, autonomous, or agentic solutions, are still influenced by the algorithm, even at a cognitive level. This represents a form of manipulation through which metrics are internalised as a form of potential bargaining power within platforms’ normative framework. The established political logics thus negate threats of resistance from content creators, subsequently reasserting platforms’ hegemonic control and algorithms’ power.

While positive portrayals of platform work proliferate, it is apparent through the analysis of the literature that under present capital–labour relations, there is a burden of responsibility on creators, without concomitant levels of real autonomy. They are responsible not only for creating the content itself, but also for monitoring their own profile, assessing and reacting to their own metricisation, and attempting to negotiate their place in algorithmic matrices. Autonomy exists but only to the extent that it is embedded in algorithmic management (Reiche, 2023). Platforms, operating under the logic of neoliberalism, have never ceased their efforts to control creators. Although there is a common belief in work autonomy, the results seem to confirm that platforms leverage algorithmic management to shift responsibility onto workers as a means of controlling them (Duggan et al., 2020).

Hegemonic ideology

Fantasmatic logics can facilitate foundational changes or the re-naturalisation of political logics (Tirapani and Willmott, 2023). This is evident in the dynamic, yet asymmetrical, power relationship between content creators and algorithms, which can be explained through the concepts of universalism (virtual unity) and disembeddedness (algorithmic justice). Neoliberalism rationalises all social orders as governed by universalism, and within that, understandings of algorithms can be summarised and further constructed into entire virtual communities (Tirapani and Willmott, 2023). Creators’ understandings of algorithms shape interactions between creators and algorithms, flowing dynamically between creators in the form of ‘folk theories’ and thereby becoming general consensus (Toff and Nielsen, 2018). Folk theories emerge organically through content creators’ individual interpretations of algorithms, forming the initial basis for understanding algorithmic dynamics. However, these interpretations rarely remain isolated; ambiguous folk theories are often discussed, refined and circulated within content creative platforms. This collective effort highlights how individual understandings evolve into shared narratives through collaboration. The circulation of folk theories in the virtual community, therefore, represents a dynamic interplay between individuation and collective effort, where creators’ personal insights contribute to and are shaped by the collective knowledge of the group. This circulation seems to hint at the power of creators to manipulate algorithms, but in truth, creators have unwittingly become captive to neoliberalism (Pekkala, 2024). Rather than reflecting creators’ implicit control over algorithms, these folk theories are a post hoc, inaccurate understanding of algorithmic behaviour (Kost et al., 2020). The opacity of algorithms makes creators powerless against algorithms, and resistance can only occur at the level of complaint and annoyance (Ytre-Arne and Moe, 2021). Creators’ efforts to build widely circulated and well-accepted folk theories are easily undermined by algorithms’ opacity and excessive trust in platforms. The discourses of autonomy, entrepreneurship and agency underlying neoliberalism obscure the authentic manifestation of algorithmic exploitation.

Faced with these contradictions, content creators attempt to materialise democratic ideals by creating virtual communities (Goode, 2009), where they attempt to affect wider social and cultural discourse, enacting a form of disembeddedness from neoliberal society’s dominant economic and ideological order. Yet they operate in a space where algorithms remain the guarantor for the equitable distribution of visibility. Fed data that reflect an unequal field, algorithms may actually replicate or even amplify the biases that exist offline (Kelan, 2024), thereby transferring to online spaces long-existing inequalities (Kelan, 2024; Tambe et al., 2019).

Mirroring the digital divide where economic power and privilege are reflected in the online world, the power that individuals are able to wield varies depending on their social capital (Pekkala, 2024). Algorithms categorise individuals and prepare different results accordingly (Vassilopoulou et al., 2024). Thus, attempts at separating, shaping and disembedding are always already doomed to fail, since the ideological, economic and cultural currents of the ‘real’ world are continually thrust into the online sphere of the creator, curated though it may be through a particular corporate and technical infrastructure. That algorithmic justice can be characterised as illusory implies that algorithms dominate power relations and shape the behaviour of creators. Algorithms secure power on behalf of the platform by bringing creators into a virtual space that claims to be democratic and detached from real society, but where the dynamics of the real world are continually cast in media res.

Limitations and future research

Our article has several limitations, of which we outline three. First, most of the reviewed articles did not specify the platform studied, which restricted our ability to explore potential differences between platforms. Algorithms are known to vary across platforms, notably affecting rankings and visibility, which points to the importance of investigating a broad range of platforms in detail. Second, some popular platforms, such as TikTok/Douyin, did not feature in this article. This may be attributed to the restrictiveness of the exclusion criteria that we used. Broadening the search to the field of communication technology might have provided a broader range of platforms, enriching the analysis. Finally, the lack of relevant data makes it difficult to differentiate between various categories of content creators (e.g. full-time vs part-time) and conduct comparative studies.

We see three potential areas of interest for future research. First, there is a need for more in-depth explorations of how visibility shapes interactions between content creators, platforms and algorithms. As a proxy monetary unit for creators’ work, visibility plays a central, yet arguably overlooked, role in content creation. Further research could entail a more granular examination of the ramifications of algorithmic power, with a focus on the impact of algorithmic control on various aspects of platform-mediated creation work, such as the retention rate of ‘good’ creators, their creativity or marketability. Additionally, studies could examine the impact of visibility data (as a monetary unit) on creators’ work.

Second, more research is needed to analyse folk theories to explain how these theories might paradoxically produce knowledge reinforcing the authority of the algorithmic power and the compliance of content creators. Future research could explore how creators understand and manipulate algorithms (looking into the process of reverse engineering), the shift of attitudes towards algorithms in the process (e.g. from trust to distrust) and the potential impact of that shift on content creation. This would provide the basis for a stronger understanding of the nature and quality of content creators’ work.

Third, further research on the constitution of virtual communities through algorithms is required. Fairness and equality remain, on digital platforms, illusory (Vassilopoulou et al., 2024). Algorithms not only derive existing biases from society, they transfer and amplify them in the digital arena. Future research could focus on the paradox of algorithmic fairness in content creative platforms, and the impact of ‘fake fairness’ on creators and their work. This would enrich research on how algorithms shape the environment of content creative platforms.

Conclusion

Our article makes three main contributions to research on the future of work. First, by systematically reviewing and organising previous research on creators and algorithms, it provides a holistic understanding of digital content creation in the context of algorithm-mediated platforms. Second, by mobilising Tirapani and Willmott’s (2023) framework, it explicates the intricate relationship between creators and algorithms. Visibility, mediated by algorithms, plays a crucial role in the process of creating digital content, which partly accounts for creators’ attempts to reverse engineer algorithms. With a view to gaining more control over the system, this represents an attempt to reshuffle the cards and grant more agency to creators. Third, we show that individualisation is the means through which algorithms impart beatific fantasies to creators, concealing the underlying nature of neo-normative control and serving to support the domination of algorithms over virtual communities. Ultimately then, there is a dynamic yet asymmetrical relationship between creators and algorithms, where the dominant power status of algorithms remains unchallenged, and creators, under the spell of fantasmatic logics, unwittingly accept it.

Appendix

General information about the 47 reviewed articles.

| Year | Count | Theoretical study | Empirical study: qualitative | Empirical study: quantitative | Empirical study: mixed | Journal ranking: ABS 1 | Journal ranking: ABS 2 | Journal ranking: ABS 3 | Journal ranking: ABS 4 | Journal ranking: ABS 4* |
|---|---|---|---|---|---|---|---|---|---|---|
| 2012 | 1 | ‒ | ‒ | 1 | ‒ | ‒ | ‒ | ‒ | ‒ | 1 |
| 2013 | ‒ | ‒ | ‒ | ‒ | ‒ | ‒ | ‒ | ‒ | ‒ | ‒ |
| 2014 | ‒ | ‒ | ‒ | ‒ | ‒ | ‒ | ‒ | ‒ | ‒ | ‒ |
| 2015 | 2 | 1 | ‒ | 1 | ‒ | 1 | ‒ | ‒ | ‒ | 1 |
| 2016 | 1 | 1 | ‒ | ‒ | ‒ | 1 | ‒ | ‒ | ‒ | ‒ |
| 2017 | 2 | ‒ | 1 | 1 | ‒ | ‒ | 1 | 1 | ‒ | ‒ |
| 2018 | 3 | 1 | ‒ | 2 | ‒ | 1 | 1 | 1 | ‒ | ‒ |
| 2019 | 6 | 1 | 2 | 2 | 1 | 2 | 2 | 2 | ‒ | ‒ |
| 2020 | 4 | 1 | 2 | ‒ | ‒ | ‒ | 2 | 1 | ‒ | 1 |
| 2021 | 17 | 3 | 7 | 4 | 2 | 5 | 9 | 1 | 1 | 1 |
| 2022 | 11 | 2 | 4 | 4 | 1 | ‒ | 6 | 4 | ‒ | 1 |
| Total | 47 | 10 | 16 | 15 | 4 | 10 | 21 | 10 | 1 | 5 |

Acknowledgements

The authors wish to thank the editor and anonymous reviewers for their constructive feedback on this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.