Abstract

We analyze nonlinear Friedrichs systems where the differential equation and the initial data contain the inverse of a small parameter

Introduction

High-Frequency Wave Propagation

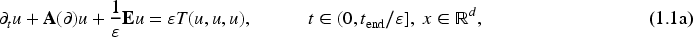

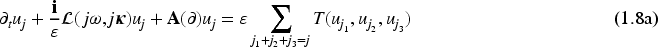

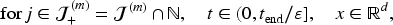

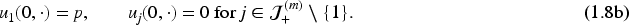

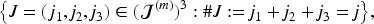

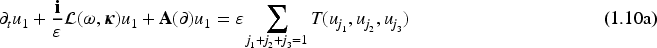

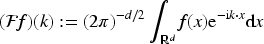

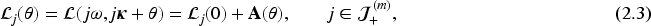

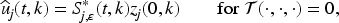

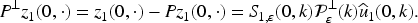

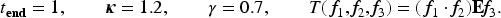

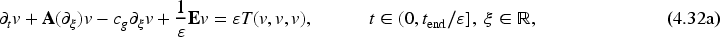

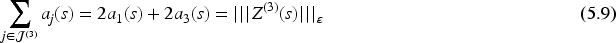

High-frequency wave propagation in nonlinear, dispersive media can be modeled by Friedrichs systems of the form:

The partial differential equation (PDE) (1.1a), the initial data in (1.1b), and the time interval involve a small positive parameter

The small parameter

In this paper, we consider wave packets where the wavelength of the oscillations is much shorter than the scale on which the envelope varies. This assumption excludes short or chirped pulses, which have been analyzed, for example, in Alterman and Rauch (2000, 2003), Barrailh and Lannes (2002), Chung et al. (2005), Colin and Lannes (2009), Colin et al. (2005), and Lannes (2011).

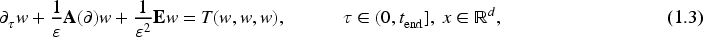

A feasible approach is to replace (1.1) by a different model, which can be solved numerically with significantly less effort and at the same time provides a decent approximation to

The kernel of The function

Assumption (i) is only made in order to keep the notation simple; cf. Remark 4.6. Assumption (ii) is a polarization condition, which was also imposed in a similar way in Baumstark and Jahnke (2023), Colin and Lannes (2009, Theorem 1), Lannes (2011, Theorem 2.15), and other works.

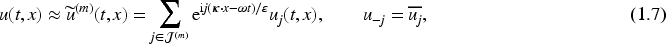

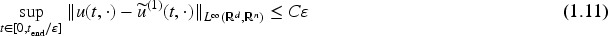

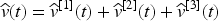

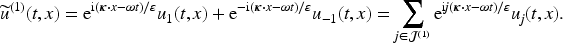

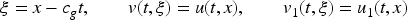

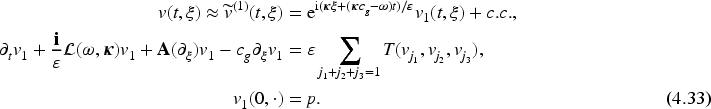

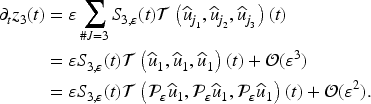

As in Baumstark and Jahnke (2023), we seek an approximation of the form:

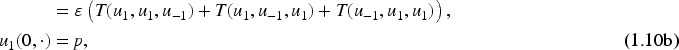

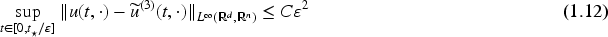

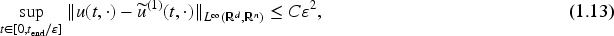

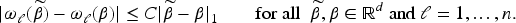

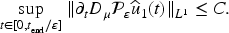

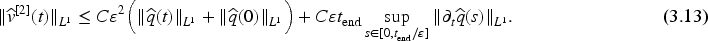

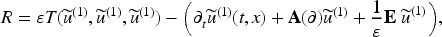

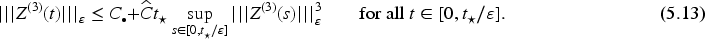

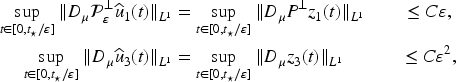

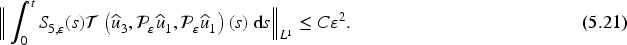

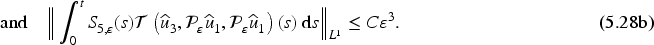

For the error of the SVEA (1.9), (1.10a), and (1.10b), the bound

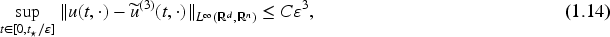

In this paper, we consider the situation where an error of

Numerical experiments indicate that neither of the estimates (1.11) and (1.12) is optimal; see Sections 4.2 and 5.3. In this work, we prove the following stronger error bounds.

Mathematically rigorous formulations of these two results with a detailed specification of the assumptions are given in Theorems 4.3 and 5.7. The bound (1.13) explains the error behavior which appears in numerical examples where a reference solution can be computed. Moreover, this inequality shows that the SVEA yields a significantly higher accuracy than the classical nonlinear Schrödinger approximation, which has an error of

Natural questions are whether and under which additional assumptions the approximations

In Donnat and Rauch (1997a), Joly et al. (1993, 2000), Rauch (2012), and other contributions, asymptotic expansions of solutions to problems similar to (1.1) have been analyzed in the regime of geometric optics, that is, for time intervals of length

Structure of the Paper and Notation

In Section 2, we specify the analytical framework, we review results on local wellposedness of (1.1) and (1.8), and we introduce a transformation of the coefficient functions

Notation

Throughout the text,

Analytical Setting

Wiener Algebra and Evolution Equations in Fourier Space

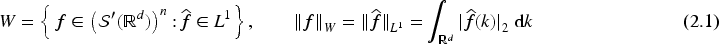

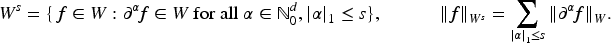

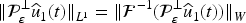

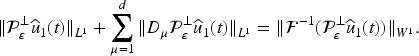

As in Baumstark (2022), Baumstark and Jahnke (2023), Baumstark et al. (2024), Colin and Lannes (2009), and Lannes (2011), we will analyze the accuracy in the Wiener algebra

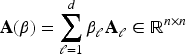

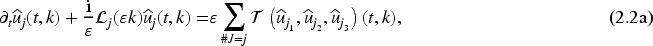

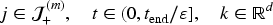

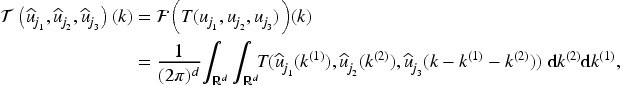

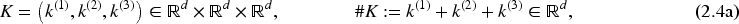

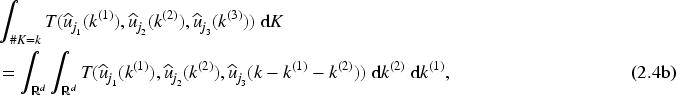

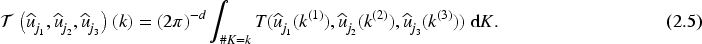

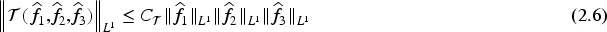

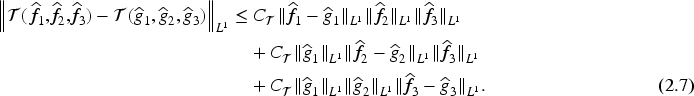

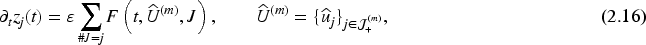

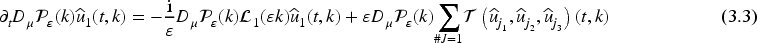

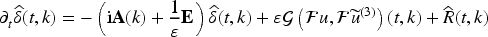
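As a rough numerical illustration of the norm used in this section, the following sketch approximates the Wiener norm of a sampled function. It assumes the common definition of the Wiener norm as the L¹ norm of the Fourier transform, together with a unitary Fourier convention; the helper name `wiener_norm`, the grid parameters, and the Gaussian test profile are hypothetical choices and are not taken from the paper. The sketch also suggests why such a norm is convenient for highly oscillatory functions: multiplying an envelope by a fast oscillation only shifts its Fourier transform, so the norm is essentially unchanged.

```python
import numpy as np

def wiener_norm(samples, length):
    """Approximate ||f||_W = int |f_hat(k)| dk from samples on a uniform grid.

    Assumptions (not from the paper): f is negligible outside an interval of
    the given length, and f_hat(k) = (2*pi)**(-1/2) * int f(x) exp(-i*k*x) dx.
    """
    n = samples.shape[0]
    dx = length / n                      # spatial grid spacing
    dk = 2.0 * np.pi / length            # spacing of the discrete wave numbers
    # Riemann-sum approximation of the Fourier transform; the phase factor
    # caused by the grid offset has modulus one and drops out of |f_hat|.
    f_hat = np.fft.fft(samples) * dx / np.sqrt(2.0 * np.pi)
    return float(np.sum(np.abs(f_hat)) * dk)

length, n = 40.0, 1024
x = -length / 2 + length * np.arange(n) / n
envelope = np.exp(-x**2 / 2)                 # hypothetical smooth envelope
packet = envelope * np.exp(1j * 20.0 * x)    # same envelope with a fast oscillation

norm_envelope = wiener_norm(envelope, length)
norm_packet = wiener_norm(packet, length)
print(norm_envelope, norm_packet)
```

Under the assumed convention, both printed values should be close to sqrt(2π) ≈ 2.5066, although the second function oscillates rapidly. A Sobolev norm of the same wave packet would instead grow with the oscillation frequency.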

In order to work in the Wiener algebra, we apply the Fourier transform to the PDE system (1.8a). This yields

The polarization condition (Assumption 1.2(ii)) is not needed to prove existence and uniqueness of solutions to the original problem (1.1) and the PDE system (1.8). For the sake of consistency, however, we always allow for

If

We omit the proof, because Lemma 2.1 can be shown with the usual fixed-point argument. Other proofs for wellposedness of (1.1) via approximation by the SVEA are given in Colin and Lannes (2009, Theorem 1) and Lannes (2011, Theorem 3.8).

(Local wellposedness of (1.8))

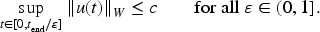

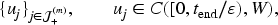

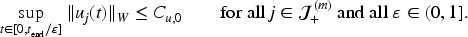

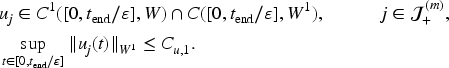

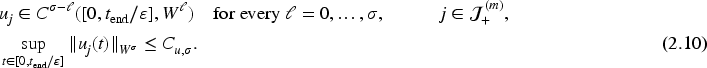

Let

If (2.9) holds with If (2.9) holds with If (2.9) holds with

The constants

For

Although

The highly oscillatory behavior of the coefficient functions

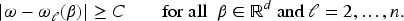

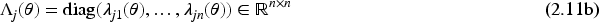

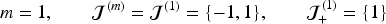

The matrix Every eigenvalue The eigenvalue

Assumption (i) corresponds to Assumption 2 in Colin and Lannes (2009), whereas Assumption (iii) is a part of Assumption 3 in Colin and Lannes (2009).

Explicit formulas for the eigenvalues in case of the Maxwell–Lorentz system and the Klein–Gordon system are given in Colin and Lannes (2009, Examples 3 and 4), and one can check that the Assumptions (i) and (ii) on the eigenvalues are true. Assumption (iii) is true if we choose

For

The matrices

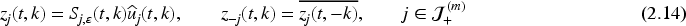

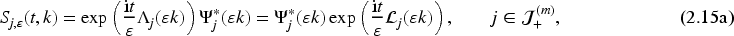

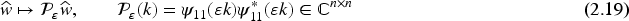

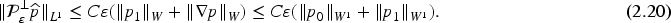

The strategy in the proofs of (1.13) and (1.14) is, roughly speaking, to distinguish the oscillatory “parts” of the solution from the nonoscillatory ones, and to carefully analyze how these parts interact in the nonlinearity. For this purpose, the following transformation was introduced in Baumstark and Jahnke (2023).

Let

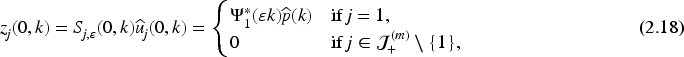

The transformations (2.14) and (2.15) are motivated by the fact that in the linear case, the exact solution of (2.2) is

Recall that by Assumption 1.2(i), the matrix

Throughout, we will frequently use the following facts. Since we have chosen the Euclidean vector norm

In this and the next section, we analyze the SVEA (1.9)–(1.10), which corresponds to setting

Let

See Colin and Lannes (2009, Lemma 2).

Let

See Colin and Lannes (2009, Lemma 3).

In Baumstark (2022), a similar result was shown without Assumption 2.3(iii), but on a possibly smaller interval

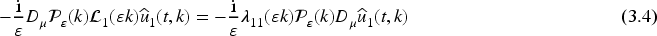

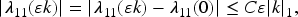

These results can be interpreted as follows. The term

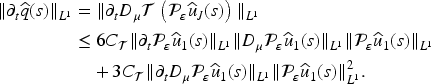

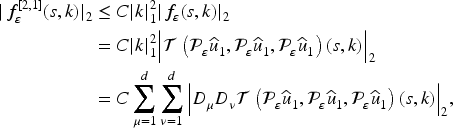

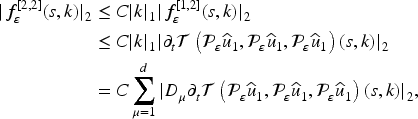

For the time derivatives of the other part

Let

Let

The proof is similar to the proof of Lemma 3.1. We choose

With Lemma 3.3, we can now show the following extension of Proposition 3.2.

Let

Choose a fixed

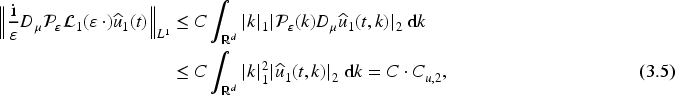

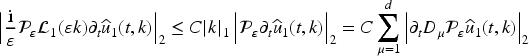

For the first term

For the third term

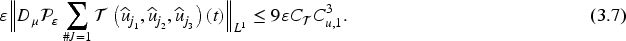

Now we consider the second term

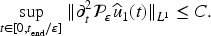

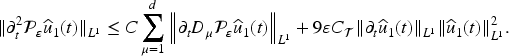

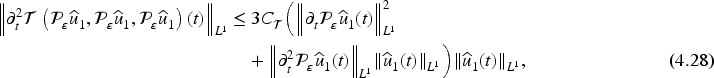

Before closing this section, we prove that even the second time derivative of

Let

Applying

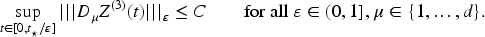

By taking more derivatives of (2.2a) and proceeding as in the proof of Lemma 3.5, it can be shown that

With the results from the previous section we are now in a position to prove the error bound (1.13), where

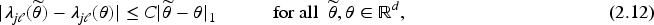

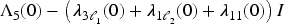

The error bound requires the following assumption on the eigenvalues of

The matrix

As mentioned earlier, explicit formulas for the eigenvalues in case of the Klein–Gordon system and the Maxwell–Lorentz system can be found in Colin and Lannes (2009, Examples 3 and 4). For these applications, one can check that Assumption 4.1 holds if the chosen eigenvalue

The following theorem is our first main result. It states that the SVEA converges with second order. We recall that the SVEA (1.9)–(1.10) is identical to (1.7)–(1.8) with

(Error bound for the SVEA)

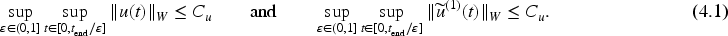

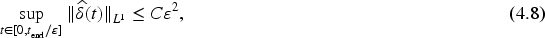

Suppose that (2.9) holds with

The following lemma is a preparation for the proof of Theorem 4.3.

Let

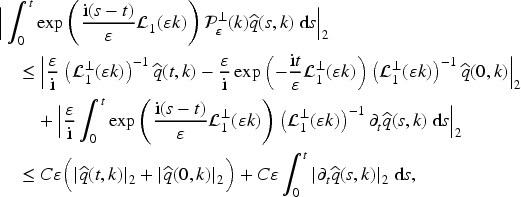

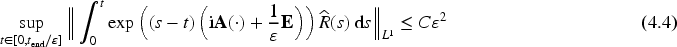

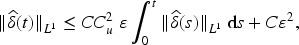

We will prove that (4.4) implies (4.2). Then, the second error bound (4.3) follows directly from (4.2) via the embedding

Since (4.2) is equivalent to

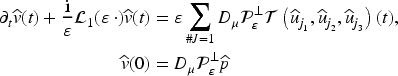

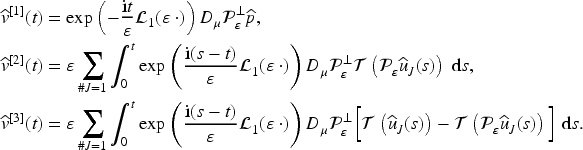

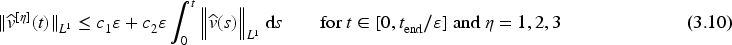

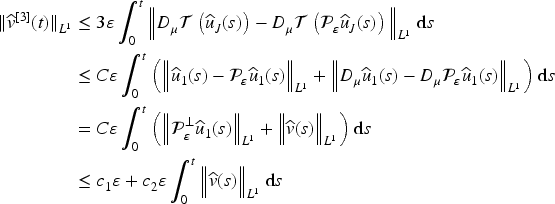

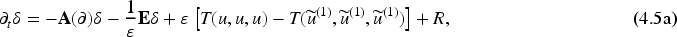

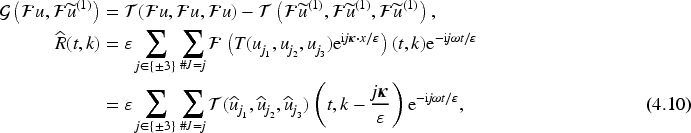

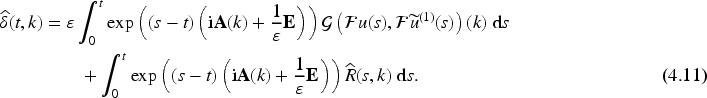

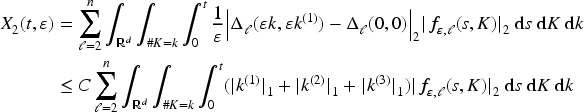

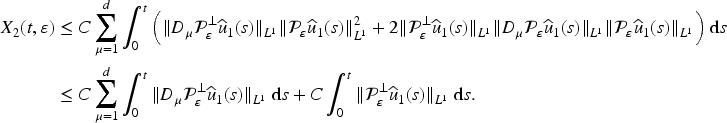

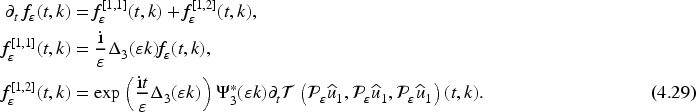

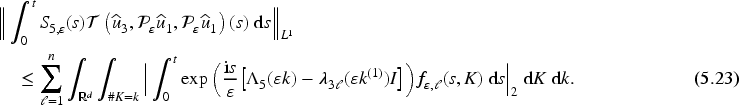

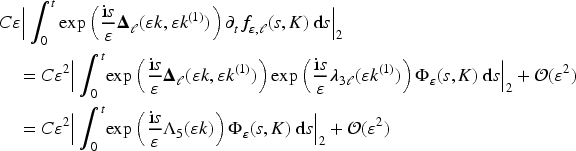

The central task in the proof of Theorem 4.3 is thus to prove (4.4). Equation (4.10) shows that

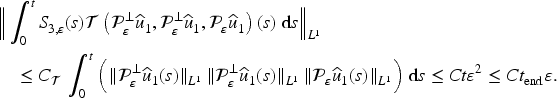

In view of Lemma 4.4, we have to prove (4.4). The strategy, notation, and presentation are very similar to the proof of Theorem 4.2 in Baumstark and Jahnke (2023), but there are some crucial differences which we point out below.

Step 1

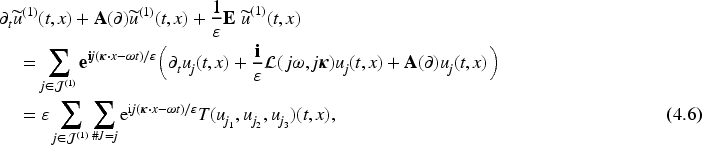

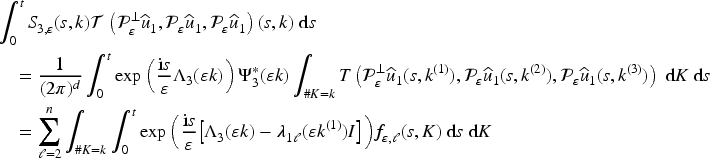

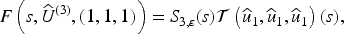

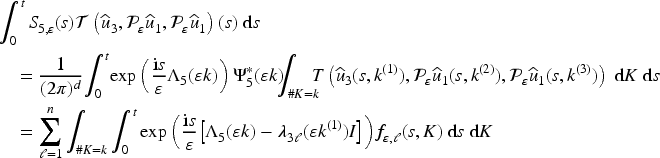

In this step, we express the integral term from (4.4) in an appropriate way. We use (1.4), (2.3), and (4.10) to obtain

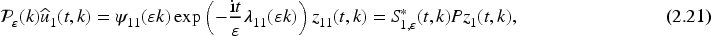

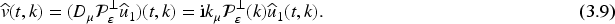

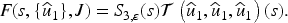

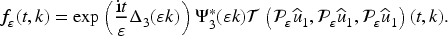

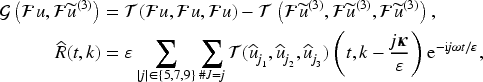

With (2.17) and (2.15), we can represent the integrand as

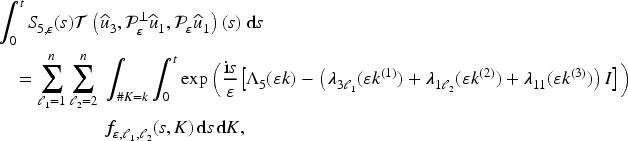

Step 2

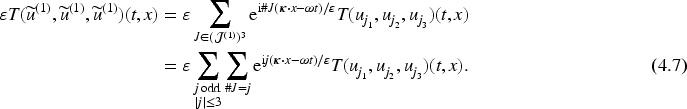

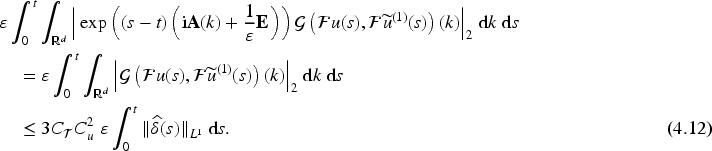

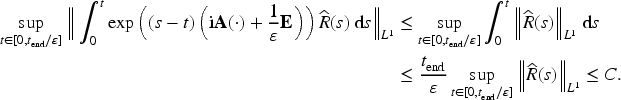

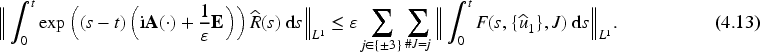

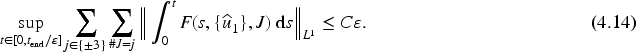

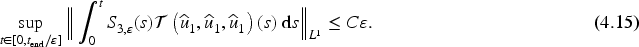

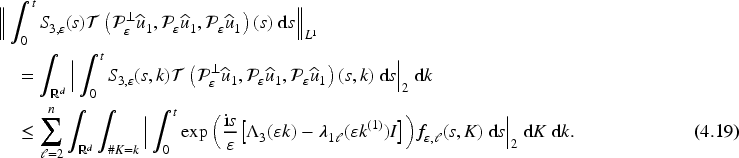

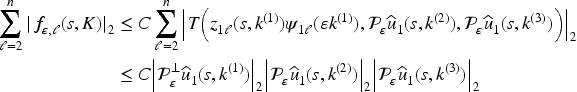

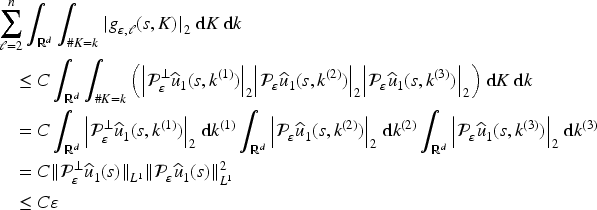

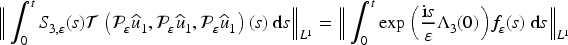

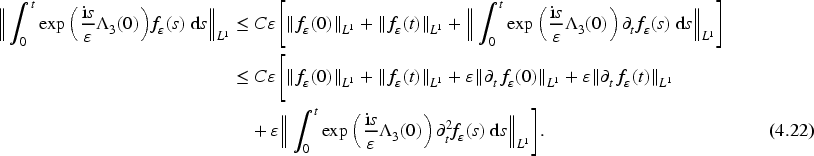

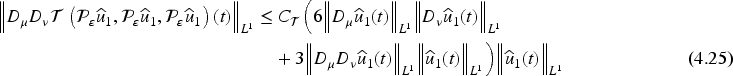

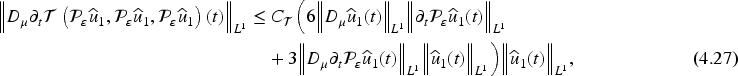

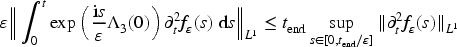

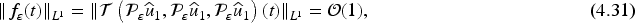

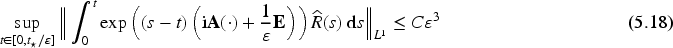

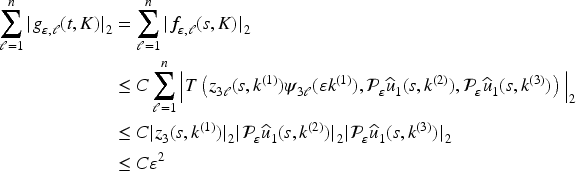

The goal in this and the following steps is to prove that

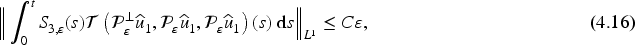

Step 3

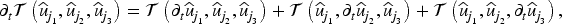

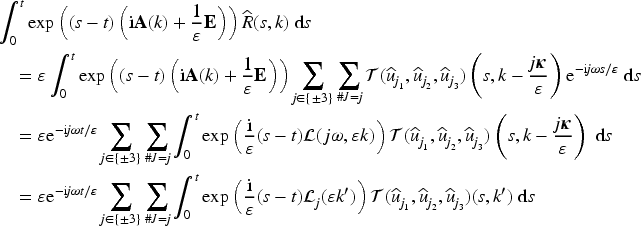

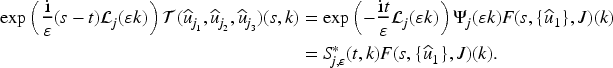

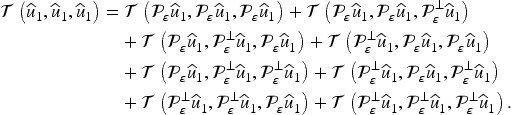

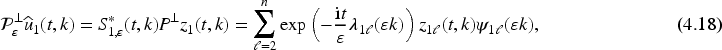

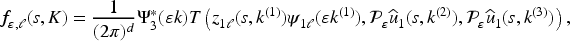

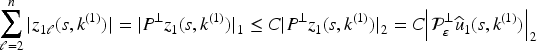

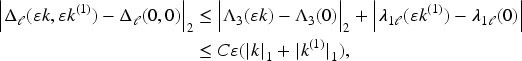

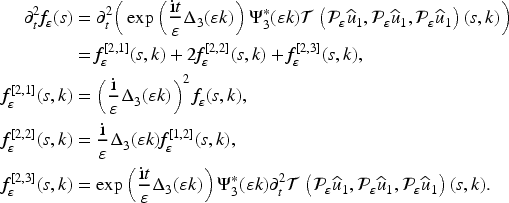

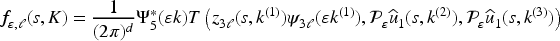

In this step, we prove (4.16). To accomplish this, we have to identify the oscillatory “parts” of the integrand. We use that (2.21), (2.14), and (2.15) yield the representation

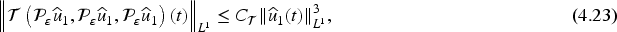

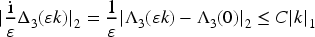

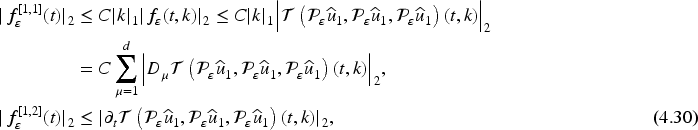

For

In a similar way, one can show that

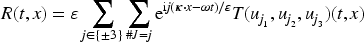

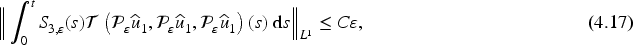

Step 4

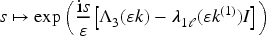

In this step, we prove (4.17). For the proof of (4.16) in the previous step, it was crucial that

We set

Since the matrix

According to step 2, the inequalities (4.16) and (4.17) imply the bound (4.15) and hence (4.4). Now the assertion of Theorem 4.3 follows from Lemma 4.4.
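The proof above separates oscillatory from nonoscillatory contributions. As general background (not a summary of the steps above), a standard mechanism behind such arguments is that a time integral with a nonresonant oscillatory phase gains one power of the small parameter after integration by parts, provided that the time derivative of the amplitude is bounded. The following sketch illustrates this for a scalar model integral; the amplitude `exp(-s)`, the phase parameter `lam`, and the function name are hypothetical and are not taken from the paper.

```python
import numpy as np

def oscillatory_integral(lam, eps, n=200_001):
    """Trapezoidal approximation of int_0^1 exp(i*lam*s/eps) * exp(-s) ds."""
    s = np.linspace(0.0, 1.0, n)
    y = np.exp(1j * lam * s / eps) * np.exp(-s)   # hypothetical smooth amplitude exp(-s)
    h = s[1] - s[0]
    return h * (y[0] / 2 + y[1:-1].sum() + y[-1] / 2)

eps_values = [1e-1, 1e-2, 1e-3]
# Nonresonant phase (lam = 1): the integral shrinks proportionally to eps.
nonresonant = [abs(oscillatory_integral(1.0, eps)) for eps in eps_values]
# Resonant phase (lam = 0): no oscillation, hence no gain in eps.
resonant = [abs(oscillatory_integral(0.0, eps)) for eps in eps_values]

for eps, a, b in zip(eps_values, nonresonant, resonant):
    print(f"eps={eps:.0e}  nonresonant={a:.3e}  resonant={b:.3e}")
```

For this amplitude, integration by parts gives the bound 2·eps/|lam| in the nonresonant case, whereas the resonant integral stays at 1 − e⁻¹ regardless of eps. This contrast is the reason why conditions on the eigenvalues and bounds on time derivatives typically enter such error estimates.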

The proof shows that, in general, the error of the SVEA cannot be expected to be smaller than

We have assumed throughout that the kernel of

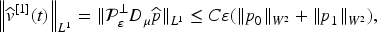

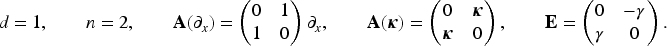

We illustrate Theorem 4.3 by a numerical example. As a model problem, we use a Klein–Gordon system in one space dimension; cf. Example 2 in Colin and Lannes (2009) and Example 1.5 in Lannes (2011). This system is a special case of (1.1a) with

Since numerical approximations of (1.1) and (1.10) can only be computed on a bounded domain, we switch to comoving coordinates

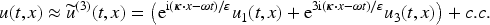

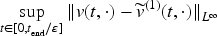

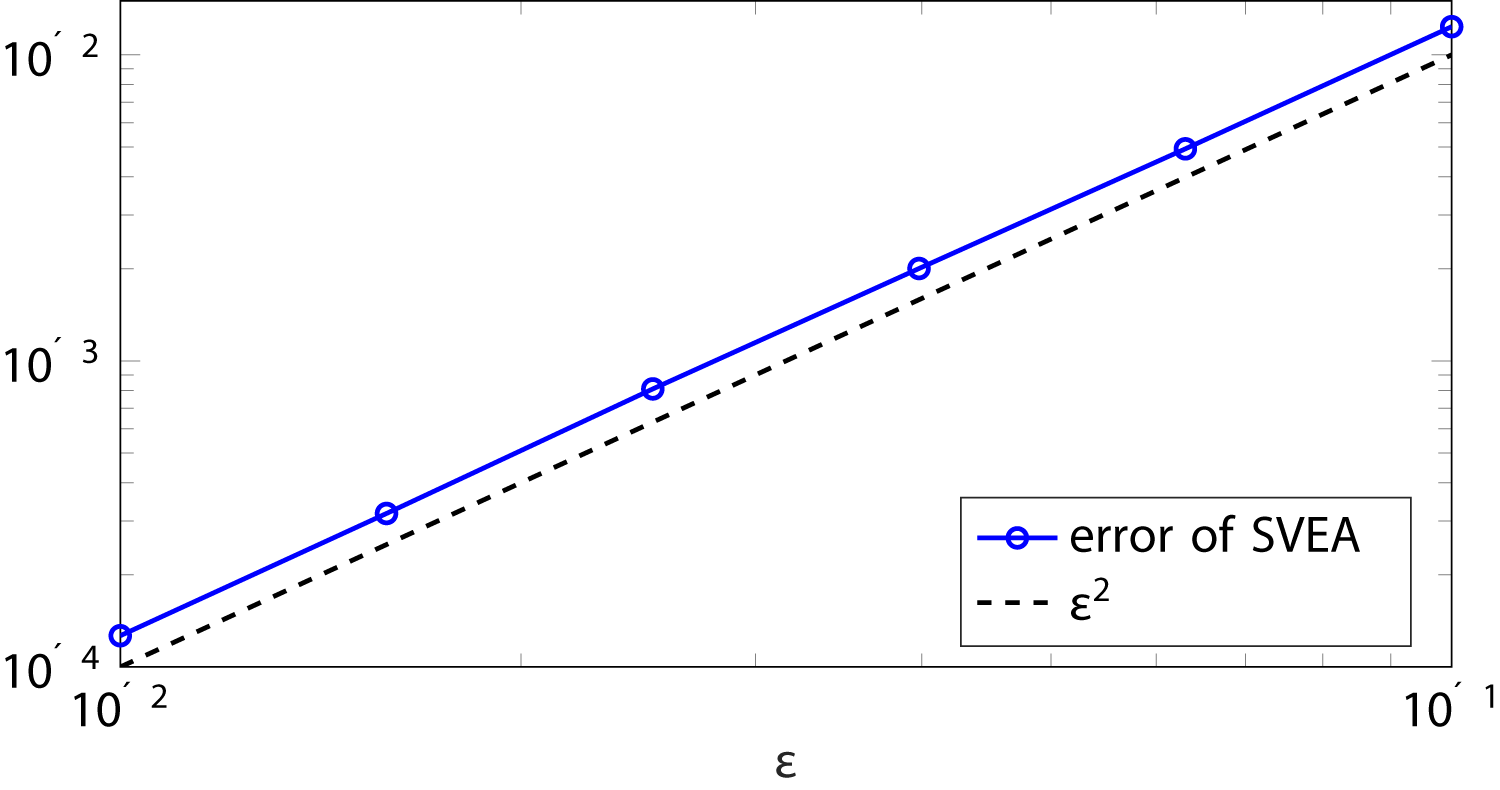

Figure 1 shows the numerical counterpart of

Accuracy of the Slowly Varying Envelope Approximation (SVEA) for Different Values of

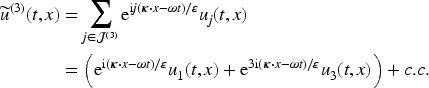

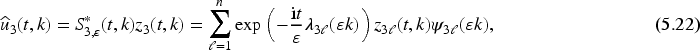
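One simple way to compare such an experiment with a predicted convergence order is to fit the slope of the error against the small parameter in a log-log plot. The sketch below does this with a least-squares fit; the function name `observed_order` is hypothetical, and the data are synthetic placeholders that merely mimic second-order behavior. They are not the errors reported in Figure 1.

```python
import numpy as np

def observed_order(eps_values, errors):
    """Least-squares slope of log(error) versus log(eps)."""
    slope, _intercept = np.polyfit(np.log(eps_values), np.log(errors), 1)
    return float(slope)

# Synthetic placeholder data, NOT results from the paper: errors = 3 * eps**2.
eps_values = np.array([0.1, 0.05, 0.025, 0.0125])
errors = 3.0 * eps_values**2
order = observed_order(eps_values, errors)
print(f"observed order: {order:.2f}")
```

With measured errors in place of the synthetic ones, a slope close to 2 would be consistent with second-order convergence. The fit is only meaningful if the error of the reference solution is much smaller than the approximation error being measured.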

In this section, we analyze the approximation (1.7) with

By definition the approximation

Let

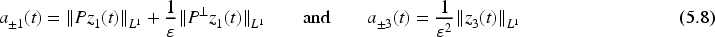

The bound (5.1) was shown in Baumstark and Jahnke (2023, Lemma 3.5). To show (5.2) and (5.3), the proofs of Lemmas 3.3 and 3.5 carry over almost verbatim. The only difference is that for

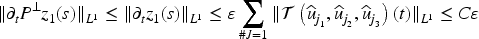

As a first step, we prove that for

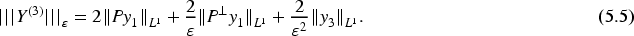

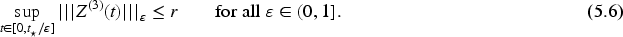

Suppose that the initial data in (1.8b) have the form (2.9) with

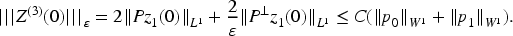

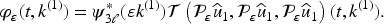

The proof yields an explicit formula for

Before we prove Proposition 5.2, we note that the following corollary is an immediate consequence of (2.25), (2.24), (5.5), and (5.6).

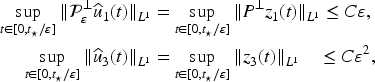

Under the assumptions of Proposition 5.2 the bounds

Corollary 5.4 reveals that Proposition 5.2 can be understood as an extension of Proposition 3.2 from

We integrate (2.16) for

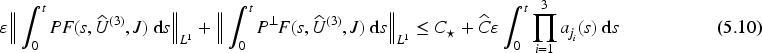

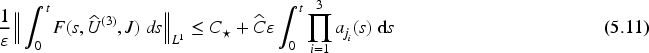

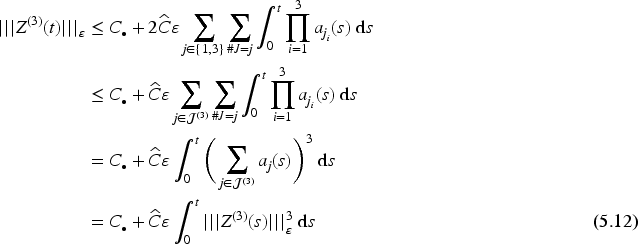

To prove the first inequality (5.10), we can adapt the arguments from Baumstark and Jahnke (2023, Section 3.2.2), because the fact that

Now let

Before we proceed, we have to extend Corollary 5.4 to a stronger norm as in Section 3. The following result is the counterpart of Proposition 3.4 in the case

Suppose that the assumptions of Proposition 5.2 hold, and that in addition (2.9) is true with

Using the higher regularity and, in particular, (5.4), the bound

For the error analysis of

(Nonresonance condition)

The matrix

We are now in a position to formulate and prove our second main result.

(Error bound for

)

Let

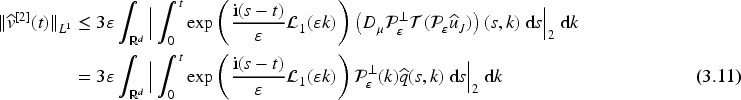

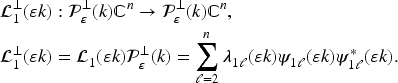

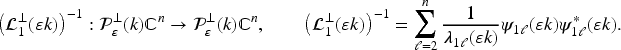

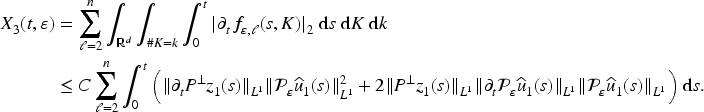

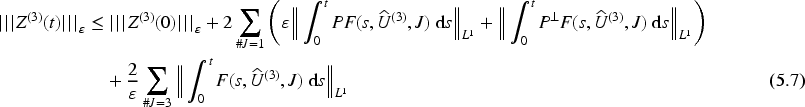

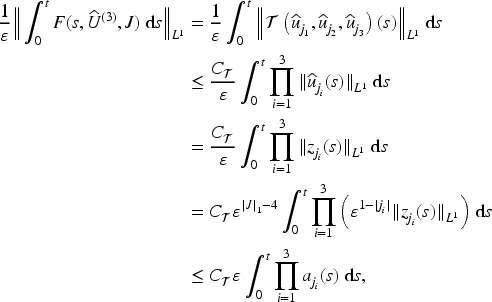

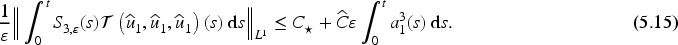

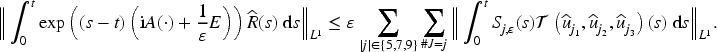

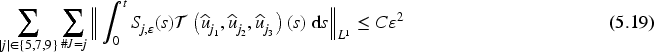

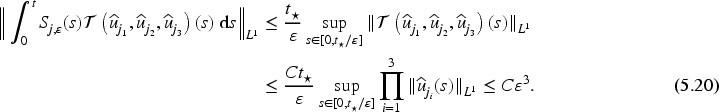

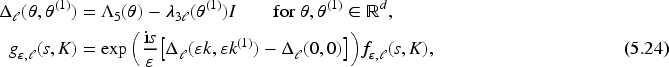

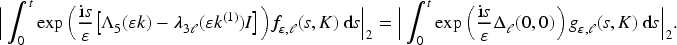

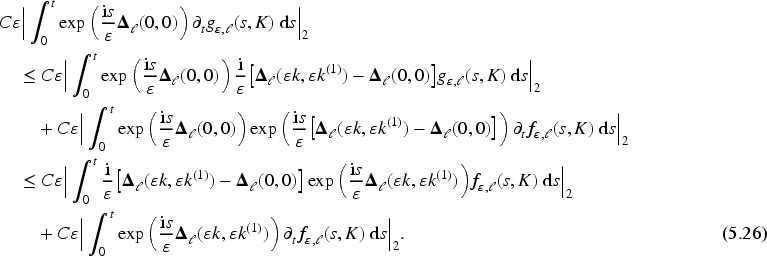

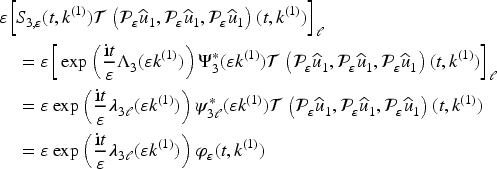

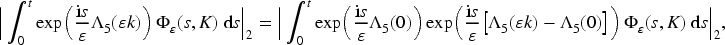

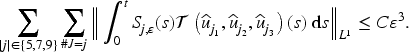

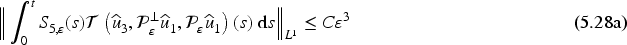

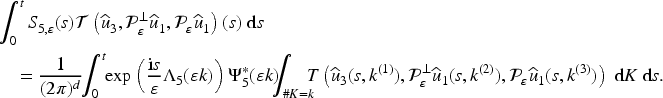

We use the proofs of Theorem 4.2 in Baumstark and Jahnke (2023) and of Theorem 4.3 in the present paper as a blueprint and focus on what has to be changed. In Baumstark and Jahnke (2023, proof of Theorem 4.2), we have shown that the Fourier transform

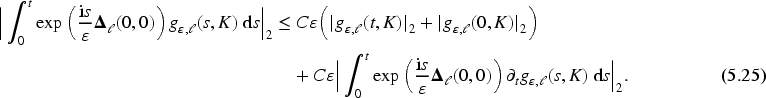

In Baumstark and Jahnke (2023, proof of Theorem 4.2), we have already derived the inequality

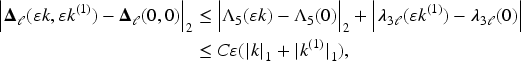

As before, we consider several cases. First, suppose that

Since

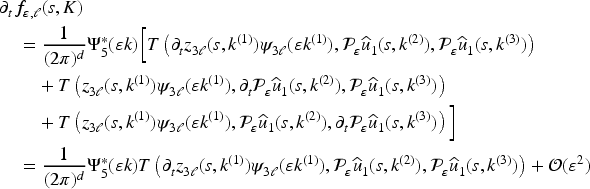

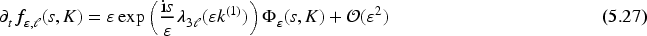

We use the representation

Unfortunately, the second term in (5.26) requires a bit more effort. By definition of

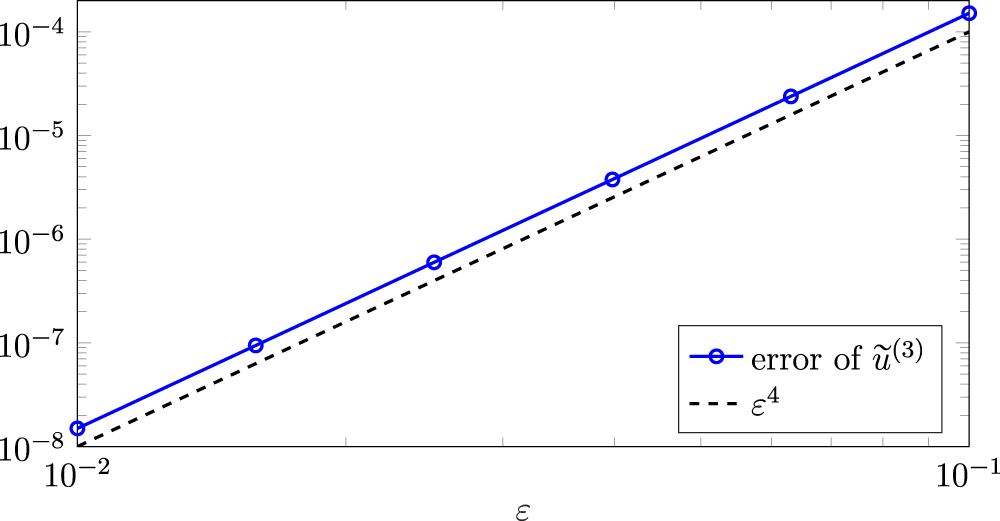

We have repeated the numerical experiment described in Section 4.2 with

Accuracy of

If we want to improve (5.17) in such a way that The largest eigenvalue

Recall that

A noteworthy exception is the Klein–Gordon system in one space dimension (

This discussion raises the question whether the convergence behavior predicted by Theorem 5.7 could be observed in a numerical example with a two-dimensional Klein–Gordon equation, because then the eigenvalues also have the property (P2). The problem is that in order to test the accuracy of the approximation

The approach to approximate the solution

Acknowledgments

The authors thank the anonymous referee for her/his helpful remarks and suggestions.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation), Project ID 258734477-SFB 1173.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.