Abstract

Nonprofits and public organizations may benefit from using evaluation data to improve programs and managerial decisions. Guided by organizational learning and institutional theory, this study investigates how partners, coalitions, and technical assistance organizations influence these organizations’ professional use of evaluation data within collaborative networks. Data were collected from 449 organizations embedded in an ego-centric network of partnerships and purpose-oriented coalitions on education reform. Results from the hierarchical linear models demonstrated that the only significant source of learning was organizations’ relationships with peer organizations in the ego-centric partnership network. This effect remained significant even after controlling for all the other factors. We discuss our findings and offer implications on integrating learning efforts at the coalition network and ego-centric network levels when motivating nonprofits and public organizations to conduct performance evaluations.

Keywords

Introduction

Nonprofits and public organizations often collaborate to address societal issues (Kelman, 2007). They may form coalitions or purpose-oriented networks (Shumate & Cooper, 2022). Due to the nature of their work, these organizations face increasing demand to collect performance data for evaluation, budgeting, promotion, and learning (Behn, 2003). Existing literature shows how these data can be used strategically to make sense of changing environments, create knowledge and innovation, and inform organizational decision-making (Gieske et al., 2019; Lee & Nowell, 2015). This study focuses on what drives nonprofit and public organizations to collect and use performance data, referred to as “evaluation capacity building”—the intentional work to increase an organization’s ability to conduct and use evaluation (Cheng & King, 2017).

Though a common practice in the private sector, evaluation capacity building is more complex for nonprofits and public organizations (Lee & Nowell, 2015), primarily due to their roles in providing public goods and difficulty quantifying effectiveness (Mitchell & Berlan, 2018). Nevertheless, evaluation capacity building could help assess activities supporting their goals and how they may improve (Gieske et al., 2019). Nonprofit evaluation literature and the accountability movement for government organizations were founded on the premise that greater evaluation data use improves both outcomes and sustainability of programs (Bourgeois et al., 2018; Carman & Fredericks, 2010; Despard, 2016).

Scholars note that more research is needed to build evaluation capacity (Cheng & King, 2017; Suarez-Balcazar & Taylor-Ritzler, 2014). In particular, more research is needed to understand evaluation capacity building in collaborative networks where organizations from different sectors work together (Mandell & Keast, 2007). Existing literature has argued that evaluation capacity building is context- and culture-dependent (Taylor-Ritzler et al., 2013). Thus, the culture of a particular organization and its community influence evaluation capacity-building activities.

We use organizational learning theory and institutional theory to examine how institutional pressures influence professional data use by public and nonprofit organizations in collaborative networks and the effectiveness of different organizational learning mechanisms. We conceptualize professional data use as a form of evaluation capacity building toward better performance (Bryan et al., 2021) and interrogate three factors at the network level: roles of partners who engaged in ego-centric collaboration, coalitions (i.e., purpose-oriented networks), and technical assistance organizations through the purpose-oriented networks. Data were collected from 449 nonprofit and public organizations embedded in both ego-centric networks of partnerships and purpose-oriented coalitions focused on education reform.

In this study, ego-centric networks capture structural networks consisting of direct collaborative relationships among organizations. These structural networks are essentially connections with immediate partners and could facilitate organizational learning (Fu, 2024). In contrast, coalitions are purpose-oriented networks that are “comprised of three or more autonomous actors who participate in a joint effort based on a common purpose” (Carboni et al., 2019, p. 210). These networks are purposefully designed and formed to serve a specific goal or function, with the intention to facilitate the collaborative achievement of an objective. To illustrate, structural networks can be compared to pairwise dancing, where couples form connections and move between partners, while purpose-oriented networks resemble a flash mob or line dancing, where all participants engage together in a collective choreography toward a shared goal.

Including both structural and purpose-driven networks in this study allows us to examine both the direct effects of partners and the trickle-down effect at the coalition level on organizational learning. Our results demonstrate that evaluation capacity can be gained through organizations’ relationships with peer organizations in a collaborative network. Moreover, they suggest that mimetic pressure (DiMaggio & Powell, 1983) and lateral collaboration function as significant inducements for organizational learning rather than the presence of technical assistance organizations or lead organizations in a purpose-oriented network (via normative or coercive pressure; DiMaggio & Powell, 1983).

This study makes several contributions. First, it represents a new application of the neo-institutional theory to test multiple learning mechanisms through a network perspective. Specifically, we examined how learning may occur through two types of networks: structural and purpose-oriented. It provides empirical evidence that direct collaboration with peer organizations in ego-centric networks is the primary driver of organizational learning, confirming mimetic pressure. Second, our study challenges the assumption that purpose-oriented network effort in performance evaluation could have spillover effects that benefit member organizations directly due to normative or coercive pressure. We found that the effects of these efforts remain at the purpose-oriented network level, perhaps to demonstrate accountability for external stakeholders. Third, findings offer practical implications for funders and coalition leaders. The results suggest that orchestrating direct collaborative activities between partner organizations may significantly impact evaluation capacity. Providing technical assistance or affiliating with purpose-oriented networks is not sufficient. Organizations need opportunities for direct peer-to-peer learning and knowledge sharing.

Organizational Learning, Institutional Theory, and Evaluation Capacity

An increased emphasis on performance measurement and program evaluation in the nonprofit sector in the United States in the late 2010s was primarily driven by the sector’s rapid growth, professionalization, and competition (Mitchell & Berlan, 2018). Evaluation has thus emerged as an essential tool for managing nonprofit accountability issues (MacIndoe & Barman, 2013; Mitchell, 2014) and social legitimacy (Benjamin et al., 2023). The literature on nonprofit performance measurement highlights the complex and often externally driven nature of evaluation practices in this sector. Scholars have argued that nonprofits may engage in evaluation to establish legitimacy and secure funding from donors, rather than for genuine performance improvement (Coupet & Schehl, 2022). This “warm glow” orientation toward measurement stands in contrast to the more internal, decision-making focus that is often the aim of evaluation capacity-building efforts.

Nevertheless, in keeping up with pressures related to performance measurement and evaluation effort, nonprofits also experience resource challenges around limited funding for data collection and hiring evaluation staff, as well as an unclear understanding of monitoring and evaluation (Carman, 2007; Carman & Fredericks, 2010). Furthermore, they may lack knowledge on how to evaluate effectiveness or apply information collected in the first place. Increasing evaluation demands often leave organizations engaging in multiple strategies to prove they are doing “good work” without improving their evaluation capacity (Carman, 2007). Thus, merely collecting data or information about performance does not guarantee its use for effective decision-making. Some scholars have further suggested that meaningless data collection and reporting can lead to mission drift or goal displacement (Ebrahim, 2005; Lee & Clerkin, 2017).

In the government sector, the need to conduct performance evaluations originated from concerns about corruption (Lee & Liu, 2022). Evaluation focuses on developing government organizations’ intrinsic ability to develop and direct the right resources to the right places at the right time (Ingraham & Donahue, 2000). However, scholars have argued that government organizations are driven by various motivations to collect performance data: stakeholder pressure, power dynamics, leadership support, and innovative culture (Agasisti et al., 2020; de Lancer Julnes & Holzer, 2001; Kroll, 2015). Performance data collected by government organizations can help fight corruption and inform and assist policymaking (Wholey, 2010). However, these activities are often presentational in that formal processes are in place to collect and disseminate data, but data are rarely used. Furthermore, government agencies face numerous challenges in program evaluation, including political and bureaucratic obstacles, power struggles between federal and state governments, tensions between the legislative and executive branches, divisions between major political parties, and conflicting constituencies or interest groups (Wholey, 2010).

Professional Data Use for Evaluation Capacity Building and Organizational Learning

Following Umar and Hassan (2019), we conceptualize evaluation capacity building as an organizational learning behavior that involves detecting and correcting errors. Recent literature has identified several goals regarding how nonprofits and public organizations can use evaluation data: professional or anticipatory use (hereafter, professional use), compliance use, and negotiated use (Lee & Clerkin, 2017). Professional evaluation data use is conducted internally to improve operations and strategic decision-making. Compliance and negotiated uses are externally applied as compliance measures toward external stakeholders (e.g., funders, politicians, and the general public). Research has consistently shown that compliance and negotiated use of evaluation data are the most common ways nonprofits and public organizations show accountability to funders (Kim et al., 2019). These short-term activities hardly contribute to long-term performance assessment (Maxwell et al., 2016). This study focuses on professional data use whose benefits are explicitly related to improving organizational practices and management decisions (de Lancer Julnes & Holzer, 2001; MacIndoe & Barman, 2013; Taylor-Ritzler et al., 2013). This approach thus allows us to focus on an organization’s internal orientation and intention toward data use, rather than just reporting to donors and other stakeholders.

Nonprofits and public organizations can be viewed as learning organizations by formalizing performance evaluation, generating knowledge and understanding about data collection and use, and informing behavior change (Levitt & March, 1988; Newcomer & Brass, 2016). Organizational learning theory is a meta-theory rooted in organizational science that examines how firms “build, supplement, and organize knowledge and routines around their activities and within their cultures, and adapt and develop organizational efficiency by improving the use of the broad skills of their workforces” (Dodgson, 1993, p. 377). From a process-based perspective, it considers multilevel factors that influence organizational learning, including the socio-organizational context of learning, individual-level factors, and macro-environmental influences (Argote & Miron-Spektor, 2011; Argyris & Schön, 1996). Generally, organizational learning is viewed as a social and institutional process where learning takes place via leadership, communication, and partnerships (Choi & Woo, 2022; Perkins et al., 2007). Organizations can learn from each other by recognizing the value of new knowledge and practices and applying them to improve performance, known as improving absorptive capacity (Lichtenthaler, 2009).

Neo-institutional Theory and Isomorphism in Organizational Learning

Although much of the research on organizational learning has been conducted in the private sector (Basten & Haamann, 2018), organizational scholars have argued that it can be applied to nonprofits and public organizations (Greiling & Halachmi, 2013; Perkins et al., 2007). Several factors induce organizational learning. For a review of those factors, we refer to concepts from the neo-institutional theory of organizational behaviors and the different forms of isomorphism, or processes that prompt “one unit in a population to resemble other units that face the same set of environmental conditions” (DiMaggio & Powell, 1983, p. 149). Neo-institutionalists emphasize the need for organizations to conform to externally determined expectations concerning their structure and functioning to obtain legitimacy and support (Minkoff & Powell, 2006). DiMaggio and Powell (1983) suggest that institutions may experience three types of isomorphism. Coercive isomorphism describes pressures to resemble other units that result from political influence or a need for legitimacy. In contrast, mimetic isomorphism is an enacted response to uncertainty experienced by the unit, which organizations resolve by enacting practices they observe in peers. Finally, normative isomorphism is associated with professionalization and enacting the practices of highly esteemed organizations (Hwang & Powell, 2009).

Isomorphism has previously been used to examine organizational learning in the for-profit setting (Powell et al., 1996). More recent literature has identified that evaluation practices may also result from isomorphic pressures external to the nonprofit. For example, a funding agency that expects evaluation or a common evaluation practice enacted by peers can motivate an organization to build evaluation capacity (Eckerd & Moulton, 2011). Although isomorphism is a long-standing idea, it has become a dominant frame for nonprofit scholars over the past 20 years. Researchers argue that nonprofits do not collect evaluation data to measure their performance but rather because it is necessary for establishing legitimacy in their environments (Benjamin et al., 2023). However, the literature still needs to address the network aspects of organizational learning via neo-institutional theory regarding learning from whom. The following section shows examples of each form of isomorphism in the context of purpose-oriented networks and performance evaluation.

Mechanisms Underlying Organizational Learning: Toward Evaluation Capacity in Collaborative Networks

Interorganizational networks are often formed in response to a problem, and they can take on different forms, ranging from collaborative networks to purpose-oriented networks or coalition networks (Carboni et al., 2019; Cooper et al., 2024; Shumate & Cooper, 2022). These networks can be important for organizational learning by offering opportunities for knowledge exchange, collaboration, and acquisition of new skills (Du & Wang, 2019; Perkins et al., 2007). In particular, direct partnerships and coalition networks driven by collaboration play critical roles in organizational learning as partner organizations are bound within an institutional field to share resources and risks (Powell et al., 1996; Uzzi, 1997).

Studies suggest that institutional pressures from external stakeholders (funders, accrediting bodies, or national associations) often motivate nonprofit evaluation activity (Carman, 2011) and that government organizations may benefit from agreements on approaches to evaluation and the creation of performance partnerships involving joint accountability (Moynihan & Kroll, 2016; Wholey, 2010). These findings indicate that interorganizational partnerships may function as a conduit for organizational learning toward evaluation capacity building. However, key questions remain open, including which learning mechanisms are available and from whom organizations learn.

Learning From Organizational Partners Within Ego-Centric Networks

Organizational learning related to evaluation capacity can occur through partner organizations within a collaborative initiative. Organizations form alliances and coalitions with other organizations based on a common purpose (Bryson et al., 2015). We refer to these interorganizational collaborative relationships as networks to capture the shared affiliations and the interdependence among organizational activities (Isett et al., 2011). Partners in these networks may come from various sectors, including nonprofits, private companies, and government agencies (Boyer, 2016; Wang et al., 2020). They are thus embedded in an institutional environment where organizations’ decisions are subject to the norms and social expectations deemed appropriate (AbouAssi et al., 2021).

Interorganizational collaboration is a communicative process in which interdependent organizations or stakeholders work together to solve problems (Keyton et al., 2008). However, the nature of these communication practices may depend on the structures enacted. Existing research has suggested multiple forms of interorganizational relationships in response to local social issues, including community collaboration (Heath & Frey, 2004), bona fide networks (Cooper & Shumate, 2012), and social impact networks (Shumate & Cooper, 2022), and recommended that nonprofits maintain different types of relationships (Fu & Cooper, 2021).

There are long-standing arguments that engaging in collaborative or network activity can help organizational learning (Knight & Pye, 2005; Kraatz, 1998; Powell, 1998). More recent research affirms this argument. Moreover, varied network structures are seen as contributing to learning, inviting further exploration of different network types and methodologies (Anand et al., 2021). In this study, we examine two types of collaborative networks. The first type is ego-centric partnership networks that describe sets of collaborating organizations that engage in data-sharing, joint delivery of service or programs, joint budgeting, staff cross-training, or policy work. In ego-centric networks, partners are only connected if they directly engage in these collaborative practices with the other organization (Scott, 2000). The second type is coalitions, which, in contrast, are purpose-oriented networks of organizations working toward (a) common goal(s). Organizations join coalitions through membership, regardless of participation or activities. Considering these two types of networks enables us to examine both the direct effect of learning from collaborating partners and the trickle-down effect of network affiliations.

In ego-centric networks, working alongside other agencies may allow mimetic isomorphism in nonprofit evaluation (Lee & Clerkin, 2017), which refers to organizations’ tendency to imitate each other when facing uncertainty or unclear goals (DiMaggio & Powell, 1983). For government organizations, existing literature has documented their mimetic behaviors in program evaluation through collaborative relationships with their peers (Piña & Avellaneda, 2018). Some even argue that government organizations are more vulnerable to this institutional process than other sectors (Frumkin & Galaskiewicz, 2004). Based on these studies, we argue that ego-centric partnerships help to create a culture that motivates nonprofits and public organizations to share knowledge and insights related to data use in program evaluation (Milway & Saxton, 2011). Therefore, we propose the following:

Learning From Coalitions

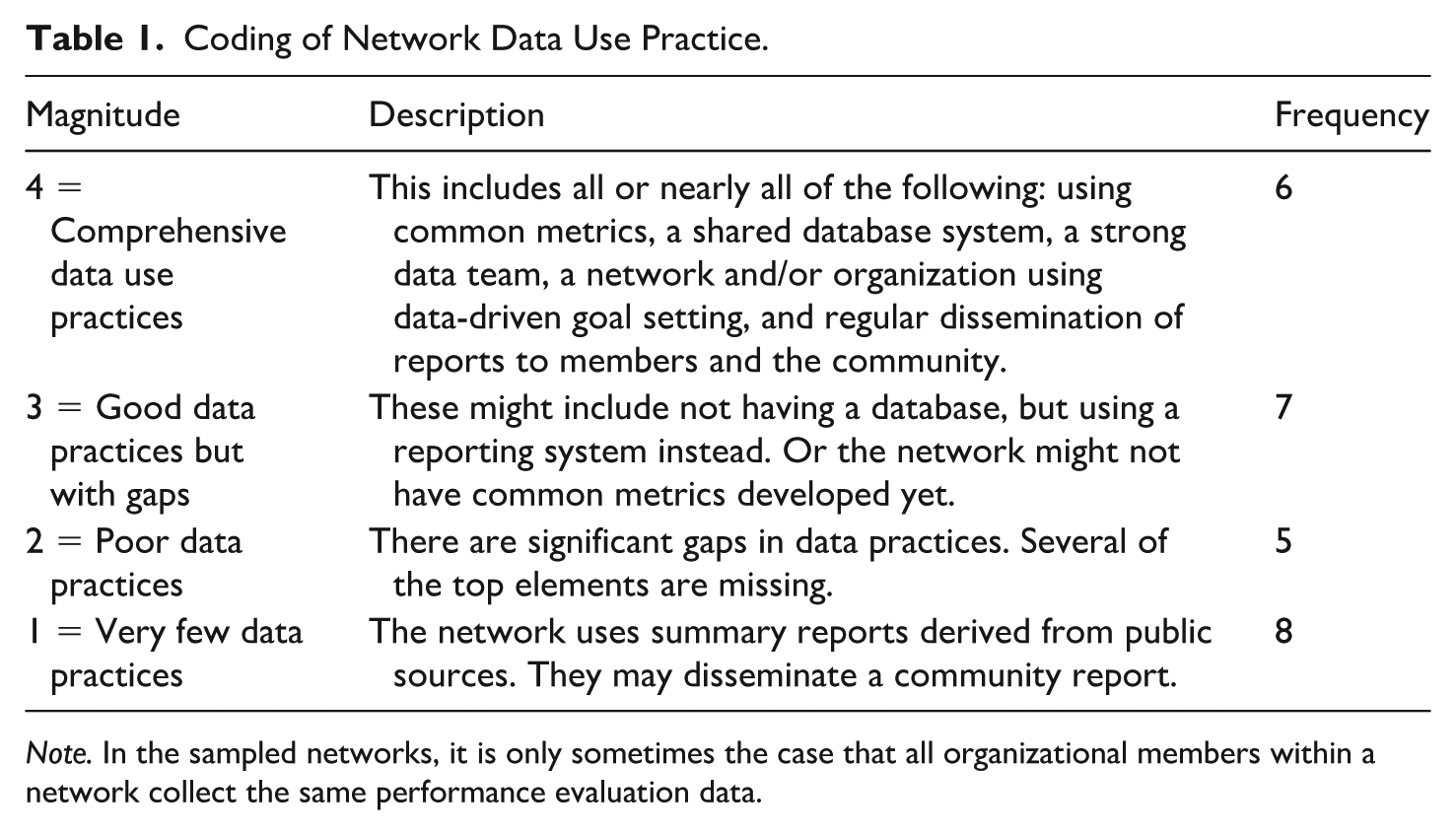

Besides the influence of partner organizations in ego-centric networks, coalitions that function as collaborative networks could also improve evaluation capacity building in nonprofits and public organizations. Coalitions are purpose-oriented networks and can operate with various governance models, which influence how organizational members provide input toward collective goals, how coordination among partners occurs, and how network effectiveness is assessed (Nowell & Albrecht, 2024; Provan & Kenis, 2008; Shumate & Cooper, 2022). Due to their collaborative nature, coalitions often set rules and practices regarding what metrics to track and how member organizations share data and evaluation results (Wolff et al., 2016). Coalition networks with clearly defined metrics to track, a shared database among partners, a robust data team, and frequent communication about data-driven reports have good data use practices (Kania & Kramer, 2011). In contrast, coalition networks that do not track data to support their theory of change, lack data systems, or report data haphazardly in response to stakeholder demands have poor data use practices (Shumate & Cooper, 2022).

In coalitions with strong data practices, member organizations may face coercive pressure in learning, which refers to external forces compelling an organization to conform to certain practices due to funding requirements, influences of specific stakeholder groups, or fidelity of a collaboration model (Wang et al., 2020). As organizations face increasing scrutiny from coalition lead agencies, they might be more motivated to adopt specific data use practices to maintain their legitimacy in the coalition.

Similarly, coalitions may also generate normative pressure, which captures the tendency for organizational activities in a specific field to homogenize over time and demonstrate professionalization (DiMaggio & Powell, 1983). Evaluation goals for coalitions move beyond individual organizations, encompassing information sharing and service coordination. Evaluation data aid coalitions in assessing resource allocation, enhancing community outreach, and influencing policy change (Fyall & McGuire, 2015). Organizations that actively participate in a coalition with good data use practices could learn through observation. Normative pressure has been found to influence how nonprofits and government organizations adopt performance data practices (George et al., 2020; Lee & Clerkin, 2017). Member organizations can formally or informally conform to normative practices and organizational protocols about evaluation capacity through training, meetings, and workshops in the network (Ofek, 2015).

To summarize, organizational learning can occur through affiliations with coalition networks that engage in strong data practices due to either coercive or normative pressures. We propose:

Learning From Technical Assistance Organizations

Organizational scholars have long recognized the role of technical assistance organizations in promoting effective evaluation (Katz & Wandersman, 2016; Olson et al., 2020; Umar & Hassan, 2019). These organizations function as consultants, facilitating conventions and coordinating coalitions (Reisman, 2024). Normative pressure originates primarily from the need for professional standardization (Zorn et al., 2011). From the lens of organizational learning, technical assistance organizations can generate normative pressure by demonstrating good practices in relevant industries and encouraging the adoption of best practices (Gage et al., 2024; Gibbs et al., 2009; Olson et al., 2020; Umar & Hassan, 2019).

Previous research has primarily examined the role of technical assistance organizations in supporting organizational evaluation (Gibbs et al., 2009). We extend this line of research to examine whether evaluation technical assistance in purpose-driven networks or coalitions in which organizations are embedded has spillover effects (Wolff, 2001). Nonprofit and government staff can benefit from technical assistance at the network level. Coalitions may suffer from inconsistent member attendance and data collection (Jarosewich et al., 2013). This inconsistency in participation can be attributed to a lack of communication to achieve consensus on critical metrics, a lack of user-friendly formats to follow, or simply a lack of enforcement. Technical assistance can help coalitions create evaluation systems that serve the network and its organizational members.

However, the effect of technical assistance organizations on organizational learning is contingent upon other factors. For example, technical assistance typically needs to be provided intentionally and over time to be effective (Meehan et al., 2013), and to be widely applied across participating organizations (Gage et al., 2024). Nevertheless, we expect that technical assistance organizations could foster a culture of accountability and continuous improvement, encouraging member organizations in a purpose-oriented network to adopt and align with good data practices. In other words, normative pressure driven by a collective commitment to enhance performance could be in place. Therefore, we propose the following:

Method

This study uses interview and survey data from 26 cross-sector coalitions working to improve educational outcomes. These coalitions were sampled in two steps. (a) We compiled a database of collective impact coalitions working on education by identifying member affiliations with national networks (e.g., StriveTogether, Collective Impact Forum, Ready by 21, and the Campaign for Grade-Level Reading). Collective impact coalitions follow a structured partnership model in which partners organize under a common agenda, share measurement data, engage in mutually reinforcing activities, and communicate regularly under the leadership of a backbone organization (Kania & Kramer, 2011). We successfully recruited 13 collective impact coalitions that consented to participate. (b) We used the following matching criteria to recruit another 13 coalitions that were less structured than the first half of the sample: geographic (e.g., population density, coverage area, and the number of school districts or municipalities), demographic (e.g., race and poverty rate), and labor market (e.g., unemployment rate and median income). The sampled coalitions were located in 13 states.

The matching process was conducted to minimize institutional differences across educational contexts and to enable the research to compare the outcomes of networks of different design features while controlling for potential confounding factors. This matching technique helps strengthen the internal validity of the study by reducing the likelihood that observed differences in outcomes are attributable to underlying differences in the coalition environments. The use of matched pairs from the same state also ensured consistency in the policy and regulatory context affecting the coalitions, further enhancing the ability to isolate the impact of the coalition activities on educational improvements. This paper is part of a larger study in which the matching strategy was incorporated into the design, but it is not directly pertinent to the current investigation.

Coalitions in our sample varied on several factors: the model of collaboration (collective impact or not), year of establishment (2006 to 2015), location, size (M = 45.964, S.D. = 27.594), composition (what types of organizations are in the network), and social network structure. Notably, nonprofits, education organizations (e.g., K-12 public schools, early childhood schools), and government agencies (e.g., the Department of Public Health, Department of Children and Families, Department of Labor, and Department of Social Services) made up the majority of each of these coalitions, with less representation from the business sector. There is no co-membership across coalitions.

Network Interviews

Semi-structured interviews, each lasting about an hour, were conducted with the coalition leads of all 26 communities. Questions covered each coalition’s history, mission statement, funding sources, strategies for aligning partners, community engagement activities, and data collected. Each interviewee also provided a roster of member organizations. We also requested archival data from all these communities, including founding documents such as Memoranda of Understanding (MOUs), meeting notes, and partner rosters. The interviews were recorded and transcribed with the interviewees’ permission. The interview and archival data were used to measure coalition-level attributes.

Surveys of Organizations in Coalitions

A total of 920 organizations were identified from these coalition networks, using rosters collected from network leads. We collected data from these sampled organizations through a phone-based survey. We used emails and phone calls to recruit participants. Our final sample included 449 surveys (51% response rate) from 273 nonprofits and 176 government agencies. Respondents who filled out the survey occupied a leadership position at their organization (e.g., executive director, CEO, community engagement director of a nonprofit, or department director at a government agency). Likewise, organizations in these coalitions also varied in size, scope, budget, and other organizational factors.

Questions in the survey included items about the organizational sector, ego-centric partnerships, and data use activities. When reporting ego-centric partnership activities, each organization was given the roster of all member organizations in its affiliated coalition and was asked to identify as many partners as possible for specific collaboration activities. The collaboration activities were: having positive and close relationships with senior leaders; competing for public and private funds, staff, and clientele; sharing data; delivering joint services or programs; joint budgeting; staff cross-training; and policy work. The reported data were used to construct an ego-centric network for each sampled organization. We then used the ego-centric data to calculate the average data use score of each organization’s partners; thus, our measure was less sensitive to missing data.
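The partner-average measure described above can be illustrated with a minimal sketch. The organization names, scores, and nominations below are hypothetical, and the rule of skipping partners without an observed score is an assumption about how missing data might be handled, not a description of the study's actual procedure.

```python
from statistics import mean

# Hypothetical professional data use scores; None marks a nonrespondent.
data_use = {"org_a": 4.0, "org_b": 3.0, "org_c": 5.0, "org_d": None}

# Hypothetical ego-centric nominations: ego -> partners named from the roster.
partners = {
    "org_a": ["org_b", "org_c", "org_d"],
    "org_b": ["org_a"],
    "org_c": [],
}


def partner_average(ego, partners, scores):
    """Mean data use score of the ego's named partners.

    Partners with no observed score are skipped (an assumption), and
    None is returned when no partner has an observed score.
    """
    observed = [
        scores[p] for p in partners.get(ego, []) if scores.get(p) is not None
    ]
    return mean(observed) if observed else None


averages = {ego: partner_average(ego, partners, data_use) for ego in partners}
```

Under this rule, an ego's score is still defined when some named partners did not respond, which is one way an average can be less sensitive to missing data than a measure requiring complete networks.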

Measures

Coalition-Level Variables

Two coalition-level variables are measured using interview data with coalition leaders. They were corroborated with archival documents requested from the coalitions.

First, coalitions that use data to support theories of change and have developed the technical and human resources needed to track and report their data have

Coding of Network Data Use Practice.

Note. In the sampled networks, it is only sometimes the case that all organizational members within a network collect the same performance evaluation data.

Second, some coalitions received

We also included several control variables at the coalition level. Coalition age was measured as the years since the coalition was founded (M = 8.85, S.D. = 6.60). Coalition size was measured as the number of organizational members (M = 34.42, S.D. = 24.12). The coalition model was measured as a categorical variable where 1 = collective impact and 0 = non-collective impact.

Organizational-Level Variables

First, the sector is measured to indicate whether an organization is a nonprofit (n = 283) or a public organization (n = 188). This variable was created to capture the potential differences between public organizations and nonprofits. Second,

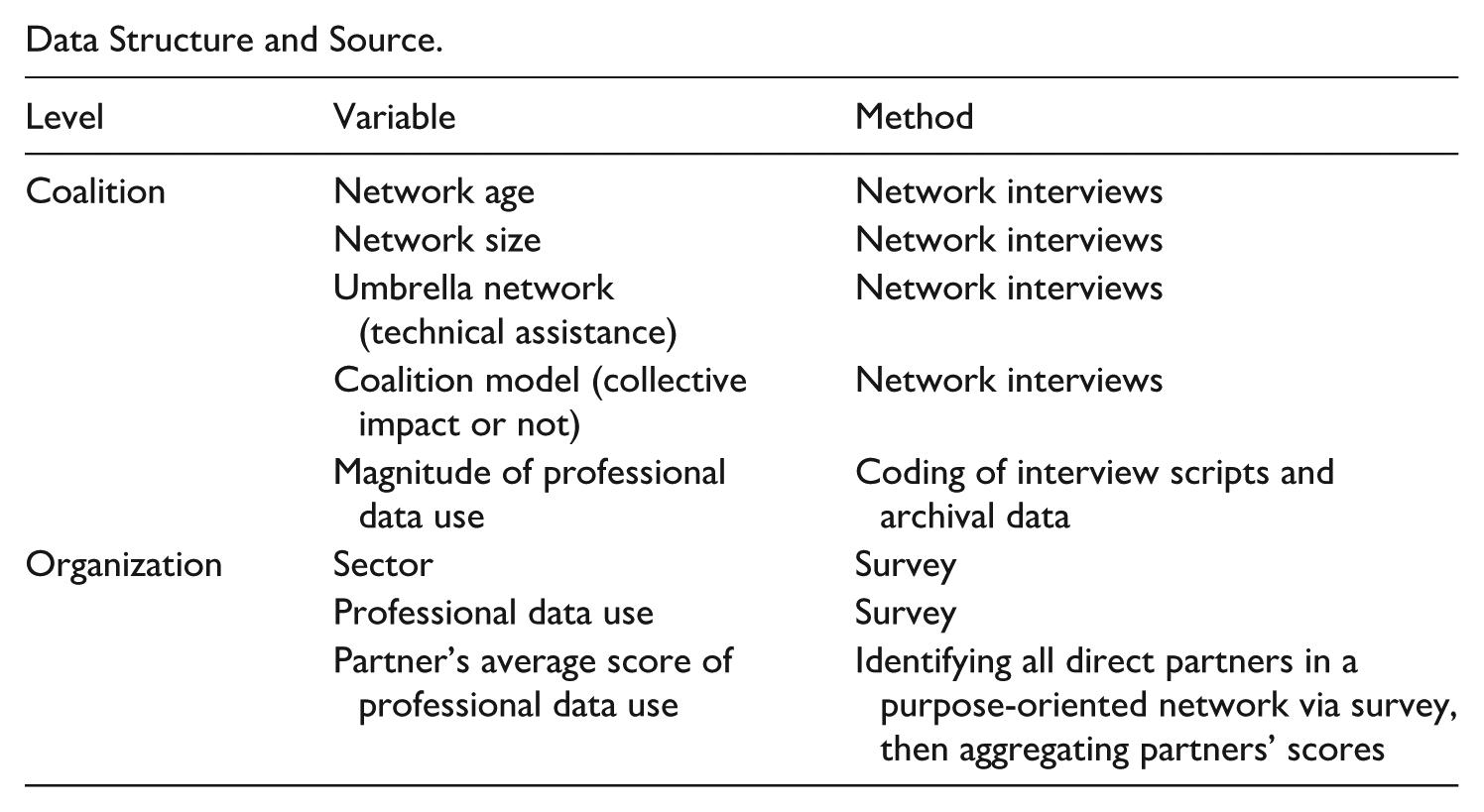

Analysis

A summary of key variables at both organizational and coalition levels and data collection methods is shown in Appendix B. To test the hypotheses, we used hierarchical linear modeling (HLM; Raudenbush & Bryk, 2002), implemented with the Linear and Nonlinear Mixed Effects Models package nlme in R (Pinheiro et al., 2021). This allowed us to examine both fixed effects (i.e., population-level effects) and random effects (i.e., subject-specific effects) in a single model. Following the guidance of Raudenbush and Bryk (2002), we created several models to assess the incremental contribution of specific variables to the overall model fit and the amount of variance explained. The first model was the null model, a random coefficient linear mixed-effects model estimated by maximum likelihood. The second model contained all the covariates at both the organizational and network levels. The final model contained all the factors from Model 2 and all the independent variables intended to test our proposed hypotheses.
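For readers less familiar with HLM, a two-level random-intercept specification consistent with this description can be written as follows. The notation follows common HLM conventions and is an assumption for illustration, not the authors' reported equations: Y_ij is the professional data use of organization i in coalition j, X denotes organizational-level predictors, and W denotes coalition-level predictors.

```latex
\begin{align}
  % Level 1: organization i nested in coalition j
  Y_{ij} &= \beta_{0j} + \sum_{p} \beta_{p} X_{pij} + r_{ij},
    & r_{ij} &\sim N(0, \sigma^{2}) \\
  % Level 2: coalition-specific intercept
  \beta_{0j} &= \gamma_{00} + \sum_{q} \gamma_{0q} W_{qj} + u_{0j},
    & u_{0j} &\sim N(0, \tau_{00}) \\
  % Null model: no predictors at either level
  Y_{ij} &= \gamma_{00} + u_{0j} + r_{ij}
\end{align}
```

Under this sketch, the null model partitions the outcome variance into a between-coalition component and a within-coalition component, which is the baseline against which the later models are compared.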

Results

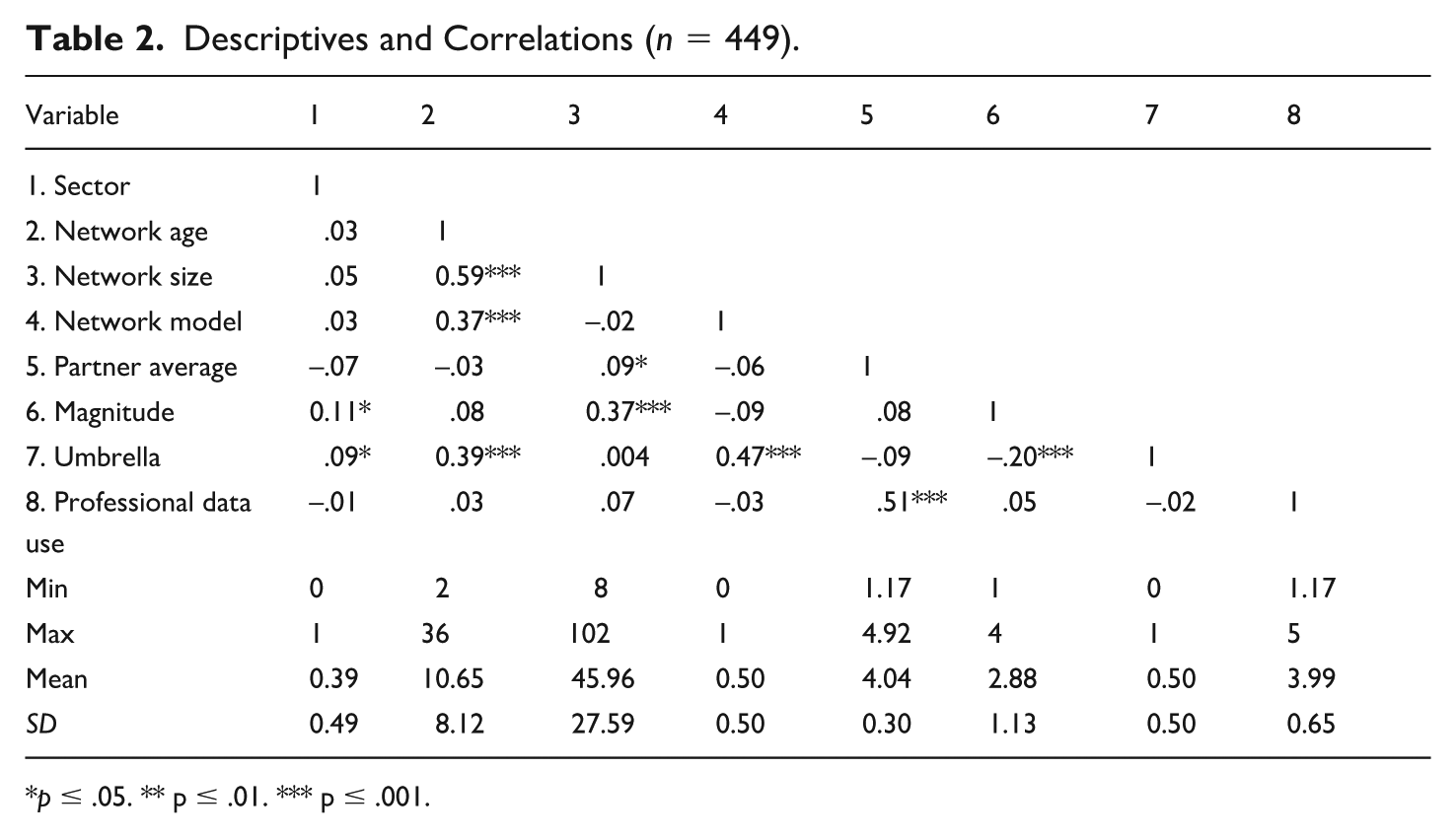

We present descriptive statistics for all variables and the bivariate correlations among them in Table 2. Significant correlations occurred between network size and age, network age and collective impact coalitions, network age and umbrella networks, network size and magnitude of data use practices, and professional data use practices and partners’ average score of data use.

Descriptives and Correlations (n = 449).

*p ≤ .05. **p ≤ .01. ***p ≤ .001.

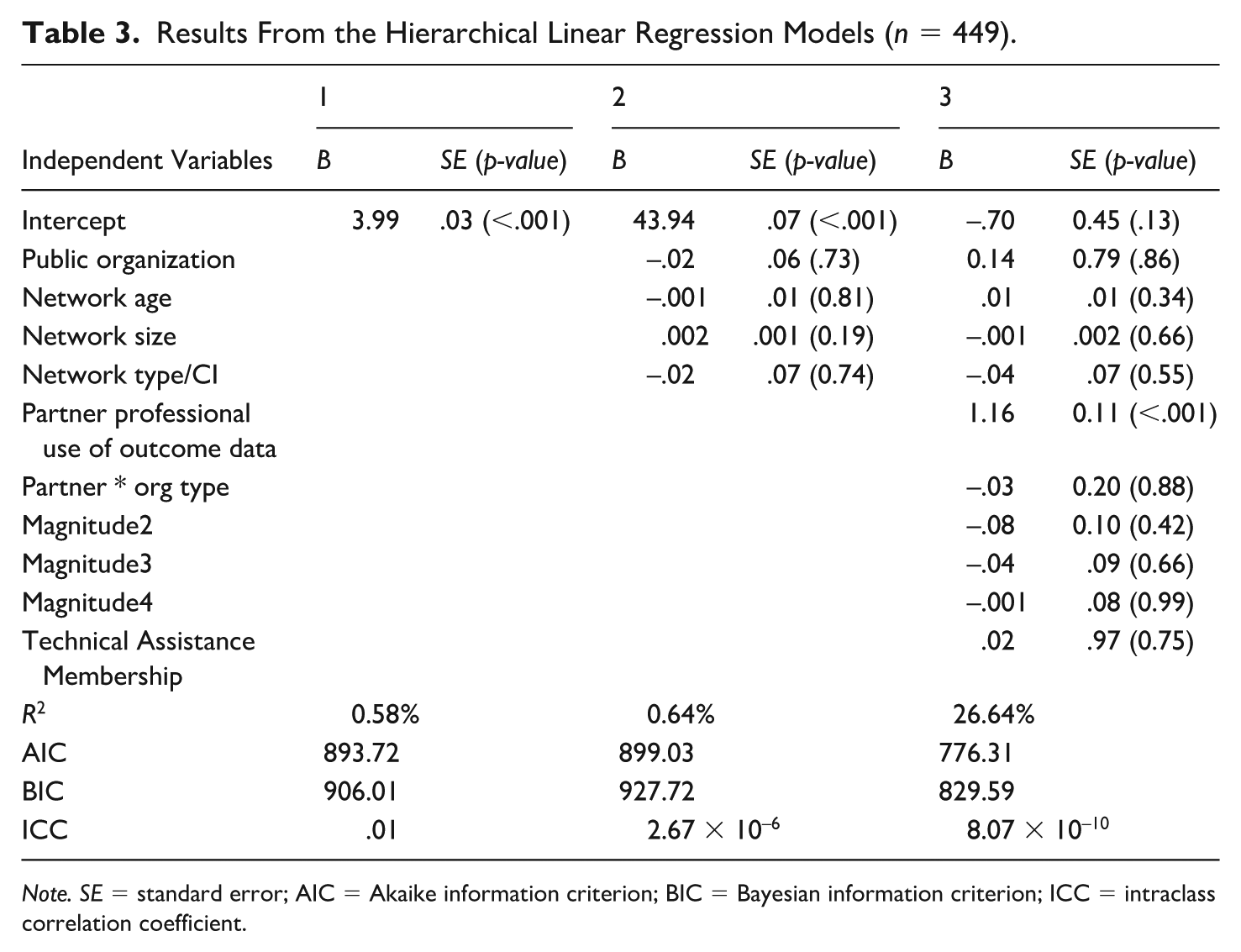

Table 3 shows findings from the three models tested with HLM. As shown in Model 2, none of the network-level (level 2) factors were significant. In Model 3, which included all the level 1 and level 2 factors, the only significant effect came from partners’ professional data use (1.16, S.E. = 0.11, p < .001). H1 was thus supported. H2 and H3 were not supported. The results indicate that coalition-level factors (i.e., coalition data use practices and technical assistance membership) did not influence organizations’ professional data use, as evidenced by a low intraclass correlation coefficient (ICC). The low ICC suggests that an extremely small proportion of the total variance in professional data use at the organizational level is attributable to network-level influence.
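For reference, the ICC in a two-level random-intercept model is commonly computed as the between-coalition variance divided by the total variance. The sketch below is a minimal illustration; the function name and the variance components are hypothetical and are not the estimates from this study.

```python
def intraclass_correlation(between_var, within_var):
    """Share of total variance attributable to the grouping (coalition) level."""
    total = between_var + within_var
    if total <= 0:
        raise ValueError("Total variance must be positive.")
    return between_var / total


# Hypothetical variance components, chosen only to illustrate a low ICC.
icc = intraclass_correlation(between_var=0.05, within_var=4.95)
```

With these illustrative values, roughly 1% of the variance lies between coalitions. That is the kind of pattern a low ICC describes: organizations in the same coalition are barely more alike than organizations in different coalitions.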

Results From the Hierarchical Linear Regression Models (n = 449).

Note. SE = standard error; AIC = Akaike information criterion; BIC = Bayesian information criterion; ICC = intraclass correlation coefficient.

We conducted multiple robustness checks to verify the findings. First, we ran a multiple linear regression using all the IVs and covariates in one model (including the interaction effect of organizational type and professional data use by partners) without considering the multilevel or cluster effects that may occur within networks. The model was significant, F(10, 434) = 15.72, p < .001, adjusted R2 = 0.25. The only significant effect again came from partners’ average professional data use activity (B = 1.16, p < .001), confirming the findings from the HLM.

Second, we ran two separate multiple linear regression models: one with only organizational-level variables and the other with only network-level variables. The organizational model was significant, F(3, 441) = 52.25, p < .001, adjusted R2 = 0.26, with the only significant factor being partners’ professional data use activity (B = 1.15, p < .001). The network model was not significant, F(7, 437) = 0.76, p = 0.62, adjusted R2 = -.004. The negative adjusted R2 indicates that the model explains the variation in the DV no better than simply using the mean of the DV as the prediction; thus, factors beyond the network-level variables must account for the variance. These two steps confirmed the findings from the HLM reported in the manuscript.
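The adjusted R2 values in these robustness checks can be approximately recovered from the reported F statistics and degrees of freedom using the standard OLS identities. The sketch below is a worked check and not the authors' code. The function names are hypothetical, and because the reported F values are rounded, the recovered figures are approximate.

```python
def r_squared_from_f(f_stat, df_model, df_resid):
    """Recover R2 from an overall F test: F = (R2 / p) / ((1 - R2) / df_resid)."""
    return (f_stat * df_model) / (f_stat * df_model + df_resid)


def adjusted_r_squared(r2, df_model, df_resid):
    """Adjusted R2 = 1 - (1 - R2) * (n - 1) / (n - p - 1), with n - 1 = p + df_resid."""
    return 1 - (1 - r2) * (df_model + df_resid) / df_resid


# Network-level model: F(7, 437) = 0.76
r2_network = r_squared_from_f(0.76, 7, 437)
adj_network = adjusted_r_squared(r2_network, 7, 437)

# Organizational-level model: F(3, 441) = 52.25
r2_org = r_squared_from_f(52.25, 3, 441)
adj_org = adjusted_r_squared(r2_org, 3, 441)
```

For the network-level model, the unadjusted R2 is about 0.012, and the penalty for seven predictors pushes the adjusted value slightly below zero (about -0.004). A negative adjusted R2 therefore signals predictors with essentially no explanatory power, not a computational error.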

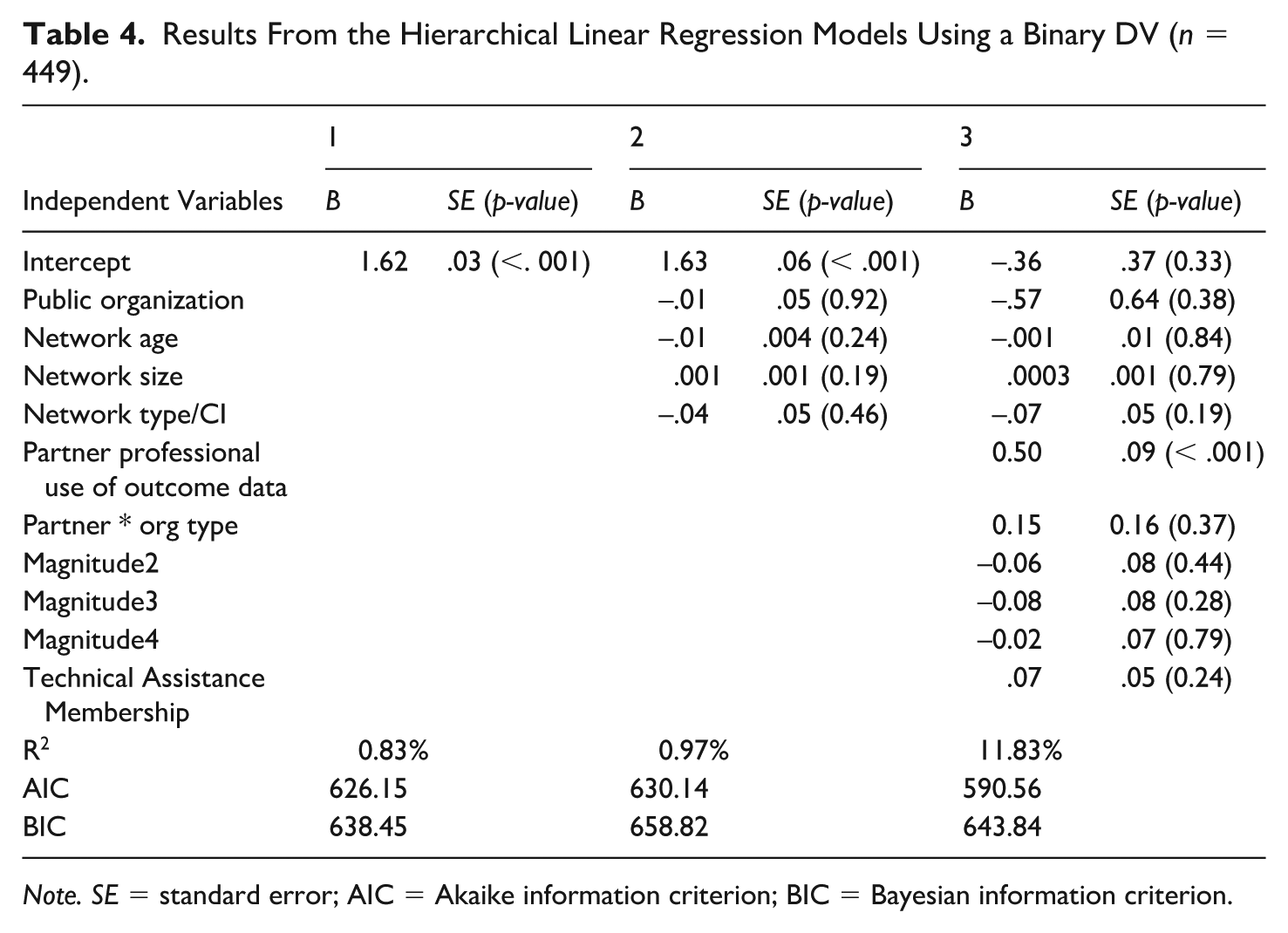

Finally, we recoded the DV into a binary variable and reran the HLM. The three new models did not yield a higher R2. Findings remained consistent: the only significant effect came from partners’ average score in professional data use (see Table 4 for the summary). That the findings hold across these robustness checks suggests that the results are not overly sensitive to specific modeling choices or method assumptions. This provides greater confidence in the reliability and validity of the conclusions drawn from the original analysis, strengthens the evidence, and supports the generalizability of the study’s conclusions.

Results From the Hierarchical Linear Regression Models Using a Binary DV (n = 449).

Note. SE = standard error; AIC = Akaike information criterion; BIC = Bayesian information criterion.

Discussion

Guided by organizational learning and neo-institutional theories, this study examines how partners, coalitions, and technical assistance memberships influence evaluation capacity in nonprofits and public organizations, measured as the use of evaluation data to improve professional practices. Results from HLM showed that partner organizations’ professional data use was the only significant factor, indicating that the only driver underlying these organizations’ evaluation capacity building was the influence of their peer organizations within a purpose-oriented network. We discuss our main findings through the lens of organizational learning and offer implications for how interorganizational partnerships can function as effective pathways for organizational learning toward evaluation capacity building.

Organizational Learning and the Lack of Influence of Coalitions

In response to increasing demands to demonstrate accountability and social legitimacy (e.g., Benjamin et al., 2023), nonprofits and public organizations often collect evaluation data without possessing the information and knowledge to benefit from it. As a result, their use of evaluation data to inform strategic decision-making and improve organizational practices is also limited. In other words, organizational learning related to evaluation capacity often only captures the single loop within the existing goals organizations have set (Ebrahim, 2005). This study hypothesized that mimetic, normative, and coercive pressures (e.g., DiMaggio & Powell, 1983) arising from ego-centric networks and purpose-oriented networks would motivate nonprofits and public organizations to use evaluation data.

In networks with good data use practices, nonprofits and public organizations showed no greater professional use of evaluation data than those in networks with poor practices. Literature indicates that effective network governance is crucial for managing the interdependence of member organizations when conducting evaluations (Ofek, 2015). Many education reform coalitions lack established norms and rules for tracking metrics, sharing data, and utilizing data to achieve network goals (Wang et al., 2020). Variation in member involvement, such as differing attendance at coalition meetings, may also affect engagement in agenda-setting related to data use (Jarosewich et al., 2013). This suggests that neither coercive nor normative pressure was activated.

Given the prevalence of technical assistance organizations offering support in evaluation capacity to coalitions, we expected that member organizations might face more normative pressure to learn about how to use evaluation data to inform decision-making (Wolff, 2001). However, we found that consulting roles of technical assistance organizations did not necessarily result in strong professional data use. This challenges the assumption that technical assistance organizations effectively promote professional data use or facilitate follow-up assessments on implementation plans (Olson et al., 2020). This finding suggests that technical assistance to coalitions does not create spillover effects for its organizational members.

The lack of effect at the coalition level suggests that membership provides insufficient incentives or observation opportunities to improve evaluation capacity. The lack of coalition-level trickle-down effects may indicate that the potential costs of collaborative efforts surpass the benefits (Nowell & Albrecht, 2024). Network governance and the varying levels of organizational participation may explain why training, technical assistance, and accountability requirements at the network level do not result in the formation of community ways of building evaluation capacity.

Mimetic Pressure and Organizational Learning Through Ego-Centric Partnerships

Although prior research on neo-institutional theory has suggested that organizations learn through interorganizational collaboration (Powell et al., 1996), more knowledge is needed regarding learning mechanisms. Some studies have demonstrated the importance of mimetic isomorphic pressures in adopting nonprofit evaluation methods (Eckerd & Moulton, 2011). The network context of this study offers insights into the peer dynamics that may shape evaluation practices. The only significant factor from our analysis is peer organizations’ professional data use. In other words, ego-centric partnerships within a coalition signal more than shared affiliation and could help build a culture to motivate nonprofits and public organizations in evaluation capacity building (Milway & Saxton, 2011).

A classroom analogy provides an illustration. Classrooms may provide opportunities for active learning (e.g., students participate in and reflect on activities) and passive learning (e.g., students consume material, typically through lectures; Bonwell & Eison, 1991). Likewise, coalitions may encourage organizations in ego-centric partnerships to participate in activities such as joint programming or staff training. In contrast, coalitions may also feature more passive learning strategies in which organizations do little together outside of attending meetings. Ego-centric partnerships may provide a better context for active learning and professional data use.

Such a distinction makes sense for professional data use because it relies on tacit rather than explicit knowledge. Tacit knowledge describes “know-how,” or the processes and techniques one uses to accomplish a goal. In contrast, explicit knowledge refers to “know what,” or codified knowledge. Research on interorganizational learning has long recognized that partnerships are ideal for gaining tacit knowledge (Mariotti, 2012). The trust and close proximity that partnerships between organizations provide offer ideal opportunities for observing and trying out tacit knowledge.

Similarly, professional data use requires know-how. Although many evaluation guides are available to help leaders learn how to collect the correct data and apply the appropriate analysis techniques (e.g., Fitzpatrick et al., 2017), they do not necessarily demonstrate how an organization might use the data in everyday management decisions or refine its practice. Knowing how to embed these techniques into organizational routines is tacit knowledge and may best be learned in the context of active partnerships.

Prior research (e.g., Mandell & Keast, 2007) suggests that sectors are rarely explored alongside one another despite the potential insights that may come from this approach. Findings indicate that no sector-level characteristics would generate additional challenges when motivating organizations from either sector to engage in organizational learning toward evaluation capacity building. Regardless of their sector affiliation, public organizations and nonprofits can equally practice mimetic learning via their peer partners in the same coalition.

Implications for Organizational Learning

Since nonprofits and public organizations have limited time for collaboration, this study offers insights into where leaders who want to improve their evaluation capacity should invest their time. Participating in coalitions is time-consuming; consequently, partner organizations may participate to varying degrees. If the goal is to increase evaluation capacity, their time is better spent on learning partnerships with peers than on joining coalitions with greater evaluation capacity.

The effect of lateral-level knowledge sharing among partner organizations also demonstrates the need for partnerships to emulate know-how. It suggests significant challenges exist when networks aim to integrate organizational learning through the traditional consulting model (Boyer, 2016). Therefore, another implication of this research is that coalitions may leverage direct partnerships among member organizations to formalize expectations about evaluation capacity and select leading organizations to champion performance evaluation efforts. These champion organizations can be local schools that use performance data to serve students better or nonprofits with the knowledge or experience of using performance data for community engagement.

Furthermore, technical assistance organizations can also consider incentivizing peer organizations to implement evaluation programs (Brown & Duguid, 1991). Regardless of their engagement as a partner in a coalition, funders may consider simplifying data reporting formats and advocating evaluation use beyond accountability purposes. Funders can also invite beneficiary organizations to lead the effort in evaluation capacity building to showcase that evaluation data can serve organizations’ strategic planning.

Limitations and Future Research

Several limitations of the current study warrant future research. First, the outcome variable was measured using self-reported behavioral data. Future research could collect other data sources, such as reports and action plans generated with evaluation data tied to actual behavioral outcomes, to examine if the effect of partner organizations remains significant. In addition, future research can directly measure if an organization has an evaluation team or how much money they have for data use activities as a proxy of their evaluation capacity.

Second, we did not collect data on organizations’ engagement with coalitions or technical assistance organizations. Coalitions or technical assistance organizations may already have influenced their data use practices. Given an organization’s affiliation with a coalition or support from a technical assistance organization, we assumed that resources regarding data use were available to it. In other words, normative pressure was measured indirectly by comparing the level of performance evaluation to their partners and to the network as a whole. We call for future research to explore more direct measures (e.g., ratings of certain norms in their own sector, or behavioral variables such as participation in technical training or meetings hosted by coalition backbone organizations or organizations that provide consulting services) and to verify whether they affect the learning experience.

Third, cross-sectional data and HLM do not reveal causality. The significant effect revealed from direct partners’ data use practices could only be inferred as a correlation. Future research should incorporate a longitudinal study design to examine changes in professional data use over time. It should also apply relevant methods to test causal relationships among factors.

Last, the current study focused solely on isomorphism. We acknowledge that other theoretical frameworks, such as resource dependence theory, contingency theory, or stakeholder theory, could have informed the research questions and study design. We call for future research to examine other frameworks together with longitudinal data to verify our findings further.

Conclusion

Nonprofits and public organizations face increasing demands for evaluation, yet simply collecting data does not equal improving organizational performance. We draw on institutional and organizational learning theory (DiMaggio & Powell, 1983; Dodgson, 1993) to unpack what drives these organizations to use performance data to inform decision-making and modify organizational norms and practices within collaborative networks. Findings demonstrate challenges and the importance of integrating knowledge sharing at the organizational and coalition levels (Greiling & Halachmi, 2013; Perkins et al., 2007). We highlight the critical role that peer organizations in the ego-centric network play in facilitating evaluation capacity building.

This study makes several contributions. First, it applies the existing neo-institutional theory to test multiple learning mechanisms in networks (i.e., effects of structural networks and purpose-oriented networks). Empirical evidence demonstrates that despite multiple venues of organizational learning to build evaluation capacity, direct collaboration with partner organizations is the only driving force. When partner organizations have good data use practices, mimetic pressure through structural ego-centric relationships results in a focal organization’s willingness to engage in evaluation capacity building.

Second, our study challenges the assumption that the network-level effort in performance evaluation could have spillover effects to benefit member organizations directly due to normative or coercive pressure. Nonprofits and public organizations sampled in this study were not subject to normative or coercive pressure to build evaluation capacity in coalition networks. The spillover effect may be associated with surface-level evaluation to demonstrate accountability for external stakeholders. Evaluation data collected to please funders and network leaders do not necessarily serve organizational goals or stakeholders (e.g., employees and clients).

Third, our findings offer practical implications that funders and coalition leaders should focus on fostering strong relationships and collaborative activities between partner organizations. Simply providing technical assistance or having a network affiliation may not be sufficient. Organizations need opportunities for direct peer-to-peer learning and knowledge sharing within ego-centric networks. Funders and coalitions can better support public and nonprofit organizations in developing the evaluation skills and practices needed to maximize performance and impact.

This study demonstrates the importance of evaluation capacity building for improving the offerings of public organizations and nonprofits in the context of education reform. Through the lenses of organizational learning theory and institutional theory, our findings offer critical inquiries into how to govern coalition networks and motivate network members to engage in good data practices, what it means to be an active learner in a coalition, and how to integrate network and organizational-level learning toward performance evaluation.

Footnotes

Appendix A

Appendix B

Data Structure and Source.

| Level | Variable | Method |
|---|---|---|
| Coalition | Network age | Network interviews |
| | Network size | Network interviews |
| | Umbrella network (technical assistance) | Network interviews |
| | Coalition model (collective impact or not) | Network interviews |
| | Magnitude of professional data use | Coding of interview scripts and archival data |
| Organization | Sector | Survey |
| | Professional data use | Survey |
| | Partner’s average score of professional data use | Identifying all direct partners in a purpose-oriented network via survey, then aggregating partners’ scores |

Appendix C

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The Army Research Office Grant W911NF1610464 funded the work.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The datasets analyzed during the current study are available in the IPCSR repository. All relevant data and analytic scripts that support the findings of this study are included. Access is unrestricted, and the files are made available under a CC BY license to permit reuse, subject only to proper citation of the dataset and associated publication.
