Abstract

Involving volunteers to perform tasks through crowdsourcing projects is gaining popularity. However, attracting volunteers and keeping them engaged throughout a project poses great challenges for project managers. This article analyzes the effectiveness of recruiting and engagement instruments on volunteers’ activity. A mixed-method approach has been used, including interviews with project managers and quantitative data from a large Dutch crowdsourcing platform. The research results show that crowdsourcing projects benefit from being part of a platform because of the higher activity of experienced participants. The study also provides empirical evidence supporting the effectiveness of timely communication and the speed of quality checks, both of which require project management resources. Finally, the study suggests that material rewards are less important for volunteer engagement than the intrinsic motivation of a point-based reward system.

Introduction

Crowdsourcing, as a form of online volunteering, has become increasingly popular in the cultural heritage sector, especially among public libraries, museums, archives and research institutions (Bonacchi et al., 2019). Crowdsourcing refers to initiatives in which an individual or organization (crowdsourcer) offers to a group of people the voluntary performance of a task through the internet (Estellés-Arolas & González-Ladrón-de-Guevara, 2012). Crowdsourcing allows nonprofits to involve a larger group of volunteers than would otherwise be possible with their often limited budgets (Johnson & Liew, 2020; Troll et al., 2019). The following fictional vignette illustrates the application of crowdsourcing in a nonprofit cultural heritage organization.

“A regional archive decided to set up a crowdsourcing project and asked Sarah to take the lead. The project was aimed at making the work and sources of the archive more visible and accessible to citizens by involving them in digitally indexing population registers from the 18th century. Sarah had not led a crowdsourcing project before and had no specific deadline. With advice from other archives and her experience in working with in-house volunteers, she managed to set up the project through an online platform. She announced the project on both traditional and social media to recruit sufficient participants, created a project manual and guidelines, and spent countless hours reviewing contributions and replying to volunteers’ questions. The project successfully finished within a surprisingly short period of time.”

This fictional vignette illustrates a successful nonprofit crowdsourcing project and refers to some instruments that are usually recommended in the literature to recruit volunteers and keep them engaged, such as using diverse channels to announce a project, communicating with volunteers, and supporting them with feedback (Crall et al., 2017; Einolf, 2018; Johnson & Liew, 2020; West & Pateman, 2016). However, most recommendations are rather general and do not indicate how effective the discussed instruments are for keeping volunteers active throughout a project. In fact, the majority of research focuses on understanding volunteers’ motivations and how these in turn influence participation over time (Cox et al., 2018; Einolf, 2018; Rotman et al., 2014). Understanding why people volunteer is not sufficient to know what the best means are to recruit volunteers and keep them engaged to achieve desired outcomes (Einolf, 2018); hence the call for more research about the effectiveness of volunteer management practices (Einolf, 2018; Sauermann & Franzoni, 2015; West & Pateman, 2016). The aim of this article is to identify the recruitment and engagement instruments used by project managers and examine their effectiveness on volunteer activity. To this end, we carry out a mixed-method multiple case study to answer the following research question:

We study multiple projects within the cultural heritage sector. In most projects, volunteers perform similar, fairly repetitive, and yet intellectually demanding (e.g., requiring paleographic skills) data processing tasks, such as indexing and transcribing handwritten documents. Although these tasks are typical for the cultural heritage sector, we believe our findings can be applicable to projects and platforms dealing with data processing by volunteers in other fields.

Our study contributes to the volunteering and crowdsourcing literature in two ways. First, we expand the knowledge about recruitment and engagement in online volunteering, by examining crowdsourcing projects initiated by nonprofit organizations (Cox et al., 2018). As the number of crowdsourcing projects and platforms increases, so does the need to understand how being part of a platform contributes to the success of online volunteering projects. We study projects from one specific crowdsourcing platform: VeleHanden.nl. VeleHanden specializes in crowdsourcing for the cultural heritage sector, with most projects coming from regional and municipal archives, and from university researchers seeking help in digitizing historical sources. Volunteers contribute to these projects by entering and processing data from scanned documents. The platform follows a one-format-for-all approach, using the same database structure and similar procedures to collect and process data. Although all projects on the platform use the same approach, project managers have substantial freedom to choose among a variety of instruments to recruit volunteers and keep them on board, allowing us to assess the effectiveness of different recruitment and engagement instruments on volunteers’ level of activity and hence projects’ progress.

Second, we combine qualitative and quantitative research methods: We use interviews to unravel the perceptions of project managers on what drives their projects’ success, and we use quantitative data on volunteers’ activity on the platform to assess the actual effectiveness of recruitment and engagement practices. This gives us a unique comparative perspective on what is believed to be an effective instrument (based on interviews) versus what really works in this context (based on more than 8 years of the platform’s entry data).

In the next pages, we first review the literature used to guide our empirical study. Given crowdsourcing’s broad applicability, from innovation to nonprofit humanitarian projects and scientific research (Nevo & Kotlarsky, 2020), and its proximity to phenomena such as volunteering and citizen science, we include relevant literature from the fields of volunteering, citizen science and crowdsourcing. We follow with a description of the mixed methods used to examine the specific case of crowdsourcing by cultural heritage institutions on the VeleHanden platform. We then report the findings of our analysis, and conclude the article with a discussion and recommendations for practice and future research.

Literature Review

The volunteering literature distinguishes between individual and organizational factors that influence volunteer retention (Faletehan et al., 2021). Individual factors that influence the individuals’ decision to volunteer include their personal characteristics, background, values, and motivations, as well as their skills and their particular circumstances, such as the time they have available for volunteering (West & Pateman, 2016). Among the individual factors, it is said that intrinsic motivation, the satisfaction with the work done and a strong identification with the organization affect the chance that individuals continue volunteering (Faletehan et al., 2021). Research on crowdsourcing and citizen science projects has also examined the role of motivations in sustained engagement, and concludes that motivations to volunteer are diverse and change over time, that they are mostly intrinsic in nature and influenced by personal interest in a topic (Cox et al., 2018; de Vreede et al., 2017; Rotman et al., 2014; West & Pateman, 2016). Although individuals’ motivations cannot be changed, project managers can design and apply recruiting and engagement instruments that appeal to these motivations (West et al., 2021). Recruiting and engagement instruments are organizational factors that can influence volunteers’ actions (Penner, 2002).

Organizational factors include organizational reputation, task-related factors and management practices (Faletehan et al., 2021). Although organizational reputation influences volunteers’ decision to join a project (Faletehan et al., 2021), we do not consider it an engagement instrument but rather a characteristic of the crowdsourcer as it is built over time and not easily changed for a specific project. The nature of the task is crucial for motivating and engaging participants (Troll et al., 2019), but once a project has started, a task is not likely to be changed as it is a defining feature of a crowdsourcing project. For instance, the classification of galaxies (Cox et al., 2018) or the transcription of 17th-century premarriage acts (De Moor et al., 2019) are tasks that define their corresponding volunteering initiatives. Hence, in this study, we only concentrate on management practices such as training and feedback, incentives or rewards, recognition and appreciation, and regular communication to keep volunteers engaged (Faletehan et al., 2021; West & Pateman, 2016), which we refer to as engagement instruments.

Current research has focused on the overall management of crowdsourcing projects, including the decision to crowdsource, task design, efficient workflows, participant recruiting, incentive systems and feedback (Nevo & Kotlarsky, 2020). However, recommendations for managing volunteers are often general and applicable to various types of projects (Crall et al., 2017; Johnson & Liew, 2020; West & Pateman, 2016). It remains unclear what instruments are most effective for actualizing such recommendations. This study focuses on two management practices of nonprofit crowdsourcing, recruiting and engagement instruments, and examines their effectiveness on volunteer activity.

Recruiting Volunteers

Volunteer recruitment involves the use of different communication channels, such as word-of-mouth, press releases, social media, or even third-party organizations (West & Pateman, 2016). The latter may include platform services. It is argued that crowdsourcing projects might benefit from joining a platform with an existing pool of volunteers (Sauermann & Franzoni, 2015). Crowdsourcing platforms can address potential negative experiences for volunteers with registering and accessing a project, allowing crowdsourcers to focus on the content and tasks (Troll et al., 2019). However, to our knowledge, the effectiveness of recruiting through a platform has not yet been studied.

Engaging Volunteers

The literature shows that a small number of people make most contributions in crowdsourcing projects (De Moor et al., 2019; Feng et al., 2018; Sauermann & Franzoni, 2015). Hence, project managers need to both recruit and keep volunteers engaged. This is especially important for projects that require training to perform tasks with sufficient quality, as retaining trained volunteers can save costs. In addition, engaged volunteers can act as project ambassadors and attract new participants over time.

Engagement is a multidimensional phenomenon that involves intellectual concentration, positive feelings and effort (de Vreede et al., 2019; Troll et al., 2019) and is closely related to motivation (Martin et al., 2017). Motivation is a psychological factor that refers to the will or reason why people do what they do (Martin et al., 2017). Engagement is an experiential factor or state of being that emerges while performing an activity (de Vreede et al., 2019). Recent research indicates a “mutually reinforcing” relationship between motivation and engagement, where motivation drives engagement, and engagement reinforces prior motivations (Martin et al., 2017). This may explain why the motivations to start and continue participating in crowdsourcing can differ (Cox et al., 2018; Rotman et al., 2014). That is, engagement arises as a positive affective-cognitive state of mind when volunteers evaluate their crowdsourcing experience, and it is fundamental for their sustained participation in current or future initiatives (Troll et al., 2019). Therefore, it is vital to understand the effectiveness of engagement instruments in crowdsourcing projects.

Support chats and discussion forums are often used in crowdsourcing platforms as means to engage participants (Troll et al., 2019). However, for these platform features to be effective, content needs to be added and queries answered in a timely manner. The response time in such chats or forums has been examined in neither the volunteering nor the crowdsourcing literature, and yet, it seems that in online communities, the frequency of messages or the speed at which questions are answered indicates the level of participant engagement (Wise et al., 2006).

Rewards and recognition are also means to stimulate individuals with extrinsic motivations (de Vreede et al., 2017). In nonprofit crowdsourcing and other types of volunteering, participants do not receive monetary payment for their contributions, but they do appreciate expressions of gratitude and recognition (Phillips & Phillips, 2010). However, as volunteers differ in their motives for volunteering, they also vary in their preferences for rewards (Phillips & Phillips, 2010). Although rewards and recognition are considered as means to engage and retain volunteers (Troll et al., 2019; West & Pateman, 2016), we are not aware of any research that investigates their effectiveness.

Research Methods

To study the effectiveness of volunteer recruitment and engagement instruments, we examine multiple crowdsourcing projects. To avoid differences between projects due to the technological possibilities offered by various crowdsourcing platforms, we selected one single platform: VeleHanden.nl. This platform was selected both because of its size (8,768 active volunteers in February 2020 and 102 public projects since the start of the platform) and its substantial period of existence (10 years). VeleHanden, which translates as “many hands,” was launched in 2011 by Picturae, a Dutch company that digitizes and helps to preserve, manage and enrich cultural heritage collections (Picturae, n.d.). The types of projects and tasks on the platform include the indexing and transcription of scanned notarial deeds and population registers from the 17th to the early 20th century, as well as the classification and description of digitized photographs and the georeferencing of scanned maps from the 16th up to the early 20th century. Besides being a platform where volunteers can enter and process data, VeleHanden also offers several instruments, such as newsletters, discussion forums, tools to allow reviewing of multiple data entries by volunteers, and the possibility to issue reward points. These instruments are available to all projects, but not all project managers use them, nor do they use them in the same way. This variation in the use of recruitment and engagement instruments allowed us to examine their effectiveness. For this purpose, we used a sequential mixed-methods approach, starting with qualitative research followed by quantitative research to complement and verify the findings from the qualitative phase (Saunders et al., 2019).

Qualitative Research

We started the research by interviewing project managers from archive organizations, to identify the instruments they used from the crowdsourcing platform. Picturae provided us with a list of VeleHanden projects, basic details of the type of data they collected, and summary statistics about the evolution of the projects. We selected only completed projects for the interviews to gather as much information and experience as possible about the engagement instruments used over time. Completed projects were those classified as having reached their pre-set targets in terms of data entry and subsequently closed by VeleHanden (in agreement with the organizations) or those classified as active but with a progress status of 100% processed objects but not yet officially closed by VeleHanden. This resulted in a list of 62 completed projects run by 35 unique organizations. VeleHanden contacted project managers from these organizations to inform them about our research, after which we sent an email to 76 project managers inviting them for an interview. We received a reaction from 25 organizations, and we were able to plan 20 interviews in the period November 2019 to January 2020. The project managers received a consent form via email and a printed version was signed by both parties on the day of the interview. The interviews took place in a location chosen by the interviewees and had an average duration of 1 hour. We carried out semi-structured interviews organized in six themes based on our learnings from the literature review. First, we asked general information covering the start of the project, its objective, and current status (a). We then discussed the role of volunteers including their tasks and responsibilities (b). We asked how volunteers were recruited (c), and what project managers did to engage participants (d), what the role of the platform was in that respect, and how they felt about the usefulness of the engagement actions they took. We inquired about the reward system and what project managers thought about it (e). The last theme was a general evaluation of the project (f), where project managers reflected on their projects’ progress and outcomes, their lessons learned and recommendations for future projects.

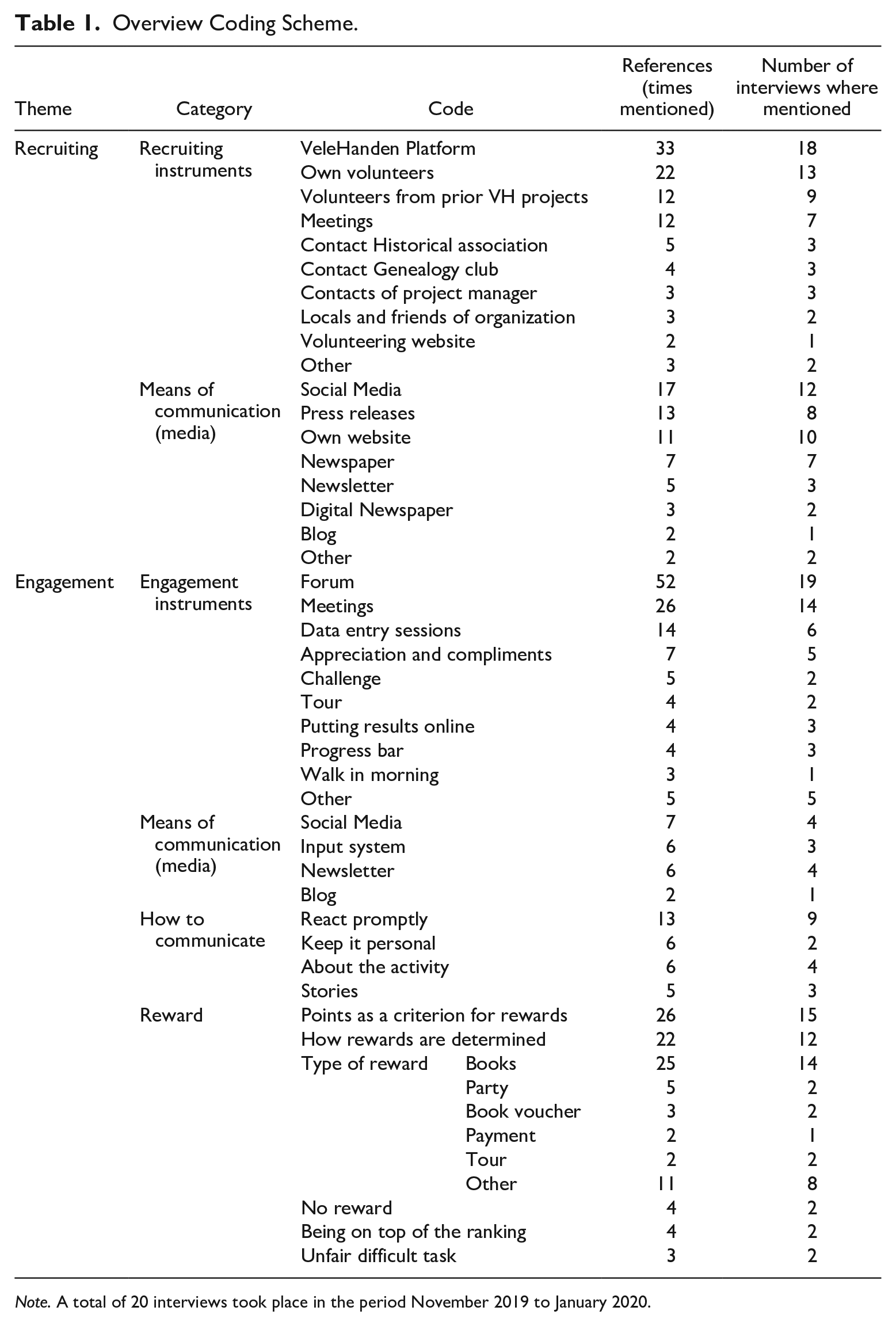

The audio recordings were transcribed and then analyzed using NVivo. The interview guide themes were the basis to create a preliminary coding scheme. For the reliability of the analysis, two of the authors and a research assistant used the coding scheme to independently code a set of three interviews: two interviews were each coded by two of us and one by all three. We discussed the codes to reach consensus and revised the coding scheme accordingly (Saldaña, 2016). One of us used the scheme to code all other interviews and fine-tuned it in a few iterations until no new codes emerged. The results of the coding process (Table 1) were used to identify and describe various recruitment and engagement instruments, to understand why they were used and how effective they were according to the project managers. We concluded the qualitative analysis with propositions about the perceived effectiveness of the most commonly used instruments. To verify the propositions, we analyzed the log data generated by platform use.

Table 1. Overview Coding Scheme.

Note. A total of 20 interviews took place in the period November 2019 to January 2020.

Quantitative Research

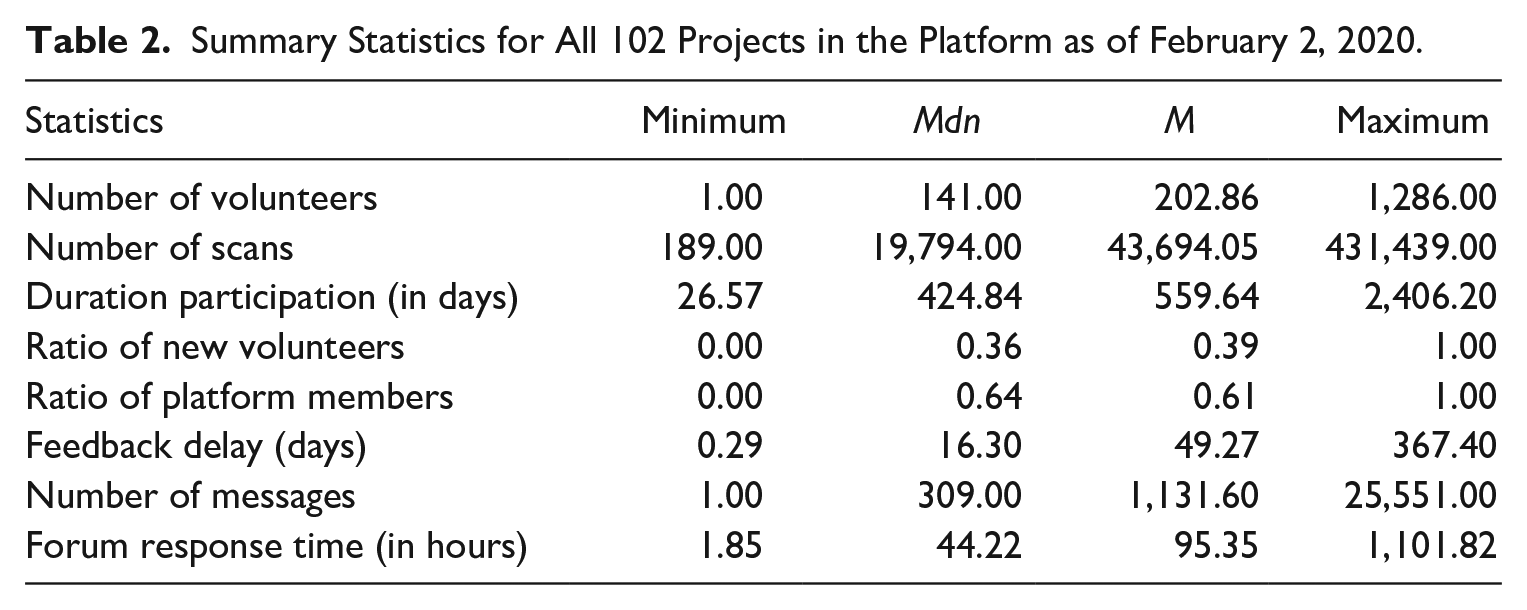

Besides the qualitative information of the interviews, we use a rich dataset from the VeleHanden platform with data from June 20, 2011 to February 2, 2020. Across 102 projects and 8,768 active volunteers, the dataset contains volunteers’ activity on the platform, including their entries, checks, and all metadata surrounding these two actions such as timestamps, user IDs, and comments. All data are at the level of the individual volunteer entry and are registered as separate observations. We also have metadata on the forum activity level. In the quantitative analysis, we do not use the content of volunteers’ entries (i.e., transcriptions of scans) nor the content of their forum messages. Finally, we have project-level information such as project’s name, description, start and end dates, and data on the reward system such as the number of points given to volunteers for their contributions and the points exchanged for a tangible reward. Table 2 includes a summary of the statistics for the projects in the platform used in this study.

Table 2. Summary Statistics for All 102 Projects in the Platform as of February 2, 2020.

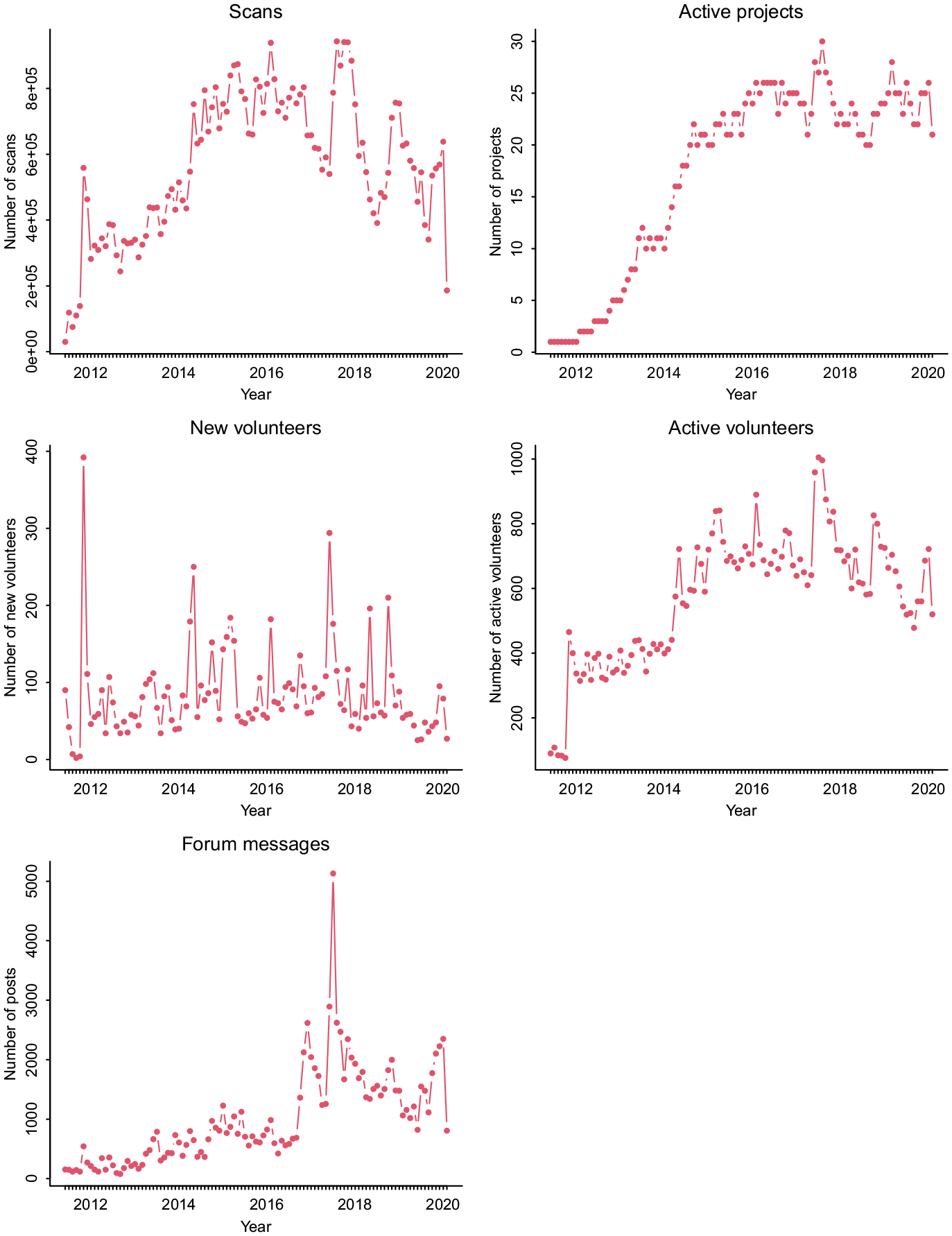

Figure 1 shows the evolution of the VeleHanden platform from 2011 to 2020. During this period, the platform grew substantially in number of projects. There was a stable inflow of new volunteers over the entire period, with some peaks observed. The first peak occurred in November 2011 after the official launch of the VeleHanden platform (November 3, 2011). Other peaks were usually due to the start of new, high-profile projects, such as WieWasWie population registers (May 2014), Surinam Slave Registers (June-July 2017), Captions for Cas [Oorthuys photographs] (May 2018), and Holland Amerika Lijn passenger lists (October 2018).

Figure 1. Key Indicators of Activity on the VeleHanden.nl Platform over Time (2011–2020).

Findings

The objective of this study is to understand how effective recruiting and engagement instruments are in nonprofit crowdsourcing projects. Hence, in the following pages, we focus on the instruments mentioned during the interviews that can be quantitatively traced throughout all projects.

Recruitment Instruments

The Platform

The analysis of the interviews reveals three approaches for recruiting volunteers. One approach entails recruiting among the organization’s existing volunteers (mentioned 22 times). Since archives have a long tradition of working with volunteers, project managers invite their volunteers to contribute to the crowdsourcing project. According to project managers, many volunteers may not join a crowdsourcing project because they prefer to go to the archive, to see the original manuscripts, or to enjoy the social interaction with other volunteers and the staff. A project manager explained: “besides data entry, volunteers come also here of course to enjoy each other’s company and sometimes chat, there is then less speed but we are okay with it” (17.13). Conversely, others feel attracted to the crowdsourcing project because they can contribute from home and outside opening hours, or because a trial fires them with enthusiasm about a certain topic or task. One project manager described it as follows: “I think that from the 85 about 10 also worked on the VeleHanden project. I mean, that was not a must, but people also liked to do that. Ehm, and that was also something that they could do in the evening. Look, during the day you have to come here. And you can do the things here that you can do here, often by using the original, which you cannot take home. Thus, they also liked to do something in the evening. And I think that they were about 10 or so, yes” (15.10).

Another recruiting approach involves using the organization’s website (mentioned 11 times) and social media tools (mentioned 17 times), and even traditional media, to announce a crowdsourcing project and attract participants. One project manager said, “. . . and if I recall correctly, there was a call via Twitter and through our Facebook channel, and that was about it” (05.20). Another project manager recalled, “We have of course posted it in the newspapers and magazines here in our surrounding area” (06.22).

However, the most mentioned approach is recruiting from the pool of volunteers who are already active on the crowdsourcing platform (mentioned 33 times). Project managers get access to them by adding their project to the VeleHanden platform and by announcing it in the platform’s monthly newsletter. It seems that project managers are convinced that joining the platform is a good way to recruit lots of participants. One project manager said, “The nicest thing is of course that VeleHanden has its own community who can join new projects. Thus, we gladly make use of it” (02.12). And yet another stated, “On VeleHanden there is of course a whole crowd already active, who go through all the sources and see what kind of interesting things there are” (13.09).

Since we do not know how many participants were already physically going to the archives, nor how many joined after reading the project’s announcement on social media, we focus here only on the effectiveness of recruiting volunteers already active on the crowdsourcing platform. From the interviews, we derive the following proposition:

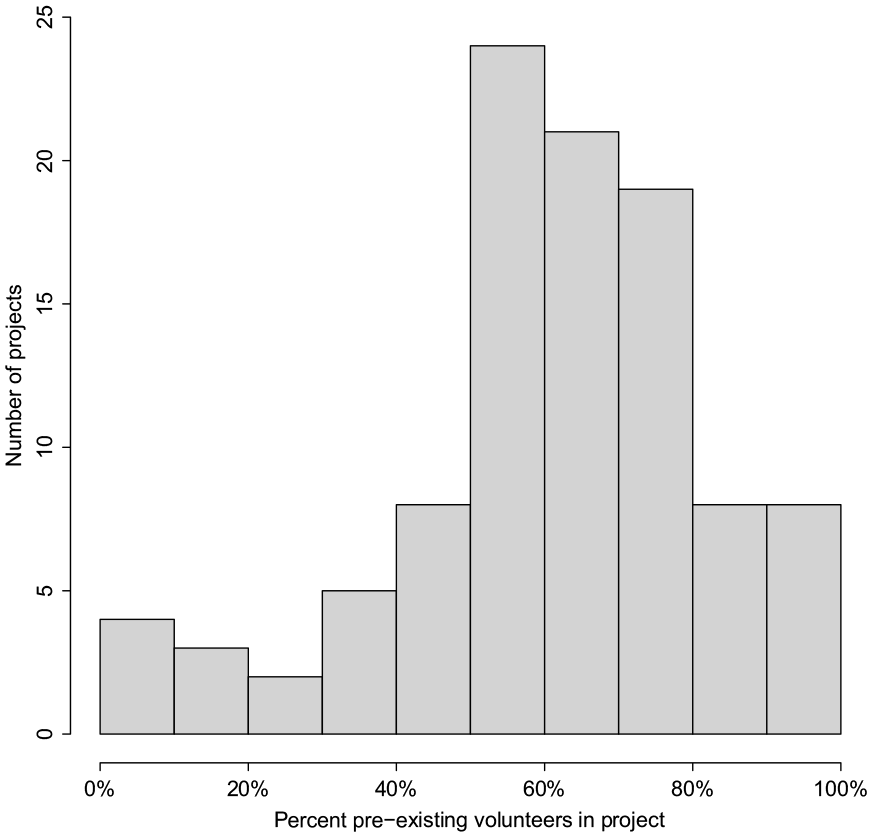

To investigate how important the platform’s existing pool of volunteers is, we first distinguish between new and pre-existing volunteers. A new volunteer is a volunteer who makes his or her first contribution (entry) on the platform. A pre-existing volunteer is one who has made any contribution on the platform prior to contributing to a given project. We identify new volunteers per project by finding new IDs in the date-sorted dataset of entries. The distribution of the share of pre-existing volunteers per project is shown in Figure 2.

Figure 2. Distribution of the Percentage of Pre-Existing Volunteers by Project.

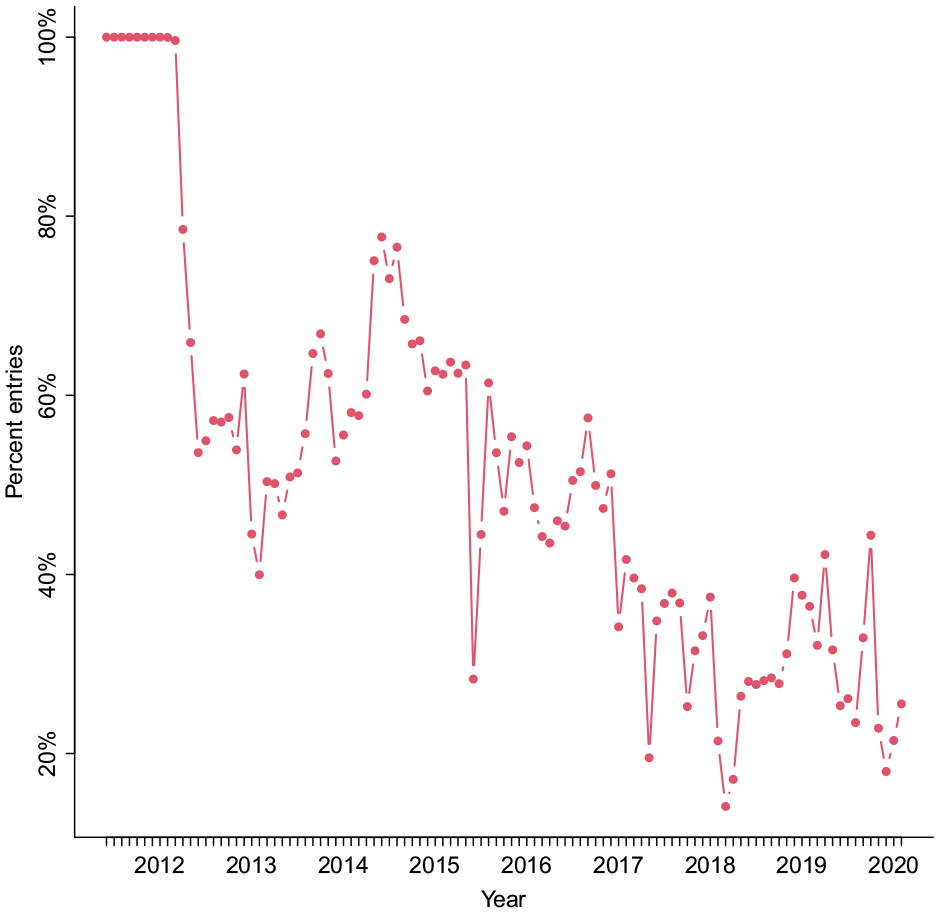

Using the above definitions, we calculate the share of new and pre-existing volunteers for each project based on the entry data, and use these project-level shares to calculate an overall volunteer-weighted average, excluding projects that started before 2012 when the platform was very young. We found that pre-existing volunteers accounted for an average of 60% of the projects’ volunteers. Over time, the share of new volunteers decreases, and so does the average share of projects’ activity by new volunteers on the platform (as shown in Figure 3).
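The classification and the volunteer-weighted average described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the entry log, its field order, and the function names are hypothetical and do not reflect the platform's actual data structure, and the exclusion of projects that started before 2012 is left out for brevity.

```python
from collections import defaultdict

# Hypothetical entry log: (ISO date, volunteer ID, project ID).
# ISO dates sort chronologically when sorted as strings.
entries = [
    ("2012-01-05", "v1", "A"),
    ("2012-01-06", "v2", "A"),
    ("2012-03-01", "v1", "B"),
    ("2012-03-02", "v3", "B"),
    ("2012-03-03", "v2", "B"),
    ("2012-03-04", "v1", "B"),
]


def classify_volunteers(entries):
    """Label each volunteer per project as 'new' or 'pre-existing'.

    A volunteer is pre-existing in a project if he or she made any entry
    on the platform before the first entry in that project.
    """
    seen_on_platform = set()
    status = defaultdict(dict)
    for _, volunteer, project in sorted(entries):
        if volunteer not in status[project]:
            status[project][volunteer] = (
                "pre-existing" if volunteer in seen_on_platform else "new"
            )
        seen_on_platform.add(volunteer)
    return dict(status)


def pre_existing_share(status):
    """Share of pre-existing volunteers for each project."""
    return {
        project: sum(s == "pre-existing" for s in vols.values()) / len(vols)
        for project, vols in status.items()
    }


def volunteer_weighted_average(status):
    """Average of project-level shares, weighted by volunteers per project."""
    shares = pre_existing_share(status)
    total = sum(len(vols) for vols in status.values())
    return sum(shares[p] * len(vols) for p, vols in status.items()) / total


status = classify_volunteers(entries)
print(pre_existing_share(status))
print(volunteer_weighted_average(status))
```

In this toy log, project A has no pre-existing volunteers, project B has two out of three, and the volunteer-weighted average share is 0.4. Weighting by the number of volunteers prevents small projects from dominating the overall average.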

Figure 3. Average Share of Projects’ Entries by New Volunteers Over Time, 2012–2020.

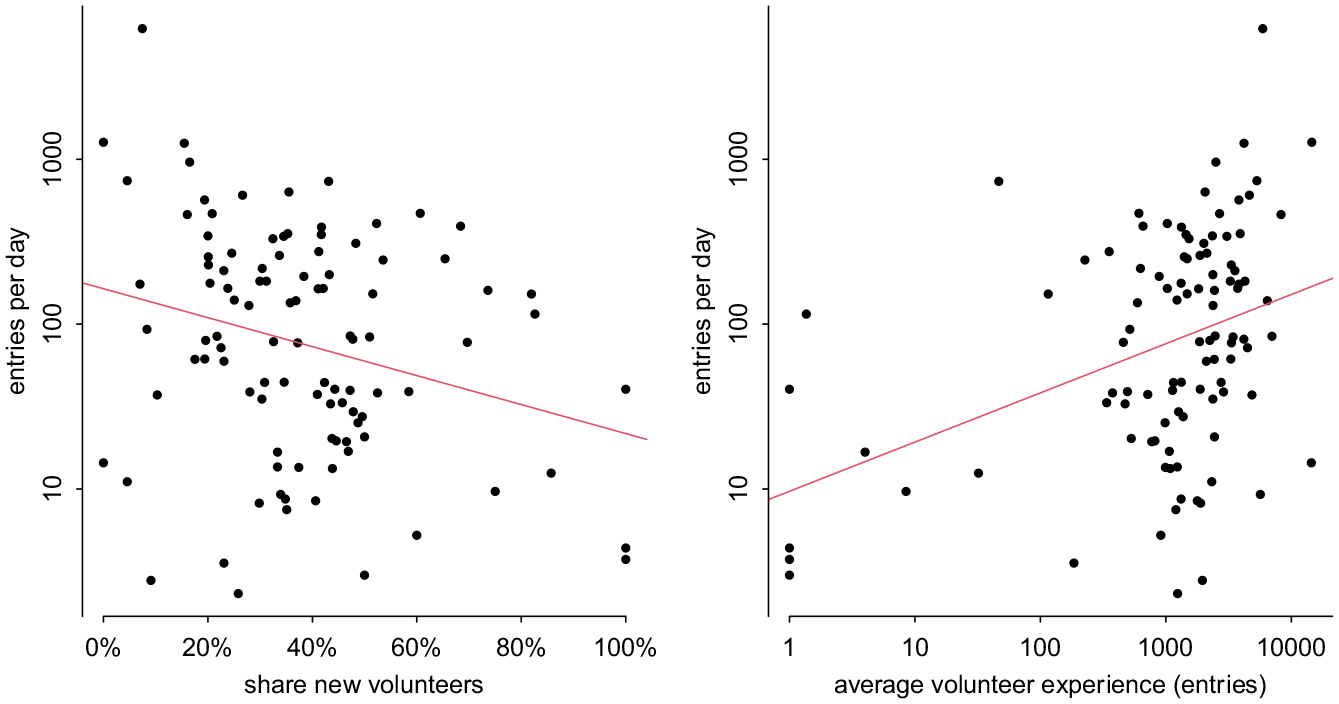

Next, we assess the effectiveness of recruiting from the pool of existing platform volunteers. Using the entry data, we identify all the entries in a project that were made by pre-existing volunteers as defined above. We observe that on average, projects got 62% of their entries from pre-existing volunteers. However, there is considerable variation between projects in this regard. Projects with a higher share of new volunteers have lower levels of activity, with a one percentage point increase in the share of new volunteers being associated with a 2% decline in scans per day (Figure 4, left; Table B1). Projects that attracted volunteers with higher average experience on the platform (measured as the number of scans entered when starting in a new project) had higher daily activity in the project, with a 1% increase in pre-existing/experienced volunteers associated with a 0.3% increase in daily activity (Figure 4, right; Table B1). Together, this suggests that many new volunteers were not as productive as the platform veterans, possibly because they were still learning. We conclude that hosting a project on a platform with similar projects and thus with a pre-existing pool of “transferable” volunteers is beneficial for the project’s productivity.

Figure 4. Volunteer Profile of Projects and Their Daily Activity.

Previous Projects

Archival organizations that had previously organized crowdsourcing projects at VeleHanden could build upon volunteers’ positive experiences with their prior projects to recruit for new projects (mentioned 12 times). Project managers invite volunteers from their own prior crowdsourcing projects to join a new project, especially if these volunteers have been very active and/or made high-quality contributions. Their task-specific experience makes them a potentially very valuable subset of pre-existing volunteers. One project manager explained, “we have just asked the volunteers from earlier days, from the old [project], just asked them for that. Some of them joined and there were a few new people who joined afterwards” (02.12).

It is, therefore, likely that organizations that used the platform for prior crowdsourcing projects try to recruit participants from their old projects (Proposition 2). But if volunteers are very interested in specific types of archival sources (such as maps) or particular types of tasks (such as georeferencing), they could switch to similar projects offered on the platform by other organizations. Given the considerable similarity of projects in this platform, we focus on Proposition 2 and recommend that future research examines volunteers’ switch between projects in platforms with more diverse types of sources and tasks.

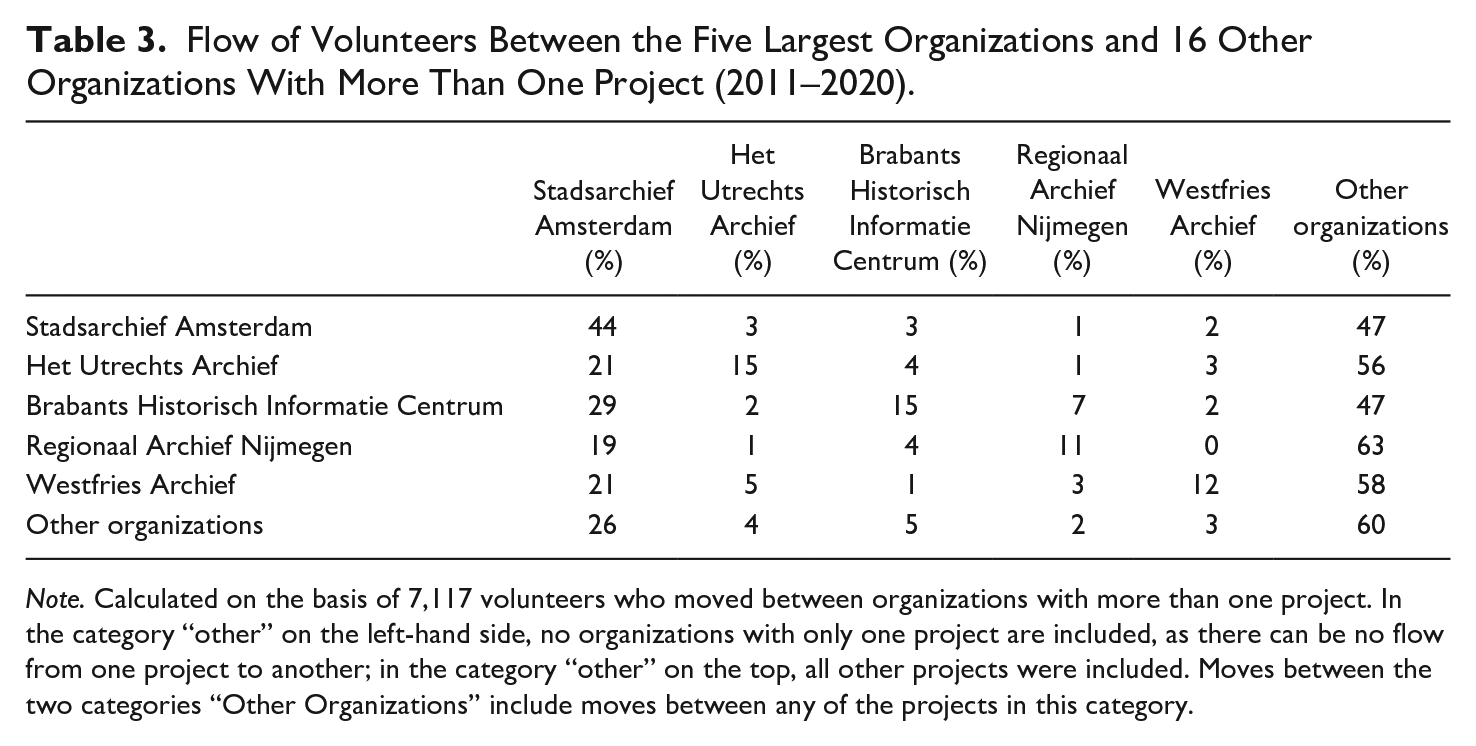

To determine whether participants return to projects from the same organization or not, we calculate the flows of volunteers between projects by looking at the five organizations with most projects (Table 3). Organizations with only one project are excluded as a starting point for volunteers since they cannot return to a project by the same organization. Table 3 shows the mobility of volunteers between organizations, grouping all organizations not in the top 5 under an “other” category. The table shows that volunteers from Stadsarchief Amsterdam were fairly loyal while other volunteers were more likely to switch to other organizations. Organizations with more than one project retained on average 15% of their volunteers from one project to the next, while organizations with more projects (such as Amsterdam, Utrecht, and Brabant Archives) had higher volunteer retention. About 44% of volunteers from Stadsarchief Amsterdam remain active on projects run by that same organization. This could be because they run more large projects for volunteers to return to. We turn to a more detailed analysis to investigate this.

Table 3. Flow of Volunteers Between the Five Largest Organizations and 16 Other Organizations With More Than One Project (2011–2020).

Note. Calculated on the basis of 7,117 volunteers who moved between organizations with more than one project. In the category “other” on the left-hand side, no organizations with only one project are included, as there can be no flow from one project to another; in the category “other” on the top, all other projects were included. Moves between the two categories “Other Organizations” include moves between any of the projects in this category.

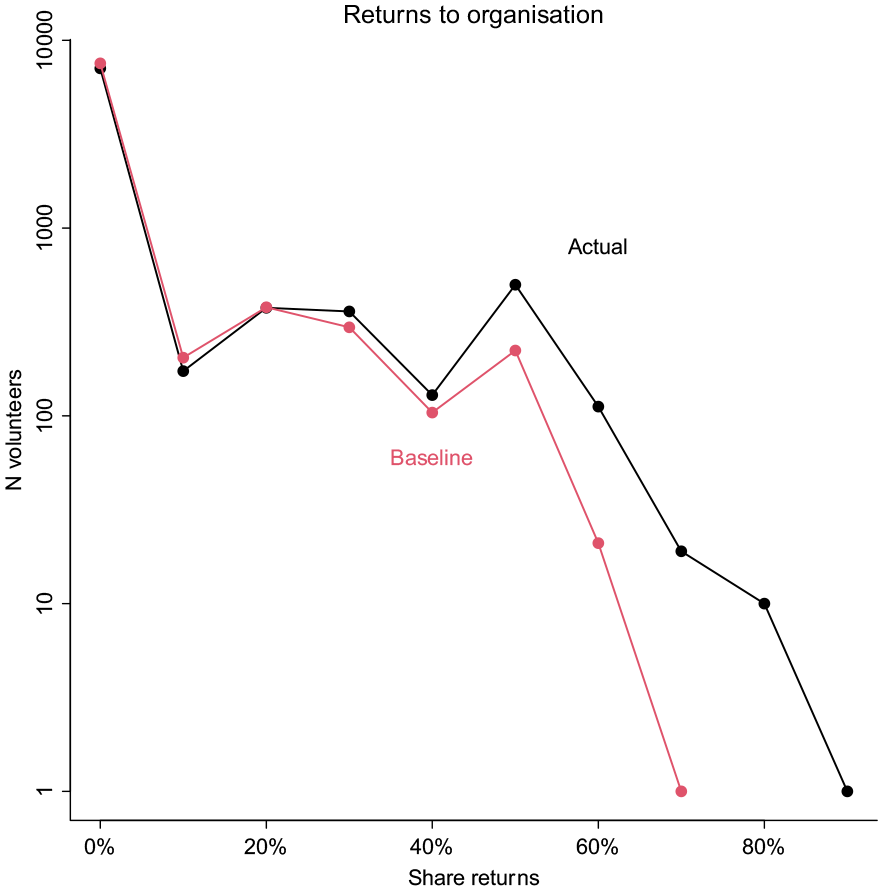

With a skewed distribution of volunteers over organizations and types of projects, one would expect a certain number of volunteers to return by chance alone. After all, some organizations had many (large) projects, and a volunteer that participated in one of these projects could be expected to return to the same organization even if the volunteer selected projects at random. To examine whether our findings are an artifact of such random patterns, we calculate the return rates expected from the distribution of random volunteer-project-organization interactions alone (see Appendix A). We refer to this natural return rate as the baseline and compare it to the actual rate of return. Figure 5 shows the distribution, across volunteers, of the share of each volunteer’s projects that were return projects.

Figure 5. Distribution of Share of Volunteers’ Actual Returns Compared with Baseline Share Projects.

Comparing the actual versus the baseline returns, we see that more volunteers joined projects by the same organization than expected by chance. On average, 18% of volunteers of new projects returned to a project of the same organization. If volunteers had moved at random, the share of returning volunteers would be 11%. From the above, we understand that recruiting volunteers from a prior project seems to be effective only for organizations with the largest number of projects and volunteers.
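To make the idea of a chance baseline concrete, the sketch below computes an expected return rate under one simple random-choice assumption. It is not the procedure of Appendix A, which is not reproduced here. The assumption, the function name, and the toy numbers are all hypothetical: each volunteer is assumed to pick the next project uniformly at random among all other projects on the platform.

```python
def baseline_return_rate(projects_per_org, volunteers_per_org):
    """Expected share of volunteers whose next project is at the same organization.

    Assumption (illustrative only): the next project is drawn uniformly at
    random from all projects except the one the volunteer just worked on.
    """
    total_projects = sum(projects_per_org.values())
    total_volunteers = sum(volunteers_per_org.values())
    expected_returns = 0.0
    for org, n_projects in projects_per_org.items():
        # Chance that a random other project belongs to the same organization.
        p_same = (n_projects - 1) / (total_projects - 1)
        expected_returns += volunteers_per_org.get(org, 0) * p_same
    return expected_returns / total_volunteers


# Toy platform: organization X runs three projects, Y and Z one each.
projects_per_org = {"X": 3, "Y": 1, "Z": 1}
volunteers_per_org = {"X": 60, "Y": 20, "Z": 20}

print(baseline_return_rate(projects_per_org, volunteers_per_org))
```

In the toy example the baseline is 0.3: volunteers of organization X return by chance half of the time, while volunteers of single-project organizations cannot return at all. An observed return rate above such a baseline suggests that volunteers favor the same organization beyond what the skewed distribution of projects alone would produce.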

Engagement Instruments

Support and Communication

Each project in the platform has a dedicated forum, which is perceived by project leaders as the most important instrument to keep volunteers engaged (mentioned 52 times). The forum includes sections for different types of messages, such as questions, announcements, remarks, and advice. It is regularly used to share information about tasks, upcoming events, interesting findings from the data (i.e., short stories), but is mainly used to answer participants’ questions and deal with issues brought up by them. Most project managers kept track of messages posted on the forum, as one of them explained: “Yes, they were very active there. Consistently, I kept an eye on it too. Because you know that’s where people go to ask their questions or to tell about nice things they come across in the data. And you know, just you see that also in the other successful project, that if you manage it well, and you have good interaction, then you also have satisfied participants. Thus, we tried to do it in that way” (02.12).

Project managers emphasized the importance of prompt reactions to participants’ queries in the forum (mentioned 13 times). For instance, two project managers discussed the following: “a volunteer that has a question, he stops. He raises his hand: I have a question! And the faster you can help him, the faster he will go back to work” and a colleague continued: “yes and it is also annoying if you have a question and it is not answered, or after a week. Yes, that. . . it is also demotivating” (11.29). The reasoning is that the faster a question is answered, the sooner the volunteer will return to or continue with the task. Project managers also understand that it can be frustrating when a question is posed and the answer takes a long time to arrive. Therefore, we propose the following:

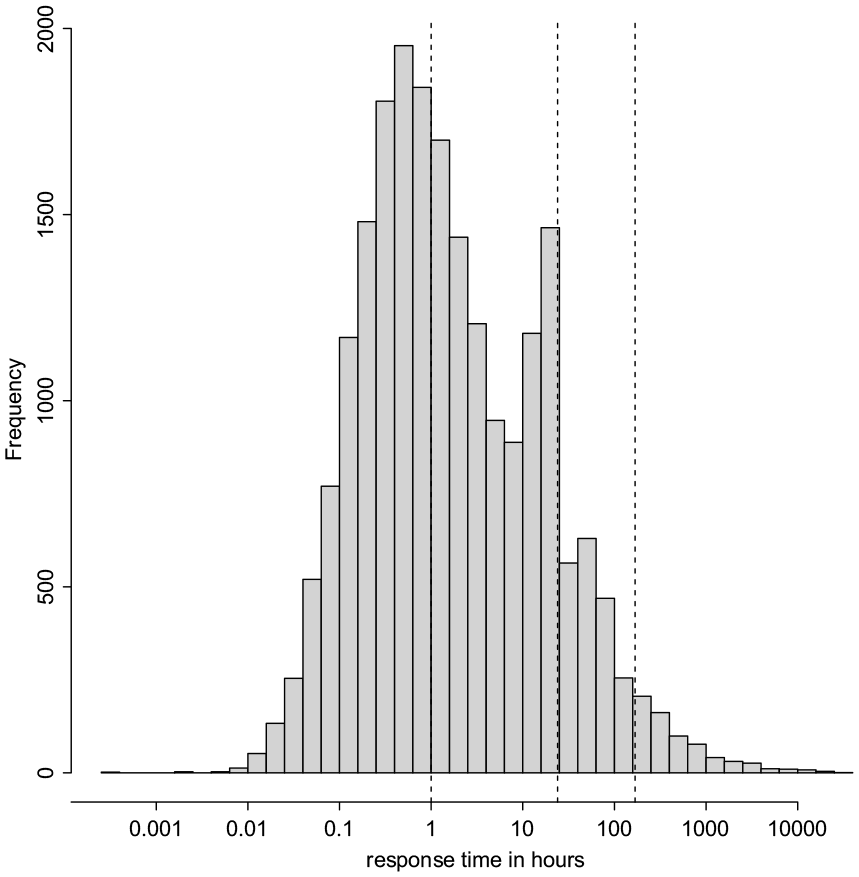

To measure responsiveness, we use the time difference between the opening post of a forum thread and the first subsequent post in that thread, which we assume to be a response to the opening post. First, we look at the distribution of response times, which varied widely (Figure 6). Nearly half of the messages received an answer within an hour, 13% waited more than a day, and 3% did not receive a response within a week.
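The responsiveness measure described above can be sketched in a few lines. This is a minimal illustration rather than the code used in the study: it assumes that forum posts are available as (thread id, timestamp) pairs, treats the second post in a thread as the response regardless of who wrote it, and counts unanswered threads as an infinite wait. The function names and the example posts are hypothetical.

```python
import math
from datetime import datetime


def first_response_hours(posts):
    """Map each thread to the hours between its opening post and the next
    post in that thread; unanswered threads get math.inf."""
    by_thread = {}
    for thread_id, stamp in posts:
        by_thread.setdefault(thread_id, []).append(stamp)
    hours = {}
    for thread_id, stamps in by_thread.items():
        stamps.sort()
        if len(stamps) < 2:
            hours[thread_id] = math.inf
        else:
            hours[thread_id] = (stamps[1] - stamps[0]).total_seconds() / 3600
    return hours


def share(hours, condition):
    """Share of threads whose response time satisfies the condition."""
    values = list(hours.values())
    return sum(1 for h in values if condition(h)) / len(values)


# Hypothetical posts: one quick answer, one slow answer, one open question.
posts = [
    ("t1", datetime(2020, 1, 1, 10, 0)),
    ("t1", datetime(2020, 1, 1, 10, 30)),
    ("t2", datetime(2020, 1, 1, 9, 0)),
    ("t2", datetime(2020, 1, 3, 9, 0)),
    ("t3", datetime(2020, 1, 2, 8, 0)),
]

hours = first_response_hours(posts)
within_hour = share(hours, lambda h: h <= 1)
over_day = share(hours, lambda h: h > 24)
over_week = share(hours, lambda h: h > 24 * 7)
print(within_hour, over_day, over_week)
```

Because the sketch does not check the author of the second post, a topic starter who adds a follow-up remark would be counted as having received a response, which mirrors the simplifying assumption stated in the text.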

Figure 6. Distribution of Response Times in Hours (Semilogarithmic Scale).

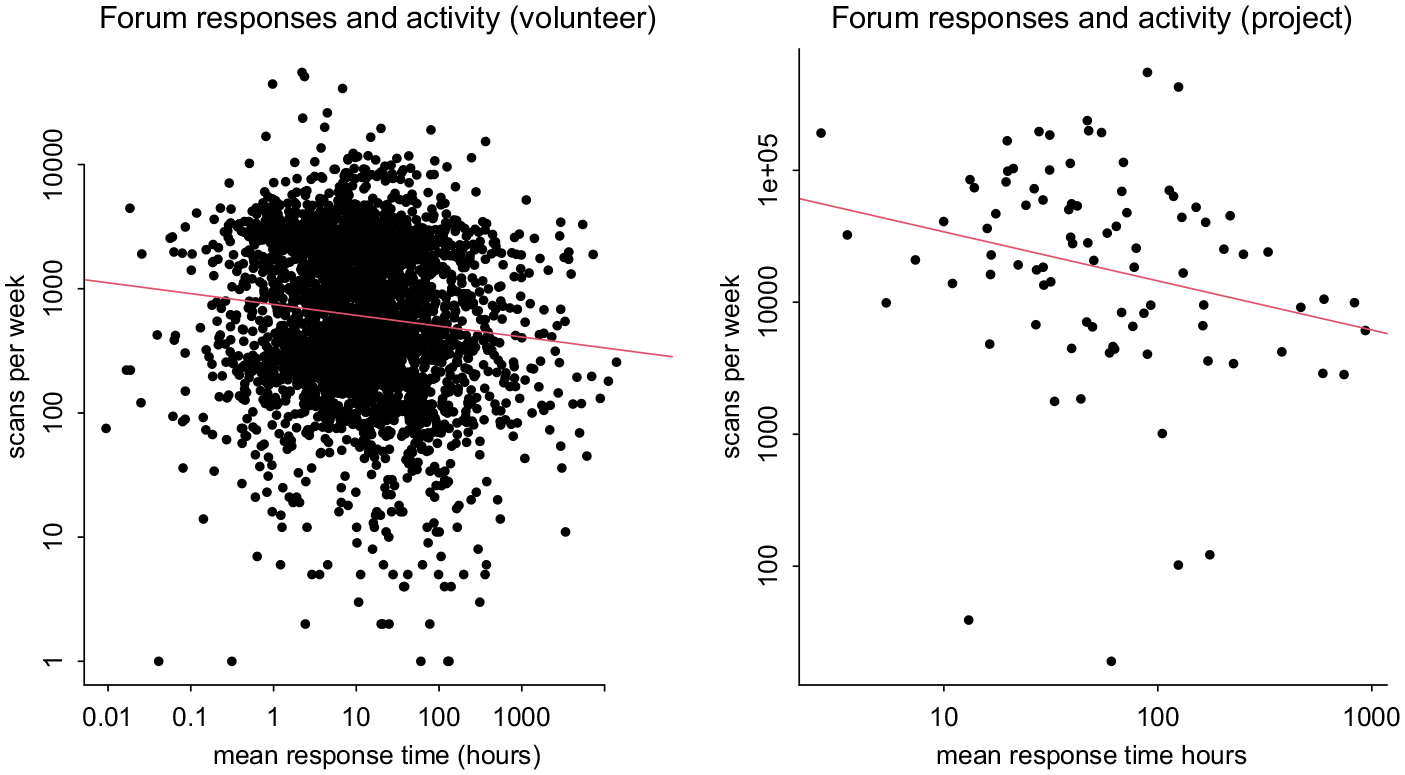

Next, we examine the correlation between forum responsiveness and entry activity. We find a negative correlation between forum response times and the number of entries at both the project and weekly levels (Figure 7). A bivariate log-log regression of scans per week on average weekly response time shows that a 1% increase in forum response time predicts a 0.1% drop in activity. At the project level the relationship is stronger, with a 1% increase in project-average response time associated with a 0.4% drop in project-average weekly activity (Appendix Table B2). There are two mechanisms that could explain this pattern. One is that a faster response time on the forum leads to more activity, because volunteers are no longer stuck waiting for feedback or because the efforts of project managers motivate them to reciprocate by continuing their volunteering. Alternatively, it could be that more active projects also have more active forums, for example, because there are more volunteers or there is more to talk about, and this in turn motivates staff and volunteers to respond to queries faster. Because we cannot intervene to change forum activity in an experimental fashion, we cannot distinguish between these two possibilities on an empirical basis. Hence, we can only conclude that there is a negative relationship between forum response time and the level of volunteer activity.
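To make the interpretation of the log-log coefficients concrete, the following sketch fits a bivariate regression of log activity on log response time by ordinary least squares. It is an illustration only: the data are synthetic and constructed so that the slope equals −0.1, and the function and variable names are assumptions rather than those of the actual analysis.

```python
import math


def loglog_fit(x, y):
    """Ordinary least squares of log(y) on log(x).
    Returns (slope, intercept); the slope is the elasticity of y to x."""
    log_x = [math.log(v) for v in x]
    log_y = [math.log(v) for v in y]
    mean_x = sum(log_x) / len(log_x)
    mean_y = sum(log_y) / len(log_y)
    s_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(log_x, log_y))
    s_xx = sum((a - mean_x) ** 2 for a in log_x)
    slope = s_xy / s_xx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Synthetic example (not study data): weekly scans fall slowly as the
# average forum response time in hours rises.
response_hours = [0.5, 1, 2, 4, 8, 24, 72]
scans_per_week = [500 * h ** -0.1 for h in response_hours]

slope, intercept = loglog_fit(response_hours, scans_per_week)

# Predicted percentage change in activity for a 1% longer response time.
pct_change = (1.01 ** slope - 1) * 100
print(f"elasticity: {slope:.3f}, effect of +1% response time: {pct_change:.3f}%")
```

For small changes the slope can be read directly as a percentage effect, which is why a coefficient of −0.1 corresponds to roughly a 0.1% drop in activity for a 1% increase in response time. The sketch says nothing about the direction of causality discussed above.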

Figure 7. Volunteer Activity and Response Times in Forum (Semilogarithmic Scale).

Reward System

The platform has a built-in reward system based on points that participants can gain with their contributions (mentioned 26 times). Each project can set up a system to allow volunteers to exchange points for a physical reward such as books or scanning credits at the archives, but not all project managers use it. As soon as a volunteer enters data, he or she receives points. Each scanned document is circulated twice in the system, so that in total two different volunteers perform the same task. Once two volunteers have entered data for the same scan, a third person (either an appointed experienced volunteer or a project staff member), whom we call “controller,” compares the entries and decides what the final version should be. This task is facilitated by the technology of the platform, which highlights the differences between entries. Once entries have been checked and a decision has been made, each of the volunteers receives points again.
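The double-entry check can be pictured as a field-by-field comparison of two independent entries for the same scan. The sketch below is a hypothetical illustration of that idea and does not describe the platform’s actual implementation; the field names and the dictionary representation of an entry are assumptions.

```python
def differing_fields(entry_a, entry_b):
    """Return the fields on which two entries for the same scan disagree,
    mapped to the pair of competing values a controller would review."""
    fields = sorted(set(entry_a) | set(entry_b))
    return {
        field: (entry_a.get(field), entry_b.get(field))
        for field in fields
        if entry_a.get(field) != entry_b.get(field)
    }


# Hypothetical entries by two volunteers for the same scanned record.
first_entry = {"surname": "Kok", "given_name": "Jan", "year": "1781"}
second_entry = {"surname": "Cok", "given_name": "Jan", "year": "1781"}

to_review = differing_fields(first_entry, second_entry)
print(to_review)
```

Only the fields returned by such a comparison need a decision by the controller, which is what makes a check of two full entries manageable.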

These entry checks are important for the quality of project outcomes. One project manager explained: “Look, on the one hand you have the entry check . . . there were volunteers [controllers] who reported for example that someone uses ‘k’ instead of ‘c’ very often. We then contacted these people so that we can avoid again new errors when controlling. But we also checked entries proactively, especially for new volunteers because the entry check is always somewhat delayed compared to the entry itself. Thus, before the first scans that someone entered are actually controlled, it has passed a week or so. And in the meanwhile, there are lots . . . thus if you find an error by then there will be lots of scans with the same error. That is why we thought we should check the first three scans of new volunteers, then we could avoid a great deal of this [errors]” (04.19).

This explanation brings to the fore an important issue: the delay between entries and their check or quality control. Since volunteers receive additional points after the control, such delays have consequences for them. Project managers are aware of that: “We try to keep them as close as possible. It is nice for the volunteers to see ‘eh, something happens with the entries I have done, it moves on.’ One step further in the process. And if the progress bars [between entry and check] would diverge too much, then if I was a volunteer I would think ‘does anything happen?’” (11.29).

Based on this reward system and the potential negative consequences of an entry check delay, we formulate the following proposition:

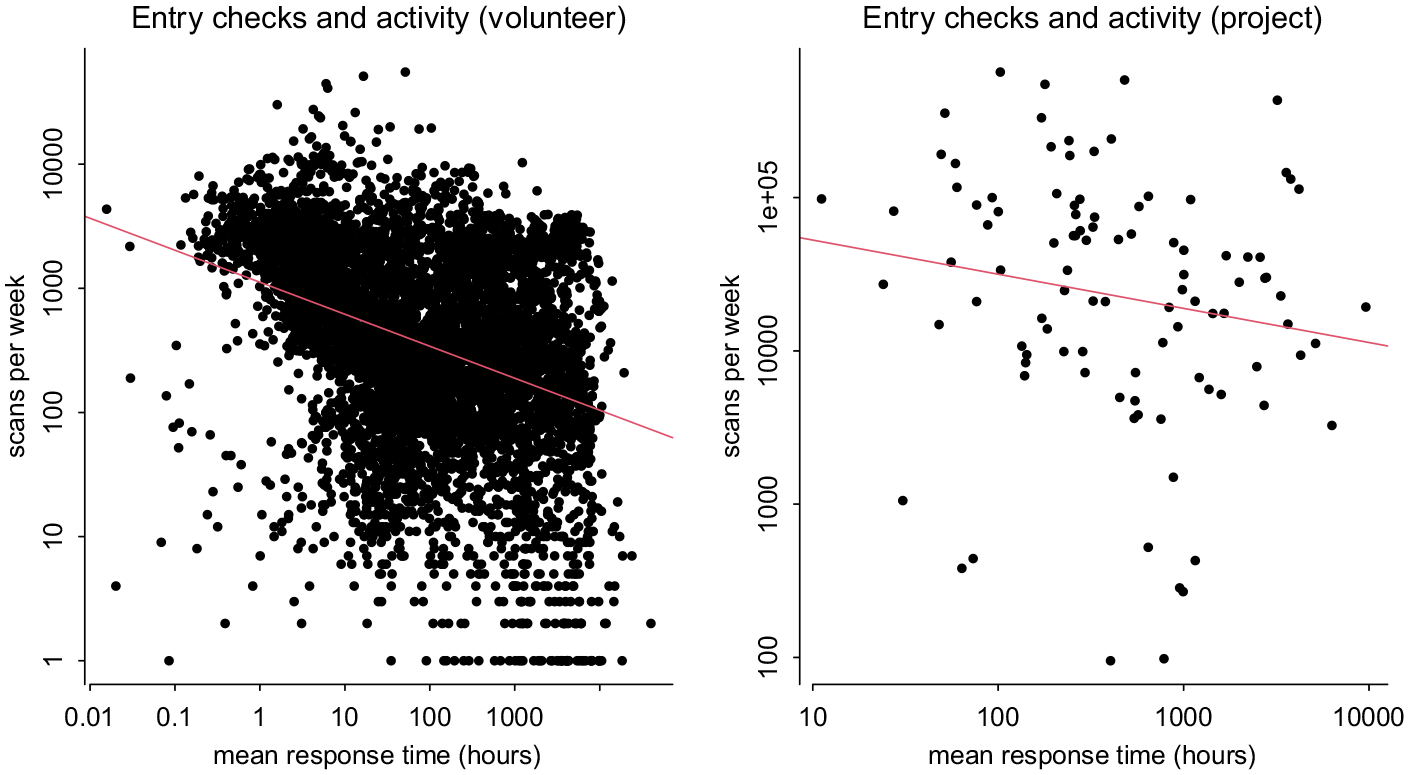

At the project level, delays in entry checking show a weak, negative relationship with activity (Figure 8, right), while weekly volunteer-level data show a stronger and significant negative relation between entry checking delays and activity (−0.26% scans per week for each 1% longer entry check time; Figure 8, left; Appendix Table B2).

Figure 8. Volunteer Activity and Entry Checks (Semilogarithmic Scale).

We also assess the effectiveness of rewards. Project managers who used the reward system are unsure whether rewards effectively sustain participants’ engagement, because they observe that the coupons to exchange points for material rewards are rarely used. One project manager said, “I am not sure whether this has helped a lot . . . mostly people had far more points than what they needed for such a book, but it was . . . you know, a kind of gift” (4.19). That is, on one hand, there are people who trade points for a book, as project leaders explained: “there is no one who says: I do not need a gift” (16.12) and “Yes, sure. In general they were people who had enough points and who for example after a lecture they liked to get a book, they appreciated that. Some people asked: what can I get with my points?” (4.19). On the other hand, project leaders believe that most volunteers are motivated by the task and that they do not care very much about rewards. For instance, one project leader said, “But afterwards I think that it wasn’t even needed. VeleHanden has lots of people and maps are apparently a nice thing, thus I think that we gave nice books but they were no 50 or 60 euro books. Thus I cannot imagine someone would do this just for that book. I think that people just did this because they enjoyed it” (14.09)

and another explained, “There were people who ordered, yes. They had indeed enough points for it. And there were also people who said: I enjoy very much participating but I do not need anything in return” (18.17).

It, therefore, seems that rewards are more a token of appreciation than an engagement instrument to increase contributions and it is unclear whether rewards help to bind volunteers to the project. Hence, we use the quantitative data to dig deeper into the following:

To evaluate this proposition, we begin by checking how many points volunteers spent in exchange for material rewards. VeleHanden provided us with data on the total points spent and the current point balance per project for each user. In addition, we know how many points each project awarded for an entry, as well as the number of points awarded once it was checked. The sum of spent points over all projects shows that volunteers exchanged approximately 10 million points for reward coupons in the studied period. We do not have direct data on the points earned by volunteers (only their current balance), so we estimate this by multiplying their total number of entries by the points the project awarded for each entry, which adds up to c. 40 million points. With c. 10 million points spent, this means that 75% of all points earned remained unused. One possible explanation for this finding could be that many volunteers did not earn enough points to claim a reward. However, this is not likely, as 94% of all points earned by volunteers within a single project were sufficient to claim a typical 1,000-point reward.
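The arithmetic behind the share of unused points can be written out as a short sketch. It assumes, as in the text, that earned points are estimated as the number of entries multiplied by the points a project awards per entry; the function names and the per-project figures in the first example are hypothetical, while the rounded totals of 10 and 40 million points come from the text.

```python
def estimated_points_earned(entries_per_project, points_per_entry):
    """Estimate earned points as entries times the points awarded per entry,
    summed over projects."""
    return sum(
        count * points_per_entry[project]
        for project, count in entries_per_project.items()
    )


def unused_share(points_spent, points_earned):
    """Share of earned points that was never exchanged for a reward."""
    return 1 - points_spent / points_earned


# Hypothetical volunteer with entries in two projects.
example_earned = estimated_points_earned(
    {"project_a": 120, "project_b": 40},
    {"project_a": 10, "project_b": 25},
)

# Rounded platform totals reported in the text.
platform_unused = unused_share(10_000_000, 40_000_000)
print(example_earned, platform_unused)
```

With the rounded totals, one quarter of the estimated points was spent, which gives the 75% of unused points reported above.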

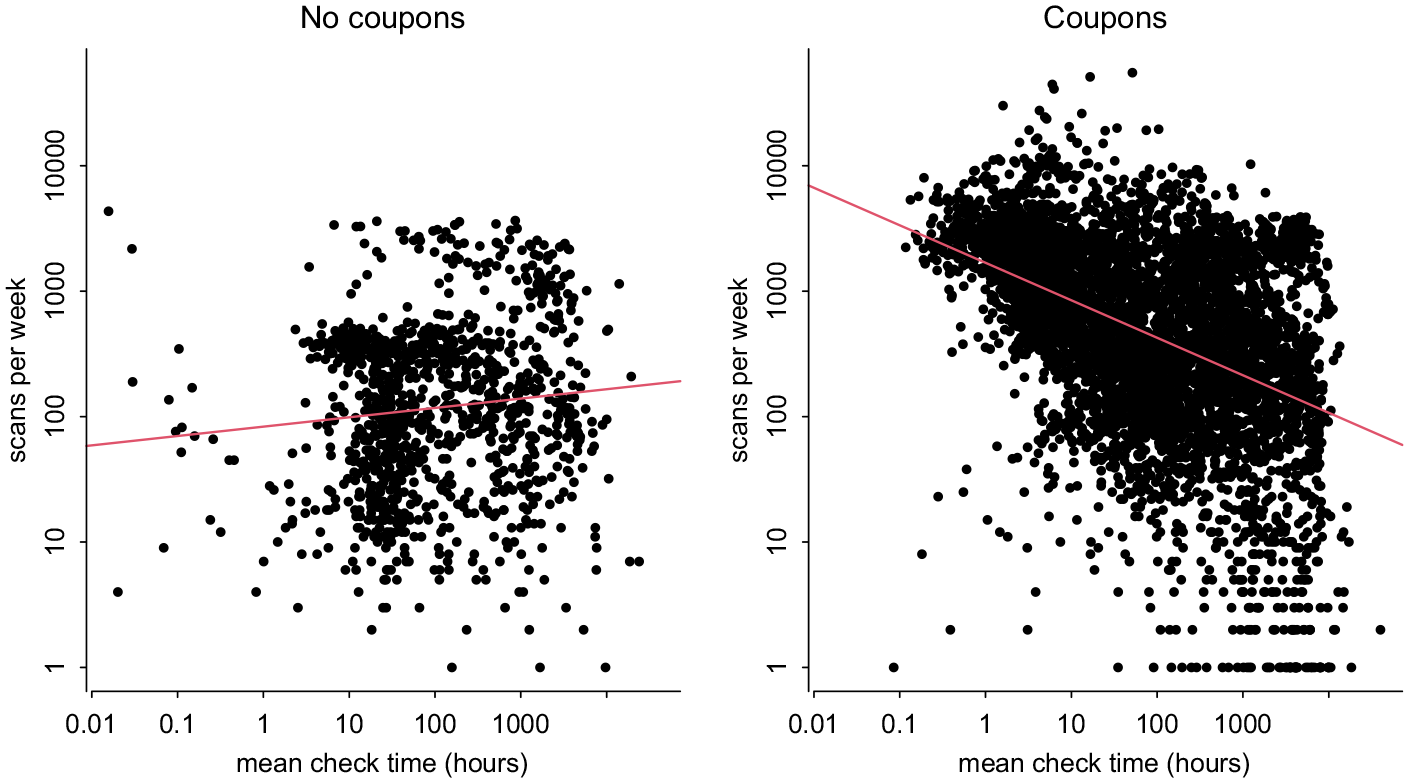

To further investigate the effectiveness of rewards, we break down the relationship between weekly delays in entry checking and project activity by the use of a coupon system (Figure 9). We find that the negative relation between checking delays and activity only holds for projects that exchange points for coupons (Appendix Table B3).

Figure 9. Relation Between Weekly Check Delays and Activity, by Usage of Point System (Semilogarithmic Scale).

The observations above suggest that rewards are not the prime motivation of volunteers to contribute and remain in a project. It is noteworthy here that if points were exchanged for a material reward, the equivalent of the points would be deducted from the total number of collected points that is displayed on the website. Claiming material rewards would thus have a visible effect on the total number of points, which expresses the effort a volunteer has put into a project. This also means that a top volunteer can lose his or her position in the project’s volunteer ranking when claiming material rewards.

Discussion

Recruiting and engaging volunteers are essential management practices for the success of crowdsourcing projects (Johnson & Liew, 2020) as they are for volunteering (Harp et al., 2017) and citizen science (West & Pateman, 2016). This article offers a retrospective longitudinal analysis of the functioning of nonprofit crowdsourcing projects on a specific platform, focusing on the instruments to recruit and to keep volunteers engaged. In terms of recruitment, our analysis shows that projects are able to attract a relatively large number of new volunteers through different media channels. However, the existing pool of volunteers on a crowdsourcing platform is also an effective recruitment instrument, because project progress is mainly driven by the contributions of experienced platform volunteers. These results confirm the expectations expressed in prior research about the benefits of an installed base of volunteers in a crowdsourcing platform (Sauermann & Franzoni, 2015). A potential drawback of recruiting from such an installed base of volunteers is the lack of diversity among participants. That is, despite the broader accessibility of crowdsourcing, participants in cultural heritage crowdsourcing seem to have similarly high educational and socioeconomic backgrounds as the visitors to museums, galleries and archives (Bonacchi et al., 2019). Recent research proposes targeted invitations to random individuals as a way to recruit more diverse participants for citizen science (Brouwer & Hessels, 2019). Although our research does not focus on diversity, given that both science and cultural heritage seek to engage more diverse audiences, future research should include diversity when examining recruitment effectiveness.

In this study, we also consider the role of volunteers’ loyalty to an organization when recruiting for a new project. Our findings indicate that recruiting volunteers from a prior crowdsourcing project seems to be effective only for the largest organizations in terms of the number of projects and volunteers. There is a general preference for projects from larger organizations, which could be explained by the sense of pride that individuals gain from participating in projects from such organizations (Boons et al., 2015). Through prior participation, volunteers may develop a feeling of belonging and pride toward the crowdsourcing organization, which in the case of larger organizations could be strengthened by media interest (Boons et al., 2015) and organizational reputation (Faletehan et al., 2021). Yet, a sense of pride can also emerge among volunteers in smaller organizations with fewer crowdsourcing projects and might hence be less visible in our analysis. Therefore, another explanation could be that positive experiences of volunteers resulted in feelings of attachment or loyalty toward the nonprofit organization or crowdsourcer (Troll et al., 2019), leading to recurring volunteering. More research is needed to understand the role of organizational reputation, the quality of volunteers’ prior experience, their interest in a topic and preference for a task in their decision to contribute to a new project from the same organization.

In terms of engagement, communication through chats and discussion forums and the use of rewards to express recognition are considered important means to engage volunteers, but prior studies offer little empirical evidence (Phillips & Phillips, 2010; Troll et al., 2019; West & Pateman, 2016). In the literature on online communities, response rates and delays have been considered as reasons for individuals being less active, but with limited evidence (Sun et al., 2014; Wise et al., 2006). Our findings empirically show that the response time to questions and issues on a forum, as well as timely entry checks, are both related to volunteer activity, which confirms prior research on the negative relation between entry check delays and volunteer activity (De Moor et al., 2019).

Finally, our study indicates that a point-based reward system contributes to volunteers’ engagement, but that the reputation effect is probably more important than the material rewards themselves. The importance of accumulating points and its effect on engagement and long-term participation can be explained in relation to volunteers’ intrinsic motivations. Prior crowdsourcing research shows that awarding points has a positive effect on individuals’ intrinsic motivation, in particular on self-presentation by displaying their skills, self-efficacy as points confirm the belief in their skills, and a sense of fun by accumulating points or competing with peers (Feng et al., 2018). We can conclude that the accumulation of points is important for volunteer engagement not because points can be exchanged for material rewards (extrinsic motivation) but because of the status conferred by these points (intrinsic motivation).

Recommendations for Practice

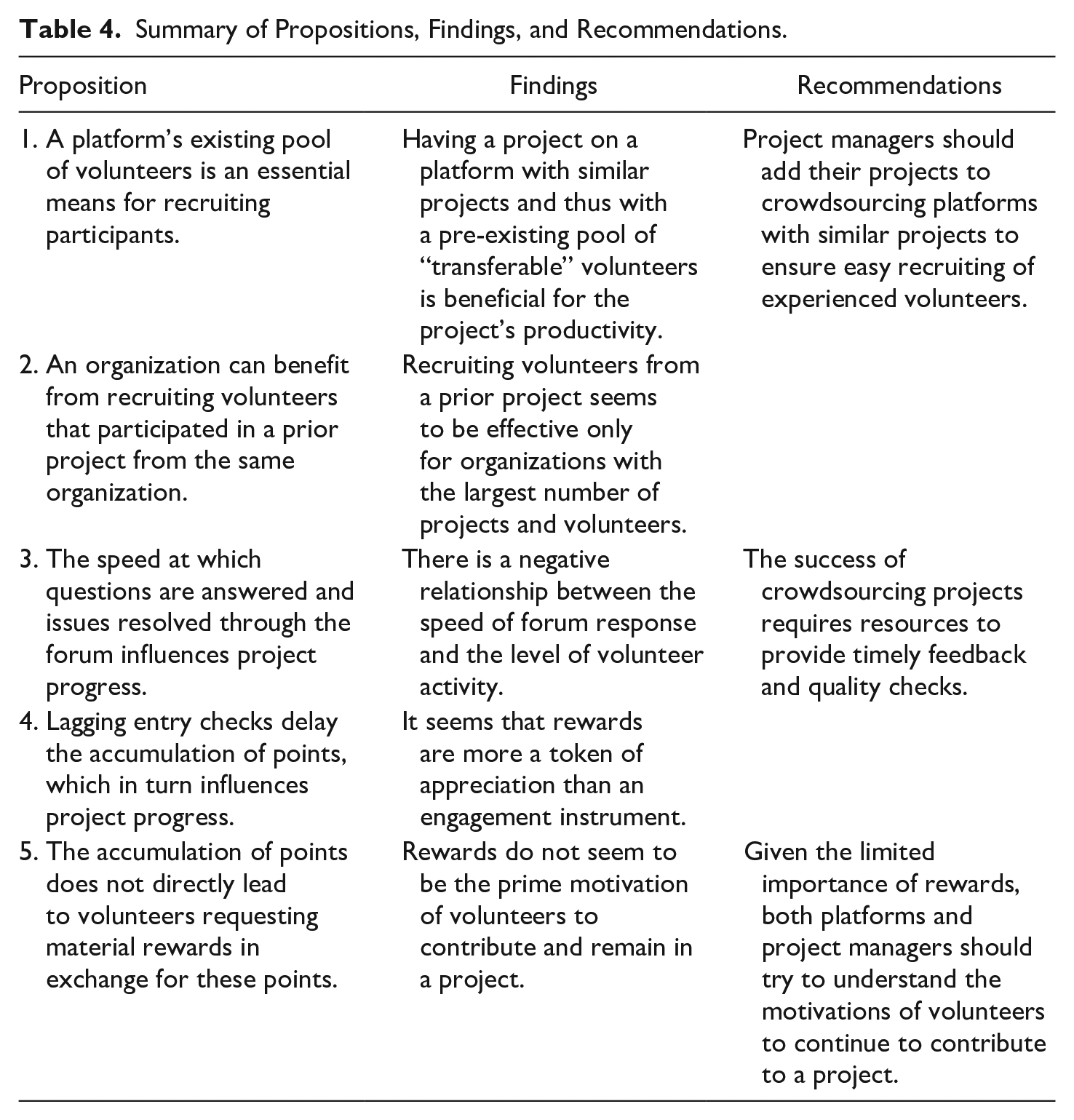

Based on our research, project managers should add their projects to crowdsourcing platforms with similar projects to ensure easy recruiting of experienced volunteers and use their often limited resources for other important project management tasks (Harp et al., 2017; Troll et al., 2019). In this sense, both platforms and individual project managers should pay attention to such veteran volunteers, and—given the limited importance of material rewards—understand their motivations to continue contributing. Moreover, nonprofit organizations should be aware that the success of crowdsourcing projects requires resources to provide timely feedback and quality checks. In Table 4, we summarize the propositions based on qualitative research, the findings from the platform’s quantitative analysis and the recommendations we derive from these findings.

Table 4. Summary of Propositions, Findings, and Recommendations.

Limitations and Future Research

The limitations of our study provide avenues for future research. First, in the analysis of forum response effectiveness, reverse causality between the level of activity in the forum and the project activity in terms of entries remains an issue. Are projects more active because volunteers can communicate on the forum and response times are short, or do volunteers communicate more on the forum because the project is active and gives volunteers more to talk about? Future research could consider an experimental setup to analyze the importance of these communication channels.

Second, we have studied one platform with a standardized way of presenting projects and managing volunteers’ contributions. By using the same data-entry methods for all projects on the platform, it is possible to shorten volunteers’ learning process, which may contribute to the mobility of volunteers within the platform and their willingness to remain engaged on that same platform. However, some crowdsourcing platforms bring together projects with different data-entry methods (e.g., Zooniverse). Comparing VeleHanden.nl with other platforms may tell us more about the effectiveness of such a coordinated, standardized data entry approach for recruiting and engaging volunteers. Finally, our study did not focus on the motivations of volunteers for choosing a platform. Future research could study the factors that drive volunteers to choose between different platforms.

Conclusion

Recruiting volunteers can be easily achieved by tapping into the large crowd of platform members, as the availability of experienced platform volunteers may considerably speed up a project’s progress. Our research also shows that timely forum interactions are related to larger data contributions and that a point-based reward system is only effective when tied to prompt data quality feedback. That is, the effectiveness of engagement instruments depends on their timely enactment.

Footnotes

Appendix A

Appendix B

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This publication is part of the project Building a UNified theory for the development and resilience of Institutions for Collective Action for Europe in the past millennium (UNICA) with project number VI.C.191.052 which is (partly) financed by the Dutch Research Council (NWO).