Abstract

Digital trace “data donation” studies offer researchers a unique opportunity to collect high-quality behavioral data, but decisions about the scope of requested data can affect both dataset richness and participant compliance. This paper examines the tradeoffs between requesting larger data packages, which include more extensive historical records, and participants’ willingness to donate. In a randomized experiment with Facebook and Instagram data donations, we compare a control condition in which participants are asked to request the default 1-year data period to a treatment condition in which they are asked to request data for their entire account history. We analyze how different request sizes affect (1) participants’ compliance rates and (2) the characteristics of the data resulting from these different requests. We find that participants asked to request more data are less likely to complete the task. However, we propose that this is not primarily due to heightened privacy concerns, but rather because these data packages are significantly larger and therefore take longer for the platforms to deliver. This additional delivery time results in increased attrition. In terms of the effects on the data itself, we show that decisions about the time span of the data affect not only the volume of data requested but also measurement validity, as the temporal window fundamentally redefines what key constructs represent, potentially transforming intended static indicators into narrow snapshots of recent behavior. We provide guidance for researchers navigating these decisions, considering both the benefits of richer longitudinal data and the risks of reduced participation.

Introduction

The proliferation of digital trace data has opened unprecedented avenues for social science research, offering granular insights into human behavior, interactions, and societal trends at scale (Lazer et al., 2009; Salganik, 2019). Within this landscape, digital trace “data donations,” where individuals voluntarily share their personal data from online platforms, have emerged as a user-centric method (Ohme et al., 2023), enabled by data portability rights like those enshrined in the General Data Protection Regulation (GDPR) (Ausloos & Veale, 2020). This approach holds the promise of overcoming some limitations of traditional survey self-reports (e.g., recall bias and social desirability) and restricted platform APIs (e.g., data access limitations and platform-driven biases) (Breuer et al., 2022; Freelon, 2018) by providing logged data of actual behavior on these platforms. However, like most data collection endeavors, data donation inherits fundamental methodological challenges concerning participant compliance and the representativeness of the resulting samples, which can significantly impact the validity and generalizability of research findings (Boeschoten et al., 2022; de León et al., 2025; Kmetty et al., 2024). Survey research faces the same problems (Groves & Lyberg, 2010; Couper, 2000).

A critical, yet under-examined, design choice in data donation studies is the scope of data requested from participants: specifically, the temporal span of the digital traces. Researchers often face a dilemma: should they request a limited, recent snapshot of data (e.g., only the last year of data) or a more comprehensive historical archive (e.g., data for the entire lifetime of the account)? While more extensive historical data offers undeniable analytical advantages, such as the ability to study long-term behavioral trajectories, reconstruct responses to critical past events (van Driel et al., 2022), and integrate with longitudinal panel surveys (Trappmann et al., 2023), this ambition confronts a classic tension. Requesting more extensive data may inadvertently increase participant burden, whether cognitive, temporal, or emotional (Bradburn, 1978; Tourangeau et al., 2000). This increased burden, alongside potentially heightened privacy concerns (Pfiffner & Friemel, 2023), could lower compliance rates and, crucially, lead to non-donation bias if willingness to share larger datasets varies systematically across demographic or behavioral groups, similar to nonresponse bias in surveys (Groves & Lyberg, 2010). The decision, therefore, has direct implications not only for the quantity of data collected but also for who participates and what data is ultimately analyzed, directly affecting the representational quality of the study.

Most work on the tension between burden and compliance focuses on survey length and attrition in repeat-wave panels. Relatively little has been said about how people’s willingness to share data changes as more data is requested; most studies focus on whether people are willing to share data at all. This paper confronts this dilemma empirically within the context of Facebook and Instagram data donations. In an exploratory analysis, we investigate the effects of requesting an account’s full history of activity versus the default limited “last year” data on two critical dimensions: (1) participant compliance with the multi-stage data donation task and (2) the resulting characteristics of the donated data itself (how many datapoints are received, what these datapoints contain, and the size of the download packages). Specifically, we explore whether requests for more extensive data affect participation, and whether any such effect is associated with heightened privacy concerns, increased procedural complexity (as requesting “all time” data deviates from platform defaults), or other logistical factors such as platform processing times for data package delivery. Understanding how these nuanced procedural differences influence how many participants complete the donation process, and whether the data fundamentally differs between those donating the 1-year and “all-time” periods, is vital for the ethical and effective design of such studies.

To provide empirical grounding, we embedded an experiment within a data donation study. We compare a control condition (the default “last year” data request) to a treatment condition (asking participants to request data from “all time”). Our findings reveal that asking participants to request their “all time” data indeed leads to lower compliance. However, we argue this is not primarily due to heightened privacy concerns. Instead, the significantly larger data packages associated with “all time” requests result in considerably longer delivery times from the platforms, and this extended waiting period appears to be a key driver of participant attrition. Additionally, we find that the time period requested not only significantly alters the amount of data received, but also changes the very nature of the data and the research questions that can be answered.

These findings contribute empirical evidence to the emerging field of data donation methodology and offer insights relevant to broader discussions on survey methodology and digital data collection. The exploratory approach allows us to address the interplay between request demands, participant attrition, and the potential for systematic biases, not only in who is ultimately represented in behavioral datasets but also, as a unique contribution of this study, in the resulting data itself.

By systematically examining the interconnected aspects of participant behavior, platform responsiveness, and data characteristics, this paper aims to offer evidence-based guidance. In the subsequent sections, we provide the theoretical background of the data donation process, discuss the potential effects of the volume of requested data on participation behavior and on the data quality, present our experimental methodology, and report on our findings. Ultimately, we seek to help researchers navigate the complex tradeoffs between data richness and research feasibility, ensuring the continued development of data donation as a robust and representative method for social scientific inquiry.

Theoretical Background

What are Digital Trace Data Donations

Data donation is a promising new approach for accessing the digital traces individuals leave behind in their daily lives, enabled by the General Data Protection Regulation (GDPR)’s provisions on data access and portability (Ausloos & Veale, 2020). Under this legislation, data controllers, such as digital platforms, are legally obliged to provide individuals with a copy of the personal data collected about them, upon request. These datasets, known as Data Download Packages (DDPs), are typically provided in structured, machine-readable formats and are available from any data controller, including platforms such as Facebook, Instagram, Google, Netflix, TikTok, or WhatsApp. Researchers can leverage individuals’ rights to data access and data portability by inviting research participants to share these DDPs voluntarily for the purposes of scientific research. This process, where individuals obtain their personal digital trace data and then share these traces with researchers for scientific purposes, is referred to as data donation (Boeschoten et al., 2022). Examples of data donation studies can be found in the context of social media platforms (van Driel et al., 2022; Kmetty & Németh, 2022; Yap et al., 2024), instant messaging (Corten et al., 2024), video and streaming platforms (van Es & Nguyen, 2025; Bechmann et al., 2025), physical activity measures (Keusch, Pankowska, et al., 2024), browsing history (Welbers et al., 2024), and smart home devices (Gómez Ortega, Bourgeois, & Kortuem, 2023).

However, researchers who make use of data donation face several challenges (Ohme et al., 2023). One of these challenges is that DDPs potentially contain sensitive data, as the amount of data in such packages is usually quite large. Often, researchers do not need all of that data to answer a specific research question. To tackle this challenge, Boeschoten et al. (2022) developed a workflow that extracts from the DDP only the information relevant to the researchers. In this workflow, the research participant:

1. requests their personal DDP at the platform of interest to the research project;
2. downloads the DDP to their own personal device as soon as the platform has the DDP ready;
3. visits a website built specifically for the research project;
4. opens the DDP on the website, enabling a local processing step in which only the parts of the DDP that are of interest to the research project are extracted;
5. inspects the extracted data and decides whether they want to donate it (or decline donation).

Only after the participant has clicked the “donate” button are the extracted variables sent to a server that can be accessed by the researcher. Such workflows, with different variations of how local processing is implemented (step 4), have been implemented in research tools such as OSD2F (Araujo et al., 2022), Designerly data donation (Gómez Ortega, Bourgeois, & Kortuem, 2021), the Data Donation Module (Pfiffner et al., 2022), the Social Media Donator (Zannettou et al., 2023), WhatsR (Kohne, 2023), and Port (Boeschoten et al., 2023).

When an individual requests a digital copy of their personal data (step 1, above), platforms often offer an option to choose the temporal span that this data will cover. In the case of Facebook and Instagram, this option ranges from “last week” up to “all time,” meaning that all data since the account was created is provided. If participants request their “all time” data, the DDPs contain larger volumes of data than when participants request their “last year” data (unless the account was created within the previous year). While larger DDPs can illuminate complete historical patterns and unlock analytical possibilities that shorter time windows simply cannot provide, they also bring additional challenges and responsibilities for researchers. In the following section, we describe some of these potential benefits and challenges.

The Benefits of Data Donations with Extended Temporal Spans

If participants request DDPs with a larger temporal span, this can have several benefits for a research project. Firstly, using “all time” DDPs enables researchers to capture and analyze critical, transient events (such as the onset of the COVID-19 pandemic (de León et al., 2023), a particularly consequential election night, or the sudden onset of armed conflict) from an individual-level perspective in a way that neither retrospective surveys nor API querying can match (van Driel et al., 2022). When participants donate their complete Facebook and Instagram histories, researchers can engage with data about events not immediately present, reconstructing behavior for moments that, in retrospect, were critical for current societal developments.

Secondly, by capturing time-stamped content across an individual’s entire social media history, scholars can employ time series methodologies to trace behavioral and linguistic shifts over longer periods of time, uncovering gradual trends as well as sudden responses to external shocks. Furthermore, these rich behavioral chronologies can be integrated with established panel surveys that hold several years of attitudinal and demographic measures. Such a combination amplifies research potential, allowing for hybrid datasets that marry self-reported beliefs with real-world engagement metrics across temporal spans that have not been achieved in the current literature, as efforts have focused on asking participants to install tracing technologies for a limited period of time (Trappmann et al., 2023; Stier et al., 2020).

Third, from a practical standpoint, requesting “all-time” DDPs in a single data donation workflow would allow researchers to address multiple research questions. Designing studies to retrieve comprehensive archives upfront reduces the potential number of data collection efforts, as well as technical costs associated with iterative requests (Carrière et al., 2025). Requesting one comprehensive dataset of social media activity (rather than several staged requests for different time periods) can reduce overall participant burden, guarding against potential attrition in participant panels that require the participation in multiple sequences of a data donation study (Keusch, Pankowska, et al., 2024).

However, collecting data that allows researchers to answer multiple research questions comes with challenges and responsibilities for researchers when designing and implementing their studies. First, participants should be appropriately informed about potential secondary use of the data they are sharing, potentially by external researchers, when consenting to participate in the study. Second, researchers should continue to be mindful of utilizing local processing to prevent sensitive or third-party data from being collected. Third, most data donation software enables the local processing step to take place in the browser of the participant, which has limitations in terms of file sizes that can be processed. The advantages of collecting rich data should be weighed against these challenges, as well as the potential non-willingness to share larger amounts of data, which we describe in the next section.

The Impact of a DDP’s Temporal Span

Changing the temporal span of a DDP in a data donation study can affect the outcomes of that study in various ways. In this context, Total Error (TE) Frameworks (Biemer, 2016; Japec et al., 2015) are a convenient way to describe each step of a data collection process as well as the errors that might arise from that step. TE frameworks, including the TE framework for data donation by Boeschoten et al. (2022), typically distinguish between representation and measurement. Here, representation focuses on how the obtained sample of participants diverges from the defined population in each step of the data collection process, while measurement deals with the extent to which the constructs of theoretical interest are adequately measured by the procedure performed in the study. In the remainder of this section, we explore how adjusting the temporal span of the requested DDP can affect a data donation study both in terms of representation (by leading to reduced participation in the study) and measurement (by investigating the characteristics of the resulting data). With respect to representation, this paper focuses specifically on participation-related errors (that is, how the temporal span of the requested DDP affects donation rates and attrition) rather than covering all sources of representation error encompassed by the TE framework.

The Impact of a DDP’s Temporal Span on Participants

Data donation is a user-centric approach to data collection, meaning that active involvement of research participants is required (Ohme et al., 2023). As with any user-centric approach to data collection, participants might not be willing to participate in the study or might drop out somewhere in the process (de León et al., 2025). In this context, designing a data donation study means striking a balance between the wish to collect data rich enough to answer the research questions and the burden that the data donation procedure places on the participant (how taxing and time-consuming the procedure is).

A growing body of literature focuses on disentangling participant and study characteristics that might be related to successful participation in data donation (see e.g., Xiong et al., 2025 for a systematic review on willingness to donate in the context of social media data). These studies illustrate that of all participants that start with a data donation study, only a subset successfully donate their data (see e.g., Corten et al., 2024; Keusch, Pankowska, et al., 2024; Strycharz, Meppelink, Zarouali, Araujo, & Voorveld, 2024 for reporting on these numbers). Therefore, our first research question focuses on understanding whether changing the temporal span of the DDP of interest affects attrition rates of the study (

Studies have also shown that participants who are more concerned about the sensitivity of the data with respect to their privacy might be less willing to donate their data (Pfiffner & Friemel, 2023; Silber et al., 2022). This perceived sensitivity is known to depend on the nature of the data or the specific platform from which it originates. However, less is known about whether data is also perceived as more sensitive when its temporal span increases, for example, because participants find it harder to keep track of what exactly the data contains or what behaviors they have shown on the platform under investigation. Therefore, our second participant-focused research question focuses on whether participants who are invited to donate data with a longer temporal span show more concern regarding their privacy (

Lastly, one mechanism potentially affecting attrition in data donation studies is the complexity of the study design. For example, there is substantial variation in the amount of time that participants must wait between steps in the data access request procedure, as well as in the number of steps this process entails (Hase et al., 2024). Researchers can further complicate the study design by instructing participants not to use the default option but, for example, to request a specific subset of the DDP. In the context of Facebook and Instagram, requesting a DDP of 1 year is the default option, which can be adjusted to “all time” data by adding some extra steps to the process. Our final participant-focused research question therefore focuses on whether the waiting time differs when changing the temporal span of the DDP of interest (

The Impact of a DDP’s Temporal Span on Measurement

While various studies have focused on the effects of data donation study design characteristics on donation rates and subsequent representation issues, surprisingly little systematic work has focused on investigating how changing study design characteristics, such as the DDP’s temporal span, affects a study in terms of how accurately and completely the concepts of interest can be measured. We foresee several ways in which the temporal span of a DDP can affect data quality in terms of measurement.

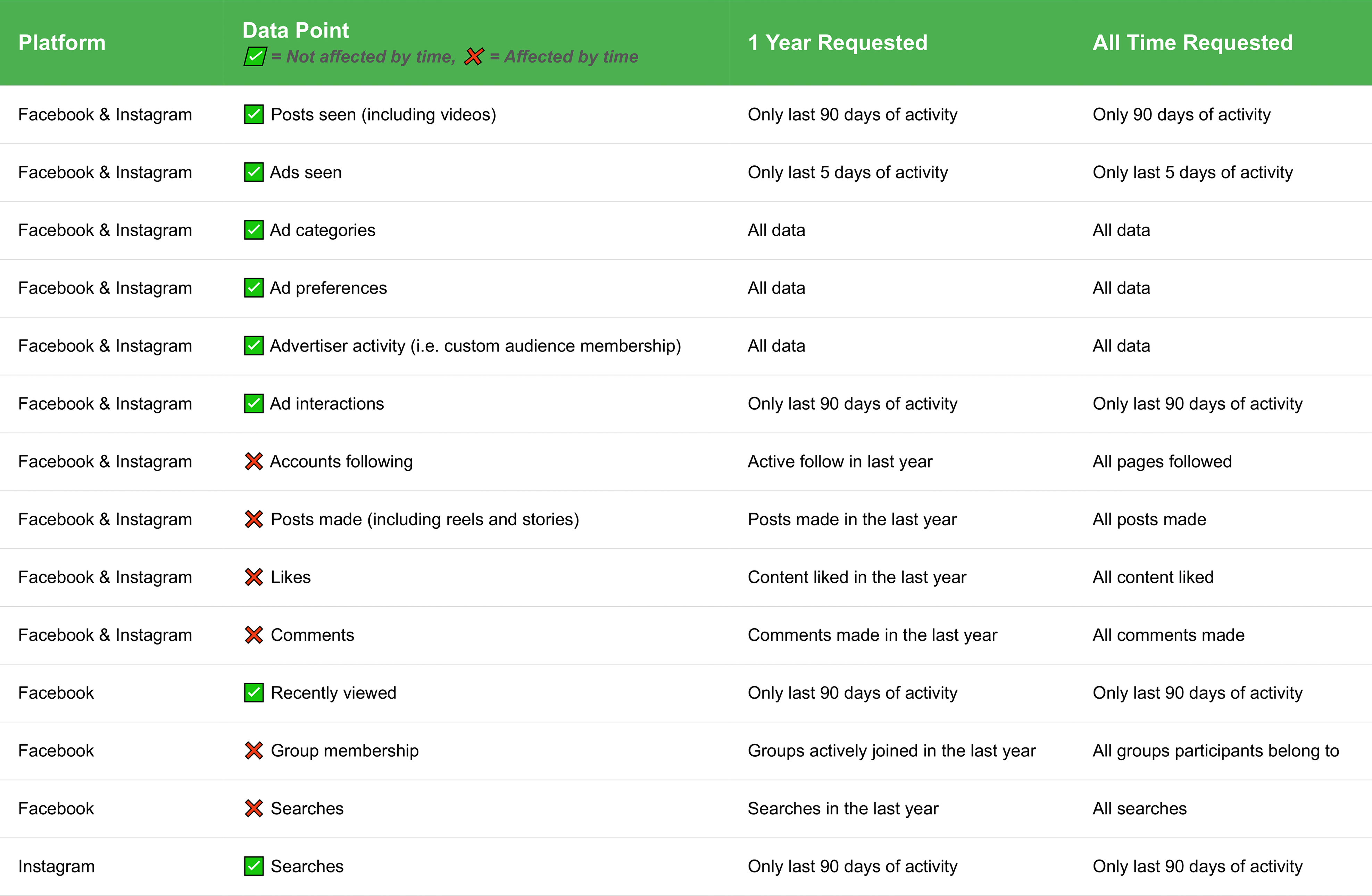

A first potential issue is the completeness of the DDP. In this context, researchers might assume that an “all time” data request will yield a comprehensive archive containing all data since the account creation date. However, platform-specific data retention policies or technical limitations can curtail what will be provided in the DDP in practice. For example, Loecherbach et al. (2024) highlight that Google Takeout only contains Chrome browsing history of the last 3 months, regardless of whether the participants changed the temporal span of the DDP to “all time.” This illustrates that there can be a disconnect between what is requested and what will be provided. Such discrepancies can lead to systematic missingness for certain periods or for certain platform features. Therefore, our first research question regarding measurement issues focuses on understanding which platform features found in a DDP are affected by changing the temporal span of the DDP (

Another potential issue is that the temporal span of a DDP can alter the actual concept that is measured by specific elements within that DDP. While some studies have focused on performing inventories of which platform features are available in DDPs of different platforms (see e.g., Hase et al., 2024; Janssen et al., 2025), to our knowledge no documentation is available on how the concepts that can be measured using these features are sensitive to the timespan of the DDP. To illustrate, a researcher can be interested in seemingly static concepts such as the accounts a participant follows on a platform, the groups they are a member of, or the pages they have liked. Because past API- and scraping-based research has been able to gather the list of accounts followed as a static concept (see e.g., Barrie & Ho, 2021; Bakshy et al., 2015), researchers using the DDP method might expect to find a current snapshot. However, a platform may treat these as a series of discrete, time-stamped events (e.g., “User X followed Account Y on Date Z”; “User X joined Group Y on Date Z”). In such cases, requesting a DDP with a temporal span of the last year would yield only those accounts followed, groups joined, or pages liked within that specific timeframe, rather than a comprehensive list of current affiliations. As a result, the interpretation of the concept that is measured with this list is fundamentally different compared to the same list in a DDP that has a temporal span of “all time”: it measures the recent activity related to that state instead of the current state. Therefore, our third research question regarding measurement issues focuses on understanding how the temporal span of DDPs changes the concepts measured within those DDPs (
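To make this distinction concrete, the following minimal sketch assumes a hypothetical export in which each follow is logged as a time-stamped event. The field names (`account`, `followed_at`), the example accounts and dates, and the 365-day window are illustrative assumptions and do not reflect the actual Facebook or Instagram DDP schema.

```python
from datetime import date, timedelta

# Hypothetical follow events; account names and dates are invented for illustration.
FOLLOW_EVENTS = [
    {"account": "news_outlet", "followed_at": date(2016, 3, 1)},
    {"account": "local_club", "followed_at": date(2021, 7, 15)},
    {"account": "new_podcast", "followed_at": date(2024, 5, 2)},
]


def accounts_in_window(events, request_date, days=None):
    """Return accounts whose follow event falls inside the requested window.

    days=None mimics an "all time" request; days=365 mimics a one-year request.
    """
    if days is None:
        return [e["account"] for e in events]
    cutoff = request_date - timedelta(days=days)
    return [e["account"] for e in events if e["followed_at"] >= cutoff]


request_date = date(2024, 9, 13)
all_time = accounts_in_window(FOLLOW_EVENTS, request_date)
last_year = accounts_in_window(FOLLOW_EVENTS, request_date, days=365)

print(all_time)   # every follow event in the log
print(last_year)  # only follow events from the past year
```

Under these assumptions, the one-year list captures recent follow activity, whereas only the all-time list approximates the set of accounts a participant currently follows.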

Data and Methods

Data Collection

To study the impact of data package size on participants’ willingness to share their data, an experiment was conducted among all members of the Centerpanel, an online research panel of Dutch households that is based on a probability sample (but did not undergo regular refreshments) and managed by Centerdata (CentERdata, 2024). The Centerpanel is not based on self-selection but recruited from a random address sample drawn from national postal drop-off points. For this study, a total of 1,196 household members were contacted online and invited to participate in a data donation study between September 13 and 24, 2024. Participants could receive additional incentives of 5 euros, on top of the regular incentive of 5 euros they received per survey, by sharing their Facebook, Instagram, Google, and/or TikTok data. Of those contacted, 978 responded to the survey (response rate: 81.8%), and of this initial sample, 701 completed the survey. While data from all four platforms were collected, this study focuses on Facebook and Instagram, as they are the two platforms that default to a single year of data. Of the 277 non-completers, 276 had social media and were eligible for the donation task but broke off at some point during the process. A further 115 respondents did not use any of the four study platforms and were therefore ineligible for the donation task; almost all of them (n = 114) completed the background questionnaire and are therefore counted among the 701 completers, not the 277 non-completers.

After a detailed explanation of how digital trace data donations work (see Appendix 3), participants were first asked whether they were willing to share the data collected by the platforms under study. Those who consented were guided through a series of step-by-step instructions. Participants were prompted to log into their accounts, navigate to the “Data Requests” section of each platform they used and from which they were willing to request data, and select the specific data types required by the research project. After initiating the data request, participants were asked to wait for an email or a notification from the platform indicating that their data was ready for download. Once the data became available, participants were guided through the process of downloading it and processing it locally in the Port system (Boeschoten et al., 2023). This system used local processing (i.e., running scripts locally in the user’s browser without sending data to university servers) to perform a set of actions to the data before presenting it to the participant. It first minimized the data, excluding all non-textual data (images, videos, files) and selecting only the fields that were predetermined by the researchers. It then performed steps aimed at data anonymization, including the removal of any instances of phone numbers, emails, and predefined pornographic content. After these steps, the system displayed the data to participants, allowing them to view and search their data and delete any entries they did not wish to share. Finally, after reviewing their data, participants were able to donate it to the researchers.
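The minimization and anonymization steps described above can be sketched as follows. This is not the Port implementation: the field names, the sample record, and the two regular expressions are hypothetical and deliberately simple, and the sketch omits the content filtering and the participant review step.

```python
import re

# Fields the (hypothetical) study wants to keep; everything else is dropped.
KEEP_FIELDS = ("timestamp", "text")

# Deliberately simple patterns for illustration; real redaction needs broader coverage.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_RE = re.compile(r"\+?\d[\d\s-]{7,}\d")


def minimize(record):
    """Keep only the predetermined fields of one record."""
    return {k: record[k] for k in KEEP_FIELDS if k in record}


def redact(text):
    """Replace e-mail addresses and phone-like digit runs with placeholders."""
    text = EMAIL_RE.sub("[email]", text)
    return PHONE_RE.sub("[phone]", text)


def process_locally(records):
    """Minimize, then redact, each record before it is shown to the participant."""
    cleaned = []
    for record in records:
        slim = minimize(record)
        if "text" in slim:
            slim["text"] = redact(slim["text"])
        cleaned.append(slim)
    return cleaned


# Invented sample record with a media reference that should be dropped.
sample = [
    {
        "timestamp": 1726214400,
        "text": "Mail me at jan@example.org or call +31 6 1234 5678",
        "media_uri": "photos/1.jpg",
    },
]
print(process_locally(sample))
```

In a setup like this, nothing leaves the participant's device until the cleaned records have been reviewed and explicitly donated.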

Participants followed this process for each of the four study platforms that they had previously indicated using. We randomized the order of the platforms from which participants were asked to request data.

Experimental Treatment

To empirically assess the effect of requesting larger data donation packages, participants donating Facebook or Instagram data were randomly assigned to either a treatment or a control condition after they indicated that they used these platforms. In the treatment condition, participants were prompted to change the default data request period from “1 year” to “all time.” In the control condition, participants were not given any additional instructions, so the default data request remained set to “1 year.”

Survey Measurements

After participants indicated that they had received an email or notification informing them their data package was ready to download, they were asked how long they had to wait for the package to arrive (see Appendix 1). Their responses were recorded and subsequently categorized into a binary measure: “within 1 day” or “more than a day.”

At the end of the survey, participants also answered two items measuring their general privacy concerns. Participants were asked to self-assess their level of worry regarding two aspects of online privacy: (1) websites collecting excessive personal information (from Xu et al., 2008) and (2) overall privacy when using the internet (from Buchanan et al., 2007). Both items were presented in tabular format and rated on a 7-point Likert scale ranging from 1 = Not at all worried to 7 = Very worried. These measures were selected for their established use in prior research and their brevity, which allowed us to capture general online privacy concerns without overburdening participants. At the same time, we acknowledge that privacy is context-dependent (Masur, 2018), and concerns may vary depending on whether data are shared with corporations, governments, or researchers. We therefore interpret these items as indicators of baseline online privacy concerns rather than context-specific attitudes toward data donation. As a criterion validity check, we also examined whether scores on these items predicted willingness to donate data among platform users who completed the survey; these results are reported in Appendix 11.

Analysis Plan

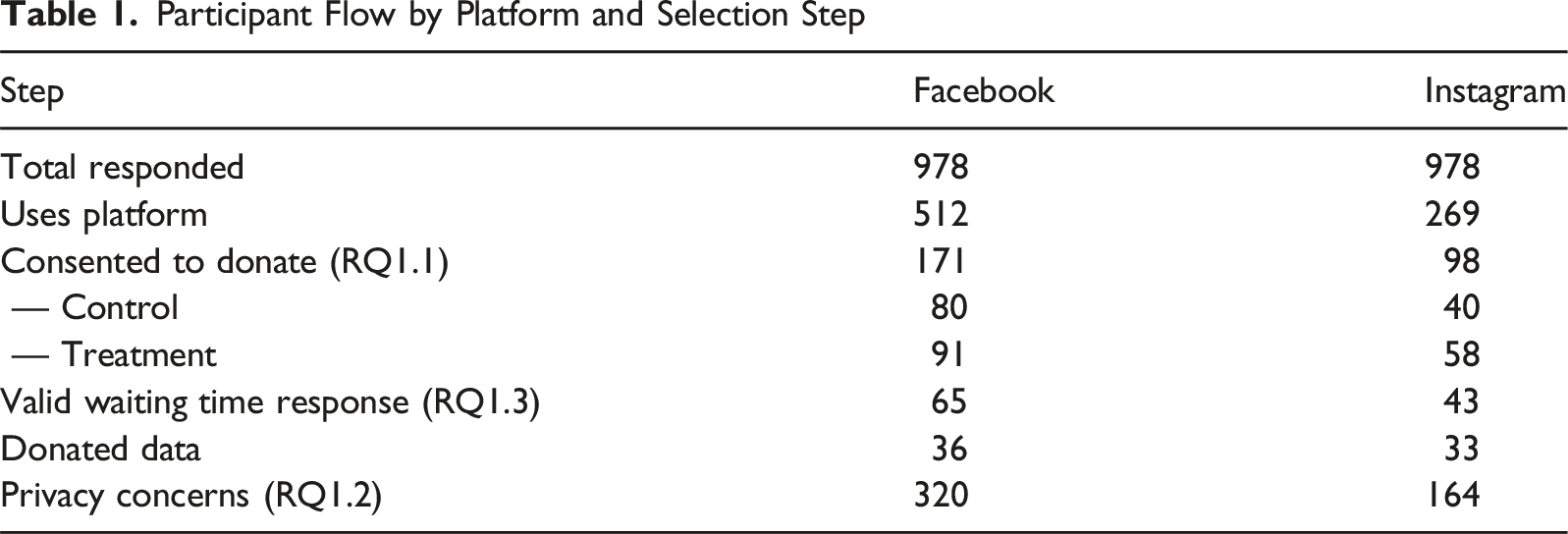

Participant Flow by Platform and Selection Step

We first assessed the impact of longer data request periods on participants. Our primary outcome is the effect of requesting larger data donation packages on data donation rates. We first calculated the proportion of participants who successfully completed data donations in both the control and treatment conditions, separately for Facebook and Instagram data. These comparisons were performed using two approaches: (1) including all participants who consented to donate data, regardless of the order in which data requests were made, and (2) restricting the analysis to participants who were first asked to donate Facebook or Instagram data, respectively. This second approach controls for potential order effects, acknowledging that participants who are asked to donate multiple data packages may become less likely to continue donating. This narrower comparison is also relevant because most data donation studies typically involve only one platform. Group differences were assessed using one-sided proportion (z) tests.
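As an illustration of the kind of comparison described above, the following sketch implements a one-sided two-proportion z-test with a pooled standard error, using only the Python standard library. The function names and the counts are hypothetical and are not taken from the study. The sketch omits any continuity correction, so its p-values need not match those produced by statistical packages that apply one.

```python
import math


def normal_sf(z):
    """Upper-tail probability of the standard normal distribution."""
    return 0.5 * math.erfc(z / math.sqrt(2))


def one_sided_prop_ztest(x_control, n_control, x_treat, n_treat):
    """Test H1: control donation rate > treatment donation rate (pooled SE)."""
    p_control = x_control / n_control
    p_treat = x_treat / n_treat
    pooled = (x_control + x_treat) / (n_control + n_treat)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_control + 1 / n_treat))
    z = (p_control - p_treat) / se
    return z, normal_sf(z)


# Hypothetical counts, not taken from the study.
z, p = one_sided_prop_ztest(x_control=30, n_control=100, x_treat=20, n_treat=100)
print(f"z = {z:.2f}, one-sided p = {p:.3f}")
```

With small cells, as in the first-platform subsamples, an exact test may be a safer choice than this normal approximation.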

We next examined two potential explanations for differences in donation rates: privacy concerns and longer processing times. To examine the effect on privacy concerns, we compared the average privacy concern score for the control and treatment groups using two-sided t-tests. To examine whether requesting a larger data package led to longer processing times, we calculated the proportion of participants in each condition (control vs. treatment) who experienced delays of at least 1 day and compared these proportions using a test of proportions. This analysis was conducted separately for Facebook and Instagram data, and we further stratified the analysis by whether participants were first asked to donate data from these platforms.

Next, we investigate the effects of longer data request periods on the data itself. To examine whether requesting larger data packages resulted in actually receiving larger files and whether larger files were associated with longer processing times, we did the following. First, we compared the average size (in MB) of data packages received by participants in the control and treatment conditions for both Facebook and Instagram (using independent samples t-tests), as well as the relationship between package size and processing time. Second, we further assessed the effect of requesting data packages for longer periods of time on the data by visually inspecting differences in the volume of data between packages.
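The comparison of average package sizes can be sketched as below. The text specifies independent samples t-tests without naming a variant, so the use of Welch's unequal-variance form here is an assumption, and the package sizes are invented. The sketch returns only the test statistic and degrees of freedom; a p-value would additionally require the t distribution from a statistics library.

```python
import math
from statistics import mean, variance


def welch_t(sample_a, sample_b):
    """Return Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    n_a, n_b = len(sample_a), len(sample_b)
    var_a, var_b = variance(sample_a), variance(sample_b)  # sample variances (n - 1)
    se_sq = var_a / n_a + var_b / n_b
    t = (mean(sample_a) - mean(sample_b)) / math.sqrt(se_sq)
    df = se_sq ** 2 / (
        (var_a / n_a) ** 2 / (n_a - 1) + (var_b / n_b) ** 2 / (n_b - 1)
    )
    return t, df


# Hypothetical package sizes in MB, invented for illustration.
all_time_mb = [120.0, 340.0, 95.0, 410.0, 220.0]
one_year_mb = [15.0, 40.0, 22.0, 31.0, 18.0]

t, df = welch_t(all_time_mb, one_year_mb)
print(f"t = {t:.2f}, df = {df:.1f}")
```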

A full replication package, including code and anonymized data for these analyses, can be found at: https://doi.org/10.17605/OSF.IO/ZJUWR.

Results

A total of 512 respondents reported having Facebook and 269 reported having Instagram. Of these, 171 consented to sharing their Facebook data and 98 to sharing their Instagram data. In total, 158 data packages were donated by 112 participants: 36 donated their Facebook data and 33 donated their Instagram data (87 donated their Google data and 2 donated their TikTok data, but these are not the focus of this study). Of the 112 donors, 74 donated only one package, 30 donated two packages, and 8 donated three packages.

Effect on Participants

We first examine the effect that requesting DDPs covering longer time periods has on participants, specifically whether it influences overall data donation rates, participants’ privacy concerns, and self-reported waiting times for the data packages.

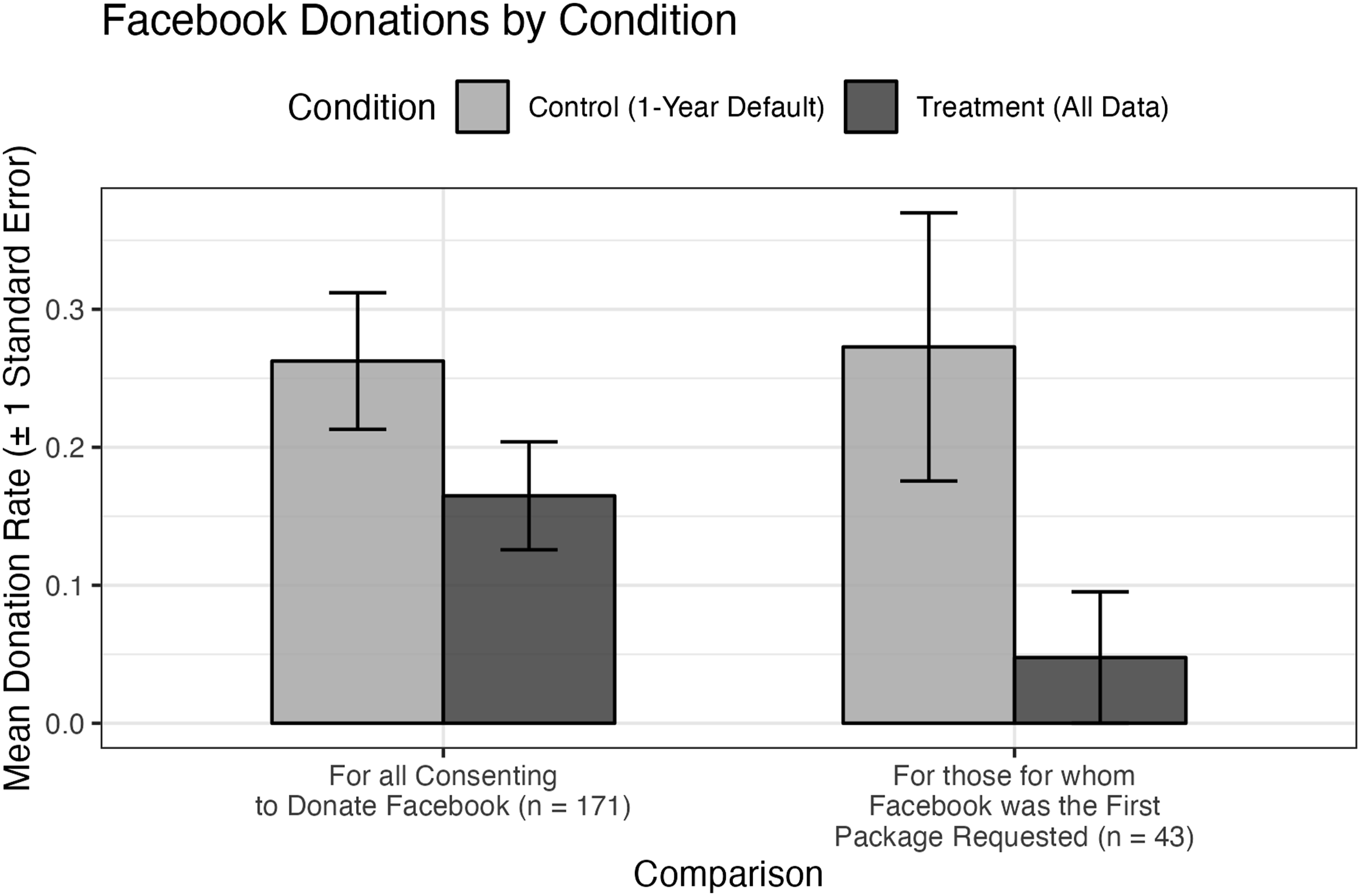

Requesting Packages Covering Longer Time Periods Leads to Fewer Donations

Figure 1 shows data donation rates for two groups: participants in the control condition, who were asked to donate the default 1 year of Facebook data, and those in the treatment condition, who were asked to actively request their full Facebook history. We conducted two analyses. First, we compared donation rates across all participants who consented to Facebook data donation, regardless of whether it was their first, second, third, or fourth data request. Second, we focused only on participants who were first asked to donate Facebook data. Figure 1 shows that, overall, 26% of participants in the control group who consented to donate successfully completed the data donation, compared to 16% in the treatment group. This difference was not statistically significant (z-test: p = 0.08). Among those who were assigned to donate Facebook data first, 6 out of 22 participants in the control group (27%) completed the data donation, compared to only 1 out of 21 participants in the treatment group (5%). This difference was not statistically significant (z-test: p = 0.056).

Figure 1. Facebook data donation rates by experimental condition. Note. Mean Facebook data donation rates (±1 SE) by experimental condition. The left panel shows participants for whom Facebook was the first platform requested (n = 43: n = 22 control, n = 21 treatment); the right panel shows all participants who consented to donate their Facebook data (n = 171: n = 80 control, n = 91 treatment).

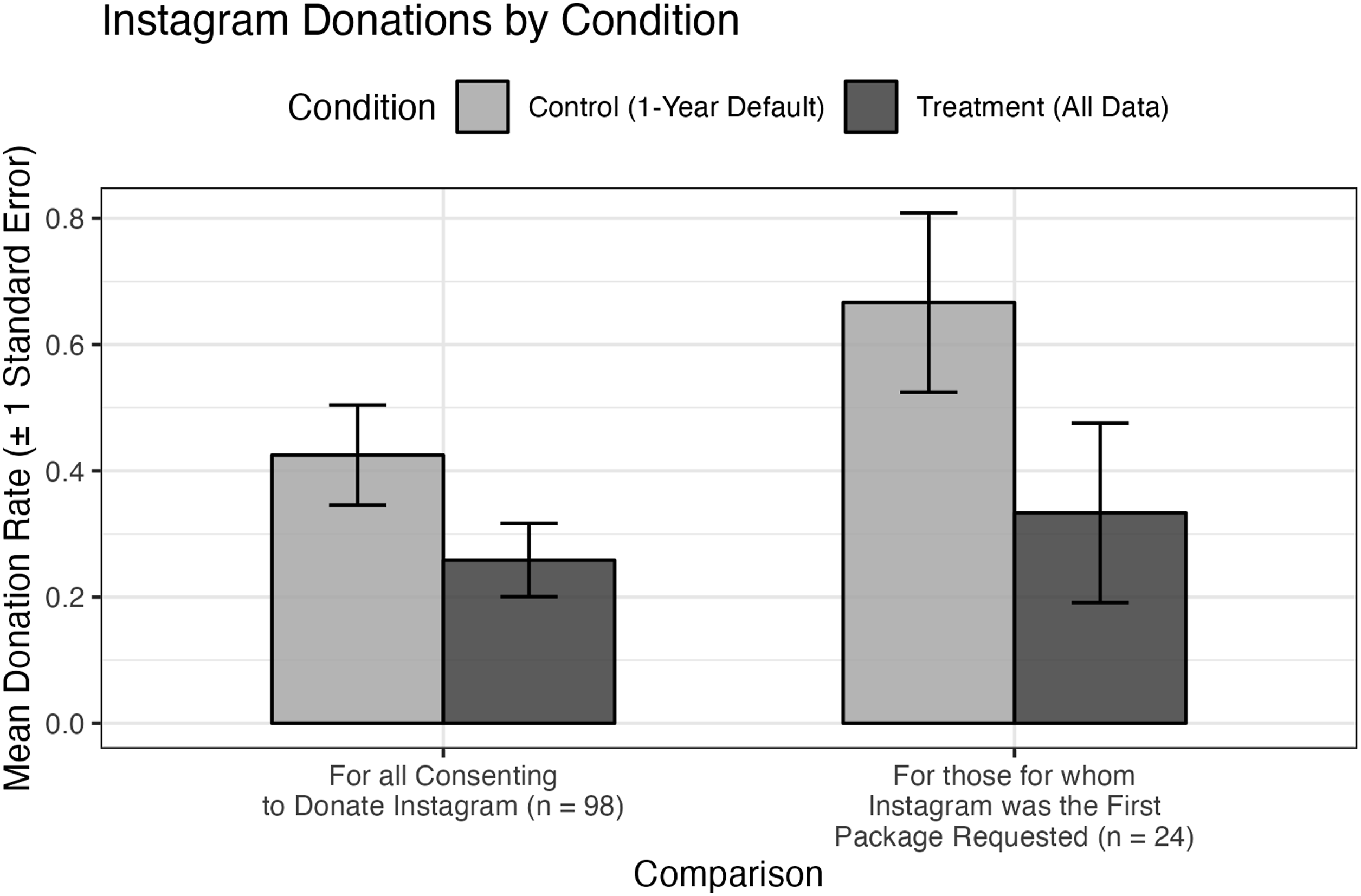

Figure 2 shows that, overall, 43% of participants in the control group who consented to donate their Instagram data successfully completed the donation, compared to 26% in the treatment group. While this difference was not statistically significant (z-test: p = 0.066), the 17 percentage point gap may still be substantively meaningful. Among those assigned to donate Instagram first, 67% of the control group completed the data donation, compared to 33% of the treatment group. This difference was not statistically significant (z-test: p = 0.1103), though the 34 percentage point gap is substantively large.

Figure 2. Instagram data donation rates by experimental condition. Note. Mean Instagram data donation rates (±1 SE) by experimental condition. The left panel shows participants for whom Instagram was the first platform requested (n = 24: n = 12 control, n = 12 treatment); the right panel shows all participants who consented to donate their Instagram data (n = 98: n = 40 control, n = 58 treatment).

In short, to answer RQ1.1 on the effects of DDPs spanning larger time periods, donation rates were lower in the treatment condition in every comparison, with the largest gaps among participants who were asked to donate Facebook or Instagram data first. None of these differences reached conventional statistical significance (p < 0.05), although the Facebook-first comparison came close (p = 0.056). The size of the differences, together with the fact that significance testing is likely limited by the sample size, suggests that this effect is worth further investigation.
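To make the sample-size limitation concrete, the following sketch estimates how many participants per condition a study would need to detect a gap like the one observed for Facebook overall (26% vs. 16%). It uses the standard normal-approximation formula for comparing two independent proportions. The two-sided 5% significance level and 80% power are conventional defaults assumed here, not values taken from the study, and the function name `n_per_group` is hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist


def n_per_group(p1, p2, alpha=0.05, power=0.80):
    """Approximate per-group sample size for a two-sided two-proportion z-test.

    alpha and power are conventional defaults assumed for this sketch.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    # Variability under the null (pooled) and under the alternative.
    term_null = z_alpha * sqrt(2 * p_bar * (1 - p_bar))
    term_alt = z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    return ceil((term_null + term_alt) ** 2 / (p1 - p2) ** 2)


# Donation rates observed for Facebook overall: 26% (control) vs. 16% (treatment).
needed = n_per_group(0.26, 0.16)
print(needed)
```

Under these assumptions, a 10 percentage point gap of this kind would call for roughly 260 consenting participants per condition, compared with the 80 and 91 available in the Facebook analysis.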

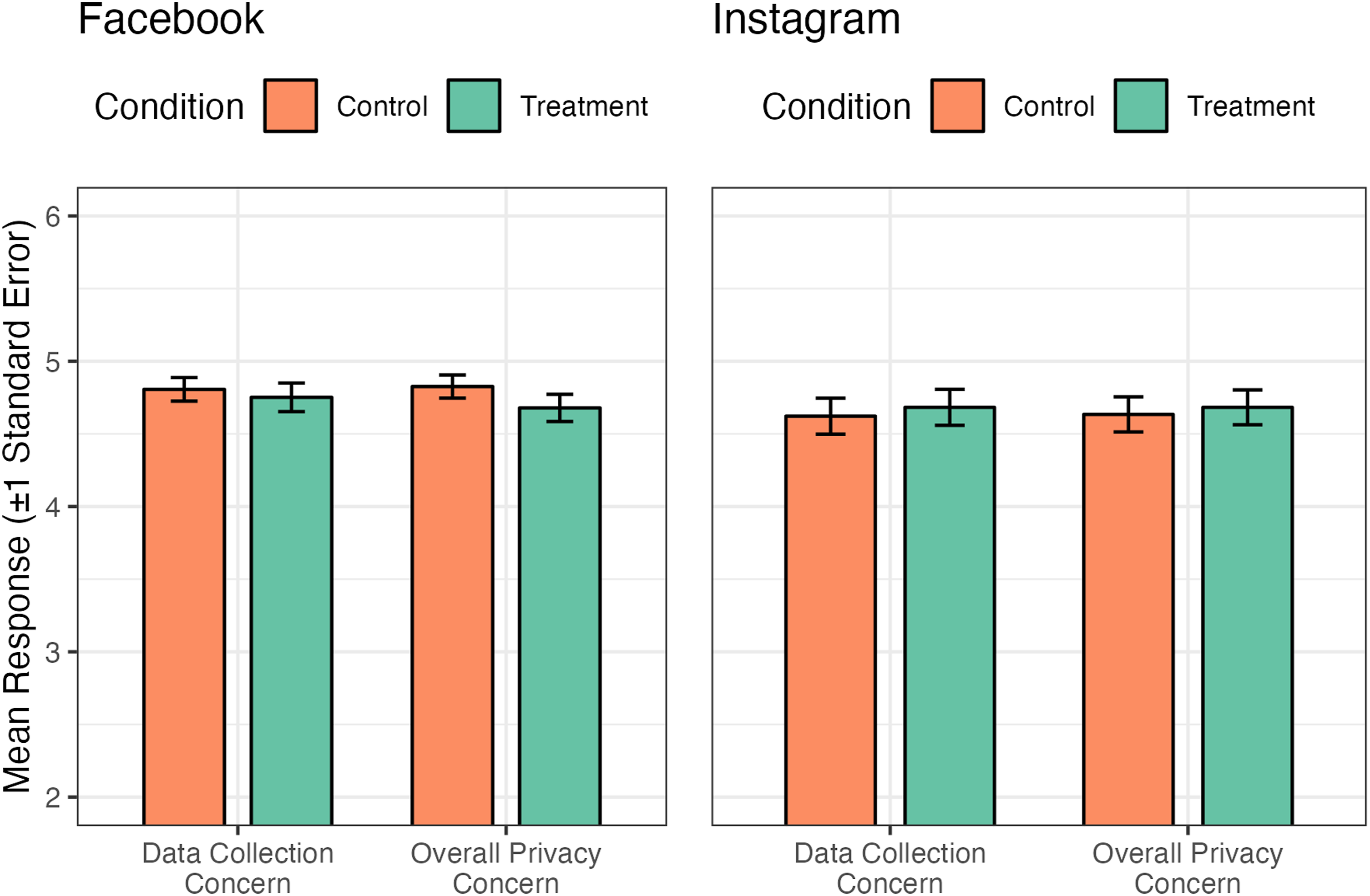

Requesting Packages Covering Longer Time Periods is Not Associated with Higher Privacy Concern

A possible explanation for the lower success rates in donating data when requesting the entire account history is heightened privacy concerns among participants (RQ1.2): being asked for a longer data history may raise privacy concerns, which could in turn lead to higher attrition in the treatment condition. To test this, we compare mean privacy concern scores between the control and treatment groups. For this analysis, we focus on participants who were asked to donate these packages and who provided an answer to the privacy questions: of the 504 participants who were asked to donate their Instagram or Facebook data, 347 completed the privacy questions and were used for this analysis. Figure 3 presents the results. The left set of bars (concerns about websites collecting too much information from users) and the right set (overall privacy concerns online) show mean privacy concern scores for each condition across Instagram and Facebook, which were captured on a 7-point scale. For Facebook, the scores are similar between the control and treatment groups: 4.81 and 4.75 for online data collection concerns (p = 0.722), and 4.83 and 4.68 (p = 0.323) for overall privacy concerns. For Instagram, a similar pattern emerges: 4.62 and 4.68 (p = 0.773) for concerns about online data collection, and 4.63 and 4.68 (p = 0.813) for general online privacy concerns. These findings suggest that requesting a larger dataset is not significantly associated with higher levels of privacy concerns, at least as measured by these two variables.

As a criterion validity check for the privacy items, we additionally tested whether privacy concern scores predicted willingness to donate data more generally. Among Facebook platform users who completed the survey (n = 320), higher privacy concern was associated with a lower likelihood of consenting to donate, in the expected direction, for both items (Privacy1: t = −1.93, p = .055, d = −0.29; Privacy2: t = −1.84, p = .067, d = −0.28). Although these effects are marginal, the direction and effect sizes are consistent with prior research and support the items’ suitability. No significant association was observed for Instagram (both ps > .60), likely due to insufficient power given the smaller sample of consenters (n = 32 of 164). Full results are provided in Appendix 11. As analyses were performed with individuals who did and did not consent to donating their data, the sample size for this analysis is much larger, with 323 for Facebook, and 164 for Instagram (please see Appendix 8 for details of the analysis).

Figure 3. Reported participant privacy concerns by platform and experimental condition. Note. Figure 3 displays mean responses (±1 SE) for two privacy concern items, measured on a 7-point scale (1 = no concern at all, 7 = very much concern): (1) “I am concerned about threats to my personal privacy online” (Overall Privacy Concern) and (2) “I am concerned that online companies collect data about me” (Data Collection Concern). The figure is based on all participants who consented to donate their data, regardless of whether they successfully completed the donation (Facebook: n = 320; Instagram: n = 164). A separate analysis restricted to non-donors among consenters yielded very few valid privacy responses (Facebook: n = 17; Instagram: n = 6), as privacy items were measured at the end of the survey and were therefore not reached by many non-completers. Full results are reported in Appendix 8.

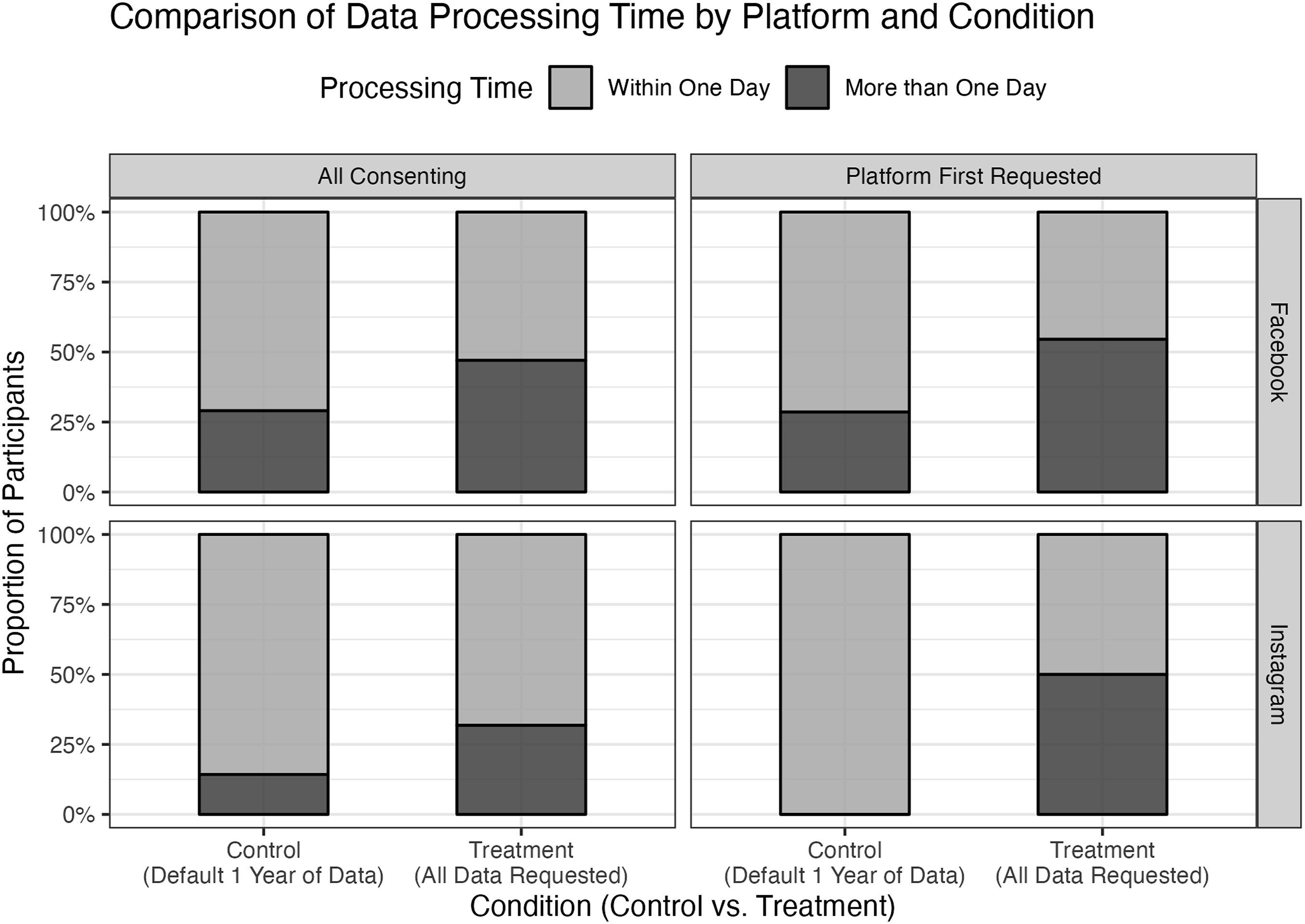

Requesting Packages Covering Longer Time Periods Results in Longer Waiting times

One possible explanation for the lower success rates in donating data when requesting the entire account history is the increased processing time required by the platform. We investigate whether requesting data packages covering longer time periods leads to longer waiting periods (RQ1.3). This could lead to higher attrition rates as participants drop out of the study while waiting for these DDPs.

This suggestion is tested in Figure 4. Participants were asked to report how much time elapsed before they received a notification that their data package was ready. Responses were categorized based on whether the data was returned within a day or took longer. However, several respondents did not provide an answer for this question, further reducing the sample for analysis.

Figure 4. Comparison of data processing time by platform and experimental condition.

The upper row of Figure 4 focuses on Facebook data. Among the 31 participants in the control group who reported how long it took to receive the data package, 9 (29%) reported waiting more than a day; among the 34 participants in the treatment group, 16 (47%) reported waiting more than a day. This difference was not statistically significant (z-test: p = 0.0678). When considering only participants who were first asked to donate Facebook data, 2 of 7 participants (29%) in the control group reported waiting more than a day, compared to 6 out of 11 participants (55%) in the treatment group. This difference was not statistically significant (z-test: p = 0.14). However, these figures likely underestimate the actual delay. The 55% represents only those who waited longer than a day and still completed the donation process. Many participants who experienced longer delays may have abandoned the process entirely, meaning their responses were never recorded.

The bottom panel of Figure 4 presents the same analysis for Instagram data. Among 21 participants in the control condition, 3 (14%) reported waiting more than a day for their data. This percentage was higher in the treatment condition, where 7 out of 22 participants (32%) experienced delays of more than a day; this difference was not statistically significant (z-test: p = 0.0869). Restricting the analysis to participants who were first asked to donate Instagram data, none of the 10 control group participants waited longer than a day, whereas in the treatment group, 5 out of 10 (50%) waited longer than a day. This difference was statistically significant (z-test: p = 0.007). Nevertheless, due to the limited sample size, it is likely that these tests do not have the power needed to detect statistically significant effects. Statistical significance (and the lack of it) should therefore be interpreted with caution. All details of these comparisons can be found in Appendix 7. Robustness tests using the original 4-category response scale are reported in Appendix 2; results remain largely the same.

Effects on Data Received

Next, we turn to the effects of requesting longer-spanning social media data packages on the quantity and quality of the data being received. Specifically, we examine how being asked to request the “all time” version of the package influences (1) the amount of data received (RQ2.2); (2) what DDP data points are affected (RQ2.1); and (3) the concepts that can be measured within those DDPs (RQ2.3).

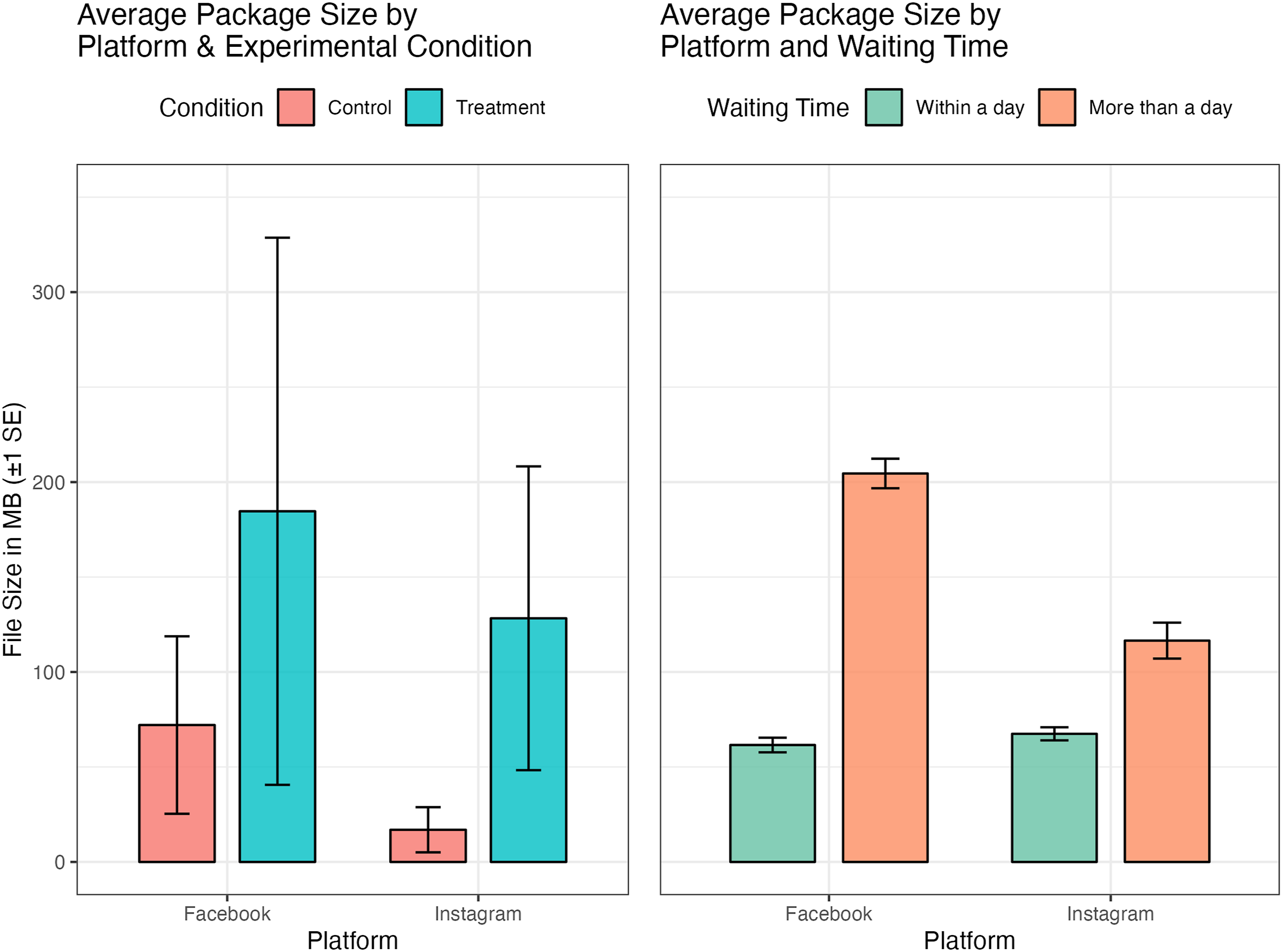

Requesting Packages Covering Longer Time Periods Results in Larger Data Packages

One potential barrier to successful data donation is the size of the requested data package (RQ2.2). If requesting a longer account history results in significantly larger files, and if larger files take longer to be delivered by the platform, this could explain higher attrition rates in the treatment condition. We test these two claims: (1) whether requesting more data results in larger data packages and (2) whether larger packages take longer to be delivered. Figure 5 presents evidence consistent with both claims. The left panel illustrates the average package size (in MB) for Facebook and Instagram data across experimental conditions (control vs. treatment; see Appendix 5 for distribution of file sizes). Participants in the treatment condition, who were asked to donate their entire account history, received larger data packages compared to those in the control condition, who were asked to donate only a 1-year history. This pattern holds for both Facebook (184 MB vs 72 MB, p = 0.2304) and Instagram (128 MB vs 17 MB, p = 0.021), although only the Instagram difference is statistically significant; Facebook data packages show a wider range of variability in size. In a way, this can be interpreted as a manipulation check, showing that asking participants to request data for a more expansive period of time results in more data.

Figure 5. Data package size by platform, experimental condition, and processing time.

The right panel of Figure 5 further examines whether larger data packages are associated with longer processing times. Here, package sizes are categorized based on whether they were returned within a day or took longer. The results point to a relationship: packages that took longer than a day to be processed were, on average, larger. This pattern is particularly pronounced for Facebook, where data packages that took longer than a day were, on average, substantially larger (206 MB) than those received within a day (62 MB). A similar, though less extreme, pattern is observed for Instagram (116 MB vs 67 MB). While these differences are not statistically significant (p = 0.077 and p = 0.260, respectively), the size difference is substantial and warrants further investigation.

Taken together, these findings suggest that requesting a longer data history results in larger data packages and that larger packages, in turn, take longer to be processed and delivered.

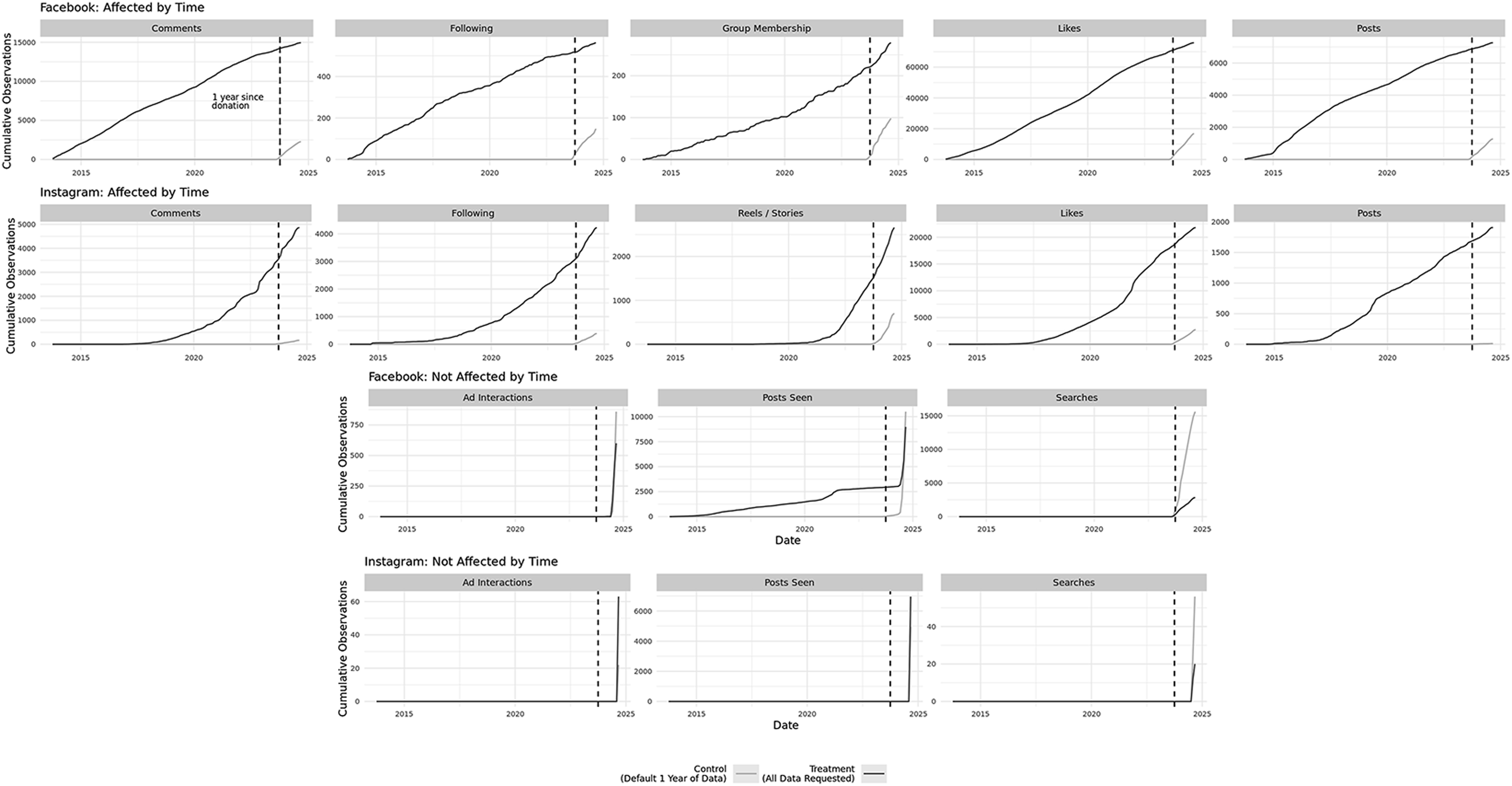

Different Time Period Requests Affect the Data Received

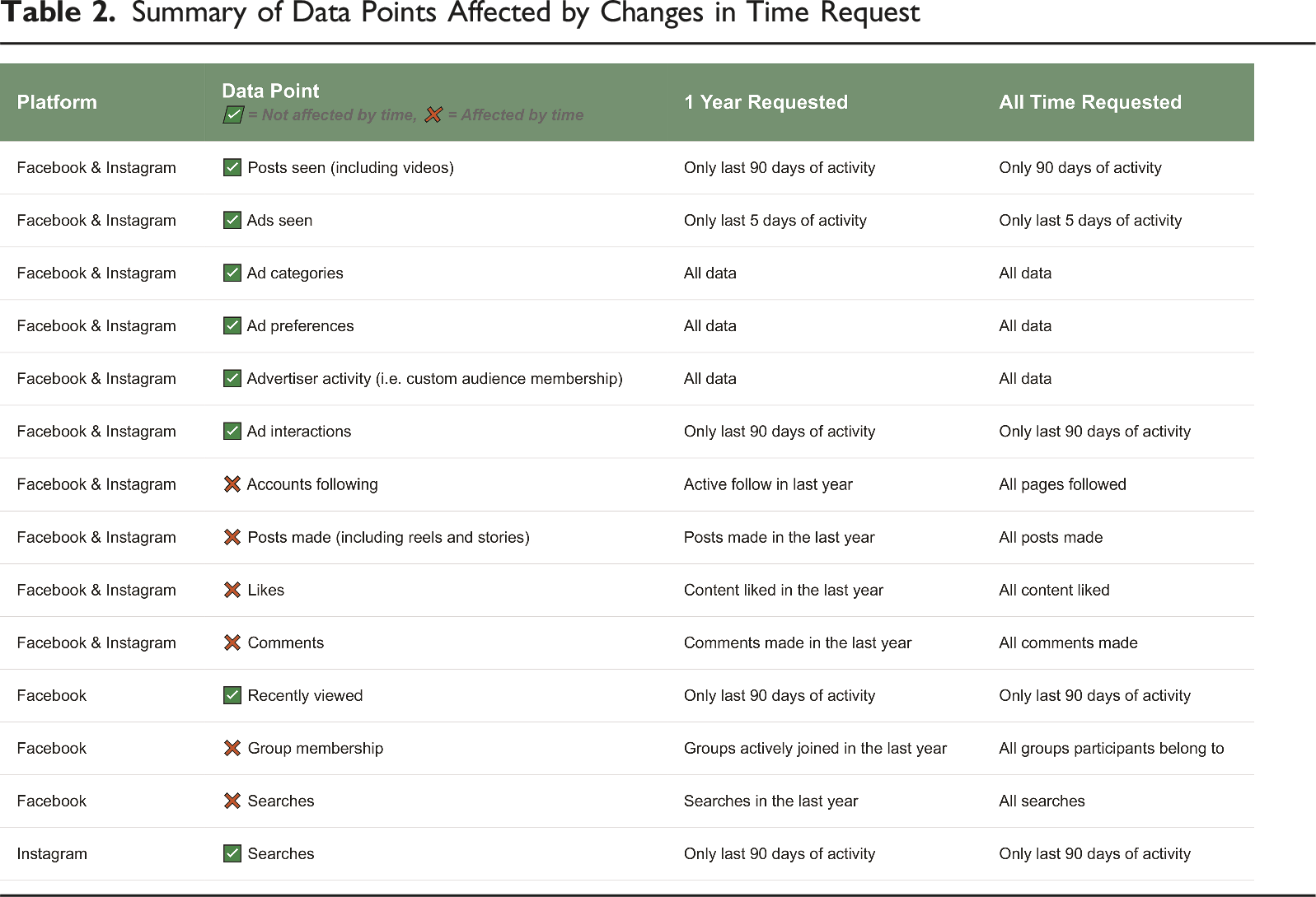

Figure 6 visualizes the cumulative number of observations retrieved from Facebook and Instagram in the treatment condition (requesting “all time” data) versus the control condition (the default 1-year timespan). Table 2 summarizes the features that show sensitivity to the temporal cut-off.

Figure 6. Visual overview of data points affected by changes in time request.

Table 2. Summary of data points affected by changes in time request.

In line with RQ2.1, we observe that several platform features are clearly affected by the time limitation. On Facebook, variables such as accounts followed, group comments and posts, group memberships, likes, and posts return substantially more observations when “all time” data is requested. These differences are substantial: for example, for comments on Instagram, there were about 2000% (20 times) more observations collected in the treatment condition. These data points are therefore sensitive to the temporal scope of the DDP. In contrast, variables such as ad interactions, recently viewed posts, and searches appear to be invariant to the time period requested, yielding the same number of observations regardless of whether the user donates 1 year or their entire history.

Crucially, this sensitivity is not just quantitative but conceptual (RQ2.3). For features like pages followed or group memberships, platforms treat these as time-stamped events, not as static states. For instance, when researchers request only the past year of data, they receive a list of pages or groups the user has followed or joined within that year, rather than a current snapshot of the user’s affiliations. This distinction means that what is often treated in research as a stable indicator of identity or interest is, in fact, measuring recent changes to identity-related behaviors, highlighting a key conceptual shift based on temporal scope.
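The conceptual shift described above can be illustrated with a small sketch. The follow events below are invented for illustration and are not taken from any real DDP; the function name `pages_in_window` and the 365-day cutoff are likewise assumptions, and the sketch ignores unfollow events. It shows how a 1-year request returns only pages followed within the window, even though the older affiliations may still be current.

```python
from datetime import date, timedelta

# Hypothetical follow events as (page, date followed); not from a real DDP.
follow_events = [
    ("Local News", date(2015, 3, 2)),
    ("Party A", date(2018, 9, 14)),
    ("Cooking Tips", date(2023, 11, 30)),
    ("Running Club", date(2024, 6, 1)),
]


def pages_in_window(events, request_date, days=None):
    """Return pages whose follow event falls inside the requested window.

    days=None mimics an "all time" request; days=365 mimics a 1-year request.
    """
    if days is None:
        return {page for page, _ in events}
    cutoff = request_date - timedelta(days=days)
    return {page for page, followed_on in events if followed_on >= cutoff}


request_date = date(2024, 10, 1)
all_time = pages_in_window(follow_events, request_date)
one_year = pages_in_window(follow_events, request_date, days=365)

print(sorted(all_time))             # every page ever followed
print(sorted(one_year))             # only pages followed in the last year
print(sorted(all_time - one_year))  # affiliations the 1-year request misses
```

In this toy example, a researcher treating the 1-year list as the user's set of affiliations would miss the two oldest pages, which is exactly the kind of construct drift the paragraph above warns about.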

On Instagram, a similar pattern emerges. Features affected by the time limit include pages followed, liked comments, liked posts and stories, post comments, posts, reels, and stories. Conversely, features such as account searches, ads clicked, ads and posts viewed, videos watched, and keyword searches appear temporally stable, returning the same data regardless of whether the timespan is 1 year or all time.

These results speak directly to RQ2.2 as well: requesting DDPs with a longer temporal span generally yields higher data volumes. However, this increase is not universal across features, underlining the importance of understanding platform-specific data retention and structuring practices.

In sum, the temporal scope of a DDP request substantially impacts both the volume of data researchers receive and the conceptual interpretation of key variables. Researchers designing DDP-based studies must carefully consider not just whether they are getting more data, but whether they are getting the right kind of data to capture the constructs they aim to measure.

Discussion

Digital trace data donation offers researchers a unique opportunity to study human behavior and social processes in a naturalistic, unobtrusive, and temporally rich manner. Compared to traditional survey methods, such data can mitigate issues like recall error and social desirability bias, while also providing behavioral measures that are difficult to capture through self-report. With this promise comes a temptation: to request the most comprehensive data possible. Longer time spans, in particular, allow for the examination of behavioral dynamics over time, including responses to exogenous shocks such as election cycles, pandemics, or media controversies. Yet, our findings show that requesting more data is not without its costs—both in terms of participant burden and the overall feasibility of data donation studies. In this paper, we examine these tensions by nesting an experiment in a data donation study asking participants to share their Facebook and Instagram data. Participants in the treatment condition were asked to request all the data available for their accounts, while those in the control condition were asked to donate the default 1-year of data.

A central finding from our experimental design is that requests for longer time spans (i.e., full account history data) reduce participant compliance, with donation rates dropping by 10 to 34 percentage points depending on the platform and analysis. Interestingly, these effects were stronger when participants were sharing their data for the first time instead of for the second or third time. This suggests that difficulties in donating data are compounded when participants are unfamiliar with the process (a finding initial research is confirming; Wedel & Ohme, 2025). Contrary to what might be expected, the identified drop in compliance does not appear to be associated with heightened privacy concerns. Participants who donated the data did not systematically express greater privacy concerns when asked to share their “all time” data compared to donating only the past year. Instead, the issue appears more procedural: larger packages tend to take longer for platforms like Facebook and Instagram to process and deliver. These delays, often lasting several days, introduce critical points of attrition within the already demanding multi-step data donation process. It is important to note, however, that privacy concerns were measured after the donation task, possibly missing participants who attrited earlier due to high concerns; future studies should assess privacy prior to donation to separate attitudinal from procedural barriers. Nonetheless, as a partial validity check, we examined whether privacy concern scores predicted consent to donate among platform users who completed the survey, and found the expected negative associations for Facebook (see Appendix 11).

The methodological implications extend beyond participation rates. Lower compliance in the “all time” condition suggests a risk of systematic non-donation bias. That is, individuals who complete the data donation process under more demanding conditions may differ meaningfully from those who drop out. This has been observed in related domains: for example, Keusch, Pankowska, et al. (2024) found that older adults who were healthier and more mobile were more likely to donate physical activity data. Similarly, in our context, it is plausible that only the most digitally literate or motivated participants endure the procedural delays of large-package donations.

This finding has clear implications for the design of data donation studies and other participant-based digital trace methodologies (Adam et al., 2024; de León et al., 2024; Gil-López et al., 2023). If requesting longer time periods results in increased dropout simply because participants lose motivation or forget to return to the task, then interventions aimed at reducing turnaround times or keeping participants engaged during waiting periods could potentially improve compliance. Still, the fact that platform-side latency alone can shape the representativeness of donated data raises important methodological concerns, especially since researchers often have limited control over these platform-driven timelines. In fact, researchers have suggested that platforms potentially create obstacles throughout the data donation process (Valkenburg et al., 2025; Hase et al., 2024), including failing to provide “information on when and how subjects can access their data” meaning that “neither platform users nor researchers can estimate when DDPs will be ready” (Hase et al., 2024), with questions being raised as to their compliance with data-sharing obligations (Janssen et al., 2025).

Although requesting shorter time periods improves compliance and lowers participant burden, it comes at a cost. The data packages from participants in the control condition (1-year request) contained, as one could expect, only a fraction of the observations: in the most extreme case, the treatment condition was associated with a 2000% increase for the Instagram comments datapoint. This introduces risks for measurement validity, especially for researchers interested in long-term behavioral trends, baseline behavior, or rare events that may not be observable within a 1-year snapshot. This trade-off is not unique to our study. A similar tension arises in other behavioral data contexts. For instance, in app-based and wearable-based data collection, researchers frequently confront the question of how many diary entries or days of observation are enough to ensure reliable insights (e.g., Remmerswaal et al., 2025). Data donation research must now grapple with a related design dilemma: how much behavioral data is “enough,” and at what cost?

Beyond questions of participant compliance and differences in volume of data received, our findings also point to important, and underexplored, implications for measurement validity in data donation studies. Specifically, the temporal span of requested DDPs can significantly influence not only the quantity but also the quality and interpretability of the data researchers receive. First, while researchers may assume that requesting “all time” data will yield a complete historical archive, platform-side data retention policies can limit what is actually included. Even when users request data covering their full account history, platforms may only return a subset, leading to systematic and often opaque missingness (Hase et al., 2024). This was observed in this study, with numerous data points (such as posts seen, ad interactions, searches) only providing a small window of data, regardless of whether 1 year or full data was donated. In this case, it makes little difference if researchers request only a year or the full repository of data—they will only receive a couple of days of activity. Nevertheless, it conceals a more nefarious point: platforms may either not be retaining exposure-related activities beyond a very restricted time period, or may not be living up to their data-sharing responsibilities.

Most critically, the temporal span can shift the meaning of key concepts. For instance, our study shows that a list of followed accounts or liked pages retrieved from a 1-year DDP does not reflect a participant’s current affiliations, but rather only those followed within that year—effectively transforming what has commonly been used as a “static” measure (e.g., Bakshy et al., 2015) into a record of recent behavior. This disconnect risks misinterpretation if researchers fail to consider how the scope of the data request redefines the concept being measured. These findings underscore the need for researchers to critically assess not only what data they request, but what that data actually represents, and how its structure and coverage may shape the validity of their inferences.

Researchers may consider tailoring data requests to participant subgroups (de León et al., 2025). For example, shorter time spans may suffice for exploratory or low-risk research with vulnerable populations, while longer spans might be justified for studying phenomena that unfold over time, provided that researchers invest in strategies to mitigate the increased burden, such as reminder systems, progress tracking, or staged requests. These tailored approaches align with similar practices in experience sampling and mobile sensing studies, where the intensity of data collection is often calibrated to participant capacity and the study goals.

It is relevant to note, however, that this study was not pre-registered, and as such, its exploratory findings should be interpreted with caution. The measurement of privacy concerns followed earlier research but was focused on a broader set of concerns by participants, covering not only privacy concerns raised by the data donation process. Participants with high privacy concerns might have attrited disproportionately before completing the donation task. The findings should, therefore, be replicated by future research with concerns about privacy measured prior to donation. Additionally, our sample was limited to participants from a single platform-facilitated data donation interface, and platform-specific dynamics (e.g., Facebook’s data delivery time or interface design) may not generalize to other platforms or donation tools. Future work could benefit from cross-platform replications and more direct measurement of participant motivations and perceived burden at each stage of the data donation process. Furthermore, several analyses are based on a limited sample of 36 Facebook and 33 Instagram DDPs; this raises questions of statistical power, as small effects would be impossible to detect through null hypothesis significance testing. Finally, while the TE framework is used as an organizing lens, the empirical scope of this study is limited to specific components: participation-related representation errors and measurement validity. Other sources of representation error identified in the TE framework—such as frame error, sampling error, or adjustment error—are beyond the scope of this study and would benefit from targeted investigation in future work.

Further research might also explore how different types of participants (e.g., younger versus older users, political versus apolitical individuals) respond to varying data request demands, or how interface design changes (e.g., progress bars, deadline reminders, or just-in-time nudges) might improve retention during platform wait times. Additionally, since this study only examined two timespan conditions (1 year versus all-time), it would be interesting to explore to what extent the found differences extrapolate to other time span options (such as 1 week or 1 month), since this could further alter study designs. To illustrate: Would a data request with a time span of a week result in a DDP that is immediately ready for download? And what concepts can still meaningfully be measured through such DDP? Researchers regularly make use of study designs where ESM methods are employed for a number of days or weeks, followed by a data donation at the end of the study. If in such a case a DDP with only data of last week or last month suffices, we now know that altering the request settings as such can substantively improve the response rates for that data donation request.

These limitations notwithstanding, this study contributes to a growing methodological literature that examines the conditions under which digital trace data can be ethically and effectively collected via user-centered means, as well as broader discussions of the tension between data quality and participant burden. While survey researchers have long studied the impact of questionnaire length on response rates and sample quality (Bradburn, 1978; Tourangeau et al., 2000), relatively little is known about the digital equivalent: how the scope and complexity of data requests affect who donates behavioral data and what data gets donated. Our work begins to fill this gap by empirically demonstrating how even subtle design decisions, such as the time span of a data download request, can have cascading effects on participation and data quality.

Supplemental Material

Supplemental Material for How Much Data Should I Request? Balancing Richness and Compliance in Digital Trace Data Donations by Ernesto de León, Laura Boeschoten, Fabio Votta, Joris Mulder, Bella Struminskaya, Daniel Oberski, Theo Araujo, and Claes de Vreese in Social Science Computer Review

Footnotes

Funding

The work was supported by the Sociale en Geesteswetenschappen, NWO, through the Public Values in the Algorithmic Society (ALGOSOC) grant 024.005.017.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.
