Abstract

Data donation, a research approach in which users voluntarily contribute their personal digital data, offers a solution to the limitations of traditional self-reporting and digital trace methods by enabling the collection of comprehensive, ethically sourced usage information across multiple devices and digital interfaces. However, this promising method remains underutilized due to low participation rates. Therefore, this review pursued two integrated aims. First, to synthesize evidence on factors that influence three forms of participation: hypothetical willingness (stated intention in imagined scenarios), actual willingness (consent to donate when asked), and successful completion (following through with the full donation process). Second, to appraise existing workflows, frameworks, and methodological tools and integrate this appraisal with the factor synthesis to derive best practices for improving participation. We synthesized 35 articles, of which 14 examined factors influencing participation and 21 provided methodological guidance. Five key factors were identified: sensitivity of the data, privacy concerns, perceived autonomy and control over the donation process, complexity of the process, and participant characteristics. To overcome barriers related to these factors, we recommend maximizing participant privacy through robust data donation frameworks, enhancing transparency and user-friendliness, empowering participants by increasing autonomy and control over their data, and proactively addressing potential selection biases.

In the field of media research, self-reported measures have long served as the dominant method for assessing individuals’ media usage patterns (de Vreese & Neijens, 2016). These measures typically require participants to recall and report detailed information on usage frequency, duration, sources, and content consumed (Jones-Jang et al., 2020). However, relying on participants’ subjective recall introduces challenges in accurately capturing media usage, as it requires them to correctly recall from memory and provide unbiased estimates of their usage (de Vreese & Neijens, 2016; Ohme et al., 2021). In recent years, these challenges have been further compounded by the design features of mobile devices and applications, such as constant push notifications, which lead to subconscious and habitual phone pickups, making it even more difficult for participants to recall and report their media usage accurately (Ohme et al., 2021; Siebers et al., 2024).

The limitations of self-reported data have prompted researchers to adopt alternative methods for collecting media usage information. One such advancement is the use of digital trace data, which devices and platforms passively record during user activity. Compared to self-reported measures, digital trace data provide a more direct and continuous record of media usage, thus reducing the dependence on participants’ memory and subjective reporting (Ságvári et al., 2021). To harness the potential of digital trace data, researchers have primarily relied on two well-established data collection methods: application programming interfaces (APIs) and tracking (Ohme et al., 2021, 2023; Wu-Ouyang & Chan, 2022). APIs are platform-provided tools that enable researchers to access specific types of data in a structured, machine-readable format (Ohme et al., 2023). Tracking entails installing tracking software on users’ personal devices or employing already integrated tracking features, such as iOS Battery, iOS Screen Time, and Android’s Digital Wellbeing, which constantly capture users’ media usage (Baumgartner et al., 2023; Ohme et al., 2021; Wu-Ouyang & Chan, 2022).

While APIs and tracking are promising methods to collect digital trace data, they both have their shortcomings. APIs focus on retrieving aggregated, content-level data rather than individual-level media usage, and these data therefore cannot be linked to specific users (Ohme et al., 2023). Moreover, researchers are entirely dependent on the platforms, which impose restrictions on both the types and the volumes of data that can be accessed (Ohme et al., 2023). For instance, Facebook only allows researchers to collect public content, such as videos or posts set as publicly accessible that appear on users’ recommendation page, while private content and user behaviors remain inaccessible under the guise of privacy concerns. Twitter/X went even further by completely shutting down free access to its API in February 2023 (Coalition for Independent Technology Research, 2023).

Tracking methods provide more control for the researcher and allow for the analysis of data at the individual level. However, tracking data is often limited to generic metrics such as usage duration, frequency, or time spent on specific devices or platforms. As a result, these methods fall short in addressing research questions related to specific activities or content users are exposed to (Ohme et al., 2021). To overcome these limitations, researchers have developed advanced tracking tools that not only capture usage metrics, but also specific activities and the content users engage with. One such innovation is the Screenomics tool, developed by Reeves et al. (2020). This tool automatically takes screenshots at intervals determined by researchers, as frequently as every 5 seconds. Building on this concept, Yee et al. (2023) introduced ScreenLife Capture, an open-source Android-based tool that provides a more user-friendly interface and requires minimal programming skills, making advanced tracking methods more widely available to the research community.

However, tracking methods—both standard and advanced—come with notable financial, technical, and practical challenges. Developing or purchasing tracking software often requires substantial investments of time and resources. Moreover, to collect unbiased data on users’ online behavior, such software would ideally need to be installed on all devices they use to access a platform or service. In practice, however, tracking software is frequently installed on only a subset of devices (e.g., Barthel et al., 2020; Bosch et al., 2024). Furthermore, tracking tools often rely on a single type of browser extension or operating system—typically Android-based and not compatible with iOS devices (Bosch et al., 2024). These restrictions may result in incomplete data capture and systematic bias in media exposure estimates. Beyond these implementation constraints, analyzing trace data requires advanced analytical skills and specialized tools, adding further complexity to the research process (Sultan et al., 2023).

In addition to these financial, technical, and practical challenges, recruiting participants for tracking studies can be difficult, as many perceive tracking tools as highly invasive. This concern is especially pronounced with advanced tracking tools that automatically take screenshots every few seconds. These tools can inadvertently record private messages, personal photos, or other sensitive information without offering participants meaningful control over what is collected. The absence of such control, and the possibility of capturing highly sensitive data, raises significant ethical concerns. As a result, these methods are hard to adopt in compliance with stringent privacy and data protection frameworks such as the European General Data Protection Regulation (GDPR) or national or regional privacy laws. Taken together, these challenges highlight the need for alternative methods that strike a more sustainable balance between data accuracy, participant privacy, and practical feasibility.

A Promising Digital Trace Data Collection Method: Data Donation

In response to the challenges posed by tracking methods and APIs—ranging from limitations in capturing comprehensive usage patterns to concerns about privacy and perceived invasiveness—a third digital trace data collection method known as “data donation” has emerged as a compelling alternative. Leveraging regulatory frameworks like the GDPR, which require digital platforms to provide users access to detailed records of their profiles and online activities, researchers can invite users to request their data download packages (DDPs) from platforms and voluntarily donate these for research purposes. Users can typically access their DDPs through in-app tools provided by platforms such as Instagram or TikTok. These DDPs may contain a wide range of granular data types, such as social media posts, direct messages, search history, watch history, likes, and comments. Importantly, the process of data donation empowers users to review and selectively share their data, allowing for a more privacy-conscious and transparent form of data sharing.

Compared to APIs, data donation allows for the retrieval of non-public content with participants’ active consent, ensuring they are fully aware and in control of the information they choose to share. This level of user agency enhances trust and meets the increasing call for participant empowerment in data-driven research. Furthermore, compared to tracking tools, which typically capture only the activity on the specific device on which the software is installed, data donation is inherently more flexible: It accommodates data collection across interfaces and devices and thus offers a more complete depiction of users’ behavioral trace data (Boeschoten et al., 2021; Breuer et al., 2023; Keusch et al., 2024). Additionally, by eliminating the need to install tracking software on participants’ devices, data donation also enhances transparency and reduces perceptions of intrusiveness (Ohme et al., 2023).

Challenges With Data Donation Studies

Despite their advantages, data donation studies are not without challenges. A primary challenge is the relatively low participation rate, with research consistently showing that participation is considerably lower in data donation studies than in self-report studies (Máté et al., 2023; Mulder & de Bruijne, 2019). A complicating factor in understanding this issue is the variation in how participation is conceptualized across studies, ranging from hypothetical willingness (i.e., individuals’ stated intention to donate data in imagined scenarios) and actual willingness to donate data (i.e., participants consenting to donate their data when asked in actual studies), to successful completion of the data donation (i.e., whether individuals follow through with the full donation process). These three forms of participation are conceptually and practically distinct, and each may be shaped by different factors. Yet, a comprehensive overview of the factors that (differentially) influence the different forms of participation is currently lacking. Therefore, the first aim of this review is to identify and synthesize the factors that affect individuals’ participation in data donation studies, across hypothetical willingness, actual willingness, and successful completion.

A second, related challenge is that, as a relatively new method, data donation lacks a clear, mature, and accessible end-to-end procedure, which may underpin low participation (Pfiffner & Friemel, 2023). Although a variety of workflows, frameworks, and practical strategies have been presented in previous studies, these resources have not been systematically integrated into a set of best practices aimed at improving participation. Accordingly, the second aim of this review is to appraise existing workflows, frameworks, and methodological tools, and, by integrating this appraisal with our factor synthesis, to articulate best practices for improving participation in future data donation studies.

Method

Identification Process

This systematic literature review was conducted in accordance with the PRISMA 2020 guidelines (Page et al., 2021). The first author conducted searches in three databases: Web of Science, Google Scholar, and Scopus, complemented with backward citation tracking, using June 2024 as the cutoff. The search strategy combined terms related to data donation and participation willingness. Boolean search strings were constructed using the following keywords: “Data Donation,” “Willingness to donate,” “Willingness to participate,” “Data Download Packages,” and “DDPs.” The search encompassed titles, abstracts, and keywords of articles. As many of the initial results were unrelated to the topic of data donation for assessing individuals’ media usage patterns, focusing instead on other forms of donation such as blood, organ, or charitable donation, the search was refined.
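As a rough illustration of how the keywords listed above could be combined into a Boolean search string, the following Python sketch groups them into two concept blocks (data donation terms and willingness terms), joins the terms within a block with OR, and joins the blocks with AND. The grouping, the helper names `or_block` and `build_query`, and the resulting string are assumptions made for illustration; they are not the exact queries submitted to the databases, and field restrictions (title, abstract, keywords) would still need to be expressed in each database's own syntax.

```python
# Hypothetical grouping of the review's keywords into two concept blocks.
# The split into blocks is an assumption, not the authors' documented query.
DONATION_TERMS = ["Data Donation", "Data Download Packages", "DDPs"]
WILLINGNESS_TERMS = ["Willingness to donate", "Willingness to participate"]


def or_block(terms):
    """Quote each term and join the terms with OR inside parentheses."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def build_query(*blocks):
    """Join the OR-blocks with AND so a record must match every concept."""
    return " AND ".join(or_block(block) for block in blocks)


query = build_query(DONATION_TERMS, WILLINGNESS_TERMS)
print(query)
```

Requiring a match in both blocks is one way to screen out records about blood, organ, or charitable donation, which would rarely co-occur with the data donation terms.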

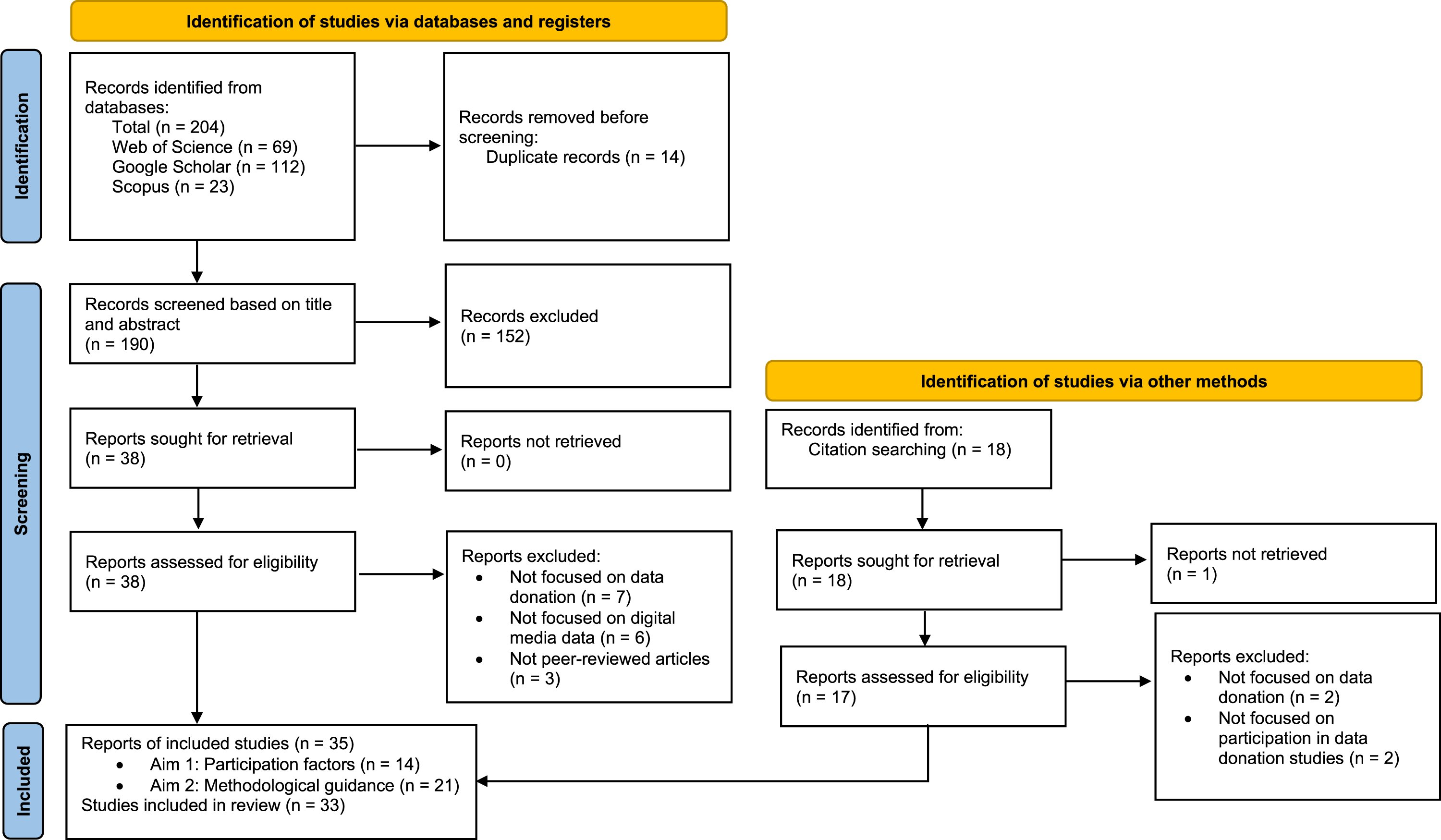

To limit the scope to the social sciences, disciplinary filters available in Web of Science and Scopus were applied. For Google Scholar, where such filters were unavailable, disciplinary relevance was assessed manually. Additional filters restricted the results to English-language publications from 2019 to 2024. This timeframe was selected because the implementation of the GDPR in May 2018 provided users in Europe with the legal right to access their personal data, enabling the emergence of data donation studies. As shown in the PRISMA flowchart presented in Figure 1, the database search yielded 204 records (69 from Web of Science, 112 from Google Scholar, and 23 from Scopus). After removing 14 duplicates, 190 records proceeded to the screening phase.

Figure 1. PRISMA Flowchart Depicting the Study Selection Process

Screening Process

All 190 records identified through the database searches were screened by the first author. Based on their titles, abstracts, and keywords, the first author excluded 152 records that were unrelated to the topic. This step reduced the sample to 38 potentially eligible records. Then, backward citation tracking was conducted on the reference lists of these 38 records, yielding 18 additional potentially eligible records. Of these 18 records, one could not be retrieved. The full texts of the remaining 55 reports were assessed for eligibility. At this step, each article was examined in detail by the full research team to validate the inclusion decision. After discussing the eligibility of each article and a few borderline cases, full agreement was reached on the final sample.

Inclusion Process

The 55 articles that resulted from the screening process were assessed for eligibility according to specific inclusion and exclusion criteria. All articles had to be peer-reviewed, published in a journal or conference proceeding, and address participation in the donation of digital media use data in a social science context, for example, by the sharing of data download packages (DDPs), screenshots, or screen recordings. Studies were excluded if they did not focus on the donation of digital media use data (e.g., biobank sharing), addressed legal or regulatory issues unrelated to participation in data donation studies, or used the term “data donation” to describe passive tracking processes instead of participants’ active engagement in the donation of digital media use data. In addition, we excluded studies published in formats other than peer-reviewed journal articles or conference proceedings (e.g., theses, slide decks, extended abstracts).

To address the first aim of our study, articles had to examine factors influencing participants’ willingness to donate their data and/or successful completion of the data donation. To address the second aim, studies were included that present workflows, frameworks, or other practices aimed at facilitating or enhancing active participation in digital media data donation studies. After applying the inclusion and exclusion criteria, a total of 35 articles were included in the final sample, representing 33 unique studies. Two pairs of articles reported findings from the same study (i.e., Hase & Haim, 2024; Haim et al., 2023; Pilgrim & Bohnet-Joschko, 2022a; Pilgrim & Bohnet-Joschko, 2022b). Apart from peer-reviewed journal articles, the sample also included two peer-reviewed articles published in conference proceedings. Fourteen articles examined factors influencing participation in data donation studies. The remaining 21 articles focused on workflows, frameworks, and practical strategies for data donation.
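The record counts reported across the identification, screening, and inclusion steps can be cross-checked with a few lines of arithmetic. The Python sketch below uses only the figures stated in the text; the variable names are illustrative, and the number of reports excluded at the full-text stage is derived here by subtraction rather than reported above.

```python
# Counts as reported in the Method section.
identified = {"Web of Science": 69, "Google Scholar": 112, "Scopus": 23}
duplicates_removed = 14
excluded_at_screening = 152
backward_citation_records = 18
not_retrieved = 1
included_articles = 35
articles_sharing_a_study = 2  # two pairs of articles each report one study
factor_articles = 14
methodological_articles = 21

total_identified = sum(identified.values())
screened = total_identified - duplicates_removed
eligible_after_screening = screened - excluded_at_screening
full_text_assessed = (
    eligible_after_screening + backward_citation_records - not_retrieved
)
# Derived by subtraction; this figure is not stated in the text.
excluded_at_full_text = full_text_assessed - included_articles
unique_studies = included_articles - articles_sharing_a_study

# Each check mirrors a number given in the Method section.
assert total_identified == 204
assert screened == 190
assert eligible_after_screening == 38
assert full_text_assessed == 55
assert unique_studies == 33
assert factor_articles + methodological_articles == included_articles

print(f"Full texts assessed: {full_text_assessed}")
print(f"Excluded at full-text stage (derived): {excluded_at_full_text}")
```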

Results

To address our first aim, synthesizing the factors influencing individuals’ participation in digital media data donation studies, we used an inductive thematic synthesis approach, drawing on established principles of qualitative research (Braun & Clarke, 2006). This approach was chosen because it allows for a flexible, bottom-up analysis suited to the novel and interdisciplinary nature of the data donation literature. Rather than applying a predefined coding scheme, themes were generated from the findings and discussions reported in the reviewed articles. Summaries of the articles are provided in Appendix Table A1.

After an initial round of independent reading and open coding by the first author, emerging themes were discussed and refined in collaboration with the full research team. We developed and agreed upon a final thematic structure comprising five overarching themes that shape individuals’ participation in data donation studies, whether in terms of hypothetical willingness, actual willingness, or successful completion: (1) sensitivity of the requested data, (2) trust and privacy concerns, (3) participants’ autonomy, (4) the complexity of the donation process, and (5) individual-related factors. In the following sections, we elaborate on each of these in detail.

Sensitivity of the Requested Data

The sensitivity of data requested by data donation studies, especially data obtained through DDPs, has been widely discussed in the literature (e.g., Boeschoten, Ausloos, et al., 2022; Carrière et al., 2025). Four studies examined in detail how this factor affects individuals’ hypothetical willingness to donate their data (Kmetty et al., 2024; Kraakman et al., 2023; Máté et al., 2023; Pfiffner & Friemel, 2023). Three of these studies found that perceptions of lower data sensitivity were associated with greater (hypothetical) willingness (Kmetty et al., 2024; Kraakman et al., 2023; Pfiffner & Friemel, 2023), whereas Máté et al. (2023) found no evidence to support an association between the requested data type and hypothetical willingness. Consistent with the predominant pattern for hypothetical willingness, Silber et al. (2022) reported that lower perceived data sensitivity led to higher rates of successful completion.

The perceived sensitivity of data depends on (a) the broader data category, (b) the specific platform data are requested from, and (c) the type of information being requested. Concerning the data category, social media platforms in particular are often viewed as sources of highly sensitive data due to their comprehensive coverage of personal details regarding social connections, private interactions, and individual preferences (Pfiffner & Friemel, 2023; van Driel et al., 2022). Consequently, participants showed lower levels of (hypothetical) willingness to donate their social media data (Pfiffner & Friemel, 2023).

Regarding (b) the specific platform involved and (c) the type of information requested, some platforms are perceived as more sensitive than others. For example, both in hypothetical settings and in response to actual requests, individuals tended to be more inclined to donate data concerning their activities on YouTube or Spotify than on Twitter/X, Facebook, Instagram, or Google (Pfiffner & Friemel, 2023; Silber et al., 2022). Participants demonstrated the least hypothetical willingness to donate data from Facebook (Pfiffner & Friemel, 2023), likely because Facebook is primarily used for maintaining strong-tie relationships and therefore contains more sensitive data than platforms like Instagram and YouTube. Similarly, Kraakman et al. (2023) found that participants showed more hypothetical willingness to share sleep duration data than calorie intake, indicating that even within the domain of one type of data, perceived sensitivity shapes participation. Work on successful completion of the data donation process (Silber et al., 2022) also supports this finding: Participants were more likely to successfully complete the data donation process when the data requested were from platforms like Facebook or Twitter/X, compared to data requests containing physical information and health states.

Trust and Privacy Concerns

Establishing and maintaining trust in researchers is a key factor in encouraging participation in data donation studies, particularly given the private and sensitive nature of the data involved. For example, Kmetty et al. (2024) found that participants who reported higher privacy concerns showed significantly lower hypothetical willingness to donate their data for research purposes. Similarly, participants who expressed fewer privacy concerns and higher trust showed a higher actual willingness to donate (Hase & Haim, 2024), and were more likely to successfully complete the data donation process (Hase & Haim, 2024; Ohme et al., 2021). Moreover, Keusch et al. (2024) found that among participants who consented but did not complete the data donation process, 24% cited privacy concerns and 20% cited fear of data misuse as their reasons for opting out.

One possible explanation for the privacy concerns and low trust toward data donation may be that this approach is relatively unfamiliar to most individuals. In a survey conducted by Pfiffner and Friemel (2023), the majority of participants had never heard of “data donation” before. When the concept of data donation and its relevance were explained (Pfiffner & Friemel, 2023) and its purpose for academic research was emphasized (Skatova & Goulding, 2019), participants’ (hypothetical) willingness to donate was higher. In further support of this notion, among those who understood the concept and opted to donate, non-completion was primarily due to technical issues rather than privacy concerns (Keusch et al., 2024).

Another explanation relates to the extent and kind of information disclosure in the study description (Kmetty et al., 2024). For example, when presented with various hypothetical scenarios, participants regarded transparency in data processing and assurances that their data would not be sold to third parties as crucial factors in their decision to participate (Heidel et al., 2021). However, information disclosure may have its limits, as another study showed that extensive detail and warnings about requested data seem to lower individuals’ hypothetical willingness to participate (Kmetty et al., 2024). Together, these findings suggest that participants are more willing to participate in data donation studies when they trust the researchers and have a clear understanding of data donation and how their data will be protected.

Autonomy and Control Over the Data Donation Process

In addition to data sensitivity, trust, and privacy concerns, participants’ perceived control seems to be another key factor influencing their decision to donate. Two studies have reported that participants’ hypothetical willingness to donate data depends on the perceived control they have over their data and the donation process (Máté et al., 2023; Pfiffner & Friemel, 2023). For example, in hypothetical scenarios, participants who were given the option to temporarily pause participation exhibited greater willingness to engage compared to those who were unable to pause the data collection (Máté et al., 2023). Furthermore, the ability to review the data in the DDP(s) and decide which data to share also increased hypothetical willingness (Máté et al., 2023). These findings collectively suggest that perceived control supports individuals’ participation during the earlier stages of the data donation process.

Complexity of the Data Donation Process

Across the six studies that considered the complexity of the donation process, a clear pattern emerges: Perceived complexity negatively impacts all forms of participation. The more burdensome participants perceived the tasks, the lower their (hypothetical or actual) willingness to participate (Kmetty et al., 2024; Kraakman et al., 2023; Welbers et al., 2024). Among those who did consent, higher complexity led to increased dropout rates, thereby decreasing successful completion (Hase & Haim, 2024; Keusch et al., 2024; Silber et al., 2022; Welbers et al., 2024).

Silber et al. (2022) explored the impact of task complexity on successful completion across different platforms and procedural conditions. They found that when participants were asked to download data from the application and upload it through a specific web tool, only 1.1% of Twitter/X users successfully completed the process. This completion rate was significantly lower than the 5.4% who completed the simpler task of providing their user handle (Silber et al., 2022). The rate of successful completion dropped even further to 0.9% when users were asked to take extra steps to identify and upload specific files from a health app (Silber et al., 2022).

Participants’ perception of task complexity is influenced not only by the effort per procedure but also by the number of platforms they are asked to retrieve and donate data from. Participants showed less hypothetical willingness when data was requested from multiple platforms (Kmetty et al., 2024). A similar pattern was found for successful completion rates: When participants could choose the number of platforms, more than half successfully donated data from only one platform, whereas fewer than 10% completed donations for more than two platforms (Hase & Haim, 2024). These findings consistently suggest a preference for donating data from a single platform, as (hypothetical) willingness and completion rates decrease when the number of requested platforms increases.

Individual-Related Factors

Participants differ in their individual characteristics, which need to be considered when examining participation in data donation studies. Factors such as digital savviness, age, gender, educational level, and prosocial motivation appear to shape both (hypothetical and actual) willingness to participate in data donation studies and individuals’ likelihood of successfully completing the donation process.

Digital Savviness

Six studies examined how various aspects of digital savviness (e.g., familiarity with technology, digital skills) affect individuals’ participation (Hase & Haim, 2024; Kmetty et al., 2024; Máté et al., 2023; Ohme et al., 2021; Pfiffner & Friemel, 2023; Silber et al., 2022). In studies examining (hypothetical or actual) willingness, avid users of multiple media platforms generally expressed more favorable attitudes toward donating their data for academic research (Hase & Haim, 2024; Kmetty et al., 2024). Regarding successful completion, participants who donated their data tended to have stronger technical skills, higher technological affinity, and greater algorithmic awareness (Hase & Haim, 2024; Silber et al., 2022), although Ohme et al. (2021) did not find a significant difference in digital savviness between participants who successfully completed the donation process and those who did not.

Differences in smartphone usage patterns further illustrate the role of digital savviness. Using hypothetical scenarios, Máté et al. (2023) found that participants who mainly used their phones for social media and entertainment purposes were more willing to donate data than those who primarily used them for basic functions like calling, texting, and searching for basic information. This indicates that participation is shaped not only by digital skills and familiarity with technology but also by a user’s regular engagement with more interactive or complex digital environments. With greater digital savviness, participants may perceive fewer barriers and see the donation process as more manageable and less intimidating.

Age

Across seven studies, younger participants generally showed higher (hypothetical or actual) willingness and were more likely to complete the data donation process (Kraakman et al., 2023; Máté et al., 2023; Pfiffner & Friemel, 2023; Pilgrim & Bohnet-Joschko, 2022b; Silber et al., 2022; Skatova & Goulding, 2019; Welbers et al., 2024), whereas three studies found no significant age effects on participation (Hswen et al., 2022; Keusch et al., 2024; Ohme et al., 2021). Two features help explain these latter null results. First, Keusch et al. (2024) grouped respondents into broad bands (under 30, 31–50, over 50). Yet, work using narrower age brackets suggests that individuals in their 30s may resemble younger users in participation (e.g., Kraakman et al., 2023). This suggests that the age grouping approach used by Keusch et al. (2024) might have masked more nuanced age-related patterns. Second, Hswen et al. (2022) and Ohme et al. (2021) drew relatively young samples (mean ages ≈34 and 40 respectively), limiting contrast with older groups—who tend to participate less in both hypothetical and actual donation settings.

A plausible explanation for most studies finding that younger participants are more likely to participate in data donation studies than older people is that younger participants also reported higher technological knowledge (Máté et al., 2023), which may result in greater confidence in their ability to control and complete the donation process. This notion is further supported by a study by Welbers et al. (2024), who found that 41.7% of those over 60 reported actual willingness to participate, yet this same group accounted for only 24% of participants who successfully completed the data donation, illustrating substantial age-related attrition.

Gender

Six articles reported that males are more likely to participate in data donation research than females (Kmetty et al., 2024; Kraakman et al., 2023; Pilgrim & Bohnet-Joschko, 2022a, 2022b; Silber et al., 2022; Welbers et al., 2024). At the same time, seven articles did not report any significant gender differences in (hypothetical or actual) willingness as well as successful completion (Heidel et al., 2021; Hswen et al., 2022; Keusch et al., 2024; Máté et al., 2023; Ohme et al., 2021; Pfiffner & Friemel, 2023; Skatova & Goulding, 2019), and one article showed higher completion among women in a university student-based sample (Hase & Haim, 2024).

Several reasons may explain these mixed findings. Cultural context appears to play a role: Kmetty et al. (2024) observed that gender differences in hypothetical willingness varied across countries, with males in Hungary being more willing to donate data than those in the United States. This suggests that cultural or regional norms may shape individuals’ participation in data donation studies. Moreover, other individual characteristics may overshadow the effects of gender. For instance, Silber et al. (2022) reported that technological affinity could diminish perceived gender differences in successfully completing the data donation process.

Educational Level

Educational level was examined in seven studies, yielding somewhat mixed outcomes. Two studies found that individuals with higher educational levels showed greater actual willingness and higher success rates in completing the donation process (Silber et al., 2022; Welbers et al., 2024). Five studies, however, did not observe significant differences in (hypothetical or actual) willingness and successful completion (Keusch et al., 2024; Kraakman et al., 2023; Ohme et al., 2021; Pfiffner & Friemel, 2023; Pilgrim & Bohnet-Joschko, 2022a).

These inconsistencies may be explained by differences in how participation was operationalized and by variations in task complexity. Studies that identified effects of educational level required participants to demonstrate actual willingness and complete a data donation task (Silber et al., 2022; Welbers et al., 2024), rather than merely state their willingness in hypothetical scenarios (e.g., Pfiffner & Friemel, 2023; Pilgrim & Bohnet-Joschko, 2022a). Task complexity also varied substantially (Otto et al., 2022; Otto & Kruikemeier, 2023). For instance, Silber et al. (2022) asked participants to download, locate, and upload health app data, a considerably more complex procedure than Keusch et al. (2024), where participants donated Facebook data, or Ohme et al. (2021), which involved simpler screenshot-based donations. As participants with higher educational levels tend to have a stronger understanding of their technical skills (Welbers et al., 2024), this may have enhanced their ability to manage these procedures and increased their likelihood of participation. Furthermore, individuals with higher education, such as university graduates, are often more familiar with and trusting of academic research, which may increase their willingness to participate in academic studies using data donation methods.

Prosocial Motivation

Beyond demographic factors and digital savviness, four articles have emphasized the role of prosocial motivations and moral beliefs in shaping participation in data donation studies (Pfiffner & Friemel, 2023; Pilgrim & Bohnet-Joschko, 2022a, 2022b; Skatova & Goulding, 2019). When participants believe their data will contribute to societal or scientific benefits, they become more hypothetically willing to donate data (Skatova & Goulding, 2019). This is especially notable in the context of digital health data. For example, participants encouraged to donate data for the benefit of society reported higher hypothetical willingness than those who were offered individualized health feedback or peer recognition (Pilgrim & Bohnet-Joschko, 2022a). Furthermore, individuals who engaged in other forms of giving—such as charitable donations—also expressed higher hypothetical willingness to donate their health data (Pilgrim & Bohnet-Joschko, 2022b), suggesting that an individual’s prosocial values may play a broader role in the willingness to participate in data donation studies.

Workflows, Frameworks, and Methodological Tools

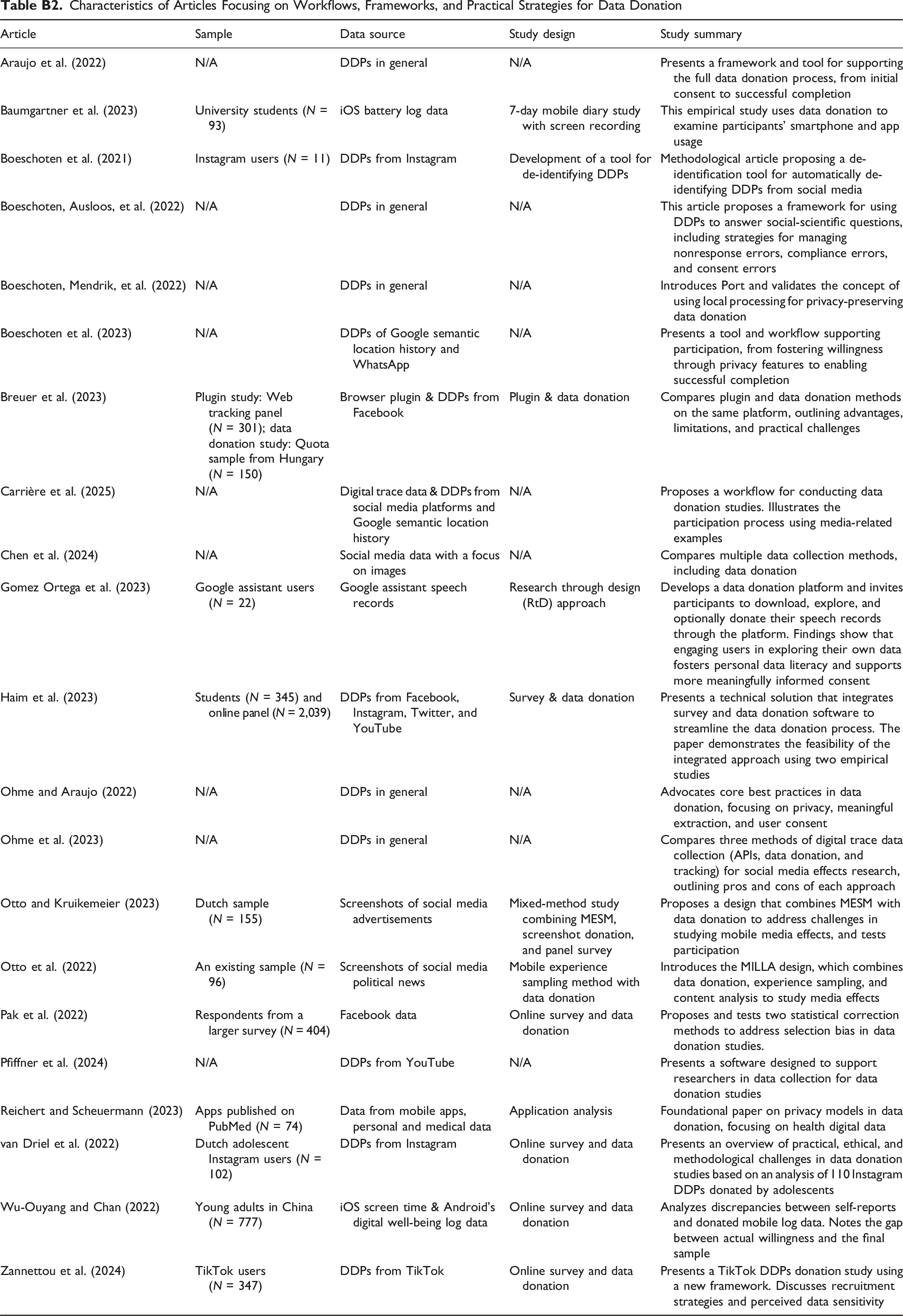

To address the second aim of this study (to appraise existing workflows, frameworks, and methodological tools and, by integrating this appraisal with our factor synthesis, to articulate best practices for improving participation in future data donation studies), we reviewed 21 articles that present frameworks, conceptual/ethical considerations, or practical strategies for implementing data donation in social science research. Because these pieces function as methodological resources rather than empirical evidence, we cataloged them; detailed summaries are provided in Appendix Table B2. We draw on them in the Discussion section, along with the insights from our review of the 14 articles that examined factors influencing individuals’ participation in digital media data donation studies, to formulate best practices.

Discussion

This review discusses the factors that (differentially) influence three forms of participation in data donation studies: hypothetical willingness (i.e., individuals’ stated intention to donate data in imagined scenarios), actual willingness to donate data (i.e., participants’ consent to donate their data when asked in actual studies), and successful completion (i.e., whether individuals follow through with the full donation process). We have synthesized five key factors that influence some or all of these participation forms: (1) the sensitivity of the requested data, (2) trust and privacy concerns, (3) participants’ autonomy and sense of control, (4) the complexity of the donation process, and (5) individual-related factors such as digital savviness. Notably, the five factors largely echo classic participation determinants identified in survey methodology, such as study complexity, individual characteristics, and trust in research (Groves & Couper, 2012). Yet, data donation’s technical and privacy-sensitive features appear to amplify their effects (Glass et al., 2015). Drawing on our synthesis of key factors influencing participation, combined with our appraisal of existing workflows, frameworks, and methodological tools, we outline four best practices that future studies can implement to improve participation.

Best Practice 1: Implement a Donation Framework that Maximizes Participant Privacy

Many studies in this review emphasize the importance of transparency and privacy protection in shaping individuals’ participation in the data donation process (e.g., Araujo et al., 2022; Boeschoten, Ausloos, et al., 2022; Boeschoten et al., 2023; Reichert & Scheuermann, 2023). To protect participants’ data and consequently maximize their engagement in data donation studies, one of the most critical best practices is to implement a robust and privacy-sensitive data donation framework. This practice is primarily aimed at increasing individuals’ willingness to participate, but it also helps reduce post-consent attrition by ensuring a safer and more ethically transparent participation experience. Several donation frameworks have been developed in recent years, including Port, developed by Boeschoten, Mendrik, et al. (2022), the Social Media Donator (SMD) by Zannettou et al. (2024), and the Data Donation Module (DDM) by Pfiffner et al. (2024).

Data donation frameworks such as Port, SMD, and DDM emphasize data minimization principles, allowing researchers and participants to specify and limit the amount of data requested. This often involves carefully choosing the platform(s) from which data are requested, to minimize the collection of highly sensitive information. These data minimization principles help lower participants’ perceived data sensitivity. Moreover, most frameworks (e.g., Port and SMD) come with built-in de-identification functions. These functions remove participants’ personal details in textual data and facial identifiers in images, thereby reducing the risk of exposing sensitive information. Such privacy-preserving tools help address participants’ privacy concerns, strengthen their trust in the data donation process, and improve both participants’ willingness and likelihood of successfully completing data donation studies.
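The two ideas described above, data minimization and de-identification, can be illustrated with a minimal Python sketch. It is not the implementation used by Port, SMD, or DDM. The field names, the allow-list, and the regular expressions are assumptions made for illustration only, and pattern-based redaction of this kind would miss many identifiers in real donated data.

```python
import re

# Hypothetical allow-list: only the fields the research question needs.
ALLOWED_FIELDS = {"timestamp", "video_id", "text"}

# Deliberately simple illustrative patterns (assumptions, not a vetted PII detector).
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")
HANDLE = re.compile(r"@\w+")


def minimize(record: dict) -> dict:
    """Data minimization: keep only allow-listed fields, drop everything else."""
    return {key: value for key, value in record.items() if key in ALLOWED_FIELDS}


def redact(text: str) -> str:
    """Replace e-mail addresses, phone numbers, and @handles with placeholders.

    E-mails are replaced first so that the part after '@' is not
    mistaken for a user handle.
    """
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return HANDLE.sub("[HANDLE]", text)


def prepare_for_donation(record: dict) -> dict:
    """Apply minimization first, then redact the free-text field if present."""
    cleaned = minimize(record)
    if isinstance(cleaned.get("text"), str):
        cleaned["text"] = redact(cleaned["text"])
    return cleaned


# Hypothetical record, as it might appear in a data download package.
example = {
    "timestamp": "2024-01-01T10:00:00",
    "video_id": "abc",
    "text": "Mail me at jane.doe@example.org or call +31 6 1234 5678, cc @jane",
    "gps": (52.0, 4.0),  # not on the allow-list, so it is never collected
}
donation = prepare_for_donation(example)
```

In this sketch the location field is dropped before anything leaves the participant's device, and the text field keeps its wording while the identifiers are masked. Images, audio, and names written in free text would need dedicated tooling.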

While current data donation frameworks have advanced considerably, further refinement is still needed. In particular, existing de-identification techniques remain technically limited and often insufficient to fully safeguard participants’ privacy. For instance, tools such as Port face challenges in accurately detecting and removing personally identifiable information embedded within donated data. This challenge is especially pronounced in studies that analyze self-produced materials such as Instagram stories, which may include sensitive information like faces or voices (Boeschoten et al., 2021). To address this, Boeschoten, Mendrik, et al. (2022) have suggested implementing an extra de-identification step to further anonymize such data—rather than removing entire messages—to preserve the integrity of the DDPs. Refining de-identification processes and expanding the applicability of current frameworks is an important step towards decreasing privacy concerns, increasing trust, and ultimately increasing participation in data donation studies.

Best Practice 2: Ensure a User-Friendly Procedure and Clear Communication

As highlighted in the reviewed literature, given the complexity of data donation procedures, it is crucial that participants fully understand what participation entails and feel supported throughout the process (Boeschoten et al., 2021; van Driel et al., 2022). However, researchers face a delicate trade-off between ensuring transparency and minimizing participant burden. On the one hand, detailed explanations are essential for enabling informed consent, thereby boosting participants’ actual willingness to participate in the data donation process. On the other hand, overly complex instructions or excessive choices may create additional burdens, deter participation, or even cause participants who were willing to share their data to drop out before successfully completing the donation.

This tension highlights the need for thoughtful, participant-centered procedures that are clear enough to ensure transparency but simple enough for achieving greater completion rates. One of the most effective yet underused strategies to achieve this is pilot testing (Carrière et al., 2025; Pfiffner & Friemel, 2023), which can help researchers anticipate and address procedural challenges, refine the clarity of communication, and evaluate the user-friendliness of the data donation workflow before the main study begins. We recommend that future studies incorporate and report on pilot testing as a formal part of the data donation workflow.

Pilot testing is particularly valuable for optimizing communication. At the study entrance stage, clear informed consent is needed to convert interest into actual willingness to participate. While researchers often believe that they have provided clear and detailed information about the study’s purpose, design, and privacy safeguards, consent may not always be truly informed from the participants’ end (Struminskaya & Sakshaug, 2023; Welbers et al., 2024). For instance, Welbers et al. (2024) found that some participants either skim-read or misunderstood the informed consent, which later led to refusals to participate. This also suggests that extensive text-based instructions are not always effective. To better support participants and increase consent rates, interviews during the pilot and/or recruitment phase can help address their needs and clarify uncertainties (Kraakman et al., 2023).

It is important to note that issues concerning informed consent are not solely due to participants’ failure to comprehend; commonly used consent forms may be inadequate in helping participants fully understand what data will be used and how (Gomez Ortega et al., 2023). In other words, participants need to know the consequences of participating (Skatova & Goulding, 2019). Particularly for individuals who are less willing to donate their data due to limited skills or experience, detailed communication at the point of consent can enhance their understanding of what their data entails and why it may be valuable. For example, Gomez Ortega et al. (2023) showed that participants who initially believed their data contained little meaningful information changed their view after reviewing the content they had donated, realizing how much could be inferred. This indicates that individuals may underestimate the research value of their data due to a lack of awareness.

Clear and ongoing communication is also crucial during the data donation study, as it may help prevent cases in which participants who showed actual willingness ultimately fail to complete the data donation process. Despite efforts to create user-friendly processes, technical challenges may persist, particularly for those who are unfamiliar with, or less confident in using, complex device functions and interfaces (Keusch et al., 2024; Welbers et al., 2024). To ensure a positive user experience, researchers are advised to select or design applications with straightforward instructions and to offer additional support, such as a phone help desk for immediate assistance (Strycharz et al., 2024). By providing step-by-step instructions, researchers can enhance participants’ confidence in their ability to contribute effectively.

Best Practice 3: Enable Participants to Exert Control Over the Process

Our review demonstrates that granting participants a strong sense of control—such as the ability to decide which data to donate, how it is donated, and the possibility to opt out at any point during the donation—is a powerful strategy that positively influences participation in the donation process (Máté et al., 2023; Ohme & Araujo, 2022; Pfiffner & Friemel, 2023). Specifically, it facilitates actual willingness to donate data by fostering trust during the consent stage and improves successful donation rates by reducing uncertainty and friction throughout the process. To strengthen participants’ sense of control, some data donation frameworks have integrated local processing features that enable participants to extract, inspect, and manage their data directly on their own local devices before sharing (Ohme & Araujo, 2022). Others have incorporated customization mechanisms that allow participants to select specific data files and fields for donation.

The customization of which data participants are willing to donate can even extend to the level of individual data items. For example, Port allows participants to select specific messages from a group chat while excluding all other messages (Boeschoten et al., 2023). However, this flexibility may result in participants donating a restricted amount of data, thereby limiting the analytical scope of the dataset (Araujo et al., 2022). Researchers should therefore balance the practical feasibility of data donation with maximizing participant autonomy. An example of a framework that achieves this balance is the SMD framework used in a TikTok data donation study conducted by Zannettou et al. (2024). To encourage data sharing while ensuring sufficient data collection, participants were required to donate at least their video viewing history. For each additional type of data provided beyond the viewing history, they received an extra monetary incentive.
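The logic of such a tiered design (one mandatory data type plus a bonus for each optional type) can be sketched in Python as follows. The optional data types and the amounts are hypothetical placeholders and are not taken from Zannettou et al. (2024). Only the rule itself, a required viewing history with an extra incentive per additional data type, follows the description above.

```python
REQUIRED_TYPE = "video_viewing_history"

# Hypothetical optional data types and amounts (in cents); illustration only.
OPTIONAL_TYPES = {"search_history", "like_history", "share_history"}
BASE_CENTS = 500
BONUS_CENTS = 100


def compensation_cents(selected: set[str]) -> int:
    """Return the payment for a participant's selection of data types.

    The required type must be included; each optional type that the
    participant chooses to add raises the payment by a fixed bonus.
    """
    if REQUIRED_TYPE not in selected:
        raise ValueError(f"'{REQUIRED_TYPE}' must be part of every donation")
    unknown = selected - OPTIONAL_TYPES - {REQUIRED_TYPE}
    if unknown:
        raise ValueError(f"unknown data types: {sorted(unknown)}")
    extras = selected & OPTIONAL_TYPES
    return BASE_CENTS + BONUS_CENTS * len(extras)


minimum = compensation_cents({"video_viewing_history"})
with_extras = compensation_cents(
    {"video_viewing_history", "search_history", "like_history"}
)
```

A rule like this keeps the choice with the participant while guaranteeing that every donation contains the data needed for the core analysis.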

Best Practice 4: Address Selection Bias

Our review of factors influencing individuals’ participation in data donation studies also highlights potential risks for data quality and representativeness. A central concern is sample selection bias (Chen et al., 2024; Hase & Haim, 2024; Keusch et al., 2024; Kmetty et al., 2024; Pak et al., 2022; Silber et al., 2022; Welbers et al., 2024), which occurs when those included in the analytic sample differ systematically from those excluded by the selection process (Pak et al., 2022). In data donation studies, this operates on at least two levels: (a) the level of actual willingness, where there are differences between individuals who consent and those who do not, and (b) the level of successful completion, where there are differences between those who complete the donation and those who discontinue after consenting. For example, higher digital savviness and trust in researchers are linked to greater (actual) willingness and higher completion (Hase & Haim, 2024), which may lead to an underrepresentation of more privacy-conscious and less digitally skilled individuals. If unaddressed, this may limit the generalizability of findings and skew estimates of the phenomena under investigation.

To address selection bias and improve sample quality, researchers should incorporate error-correction methods, both ex-ante and a-posteriori (Hase & Haim, 2024; Pak et al., 2022). Ex-ante error correction addresses bias before and during data collection. It entails designing the study through the eyes of its specific target group: identifying how people within this group (and key subgroups) may differ (e.g., in trust, privacy concerns, digital skills) and adapting outreach and materials accordingly. This includes using the messengers and channels the group trusts, tailoring consent language and examples to their context, and providing support that matches their abilities (de León et al., 2025). Where feasible, oversampling underrepresented subgroups within the target group may bring the achieved sample closer to relevant population benchmarks.

If selection bias remains after data collection despite these efforts, a-posteriori error-correction methods can be employed. These are statistical modeling techniques that adjust for potentially bias-related sample characteristics in the analysis (Hase & Haim, 2024; Pak et al., 2022). Addressing selection bias through these methods is essential for ensuring that findings from data donation studies are robust, reliable, and representative of the target population.
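As one illustration of an a-posteriori adjustment, the Python sketch below applies simple post-stratification weighting. This is a generic technique chosen for illustration and is not necessarily one of the correction methods proposed by Pak et al. (2022). The grouping variable (digital skill), the population shares, and the screen-time values are invented, and the sketch assumes that reliable population benchmarks exist for the grouping variable.

```python
from collections import Counter


def poststratification_weights(sample_groups, population_shares):
    """Return one weight per respondent: population share / sample share of their group."""
    n = len(sample_groups)
    counts = Counter(sample_groups)
    unknown = set(counts) - set(population_shares)
    if unknown:
        raise ValueError(f"no population share for groups: {sorted(unknown)}")
    empty = set(population_shares) - set(counts)
    if empty:
        # A group with no donors cannot be weighted up; it needs ex-ante recruitment.
        raise ValueError(f"no respondents in groups: {sorted(empty)}")
    return [population_shares[g] / (counts[g] / n) for g in sample_groups]


def weighted_mean(values, weights):
    """Weighted average of the outcome across respondents."""
    return sum(v * w for v, w in zip(values, weights)) / sum(weights)


# Invented example: donors over-represent people with high digital skills.
groups = ["high"] * 8 + ["low"] * 2           # 80% / 20% in the donated sample
daily_hours = [4.0] * 8 + [2.0] * 2           # hypothetical screen time per donor
benchmarks = {"high": 0.5, "low": 0.5}        # assumed population shares

weights = poststratification_weights(groups, benchmarks)
unweighted = sum(daily_hours) / len(daily_hours)   # about 3.6 hours
adjusted = weighted_mean(daily_hours, weights)     # about 3.0 hours
```

In this invented example the unweighted estimate overstates average screen time because heavy users with high digital skills are over-represented among donors. Weighting can only correct for characteristics that were measured, so unobserved differences between donors and non-donors remain a limitation.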

Limitations of the Current Review

While this review offers timely insights into what shapes participation in data donation studies and outlines practical guidance for future research, several limitations should be noted. First, due to feasibility constraints, the initial screening and eligibility assessment were conducted by a single author. Although eligibility criteria were applied transparently and discussed with the broader research team to reach consensus on inclusion decisions, the use of a single screener during identification and screening may have introduced subjectivity compared to a double screening approach.

Second, the thematic grouping of factors influencing participation in data donation and the proposed best practices in this review were developed through an inductive synthesis approach. This method was chosen to accommodate the field’s diversity and the early stages of data donation as a research method, but it inevitably involved interpretive judgment, and alternative groupings or emphases may have emerged under a different analytic lens. Future work may benefit from applying a structured coding scheme or more deductive framework to enhance conceptual clarity and reduce potential interpretive ambiguity.

Gaps in the Literature and Directions for Future Research

In addition to the methodological considerations of this review, there are also important gaps in the literature that call for further attention. First, despite growing interest in data donation, a primary challenge is the methodological inconsistency in how participation is measured. Most studies have examined hypothetical willingness to donate; only five studies assessed actual willingness and successful completion of the data donation process. These five studies varied widely in their procedural design, such as the complexity of the donation task and the level of technical support provided to participants (e.g., face-to-face guidance vs. text instructions), making it difficult to compare findings regarding participation.

The wide variety in methodological approaches also makes it difficult to examine the gap between stated willingness and successful completion. This gap matters, as hypothetical or actual willingness does not necessarily translate into successful completion, with several studies reporting stark gaps between those willing/consenting and those who ultimately donate (Coyne et al., 2023; Ejaz et al., 2023; Ohme et al., 2021). For instance, Ohme et al. (2021) observed that only 15% of individuals who expressed high willingness ultimately donated their data. As such, a high priority for future work is to explain—and reduce—the discrepancy between willingness and successful completion.

Encouragingly, recent studies have begun to tackle this issue in greater depth. For example, Hase et al. (2024) analyzed low completion compliance across six major social media platforms and showed that the willingness-completion gap is not always due to factors such as study design or participant willingness. Rather, it often reflects platform-imposed technical burdens, such as convoluted access procedures or the provision of incomplete data packages. These issues act as barriers that stop already-consenting participants mid-process. Other researchers have also noted these issues and advocated for platforms to ensure better compliance and usability (Valkenburg et al., 2024). To further move the field forward, future studies should adopt shared definitions of participation and consistently report and compare hypothetical willingness, actual willingness, and successful completion.
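Consistent reporting of the three participation forms could follow a simple funnel structure, sketched below in Python. The class name, field names, and counts are hypothetical and do not come from any reviewed study. The sketch assumes that all rates are reported against the same base (everyone invited) and that completion is additionally reported among those who consented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ParticipationFunnel:
    invited: int
    hypothetically_willing: int  # stated willingness in a survey item
    consented: int               # actual willingness: agreed when asked to donate
    completed: int               # successful completion of the donation

    def __post_init__(self):
        if self.invited <= 0:
            raise ValueError("invited must be positive")
        if not 0 <= self.completed <= self.consented <= self.invited:
            raise ValueError("expected completed <= consented <= invited")
        if not 0 <= self.hypothetically_willing <= self.invited:
            raise ValueError("hypothetically_willing must not exceed invited")

    def rates(self) -> dict:
        """Rates relative to everyone invited, plus completion among consenters."""
        return {
            "hypothetical_willingness": self.hypothetically_willing / self.invited,
            "actual_willingness": self.consented / self.invited,
            "successful_completion": self.completed / self.invited,
            "completion_among_consenters": (
                self.completed / self.consented if self.consented else None
            ),
        }


# Made-up counts, for illustration only.
funnel = ParticipationFunnel(
    invited=1000, hypothetically_willing=600, consented=300, completed=120
)
report = funnel.rates()
```

Reporting all four figures side by side makes the gap between willingness and completion visible and allows comparisons across studies with different designs.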

Additionally, there is a notable lack of qualitative studies on individuals’ participation in data donation studies. One study by Gomez Ortega et al. (2023) employed an interview approach to investigate participants’ perspectives on their data donation experiences and identify challenges throughout the data donation process. These interviews provided insights into participants’ emotional responses and decision-making rationales at each stage, and the perceived value and impact of their participation on their data literacy. More recent contributions (e.g., Gaballah et al., 2025; Strycharz et al., 2024) further confirm the value of incorporating qualitative perspectives. For example, using open-ended survey questions helped reveal deeper motivational factors and barriers to participating in data donation studies (Strycharz et al., 2024). Together, these studies highlight how qualitative methods can capture dimensions of motivation, uncertainty, and reflection that are often inaccessible through standardized questionnaires. Therefore, for researchers aiming to refine and improve their data donation studies or tools, qualitative approaches provide a necessary complement to quantitative methods.

Conclusion

By reviewing the current literature on factors influencing individuals’ willingness to participate in and successfully complete data donation studies, this study focuses on a critical issue encountered by researchers analyzing individuals’ digital device use and media use behaviors. As the success of data donation studies hinges on participants’ willingness to share their data, we emphasize refining approaches to increase this willingness and reach successful completion of the data donation process by addressing the perceived sensitivity of data, fostering trust with the research team and data donation approaches, ensuring participant autonomy, and clarifying the procedures involved. Key recommendations include employing robust data donation frameworks to ensure privacy protection, maintaining open communication to build trust and simplify the participation process, empowering participants by granting greater control over their data, and implementing error-correction strategies to minimize selection biases. These enhancements are crucial for strengthening data donation as an essential tool for assessing individuals’ digital media use behaviors.

Footnotes

Author Contributions

Project Administration: AvdW.

Formal analyses: YX, AvdW.

Supervision: AvdW, IB.

Writing—original draft: YX.

Writing—review and editing: YX, AvdW, IB.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The pre-print of this study can be found on OSF.

Author Biographies

Appendix

Characteristics of Articles Focusing on Workflows, Frameworks, and Practical Strategies for Data Donation

| Article | Sample | Data source | Study design | Study summary |
|---|---|---|---|---|
| Araujo et al. (2022) | N/A | DDPs in general | N/A | Presents a framework and tool for supporting the full data donation process, from initial consent to successful completion |
| Baumgartner et al. (2023) | University students (N = 93) | iOS battery log data | 7-day mobile diary study with screen recording | This empirical study uses data donation to examine participants’ smartphone and app usage |
| Boeschoten et al. (2021) | Instagram users (N = 11) | DDPs from Instagram | Development of a tool for de-identifying DDPs | Methodological article proposing a de-identification tool for automatically de-identifying DDPs from social media |
| Boeschoten, Ausloos, et al. (2022) | N/A | DDPs in general | N/A | This article proposes a framework for using DDPs to answer social-scientific questions, including strategies for managing nonresponse errors, compliance errors, and consent errors |
| Boeschoten, Mendrik, et al. (2022) | N/A | DDPs in general | N/A | Introduces Port and validates the concept of using local processing for privacy-preserving data donation |
| Boeschoten et al. (2023) | N/A | DDPs of Google semantic location history and WhatsApp | N/A | Presents a tool and workflow supporting participation, from fostering willingness through privacy features to enabling successful completion |
| Breuer et al. (2023) | Plugin study: Web tracking panel (N = 301); data donation study: Quota sample from Hungary (N = 150) | Browser plugin & DDPs from Facebook | Plugin & data donation | Compares plugin and data donation methods on the same platform, outlining advantages, limitations, and practical challenges |
| Carrière et al. (2025) | N/A | Digital trace data & DDPs from social media platforms and Google semantic location history | N/A | Proposes a workflow for conducting data donation studies. Illustrates the participation process using media-related examples |
| Chen et al. (2024) | N/A | Social media data with a focus on images | N/A | Compares multiple data collection methods, including data donation |
| Gomez Ortega et al. (2023) | Google assistant users (N = 22) | Google assistant speech records | Research through design (RtD) approach | Develops a data donation platform and invites participants to download, explore, and optionally donate their speech records through the platform. Findings show that engaging users in exploring their own data fosters personal data literacy and supports more meaningfully informed consent |
| Haim et al. (2023) | Students (N = 345) and online panel (N = 2,039) | DDPs from Facebook, Instagram, Twitter, and YouTube | Survey & data donation | Presents a technical solution that integrates survey and data donation software to streamline the data donation process. The paper demonstrates the feasibility of the integrated approach using two empirical studies |
| Ohme and Araujo (2022) | N/A | DDPs in general | N/A | Advocates core best practices in data donation, focusing on privacy, meaningful extraction, and user consent |
| Ohme et al. (2023) | N/A | DDPs in general | N/A | Compares three methods of digital trace data collection (APIs, data donation, and tracking) for social media effects research, outlining pros and cons of each approach |
| Otto and Kruikemeier (2023) | Dutch sample (N = 155) | Screenshots of social media advertisements | Mixed-method study combining MESM, screenshot donation, and panel survey | Proposes a design that combines MESM with data donation to address challenges in studying mobile media effects, and tests participation |
| Otto et al. (2022) | An existing sample (N = 96) | Screenshots of social media political news | Mobile experience sampling method with data donation | Introduces the MILLA design, which combines data donation, experience sampling, and content analysis to study media effects |
| Pak et al. (2022) | Respondents from a larger survey (N = 404) | Facebook data | Online survey and data donation | Proposes and tests two statistical correction methods to address selection bias in data donation studies |
| Pfiffner et al. (2024) | N/A | DDPs from YouTube | N/A | Presents a software designed to support researchers in data collection for data donation studies |
| Reichert and Scheuermann (2023) | Apps published on PubMed (N = 74) | Data from mobile apps, personal and medical data | Application analysis | Foundational paper on privacy models in data donation, focusing on health digital data |
| van Driel et al. (2022) | Dutch adolescent Instagram users (N = 102) | DDPs from Instagram | Online survey and data donation | Presents an overview of practical, ethical, and methodological challenges in data donation studies based on an analysis of 110 Instagram DDPs donated by adolescents |
| Wu-Ouyang and Chan (2022) | Young adults in China (N = 777) | iOS screen time & Android’s digital well-being log data | Online survey and data donation | Analyzes discrepancies between self-reports and donated mobile log data. Notes the gap between actual willingness and the final sample |
| Zannettou et al. (2024) | TikTok users (N = 347) | DDPs from TikTok | Online survey and data donation | Presents a TikTok DDPs donation study using a new framework. Discusses recruitment strategies and perceived data sensitivity |