Abstract

As visual content becomes increasingly prominent on social media, automated image categorization is vital for computational social science efforts to identify emerging visual themes and narratives in online debates. However, the methods based on convolutional neural networks (CNNs) currently used in the field are unable to fully capture the connotative meaning of images, and struggle to produce easily interpretable clusters. In response to these challenges, we test an approach that leverages the ability of Vision-and-Large-Language-Models (VLLMs) to generate image descriptions that incorporate connotative interpretations of the input images. In particular, we use a VLLM to generate connotative textual descriptions of a set of images related to climate debate, and cluster the images based on these textual descriptions. In parallel, we cluster the same images using a more traditional approach based on CNNs. In doing so, we compare the connotative semantic validity of clusters generated using VLLMs with those produced using CNNs, and assess their interpretability. The results show that the approach based on VLLMs greatly improves the quality score for connotative clustering. Moreover, VLLM-based approaches, leveraging textual information as a step towards clustering, offer a high level of interpretability of the results.

Keywords

Introduction

Study Context

Automated image categorization represents a valuable analytical tool in the field of computational social science (Peng & Lu, 2023; Williams et al., 2020; Zhang & Peng, 2024). The visual turn in contemporary social media (Carah, 2014; Highfield & Leaver, 2016; Leaver et al., 2020) has further increased the importance of computational methods for the analysis of visual content, including image clustering, for a wide range of research that leverages online social data (Bernasco et al., 2023; Deng et al., 2023; Joo & Steinert-Threlkeld, 2022; Segerberg & Magnani, 2025; F. Zhang et al., 2019) and social media analysis (Lynch et al., 2020; Nguyen et al., 2018; Said et al., 2019; Won et al., 2017; Zhang & Peng, 2024). In this context, automated annotation and categorization of visual content can be used to better understand a wide range of collective patterns, such as the spread of misinformation (Ghai et al., 2024; Giachanou et al., 2020) and polarization dynamics (Joshi & Buntain, 2024). These and other potential applications have motivated the development of computational data analysis pipelines for visual content in social research (Alam et al., 2017; Magnani & Segerberg, 2021; Torres, 2024; Torres & Cantú, 2022; Peng, 2024).

Nevertheless, the image clustering techniques used in recent work remain limited from the perspective of social science research (Yu et al., 2023). Much of the limitation stems from the fact that approaches based on Convolutional Neural Networks (CNNs), which are currently popular in computer-vision clustering for the social and political sciences (Peng & Lu, 2023), focus exclusively on visual features. CNN-based approaches are extremely effective at recognizing the visual elements that characterize image content, and they use these elements as the foundation for clustering. A good example is given by Zhang & Peng (2024), where the best solution clusters images based on what they represent, successfully distinguishing gatherings of people, handwritten or typed petitions, screenshots, and protesters with banners. The problem is that, while this type of clustering is valuable for understanding the visual objects through which a protest is represented, it is less useful for studying protest movements that have a broader scope or a longer duration, or that deal with multiple issues. For such purposes, images often matter more for what they mean than for what they represent.

To address what an image means, it is typically crucial to approximate a socially and culturally embedded understanding of the content. Yet, traditional approaches to image clustering based on CNNs miss the embedded meaning by focusing on object detection, often disregarding the broader contextual and relational dynamics within the image (Cai et al., 2023). They also tend to neglect the spatial relationships between visual elements in images, and the external knowledge needed to interpret visual themes, all of which make it difficult to go beyond literal meaning (He et al., 2005; Laurenson & Olson, 2021).

The difference between approaches is not always apparent or salient when literal and embedded meanings align, and there are surely cases in which the description of what is represented in the images or the clever adoption of a CNN-based approach illuminates pertinent social science research questions (Peng & Lu, 2023; Rossi et al., 2023). Still, the inability to address embedded meaning hobbles the scope of social research, and the limitations become all the more troubling in the digital age, given the prominent role in online social dynamics and political participation of Internet memes (Du et al., 2020; Wiggins & Bowers, 2015) and similar types of visual communication that operate on shared subcultural knowledge (Mortensen & Neumayer, 2023).

Semantic Clustering

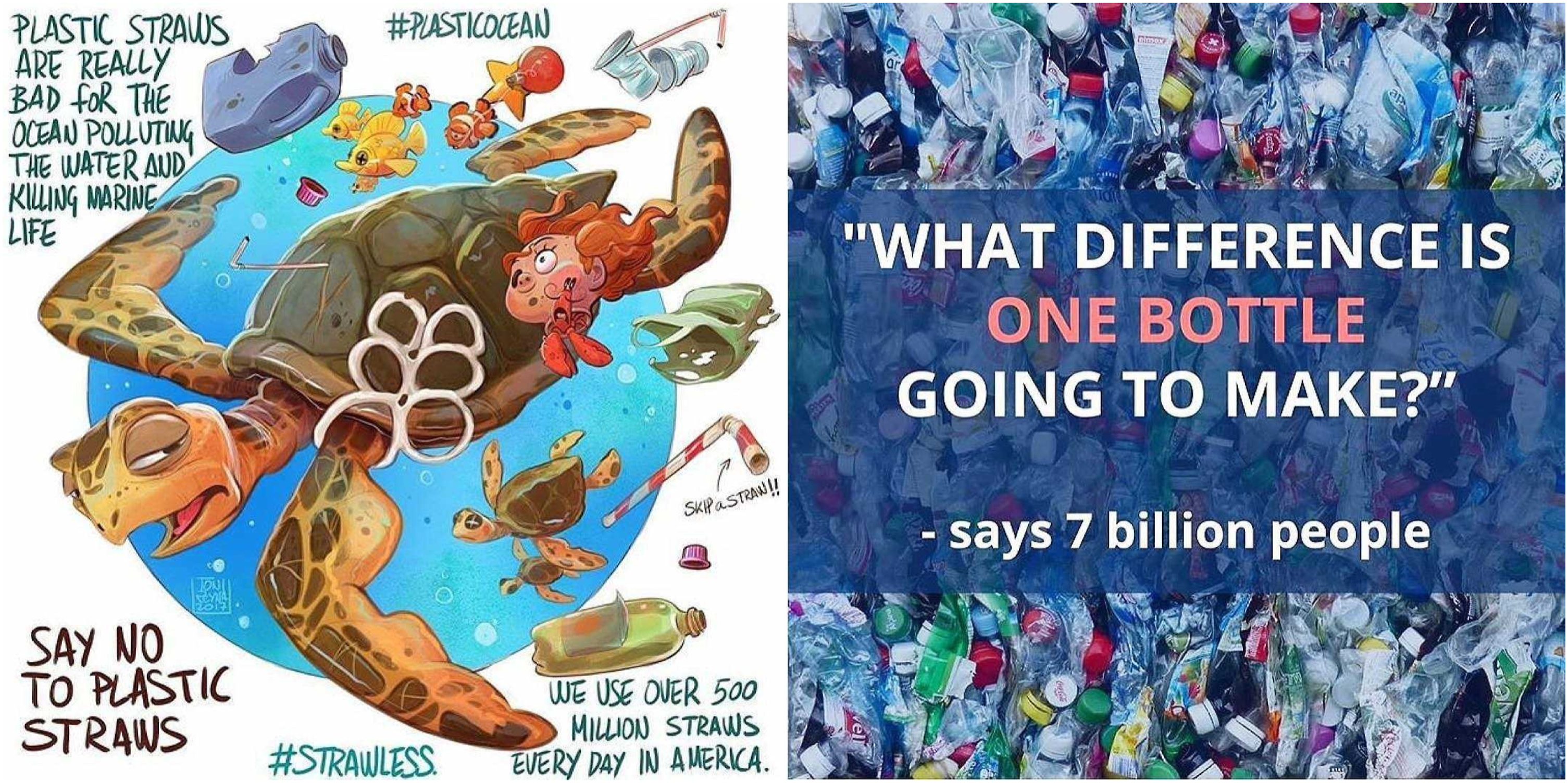

In response to these limitations, researchers have developed the concept of semantic clustering. While the concept of semantic clustering might appear straightforward on the surface (images would be clustered according to their meaning, as exemplified in Figure 1), researchers have used the term to refer to different concepts and to address different problems.

Figure 1. Example of two images showing denotative difference but connotative similarity, which should be clustered together in semantic clustering.

Sheikholeslami et al. (1998) refer to semantic clustering as a clustering that is able to operate with the set of heterogeneous features that can be found in an image, and provide an example in which the two features are the texture and color of an image. Similarly, Patiño-Escarcina and Costa (2008) attempted to derive semantic meaning from sets of visual items through low-level variables such as the color layout of the images and MPEG-7 color-structure descriptors. Pandey et al. (2016) see semantics as a hierarchical property of the visual data in which proximity is defined within a hierarchical structure of visual subgroups.

Over the years, the data science literature has developed a variety of strategies to incorporate semantics into visual clustering (Ouni et al., 2023; Patiño-Escarcina & Costa, 2008; Wiggins & Bowers, 2015; M. Zhu & Badii, 2007). Earlier studies combined images’ collateral textual information (such as captions or existing textual descriptions associated with a given image) with low-level visual features in image clustering (M. Zhu & Badii, 2007). Although textual annotations are beneficial, relying on them remains a limitation, given that detailed textual metadata is not consistently available for all images.

Recent work attempts to integrate established methods such as Bag of Visual Words (BoVW) into the clustering pipeline, leading to an approach called Bag of Visual Phrases (BoVP), designed to identify dominant visual patterns in image collections while also connecting objects to their meanings and addressing their spatial relationships (Ouni et al., 2023). However, since it prioritizes dominant visual cues, this kind of approach might obscure elements that are less prominent but still significant for identifying visual narratives, particularly in the context of political analysis. For example, when processing a picture of a collective event like a political rally, approaches based on BoVP may not prioritize small and diverse visual elements such as symbols, party logos, or specific banners. This may in turn overlook significant differences between apparently similar visual representations that actually refer to disparate socio-political subcultures, collective actors, or claims.

An alternative approach is the Semantic-Enhanced Image Clustering (SIC) method (Cai et al., 2023). SIC employs the CLIP neural network model, pre-trained on a large dataset of image-text pairs, to convert both pictures and their textual labels into high-dimensional vectors, establishing a multi-modal embedding space (Radford et al., 2021). In SIC, these vectors are then projected into a semantic space constructed from a refined list of nouns from the WordNet lexical database (Cai et al., 2023; Fellbaum, 1998). With this approach, SIC aims to categorize images based on their semantic similarity, harnessing CLIP’s ability to model image-text relationships and the semantic variations captured by specific nouns in WordNet (Cai et al., 2023). Taking advantage of CLIP’s multimodal capabilities, the SIC methodology can improve understanding of subtle connections between visual elements within images (Y. Zhu et al., 2022).

The approach tested in this paper is instead based on VLLMs, which have repeatedly shown the potential to reveal latent contextual nuances within images (Lyu et al., 2023; Roth et al., 2023; X. Zhang et al., 2023). In addition, VLLMs are available off-the-shelf, without requiring any ad-hoc selection of terms or training.

Clearly, no method for clustering can be universally “best” across all contexts (Grimmer & King, 2011; Watanabe, 1969). For present purposes, given the importance of socially and culturally embedded meaning in social and political analysis of visual content, we propose to frame the problem of semantic clustering as a problem of connotative value. We assume that each image has a denotative and a connotative value. The denotative value stems from the literal meaning of what is actually represented in the image, and can be directly identified in its visual features. The connotative value refers to the conceptual, ideological, and other possible meanings that people may connect with the image, and to the context-bound cultural codes with which it is inscribed (Barthes, 1977). We also assume that it is this social meaning, encompassed by the connotative level, that is typically of key analytical interest in social science research. Therefore, in this paper, we are interested in the ability of semantic clustering methods to prioritize the connotative proximity of images over their denotative proximity.

VLLMs as a Tool for Semantic Clustering

Against the background of the previous discussion, in this paper, we conceive of the problem of semantic clustering as a problem of connotative clustering, and identify it as a meaningful clustering problem for social science research (Peng & Lu, 2023). We propose to address it by leveraging the visual capabilities of recent large multimodal models (Wu et al., 2023).

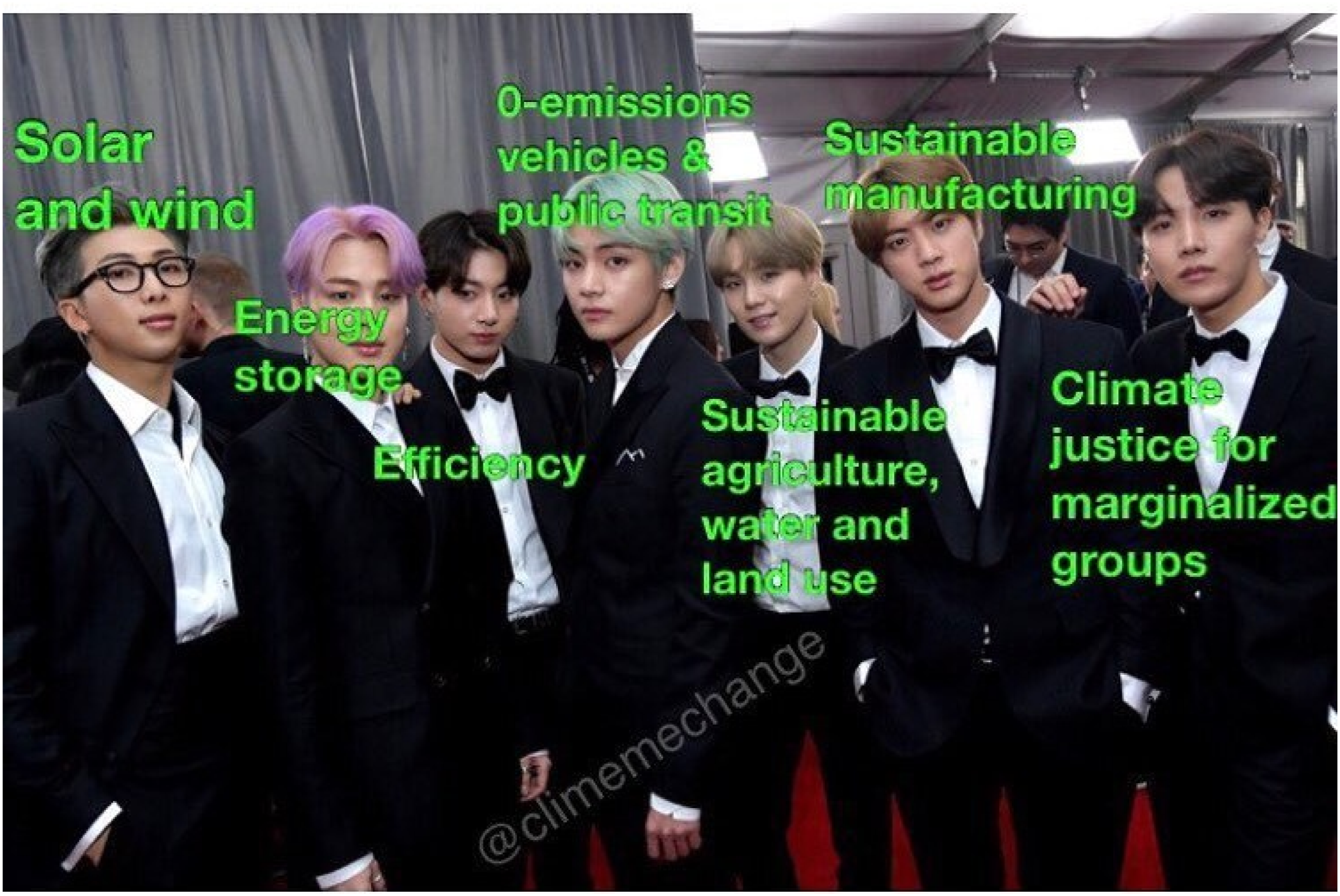

Large Language Models with visual capabilities (VLLMs) have been able to identify subtle nuances and contextual clues (Gertz et al., 2024; D. Zhu et al., 2023), and extensive recent work shows the remarkable capacity for contextual understanding offered by these models (Yang et al., 2023). Figure 2 shows an example of these capabilities. The image, taken from the publicly available dataset used in this study, is a meme with several layers of interpretation and considerable cultural complexity. It shows a picture of the Korean boy band BTS, with text superimposed on the individual band members that refers to innovations supporting a greener transition, such as energy storage and sustainable manufacturing. By depicting a popular band, the meme draws on the band's social desirability within a certain social group to promote action for a more sustainable society. When asked to describe the connotative meaning of the image, the GPT model used in this study produces the following output: “The image conveys a powerful visual blend of modern celebrity culture with pressing environmental issues, using the popular music group as a metaphorical representation of various solutions to climate change. Each member is labeled with a different aspect of sustainability—such as ‘Solar and wind,’ ‘Energy storage,’ and ‘Climate justice for marginalized groups’—symbolically aligning their influence and teamwork with the collective efforts needed to address environmental challenges. The setting suggests that environmental consciousness is as valuable and noteworthy as red carpet prestige, encouraging the viewer to see sustainability not just as necessary, but glamorous and influential. 
The use of a popular culture iconography to discuss critical issues also suggests that the fight against climate change needs to be mainstream and integrated into all facets of society, leveraging popular figures to raise awareness and inspire action.” Although the output does not name the band, the VLLM perfectly captures the connotative meaning of the image, producing a text that connects and reflects its multiple meaningful visual and cultural dimensions. This example illustrates how a VLLM can be used to produce textual descriptions of the connotative semantics of the input images, which then makes it possible to compute clusters (or other summaries of the input images) working directly with the text. Having an intermediate textual step also allows us to test the interpretability of the results for the end user, an element that is central in social science research (Grimmer & King, 2011) but often difficult to achieve with traditional approaches based on CNNs (Peng & Lu, 2023).

Figure 2. One of the memes present in the dataset. This type of image shows a high level of cultural complexity that cannot be fully interpreted by simply looking at the objects represented in the picture.

In this paper, we compare the semantic quality, focusing on connotative value, of VLLM-based clustering with that of clustering obtained using pre-trained CNNs (Joshi & Buntain, 2024; Mooseder et al., 2023; Muise et al., 2022; Peng, 2021; Peng & Lu, 2023). We compare the VLLM-based pipeline with a CNN-based approach for several reasons. First, CNN-based methods are commonly used in social science research. Second, they have recently been shown to achieve the best results for social image clustering when thoroughly compared with other approaches used in the social science literature (but not with VLLMs). Third, the evaluation leading to this conclusion is based on publicly available data, which enables us to incrementally extend it with an additional approach (Zhang & Peng, 2024). We therefore regard this comparison as the most urgent, realistic, and relevant one, as opposed to focusing on other methods that have been defined to achieve different types of semantic clustering and are not currently used in social science research.

In particular, we address the following research questions:

RQ1: Can a VLLM-based image clustering pipeline improve the connotative semantic validity of clusters compared with a CNN-based pipeline?

RQ2: Are the results obtained with a VLLM-based image clustering pipeline interpretable by the end user?

Data and Methods

The data used in the experiments consists of 11,873 images used in climate change communication (H. Zhang & Peng, 2022a). More specifically, the dataset is composed of images gathered from Instagram accounts that focused extensively on issues related to climate change. The accounts were selected based on their tendency to publish content frequently associated with the keywords “climate change” and “global warming”. The data includes images from a wide range of domains connected to the visual discourse surrounding climate change, including protest-related images, photos from scientific conferences, landscapes, science communication visuals (such as infographics), and more. The dataset has already been used for clustering tasks, and it has recently been used to showcase the potential of visual data clustering for social science research (Zhang & Peng, 2024).

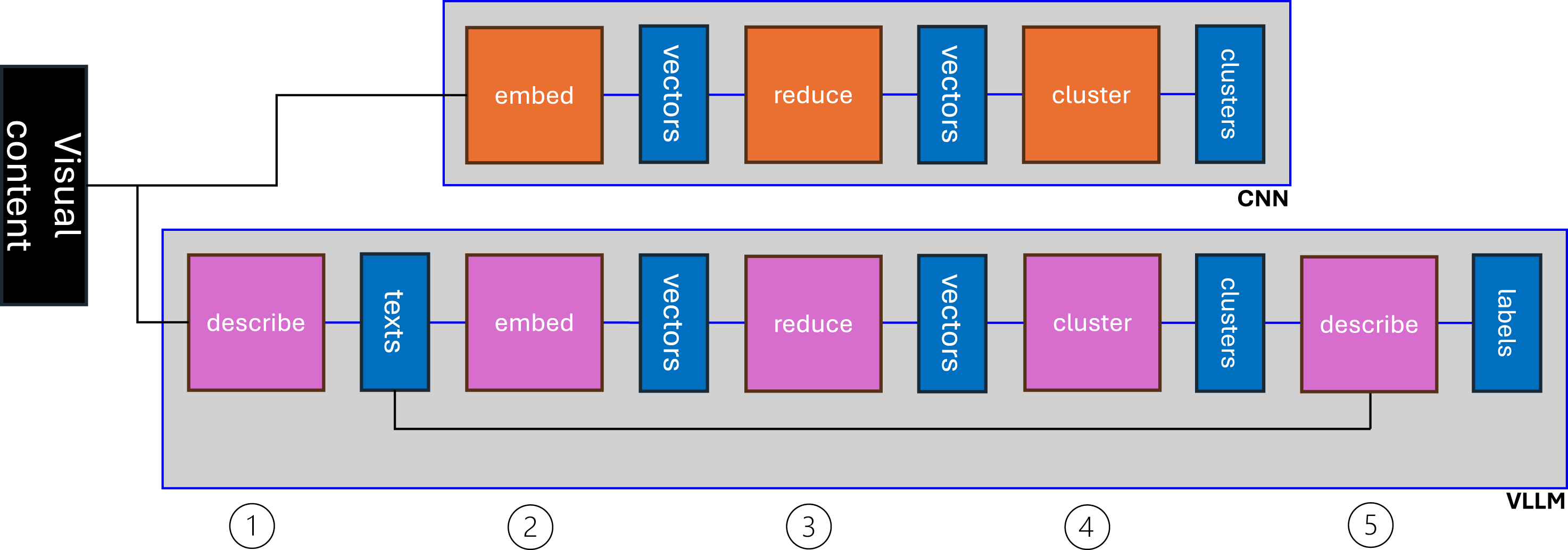

Figure 3 shows the two pipelines tested in this paper. The current mainstream approach relies on a CNN trained on an image recognition task to extract visual features (S2), followed by dimensionality reduction (S3) and clustering (S4). Our pipeline instead starts by generating textual descriptions with a VLLM (S1), which are then turned into text embeddings (S2) before the same dimensionality reduction and clustering steps. We also leverage the text produced in S1 to add a description step (S5) that increases the overall interpretability of the result. We note that the two parts of the pipeline that differ from the CNN-based pipeline are not in themselves new in the computer science literature (Stephan et al., 2024); we discuss the different angles explored by this study in the conclusion.

Figure 3. Pipeline.
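To make the structure of the proposed pipeline concrete, the following is a minimal Python sketch of steps S1 to S5, with every step passed in as a function. The names (`run_vllm_pipeline`, `PipelineResult`, and the step callables) are hypothetical, the step implementations are left to the caller, and labeling outliers as -1 is an assumption borrowed from common HDBSCAN implementations. The CNN baseline would correspond to replacing `describe` and `embed` with a CNN feature extractor and dropping S5.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Sequence

Vector = List[float]


@dataclass
class PipelineResult:
    descriptions: List[str]                # S1: one connotative description per image
    labels: List[int]                      # S4: cluster label per image (-1 = outlier)
    cluster_terms: Dict[int, List[str]]    # S5: key terms describing each cluster


def run_vllm_pipeline(
    images: Sequence[str],
    describe: Callable[[str], str],                    # S1: VLLM, image -> text
    embed: Callable[[Sequence[str]], List[Vector]],    # S2: text -> embedding vectors
    reduce: Callable[[List[Vector]], List[Vector]],    # S3: dimensionality reduction
    cluster: Callable[[List[Vector]], List[int]],      # S4: clustering, -1 marks outliers
    summarize: Callable[[List[str]], List[str]],       # S5: texts of a cluster -> terms
) -> PipelineResult:
    descriptions = [describe(image) for image in images]
    labels = cluster(reduce(embed(descriptions)))
    # S5 works on the intermediate text, grouped by cluster; outliers are skipped.
    texts_by_cluster: Dict[int, List[str]] = {}
    for text, label in zip(descriptions, labels):
        if label != -1:
            texts_by_cluster.setdefault(label, []).append(text)
    cluster_terms = {label: summarize(texts) for label, texts in texts_by_cluster.items()}
    return PipelineResult(descriptions, labels, cluster_terms)
```

Passing the steps as functions keeps the text-based stages swappable (for example, the two embedding models compared in the appendix) without touching the rest of the pipeline.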

As the CNN, we use VGG16, which has been shown to work well on the data used in this paper and thus provides a strong baseline for comparison (Zhang & Peng, 2024). We also tried other CNNs, as reported in the appendix.

As the VLLM, we use OpenAI’s gpt-4-turbo. We note that some open-source VLLMs are also available at the time of writing, e.g., LLaVA (Liu et al., 2024) and BLIP (Li et al., 2022). While the objective of this paper is to compare approaches, that is, to see whether this type of model (rather than a specific model) improves connotative clustering, it is practically important to consider whether our results can also be obtained using free and open tools. Therefore, we also test the pipeline using LLaVA.

As the prompt, we used: “Describe the connotative meaning of this image, in one paragraph”. We did not set model parameters (e.g., temperature), in order to test the default behavior; instead, we manually checked that the generated texts provided good representations of the images, which was normally the case. The only specific instruction we added (“in one paragraph”) is meant to generate texts that are neither too short (so that there is room for a connotative description that mentions what meaning the image conveys) nor too long (so that the model does not deviate from our instructions). We note that the model parameter limiting the number of tokens could not be used for this purpose, as it resulted in truncated text rather than a shorter but complete text.
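As an illustration of how such a request can be assembled, the following Python sketch builds a request body in the message format of OpenAI's Chat Completions API for image input (a text part plus an `image_url` part carrying a base64 data URL). The function name `build_request` is hypothetical, the paper does not report its request code, and actually sending the body to the API is left out. Consistent with the setup described above, the body sets neither a temperature nor a token limit.

```python
import base64
import json

PROMPT = "Describe the connotative meaning of this image, in one paragraph"


def build_request(image_bytes: bytes, mime: str = "image/jpeg",
                  model: str = "gpt-4-turbo") -> dict:
    """Build a Chat Completions-style request body for a single image.

    No sampling parameters (e.g., temperature) and no max_tokens are set,
    so the model defaults apply and answers are not cut off mid-sentence.
    """
    data_url = f"data:{mime};base64," + base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "text", "text": PROMPT},
                {"type": "image_url", "image_url": {"url": data_url}},
            ],
        }],
    }


if __name__ == "__main__":
    body = build_request(b"\xff\xd8\xff")  # placeholder bytes, not a real JPEG
    print(json.dumps(body)[:80])
```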

To generate text embeddings, we used the BERT-based sentence-embedding model all-MiniLM-L6-v2, which is commonly used because it is open and has shown high accuracy in several studies. Given our research questions, we only need a good-enough model (which we can evaluate downstream in the pipeline by looking at the clustering results), as our objective is not to compare the accuracy of different text embedding models. We did, however, also test the OpenAI text-embedding-3-small model, which has approximately the same accuracy as the large version of the same model while being significantly smaller (and thus cheaper and more energy-efficient). This does not lead to significant differences in our results; we report the details in the appendix.

Several dimensionality reduction methods exist, and we have no strong theoretical reasons to expect some to work better than others. Therefore, we tested a nonlinear method designed to preserve the local neighborhood structure of the data (UMAP) and a classical PCA, each with different parameter settings. For UMAP, we tested 5 and 10 components and 5, 15, and 30 nearest neighbors (for a total of 6 settings); for PCA, we tested 5, 10, and 20 components, as well as the number of components needed to preserve 80% of the variance (4 settings).

As a clustering algorithm, we used HDBSCAN, for three main reasons. First, it does not assume globular clusters or clusters of similar sizes, neither of which is guaranteed in the output of UMAP. Second, it does not require deciding the number of clusters in advance. Third, it labels outliers as noise instead of assigning them to a cluster, which is a reasonable choice for this study: our evaluation is focused on the generation of embeddings, and with a clustering method that forces all data points into a cluster, it would be difficult to tell whether a wrongly clustered image that is very different from the rest results from the embedding step, or whether the embedding did clearly separate the image from the rest but the clustering method forced it into a cluster anyway. HDBSCAN requires specifying the minimum cluster size, which we argue is analytically valuable. The minimum cluster size should be large enough not to create too many clusters or non-generalizing clusters, but not so large as to miss interesting clusters or force the merging of semantically diverse clusters. The specific value depends on the research design, which implies that it should be optimized for specific empirical studies. Here, we test 50, 100, and 200. We note that in an empirical study this parameter would not alone determine the final clustering, because the analyst would then post-process the output of the algorithm, for example by merging analytically similar clusters. However, given the objective of this study, we refrain from introducing any subjectivity and only evaluate the output of the algorithms.
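The experimental grid described in the last two paragraphs can be enumerated explicitly. The Python sketch below uses hypothetical names and does three things. It lists the 6 UMAP and 4 PCA settings and crosses them with the three minimum cluster sizes tested for HDBSCAN. It also shows one way to pick the number of PCA components that preserves 80% of the variance from a list of explained-variance ratios. The parameter names `n_components` and `n_neighbors` mirror those of the umap-learn library. scikit-learn's `PCA` also supports variance-based selection directly when `n_components` is given as a float between 0 and 1.

```python
from itertools import product
from typing import List, Sequence, Tuple

# S3 settings: UMAP with 2 x 3 = 6 combinations, PCA with 3 fixed sizes + 80% variance.
UMAP_SETTINGS = [("umap", {"n_components": c, "n_neighbors": k})
                 for c, k in product((5, 10), (5, 15, 30))]
PCA_SETTINGS = [("pca", {"n_components": c}) for c in (5, 10, 20)] + [
    ("pca", {"explained_variance": 0.80})
]
# S4 setting: HDBSCAN minimum cluster size.
MIN_CLUSTER_SIZES = (50, 100, 200)


def components_for_variance(explained_ratio: Sequence[float], target: float = 0.80) -> int:
    """Smallest k whose first k explained-variance ratios sum to at least `target`."""
    cumulative = 0.0
    for k, ratio in enumerate(explained_ratio, start=1):
        cumulative += ratio
        if cumulative >= target:
            return k
    return len(explained_ratio)  # target not reached: keep every component


def all_runs() -> List[Tuple[str, dict, int]]:
    """Every (reduction method, reduction parameters, min_cluster_size) combination."""
    return [(method, params, size)
            for (method, params), size in product(UMAP_SETTINGS + PCA_SETTINGS, MIN_CLUSTER_SIZES)]
```

Crossing the 10 reduction settings with the 3 minimum cluster sizes gives 30 combinations per pipeline.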

Denotative and Connotative Validity

The first part of the analysis is designed to evaluate whether the VLLM-based approach produces semantic clustering of higher quality, with respect to connotative validity, than CNN-based approaches. In this context, we conceive of denotative semantic validity as the extent to which images in a cluster represent the same or highly similar visual elements, and of connotative semantic validity as the extent to which images in a cluster refer to the same social meaning.

To perform this analysis, we adapt the cluster quality measure defined by Grimmer and King (2011). In the original version, cluster quality measures the extent to which intracluster similarities outdistance intercluster similarities. To use this approach to inspect denotative and connotative semantic validity, we split the similarity concept into denotative similarity, indicating the extent to which two images represent the same or similar visual elements, and connotative similarity, indicating the extent to which two images refer to the same social meaning. In this way, we can measure the extent to which clusters are internally cohesive and yet distinct with respect to denotative and connotative similarity, allowing us to quantify both the denotative and connotative validity of each clustering.
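The adapted measure can be written compactly. The notation below is ours rather than Grimmer and King's. $P_s$ and $P_d$ are the annotated pairs whose two images fall in the same cluster and in different clusters, respectively. $r_{ij}^{(x)}$ is the consensus rating of pair $(i, j)$ for similarity type $x$, coded 0, 1, or 2 as described next.

```latex
\begin{aligned}
s_s^{(x)} &= \frac{1}{|P_s|} \sum_{(i,j) \in P_s} r_{ij}^{(x)},
\qquad
s_d^{(x)} = \frac{1}{|P_d|} \sum_{(i,j) \in P_d} r_{ij}^{(x)},\\[4pt]
Q^{(x)} &= s_s^{(x)} - s_d^{(x)} \in [-2, 2],
\qquad x \in \{\text{denotative}, \text{connotative}\},\quad r_{ij}^{(x)} \in \{0, 1, 2\}.
\end{aligned}
```

A clustering thus obtains a positive score for a given similarity type only when the pairs it places together are, on average, rated as more similar than the pairs it separates.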

We randomly drew 500 pairs of images and asked expert human coders (unaware of whether the images were sampled from the same cluster or from different clusters) to rate the denotative and connotative similarity of the images within each pair on a three-point scale: (1) unrelated, (2) loosely related, (3) closely related. In this paper, we assign the numerical values 0, 1, and 2, respectively, to these three classes. We then compute the average score $s_s$ for same-cluster pairs and the average score $s_d$ for different-cluster pairs. The final score is $s_s - s_d$, which ranges from −2 to 2 and has an expected value of zero for random assignments. The evaluation proceeded in stages. First, the coders rated the denotative and connotative similarity of 250 pairs, reaching an intercoder agreement, measured with Krippendorff’s α, of .55 for the denotative scores and .56 for the connotative scores. The coders then discussed their differences in a consensus session and reached agreement on all scores. Next, the coders individually scored the remaining 250 pairs, and intercoder agreement was measured again (α = .81 for the denotative scores and α = .71 for the connotative scores). Finally, a second consensus session was held, and consensus scores were obtained for all 500 pairs. We also computed the pair-counting F1 measure, which highlights the same trends in the results.
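For readers who want to compute the same agreement statistic on their own annotations, the following Python sketch computes Krippendorff's α for two coders who rated every pair, using the standard coincidence-matrix formulation. The paper does not state which level of measurement was used for the three-point scale, so both a nominal and an interval difference function are shown as options. The function names are hypothetical.

```python
from collections import Counter
from typing import Callable, Sequence


def nominal(c: int, k: int) -> float:
    """Difference function for unordered categories."""
    return 0.0 if c == k else 1.0


def interval(c: int, k: int) -> float:
    """Squared difference, treating the 0/1/2 codes as equally spaced."""
    return float((c - k) ** 2)


def krippendorff_alpha(coder_a: Sequence[int], coder_b: Sequence[int],
                       delta: Callable[[int, int], float] = nominal) -> float:
    """Krippendorff's alpha for two coders with no missing ratings.

    Undefined (division by zero) if only a single value occurs in the data.
    """
    # Coincidence matrix: each unit rated (a, b) contributes the ordered pairs
    # (a, b) and (b, a), each with weight 1 / (m_u - 1) = 1 for two coders.
    coincidences = Counter()
    for a, b in zip(coder_a, coder_b):
        coincidences[(a, b)] += 1
        coincidences[(b, a)] += 1
    marginals = Counter()
    for (c, _), count in coincidences.items():
        marginals[c] += count
    n = sum(marginals.values())  # number of pairable values (2 per unit)
    observed = sum(count * delta(c, k) for (c, k), count in coincidences.items())
    expected = sum(marginals[c] * marginals[k] * delta(c, k)
                   for c in marginals for k in marginals)
    # alpha = 1 - D_o / D_e, with D_o = observed / n and D_e = expected / (n (n - 1))
    return 1.0 - (n - 1) * observed / expected
```

For instance, two coders who agree on three of four pairs, `krippendorff_alpha([0, 1, 2, 0], [0, 1, 2, 1])`, obtain a nominal α of 2/3. The same ratings give 32/39 (about .82) with `delta=interval`, because the single disagreement is only one step apart on the scale.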

For this experiment, we only considered results in which at least 25 pairs of manually labeled images were included in the same cluster and at least 25 pairs were included in different clusters. This is not necessarily the case for all clusterings, because we could not generate a text description for 6 images, and because some images are not included in any cluster but labeled as outliers. For example, the best clustering with respect to the connotative quality score labels 3,869 images as outliers, leading to 85 same-cluster and 146 different-cluster pairs of images.
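The score and the evaluability condition can be combined in one function. The Python sketch below uses hypothetical names and rests on three assumptions. Cluster labels are stored per image. Outliers carry the label -1, the convention of common HDBSCAN implementations. Images without a description simply have no label. Pairs involving either kind of image are skipped. The function would be applied separately to the denotative and the connotative consensus ratings.

```python
from typing import Dict, Optional, Sequence, Tuple

OUTLIER = -1  # HDBSCAN-style label for points not assigned to any cluster


def quality_score(pairs: Sequence[Tuple[str, str, int]],
                  labels: Dict[str, int],
                  min_pairs: int = 25) -> Optional[float]:
    """Return s_s - s_d for one clustering, or None if it is not evaluable.

    pairs:  (image_i, image_j, rating) with rating 0 = unrelated,
            1 = loosely related, 2 = closely related.
    labels: cluster label per image; images without a description are absent.
    """
    same, different = [], []
    for i, j, rating in pairs:
        label_i = labels.get(i, OUTLIER)
        label_j = labels.get(j, OUTLIER)
        if label_i == OUTLIER or label_j == OUTLIER:
            continue  # this pair cannot be evaluated for this clustering
        (same if label_i == label_j else different).append(rating)
    if len(same) < min_pairs or len(different) < min_pairs:
        return None
    s_s = sum(same) / len(same)
    s_d = sum(different) / len(different)
    return s_s - s_d  # in [-2, 2]; expected value 0 for random cluster labels
```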

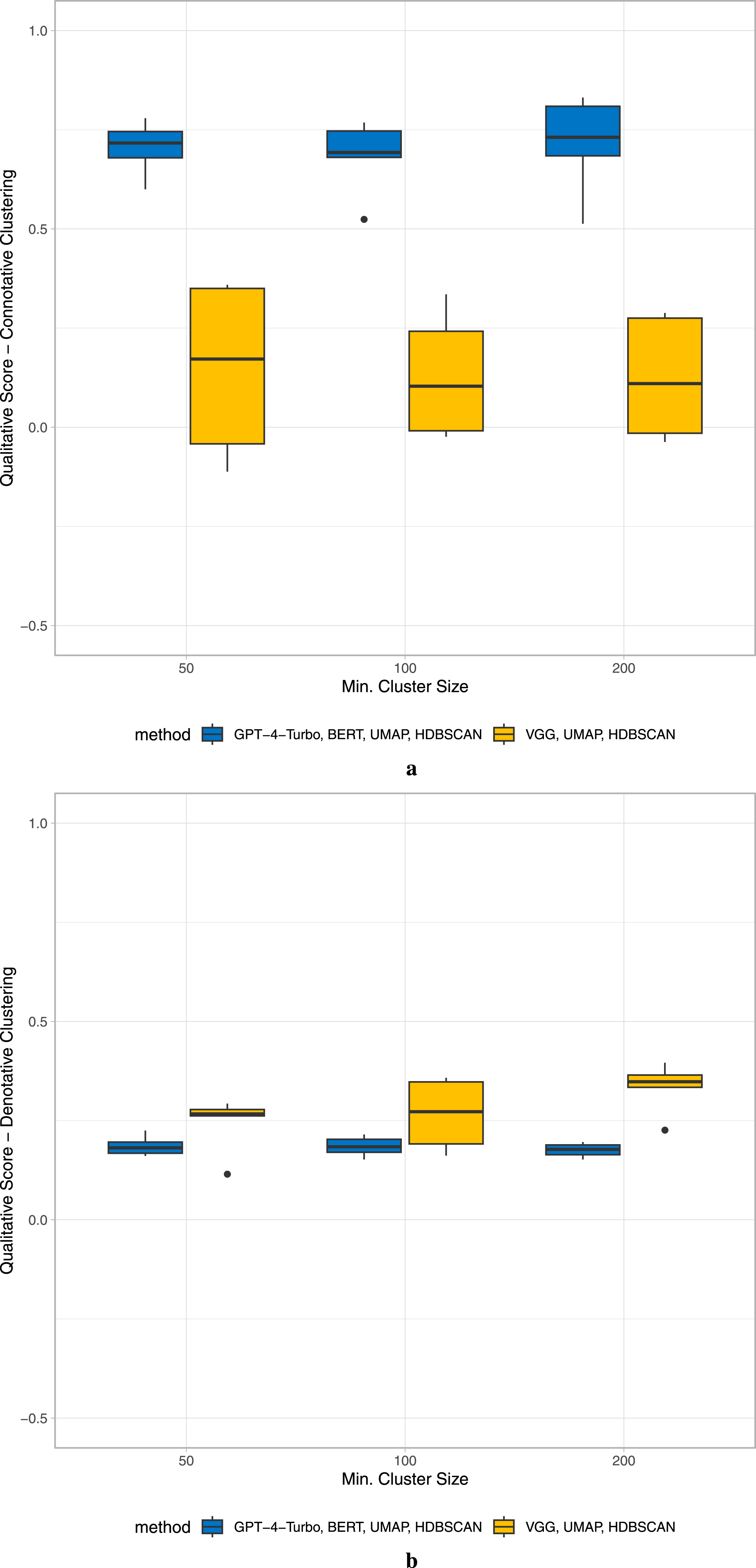

Interpretability

The next part of the analysis concerns the interpretability of the LLM-based approach. In the context of the typical use scenario assumed in this paper, interpretability refers to the interpretability of visual clustering via textual data. Since intermediary textual data is not produced for CNN-based approaches, we assess interpretability only for the VLLM-based approach. LLMs, and over-parametrized models more generally, are often labeled as black boxes. However, since here we are interested in the interpretability of the clustering, this does not necessarily require explaining the models that generate textual descriptions or embeddings, as argued by Grimmer et al. (2021). Clustering quality score for connotative (Figure 4(a)) and denotative (Figure 4(b)) clustering for VLLM (gpt-4-turbo) and CNN (VGG16). We visualize a boxplot to report the results for different strategies of dimensionality reduction. (a) Connotative clustering quality. (b) Denotative clustering quality.

To assess interpretability, we produced a description of each cluster by joining the descriptions of all images in the cluster and returning the k terms with the highest TF-IDF score. This is a common procedure in information retrieval, also available in commonly used text clustering software packages.¹ To reduce the number of terms and make the descriptions more compact, we removed terms that do not convey topic-specific meaning, such as “conveys” and “suggests”. We then tested to what extent the descriptions corresponded to the clusters. To do so, we randomly selected sets of α images, each containing only images from the same cluster, and checked how often a human evaluator could correctly match a set with the description assigned by the algorithm. We also tested reliability, using three evaluators.
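The description step can be sketched in a few lines of Python. In this minimal version, which is an assumption rather than the authors' code, each cluster's joined descriptions form one document. Term frequency is normalized by document length, and inverse document frequency is computed across clusters, so terms that occur in every cluster score zero. The stop list contains the two examples given above plus a few function words chosen for illustration. The default `k = 7` only mirrors the length of the example description reported in the results. Library implementations such as scikit-learn's `TfidfVectorizer` use a smoothed IDF by default, so their exact rankings may differ.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Sequence

# "conveys" and "suggests" come from the text; the other terms are illustrative.
GENERIC_TERMS = {"conveys", "suggests", "image", "the", "a", "an", "and", "of", "to", "in", "with"}


def tokenize(text: str) -> List[str]:
    """Lowercase word tokens, without terms lacking topic-specific meaning."""
    return [t for t in re.findall(r"[a-z]+", text.lower()) if t not in GENERIC_TERMS]


def cluster_descriptions(texts_by_cluster: Dict[int, Sequence[str]],
                         k: int = 7) -> Dict[int, List[str]]:
    """Top-k TF-IDF terms per cluster, treating each cluster's joined texts as one document."""
    docs = {c: Counter(tokenize(" ".join(texts))) for c, texts in texts_by_cluster.items()}
    n_docs = len(docs)
    doc_freq = Counter(term for counts in docs.values() for term in counts)
    descriptions = {}
    for c, counts in docs.items():
        total = sum(counts.values())
        scores = {t: (f / total) * math.log(n_docs / doc_freq[t]) for t, f in counts.items()}
        # Highest score first; ties broken alphabetically for reproducibility.
        descriptions[c] = sorted(scores, key=lambda t: (-scores[t], t))[:k]
    return descriptions
```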

The reason for using α > 1 is that we assume some images may be clustered by mistake. The fact that such images cannot be matched to the descriptions is not a sign of low interpretability but a clustering error, which is already assessed as part of the connotative and denotative validity; with several images per set, a single misclustered image does not prevent the set from being matched.

Results

Connotative and Denotative Clustering Quality

Figure 4 shows the quality score for connotative (a) and denotative (b) clustering for the proposed VLLM-based strategy (using gpt-4-turbo as the specific model) and for the CNN-based clustering (using VGG16). This measure, defined by Grimmer and King (2011) for a generic similarity function and here differentiated into denotative and connotative similarity, is the difference between the average similarity of intra-cluster pairs of images and the average similarity of inter-cluster pairs of images, computed on a sample of manually annotated pairs (see the details in the methods section). Values range between −2 and +2, with zero being the expected value for a random clustering. For each clustering strategy, we show the scores for different values of the minimum cluster size parameter, with each boxplot summarizing the scores obtained with the different parameters for dimensionality reduction performed using UMAP (McInnes et al., 2018). Recall that for UMAP, we tested 5 and 10 components, and 5, 15, and 30 nearest neighbors, for a total of 6 experimental settings.

Figure 5. An example of the impact of increasing minimum cluster size. The connotative semantic coherence of clustering degrades when moving from a minimum cluster size of 50 to a minimum cluster size of 200. (a) Min. cluster size 50. (b) Min. cluster size 200.

The data show a higher quality of connotative clustering for the VLLM-based approach regardless of the minimum cluster size. A larger minimum cluster size increases the variance and produces a few results of lower quality, although all of them are still significantly higher than the results of the CNN-based approach. The quality of the connotative clustering obtained by the CNN-based approach is stable overall and is not clearly affected by the minimum cluster size. The minimum cluster size seems to affect the connotative clustering quality of the VLLM-based approach because, when clusters are forced to be larger, images that look similar but are connotatively diverse can be clustered together (e.g., images of street protests about two different issues).

This can be seen by comparing Figure 5(a) and (b). The cluster represented by the random sample in Figure 5(a) has a very precise focus on the issue of plastic pollution in water, showing both its sources and its effects. The semantically comparable cluster shown in Figure 5(b) has a less well-defined focus, mixing images of plastic pollution in the sea and on land with images more generally connected with waste disposal and pollution. From this perspective, as is often the case in clustering exercises, the right granularity of the concepts that should be clustered together is ultimately a decision for the researcher to make.

Looking at the quality score for denotative clustering, we observe that the CNN-based approach is marginally better than the VLLM-based approach. This is unsurprising, since CNNs have proven capable of clustering images representing the same objects or similar pictures, while the VLLM-based method can easily ignore direct visual similarity in favor of connotative semantic proximity. A good example of this, which also exemplifies the limits of denotative clustering, can be seen in Figure 6, where we compare a cluster obtained using the CNN-based approach (Figure 6(a)) with a cluster obtained using the VLLM-based approach (Figure 6(b)). On the one hand, the CNN-based approach clearly prioritizes shapes, objects, and colors, producing a cluster that, while centered around the visual representation of planet Earth, is semantically diverse (ranging from calls for veganism to climate data). On the other hand, the VLLM-based approach clusters together the different shapes of wind turbines and solar panels with text-only messages calling for a transition to renewable energy. The resulting cluster is highly coherent from a connotative point of view, at the expense of denotative coherence.

Figure 6. A cluster obtained by the CNN-based approach (a) compared with a cluster obtained by the VLLM-based approach (b). The CNN-based approach favors shapes and object similarity, while the VLLM-based approach ignores the visual difference between solar panels and wind turbines and produces a highly coherent cluster around the concept of renewable energy. (a) CNN-based method (min. cluster size 50). (b) VLLM-based method (min. cluster size 50).

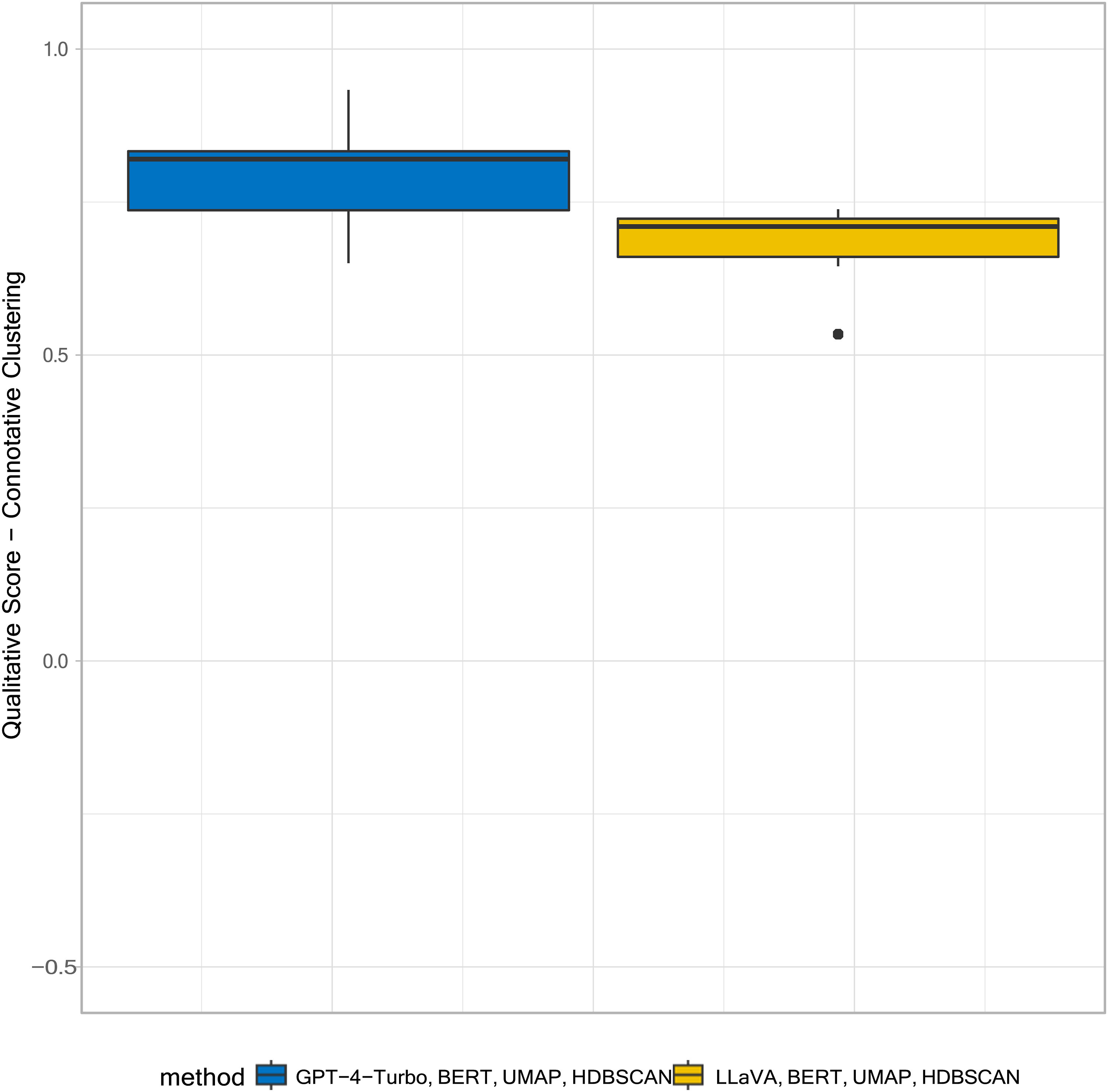

Figure 7 shows the connotative quality of clusterings obtained using a free and open VLLM (LLaVA), with the same settings as the best OpenAI model. In this case too, the connotative quality obtained using a VLLM is significantly higher than that obtained using a CNN. While the objective of this paper is not to assess specific models, we observe that the connotative scores in this experiment are lower than those obtained using the commercial model, while still showing the same trend. A qualitative inspection of the descriptions produced by LLaVA indicates that the free model is less accurate at recognizing text and lacks some of the symbolic knowledge present in GPT. For example, GPT recognized a runic symbol present in a nature image and successfully associated the image with eco-fascist themes. By contrast, the LLaVA model missed both the symbol and its symbolism, leading to an interpretation centered on calm and religiousness. In addition, in a few cases, LLaVA produced completely wrong results (specifically, sequences of random words), which also contributed to the lower scores.

Figure 7. Clustering quality score for connotative clustering for LLaVA (llava-1.5-7b-hf) compared with the results obtained with gpt-4-turbo with the same set of parameters. We visualize a boxplot to report the results for different strategies of dimensionality reduction.

Building on these results, we can confidently answer the first research question: a VLLM-based approach greatly improves the connotative semantic validity of visual clustering over a CNN-based approach, from both a quantitative and a qualitative point of view. We also observe that adopting a VLLM-based approach results in a loss of denotative clustering quality. This decline, albeit small, reflects an inherent trade-off of a connotative approach: if the dataset contains images that are connotatively similar but visually distinct, we would naturally expect denotative quality to drop when prioritizing connotative proximity.

Interpretability

To assess interpretability, we compute a description of each of the 32 clusters generated by one of the best-performing VLLM-based models, where each description is a list of terms with high TF-IDF scores. For example, the description of the cluster in Figure 6(b) is: energy, renewable, solar, wind, turbines, sustainable, message (see the appendix for the full list of descriptions). Three human evaluators were then asked to assign a random sample of image sets (five sets per cluster, three images per set, 160 sets in total) to the clusters using only the descriptions.
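The keyword descriptions can be approximated with a class-based TF-IDF, in which all descriptions belonging to a cluster are concatenated into one document and terms are ranked within it. The sketch below is a minimal standard-library illustration under stated assumptions: the tokenizer, the stopword list, the use of -1 as the outlier label (as in density-based clustering such as HDBSCAN), and the function name `cluster_keywords` are ours, not the pipeline used in this study.

```python
import math
import re
from collections import Counter

def tokenize(text):
    # Lowercase and keep alphabetic tokens only (a simplifying assumption).
    return re.findall(r"[a-z]+", text.lower())

def cluster_keywords(descriptions, labels, top_k=7, stopwords=frozenset()):
    """Return the top_k TF-IDF terms for each cluster.

    All descriptions sharing a cluster label are concatenated into one
    'document'; IDF is computed across clusters, so terms shared by every
    cluster score zero. Items labelled -1 (outliers) are skipped."""
    docs = {}
    for text, label in zip(descriptions, labels):
        if label == -1:
            continue
        tokens = [t for t in tokenize(text) if t not in stopwords]
        docs.setdefault(label, []).extend(tokens)

    n_docs = len(docs)
    # Document frequency: in how many clusters does each term occur?
    df = Counter()
    for tokens in docs.values():
        df.update(set(tokens))

    keywords = {}
    for label, tokens in docs.items():
        tf = Counter(tokens)
        total = len(tokens) or 1  # guard against clusters with no tokens
        scores = {term: (count / total) * math.log(n_docs / df[term])
                  for term, count in tf.items()}
        # Highest score first; ties broken alphabetically for determinism.
        ranked = sorted(scores, key=lambda term: (-scores[term], term))
        keywords[label] = ranked[:top_k]
    return keywords

# Toy example (invented descriptions, not data from the study).
STOP = {"a", "about", "at", "in", "on", "with"}
descriptions = [
    "Wind turbines in a field, a message about renewable energy",
    "Solar panels on a roof, sustainable renewable energy",
    "Students protesting with signs, youth activism",
    "A crowd at a climate protest, youth action",
    "A blurry photo",
]
labels = [0, 0, 1, 1, -1]
print(cluster_keywords(descriptions, labels, top_k=3, stopwords=STOP))
# prints {0: ['energy', 'renewable', 'field'], 1: ['youth', 'action', 'activism']}
```

Treating each cluster as a single document lets terms that are frequent within one cluster but rare across the others rise to the top, which is what makes the keyword list usable as a label for human evaluators.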

The average Cohen’s kappa across the three pairs of evaluators was .74. We then took the majority label, which was available for 150 of the 160 samples (in the ten cases where all three labels differed, we used the label of the first evaluator). On this basis, the average precision and recall over all 32 clusters are both .83, with an overall accuracy of .83. As a reference, assuming equal cluster sizes and random assignment, the expected precision and recall for a cluster is 1/32 ≈ .03.
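The agreement and evaluation measures described above can be sketched in plain Python. This is a minimal illustration assuming that each evaluator's assignments are lists of cluster IDs aligned by sample and that `true` holds the cluster each sample was drawn from; the function names are hypothetical, and giving precision 0 to a cluster that receives no assignments is our assumption, since the text does not say how such cases were handled.

```python
from collections import Counter
from itertools import combinations

def cohen_kappa(a, b):
    """Cohen's kappa for two raters who labelled the same items."""
    n = len(a)
    observed = sum(x == y for x, y in zip(a, b)) / n
    count_a, count_b = Counter(a), Counter(b)
    # Chance agreement from each rater's marginal label frequencies.
    expected = sum(count_a[k] * count_b[k] for k in count_a) / (n * n)
    if expected == 1:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)

def mean_pairwise_kappa(raters):
    """Average kappa over all pairs of raters (three pairs for three raters)."""
    pairs = list(combinations(raters, 2))
    return sum(cohen_kappa(a, b) for a, b in pairs) / len(pairs)

def majority_labels(raters):
    """Majority label per item; if no label has a majority, use the first rater's."""
    labels = []
    for votes in zip(*raters):
        label, count = Counter(votes).most_common(1)[0]
        labels.append(label if count > len(votes) / 2 else votes[0])
    return labels

def macro_precision_recall(true, pred, clusters):
    """Per-cluster precision and recall, averaged over clusters.
    A cluster that receives no predictions gets precision 0 (an assumption)."""
    precisions, recalls = [], []
    for c in clusters:
        tp = sum(t == c and p == c for t, p in zip(true, pred))
        n_pred = sum(p == c for p in pred)
        n_true = sum(t == c for t in true)
        precisions.append(tp / n_pred if n_pred else 0.0)
        recalls.append(tp / n_true if n_true else 0.0)
    return sum(precisions) / len(clusters), sum(recalls) / len(clusters)

def accuracy(true, pred):
    return sum(t == p for t, p in zip(true, pred)) / len(true)

# Random baseline: with k equally sized clusters and uniform random
# assignment, expected per-cluster precision and recall are both 1/k.
print(round(1 / 32, 2))  # 0.03
```

In this sketch, `majority_labels` applied to the three evaluators' lists would yield the predicted labels, which are then compared with the clusters of origin via `macro_precision_recall` and `accuracy`.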

The values of precision and recall are not evenly distributed across clusters (detailed values for all clusters are provided in the appendix): 18 clusters obtain perfect precision and 19 obtain perfect recall. Errors in cluster assignment are in some cases due to two clusters being semantically close. The following are four clear cases: (1) one cluster about conferences and one whose description includes the terms youth, formal, and speaking; (2) two clusters about nature and water, with very similar terms (one including life and boat, the other beauty and river); (3) one cluster about protests and one whose description includes the terms youth, activism, and action; and (4) one cluster about climate change, nature, and message, and one with arctic themes.

Merging these pairs of clusters with similar semantics increases accuracy to .87.
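The effect of merging semantically close clusters on accuracy can be illustrated with a small sketch. It assumes cluster labels are plain IDs and that merges are given as pairs; the function names are hypothetical, the toy labels are invented, and a union-find is used so that the relabelling stays correct even if merges were to chain.

```python
def merge_labels(labels, pairs):
    """Relabel so that each group of merged clusters shares one label.
    Union-find keeps this correct even if merges chain (a-b, b-c)."""
    parent = {}

    def find(x):
        # Follow parent links until reaching a label that maps to itself.
        while parent.get(x, x) != x:
            x = parent[x]
        return x

    for a, b in pairs:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a
    return [find(x) for x in labels]

def accuracy(true, pred):
    return sum(t == p for t, p in zip(true, pred)) / len(true)

# Toy example: clusters 3 and 4 are semantically close and get confused.
true = [3, 4, 5, 4]
pred = [4, 3, 5, 4]
merges = [(3, 4)]
print(accuracy(true, pred))  # 0.5
print(accuracy(merge_labels(true, merges), merge_labels(pred, merges)))  # 1.0
```

The same merge must be applied to both the true and the predicted labels, otherwise confusions between the merged clusters would still count as errors.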

This evaluation demonstrates that, by exploiting the intermediate textual representations, the approach yields clusters that are not only semantically meaningful but also easily interpretable. This is a major advantage over CNN-based methods, for which the interpretability of the resulting clusters is often difficult to evaluate, especially when clusters contain many images. Moreover, representative words can be used to communicate a digestible summary of cluster contents alongside the images themselves.

Conclusion

In this paper, we assess the ability of a VLLM-based image clustering pipeline to produce semantically meaningful and interpretable clusters. We do so in two ways: by defining denotative and connotative similarity functions and manually labelling randomly sampled pairs of images, and by generating textual descriptions of the clusters and testing whether human evaluators can match selected image samples with the right description. In both cases, the evaluators were not aware of the clusters produced by the algorithms. The resulting labels also allow us to evaluate and compare a large number of settings for the two pipelines.

We note that the two components of our pipeline that differ from the CNN-based pipeline have already been used in the computer science literature. Stephan et al. (2024) recently tested both the effect of clustering text descriptions and interpretability based on TF-IDF keywords. TF-IDF keywords are also commonly used in text clustering and are implemented in popular libraries such as BERTopic.²

That said, our research questions focus on new aspects that are fundamental for applications in social research. The evaluation by Stephan et al. (2024) assumes that methods based on deep neural networks can map semantically similar images close together, and that a text-based approach can outperform one not based on text. Yet, although they do not discuss this explicitly, their experiments focus on denotative clustering, e.g., showing that a text-based approach is better at identifying and explaining clusters of objects depicted in the images (bridges, churches, towers, etc.). In this paper, we differentiate between denotative and connotative semantics, suggest that the latter is often what matters in social science research, and show how the different approaches target one or the other. We also study the concepts of semantics and interpretability in the context of social research. This is reflected in our validation, which is based on online communication images and aligned with human-defined, reliable notions of connotative and denotative similarity (instead of, e.g., benchmark datasets used to train classifiers, such as ImageNet and CIFAR), and in the design of the interpretability tests.

The generation of intermediate image descriptions not only improves the connotative quality of the clusterings (which we have argued is the most important aspect of semantic clustering for common social science use cases) and their interpretability, which are the focus of the experimental part of this paper, but also opens several new directions. For one, given the evidence that a VLLM-based approach can provide a good representation of semantic similarity, one could consider using topic modelling, e.g., LDA and its variants (Blei et al., 2003), instead of an embedding + clustering approach. Directly testing topic modelling would not have been methodologically appropriate for this paper, whose objective was to perform a comparison with CNN-based pipelines. To enable this comparison, we replaced only the CNN-based embedding step. In this way, both pipelines produce the same type of intermediate output (embeddings), which can then be processed without changing the rest of the pipeline. However, the fact that VLLMs enable other pipelines should also be considered an advantage of this general approach, and is something to be tested in future work.
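The design choice of swapping only the embedding step can be sketched as a pipeline that takes the embedding function as a parameter. Everything here is a hypothetical stand-in: `text_embed` reads a precomputed `description` field in place of a VLLM call and counts words over a toy vocabulary in place of a text encoder, `visual_embed` reads a precomputed `features` vector in place of a CNN, and `threshold_clusters` is a naive connected-components grouping, not the dimensionality reduction and clustering used in the paper.

```python
import math
from typing import Callable

Vector = list[float]

def cosine(u: Vector, v: Vector) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def threshold_clusters(vectors: list[Vector], threshold: float = 0.5) -> list[int]:
    """Naive single-linkage grouping: link items whose cosine similarity
    exceeds the threshold and label the connected components."""
    labels = [-1] * len(vectors)
    current = 0
    for i in range(len(vectors)):
        if labels[i] != -1:
            continue
        labels[i] = current
        stack = [i]
        while stack:
            j = stack.pop()
            for k in range(len(vectors)):
                if labels[k] == -1 and cosine(vectors[j], vectors[k]) > threshold:
                    labels[k] = current
                    stack.append(k)
        current += 1
    return labels

def run_pipeline(images: list[dict], embed: Callable[[dict], Vector],
                 cluster: Callable[[list[Vector]], list[int]]) -> list[int]:
    # Only `embed` differs between the CNN-based and VLLM-based variants;
    # everything downstream consumes the same kind of output (vectors).
    return cluster([embed(img) for img in images])

VOCAB = ["renewable", "energy", "protest", "youth", "earth", "planet"]

def text_embed(img: dict) -> Vector:
    # Hypothetical: "description" stands in for VLLM output.
    words = img["description"].lower().split()
    return [float(words.count(w)) for w in VOCAB]

def visual_embed(img: dict) -> Vector:
    # Hypothetical: "features" stands in for a CNN feature vector.
    return img["features"]

images = [
    {"description": "wind turbines renewable energy", "features": [1.0, 0.0]},
    {"description": "solar panels renewable energy", "features": [0.0, 1.0]},
    {"description": "youth protest march", "features": [1.0, 0.1]},
]
print(run_pipeline(images, text_embed, threshold_clusters))    # [0, 0, 1]
print(run_pipeline(images, visual_embed, threshold_clusters))  # [0, 1, 0]
```

Because both variants return plain vectors, the clustering function is reused unchanged; the toy output mirrors the contrast discussed above, with the text-based variant grouping the two renewable-energy images and the visual variant grouping by feature similarity instead.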

Another interesting direction following from this study concerns prompt engineering. In this paper, we focus on semantic clustering and interpretability, but one additional potential advantage of a VLLM-based method is flexibility: the same approach can in principle be used to generate descriptions highlighting different types of visual cues (e.g., emotional and aesthetic) and considering different contexts (e.g., specifying the meaning of the image based on knowledge of which type of actor produced it). Stephan et al. (2024) suggest that prompting can also be used to inject external knowledge. This requires a different experimental setting. We also tested alternative prompts in our experiments (without prompt engineering) but obtained no conclusive evidence, and thus suggest a separate, focused experimental study as future work.

While offering clear benefits, the use of VLLMs for image clustering also raises questions about result interpretation and appropriate use. One aspect that should be checked is the potential bias introduced into the analysis, both with respect to possible mistakes and with respect to possible preferences for one cluster over another. This requires a dedicated analysis, but we note anecdotally that the VLLM-based approach produced a cluster about culture, showing people wearing non-Western clothes. While this interpretation was aligned with that of the human evaluators, and thus did not result in lower validity scores, it is contextual and might well not have been aligned with other models or evaluators. It is also interesting to observe, again anecdotally, the prevalence of arctic themes in a cluster supposedly about nature and climate change, suggesting that this association may be present in the training data (Segerberg & Magnani, 2025).

In our experiments, we did not post-process the outliers produced by the clustering method, for example by assigning (some of) them to the clusters. This would have introduced an additional variable, making the experiments more difficult to control without contributing to answering our research questions. However, in studies where it is important to cluster everything that can be clustered, for example to compute a more precise measure of the prevalence of the different visual themes, such post-processing should be considered.
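For studies that do need such a step, one simple option (our assumption, not a procedure evaluated in this paper) is to attach each outlier to the cluster whose centroid is most similar, but only above a similarity threshold, leaving dissimilar items as outliers. The function name `assign_outliers`, the use of -1 as the outlier label, cosine similarity, and the threshold of 0.8 are all hypothetical choices.

```python
import math

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def assign_outliers(vectors, labels, min_similarity=0.8):
    """Give each outlier (label -1) the label of the most similar cluster
    centroid, if that cosine similarity reaches min_similarity."""
    if not vectors:
        return []
    dims = len(vectors[0])
    # Sum and count the embeddings of each non-outlier cluster.
    sums, counts = {}, {}
    for vec, label in zip(vectors, labels):
        if label == -1:
            continue
        acc = sums.setdefault(label, [0.0] * dims)
        for d in range(dims):
            acc[d] += vec[d]
        counts[label] = counts.get(label, 0) + 1
    centroids = {label: [x / counts[label] for x in acc]
                 for label, acc in sums.items()}

    new_labels = list(labels)
    for i, (vec, label) in enumerate(zip(vectors, labels)):
        if label != -1 or not centroids:
            continue
        best = max(centroids, key=lambda c: cosine(vec, centroids[c]))
        if cosine(vec, centroids[best]) >= min_similarity:
            new_labels[i] = best
    return new_labels

vectors = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.95, 0.05], [0.7, 0.7]]
labels = [0, 0, 1, -1, -1]
print(assign_outliers(vectors, labels))  # [0, 0, 1, 0, -1]
```

The threshold trades coverage against purity: a lower value clusters more images, which changes prevalence estimates, but risks adding images that do not fit the cluster's theme.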

As a last important consideration, we should point out that our experiments show relative improvements (with respect to CNN-based approaches) and good absolute performance, but the results are still not perfect in two regards. First, while semantically more meaningful (assuming that we are often interested in connotative semantics), the produced clusters still depend on algorithmic choices that reduce the researcher’s control over the semantic similarity being used. It is one thing for the clusters to be meaningful, and another for them to be the clusters the researcher would have produced in the absence of computational constraints. We acknowledge that we have not tested this, and that it would be important to study this dimension. Second, the clusters still contain several mistakes, for example animals incorrectly identified as polar bears by the VLLM, or minor visual elements that gain semantic centrality (e.g., an image of an activist eating candy from a bag depicting penguins being clustered together with pictures showing the consequences of climate change in the polar regions). Both issues suggest that, while in this paper we have focused on a specific part of the image clustering pipeline, depending on the research question we expect many variations of this pipeline to be followed by (or intertwined with) interactive steps. It is worth noting that the widespread availability of LLMs and VLLMs is renewing interest in clustering visual data for the social sciences, both by combining textual and visual elements and by combining different types of clustering models into unified hybrid pipelines.

Footnotes

Funding

This work was supported by research grants from the Swedish Research Council (2021-02769) and by the POLARVIS project, supported by DFF Independent Research Fund Denmark (0257-00007B) and FORTE, the Swedish Research Council for Health, Working Life and Welfare (2021-01646), under the CHANSE ERA-NET Co-fund programme, which has received funding from the European Union's Horizon 2020 Research and Innovation Programme under Grant Agreement 101004509.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.