Abstract

This paper provides a practical example and guide on how to augment or replace human coders with Large Language Models (LLMs) during content analysis. We demonstrate this by replicating and extending an influential study on environmental communication. Our setup, running locally on consumer-grade hardware, makes it feasible for university researchers operating within typical computational and legal constraints. We validate the LLM’s performance by replicating the original study’s codings, scaling the analysis to cover a tenfold increase in articles, and extending the LLM’s application to a comparable German-language corpus, comparing these results to human expert coders. We offer guidelines for instructing LLMs, validating output, and handling multilingual coding, presenting a replicable framework for future research. This paper is intended to systematically guide other researchers when integrating LLMs into their workflows, ensuring reliable and scalable coding practices. We demonstrate several advantages of LLMs as coders, including cost-effective multilingual coding, overcoming the limitations of small-sample content analysis, and improving both the replicability and transparency of the coding process.

Content analysis is a fundamental method in communication and media studies, enabling systematic examination of text for patterns, themes, and trends (Coe & Scacco, 2017). Traditionally, this requires human annotation of large corpora, which is both time-consuming and resource-intensive. With the advent of computational text analysis, scholars could algorithmically scale up research on journalistic and political texts (Laurer et al., 2024; Van Atteveldt et al., 2022; Wilkerson & Casas, 2017). Supervised machine learning, especially BERT-style classifiers, has become essential for natural language processing (NLP) (Osnabrügge et al., 2023). However, these methods demand large, manually annotated datasets and multiple training-validation cycles (Laurer et al., 2024; Wilkerson & Casas, 2017). Reliability can also drop when models encounter out-of-domain data, and minor input alterations (e.g., typos) may disrupt classification (Gröndahl et al., 2018). Large Language Models (LLMs) build on transformer architectures and can learn from context without requiring task-specific training (Corral et al., 2024), potentially complementing or replacing conventional approaches.

In this paper, we outline a practical guide to integrating LLMs into content analysis, demonstrated by replicating and extending Feldman et al. (2015)’s study on newspaper coverage of climate change. By adopting LLMs, we address high costs, limited scalability, and replication challenges inherent in human coding. Our key objectives are to: (1) provide guidelines for LLM setup, validation, and handling of multilingual or proprietary data; (2) replicate Feldman et al.’s original coding; (3) demonstrate scalability by analyzing ten times more articles; and (4) assess the model’s multilingual capabilities through a comparable German-language corpus. To streamline and clarify our objectives, we pose the following research questions:

Literature Review

Current State of the Methodological Integration of LLMs

The field of NLP was revolutionized by LLMs—massive pre-trained models with the capacity to generate and seemingly “understand” text. Trained on extensive sources (e.g., web scrapes, open-access books, and Wikipedia) (Brown et al., 2020), LLMs have demonstrated remarkable capabilities in zero-shot and few-shot learning scenarios, where they can perform tasks without explicit training on specific datasets and often perform comparably to human coders (Gretz et al., 2023; Pilny et al., 2024). This in-context learning ability allows them to adapt to new tasks with minimal additional training (Brown et al., 2020). Studies show their effectiveness across tasks like sentiment analysis, stance detection, and accusation detection (Corral et al., 2024; Fields et al., 2024).

Once trained on diverse data, LLMs reduce the need for researchers to provide labeled datasets (Kuzman et al., 2023). Moreover, LLMs can handle a variety of languages, helping to overcome the focus on texts from Anglo-Saxon countries (Zhong et al., 2023) and the restriction of analyses to only a few languages due to a lack of coders proficient in multiple languages. In content analysis, LLMs can outpace manual methods by removing the need for coders to actually read texts and for extensive coder training (Coe & Scacco, 2017).

Huang et al. (2023) found that ChatGPT showed “great potential as a data annotation tool” (p. 2), outperforming traditional machine learning models in certain tasks. Similarly, Gilardi et al. (2023) demonstrated that ChatGPT can outperform human annotators in terms of speed and accuracy. LLMs have also been found to outperform human coders in reliability for annotation tasks (Törnberg, 2023) and can identify complex concepts such as hate speech or hypocrisy claims (Corral et al., 2024; Reiss, 2023).

However, concerns remain about their reliability, consistency, and validity (Reiss, 2023). They are inherently non-deterministic, producing variable outputs for the same input. Although such disagreement parallels that seen with multiple human coders, no standardized procedures yet exist to enhance intercoder reliability in automated LLM workflows.

Strategies to Improve Reliability

Designing effective prompts is critical to improving the performance and reliability of LLMs. Their sensitivity to wording and potential randomness require carefully formulated and engineered prompts (Argyle et al., 2023; Wang et al., 2024). Structured, iterative prompting techniques can enhance performance on LLM tasks while simultaneously reducing bias coming from the model’s parameters (Furniturewala et al., 2024). Below we list techniques that we implemented and that we hope are generally useful when including LLMs in content analysis:

Using

Thus, through this approach, the LLM assists

Pooling outputs from

Despite all the above, human involvement remains a crucial part of the workflow in the form of a

Pipeline for Developing LLM Instructions

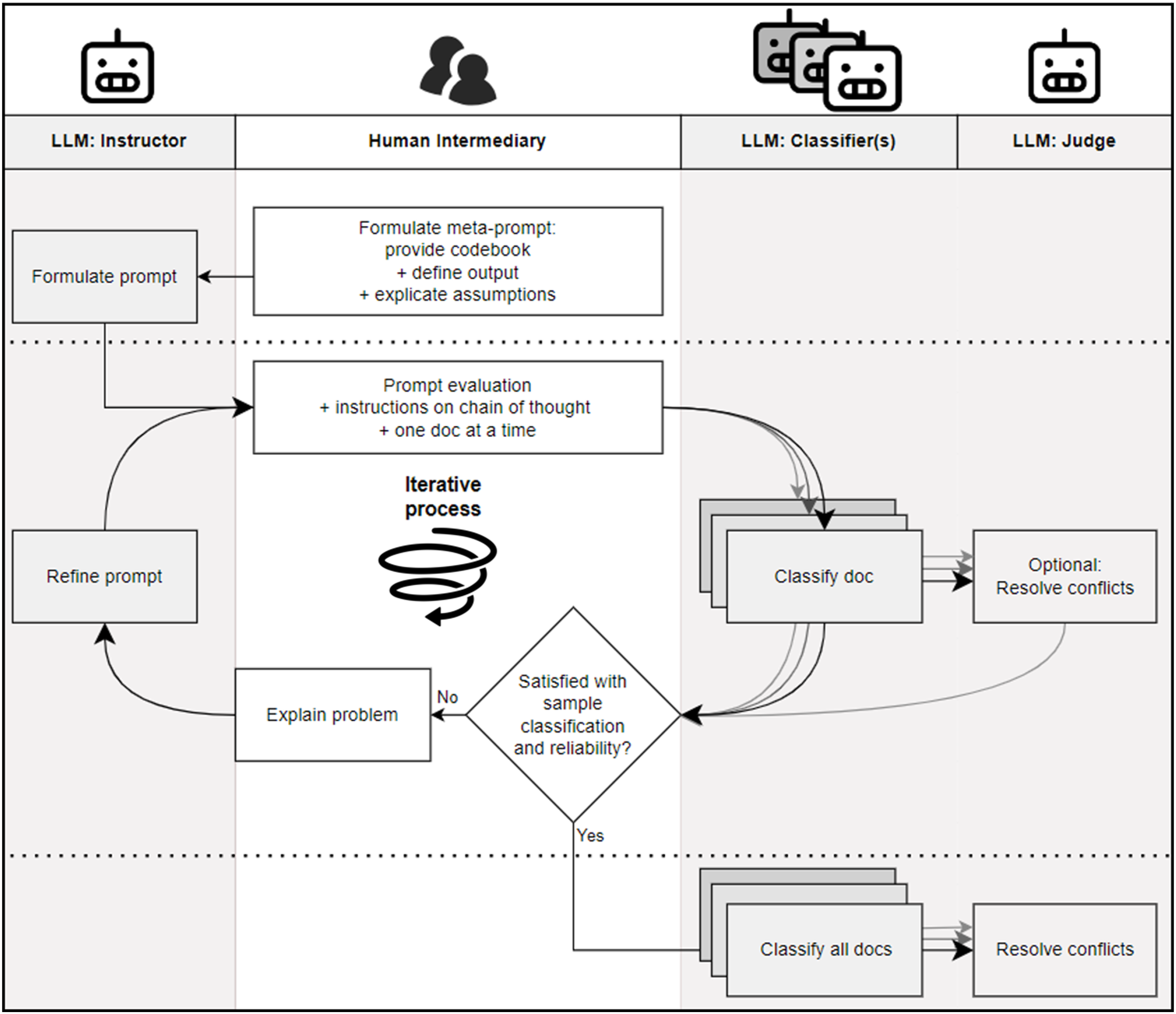

Here, we introduce our agentic workflow for LLM-driven content analysis. It comprises three main entities—Instructor, Classifier, and Judge—plus the Human Intermediary who oversees prompt refinement. We present this general framework before demonstrating its concrete application in a separate section.

Agents and Their Roles

Building on the findings of previous research on the iterative combination of classifications from multiple LLM agents with adjudication by human experts (e.g., Heseltine et al., 2024), we present a pipeline for a continuously self-refining classification process. This classification pipeline is general enough to be useful to others, leveraging the collaborative interaction between three key entities: the Instructor Agent, the Human Intermediary, and the Classifier Agent. The process, visualized in Figure 1, ensures that text classification tasks are refined iteratively, increasing coding accuracy and consistency. Additionally, we found it beneficial to incorporate a Judge Agent to reduce noise and enhance accuracy in the Classifier’s outputs, especially when coding complex concepts like, in our case, “efficacy.” Below, we provide a brief description of each agent’s role.

Our agentic workflow when optimizing and validating the prompt for the classifier agent.

The Iterative Workflow

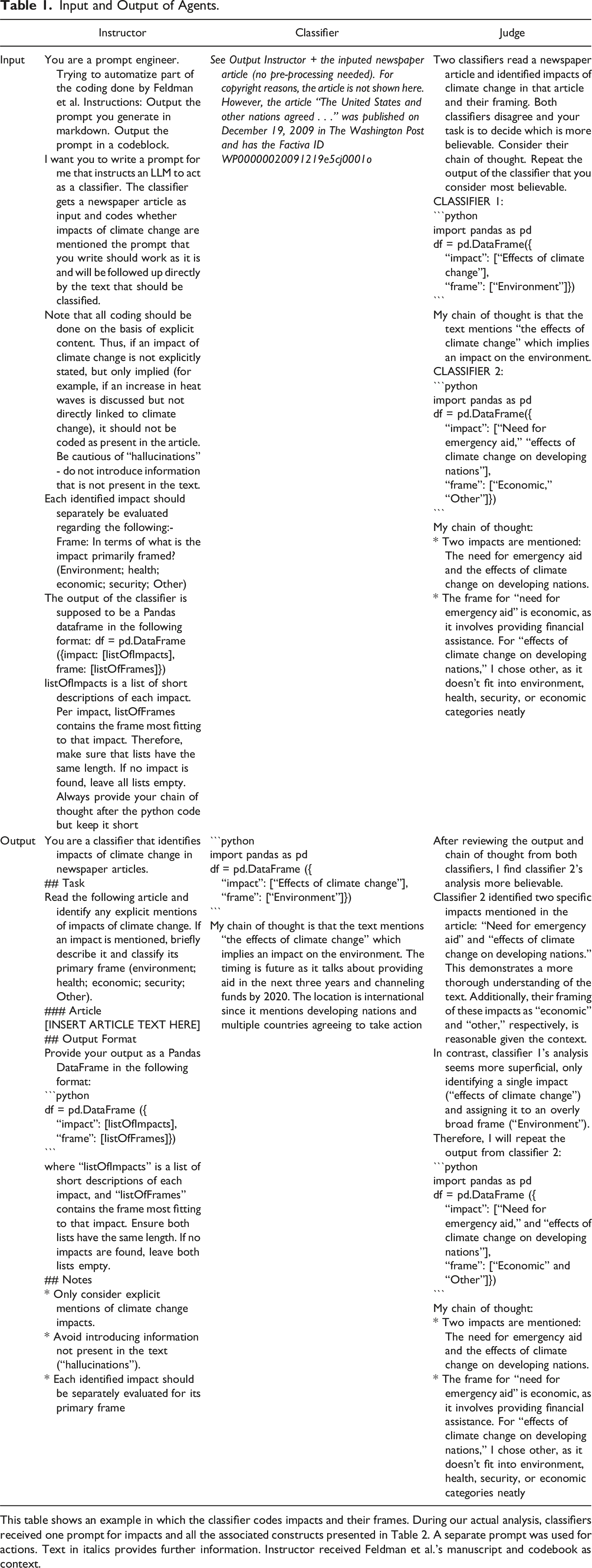

Input and Output of Agents.

This table shows an example in which the classifier codes impacts and their frames. During our actual analysis, classifiers received one prompt for impacts and all the associated constructs presented in Table 2. A separate prompt was used for actions. Text in italics provides further information. Instructor received Feldman et al.’s manuscript and codebook as context.

This prompt is then reviewed and refined by the Human Intermediary. Once the prompt is approved by the human, the Classifier codes a sample of documents based on these instructions. If the classifications are satisfactory, the system moves forward to classify the entire dataset. In our case, the criterion was high intercoder reliability (Krippendorff’s alpha > 0.7) both (a) across multiple iterations of the Classifier on the same document and (b) between the Instructor and human codes. If issues arise, the Human Intermediary intervenes, identifying problems and explaining them to the Instructor. The Instructor is then asked to adjust the prompt to address the problem.
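To make the acceptance criterion concrete, the following is a minimal sketch of Krippendorff’s alpha for nominal codes, computed from the coincidence matrix. The function name, the input layout (one list of codes per document), and the example labels are illustrative assumptions and not part of our pipeline; established statistical packages can be used instead.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(units):
    """Krippendorff's alpha for nominal codes.

    `units` holds one list per document with the codes that the different
    coders (or repeated Classifier runs) assigned; None marks a missing code.
    """
    coincidences = Counter()
    for codes in units:
        values = [code for code in codes if code is not None]
        if len(values) < 2:
            continue  # a single code cannot be paired with anything
        weight = 1.0 / (len(values) - 1)
        for i, j in permutations(range(len(values)), 2):
            coincidences[(values[i], values[j])] += weight

    # Marginal totals per category and the number of pairable codes.
    totals = Counter()
    for (code, _other), weight in coincidences.items():
        totals[code] += weight
    n = sum(totals.values())

    disagreement_observed = sum(
        weight for (a, b), weight in coincidences.items() if a != b
    )
    disagreement_expected = sum(
        totals[a] * totals[b] for a in totals for b in totals if a != b
    ) / (n - 1)
    if disagreement_expected == 0:
        raise ValueError("alpha is undefined without variation in the codes")
    return 1.0 - disagreement_observed / disagreement_expected


# Hypothetical example: two Classifier runs on four documents.
runs = [
    ["present", "present"],
    ["absent", "absent"],
    ["present", "absent"],
    ["present", "present"],
]
alpha = krippendorff_alpha_nominal(runs)
acceptable = alpha > 0.7  # threshold used in our case
```

In this toy example the single disagreement among four documents already pulls alpha below the threshold, which illustrates how strict the criterion is for small validation samples.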

This iterative loop, where prompts are continuously adjusted and outputs are re-evaluated, enhances the classification process by improving the accuracy and robustness of the model. This approach leverages both the strengths of LLMs in performing large-scale classification tasks and the precision that human oversight provides. However, we found that little further gain in reliability is to be expected after two iterations of the refinement loop. If reliabilities are not acceptable by that point, we suggest reconsidering the theoretical concept or dropping the affected concepts from this workflow.
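The control flow of this loop can be sketched as follows. All names are hypothetical: `classify_sample`, `reliability`, and `revise_prompt` are placeholders for the Classifier, the reliability check, and the joint work of Human Intermediary and Instructor. The default cap of two refinement rounds is an assumption based on the observation above.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class RefinementResult:
    prompt: str
    alpha: float
    accepted: bool
    history: List[Tuple[str, float]] = field(default_factory=list)


def refine_prompt(
    initial_prompt: str,
    classify_sample: Callable[[str], object],     # hypothetical: Classifier on a sample
    reliability: Callable[[object], float],       # hypothetical: e.g. Krippendorff's alpha
    revise_prompt: Callable[[str, object], str],  # hypothetical: human + Instructor revision
    threshold: float = 0.7,
    max_refinements: int = 2,
) -> RefinementResult:
    """Evaluate a prompt and revise it at most `max_refinements` times."""
    prompt = initial_prompt
    history: List[Tuple[str, float]] = []
    alpha = float("nan")
    for round_index in range(max_refinements + 1):
        codes = classify_sample(prompt)
        alpha = reliability(codes)
        history.append((prompt, alpha))
        if alpha > threshold:
            return RefinementResult(prompt, alpha, True, history)
        if round_index < max_refinements:
            prompt = revise_prompt(prompt, codes)
    # Not accepted: reconsider the concept instead of iterating further.
    return RefinementResult(prompt, alpha, False, history)


# Toy stand-ins that only demonstrate the control flow.
toy_alphas = {"v0": 0.50, "v1": 0.65, "v2": 0.75}
result = refine_prompt(
    "v0",
    classify_sample=lambda prompt: prompt,
    reliability=lambda codes: toy_alphas[codes],
    revise_prompt=lambda prompt, codes: "v" + str(int(prompt[1:]) + 1),
)
```

Returning the full history keeps a record of every prompt version and its reliability, which is useful when documenting the refinement process.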

Our Case Study

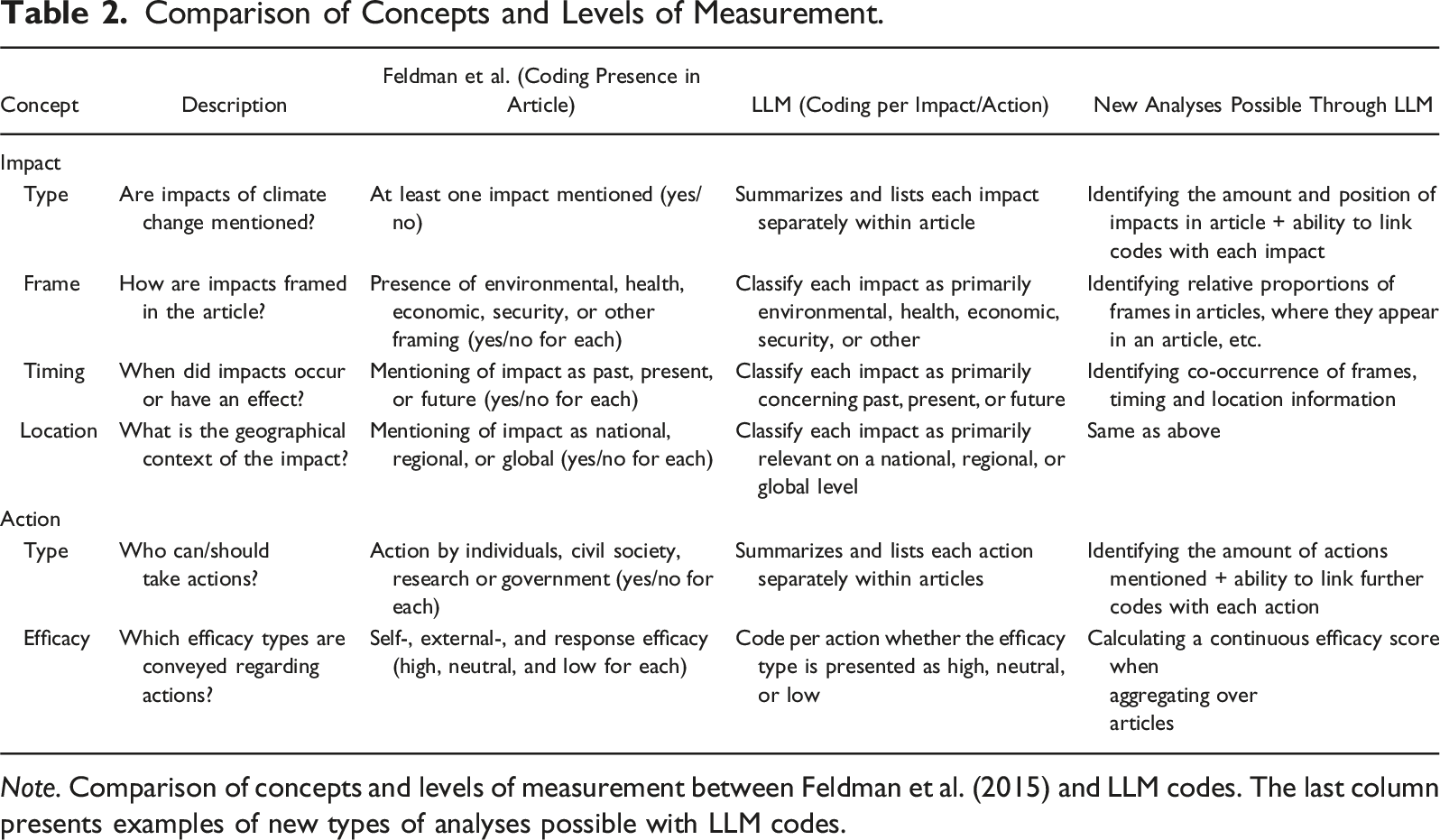

Comparing Feldman et al.’s and Our Approach

In this section, we demonstrate how we applied the workflow described in Section 3 to replicate and extend Feldman et al. (2015). They examined how climate change was portrayed in four major U.S. newspapers between 2006 and 2011. Initially, they collected all articles containing “climate change” or “global warming” in the headlines or lead paragraphs, resulting in 3274 articles. To manage the workload associated with manual coding, they selected every fifth article, yielding a sample of 642 articles that were coded by three human experts.

While commendable given the human labor required, this sampling introduced limitations that restricted their analysis. The uneven distribution of articles across newspapers—for instance, USA Today contributed only 67 articles—made fine-grained comparisons statistically challenging and increased the risk of Type I errors due to multiple testing. This lack of statistical power potentially hindered detecting nuanced differences in climate change reporting over time or across outlets. This is not a fundamental critique of Feldman et al. but highlights the scalability advantages LLMs offer for content analyses.

Comparison of Concepts and Levels of Measurement.

Note. Comparison of concepts and levels of measurement between Feldman et al. (2015) and LLM codes. The last column presents examples of new types of analyses possible with LLM codes.

Our Setup and Sample

We next detail how the agentic pipeline in Section 3 was used to replicate Feldman et al.’s codes, validate the LLM’s reliability, and then scale up our analysis. We aimed to reproduce the original coding decisions from Feldman et al. with our pipeline, thereby assessing the model’s ability to replicate human coding reliably. We then expanded the analysis by applying the LLM to the entire corpus of 3274 articles, demonstrating how LLMs can help overcome the limitations of small-sample studies. Furthermore, we extended the study to a comparable German-language corpus (4863 articles) to evaluate the LLM’s multilingual capabilities. For comparability between the samples, all articles were collected from the same database (Factiva) using the same inclusion criteria as Feldman et al. For the United States, we used the same four outlets as Feldman et al. (2015): The New York Times (liberal), The Washington Post (center-liberal), USA Today (center), and The Wall Street Journal (conservative). For Germany, we chose four of the country’s largest dailies based on prior classifications (Donsbach et al., 1996; Eilders, 2004): taz (liberal), Süddeutsche Zeitung (center-liberal), Frankfurter Allgemeine Zeitung (center-conservative), and Die Welt (conservative). Both sets of outlets are thus broadly comparable in terms of national reach and ideological diversity within their respective media landscapes.

To achieve our objectives, we selected the Llama 3.1–70b model with 6-bit quantization, executed on a high-end consumer-grade local computing setup. Llama 3.1 was chosen after pre-testing several state-of-the-art models available in 2024, with Llama 3.1 demonstrating robust multilingual capabilities and the ability to handle nuanced, large-scale textual data. We chose the largest model (in terms of number of parameters) that could fit in the RAM available to us, since larger models generally perform better when classifying complex concepts (Corral et al., 2024). Running the LLM locally allowed us to process proprietary data securely while adhering to data privacy regulations and legal restrictions, constraints typical for research in media studies and whenever dealing with confidential data. The data is not passed to corporate service providers and remains on the scientist’s own research infrastructure. This ensures that no copyright infringement or privacy issues arise from the unauthorized transfer of (training) data.
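A rough back-of-the-envelope calculation shows why quantization matters for fitting a model of this size into local memory. The sketch below counts the weights only and assumes exactly 6 bits per parameter; the function name is illustrative, and real quantization formats add some overhead for scaling factors, while the context cache requires additional memory at run time.

```python
def weight_memory_gb(n_parameters: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the model weights alone, in gigabytes."""
    return n_parameters * bits_per_weight / 8 / 1e9


# 70 billion parameters at 6 bits versus unquantized 16-bit weights.
quantized_gb = weight_memory_gb(70e9, 6)    # about 52.5 GB
full_gb = weight_memory_gb(70e9, 16)        # about 140 GB
```

Under these assumptions, 6-bit quantization reduces the weight footprint to roughly three eighths of the 16-bit size.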

As our starting point, we provided the LLM with the original codebook used by Feldman et al. (2015). Their codebook included definitions of each coding category, criteria for determining the presence or absence of specific information, and examples from the original dataset. We then instructed the LLM to go beyond the codebook and code each identified impact and action separately. In Section 3, we describe in detail how these instructions were developed.

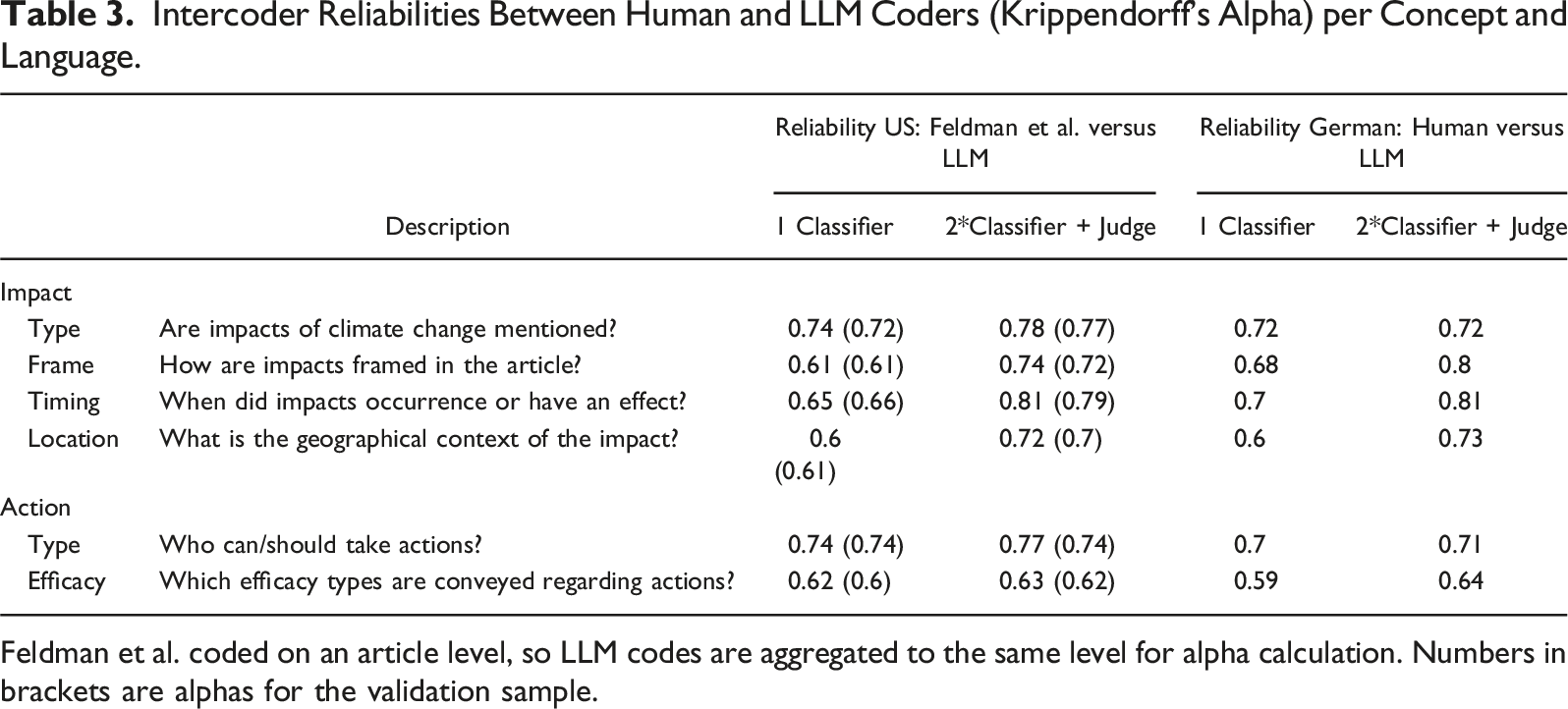

Validation of LLM Classifications

We compared the LLM output with the original human-coded data to assess accuracy and consistency. Iterative refinements were made to the prompts and instructions based on identified discrepancies, aiming for a Krippendorff’s alpha of 0.7 or higher, usually considered indicative of reliable coding. The most successful refinements for improving reliability scores were: (1) explicitly reminding the model not to “hallucinate” (i.e., invent information not present in the source text), (2) breaking down complex coding instructions into separate prompts, and (3) requiring the model to quote directly from the text to justify each assigned code.
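The third refinement also makes automated checks possible: a quote that the model offers as justification either occurs in the article or it does not. The following is a minimal sketch of such a check, assuming that quotes and article are available as plain strings. The function names and the normalization rules (whitespace, case, typographic quotation marks) are illustrative choices, not a description of our implementation.

```python
import re

_QUOTE_MAP = str.maketrans({"\u2018": "'", "\u2019": "'", "\u201c": '"', "\u201d": '"'})


def _normalize(text: str) -> str:
    """Unify quotation marks, collapse whitespace, and ignore case."""
    return re.sub(r"\s+", " ", text.translate(_QUOTE_MAP)).strip().casefold()


def unsupported_quotes(article: str, quotes: list) -> list:
    """Return the quotes that cannot be found verbatim in the article."""
    haystack = _normalize(article)
    missing = []
    for quote in quotes:
        needle = _normalize(quote)
        if not needle or needle not in haystack:
            missing.append(quote)
    return missing


# Hypothetical example: the second quote does not occur in the text.
article = "Rising seas  threaten\ncoastal cities, officials said."
flagged = unsupported_quotes(
    article, ["rising seas threaten coastal cities", "glaciers are melting"]
)
```

Codes whose supporting quotes are flagged in this way can be discarded or passed on for human review.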

Intercoder Reliabilities Between Human and LLM Coders (Krippendorff’s Alpha) per Concept and Language.

Feldman et al. coded on an article level, so LLM codes are aggregated to the same level for alpha calculation. Numbers in brackets are alphas for the validation sample.
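The aggregation step can be sketched as follows. The rule used here, that a concept counts as present in an article if at least one of the separately coded impacts or actions carries it, is an assumption made for illustration, as are the function name and the example labels.

```python
def aggregate_to_article_level(article_ids, item_codes, concepts):
    """Collapse item-level LLM codes into one presence indicator per article.

    `item_codes` is an iterable of (article_id, concept) pairs, one pair per
    coded impact or action. Articles without any coded item get all zeros.
    """
    found = {article_id: set() for article_id in article_ids}
    for article_id, concept in item_codes:
        found.setdefault(article_id, set()).add(concept)
    return {
        article_id: {concept: int(concept in present) for concept in concepts}
        for article_id, present in found.items()
    }


# Hypothetical example with two concepts and three articles.
article_level = aggregate_to_article_level(
    article_ids=["a1", "a2", "a3"],
    item_codes=[("a1", "economic"), ("a1", "economic"), ("a2", "environmental")],
    concepts=["economic", "environmental"],
)
```

The resulting article-level indicators have the same shape as the original human codes and can be passed directly to a reliability calculation.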

We leveraged the LLM’s multilingual capabilities by applying the same coding instructions without translation, allowing the model to process German-language articles directly. Recent LLMs, particularly the Llama version used in this study, demonstrate strong performance in classification tasks across both German and English. This effectiveness has been shown to remain consistent even when the language of the query differs from that of the document (Kleinle et al., 2024). Results from the LLM were compared with coding performed by two German expert coders on 200 randomly selected articles (see Table 3). Intercoder reliability between the human coders and LLMs was then assessed. When comparing consistencies between LLM and human codings, we see similar performance across the two languages, achieving acceptable levels of reliability. The classifications for “efficacy” form an exception with reliability <0.7. We discuss possible reasons for this in Section 5.

Analyzing the results in Table 3, we observe that incorporating the Judge agent generally enhanced the alignment between the LLM’s classifications and those of human coders. Specifically, using two classifiers in combination with a judge improved the consistency and reliability of the predictions, as evidenced by higher Krippendorff’s alpha values. Additionally, as detailed in Appendix Table A1, the LLM tended to be more sensitive than human coders, identifying the presence of coded variables more frequently within the texts. This increased sensitivity was especially pronounced when the Judge agent was included, suggesting that while the ensemble approach enhances detection rates, it may also lead to a higher frequency of positive identifications compared to human coding. However, we also show in the Appendix that this did not qualitatively change the results found by the more conservative human coders.

Discussion and Recommendations

Our experience integrating LLMs into content analysis demonstrates their practical advantages, including scalability, multilingual reach, and cost-effectiveness. Yet these benefits demand a structured approach, and human oversight remains essential, particularly for prompt refinement and performance evaluation.

Computational overhead varies greatly depending on how LLMs are instructed. Breaking coding into subtasks can boost reliability but multiplies processing time because the model must reset and reprocess each document to minimize hallucinations. Researchers should consider which subtasks are truly necessary and bundle them carefully to manage resources.

Similarly, re-running the classifier to improve reliability significantly adds overhead. With two M3 Max processors, our process took two weeks of continuous operation, so we limited ourselves to two runs per article. Future advances may ease these trade-offs, but balancing computational costs against analytical rigor remains inevitable under current constraints.

A key insight is the transparency provided by the LLM’s chain-of-thought reasoning. Discrepancies between the LLM’s classifications and those of Feldman et al.’s human coders were challenging to reconcile due to the lack of access to human coders’ rationales. In contrast, the LLM offered explicit reasoning for each classification, facilitating troubleshooting and enhancing replicability.

Our procedure uses a Judge agent to review the Classifier’s chain-of-thought reasoning, providing a systematic, transparent way to reconcile discrepancies and enhance accountability. While many content-analysis pipelines rely on a single pass or simple voting, the Judge explicitly weighs chain-of-thought outputs from multiple runs before rendering a final decision—an advantage when nuanced interpretation (e.g., “efficacy,” Table 3) is required. In traditional human-coded analyses, a third expert or internal discussions typically settle coder disagreements without leaving transparent rationales. By contrast, our automated Judge preserves explicit reasoning, enabling future researchers to examine or challenge each decision.
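The interaction between repeated Classifier runs and the Judge can be sketched as follows. This is a simplified illustration under stated assumptions: `classifier` and `judge` are hypothetical callables standing in for LLM calls, the default of two runs is illustrative, and the toy judge below only mimics the idea of weighing rationales against the text.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple


@dataclass(frozen=True)
class Classification:
    code: str
    rationale: str  # the model's stated reasoning for the code


def classify_with_judge(
    document: str,
    classifier: Callable[[str], Classification],                    # hypothetical LLM call
    judge: Callable[[str, Sequence[Classification]], Classification],  # hypothetical LLM call
    n_runs: int = 2,
) -> Tuple[Classification, List[Classification]]:
    """Run the classifier several times and let the judge decide.

    All runs are returned alongside the final decision so that the
    rationales remain available for later inspection.
    """
    runs = [classifier(document) for _ in range(n_runs)]
    return judge(document, runs), runs


# Toy stand-ins: the "judge" prefers a rationale that quotes the document.
def toy_judge(document, runs):
    for run in runs:
        if run.rationale in document:
            return run
    return runs[0]


document = "Installing solar panels can cut household emissions."
canned = iter([
    Classification("no_efficacy", "The article offers no solutions."),
    Classification("efficacy", "solar panels can cut household emissions"),
])
final, all_runs = classify_with_judge(document, lambda doc: next(canned), toy_judge)
```

Unlike a vote count, the judge receives the rationales themselves, so its decision can depend on how well each run is grounded in the text.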

We acknowledge alternative approaches to reconciling multiple LLM outputs, including ensemble-learning techniques from machine learning. Although the Judge agent shares similarities with the concept of an “ensemble,” it differs by leveraging chain-of-thought rationales, rather than merely counting votes. A full benchmark against different ensemble methods would be informative but lies beyond our present scope.

Efficacy coding proved especially challenging, with reliability dropping both across repeated LLM runs and between LLM output and human coders. This may reflect the nuanced, less concrete nature of “efficacy” compared to attributes like geographic focus. Despite three prompt refinement cycles aimed at clarifying this construct, we did not reach our target reliability. This shortfall could stem from the concept’s theoretical vagueness, incomplete instructions, or current LLM limitations—exposing a common issue that even human coders debate. Future work must clarify whether advanced models or refined conceptual frameworks can resolve these persistent gaps.

Looking ahead, we recommend exploring the most recent suggestions to further improve LLM reliability. For example, Kumar et al. (2025) show that a pipeline combining chain-of-thought reasoning with retrieval, self-consistency, and self-verification halves hallucination rates and improves accuracy by 6 to 10 percentage points on fine-grained classification benchmarks. Drawing on these findings, we would, first, break the task into a two-stage sequence, detecting the mere presence of any efficacy claim before further classification of the claim; this hierarchical approach lightens the model’s cognitive load and reduces false positives. Second, we would exploit the larger context windows of current models by prefacing each prompt with several previously validated efficacy sentences, giving the model concrete anchor points for its reasoning. Third, we would add a self-verification pass: the model re-examines its own prediction in light of the retrieved evidence and either confirms the code or flags the item for human review. Kumar et al. report that such a retrieval-augmented, self-verifying workflow pushes Krippendorff’s α from roughly 0.65 to above 0.80 on comparable tasks, suggesting that comparable gains are attainable for the efficacy construct analyzed here.
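The three proposed steps could be combined along the following lines. This is a sketch of a workflow we have not implemented: `detect`, `classify`, and `verify` are hypothetical callables standing in for separate LLM prompts, `anchors` stands for previously validated efficacy sentences, and the labels and toy rules in the example exist only to make the sketch runnable.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence


@dataclass(frozen=True)
class EfficacyResult:
    code: Optional[str]  # None means that no efficacy claim was detected
    needs_review: bool   # True if self-verification did not confirm the code


def code_efficacy(
    text: str,
    detect: Callable[[str], bool],                  # stage 1: any efficacy claim at all?
    classify: Callable[[str, Sequence[str]], str],  # stage 2: which kind of claim?
    verify: Callable[[str, str], bool],             # stage 3: does the model confirm it?
    anchors: Sequence[str],                         # validated example sentences
) -> EfficacyResult:
    """Two-stage coding followed by a self-verification pass."""
    if not detect(text):
        return EfficacyResult(code=None, needs_review=False)
    code = classify(text, anchors)
    return EfficacyResult(code=code, needs_review=not verify(text, code))


# Toy stand-ins based on keywords, for demonstration only.
def toy_detect(text):
    return any(word in ("can", "cannot") for word in text.lower().split())


def toy_classify(text, anchors):
    return "negative" if "cannot" in text.lower().split() else "positive"


def toy_verify(text, code):
    return len(text.split()) >= 5  # very short snippets are sent to a human


anchors = ["Households can cut emissions by insulating homes."]
confirmed = code_efficacy(anchors[0], toy_detect, toy_classify, toy_verify, anchors)
flagged = code_efficacy("We cannot win.", toy_detect, toy_classify, toy_verify, anchors)
skipped = code_efficacy("The weather was mild today.", toy_detect, toy_classify, toy_verify, anchors)
```

Because the detection stage filters out texts without any efficacy claim, the more demanding classification and verification prompts are only run where they are needed.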

We also found that the LLM’s multilingual capabilities greatly facilitate analyses across different languages. The same coding instructions could be applied irrespective of the language of the text, eliminating the need for translation or separate coding protocols and coders. This is a substantial improvement over traditional methods, where language barriers among human coders and the limitations of language-specific machine learning models often hinder comprehensive multilingual analyses.

LLMs will likely extend beyond text to multimodal data, such as images, audio, and video, greatly expanding the scope of content analysis. Our workflow can adapt to these developments, enabling researchers to examine diverse data while preserving methodological rigor and transparency.

We encourage future work to harness these LLM capabilities for greater analytical depth. Sharing chain-of-thought outputs in scholarly datasets can foster more open, replicable research. Locally deployed LLMs also allow researchers to code proprietary data without breaching privacy or legal constraints, ensuring the benefits of advanced text analysis remain ethically and legally sound.

Despite these opportunities, replacing human coders with LLMs alters both research design and text interpretation. LLMs may match or exceed human reliability but do not truly “understand” content, instead applying learned patterns. Qualitative inquiry, by contrast, relies on critical, in-depth engagement with text. Incorporating LLMs therefore requires rethinking how we infer meaning and draw conclusions, underscoring the need for triangulation with human-led approaches to ensure robust interpretations.

As in all applications, the use of LLMs for content analysis raises ethical concerns related to transparency, accountability, and the risk of perpetuating biases without proper human oversight. Additionally, there are environmental concerns, particularly regarding energy consumption and the associated environmental effects when training the models.

In conclusion, while LLMs present powerful tools for content analysis, their effective deployment requires careful consideration of computational resources, methodological design, and the balance between depth and reliability of analysis. Many of these challenges are not new, however. Rather, novel LLM-based approaches to text analysis make the need for scientific rigor more pressing.

Supplemental Material

Supplemental Material - A Practical Guide and Case Study on How to Instruct LLMs for Automated Coding During Content Analysis

Supplemental Material for A Practical Guide and Case Study on How to Instruct LLMs for Automated Coding During Content Analysis by Mike Farjam, Hendrik Meyer, and Meike Lohkamp in Social Science Computer Review

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.
