Abstract

Large language models show promising capability in some qualitative content analysis tasks; however, research reporting their performance in identifying initial codes that underpin subsequent analysis is scarce. This paper explores the suitability of GPT-4 to assist in building a codebook for a discourse network analysis (DNA) of a recent alcohol policy reform. DNA is a codebook-driven approach to identifying groupings of actors who use similar policy framings. The paper uses GPT-4 to identify initial codes (‘concepts’) and related quotes in 108 news articles and interviews. The results produced by GPT-4 are compared to a codebook prepared by researchers. GPT-4 identified over two-thirds of the concepts found by the researchers, and it was highly accurate in screening out a large volume of irrelevant media items. However, GPT-4 also provided many irrelevant concepts that required researcher review and removal. The discussion reflects on the implications for using GPT-4 in codebook preparation for DNA and other situations, including the need for human involvement and sample testing to understand its strengths and limitations, which may limit efficiency gains.

Introduction

In this exploratory paper, we consider the feasibility of using a large language model (LLM) to assist with the inductive analysis required to build a codebook. In future work, the codebook will be used in deductive coding for a discourse network analysis (DNA) of an alcohol policy debate.

Building the codebook involves identifying the policy arguments that different stakeholders use in public media. While there are benefits to researchers developing a codebook themselves (e.g. familiarity with the data assists in interpreting findings), our project required review of a large number of media items to build a comprehensive codebook, and we aimed to assess whether and how using an LLM, OpenAI’s GPT-4, could make the task more manageable. A careful assessment of GPT-4’s performance in this task is critical, as errors in the codebook would carry through to affect the study findings.

Study Context

In 2021, the New Zealand government announced its intention to review the Sale & Supply of Alcohol Act of 2012; minor amendments were later enacted in September 2023. We intend to use DNA to identify coalitions that were active in the associated policy debate. DNA has been used in previous studies relevant to public health to examine the complex groupings of stakeholders who attempt to influence policy and the ways they frame policy issues (e.g. Buckton et al., 2019; Fergie et al., 2019).

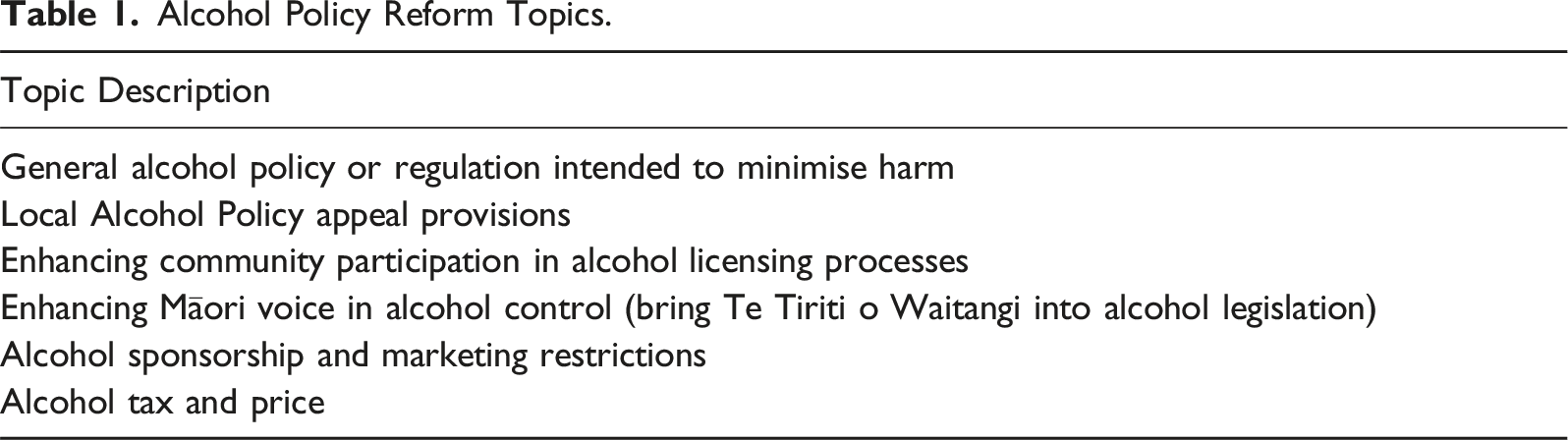

Whereas typical DNA studies cover one policy issue, initial discussions of the New Zealand alcohol reforms revolved around determining a suitable scope for the legislative review. Health advocates called for a broad review of the Act, including attention to alcohol availability, tax and marketing, a review of a process for establishing local alcohol policies, and the role of te Tiriti o Waitangi (the founding document of New Zealand) and voice of Māori (the indigenous people) in alcohol policy and licensing decisions. Ultimately the reform was confined to licensing and local alcohol policy processes.

Discourse Network Analysis

DNA provides visual maps of discourse coalitions or groupings of policy actors that may shape the development of a policy debate. These coalitions are represented in a visual network diagram, where ties between actors are based on their shared support or rejection of similar policy concepts. The targeted concepts may be defined in various ways, for example, as policy instruments (e.g. an alcohol marketing ban), or arguments that support a policy stance (e.g. alcohol marketing bans reduce underage drinking).

The concepts we focus on are justifications or arguments actors have given for or against alcohol policy change (hereafter referred to as ‘concepts’), in order to identify the discourse networks – clusters of actors who framed alcohol policy issues in similar ways – during the policy reform.

The concepts are identified at a content level within public statements to build a codebook. The statements are often drawn from large datasets involving long pieces of text such as media articles or written policy submissions. The codebook is used to conduct a relatively simple content analysis, that is, no further analysis is undertaken to identify themes; for each relevant statement in the text, DNA requires a record of the matching codebook concept used, the actor and their agreement (support for or opposition to the concept). This is sufficient to produce diagrams of the discourse network.
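To make the record structure concrete, the following is a minimal sketch of how coded statements (actor, concept, agreement) could be turned into ties between actors. The class and function names, and the example actors, are hypothetical and are not taken from the Discourse Network Analyzer software; the counting rule (one tie increment per shared concept and stance) is one common way to build a congruence network and is assumed here for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Statement:
    actor: str     # named individual or organisation
    concept: str   # codebook concept the statement matches
    agrees: bool   # True = supports the concept, False = opposes it


def congruence_ties(statements):
    """Count, for each pair of actors, the (concept, stance) positions they share."""
    actors_by_position = defaultdict(set)
    for s in statements:
        actors_by_position[(s.concept, s.agrees)].add(s.actor)

    ties = defaultdict(int)
    for actors in actors_by_position.values():
        # Every pair of actors holding the same position gains one unit of tie weight.
        for a, b in combinations(sorted(actors), 2):
            ties[(a, b)] += 1
    return dict(ties)


# Hypothetical records, for illustration only.
example = [
    Statement("Actor A", "Marketing bans reduce underage drinking", True),
    Statement("Actor B", "Marketing bans reduce underage drinking", True),
    Statement("Actor C", "Marketing bans reduce underage drinking", False),
]
ties = congruence_ties(example)
```

In this toy example only Actor A and Actor B are tied, because Actor C takes the opposing stance on the same concept.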

DNA has traditionally involved substantial time and resources to manually code media articles. First a codebook is developed, often based on a sample of articles (e.g. a 10% sample, Buckton et al., 2019). All articles are then read to identify relevant policy statements, which are coded deductively.

Table 1. Alcohol Policy Reform Topics.

Recent Use of LLMs for Inductive and Thematic Analysis

Early studies using LLMs for qualitative analysis tasks have been exploratory and note the lack of established methodology (e.g. Dai et al., 2023; De Paoli, 2023; Gilardi et al., 2023; Kirsten et al., 2024; Tai et al., 2024; Xiao et al., 2023). They identify various limitations that must be considered, such as potential bias in the model training, production of illogical outputs, and limited transparency as to how these ‘black box’ models reach conclusions.

The studies above have tested accuracy by comparing qualitative analysis by LLMs to that conducted by human coders (HCs) on the same data. Others have used GPT-4 as a second coder or assistant alongside HCs, for instance, to support the identification of initial codes, and the organisation and review of coding schemes and themes (Dai et al., 2023). The studies indicate that LLMs can produce promising results in the type of content analysis tasks that are also involved in DNA, particularly the relatively less complex tasks of deductive coding of text statements against a codebook and sentiment analysis. In the latter, LLM performance was comparable to HCs but with considerable speed and cost advantages. Nevertheless, due to the limitations of the models, the majority of studies indicate it is desirable to maintain human oversight. In large datasets, it is further recommended that manual analysis of test samples be conducted to assess and report the accuracy of tasks completed by LLMs (Riordan et al., 2024).

As a first step, it is important to evaluate the suitability of LLMs for the component tasks of a qualitative analysis process like DNA (Kirsten et al., 2024). As noted, LLMs appear well suited to the type of tasks involved in the deductive steps of DNA. To the best of our knowledge, however, the performance of LLMs in identifying relevant initial codes in a dataset to develop a codebook has not been compared against HCs, although one study reports that most initial codes generated by GPT-4 contained the structural elements the authors were seeking (Drápal et al., 2023).

We anticipate the inductive coding required to build a codebook will be the most challenging step of DNA for GPT-4 as it involves a degree of interpretation to recognise relevant concepts; in our case, this means identifying both the mention of one of six relevant aspects of alcohol policy (Table 1) and a reason for or against reform. Our research questions are therefore:

1. To what extent does GPT-4’s identification of relevant concepts in media items align with that of HCs for the purposes of building a codebook for DNA?
2. Based on the identified alignment and limitations, to what extent can GPT-4 be used to independently identify the concepts in a large sample of media items?

Methods

As recommended by Riordan et al. (2024), HCs and GPT-4 separately identified concepts in a test sample of media items. We then compared GPT-4’s outputs against those of the HCs, treated as the benchmark, to assess the degree of alignment.

Data Sources

Online media items were identified by a commercial media monitoring service using keywords relating to alcohol policy reform. The search returned 3990 media items drawn from New Zealand media outlets including traditional press, blogs, press releases, industry news outlets, and websites. Radio interviews were included to capture wider perspectives on alcohol and because some stations are a key source of news relevant to Māori.

The items were published between January 2021, when the government’s intention to review the alcohol legislation was officially confirmed, and September 2023 when an amendment was enacted. Due to the range of keywords and prominence of alcohol in the media, initial checks indicated the full dataset included many items that were not relevant: for instance, reports on alcohol-involved vehicle crashes and outlet licensing hearings which did not contain policy statements.

Trial Sample

A random sample of 108 media items was drawn with the total number of interviews and text articles proportional to their prevalence in the dataset. The sample size was expected to yield approximately 30 relevant media items, which we considered a practical size for our trial, that is, to assess GPT-4’s performance with regard to how it might assist with identifying additional relevant concepts in the full dataset.
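A proportional draw of this kind can be sketched as follows. This is an illustration under stated assumptions rather than the procedure actually used: the function name, the `kind` field, the fixed seed, and the 10% share of interviews in the toy dataset are all hypothetical (the paper does not report the split between interviews and text articles).

```python
import random


def proportional_sample(items, key, n, seed=0):
    """Draw about n items so each stratum's share mirrors its share of `items`."""
    rng = random.Random(seed)
    strata = {}
    for item in items:
        strata.setdefault(key(item), []).append(item)

    sample = []
    for members in strata.values():
        # Rounding each quota means the total can differ from n by an item or two.
        quota = round(n * len(members) / len(items))
        sample.extend(rng.sample(members, min(quota, len(members))))
    return sample


# Hypothetical dataset of 3990 items with an assumed 10% share of interviews.
dataset = [
    {"id": i, "kind": "interview" if i % 10 == 0 else "article"}
    for i in range(3990)
]
trial = proportional_sample(dataset, key=lambda item: item["kind"], n=108)
```

Fixing the seed makes the draw repeatable, which helps when the same trial sample has to be reconstructed later for checking.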

For the majority of articles, a Python script using the web scraping package BeautifulSoup (https://pypi.org/project/beautifulsoup4/) was used to download the article text from the list of links provided by the media monitoring service. This failed for some specific media sources due to their web pages requiring JavaScript code to run in the browser. An additional script was written to download articles from these sources using the browser automation package Selenium (https://www.selenium.dev/) running Firefox in headless mode.
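The text-extraction step can be illustrated with a dependency-free sketch. Note the substitution: the study used BeautifulSoup (and Selenium for pages requiring JavaScript), whereas this example uses Python's standard-library `html.parser` so that it runs without extra packages. The class name, the choice to keep only `<p>` text, and the sample page are assumptions; downloading the page is omitted.

```python
from html.parser import HTMLParser


class ParagraphExtractor(HTMLParser):
    """Collect the text inside <p> elements, skipping <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.paragraphs = []
        self._in_p = False
        self._skip_depth = 0
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "p":
            self._in_p = True
            self._buffer = []

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self._skip_depth = max(0, self._skip_depth - 1)
        elif tag == "p" and self._in_p:
            # Collapse runs of whitespace and drop empty paragraphs.
            text = " ".join("".join(self._buffer).split())
            if text:
                self.paragraphs.append(text)
            self._in_p = False

    def handle_data(self, data):
        if self._in_p and self._skip_depth == 0:
            self._buffer.append(data)


def article_text(html):
    """Return the paragraph text of an HTML page, one paragraph per line."""
    parser = ParagraphExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.paragraphs)


# Hypothetical page content, for illustration only.
page = (
    "<html><body><script>var x = 1;</script>"
    "<p>Alcohol law <b>review</b> announced.</p><p>  </p></body></html>"
)
text = article_text(page)
```

A parser of this kind only sees the HTML it is given, which is why pages that build their content with JavaScript need a browser-automation step first.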

For radio items, the audio files were downloaded and a Python script using the OpenAI API had the audio transcribed by the Whisper model (‘whisper-1’).

Researcher Identification of Concepts

The software package Discourse Network Analyzer (Leifeld, 2023) was used to manually identify and annotate relevant passages of text with the following variables: 1. Actor – a named individual or organisation; 2. The policy topic discussed (of the six relevant topics); 3. Concept (the argument supporting or opposing the policy topic).

Concepts were identified at a descriptive content level, as suggested by Leifeld (2017). Two HCs coded half the media items independently, compared initial findings and coded the remainder collaboratively. Where one sentence or statement referred to more than one concept, each distinct concept was coded. Initially 91 relevant concepts were found in the sample, within 25 of the 108 articles (the remainder contained no relevant statements). Through further discussion, the HCs combined some closely related concepts and removed duplicates, reducing the total number from 91 to 48 for the initial codebook. The concepts were grouped into ‘families’ for ease of reference.

GPT-4 Prompt Design

Drawing on recommended practices for prompt development (Zhang et al., 2023), we tested several prompts on a much smaller sample of 12 media items that had been coded by the HCs, eight of which contained relevant concepts. A small sample size reduces certainty in the effectiveness of the prompt, but allowed for rapid trial and error assessment while fine tuning its design.

We aimed for the prompt to capture all relevant concepts, accepting that some irrelevant concepts would also be returned.

A system message can provide task context and parameters to GPT-4. We instructed the model to act as a researcher, to be objective and accurate and to only draw findings from evidence present in the supplied text.

The prompt was designed to apply to each media item. As one media item or one sentence might contain multiple concepts, the prompt instructed GPT-4 to list each distinct concept in a media item separately.

We then tested several prompts informally, observing whether the desired categories of outputs were returned in the desired structure, that is, 1. a short summary of the concept is supplied; 2. a text segment is quoted containing the concept; 3. the concept (argument) is provided by an identifiable actor and relates to one of the six desired policy topics; 4. irrelevant concepts or segments of text are not returned.

We observed that the longer prompts with more context were more effective than the first shorter prompts (available in supplemental file 1) at producing the desired output structure and identifying relevant media items and concepts, and included fewer irrelevant concepts.

One recurring problem was that GPT-4 often identified a policy itself or a policy gap as a concept, for example, ‘Need to reform marketing policy’, instead of the supporting reason or argument we were interested in. By adding an instruction describing this error and asking GPT-4 to avoid it, the issue was largely but not entirely resolved.

Further, GPT-4 often returned irrelevant concepts from drink-drive crash reports and reports on hearings for alcohol outlet licences. Almost all such ‘decoy’ media items contained no concepts relevant to our target policy areas and so we added an instruction to mark them as ‘not applicable’.

The final prompt included the refinements above, and requested quotes from the text containing each identified concept to assist later validation, and stipulated an output structure to support further analysis, setting out the policy topic, the concept, the related quote, or ‘none’ if no concept was found.

It is notable that test runs produced varying results using the same prompt for the same items. We therefore ran tests with the system ‘temperature’ set to 0 (its lowest level of randomness), 0.5, and 1.0. The lower temperatures still produced varying outputs and somewhat fewer of the target concepts, and consequently we chose the default temperature of 1.0. The final prompt was tested twice at temperature 1.0.

GPT-4 Identification of Concepts in the 108-Item Sample

We used a Python script to automate combining the prompt with each of the 108 media items in our trial sample, requesting analysis by GPT-4 through OpenAI’s API with the system message and temperature set, and writing the structured outputs returned by the API to a structured data file.
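The automation described above might be organised along the following lines. This is a sketch under assumptions, not the study's script: the system message and prompt wording are placeholders (the actual prompts are in supplemental file 1), the pipe-delimited reply format is hypothetical, and the API call is replaced by an injected `ask_model` function (here a stand-in, `fake_model`) so the example runs offline.

```python
import csv
import io

# Placeholder wording; the authors' actual prompt is in their supplemental file.
SYSTEM_MESSAGE = "You are a researcher. Be objective and use only the supplied text."
PROMPT = "List each distinct argument for or against alcohol policy change as: topic | concept | quote"
FIELDS = ["item_id", "topic", "concept", "quote"]


def build_messages(item_text):
    """Combine the system message, the prompt, and one media item."""
    return [
        {"role": "system", "content": SYSTEM_MESSAGE},
        {"role": "user", "content": f"{PROMPT}\n\n{item_text}"},
    ]


def parse_reply(item_id, reply):
    """Turn 'topic | concept | quote' lines into rows; a reply of 'none' yields no rows."""
    rows = []
    for line in reply.splitlines():
        parts = [part.strip() for part in line.split("|")]
        if len(parts) == 3 and all(parts):
            rows.append(dict(zip(FIELDS, [item_id, *parts])))
    return rows


def run_batch(items, ask_model, out_file, temperature=1.0):
    """Send every item to the model and write the parsed rows as CSV."""
    writer = csv.DictWriter(out_file, fieldnames=FIELDS)
    writer.writeheader()
    total = 0
    for item_id, text in items.items():
        reply = ask_model(build_messages(text), temperature)
        rows = parse_reply(item_id, reply)
        writer.writerows(rows)
        total += len(rows)
    return total


def fake_model(messages, temperature):
    """Stand-in for the real API call, returning canned replies."""
    if "marketing" in messages[1]["content"]:
        return "Marketing | Marketing exposure increases youth drinking | 'ads target young people'"
    return "none"


# Hypothetical media items, for illustration only.
items = {
    "a1": "A health group said alcohol marketing targets young people.",
    "a2": "A driver was charged after a crash.",
}
buffer = io.StringIO()
count = run_batch(items, fake_model, buffer)
```

Passing the model call in as a function keeps the prompt assembly and output parsing testable without network access; a quoted passage that itself contains a `|` character would need a more robust output format than the one assumed here.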

Concept Matching

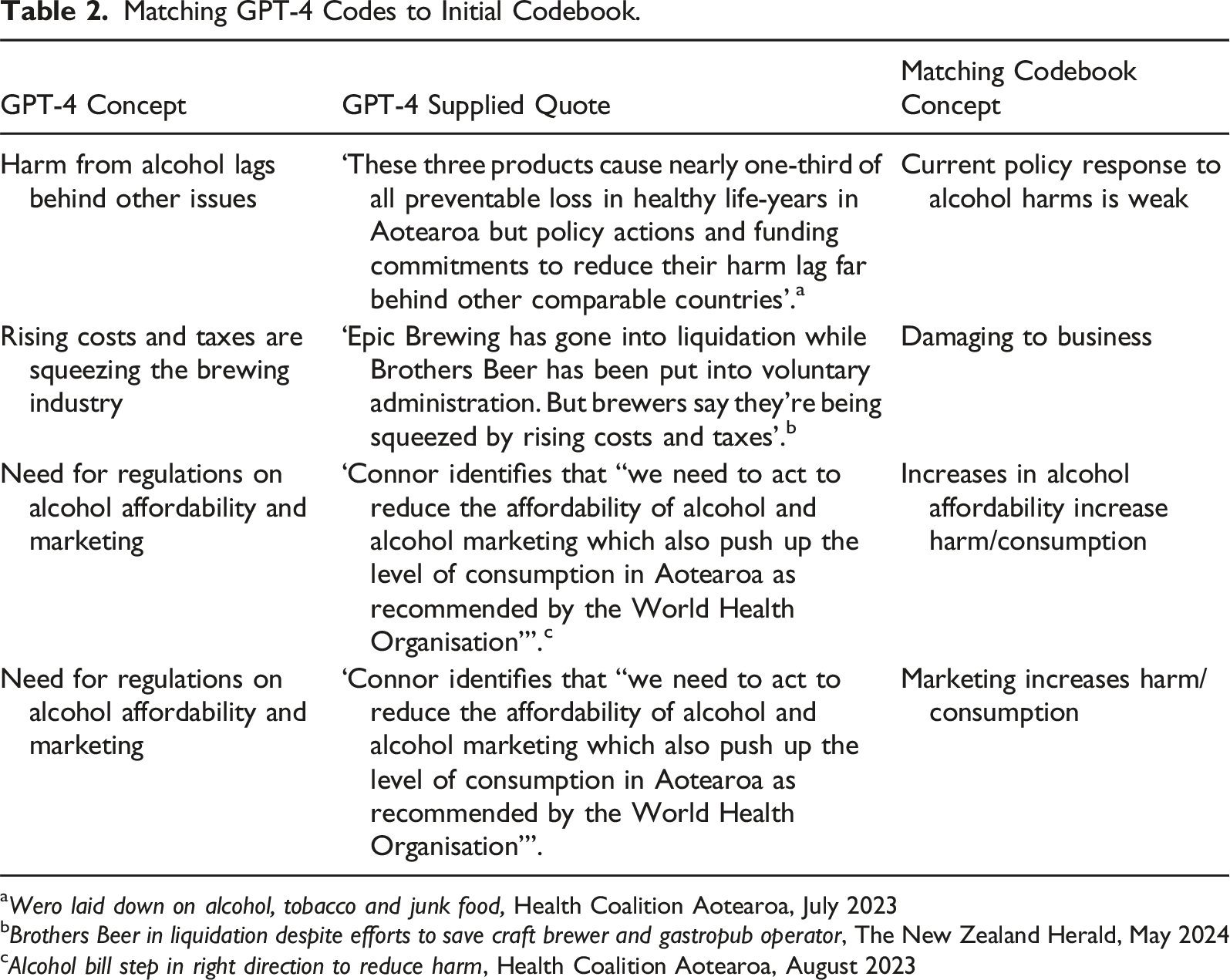

Table 2. Matching GPT-4 Codes to Initial Codebook.

Source media items listed with the table: Wero laid down on alcohol, tobacco and junk food (Health Coalition Aotearoa, July 2023); Brothers Beer in liquidation despite efforts to save craft brewer and gastropub operator (The New Zealand Herald, May 2024); Alcohol bill step in right direction to reduce harm (Health Coalition Aotearoa, August 2023).

If the raw concept did not convey a clear reason for policy change, the supplied quote was also considered to aid interpretation and matching, as in the final example in Table 2. Where an item contained two concepts, the HC matched each of these. For example, the final two items in Table 2 concern the effects of both alcohol affordability and marketing on consumption.

Validation Process

Using the initial codebook generated by the HCs as a gold standard for the sample, we calculated the proportion of concepts in the codebook that were identified by GPT-4. Concepts identified by GPT-4 but not the HCs were also reviewed by the HCs for potential relevance.

Results

Assessment of Media Item Relevance by GPT-4

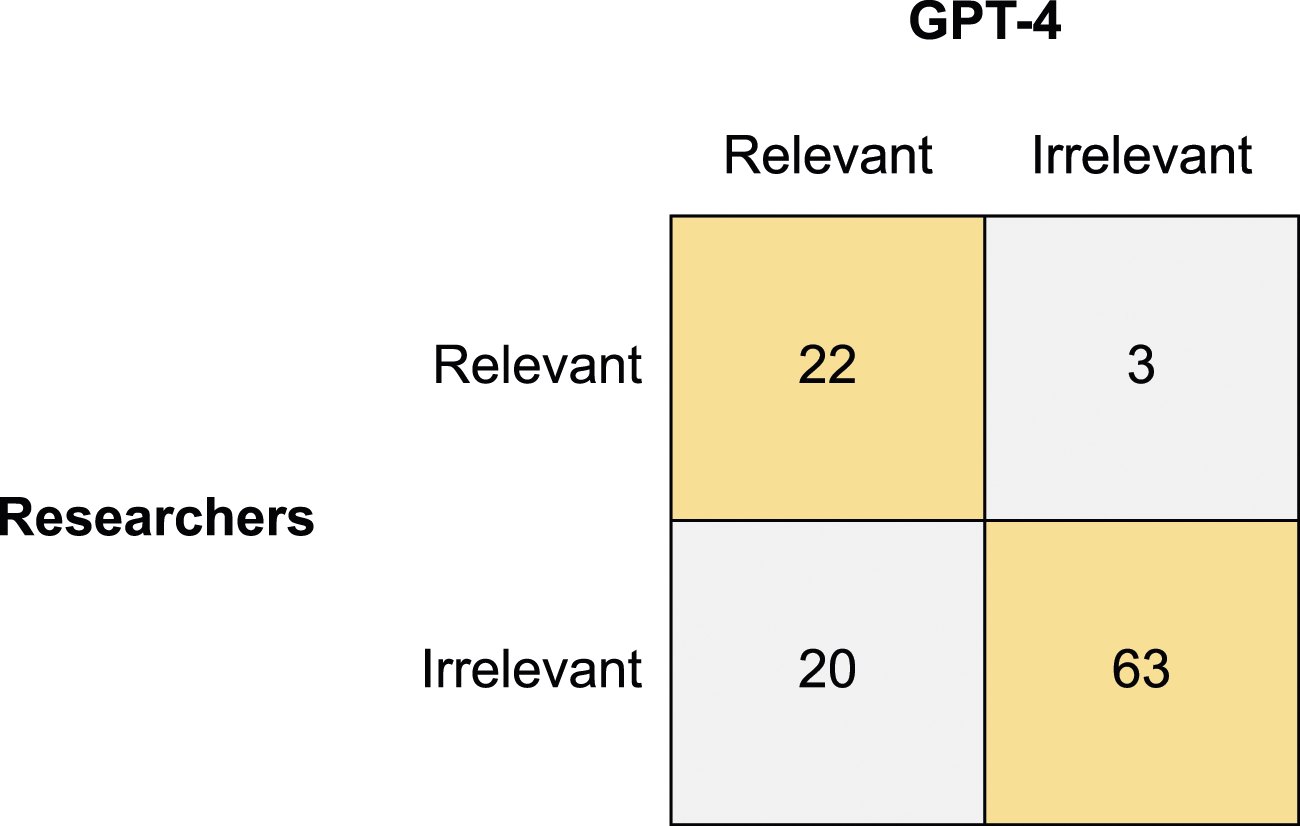

Relevant media items contained a reason given by a person or organisation for or against change in the specified alcohol policy topics. GPT-4 returned the same decisions as HCs on relevance for 78% of the 108 media items (Figure 1). As intended by our inclusive prompt design, errors were mainly the inclusion of irrelevant media items (20) rather than the exclusion of relevant ones (3). Overall, 22 of 25 relevant items in the sample were included by GPT-4. Notably, GPT-4 performed well in ‘screening out’ irrelevant media items; of the items excluded by GPT-4, 95% were judged irrelevant by the HCs.

Figure 1. Classification of media item relevance.
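The relevance figures can be laid out as a two-by-two classification table. The sketch below uses the counts given in the text; the number of irrelevant items correctly excluded is not quoted directly and is derived here on the assumption that the four cells sum to the 108 sampled items. Variable names are illustrative.

```python
# Counts reported in the text; the true-negative count is derived, not quoted.
total_items = 108
true_positives = 22    # relevant items GPT-4 included
false_negatives = 3    # relevant items GPT-4 excluded
false_positives = 20   # irrelevant items GPT-4 included
true_negatives = total_items - true_positives - false_negatives - false_positives

# Share of items where GPT-4 and the HCs made the same relevance decision.
agreement = (true_positives + true_negatives) / total_items
# Share of relevant items that GPT-4 included.
item_recall = true_positives / (true_positives + false_negatives)
# Share of the items GPT-4 excluded that the HCs also judged irrelevant.
exclusion_precision = true_negatives / (true_negatives + false_negatives)

print(f"true negatives: {true_negatives}")
print(f"agreement: {agreement:.3f}")
print(f"recall of relevant items: {item_recall:.3f}")
print(f"precision of exclusions: {exclusion_precision:.3f}")
```

Under this assumption the agreement works out to 85/108 and the precision of exclusions to 63/66, which sit close to the reported 78% and 95%; small differences may reflect rounding.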

One of the three relevant items GPT-4 excluded was primarily about an alcohol licence hearing, and we assume this was due to the instruction in our prompt to exclude such items. The other two contained statements that required a greater degree of contextual interpretation to identify. For example, in Box One the HCs considered the underlined sections of the quote conveyed support for policy change to reduce alcohol’s contribution to suicide.

Box One: ‘Acting director of the Suicide Prevention Office Dr Sarah Hetrick said ‘ (New Zealand suicide rate remains unchanged, New Zealand Herald, October 2023)

Concept identified: Alcohol causes health harms

Identification of Relevant Concepts

Within the 22 relevant media items identified by GPT-4, our prompt returned 145 raw concepts. These matched 34 of the 48 concepts in the codebook generated by the HCs. An examination of the 14 concepts in the codebook not identified by GPT-4 revealed no clear patterns by topic or media source. Among these concepts, six were related to other codes GPT-4 had identified but differed in focus. For example, ‘alcohol causes health harm’ was found but ‘alcohol causes harm to society’ and ‘alcohol harm is inequitably distributed’ were not.

Two of the codebook concepts GPT-4 failed to identify were contained in the three media items it classed as irrelevant. It is notable that another two codebook concepts that GPT-4 missed in these three media items were ultimately picked up by GPT-4 elsewhere in the sample.

GPT-4 identified 32 raw concepts that did not match the codebook. When reviewed again by both HCs, we determined five were relevant and that these represented three unique concepts that could be added to the codebook. This suggests GPT-4 was sensitive to a total of 37 (73%) of 51 concepts that HCs had identified in the sample.
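The arithmetic behind these proportions is worked through below as a short sketch using the counts reported in this section. The variable names are illustrative.

```python
codebook_concepts = 48     # concepts in the initial HC codebook
matched_by_gpt4 = 34       # codebook concepts that GPT-4 also returned
added_after_review = 3     # unique GPT-4 concepts the HCs judged relevant on review

# Share of the initial codebook recovered by GPT-4.
initial_share = matched_by_gpt4 / codebook_concepts

# Share once the three additional concepts are counted on both sides.
expanded_found = matched_by_gpt4 + added_after_review
expanded_total = codebook_concepts + added_after_review
expanded_share = expanded_found / expanded_total

print(f"{matched_by_gpt4}/{codebook_concepts} = {initial_share:.1%}")
print(f"{expanded_found}/{expanded_total} = {expanded_share:.1%}")
```

The first ratio corresponds to the "over two-thirds" figure in the abstract, and the second to the 73% reported here.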

Among the remaining unmatched raw concepts that were not relevant, common GPT-4 errors were only providing a policy description instead of a reason for policy change (7 instances), lacking a link to a relevant aspect of alcohol policy (5 instances), and providing a reason relating to licensing (3 instances).

Discussion

There is no established benchmark for an ‘acceptable’ result overall in terms of agreement between LLMs and HCs in coding tasks; this depends on the nature and complexity of the task (Riordan et al., 2024). We therefore consider the aims and tasks involved in DNA.

In relation to RQ1, ‘To what extent does GPT-4’s identification of relevant concepts in media items align with that of HCs for the purposes of building a codebook for DNA?’, we note that perfect alignment was not necessarily required for several reasons. First, prominent actors and concepts typically appear in the media multiple times, providing multiple opportunities for detection, as occurred within our sample. In addition, a point of saturation may be reached where no further unique concepts are found. Accordingly, traditional DNA studies conducted on media items have used a selection of national newspapers and a sample of relevant media items to develop a codebook (e.g. Fergie et al., 2019). In comparison, GPT-4 allows rapid analysis of large selections of media items from many sources at little cost, providing more opportunity to find relevant concepts, increasing the potential to identify the wide range of viewpoints sought in our project.

We also consider the task of identifying our target concepts (i.e. reasons for and against alcohol policy change) to be moderately complex, particularly as our study involves six policy dimensions and because a majority of media items in our sample were irrelevant. Although the concepts were largely identifiable in the surface-level meaning of the text, some concepts required interpretation of underlying ideas or contextual knowledge. We would therefore not expect all HCs to identify the same concepts. This was partially demonstrated by the fact that GPT-4 identified three additional relevant concepts that the HCs did not.

We found no patterns in the type of concepts that were missed by GPT-4 or the media source for those concepts. It would be beneficial for future studies to formally assess whether frequency of concept use affects the rate of concept detection by LLMs, and how the concepts that are lost impact the identification of both coalitions and dimensions in the policy discourse.

With each of the above considerations in mind, we believe GPT-4’s identification of 73% of the concepts that HCs identified in our test sample shows a moderate level of agreement with humans in the inductive content analysis required by our particular DNA task. In this respect, a limitation of our study is the lack of an additional independent coder to assess agreement between HCs for comparison.

However, GPT-4 also returned a considerable number of false positives, that is, identifying irrelevant media items and concepts as relevant, which were time consuming to remove. This was to be expected as our prompt was geared toward inclusiveness and the proportion of relevant items in our dataset was low. Similarly, Drápal et al. (2023) found that GPT-4 continued to include some unwanted dimensions of their dataset in its description of potential themes, despite specific instructions to avoid those dimensions.

A key strength of GPT-4 was in screening out a large number of irrelevant media items in our dataset; it classified 63 items (58% of our sample) as irrelevant with 95% accuracy. This was likely supported by the prompt development process.

In relation to RQ2, ‘Based on the identified alignment and limitations, to what extent can GPT-4 be used to independently identify the concepts in a large sample of media items?’, it was clear that GPT-4 could not work entirely independently as irrelevant output had to be manually removed. Human oversight began during the prompt engineering phase, during which researchers would identify at least a portion of the final codebook while testing the prompts on different media items. In this stage, there is potential for the prior knowledge of the researchers to influence the process; however, the key focus was whether GPT-4 returned relevant structures (i.e. a policy argument) rather than pre-specified concepts.

Overall, we consider GPT-4 may assist with analysing a considerably larger sample from our set of media items in order to complete our codebook (e.g. a 10% sample or 400 items), but not the full dataset due to the subsequent human oversight needed to screen and remove irrelevant concepts. In this approach, the core of the codebook has been primarily human created during this exploratory study; however, the future expansion and completion of the codebook would be assisted by GPT-4 in the larger sample. In adopting this approach, larger samples of articles may need to be processed than in traditional HC studies to increase the odds of identifying most of the prominent concepts in the data, or repeat runs could be considered. This should minimise the potential impact on the subsequent deductive phase of the DNA and the study findings.

Although reviewing GPT-4’s outputs requires additional effort, GPT-4 is able to speed up the task in several ways. First, we are confident it will make sufficiently similar decisions to HCs to independently screen out around three-quarters of the irrelevant media items in a new sample from our dataset. Second, it will be possible to review only the concepts and quoted text returned by the model rather than reading full articles, although this may come at the cost of missing some concepts that appear less frequently in the sample. Third, GPT-4 can facilitate the process by grouping related or overlapping raw concepts that it returns in order to produce the more generally applicable code definitions that HCs would apply (see examples in Table 2). In this process, GPT-4 can assist in rapidly identifying and removing duplicates (see, for example, De Paoli, 2023).

Specific to DNA, we believe LLMs could prove helpful in assisting codebook development in studies of networks connected by other types of concept. For example, studies of advocacy coalitions define their target concepts as policy instruments or policy preferences (Leifeld, 2023), which may be simpler for an LLM than identifying policy framings, which here required finding both the arguments given and their connection to a relevant policy instrument.

Considerations for Wider Use of LLMs to Identify Initial Codes

Given that initial codes form the foundation of subsequent analysis, the fact that LLMs like GPT-4 may miss a substantial portion of relevant concepts or codes in a dataset is a significant problem. LLMs may also be biased by their training. While both issues are true of human coders to some degree, we agree with others that LLMs, at the time this research was undertaken, could best be used in combination with researchers, for instance, as a second coder or to review human-identified codes or make suggestions, and that methodological development is needed to assure quality and validity (De Paoli, 2023; Drápal et al., 2023) and to address the potential to introduce bias (Gao et al., 2024).

We have further suggested GPT-4 may have value in suggesting codes and their relevant text segment from data unseen by the researcher to extend an initial codebook developed with HC oversight. Based on the current exploration, we offer some considerations as to when and how this may be appropriate:

1. The risk of missing codes and bias impacting the final results should be assessed relative to the research aim and design. Importantly, a sample of data should be manually coded and compared to enable the level of agreement with LLM predictions and any patterns in what is ‘missed’ to be reported, as discussed below.
2. LLMs have been more adept in identifying codes at a semantic level rather than a latent level where more interpretation is required (e.g. Kirsten et al., 2024) and may be better suited to content analysis with a more quantitative objective, as in the case of DNA. Where the aim is a deeper thematic analysis and discussion of rich meanings in the data, it is likely to be both more efficient and often desirable for researchers to conduct the analysis and be familiar with the data (Jiang et al., 2021). This is also necessary where reflection is needed on the decision processes that shaped the analysis, which is not possible with LLM predictions.
3. Resources and information technology skills are needed to establish automated prompting processes via an application programming interface. Further time is needed to check the validity of outputs and remove irrelevant suggestions before further analysis. This may outweigh the value of using an LLM for assistance in small datasets.
4. LLMs may be more useful in assessing datasets where the target codes appear multiple times, which may reduce the odds of missing relevant codes.
5. In datasets which contain a large amount of irrelevant material, LLMs may be useful for an initial pass to ‘screen out’ irrelevant text segments or items. We again suggest testing LLM alignment with researcher screening decisions to evaluate the potential for relevant information to be lost and the likely impact on the analysis.

Review and Validation of Outputs

Exploratory studies to date recommend testing should be conducted specific to each use case and dataset so that limitations and biases may be reported (Riordan et al., 2024). Where the coding task seeks concepts that can be observed relatively objectively, comparison of LLM generated codes to a sample of human coding appears appropriate to check relative sensitivity to codes in the data.

In this process, understanding the level of alignment between independent HCs could provide a guide for reporting differences. As LLM outputs here differed from one run to another, even at a zero randomness setting, repeat runs may need to be assessed to give a range of alignment. Deploying multiple runs may enable additional concepts to be identified but will require additional manual checking; computational techniques may make this more feasible by identifying repeated outputs across runs, so that only novel outputs need to be checked.
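One simple way to flag repeated outputs across runs is approximate string matching, sketched below with Python's standard-library `difflib`. This is an assumption about how such a check could be done, not a technique reported in the study: the function names, the 0.85 similarity threshold, and the example concepts are hypothetical, and string similarity will not catch concepts that are worded differently but mean the same thing.

```python
from difflib import SequenceMatcher


def normalise(concept):
    """Lower-case and collapse whitespace so trivial variants compare equal."""
    return " ".join(concept.lower().split())


def novel_concepts(reviewed, new_run, threshold=0.85):
    """Return concepts from new_run that are not near-duplicates of anything already seen."""
    seen = [normalise(c) for c in reviewed]
    novel = []
    for concept in new_run:
        candidate = normalise(concept)
        if any(SequenceMatcher(None, candidate, s).ratio() >= threshold for s in seen):
            continue  # close enough to an already reviewed concept
        novel.append(concept)
        seen.append(candidate)  # also suppress repeats within the new run
    return novel


# Hypothetical raw concepts from two runs, for illustration only.
run_1 = ["Alcohol causes health harms", "Marketing increases youth drinking"]
run_2 = ["alcohol causes  health harm", "Cheap alcohol increases consumption"]
to_check = novel_concepts(run_1, run_2)
```

Only the second concept from the later run would be passed on for manual checking here; the threshold would need tuning against a hand-checked sample before being relied on.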

Patterns in the codes the LLM has suggested and missed relative to HCs should also be explored to assess potential bias or blind spots; are certain actors and topics favoured? This can also yield relevant codes HCs have initially left out.

Future methodological development will need to consider what methods and levels of alignment can be considered robust enough to support the use of LLMs to inform the coding process, which may vary for different tasks or demands for precision. Different considerations will arise for open coding (where any concept in the data may be relevant), and where increasing subjectivity is involved, given LLMs cannot report their decision-making processes in these scenarios.

A complicating consideration for long-term projects, and for ongoing methodological development, is that commercial LLMs such as GPT-4 may be changed or removed in future.

Considerations for Prompt Development

Our testing of multiple prompts on one small sample (12 media items) may have produced relevant outputs by chance and in a way that was attuned to the test articles. Where resources permit, it would be advisable to test the more promising candidate prompts on an additional sample to increase confidence in the broader applicability of the final prompt.

Notably, during prompt development we identified and instructed the model to exclude common ‘decoy’ media items that contained salient terms but were not relevant to the policy debate (e.g. drink-drive stories). Such a process appears useful for other contexts given the final success rate in screening out irrelevant items here, although this may be particular to the prevalence of such items in our dataset and the ease of identifying them.

We found longer prompts were more effective; these included more detail about the policy topics of interest and specific parameters about what to include and exclude. The prompts were ordered with the introduction of the topic area, the steps for analysis, and lastly the desired output structure for tabulation (supplemental file 1). To assist the checking of outputs, it was useful to request the relevant text segment underlying each reported code to be returned.

Conclusions

In the task of identifying concepts and related text segments to build a codebook for use in DNA, our findings indicate GPT-4 as at May 2024 offered potential to assist human researchers, but not independently, as it provided irrelevant outputs requiring human review. Testing GPT-4’s outputs against a human-prepared sample was critical to ensure limitations can be reported, including the potential for missing concepts to impact later findings. This limits the potential efficiency gains of using GPT-4 in codebook preparation. One strength of GPT-4 was in accurately screening out over half the irrelevant media items in our dataset, which could save substantial time in assessing a dataset in which relevant content is sparse.

For our project, we consider GPT-4 showed sufficient alignment with HCs to be used to identify potential concepts and text segments in a larger sample to assist with completing the initial codebook that was prepared by the researchers during this exploratory work. The potential for GPT-4 to miss some concepts remains but would be partly offset by the greater opportunity to detect relevant concepts by processing a larger sample than would be feasible by human coders alone.

Supplemental Material

Supplemental Material for Exploring the Use of a Large Language Model for Inductive Content Analysis in a Discourse Network Analysis Study by Steve Randerson, Thomas Graydon-Guy, En-Yi Lin and Sally Casswell in Social Science Computer Review

Footnotes

Author Contributions

SR wrote the paper and contributed to the concept, study design, and analysis.

TGG collected the data, provided software tools for analysis, and contributed to the study design and writing.

EL contributed to analysis.

SC contributed to the concept and study design.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
