Abstract

This article reports research exploring the benefits of adding text messages to the contact strategy in the context of a sequential mixed-mode design where telephone interviewer administration follows a web phase. In a web-first mixed-mode survey, supplementing the contact strategy with text messages can help increase the response rate at the web phase and, consequently, reduce fieldwork efforts at the interviewer-administered phase. We present results from a survey experiment embedded in wave 11 of Understanding Society in which the usual contact strategy of emails and letters was supplemented with text messages. Effects of the text messages on survey response and fieldwork efforts were assessed. We also investigated the impact of SMS on the device selected to complete the survey, time to response, and sample balance. The results show a weak effect of the SMS reminders on response during the web fieldwork. However, this positive effect did not significantly reduce fieldwork effort.

Keywords

Introduction

In recent decades, the steady decline in response rates has prompted research into strategies for maximising survey response (Luiten et al., 2020). Response maximisation strategies modify survey design features, such as calling patterns, contact modes, or incentives, to increase the likelihood of location, contact, and cooperation (Lynn, 2017). This article explores the benefits of adding text message invites and reminders to the contact strategy in a mixed-mode survey that sequentially combines web and an interviewer-administered mode for remaining nonrespondents. The text messages sought to meet a double objective: increase response propensities, especially during the web-only phase of the fieldwork – when the text messages were sent – and, as a result, reduce the number of panel members issued to the interviewer-administered mode, which might save survey costs.

Prior research has shown that combining text messages with other contact modes, particularly emails, can effectively boost response rates in web surveys (e.g. Marlar, 2017; Mavletova & Couper, 2014). However, this evidence has been limited to cross-sectional web surveys. To the best of our knowledge, there is a lack of experimental research on this topic in the context of web-first sequential mixed-mode surveys, where the primary mission of the text messages is to mitigate fieldwork efforts during the interviewer-administered phase. Furthermore, there is no experimental evidence of the impact of an SMS-enhanced contact strategy in a longitudinal survey context, where most participants have been interviewed before, and previous survey experience could shape the effect of the messages. This article addresses these gaps in the existing literature.

This paper presents the results of an experiment conducted in wave 11 of Understanding Society, a sequential mixed-mode longitudinal survey, where we tested three approaches to augmenting the standard mail and email communications with text messages. These approaches vary in the type – invites and reminders – and number – one, two, and three – of SMS: 1) adding an SMS invite, 2) adding two SMS reminders, and 3) adding both the SMS invite and two reminders. The analysis explores the effect of text messages on response rates after the web-only phase of the fieldwork and on the final response rate. Furthermore, we examine the impact on fieldwork efforts, the device used to complete the web questionnaire, the time to response, and the sample composition.

The results indicate that two SMS reminders increase survey participation at the web stage among those who had provided their mobile number in the previous waves. However, the increase in individual response does not significantly reduce fieldwork efforts. Also, smartphone completion increased among those receiving SMS reminders or the invite plus the reminders, and no effect was observed in the time elapsed between the contact and the response or in sample composition.

In this article, we first summarise previous research about using text messages in survey research and then present our consequent research questions. Second, the methodology section contains a brief description of Understanding Society, the design of the experiment, and the analysis methods. Finally, we report and discuss the results and present the main conclusions.

Background

Four mechanisms can explain the link between adding a new mode to the contact strategy – text messages – and response in a web survey: 1) reinforcing the messages delivered by other modes, 2) reaching sample members who are not contacted by other modes, 3) increasing the likelihood that the communication is read, and 4) facilitating access to the survey.

First, as an additional contact mode, text messages can reinforce the participation message conveyed through the invite and reminders delivered by other modes. The synergy between contacts by multiple modes can increase the likelihood that sample members attend the communication and participate in the survey (Dillman et al., 2014, p. 417). In the context of cross-sectional web surveys, prior research has shown the positive effect of supplementing email contact with mail letters (e.g. Millar & Dillman, 2011) or text messages (e.g. Marlar, 2017; Mavletova & Couper, 2014).

Second, additional contact by text message can help reach participants who cannot be contacted by other modes (Dillman et al., 2014, pp. 418–19). Failure to contact a participant can happen because the contact details do not exist (e.g. individuals with no email account), are wrong (e.g. failure to update contact details), or the sample members overlook or consciously ignore the contact attempt (e.g. message identified as spam). An alternative contact mode to reach the sample members can mitigate this issue. Text messages can serve this purpose, given that most people have mobile phones and are familiar with the technology. For instance, 45% of European (European Commission, 2016) and 73% of British adults receive or send SMS daily (Ofcom, 2019).

Third, text messages can increase the likelihood that the invite and reminder messages are read compared to other modes, especially emails. Text messages are delivered directly to people’s mobiles and can be read immediately (Rettie, 2009). In contrast, emails may require people to take additional steps to access them, such as logging into their email accounts. Furthermore, compared to emails, the prevalence of unsolicited messages is lower for text messages, making it less likely that the survey invite is mistaken for spam (Bandilla et al., 2012). These characteristics increase the likelihood that a sample member reads and engages with the SMS content. Indeed, these attributes have made text messages a valuable instrument for behavioural interventions (Suffoletto, 2016), yielding positive results in public health (Bobrow et al., 2014; Sallis et al., 2019; Vidal et al., 2014).

Fourth, text messages can facilitate access to a web questionnaire for smartphone users by including a live link. Prior research has reported that using SMS invites or reminders with a link to a web survey increases the number of sample members who complete the survey on their smartphone (e.g. Barry et al., 2021; Crawford et al., 2013). Thus, sample members inclined to complete the survey on a smartphone could be more likely to respond after receiving the SMS with the link.

On the other hand, using text messages poses several legal and logistical challenges. First, it requires the survey organisation to have access to the sample members’ mobile phone numbers and the right to use them. Local regulations impose restrictions on using SMS for research or commercial purposes in some countries, for example, the United States Telephone Consumer Protection Act and the European General Data Protection Regulation (Kim & Couper, 2021). This is less problematic in the context of longitudinal studies, where participants can give permission (at previous waves) to be contacted on their mobiles. Second, to effectively access the survey, the respondent’s mobile needs an internet connection, and the survey must be usable from a smartphone. Third, text messages allow for a significantly lower number of characters than emails and letters, which prevents the survey organisation from including persuasive arguments. Likewise, their brevity and call for immediate action can make the effect of SMS ephemeral (Mavletova & Couper, 2014).

Several experiments have gauged the effect of SMS as prenotifications, invitations, or reminders on response rates to web surveys. Some studies found that using SMS as a contact mode results in lower response rates when substituting emails, but combining the two achieves better results than either individually. Bosnjak et al. (2008) found that email invitations resulted in a higher response rate than SMS invitations, especially if led by an SMS prenotification. Mavletova & Couper (2014), who experimented with email and SMS invites and reminders in a survey from an opt-in web panel, concluded that email performed better than SMS at the invitation stage. However, combining the email invitation with an SMS reminder achieved the highest response rate. In the Gallup Panel and the US Daily Tracking panel, Marlar (2017) found that combining SMS and email for the survey invitation and reminder outperformed using these modes individually. In contrast, other experiments found no effect of text messages on response, such as de Bruijne & Wijnant (2014), Crawford et al. (2013), DuBray (2013), McGeeney & Yan (2016), and Toepoel & Lugtig (2022).

The beneficial effect of using SMS as a prenotification mode has also been tested in mail and CATI (Computer Assisted Telephone Interview) surveys. Virtanen, Sirkiä, & Jokiranta (2007) used SMS to substitute a postcard in three mail surveys in Finland, finding a positive effect on the response rates in two. In the Australian Workplace Barometer, a random digit dialling sample, Dal Grande et al. (2016) found that sending SMS prenotifications improved the response rate compared to the group that just received a phone call. In contrast, the experiments that tested the impact of SMS on response rates carried out by Brick et al. (2007), Steeh et al. (2007), and Barry et al. (2021) found no effect.

In addition to boosting response propensities, text messages with a link to the questionnaire can alter the respondents’ choice of device used to complete the survey, particularly for smartphone users. The results from previous experiments show a consistent trend: those receiving an SMS with a link to a web questionnaire are more likely to complete it on a smartphone (Barry et al., 2021; Crawford et al., 2013; de Bruijne & Wijnant, 2014; Mavletova & Couper, 2014). For instance, McGeeney & Yan (2016) found that receiving a text message invitation in addition to an email increased the percentage of smartphone completion from 33% to 51%. This push-to-mobile effect requires that the SMS invitation contains a live link to the survey and that the respondent’s device meets the technical requirements to navigate the questionnaire.

Other outcomes likely to be affected by text messages are the time to respond and sample composition. The time elapsed between the survey invite or reminder reaching the sample member and questionnaire completion is relevant for survey costs (Carpenter & Burton, 2017). Earlier participation would spare the survey organisation from sending additional letters, emails or texts. The evidence from cross-sectional web surveys suggests that those who receive a text message with the survey link tend to complete the questionnaire faster than those who get an email (de Bruijne & Wijnant, 2014; Mavletova & Couper, 2014; McGeeney & Yan, 2016). The effect of text messages on response propensities can also affect sample composition. This effect has been studied once. Bosnjak et al. (2008), who experimented with email and SMS prenotifications and invitations in a web survey, compared the sample profiles of the experimental groups. They found no differences between the groups.

Moderators

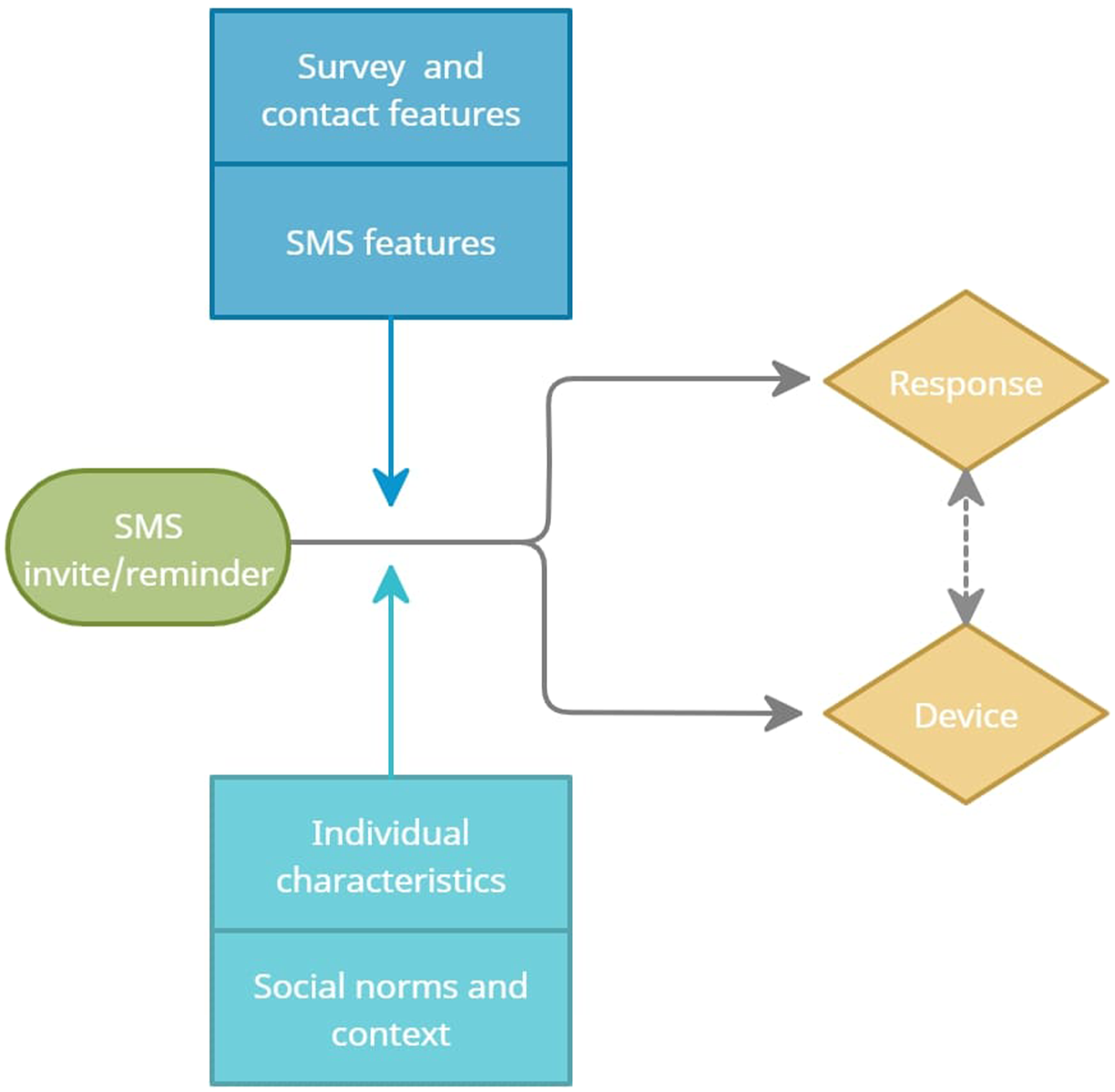

The effect of text messages on response propensities can be moderated by four types of factors: survey and contact strategy features, SMS features, individual characteristics, and social norms and context, as shown in Figure 1. The heterogeneous effects of SMS are of interest to identify the groups more likely to increase their response rate and to target them at the design stage of future surveys. SMS as a contact mode in web surveys.

Survey and contact strategy characteristics can modify the effect of text messages on response or device choice. Some relevant characteristics include survey mode or the topics covered, which have been shown to affect response rates in several studies (Edwards et al., 2014; Groves et al., 2012). The research team can, to some extent, control these features to maximise the effect of the intervention.

The SMS characteristics, also under the researcher’s control, cover the number and frequency of SMS and the content of the message, including the appeal, level of personalisation, and format (Sahin et al., 2019). The effect of modifying these features on different outcomes has been explored in the context of behavioural sciences (Sallis et al., 2019; Suffoletto, 2016). In survey research, Respi & Sala (2017) tested the level of personalisation of the SMS, including a salutation with the participant’s name, which resulted in higher response rates compared to the control group.

The sociodemographic characteristics of the individuals can also shape the effect of the SMS on response or device choice. Several studies have shown that younger respondents are more likely to complete surveys on their smartphones (Gummer et al., 2019; Toepoel & Lugtig, 2014). This predisposition to smartphone completion among young adults could lead to higher response rates if a text message facilitates access to the questionnaire through this device. Having a smartphone can be essential if the objective of the SMS is to push the respondent to the web questionnaire. It is expected that smartphone users are more likely to respond after receiving the text message, given that they can make use of the link to the questionnaire. Furthermore, the use and understanding of SMS vary across cultural backgrounds (Tan et al., 2014). Ethnicity is a proxy of cultural background correlated with response in longitudinal surveys (Watson & Wooden, 2009), which could alter how sample members react to SMS. Other demographics such as gender, urbanicity, or education that have been found helpful in explaining the effect of different response maximisation strategies could moderate the impact of text messages (e.g. Laurie, 2007). In the context of a longitudinal study, previous response behaviour can modify the effect of text messages on response (Watson & Wooden, 2009).

Social norms and context can modify the effect of text messages. Users perceive text messages as more personal and intrusive than emails (Tan et al., 2014). Thus, participants with deeper privacy concerns may react differently to the text message than those who are not concerned about privacy issues (Sala et al., 2012; Struminskaya et al., 2020; Wenz et al., 2019). Also, the immediate social context, the household where the sample member lives, can influence how they respond to the SMS. For instance, Cernat & Lynn (2018), in the context of a longitudinal study, found that sending emails in addition to letters was more effective for sample members whose partner had provided their email in the earlier waves.

The literature outlined in this section provides grounds to expect that text messages can affect response rates and other outcomes, such as the device used to complete the web survey or the time between the invitation and survey completion. Furthermore, the groups defined by the moderators may present a distinctive response rate after receiving the text messages. In the next section, we outline the research questions concerning these aspects.

Research Questions

The main objective of this paper is to assess whether adding text messages to a contact strategy can increase response propensities at the web stage of a sequential mixed-mode survey. Hence, we address the effect of the SMS on response and explore the variations in the effect across sample subgroups.

• Does adding text messages to a web-first mixed-mode survey result in a higher response rate at the web stage of the fieldwork?
• Does adding text messages to the contact strategy affect the final survey response rates (after both the web and CATI phases)?
• What is the optimal number and type of text messages (invite or reminders) to boost response rates?
• Does the effect of the text messages on response propensities vary across the levels of the moderators?

Second, we explore whether SMS invitations at the web stage reduce the fieldwork efforts at the CATI phase.

• Do text messages sent during the web stage modify the fieldwork efforts at the interviewer-administered phase?

Third, we examine the effect of SMS on device selection, time to response, and sample composition.

• Do text messages with the survey link affect the probability of completing the web questionnaire on a smartphone?
• Do text messages affect the time taken by respondents to start or complete the web questionnaire?
• Does the use of text messages in the contact strategy affect the final sample composition?

Data and Methods

In this section, we describe Understanding Society, the survey in which the experiment was embedded, and outline the analysis plan and the variables used.

Understanding Society

Understanding Society: The United Kingdom Household Longitudinal Study (UKHLS) is a national probability survey started in 2009 that, since wave two, includes the former British Household Panel Survey (BHPS). The target population of the UKHLS is individuals of all ages residing in the United Kingdom. The fieldwork at wave 1 covered more than 100,000 persons from 40,000 households. The panel, which is representative of the United Kingdom, includes two boost samples, the Ethnic Minorities Boost (wave 1) and the Immigrant and Ethnic Minority Boost (wave 6) (Lynn, 2009; Lynn et al., 2018). At wave 11, where the SMS experiment was embedded, Kantar and NatCen Social Research, the research agencies responsible for the fieldwork, issued 22,400 households.

The design of the UKHLS has changed over time. Most of the interviews were face-to-face from wave 1 to 6, with just a few completed on the phone during a mop-up period following the face-to-face fieldwork. At wave 7, the web mode was offered for the first time, but only to wave 6 nonrespondents. Since wave 8, an increasing number of participants have been moved to a sequential mixed-mode design in which they are invited to a web questionnaire, and after five weeks, the nonrespondents are issued to face-to-face interviewers. Seventy percent of the main sample households were part of the sequential mixed-mode fieldwork strategy (‘web-first’) at wave 10, the wave before the experiment. The remaining sample was issued face-to-face either because the household was predicted to have a low response propensity in web mode (10%) – the ‘low web propensity’ subsample – or because they are part of the 20% of households randomly selected to form the ‘ring-fenced CAPI’ subsample. From April 2020, due to the outbreak of the COVID-19 pandemic and the suspension of face-to-face fieldwork, all sample households were issued web-first, with web nonrespondents being followed up by telephone (Burton et al., 2020). The web-only fieldwork phase (hereafter, ‘web-only phase’) spanned five weeks, after which the CATI interviewing started, although the web questionnaire remained open.

Adults aged 16 or over are invited to participate in the survey every year alongside other household members. Besides the adult questionnaires directed to all household members aged 16 or older, there is a household grid and questionnaire, and children aged 10 to 15 are invited to complete a self-completion questionnaire. The data used in the analysis is available through the UK Data Archive (University of Essex, Institute for Social and Economic Research, 2022).

Experimental Design

The Understanding Society sample is split into 24 random subsamples that are issued to the field on a monthly basis. The SMS experiment was conducted in six monthly samples of wave 11 from April to September 2020 and was initially designed for the web-first subsample. However, the shift of all households to a web-first and telephone sequential design due to the pandemic offered the opportunity to extend the experiment to the (previously) low web propensity and CAPI ring-fenced subsamples to investigate the effect of the text messages in the transition from face-to-face to a web-first design.

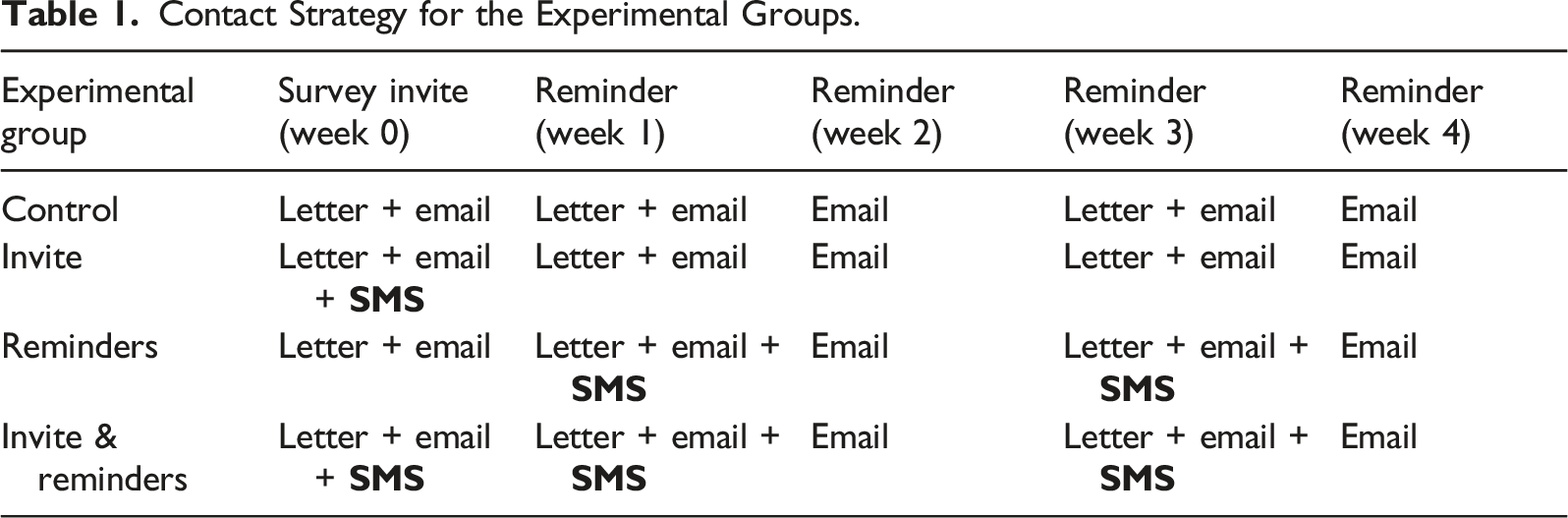

Contact Strategy for the Experimental Groups.

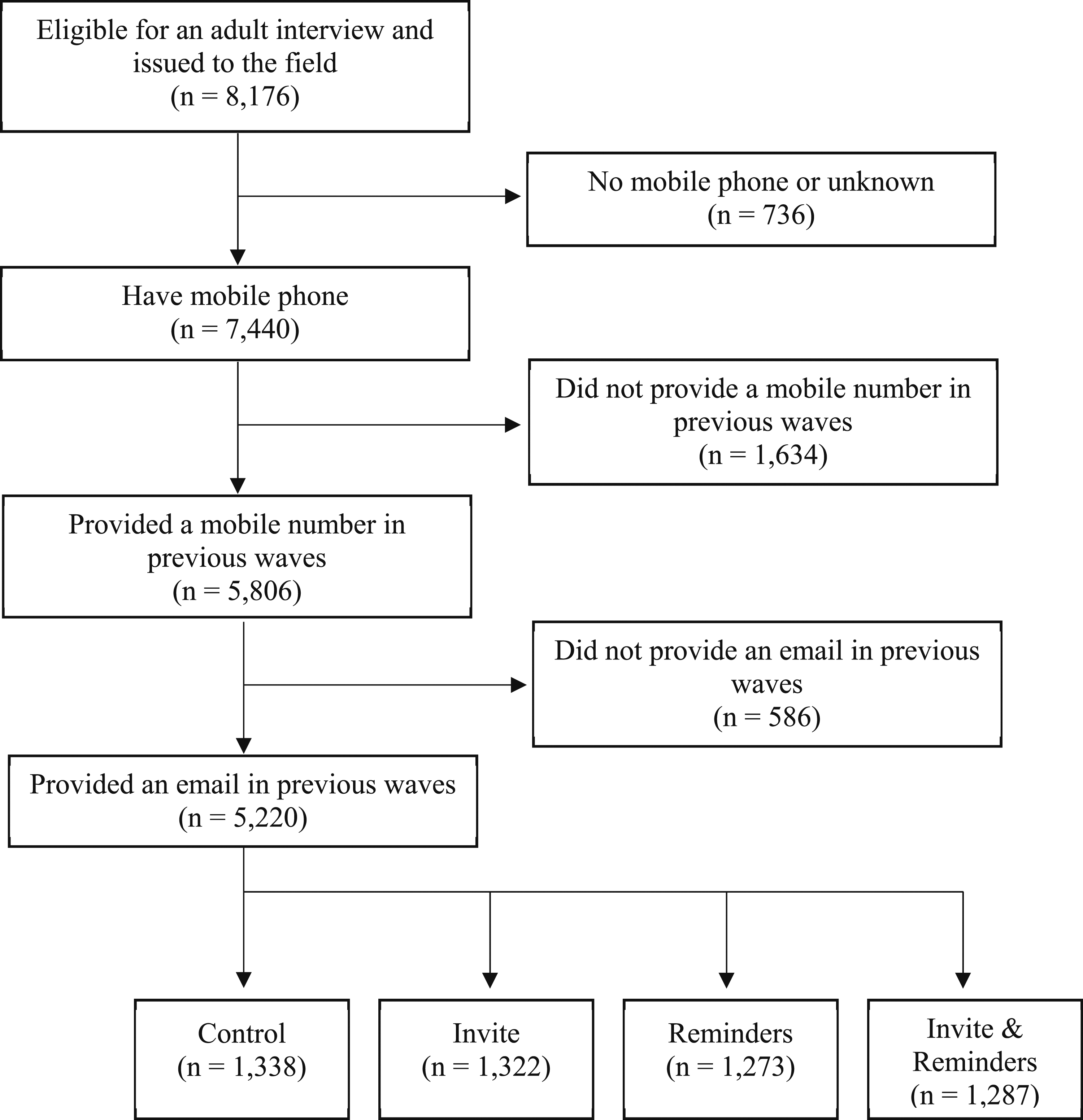

Although all household members were allocated to an experimental group, a substantial proportion did not have a mobile phone or had failed to provide a valid mobile number or email. Therefore, we differentiate between the full sample, which includes all adults eligible for an interview at wave 11, and the treated sample, restricted to those who had provided both a valid mobile number and email address before the start of the wave 11 fieldwork. Figure 2 represents the full sample included in the experiment and the different reasons behind non-compliance. Sample selection into the experiment.
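The distinction between the two samples amounts to a simple filter. The sketch below is purely illustrative: the record fields (`eligible_w11`, `valid_mobile`, `valid_email`) are hypothetical names rather than variables from the Understanding Society data files, and the records are toy values.

```python
from dataclasses import dataclass


@dataclass
class PanelMember:
    pid: int
    eligible_w11: bool  # hypothetical flag: adult eligible for interview at wave 11
    valid_mobile: bool  # hypothetical flag: valid mobile number held before fieldwork
    valid_email: bool   # hypothetical flag: valid email address held before fieldwork


def full_sample(members):
    """All adults eligible for an interview at wave 11."""
    return [m for m in members if m.eligible_w11]


def treated_sample(members):
    """Eligible adults who had provided both a valid mobile number and email."""
    return [m for m in full_sample(members) if m.valid_mobile and m.valid_email]


# Toy records, not real panel data.
members = [
    PanelMember(1, True, True, True),
    PanelMember(2, True, True, False),
    PanelMember(3, True, False, False),
    PanelMember(4, False, True, True),
]
full_ids = [m.pid for m in full_sample(members)]
treated_ids = [m.pid for m in treated_sample(members)]
```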

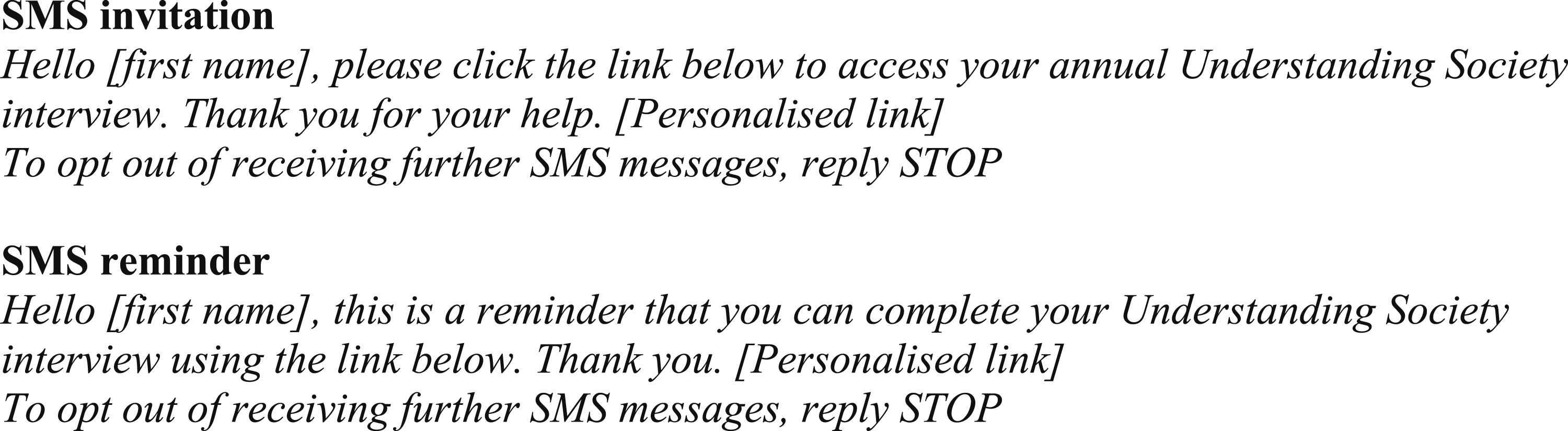

The text messages (see Figure 3), dispatched right after the emails, contained a brief personalised salutation and a link to the questionnaire. The link directed the participants to a landing page where they had to enter their date of birth to access the questionnaire. The invitation letter, which was supposed to reach the participant earlier than the text message, informed about this new contact mode. The SMS also allowed replying with the word ‘STOP’ to avoid receiving further text messages. A total of 43 sample members opted out during the fieldwork. Text of SMS invite and reminder sent to participants.

Methods and Variables

The experiment was carried out during the early months of the COVID-19 pandemic and the subsequent lockdown in the United Kingdom. Hence, we should be cognisant of the possible effects of the health and social consequences of the pandemic on how participants reacted to the text messages. For example, panel members could have been more attentive than usual to text messages and more inclined to cooperate with the survey, given that many activities were suspended and they were instructed to remain at home most of the time.

The analysis of response rates used three approaches. The first approach used the full sample to estimate the intention-to-treat effect. However, a significant part of the sample (36.2%) had not given a valid mobile number or email before the fieldwork. The second approach, which focuses on the treated sample, allowed us to gauge the effect for those who received email and text messages, conditional on giving their contact details. The third approach used a weight that takes into account the selection into the treated sample (i.e. having and sharing mobile and email details). This approach aims to identify what would have happened if those who did not share their details had done so. The analysis of the remaining outcomes – fieldwork efforts, device selection, time to response and sample balance – is based on the treated sample (i.e. those who had shared their mobile number and email address with the survey organisation). All analyses reported in this paper have been weighted to account for the unequal selection probabilities, panel attrition, and selection into the six monthly samples (April to September 2020) of wave 11.
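The text does not describe how the selection weight was constructed. One common way to build such a weight, shown here only as an assumption-laden sketch, is inverse probability weighting: each treated member is weighted by the inverse of the estimated probability of being in the treated sample, here estimated within hypothetical cells. The field names, cells, and data are invented for illustration, and the design and attrition weights mentioned above are ignored.

```python
from collections import defaultdict


def selection_weights(records, cell_key="cell"):
    """Inverse-probability weights for treated records: 1 / P(treated | cell)."""
    totals = defaultdict(int)
    treated = defaultdict(int)
    for r in records:
        totals[r[cell_key]] += 1
        treated[r[cell_key]] += r["treated"]
    return [totals[r[cell_key]] / treated[r[cell_key]] for r in records if r["treated"]]


def weighted_rate(outcomes, weights):
    """Weighted mean of a 0/1 outcome."""
    return sum(y * w for y, w in zip(outcomes, weights)) / sum(weights)


# Toy data (made up): two cells with different probabilities of sharing contact details.
records = [
    {"cell": "young", "treated": 1, "responded": 1},
    {"cell": "young", "treated": 1, "responded": 0},
    {"cell": "young", "treated": 0, "responded": 0},
    {"cell": "young", "treated": 0, "responded": 0},
    {"cell": "old", "treated": 1, "responded": 1},
    {"cell": "old", "treated": 0, "responded": 0},
    {"cell": "old", "treated": 0, "responded": 0},
    {"cell": "old", "treated": 0, "responded": 0},
]
treated_outcomes = [r["responded"] for r in records if r["treated"]]
weights = selection_weights(records)
unadjusted = sum(treated_outcomes) / len(treated_outcomes)
adjusted = weighted_rate(treated_outcomes, weights)
```

In this toy example the treated member from the cell that rarely shares contact details counts for more, so the adjusted rate differs from the unadjusted one.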

Logistic regression models were used to test the effect of the SMS on response propensities for the whole sample and the subgroups defined by the moderators. The analysis focused on two outcomes: response after the web-only phase and at the end of the CATI data collection (final response). The dependent variable response at the end of the web-only phase is a dummy indicator that takes the value 1 for those responding to the web survey during the first five weeks of fieldwork and 0 otherwise. The final response variable is 1 if the sample member responded to the survey at any fieldwork stage and 0 otherwise. To explore the effect of the SMS across levels of the moderators, multivariate models were fitted, including all the moderators and their interactions with the experimental allocation indicator. These multivariate models were used to predict the response propensities and compute average marginal effects for the subgroups defined by the moderators (Mize, 2019). All the models underpinning the testing of differences are provided in the supplementary materials. All tests presented in this work are two-sided.
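As a minimal illustration of the outcome coding and of what an average marginal effect means in the simplest case, the sketch below fits a logistic model with a single treatment dummy. With one binary regressor the maximum-likelihood fit has a closed form, because the fitted probabilities equal the observed group proportions. This is not the weighted, multi-moderator specification used in the analysis; the function names, the 35-day cut-off for "five weeks", the mode labels, and the data are all assumptions made for illustration.

```python
import math


def web_phase_response(mode, days_to_response, web_only_days=35):
    """1 if the member responded online within the web-only phase, else 0."""
    return int(
        mode == "web"
        and days_to_response is not None
        and days_to_response <= web_only_days
    )


def logit(p):
    return math.log(p / (1 - p))


def inv_logit(x):
    return 1 / (1 + math.exp(-x))


def fit_treatment_logit(y, d):
    """Closed-form ML estimates of logit P(y=1) = b0 + b1*d for a 0/1 treatment d.

    With a single binary regressor the fitted probabilities equal the observed
    group proportions, so no iterative optimisation is needed.
    """
    p0 = sum(yi for yi, di in zip(y, d) if di == 0) / d.count(0)
    p1 = sum(yi for yi, di in zip(y, d) if di == 1) / d.count(1)
    b0 = logit(p0)
    return b0, logit(p1) - b0


def average_marginal_effect(b0, b1):
    """Change in predicted response probability when d switches from 0 to 1."""
    return inv_logit(b0 + b1) - inv_logit(b0)


# Toy data: 20 control and 20 treated members (made-up values).
d = [0] * 20 + [1] * 20
y = [1] * 10 + [0] * 10 + [1] * 13 + [0] * 7
b0, b1 = fit_treatment_logit(y, d)
ame = average_marginal_effect(b0, b1)
```

Here the marginal effect is simply the difference between the two group response rates; once moderators and interactions enter the model, the same quantity is obtained by averaging predicted probabilities over the sample.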

The effect of the SMS on the fieldwork effort has been measured using three variables: the full household response rate at the end of the web-only phase, the average number of calls per household and the average duration of the calls in minutes. These indicators are proxies of survey costs. In a household survey, such as Understanding Society, where we seek to interview all adults in the household, the main route to cost saving is likely to be via a reduction in the number of households issued to interviewers. However, even without an effect of SMS on full household response rates, the impact on individual response rates could reduce the effort needed by interviewers in some households. This should be reflected in a reduction in one or both of the number of calls per household and average call duration.
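The three indicators can be computed from household-level records along the following lines. This is a sketch under stated assumptions: the field names are hypothetical, the averages are taken over all households in the list (the text does not specify the denominator), survey weights are ignored, and the data are invented.

```python
def fieldwork_effort(households):
    """Compute three effort proxies from a list of household records."""
    n = len(households)
    # A household is fully responding when every eligible adult responded online.
    full = sum(1 for h in households if h["web_respondents"] == h["eligible_adults"])
    # Flatten the per-household lists of call durations (in minutes).
    calls = [minutes for h in households for minutes in h["call_minutes"]]
    return {
        "full_household_response_rate": full / n,
        "mean_calls_per_household": len(calls) / n,
        "mean_call_minutes": sum(calls) / len(calls) if calls else 0.0,
    }


# Toy households (made up): two fully responding online, two needing calls.
households = [
    {"eligible_adults": 2, "web_respondents": 2, "call_minutes": []},
    {"eligible_adults": 3, "web_respondents": 1, "call_minutes": [4.0, 10.0]},
    {"eligible_adults": 1, "web_respondents": 0, "call_minutes": [6.0]},
    {"eligible_adults": 2, "web_respondents": 2, "call_minutes": []},
]
effort = fieldwork_effort(households)
```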

The effect of the text messages on device selection was tested using logistic regression models conditional on response during the web-only phase. The analysis of time to response used four outcomes: time to start and end the household and individual questionnaires. Four linear regression models were fitted to test the difference in response times between the control and treatment groups. Finally, crosstabulations and chi-squared tests were used to compare the sample composition across experimental groups.
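For the sample composition comparison, the Pearson chi-squared statistic for a crosstabulation of experimental group by a respondent characteristic can be computed as sketched below. The counts are invented and unweighted, whereas the analyses in this paper are weighted, so this only illustrates the mechanics of the test statistic.

```python
def pearson_chi_squared(table):
    """Return the Pearson chi-squared statistic and degrees of freedom.

    `table` is a list of rows of observed counts (groups x categories).
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of group and category.
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    return stat, df


# Toy crosstab (made up): four experimental groups by a two-category characteristic.
table = [
    [30, 20],
    [25, 25],
    [20, 30],
    [25, 25],
]
stat, df = pearson_chi_squared(table)
```

The p-value is then read from the chi-squared distribution with `df` degrees of freedom, for example with `scipy.stats.chi2.sf(stat, df)` if SciPy is available.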

The SMS effects on response were tested for different population subgroups to identify those more likely to react positively to the intervention. As the background section outlines, these moderators include demographics, variables related to social norms, technology use, and sample members’ past participation. The demographic variables are sex, age, urban-rural indicator, ethnic background and education.

Social norms cover the influence of the social context and whether the sample member has data security and privacy concerns. To measure the influence of the social context, we derived a variable which is a proxy of the cooperative attitudes of the household towards data sharing. This variable indicates whether another household member had shared their mobile number with the survey organisation before the respondent did, showing a cooperative social context. Privacy and data security concerns were operationalised based on the sample members’ past behaviour when asked to consent to administrative data linkage. We used a measure of individual refusal rate to data linkage based on the work of Jäckle et al. (2021), which indicates ‘low concerns’ if the sample member refused data linkage less than 6-in-10 times and ‘high concerns’ if they rejected 6-in-10 or more of the requests.
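The two social-norm moderators can be derived with rules like the ones sketched here. The 6-in-10 threshold comes from the description above; everything else is an assumption for illustration, including the function names, the use of wave numbers to order when mobile numbers were shared, and returning `None` for members who were never asked for data linkage.

```python
def privacy_concern(refusals, requests):
    """Classify concern level from past data linkage consent requests."""
    if requests == 0:
        return None  # assumption: unclassified when no linkage request was made
    # Integer comparison avoids floating-point issues at exactly 6-in-10.
    return "high" if 10 * refusals >= 6 * requests else "low"


def cooperative_context(own_share_wave, other_share_waves):
    """True if another household member shared a mobile number at an earlier wave."""
    return any(wave < own_share_wave for wave in other_share_waves)
```

For example, `privacy_concern(3, 5)` falls in the 'high concerns' group because three refusals in five requests is exactly 6-in-10.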

Finally, we used several variables related to previous survey participation, such as whether the sample member was a regular respondent and the fieldwork protocol the panel member was allocated to in the previous wave (i.e. low web propensity, CAPI ring-fenced, web-first). The definition of a regular respondent refers to the sample members who participated in at least two-thirds of the waves for which they were eligible.
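The two-thirds rule for regular respondents can be written as a one-line check. The function name and arguments below are hypothetical; the sketch assumes participation and eligibility are counted in whole waves.

```python
def is_regular_respondent(waves_participated, waves_eligible):
    """True if the member took part in at least two-thirds of eligible waves."""
    if waves_eligible <= 0:
        raise ValueError("waves_eligible must be positive")
    # Integer form of participated / eligible >= 2 / 3, avoiding rounding error.
    return 3 * waves_participated >= 2 * waves_eligible
```

Under this rule a member who participated in 7 of 10 eligible waves is a regular respondent, whereas one who participated in 6 of 10 is not.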

Results

In this section, we first present findings regarding the effects of the SMS on total survey response and fieldwork efforts at the CATI phase. Then, we explore whether sending an SMS affects the probability of using a smartphone to complete the survey, the response time, and sample composition.

Effect of SMS on Response and Fieldwork Effort

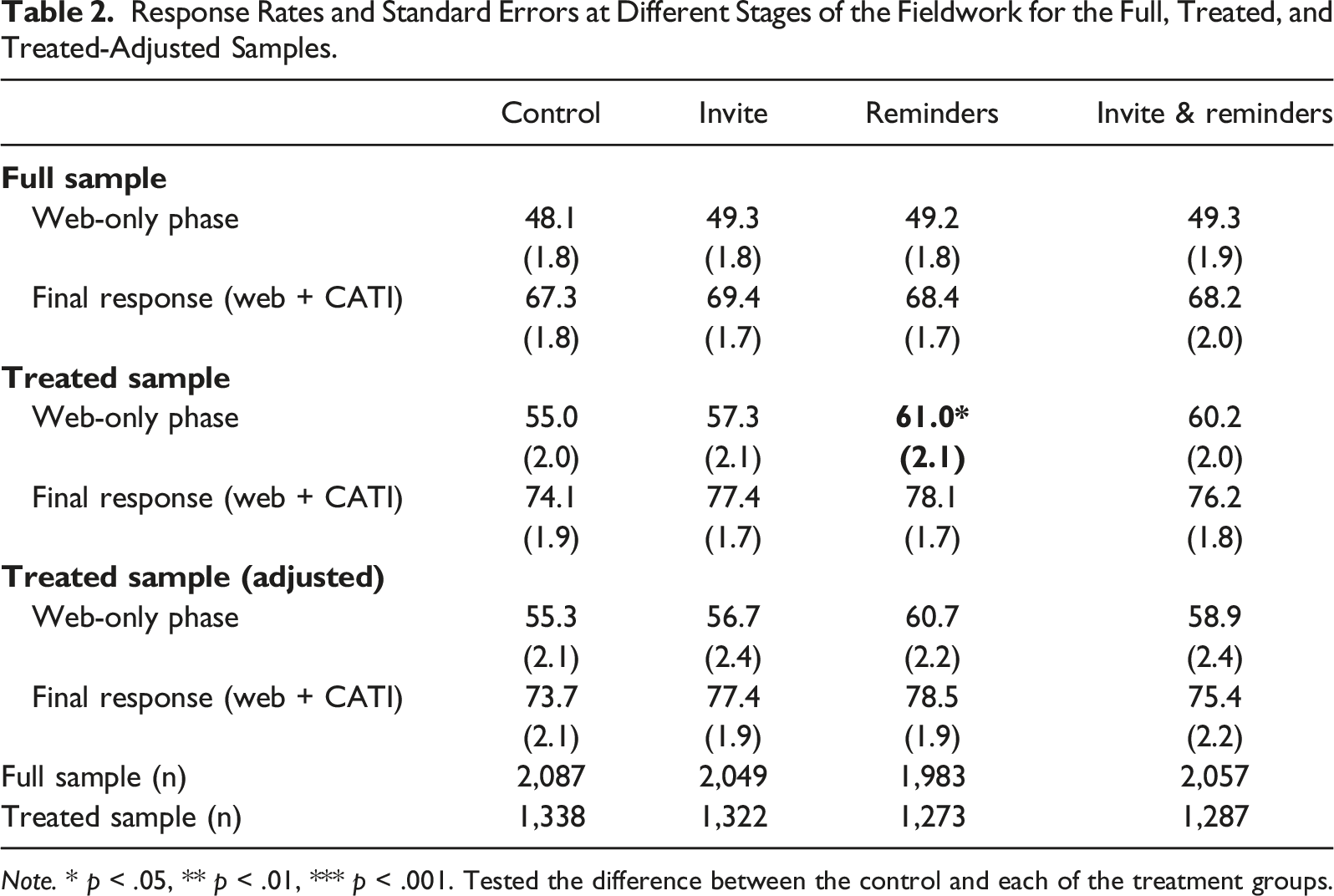

Response Rates and Standard Errors at Different Stages of the Fieldwork for the Full, Treated, and Treated-Adjusted Samples.

Note. * p < .05, ** p < .01, *** p < .001. Tested the difference between the control and each of the treatment groups.

For the treated sample, by the end of the web-only phase, those who received the SMS reminders had a higher response rate (61.0%) than those in the control group (55.0%). This difference faded after the CATI fieldwork to 4 percentage points (p.p.) and was no longer significant. Also, the response rate of the group receiving the invite and reminders was 5.2 p.p. higher than the control group after the web stage, although this difference was not significant.

The analysis of the treated sample adjusted for selection shows the estimated response rates if all sample members had shared their mobile numbers and email addresses with the survey organisation, thus becoming part of the experiment. The results exhibit a similar pattern to the unadjusted treated sample analysis. The reminders and invite & reminders groups showed higher response rates compared to the control group, but these differences were not significant.

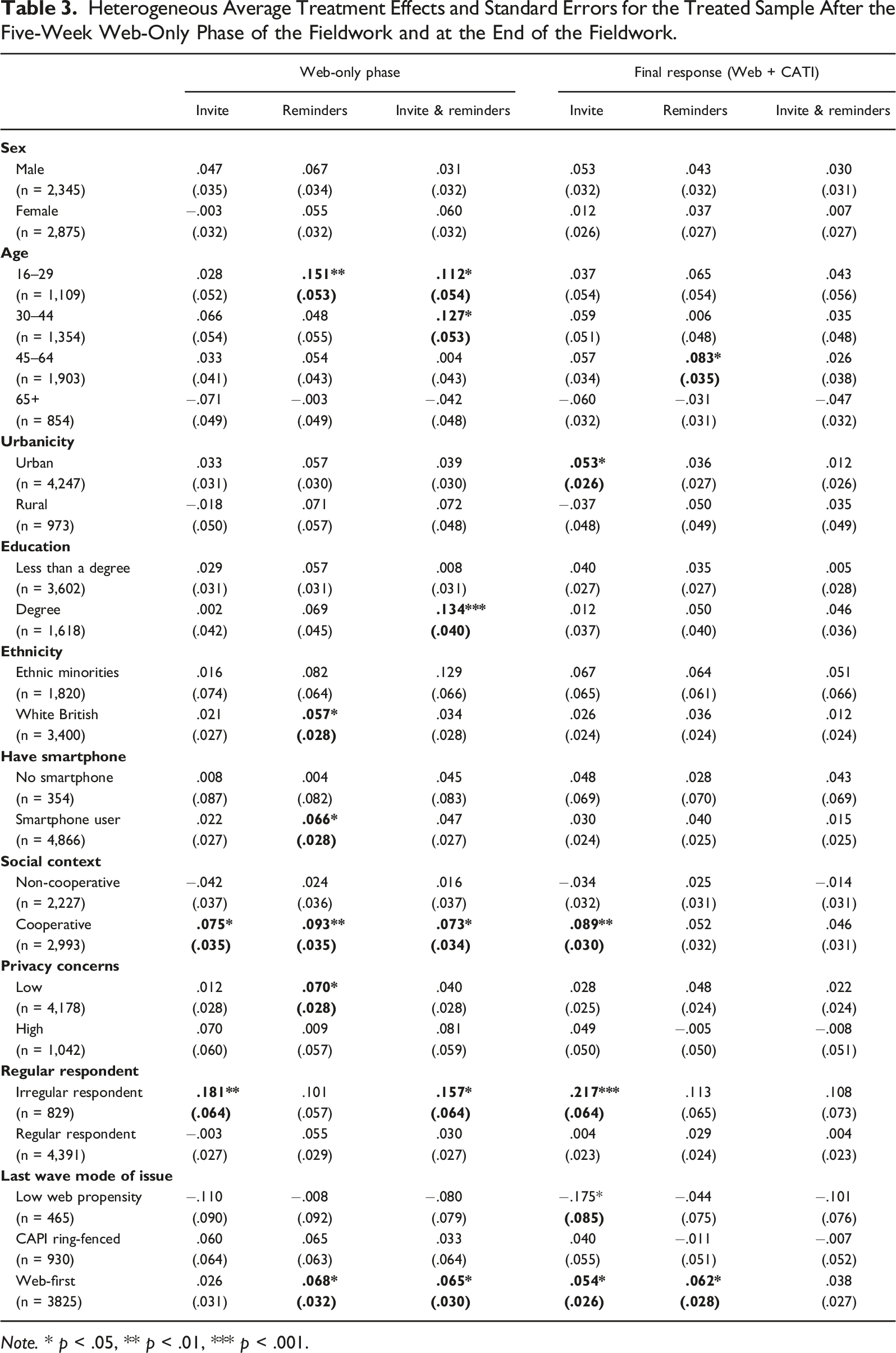

Heterogeneous Average Treatment Effects and Standard Errors for the Treated Sample After the Five-Week Web-Only Phase of the Fieldwork and at the End of the Fieldwork.

Note. * p < .05, ** p < .01, *** p < .001.

Regarding the demographic moderators, young adults (16–29) receiving two reminders increased their web-only phase response propensities by 15.1 p.p. compared to the control condition, while those receiving the invite and reminders exhibited an increase of 11.2 p.p. Also, participants aged 30–44 receiving the three messages increased their response propensity by 12.7 p.p. Finally, panel members aged 45–64 receiving two reminders had a response propensity 8.3 p.p. higher at the end of the fieldwork than the control condition.

In terms of education level, participants with a university degree were more likely to respond to the survey during the web-only phase (13.4 p.p.) if in the invite & reminders treatment group. White British sample members receiving SMS reminders increased their response propensities after the web-only phase by 5.7 p.p. The same was true for smartphone users receiving the SMS reminders (6.6 p.p.). Text messages did not increase the probability of response during the web-only fieldwork for panel members living in rural or urban areas; however, the inhabitants of urban areas receiving an SMS invite exhibited a higher final response rate than the control group (5.3 p.p.). We did not detect differences among the experimental groups for either males or females.

Participants’ perceptions about social norms can also modify the effect of text messages on response. Individuals living in a pro-sharing environment, where another household member was willing to give their mobile phone number first, were positively affected by text messages during the web-only phase. The three treatment groups show higher response rates compared to the control condition: the SMS invite increased response propensity by 7.5 p.p., reminders by 9.3 p.p., and invite & reminders by 7.3 p.p. The effect observed for the SMS invite group remained after the CATI fieldwork (8.9 p.p.). Another factor that moderates the effect of text messages is privacy and data security concerns. The effect of SMS reminders was larger for the group with a lower level of concerns, measured as refusing less than six in ten data linkage requests. For this group, the reminders had a positive effect of 7.0 p.p. at the end of the web-only fieldwork compared to the control condition.

Previous survey participation in a longitudinal study can also modify how the panel members react to a new contact mode. There is a positive effect of the SMS for the irregular respondents, who missed more than a third of the previous waves. The irregular respondents receiving the invite or the invite and the reminders showed response propensities 18.1 p.p. and 15.7 p.p. higher, respectively, than those receiving no SMS. This effect did not erode after the CATI fieldwork for those in the SMS invite group, where the response propensity was 21.7 p.p. higher.
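The two behavioural moderators described above rest on simple threshold rules: refusing fewer than six in ten data linkage requests, and missing more than a third of previous waves. A minimal sketch of how such flags could be derived is shown below. The function and argument names are hypothetical, and the handling of panel members with no recorded requests or eligible waves is an assumption, not something stated in the article.

```python
def is_low_privacy_concern(requests_refused: int, requests_made: int) -> bool:
    """Flag lower privacy concern: fewer than six in ten linkage requests refused.

    Integer arithmetic avoids floating-point surprises at the threshold.
    Raising on zero requests is an assumption made for this sketch.
    """
    if requests_made <= 0:
        raise ValueError("requests_made must be positive")
    return requests_refused * 10 < requests_made * 6


def is_irregular_respondent(waves_missed: int, waves_eligible: int) -> bool:
    """Flag irregular respondents: more than a third of previous waves missed."""
    if waves_eligible <= 0:
        raise ValueError("waves_eligible must be positive")
    return waves_missed * 3 > waves_eligible


if __name__ == "__main__":
    # Hypothetical panel members, for illustration only.
    print(is_low_privacy_concern(requests_refused=5, requests_made=10))
    print(is_irregular_respondent(waves_missed=4, waves_eligible=10))
```

Both rules are strict inequalities, so a panel member refusing exactly six in ten requests, or missing exactly a third of waves, falls outside the flagged group.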

The pandemic caused a substantial part of the sample (low web propensity and ring-fenced subsamples) to be shifted from a face-to-face to a web-first strategy. The positive effect of the SMS was only detected for the previously web-first group, which had an average response propensity 6.8 p.p. higher if in the reminders condition and 6.5 p.p. higher when receiving the SMS invite and reminders. After the CATI fieldwork, the SMS invite group and the reminders group still showed response propensities 5.4 p.p. and 6.2 p.p. higher, respectively, than the control condition. An additional finding is that the low web propensity group reacted adversely to the SMS. After the telephone stage of the fieldwork, those receiving an SMS invite presented a response propensity 17.5 p.p. lower than those receiving no SMS.
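The effects in this section are expressed as percentage-point (p.p.) differences in response propensity between a treatment group and the control condition. The sketch below illustrates the arithmetic behind such a difference using a plain two-proportion z-test. It is not the estimation method used in the article, and the counts in the usage example are hypothetical.

```python
import math


def pp_difference(resp_treat: int, n_treat: int, resp_ctrl: int, n_ctrl: int):
    """Return (difference in p.p., z statistic, two-sided p-value).

    Uses a pooled two-proportion z-test, which is a simplifying assumption:
    it ignores clustering within households and any covariate adjustment.
    """
    p_treat = resp_treat / n_treat
    p_ctrl = resp_ctrl / n_ctrl
    diff_pp = (p_treat - p_ctrl) * 100

    pooled = (resp_treat + resp_ctrl) / (n_treat + n_ctrl)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_treat + 1 / n_ctrl))
    z = (p_treat - p_ctrl) / se

    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return diff_pp, z, p_value


if __name__ == "__main__":
    # Hypothetical counts: 60 of 100 respond with SMS, 50 of 100 without.
    diff_pp, z, p_value = pp_difference(60, 100, 50, 100)
    print(f"difference = {diff_pp:.1f} p.p., z = {z:.2f}, p = {p_value:.3f}")
```

With subgroups of this size, even a 10 p.p. difference would not reach conventional significance, which is one reason subgroup effects need to be large to be detected.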

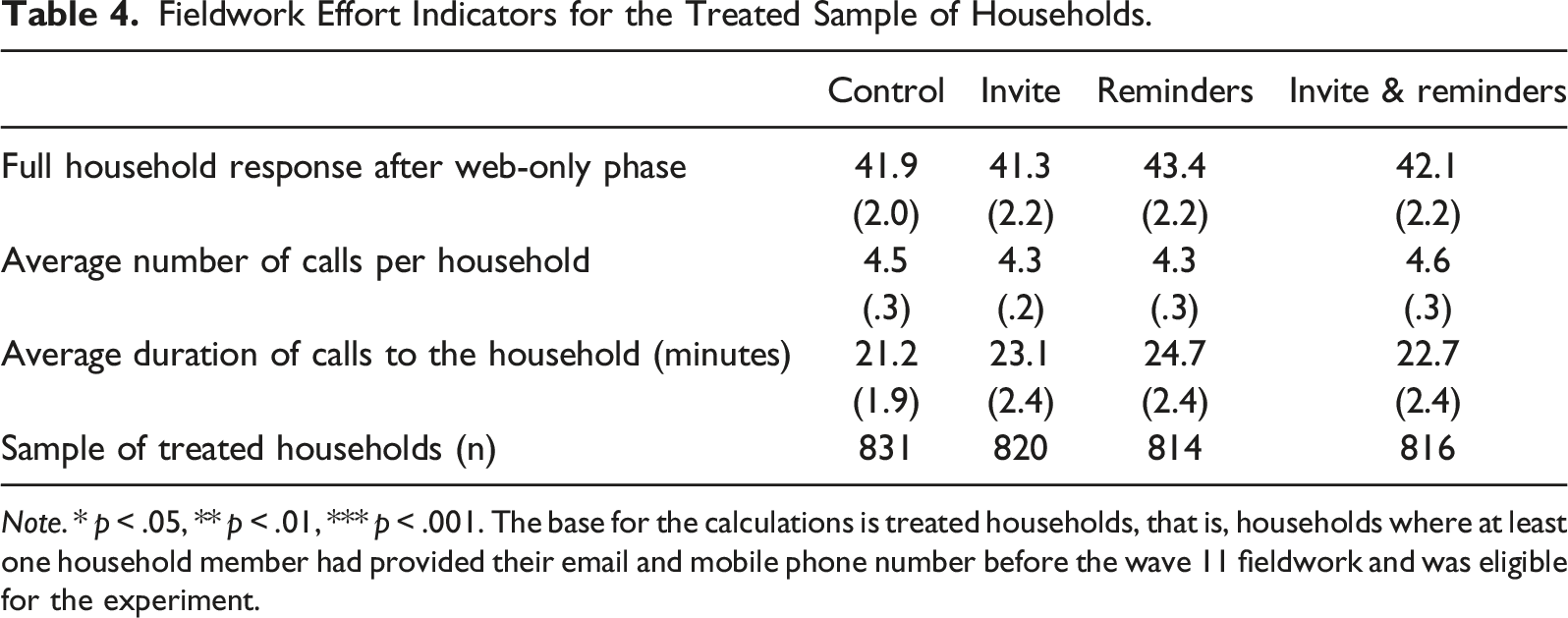

Fieldwork Effort Indicators for the Treated Sample of Households.

Note. * p < .05, ** p < .01, *** p < .001. The base for the calculations is treated households, that is, households where at least one household member had provided their email and mobile phone number before the wave 11 fieldwork and was eligible for the experiment.

Device Selection, Time to Response, and Sample Balance

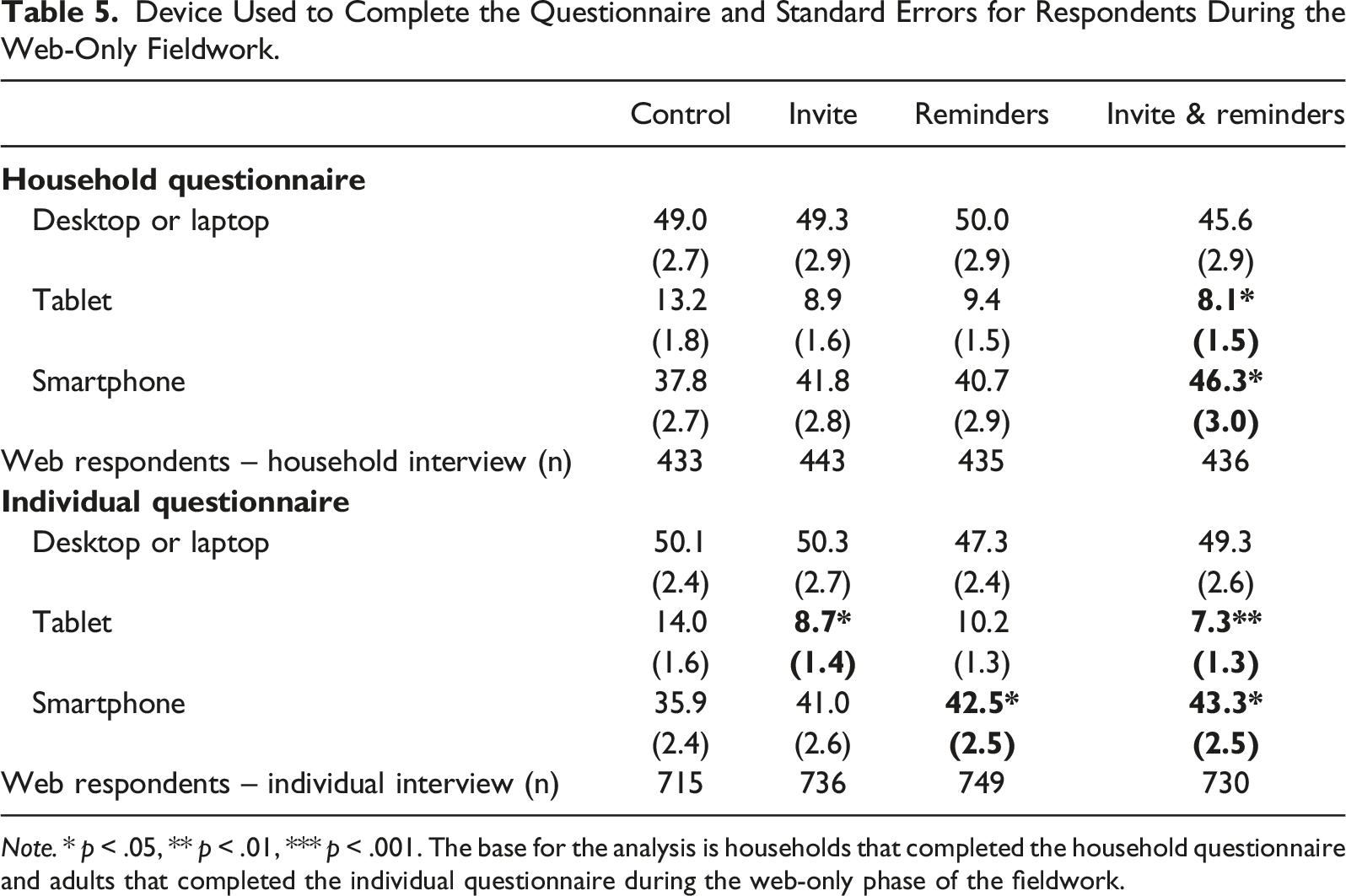

Device Used to Complete the Questionnaire and Standard Errors for Respondents During the Web-Only Fieldwork.

Note. * p < .05, ** p < .01, *** p < .001. The base for the analysis is households that completed the household questionnaire and adults that completed the individual questionnaire during the web-only phase of the fieldwork.

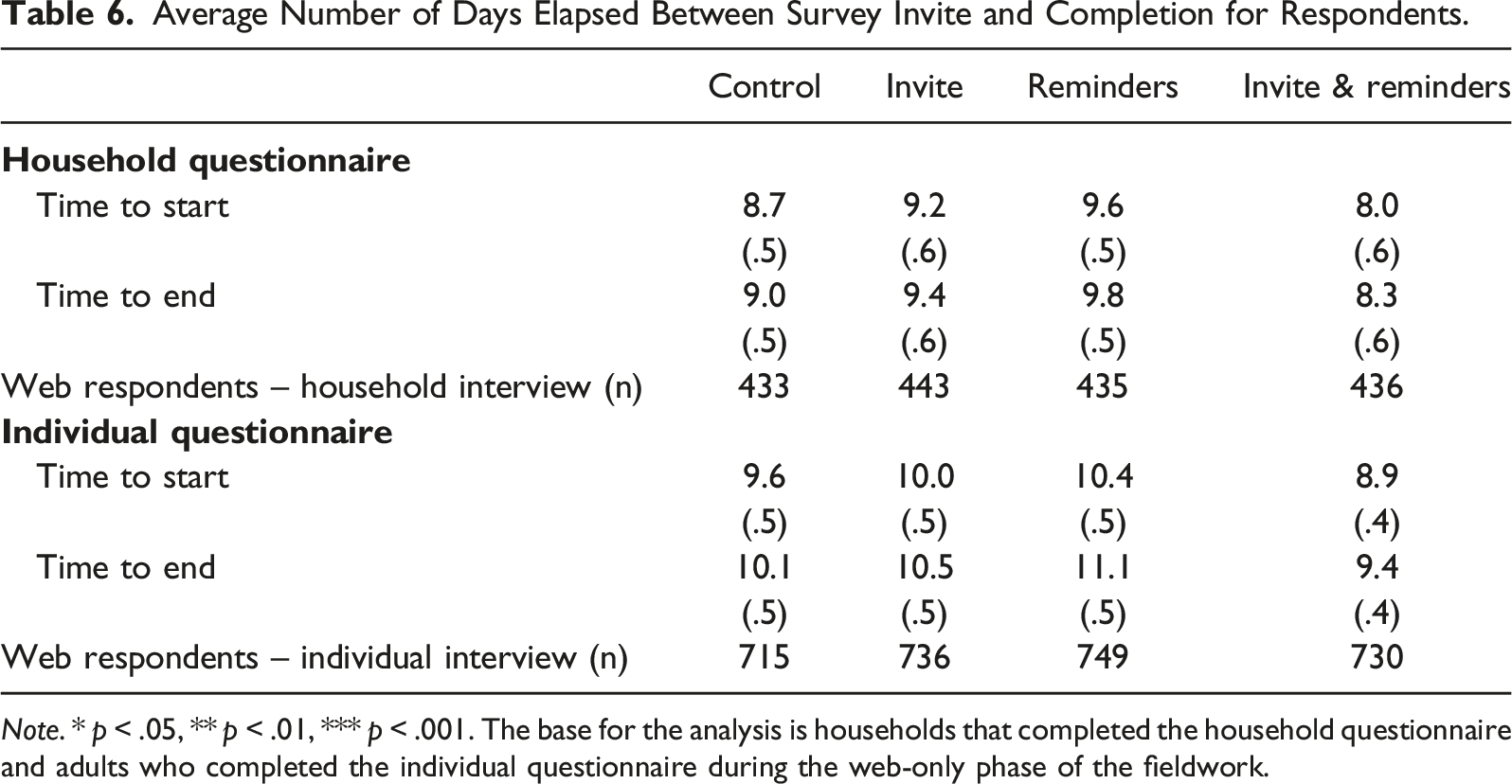

Average Number of Days Elapsed Between Survey Invite and Completion for Respondents.

Note. * p < .05, ** p < .01, *** p < .001. The base for the analysis is households that completed the household questionnaire and adults who completed the individual questionnaire during the web-only phase of the fieldwork.

Finally, we address the effect of the text messages on the sample composition. A response maximisation strategy may be more effective for some sample groups than others, affecting sample balance. We tested a selection of wave 11 variables covering various topics, including demographics or political attitudes, to assess the level of sample balance after the web fieldwork. None of the eleven variables included in the analysis present differences across experimental groups. This lack of effect was expected partly due to the modest impact of the intervention on response propensity. Results of these comparisons are available in the supplementary materials (Table A12).
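One common way to check sample balance on a categorical variable is a Pearson chi-square test of independence between the variable and the experimental group. The article does not specify which test was used, so the sketch below is only an illustration of that general approach. It computes the statistic and degrees of freedom with the standard library, and the table in the usage example is hypothetical.

```python
def chi_square_statistic(table):
    """Pearson chi-square statistic and degrees of freedom for a contingency table.

    `table` is a list of rows of observed counts, for example one row per
    experimental group and one column per category of the balance variable.
    Assumes every row and column total is positive.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)

    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / total
            statistic += (observed - expected) ** 2 / expected

    dof = (len(table) - 1) * (len(table[0]) - 1)
    return statistic, dof


if __name__ == "__main__":
    # Hypothetical respondent counts: rows are experimental groups,
    # columns are categories of one balance variable.
    observed = [[120, 80], [115, 85], [118, 82], [122, 78]]
    statistic, dof = chi_square_statistic(observed)
    print(f"chi-square = {statistic:.2f} on {dof} degrees of freedom")
```

The statistic would then be compared against a chi-square distribution with the reported degrees of freedom to obtain a p-value.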

Discussion

This article explored the benefits of adding text messages to the contact strategy in a sequential mixed-mode design where an interviewer-administered phase follows a web questionnaire. The expected benefits of SMS could include higher response rates and a reduction in fieldwork efforts during the interviewer-administered phase. In addition, other possible effects of the SMS on participants’ behaviour include an increase in the smartphone completion rate or a reduction in the time to start the questionnaire. We tested these aspects using data from an experiment conducted in wave 11 of Understanding Society, a longitudinal study that uses a web-first sequential mixed-mode design.

The results show that adding text messages to a contact strategy that includes letters and emails slightly improves the web survey response rates for those who shared a valid mobile number and email at previous waves. This experiment tested three different configurations of text messages: an invite, two reminders, and a combination of both. The two reminders resulted in a higher response rate at the end of the web-only stage. The experimental groups receiving the invite or the invite plus reminders also exhibited higher response propensities, but these increases were not significant. This finding corroborates the conclusion reached by other experiments involving text messages in web surveys: text messages combined with other contact modes can produce a modest increase in response rates (Bosnjak et al., 2008; Marlar, 2017; Mavletova & Couper, 2014). Furthermore, it is worth noting that this return in response rates comes in exchange for a modest investment, given the relatively low price of the SMS and the logistics compared to other response maximisation strategies. The positive effect of the texts on response faded after the CATI stage of the fieldwork.

The positive effect of SMS on response rates and the optimal number and type of messages vary across sample subgroups. The SMS reminders effectively increased response rates at the web-only phase for most sample subgroups. Younger sample members (16–29), white British people, smartphone users, those allocated to a web-first protocol in the previous wave, participants with lower data security and privacy concerns and those living in cooperative environments were more likely to participate in the survey after receiving two SMS reminders. The invite and reminders boosted response rates for the younger panel members (16–44), those with a university degree, living in a cooperative household, irregular respondents, and panel members allocated to a web-first design in the previous wave. In turn, only two groups reacted positively to the SMS invite (without SMS reminders): irregular respondents and participants living in a cooperative environment.

For some subgroups, the effect of the SMS observed during the web phase remained at the end of the telephone fieldwork, increasing the final response rate. The invite text message worked well for participants living in urban areas, a cooperative environment, irregular respondents and those allocated to a web-first fieldwork strategy at the previous wave. The reminders helped increase the response propensity of the panel members aged between 45 and 64 and those allocated to the web-first treatment in the previous wave.

Another valuable insight from this analysis is that some sample subgroups could suffer an adverse effect from including the SMS in the contact strategy. Two subgroups show a negative trend in response propensity after receiving the SMS: older participants and, particularly, the low web propensity group allocated to a CAPI-first design in the previous wave. When sent text messages, participants over 65 exhibited lower average response propensity after both the web-only and the telephone fieldwork phases, although none of these negative differences was statistically significant. The low web propensity group showed a consistent negative effect of the SMS, which was significant for the final response rate in the SMS invite condition. A likely explanation of this finding is that people reluctant or unable to take part online might feel frustrated when invited to complete the questionnaire in a mode that, in some cases, is not a real possibility for them due to technical barriers or a lack of skills.

In the context of a web-first sequential mixed-mode survey, the expectation was that an increase in response rates during the web stage would result in a lower workload for the interviewers, reducing survey costs. However, the results of the experiment could not confirm this hypothesis. The primary reason for this was the modest increase in individual response rates produced by the text messages at the end of the web-only phase of the fieldwork. Therefore, the proportion of individuals to be interviewed on the phone was similar across experimental groups, resulting in only marginal reductions in fieldwork efforts. Furthermore, in a household survey where the objective is to interview all adults residing in the household, the modest increase in individual response rates did not translate into a larger number of households being fully interviewed before the onset of the CATI fieldwork. Thus, a reduction in fieldwork efforts did not materialise.
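The household argument above can be illustrated with a deliberately simplified calculation. If each adult in a household responded independently with the same probability, a household would be fully interviewed on the web only when every adult responds. The independence assumption and the figures below are hypothetical and are used only to show the arithmetic; in practice, responses within a household are likely to be correlated.

```python
def household_complete_prob(p_individual: float, n_adults: int) -> float:
    """Probability that all adults respond, assuming independent responses."""
    return p_individual ** n_adults


def household_gain_pp(p_control: float, p_treated: float, n_adults: int) -> float:
    """Gain in fully interviewed households, in percentage points."""
    gain = household_complete_prob(p_treated, n_adults) - household_complete_prob(
        p_control, n_adults
    )
    return gain * 100


if __name__ == "__main__":
    # Hypothetical individual response propensities: 50% without SMS, 55% with SMS.
    for n_adults in (1, 2, 3):
        gain = household_gain_pp(0.50, 0.55, n_adults)
        print(f"{n_adults} adult(s): household completion gain = {gain:.2f} p.p.")
```

Under these assumptions, the gain in fully interviewed households shrinks as household size grows, so households with several adults would still need to be issued to the telephone phase.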

Using text messages to contact participants could shape their decision about the device used to complete the web survey. Text messages with a survey link pushed some respondents to complete the survey on their smartphones. This finding was expected in view of the previous research about the use of SMS invites in cross-sectional web surveys (Barry et al., 2021; Crawford et al., 2013; Mavletova & Couper, 2014). However, two patterns emerged that are unique to this study: the percentage of participants completing the survey on a desktop or laptop did not vary across experimental groups (smartphone completion appears instead to have substituted tablet completion), and the increase in smartphone completion was lower than in other experiments. An important distinctive feature of this experiment is that it was conducted in a longitudinal survey where participants already knew about the complexity and length of the questionnaire from previous waves. This accumulated knowledge could play a role in deciding whether to complete the questionnaire on a smartphone.

Another aspect that text messages can affect is the speed of response following receipt of the invite. Quicker responses could help reduce survey costs if, for instance, they prevent reminder letters from being sent. In this study, we did not find that sending text messages in addition to letters and emails resulted in significantly quicker responses. This finding contradicts the empirical literature that showed a systematic earlier completion for those receiving SMS (de Bruijne & Wijnant, 2014; Mavletova & Couper, 2014; McGeeney & Yan, 2016). This contradiction could be due to previous knowledge about the survey. The accumulated experience could prevent participants from accelerating their participation in the study since they know that completing the questionnaire might take a significant amount of time and prefer to wait for a more convenient moment. Finally, the increase in response propensities caused by the text messages did not affect the sample composition.

The research presented here has some limitations. First, the survey experiment was conducted during the COVID-19 pandemic. The social and psychological consequences of the pandemic could have affected how participants reacted to the text messages and the invitation to participate in the survey, so findings may not be fully generalisable to non-pandemic conditions. Second, the sequential mixed-mode design of Understanding Society combined web and CATI. The impact of the text messages on fieldwork efforts and survey costs could differ if a CAPI mode were used instead, not least because CAPI is more costly than CATI and has a different cost structure. More research is needed on the effect of text messages on survey costs in a sequential mixed-mode design. Finally, this experiment was embedded in a longitudinal study, and nothing guarantees that the observed effects would remain unaltered at either earlier or later waves.

Supplemental Material

Supplemental Material for Text Messages to Incentivise Response in a Web-First Sequential Mixed-Mode Survey by Pablo Cabrera-Álvarez and Peter Lynn in Social Science Computer Review.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Understanding Society is an initiative funded by the Economic and Social Research Council [G2014-70] and various Government Departments, with scientific leadership by the Institute for Social and Economic Research, University of Essex, and survey delivery by NatCen Social Research and Kantar Public. The research data are distributed by the UK Data Service.
