Abstract

The response patterns across the fieldwork period are analyzed in the context of a panel study with a sequential mixed-mode design including a self-administered online questionnaire and a computer-assisted telephone interview. Since the timing of participation is modelled as a stochastic process of individuals’ response behaviour, event history analysis is applied to reveal time-constant and time-varying factors that influence this process. Different distributions of panelists’ propensity for taking part in the web-based survey or, alternatively, in the computer-assisted telephone interview can be considered by hazard rate analysis. Piecewise constant rate models and analysis of sub-episodes demonstrate that it is possible to describe the time-related development of response rates by reference to individuals’ characteristics, resources and abilities, as well as panelists’ experience with previous panel waves. Finally, it is shown that exogenous factors, such as a mixed-mode survey design, the incentives offered to participants and the reminders that are sent out, contribute significantly to time-related response after the invitation to participate in a survey with a sequential mixed-mode design. Overall, this contribution calls for a dynamic analysis of response behaviour instead of the categorization of response groups.

Introduction

Current research on computer-assisted survey methods investigates both the response rate and the timing of participation in web surveys and other survey modes (Callegaro et al., 2014; Dillman et al., 2009; Göritz & Stieger, 2009; Gummer & Struminskaya, 2020; Rao & Pennington, 2013; Tourangeau et al., 2013; Van Mol, 2017). This focus continues a long tradition of research on this issue (Becker et al., 2019; Chebat & Cohen, 1993; Göritz, 2014; Houston & Ford, 1976; Huxley, 1980; Rao & Pennington, 2013). However, only a few empirical studies are concerned with the time dimension of participation in computer-assisted surveys. It is of interest to know how long it takes for invitees to respond to a researcher’s request that they take part in a survey (Faria & Dickinson, 1992, p. 51; Rao & Pennington, 2013, p. 652), and how the time that elapses before invitees respond can be shortened. The distribution of the speed of response – that is, the time that elapses between the researchers’ invitation and the invitees’ response in an online survey or computer-assisted telephone interview – among the target sample provides important information for the survey management in regard to cost calculations, sending follow-ups, offering additional incentives, switching to another survey mode, and the final response rate (Becker et al., 2019; Gummer & Struminskaya, 2020; Lynn, 2009; Truell et al., 2002).

In regard to the distribution of the speed of response to a request, on the one hand there are several studies which distinguish between ‘early’ and ‘late’ respondents; on the other hand, there are few studies which analyze the time-related processes and events in the fieldwork period in a theoretically and methodologically adequate way (Becker et al., 2019; Becker & Glauser, 2018; Chebat & Cohen, 1993; Sauermann & Roach, 2013; Van Selm & Jankowski, 2006). Those studies that do exist demonstrate, indirectly at least, that epistemological uncertainty is encountered when one seeks to distinguish between ‘early’ and ‘late’ respondents (Green, 2014; Kreuter et al., 2014; Lugtig, 2014; Rao & Pennington, 2013; Sigman et al., 2014). First, the definition of these types of respondents is inconclusive across these studies (Klingwort et al., 2018, p. 4). Second, several studies use different operationalizations of these respondent groups, and these harbour some problems. Because the value ranges and the durations of the fieldwork periods differ, the definitions are incompatible. Thus, because of different standards of time, it is not possible to compare the findings across surveys. This is also the case for the classification of response groups by reminders: the results for response groups depend on the points in time at which reminders are sent out, which vary across surveys (Sigman et al., 2014, p. 654). Third, the categorization and operationalizations used in these studies are theoretically arbitrary since they are not based on arguments that are deduced from a well-established theory of survey participation (Singer, 2011). They do not contribute to explanations of the timing of survey participation, response speed across target groups and consequences within the fieldwork period. Fourth, there are methodological issues in the studies that use such classifications of response groups. 
These issues arise because these studies are based on a comparative-static view and the use of statistical procedures that are suited for cross-sectional data. Thus, existing findings are often misleading (Chebat & Cohen, 1993; Sauermann & Roach, 2013; Sigman et al., 2014). For example, it cannot be ruled out that target persons would have answered the survey invitation if the fieldwork phase had been longer. Overall, this problem of right-censored cases is not taken into account by cross-sectional designs. The existing findings are also invalid because both the timing of survey participation and the response rate vary within a survey at different time-related stages of the fieldwork (Becker & Glauser, 2018), as well as between surveys or waves within a panel study (Becker et al., 2019). It is observed that the value ranges for ‘early’ and ‘late’ respondents across several studies overlap. Consequently, the definition of respondent groups varies systematically between either surveys or survey modes, and therefore becomes meaningless. Fifth, and finally, studies offer a poor record in terms of the comparison of ‘late’ respondents with non-respondents, in order to detect the ‘causes’ of, or ‘reasons’ for, non-response bias (Chen et al., 2003, p. 200; Lahaut et al., 2002, p. 133; Studer et al., 2013, p. 316). In general, this comparison is based on an inadequate design and on the wrong reference groups. It is made using a cross-sectional design after the end of the fieldwork. However, it has to be considered that responses can occur at any point in time. Therefore, we need comparisons between (potential) respondents and non-respondents at any point in time at which responses take place, regardless of whether respondents are ‘early’ or ‘late’, in order to understand the occurrence of non-response bias.

In other words, there is a question as to whether we need such a classification of response groups. What we need is a theoretically driven and methodically sound analysis of response behaviour across the fieldwork period. From a dynamic longitudinal perspective, there is a need to analyze both dimensions: the timing of survey participation as a stochastic process of individuals’ decisions, and the response rate, as its time-dependent consequence (Singer, 2006, p. 640; Tourangeau et al., 2013, p. 38). There are several reasons for this requirement. From the theoretical angle, analysis of the timing and extent of survey participation contributes to an (indirect) empirical test of theoretical approaches that strive to answer the question of when and why individuals take part in scientific surveys. From the methodological angle, the process of survey participation can be described more realistically by taking into account time-constant factors (e.g. gender) and time-varying covariates (e.g. consecutive reminders, the weather situation) on different analytical levels – micro, meso and macro levels (Blossfeld, 1996). By considering time-varying covariates in an event-oriented design, it is possible to reveal the causalities of this stochastic process of survey response (Blossfeld & Rohwer, 1997). From a statistical angle, event history analysis provides techniques and procedures for handling these theoretical and methodological premises (Blossfeld et al., 2019). A few studies currently exist that demonstrate the validity of this claim (Becker et al., 2019; Becker & Glauser, 2018; Chebat & Cohen, 1993; Durrant, D’Arrigo, & Steele, 2013; Durrant, D’Arrigo, & Müller, 2013; Sauermann & Roach, 2013). 
From a practical angle, evidence-based in-depth knowledge of participation timing, consisting of the time interval between survey launch and first response, as well as of the rate of survey participation at any point in time until the end of fieldwork, is useful for rational survey management and efficient organization (Gummer & Struminskaya, 2020, p. 19). For example, it contributes to improving the efficiency of fieldwork as well as savings in terms of survey time and costs (Lipps et al., 2019).

In order to demonstrate the benefits of a dynamic investigation of the timing of survey participation based on event history analysis, the following questions have to be answered: How long does it take for a number of individuals to reciprocate the invitation to participate in a survey? Which factors – such as invitees’ resources and abilities, or features of the survey management (e.g. follow-up invitations and reminders) – contribute to the timing of individuals’ survey participation? What can researchers do to reduce respondents’ delay in responding to a survey, and the related duration of the fieldwork period? In regard to the inducement of a quicker response rate and the effect of several strategies (such as invitation, reminders and incentives) on individuals’ enhanced survey response, these three questions address one of the main research problems in the area of survey methodology.

Theoretical Consideration

Since participation in a survey is voluntary, the individuals who are asked to take part are free to accept or reject that request (Groves & Couper, 1998, p. 1). Their decision regarding survey participation is thus based on their ‘free will’ (Blossfeld, 1996, p. 197). Therefore, they can choose their own time to respond to the request (Groves & Couper, 1998, p. 32). They can respond immediately after the invitation, at a later more convenient point in time or never. Thus, survey participation, as observed by social researchers, is the result of individuals’ decisions and can occur at any point in time (Sigman et al., 2014). Survey participation therefore has to be modelled as a time-continuous, discrete-state stochastic process (Singer, 2006). Such a probability process presupposes the mathematical description of temporally ordered and random proceedings. Stochastic phenomena are events that develop over time (Aalen et al., 2008, p. 23). A response, as an event, can occur at any point in time across the fieldwork period and is not restricted to a predetermined point in time. Furthermore, there are time-constant factors (e.g. gender and social origin) and/or time-dependent factors (e.g. follow-ups and time restrictions) that influence the outcome and timing of events, such as survey participation, at any time (Blossfeld & Rohwer, 1997, p. 361). In this respect, the response rate of a survey is the consequence of the history of invitees’ responses, which consists of events such as a response occurring over discrete or continuous time in the fieldwork period for a number of individuals who are eligible for survey participation. The invitees’ response speed – that is, the timing of their survey participation – is a function of the time that elapses between the researchers’ invitation and the target persons’ response. Thus, an analysis of response speed has to take the timing and the number of responses into account simultaneously (Chebat & Cohen, 1993, p. 21).

The techniques and statistical procedures of event history analysis can be used for dynamic analysis related to the timing of events such as survey response (Aalen et al., 2008). Event history analysis involves statistical methods for analyzing stochastic processes with discrete states, such as survey response, and continuous time, such as time elapsed in the fieldwork after survey launch (Blossfeld et al., 2019; Kalbfleisch & Prentice, 2002). Thus, event history models might be particularly helpful instruments because they allow a time-related empirical representation of the theoretical arguments in regard to the structure, number and timing of survey participation (Blossfeld & Rohwer, 1997, p. 363). From a theoretical viewpoint, time-changing variables provide the most convincing empirical evidence of the invitees’ propensity to take part, in terms of the transition to response.

Understanding and explaining social processes, such as the propensity for survey response, requires a time-related specification of the past and present conditions under which individuals with a ‘free will’ act, the preferences they pursue at the present time, the beliefs and expectations guiding their survey behaviour, and the survey response that will probably follow immediately or in the future. This means that such an event occurs contingent on previous events and the stochastic process initiated by previous events, such as invitations, incentives or reminders. In sum, the aim of this kind of modelling as applied in the present contribution is to specify the likelihood of survey participation – that is, the hazard rate – as a stochastic and time-variant function of individual resources and the settings of the survey. This hazard rate $r(t)$ is defined as the limit of the conditional probability of a response per unit of time:

$$r(t) = \lim_{t' \to t} \frac{\Pr(t \le T < t' \mid T \ge t)}{t' - t}$$

Given that no response has occurred before $t$, where $T$ denotes the waiting time until the response, this probability reflects the fact that an event y occurs in the time interval from $t$ to $t'$.

According to Blossfeld et al. (2019: 29), this transition rate can be interpreted as the actors’ propensity to change state, such as from non-response to response. This propensity is defined in relation to a risk set at moment $t$, that is, the set of invitees who have not yet responded and can therefore still experience the event.

Parametric estimation of hazard rates is particularly appropriate for this aim. For fine-grained parametric analysis of the speed and time-dependent selectivity of response, the piecewise constant exponential model will be utilized. According to Blossfeld et al. (2019, p. 124), the ‘basic idea is to split the time axis into time periods and to assume that transition rates are constant in each of these intervals but can change between them’. Using this model makes it possible to analyze the participation pattern in the initial phase of fieldwork in comparison to the other phases of the fieldwork period. Applying this procedure, the socially selective differences between ‘early’ and ‘late’ participants are analyzed across time within the fieldwork period. Given theoretically defined time periods, the transition rate for survey participation is defined as follows:
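A sketch of this definition in the textbook notation of Blossfeld et al. (2019), which is assumed here: the time axis is split at points $\tau_1 = 0 < \tau_2 < \dots < \tau_L$, with $\tau_{L+1} = \infty$, and the rate for a panelist with covariate (row) vector $A$ is constant within each interval $I_l$.

```latex
\begin{align*}
r(t) &= \exp\left(\bar{\alpha}_l + A\,\alpha\right) \quad \text{if } t \in I_l, \\
I_l &= \{\, t \mid \tau_l \le t < \tau_{l+1} \,\}, \qquad l = 1, \dots, L.
\end{align*}
```

In this sketch, $\bar{\alpha}_l$ is the period-specific constant and $\alpha$ is the vector of covariate effects, assumed not to vary across periods. Indices for origin and destination state are dropped because only one transition, from non-response to response, is considered.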

Additionally, using non-parametric procedures such as the Kaplan–Meier method of calculating the product-limit estimator, the pattern of response across waiting time and its speed since the invitation to the current wave are described on the basis of relative prevalence across time. By calculating indices, such as the median or other quantiles (quartiles or percentiles), it is possible to show how long it takes a number of panelists to respond. The median value, for example, shows how long it takes until 50 per cent of the eligible invitees have responded. In this way, ‘early’ and ‘late’ panelists can be distinguished empirically for descriptive purposes, on a continuous scale, instead of being based on arbitrary cutoffs. Furthermore, it is then possible to compare the points in time at which survey participation occurs and the development of the response rate across the timeline of the fieldwork period and between different surveys.
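The product-limit logic and the quantiles derived from it can be illustrated with a minimal sketch. The function names and the toy durations are hypothetical and are not taken from the DAB data; exact fractions are used only to avoid rounding issues when comparing against a quantile.

```python
from collections import Counter
from fractions import Fraction


def kaplan_meier(durations, responded):
    """Product-limit estimate: list of (day, share still without response).

    durations: days from invitation to response (or to end of fieldwork)
    responded: True if a response was observed, False if right-censored
    """
    events = Counter(t for t, r in zip(durations, responded) if r)
    exits = Counter(durations)          # everyone leaving the risk set at t
    at_risk = len(durations)
    surv = Fraction(1)
    curve = []
    for t in sorted(exits):
        d = events.get(t, 0)
        if d:
            surv *= Fraction(at_risk - d, at_risk)
            curve.append((t, surv))
        at_risk -= exits[t]             # responders and censored cases drop out
    return curve


def quantile_time(curve, q):
    """First day on which at least a share q of invitees has responded."""
    for t, s in curve:
        if 1 - s >= q:
            return t
    return None                         # quantile not reached within fieldwork


# Hypothetical toy sample: 10 invitees, 3 of them right-censored.
days = [1, 1, 2, 3, 5, 8, 13, 21, 30, 30]
resp = [True] * 7 + [False] * 3
km = kaplan_meier(days, resp)
print(quantile_time(km, Fraction(1, 4)))  # first quartile: 2
print(quantile_time(km, Fraction(1, 2)))  # median: 5
```

Returning `None` when a quantile is never reached mirrors the situation in which a median cannot be calculated because fewer than half of the invitees respond before the end of fieldwork.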

According to Schuster et al. (2020), estimations can be biased systematically when competing events such as the choice of survey modes offered to invitees – that is, two or more cause-specific hazards (Kalbfleisch & Prentice, 2002) – are ignored in the analysis of survival data. Against the background of competing risks – the potentially simultaneous occurrence of mutually exclusive events, such as participation in the online mode versus the computer-assisted telephone interview (CATI) mode – the traditional survival analysis (i.e. Kaplan–Meier product-limit estimation) is inadequate to describe the timing and rate of survey participation. The assumption of standard survival analysis, namely, that the censoring of events (i.e. their non-occurrence) is independent, is not valid in this case. Thus, the Kaplan–Meier estimator is biased since the probability of the event of primary interest is overestimated (Noordzij et al., 2013, p. 2672). The overestimation of probabilities increases with the running risk time. Therefore, alternative non-parametric procedures of competing risk analysis – the cumulative incidence competing risk method – are used to describe the patterns of panelists’ participation across the fieldwork period. Since Kaplan–Meier plots are biased in the presence of competing risks, the cause-specific cumulative incidence function (CIF), which is the probability of using a specific survey mode offered before the end of the fieldwork period, is estimated instead.
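The overestimation can be made concrete with a small sketch that compares the non-parametric cumulative incidence (the Aalen–Johansen form) with the complement of a Kaplan–Meier estimate in which responses in the other mode are treated as censored. Function names, mode labels and the toy data are hypothetical.

```python
from fractions import Fraction


def cumulative_incidence(durations, modes, mode):
    """CIF for one mode at the end of follow-up.

    modes: 'web' or 'cati' for a response in that mode, None if right-censored.
    Each increment is (overall share still without any response just before t)
    times (responses in `mode` at t / number at risk at t).
    """
    at_risk = len(durations)
    overall_surv = Fraction(1)
    cif = Fraction(0)
    for t in sorted(set(durations)):
        here = [m for d, m in zip(durations, modes) if d == t]
        d_mode = sum(1 for m in here if m == mode)
        d_any = sum(1 for m in here if m is not None)
        cif += overall_surv * Fraction(d_mode, at_risk)
        overall_surv *= Fraction(at_risk - d_any, at_risk)
        at_risk -= len(here)
    return cif


def one_minus_km(durations, modes, mode):
    """Naive 1 - KM: responses in the competing mode are treated as censored."""
    at_risk = len(durations)
    surv = Fraction(1)
    for t in sorted(set(durations)):
        here = [m for d, m in zip(durations, modes) if d == t]
        d_mode = sum(1 for m in here if m == mode)
        surv *= Fraction(at_risk - d_mode, at_risk)
        at_risk -= len(here)
    return 1 - surv


# Hypothetical toy sample of six invitees.
days = [1, 2, 3, 4, 5, 6]
modes = ["web", "cati", "web", None, "cati", "web"]
print(cumulative_incidence(days, modes, "web"))   # 7/12
print(cumulative_incidence(days, modes, "cati"))  # 5/12
print(one_minus_km(days, modes, "web"))           # 1
```

In this toy sample the two cumulative incidences add up to the overall share of respondents, whereas the naive estimates for the two modes (1 and 3/5) add up to more than one.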

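For the multivariate analysis of the competing risks in terms of participation in the online survey versus CATI, the exponential model is specified as a cause-specific hazards model, in which panelists who respond in the competing mode are treated as censored.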

Another approach – the sub-distribution hazards approach proposed by Fine and Gray (1999) – is often seen as the most appropriate method to use for analyzing competing risks. In contrast to the cause-specific hazards model, ‘subjects who experience a competing event remain in the risk set (instead of being censored), although they are in fact no longer at risk of the event of interest’ (Noordzij et al., 2013, p. 2673). This precondition is necessary in order to establish the direct link between the covariates and the CIF to predict the hazard ratios. However, this makes it difficult to interpret them in a straightforward way and is therefore not appropriate for etiological research (Schuster et al., 2020, p. 44). By taking competing risks into account, the coefficients estimated by the stcrreg module implemented in the statistical package Stata can be used to compute the cumulative incidence of competing risks and to depict their hazards in a CIF plot. In sum, the ‘cause-specific hazard model estimates the effect of covariates on the cause-specific hazard functions, while the Fine-Gray subdistribution hazard model estimates the effect of covariates on the subdistribution hazard function’ (Austin & Fine, 2017, p. 4393).
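The difference between the two hazard concepts lies in the risk set, which can be written out explicitly. The notation is an assumption made for this sketch: $T$ is the waiting time until a response, $D$ the survey mode in which the response occurs, and $k$ the mode of interest.

```latex
\begin{align*}
\lambda_k^{\mathrm{cs}}(t) &= \lim_{\Delta t \to 0}
  \frac{\Pr(t \le T < t + \Delta t,\ D = k \mid T \ge t)}{\Delta t} \\
\lambda_k^{\mathrm{sd}}(t) &= \lim_{\Delta t \to 0}
  \frac{\Pr\bigl(t \le T < t + \Delta t,\ D = k \mid T \ge t \ \text{or}\ (T < t \ \text{and}\ D \ne k)\bigr)}{\Delta t} \\
\mathrm{CIF}_k(t) &= \Pr(T \le t,\ D = k)
  = 1 - \exp\Bigl(-\int_0^t \lambda_k^{\mathrm{sd}}(u)\,du\Bigr)
\end{align*}
```

The conditioning event in the second line keeps panelists who have already responded in the other mode in the risk set, which is what links the sub-distribution hazard directly to the CIF in the third line.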

Data and Variables

Data Set

For the empirical demonstration of the event history analysis of survey participation, the paradata on the fieldwork period of the DAB panel study and information about the panelists are used (Becker et al., 2020). Since the paradata provide exact time references for the individuals’ receipt of an invitation to participate in the survey, and their survey response (Kreuter, 2015), it is possible to utilize the techniques and procedures of event history analysis discussed above. This panel study was initiated to investigate the dynamics and social mechanisms of educational and occupational trajectories after compulsory schooling. In 2012, the project started with the collection of longitudinal data regarding origin- and migration-related educational opportunities and occupational situations of adolescents and young adults in the German-speaking cantons of Switzerland. The target population of DAB consists of 8th graders in the 2011/12 school year (born around 1997) who were enrolled in regular classes in public schools. The panel data are based on a random and 10 per cent stratified gross sample of 296 school classes, out of a total universe of 3045 classes. A disproportionate sampling of school classes from different school types, as well as a proportionate sampling of school classes regarding the share of migrants within schools, was applied. At school level, a simple random sample of school classes was chosen. The initial probability sampling is based on data obtained from the Swiss Federal Statistical Office.

Between January 2012 and June 2020, eight waves of the DAB panel study have been realized, using sequential mixed-mode surveys. Push-to-web procedures are used, ‘encouraging as many sample members as possible to participate by web [which] minimizes costs, while the use of interviewer-administered modes to follow-up non-respondents can result in improved response rates’ (Lynn, 2020, p. 19; see also: de Leeuw, 2018, p. 76). From a cost and response rate perspective, the first survey mode is a web-based online questionnaire, the second mode is a CATI and the third mode is a paper-and-pencil interview (PAPI). Initially, the adolescents are asked to take part in the online survey. Individuals who do not respond in the first mode of the push-to-web survey after three digital reminders are asked after about 12 days to respond using the other modes. Due to the low number responding in the PAPI mode (106 out of 13,145 individual units), this mode is not considered.

While the panelists were interviewed within their school classes in the first three waves, they have been followed continuously since the fourth wave (in October and November 2014), that is, after leaving compulsory schooling. As incentives are effective as regards improving the response rate in push-to-web surveys (Singer & Ye, 2013; Göritz, 2015), the survey invitees in the DAB study received an incentive. In the fourth wave, one half of the contacted panelists received a voucher as a prepaid incentive, while the other half did not receive any incentive (Becker & Glauser, 2018). After the fifth wave (June–August 2016), different incentives – such as a voucher (worth 10 Swiss Francs), a ballpoint pen (worth 2 Swiss Francs) or cash (10 Swiss Francs banknote) – have been used for eligible panelists (Becker et al., 2019). The average response rate was about 80 per cent for each of the waves (Becker et al., 2020, p. 130). In the first wave, the gross sample consisted of 3815 individuals; this declined to 2363 panelists contacted in Wave 8. The response rate is defined as the share of eligible units that responded by starting and completing the online questionnaire or the CATI (RR1; AAPOR, 2016, p. 61).

Dependent and Independent Variables

The dependent variable is a panelist’s survey response as a stochastic event across the fieldwork period. The delay (measured on a daily basis) between the invitation to take part in the survey and the start of completing the online questionnaire or the start of a telephone interview is of interest (Truell et al., 2002, p. 47).
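How such a daily delay and its censoring indicator could be derived from paradata timestamps is sketched below. The function name and all dates are hypothetical; the sketch assumes that invitees without a response by the end of fieldwork are right-censored at that date.

```python
from datetime import date


def response_delay(invited, responded, fieldwork_end):
    """Return (days, event) for one panelist.

    event = 1: a response was observed after `days` days
    event = 0: right-censored at the end of fieldwork
    """
    if responded is not None and responded <= fieldwork_end:
        return (responded - invited).days, 1
    return (fieldwork_end - invited).days, 0


# Hypothetical dates, not taken from the DAB paradata.
invitation = date(2020, 5, 4)
end = date(2020, 6, 30)
print(response_delay(invitation, date(2020, 5, 11), end))  # (7, 1)
print(response_delay(invitation, None, end))               # (57, 0)
```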

As a time-varying independent variable, the panel waves are indicated by dummy variables; it is interesting that the different waves also involve different unconditional incentives, which are enclosed in the personalized invitation letter, including an official header, sent via traditional mail (Becker et al., 2019). The participation in a previous panel wave is also considered, and the chosen survey mode is taken into account.

The follow-up invitation, as well as the series of digital reminders (e-mail), represents a type of time-varying covariate which indicates the impact of survey management on the invitees’ timing and speed of response. Each of these survey-related interventions is indicated by a time-varying dummy variable. They change their value over the course of the observed time interval of the fieldwork period. Using this design, causal inference on the speed of survey response will be revealed from a series of treatments, such as the reminders given to the same individuals at different points in time (Blossfeld & Rohwer, 1997).
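One common way to represent such time-varying dummies is episode splitting: each panelist's waiting time is cut into sub-episodes at the days on which a reminder is sent, so that the covariate is constant within each sub-episode. The sketch below is a minimal illustration; the function name and the reminder days (4, 8 and 12) are hypothetical.

```python
def split_episode(duration, event, reminder_days):
    """Split one spell [0, duration] at the days on which reminders are sent.

    Returns rows (start, stop, event, reminders_sent_so_far). Only the last
    sub-episode can end with the event; earlier ones end as censored.
    """
    rows = []
    start = 0
    sent = 0
    for day in sorted(reminder_days):
        if day >= duration:
            break                      # reminder after the spell ended
        if day > start:
            rows.append((start, day, 0, sent))
            start = day
        sent += 1
    rows.append((start, duration, event, sent))
    return rows


# A panelist who responds on day 9, after two of three hypothetical reminders.
for row in split_episode(9, 1, [4, 8, 12]):
    print(row)
# (0, 4, 0, 0)
# (4, 8, 0, 1)
# (8, 9, 1, 2)
```

The resulting rows can be passed to any rate model that accepts start and stop times, with the reminder count (or a set of dummies derived from it) entering as a covariate.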

To control for social heterogeneity in the sample, different time-constant sociodemographic characteristics of the panelists are considered. Based on previous studies, the panelists’ gender (reference category: male) as well as social origin is included in the multivariate analysis. The panelists’ social origin is measured by the well-established class scheme suggested by Erikson and Goldthorpe (1992). The reference class consists of the offspring from the upper service class. The interviewees’ language proficiency is indicated by their standardized grade point average in the German language. Their language ability is operationalized by the use of the German language in the private household (reference category: other languages). The panelists’ education is measured by the school type in which they were enrolled until the end of their compulsory schooling. Each of these characteristics correlates with the individuals’ computer literacy, competences and skills on the use of computers (Göritz, 2014).

Empirical Results

The empirical analysis consists of three steps. First, the speed and time-related pattern of survey participation is described. Second, the social selectivity of this timing is analyzed within different stages of the fieldwork period. The effect of time-varying covariates, such as incentives or previous response behaviour, on the speed of response to the survey invitation is estimated in the third step. The effect of the digital follow-up invitation and reminders on the timing of response is also revealed in this step.

Description of Speed and Time-Related Pattern of Online Survey Participation

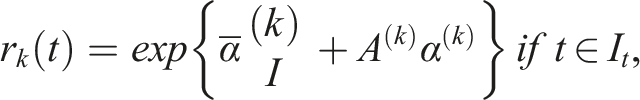

First of all, the timing of survey participation is described by survival curves, that is, the relative prevalence of panelists who had not yet started completing the questionnaire at a specific point in time. Regardless of the competing risks between the offered survey modes, the survival curves are estimated using the Kaplan–Meier method, with the product-limit estimator (i.e. the marginal value of the life-table estimates for time intervals decreasing to zero) as the statistical outcome.

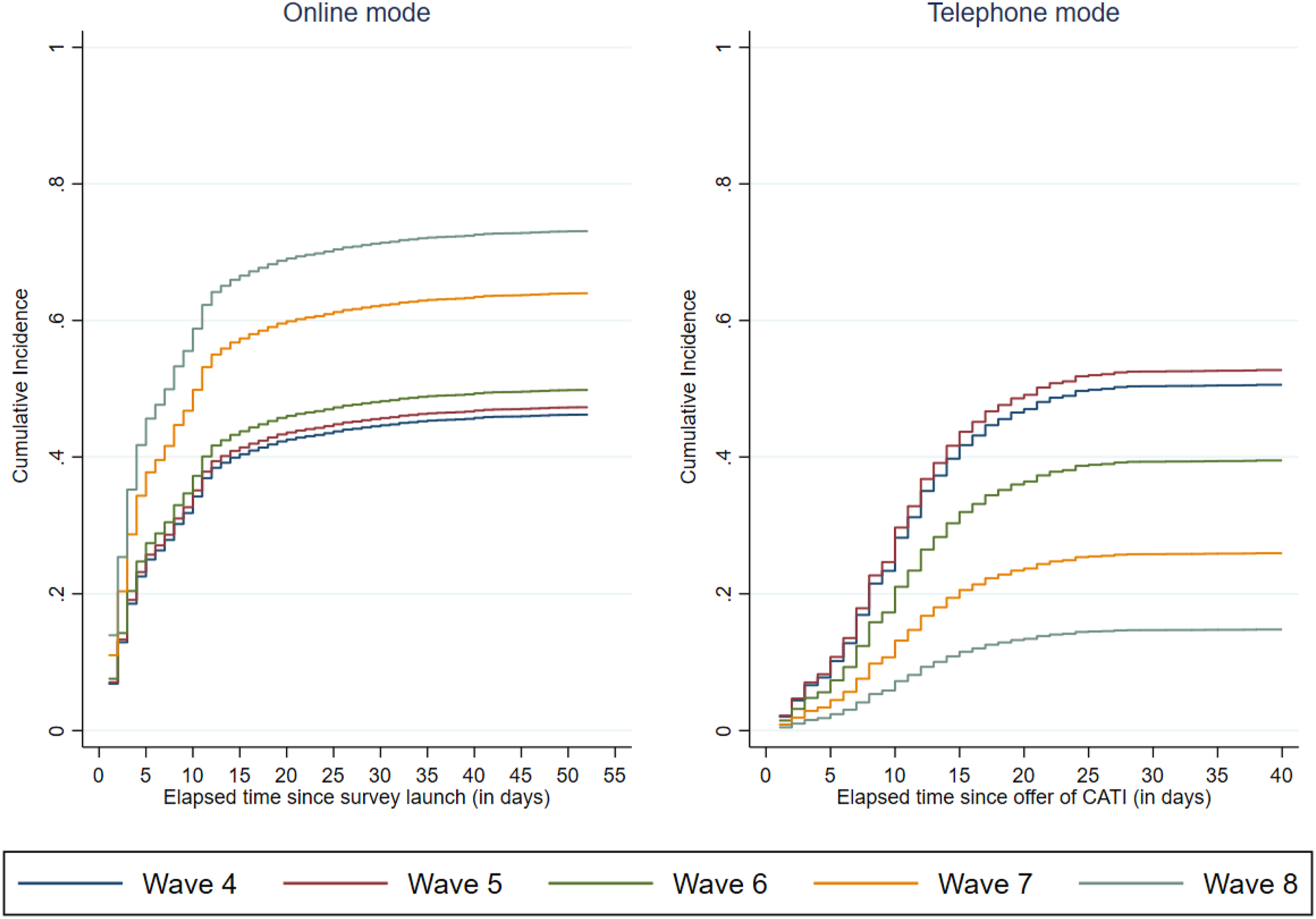

Figure 1 shows for the product-limit estimator (left-hand panel) that the response rate and response speed are relatively high in the initial fieldwork period and are highest for panelists in the most recent waves, 7 and 8, compared to previous waves. The response rate is 84 per cent in Wave 4, 80 per cent in Wave 5, 76 per cent in Wave 6, 79 per cent in Wave 7 and 81 per cent in Wave 8. The samples decreased from 2645 panelists in Wave 4 to 2492 in Wave 8. The failure estimates, that is, the relative prevalence of responses at any time, as its complementary measure (right-hand panel) confirm this finding. Overall, this response pattern – rapid start and response in the early stages of the fieldwork period, followed by a gradual decrease in later stages (lowest for Wave 4) – is observed for each of the waves. The timing of survey participation can be described by the median values across waves (see the vertical dotted lines crossed by a horizontal dotted line in the left-hand panel of Figure 1). In Wave 4, it took exactly two weeks till at least 50 per cent of the invitees had responded. This parameter increased to 15 days in Wave 5 and 16 days in Wave 6. We then observe an acceleration of the response speed, since it took just 1 day till 50 per cent of invitees had responded in Wave 7, and 7 days in Wave 8.

Figure 1. Timing of survey participation across panel waves (Kaplan–Meier method).

Overall, we demonstrate that the quantiles of the empirical response time distribution seem to be adequate for describing the timing of survey participation. If the first quartile is used for measuring the increased response speed across panel waves, it is found that it took five days after the invitation till 25 per cent of the invitees had responded. This parameter decreased to four days in Wave 6, then to three days in Wave 7 and finally to two days in Wave 8.

If one uses this parameter for the classification of ‘early’, ‘intermediate’ and ‘late’ respondents, it becomes obvious that the tempo of responses for each of these groups is different for each of the waves. While in Wave 4, for example, the invitees could be defined as ‘early’ respondents when they responded within four days after being invited to take part in the survey, and they were classified as ‘intermediate’ respondents if they responded within 15 days, for Wave 8, the class levels are much lower: they are ‘early’ respondents if they respond within two days, and the remaining non-respondents are ‘intermediate’ respondents if their response occurs before the end of the first week; the respondents in the remaining risk sample after a week are ‘late’ respondents. It should be noticed that the definition of an ‘intermediate’ respondent in Wave 8 is almost consistent with the definition of an ‘early’ respondent in Wave 4. This finding demonstrates that the definition of such response groups is rather confusing. The use of quartiles, however, makes it possible to compare the timing of response across surveys without any reference to determined value ranges of the response.

The finding is replicated by considering the competing risks regarding the choice between different survey modes across the fieldwork period and across panel waves. For this purpose, the CIFs are estimated (see Figure 2). In the left-hand panel, it is shown that for the online mode the response rate increased across panel waves. While the median values of the timing of the response in this initial mode are the same as those for each of the survey modes, the response rates are different. The response rate increased continuously across the waves, from 46 per cent in Wave 4 to 76 per cent in Wave 8. At the same time, the response rate in the telephone mode (right-hand panel) decreased from 52 per cent in Wave 4 to 15 per cent in Wave 8. The median value estimated by the traditional survival analysis is 10 days after the first offer of the CATI mode in Wave 4 (i.e. 22 days after the survey launch), 15 days in Wave 5 and 27 days in Wave 6. For waves 7 and 8, it is not possible to calculate the median value. In these waves, most of the invitees responded in the initial mode, while the non-respondents to whom the alternative mode had been offered were less willing to respond in time.

Figure 2. CIF – comparison between survey modes across panel waves.

In sum, the findings confirm our conclusion that the classification of response groups is not useful. However, the positive message is that the use of non-parametric procedures and related univariate parameters, such as quartiles, provides descriptions of the timing of survey participation and the development of the overall response rate across the time that elapses after survey launch.

Dynamics of Period-Specific Survey Participation

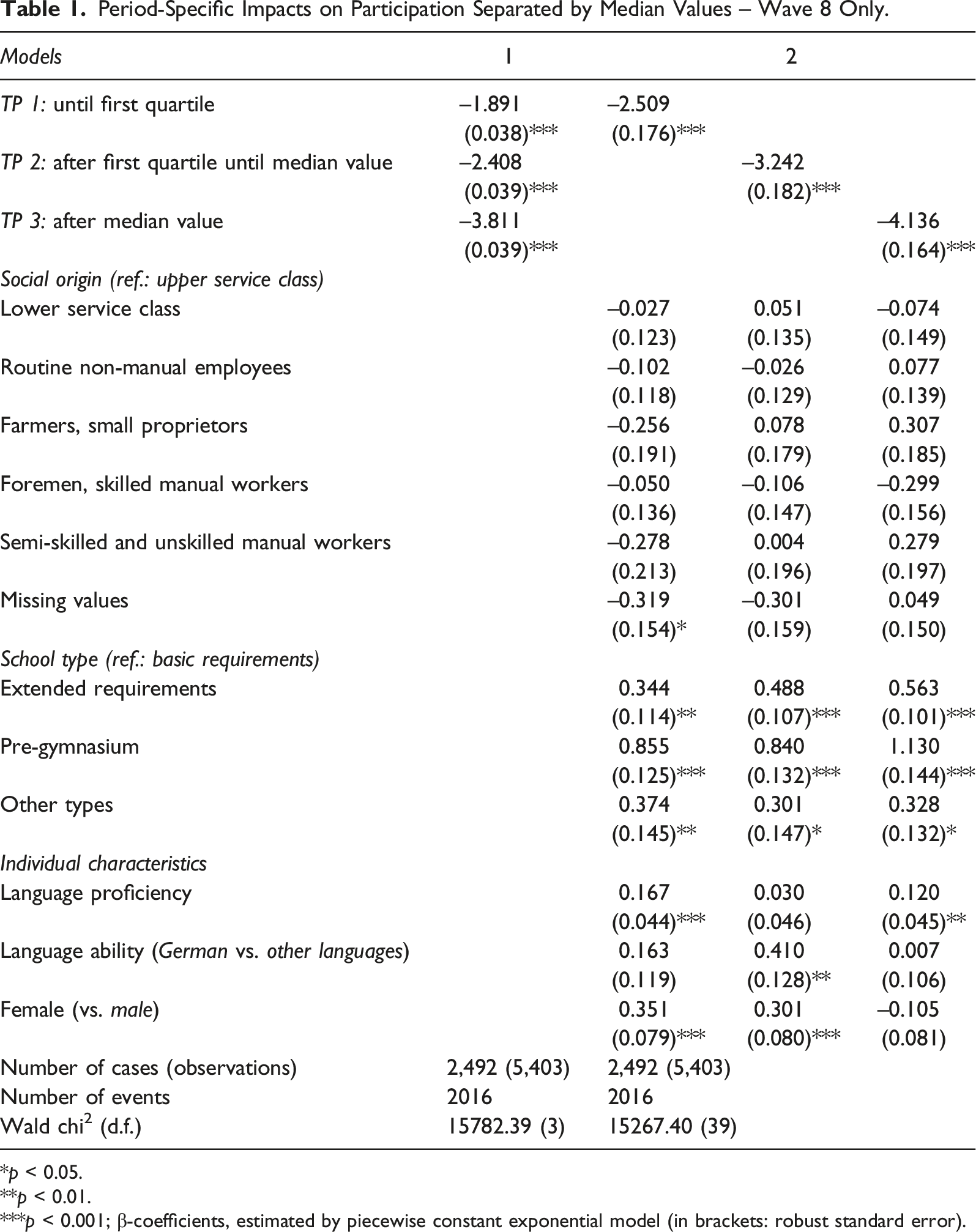

Period-Specific Impacts on Participation Separated by Median Values – Wave 8 Only.

*p < 0.05; **p < 0.01; ***p < 0.001; β-coefficients, estimated by piecewise constant exponential model (in brackets: robust standard error).

The first sub-period lasts two days, and the second sub-period lasts seven days. The third sub-period consists of the rest of the fieldwork period. By considering three points in time (TP), it becomes obvious that the likelihood of survey participation decreases across the fieldwork period (models 1 and 2).
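Without covariates, the maximum likelihood estimate of a piecewise constant rate is simply the number of responses in a sub-period divided by the total time at risk spent in it. The sketch below illustrates this with cut points at days 2 and 7, which is one reading of the sub-periods described above and therefore an assumption; the function name and the toy data are hypothetical.

```python
import math


def piecewise_rates(durations, events, cuts):
    """Rate per interval (lo, hi]: responses in the interval / exposure time.

    durations: days until response or censoring
    events:    1 if a response was observed, 0 if right-censored
    cuts:      interior cut points, e.g. (2, 7) gives (0,2], (2,7], (7,inf)
    """
    bounds = [0] + list(cuts) + [math.inf]
    n = len(bounds) - 1
    exposure = [0.0] * n
    count = [0] * n
    for t, e in zip(durations, events):
        for i in range(n):
            lo, hi = bounds[i], bounds[i + 1]
            if t > lo:                          # spell reaches this interval
                exposure[i] += min(t, hi) - lo  # time at risk spent in it
                if e and t <= hi:               # response falls in it
                    count[i] += 1
    return [c / x if x > 0 else float("nan") for c, x in zip(count, exposure)]


# Hypothetical toy sample: eight invitees, two right-censored at day 30.
days = [1, 1, 2, 4, 7, 10, 30, 30]
resp = [1, 1, 1, 1, 1, 1, 0, 0]
print(piecewise_rates(days, resp, (2, 7)))  # rates 3/14, 2/22 and 1/49
```

In this toy sample the estimated rate falls from one sub-period to the next, which is the kind of pattern the period-specific constants in the models are meant to capture.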

Furthermore, it is revealed that the patterns of socially selective participation are somewhat different for the different stages of the fielding. First of all, there is no selectivity of participation in terms of social origin. Second, however, the effect of panelists’ education increases across the sub-periods in favour of well-educated individuals compared to panelists with a lower educational level. In particular, this is true for panelists who were enrolled in a pre-gymnasium and a secondary school with extended requirements. This type of selectivity might explain the education bias in the realized survey. Third, panelists with pronounced language proficiency are more likely to take part in the survey, while language ability provides no systematic effect across each of the sub-periods. However, until the median value of the delay in survey response, it is found that individuals with a strong ability in the German language are more likely to respond than their counterparts. Fourth, and finally, it is revealed that women are more likely to respond immediately after survey launch or in the first stages of the fieldwork period, than the male panelists. This persistent gender effect should be subjected to detailed analysis in the future (Becker, 2021).

In sum, this analysis demonstrates again that it is more informative to analyze different phases of the fieldwork than to classify different response groups. These stages provide information about how likely it is for invitees to catch up on their delayed response.

Ways of Accelerating Survey Response Speed

How can an acceleration of the survey response speed, in terms of a shift in the timing of response to earlier stages of the fieldwork, be explained? What are the consequences of this development within a panel with a sequential mixed-mode design? Incentives of an increasing value across panel waves might be expected to increase the response speed across the waves, while the response rates remain rather constant. While in the fourth wave, half of the invitees received a voucher (worth 10 Swiss Francs) and the other half received no incentive, each of the panelists received a voucher in Wave 5, an engraved ballpoint pen in Wave 6 and cash (a 10 Swiss Francs banknote) in Wave 8.

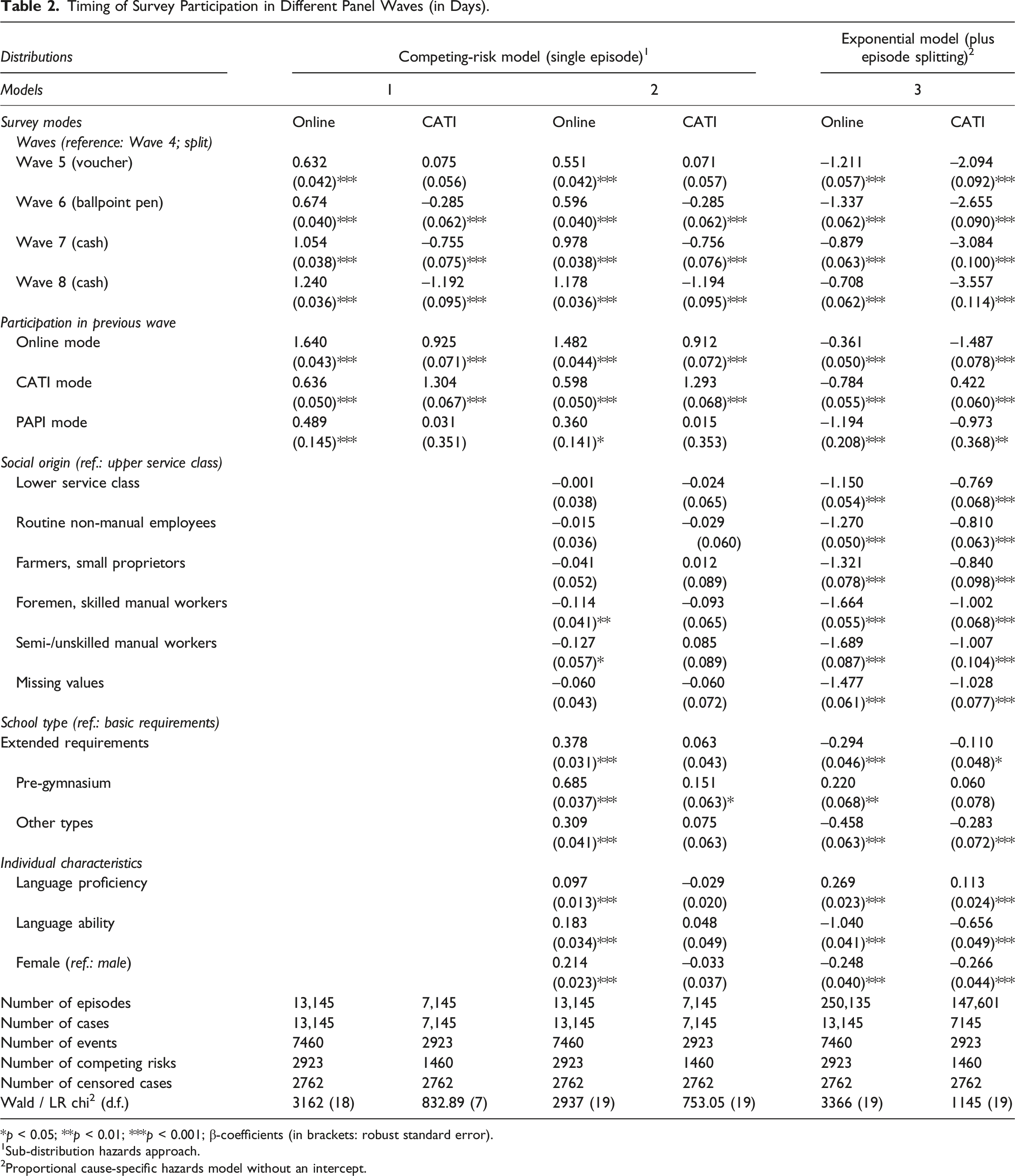

Timing of Survey Participation in Different Panel Waves (in Days).

*p < 0.05; **p < 0.01; ***p < 0.001; β-coefficients (in brackets: robust standard error).

¹ Sub-distribution hazards approach.

² Proportional cause-specific hazards model without an intercept.

Even in-kind gifts, such as a voucher or a ballpoint pen, result in a decreased delay in survey response. They also lead to an increased response in the initial survey mode. The positive impact of participation in the previous panel wave and of the previously chosen survey mode on the timing of the survey response confirms this conclusion. On the one hand, the reproduction of mode choice probably indicates panelists’ mode preference. Panelists who previously took part in the online mode mostly choose the same mode in the next wave. This is also true for the preference for the CATI mode, but there are quantitative differences in favour of the online mode. On the other hand, it is also found that panelists switch to another mode in the next wave. However, the trade-off is in favour of the online mode. These findings are valid even if one considers the panelists’ characteristics (model 2).

By utilizing the proportional cause-specific hazards model (without an intercept), it is possible to quantify the impact of time-varying covariates on the individuals’ timing of their response. While for panelists who received cash in Wave 8, an average delay of

For panelists invited to participate in Wave 8 and who took part in the online mode previously, one could expect an average delay of

Furthermore, it is possible to evaluate another effect of fieldwork management on the timing of survey participation. It is assumed that the digital follow-up invitation, sent in addition to the initial invitation letter delivered by regular post, as well as the digital reminders, ‘caused’ an increase in survey participation and stopped the target persons who received them from delaying their response to a future point in time.
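How a reminder enters an exponential model as a time-varying covariate can be sketched by episode splitting: each spell is cut at the day the reminder is sent, so that a dummy variable switches from 0 to 1. With this single dummy and no further covariates, the estimated β equals the logarithm of the ratio of the two occurrence/exposure rates. The reminder day, the spells and the function names below are hypothetical.

```python
import math


def split_at_reminder(duration, event, reminder_day):
    """Return sub-episodes (reminder_dummy, exposure, event) for one spell."""
    if duration <= reminder_day:
        return [(0, duration, event)]
    # no event can occur in the first part, because the spell continues
    return [(0, reminder_day, 0), (1, duration - reminder_day, event)]


def reminder_beta(spells, reminder_day):
    """Log rate ratio (after vs. before the reminder) from events per exposure."""
    events = [0, 0]
    exposure = [0.0, 0.0]
    for duration, event in spells:
        for dummy, expo, ev in split_at_reminder(duration, event, reminder_day):
            events[dummy] += ev
            exposure[dummy] += expo
    if 0 in events:
        raise ValueError("need at least one response before and after the reminder")
    return math.log((events[1] / exposure[1]) / (events[0] / exposure[0]))


# Hypothetical spells: (days until response or censoring, 1 = responded).
spells = [(3, 1), (5, 0), (8, 1), (10, 1), (12, 0)]
beta = reminder_beta(spells, reminder_day=6)
```

Such a bare before/after comparison also absorbs any general change in the response rate across the fieldwork period, which is one reason why the estimates reported in this contribution rest on models with additional covariates and shorter effect windows.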

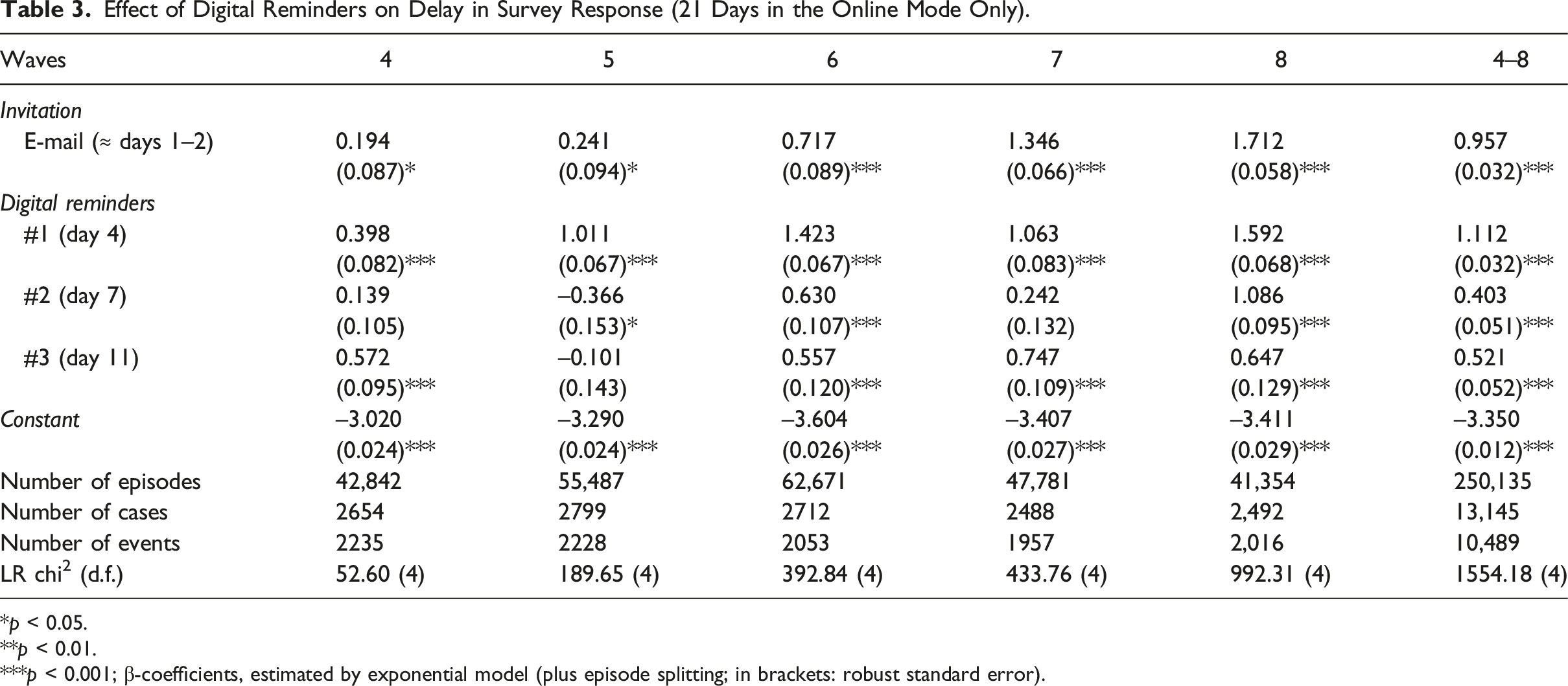

Effect of Digital Reminders on Delay in Survey Response (21 Days in the Online Mode Only).

*p < 0.05; **p < 0.01; ***p < 0.001; β-coefficients, estimated by exponential model (plus episode splitting; in brackets: robust standard error).

This development is probably also based on the effect of different prepaid incentives across the waves. Overall, this feature of survey management is useful for enhancing panelists’ participation in terms of the timing of their response. For 1 to 3 days, the first reminder raised the response rate by between 49 per cent (in Wave 4) and 391 per cent (in Wave 8).
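Percentage effects of this kind are conventionally obtained from a β-coefficient of an exponential model as (exp(β) − 1) × 100. The sketch below assumes that the percentages quoted above were derived in this way. The β values it produces are back-calculated from those percentages for illustration and are not read from the table.

```python
import math


def percent_change_in_rate(beta):
    """Percentage change in the response rate implied by a coefficient beta."""
    return (math.exp(beta) - 1.0) * 100.0


def beta_from_percent(percent):
    """Inverse transformation: coefficient implied by a percentage change."""
    return math.log(1.0 + percent / 100.0)


# Back-calculated, illustrative coefficients for the two percentages in the text.
beta_wave4 = beta_from_percent(49)   # about 0.40
beta_wave8 = beta_from_percent(391)  # about 1.59
```

Under this assumption, an increase of 391 per cent corresponds to a response rate that is 4.91 times the rate without the reminder.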

Summary and Conclusion

In conjunction with research on the timing of survey participation and the development of response rates across a fieldwork period, the aim of this contribution is twofold. On the one hand, it demonstrates that different classifications of invitees into ‘early’, ‘intermediate’ and ‘late’ respondents are without any epistemological or methodological foundation. On the other hand, both dimensions – the timing of survey participation as a stochastic process of individuals’ response and the development of the response rate as its time-related consequence – are investigated by analyzing paradata of the fieldwork period of an established panel study. The paradata are linked with the characteristics of surveys and eligible panelists. For the description and multivariate estimations of response speed across sequentially mixed modes and panel waves, the techniques and procedures of event history analysis are utilized to demonstrate an alternative way to analyze the time dimensions of target persons’ response behaviour.

Different procedures of non-parametric survival analysis and parametric models of event history analysis offer theoretically, methodologically and statistically adequate ways to answer the question: How long does it take for a number of individuals to respond to the invitation to participate in a survey? These procedures deliver precise information on the timing of survey participation, which is more useful than the categorization of ‘early’, ‘intermediate’ and ‘late’ respondents. They also provide information on the trajectory of responses and non-responses across the time that elapses after the survey launch. For the analysis of the impacts of various factors on invitees’ timing of their survey participation, it is possible to consider individuals’ resources and abilities, as well as features of the survey management. It becomes obvious that important information is missing which is necessary to understand survey participation completely. It is difficult to collect this information, as it would involve interviewing non-respondents or observing the reasons behind their survey behaviour. Most important are events and processes occurring during the running fieldwork period – for example, time-varying opportunities of invitees to take part in the survey or changes in their attitudes towards the survey and their evaluation of the costs and benefits of participation. Finally, answers to the third question about the practical implications of procedures in the fieldwork are found. Prepaid incentives or follow-up reminders, to give an example, are helpful in significantly boosting the response rate and the speed of return. It is also found that the effect of such strategies fades over time.

As a by-product of these analyses, it became evident that it is not empirically useful to categorize different types of ‘early’ or ‘late’ respondents. First, individuals’ survey response is a stochastic event that results in different tempi and in different social structures of response across panel waves. Second, there is a change in the sample, in terms of size and social structure; therefore, the arbitrary definition of such categories is not useful (Gummer & Struminskaya, 2020). Third, the theoretical surplus value of such a category scheme is unclear. Finally, for survey methodology, it is of interest how we can realize a survey in an efficient and less selective way. Categorizing types of interviewees or controlling for their psychological traits does not help us to understand the time-varying decision to participate or the outcome in a survey or among surveys in the context of a panel study (Groves et al., 1992). To keep the fieldwork period rather short, and thus to minimize the costs and reduce the project budget, it might be more important to reveal which treatments – such as incentives, reminders or other events happening at different points in time during the fieldwork – are significant for the timing of survey participation. Which of these increase the participation rate in a mixed-mode probability-based panel survey? For example, the significant impact of prepaid incentives and digital reminders on panelists’ timing of their response in different waves was revealed by non-parametric and parametric procedures based on adequate techniques of event history analysis. In sum, this contribution also calls for dynamic longitudinal analyses of response behaviour, instead of a rather useless classification of response groups in the manner of a comparative-static approach.

Taking a dynamic view in a longitudinal design, evidence-based practical implications become empirically clearer than in a comparative-static design. Evidence-based advice regarding the length of the fieldwork period until the cutoff of the data collection and other dimensions of survey management is provided by such data and statistical analysis (Chebat & Cohen, 1993). Because the timing of survey participation ‘has cost implications, slow response tends to increase the extensiveness of follow-up efforts and therefore the cost of study’ (Houston & Ford, 1976, p. 397). Thus, it is clear that the timing of survey participation is a function of the time that elapses after mailing the invitation letter, which is tailored to the target persons and encloses an incentive, and the reminders. In addition, we now know when panelists endowed with different resources and abilities start responding to the invitation to take part in a survey. Furthermore, time- and event-related analysis shows why a mixed-mode design is a rational approach when dealing with procrastinating target persons, to motivate them to respond.

With the help of in-depth knowledge on such stochastic processes, it might be possible to plan and manage fieldwork in a rational way, as opposed to applying an apparently plausible patchwork, such as is often observed in survey methodology. What remains a problem is that we still do not know how, why and when special arrangements work in the field. However, possession of this evidence-based knowledge might save time and costs for researchers, as well as for the target persons of their research (Houston & Ford, 1976). It would also prevent a hasty shortening of the fieldwork period, since this would increase the negative consequences in terms of the socially selective response rate and the selectivity of surviving panel samples. However, based on such longitudinal designs and dynamic analysis, we would be able to shorten the fieldwork period without adversely affecting the quality of the data produced (Sigman et al., 2014). This would be enabled by direct testing of theories seeking to explain survey participation and its timing.

However, there are limitations to our arguments. First, as none of the theories on survey participation have really been tested empirically, it is difficult to realize the ideas discussed in this contribution. Collecting time-varying information on the processes and mechanisms behind target persons’ response behaviour is a huge challenge. In general, it would involve interviewing non-respondents. Second, the empirical demonstration involved a special population of youths in a single birth cohort (born around 1997) living in German-speaking cantons of Switzerland. Therefore, the empirical results may not be generally valid for other populations. However, this does not contradict the ‘philosophy’ of the call for dynamic longitudinal analysis of response behaviour in an event-oriented design, instead of cross-sectional analysis in a comparative-static design. The results regarding the effect of incentives and reminders are in favour of this argument since they are in line with the findings for other target populations. In sum, there is a need to replicate the analysis for other populations and different types of survey modes not considered in this contribution. Third, it is difficult to disentangle different causes of response speed and response rate. On the one hand, this is a theoretical and methodological problem. On the other hand, this leads to a need for different, well-constructed longitudinal designs in future, in order to reveal causal impacts on the timing of survey participation.

Footnotes

Acknowledgements

For helpful comments and discussions on earlier versions of this manuscript, I would like to thank Peter Blossfeld, Richard Nennstiel and Thorsten Schneider. The author is responsible for all remaining inadequacies.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication. The DAB panel study is substantially financed by the State Secretariat for Education, Research and Innovation (SERI). The interpretations and conclusions are those of the authors and do not necessarily represent the views of the SERI.

Data Availability Statement

The data for the first nine waves of the panel study are available as Scientific Use Files at FORS in Lausanne (DOI: https://doi.org/10.48573/dqgk-ja58) and can be found in the SWISSUbase online catalogue under the reference number 10773 (https://www.swissubase.ch/en/catalogue/studies/10773/latest/datasets/946/2519/overview). The Stata syntax used for this contribution is available from the author.