Abstract

Survey scientists increasingly face the problem of high-dimensionality in their research as digitization makes it much easier to construct high-dimensional (or “big”) data sets through tools such as online surveys and mobile applications. Machine learning methods are able to handle such data, and they have been successfully applied to solve predictive problems. However, in many situations, survey statisticians want to learn about causal relationships to draw conclusions and be able to transfer the findings of one survey to another. Standard machine learning methods provide biased estimates of such relationships. We introduce into survey statistics the double machine learning approach, which gives approximately unbiased estimators of parameters of interest, and show how it can be used to analyze survey nonresponse in a high-dimensional panel setting. The double machine learning approach here assumes unconfoundedness of variables as its identification strategy. In high-dimensional settings, where the number of potential confounders to include in the model is too large, the double machine learning approach secures valid inference by selecting the relevant confounding variables.

Introduction

A key attribute of “big data” is the large volume of data that is collected or generated, often for the purpose of statistical analysis (for further attributes see, for example, Japec et al., 2015). When a large number of observed characteristics are available for only a limited number of observations, however, the high-dimensionality of the data sets poses challenges. Moreover, big data comes in a variety of forms, including many sorts of paradata (Kreuter, 2013b) such as call records, time stamps, or device-type and questionnaire-navigation data from online surveys (Callegaro, 2013), as well as sensor data from mobile surveys (Struminskaya et al., 2020) and data from outside sources that can augment survey data and be linked to persons or population groups by unique personal or group identifiers. These outside data contain, for example, administrative records (cf. Durrant & Steele, 2009, for nonresponse analysis), data from social media (an extensive discussion on the role of social media in public opinion research can be found in Murphy et al., 2014) or regional information (e.g., Feddersen et al., 2016, study the impact of weather and climate on self-reported life satisfaction). Increasingly, the field of survey analysis is facing the challenges posed by high-dimensional data sets. Long-lasting panel surveys produce big data, for example, by collecting large numbers of variables over many panel waves. Some frequently used methods cannot be employed with big data sets that have comparatively few observations and numerous variables. To deal with problems of high-dimensionality, machine learning methods have found their way in survey research modeling (see, for example, Buskirk et al. (2018), Buskirk (2018), Kirchner and Signorino (2018), Eck (2018) and Kern et al. (2019a) for introductions of the use of machine learning techniques with survey methodological questions).

Generally speaking, there are two main kinds of statistical modeling: causal inference (also known as explanatory analysis) and predictive modeling. Both have their own model-building logic and evaluation tools (Breiman, 2001). As Shmueli (2010) states, high predictive power does not necessarily imply high explanatory power, so different tools should be used to explain and to predict. The aim of prediction models is to predict the dependent variable y for individuals who were not among those used to build the model. The best model is found, for example, by minimizing the out-of-sample mean squared error (MSE). Modern machine learning methods have been highly successful at building predictive models. In contrast to predictive modeling, causal inference entails learning the effect of a particular variable on the dependent variable y while holding all other variables constant. Being able to draw ceteris paribus conclusions in this manner, researchers can think about interventions (i.e., changing x will affect y in a known way) and use this to design future studies. Applying modern machine learning methods to gain explanatory insights, however, is more challenging than building predictive models because machine learning methods inevitably introduce some bias in the estimation (Belloni et al., 2014a) to avoid overfitting. In recent years, progress has been made in applying machine learning to causal inference, and tools for doing so, such as the double machine learning framework, have been developed. The identification strategy employed by the double machine learning approach here relies on the so-called unconfoundedness assumption or exogeneity assumption which is widely adopted in social sciences (Imbens & Rubin, 2015) and implicitly assumed when performing standard regression analysis. In this paper, we demonstrate how survey statistics can benefit from these methods. We provide insights into dealing with high-dimensional survey data sets by applying the double machine learning method to learn about nonresponse in the recruitment of the GESIS panel, a probability-based, mixed-mode online and postal mail panel conducted bimonthly by GESIS—Leibniz Institute for the Social Sciences in Germany.

Survey nonresponse is arguably one of the chief problems in survey research (Kreuter, 2013a) and many decades of study have been invested in developing methods to explain and thereby prevent or adjust for it (for recent examples, see Durrant & Steele, 2009; Roßmann & Gummer, 2016). With the rise of big data and the increasing number of variables being considered, one of the more recent methods is machine learning. Multiple studies have demonstrated its usefulness in this context: For example, Kern et al. (2019a) show that regression trees can effectively be used to predict nonresponse in the German Socio-Economic Panel; Phipps and Thoth (2012) use trees to analyze nonresponse in an establishment panel and Buskirk and Kolenikov (2015) use random forest classification models and random forest relative class frequency models to predict response propensities in a simulation study. Other examples are Signorini and Kirchner (2018), who employ adaptive lasso to predict nonresponse in the National Health Interview Survey; Earp et al. (2014), who use an ensemble of classification trees to predict nonresponse in an establishment survey’s subsequent wave; Kern et al., 2021, who apply different machine learning methods to predict nonresponse using information from multiple waves of the GESIS panel; and Zinn and Gnambs (2020), who use Bayesian additive regression trees to predict temporary and permanent dropout in an event history analysis in the German National Educational Panel Study. Finally, Liu (2020) compares the use of random forests, support vector machines and lasso regression to predict response in the second interview of the Surveys of Consumers national telephone survey.

As mentioned above, one must be careful when the results produced by machine learning algorithms are interpreted beyond predictions. While nonresponse prediction can be seen as a goal in its own right, one must be clear about its limitations: the effects of the variables cannot be interpreted because machine learning algorithms—when applied directly—inevitably introduce bias, and thus no understanding of any causal effects of explanatory variables on the dependent variable of interest can be gained. Nonresponse prediction models help to identify individuals who are most likely to drop out but do not allow us to understand the driving factors, which are, however, key to identifying and developing prevention strategies (Lynn, 2017).

In this paper, we use machine learning methods not only to predict nonresponse, but to analyze explanatory factors in a high-dimensional setting for survey statistics. Recently, double machine learning techniques to deal with high dimensions and to deliver unbiased estimates have been developed (cf. Belloni et al., 2017; Chernozhukov et al., 2015; Chernozhukov et al., 2018). We give an introduction to the double machine learning approach and show how double lasso can be applied to explain nonresponse in the welcome survey of the GESIS panel. Our findings can help survey researchers who design and implement panel surveys to develop targeted strategies to prevent nonresponse.

The rest of the paper is structured as follows: In the (Double) Machine Learning section, we introduce the basic principles of double machine learning, focusing on double selection for logistic regression models. In the Application: Nonresponse Modeling for the GESIS Panel section, we describe an application for nonresponse modeling in the GESIS panel. We conclude with a discussion in the Discussion and Conclusion section.

(Double) Machine Learning

The term machine learning covers lots of data analysis techniques, for example, regression analysis methods and variants that are frequently used in social sciences. When working with high-dimensional data, standard regression analysis using ordinary least squares (OLS) estimation is often not appropriate because it can only include a limited number of variables. In addition, including many covariates bears the risk of overfitting by including irrelevant variables that model the random noise in the existing data. This leads to biased estimates of the coefficients of the variables of interest and poor predictive performance when applying the model to a new data set. To avoid the problem of overfitting, machine learning procedures have been developed. One prominent method is the lasso that performs model selection. While the lasso delivers great predictive performance, the resulting regression model cannot be interpreted because lasso introduces a regularization bias which is inevitable to avoid overfitting. The lasso can fail to select confounding variables that are strongly correlated with the variables of interest but only weakly correlated with the dependent variable. While these confounders do not harm the predictive performance of the lasso, they introduce omitted variable bias (Belloni et al., 2014b), which biases the inference results. The intuition is that the effect of the omitted variable/the not selected confounders is taken up by the coefficient of the target variable we are interested in because they are strongly correlated. The problem of omitted confounders is inherent to all machine learning methods and leads to biased estimates of parameters and relationships and hence invalid post-selection inference, despite their predictive power (Belloni et al., 2014a).

Often the machine learning algorithm is considered to be a black box that delivers acceptable prediction accuracy but in which the relationship between the variables is not understood. In many situations, however, scientists and practitioners are interested in learning the effect of certain variables, often called treatment variables, on one or more dependent variables, holding all other factors constant. This is more challenging than building a predictive model because here the black box must be opened and the inner mechanism learned.

The double machine learning framework, which we present in more detail in the following section, allows for such valid post-selection inference and hence learning about parameters and explanatory variables in a high-dimensional setting. The key idea is that double machine learning techniques make sure that all relevant confounders are included in the model and hence the parameter of interest can be estimated without the problem of omitted variable bias due to unobserved confounders. The key assumption behind double machine learning is that the model is selected in a way to include all necessary confounders. We will explain how this is done in more detail in the next section.

Basic Setting and Idea behind Double Machine Learning

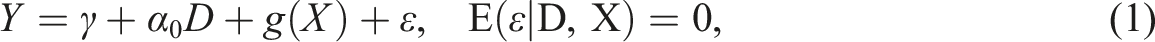

In this section, we introduce the basic ideas behind double machine learning. The goal is to estimate the treatment effect α0 of a treatment variable D on the dependent variable Y in a high-dimensional setting, namely

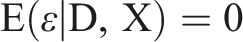

The unconfoundedness assumption or exogeneity assumption is given by

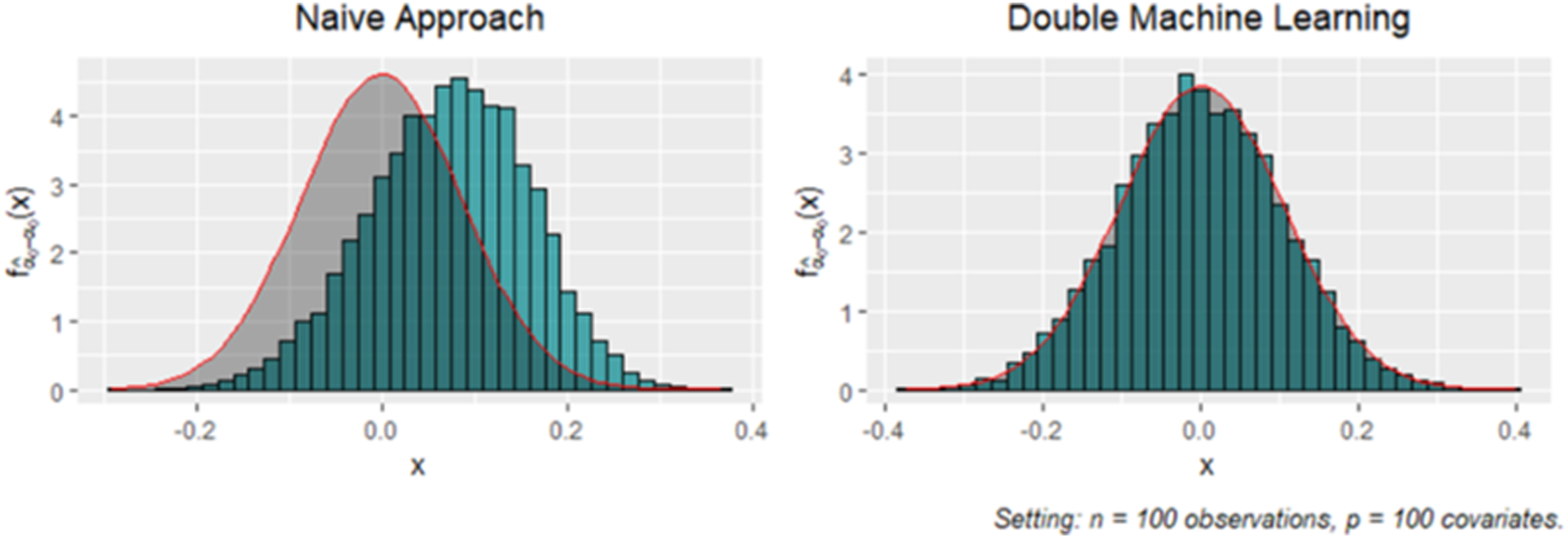

We demonstrate the different properties of the estimates received from the naive approach and the double machine learning approach by a simple simulation study of the model above with n = 100 observations and p = 100 covariates. Figure 1 shows the empirical distribution of the estimation errors Distribution of

In the following section, we show how double machine learning can be used in the context of the logistic regression model. We use this approach in our application.

Double Selection for Logistic Models

In many survey applications, the dependent outcome variable is binary, and for binary outcome variables, logistic regression is often the approach of choice. For logistic regression, the same arguments as outlined above apply when modern machine learning methods such as lasso are used to select variables and estimate the coefficients in high-dimensional linear regression models. To enable valid post-selection inference for the logistic regression, the double machine learning approach has to be modified appropriately (cf. Belloni et al., 2013).

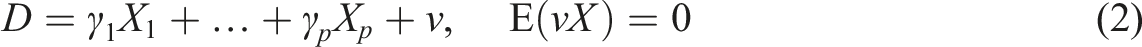

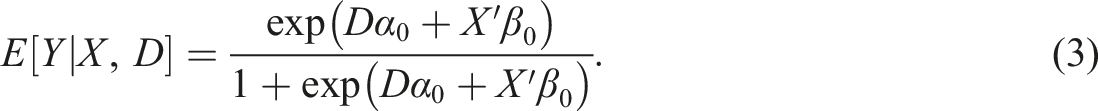

In the logistic regression, a binary dependent variable Y relates to a scalar treatment D of interest and a p-dimensional control X through a link function G

For logistic regression, the link function is given by G(t) = exp(t)/{1 + exp(t)}. We aim to perform statistical inference on the coefficient α0, which represents the impact of the treatment on the dependent variable through the link function. Estimation is usually based on the (negative) log-likelihood function associated with the logistic link function, as follows

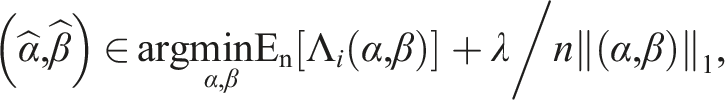

For estimation in a high-dimensional setting, an ℓ1-penalty term,

Application: Nonresponse Modeling for the GESIS Panel

To illustrate the double machine learning lasso, we apply the technique to model nonresponse in the 2013 recruitment to the GESIS panel.

Nonresponse in Panel Recruitment

Recruitment to a probability-based panel is arguably the most important and most expensive part of the panel life-cycle. The recruited sample needs to represent the target population and the sample size needs to be large enough to obtain precise estimates. The recruitment process usually includes several steps: contacting sampled cases and inviting them to a first recruitment survey, conducting this recruitment interview and, often during it, obtaining consent to proceed in the panel. Consenting respondents are then invited to a welcome survey (or profile survey), and those who complete it are considered to be panel members. The panel members are then surveyed on a regular basis.

Even if the regular panel waves are conducted in a self-administered mode (e.g., by mail questionnaire and/or online), it is common to approach sampled persons and conduct the recruitment interview in an interviewer-administered (face-to-face or telephone) mode (Blom et al., 2016). Respondents to the recruitment survey are then asked to proceed with the subsequent survey using cost-saving self-administered modes. This, however, includes a switch in response mode that may be subject to systematic nonresponse.

For our application, we choose nonresponse in the first interview after this switch of modes. We consider this stage to be very important for several reasons: First, this is when a large number of respondents to the recruitment survey are usually lost (for nonresponse rates in four large-scale scientific (mixed-mode) online surveys, see Blom et al. (2016)), and there is a need to understand nonresponse in order to prevent it, that is, by tackling likely nonresponse through targeted invitations (Lynn, 2020). Second, nonresponse among respondents to the face-to-face interview is costly if we consider that they have completed the cost- and labour-intensive personal interview but are no longer available to take part in the less expensive self-administered part of the panel. In addition, refreshment samples are usually planned for panels once the number of respondents has fallen below a certain minimum number. Starting with a smaller sample means that costly new recruitment is needed sooner. Third, nonresponse can introduce bias to the panel. If the respondents are not lost at random, analyses of panel data can be severely biased.

While a number of studies have been published on modeling panel attrition, for example, nonresponse to individual panel waves or dropout from the panel, the literature about correlates of nonresponse at the recruitment stages is surprisingly scarce. Comparing survey responses to official benchmarks, Sakshaug et al. (2020) analyze total recruitment error, which they define as error from initial nonresponse plus error from non-consent to be contacted again. In their comparison of a self-administered (mail/web) and CAPI recruitment, they find, for both modes, nonresponse bias to be larger than non-consent bias and total recruitment bias to be similar in both groups: both recruited samples overrepresent older and more educated population groups, currently employed persons, and higher-wage groups. They underrepresent foreign-born persons. For the GESIS recruitment panel which we also use in the present study, Bosnjak et al. (2018) compare socio-demographics of respondents of the different recruitment stages to benchmarks from the German Microcensus. They find age, citizenship, marital status, household size, place of birth, education, and household income to be distributed differently among the sample of respondents compared to the general population. The differences tend to be larger for the welcome survey than for the recruitment survey. While univariate benchmark comparisons are very useful to get an impression of bias in sample composition, they do not inform about the effect of the interplay between the respondents’ characteristics on the response decision.

Models for nonresponse in the initial recruitment survey are usually limited to only a few variables from the sample frame. The recruitment interview, however, usually generates a lot of information on the respondent that can be used to study nonresponse in the welcome survey: In addition to basic sociodemographic information, it usually also includes information on attitudes and survey experience. In interviewer-administered surveys, the interviewers often provide information about the interview situation and their expectations of the respondents’ future participation in the panel. In particular, interviewers’ ratings of a respondent’s propensity to participate in a future survey, as well as ratings of cooperativeness and enjoyment, have been found to improve nonresponse models (see, for example, Plewis et al., 2017; Sinibaldi & Eckman, 2015). Understanding the nonresponse process better can help to identify measures to address the problem, for example, through targeted invitations (Lynn, 2020).

While having a rich set of factors that potentially influence nonresponse is very helpful to understanding the nonresponse decision, it poses a challenge to nonresponse modeling. Indeed, including a large number of variables, possibly split into multiple dummy variables, and interactions requires big data solutions.

Data and Methods

The GESIS Panel Data

The GESIS panel (Bosnjak et al., 2018) is a probability-based, mixed-mode online and postal mail panel conducted bimonthly by GESIS—Leibniz Institute for the Social Sciences in Mannheim, Germany. The first cohort of the GESIS panel was recruited in 2013 and refreshment samples were recruited in 2016 and 2018. Recruitment to the GESIS panel in 2013 was based on a random sample of 21, 870 German-speaking residents of Germany aged 18 to 70 during the year of recruitment. In the first step, all sampled cases were invited to participate in a face-to-face recruitment survey. During this survey, respondents were asked for their consent to be invited to the GESIS panel by means of the online or the paper-and-pencil mode. Consenting respondents were then invited to participate in the welcome survey in the mode of their choice. Only after completing the welcome survey were respondents considered to be GESIS panel members.

In our study, we analyze nonresponse in the 2013 welcome survey among consenting respondents. We use data from the GESIS panel registration survey in 2013 (GESIS, 2020) to model nonresponse (or drop-out) (yes/no) in the subsequent welcome survey. In total, 7, 599 persons participated in the face-to-face registration survey, of whom 6, 210 agreed to being invited to the welcome survey and participating in the GESIS panel. Of these individuals, 4, 938 responded to the welcome survey and thus became regular panel members (dropout rate: 20.5%).

Nonresponse Model

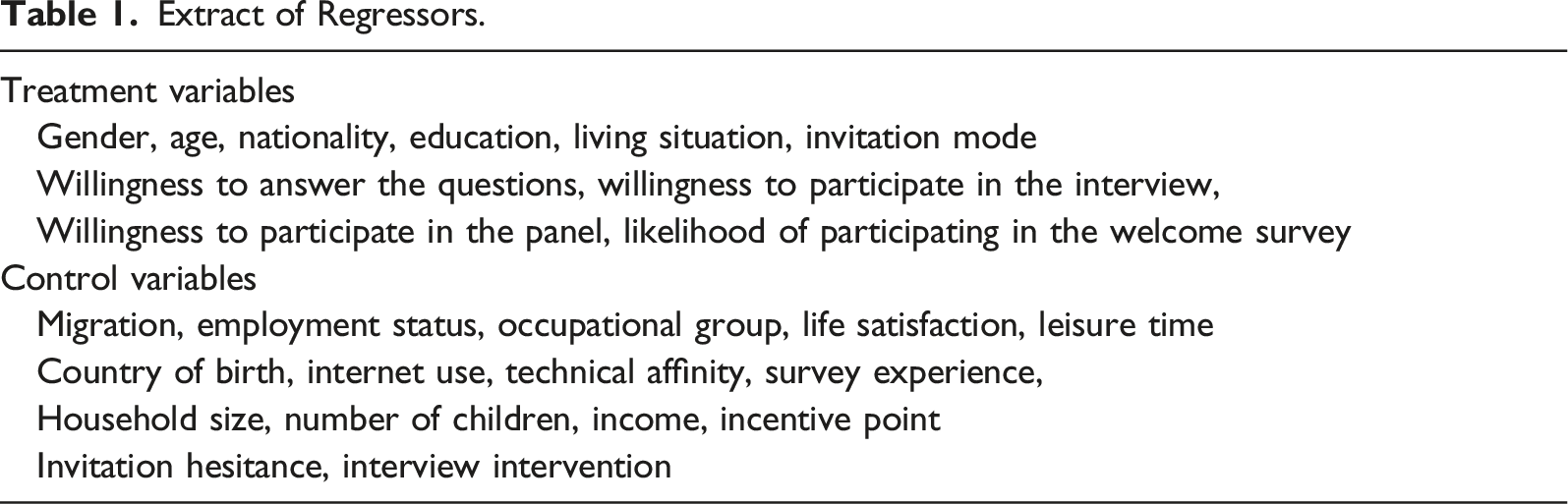

For our final sample, we drop 302 observations with missing information, leaving us with 5, 908 respondents from the registration survey, of whom 4, 720 completed the welcome survey (dropout rate: 20.1%). In our analysis, we use 63 initial regressors representing information collected in the recruitment interview. This includes socio-demographic characteristics of the individuals and their cooperativeness throughout the interview. The variables we include in the analysis are listed in Table 1. We transform categorical variables into level-wise dummies and add interaction terms of the regressors. This ultimately leads to a high-dimensional logit model with a total of 329 regressors: • How would you rate the respondent’s willingness to answer the questions? (answer categories: good, moderate, low, good in the beginning but got worse, low in the beginning but got better) • How difficult or easy was it to persuade the respondent to take part in the interview? (answer categories: very difficult, rather difficult, rather easy, very easy) • How difficult or easy was it to persuade the respondent to take part in the follow-up interview? (answer categories: very difficult, rather difficult, rather easy, very easy) • How likely is it that the respondent will take part in the first online- or paper questionnaire? (answer categories: very likely, rather likely, rather unlikely, very unlikely) Extract of Regressors.

Sparse categories are combined with other categories for our analysis. We recode the answer categories into good vs. bad/all other categories for “willingness to answer the questions” and combine very difficult and difficult for the two questions on the difficulty of persuading respondents to take part in the interview and follow-up interview. For the rating of the likelihood of response to the first online or paper questionnaire, we combine rather unlikely and very unlikely. With regard to sociodemographics, we include age, gender, highest educational degree, country of birth, and living situation. We generate the living situation variable from information on marital status, partnership and living in a shared household leading to the five categories: no partner; partner, not in household; partner, in household; married, living together; married, living apart. An overview of the coding for all treatment variables can be found in Table 2 in the Appendix. We include interactions of the choice of mode for the welcome survey with age, education and living situation to account for differential effects of the choice of mode on nonresponse.

Model Training

We apply the double machine learning approach introduced in Double Selection for Logistic Models for our logit model (3). The model training includes the three main steps described in Appendix B. The estimation is performed in R using the function rlassologitEffects(x,y,index), which is provided by the R-package hdm (Chernozhukov et al., 2016). The input parameter x is the matrix of our 329 regressors serving as controls and treatments. The input parameter y is our outcome variable nonresponse. The input parameter index indicates the position of variables of x which we use as treatment variables (cf. Table 1). The regression functions are estimated via post-lasso with default penalty levels

For refined models using the double machine learning approach, we refer to the Python and R package DoubleML (Bach et al., 2021, 2022). This package provides a general implementation of the double/debiased machine learning framework and makes it possible to base inference on a large collection of classification algorithms including non-linear methods for the nuisance parameter estimation in the auxiliary regression, for example, random forests and gradient boosting. A detailed user guide for readers interested in applying the double machine learning approach can be found at https://docs.doubleml.org/stable/index.html.

Results

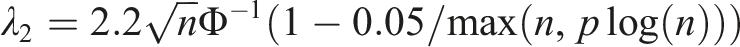

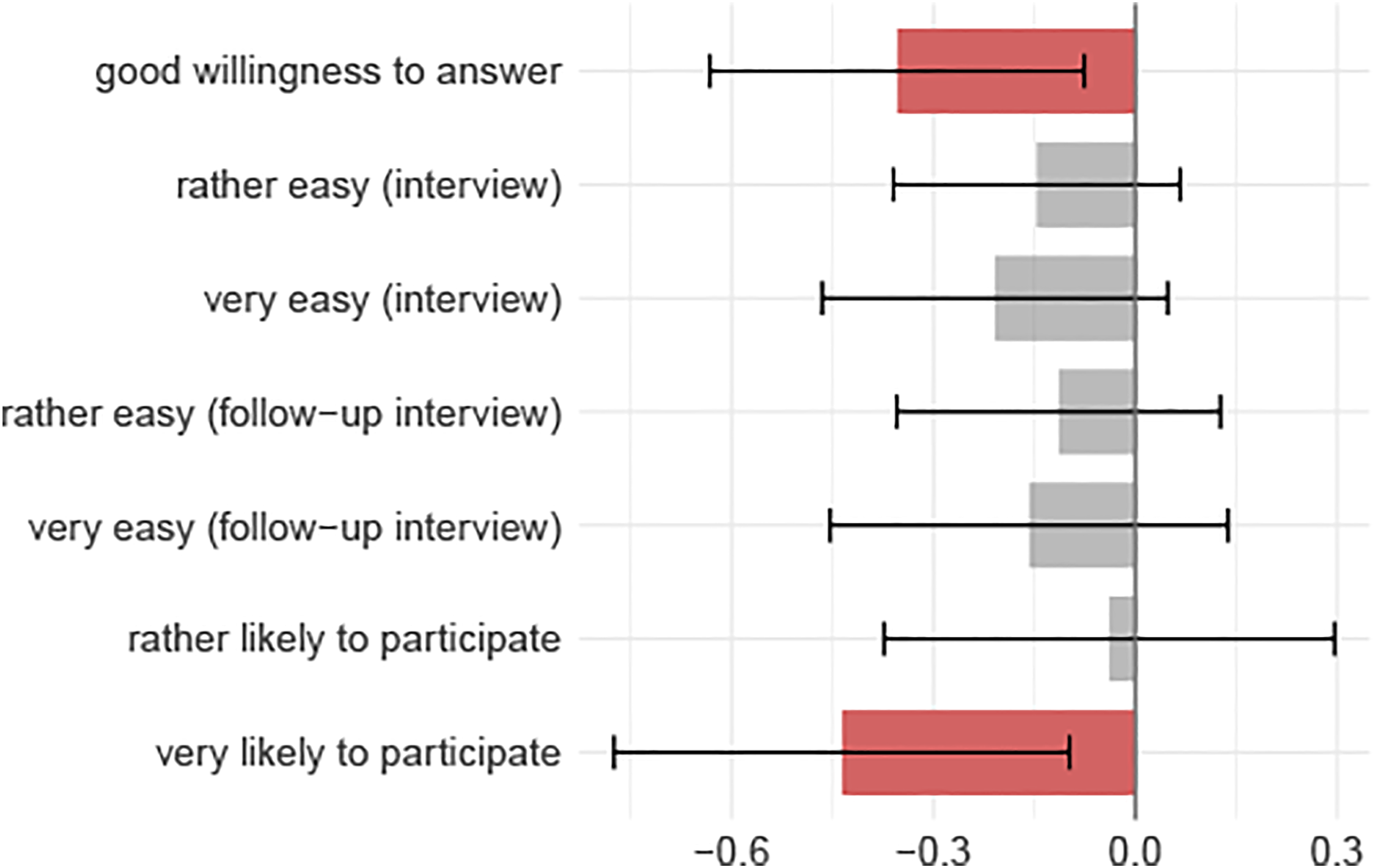

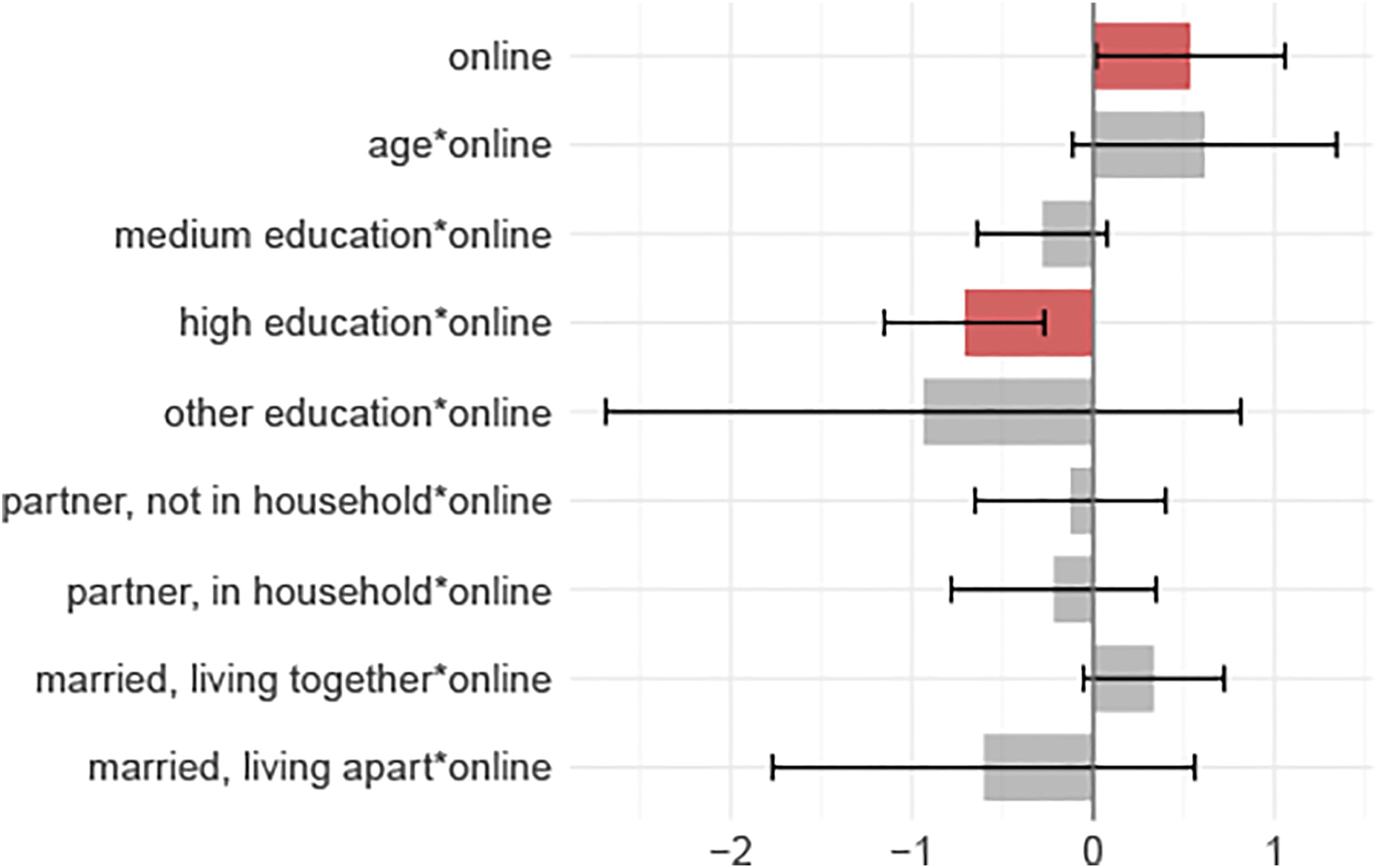

In this section, we present the results of our double machine learning approach to the inferential analysis of nonresponse in the GESIS panel. The results of the double lasso for logistic regression are visualized in Figures 2–4, and a regression table can be found in Table 3 in the Appendix. We start with the interpretation of the interviewer ratings. The estimated coefficients of the interviewer ratings from the logistic regression together with the corresponding confidence intervals are displayed in Figure 2. Regression coefficients of the interviewer ratings in the logistic regression model. Regression coefficients of the socio-demographic characteristics in the logistic regression model. Regression coefficients of the chosen survey mode in the logistic regression model.

Cooperativeness

We find that the interviewer observation of respondents’ willingness to answer the survey questions in the recruitment survey had a highly significant negative effect on survey nonresponse. Respondents who were rated as having good willingness to respond to the recruitment survey dropped out of the survey after the recruitment stage to a lesser extent than respondents who were rated as having low willingness. We do not find significant effects for the ease of persuading respondents to participate in the interview nor for the ease of persuading respondents to consent to be contacted again for the follow-up interview. The effects however tend in the same direction as the observed willingness to answer the questions: respondents who were rated as being rather easy or very easy to persuade were less likely to drop-out.

Rated likelihood of participation

Respondents who were rated as being rather or very likely to participate in the welcome survey dropped out after the recruitment survey to a lesser extent than did those who were rated as being rather unlikely or very unlikely to participate. We, however, find that the only significant effect in this regard is for “very likely” category.

Socio-demographics and chosen survey mode

Next, we discuss the effects of socio-demographics and chosen survey mode for the welcome survey. The results are found in Figures 3 and 4.

We do not find a significant effect for respondents’ gender but do find a positive effect for having German citizenship: respondents with German citizenship dropped out after the recruitment survey at a higher rate than respondents without German citizenship.

We find that survey mode interacts with age, education (though only significantly with high education) and living situation (only being significant at the 10% level for “married, living together”). We interpret the effects of all variables that show a significant interaction with chosen survey mode. The online mode variable has a positive coefficient and is positively interacted with age, which itself has a negative coefficient. For both survey modes, we find that the older the respondents are, the lower is their likelihood to drop out after the recruitment survey. The effect is much stronger for respondents who chose the paper-and-pencil mode (−2.126) than those who chose the online mode (−1.511). Having medium, high or other education is negatively interacted with online survey mode. Medium and other education both have negative main effects and negative (though not significant) interactions with online mode. Drop-out was lower for these two groups than for respondents with low education for both survey modes and the decreasing effect is less pronounced for respondents who chose the paper-and-pencil mode than it is for those who chose the online mode. For high education, we find a positive effect on drop-out for respondents who chose the paper-and-pencil questionnaire (0.081); this turns into a negative effect for highly educated respondents who chose the online mode (−0.630). We find positive but not significant effects for the living situations “not married with partner, separate households,” “not married with partner, joint household” and “married, living apart” and negative interactions with online mode for these categories. This means that, compared to respondents who were not married and did not have a partner, the risk of drop-out was higher for respondents who chose the paper-and-pencil mode but lower for those who chose the online mode. Compared to respondents who were not married and did not have a partner, respondents who were married and lived together with their spouse showed a significant reduction in drop-out after the recruitment interview that was stronger if they chose the paper-and-pencil questionnaire (−0.634) than if they chose the online mode (−0.300).

Discussion and Conclusion

In this study, we analyze nonresponse in the welcome survey of the probability-based mixed-mode GESIS panel. Losing respondents after the face-to-face recruitment interview is not only very costly but can, through selective nonresponse, put the validity of panel inference at risk. Thus, the goal of panel recruitment should be to prevent panel drop-out among population groups that are found to be unlikely to become panel members. Knowing which population groups are likely to drop-out can help in the identification and development of targeted strategies for these groups (Lynn, 2017).

Using double machine learning for logistic regression, we are able to provide valid confidence intervals for the regression coefficients of interest and are thus able to discover significant variables that affect the likelihood to drop out. Bosnjak et al. (2018) find that the GESIS panel shows composition bias for several socio-demographic variables. We go beyond this analysis and show that these variables explain nonresponse even after controlling for several other characteristics. Furthermore, the effects of age, education, and family status are moderated by the choice of the paper-and-pencil or online survey modes. Knowing this, it might be worthwhile to develop targeted interventions that increase response depending on the mode the respondents choose. For example, older respondents who choose the paper-and-pencil mode are less likely to need an intervention than those who choose the online mode. Our findings, however, are not generalizable to countries with strongly different degrees of digitalization and different digital-divide than Germany. For the face-to-face recruited respondents of the German Internet Panel, Herzing and Blom (2019) show that age and education are associated with Internet use. If the Internet penetration within population subgroups strongly differs from Germany (e.g., only young individuals are able to respond online), the mode choices will be different than in Germany and thus our findings concerning the interactions of mode-choice and socio-demographic characteristics are not transferable.

Our study supports the findings from previous studies that interviewer ratings on the likelihood to participate in a subsequent survey are associated with respondents’ actual participation. Also, an observed good willingness to answer the questions of the survey just completed is positively associated with the likelihood to respond to a subsequent survey. While this is strong support for the usefulness of collecting such ratings in a panel survey, it is not clear, however, how to best ask interviewers to provide these. For the observed likelihood to participate in a subsequent survey, previous studies have used different scales (from 1 to 100 in Sinibaldi and Eckman (2015), from 1 to 5 in Plewis et al. (2017) and from 1 to 4 in the present study) and more research is needed on comparing these in their ability to classify respondents and explain nonresponse in a subsequent panel wave. Collecting the observed willingness to participate in follow-up surveys is only useful in a panel context. Whether collecting the observed willingness to answer the questions of the just completed questionnaire has any use beyond predicting future nonresponse, for example, in assessing response quality in a cross-sectional survey, is beyond the scope of this study.

The findings presented in this study are also limited to the nonresponse process in the face-to-face recruitment to a (mixed-mode) online panel. More research is needed on the explanation of nonresponse in different recruitment strategies. For example, recruiting respondents by mail and subsequently switching them online (see, for example, Cornesse et al., 2021) might be prone to very different nonresponse mechanisms. Furthermore, the mechanisms of nonresponse in the recruitment step are likely to differ from the mechanisms of nonresponse in subsequent waves. For example, comparing the sample composition of a panel study of employees in Germany to official benchmarks, Sakshaug and Huber (2016) find that nonresponse bias increases over time. However, the largest wave-to-wave increase in nonresponse bias occurred after the initial wave and the wave-to-wave increases get smaller over time. Future research should focus on understanding differences between nonresponse in recruitment and subsequent waves to learn more about respondents’ decision-making and find optimal ways of maintaining high motivation throughout the panel life-cycle.

The logistic regression model we consider in this paper is only one exemplary setting where double machine learning can be used for valid inference in high dimensions. The double machine learning approach can also be combined with nonlinear regression methods, like random forests, boosted trees, and neural nets, for both continuous and categorical dependent variables (Chernozhukov et al., 2018). Nevertheless, the double machine learning strategy has some limitations. First, like many statistical methods, the double machine learning strategy can only be applied to data sets that contain no missing values and cannot correct for possible measurement error in the observed variables. Second, and most crucial, it strictly relies on the unconfoundedness assumption, that is, the assumption that all relevant confounders are observed. As this assumption is not always plausible, the double machine learning approach has been adapted for the case of unobserved confounders using instrumental variables (IV) methods, see Belloni et al. (2012). In this context, Kueck et al., 2022 provide results for valid inference in an instrumental variable model when L2-Boosting is used for variable selection. Further adaptations of the double machine learning approach have been developed, for example, for causal mediation analysis (Farbmacher et al., 2022) or dynamic treatment effects estimation (Bodory et al., 2020).

The double machine learning approach can be successfully applied in all kinds of settings where researchers are interested in explaining the effects of treatment variables while controlling for a high number of covariates. Recently, many studies have been published which apply the double machine learning technique in economics, for example, to analyze gender differences in wage expectations (Bach et al., 2018a; Fernandes et al., 2021; Wunsch & Strittmatter, 2021) or to estimate the effect of policies/programs (Denisova-Schmidt et al., 2021; Goller et al., 2021; Huber et al., 2021; Knaus, 2021; Knaus et al., 2020).

In survey methodology, we intend to analyze how double machine learning might be used to select and include high numbers of control variables in imputation or weighting models. An application from political science could be the study of voting behavior on the neighborhood level, including detailed information about the socio-demographic structure, economic situation, and welfare. Another interesting research topic could be the identification of the parental effect on educational attainment during the COVID-19 pandemic, controlling for measures of children’s online behavior like social media usage. Given that social scientists are increasingly confronted with many types of big data, such as digital behavioral data and geo-spatial information of all kinds, the applications for which social scientists might benefit from the double machine learning technique are numerous.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Open access was funded by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) – Project number 491156185. Kück and Spindler acknowledge (Bach et al., 2022) financial support by the Deutsche Forschungsgemeinschaft – Project number 431701914.

Author Biographies

Data and Empirical Results.

Double Machine Learning for Logistic Regression.

The double machine learning approach for logistic regression includes three main steps: 1. initial estimation of the regression function via post-lasso logistic regression, 2. estimation of instruments that are orthogonal to the weighted controls via weighted post-selection least squares, and 3. estimation of α0 based on the nuisance estimates obtained in (1) and (2).

The estimation procedure for α0 is summarized in more detail in the following algorithm:

Algorithm 1. DML for Logistic Regression 1: Run a post-lasso-logistic regression of Yi on Di and Xi where Λi (α, β) is the (negative) log-likelihood function associated with the logistic link function. For i = 1, …, n, keep the value with the logistic link function G(t) = exp(t)/{1 + exp(t)} 2: Run a post-lasso OLS regression of

Keep the residual 3: Run an instrumental logistic regression of where and