Abstract

The day of the week on which sample members are invited to participate in a web survey might influence their propensity to respond, or to respond promptly (within two days of the invitation). This effect could differ between sample members with different characteristics. We explore such effects using a large-scale experiment implemented on the Understanding Society Innovation Panel, in which some sample members received an invitation on a Monday and others on a Friday. Specifically, we test whether any effect of invitation day is moderated by economic activity status (which may shape how time is organised across the days of the week), by previous participation in the panel, or by whether the invitation was sent by post only or by post and email simultaneously. Overall, we do not find any effect of invitation day on survey participation or on prompt participation. However, sample members who had provided an email address, and were thus contacted by email in addition to postal letter, are less likely to participate if invited on a Friday (email reminders on Sunday and Tuesday) than on a Monday (email reminders on Wednesday and Friday). Given that no difference between the two protocols is found for prompt response, the effect seems to be due to the day on which reminders were sent. With respect to economic activity status, sample members without a job and retired sample members are less likely to participate when invited on a Friday; for prompt participation, this result holds only for retired sample members. Also, sample members who work long hours are less likely to participate when invited on a Friday; however, no effect is found for prompt response.

Introduction

Survey researchers using online data collection methods continue to invest in efforts to identify ways of improving response rates (Daikeler et al., 2022; Jäckle et al., 2017). Response speed also matters, particularly for surveys that use methods other than email to send reminders or to seek participation from initial nonrespondents (such as push-to-web mixed-mode surveys), because slow response incurs additional costs (Carpenter & Burton, 2018). For example, if sample members do not participate by a certain date, mail reminders may be sent to slow respondents, incurring additional postage and printing costs; furthermore, in sequential mixed-mode designs with web as the first mode, sample members who do not participate by the end of the web fieldwork period are followed up in other modes, typically interviewer-administered ones (face-to-face or telephone interviewing), which increases overall survey costs.

In this context, the tools used to improve web response rates and response speed have become more sophisticated; in particular, various types of adaptive design have been introduced (Biffignandi & Bethlehem, 2021; Schouten et al., 2017). Researchers no longer focus on the average effect of survey design features but are instead interested in their effect on subgroups of particular interest, namely those with otherwise low response rates or a propensity for slow response. This reflects a recognition that both the outcomes of interest (response rate, response speed) and the effectiveness of the design features that influence them may vary substantially across sample subgroups. In other words, adaptive or targeted designs may be more effective than uniform designs if design features are more effective for specific sample subgroups. Panel surveys provide a particularly rich environment for this type of design, as the wealth of prior information available can be used to identify subgroups with (likely) variation in the outcomes of interest and to inform the choice of design features that might improve those outcomes (Lynn, 2017).

The design feature of interest in this article is the day of the week on which an invitation to participate is mailed (i.e. day of mailing). We study this feature in the context of a web-first mixed-mode panel survey, where slow response leads to considerable cost because the follow-up data collection mode is face-to-face. Specifically, we focus on the interaction between day of mailing and two survey participation characteristics of sample members: prior participation in the panel and previous provision of an email address. The former relates to sample members' co-operativeness, while the latter allows invitations and reminders to be sent by email, which affects the frequency and volume of reminders that can be sent and could therefore interact with mailing day. We test whether, and to what extent, the interaction between day of mailing and previous co-operation or previous provision of an email address is associated with survey participation and prompt participation, because adaptive (or targeted) designs may be more effective than uniform designs if a design feature (here, day of mailing) interacts with sample member characteristics (here, prior participation or previous provision of an email address).

We also examine the interaction between day of mailing and a socio-demographic characteristic of sample members, namely economic activity status. We hypothesise that the association between mailing day and the outcomes might vary with economic activity status, because this characteristic is expected to be associated with the availability of leisure time at weekends. As above, our motivation for testing this interaction is that the effect of mailing day on participation and prompt participation may vary across sample subgroups, in which case survey designs should be tailored to those subgroups.

Literature Review

Web surveys usually achieve lower response rates than surveys administered in other modes (Lozar Manfreda et al., 2008; Daikeler et al., 2020; for a comparison of web and mail surveys, see Shih & Fan, 2008). The survey methodological literature has investigated the effectiveness of different strategies to boost web response, including variations in the format, content and length of survey invitations (Fan & Yan, 2010; Kaplowitz et al., 2012; Mavletova et al., 2014; Petrovčič et al., 2016). By contrast, research on the effect of invitation timing (time of day or day of the week) on response rate and response speed is limited, and the evidence is mixed, as documented below. Indeed, while for interviewer-administered surveys the best times for contacting respondents seem to be weekday afternoons (for face-to-face interviews), evenings (for telephone interviews) and weekends, no clear guidance exists for timing the invitation to a web survey (Callegaro et al., 2015).

As noted by Andreasson et al. (2017), on the one hand, defining optimal invitation timing in web surveys seems crucial, as receiving an invitation at an inconvenient time may lead sample members to ignore the survey request and eventually forgo participation altogether; on the other hand, invitation timing seems less relevant in web surveys than in interviewer-administered modes such as telephone and face-to-face surveys. In web surveys, researchers control only when the invitation is dispatched (by email or postal letter); they cannot fully control when the invitation reaches the sample member (e.g. when the postal letter arrives), nor when the sample member reads it. Also, in web surveys, sample members do not need to be immediately available to participate, as participation can be postponed to a more convenient time.

In this review, we focus on the day of the week on which the invitation was sent, since this is the dimension our experiment tested (the effect of time of day was not tested). Evidence on this aspect is mixed. Lindgren et al. (2020) used the Swedish Citizen Panel to test experimentally the effect of sending email invitations to a web survey on each of the seven days of the week on net participation rates, calculated as responses (including partial responses) divided by the number of invitees in the initial sample, excluding email bounce-backs. The authors find that prompt participation (within one day of fieldwork) is highest when invitations are sent on a Wednesday, and significantly lower when they are sent on a Friday, Saturday or Sunday rather than a Wednesday. Nevertheless, the difference erodes over time, with similar response rates after six days (even in the absence of reminders). When analysing the effect separately by economic activity status, the authors find that both employed and non-employed sample members are less likely to respond within the first 24 hours if they receive the invitation on a Saturday or Sunday rather than a Wednesday; however, these effects disappear six days after the invitation. Furthermore, Lindgren et al. (2020) find indications that matching the invitation day to respondents' preferred day can increase participation rates, at least within a week.
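Written out, the net participation rate used by Lindgren et al. (2020) takes the form below. The symbols are our own shorthand for the quantities described in the text, not the authors' notation.

```latex
\[
\mathrm{NPR} = \frac{R_{\text{complete}} + R_{\text{partial}}}{N_{\text{invited}} - N_{\text{bounced}}}
\]
```

Here R denotes complete and partial responses, N_invited the number of invitees in the initial sample, and N_bounced the number of email invitations that bounced back.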

Consistent with Lindgren et al. (2020), Faught et al. (2004) also conclude that Wednesday is the most effective day to send email invitations, while in a large-scale study of federal employees, Lewis and Hess (2017) found that invitations sent on Tuesday morning achieved higher participation rates than those sent on Wednesday or Thursday. Their experimental design, however, excluded Monday and Friday, the two days of interest in our research design. These two days were tested by Bennett-Harper et al. (2007), who find that sending the survey invitation on a Friday increases response rates compared with an invitation sent on a Monday or a Wednesday; however, Friday invitations required more reminders to achieve the final level of cooperation. In contrast, an experimental study by Wolff and Göritz (2022) documents higher response rates at the beginning of the working week, falling to a low on Friday; an analysis by socio-demographic characteristics shows that the decline is steeper for employed than for non-employed sample members.

Sauermann and Roach (2013) found no significant effect of invitation day on response rates when experimentally comparing all seven days of the week in a career survey of junior scientists and engineers.

Similarly, Shinn et al. (2007) find no effect of invitation day on response rates or response speed; however, the authors conducted the survey on a very small sample (N = 192) split across five treatment groups, with response rates ranging from 21% (Monday invitation) to 44% (Wednesday invitation) and response speed (i.e. the number of working days elapsed from invitation to response) ranging from 3 (Monday invitation) to 6 (Friday invitation).

Observational, rather than experimental, studies include Zheng (2011), who analysed response rates from 100,000 surveys implemented through the SurveyMonkey platform, focusing on customer surveys (i.e. surveys collecting customer feedback) and internal surveys (i.e. surveys of an organisation's employees). The author finds that response rates were highest for survey invitations sent on a Monday and lowest for invitations sent on a Friday (all days of the week were compared). Similarly, Callegaro et al. (2015) report results from unpublished observational research on 2007 data from the Knowledge Networks online panel, which indicate that prompt response (within one day) was highest for invitations sent on a Sunday or a Monday, but that overall response rates were very similar after an email reminder sent three days after the initial invitation, leaving no ultimate effect on the survey response rate.

Finally, using the same experimental data analysed in this paper, Culinane and Nicolaas (2013) compared household-level outcomes and did not find any effect of the day of the invitation mailing (Monday vs. Friday; sent by post, and by email whenever possible) on overall response. We extend their analysis in several respects: by considering individual-level response; by exploring interactions of mailing day with economic activity status, prior survey participation and email availability; and by including response speed as an additional outcome variable.

Research Questions

Previous research into the effects of mailing day on response rates is limited and has found mixed results, so further evidence is valuable. Furthermore, it is of interest to know whether the effect, if any, differs between relatively co-operative and relatively reluctant sample members. If so, panel survey researchers could vary the approach depending on sample members' participation at prior waves. Similarly, mixed-mode panel surveys may hold a valid email address for only a proportion of panel members, meaning that only some can be sent survey invitations and reminders by email while the others must be reached by postal mail. The effect of mailing day could depend on whether the invitation is received by email or by letter, as the response mechanisms differ: in one case, sample members are already online and just need to click on the link to complete the survey, while in the other they need to open the letter, go online and type in the URL. Moreover, researchers have less control over the arrival time of postal letters than of emails. The number of reminders could also differ, as researchers may send email reminders (which are cheaper and logistically easier to dispatch) in addition to postal reminders. To our knowledge, there is no empirical evidence to guide decisions about whether the day of mailing should differ between postal mailings and emails. Our first set of research questions is:

RQ1a: Does propensity to participate in a web survey depend on whether the invitation is received on a Monday or on a Friday?

RQ1b: Does any effect of mailing day differ between previously cooperative sample members and previously less cooperative sample members?

RQ1c: Does any effect of mailing day depend on whether the invitation is sent by email in addition to postal letter or by postal letter only?

With respect to RQ1c, we acknowledge that respondents who provided an email address might be more willing to cooperate, and thus have a higher response propensity, than other respondents; this may confound the effect of invitation mode with respondents' willingness to cooperate. As discussed in the methodological section, we attempted to minimise this confounding by fitting regression models that control for factors associated with respondents' cooperativeness (e.g. participation in earlier survey waves).

We hypothesise that mailing day might also affect the speed of participation. We define prompt response as participation within two days of the invitation. The rationale is that, with web surveys, sample members who have time to complete the survey when they receive the invitation will probably do so immediately. Our second set of research questions is thus:

RQ2a: Does propensity to participate promptly in a web survey depend on whether the invitation is received on a Monday or on a Friday?

RQ2b: Does any effect of mailing day on prompt participation differ between previously cooperative sample members and previously less cooperative sample members?

RQ2c: Does any effect of mailing day on prompt participation depend on whether the invitation is sent by email in addition to postal letter or by postal letter only?

Further, we argue that individuals who organise their time differently over the week might respond differently depending on the day they receive the invitation. Our hypothesis is that individuals with busy working weekdays and leisure time at the weekend may show higher response rates and/or faster response if the invitation arrives on a Friday rather than on a Monday. This is expected to be particularly true for ‘employment-busy’ people (defined here as people working more than a certain number of hours; see the Data section for details). Conversely, individuals with a less structured distinction between busy time and leisure time (e.g. retired persons) may show no difference in response rates or response speed whether invited on a Monday or on a Friday. Thus, our third research question is:

RQ3: Does any effect of mailing day on propensity to participate or propensity to participate promptly depend on sample member’s economic activity status?

Data

Survey Design

We use experimental data from Wave 5 of the Innovation Panel of Understanding Society: the UK Household Longitudinal Study. To our knowledge, this is the first study of mailing day effects in a web survey to use UK data. Understanding Society is a multidisciplinary panel study that addresses a wide range of topics such as living arrangements, fertility, housing, economic activity, income, health and political attitudes (Buck & McFall, 2012). The Innovation Panel (IP) is a separate sample from the main Understanding Society sample and is used to test methodological innovations applicable to Understanding Society and to longitudinal surveys more generally (Uhrig, 2011). The IP closely mirrors Understanding Society in its design. The target population is all persons living in Great Britain, and a sample from this population was selected using a stratified, clustered probability sampling design (Lynn, 2009). Address-based sampling was used, with an initial sample of 2,760 addresses at Wave 1 in 2008. A refreshment sample of an additional 960 addresses was added at Wave 4 in 2011 using the same design. Each adult (aged 16+) initially resident at a sampled address is defined as a sample person. The aim at the initial wave is to interview each sample person, while subsequent waves are carried out every 12 months and aim to interview both the sample persons (regardless of whether they still reside at the same address) and all other current members of each sample person’s household. Sample persons are followed when they move home, as long as they remain within Great Britain. The fieldwork for Wave 5 was conducted between May 11th and August 30th, 2012.

From Wave 5 onwards, a sequential mixed-mode design (web and face-to-face) was introduced experimentally (Bianchi et al., 2017; Jäckle et al., 2015). Specifically, at Wave 5, two thirds of the sample were randomly allocated to the mixed-mode design and one third to a face-to-face-only design. The data used in this article come from an experiment mounted on the part of the sample allocated to the mixed-mode design. The analysis is based on the 1,885 panel members who were eligible for interview at both Waves 4 and 5; new entrants at Wave 5 are excluded. This analysis base represented an estimated 30.8% of all eligible sample members (AAPOR RR1).¹

The fieldwork procedures for sample members allocated to the mixed-mode design at Wave 5 were as follows. Adult sample members (aged 16 or older) were sent, by post, an invitation to participate in the survey online, together with an unconditional incentive whose amount varied experimentally. The invitation letter included a URL and a unique user ID, which respondents were asked to enter on the welcome screen of the web survey. Sample members who had supplied a valid email address (a little over one third of the mixed-mode sample) also received a version of the invitation letter by email. Only sample members who had indicated at previous waves that they do not use the internet regularly for personal use were informed in the letter that they could complete the survey in a face-to-face interview (26.9% of the sample). Sample members with a known email address who had not yet responded were sent reminder emails two and four days after the invitation. After around a week, all sample members who had not yet completed the web survey were sent a reminder by post (on Saturday 19th May 2012); for those without a known email address, this was the first reminder. From 24th May 2012, interviewers started visiting sample members at their homes to attempt a CAPI interview. During this phase, the web survey remained open (Jäckle et al., 2015).
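Because the email reminders follow the invitation at fixed offsets, the invitation day fixes the weekdays on which reminders land. The minimal Python sketch below, assuming reminders fall exactly two and four days after the invitation day, reproduces the weekday pattern discussed throughout this article; `WEEKDAYS`, `EMAIL_REMINDER_OFFSETS` and `reminder_days` are hypothetical names introduced for illustration.

```python
# Weekday arithmetic only: email reminders are assumed to go out exactly
# 2 and 4 days after the invitation day (offsets taken from the fieldwork text).
WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday"]
EMAIL_REMINDER_OFFSETS = (2, 4)


def reminder_days(invitation_day: str, offsets=EMAIL_REMINDER_OFFSETS) -> list:
    """Return the weekday names on which the email reminders fall."""
    start = WEEKDAYS.index(invitation_day)
    # Wrap around the end of the week with modulo 7.
    return [WEEKDAYS[(start + k) % 7] for k in offsets]


for day in ("Monday", "Friday"):
    print(day, "->", reminder_days(day))
# Monday -> ['Wednesday', 'Friday']
# Friday -> ['Sunday', 'Tuesday']
```

Encoding the offsets as a parameter makes it easy to see how a different reminder spacing would shift reminders onto or off the weekend for each treatment group.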

The survey consisted of a household grid (collecting information on who lives in the household and basic demographic indicators for each member), a household questionnaire (collecting information about housing, payments for rent, mortgage and utility bills, and other household attributes) and a personal interview. The first person in the household to log in to the web survey was asked to complete the household grid, which included an item asking who is responsible for paying the household bills. The household questionnaire was addressed to either this person or his/her spouse or partner (whichever logged in first). After completing the household questionnaire, the respondent continued to the individual questionnaire (Jäckle et al., 2015).

The Wave 5 questionnaire was not optimised for smartphone use: respondents attempting to complete the survey with a mobile device received a message asking them to log in to the survey using a computer (Jäckle et al., 2015).

Experimental Design

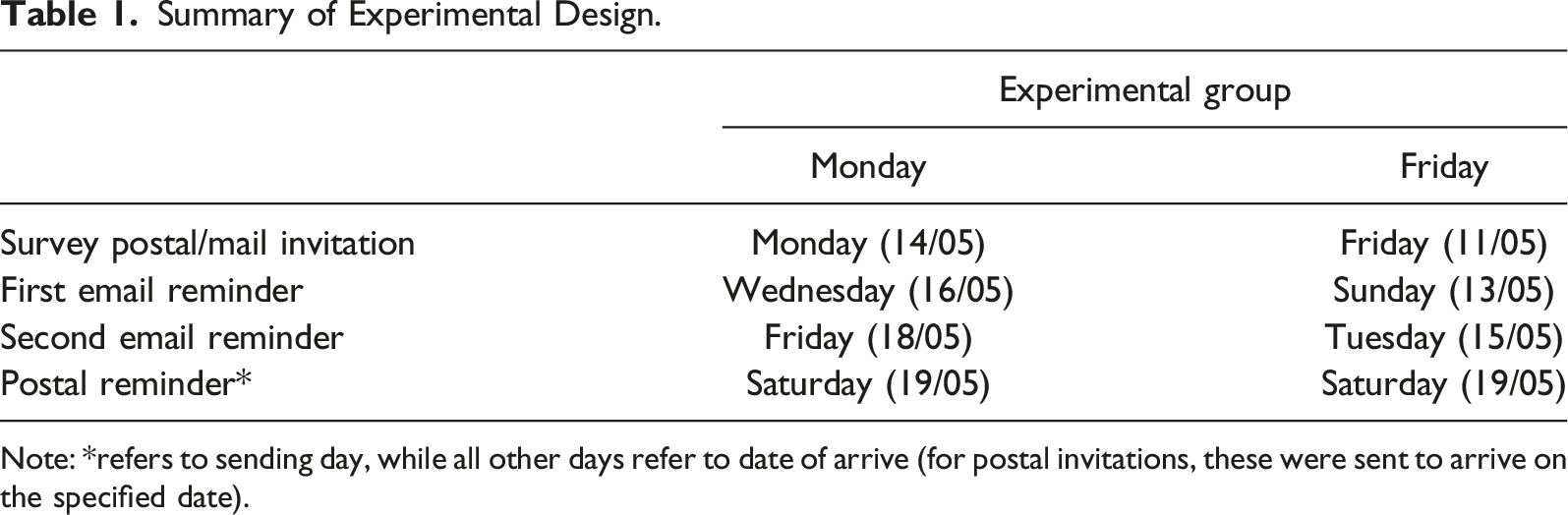

Summary of Experimental Design.

Note: * refers to the sending day, while all other days refer to the date of arrival (postal invitations were sent to arrive on the specified date).

Measures of Interest

The two outcomes of interest in this study are participation in the web survey and prompt participation, both measured as dichotomous variables. The first is coded 1 if the web survey was completed at any point during the fieldwork period, and 0 otherwise. The second is coded 1 if the survey was completed within two days of the day on which the invitation should have arrived.² The first email reminder was sent after two days, so defining prompt response as response within two days avoids confounding the effect of the initial invitation with that of the reminder. Response in the CAPI follow-up phase is ignored, as it is not the focus of this study.

Prior participation takes the value 1 if a personal interview was given at the previous wave; 0 otherwise (proxy or no interview at Wave 4). Whether or not an email address was previously supplied is similarly indicated by a binary variable.
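The coding rules above can be sketched as follows. This is a minimal illustration, not the authors' code: the record type `SampleMember` and its field names are hypothetical, and reading "within two days" as completion no more than two days after the expected arrival date (arrival day counted as day 0) is an assumption.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Assumption: "within two days" = completed no more than 2 days after the
# expected arrival date, with the arrival day itself counted as day 0.
PROMPT_WINDOW_DAYS = 2


@dataclass
class SampleMember:
    """Hypothetical record; field names are illustrative, not IP variable names."""
    expected_arrival: date          # day the invitation was timed to arrive
    web_completed: Optional[date]   # web completion date; None if never (CAPI ignored)
    personal_interview_prev: bool   # full personal interview at Wave 4 (not proxy)
    email_supplied: bool            # email address supplied at an earlier wave


def participation(m: SampleMember) -> int:
    """1 if the web survey was completed at any point during fieldwork, else 0."""
    return int(m.web_completed is not None)


def prompt_participation(m: SampleMember, window: int = PROMPT_WINDOW_DAYS) -> int:
    """1 if the web survey was completed within `window` days of expected arrival."""
    if m.web_completed is None:
        return 0
    return int((m.web_completed - m.expected_arrival).days <= window)


def prior_participation(m: SampleMember) -> int:
    """1 if a personal interview was given at the previous wave; proxy or none -> 0."""
    return int(m.personal_interview_prev)


def email_indicator(m: SampleMember) -> int:
    """1 if an email address was previously supplied, else 0."""
    return int(m.email_supplied)
```

Keeping the window as a parameter makes it straightforward to check how sensitive prompt-response estimates are to the exact cut-off relative to the first reminder.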

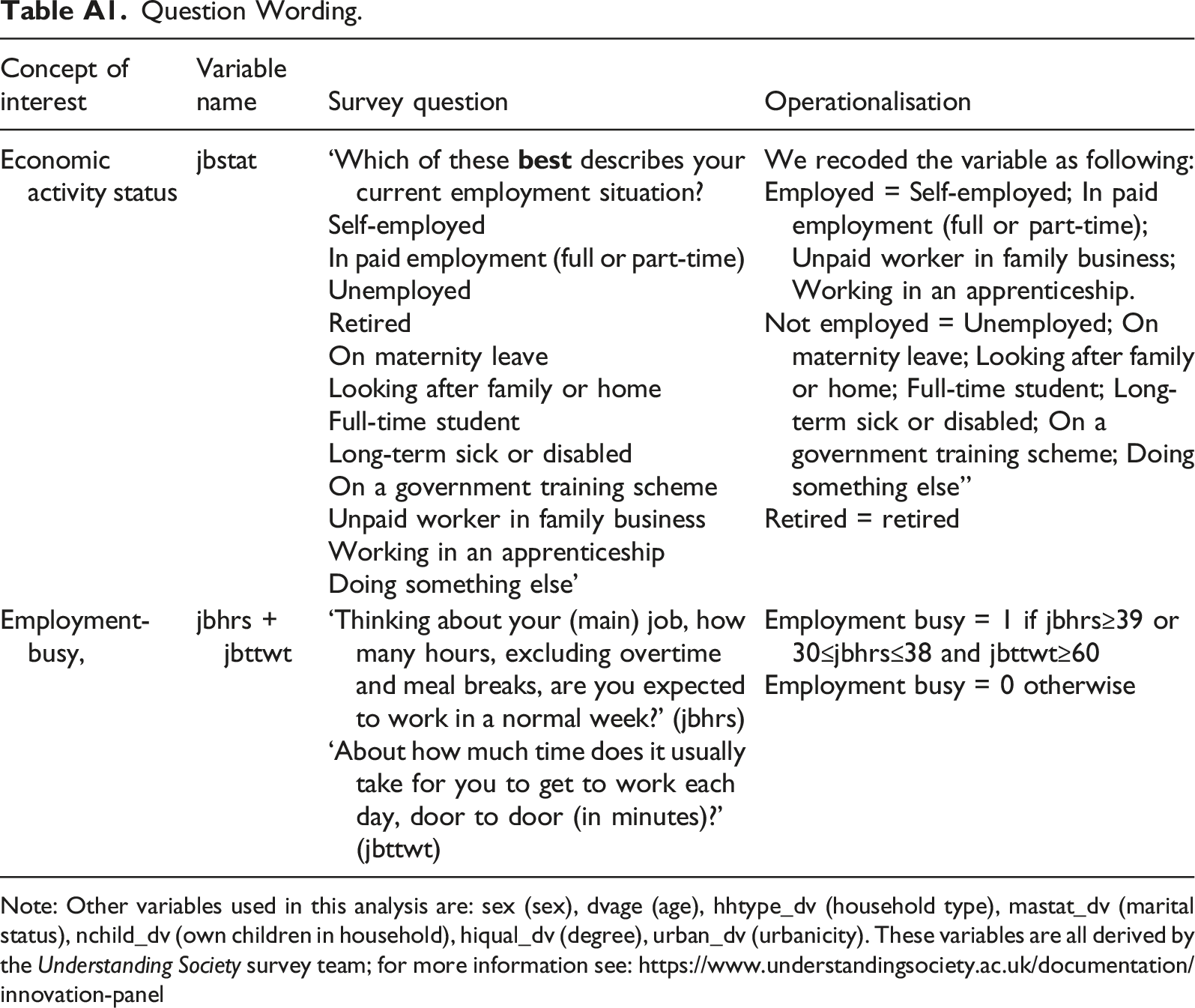

Economic activity status is derived from the IP variable jbstat (job status), as reported at the most recent wave in which the sample member had previously participated, and has three categories.³ People having a job are those who reported their main activity as being employed, self-employed, an unpaid worker in a family business, or in an apprenticeship; people not having a job are those who were unemployed, on maternity leave, looking after family or home, full-time students, long-term sick or disabled, on a government training scheme, or doing something else; retired people are those who reported their status as ‘fully retired’. Additionally, we define ‘employment-busy’ people following Fumagalli et al. (2013): those employed for at least 39 hours per week, or for 30–38 hours per week with a commute of at least 60 minutes each day.
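The grouping and the ‘employment-busy’ rule can be sketched in Python as below. The string labels are hypothetical paraphrases of the categories listed above, not the numeric jbstat codes used in the Innovation Panel. Treating the 30–38 hour band as the half-open interval [30, 39), so that fractional hours are not left unclassified, and reading the commute as total daily minutes, are both assumptions.

```python
from typing import Optional

# Hypothetical labels paraphrasing the main-activity categories in the text;
# these are NOT the numeric jbstat codes used in the Innovation Panel.
HAS_JOB = {
    "employed", "self-employed", "unpaid worker in family business", "apprenticeship",
}
NO_JOB = {
    "unemployed", "maternity leave", "looking after family or home",
    "full-time student", "long-term sick or disabled",
    "government training scheme", "something else",
}
RETIRED = {"fully retired"}


def economic_activity(status: str) -> str:
    """Collapse a main-activity label into the three analysis categories."""
    if status in HAS_JOB:
        return "has job"
    if status in NO_JOB:
        return "no job"
    if status in RETIRED:
        return "retired"
    raise ValueError(f"unmapped status: {status!r}")


def employment_busy(weekly_hours: float, daily_commute_minutes: Optional[float]) -> bool:
    """'Employment-busy' rule as stated in the text (after Fumagalli et al., 2013).

    Assumptions: applies to people with a job; the 30-38 hour band is treated
    as [30, 39); the commute is total minutes per day.
    """
    if weekly_hours >= 39:
        return True
    if 30 <= weekly_hours < 39:
        return daily_commute_minutes is not None and daily_commute_minutes >= 60
    return False
```

Raising an error on unmapped labels, rather than silently defaulting, makes any gap in the category mapping visible when the classification is applied to real data.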

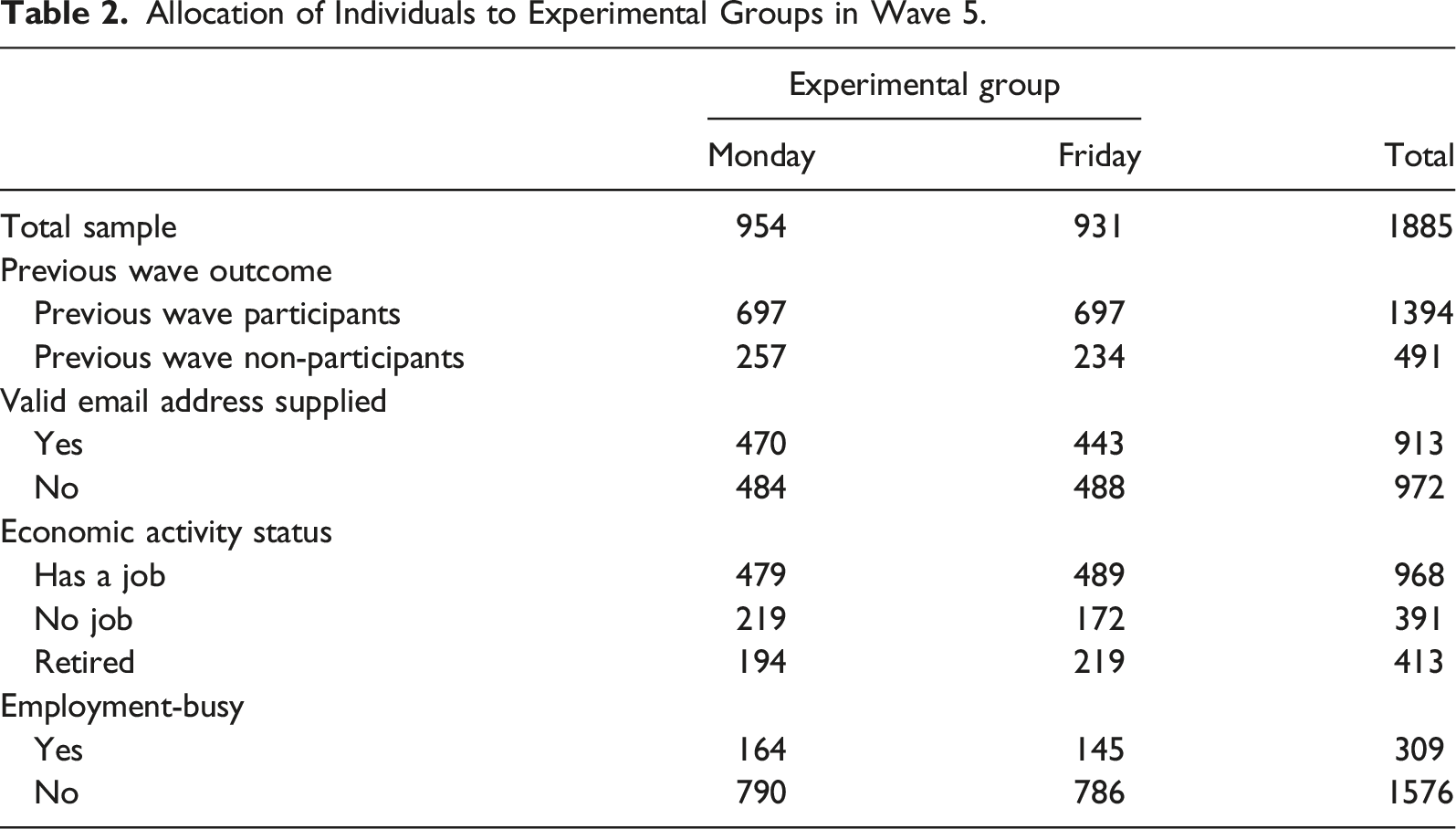

Allocation of Individuals to Experimental Groups in Wave 5.

Results

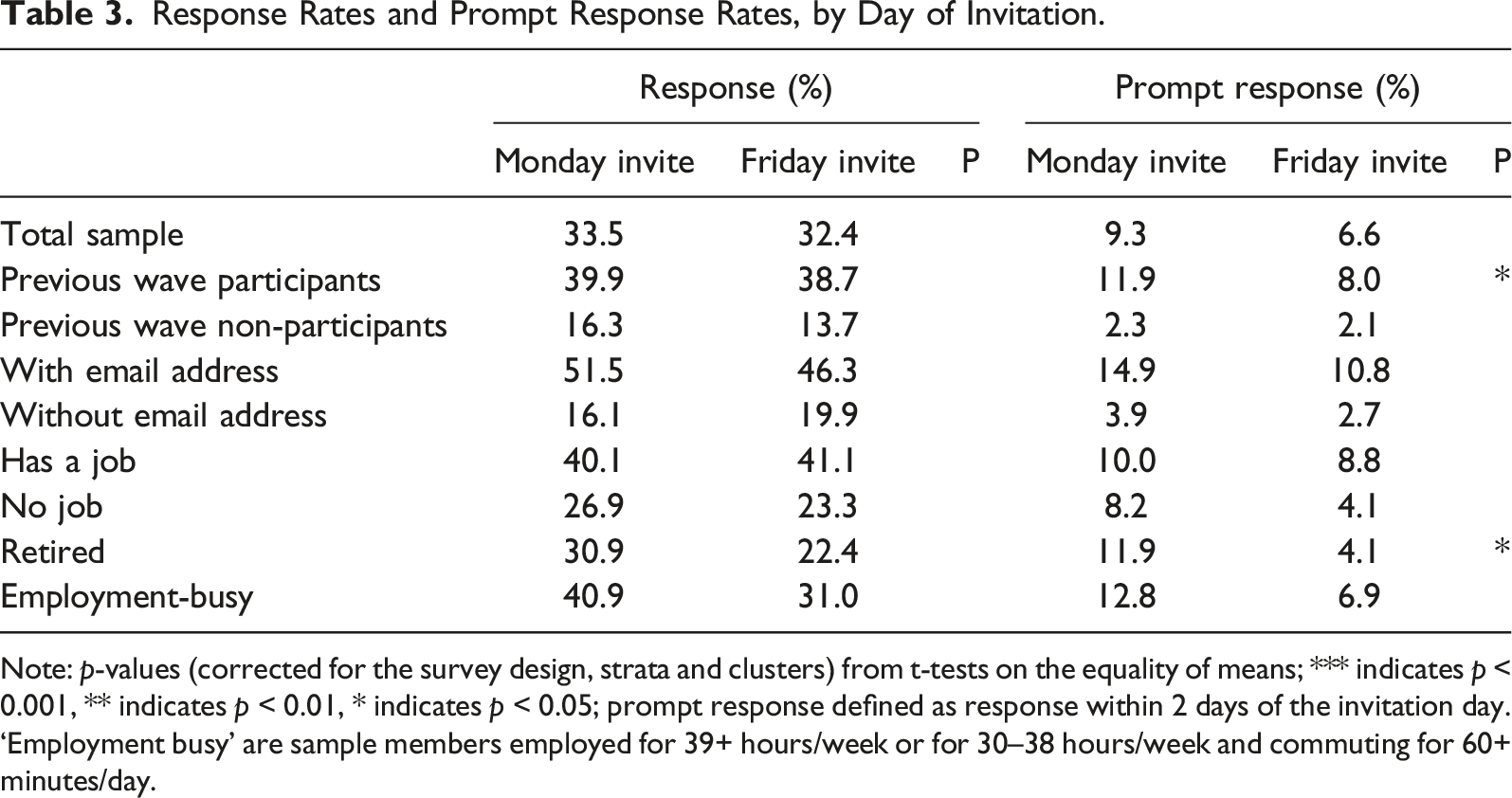

Response Rates and Prompt Response Rates, by Day of Invitation.

Note: p-values (corrected for the survey design, i.e. strata and clusters) from t-tests on the equality of means; *** p < 0.001, ** p < 0.01, * p < 0.05; prompt response is defined as response within 2 days of the invitation day. ‘Employment busy’ are sample members employed for 39+ hours/week, or for 30–38 hours/week and commuting for 60+ minutes/day.

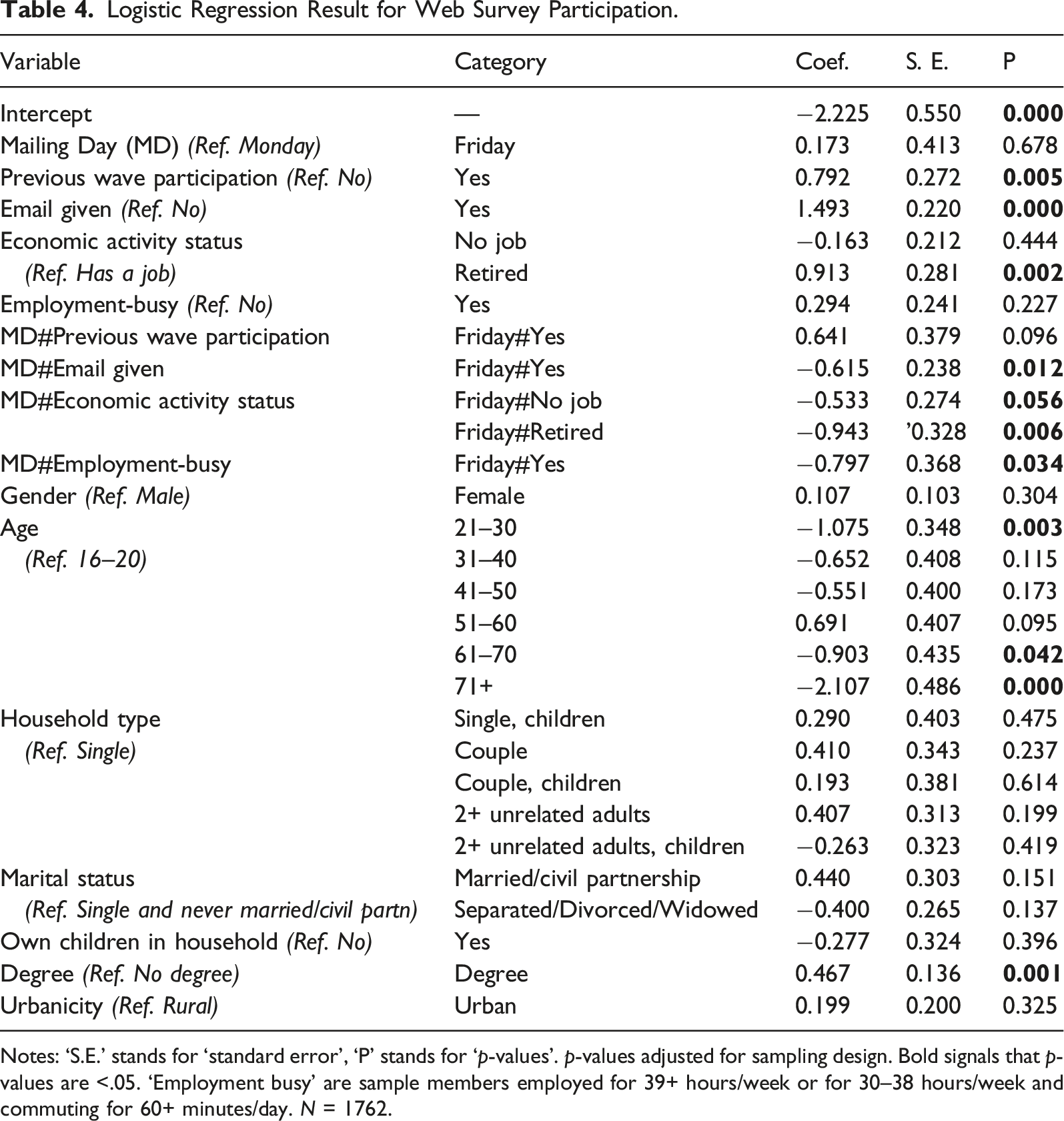

Logistic Regression Results for Web Survey Participation.

Notes: ‘S.E.’ stands for ‘standard error’ and ‘P’ for ‘p-value’. p-values are adjusted for the sampling design. Bold indicates p < .05. ‘Employment busy’ are sample members employed for 39+ hours/week or for 30–38 hours/week and commuting for 60+ minutes/day. N = 1762.

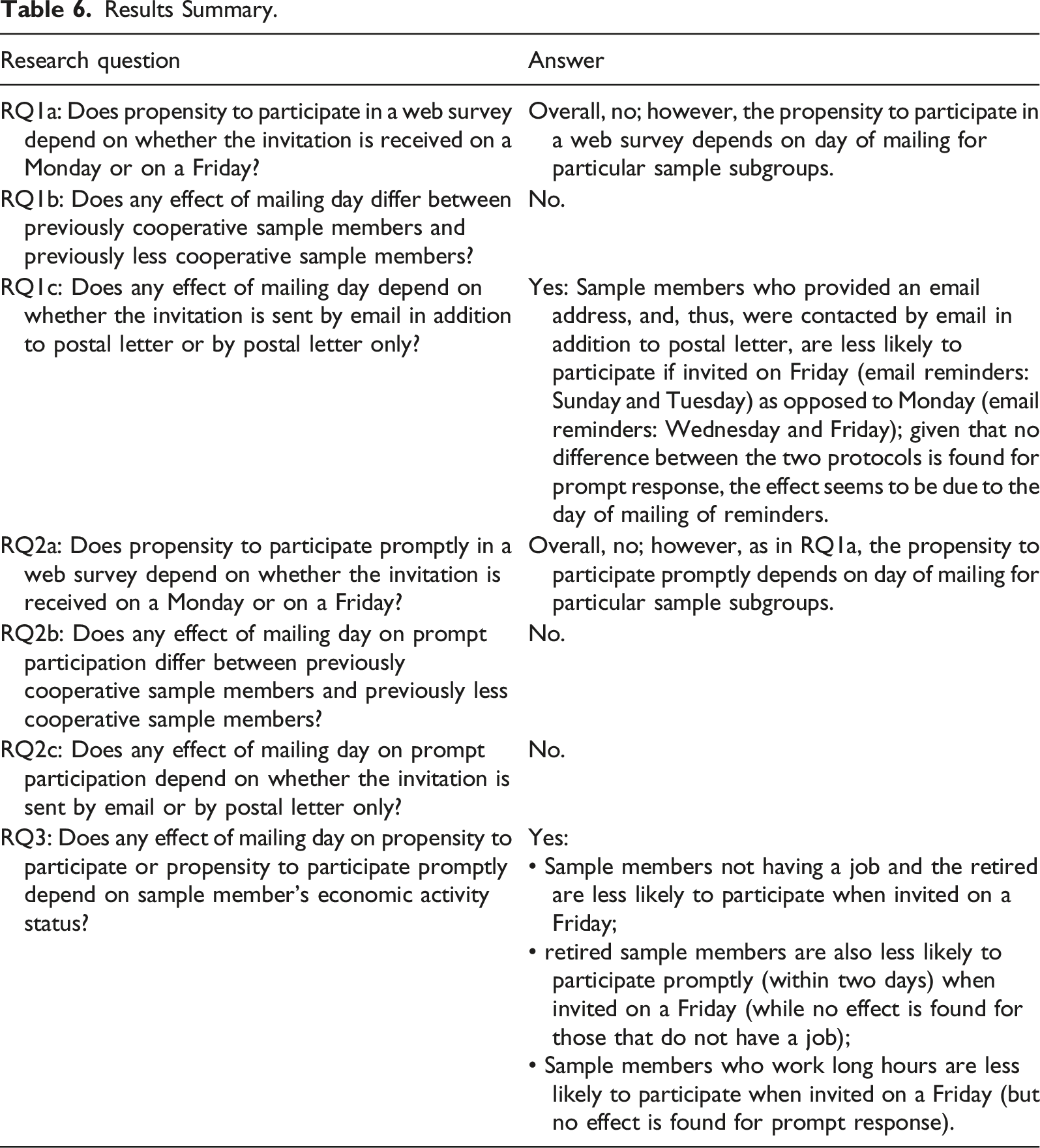

We find no statistically significant difference in the effect of mailing day on participation between previously cooperative and previously less cooperative sample members (RQ1b; p = 0.096, Table 4). As for email availability, a significant interaction with mailing day is observed: only sample members who had provided an email address have a higher probability of participating when invited on a Monday (RQ1c; p = 0.012).
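One way to read the RQ1c interaction is through a logistic model of the general form below. This is an illustrative specification in our own notation (Fri, Email and the covariate vector x are shorthand; the actual covariates are those reported in Table 4), not a transcription of the fitted model.

```latex
\[
\operatorname{logit}\Pr(Y_i = 1) = \beta_0 + \beta_1\,\mathrm{Fri}_i + \beta_2\,\mathrm{Email}_i
  + \beta_3\,(\mathrm{Fri}_i \times \mathrm{Email}_i) + \mathbf{x}_i^{\top}\boldsymbol{\gamma}
\]
\[
\text{Friday effect (log-odds):}\quad \beta_1 \ \text{(no email)}, \qquad \beta_1 + \beta_3 \ \text{(email supplied)}
\]
```

Under this form, the interaction test for RQ1c would correspond to H0: β3 = 0, and the same logic carries over to the interactions between mailing day and economic activity status examined under RQ3.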

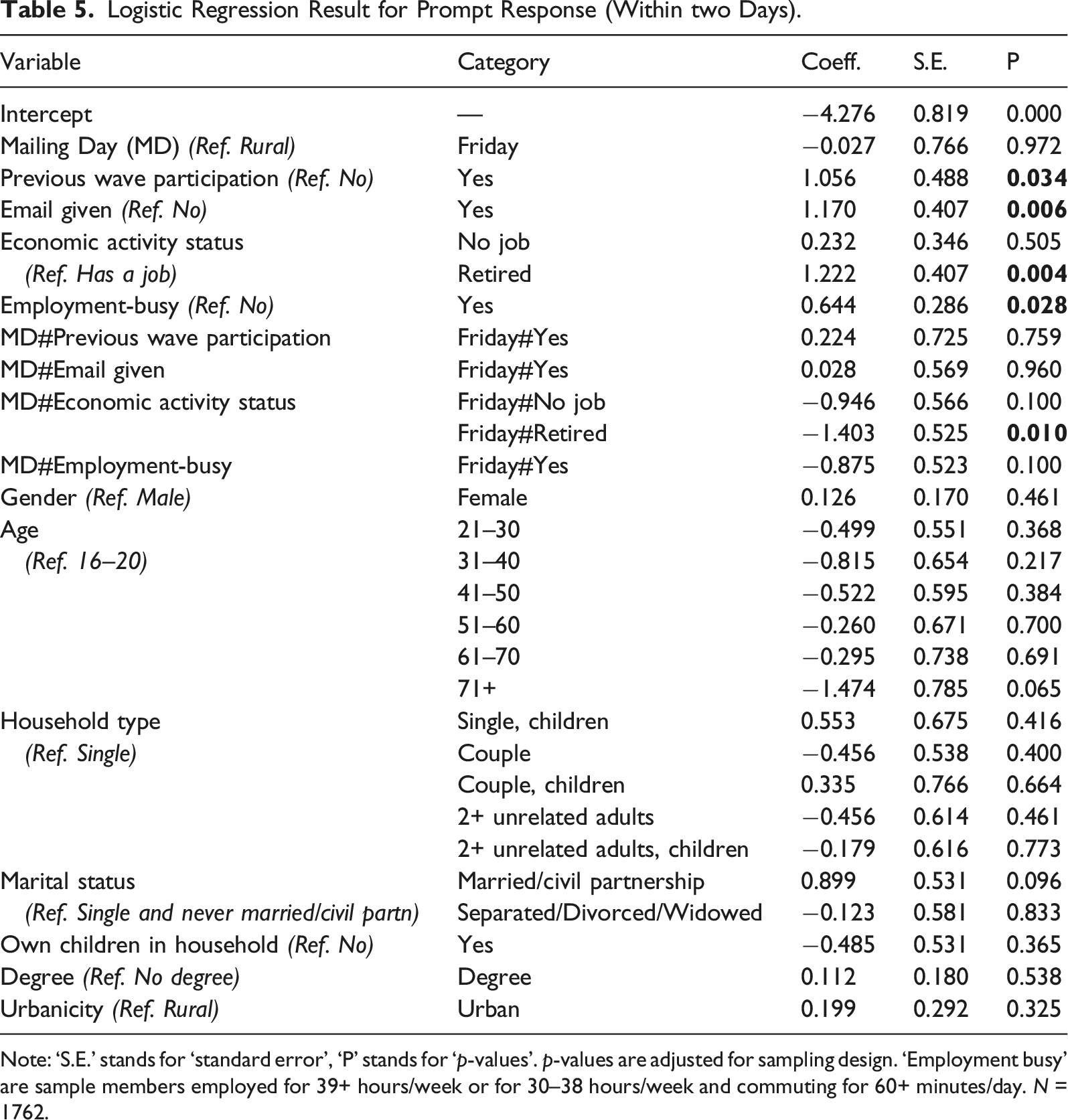

Logistic Regression Results for Prompt Response (Within Two Days).

Note: ‘S.E.’ stands for ‘standard error’ and ‘P’ for ‘p-value’. p-values are adjusted for the sampling design. ‘Employment busy’ are sample members employed for 39+ hours/week or for 30–38 hours/week and commuting for 60+ minutes/day. N = 1762.

We now turn to our third research question (RQ3): whether any effect of invitation day is moderated by economic activity status. The effect of mailing day on propensity to participate does depend on economic activity status: compared with those having a job, sample members without a job and retired sample members are less likely to participate when invited on a Friday (p = 0.056 and p = 0.006, respectively; Table 4). Also, ‘employment-busy’ sample members are less likely to participate when invited on a Friday (p = 0.034). For prompt response, the positive effect of a Monday invitation is significant only for retired people (p = 0.010; Table 5).

Discussion and Conclusions

We have presented the results of an experiment, implemented in a large-scale probability-based survey, that tests the effect of the day of invitation to a web survey on response rates and response speed. Postal invitations were timed to arrive on a Monday or a Friday for all sample members; in addition, sample members who had provided an email address at previous waves also received an email invitation, timed to arrive on the same day as the postal invitation. Overall, we do not find any difference in either survey participation or prompt participation (defined as participation within two days of the invitation) between sample members receiving the invitation on a Monday and those receiving it on a Friday; however, differences emerge between groups.

Sample members who had provided an email address at previous waves, and were thus contacted by email in addition to postal letter, are less likely to participate if invited on a Friday (with email reminders on Sunday and Tuesday) than on a Monday (with email reminders on Wednesday and Friday). The effect seems to be due to the day on which reminders were sent, given that no difference between the two protocols is found for prompt response (i.e. before any reminder had been sent). We argue that it is receiving reminders later in the working week (Wednesday and Friday⁵), rather than at the weekend or the beginning of the week (Sunday and Tuesday), that encouraged response. Further research might usefully investigate this aspect.

In general, sample members who are able and willing to share additional contact details (e.g. email address, telephone number) with the survey researchers or agency tend to be more cooperative than those who do not, and may therefore respond differently to variations in the survey design. In our analysis, we control for previous-wave cooperation and also test the interaction between previous-wave participation and invitation day: we do not find a significant difference (at the conventional p < 0.05 level) in participation or prompt participation between previously cooperative and previously less cooperative sample members. We therefore conclude that the effect found for the subgroup of respondents who provided email contact details is likely driven by the contact mode (which allows more precise timing of the day of mailing) rather than by this subgroup's supposedly higher cooperation propensity.

Another important group difference emerging from this study concerns economic activity status. The rationale for this subgroup analysis is that sample members may organise their time differently across the week depending on their economic activity status (i.e. whether they are employed, students/unemployed, or retired), which may result in different preferences for the timing of survey participation requests. Our study shows that employed respondents who work long hours (i.e. 39+ hours weekly, or 30–38 hours weekly with a daily commute of at least 60 minutes) are less likely to participate if invited on a Friday. This contrasts with our hypothesis that respondents with a fixed organisation of time, with busy working days and free time at the weekend, would be more likely to participate if invited just before the weekend, when they have more free time for survey participation; the opposite seems to be true. However, no effect is found for prompt participation. As above, given that we find an effect only in the longer term, that is, after reminders are sent, we speculate that the effect might be driven by those busy respondents who provided an email address and hence received email reminders two and four days after the invitation (i.e. on Wednesday and Friday for the Monday treatment group).

Also contrary to our hypothesis, retired respondents are less likely to participate, and to participate promptly (within two days), if they are invited on a Friday rather than on a Monday. Further analysis, not shown here, indicates that the effect persists even after sample members receive further reminders (not timed to arrive on a specific day), leading to lower response two weeks after the invitation among retired sample members who received their first invitation on a Friday. This finding contrasts with our expectation that survey participation would not vary by invitation day for individuals who do not follow a Monday-to-Friday working routine. A possible explanation is that retired respondents might be busier at weekends (when they may gather with family and friends who work on weekdays) than on weekdays. If this proves to be the case, the finding would be consistent with the idea that survey contacts should be timed so that participants receive them when they are not too busy (Callegaro et al., 2015). In this respect, research on time use might provide useful insights for survey practice.

Results Summary.

Overall, our findings show that survey design features that are not effective overall for a general population sample may significantly improve response rates for population subgroups. This emphasises the importance of implementing targeted survey designs that meet the needs of different subgroups, which vary in their response propensity and in how salient survey design features are in motivating them to participate (Groves et al., 2000). Longitudinal surveys offer a favourable environment for timing the survey invitation according to sample members' preferences, because information collected at previous waves can be used to tailor the survey design. For example, respondents could be asked at one wave to identify their preferred time to complete a survey request (e.g. weekday or weekend) and then be invited at that time. Further research might explore the feasibility of this approach and test its effectiveness experimentally.

One important feature of our study is its novelty: to the best of our knowledge, this is the first study of mailing-day effects on response rates in a web survey conducted in the UK. Given the relatively limited research on the topic and the absence of other research in the UK context, we encourage replication of these findings to confirm their robustness.

This study also has important limitations. While we expect these results to guide survey practitioners in choosing the optimal invitation timing, this is only possible if researchers have some control over when respondents receive survey invitations. If invitations are sent by post, the date on which they are received may depend on the reliability of the postal service, which may vary by country and by respondents’ geographic proximity to major postal hubs. When invitations are sent by email, it is easier for researchers to control when the invitation reaches the respondent. However, researchers have no control over when respondents check their email and read the invitation. The same consideration applies to postal mail, which may pile up for some time before respondents open it. This limitation needs to be considered when tailoring the timing of web survey invitations.

Finally, while we advocate identifying the preferable timing of survey invitations and reminders to improve response rates in large-scale studies, effects may differ in opt-in panels. In these contexts, panellists may receive a large volume of invitations (which are preferably spread over the week rather than grouped on specific days or times), and agencies may have limited capacity (e.g. server capacity, personnel) to control the day of survey invitation.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The contribution of Lynn was supported by UK Economic and Social Research Council award G2014-70 for Understanding Society: the UK Household Longitudinal Study and the contribution of Bianchi was supported by the ex 60% University of Bergamo, Bianchi grant.

Notes

Appendix

Question Wording. Note: Other variables used in this analysis are: sex (sex), dvage (age), hhtype_dv (household type), mastat_dv (marital status), nchild_dv (own children in household), hiqual_dv (degree), urban_dv (urbanicity). These variables are all derived by the Understanding Society survey team; for more information see: https://www.understandingsociety.ac.uk/documentation/innovation-panel

Concept of interest: Economic activity status

Variable name: jbstat

Survey question: ‘Which of these …’ Response options: Self-employed; In paid employment (full or part-time); Unemployed; Retired; On maternity leave; Looking after family or home; Full-time student; Long-term sick or disabled; On a government training scheme; Unpaid worker in family business; Working in an apprenticeship; Doing something else.

Operationalisation: We recoded the variable as follows:

Employed = Self-employed; In paid employment (full or part-time); Unpaid worker in family business; Working in an apprenticeship.

Not employed = Unemployed; On maternity leave; Looking after family or home; Full-time student; Long-term sick or disabled; On a government training scheme; Doing something else.

Retired = Retired.

Concept of interest: Employment-busy

Variable names: jbhrs and jbttwt

Survey questions: ‘Thinking about your (main) job, how many hours, excluding overtime and meal breaks, are you expected to work in a normal week?’ (jbhrs); ‘About how much time does it usually take for you to get to work each day, door to door (in minutes)?’ (jbttwt)

Operationalisation: Employment busy = 1 if jbhrs ≥ 39, or if 30 ≤ jbhrs ≤ 38 and jbttwt ≥ 60.

Employment busy = 0 otherwise.
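The two recodes in the appendix table can be expressed as small functions. The Python sketch below illustrates them under stated assumptions and is not the code used for the analysis. It matches on the response labels as printed above rather than on any numeric codes used in the released data. It also codes missing hours or commuting time as 0, which is our reading of ‘otherwise’. The function names recode_jbstat and employment_busy are hypothetical.

```python
from typing import Optional

# jbstat response labels grouped as in the recode above (matching on text
# labels rather than numeric codes is an assumption of this sketch).
EMPLOYED = {
    "Self-employed",
    "In paid employment (full or part-time)",
    "Unpaid worker in family business",
    "Working in an apprenticeship",
}
NOT_EMPLOYED = {
    "Unemployed",
    "On maternity leave",
    "Looking after family or home",
    "Full-time student",
    "Long-term sick or disabled",
    "On a government training scheme",
    "Doing something else",
}


def recode_jbstat(jbstat: str) -> str:
    """Collapse a jbstat label into Employed / Not employed / Retired."""
    if jbstat in EMPLOYED:
        return "Employed"
    if jbstat in NOT_EMPLOYED:
        return "Not employed"
    if jbstat == "Retired":
        return "Retired"
    raise ValueError(f"unexpected jbstat category: {jbstat!r}")


def employment_busy(jbhrs: Optional[float], jbttwt: Optional[float]) -> int:
    """Return 1 if expected weekly hours are at least 39, or 30 to 38 hours
    with a daily commute of at least 60 minutes; return 0 otherwise,
    including when hours or commuting time are missing (an assumption)."""
    if jbhrs is None:
        return 0
    if jbhrs >= 39:
        return 1
    if 30 <= jbhrs <= 38 and jbttwt is not None and jbttwt >= 60:
        return 1
    return 0
```

Under the rule as written, a non-integer value such as 38.5 hours is coded 0, since it meets neither condition.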