Abstract

Panel attrition poses major threats to the survey quality of panel studies. Many features have been introduced to keep panel attrition as low as possible. Based on a random sample of refugees, a highly mobile population, we investigate whether using a mobile phone application improves address quality and response behavior. Various features, including geo-tracking, collecting email addresses and address changes, are tested. Additionally, we investigate respondent and interviewer effects on the consent to downloading the app and sharing GPS geo-positions. Our findings show that neither geo-tracking nor the provision of email addresses nor the collection of address changes through the app improves address quality substantially. We further show that interviewers play an important role in convincing the respondents to install and use the app, whereas respondent characteristics are largely insignificant. Our findings provide new insights into the usability of mobile phone applications and help determine whether they are a worthwhile tool to decrease panel attrition.

Panel surveys are one of the most valuable data sources in the social sciences. Compared to cross-sectional studies, they provide a stronger basis for causal inference, as data analysis can be based on within-variation at the respondent or household level—thereby controlling for unobserved time-constant heterogeneity (Brüderl & Ludwig, 2015). However, panel surveys also face specific threats to data quality, one of which is panel attrition. Panel attrition describes the fact that some sample elements drop out of the panel over time, for instance, because they are unwilling to further participate in the study or because the field agency loses track of respondents when they move. We use a panel study on refugees, an extremely mobile population, as a case study to identify whether a mobile phone application can reduce panel attrition and in turn increase data quality.

Recent immigrants are particularly prone to panel attrition because they move more frequently, for example, when searching for a job or leaving preliminary living arrangements. As immigration has increased drastically in the past decades (due to accelerated globalization of markets and humanitarian crises), many large-scale and renowned panel studies have incorporated targeted migration and minority samples in their surveys over the last couple of years. For example, in 2016, the German Socio-Economic Panel (SOEP), an annually conducted nationwide panel study (Goebel et al., 2019), implemented a refugee-specific enlargement sample, focusing on asylum seekers and refugees who have arrived in Germany since 2013 (Kühne et al., 2019; further prominent examples are the Panel Study of Income Dynamics and the UK Household Longitudinal Study).

As refugees comprise a population that is exceptionally mobile due to official regulations and allocation procedures, panel attrition and address quality were expected to be a serious issue. Thus, in addition to SOEP standard panel care measures, a mobile app was developed in order to improve address quality, maintain interest in the study, and, in turn, decrease panel mortality. The app incorporates five features:

Allowing respondents to report changes to their address;

Collecting respondents’ email addresses;

Geo-tracking of respondents’ devices;

Implementing short questionnaires; and

Providing news on the situation of refugees in Europe.

This article analyzes whether using a mobile phone app decreases panel attrition, which factors affect consent to using the app, and what role interviewers play in convincing participants to use it.

With these research objectives, we build on and extend earlier research that primarily focuses on consent and data quality regarding mobile phone apps in survey research (Keusch, Leonard, et al., 2019; Sugie, 2018). In order to answer the above-mentioned questions, first, we describe the self-selection of respondents into downloading the app along with the permission to activate geo-tracking and the usage of email addresses for recontacting purposes. Second, we explore interviewer effects regarding consent and app usage. Finally, we assess address quality for the second wave and analyze whether the app and its features reduce the number of unknown whereabouts and increase the willingness to participate in the second wave of the Institute for Employment Research (IAB)–Federal Office for Migration and Refugees (BAMF)–SOEP Survey of Refugees.

Literature Review

In this section, we give an overview of the different strands of literature to which this article contributes. We begin with panel attrition, followed by recent developments in the usage of mobile phones in survey research and consent to linking survey and digital trace data. Finally, we discuss research concerning interviewer effects.

Panel Attrition

Panel attrition describes the phenomenon that some respondents or households drop out of the panel over time. In many existing large-scale panel surveys, panel participation rates are rather high when compared to initial first wave or cross-sectional study response rates (RR). For instance, in the SOEP, the participation rate is above 90% from Wave 3 onward (Kroh et al., 2018). The same holds true for the UK Understanding Society longitudinal study (Lynn et al., 2012), the Michigan Panel Study of Income Dynamics (Sastry et al., 2018), the Swiss Household Panel (Lipps, 2007), and the HILDA Survey (Watson & Wooden, 2006). While attrition rates remain fairly low in many existing panel surveys, their cumulative impact can result in a serious loss in sample size over time. For instance, a 10% attrition rate (i.e., a 90% participation rate) decreases an initial Wave 1 sample size of 5,000 households down to 3,281 in Wave 5 (−34%).
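The compounding in this example can be checked directly. The following minimal sketch assumes a constant wave-to-wave participation rate and no refreshment samples; the function name `remaining_sample` is ours and purely illustrative. Decimal arithmetic with half-up rounding is used so that the borderline value of 3,280.5 households is rounded as in the text.

```python
from decimal import Decimal, ROUND_HALF_UP


def remaining_sample(initial: int, participation_rate: str, wave: int) -> int:
    """Expected sample size in a given wave under a constant participation rate.

    Wave 1 is the initial sample, so the rate is applied (wave - 1) times.
    The rate is passed as a string (e.g. "0.90") to avoid binary float error.
    """
    if wave < 1:
        raise ValueError("wave must be >= 1")
    expected = Decimal(initial) * Decimal(participation_rate) ** (wave - 1)
    return int(expected.quantize(Decimal("1"), rounding=ROUND_HALF_UP))


if __name__ == "__main__":
    n1 = 5000
    n5 = remaining_sample(n1, "0.90", 5)
    relative_change = (n5 - n1) / n1
    print(n5, f"{relative_change:.0%}")  # 3281 -34%
```

Under these assumptions, four successive waves at a 90% participation rate retain about 66% of the initial households.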

Generally, panel attrition is caused by three main factors: (1) missing/invalid information needed to recontact respondents such as addresses or telephone numbers, (2) individual loss of interest in the study and, in turn, refusing to participate in the next wave or in general, and (3) death and morbidity.

Research shows that the reasons underlying panel attrition are not fully random and that some subpopulations are more likely to drop out of the panel. Factor 1, changes in the information needed to recontact panelists, is most frequent in highly mobile populations, that is, participants who move often and across large distances (e.g., Trappmann et al., 2015). This holds true not only for younger people but also, and especially, for refugees, as they represent a rather mobile population (Buber-Ennser et al., 2016; Kühne et al., 2019). With respect to Factor 2, a loss of interest and, thus, refusal, there are indications that recent migrants and refugees are more likely to refuse further participation in the panel as they experience a high respondent burden due to language and cultural issues (e.g., Siegers et al., 2019). Finally, regarding Factor 3 (and fairly trivially), older panelists are much more likely to drop out of the panel due to death (for a general overview of the reasons underlying panel attrition, see also Schoeni et al., 2013).

Nonrandom panel attrition poses major problems for maintaining quality in panel surveys (Lugtig, 2014). Not only does panel attrition decrease the sample size and, thus, statistical power, but it also introduces potential nonresponse bias into estimates if those who drop out of the panel systematically differ from those who continue to participate. Although there are a vast number of post hoc measures to account for nonrandom panel attrition by means of weighting adjustment (e.g., Rendtel & Harms, 2009), such means are not a magic bullet (e.g., Vandecasteele & Debels, 2007). In particular, they reach their limits if panel attrition is constantly high, sample sizes become too small, or some groups of the target population drop out (almost) completely. Therefore, field agencies have introduced several measures to keep attrition as low as possible: There is evidence that sending out letters and postcards or even providing monetary incentives are helpful in this regard (Castiglioni et al., 2008; Laurie, 2008; Schoeni et al., 2013). Additionally, in face-to-face surveys, interviewer continuity has been shown to maintain high participation rates (Campanelli & O’Muircheartaigh, 1999; Lynn et al., 2014; Vassallo et al., 2015) and response quality (Kühne, 2018). However, most of these measures seek to keep interest in the study high, and improved address quality only appears as an accidental side effect. High address quality means up-to-date information on residential addresses and only a small number of unknown whereabouts or nonexistent addresses. Especially in the case of (face-to-face) panel surveys, maintaining address quality is crucial in order to recontact households and respondents. Ongoing panel studies show that address quality is a smaller problem than respondent motivation (Couper & Ofstedal, 2009; Siegers et al., 2019). Nevertheless, research suggests that this does not necessarily hold true for mobile target populations such as immigrants (Couper & Ofstedal, 2009). In contrast to native-born respondents, immigrants tend to move back and forth between their country of origin and other third countries. Recent immigrants, on the other hand, are more likely to move within one country for job-seeking purposes or leave the country when their residence permits expire.

In addition to maintaining large sample sizes, there is a second reason why knowing the actual place of residence is important for panel studies. In order to correct for panel attrition by means of nonresponse weighting, field agencies need to differentiate between quality-neutral dropouts (no longer part of the target population) and dropouts who are still eligible to participate. In some national surveys, people who leave the country are considered to be quality-neutral dropouts, as they are no longer part of the underlying target population. Thus, in order to estimate unbiased nonresponse weights, knowledge about residency is important.
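The role of eligibility in such an adjustment can be illustrated with a simple weighting-class sketch. It is not the weighting procedure used in the SOEP; the function name, the dictionary keys, and the toy counts are hypothetical and serve only to show why quality-neutral dropouts must be removed from the denominator of the response rate.

```python
from collections import defaultdict


def nonresponse_weights(cases):
    """Inverse response-rate weights within adjustment cells.

    Each case is a dict with hypothetical keys:
      'cell'      - adjustment cell (e.g., an age group),
      'eligible'  - False for quality-neutral dropouts (e.g., moved abroad),
      'responded' - True if interviewed in the current wave.
    Ineligible cases are excluded from the response-rate denominator.
    """
    eligible = defaultdict(int)
    responded = defaultdict(int)
    for case in cases:
        if not case["eligible"]:
            continue  # no longer part of the target population
        eligible[case["cell"]] += 1
        if case["responded"]:
            responded[case["cell"]] += 1
    # Weight = eligible cases per respondent; cells without respondents are skipped.
    return {cell: eligible[cell] / responded[cell]
            for cell in eligible if responded[cell] > 0}


if __name__ == "__main__":
    # Toy cell: 6 respondents, 2 eligible nonrespondents, 2 who moved abroad.
    toy = (
        [{"cell": "A", "eligible": True, "responded": True}] * 6
        + [{"cell": "A", "eligible": True, "responded": False}] * 2
        + [{"cell": "A", "eligible": False, "responded": False}] * 2
    )
    print(nonresponse_weights(toy))  # weight of 8/6 for cell "A"
```

If the two emigrated cases in this toy cell were wrongly treated as eligible nonrespondents, the weight would be 10/6 instead of 8/6, so respondents would stand in for people who are no longer in the target population.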

As panel attrition poses a severe problem to survey quality, survey providers constantly look for ways to decrease attrition, one of which can be the use of mobile phone applications with the purpose of keeping in touch with the panelists.

Usage of Mobile Phones in Survey Research

Since the 1990s, the digitalization of survey practice has been increasing (Groves, 2011). While self-administered web surveys on a computer can already be considered state of the art, mobile phones are still not widely used (e.g., de Bruijne & Wijnant, 2014b; Poggio et al., 2015, p. 5; Stier et al., 2019). Smartphones have high potential utility in several respects. For example, smartphones can deliver small questionnaires between the main waves of panel studies, or they can replace computer-assisted and pen-and-paper interviews in general (Keusch, Leonard, et al., 2019; Sugie, 2018). Furthermore, they are able to gather digital trace data about survey participants. Most smartphones provide hardware that allows, among other features, voice identification and geo- and eye-tracking (Höhne & Schlosser, 2019; Lane et al., 2010). However, substantial research and the development of theoretical frameworks on how survey data and digital trace data can be integrated sustainably have only recently gained the attention of survey methodologists, and thus, research on this matter is comparably scarce (for an overview, see Stier et al., 2019).

Despite their great potential, evidence regarding the utility of smartphones and apps in social science research is mixed and many challenges remain. To begin with, presumably not all members of a target population own a smartphone (Keusch, Bähr, et al., 2020). Therefore, field institutes need to find solutions that avoid underrepresenting substantial parts of the target population such as the elderly or poor (Fuchs & Busse, 2009; Hoogeveen et al., 2014). To tackle this challenge, Hoogeveen et al. (2014) suggest that field institutes should, if necessary, supply mobile phones and solar chargers (to remain independent of energy sources). Additionally, from a technical perspective, mobile phone applications for survey research need to be designed so that they run on a vast array of operating systems (Dalmasso et al., 2013).

Generally speaking, the evidence regarding data quality of surveys conducted via smartphones is quite mixed. Compared to standard web surveys conducted on and designed for a computer, some scholars report that surveys designed for mobile phones reach a similar quality level (Ballivian et al., 2015; Dillon, 2012; Toepoel & Lugtig, 2014; Wells et al., 2013). Others report substantial problems regarding data quality, including poor RR and the detail and length of answers in open-answer response formats (de Bruijne & Wijnant, 2013).

Additionally, lower completion rates are shown to be a problem (Mavletova, 2013), along with social desirability (Mavletova & Couper, 2013) and interview length (Couper & Peterson, 2016). Studies assessing the reasons for lower quality conclude that individual respondent characteristics are not the only factor; the different presentation of a questionnaire on a mobile phone, such as smaller pictures and truncated scales, also has an impact (Couper & Peterson, 2016; Peytchev & Hill, 2010). Dillon (2012), on the other hand, finds only small differences between smartphones and other modes of data collection.

Beyond their use for interviews, smartphones are also used to implement geo-tracking of individuals, as the majority of current devices are equipped with GPS sensing (for a discussion, see Bähr et al., 2020). Besides gathering information on individual movement, researchers are increasingly able to use geo-spatial data to learn about, for instance, the respondents’ place of work or their leisure activities (Colak et al., 2016).

However, employing such technology raises the question of selectivity. For example, in a study by Toepoel and Lugtig (2014), only about a quarter of all participants agreed to geo-tracking (see also Kreuter et al., 2018, who report similar consent rates). Furthermore, younger participants, people with high income, and respondents living in small households were more inclined to use such an application (Toepoel & Lugtig, 2014, p. 551). This is in line with the findings of de Bruijne and Wijnant (2014a), who report that, besides the young, especially progressive-thinking respondents use mobile phones to answer web surveys.

One crucial aspect of selectivity is the informed consent to linking survey data to additional data sources. The effect of consent to linkage has thus been the subject of many analyses in the past to determine the loss in survey quality when linking survey data to additional data sources.

Consent to Linking Survey Data to Additional Data Sources

A considerable amount of literature has been published on consent to linking survey data to additional data sources. Most of this work focuses on record linkage, meaning the connection between surveys and administrative records. In our study, we focus on consent to linking survey data to mobile phone data (Keusch, Struminskaya, et al., 2019, provide a comprehensive overview of the current research). In most countries, using a mobile phone or an app to gather additional information requires informed consent from the respondents and their agreement to current data protection regulations (e.g., Kreuter et al., 2018). Past research on informed consent in surveys indicates that receiving consent to data linkage, in general, and to geo-tracking, in particular, is by no means trivial, introducing a vast array of potential sources of bias and errors (see Couper et al., 2017, for an overview).

Previous research shows that, similar to consent to administrative data linkage, consent to using a mobile phone app for data collection is also selective. Moreover, consent rates vary from study to study. For example, Couper and colleagues (2017), in their review, found that consent rates range between 5% and 67%. Similarly, Jäckle and colleagues (2017) report selectivity regarding smartphone usage within the Understanding Society Innovation Panel. For the purpose of their study, an application used the camera, measured physical movements, and had access to GPS sensing. Results show that not only consent to downloading the app but also consent to the various features was selective (Wenz et al., 2019). Similar results are presented based on the Dutch Longitudinal Internet studies for the Social Sciences panel (Elevelt et al., 2019) and based on the German PASS, a panel study on Labor Market and Social Security (Kreuter et al., 2018).

Drawing on experiences from consent to record linkage, some efforts have been made to reduce errors introduced by data linkage. Sakshaug et al. (2013) propose placing consent questions at the beginning of the interview. Additionally, respondents are more likely to give consent when they are promised a reward such as a shorter interview (Sakshaug & Kreuter, 2014). A further feature that has been shown to increase consent rates is confidentiality. If the field agency is perceived as trustworthy and respondents believe that the data are handled with care, they are more inclined to agree to linkage (Singer, 1993). On a similar note, scholars report that respondents who have a high sense of privacy tend to refuse consent more often (Fobia et al., 2019). For example, respondents who do not want to report their income are likely not to give consent to data linkage (Sakshaug et al., 2012; Sala et al., 2012). Additionally, if respondents believe that there is a greater benefit resulting from linkage, consent rates increase as well (Dunn et al., 2004; Fobia et al., 2019; Kahneman & Tversky, 1979, 1984; Kreuter et al., 2015).

Interviewer Effects in Survey Data

Despite the comparatively high costs of interviewer-administered surveys relative to other modes of data collection such as web surveys, social scientists still rely on interviewers. Interviewers contribute to data quality by maintaining high participation rates, handling complex survey instruments, and providing assistance and clarifications to respondents (Fowler & Mangione, 1990). However, interviewers can also introduce interviewer effects in the data (see Schaeffer et al., 2010; West & Blom, 2017, for an overview of the topic). These interviewer effects introduce variance and/or bias into survey estimates, thus threatening the reliability and validity of survey measures.

Turning to panel participation and attrition, research shows that interviewers vary in their ability to motivate respondents into further participation in a panel (Haunberger, 2010; Pickery et al., 2001; Vassallo et al., 2015). So far, however, existing research cannot identify a robust set of interviewer characteristics associated with higher participation rates. One exception seems to be interviewer experience, with more experienced interviewers obtaining higher (re-)participation rates (Durrant et al., 2010; Hox & de Leeuw, 2002).

Moreover, interviewers also vary in their ability to obtain consent to (record) linkage requests (Eisnecker & Kroh, 2017; Korbmacher & Schröder, 2013; Sakshaug et al., 2017, 2012; Sala et al., 2012). Sakshaug et al. (2017) use an experimental approach to show that consent rates are generally higher in interviewer-administered surveys (face-to-face, 93.9%) compared to those that are self-administered (mail/web, 53.9%). Are certain interviewer characteristics associated with higher consent rates? Sala et al. (2012) indicate that the interviewer’s sociodemographic characteristics, personality traits, and attitudes to persuading respondents do not affect consent. Only the interviewer’s experience within a survey wave has a significant negative effect on consent rates (which is in line with Korbmacher & Schröder, 2013). Similarly, Sakshaug et al. (2012) also show little evidence for systematic interviewer effects. Moreover, they do not indicate that matching respondents and interviewers based on demographic characteristics increases consent. Korbmacher and Schröder (2013) find a U-shaped interviewer age effect on consent rates (with a turning point at about 55 years).

Regardless of interviewer characteristics associated with consent rate, the literature points to substantial interviewer variance in consent rates (Korbmacher & Schröder, 2013; Mostafa & Wiggins, 2017; Sakshaug et al., 2012). For instance, Korbmacher and Schröder (2013) observe interviewer intraclass correlation levels on the consent decision of over 40% even after controlling for respondent- and interviewer-level characteristics.

Thus far, the literature lacks empirical evidence related to interviewer effects on the respondents’ decision whether to download a mobile app, provide an email address, or consent to geo-tracking. However, given the research on the interviewers’ influence on respondents’ consent to record linkage, expecting interviewer effects seems plausible.

In summary, the literature shows that panel attrition is a severe problem. Mobile phone applications might help decrease panel attrition because they have considerable potential for gathering additional data on survey respondents (e.g., GPS locations). Moreover, mobile phone applications are able to provide respondents with information and questionnaires. Although previous research has shown that consent to using mobile phone apps is selective, there is little evidence on the reasons behind this selectivity (an exception is the recent publication by Keusch, Bähr, et al., 2020). Previous research on face-to-face interviews and interviewer effects gives rise to the hypothesis that interviewers play a crucial role. Additionally, to date, most survey apps have been used for analytical purposes. However, it remains unclear whether these apps are also capable of improving survey quality by decreasing panel attrition. We contribute to the current literature on the usage of smartphones in surveys in two ways: First, we assess aspects of self-selection into using the app. Second, we analyze whether such an app is capable of reducing panel attrition.

The IAB-BAMF-SOEP Survey of Refugees

In order to answer our research questions, we employ data from the IAB-BAMF-SOEP Survey of Refugees (Kühne et al., 2019). The IAB-BAMF-SOEP Survey of Refugees is a random sample and panel study of asylum seekers and refugees (called anchor persons) and their households who migrated to Germany between 2013 and 2016. The sample is drawn from the Central Register of Foreigners (AZR) in Germany (von Gostomski & Pupeter, 2008) and is the result of a joint project between the German IAB, the research centre of the German BAMF, and the German SOEP. The AZR comprises all people without a German passport who live in Germany for longer than 3 months and stores information about their residence permit. Hence, the IAB-BAMF-SOEP Survey of Refugees is a unique source of data that allow inference on the 2013–2016 cohort of refugees in Germany. The first wave RR (RR2 according to American Association for Public Opinion Research [AAPOR] standard definition) of this survey is approximately 50% (Kühne et al., 2019). 1 We work with the first and second waves (2016 and 2017), comprising 4,465 individuals living in 3,273 households for the first wave and 3,343 individuals living in 2,303 households for the second wave (RR2 = 69%). 2 Fieldwork for the first wave took place from July to November 2016, and fieldwork for the second wave was from September 2017 to March 2018 (see Table A1 in the Online Appendix for detailed sample characteristics in terms of, e.g., age, gender, and education).

Design of and Consent to Using the App

Each anchor person was asked for consent to download and use the mobile phone application at the end of the personal questionnaire in the first wave (N = 3,273). Due to the random sampling of anchor respondents, this approach leads to a random allocation of requests within households. The respondents were asked and guided to install the app right away to ensure that they did not forget about it. Consent to the various features, however, did not necessarily take place during the interview, so that respondents would not feel pushed. The requests for geo-tracking and for an email address for recontacting purposes were implemented separately within the app and were thus not part of the initial consent request (for the wording, see the Online Appendix; Figure A1 in the Online Appendix additionally shows a picture of the app design). All aspects of the application (including the informed consent) were in line with the General Data Protection Regulation standards at that time in Germany (2016).

Ultimately, the application comprised five main features:

Geo-tracking of respondents’ devices;

Collecting respondents’ email addresses;

Allowing respondents to report any address changes;

Answering short questionnaires (rewarded with €20); and

Providing news on the situation of refugees in Europe.

Geo-tracking and the provision of email addresses were supposed to facilitate the tracking of respondents in the event that they moved and did not actively provide their new address. Address changes and email addresses could be entered in the app through a separate feature that was available at all times. GPS tracking made it possible to locate a respondent who could not be found during fieldwork for the second wave. Implementing short questionnaires and offering information on the situation of refugees in Europe sought to keep interest in the study high. From July 2016 to May 2017 (between Wave 1 and Wave 2 interviewing), a total of 27 different news articles and four small questionnaires were provided through the app (see Table A2 in the Online Appendix for the headlines of the news articles; the questionnaire is also shown in the Online Appendix). The small questionnaires and the news articles were not presented at once but released one after the other between the fieldwork of Wave 1 and Wave 2.

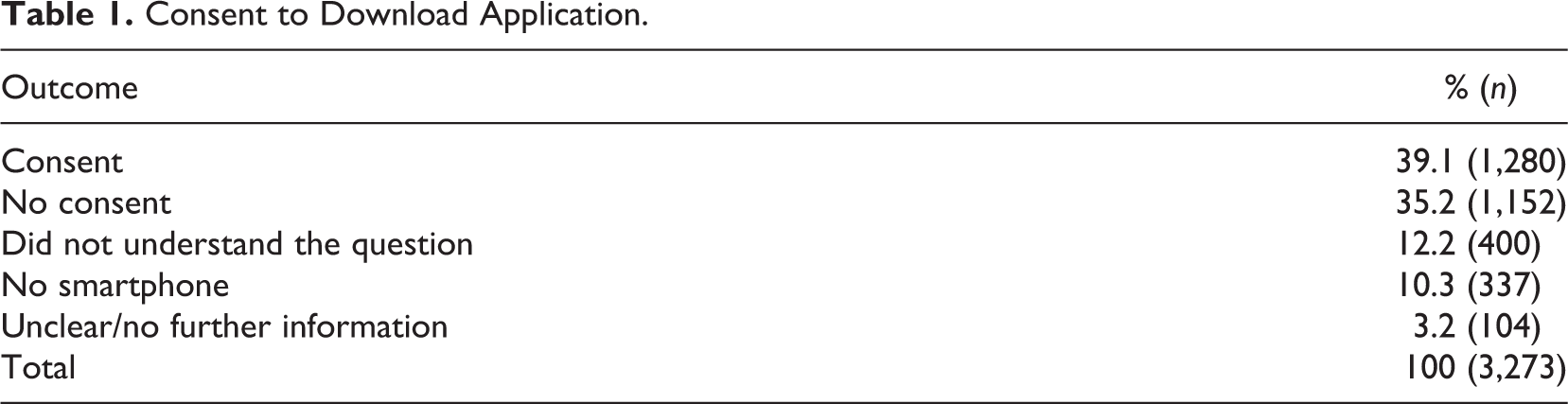

Table 1 indicates that roughly 40% of the respondents agreed to download the app. However, only 35% explicitly declined consent, whereas a quarter of the respondents either did not understand the consent question, had no smartphone, or encountered technical issues.

Table 1. Consent to Download Application.

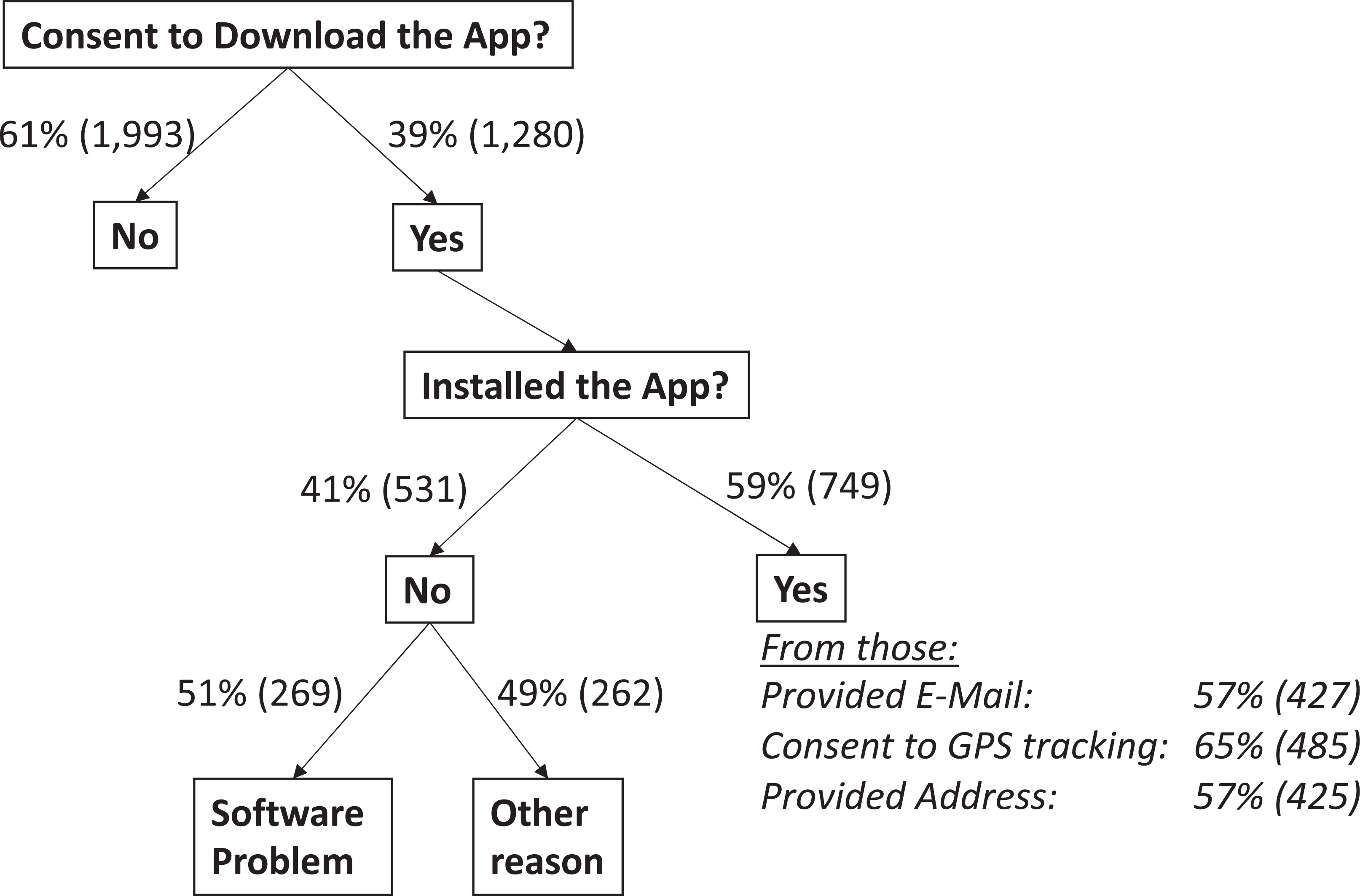

Furthermore, as Figure 1 indicates, only 60% of those who gave consent actually installed the app on their smartphone (thus, overall, 23% of the total sample). In addition, of those who installed the app, only 57% provided their email address, 65% allowed geo-tracking, and 57% provided their address. Interestingly, though, of the 485 respondents who allowed geo-tracking, only 231 (48%, not displayed as a table) actually activated their GPS signal between panel waves so that the field agency could use the provided information. Therefore, the consent rate that actually translates into GPS tracking is substantially lower. Furthermore, 51% of those who consented but did not install the app encountered software problems during the download. Reports from the field reveal that most technical problems occurred because the software could not be installed due to outdated or incompatible hardware. In cases where technical problems were only minor or a matter of comprehension, the interviewer helped in resolving issues (n = 33). The remaining 49% did not download the app despite consenting and did not provide further information on the underlying reason.

Figure 1. Installation of the app and consent to different features.
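To make the gap between geo-tracking consent and usable GPS data concrete, the reported counts can be expressed as shares. This is a minimal sketch; it assumes that the 3,273 anchor persons who received the consent request are the appropriate base for the overall shares, and the helper `pct` is ours.

```python
# Counts reported in the text. ASKED is the number of anchor persons who
# received the consent request (assumed here as the base for overall shares).
ASKED = 3273
ALLOWED_GEO = 485     # allowed geo-tracking in the app
ACTIVATED_GPS = 231   # actually activated their GPS signal between waves


def pct(part: int, whole: int) -> float:
    """Return part/whole as a percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


if __name__ == "__main__":
    print(pct(ACTIVATED_GPS, ALLOWED_GEO))  # usable GPS among those who allowed it
    print(pct(ALLOWED_GEO, ASKED))          # geo-tracking allowed, all anchor persons
    print(pct(ACTIVATED_GPS, ASKED))        # usable GPS data, all anchor persons
```

Under this assumption, usable GPS information would be available for roughly 7% of all anchor persons who were asked, compared with about 15% who allowed geo-tracking.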

Translation of Field Instruments and Interviewer Allocation

All field material (including the app) was translated from German to English, Standard Arabic, Farsi/Dari, Pashto, Kurdish-Kurmanji, and Urdu (see Table A3 in the Online Appendix for the distribution in the sample and how many respondents could choose their mother tongue). All field material was first translated to English. Either English or German (depending on the availability of translators) was then the basis for the translation into the other languages. Two translators working separately conducted a one-way translation and then produced a reconciled final version.

At the beginning of each interview, respondents had to choose a language that could not be changed during the course of the interview. The mode of the interview was either Computer Assisted Personal Interview (CAPI) or (Audio) Computer Assisted Self-Interview [(A)CASI], defaulting to CAPI. However, in the event that the interviewer did not speak the chosen language and the interviewee did not speak German, the respondent could fill out the questionnaire himself/herself. Additionally, audio recordings of each question were provided to address other language barriers, like illiteracy. In this event, the interviewers did not leave; rather, they provided general guidance (see Jacobsen, 2018, for more details).

As all field material was available in the most important languages and self-interviewing was an option, the field institute did not rely on interviewers capable of speaking the most common mother tongues. The most important interviewer selection criterion, according to the field agency, was that the interviewers were experienced in working as interviewers prior to this study. Additionally, all interviewers underwent a thorough training and preparation, especially in regard to cultural and refugee-specific particularities.

A total of 95 interviewers interviewed 3,273 households. On average, each interviewer interviewed 34 households (median: 24). About 65% of the interviewers were male. The mean age of the interviewers was 50 (median: 52). About 70% of the interviewers had no prior experience as interviewers for the fieldwork agency. On average, the interviewer’s experience amounted to 1.8 years.

Estimation of Self-Selection into Downloading the App and Its Utility Regarding Panel Attrition

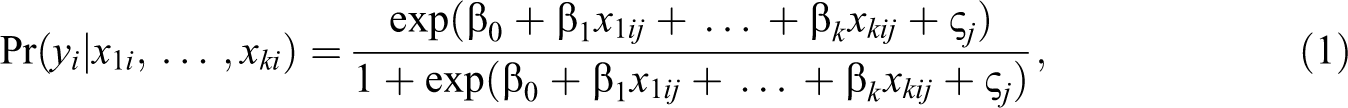

Our analysis consists of two steps. First, we aim to estimate a potential self-selection of respondents into (a) consent to download the app, (b) consent to geo-tracking, and (c) provision of an email address. Second, we estimate whether using the app and its various features improves (a) address quality and (b) participation in the next wave. For all models, we rely on hierarchical, multilevel logistic regression analysis with respondents clustered in interviewers. In each case, the dependent variable represents a dummy taking the value 1 if a respondent consents.

Estimation of Interviewer Effects

In many surveys, including the SOEP, interviewers are not randomly allocated to respondents or households, but rather to geographic areas, the primary sampling units. Often, only a single interviewer is working in a given area, and interviewers are allocated to one area only. In these cases, estimated interviewer effects are likely to be confounded with area effects. Answers obtained by a single interviewer may be more homogeneous not because of the interviewer’s (biasing) effects on responses but due to the homogeneity of individuals living in the same geographic area. However, research shows that interviewer effects are usually larger than area effects (e.g., Schnell & Kreuter, 2005). To minimize potential confounding with area effects and to mitigate endogeneity of area/interviewer selection effects, we include a variety of interviewer- and area-level fixed effects (as in Korbmacher & Schröder, 2013; Kühne, 2020; West et al., 2013). A full list of all interviewer-level and area-level variables used as controls in the models is presented in Table A4 in the Online Appendix.

While biasing effects of interviewer characteristics are captured in regression coefficients, the interviewers’ contribution to the variance of a survey measure—the interviewer variance—is usually quantified by estimating the intra-interviewer correlation coefficient (Kish’s

Explanatory and Control Variables

Since the usage of an app in a survey of recent immigrants is rather new, we assess self-selection with an exploratory strategy covering demographic characteristics, language proficiency, immigrant-specific variables, and respondent burden. First, as demographic characteristics, we use gender, age, employment status, education, and country of origin. Second, to estimate language effects, we include German proficiency and whether the questionnaire was available in the respondent's mother tongue. We assume that being proficient in the official language of a foreign country increases trust. Third, we test for immigrant-specific features such as residence status (asylum seekers might not want to allow tracking in case their asylum application is rejected) and the year of immigration. We chose the year of immigration because we assume that newly arrived refugees might feel more obligated to participate. Fourth, to observe the effect of respondent burden, we include the total length of the interview and the number of other individuals in the household who are part of the target population (since this increases the time the interviewer spends in the household). Additionally, we include a variable counting the number of unanswered questions in the survey prior to the consent questions. For a detailed description of all variables at the individual level, see Table A5 in the Online Appendix.

In addition to the explanatory variables at the respondent and household levels, a variety of controls are included in all models. At the geographic area level, we include information about the county (e.g., unemployment rate, share of university students), the municipality (e.g., size, share of population 65+), and the neighborhood (e.g., type of house, purchasing power index; see Table A4 in the Online Appendix for more information about area controls). At the interviewer level, we control for gender and age (see the bottom of Table A5 in the Online Appendix). Additionally, for models analyzing recontacting and reparticipation, we test whether the interviewer changed between the waves in 2016 and 2017.

The key explanatory variables for the models testing address quality and participation in the next wave are four dummy variables (1 = yes, 0 = otherwise): usage of GPS, provision of an email address, successful download of the app (to test the effect of short questionnaires and news outlets), and provision of information about address changes through the app. The explanatory variables of the previous models serve as additional control variables.
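As a compact illustration of the model family described in this section, a two-level random-intercept logistic regression with respondents nested in interviewers can be written as below. The notation is illustrative and not taken from the original specification: $y_{ij}$ is the binary outcome for respondent $i$ interviewed by interviewer $j$, $\mathbf{x}_{ij}$ collects respondent-, household-, and area-level covariates, $\mathbf{z}_j$ collects interviewer-level covariates, and $u_j$ is an interviewer-specific random intercept with variance $\sigma_u^2$. The second line gives the latent-variable definition of the intra-interviewer correlation that is commonly reported for logistic models; the exact estimator used in the analysis may differ.

```latex
\begin{align}
\operatorname{logit}\Pr\left(y_{ij}=1 \mid \mathbf{x}_{ij}, \mathbf{z}_j, u_j\right)
  &= \beta_0 + \mathbf{x}_{ij}^{\top}\boldsymbol{\beta}
     + \mathbf{z}_j^{\top}\boldsymbol{\gamma} + u_j,
  \qquad u_j \sim \mathcal{N}\left(0, \sigma_u^2\right), \\
\rho_{\text{int}}
  &= \frac{\sigma_u^2}{\sigma_u^2 + \pi^2/3}.
\end{align}
```

Under this definition, the residual variance at the respondent level is fixed at $\pi^2/3$ (the variance of the standard logistic distribution), so $\rho_{\text{int}}$ depends only on the estimated interviewer-level variance.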

Results

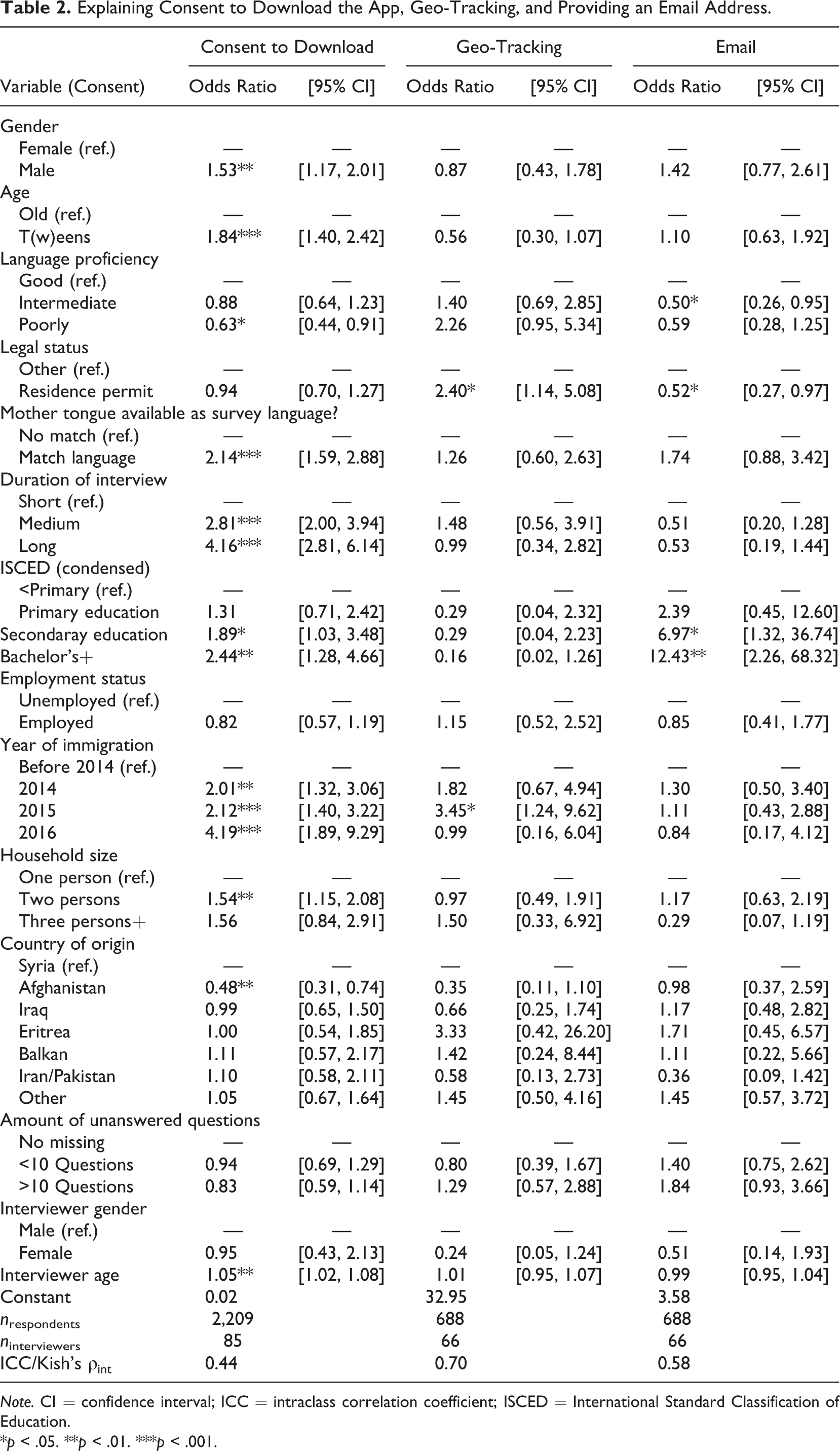

Table 2 displays three different models estimating (1) consent to download the app, (2) consent to geo-tracking, and (3) consent to provide an email address. In the first model, we see that the respondent-level variables play an important part in explaining consent to download the app. Regarding sociodemographics, the model shows that men and young respondents have a higher chance of consenting to download the app. However, education, country of origin, and employment status are not correlated with consent. Regarding language barriers, the estimation indicates that the chance of consenting increases when the survey language matches the mother tongue and when respondents are proficient in German (compared to a total lack of language proficiency). Regarding migrant-specific variables, the table shows that respondents who migrated only recently have a substantially and significantly higher chance of consenting, whereas the type of residence permit is not crucial in this regard. Finally, longer interviews and larger households are both associated with a higher chance of consenting. Surprisingly, the number of unanswered questions during the interview is not correlated with giving consent. We expected otherwise, as missing values are often the consequence of high respondent burden, sensitivity, or low comprehension, all of which are factors we assumed to be correlated with giving consent.

Table 2. Explaining Consent to Download the App, Geo-Tracking, and Providing an Email Address.

Note. CI = confidence interval; ICC = intraclass correlation coefficient; ISCED = International Standard Classification of Education.

*p < .05. **p < .01. ***p < .001.

Looking at the correlates of consent to geo-tracking and of the provision of email addresses, two effects stand out. First, respondents with a residence permit have a significantly higher chance of giving consent to geo-tracking and providing an email address. It is plausible that asylum seekers without a residence permit and people with a suspension of deportation avoid being tracked in case of a negative decision on their asylum claim. Second, respondents with German language proficiency have a higher chance of allowing geo-tracking.

Turning to (biasing) effects of interviewer gender and age, we see that older interviewers are more likely to obtain consent to download the app. As age correlates with interviewing experience, interviewer work experience may also positively influence download success. Analyzing the interviewer variance in each model (intraclass correlation coefficient,

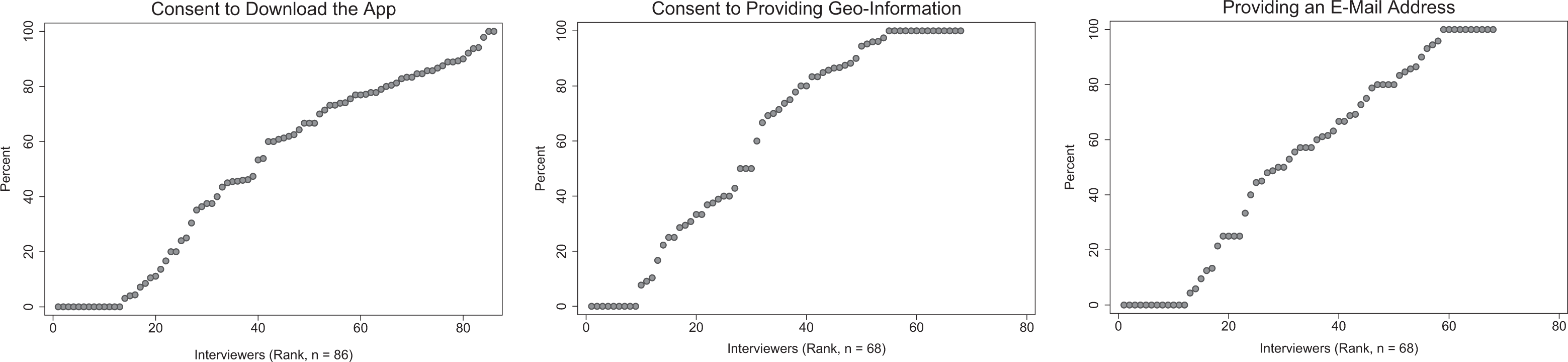

The large interviewer effects may in principle be due to a relatively small group of interviewers who achieve extraordinarily low or high consent rates. Figure 2 reports the interviewer-specific rates (percentages) for consent to download the app (left), consent to providing geo-information (middle), and providing an email address (right).

Figure 2. Interviewer-level rates in consent to download the app (left), providing geo-information (middle), and providing an email address (right).

As can be seen, success rates vary widely across interviewers. While some interviewers achieve very low (down to 0%) or very high (up to 100%) consent rates, the majority of interviewers lie in between. Thus, the observed interviewer effects cannot be explained by only a few interviewers performing very well or very badly.
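The interviewer-specific rates discussed above can be sketched with a few lines of Python. This is a minimal illustration under stated assumptions, not the code used for the analysis (which was carried out in Stata): the function names, the `(interviewer_id, consented)` record layout, and the toy data are all hypothetical.

```python
from collections import defaultdict


def interviewer_consent_rates(records):
    """Return the consent rate (in percent) per interviewer.

    `records` is an iterable of (interviewer_id, consented) pairs,
    where `consented` is 1 (or True) if the respondent consented
    and 0 (or False) otherwise. This layout is an assumption.
    """
    totals = defaultdict(int)
    consents = defaultdict(int)
    for interviewer_id, consented in records:
        totals[interviewer_id] += 1
        consents[interviewer_id] += int(consented)
    return {i: 100.0 * consents[i] / totals[i] for i in totals}


def share_strictly_between(rates, low=0.0, high=100.0):
    """Share of interviewers whose rate lies strictly between low and high."""
    inside = [r for r in rates.values() if low < r < high]
    return len(inside) / len(rates)


if __name__ == "__main__":
    # Toy data (hypothetical): four interviewers with a few interviews each.
    toy = [
        ("A", 1), ("A", 1),
        ("B", 0), ("B", 0),
        ("C", 1), ("C", 0),
        ("D", 1), ("D", 0), ("D", 1),
    ]
    rates = interviewer_consent_rates(toy)
    print(rates)
    print(share_strictly_between(rates))
```

If most interviewers fall strictly between the extremes, as in Figure 2, the interviewer variance is unlikely to be driven by a handful of outliers alone.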

Effects on Recontacting Rates and Participation

The Wave 2 reparticipation rate amounts to roughly 69% (not displayed as a table), which is substantially lower than in other SOEP subsamples (Siegers et al., 2019). Address quality is also worse: 4.2% of the addresses were not traceable, whereas usually over 99% of the SOEP households' addresses are known in a consecutive wave (Siegers et al., 2019, p. 58). We assume that this difference is due to the specific situation of refugees, who move frequently during their first years because of allocation procedures and preliminary housing arrangements.

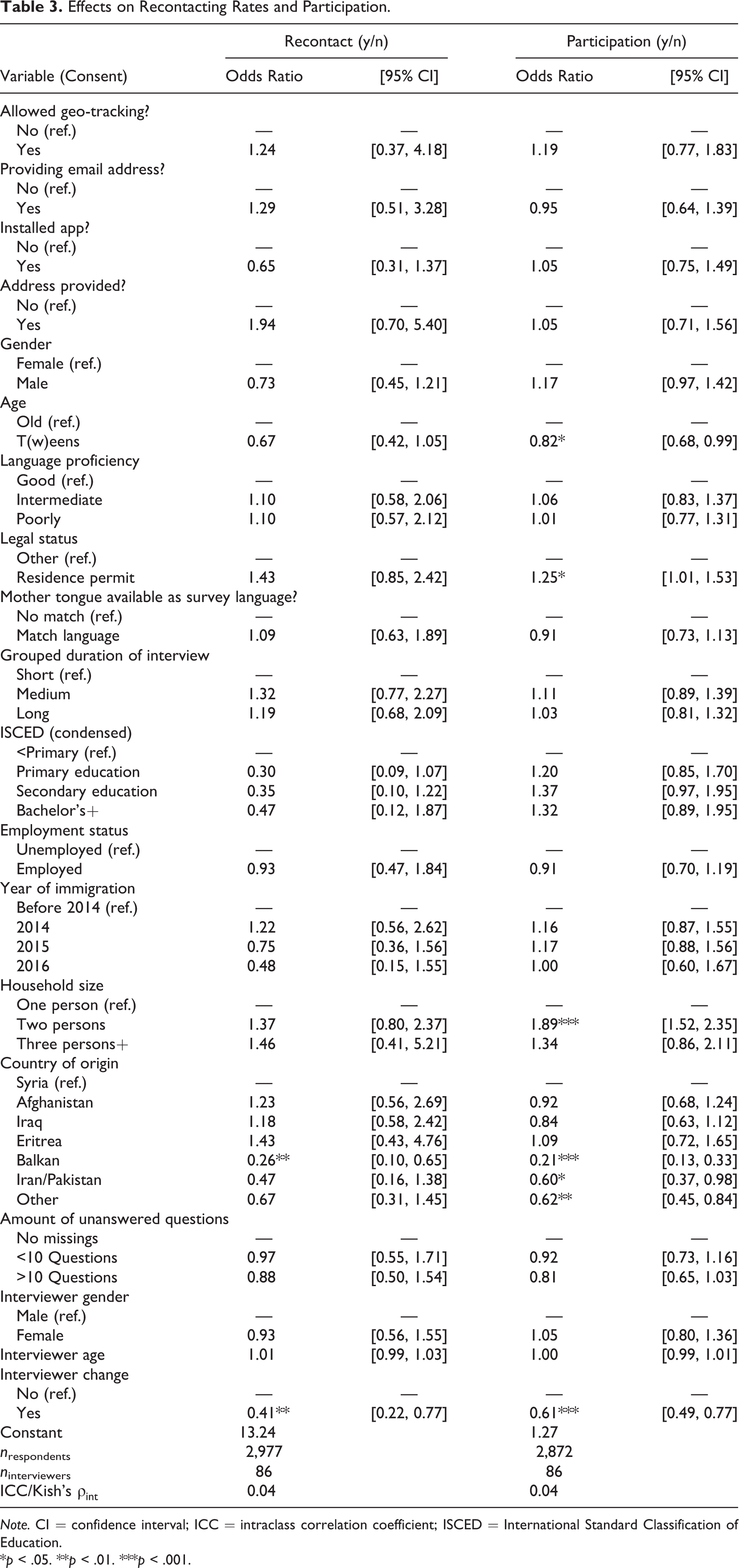

Table 3 displays the effect of allowing geo-tracking, providing an email address, installing the application, and providing information about address changes on address quality (measured as a successful contact) in the second wave (Model 1) and on participation when the household could be contacted (Model 2). The results indicate that respondents who allowed geo-tracking, provided their email address, or used the app to provide information about address changes did not have a higher chance of being recontacted by the field institute. Additionally, when the members of a household were contacted in the second wave, their willingness to participate was not higher if the respondents had downloaded the app.

Table 3. Effects on Recontacting Rates and Participation.

Note. CI = confidence interval; ICC = intraclass correlation coefficient; ISCED = International Standard Classification of Education.

*p < .05. **p < .01. ***p < .001.

Given these results, we have serious doubts that the usage of this mobile phone app and its various features improves address quality and increases participation rates in upcoming panel waves.

Turning to interviewer effects and compared to the results in the previous section, the share of variance estimated to be due to the interviewer level is much smaller (

Discussion

By estimating multilevel logistic regression models with respondents nested within interviewers, we show that providing an app with geo-tracking, which collects email addresses and offers a way to report address changes within the app, significantly improves neither address quality nor the respondents' motivation to participate in the second wave of a refugee panel study. Additionally, our results indicate that the provision of short questionnaires and news outlets does not increase participation rates among contacted households. We therefore conclude that using a panel app is not necessarily an advantage for decreasing panel attrition compared to "classical" measures such as sending out postcards or providing monetary compensation (see, e.g., Schoeni et al., 2013). Regarding the consent to use a mobile phone application, our results indicate that interviewers play a substantial role in convincing respondents to comply. Additionally, individual characteristics such as age, gender, and language are correlated with consent.

Address Quality and Respondent Motivation

Admittedly, the absence of an effect of geo-tracking on address quality is somewhat surprising, as geo-tracking, in theory, provides detailed information on the respondent's abode. We assume that most participants switched off their GPS hardware at some point because it decreases battery runtime, or that respondents simply forgot about the app and its purpose. Additionally, GPS coordinates can only be a rough estimate of the actual residence (e.g., if someone moved abroad permanently). Our results therefore confirm what was already known: GPS coordinates provided without further information are insufficient for identifying people's homes. However, if suitable software is available, GPS tracking data might be used for testing geographical eligibility.

The absence of an effect of providing email addresses and reporting address changes is equally surprising. After consulting the field agency, we have three potential explanations. First, the address changes provided were imprecise; second, the new address was already outdated by the time of the actual fieldwork; and third, respondents who moved frequently may have forgotten to report multiple address changes.

Regarding the implementation of short questionnaires and news outlets, we assume that the missing effect can be explained by respondent burden. Some respondents may experience the questionnaires and news outlets provided via the app as somewhat burdensome or just boring. These rather negative experiences with the survey project may impede a higher motivation to participate in the next wave. Additionally, some of the news outlets might carry a negative connotation toward refugees (see Online Appendix), resulting in hostile feelings. If this is the case, the absence of an effect is less surprising.

Moreover, some respondents could have replaced their smartphones, or given them to friends or family members, thus rendering the app redundant. Future projects should keep this in mind when implementing a smartphone application. Therefore, monitoring the app activity in future projects would be most valuable in order to observe whether the application is used in general and by the intended respondents.

Given these somewhat disappointing results, we raise the question of whether the ineffectiveness is due to the feature itself or rather its implementation. For example, the application itself did not produce constant reminders to provide address changes or to keep the GPS tracking switched on, which could have led to outdated addresses. Additionally, most of the questionnaires were unintentionally designed like a test of knowledge about German society and could have raised concerns regarding their utility and usage. In addition, the questionnaire in the app deviates from the SOEP questionnaire, which may have caused some worries about the actual purpose of the app. Given that refugees are particularly vulnerable in terms of their legal status, this might have posed a problem. For future research on this matter, we therefore conclude that the development of such an app should keep the particularities of the target population in mind and should avoid design choices that unintentionally decrease the app's utility.

Consent to Using the Application

Regarding the analysis of consent, what stands out are the large interviewer effects. Our results strongly indicate that interviewers play a crucial role in achieving high consent rates regarding the usage of a panel app. What are possible explanations for these strong effects? First, interviewers differ in their skills, and more experienced interviewers may be more successful in obtaining consent as they have had time to improve their interviewing and communication routines. Second, interviewers differ in how they communicate, manage to establish a relationship with respondents, and create a pleasant interview situation ("rapport"), all of which likely affect the willingness of respondents to consent to download an app or agree to share sensitive GPS information. More detailed (qualitative) data about the interview and social interaction are needed in future studies in order to analyze this aspect. Third, the interviewers' own opinion toward the app and consent requests likely affects their motivation while providing information about and asking for consent. In this regard, for instance, Sakshaug et al. (2013) show that interviewers who would not personally agree to the request they are supposed to ask ultimately obtain lower consent rates. While the observed interviewer effects may in principle also be due in part to area (sample point) effects, controlling for a large number of area and respondent-level characteristics minimizes potential confounding. Moreover, our results match the existing literature on interviewer effects in consent to record linkage requests, showing high interviewer variability in consent rates (Sakshaug et al., 2013; Sala et al., 2012).

The strong language effects on consent are a plausible result. Respondents have a higher chance of consenting to download the app when they are proficient in German and when the survey is conducted in their mother tongue. Thus, conducting the interview in the mother tongue seems crucial: it improves understanding of the consent question and thereby builds trust, which in turn increases consent rates. Therefore, if recent migrants are a substantial part of the target population, consent questions need to be translated.

Further crucial factors are immigrant-specific variables. These are not necessarily transferable to surveys of nonmigrants but are nonetheless important to mention. Consent rates for geo-tracking were especially low for refugees with a pending asylum case or with a suspension of deportation. These respondents may be more skeptical toward being easily tracked, as they may face deportation. However, those who migrated to Germany more recently are more likely to consent to downloading the app. We assume that this is because they feel obliged to participate, either because they were granted protection (as a sign of gratitude) or because they think that their cooperation is an advantage for future residence issues (however, as the SOEP questionnaire clearly points out that the interview is independent of their asylum claim, this seems rather unlikely).

Our findings have substantial implications for future research and survey practice: In light of our study, it is at least questionable whether the costs of developing a mobile phone application and its utility are balanced. As classic panel care measures (interviewer continuity and monetary incentives) are historically proven to work, it might be better to use conservative methods in this respect, especially given limited budgets. The design of mobile apps needs to keep the particularities of the target population in mind. In line with previous research on interviewer effects, we strongly recommend training interviewers accordingly in order to achieve high consent rates. Relying on interviewers provides the opportunity to give additional information to the consent question, and interviewers can answer any remaining questions or concerns expressed by the interviewees. Our analyses suggest that using the mother tongue of respondents is crucial in order to receive consent to data linkage in surveys of recent immigrants. In contrast to earlier studies, our data do not indicate that smartphone-based data collection can be applied easily to the refugee population in Germany. Around 10% of the respondents did not own a smartphone; 21% encountered software problems. Having the field agency provide smartphones might be an effective (albeit very expensive) way to overcome these technological hurdles and coverage issues.

Supplemental Material

Supplemental Material, sj-do-1-ssc-10.1177_0894439320985250 and sj-docx-1-ssc-10.1177_0894439320985250, for Using a Mobile App When Surveying Highly Mobile Populations: Panel Attrition, Consent, and Interviewer Effects in a Survey of Refugees by Jannes Jacobsen and Simon Kühne in Social Science Computer Review

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This work was partially funded by the German Federal Ministry of Education and Research (BMBF) under Grant 01UW1603AX.

Software Information

All work was carried out using Stata Version 14. The do-file is provided as a supplementary file. The mobile phone application was produced for the Apple and Android app stores but is no longer available for download.

Supplemental Material

Supplemental material for this article is available online.
