Abstract

In addition to hosting user-generated video content, YouTube provides recommendation services, where sets of related and recommended videos are presented to users, based on factors such as co-visitation count and prior viewing history. This article is specifically concerned with extreme right (ER) video content that YouTube recommends to some users, portions of which contravene hate laws and are thus illegal in certain countries. We develop a categorization of this content based on various schema found in a selection of academic literature on the ER, which is then used to demonstrate the political articulations of YouTube’s recommender system, particularly the narrowing of the range of content to which users are exposed and the potential impacts of this. For this purpose, we use two data sets of English and German language ER YouTube channels, along with channels suggested by YouTube’s related video service. A process is observable whereby users accessing an ER YouTube video are likely to be recommended further ER content, leading to immersion in an ideological bubble in just a few short clicks. The evidence presented in this article supports a shift of the almost exclusive focus on users as content creators and protagonists in extremist cyberspaces to also consider online platform providers as important actors in these same spaces.

Introduction

For youth, the Internet is often their first port of call for background and information on topics with which they are unfamiliar or, indeed, for discussion and networking around topics with which they are (Agosto & Hughes-Hassell, 2006a, 2006b; Ito et al., 2010; see also Livingstone & Haddon, 2009). Political extremists are aware of this trend and seek to exploit it not just through dedicated websites but also by pushing out their content across the whole of the Internet, including via social media platforms such as Facebook, Twitter, and YouTube (Conway, 2012; Conway & McInerney, 2008; Europol, 2011, pp. 11–12; U.K. Home Office, 2011, p. 35). In this way, they aim to reach a much wider audience than they previously had access to, which may be a factor in explaining the high numbers of European children and teens who report having accessed hate content via the Internet: a major study found that some 12% of European 11- to 16-year-olds reported seeing online hate in the year prior to interview, rising to one in five 15- to 16-year-olds (Livingstone, Ólafsson, O’Neill, & Donoso, 2012, p. 11). YouTube’s status as the most popular video sharing platform means that it is especially useful to political extremists. In addition to hosting user-generated video content, YouTube provides recommendation services, where sets of related and recommended videos are presented to users, based on factors such as co-visitation count and prior viewing history (Davidson et al., 2010).

YouTube, and other social media sites, expends a lot of effort on making “strategic claims for what they do and do not do, and how their place in the information landscape should be understood” (Gillespie, 2010, p. 347). However, online platform providers are facing increasing scrutiny from users, advertisers, activists, policy makers, and other key constituencies about their civic responsibilities including, in particular, along a key fault line between intervening in online content delivery versus remaining neutral. YouTube have been at pains “to position themselves as just hosting—empowering all by choosing none” (Gillespie, 2010, p. 357; see also Fiore-Silfvast, 2012, p. 1982). They insist that: YouTube encourages free speech and defends everyone’s right to express unpopular points of view. We believe that YouTube is a richer and more relevant platform for users precisely because it hosts a diverse range of views, and rather than stifle debate

In fact, as critical media scholars point out, social media platforms do not merely transmit content, but filter it on the basis of claiming to augment it, thereby making the content more relevant to its potential consumers (and the platforms more attractive to advertisers; see, e.g., Bucher, 2012; Langlois & Elmer, 2013). Taina Bucher has shown that “APIs have ‘politics’” (2013, p. 2); in this article, we show that recommender systems also have politics. This means that they too can be seen as having “powerful consequences for the social activities that happen with them, and in the worlds imagined by them” (Gillespie, 2003, as quoted in Bucher, 2013, p. 2). In particular, the YouTube recommender system ensures that, contrary to YouTube’s own assertions, users are explicitly

Whereas previous studies in this area have highlighted the online ideological bubbles or echo chambers resulting from choices made by content

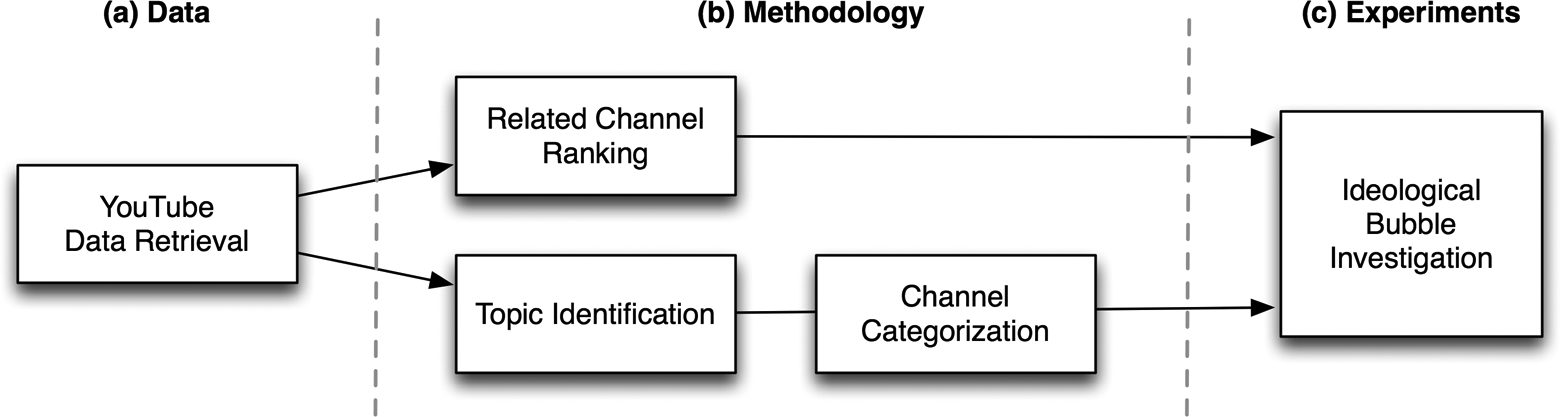

The article is divided into four sections. The first section provides a description of prior work on YouTube categorization and recommendation, along with a review of research concerned with the categorization of online ER content. The retrieval of YouTube data based on links originating from ER Twitter accounts is the subject of the second section, while in the third section, we describe the methodology used for related channel ranking, topic identification, and channel categorization. An overview of this entire process can be found in Figure 1. Our investigation into recommender politics and its potential impacts can be found in the fourth section, which is focused on two data sets consisting primarily of English and German language channels, respectively. In the Discussion section, we emphasize the hidden politics of online recommender systems, particularly the way in which the immersion of some users in YouTube’s ER spaces is a coproduction between the content generated by users and the affordances of YouTube’s recommender system, and the potential implications of and suggested responses to this. The Conclusion synopsizes our research and findings and makes a suggestion for future research.

Figure 1. Overview of the process used to investigate the extreme right content found on YouTube.

Related Research

Video Recommendation and Categorization

Video recommendation on YouTube has been the focus of a number of studies. For example, Baluja et al. (2008) suggested that standard approaches used in text domains were not easily applicable due to the difficulty of reliable video labeling. They proposed a graph-based approach that utilized the viewing patterns of YouTube users, which did not rely on the analysis of the underlying videos. The recommendation system in use at YouTube at the time was discussed by Davidson et al. (2010) where sets of personalized videos were generated with a combination of prior user activity (videos watched, favorited, liked) and the traversal of a co-visitation graph. In this process, recommendation diversity was obtained by means of a limited transitive closure over the generated related video graph. Zhou, Khemmarat, and Gao (2010) performed a measurement study on YouTube videos to determine the sources responsible for video views and found that related video recommendation was the main source outside of the search function for the majority of videos. They also found that the click-through rate to related videos was high, where the position of a video in a related video list played a critical role. A similar finding was made by Figueiredo, Benevenuto, and Almeida (2011) where they demonstrated the importance of key mechanisms such as related videos in the attraction of users to videos.

Turning to the task of YouTube video categorization, Filippova and Hall (2011) presented a text-based method that relied upon metadata such as video title, description, tags and comments, in conjunction with a predefined set of 75 categories. Using a bag-of-words model, they found that all of the text sources contributed to successful category prediction. More recently, a framework for the categorization of video channels was proposed by Simonet (2013), involving the use of semantic entities identified within the corresponding video and channel profile text metadata. Following the judgment that existing taxonomies were not well suited to this particular problem, a new category taxonomy was developed for YouTube content. Roy, Mei, Zeng, and Li (2012) investigated both video recommendation and categorization in tandem, where videos were categorized according to the topics built from Twitter activity, leading to the enrichment of related video recommendation. Video text metadata were used for this process, and the topics were based on the categories proposed by Filippova and Hall (2011) in addition to the standard YouTube categories at the time. Separately, they also analyzed diversity among related videos, where they found that there was a 25% probability on average of a related video (up to a depth of 2) being in the same category.

ER Categorization

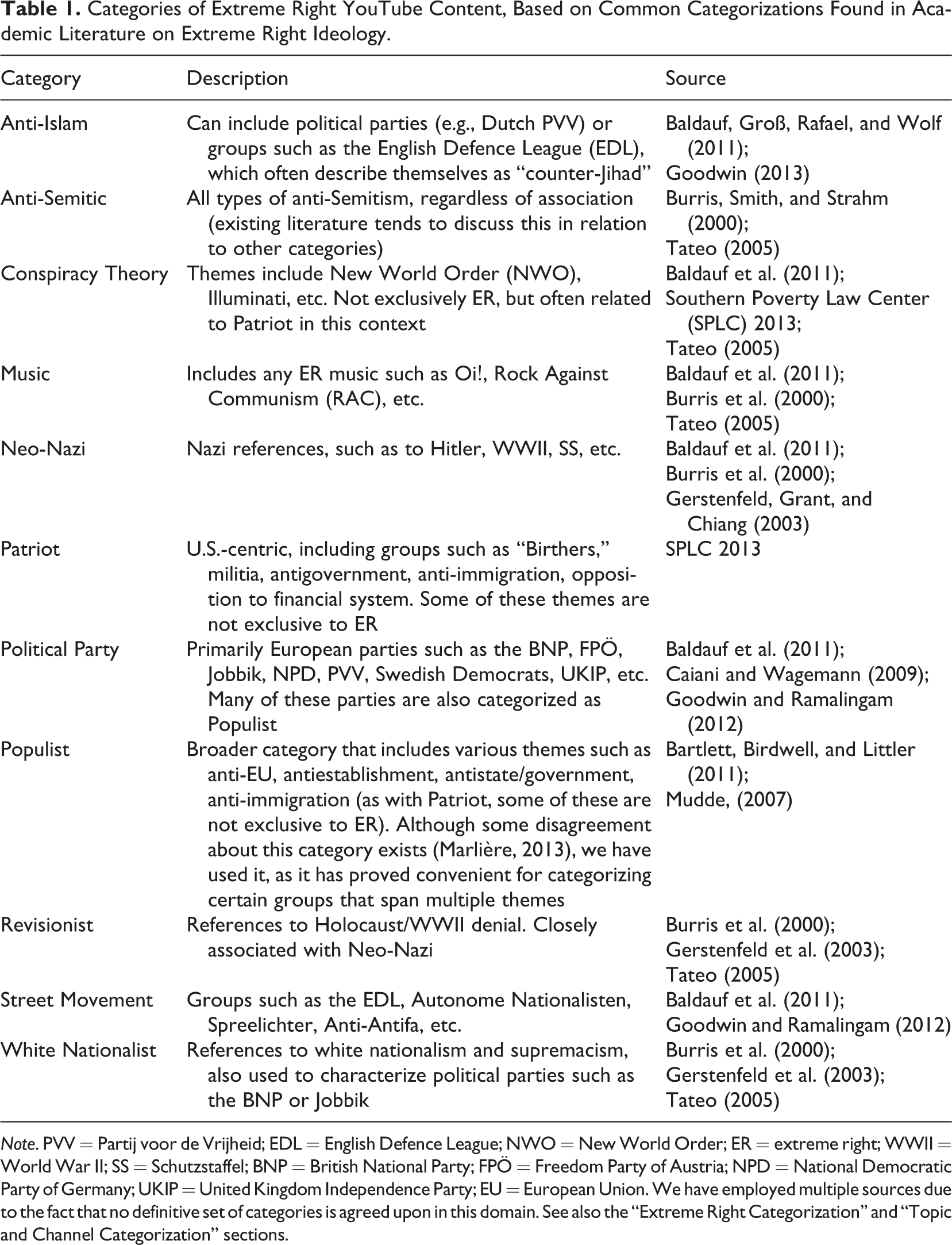

In the various studies that have analyzed online ER activity, certain differences can be observed among researchers in relation to their categorization of this activity and associated organizations (Blee & Creasap, 2010; see also Table 1). Burris, Smith, and Strahm (2000) proposed a set of eight primarily U.S.-centric categories in their analysis of a White supremacist website network: Holocaust Revisionists, Christian Identity Theology, Neo-Nazis, White Supremacists, Foreign (non-U.S.) Nationalists, Racist Skinheads, Music, and Books/Merchandise. A similar schema was used by Gerstenfeld, Grant, and Chiang (2003), which also included Ku Klux Klan and Militia categories. They also discussed the difficulty involved in the categorization of certain subgroups, where a general category (Other) was applied in such cases. These categories were adapted in separate studies of Italian and German ER groups, where new additions included Political Parties and Conspiracy Theorists, while others such as Music and Skinheads were merged into a Young category (Caiani & Wagemann, 2009; Tateo, 2005). Rather than focusing on ideological factors, Goodwin and Ramalingam (2012) proposed four organizational types found within the European ER milieu: political parties, grassroots social movements, independent smaller groups, and individual “lone wolves.” Other notable categories include the Autonomous Nationalists identified within Germany in recent years. These groups focus specifically on attracting a younger audience, where social media is often a critical component in this process (Baldauf, Groß, Rafael, & Wolf, 2011).

Table 1. Categories of Extreme Right YouTube Content, Based on Common Categorizations Found in Academic Literature on Extreme Right Ideology.

The popularity of YouTube has led to its usage by ER groups for purposes of content dissemination. Its related video recommendation service motivates the current work, which analyzes the extent to which a viewer may be exposed to such content and thereby highlights that “Whether these interventions are strategic or incidental, harmful or benign, they are deliberate choices that end up shaping the contours of public discourse online” (Gillespie, 2010, p. 358). Separately, disagreements over the categorization of online ER activity suggest that a specific set of categories may be required for the analysis of this domain.

Data

In our previous work, we investigated the potential for Twitter to act as a gateway to communities within the wider online network of the ER (O’Callaghan, Greene, Conway, Carthy, & Cunningham, 2013b). Two data sets associated with ER English language and German language Twitter accounts were generated by retrieving profile data over an extended period of time. We gathered all tweeted links to external websites and used these to construct an extended network representation. In the current work, we are solely interested in tweeted YouTube URLs. Data for these Twitter accounts were retrieved between June 2012 and May 2013, as limited by the Twitter REST application programming interface (API) restrictions effective at the time. YouTube URLs found in tweets were analyzed to determine a set of channel (synonymous with uploaders or accounts; see Simonet, 2013) identifiers that were directly (channel profile page URL) or indirectly (URL of video uploaded by channel) tweeted. All identified channels were included, regardless of the number of tweets in which they featured. Throughout this work, we refer to these as

Our first step in this investigation is the categorization of YouTube channels. To do this, we use text metadata associated with the videos uploaded by a particular channel—namely, their titles, descriptions, and associated key words. Although user comments have been employed in other work (Filippova & Hall, 2011), they were excluded here. This decision followed an initial manual analysis of a sample of tweeted videos, which found that comments were often not present or had been explicitly disabled by the uploader. We also excluded the YouTube “category” field, as it was considered too broad to be useful in the ER domain. Using the YouTube Data API (see https://developers.google.com/youtube/), we initially retrieved the available text metadata for up to 1,000 of the videos uploaded by each seed channel, where the API returns videos in reverse chronological order according to their upload time. In cases where seed channels and their videos were no longer available (e.g., the channel had been suspended or deleted since appearing in a tweet), these channels were simply ignored.

To address the variance in the number of uploaded videos per channel, and to reduce the volume of subsequent data retrieval, we randomly sampled up to 50 videos for each seed. For each video in this sample, metadata values were retrieved for the top 10

For both seed and related channels, the corresponding uploaded video sample was used for categorization; this is described subsequently. It is worth noting here that the videos in question may have been uploaded at any time prior to retrieval, where these times are not necessarily restricted to the period of either Twitter or YouTube data retrieval.
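The per-channel sampling step described above can be illustrated with a minimal sketch. It assumes the video identifiers for each channel have already been retrieved; the function name `sample_videos`, the dictionary layout, and the fixed random seed are hypothetical choices made for illustration and are not taken from the study.

```python
import random

MAX_VIDEOS_PER_CHANNEL = 50  # sample cap stated in the text


def sample_videos(uploads_by_channel, cap=MAX_VIDEOS_PER_CHANNEL, seed=0):
    """Return up to `cap` randomly chosen videos per channel.

    `uploads_by_channel` is a hypothetical dict mapping a channel id to the
    list of video ids already retrieved for it.
    """
    rng = random.Random(seed)  # fixed seed only to make the sketch repeatable
    sample = {}
    for channel, videos in uploads_by_channel.items():
        if not videos:
            continue  # channels with no available videos are ignored
        k = min(cap, len(videos))
        sample[channel] = rng.sample(videos, k)  # sampling without replacement
    return sample
```

Capping the sample in this way keeps channels with very large upload histories from dominating the later aggregation steps.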

Method

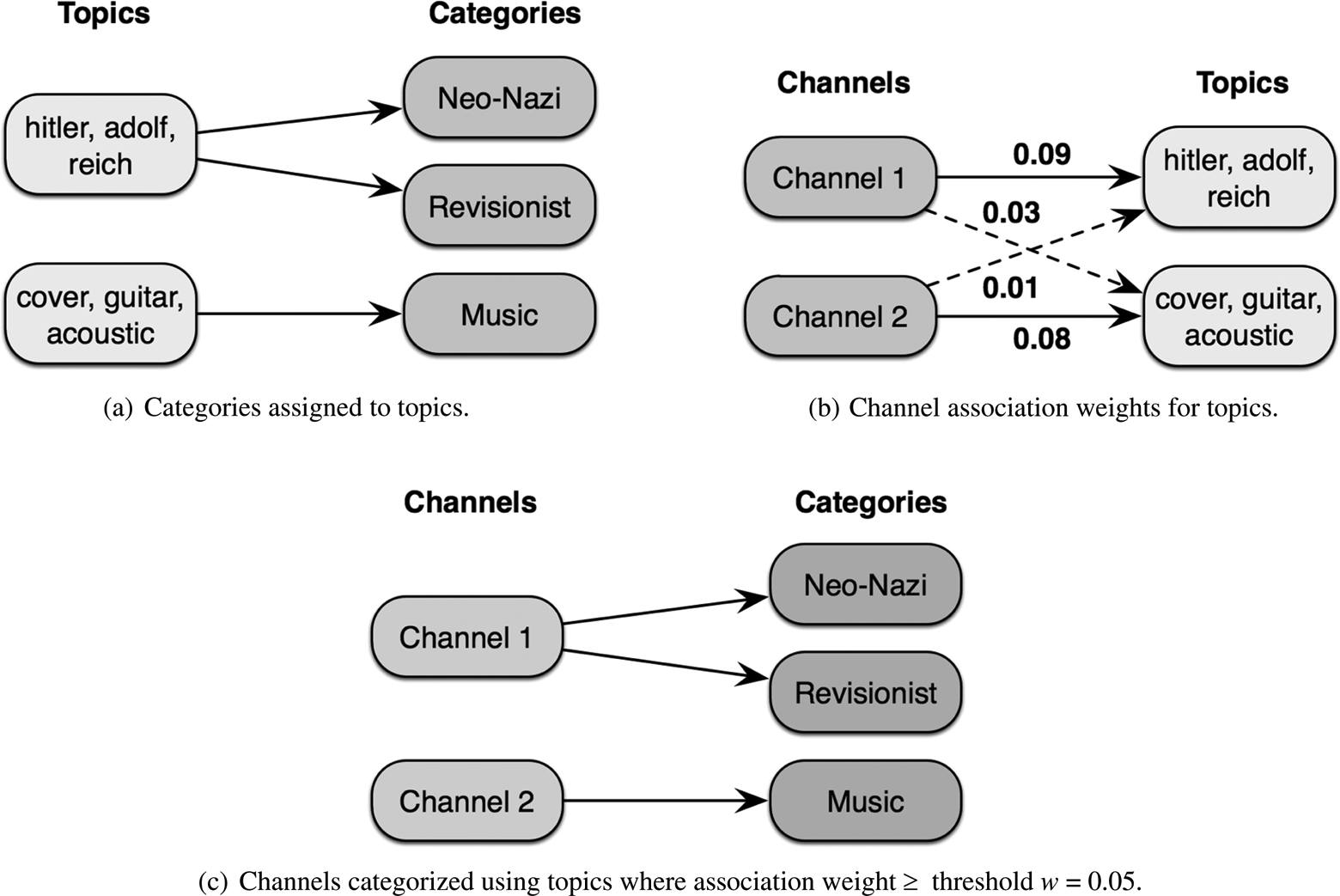

Having retrieved the channel and video data from YouTube (part (a) of the process overview diagram found in Figure 1), the next steps were concerned with the processing of these data prior to the investigation of YouTube’s recommender system. This section corresponds to part (b) of the diagram in Figure 1. First, a ranking of related channels was generated for each seed channel, using the singular value decomposition (SVD) rank aggregation method proposed by Greene and Cunningham (2013). Next, the channels were represented as “documents” based on an aggregation of their uploaded video text metadata, within which latent topics were identified with Nonnegative Matrix Factorization (NMF; Lee & Seung, 1999). The channels were then categorized using these topics in combination with a proposed categorization based on various schema found in a selection of academic literature on the ER, where a simple example of the process that maps channels to categories is shown in Figure 2.

Figure 2. Topic and channel categorization process.

Related Channel Ranking

It was first necessary to generate a single related channel ranking for each seed channel, according to the multiple related rankings returned by the YouTube API for the sample of videos uploaded by the seed. The SVD rank aggregation method proposed by Greene and Cunningham (2013) was used to combine the rankings for each video uploaded by a particular seed into a single ranked set, from which the top ranked related channels were then selected. It was decided to restrict the focus to the top 10, given the impact of related video position on click-through rate (Zhou, Khemmarat, & Gao, 2010). These are the channels used for our detailed investigation into the political articulations of YouTube’s recommender algorithm given subsequently.
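The general idea of SVD-based rank aggregation can be sketched as follows. This is an illustrative approximation and not necessarily the exact formulation of Greene and Cunningham (2013): the reciprocal-rank weighting, the use of the leading left singular vector as a consensus score, and the function name `aggregate_rankings` are all assumptions made here.

```python
import numpy as np


def aggregate_rankings(rankings, top_k=10):
    """Combine several ranked channel lists into one consensus ranking.

    `rankings` is a list of lists; each inner list holds the related channel
    ids for one sampled video, best first.
    """
    channels = sorted({c for ranking in rankings for c in ranking})
    if not channels:
        return []
    index = {c: i for i, c in enumerate(channels)}
    # Rows are channels, columns are the per-video rankings.
    scores = np.zeros((len(channels), len(rankings)))
    for j, ranking in enumerate(rankings):
        for position, channel in enumerate(ranking):
            # Assumed weighting: reciprocal rank; absent channels stay at 0.
            # max() keeps the best position if a channel appears twice.
            i = index[channel]
            scores[i, j] = max(scores[i, j], 1.0 / (position + 1))
    # The leading left singular vector serves as the consensus score.
    u, _, _ = np.linalg.svd(scores, full_matrices=False)
    consensus = np.abs(u[:, 0])  # the sign of a singular vector is arbitrary
    order = np.argsort(-consensus, kind="stable")
    return [channels[i] for i in order[:top_k]]
```

For example, `aggregate_rankings([["A", "B", "C"], ["A", "C", "B"], ["B", "A", "C"]], top_k=3)` places channel `A` first, since it is ranked at or near the top of every list.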

Topic Identification

For the purpose of channel categorization, latent topics associated with the channels in both data sets were initially identified based on their uploaded videos. Following the approach of Hannon, Bennett, and Smyth (2010), a “profile document” was generated for each seed and related channel, consisting of an aggregation of the text metadata from their corresponding uploaded video sample, from which a tokenized vector representation was produced. Topic modeling is concerned with the discovery of latent semantic structure or topics within a text corpus, which can be derived from co-occurrences of words in documents (Steyvers & Griffiths, 2006). Popular methods include probabilistic models such as latent Dirichlet allocation (LDA; Blei, Ng, & Jordan, 2003) or matrix decomposition techniques such as NMF (Lee & Seung, 1999). Both LDA and NMF-based methods were initially evaluated with the seed channel document representations described. However, NMF was found to produce the most readily interpretable results, which appeared to be due to the tendency of LDA to discover topics that overgeneralized (Chemudugunta, Smyth, & Steyvers, 2006; O’Callaghan, Greene, Conway, Carthy, & Cunningham, 2013a). There was an awareness of the presence of smaller groups of channels associated with multiple languages in both data sets, and it was decided to opt for specificity rather than generality. The process was undertaken in two stages. First, topics for the seeds were generated by applying NMF to the seed channel documents, resulting in a set of basis vectors consisting of both ER and non-ER topics for both data sets. The second stage involved producing assignments to these seed topics for the related channel documents.
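The two-stage process can be sketched with the multiplicative update rules commonly associated with Lee and Seung's NMF. The sketch below is a simplified illustration under stated assumptions: whitespace tokenization, raw term counts, the iteration count, and the helper names `build_matrix`, `fit_nmf`, and `assign_to_topics` are hypothetical and do not reflect the exact preprocessing or parameters used in the study.

```python
import numpy as np

EPS = 1e-9  # avoids division by zero in the multiplicative updates


def build_matrix(documents, vocabulary):
    """Term-count matrix (documents x terms) restricted to `vocabulary`."""
    index = {term: i for i, term in enumerate(vocabulary)}
    V = np.zeros((len(documents), len(vocabulary)))
    for row, text in enumerate(documents):
        for token in text.lower().split():  # assumed: whitespace tokenization
            if token in index:
                V[row, index[token]] += 1.0
    return V


def fit_nmf(V, k, iters=200, seed=0):
    """Stage 1: factorize V as W @ H with nonnegative W (weights), H (topics)."""
    rng = np.random.default_rng(seed)
    W = rng.random((V.shape[0], k)) + EPS
    H = rng.random((k, V.shape[1])) + EPS
    for _ in range(iters):
        H *= (W.T @ V) / (W.T @ W @ H + EPS)
        W *= (V @ H.T) / (W @ H @ H.T + EPS)
    return W, H


def assign_to_topics(V_new, H, iters=200, seed=0):
    """Stage 2: topic weights for new documents, keeping the basis H fixed."""
    rng = np.random.default_rng(seed)
    W = rng.random((V_new.shape[0], H.shape[0])) + EPS
    for _ in range(iters):
        W *= (V_new @ H.T) / (W @ H @ H.T + EPS)
    return W
```

In this sketch, the seed channel documents would be passed to `fit_nmf` to obtain the topic basis `H`, and the related channel documents, vectorized with the same seed-based vocabulary, would be passed to `assign_to_topics`. Holding `H` fixed in the second stage means related channels are described only in terms of topics found among the seeds.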

Topic and Channel Categorization

Some prior work discussed earlier has proposed generic categories for use with YouTube videos and channels (Filippova & Hall, 2011; Roy et al., 2012). However, as these studies focused on the categorization of mainstream videos, they were not sufficient for the present analysis where categories specifically associated with the ER were required. We have also discussed prior work that characterized online ER activity using a number of proposed categories but, as indicated, no definitive set of categories is agreed upon in this domain (Blee & Creasap, 2010). We therefore propose a categorization based on various schema found in a selection of academic literature on the ER, where this category selection is particularly suited to the ER videos and channels we have found on YouTube. Some categories are clearly delineated while others are less distinct, reflecting the complicated ideological makeup and thus fragmented nature of groups and subgroups within the ER (Gerstenfeld, Grant, & Chiang, 2003). In such cases, we have proposed categories that are as specific as possible while also accommodating a number of disparate themes and groups. Details of the categories employed can be found in Table 1.

As both data sets contained various non-ER channels, we also created a corresponding set of non-ER categories consisting of a selection of the general YouTube categories as of June 2013, in addition to other categories that we deemed appropriate following an inspection of these channels and associated topics. These non-ER categories were Entertainment, Gaming, Military, Music, News & Current Affairs, Politics, Religion, Science & Education, Sport, and Television. Having produced a set of

The set of categorized topics was then used to label both seed and related channels, based on the channel topic assignment weights, as determined by NMF, exceeding a threshold

In order to confirm the reliability of the above NMF topic categorizations, we employed the automatic Freebase topic annotation of videos by YouTube (hereafter referred to as

The ER and the Political Articulations of YouTube’s Recommender System

In this section, we discuss the political articulations of the English and German language YouTube data sets. The experimental steps taken were as follows:

1. Generation of an aggregated ranking of related channels for each seed channel.
2. Generation of channel document vectors and identification of topics using NMF.
3. Categorization of the identified topics according to the set defined in Table 1.
4. Categorization of the channels based on their topic association weights.
5. Investigation of whether information was excluded from viewing by users if it was not aligned with their existing (in this case, ER) perspective, potentially leading to immersion within an ideological bubble.

For Step 5 above, we define an ER ideological bubble in terms of the extent to which the related channels for a particular ER seed channel also feature ER content. It has been shown that the position of a video in a related video list plays a critical role in the click-through rate (Zhou et al., 2010). Therefore, we investigated the existence of an ideological bubble using the top

For each ER seed channel: The top The total proportion of each category assigned to the ≤

Then, for each ER category: All seed channels to which the category has been assigned were selected. The mean proportion of each related category associated with these seed channels was calculated.

We considered an ideological bubble to exist for a particular ER category when its highest ranking related categories, in terms of their mean proportions, were also ER categories.
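One possible reading of this procedure is sketched below. The normalization of category counts into proportions, the data layout, and the function names are assumptions made for illustration; the category labels used in any example are hypothetical placeholders.

```python
from collections import Counter, defaultdict


def related_proportions(related_channels, categories_of, n):
    """Category proportions over the top-n related channels of one seed."""
    counts = Counter()
    for channel in related_channels[:n]:
        counts.update(categories_of.get(channel, ()))
    total = sum(counts.values())
    return {cat: c / total for cat, c in counts.items()} if total else {}


def mean_proportions(seeds, related_of, categories_of, n, er_categories):
    """Mean related-category proportions for each ER seed category."""
    per_category = defaultdict(list)
    for seed in seeds:
        props = related_proportions(related_of.get(seed, []), categories_of, n)
        for cat in categories_of.get(seed, ()):
            if cat in er_categories:  # a seed may carry several categories
                per_category[cat].append(props)
    means = {}
    for cat, rows in per_category.items():
        keys = {k for row in rows for k in row}
        means[cat] = {k: sum(r.get(k, 0.0) for r in rows) / len(rows) for k in keys}
    return means


def has_bubble(mean_row, er_categories, top=1):
    """True when the `top` highest-ranking related categories are all ER."""
    ranked = sorted(mean_row, key=mean_row.get, reverse=True)[:top]
    return bool(ranked) and all(cat in er_categories for cat in ranked)
```

Here `categories_of` maps every channel (seed or related) to its assigned categories, and `related_of` maps each seed to its aggregated related channel ranking. The `top` parameter controls how many of the highest-ranking related categories must be ER categories before a bubble is reported.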

English Language Categories

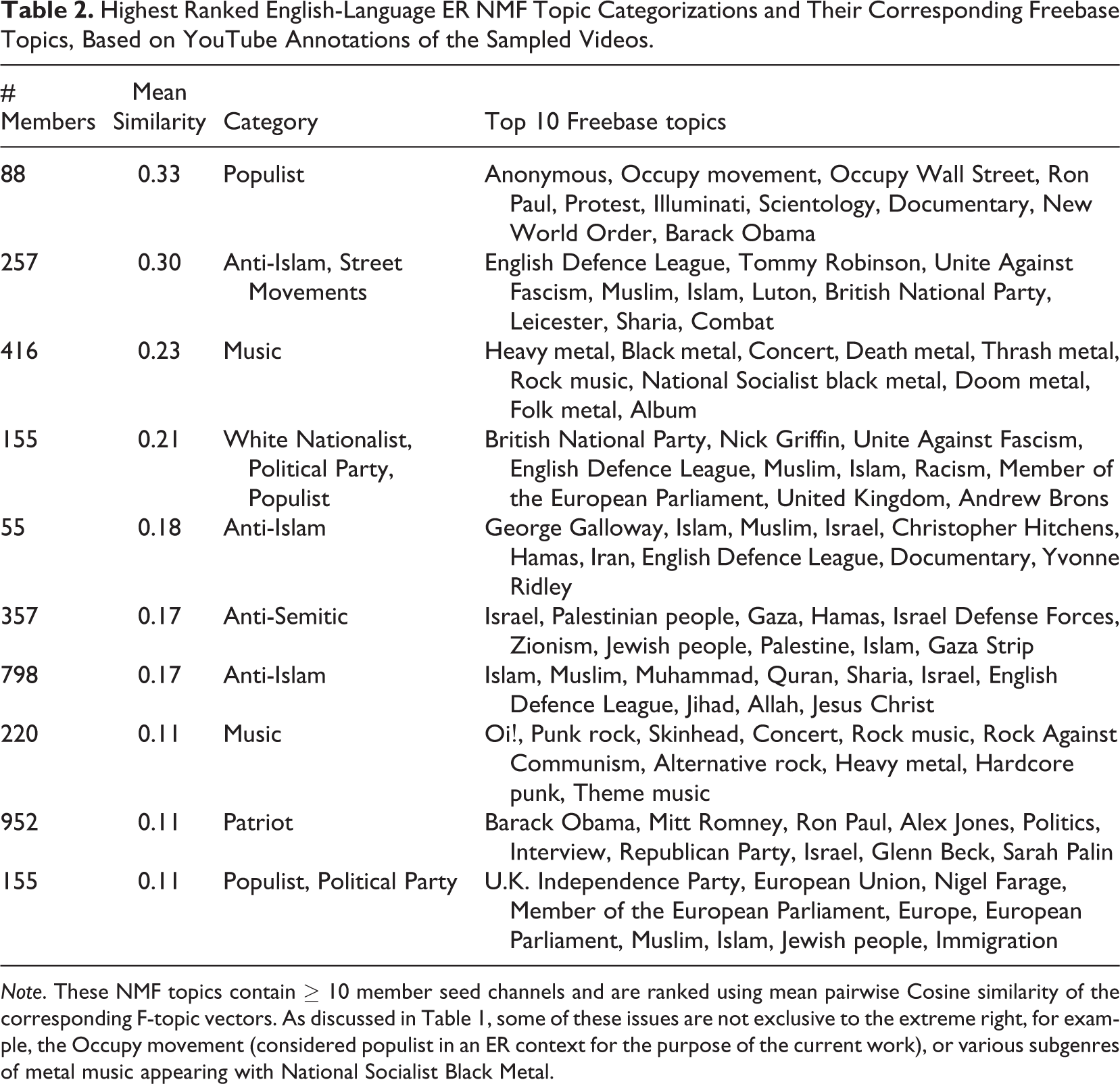

From the total number of channels in the English language data set, we generated 24,611 seed and 1,376,924 related channel documents, using a corresponding seed-based vocabulary of 39,492 terms, and topics were identified by applying NMF to the seed documents. To determine the number of topics

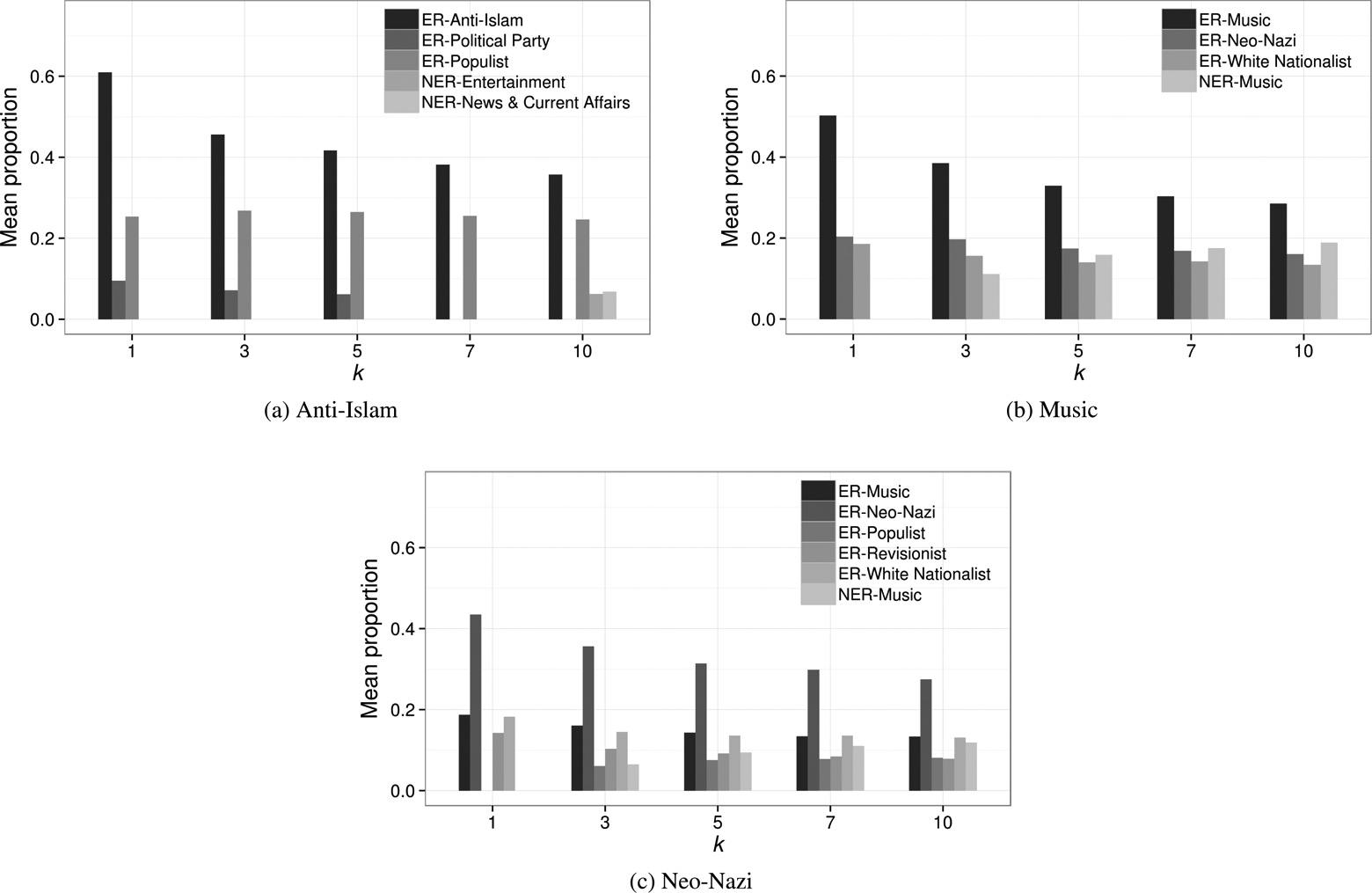

Highest Ranked English-Language ER NMF Topic Categorizations and Their Corresponding Freebase Topics, Based on YouTube Annotations of the Sampled Videos.

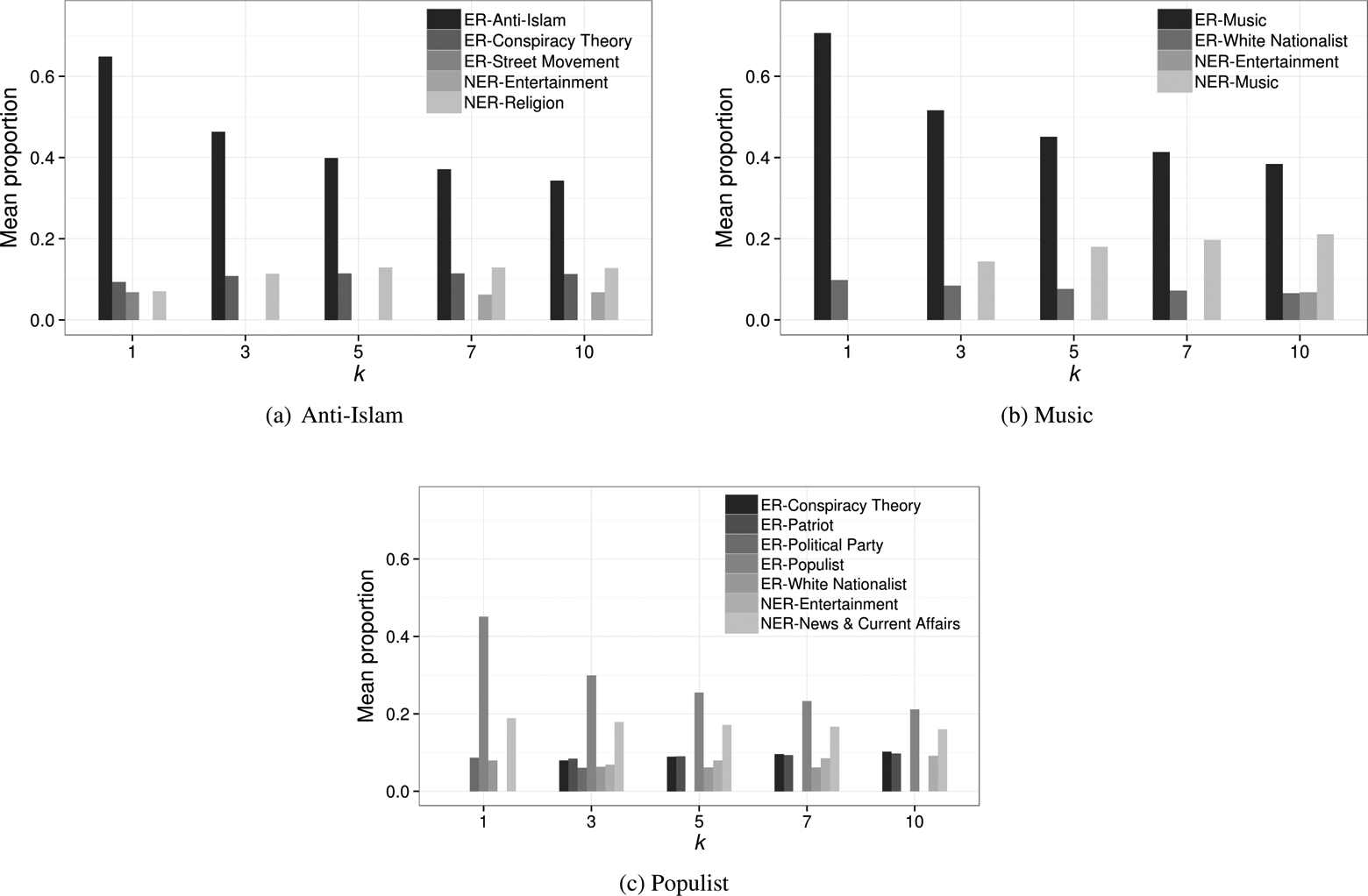

Figure 3 contains plots for three ER categories that were selected for more detailed analysis, where ER and non-ER categories have been prefixed with ER

English mean category proportions of top

The ER Music seed channels usually upload video and audio recordings of high-profile acts associated with the ER. For example, content from bands such as Skrewdriver (United Kingdom) or Landser (Germany) can be found, along with other bands from genres such as Oi!, Rock Against Communism and National Socialist Black Metal (NSBM; Baldauf et al., 2011; Brown, 2004). Given this, the consistent presence of the White Nationalist related category would appear logical. At the same time, we also observe that non-ER Music becomes more evident as

German Language Categories

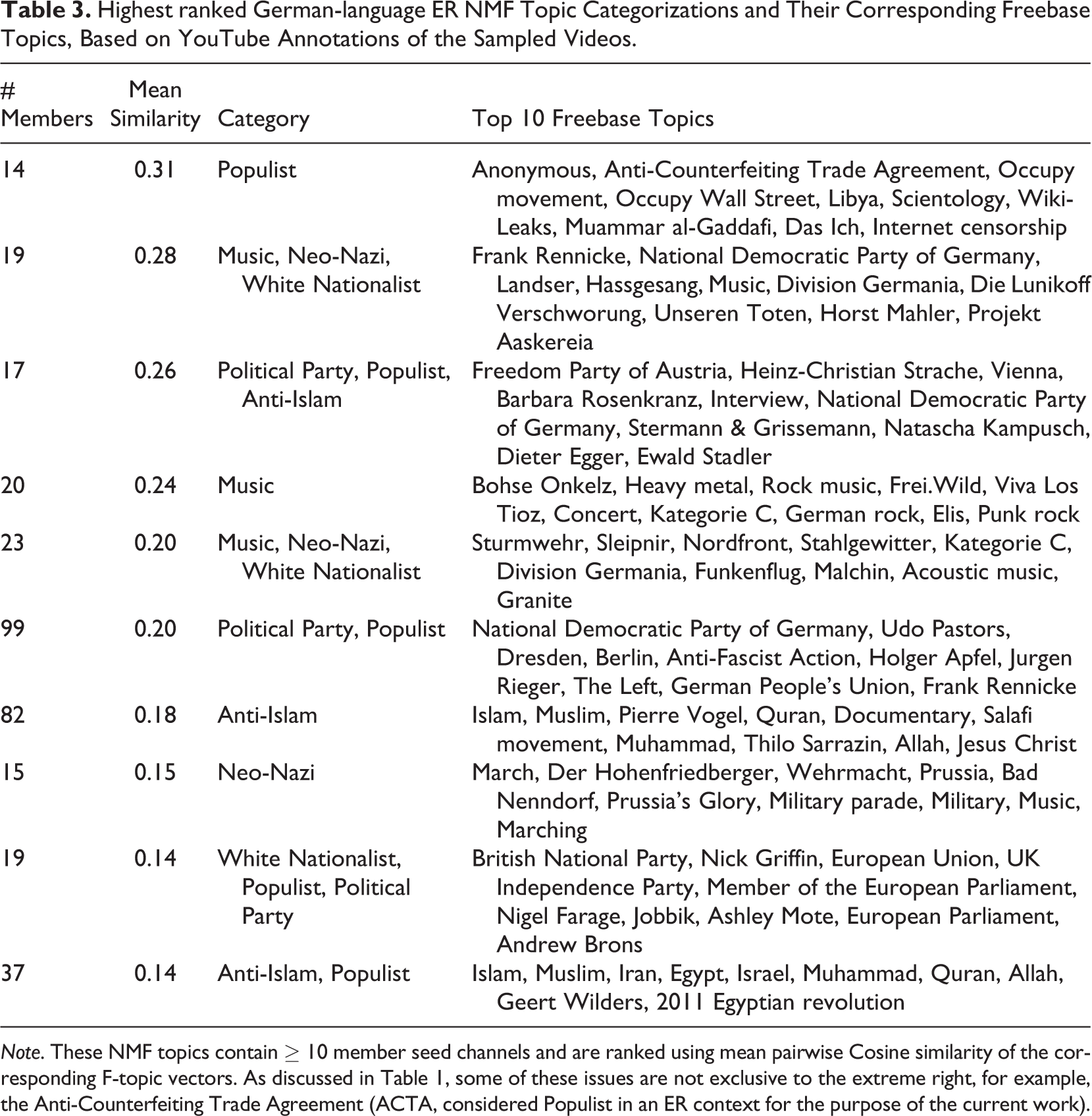

A total of 2,766 seed and 177,868 related channel documents were generated from the German language data set, with topics identified using the former. As before, we experimented with values of

Highest ranked German-language ER NMF Topic Categorizations and Their Corresponding Freebase Topics, Based on YouTube Annotations of the Sampled Videos.

Figure 4 contains plots for three ER categories that were selected for in-depth analysis. As seen in Figure 3, the seed category is the dominant related category for all values of

German mean category proportions of top

This data set also features many ER Music seed channels that upload recordings of high-profile acts, with the main difference being the prominence of the Neo-Nazi related category for all rankings. These recordings and videos, along with other nonmusic videos uploaded by these channels, often feature recognizable Nazi imagery. Separately, channels that upload videos associated with bands that have alleged ER ties, for example, Böhse Onkelz or Frei.Wild (Baldauf et al., 2011), may explain in part the presence of the non-ER Music category, given the mainstream success of these bands. This may also be explained by material associated with hip-hop acts such as “n’Socialist Soundsystem,” which provide an alternative to traditional ER music based on rock and folk. The close relationship with Music is also present for the Neo-Nazi seed category, although further related diversity can be observed. Seed channels featuring footage of German participation in World War II, including speeches by high-ranking members of the Nazi party, are likely to be the source of the White Nationalist and Revisionist-related categories. We can safely assume that the prominence of Music is responsible for the appearance of its non-ER counterpart here.

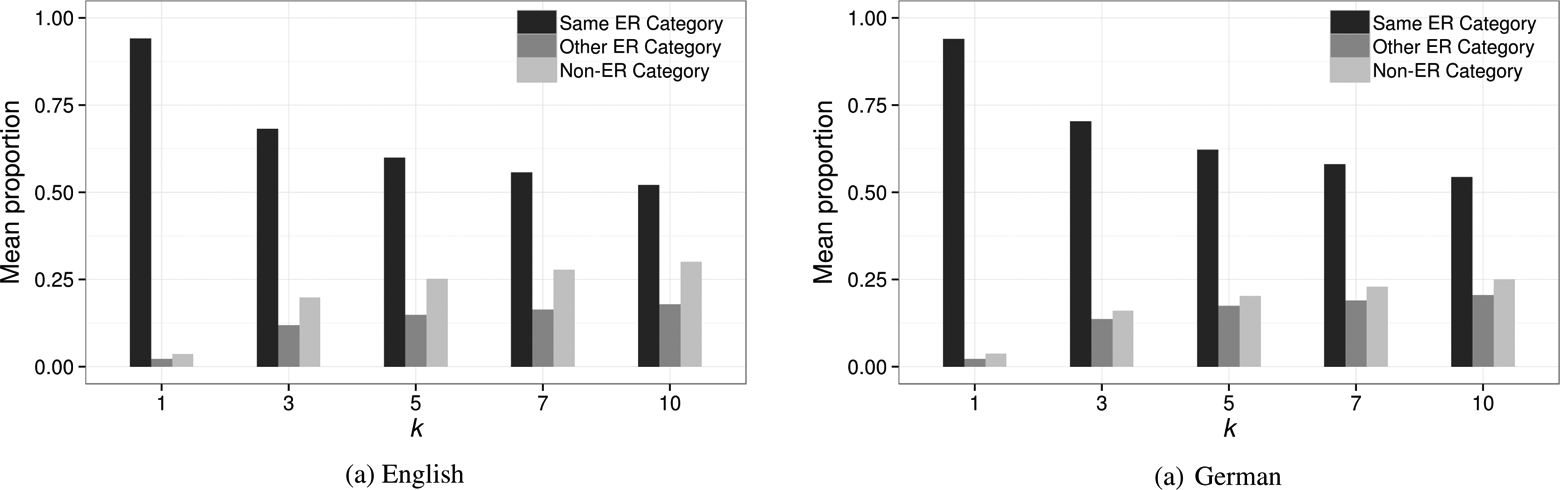

Aggregate Category View

We conclude our analysis of the ideological bubble created by YouTube’s recommender system by measuring the mean proportions for the seed ER categories as a whole, where the possible aggregated related categories were (1) the same ER category as that of the seed, (2) a different ER category, or (3) a non-ER category. The results for both data sets can be found in Figure 5. As with the individual seed categories, an ER ideological bubble is also clearly identifiable at the aggregate level. Although the increase in diversity for lower

Aggregated mean category proportions of top

The data retrieval process described involved following related video links for only one step removed from the corresponding seed video; no further related retrieval was performed for related videos themselves. Of the ER seed channels used in the ideological bubble analysis, we identified those that appeared in top

Discussion

There is as yet no proven relationship between consumption of extremist online content and adoption of extremist ideology (McCants, 2011; Rieger, Frischlich, & Bente, 2013), and some scholars and others remain skeptical of a significant role for the Internet in processes of online radicalization (Benson, 2014; U.K. Home Affairs Committee, 2014, pp. 6–7). However, there is increasing concern on the part of other scholars, and increasingly also policy makers, that high levels of always-on Internet access and the production and wide dissemination (and hence easy availability) of large amounts of extremist online content may be (violently) radicalizing some of its consumers (Edwards & Gribbon, 2013). From the producer perspective, this is almost certainly its main purpose. Much recent work in the field of online political extremism has focused upon the relationships among protagonists on specific platforms (Fisher & Prucha, 2014; O’Callaghan et al., 2013a) and the potential outcomes of these actors’ online content production, dissemination, and interaction strategies (Berger & Strathearn, 2013; Carter, Maher, & Neumann, 2014). Most of this work is thus focused on the convergence of self-communication and mass communication: what Castells (2013, p. xix) terms “mass self-communication.” This article takes a different approach, focusing on the role of the platform, in this case YouTube, and its sociotechnical infrastructure, specifically its recommender system, and the affordances this mechanism provides for political extremists, in this case, the ER. This is a complex issue, as YouTube’s users are its content providers while the platform itself intervenes via its recommender algorithm to channel specific content to specific users. The immersion of some users in YouTube’s ER spaces is thus a coproduction between the content generated by users and the affordances of YouTube’s recommender architecture (Fiore-Silfvast, 2012, pp. 1967–1968).

The detailed analysis contained herein of how automated social media “recommendation” can result in users being excluded from information that is not aligned with their existing perspective, potentially leading to immersion within an extremist ideological bubble, supports a shift of the almost exclusive focus on users as content creators and protagonists in extremist cyberspaces to also consider platform providers as important actors in these same spaces. This, in turn, suggests that YouTube’s recommender system algorithms are not neutral in their effects but have political articulations: Together, these algorithms not only help us find information, they provide a means to know what there is to know and how to know it, to participate in social and political discourse, and to familiarize ourselves with the publics in which we participate. They are now a key logic governing the flows of information on which we depend. (Gillespie, 2014, p. 167)

Gillespie goes on to call for close attention to be paid “to where and in what ways the introduction of algorithms into human knowledge practices may have political ramifications.” One of the ways in which he suggests we accomplish this is through reflection upon “the production of calculated publics” or “how the algorithmic presentation of publics back to themselves shapes a public’s sense of itself, and who is best positioned to benefit from that knowledge” (Gillespie, 2014, p. 168). Gillespie supplies the examples of Amazon’s book-buying recommendations invoking a community of readers and Facebook’s “friends of friends” setting transforming a discrete set of users into an “audience”; our focus on YouTube’s creation of an ER milieu is more explicitly political but is nonetheless also an “algorithmically generated group” that “may overlap with, be an inexact approximation of, or have nothing whatsoever to do with the publics that the user sought out” (2014, pp. 188–189), but within which they are then invited to become enmeshed. At the same time, it should be mentioned that YouTube is not the only social media site to be criticized for some of the outcomes of its recommendation practices and the “calculated publics” they “help to constitute and codify…[P]ublics that would not otherwise exist except that the algorithm called them into existence” (Gillespie, 2014, p. 189). Twitter’s recommender system has been described by one analyst of violent online jihadism as providing “robust tools … to aspiring extremists” and “a running start for users who are interested in pursuing ideologically motivated violence” (Berger, 2013).

It may well be the case, however, that the potential cures for these unintended outcomes are worse than the disease. Gillespie provides a warning in this respect when he states that “What Twitter claims matters to ‘Americans’ or what ‘Amazon’ says teens read are forms of authoritative knowledge that can and will be invoked by institutions whose aim is to regulate such populations” (2014, p. 190). The regulation of online extremist populations (particularly those espousing violence and/or terrorism) is, for good or ill, now a hot-button policy issue. Suggested interventions, besides the legislated takedown of online hate and terrorism content in Germany, the United Kingdom, and elsewhere, have included the setting of tighter standards for acceptable content by platform providers; the insertion of alternative viewpoints into recommended lists associated with certain types of content; the assignment of some videos to the adult category (registered users below a certain age cannot directly view them, and all other users must click their assent to viewing objectionable content); and technical (i.e., algorithm-based) demotion of certain videos containing objectionable content that would otherwise appear on recommended lists (i.e., making otherwise popular content harder to find; Berger, 2013; CleanIT, 2013; see also Fiore-Silfvast, 2012). Many of these suggested interventions raise the specter of social media companies policing political thought, which is palatable to neither the companies nor many users, and is especially problematic in the absence of rigorous empirical research that analyzes the Internet’s role in processes of radicalization.

Conclusions and Future Work

YouTube’s position as the most popular video sharing platform has resulted in it playing an important role in the online strategy of the ER. We have proposed a set of categories that may be applied to this YouTube content, based on a review of those found in existing academic studies of the ER’s ideological makeup. Using an NMF-based topic modeling approach, we categorized channels according to this proposed set, permitting the assignment of multiple categories per channel where necessary. The existence of resources such as Freebase allowed us to independently confirm the reliability of the categorization where a high level of consistency was observed between our qualitative categorization and YouTube’s automated Freebase annotations. This categorization helped us to identify the existence of an ER ideological bubble, in terms of the extent to which related channels, determined by the videos recommended by YouTube, also belong to ER categories. Despite the increased diversity observed for lower related rankings, this ideological bubble maintains a constant presence. The influence of related rankings on click-through rate (Zhou et al., 2010), coupled with the fact that the YouTube channels in this analysis originated from links posted by ER Twitter accounts, would suggest that it is possible for a user to be immersed in this content following a short series of clicks.

It might be argued that our findings merely confirm that YouTube’s related video recommendation process is working correctly, which is true to a certain extent. Lessig famously stated “code is law” (2006, p. 1); he might equally have said “code is politics.” We were concerned with the specificity of YouTube’s recommender system in terms of how it works in the world. The article presents a case study of a portion of the politics associated with YouTube’s code, along with its potential lived effects; in the absence of any broadly acceptable quick fixes, it seeks to contribute a rigorous evidencing of the already existing political articulations of YouTube’s recommender system and to draw attention to the underexplored way in which this may already be influencing political thought and thus potentially also action. The infrastructural affordances of other online platforms differ from those of YouTube; future research into the role of platform providers and their architectures in online extremism could certainly explore whether other popular online platforms thus have similar or different potential effects.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This research was supported by 2CENTRE, the EU funded Cybercrime Centres of Excellence Network, the EU-funded VOX-Pol Network of Excellence, and Science Foundation Ireland (SFI) under Grant Number SFI/12/RC/2289.