Abstract

Freedom of speech has long been considered an essential value in democracies. However, its boundaries concerning hate speech continue to be contested across many social and political spheres, including governments, social media websites, and university campuses. Despite the recent growth of so-called free speech communities online and offline, little empirical research has examined how individuals embedded in these communities make moral sense of free speech and its limits. Examining these perspectives is important for understanding the growing involvement and polarization around this issue. Using a digital ethnographic approach, I address this gap by analyzing discussions in a rapidly growing online forum dedicated to free speech (r/FreeSpeech subreddit). I find that most users on the forum understand free speech in an absolutist sense (i.e., it should be free from legal, institutional, material, and even social censorship or consequences), but that users differ in their arguments and justifications concerning hate speech. Some downplay the harms of hate speech, while others acknowledge its harms but either focus on its epistemic subjectivity or on the moral threats of censorship and authoritarianism. Further, the forum appears to have become more polarized and right-wing-dominated over time, rife with ideological tensions between members and between moderators and members. Overall, this study highlights the variation in free speech discourse within online spaces and calls for further research on free speech that focuses on first-hand perspectives.

The present study aims to describe the moral discourse of ordinary individuals embedded in free speech communities. Freedom of expression has long been considered essential for the proper functioning of democratic nations, yet its moral and legal limits continue to be contested globally at many scales of society, including in governmental and educational institutions, the media, private businesses, and online spaces (Chemerinsky 2018; Gelber 2010; Hallberg and Virkkunen 2017; Kim and Douai 2012; Wasserman 2010). One major contested boundary to free speech relates to hateful speech: dehumanizing epithets or ideas that harm individuals psychologically, physically, or materially, or that harm society through the spread of dangerous ideologies (Eschmann 2019; Gelber and McNamara 2016). Many countries have explicit hate speech laws, including Australia, Canada, Jordan, India, and several European countries. Virtually all large social media companies, such as Twitter, Facebook, Instagram, and Reddit, have also set up policies prohibiting the use of hateful or violent language in recent years. In parallel, entire social media websites claiming to be dedicated to free speech, such as Gab and Parler, have emerged, as have similar subcommunities on Reddit and Facebook.

Several scholars have characterized hate speech restrictions as a balancing act between opposing moral values: individual free speech liberties must be weighed against considerations of justice, equality, dignity, and respect for others (e.g., Butler 2017; Fish 1994; Parekh 2012; Waldron 2012). But, as Howard (2019) notes, this framing misrepresents the debate, as individuals who oppose hate speech restrictions are seen as the “real defenders” (Howard 2019, 94) of free speech and liberty, while those who support such restrictions are seen as the real defenders of other, conflicting, moral values. Yet free speech can be understood in many different ways, and these different understandings can lead to different conclusions about hate speech. For example, political philosophy has long suggested that freedom of speech can be conceptualized in at least two distinct ways, in a positive or a negative sense (Berlin 1969). When thinking about free speech in a positive sense, hate speech restrictions could work to promote justice and liberty by stopping the spread of bigoted ideologies, thus freeing certain groups from their oppression, and enabling them to pursue their highest aspirations (Fish 1994; Harell 2010).

In contrast, when thinking about free speech in a negative sense, any form of censorship may be seen as a threat to liberty and justice, particularly because those in oppressed positions or with minority views are more vulnerable to having their views silenced than those in positions of structural advantage. To those who hold a negative liberty view, then, alternative policy approaches, such as “counter speech” policies that provide hate speech victims with institutional support, may be seen as a more effective and less risky means of combatting bigotry and promoting justice than censorship (Gelber 2002; Strossen 2018). In this way, those with positive and negative (and potentially other) conceptualizations of free speech may weigh the exact same moral values (e.g., liberty, equality, justice) to make very different arguments about censorship and hate speech.

Despite the recent growth of free speech communities online and offline, and their potential for helping us parse out these different viewpoints, there is a lack of empirical research examining how people outside academic circles make sense of free speech and hate speech. In this study, I aim to address this gap by analyzing posts, comments, and ethnographic data in a rapidly growing online forum dedicated to free speech: the r/FreeSpeech subreddit (a discussion page dedicated to a specific topic) on the Reddit website. I ask: how do people in this online community describe and justify their understanding of free speech and its boundaries vis-à-vis hate speech? What issues are most salient to them, and how might this motivate their participation? And how does the online environment shape the discourse? In answering these questions, we may be better positioned to understand people’s motivations for joining and engaging in self-proclaimed free speech communities and why the issue has become so polarized. Through this analysis, I find that while most members of the forum understand free speech in a negative and absolutist sense (i.e., without restrictions or sanctions), they use a range of arguments to justify their position regarding hate speech. I also find that the subreddit has become increasingly polarized and ideologically dominated by the right wing in more recent years.

Definitions: Free Speech and Hate Speech

What exactly is meant by “free speech” and “hate speech” from a moral and legal perspective? The moral definition of free speech typically refers to the right to express oneself and receive information openly and without interference (Howard 2019; Mill 2018). Free speech can be described in terms of speakers’ and listeners’ (or thinkers’) rights. For the speaker, free speech involves the right to express oneself in the public sphere, including political opinions, without state interference or fear of ostracism (Mill 1978). For the listener or thinker, the right to free speech involves access to information to promote dialogue, mutual understanding, and cooperation on moral matters (Habermas 1981; Shiffrin 2014). The right to free speech is often also a legal one, entrenched in many constitutional documents (such as the First Amendment to the U.S. Constitution, Section 2 of the Canadian Charter of Rights and Freedoms, or Article 10 of the European Convention on Human Rights), all of which state that individuals have the legal right to express opinions and receive information without government interference (Equality and Human Rights Commission 2020).

Hate speech can be defined in moral terms as speech that hurts others by promoting physical violence or harming victims psychologically, socially, or financially (Gelber and McNamara 2016). Most countries also have limits on speech that can be hurtful. Even the United States (U.S.), which boasts some of the most robust free speech protections, does restrict some forms of speech, such as threats, libel, and defamation. Many other democratic nations also have specific hate speech laws. For example, in Canada, criminal hate speech is defined as words that “communicate, except in private conversation, statements that willfully promote hatred against an ‘identifiable group’” (Walker 2018, 1). Hate speech can also be restricted through institutional policies, such as campus speech codes and social media codes of conduct. However, from a legal and organizational perspective, a significant challenge with such restrictions is that the line between free and hate speech is blurry enough that hate speech laws and policies, such as Canada’s, can often be challenged on moral or legal grounds.

It is important to highlight that hate speech is not the opposite of free speech. Free speech is a broad concept referring to many forms of expression and access to such expression (e.g., political expression, freedom of the press, religious expression, freedom to protest, expressions on social media, and artistic expression, among many others) far beyond the scope of this article, with very different implications globally. However, questions around hate speech run through a large body of scholarly work on free speech and social justice (e.g., Benhabib 1997; Cohen 2017; Gelber 2002; Mill 1966). These questions usually center around whether some types of speech are (a) harmful enough to be delineated from what ought to be free forms of expression in democratic societies, and (b) have sufficiently concrete boundaries to be effectively and equitably banned. Such questions are also at the core of many contemporary moral debates about the limits of free speech and have important practical implications for numerous institutions today (e.g., on university campuses and social media websites). This study then pays particular attention to the discourse around hate speech.

Empirical Research on Free Speech: Emerging Trends

As noted, there is a surprising lack of empirical work examining tensions in the free speech and hate speech debate. This gap is especially surprising given the theoretical attention this topic has been given in the academy over the past few decades (e.g., Armstrong and Wronski 2019; Benhabib 1997; Chamlee-Wright 2018; Cohen 2017; Gelber 2002; Habermas 1989; Howard 2019; LaSelva 2015; Matsuda et al. 2018; Mill 1966, 1978; Parekh 2012; Sponholz 2016; Shiffrin 2014). There are some exceptions, though, and existing empirical work in the area of free speech—broadly speaking—points to three potential trends. First, as of recently, liberals and leftists tend to view the issue of hate speech restrictions as involving a balance between moral values, while conservatives tend to adopt a more absolutist stance on free speech. For example, Boch’s (2020a, 2020b) research on the general population finds that while political tolerance has increased in the U.S. overall, tolerance for hate speech, in particular, has decreased among educated liberals. This decrease may be driven by increased awareness of racial discrimination and its harms (Boch 2020a). Similarly, Kidder and Binder (2021) interviewed students at four U.S. universities. They found that students on the political left tend to be concerned about balancing free speech rights against other considerations—such as the harms of hate speech—while students on the political right tend to reject any form of censorship.

Second, balancing these important moral values can be thorny from a practical standpoint. For example, Miller et al. (2018) interviewed university administrators who served on bias response teams designed to address violent incident reports, microaggressions, hate speech, and other related incidents. While these administrators expressed the need to weigh conflicting interests (freedom of expression and an inclusive campus environment), they struggled to strike a good balance in practice. As a result, they avoided punishing perpetrators of harmful speech and instead tried to promote dialogue between opposing parties. Similarly, Kidder and Binder’s (2021) study (described above) also found that university students on the political left appeared internally conflicted when discussing free speech issues, often contradicted themselves, and expressed uncertainty about how universities should regulate speech. The authors argue that unlike conservative students, whose views are influenced by the absolutist viewpoints endorsed by right-leaning organizations, students on the political left receive little institutional or external support to make sense of campus free speech (Kidder and Binder 2021).

And finally, so-called online free speech communities seem to be attracting individuals on the extreme right, at least in recent years. For example, one study by Zannettou et al. (2018) quantitatively analyzed posts on the censorship-free social media website called Gab. This website is dedicated to free speech and was developed in 2016 (several months before the 2016 U.S. Presidential Election), and it explicitly welcomes people who have been banned from other social media websites. The authors found that hate words were used in over 5% of posts, 2.4 times the rate found on Twitter, and that some accounts were designed to recruit millennials to the alt-right (Zannettou et al. 2018). However, this rate of hate words is lower than what is found on other online forums, such as 4chan’s Politically Incorrect board (Zannettou et al. 2018). Other forums, such as the (now banned) r/The_Donald subreddit, also have reportedly high rates of hate speech (Gaudette et al. 2020; Rieger et al. 2021). It is then difficult to know whether Zannettou et al.’s findings are specific to free speech communities or simply reflect increasing far-right extremism on the internet over the past decade (Rozado and Kaufmann 2022).

Methods

Empirical Case: r/FreeSpeech Subreddit

In this study, I use data from the website Reddit. Reddit is an online community where individuals can post, comment, and vote on a wide variety of topics. Comments are posted under “subreddits” associated with different topics (which are labeled as r/[topic]). Several studies have suggested that the Reddit platform offers users the possibility of a “virtual community,” allowing them to share information, engage in meaningful discussions, and develop attachments (Darwin 2017; Foeken and Roberts 2019; Lundmark and LeDrew 2019; Robards 2018). Further, some research has suggested that the voting feature enables collective identity formation and boundary construction, as users “upvote” the opinions they agree with and “downvote” those they disagree with (Gaudette et al. 2020). As an empirical case, the r/FreeSpeech subreddit has the advantage of being an organized online space where users are requested to limit their posts to “discussions about freedom of speech and for news about free speech-related issues from all around the world” (Free Speech Subreddit, n.d.). Therefore, I expected that posts on the subreddit would provide a rich source of data on free speech perspectives instead of simply being a space for users to comment on a range of irrelevant topics without guiding principles.

The r/FreeSpeech subreddit was created in 2009 and had 31.1 thousand members at the time of initial data collection (October 2020). The subreddit grew slowly and steadily from 2009 to 2018, but its popularity increased sharply in late 2018: in November 2018, it had approximately 5,700 followers, and by November 2019, it had over 18 thousand followers. It continued to grow steadily after that, and by August 2022, it had over 49 thousand followers (Subredditstats 2022). While I cannot obtain information about the subreddit members’ demographic characteristics, in 2021, approximately 49% of desktop traffic from Reddit as a whole came from the U.S., 8% from the United Kingdom (U.K.), 7.5% from Canada, 4% from Australia, and 3.12% from Germany (Clement 2022a). These demographic characteristics speak to the dominance of U.S. debates in English and on so-called global forums. Further, the use of Reddit is more prevalent among younger internet users. As of 2022, in the U.S., 36% of internet users aged 18–29 use Reddit, and this declines with age: 22% of internet users aged 30–49 use the platform, 10% aged 50–64 use it, and only 3% aged 65+ use it (Clement 2022b). The platform is also most popular among men: 23% of U.S. males use Reddit, while only 12% of U.S. females do (Clement 2022c).

Methodological Strategy and Data Collection

Like traditional ethnography, the virtual ethnographic method allows me to immerse myself within a community to examine the group’s social interactions, dynamics, and localized culture, where meanings can be contested and co-constructed in a naturalistic setting (Caliandro 2017; Davis 2012; Hine 2000; Kaur-Gill and Dutta 2017; Murthy 2008; Pink et al. 2016). In addition, because the forum is anonymous, we should expect users to be able to express themselves without fear of stigmatization, which is especially important for topics that can be uncomfortable or taboo offline (Kavanaugh and Maratea 2016). Further, examining the behaviors of the moderators and changes to the forum over time allows me to glean insights into how the online environment itself shapes the discourse. Because the content captured online is textual, this study incorporates aspects of discourse analysis (the language used in conversations and interactions such as posts, comments, and replies), content analysis (subreddit rules, “pinned” and popular posts), and ethnography (features of the online environment such as changes over time, moderation, and upvotes). These methods can complement each other nicely in online environments where internet activity creates textual data that can be analyzed (Myles 2020; Hine 2015; Hyland and Paltridge 2011).

In contrast to the more structured digital ethnographic approaches, such as Kozinets’s (2010) 12-step “Netnography,” the analysis in the present study did not follow a particular set of steps. Instead, it used discourse analysis, content analysis, and ethnography in a pragmatic and iterative way to meet the objectives of the research study. And in contrast to a Critical Discourse Analysis (Fairclough 2012), I did not seek to evaluate the moral content of the statements in the forum; instead, I followed the topics of free speech and hate speech as “empirical objects” (Caliandro 2017, 570) and the social dynamics and formations surrounding them. In short, I analyzed speech events and the context in which they occur to start theorizing about the meanings and motivations underlying participation in this online free speech community.

I began data collection in late July 2020. I joined the online community using my personal Reddit account. Previously, I had not been a member of the subreddit and had never (and still have not) posted on it. I began by collecting posts that specifically related to the boundary of free speech and hate speech, as well as other information about the subreddit, such as the subreddit rules and membership. Within the subreddit, I used a maximum variation sampling technique to collect five different discussion threads explicitly related to the meanings of free speech and hate speech, focusing on “information-rich cases” (Ghaziani 2015). More specifically, I wanted to find posts that would provide me with both confirmatory and surprising data on how people make sense of free speech and hate speech, capturing a wide range of opinions. In doing so, the data could help to advance empirical knowledge beyond common-sense or purely theoretical understandings of the issue.

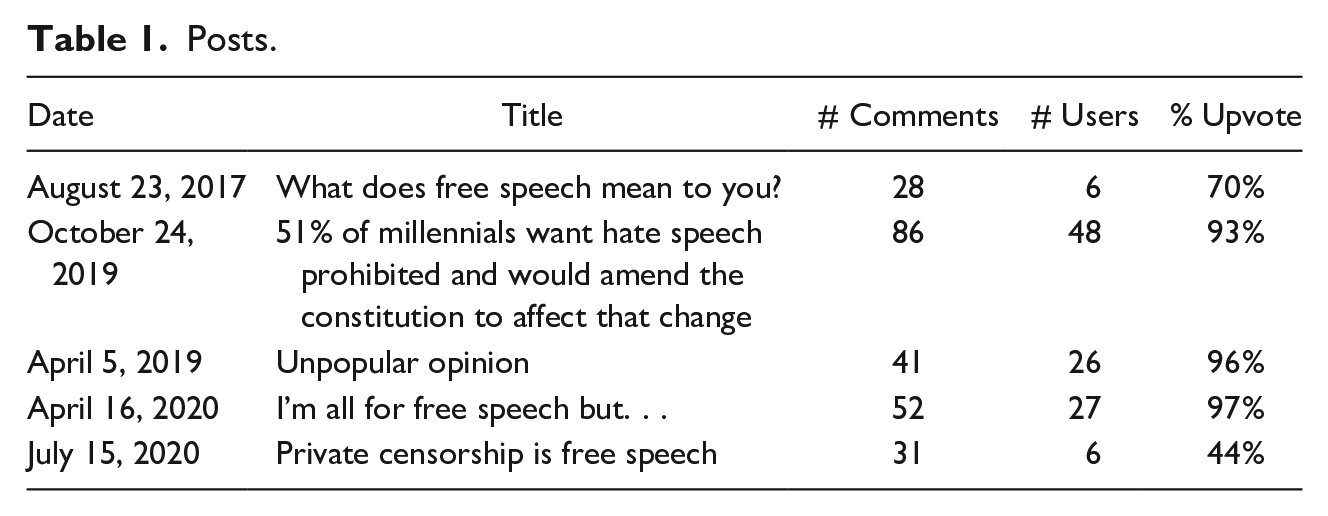

I selected threads in three steps. First, I used the search function with the keywords “free speech” and “hate speech.” I looked at posts from 2017 to 2020 (three years), as I wanted posts relevant to contemporary debates around free speech and hate speech. I looked for posts that prompted users to debate definitions of free speech through a written comment or link to an article. Second, I selected threads with a minimum of 25 comments, allowing for richer dialogue and debate between Reddit users. And third, when posts appeared relevant to the research questions, I briefly scanned the comments to check for relevance. Comments were considered relevant if they referred to a boundary or definition of free speech or hate speech, for instance, by calling upon official definitions of free speech (e.g., in a constitutional document) or providing their own definition of free speech (e.g., “free speech is. . .”). Comments were considered irrelevant when users started discussing other topics. In short, I kept threads only if they met the following criteria: (a) the original post was posted in 2017 or later, (b) the original post had at least 25 comments, and (c) of those 25 comments, a majority (approximately >50%) were relevant to the boundary of free speech or hate speech. Based on these criteria, I ultimately selected five threads for analysis with a total of 418 posts. These threads are described in Table 1, which lists the date of the original post, the post title, the number of comments, the number of original users who posted in the thread, and the upvote percentage (percentage of users who upvoted the post relative to those who downvoted it).
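The three retention criteria can be written down as a simple filter. The following is a minimal sketch for illustration only, not a description of the tooling used in the study: the `Thread` record, its field names, and the example threads are all hypothetical, and the relevance counts stand in for the manual judgment described above.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Thread:
    # Hypothetical record; field names are assumptions made for this sketch.
    title: str
    posted: date
    n_comments: int   # comments in the thread
    n_relevant: int   # comments judged relevant by a human reader


def meets_criteria(thread, min_year=2017, min_comments=25, min_share=0.5):
    """Return True if a thread satisfies criteria (a), (b), and (c)."""
    # (a) the original post was made in 2017 or later
    if thread.posted.year < min_year:
        return False
    # (b) the original post has at least 25 comments
    if thread.n_comments < min_comments:
        return False
    # (c) a majority of comments are relevant to the free/hate speech boundary
    return thread.n_relevant / thread.n_comments > min_share


# Invented example threads, not data from the study.
threads = [
    Thread("A", date(2019, 5, 1), 120, 80),
    Thread("B", date(2016, 3, 9), 60, 50),    # fails (a): posted before 2017
    Thread("C", date(2018, 7, 2), 12, 10),    # fails (b): fewer than 25 comments
    Thread("D", date(2020, 1, 15), 40, 15),   # fails (c): mostly off-topic
]
selected = [t.title for t in threads if meets_criteria(t)]
print(selected)
```

Only the relevance count requires interpretation; the date and comment thresholds are mechanical, which is why they are exposed here as parameters.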

Table 1. Posts.

Following the analysis of selected threads, in February and March 2022, I began examining the subreddit ethnographically to contextualize the findings and identify characteristics of the forum that could be shaping people’s behavior. I searched through posts from 2015 to 2022, which gave me a broader view of the topics people discussed and how the site has changed over time. To sift through the large volume of posts and comments, I first scrolled through posts and noted any observations I made. I clicked on interesting or surprising posts to observe the conversations within the threads. Next, I again used the search terms “free speech” and “hate speech,” sorting through posts by popularity (i.e., upvotes) and number of comments. It should be noted that upvotes and comments have different implications for how the post is received in the community. A higher number of upvotes relative to downvotes generally signals that community members agree with a post or would like to elevate it to the top of the forum. On the other hand, a high number of comments may suggest disapproval, disagreement, or even identity threat (see Davis, Love, and Fares 2019). Finally, I examined posts made by the moderators to glean insights into how they might influence the subreddit’s culture and content.

Data Analysis

In total, the data for this study comprised 418 posts from approximately 108 different users and ethnographic observations from continuous exploration of the online forum. Posts were extracted to NVivo, a qualitative analysis software program, using its NCapture feature. Of the 418 comments extracted, 238 were visible, while 180 were hidden. These 180 comments were hidden because they received a high number of downvotes. I could only access hidden posts by going back to the original threads on the Reddit website and clicking on hidden comments individually. As a result of this technical challenge, I analyzed the visible comments first using a retroductive approach, which involves using a combination of inductive and deductive codes iteratively (Ragin 1994) in NVivo. In line with the study’s research aim and research questions, I focused my analysis on statements that attempted to define the boundaries of free speech or hate speech. I initially focused my analysis on three deductive codes based on Howard’s (2019) framework—rights, obligations, and roles—to help me make sense of the data. I also created several inductive codes, iteratively revising and refining codes.

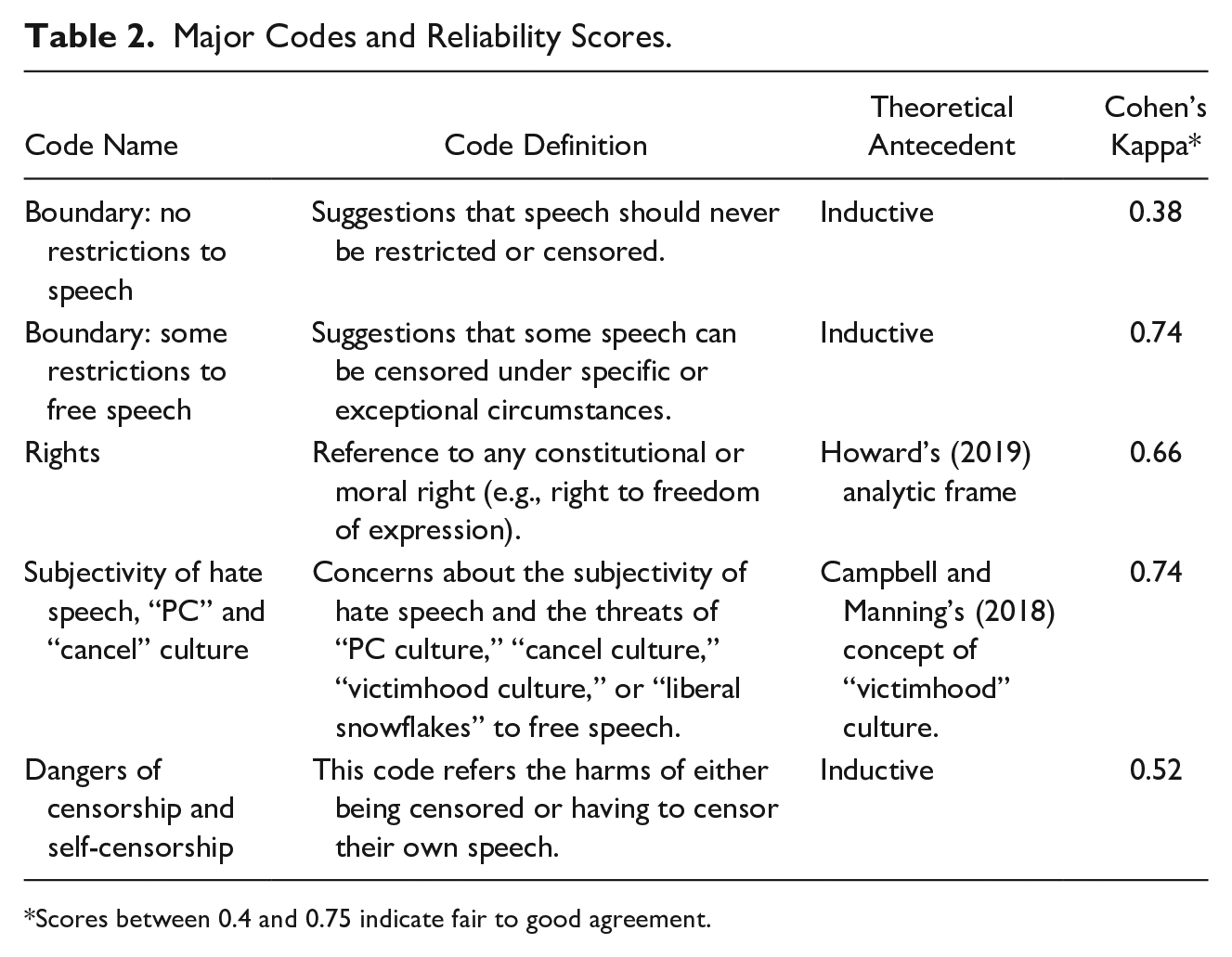

Subsequently, I recoded my “boundary” code to identify major themes. I refined these themes using heuristic strategies such as creating a storyboard, a 2 × 2 table, and other visualization techniques (Booth, Colomb, and Williams 2003; Swedberg 2014). The resulting principal codes are outlined in Table 2 below. Finally, I analyzed the hidden posts abductively to see whether these hidden posts contributed to or contradicted the themes already identified in the analysis of visible posts. Abduction involves looking for “surprises” in the data using the logic: “The surprising fact C is observed. But if A were true, C would be a matter of course. Hence, there is a reason to suspect that A is true” (Peirce 1934, 117; Timmermans and Tavory 2012).

Table 2. Major Codes and Reliability Scores.

Note: Scores between 0.4 and 0.75 indicate fair to good agreement.

An independent researcher coded five of the major codes across 20% of the data to ensure reliability across coders, and intercoder reliability tests were performed. The second researcher was trained on the analytic framework of the study. Once the test was complete, Cohen’s Kappa scores were calculated (Table 2). Many have suggested that this statistical test is ideal for content analyses because it considers the possibility that the agreement between coders could be due to chance (Lombard, Snyder-Duch, and Bracken 2002; Neuendorf 2016). In general, the results suggest fair-to-good agreement using the criterion proposed by Ellis (1994).
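To make the chance correction concrete, Cohen’s Kappa compares the observed proportion of agreement between two coders with the agreement expected if each coder assigned labels independently at their own base rates. The sketch below is illustrative only: it assumes a single code applied as present (1) or absent (0) to each comment, the function name is hypothetical, and the example labels are invented rather than drawn from the study’s data.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's Kappa for two coders labeling the same items."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of items.")
    n = len(coder_a)

    # Observed agreement: share of items given the same label by both coders.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n

    # Expected agreement by chance: product of each coder's marginal
    # proportions for a label, summed over labels.
    freq_a = Counter(coder_a)
    freq_b = Counter(coder_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)

    if expected == 1.0:
        # Both coders used one identical label throughout; Kappa is undefined,
        # and this sketch simply reports perfect agreement.
        return 1.0
    return (observed - expected) / (1 - expected)


# Invented labels for ten comments (1 = code applied, 0 = not applied).
first_coder = [1, 1, 1, 0, 0, 0, 1, 0, 1, 0]
second_coder = [1, 1, 0, 0, 0, 1, 1, 0, 1, 0]
kappa = cohens_kappa(first_coder, second_coder)
print(round(kappa, 2))
```

In this invented example, the coders agree on 8 of 10 comments, but half of that agreement would be expected by chance, so the score is 0.6 rather than 0.8.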

As noted above, following my analysis of the five selected threads, I collected ethnographic data through continuous observation of the forum. More specifically, I collected interesting, surprising, confirmatory, and popular posts from different years using NVivo’s NCapture feature to compare dominant themes and the nature of interactions (e.g., debates, conflicts, grievances, positive exchanges) over time. To analyze the ethnographic data, I documented my observations by creating memos over several weeks, which I also analyzed abductively to generate insights.

Limitations to the Study

This study has several limitations worth noting. First, given the nature of the virtual ethnographic method, I did not ask Reddit users targeted questions regarding their perspectives. It is then possible that the threads selected for analysis guided Reddit users toward expressing certain perspectives that are not reflective of their true beliefs. I was also unable to analyze nonverbal behavior during interactions that may have been indicative of people’s emotional states or the resonance of certain statements. Second, given that users are anonymous, I could not conduct analyses on potential relationships between demographic characteristics and the different perspectives identified or examine how these demographic characteristics compare with those of the general population. Third, as with any qualitative study utilizing a purposive sampling technique, I cannot determine whether the individuals in the subreddit are different from or similar to those belonging to other free speech communities. The views presented in the study are thus limited to users of this forum and cannot be generalized to populations outside of it. In particular, this forum is likely dominated by young males from the U.S. (although the youngest generations of internet users are probably more likely to use other platforms such as Instagram and TikTok). While the forum is technically open to anyone, some views are excluded because of aspects of the forum itself, which I discuss in the results section.

Despite these limitations, the methodological approach used in this study provides a unique window into an online community dedicated to free speech; the contentious nature of this debate makes this anonymous forum an ideal location to gain insight into the opinions held by free speech proponents. It is also worth noting that the threads analyzed were selected because they discussed abstract ideas about free speech and hate speech, which allowed me to better understand their idealized conceptualizations of free speech and its boundaries, as well as the beliefs and values they hold. Abstract values can have important implications for moral behavior, social action, and policy positions (Feldman 1988; Miles 2015). Still, it is possible that examining more concrete cases would yield different conclusions regarding the negotiation of boundaries, and comparing how people respond to abstract versus concrete cases could be the subject of future research. In the following sections, I thematically synthesize findings from all stages of the project (i.e., analysis of posts and ethnography).

Results

Subreddit Rules and Moderation

As of October 2020 (at the start of data collection), the subreddit had a list of clear rules visible on its front page that state that posts should be about free speech and should avoid shitposting, ranting about moderators and users, posting memes, and defending the indefensible. These rules can be contrasted with the ethos of other self-proclaimed free speech websites, such as the website “Gab,” which openly welcomes users who have been banned from other websites and “champions free speech, individual liberty, and the free flow of information online” (Gab n.d.). The subreddit also has a list of “axioms,” which state that the First Amendment to the U.S. Constitution does not define free speech. The axioms then refer to the U.N. definition of free speech, which reads: “Everyone has the right to freedom of opinion and expression; this right includes freedom to hold opinions without interference and to seek, receive and impart information and ideas through any media and regardless of frontiers” (United Nations 1948).

It is noteworthy that by stating that the First Amendment does not define free speech, moderators appear to be inviting discussions that challenge or expand upon its definition of free speech (the First Amendment does not protect certain types of speech, including threats, fighting words, libel, and defamation). Given the international userbase of Reddit, we would expect users to have their own definitions of free speech inspired by constitutional documents, court cases, or other legal documents from their home countries, such as the Canadian Charter of Rights and Freedoms (in Canada) or the Lange v. Australian Broadcasting Corporation court case (in Australia). Yet, as I discuss later, views that were not in line with the First Amendment were nearly absent from the forum.

Additionally, after the initial data collection (sometime between October 2020 and March 2022), a further clause was added to the rules and axioms listed above, reading “‘Censorship’ is not a value judgment: removal of material is sometimes the best course of action.” And indeed, moderators seem to engage in occasional censorship, as suggested by several other threads. For example, one of the “pinned” posts on the subreddit, written by one of the most active moderators, reads as follows:

I’m going to start banning people for misrepresenting the right to free speech. Too often on this subreddit have I seen gross misrepresentations of the right to free speech. The right to Free Speech is not the First Amendment to the U.S. constitution. The first amendment provides limited protection for free speech by disallowing the government from restricting it, but the first amendment does not define what free speech is. Randall Munroe is to a large extent to blame for this belief, and his widely quoted XKCD comic [see: https://xkcd.com/1357/] has damaged the idea of free speech immeasurably. Anybody that expresses a belief that free speech can only be restricted by the government will be banned from this subreddit under the long-standing Rule 7.

The linked comic suggests that the First Amendment to the U.S. Constitution does not protect a person from criticism, being yelled at, boycotted, banned from a social media website, or having their show canceled. In essence, the moderator is suggesting that the concept of free speech extends beyond the government and that social, institutional, or material sanctions can also constitute threats to free speech.

Although most subreddit members would be expected to agree with the moderator’s criticism of the comic, several hundred comments criticized the moderator’s threat of being banned. Many asked whether they would get a warning and claimed that the moderator’s stance on censorship is ironic given that they are on a subreddit dedicated to free speech. Although the moderator appears to be genuinely threatening users’ community membership at first glance, other commenters hypothesized that the moderator was acting facetiously. The moderator does, in fact, state that they are joking (in one of the earlier, buried comments), writing, “Of course it’s a joke! But how far do you think I’m going to take it?” in response to someone’s question about the moderator’s seriousness. Yet, confusion and anger permeate the thread, which the moderator does very little to assuage, for example, responding to one comment, “Actually it’s more fun just to ban first and ask questions later. But the existence of capricious and arbitrary censorship teaches a valuable lesson!”

Similarly, the rules and axioms described above are outlined in a separate thread from December 2018, entitled “This subreddit’s rules have been documented,” posted by the same moderator. This post was also met with heavy criticism from users. For example, one user stated that “free speech is all or nothing” and that the moderators are overly censorious (unlike those, the user claims, in the r/libertarian subreddit). Again, the moderator responds puzzlingly by saying, “I’m afraid we’re going to have to destroy free speech to save it,” which is also met with anger and confusion. Even if the moderator is not, in fact, censoring users for their views, the moderator’s provocative stance creates uncertainty, confusion, and insecurity among members about what the rules actually are and how they will be enforced.

Beyond these posts, ideological tensions between the users and the moderators are evident throughout the forum. Based on several posts from the moderators, it appears that some of the more active moderators are politically left-leaning or, at the very least, sympathetic to the left, in contrast to the forum’s more vocal conservative userbase. For example, in one thread, a commenter suggested that the subreddit should engage in more left-wing analysis, to which a moderator responded, “I wish that more than one in ten people in here knew what that meant.” Indeed, a large proportion of the userbase appears right-leaning, as suggested by the content of many comments, including explicit identification with the right (e.g., “I certainly am right leaning”), criticism of the left (e.g., “They’re [people on the political right] still more reasonable than the other guys!”), and claims that the subreddit is dominated by the right (e.g., “I know this sub is conservative leaning and I came to bust the safe space/bubble. Hopefully this is a learning lesson for intellectually honest free speech enthusiasts, such as myself.”).

It is, of course, impossible to confirm how moderators monitor and delete posts on the subreddit. The moderators and the users seem to agree that removing content online is censorship, yet, as the moderators suggest, censorship might sometimes be acceptable. Some users seem to think that the forum has had problems with “memes” and “trolls,” which moderators have taken a heavy-handed approach to remove, yet I observed many comments that violated the subreddit’s rules and were not deleted or flagged (e.g., bigoted language). Others had been flagged but not deleted. It seems that the rules are either not strictly enforced or too difficult to enforce, given the growing popularity of the forum and the low number of moderators. Overall, though, given the perception (even if it were inaccurate) that most commenters on the subreddit are right-leaning, left-leaning individuals interested in free speech may eventually turn to other online communities, leading the forum to become more ideologically unified in a self-reinforcing way. And the moderators’ behavior, as well as the official rules and “pinned” posts on the subreddit (which signal what the community norms are and which topics are important), may dissuade some people from posting if they worry that they will be banned or criticized for having views that fall outside these norms and guidelines (cf., Noelle-Neumann 1974).

The U.S. Context

In my analysis of the five selected threads, I found that most comments were made with the presumption of an American context. Members described free speech in ways aligned with negative liberty (i.e., freedom from interference) instead of positive liberty (i.e., right to self-actualization) and supported, either explicitly or implicitly, the definition of free speech in the First Amendment to the U.S. Constitution. More specifically, users of this subreddit were, by and large, united on the belief that hateful speech should be legally protected, particularly at the federal level, thus understanding freedom of speech in an absolutist sense. Illustrative of this, when one user asked another if they considered hate speech to be legal, the user responded, “most of us here do. That’s the main idea of this subreddit.” As such, they did not believe that hate speech should be outside the boundary of free speech.

Among all 418 posts analyzed, there was only one discussion in which users debated a definition of hate speech that diverged from the First Amendment to the U.S. Constitution. Interestingly, this discussion occurred in one of the hidden threads (i.e., a thread that had been downvoted enough to be removed from visibility). In this thread, several subreddit users debated whether the definition of hate speech includes threatening language. In the comment that received the most downvotes of the entire sample (–15 points), one user suggested that hate speech is defined as “abusive or threatening speech or writing that expresses prejudice against a particular group, especially on the basis of race, religion, or sexual orientation,” a definition the user claimed to have found through a Google search of the term “hate speech.” This definition is in fact similar to what some countries, such as Canada, consider punishable speech. This comment led five other users to argue that hateful speech is constitutionally protected (as it is in the U.S.). Given that posts become hidden when downvoted, the few who questioned the First Amendment’s stance on speech were, in essence, excluded from these debates; Reddit’s externally imposed algorithm served as precisely the type of censorship tool that many free speech proponents oppose.

Surprisingly, though, a minority of users brought up the First Amendment to make what initially seemed to be an argument against free speech. Specifically, these users argued that, while hate speech is constitutionally protected, private censorship is also protected. As such, they argued that censorship is an expression of freedom of speech. One user maintained, “This is an opinion you are expressing (your speech). The platforms removing your opinion from their private property is their response (their speech). You’re welcome to dislike it, but that doesn’t get you anywhere.” Another user advised, “Choose the venues where you say things more carefully and use privacy settings online. Sometimes a degree of self-censorship is necessary.” While these types of comments were subjected to scrutiny, the subreddit rules actually highlight this legal fact (i.e., within the U.S., private entities can censor speech otherwise protected by the First Amendment). One user noted this, “It’s literally in the rules of this very sub. It’s also the law in the U.S. Obviously you cannot be forced to say things you don’t want. . .. Forcing others to host your speech on their private property is unconstitutional or illegal, depending who does it.” Another user even downplayed the negative material impacts of censorship, suggesting that “Your job firing you is not the same as the government stopping or limiting your speech.”

Growing Polarization Over Time

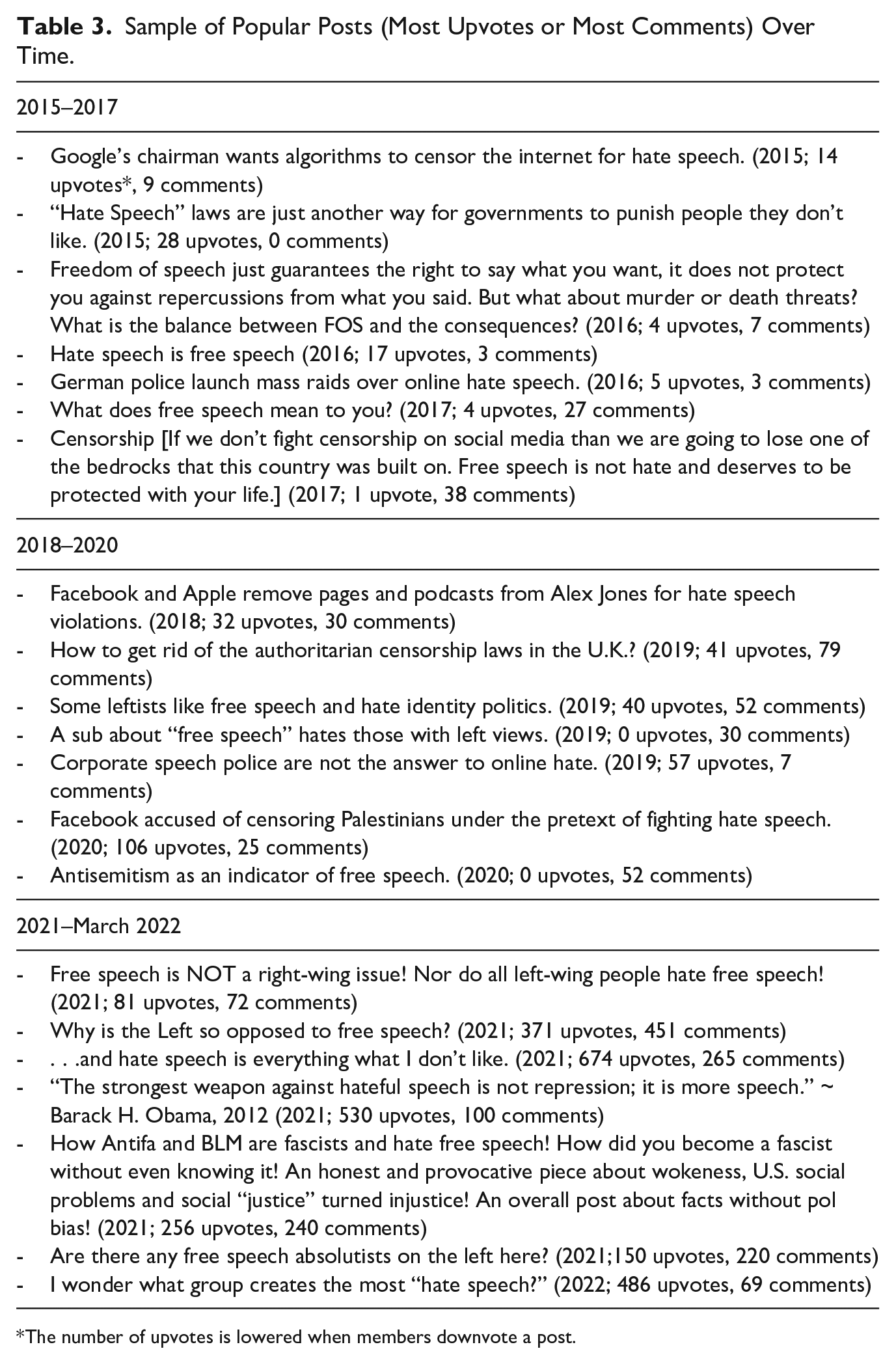

As previously noted, the r/FreeSpeech subreddit grew tremendously starting around November 2018. I looked at posts going back to 2015 to examine changes over time. First, it should be noted that it is unclear exactly what the catalyst was for the large influx of members around November 2018. While the polarizing U.S. midterm elections happened around then, very few posts discussed the elections. Overall, though, the subreddit appears to have become more polarized over time, particularly in the latest years of data collection (2021–2022). Before 2018, for example, most posts were nonpartisan and related to issues of censorship in Big Tech (e.g., Google banning hate speech). Several posts also related to the censorship of anti-Israel speech, the moral value of free speech and the ineffectiveness of hate speech bans (e.g., “why hate speech laws have more in common with fascism than democracy”), and free speech issues in other countries (e.g., Japan, Canada, and Germany). Very few posts related to the “culture wars” in the U.S., and very few posts presented free speech as a left-right partisan issue. In general, earlier posts received few comments, if any. In contrast, posts after 2018 were more politicized and presented free speech as an issue divided along ideological lines, for example, “Why are so-called free speech loving conservatives silent whenever Trump goes after SNL & ‘The Media’,” “Why are so many leftists trying to abolish free speech?” and “I wonder what group creates the most ‘hate speech?’” Please see Table 3 for a selection of popular posts over time (including year, number of comments, and number of upvotes).

Table 3. Sample of Popular Posts (Most Upvotes or Most Comments) Over Time.

Note: The number of upvotes is lowered when members downvote a post.

This left-right divide was especially visible with respect to “silences” (Kavanaugh and Maratea 2020) about traditionally leftist issues, such as book banning in U.S. schools. As Kavanaugh and Maratea (2020, 11) argue, dynamics of the online environment, such as moderation, censorship, and popularity algorithms, can restrict the liberatory potential of a platform as users lose control over the visibility of their words. Silences can then be an important opportunity for examining how features of the online environment might be shaping and restricting the textual material that does remain available, and whose views have been excluded. Interestingly, there were very few posts on banned books at all (approximately 20 from February 2021–February 2022), despite significant news coverage about conservative-led book banning and burning in U.S. liberal or left-leaning media during that time period (e.g., Goldberg 2021; Wilson et al. 2021). And for one of the posts that did discuss banned books, “Bad Takes: Even as we celebrate Banned Books Week, Texans keep asking for school board censorship,” only one person responded, and this was in defense of censorship, arguing that it was reasonable to limit children’s exposure to certain ideas. However, there were some exceptions; several users who identified as being on the political right were also critical of book bans and burning. For example, one user wrote, “Burning books is simply ridiculous. I certainly am right leaning, and I also am opposed to certain things being taught in a public school curriculum, but I am opposed to burning books, it is a failure in my eyes,” while another wrote, “These are the fringe for sure, don’t be fooled into thinking we’re all about that.”

As noted earlier, the subreddit also appears to be increasingly dominated by individuals on the political right, and there were several posts expressing resentment about this right-wing dominance (e.g., “A sub about ‘free speech’ hates those with left views”) or seeking to connect with others on the left (e.g., “Are there any free speech absolutists on the left here?”). It could be that this right-wing bias is mainly driven by U.S. users and not those from other countries, given increasingly popular messaging in U.S. right-wing media about free speech issues, cancel culture, and a politics of grievance (see Hochschild 2016; Kidder and Binder 2021; Maratea 2008; Marcus 2021). Increasing polarization on this subreddit also coincides with growing extremism and polarization—as well as a pervasive culture of “victimhood”—across the political spectrum both online and offline (Lerner 2022; Rozado and Kaufmann 2022).

Justifying Absolutism

Despite users’ seeming consensus around the First Amendment and the absence of hate speech laws in the U.S., members used a range of justifications to support their absolutist positions. Some users did not seem to think of hate speech as a problem, emphasizing legal arguments instead of moral ones. For example, as one user argued, “When people say hate speech is not free speech they are wrong, according to the highest judges of our land who hold seats meant to protect our rights.” Others echoed these statements, suggesting that hate speech is “imaginary” or “kinda like assault weapons. It doesn’t really exist outside of legislation.” And others emphasized that words cannot be harmful in the same way as physical violence. For example, one individual suggested that racial or other types of slurs are “just words, someone calling you a slur is not like someone assaulting you or whatever.” Another user stated that the U.S. courts do not care about “hurt feelings” and that “the people trust that the Supreme court protect our rights using logical reasoning not sensitive irrational feelings, in order to prevent both Anarchy and Biased laws.” Some even used hate words in what appeared to be “displays” of their freedom of speech, for example, by using racial slurs or insults directed at LGBTQ+ communities, demonstrating a sense of entitlement and immunity within the space. In discounting the emotional harms associated with hateful speech (particularly for people in marginalized groups) and using language and provocations that would ordinarily be socially sanctioned in the larger public sphere, these users “weaponized” the unlimited free speech that the forum claims to provide (Bangstad 2014; Eschmann 2019; Scott 2018; Sultana 2018). As in many other online environments, covert racism becomes overt, or “unmasked,” on this forum, with implications offline: racial dynamics online can impact how people understand and respond to racial dynamics offline (Eschmann 2019, 433).

Taking this argument further, some members believed that people should not be held responsible for the consequences of their speech, even if these consequences are violent. Illustrative of this, the two most upvoted comments among those analyzed are as follows: “In a perfect world even a call on violence is free speech, but violence itself is forbidden” (upvoted 53 points), and “Free speech is always ‘free speech’ without restrictions” (upvoted 42 points). Others debated where responsibility should lie if an individual murders in response to someone else’s request. As one user asked, “If I say something that you interpret as me asking you to kill someone, how am I responsible for you going and killing that person (especially if that’s not what I meant)?”, later suggesting that mobsters following orders “can just as easily choose to refuse.” Many agreed with this statement, although others disagreed, for example, by arguing that Hitler was indeed responsible for Nazi soldiers’ actions. To some users, then, only individual actions matter, not words, illustrating a more individualistic and extreme view of personal responsibility and agency, which may be shaped by aspects of American culture (Bellah et al. 1985).

In contrast, other users justified their absolutist position by arguing that censorship is inherently undemocratic. For example, one user argued that hate speech, while harmful, is an expression of dissent within tolerant societies, stating, “Now, the reason why I think that hate speech should be protected is that, in societies built around the ideals of tolerance and diversity, hate speech is dissent. A society in which intolerance is not expressed is not a tolerant society. One in which intolerance is dealt with in democratic ways might be.” The same user then suggested that those responsible for defining the limits of free speech should consider the harms of hate speech and how it grows, but questioned the desirability of hate speech laws, rhetorically asking, “do we really want to live in a so-called ‘democratic’ society in which the only thing keeping such horrors at bay is not letting people speak? . . . For free speech to exist, we must tolerate the existence of hate speech.” Instead, users argued that they would prefer to come to their own conclusions about whether different views “hold merit,” thus advocating for a “marketplace of ideas” position on free speech (Chemerinsky 2018).

Similarly, some argued that hate speech is too subjective to regulate effectively, particularly at the federal level or in the public sphere (as opposed to private settings), where leaders could abuse their power to censor. For example, one user asked: “How are we going to determine what hate speech is?” arguing that, with hate speech laws in place, any person in power would have the authority to send individuals to jail based on their subjective understanding of hate speech. Similarly, one user argued, “If you want to criminalize speech while ‘your guy’ is in power, you have to be OK with letting ‘the other guy’ restrict your speech.” Some referred to then-U.S. President Donald Trump, suggesting that restrictions on any speech would be taken advantage of by Mr. Trump as a “tool for power” to avoid political speech and dissent. Similarly, another stated, “I understand that hate speech upsets people and why it might seem attractive to some people to prohibit it. They just don’t understand where it would be leading. It definitely wouldn’t be good.” In this way, these users made what is often referred to as a “slippery slope” argument. A slippery slope argument is defined by its logical leap from an acceptable situation (e.g., a reasonable policy decision) to a dangerous, unacceptable outcome (e.g., the collapse of moral society) (Walton 2015). Yet, while slippery slope arguments are typically understood as a logical fallacy, as Walton (2015) argues, they can be reasonable in some cases depending on the specific circumstances, for example, if the agent controlling the dreaded outcome could plausibly lose control.

Several members also expressed disapproval of political correctness (PC) culture, particularly on the political left, and its potential impact on discourse. One user described how, on a separate subreddit, they had observed a person say that there is no freedom of speech in the U.K. because of PC culture, and then “there was probably more than 20 people saying if you have been told you can’t say something it must be because your a cunt and were saying nasty things.” The user argued that no one had bothered to ask the individual what types of things they had been talking about before concluding that they should not be talking about it. Another user noted that U.K. subreddits are “the worst” for this behavior and that “it’s not possible to have a discussion about freedom of speech, because anyone who even wants to discuss the concept must be ‘bigoted’ in some way.” Similarly, one user suggested that supporting “total freedom” is now considered the new “Nazim” by the political left, while others claimed that “hate speech is just speech that Liberals hate.” Some believed that young people drive this extremist PC culture. For example, as one user stated, “we’ve got an entire generation that has been conditioned and encouraged to think that being offended is the same thing as being a victim.” It should be noted, though, that concerns about PC culture have been around for quite some time: as Loury (1994, 428) wrote almost 30 years ago, “Political correctness is an important theme in the raging ‘culture war’ that has replaced the struggle over communism as the primary locus of partisan conflict in American intellectual life.”

In one particularly vivid story denouncing PC culture, one user, who claims to be a university student, described how another student attacked their conservative campus organization. As the user explained, this other student destroyed their “signs, table, buttons, stickers, and personal belongings all in the name of ‘hate speech isn’t free speech.’” Although it is impossible to validate this claim, this comment brings up the question of whether some conservative or centrist individuals within predominantly liberal settings (e.g., on left-leaning college campuses) feel excluded from the dominant ideology or moral worldview, and as such, interpret the campus environment as hostile. This hostility may drive the high rates of self-censoring behavior reported among college students (College Pulse 2021; Knight Foundation-Ipsos 2022), resentment, and perhaps ultimately, prompt participation in communities that claim to promote ideological plurality, such as free speech groups. This explanation would be consistent with evidence that individuals’ moral values (e.g., the importance attributed to free speech) are not static but are triggered by social situations and one’s identity in relation to others in the environment (Luft 2020).

Other users were more concerned about censorship on social media websites and its impact on the world offline. As one user explained, Twitter, Reddit, Instagram, and other social media websites “all openly and unashamedly remove people who advocate for anything with right leaning ethos or defend constitutional rights,” while allowing individuals from the extreme left to “do whatever the hell they want, calls to violence, racism, spread misinformation as fact.” Another user spoke on behalf of the subreddit’s members, stating that “Most of us here are speaking out against what is simply an overt attempt to control people’s online activity in the hopes of influencing society at large,” suggesting that, while there’s still a difference between reality and the reality of “Twitter mobs,” it “won’t always be like that.” While these users were not worried about hate speech laws and policies per se, they feared that a growing culture of internet censorship and mob justice would bleed into the offline world.

Conclusions and Future Directions

In this study, I aimed to describe the moral discourse of ordinary individuals embedded in a free speech community. I examined how these individuals understand the boundaries of free speech and hate speech, how people justify their arguments, the issues most salient to them, and what might motivate them to participate in the community. I did this while considering the social context under which these dynamics unfold: the r/FreeSpeech subreddit online environment. I found that users described hate speech as being within the bounds of protected free speech and assumed a U.S. context. Comments that differed on these points or even contemplated other perspectives were typically downvoted or criticized. Still, users expressed a range of moral and legal arguments to justify this absolutist position, highlighting the nuances in contemporary free speech discourse within online spaces. Building on Howard’s (2019) claim about the inadequacy of the “balance of moral values” frame around free speech issues, my findings suggest that the debate does not represent a simple opposition between those who support justice versus liberty, the left versus the right, or any other simple dichotomy.

Overall, the data suggest that users are motivated by their desire to advocate for a more absolutist, or negative, view of free speech as a principle, express concerns about authoritarianism and censorship, air grievances about what they perceive to be an overly punitive PC culture, connect with like-minded individuals, and philosophize about free speech and its practical implications. Several brought attention to the perceived ambiguities and injustices involved in censorship, fearing a “slippery slope” of speech restrictions. Others appear motivated by a desire to galvanize a perceived ideological other or use the space to test the limits of their free speech through provocations using denigrating language.

My results also suggest that the forum has become increasingly polarized along left-right ideological lines over time, particularly in the most recent years (i.e., 2021–2022) of data collection, and increasingly dominated by right-wing views. This last finding is in line with recent evidence connecting right-wing ideology to free speech absolutism, likely driven by messaging from right-wing organizations and media outlets (Kidder and Binder 2021; Zannettou et al. 2018). However, there were some exceptions to this, as several commenters raised concerns about authoritarianism on the political right. Lastly, through Reddit’s downvoting feature, the moderators’ provocative stance, and the increasingly hostile environment of the forum, people on the political left, people from outside of the U.S. or whose views diverged from the First Amendment, and people who disagreed with the moderators may have been pushed off the forum, leaving only a relatively narrow window of acceptable views.

One ideological position that appears to connect many of the members across time is libertarianism. The libertarian-authoritarian ideological dimension is a second dimension, internally stable and independent of the right-left one, on which British voters’ fundamental political values can be mapped (Feldman 1988; Heath, Evans, and Martin 1994; Tilley 2005). Libertarianism on the left and right can look very different, but both are centered on the rights of individuals to pursue the “good life” (Vallentyne 2000). It could be that a libertarian ideology, as well as a distrust of the government and its misuse of censorship, is more strongly tied to absolutist perspectives on free speech than conservatism. (Although, interestingly, some members of the subreddit explicitly appealed to the law, which would be inconsistent with a libertarian ideology.) The findings from this study raise questions about the structural and life-course factors that may lead some individuals to adopt more libertarian attitudes or distrust the government, which could be explored in future studies. Research on forums that are not U.S.-dominated is also needed.

To conclude, the findings from this study provide a humble starting point for further investigation into the first-hand perspectives of individuals on all sides of this debate and the moral language they use (cf., Bellah et al. 1985; Jensen 1998; Martin 2011). Principles and values such as freedom of speech, liberty, justice, and dignity cannot be divorced from the meanings and contexts in which they emerge (Norton 2013). Any research on moral action suffers when it does not consider the wide set of values, identities, meanings, practices, situations, and environments that make up the moral, social world (Abend 2011; Hitlin and Vaisey 2013). Individual and situational-level experiences likely interact with structural factors and media narratives to shape people’s moral worldviews: what they view as the most pressing concerns, how they weigh and interpret different moral values, and why they choose to act on a particular issue. Understanding these moral worldviews from the perspective of those who experience them, and investigating the factors that shape them, is crucial for reducing moral conflict and polarization on a wide range of social problems.

Acknowledgements

I am deeply grateful for the invaluable feedback I received from Amin Ghaziani, Seth Abrutyn, Howard Ramos, and Ryan Jamula on earlier drafts of this article. I also thank Yasmin Koop-Monteiro and Adam Pepi for their helpful insights at all stages of this project.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: support from the Canadian Social Sciences and Humanities Research Council (SSHRC).