Abstract

Personality self- and informant-reports have been ascribed complementary value based on the asymmetric knowledge of the two perspectives. However, this study is the first to investigate what personality (item) content is reflected in the shared and unique components in multi-rater personality judgments. In two large data sets (Sample 1: 664 targets/1,615 informants; Sample 2: 478 targets/1,434 informants), we used latent variable models to separate judgments into variance that is shared across targets and informants (the Trait factor), unique to self-reports (Identity), and unique to informant-reports (Reputation). Then, we predicted the personality items’ loadings for each factor from the items’ content. This included items’ affective, behavioral, cognitive, or desire-related content, observability and evaluativeness, and centrality to identity or reputation. We found that Trait consensus was generally promoted by items reflecting observable, behavioral, but also affective content. Unique self-perceptions were captured especially by cognitions and non-observable content. Evaluativeness had inconsistent effects across samples. Similarly, unique informant-views reflected different content across samples. Both may depend on the types of informants or the available item sample. These insights build the foundation for leveraging the power of multi-rater perspectives on personality for advancing theory and measurement across different perspectives.

Plain language summary

Someone’s personality can be judged from two different perspectives: by the person themselves or by someone else (e.g., a friend). Self-judgments and judgments made by others show some agreement, but it typically is moderate: That is, there are some things about our personality that everyone agrees on, but there are also things that only we think about ourselves (our identity) or that only others think about us (our reputation). This may have different reasons: For example, other people can only observe what we do and say, whereas we also know what we are thinking and feeling. In this study, we wanted to find out what content makes up the different perspectives of the self and others on someone’s personality. In two samples, people judged their own personality and were also judged by 1–3 people from their social networks (informants) by answering personality questionnaires. The samples, respectively, included 664/478 people judging themselves and 1,615/1,434 informants. We found that self and others agreed more on questions describing personality traits that are observable from the outside or describe behaviors (e.g., “talks a lot”), but also emotions (e.g., “often feels blue”). For traits that cannot be easily observed, especially if they concern how we think (e.g., “believes they are better than others”), people tend to have more unique self-perceptions. There was no clear pattern for informants’ unique views: The content of a person’s reputation may depend on the type of informant (e.g., friend, coworker, or stranger). Understanding the shared and unique components in personality self- and informant-judgments is important for different reasons: Researchers may develop personality questionnaires specifically for self- or informant-judgments rather than assigning everyone the same questions in spite of their differentiated insights. It also helps decide whose perspective is relevant in a given context and how to integrate their value.

Introduction

Personality research has a long history of relying heavily on self-report questionnaires to assess personality traits (Robins et al., 2007; Vazire, 2006). Informant-reports, though having their own tradition in personality psychology, have mostly been used by researchers to bolster the findings of self-reports (e.g., Hofstee, 1994; McCrae & Costa, 1982; Norman, 1963). That is, the perspective of others has generally been treated as an alternate and interchangeable assessment method. In recent decades, however, discussion has increasingly turned toward understanding the unique and complementary value of informant-reports. In particular, self- and informant-reports may be uniquely suited to provide insights into different traits due to their asymmetrical access to different target information (e.g., Vazire, 2010; Vazire & Mehl, 2008). For instance, informants may be better poised for describing outwardly observable behavioral manifestations of a target’s personality, whereas targets may be better poised to evaluate patterns of internal affect and cognition. This multi-rater research not only furthers our understanding of personality judgments but also has implications for their application. For example, these insights position self- and informant-reports as appropriate predictors for different criteria that are aligned with their different perspectives.

Despite the possibilities offered by more recent multi-rater research, personality psychologists have not yet incorporated its insights when building personality assessments. Most typically, questionnaires are developed using self-reports, and informant-reports are created by simply rephrasing items from first- to third-person pronouns (McCrae, 1994; McCrae & Weiss, 2007). Such approaches do little to capitalize on the differentiated perspectives of self- and informant-reports (McCrae, 1994; Olino & Klein, 2015). Capitalizing on them could include, for example, considering that self-reports may be better poised to answer items covering content that is less easily observable by outside perceivers (e.g., “trusts others” vs. “talks a lot”). Informant-reports, on the other hand, may offer more of a bird’s eye perspective on highly evaluative compared to more neutral item content (e.g., “completes tasks successfully” vs. “avoids crowds”). Additionally, researchers and practitioners are often interested in representing the discrepant views offered by self- and informant-reports (e.g., Kluemper et al., 2015). For example, this may be relevant in clinical assessments: a clinician may report on different questions in a structured interview than what the client self-reports on in a questionnaire, and discrepancies in the perspectives of self-, clinician-, and informant-reports may be part of a diagnosis. In some fields such as child clinical psychology, practitioners may be relatively more used to integrating multi-rater perspectives and including informant-reports. Such integration has, however, not been adopted in other applied settings or in personality psychology research at large. In addition, there are currently no systematic ways for assessing and scoring the shared and unique insights of self- and informant-reports contained in personality questionnaires (which could include developing questionnaires specifically for multi-rater applications). This, however, could be a promising approach to future personality assessment.

Integrating multi-rater perspectives into personality assessments requires answers to a more fundamental question: what personality insights are (a) shared by targets and informants, (b) held solely by the target, and (c) held only by informants? Personality research has acquired substantial knowledge on individual parts of this picture. This includes knowledge about factors that drive the moderate self-other alignment typically found in person judgments and about factors that are unique to either perspective. Yet, the unique and shared insights held by targets and informants have never been simultaneously disentangled from one another at the item level to see what type of personality content each reflects. That is, there is currently no evidence on what unique insights are contained in self-reports (or informant-reports).

This study aims to build the foundation for optimizing the measurement and harnessing the power of multi-rater perspectives in personality. To this end, we build on a recently introduced integrative approach to disentangle the shared and unique components in multi-rater judgments. Specifically, the Trait-Reputation-Identity (TRI) Model (McAbee & Connelly, 2016) uses latent variable models to separate multi-rater judgments into variance that is (1) shared across targets and informants (the Trait factor), (2) unique to informant-reports (Reputation), and (3) unique to self-reports (Identity). We apply this model to two relatively large samples of multi-rater personality judgments. Then, we evaluate what personality insights each component reflects by analyzing the content of items that contribute most to a given factor, examining items’ (1) affective/behavioral/cognitive/desire-related content, (2) observability and evaluativeness, and (3) centrality to identity and reputation. This is informed by the combined insights from personality and person perception research on impression formation and self-other differences. The following sections discuss the theoretical prerequisites in more detail, explaining what we know about self-other differences in judgment formation, how multi-rater perspectives have been traditionally viewed, and how the TRI Model can work as a framework to disentangle unique and shared insights in multi-rater judgments.

Self-other differences in personality judgments

“From inside” and “from outside” reflect the two qualitatively different points of view from which a person can assess someone’s personality. One core finding of personality psychology is that measures solicited from these two perspectives—self- and informant-reports, respectively—usually align meaningfully but moderately (Connelly & Ones, 2010; Connolly et al., 2007; Kenny & West, 2010). That is, although a target and their informants share some understanding of the target person’s underlying traits, the target may also have unique self-perceptions, and others may hold views about the target’s personality independent of the target (Letzring & Funder, 2021; Vazire, 2010; Vazire & Mehl, 2008).

To understand how and why self-other differences in personality judgments arise, it is helpful to picture the process of judgment formation (e.g., Brunswik, 1956; Funder, 1995; Kenny, 1994). When making personality judgments, perceivers typically rate the target on a set of items that capture different traits (e.g., the Big Five; Goldberg, 1990). To make such a judgment, the perceiver first needs some information about the target. For example, the perceiver may draw on their observations of the target that span across different situations. There, the perceiver gains access to cues such as different behaviors that the target displays or the target’s physical appearance (Kenny, 1994). Perceivers may then form a judgment from that information by assigning meaning to the cues in regard to the trait they have to judge and integrating them (Kenny, 1994). Notably, this model applies to both self- and informant-reports (i.e., both are subsumed under the term perceiver). For example, Penny may have observed her classmate Tina in many different situations in school. When she is asked to judge Tina’s friendliness, Penny thinks of Tina often smiling at her and greeting everybody she meets as positive indicators. Similarly, when Penny is asked to judge her own sociability, she may think of how often she meets with friends or how exhausted she feels after social gatherings. We focus on three important reasons why self-other differences may occur during this process: (1) self and informant have access to different information; (2) self and informant are affected by different evaluative biases; and (3) self and informant have different goals when providing judgments.

Information availability

Perceivers can only directly use the information about the target that is available to them, which differs systematically depending on who the perceiver is (Funder, 1995; Vazire, 2010). Specifically, anyone but the target is an outside observer, which means the information available to them is limited to cues which the target emits while the perceiver is present. Moreover, these cues have to be observable, that is, perceivable by a present outside observer (i.e., “availability”; Funder, 1995; Furr, 2009). In person perception, this includes two types of information: (1) the physical appearance of the target and their associated belongings (e.g., their clothes) and (2) behaviors displayed by the target in situations available to the observer (Kenny, 1994). Behaviors in the narrow sense include all verbal expressions and movements, and they are by definition observable (Baumert et al., 2017; Furr, 2009). As external manifestations of personality, behaviors are considered the most important source of information for person judgments by outside observers (Funder & Sneed, 1993).

However, personality is not just behavior but someone’s characteristic pattern of affect, behavior, cognition, and desire (ABCD; Baumert et al., 2017; Wilt & Revelle, 2015). In short, affect refers to how people feel, cognition to how people think, and desire to what people want (Wilt & Revelle, 2015). Thus, personality comprises internal traits that become observable only if the target manifests them behaviorally (e.g., by talking about feeling anxious or displaying a tense facial expression; Furr & Funder, 2007). The levels of observability can also vary within each ABCD-component. For example, some affective traits may be linked to various behaviors that manifest in many situations (e.g., anxiety expressed as pacing, sweating, and irritability), whereas others manifest rarely (e.g., individuals concealing depressive thoughts and feelings from others for decades). One relevant aspect here is also the level and context of acquaintance between target and informant. Specifically, close acquaintances may have observed the target in more and more intimate situations where they witnessed manifestations of thoughts or emotions otherwise kept internally by the target (e.g., Colvin & Funder, 1991; Funder, 1995; Vazire, 2010).

The target, on the other hand, is the one person with privileged (i.e., direct) access to a host of self-information including their own thoughts, feelings, and desires (Osberg & Shrauger, 1990; Vazire, 2010). Targets are also the only individuals to witness their personality in every situational context. Thus, they are able to observe, and even control, many of their behaviors. Compared to informants, however, targets do not have a full visual of their body. This limited salience may lead targets to be less aware of some of their own behaviors compared to simultaneous internal processes (DePaulo, 1992; Vazire, 2010). Subtle behaviors (e.g., minor movements/facial expressions) may even elude the target’s awareness.

In summary, there is a knowledge asymmetry where self and informants have access to different types of information: Informants rely on observable behaviors, whereas the self has privileged access to their own unobservable emotions, thoughts, and desires in addition to observing their own behaviors. Accordingly, self-other agreement should be higher for observable information because it is accessible to both the self and informants (Kenny, 1994; Vazire, 2010). Similarly, self-other agreement should be higher for behavioral than for affective, cognitive, or desire-related traits. Previous research supports this. Higher self-other agreement has been found for personality domains deemed to be more observable (e.g., Funder & Dobroth, 1987; John & Robins, 1993; Watson et al., 2000) and to reflect primarily behavior (e.g., Conscientiousness and Extraversion) rather than internal patterns (e.g., Neuroticism and Openness; e.g., Connelly & Ones, 2010; Pytlik Zillig et al., 2002). Thus, we expect the shared perspective in multi-rater personality judgments to reflect observable and behavioral content. On the flip side, there is limited knowledge about what is reflected in the unique perspectives if they are separated from shared insights. We may reasonably expect unique self-views to center around unobservable content to which targets have exclusive access. The access of informants to observable content, however, may lead to different outcomes: On the one hand, we anticipate that informants draw heavily on more observable information, which could result in more unique views on observable traits. On the other hand, we expect observable items in particular to generate self-informant agreement, and it is unclear whether and how much variance remains for informant uniqueness, which could result in null or negative relationships between informant uniqueness and observability.

Evaluative biases

If information is available to the perceivers, the next question is if and how they will use it to make their judgment. In this stage of cue utilization, perceivers may detect and utilize available trait information (Brunswik, 1956; Funder, 1995). Beyond being impartial perceivers of cues, however, individuals are susceptible to evaluative biases that impact how trait information is detected and utilized (Funder, 1995; Vazire, 2010). Specifically, perceivers may have an overall attitude toward the target that can range from positive to negative (Leising et al., 2015). Notably, this is true for both informant-reports of another person and perceivers’ self-reports. Personality traits (and their associated items) vary in their social desirability, and traits that are especially desirable (e.g., “intelligent”) or especially undesirable (e.g., “immoral”) are likely to elicit more evaluative bias than traits with more neutral social desirability (e.g., “quiet”; e.g., Edwards, 1953; John & Robins, 1993; Leising et al., 2015). Positively biased perceivers, for example, will endorse positive traits more and negative traits less (Borkenau et al., 2009; Leising et al., 2015). As highly evaluative (i.e., very positive/negative) items will be more strongly affected by these biases than more neutral items, “non-evaluativeness” is a property associated with greater judgmental accuracy.

Self-reports of personality are widely regarded as reflecting biases geared toward creating or maintaining a positive self-image. In particular, targets can self-enhance or self-protect by interpreting traits in a self-serving way, externalizing negative outcomes, or choosing self-serving comparisons (e.g., Alicke & Sedikides, 2009; Dunning, 1999; John & Robins, 1994). Notably, however, targets still vary considerably in their tendency to hold a more negative or positive self-image (Leising et al., 2015). Informants have sometimes been considered valuable based on the believed ability to judge evaluative information more objectively with their outsider perspective. However, this certainly does not mean informants are unaffected by evaluative biases: Close acquaintances, especially, will typically tend to like their targets which is reflected in the positivity of their ratings; strangers may take a comparably more negative or neutral stance (Allik et al., 2010; Kim et al., 2019; Vazire & Mehl, 2008). And even regardless of the target, perceivers have been shown to bring different levels of global positivity/negativity to their ratings (i.e., perceiver effects; e.g., Heynicke et al., 2022; Rau et al., 2021). The key point here is that if self and informants are affected by different evaluative biases, or by similar biases but in differing degrees or ways, they will agree more on non-evaluative information because it reflects those biases less (Vazire, 2010). Accordingly, previous studies tend to show lower self-other agreement for personality dimensions considered to be more evaluative (e.g., Agreeableness; Connelly & Ones, 2010; John & Robins, 1993).

Apart from the (unconscious) evaluative biases of perceivers, the evaluative nature of traits may also fuel more conscious impression management efforts by the target. Socioanalytic theory, for example, states that targets may aim to create a certain impression in their self-reports (Hogan, 1996; Hogan & Blickle, 2013). Moreover, targets may use impression management tactics in the information they make available to outside observers (Leary & Kowalski, 1990; Schlenker, 1980) by enhancing or limiting the manifestation of certain behaviors, thereby exacerbating the informational asymmetry based on its evaluative nature. Notably, both unconscious evaluative biases and conscious efforts to control the display of evaluative information would lead to less consensus on evaluative traits.

In the present study, we focus on social desirability as one prominent bias in personality judgments. Other biases may affect judgments beyond that: For example, targets may tend to maintain a consistent perception of themselves by ignoring inconsistent cues or focusing on intentions rather than behaviors (e.g., Funder, 1999; Sadler & Woody, 2003; Swann, 1981). This would affect which cues are translated at all into self-reports.

Perspective-dependent trait centrality

Besides availability or evaluativeness, traits may also differ in how central (i.e., relevant) their content and its implications are to targets and informants, which may in part depend on the different goals the different perceivers pursue when making judgments. Funder (1995) proposes the main purpose of judging others’ personalities is to predict the target’s future behavior. This allows perceivers to make decisions about their future with the target such as whether they want to continue to interact with the target. Following this perspective, informants should be interested in gaining and reporting accurate impressions of the target, but especially of traits that could affect the informant directly (e.g., traits with clear interpersonal consequences; Leising et al., 2014). Whereas accurately gauging the target and establishing an impression of them may be especially important for less intimately acquainted informants, well-acquainted informants may also be motivated by other goals. For example, informants may accentuate what they value about the target (i.e., why they have chosen to stay acquainted) which may in part depend on the informant’s own identity as well (see below). That is, in either case, there may be traits that informants consider to be more central to evaluating another person, and targets changing on those traits would strongly affect how the informants perceive them. Thus, we would expect more unique informant-views on traits that are more central to a target’s reputation.

In providing self-reports, targets may also have the general desire to appraise themselves accurately (e.g., Strube et al., 1986) and to convey their self-perceptions to others (Paulhus & Vazire, 2007). Following socioanalytic theory, targets may use self-reports to achieve a specific self-portrayal and thus reputation with others (Hogan, 1996; Hogan & Blickle, 2013). In any case, some traits will be more important to the target because a change on them would fundamentally alter their (intended) self-portrait, whereas other traits play a peripheral role to identity (Lee et al., 2009; Thielmann et al., 2020, 2023). We would thus expect more unique self-views on traits relevant to the target’s identity.

Summary

Differences in what type of personality insights the shared and unique perspectives of multi-rater judgments reflect may arise because (1) the self and informants have access to different types of personality information. Informants rely on observable behaviors emitted by the target, whereas the target has direct access to their (unobservable) affect, cognitions, and desires. (2) The self and informants tend to have evaluative biases, likely to cause their ratings to diverge. (3) Different traits may be central to the self and informants because of their implications.

These reasons may lead to differences in multi-rater perspectives related to the following characteristics of personality information: Their ABCD-content, observability, evaluativeness, importance for the target’s perspective, and their importance for outside observers. These characteristics are reflected by the person-descriptive items used to capture traits in personality questionnaires (Letzring et al., 2021). For example, an item may reflect a very evaluative and observable behavior (e.g., “insults people”) or a less well observable cognition (e.g., “has a vivid imagination”). Previous research has shown that such item characteristics can be rated by (relatively few) study participants with high reliability (Leising et al., 2014; Letzring et al., 2021; Wilt & Revelle, 2015).

Approaches to multi-rater perspectives on personality

The robust finding of the overlapping but distinctive perspectives of self- and informant-reports along with the myriad factors that may affect them have fueled long-standing debates among researchers on how traits can best be assessed and conceptualized. Broadly, three distinct perspectives have traditionally pervaded this discussion, each with different measurement implications.

First, some scholars argue that either self- or informant-reports reflect what they understand personality to be and thus should be the preferred reference point (e.g., Hofstee, 1994; Hogan, 1998; McCrae & Costa, 1982; Osberg & Shrauger, 1990). Under such formulations, either self- or informant-reports would be the more authoritative assessment. Second, some argue that more accurate personality measures come from averaging scores across self- and informant-reports to maximize the variance accounted for by the overlap in perceptions (e.g., Letzring & Funder, 2021). Although this will reduce the error variance associated with individual raters, it also treats any unique perspective of self- and informant-reports as error that will increasingly cancel out the more raters are averaged across. The underlying rationale is the same as when creating a scale score from multiple items: Averaging across multiple items produces a more reliable representation of a construct of interest. Item-specific variance is considered error and is increasingly averaged out as more items are included. Third, more contemporary scholars argue that self- and informant-reports contain asymmetries in knowledge about traits such that a preference for self- versus informant-reports should depend on which trait is being assessed and which criteria are being predicted (Vazire, 2010; Vazire & Mehl, 2008). For example, Carlson et al. (2013) found that personality self-reports were better predictors of internalizing personality disorders, but informant-reports were better predictors of externalizing personality disorders.
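The averaging rationale of the second approach can be made concrete with a minimal sketch. It rests on simplifying assumptions introduced here for illustration only: each of k raters' scores is the sum of a shared component T and a rater-specific component U_i, and the rater-specific components have equal variance and are uncorrelated with each other and with T.

```latex
\begin{aligned}
X_i &= T + U_i, \qquad i = 1, \dots, k, \\
\bar{X} &= \frac{1}{k}\sum_{i=1}^{k} X_i = T + \frac{1}{k}\sum_{i=1}^{k} U_i, \\
\operatorname{Var}(\bar{X}) &= \sigma_T^{2} + \frac{\sigma_U^{2}}{k}.
\end{aligned}
```

Under these assumptions, the shared variance is retained in full, whereas the rater-specific variance shrinks in proportion to 1/k, regardless of whether it reflects random error or a substantive unique perspective.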

Arguably, however, the value of multi-rater perspectives hinges on knowing what personality insights each perspective—shared and unique—contains. This cannot be achieved with these three approaches but requires simultaneously considering both the shared and unique perspectives across self- and informant-reports. This fourth approach is closely related to the knowledge asymmetries perspective but extends it in such a way that it becomes possible to disentangle the unique and shared perspectives from one another allowing us to subsequently examine what content they respectively reflect. The most recent and extensive elaboration of this approach is the TRI Model developed by McAbee and Connelly (2016). The TRI Model presents an extension and fusion of existing traditions, integrating insights from person perception, classic trait personality, social cognition, and socioanalytic theory. The model builds on prior work such as the Johari window (Luft & Ingham, 1955) and the Self-Other Knowledge Asymmetry Model (Vazire, 2010). It presents an analytical framework that enables the empirical disentanglement of the shared and unique perspectives in multi-rater personality judgments and is thus the missing component to test our assumptions.

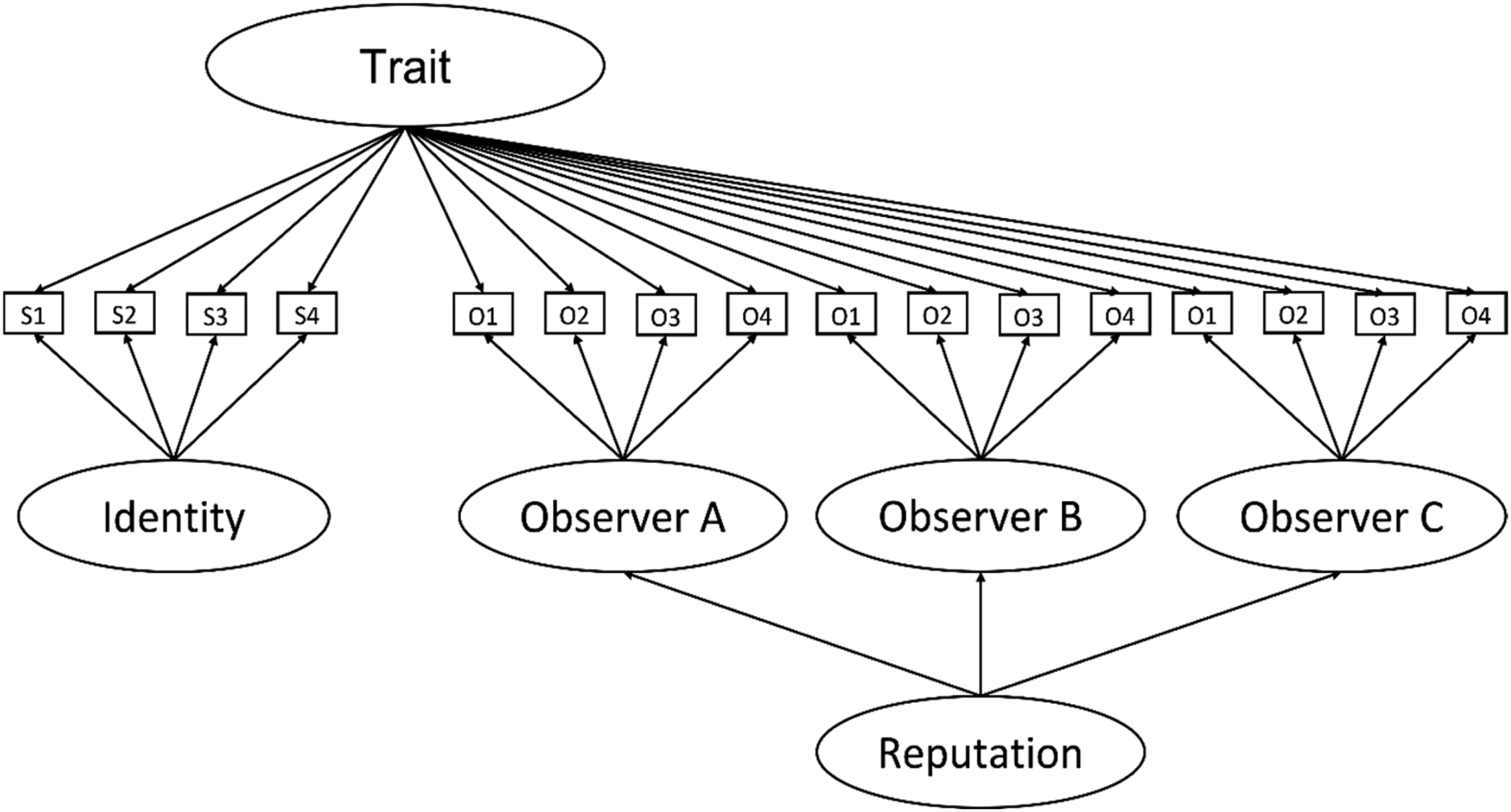

Figure 1. The Trait-Reputation-Identity Model by McAbee and Connelly (2016).

The TRI Model (see Figure 1) defines three different perspectives or areas of personality insights that can exist for a given target person.

The TRI Model is especially valuable as a methodological framework. Using latent variable modeling, it enables the extraction of Traits, Reputations, and Identity as latent factors by separating the variance of multi-rater judgments into shared and unique portions. This approach has proven to provide valuable insights in studies that have employed the TRI Model thus far (e.g., shedding light on the origins of gender differences in personality perceptions or the predictive value of reputations for job-related outcomes; Connelly et al., 2022; McAbee & Connelly, 2016).
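This variance separation can be sketched in equation form. The notation is introduced here for illustration only and is not taken from McAbee and Connelly (2016). It assumes standardized latent factors that are uncorrelated with each other and with the residuals, which a given application of the model may relax. For a single item, let S denote the self-report and O_k the report of informant k:

```latex
\begin{aligned}
S   &= \lambda_{T,S}\,T + \lambda_{I}\,I + \varepsilon_{S}, \\
O_k &= \lambda_{T,O_k}\,T + \lambda_{R,k}\,R + \varepsilon_{O_k}, \\[4pt]
\operatorname{Var}(S)   &= \lambda_{T,S}^{2} + \lambda_{I}^{2} + \operatorname{Var}(\varepsilon_{S}), \\
\operatorname{Var}(O_k) &= \lambda_{T,O_k}^{2} + \lambda_{R,k}^{2} + \operatorname{Var}(\varepsilon_{O_k}), \\
\operatorname{Cov}(S, O_k)   &= \lambda_{T,S}\,\lambda_{T,O_k}, \\
\operatorname{Cov}(O_k, O_l) &= \lambda_{T,O_k}\,\lambda_{T,O_l} + \lambda_{R,k}\,\lambda_{R,l} \quad (k \neq l).
\end{aligned}
```

Under these assumptions, self-informant covariance runs only through the Trait factor, whereas agreement among informants beyond the Trait factor is attributed to Reputation, and self-report variance not shared with informants is attributed to Identity.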

The present study

The goal of this study is to investigate what defines Traits, Reputations, and Identity (i.e., the shared and unique contributions) in multi-rater personality judgments. More specifically, we want to investigate what personality insights each perspective reflects: (1) How do Traits, Reputations, and Identity differentially reflect content components of personality—namely, affect, behavior, cognition, and desire? (2) How do Traits, Reputations, and Identity reflect information depending on its observability and evaluativeness? (3) Do the respective unique contributions of the Identity and Reputation factors reflect information depending on its deemed importance for the target themselves versus for an outside observer?

We preregistered our expectations based on the rationale presented above (https://osf.io/gqmsf); they are as follows:

The Trait factor captures shared variance across self- and informant-judgments. Thus, Traits should reflect information that is available to and used similarly by self and informants.

T1: Information is more strongly reflected in the Trait factor the higher its behavioral component is. The Trait factor reflects behavior more than affect, cognition, or desire.

T2: The Trait factor reflects observable information and non-evaluative information more.

The Reputation factor captures variance unique to informant-judgments. Thus, Reputations reflect information that is either exclusively available to and/or used by informants or that is used differently by informants than by the self.

R1: Information is more strongly reflected in the Reputation factor the higher its behavioral component is. Reputation reflects behavior more than affect, cognition, or desire.

R2: Reputation reflects evaluative information more.

R3: Reputation reflects information deemed more important for an outside observer.

The Identity factor captures variance unique to self-judgments. Thus, Identity reflects information that is either exclusively available to and/or used by the self or that is used differently by the self than by informants.

I1: Information is more strongly reflected in the Identity factor the higher its affective, cognitive, or desire-related component is. Identity reflects affect, cognition, and desire more than behavior.

I2: Identity reflects items low in observability and items high in evaluativeness more.

I3: Identity reflects information deemed more important for the self.

We do not define expectations for the connection of observability with Reputation and treat this as an open research question. This is based on the above-mentioned consideration that, although informants should draw more heavily on observable information, we cannot predict whether this will generate informant-rating variance beyond Trait consensus, which should itself be driven by observability. Additionally, the item characteristics are likely not fully independent from one another (e.g., behavioral items may also be more observable). Thus, we also explore whether their effects overlap.

Method

Testing our hypotheses requires the following steps: First, we use the TRI Model to construct latent variable models specifying Trait, Reputation, and Identity factors in samples of personality self- and informant-reports. This results in factor loadings for each item on each perspective (Trait, Reputation, and Identity). Second, we use multilevel regression to predict the factor loadings from the items’ characteristics (e.g., their ABCD-content). Our primary preregistered analysis (https://osf.io/gqmsf) performs these steps on a large multi-rater data set used for the first time in the present study that stems from the Scarborough Integrative Perspectives on Personality Project. Any deviations from the preregistration are explicitly stated. We then replicate the procedure with an existing public data set from the Eugene-Springfield Community Sample (Goldberg, 1999; Goldberg et al., 2006). We report how we determined our sample size, all data exclusions (if any), all manipulations, and all measures in the study. All supplements, materials, data, and code referenced in the following can be found on the Open Science Framework (OSF) at https://osf.io/wq3jm.
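The second step can be illustrated with a deliberately simplified sketch. It replaces the multilevel regression with an ordinary single-level least-squares fit on one predictor, and every name and value in it (`items`, `observability`, `trait_loading`, `fit_line`) is hypothetical toy material for illustration, not data or code from the study.

```python
from statistics import mean


def fit_line(x, y):
    """Return (intercept, slope) of the least-squares line y = a + b * x."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


# Hypothetical toy values, NOT the study's data: one row per item, holding a
# rated item characteristic and the item's loading on one latent factor.
items = [
    {"item": "item_a", "observability": 1.0, "trait_loading": 0.20},
    {"item": "item_b", "observability": 2.0, "trait_loading": 0.30},
    {"item": "item_c", "observability": 3.0, "trait_loading": 0.40},
    {"item": "item_d", "observability": 4.0, "trait_loading": 0.50},
]

intercept, slope = fit_line(
    [row["observability"] for row in items],
    [row["trait_loading"] for row in items],
)
print(f"intercept={intercept:.2f}, slope={slope:.2f}")
```

A positive slope in such a fit would correspond to more observable items loading more strongly on the factor in question. A multilevel specification additionally accounts for the nesting of the loadings, which this sketch ignores.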

Sample 1: Scarborough Integrative Perspectives on Personality Project (SIP3)

The SIP3 is a large-scale multi-year data collection effort. Specifically, between 2016 and 2022, cohorts of first-year students from the co-operative management program at the University of Toronto Scarborough were invited to partake in an online self-assessment and subsequently invite 3–6 people from their own social networks to provide informant assessments to receive personality feedback as part of their first-year coursework (REB #32440). In addition, in 2018, management co-op students beyond their first year were recruited as paid research participants to complete the same multi-rater personality inventory (along with an extended set of assessments not analyzed in the current study; REB #36048). Informants were primarily friends (86%) or other personal acquaintances (8%, e.g., significant others). A small portion of informants were professional acquaintances (5%), who were omitted from the present study. Common across all cohorts are personality self- and informant-ratings on the IPIP-NEO-120 (Johnson, 2014).

Measures

Targets and informants, respectively, filled out first- and third-person versions of the IPIP-NEO-120 (Johnson, 2014) which assesses the Big Five personality domains, each with six facets assessed with four items. Responses were given on a 5-point Likert scale (

Participants

We merged the data sets from all cohorts and applied the following general exclusion criteria: (1) Targets were excluded if they agreed to participate but did not share their ratings with the team for research use. To eliminate careless responders, we excluded participants who (2) completed the entire assessment procedure in less than 3 minutes, (3) had 50% or more missing values in the IPIP-NEO-120 ratings, or (4) had no or minimal variance in the IPIP-NEO-120 ratings (i.e., items were presented in two blocks of 60 items, and participants were excluded if the variance was <.10 in either block). (5) We excluded duplicates, that is, targets or informants who had participated again in a second cohort, keeping only their original ratings. (6) We aimed to exclude falsified informant entries by checking for multiple (i.e., more than two) responses coming from the same IP address. (7) We excluded informants who were left without a target after the previous criteria had been applied, as well as targets without any informants. (8) Last, for targets with more than three informants, three were randomly selected. The three informants per target were randomly assigned to the roles of Observers 1−3 for the purpose of estimating TRI Models (see below). For targets with fewer than three informants, vacant informant roles were included as missing values.
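Criteria (4) and (8) lend themselves to a short illustration. The following Python sketch is not the authors' analysis code (which is available on the OSF); the function names, the use of the sample variance, the handling of blocks with fewer than two responses, and the optional seed are all assumptions made for illustration.

```python
import random
from statistics import variance


def has_minimal_variance(ratings, block_size=60, threshold=0.10):
    """Return True if any block of item responses has variance below threshold.

    `ratings` is a list of numeric responses in presentation order;
    missing responses (None) are ignored within each block.
    Assumption: sample variance (n - 1 denominator) is used.
    """
    blocks = [ratings[i:i + block_size] for i in range(0, len(ratings), block_size)]
    for block in blocks:
        observed = [r for r in block if r is not None]
        # Assumption: a block with fewer than two responses is flagged,
        # because its variance is undefined.
        if len(observed) < 2 or variance(observed) < threshold:
            return True
    return False


def assign_observer_roles(informant_ids, max_informants=3, seed=None):
    """Randomly keep up to three informants and assign them to Observer roles.

    Vacant roles are filled with None, i.e., treated as missing.
    """
    rng = random.Random(seed)
    chosen = rng.sample(informant_ids, min(len(informant_ids), max_informants))
    chosen += [None] * (max_informants - len(chosen))
    return {f"observer_{k + 1}": informant for k, informant in enumerate(chosen)}
```

Because `random.sample` returns the selected informants in random order, the same call covers both the random selection of three informants and their random assignment to the three Observer roles.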

The final sample used in the present study includes 664 targets and 1,615 informants; 409 targets had three informants, 133 targets had two, and the remaining 122 targets had one. This total sample size was determined by a combination of methodological concerns (i.e., surpassing

Sample 2: Eugene-Springfield Community Sample (ESCS)

To investigate the robustness of our findings, we replicated our analyses with a different multi-rater data set of personality judgments. Specifically, we used a subsample of the ESCS, a longitudinal data collection effort that started in 1993 recruiting participants from available lists of homeowners who completed a host of psychological measures. The relevant subsample consisted of 478 targets who provided self-reports on the Big Five Inventory (BFI; Benet-Martínez & John, 1998; John & Srivastava, 1999) in 1998 and three informants per target from their respective social networks (

Analytic approach

Estimation of TRI Models

In a first step, we constructed latent variable models with confirmatory factor analysis specifying Trait, Reputation, and Identity factors in accordance with the procedures in McAbee and Connelly (2016). The TRI Model is a bifactor structure (see Figure 1) in which each item loads on the Trait factor and one rater factor (Identity for self-reports or Reputation for informant-reports); informant-specific Reputation factors also load on a higher-order Reputation factor. Consistent with McAbee and Connelly (2016), Reputation and Identity were kept orthogonal to help stabilize the model and to preserve the Trait factor as representing consensus between self- and informant-reports. We allowed residuals for like items to correlate across raters. All latent factor variances were fixed to 1, and informants were modeled as interchangeable (i.e., factor loadings, intercepts, residual variances, and residual covariances for like items/factors were constrained to equality across informants, and model fit estimates were adjusted using Interchangeable-Saturated Models; Olsen & Kenny, 2006). Missing values were handled using robust maximum likelihood estimation. To aid interpretability when encountering common problems during model estimation, we refit models following the a priori strategies of (a) constraining factor loadings to be in a consistent direction and (b) fixing residual variances to zero for any items with initially negative residual variances. Latent variable models were fit using Mplus (version 8.7; Muthén & Muthén, 1998−2017). Analysis code is available on the OSF. In Sample 1, we fit a separate TRI Model for each of the 30 IPIP-NEO-120 facets (4 items per facet). In Sample 2, we drew on analyses reported in McAbee and Connelly (2016) that fit separate TRI Models for each Big Five domain (8–10 items per domain).
Fit statistics for the TRI Models can be found in Supplement C for Sample 1 and in Table 1 of the original paper for Sample 2 (McAbee & Connelly, 2016, p. 576). Indices of close fit were in similar ranges for both samples, largely within typical benchmarks showing moderate to strong fit (e.g., Hu & Bentler, 1999; Kline, 2015).
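
As a compact summary of the TRI structure described above, the measurement model can be sketched as follows. The notation is chosen here for illustration and is an assumption, not taken from McAbee and Connelly (2016): $i$ indexes items, $t$ targets, and $k \in \{1, 2, 3\}$ informants.

```latex
\begin{align}
y^{\text{self}}_{it}  &= \nu^{\text{self}}_{i} + \lambda^{T,\text{self}}_{i}\, T_t + \lambda^{I}_{i}\, I_t + \varepsilon^{\text{self}}_{it} \\
y^{\text{inf}_k}_{it} &= \nu^{\text{inf}}_{i} + \lambda^{T,\text{inf}}_{i}\, T_t + \lambda^{R}_{i}\, R_{kt} + \varepsilon^{\text{inf}_k}_{it} \\
R_{kt} &= \gamma\, R_t + \zeta_{kt}
\end{align}
```

Here $T_t$ is the Trait factor, $I_t$ the Identity factor, $R_{kt}$ the informant-specific (first-level) Reputation factor, and $R_t$ the higher-order Reputation factor, with $\operatorname{Cov}(I_t, R_t) = 0$. The informant intercepts and loadings ($\nu^{\text{inf}}_{i}$, $\lambda^{T,\text{inf}}_{i}$, $\lambda^{R}_{i}$, $\gamma$) carry no index $k$ because informants are modeled as interchangeable. The disturbance $\zeta_{kt}$ presumably corresponds to what is reported as Observer Uniqueness in the Results; treating the Trait factor as orthogonal to both rater factors is the usual bifactor assumption and is assumed rather than stated here.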

Item content and characteristics

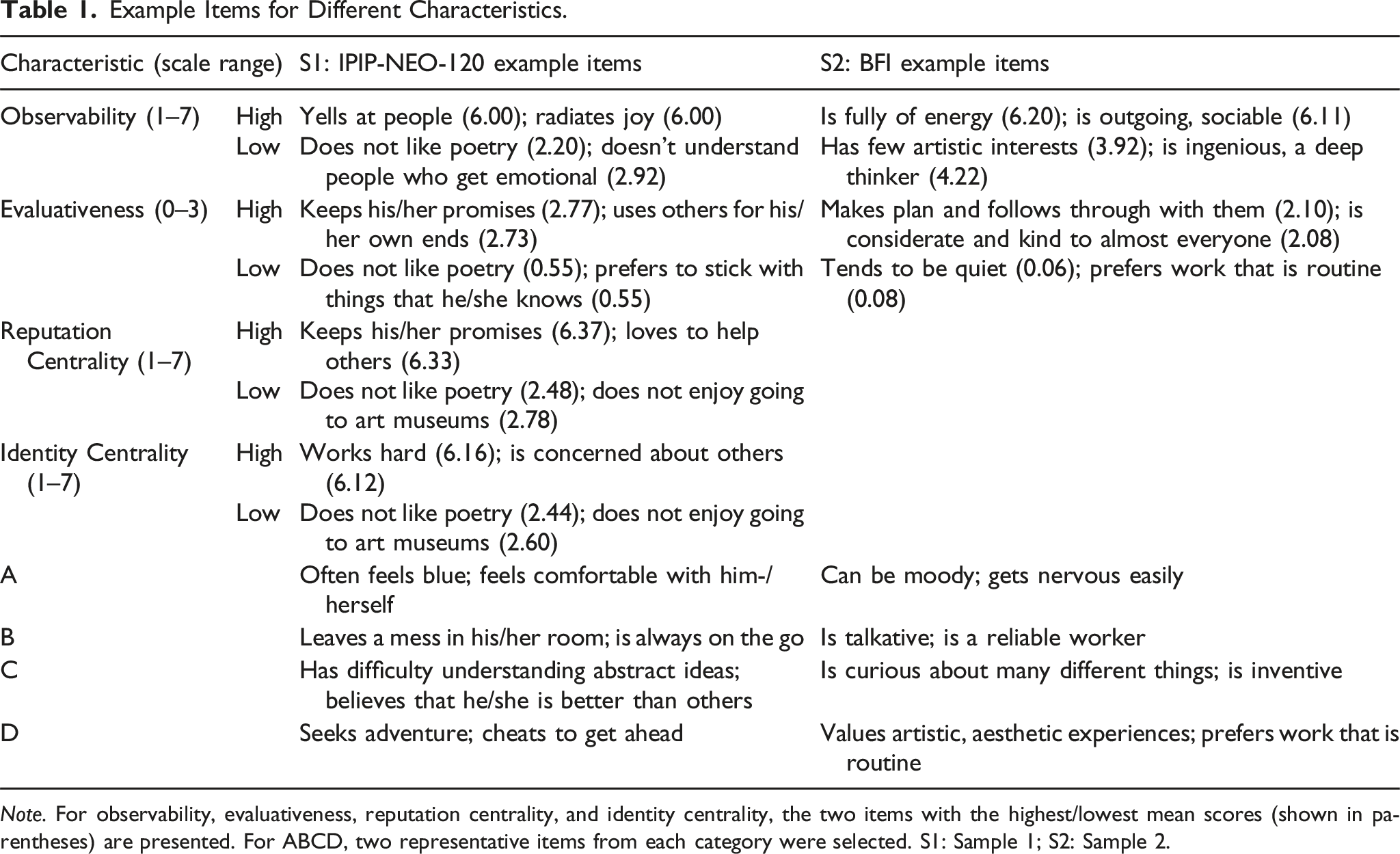

Example Items for Different Characteristics.

Sample 1

For Sample 1, we drew ratings of affect, behavior, cognition, and desire for the IPIP-NEO-120 from Wilt and Revelle (2015). In their study, participants indicated the proportions (in %) to which each item (from longer IPIP-versions) contained affective, behavioral, cognitive, or desire-related content. The reliability of these ratings ranged between .78 and .88 for the four ABCD-components (i.e., reliability of average raters indicated by ICC(3,6) following Shrout & Fleiss, 1979). We used the ABCD-ratings as categorical variables: An item with the highest percentage in affect (compared to B/C/D) is considered an A-item, and so on. The other option of using them as continuous variables where each item is associated with four percentages (for A/B/C/D, respectively) was only available for Sample 1 and is reported in Supplement D.
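
The categorical coding described above amounts to taking the component with the largest rated proportion. A minimal Python sketch follows; the function names and the example percentages are hypothetical, and raising an error on ties is an assumption, since the text does not say how ties were resolved.

```python
def abcd_category(proportions):
    """Return the ABCD component with the highest rated proportion for an item.

    `proportions` maps "A", "B", "C", and "D" to percentages for one item.
    Assumption: ties raise an error rather than being resolved silently.
    """
    top = max(proportions.values())
    winners = [key for key, value in proportions.items() if value == top]
    if len(winners) != 1:
        raise ValueError(f"tie between components {winners}")
    return winners[0]


def is_behavioral(proportions):
    """Dummy code used to contrast behavioral (1) with non-behavioral (0) items."""
    return 1 if abcd_category(proportions) == "B" else 0


# Hypothetical percentages for a single item (not taken from Wilt & Revelle, 2015).
example_item = {"A": 10.0, "B": 60.0, "C": 20.0, "D": 10.0}
category = abcd_category(example_item)   # "B"
dummy = is_behavioral(example_item)      # 1
```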

To obtain ratings of observability, evaluativeness, and items’ centrality to reputation/identity, we collected ratings from MTurk participants who were randomly assigned to rate one of the four characteristics for all IPIP-NEO-120 items. That is, they were asked to indicate for each item (1) how easy it would be to observe for an outside observer, (2) how positive (desirable) it would make a person look (i.e., social desirability from which evaluativeness is derived; see below), (3) how important each statement is for the understanding of one’s own identity, or (4) how important each statement is for a target’s reputation. Observability and social desirability have been successfully assessed for other instruments in previous studies; we adapted instructions based on John and Robins (1993). Responses were made on 7-point Likert scales (

Per dimension, we aimed to collect 30−35 complete responses. Even allowing for the exclusion of some participants (e.g., due to careless responding), previous studies have shown this number to be more than enough for highly reliable ratings of item characteristics (e.g., Condon et al., 2020; Leising et al., 2014). Participants were required to live in North America and speak English. They received 4 USD for completing the assessment. Of 123 approved responses, we excluded participants who completed the survey in less than 3 minutes or showed clear indications of being fake (i.e., multiple responses from the same IP with the same nonsensical response to an open-ended question). The final sample comprised 99 participants (22−27 per characteristic) with a mean age of 39.99 years (

Sample 2

For Sample 2, the ABCD-content of BFI items was rated by three of the authors of the present study, who are experts in personality and psychological measurement. Each rater separately assigned one content category to each item (κ = .85). In cases of disagreement, the final categorization was decided via discussion.

Ratings of observability and evaluativeness for BFI items were taken from a study by Huelsnitz et al. (2020) where a sample of 123 undergraduates provided ratings on 7-point Likert scales (

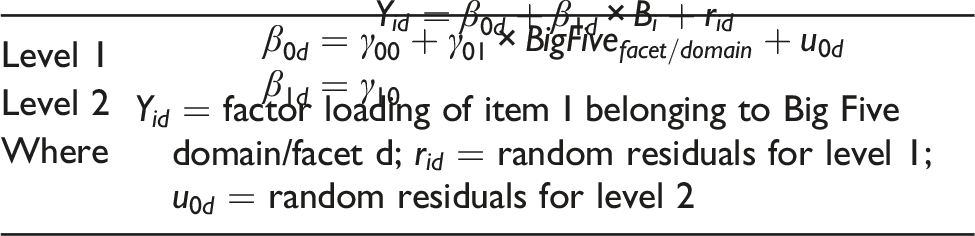

Prediction of factor loadings

We used multilevel modeling to predict items’ factor loadings on the TRI factors from different item characteristics. Separate mixed effects models were calculated for each of the three latent factors. In these models, items are clustered within Big Five facets for Sample 1 (instead of domains as TRI Models had been estimated for each facet) and within Big Five domains for Sample 2. Additionally, for the Trait factor, the rating source (self vs. informant) was clustered within items. All continuous predictors were Z-scored. To examine our predictions, we constructed regression models with the following item-level predictors (in different models): (1) The categorical ABCD-ratings (dummy-coded to compare behavioral vs. non-behavioral items), (2) observability and evaluativeness, and (3) importance for one’s identity and importance for a target’s reputation (for Sample 1 only). To illustrate, below is the equation of the model which predicts factor loadings on the Identity factor testing whether behavioral items load higher than non-behavioral:

Identity Model I1b: ABCD-Content (Dummy-Coded B vs. ACD)
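
A random-intercept specification consistent with this description could be written as follows. The symbols and the absence of random slopes are assumptions of this sketch; the preregistered equation is authoritative.

```latex
\begin{equation}
\lambda^{I}_{ij} = \gamma_{00} + \gamma_{10}\, B_{ij} + u_{0j} + r_{ij}
\end{equation}
```

Here $\lambda^{I}_{ij}$ is the Identity factor loading of item $i$ nested in cluster $j$ (facets in Sample 1, domains in Sample 2), $B_{ij}$ is the dummy variable (1 = behavioral, 0 = affective, cognitive, or desire-related), $u_{0j}$ is the cluster-level random intercept, and $r_{ij}$ is the item-level residual. Under hypothesis I1, $\gamma_{10}$ would be expected to be negative.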

All model equations can be found in the preregistration. Additionally, we explored models containing multiple predictors (e.g., ABCD-content as well as observability and evaluativeness) to gauge whether their effects are independent or not. Multilevel regression analyses were performed using R (version 4.1.1; R Core Team, 2021) and the lme4-package (Bates et al., 2015). Analysis code is available on the OSF.
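
The Z-scoring of continuous predictors mentioned above can be sketched in a few lines of Python. This is an illustration only (the authors' analyses were run in R); the function name and the example ratings are hypothetical, and the use of the sample standard deviation (as in R's `scale()`) is an assumption.

```python
from statistics import mean, stdev


def z_score(values):
    """Standardize values to mean 0 and sample standard deviation 1."""
    m = mean(values)
    s = stdev(values)  # assumption: n - 1 denominator, as in R's scale()
    return [(v - m) / s for v in values]


# Hypothetical mean observability ratings for five items.
observability = [2.5, 4.0, 5.5, 3.0, 6.0]
observability_z = z_score(observability)
```

After this transformation, a regression coefficient for the predictor describes the expected change in factor loading per standard deviation of the item characteristic.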

Results

Estimation of TRI Models

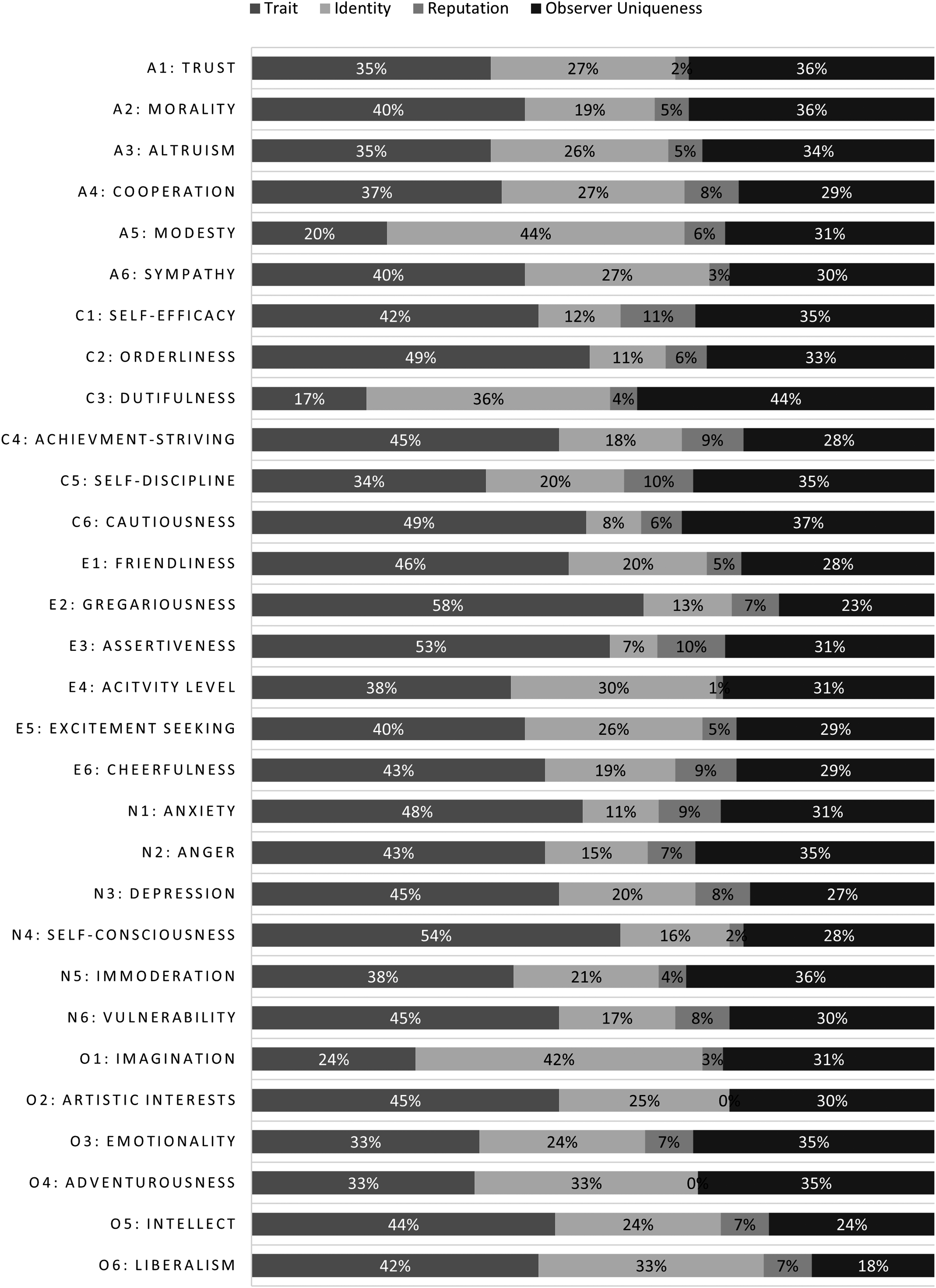

Figure 2 shows the proportion of explained variance in the TRI Models for the 30 facets in the IPIP-NEO-120 in Sample 1 that can be attributed to Trait, Reputation, and Identity. The equivalent figure for Sample 2 (comprising the Big Five domains in the BFI) is found in Figure 2 of the original paper (McAbee & Connelly, 2016, p. 577). In Sample 1, Trait variance ranged from 17 to 58%, Identity from 7 to 44%, Observer Uniqueness from 18 to 44%, and Reputation from 0 to 11%. In Sample 2, ranges were similar for Trait (34−69%) and Identity (8−39%), but somewhat lower for Observer Uniqueness (2−27%) and higher for Reputation (8−19%). Also note that in Sample 1, we re-estimated two TRI Models (for the Openness facets “Artistic Interests” and “Adventurousness”) without the second-level Reputation factor as they originally failed to converge with first-level Reputation factors not loading onto the higher-level factor. Therefore, Reputation variance is 0% for those facets in Figure 2. The remaining models only required constraints in line with a priori strategies (i.e., constraining factor loadings to positivity or fixing residual variances to zero

2

) or no modifications at all. Proportion of Explained Variance Accounted for by Trait, Reputation, and Identity Factors.

The smaller Reputation variance may reflect the overall moderate inter-informant agreement in Sample 1 (see Supplement B for descriptive statistics for the personality ratings). In this sample, targets were first-year university students asked to solicit informants from their own networks, which spanned an array of contexts (e.g., peers from university and hometown friends) and included individuals with whom they may not yet have developed a cogent reputation that spans informants. Accordingly, consensus between informants generally was not markedly stronger than self-informant consensus. We return to the pattern of lower Reputation variance in Sample 1 in the Discussion section.

Prediction of factor loadings

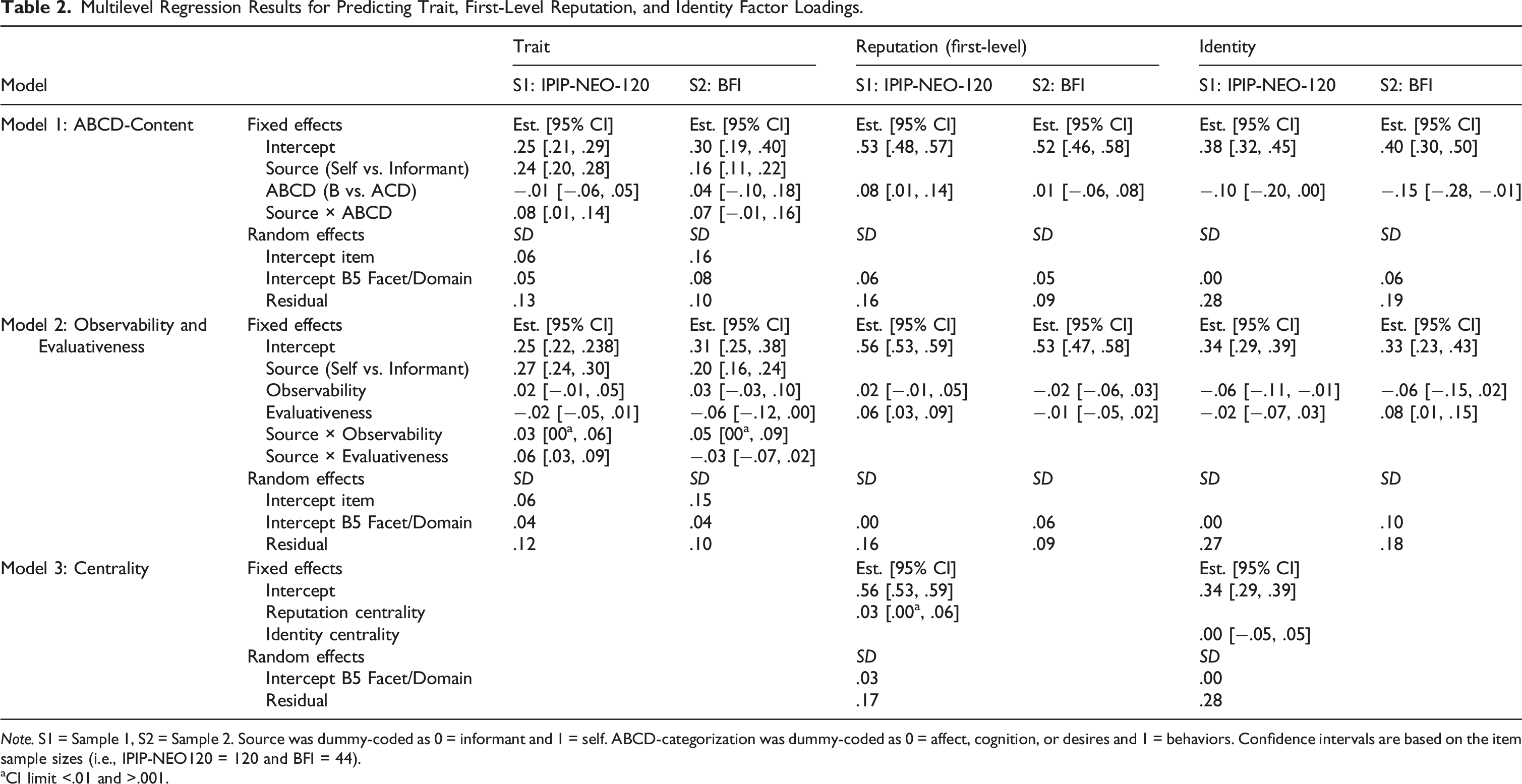

Multilevel Regression Results for Predicting Trait, First-Level Reputation, and Identity Factor Loadings.

ᵃ CI limit <.01 and >.001.

Trait

To examine how item properties affect self-informant consensus, we predicted the items’ Trait factor loadings from the items’ characteristics. We also included main and interaction effects of the rating source, that is, whether the rating stemmed from the target or informants (dummy-coded as self = 1, informant = 0). Thus, the effects for the Trait factor loadings in Table 2 can be interpreted as follows: (1) The

Overall, we found that behavioral items had higher Trait loadings than non-behavioral items for self-reports (Sample 1:

Reputation

To examine how item characteristics relate to informants’ distinct reputation perceptions, we regressed first-level (rater-specific) Reputation factor loadings on sets of item characteristics. The results were overall less clear: Behavioral items had higher loadings for Sample 1 (

Identity

For loadings on the Identity factor, negative effects emerged for behavioral items (

Post-hoc exploration

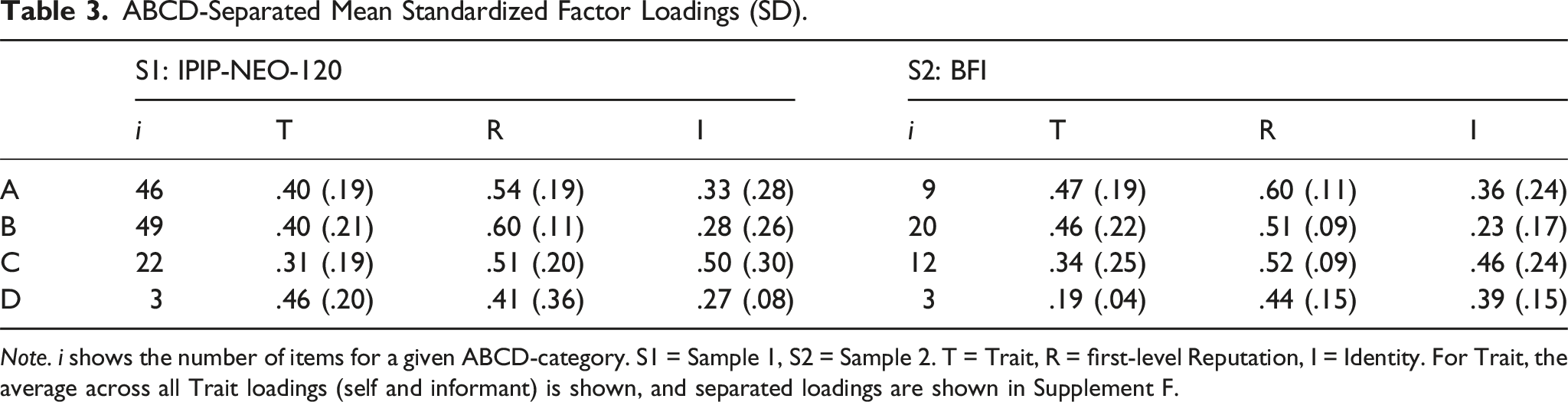

ABCD-Separated Mean Standardized Factor Loadings (SD).

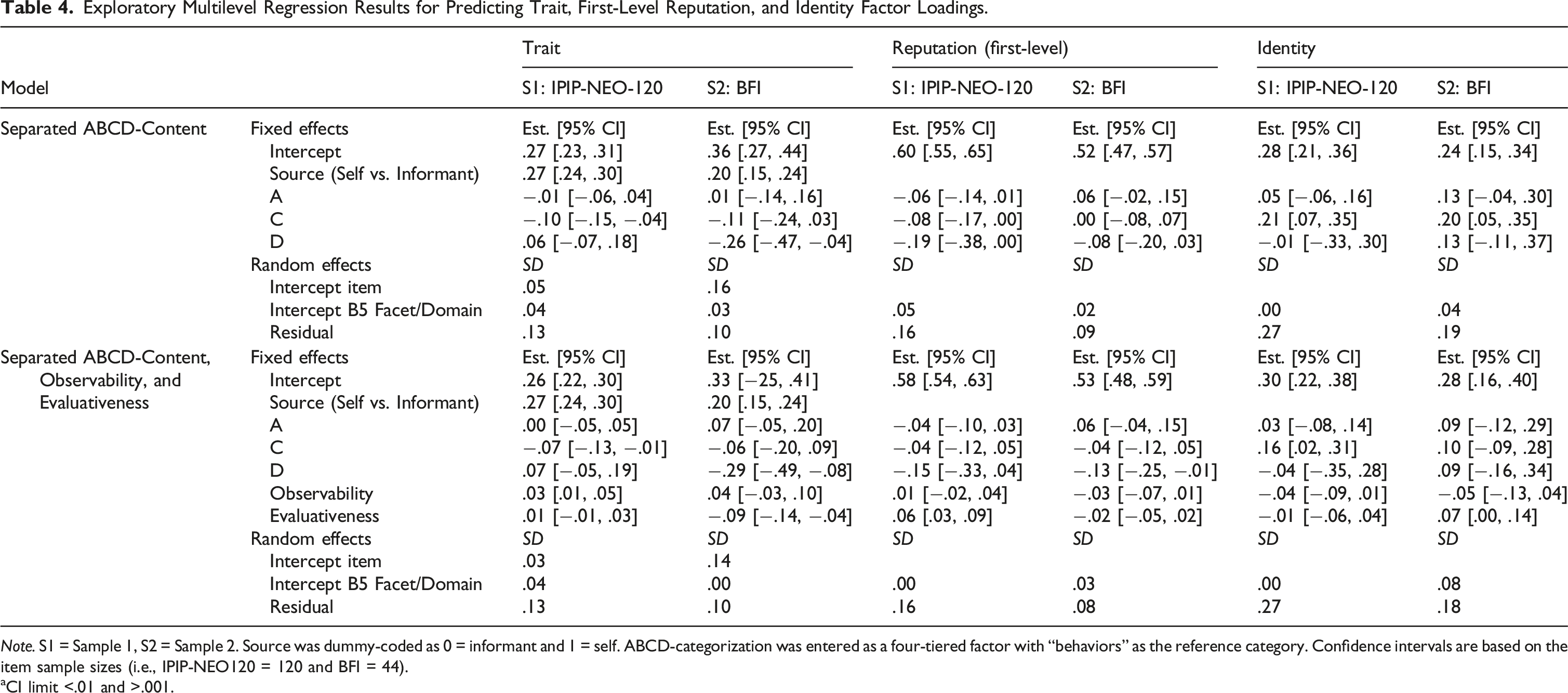

Exploratory Multilevel Regression Results for Predicting Trait, First-Level Reputation, and Identity Factor Loadings.

ᵃ CI limit <.01 and >.001.

The ABCD-separated mean factor loadings in Table 3 descriptively show an interesting pattern that is consistent across the two samples: For the Trait factor, affective and behavioral items show similar mean loadings (S1:

Finally, we sought to disentangle the effects of ABCD-content from observability and evaluativeness. When observability and evaluativeness were additionally entered into the regression model, the effects of ABCD-content, observability, and evaluativeness changed somewhat in size, with some diminished and others enhanced. However, the overall patterns were still consistent with previous results: For example, affective items had similar loadings for the Trait factor as behavioral items, whereas cognitive items drew especially unique self-views and less self-informant agreement. Observability had positive effects on Trait loadings but negative ones on Identity loadings. The effects of evaluativeness and those for Reputation loadings remained inconsistent across samples. Overall, this exploration suggests that ABCD-content and observability/evaluativeness contribute overlapping but non-redundant information about which items are associated with consensual versus discrepant personality perspectives.

Discussion

Although personality psychology has a long history of using self- and informant-reports, there is a lack of knowledge on how to actually integrate and leverage the personality insights and knowledge asymmetries from these perspectives. The present study shows how disentangling and understanding the insights held by the different perspectives may build a foundation toward that goal. Specifically, in two large multi-rater data sets, we examined how item characteristics relate to the shared (Trait factor loadings) and unique (Reputation and Identity factor loadings) perspectives across self and others.

The findings from this study support several general conclusions. First, we saw that the ABCD personality content reflected in items was important. At first glance, behavioral items seemed to promote Trait consensus, though they were much more important for the target’s than the informants’ Trait factor loadings. However, we found that contrasting behavioral with non-behavioral content obfuscated a more nuanced picture where affective and cognitive items had rather specific effects. In fact, items assessing cognitions generally produced the weakest Trait consensus between self and informant, whereas the agreement on affective content was comparable to that on behaviors. Conversely, cognitive items were among the strongest markers for Identity and behavioral items were among the weakest.

These results may be grounded in different reasons. First, they may in part be explained by the type of informants in the studies. Specifically, informants were relatively intimately acquainted with targets (i.e., friends, family, and significant others), which likely afforded them more privileged access to targets’ feelings (e.g., Colvin & Funder, 1991; Vazire, 2010). Although intimacy would seemingly also afford access to cognitions, it may be that informants attend more closely to emotional expressions than cognitions as anchor points when forming trait perceptions. Alternatively, even at increased intimacy, cognitions may be more rarely expressed in behavior than emotions—or their expression may be more controlled by the target (and therefore less aligned with the target’s internal state). That is, we may deem both emotions and cognitions to be primarily internal processes, yet emotions may more readily and easily bleed into behaviors including subtle and even unconscious expressions such as posture or facial expressions, whereas cognitions have to be expressed verbally. In any case, targets’ thoughts appear to be relatively private and constitute markers for navigating the inner world of a person’s unique self-views. Regarding desire items, we could not draw clear conclusions: Both questionnaires contained only three items categorized as such and results were thus accompanied by large standard errors. The underrepresentation of desire items in Big Five measures has been noted before by Wilt and Revelle (2015). If personality researchers believe that desires represent an important component of the personality space, future measures should include a greater proportion of desire-relevant content to elucidate the role of desires in the formation of trait judgments across rating sources.

Second, as hypothesized, more observable items generally were associated with stronger loadings on the Trait factor and weaker loadings on the Identity factor. This finding corresponds to long-standing person perception theory emphasizing the centrality of trait information being contextually relevant and perceptually available for reaching consensus (Funder, 1995; Kenny, 1994). The importance of observability was more pronounced for loadings of targets’ self-reports on the Trait factor than for informant-reports. In contrast, items low in observability generally produced especially strong loadings for self-reports on the Identity factor, suggesting that Identity factors largely emphasize more private, low visibility trait content.

Our results for evaluativeness were less consistent between samples. Though there was some evidence suggesting more evaluative items had weaker loadings on the Trait factor, moderation by source and the impact of evaluativeness on Reputation and Identity factors did not produce replicable patterns between the two samples. One source of these differences may lie in the relation of evaluativeness and social desirability in the two different measures (see also Supplement E): In the BFI, these two dimensions were positively correlated (

Next, we also examined the notion that some items may assess content that is more central to targets’ identity or reputation. However, item centrality ratings were generally unrelated to loadings on the respective Identity and Reputation factors. Thus, how central an item is for a person’s identity or reputation may represent more of an individual difference. Accordingly, identity/reputation centrality may be better studied from analytic approaches equipped to studying idiographic elements of consensus and discrepancy in personality perceptions (e.g., Biesanz, 2010).

It is notable that in contrast to Trait and Identity, the effects for predicting Reputation factor loadings were less consistent and clear. In Sample 1, for instance, informants had more unique views on behavioral than non-behavioral items as well as on more evaluative items. In Sample 2, there were more unique informant-views on affective content specifically as well as less observable content, but no effect of evaluativeness. It is difficult to establish whether that stems from differences in the measures or informants (see limitations) or is a property of informants’ unique insights being less content-specific.

Finally, one subtler but important finding was the strong effect of rater uniqueness (both in the strength of the Identity factor and in the observer uniqueness factors). For most facets, uniqueness accounted for between 25 and 35% of variance in Figure 2, reflecting nontrivial discrepancies in how individual raters viewed a target despite the presence of generally stronger Trait factors. Thus, although there is meaningful consensus across raters, measuring a target’s personality from a single rater will always afford an incomplete picture that is mired in rater method variance. This is in line with Connelly and Ones’ (2010) findings that personality interrater reliabilities are meaningful but modest, and these findings highlight the importance of adopting multi-rater measures of personality (much in the same way that researchers may aggregate multiple items to assess a trait with improved reliability).

In addition, it was a notable divergence from past TRI Model research (Connelly et al., 2022; McAbee & Connelly, 2016) that variance accounted for by the second-order Reputation factor was relatively weak across most facets in Sample 1, suggesting that raters’ unique perspectives do not converge strongly beyond the Trait factor. On one hand, this weak Reputation variance may be a product of this particular sample: Informants were generally friends and classmates, and since targets were only first-year university students, a meaningful reputation may not have emerged (yet) within this particular context. On the other hand, it may be that the present study’s TRI modeling of facets instead of factors produced relatively weaker Reputation variance. Indeed, it may be that Reputations exist at a much more general level (e.g., a reputation for agreeableness) rather than at the level of more specific traits (e.g., a reputation for sympathy). Regardless of whether observer uniquenesses coalesce into a distinct reputation or diverge (beyond the Trait factor), these findings underscore the value added by multi-rater measures.

Implications

These results have important implications for studying person perception, integrating multi-rater perspectives, and improving personality measurement. The person perception literature has typically emphasized how accuracy is facilitated by high observability and inhibited by high evaluativeness (e.g., Funder, 1995; John & Robins, 1993; Kenny, 1994), suggesting that behavioral manifestations of neutrally desirable personality traits should produce the strongest consensus. Finding that items’ Trait factor loadings were strong for not only behaviors but also for affect suggests that either (acquainted) informants may be similarly attuned to some more internal trait tendencies or that affective traits may be less internal than we typically believe. By contrast, items assessing cognitions produced the least consensus between self and informants, strongly defining Identity factors and reflecting elements of traits relatively unknown to others. The informants in these studies, who were primarily friends and family members, may have been particularly attuned to affective trait content in targets, though it is notable that the benefits of intimacy for consensus did not extend to cognitions. Accordingly, person perception researchers may fruitfully further explore what makes cognitions so differentiated.

Our findings also have clear implications for how personality measures can be created to leverage the knowledge asymmetries between self- and informant-raters. That is, inventory creators can specifically orient their instruments toward assessing Trait, Reputation, or Identity components of personality dimensions. Traits, for example, may be best assessed by writing a mix of affective and behavioral items that are outwardly observable to others. Writing items about associated cognitions may provide specifically useful anchor points in assessing Identity for a given personality dimension. Notably, this requires creators to explicitly consider these content dimensions, especially the ABCD-content, when designing their instruments. Currently, most instruments assessing the Big Five are compiled without such considerations and thus are unbalanced in that regard (Jackson et al., 2010; Wilt & Revelle, 2015). For example, Conscientiousness items in both questionnaires used were largely behavioral, which has been found for Big Five instruments in general (Pytlik Zillig et al., 2002). An interest in assessing the unique self-views on this dimension could be aided by including related cognitions. Our results were less pronounced and somewhat inconsistent for Reputation. Gearing instruments toward assessing unique informant-views will require additional research, but researchers may also need to consider the specific type of informants used. In Sample 1, Reputation factors seemed to be defined by evaluative items that were related to behaviors. However, these effects did not replicate in Sample 2, which showed substantial unique views on affective items in particular. One final point for personality assessments is to consider that consciously including evaluative items can be valuable if the goal is to capture unique views which is distinct from existing efforts to make items non-evaluative to reduce social desirability in responses (e.g., Bäckström et al., 2009).

Limitations and future directions

The following potential limitations should be considered in interpreting the present findings: First, our samples of informants comprised individuals that were (1) relatively acquainted or even well-acquainted with their respective target and (2) nominated by the target. Thus, these informants may have had (more) opportunities to gain insights into the targets’ feelings (etc.) and may also have generally positive attitudes toward the target. Both knowing and liking can affect the pattern of self-other agreement (e.g., Leising et al., 2010; Wessels et al., 2020). Additionally, the samples included primarily informants from personal contexts, which still comprised quite different groups (e.g., university peers, longtime friends, and relatives). Future studies may thus consider and systematically vary (1) the level of acquaintance as well as the (2) contexts of informants to see how they define Reputation.

Second, our findings may also be influenced by the particularities of the two personality inventories used (i.e., IPIP-NEO-120 and BFI). For example, both questionnaires contained very few desire items, making it difficult to draw any conclusions for this content component. Future research may aim to explicitly write and include such items. As desires are included in definitions of personality, it would be important to understand how they are perceived by the self and by others. Additionally, we must consider the number of items included in inventories: The BFI consists of just 44 items, limiting the sample size in the respective regression models. The IPIP-NEO-120, on the other hand, offers a greater range in the different item characteristics with its 120 items. At the same time, however, it proved difficult to estimate Trait, Reputation, and Identity factors for this inventory in the first place because of its facet structure. Even at the facet level, not all TRI Models converged, and the corresponding Trait, Reputation, and Identity factors often reflected facet-content. This may in part stem from the lack of internal consistency for some of the facets (see Supplement B). For future investigations in this line, personality inventories should be selected with consideration for both (1) their overall number of items and (2) the degree to which their items actually assess one unidimensional facet or domain.

Finally, our central analyses focus on the prediction of a vector of factor loadings which have been shown to require large sample sizes to be estimated reliably. For example, Hirschfeld et al. (2014) showed that for some personality inventory items factor loadings (in exploratory factor analysis) can be unstable even with more than 500 or 1,000 participants. We do use two of the largest multi-rater data sets that TRI modeling can be applied to: With their target sample sizes of 664 and 478, they are in a range where many items do stabilize. Additionally, the Reputation-relevant loadings are based on the larger numbers of informants which should make them more stable. However, collecting larger multi-rater samples as well as increased item sample sizes will be essential to further progress in future studies.

Conclusion

Across the last few decades, personality psychologists have considered the asymmetric and complementary value of self- and informant-reports. However, this was the first study to investigate what personality content is reflected in the disentangled shared and unique components of multi-rater judgments. Our findings suggest that observable, behavioral, but interestingly also affective content may be well suited to assess consensus, whereas targets’ unique self-perceptions are captured especially by cognitive content. These insights build the necessary prerequisite to successfully leverage multi-rater perspectives in personality judgments. Specifically, we invite researchers and practitioners not only to consider which perspectives are important for their purpose, in line with the content those perspectives reflect, but also to develop future personality measures explicitly with the aim of capturing those insights. This could be an important step in advancing the goal-directedness and specificity of personality assessments.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Preparation of this manuscript was supported by funding granted to Brian S. Connelly from the Canada Research Chairs Program (Title: Canada Research Chair on Integrative Perspectives on Personality, File number: 233149).

Open science statement

Supplements, study materials, analysis code, the item characteristics data set for the IPIP-NEO-120, and data sets necessary for the multilevel regression procedures can be accessed at https://osf.io/wq3jm. The preregistration is available at https://osf.io/gqmsf. The SIP3 (i.e., Sample 1) data set necessary for TRI Model estimation containing individual ratings is not publicly available because this was explicitly assured to participants in the consent form. However, the data set was uploaded to a private component on the OSF and will be made available to interested researchers upon request. Information and data for the Eugene-Springfield Community Sample are publicly available (see Goldberg, 1999; Goldberg et al., 2006).