Abstract

Since the 1940s, job evaluation methods have been used to establish the relative value of jobs within organizations in order to determine salaries. Job evaluation methods have been studied, but there has been little systematic reflection on the research conducted so far. This scoping review of 199 articles demonstrates that topics changed over the decades, starting with methodological questions in the 1940s, reflecting a start-up period. Historical overviews of wage policies appeared in the 1960s, and the topic of gender wage inequality in the 1980s. Guidelines were published in the 1990s. From 2000 onwards, the main topic was adapting job evaluation methods to specific contexts. Research has declined since 2010. There was hardly any research on changes in job evaluation methods and criteria. Given the ever-changing nature of work and the upcoming demographic changes, we recommend reviving scientific research on job evaluation and propose an agenda with research questions.

Keywords

Introduction

Since the early 1940s, job evaluation has increasingly been used in organizations to rationalize wage structures and reduce wage inequalities (Figart, 2001; Oettinger, 1964). Job evaluation is a systematic process for weighing and grading jobs within an organization in order to generate a basis for determining fair, transparent, and equitable remuneration (Armstrong, 2018). According to the International Labour Organization (ILO), job evaluation methods are “the tools that help to establish the relative value of jobs and thus determine whether their corresponding pay is just” (Oelz et al., 2013). There are different job evaluation methods, and in all of them HR professionals evaluate jobs on the basis of content and place them in order of job weight and value. The two main methods for job evaluation are point-factor rating and job matching or levelling. Point-factor rating is an analytical approach in which separate factors are scored, such as qualifications (knowledge and skills), effort, responsibility, and the conditions under which the work is performed. The scores are added together to produce a total score for the job, which can be used for comparison and grading purposes. With job matching or levelling, a grade structure is produced first, and jobs are then placed in a grade of that structure by matching them against profiles describing broadly similar types of work. This can be done in a more analytical way by matching jobs against factor levels, or in a more generalized way by matching a job description with a grade description. Job evaluation is always based on the job description and not on the competences or performance of the jobholder (Armstrong, 2018).
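The additive logic of point-factor rating can be illustrated with a minimal sketch. The four factor names follow the description above; the scores, the job labels, and the grade boundaries are hypothetical values chosen only for illustration and do not come from any actual job evaluation scheme.

```python
from typing import Dict, List, Tuple

# Hypothetical grade bands: (minimum total score, grade label).
# Real schemes define their own boundaries; these are invented.
GRADE_BANDS: List[Tuple[int, str]] = [
    (0, "Grade 1"),
    (200, "Grade 2"),
    (400, "Grade 3"),
    (600, "Grade 4"),
]


def total_score(factor_scores: Dict[str, int]) -> int:
    """Add the separate factor scores into one total for the job."""
    return sum(factor_scores.values())


def assign_grade(total: int) -> str:
    """Return the highest grade whose minimum score the total reaches."""
    grade = GRADE_BANDS[0][1]
    for minimum, label in GRADE_BANDS:
        if total >= minimum:
            grade = label
    return grade


# Hypothetical jobs, each scored on the four factors named in the text.
jobs = {
    "Job A": {"qualifications": 180, "effort": 90,
              "responsibility": 150, "working_conditions": 40},
    "Job B": {"qualifications": 120, "effort": 110,
              "responsibility": 80, "working_conditions": 70},
}

# Rank jobs by total score, highest first, and report their grades.
ranking = sorted(jobs, key=lambda name: total_score(jobs[name]), reverse=True)
for name in ranking:
    total = total_score(jobs[name])
    print(name, total, assign_grade(total))
```

The sketch weights all factors equally through the score ranges alone. A scheme that weights factors explicitly would multiply each score by a factor weight before summing, which is the design choice that the gender bias literature discussed later in this review examines.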

Although it is widely assumed that job evaluation is common practice, especially in EU organizations, it is hard to find scientifically collected data on its exact use. A survey shows that in 2017, 77% of UK organizations used a formal job evaluation method or scheme to weigh and grade jobs for wage setting (e-reward, 2017). According to Cordis, which coordinates EU-supported R&D activities, 49% of European organizations in the private sector use a formal job evaluation scheme (Cordis, 2023). It is claimed that almost all public sector organizations use a form of job evaluation (Cordis, 2023; Heneman, 2003; Human Capital Group, 2021). An employers’ association in the Netherlands states that around 85% of all Dutch jobs are weighed with a job evaluation method for wage setting purposes (AWVN, 2023). At the same time, it is claimed that in the last two decades point-factor methods have increasingly been replaced by less time-consuming methods, such as job matching or levelling (Brown et al., 2016; Brown & Munday, 2016). So there are indications of the extent of use and of a trend towards easier methods, but the topic of job evaluation has not been systematically studied recently. Hence, it remains unclear whether and how job evaluation methods are used and how they have developed over the years. One of the issues we are interested in is whether their evaluation criteria have changed. The literature states that job evaluation practices are subject to societal changes and need to be evaluated periodically to check whether the criteria or factors on which the system is based are still compatible with changing societal norms and values (Armstrong, 2018; Bussin & Swart-Opperman, 2022; Heneman, 2003). Also, views on the internal job hierarchy and its fairness can be influenced by labor market developments and changing societal ideas on what is fair (Boxall, 2016). Thus, changes are likely to have occurred, but there is little systematic scientific knowledge on these changes.

At least three reasons exist to revive scientific research on job evaluation systems. The first reason is that recent legislation on pay transparency endorses the use of more formal methods of job evaluation. The recent EU directive and the US legislation on pay transparency both highlight the use of job evaluation as an instrument for equal and fair pay and for decreasing existing pay inequalities (Harrington, 2023; McMullen & Dahle, 2024; The Council of the EU, 2023; Wagner, 2021). If job evaluation is to be used more widely, insight into its evidence base is needed. The second reason concerns the upcoming changes in the context of work: changes in demography, in the labor market, and in the content and organization of work itself. Demographic changes involve an aging population (EU, 2023; EuroStat, 2023; International Labour Organisation, 2019) and changes in educational attainment, with a higher percentage of the population attaining higher education (EuroStat, 2023b). These demographic changes will lead to further labor market shortages, especially in public sectors such as healthcare and education (EU, 2023; International Labour Organisation, 2019). Work itself also changes as technological developments influence both the content and the organization of work, with intensification and flexibilization of work as examples (Kremer et al., 2021; OECD, 2018). These changes might put pressure on the existing job evaluation methods, criteria, and the weighing of criteria. The third reason why it is important to scientifically study developments in job evaluation is that there are initiatives to update the tools and criteria for job evaluation in several countries and industries (Dutch Federation of University Hospitals (NFU), 2023; Human Capital Group, 2021; Ministerie of Defensie, 2022; Salminen-Karlsson & Fogelberg Eriksson, 2022; Wagner, 2022). Thus, a lot is happening simultaneously that potentially has an impact on the tools, methods, and criteria used to determine the relative worth of jobs and their corresponding outcomes in terms of wages.

Our research aims to take stock of the academic research on job evaluation. The most recent literature review dates from 2003 and summarizes different job evaluation methods on the basis of the literature, but its search and selection procedures do not meet present-day methodological and reporting standards (Heneman, 2003). As a consequence, it is difficult to determine what kinds of studies on job evaluation have been conducted so far, what the key themes are, in which countries research took place, and how research on job evaluation evolved over time. This scoping review addresses this gap by categorizing the topics studied and identifying where knowledge is lacking. Our scoping review is, to the best of our knowledge, the first to systematically map the research on job evaluation. A scoping review is a rigorous and systematic way to map the extent of knowledge in a field (Arksey & O'Malley, 2005; Peters et al., 2015). In comparison with systematic reviews, which ask very specific questions, scoping reviews are more exploratory in nature and are well suited to understanding the breadth of a topic or field. Scoping reviews can produce specific recommendations for future research (Arksey & O'Malley, 2005). The goal of this scoping review is to provide an overview of the existing literature on job evaluation and to take the first steps towards an agenda for future research on job evaluation.

Materials and Methods

This scoping review involved the five phases described by Arksey and O'Malley (2005): (1) identifying the research question(s); (2) identifying relevant studies; (3) selecting studies; (4) charting the data; and (5) collating, summarizing, and reporting the results (Arksey & O’Malley, 2005; Munn et al., 2018; Peters et al., 2015; Peters et al., 2020; Tricco, 2018).

Identifying the Research Question

This scoping review aimed to identify scientific publications about job evaluation and to provide an overview of the literature and research available throughout the years, in order to get insight into the topics studied and to propose an agenda for future research. Specifically, we were interested in whether there are studies on updating or evaluating the criteria on which job evaluation has been based since its inception.

Identifying Relevant Studies

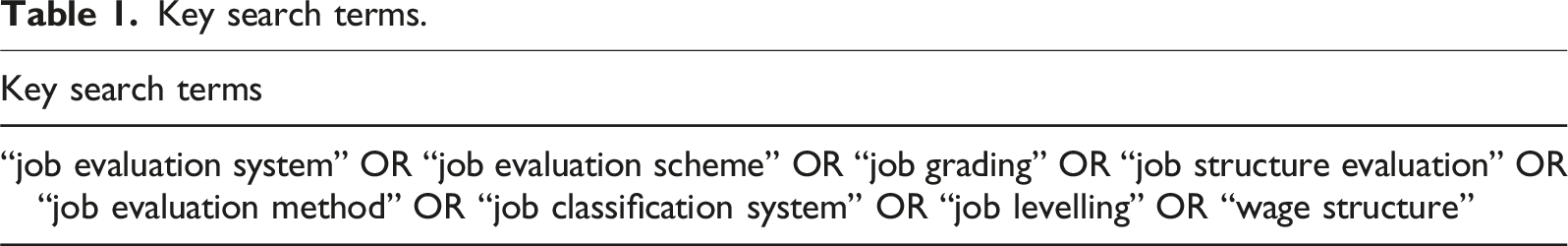

Table 1. Key search terms.

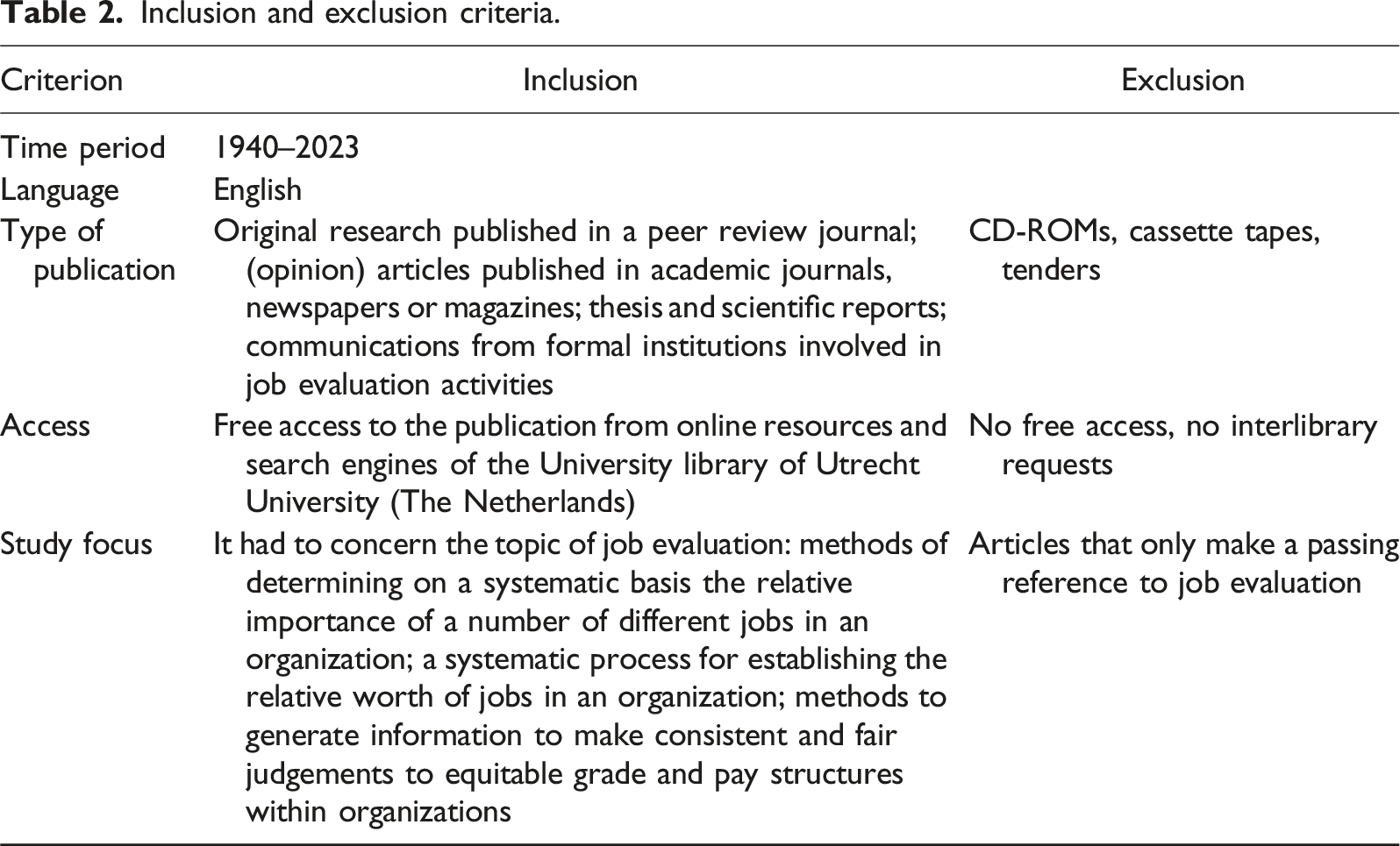

Table 2. Inclusion and exclusion criteria.

Selecting Studies

All results were imported into EndNote software, where all duplicate results were identified and removed. Several groups were created in EndNote to manage and document all choices made during the process of screening and eligibility (Peters, 2017). A team of five researchers was put together (IVDG, RM, GVDV, JVR, and JB), all experienced in evaluating research papers from a variety of fields. The screening process consisted of two phases: (1) the first pass on the basis of title/abstract; (2) the second pass after reading the full text. The procedures were as follows.

The First Pass: Title and Abstract

The title and abstract of each reference were screened. Clearly irrelevant references were removed, using the inclusion and exclusion criteria as reported in Table 2. References were also removed if there was no information available on which a decision could be made. The titles and abstracts of the first 25 references were screened and discussed together by the team to establish screening reliability. After that, all references were screened by the team members individually. Disagreements were discussed until consensus was reached. Full-text publications were retrieved for all titles selected in the first pass.

The Second Pass: Full Text

All available full texts were fully read, and a decision was made for each reference, using the inclusion and exclusion criteria as reported in Table 2. The first five full text publications were discussed together in the team to create a norm and establish screening reliability. After that, all full texts were read and decided upon by the team members individually. Any doubts were discussed between at least two researchers until a decision was made.

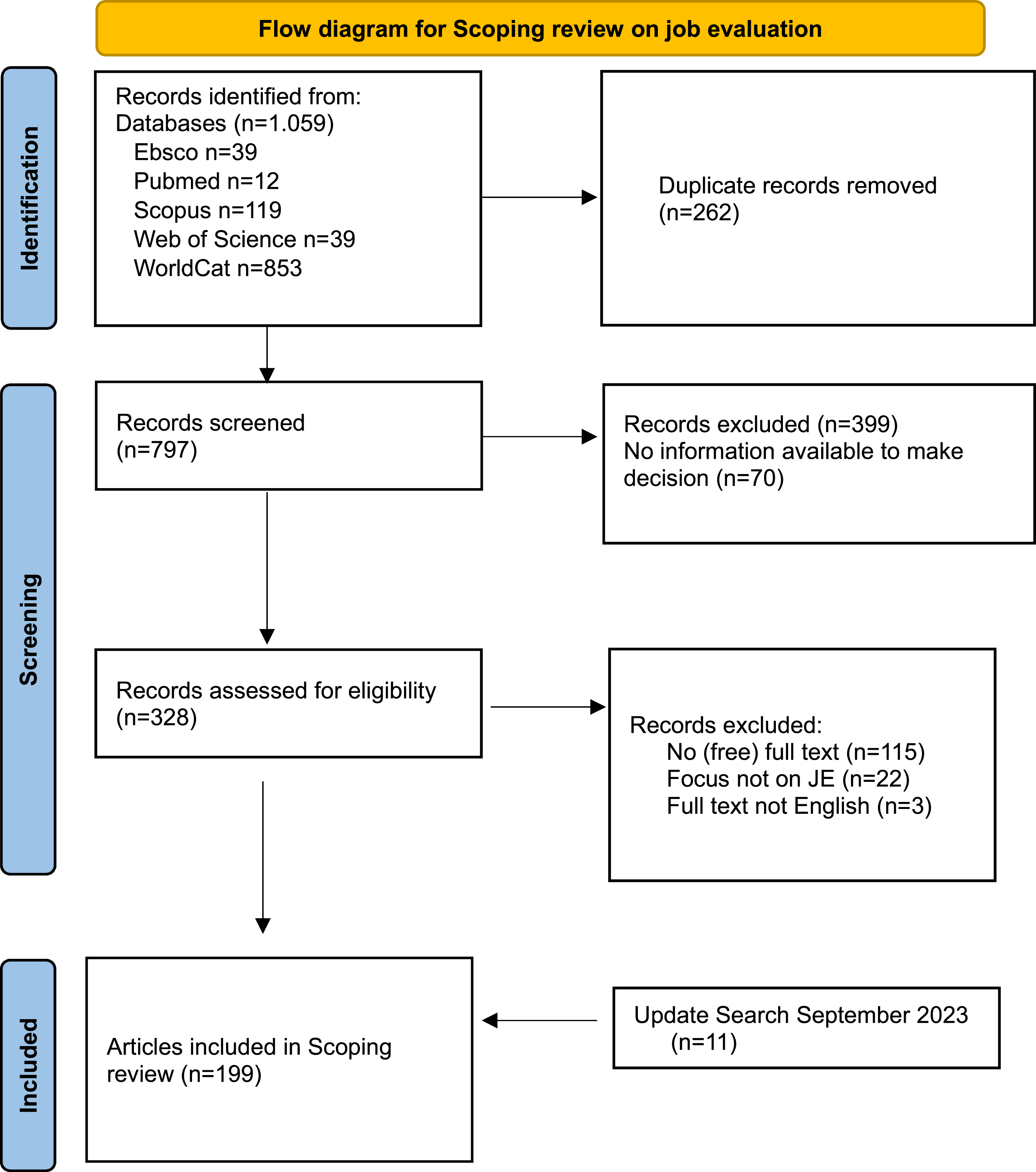

A total of 1,059 titles were identified in databases. After removing duplicates, 797 studies were screened in the first pass (title/abstract), and 328 were screened in the second pass (full text). One hundred and forty articles were excluded for the following reasons: no (free) full text (n = 115), not focused on job evaluation (n = 22), and study published in a language other than English (n = 3). This resulted in 188 articles that were first included. Then, an update of the search was carried out in October 2023, one year after the first search. This led to the inclusion of n = 11 further articles, so in total this scoping review included n = 199 publications (Supplement 1). Figure 1 illustrates the process in a PRISMA flow diagram (Peters et al., 2020; Tricco, 2018).

Figure 1. PRISMA flow diagram for scoping review on job evaluation.
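As a quick consistency check, the reported flow of records can be retraced arithmetically. The sketch below uses only the counts stated in the text; the variable names are illustrative, and the number of duplicates and of first-pass exclusions are derived here under the assumption that nothing else was removed between the stages.

```python
# Counts as reported in the text; variable names are illustrative.
identified = 1059
screened_title_abstract = 797
screened_full_text = 328
excluded_full_text = {
    "no (free) full text": 115,
    "not focused on job evaluation": 22,
    "language other than English": 3,
}
added_in_update = 11

# Derived values (assumption: only duplicates were removed before the
# first pass, and only first-pass exclusions before the second pass).
duplicates_removed = identified - screened_title_abstract
excluded_first_pass = screened_title_abstract - screened_full_text

# Inclusion totals that the text states explicitly.
included_first_search = screened_full_text - sum(excluded_full_text.values())
included_total = included_first_search + added_in_update

print(duplicates_removed, excluded_first_pass,
      included_first_search, included_total)
```

The last two values reproduce the 188 and 199 publications reported above.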

Charting

The extracting and charting of data from the selected articles is the fourth stage of the scoping review framework of Arksey and O’Malley (2005). A data extraction spreadsheet was developed to extract the following information: author, year, location of the study, publication type, study field, name of the journal, aim/purpose of the study, key theme or subject, study population and sample size (if applicable), research methods and key findings, and research approach (normative or empirical). The spreadsheet was piloted and found to be suitable in terms of understanding and reviewer calibration, and it provided sufficient freedom to extract the results of research papers from a variety of fields. In the data extraction spreadsheet, reviewers used their own words to describe the main focus of the publication. These open fields were all read by two researchers (IVDG and RM). To formulate and assign a key theme to each article, the two researchers together developed the initial key themes. Then one researcher (RM) assigned each article to one theme, while a second researcher (IVDG) checked the categorization.

Summarizing and Reporting Findings

The findings of our scoping review were summarized and presented in several ways. Tables and charts were produced, featuring the distribution of studies by year of publication, country of origin, publication type, key themes, and research methods.

Results

Distribution of Studies by Country

This scoping review yielded 199 articles from 23 countries (Supplement 1), meaning the research was conducted in that country or, in the case of a theoretical analysis, the researchers were from that country: Australia, Bahrain, Canada, China, Egypt, Finland, France, Iceland, India, Indonesia, the Netherlands, New Zealand, Romania, Saudi Arabia, South Africa, Spain, Sweden, Switzerland, Thailand, Turkey, the UK, the USA, and Zimbabwe. In total, 74% of the studies (148/199) originated from high-income countries where English is the most common language. Most articles were from the USA (n = 106), the UK (n = 24), Canada (n = 10), and Australia (n = 7).

Distribution of Studies by Publication Decade

Articles were published in all decades of our search period, which ranged from 1940 to 2023. Most articles were from the 1990s (27%, n = 54), the 2000s (17%, n = 33), and the 1980s (16%, n = 32). After 2010, the total number of publications declined.

There were different kinds of publications: journal articles (52%, n = 104); guidelines, standards, and schemes (27%, n = 53); scientific reports (8%, n = 16) and “other,” like news articles, opinion letters, book chapters, and leaflets (13%, n = 26).

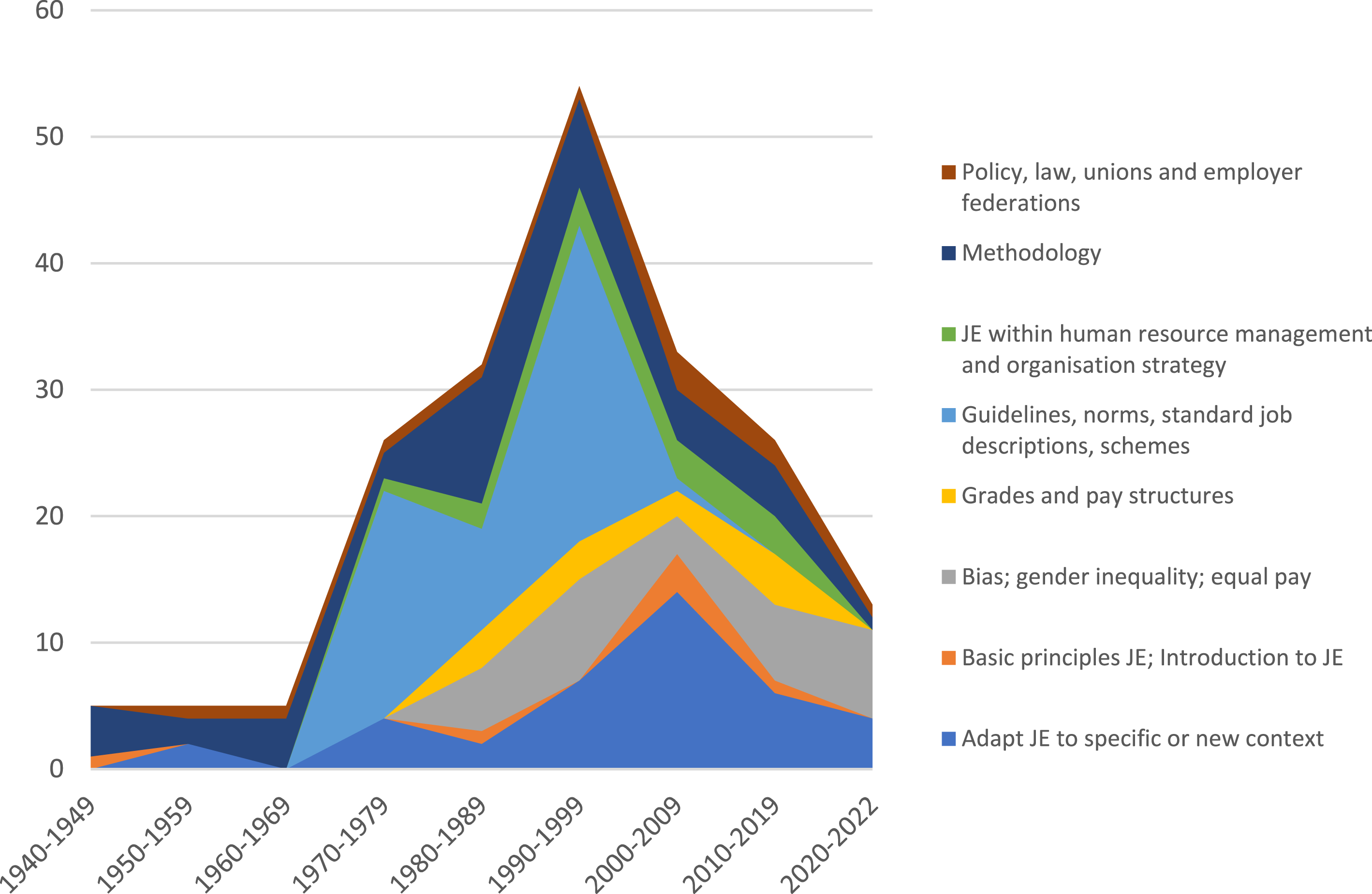

Distribution of Studies by Key Themes

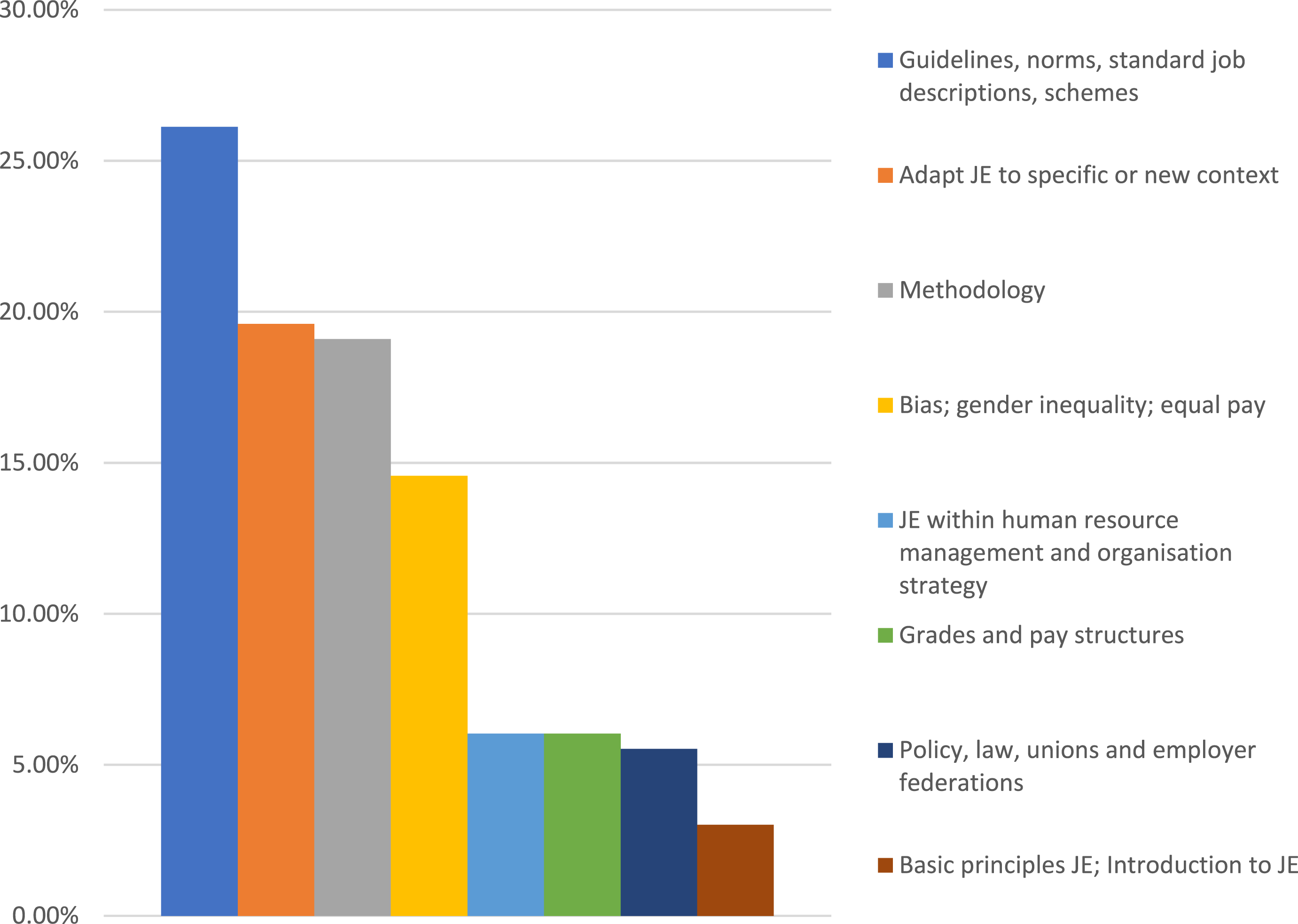

Eight key themes were identified in the reviewed literature (Figure 2), in substantive order: from generic fundamentals and principles of job evaluation, methodology, to topics on dissemination and implementation in new contexts and the more specific topics such as equal pay.

1. Basic principles of job evaluation (n = 6; 3%).
2. Methodology: validity, reliability, comparing different job evaluation methods (n = 38; 19%).
3. Job evaluation guidelines, norms, standard job descriptions, and schemes (n = 52; 26%).
4. Grades and pay structures (n = 12; 6%).
5. Policy, law, unions, and employer federations: the role of job evaluation in national wage policies and collective labor agreements (n = 11; 6%).
6. Adaptation of job evaluation to a new or specific context, a new organization or industry (n = 39; 20%).
7. Job evaluation within human resource management and organization strategy (n = 12; 6%).
8. Equal pay, bias, and gender inequality (n = 29; 15%).

Figure 2. Key themes in the academic literature on job evaluation in % (n = 199).
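The reported shares follow directly from the theme counts. As a minimal sketch, the percentages can be recomputed from the counts given above; the shortened theme labels are ours and serve only as dictionary keys.

```python
# Theme counts as reported in the text; labels are abbreviated here.
theme_counts = {
    "basic principles": 6,
    "methodology": 38,
    "guidelines and schemes": 52,
    "grades and pay structures": 12,
    "policy, law, unions": 11,
    "adaptation to context": 39,
    "HRM and strategy": 12,
    "equal pay and bias": 29,
}

total = sum(theme_counts.values())

# Share of each theme, rounded to whole percentages.
shares = {theme: round(100 * n / total) for theme, n in theme_counts.items()}

for theme, share in shares.items():
    print(f"{theme}: n = {theme_counts[theme]}, {share}%")
print("total:", total)
```

Because each share is rounded separately, the rounded percentages add up to 101 rather than exactly 100.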

Below, we provide a short overview of which topics have been researched and which have not. To illustrate a topic, we sometimes highlight specific publications (not exhaustive). See Supplement 1 for the full overview of included publications.

Basic Principles of Job Evaluation

There were six articles within this category, describing different types of methods and procedures of job evaluation: job classification, job ranking, job elements, and point evaluation (Moore, 1944). An example is the publication of Arthurs (2001), who wrote an overview of what job evaluation is, including some organizational aspects such as influence, bias, and bargaining power (Arthurs, 2001). El-Hajji’s paper (2015) provides an overview of the Hay System of job evaluation (El-Hajji, 2015). Armstrong’s handbook of job evaluation (2018) describes the different types of job evaluation, including all procedures and aspects to take into account when applying job evaluation (Armstrong, 2018).

Gaps

None of the publications reported generic numbers on the spread, dissemination, and use of the different methods across countries, sectors, and types of organizations. Furthermore, the topic of maintenance of systems (as a basic principle) was only briefly touched upon, but not empirically researched or debated. What to do with job evaluation systems years after they are implemented? How to incorporate changes? There was no reporting of how criteria have changed over time or across countries or sectors.

Job Evaluation Methodology

A relatively large theme concerned methodological issues (19%, n = 38), such as the reliability and validity of job evaluation methods. Some research focused on comparing different methods in terms of outcomes and in terms of time to complete the job evaluation process (Armstrong & Brown, 2017; Berrocal et al., 2018; Chesler, 1948; Collins & Muchinsky, 1993; Herndon, 1986; Lawler, 1986; Van Sliedregt et al., 2001). Snelgar (1982) showed that different forms of job evaluation (point factor, classification, and ranking) yielded comparable results (Snelgar, 1982). Studies on construct validity were done by Chesler (1948), Davis and Tiffin (1950), and Robinson et al. (1974). Other research focused on the reliability of the raters and the rating procedures (Chesler, 1948; Cooper et al., 1987; Harding, Madden, & Colson, 1960; Harding & Madden, 1960; Lawshe Jr. et al., 1948; Madden & Bourdon, 1964). Another subtheme was the selection of factors, the scoring of factors, the rating scale format, the use of abbreviated versions, and the use of predictors (Badenhorst & Kellerman, 1987; Blomskog & Södertörns, 2007; Bouyer & Hemon, 1993; Davis & Sauser, 1991; Davis & Tiffin, 1950; Kahya, 2018; Lawshe & Farbro, 1949; Madden & Bourdon, 1964; Su, 2000; Yu & Tang, 2011). The reliability of job evaluation in general was studied or discussed in some other articles (Gilbert, 2012; Harding, Madden, & Colson, 1960; Heneman, 2003; Schwab & Heneman, 1986). The link between job analysis and job evaluation was also discussed, emphasizing the relationship between trustworthy data on the actual work being done by job holders, accurate job descriptions, and job evaluation. For example, the research of Taber and Peters (1991) indicated that a job analysis questionnaire might describe some classes of jobs more completely than others, affecting the outcomes of job evaluation (Taber & Peters, 1991). Finally, the use of algorithms and machine learning for job evaluation purposes was explored in two studies (Hisham, 2021; Loyarte-López & García-Olaizola, 2022).

Gaps

There was little research on the exact use in practice: is job evaluation used as designed? There was hardly any recent research on validity and reliability. Research on methodology was mainly focused on the internal validity of job evaluation. There was hardly any research on external validity, that is, the alignment between the job evaluation system and the labor market. Related to this, there was no empirical research available on updating factors, criteria for evaluation, or their weighing throughout the years. When research reported on the selection of factors, this concerned the phase of developing and introducing job evaluation. Furthermore, there was no large-scale empirical research on the extent to which job descriptions reflect the work that is actually being done in practice, and hence on how trustworthy job descriptions are as a basis for job evaluation.

Guidelines, Norms, Standard Job Descriptions, Schemes

Between 1970 and 2000, a total of 52 articles were published by institutes, such as the US Office for Personnel Management, the Civil Service Commission, or the Ontario Pay Equity Commission. Most often, these are voluminous reports, with separate appendices containing standard job descriptions for diverse jobs. Because appendices were often published as separate documents at different points in time, they were all counted separately, which explains their large number.

Gaps

There were no recent publications of guidelines and it is not clear why. Were handbooks and guidelines only published once? Also, we did not find any handbooks or guidelines published by the more commercial agencies and firms that often own job evaluation systems.

Grades and Pay Structures

Grade and pay structures can be seen as the framework within which the pay policies of an organization or sector can be implemented. Grades, bands, or levels are often grouped in a sequence into which (groups of) jobs are placed, based on the outcomes of job evaluation. In the literature, there were 12 articles that specifically studied this topic in relation to job evaluation. For instance, Dauffenbach explored differences in grades in terms of job analytic variables and conventional economic variables (Dauffenbach & Greer, 1984). Law and Carlopio (1993) described two statistical aids for determining the optimal number of pay grades (Law & Carlopio, 1993). Akyildiz (2007) measured the effects of job evaluation and seniority on the wage structure in the metal industry (Akyildiz & Güngör, 2007). Maloa (2012) found that employee skill, employee performance, job family, and job grade were the factors strongly related to employee compensation (Maloa, 2012). In Armstrong’s handbook of job evaluation, a chapter on grade and pay structures is included (Armstrong, 2018).

Gaps

We did not find any recent empirical research on grades (as outcome of job evaluation) and pay structures in relation to job evaluation. In addition, we did not find any normative research on what is perceived as a fair pay structure and how to maintain or innovate pay structures in relation to job evaluation.

Policy, Law, Unions, and Employer Federations

There were a number of articles that looked at the role of job evaluation systems in the political context. For instance, Gomberg (1951) wrote about how a trade unionist looks upon job evaluation as a minor tool in collective bargaining, because job evaluation would only produce a limited concept of job content and worth (Gomberg, 1951). The work of Oettinger (1964) describes a post-World War II history of job evaluation and compares the policy context of Britain with that of the Netherlands (Oettinger, 1964). Gill et al. (1977) published a “political” study of job evaluation, and they focused on the pressures of different stakeholders to rationalize payment systems (Gill et al., 1977). Cogill (1984) analyzed the role of job evaluation in labor relations in four countries (Cogill, 1984). Akkerman et al. (2009) looked at differences in the pattern of wages as the outcome of different wage setting procedures and collective agreements (Akkerman & Allen, 2009).

Gaps

There was no empirical research available on the spread, dissemination, and use of job evaluation methods across countries, sectors, and types of organizations. Also, there was hardly any research on the use of job evaluation methods within collective agreements. We did not find any research on the role of policy, law, unions, and collective agreements with respect to changing the criteria used in job evaluation and their weighing.

Adaptation of Job Evaluation Methods to Specific or New Context

Thirty-nine publications reported on the development, adaptation, or implementation of a specific job evaluation system in a specific context. The first publication within this theme is from Gray, who wrote in 1950: “Readymade systems of job evaluation seldom fit because their factors do not differentiate between jobs. Valid job evaluation systems must fit the jobs being evaluated” (Gray, 1950). To illustrate the kinds of studies done within this topic, we highlight a few here. McCormick and North (1954) conducted a pilot study to develop a job evaluation method for naval jobs (McCormick & North, 1954). Hanna (1973) developed a job evaluation method for administrative and service staff of the American University of Cairo (Hanna & American University in, 1973). Beuhring (1986) wrote about choosing or designing a job evaluation method for university staff. Three articles reported on a job evaluation method specifically for the metal working industry and the steel industry (Eraslan & Atalay, 2013; Serarslan et al., 1991; Su, 2000). Van Rooyen (2005) implemented a job evaluation system in municipalities in South Africa (Van Rooyen, 2005). Raju (2014) applied a job evaluation method in an Egyptian library (Raju, 2014). Zhang et al. (2021) developed a job evaluation method for Chinese public hospitals. Research has mainly taken place in public sector organizations, for instance municipalities, healthcare, defense, libraries, and universities. In addition, studies were found in sectors that are traditionally known for their use of collective labor agreements, such as the steel and metal industry.

Gaps

Academic reflection on the adaptation and implementation of job evaluation systems in different cultures and across countries and sectors has not yet taken place. However, we did find literature on changes in the job evaluation system in the healthcare sector (the NHS) in the UK, known as the Agenda for Change (AfC) (Kahya, 2006). We found news articles reporting on the changes and their impact (Travis, 2007; unknown, 2004a, 2004b). Furthermore, there was academic correspondence between researchers about the definitions, use, and adaptability of certain criteria (Ransom, 2007). To our knowledge, the NHS case is the largest case described and reflected upon in the academic literature when it comes to changing a job evaluation system for a whole sector in a country.

Job Evaluation within Human Resource Management and Organization Strategy

A relatively small research theme concerned the relation between job evaluation and human resource management and organization strategy. Seccombe’s article (1984) focuses on how a flexible and comprehensive job evaluation system can play a role in human resource planning by integrating salary administration into the total concept of management development (Seccombe, 1984). The article of O’Neill and Graham (1995) presents a total reward strategy that matches the organizational strategy and values (O'Neill, 1995). Ivergård and Hunt (2005) propose a basis for how different human resource systems can be integrated into the business development of an organization. In 2015, Köykkä studied the connection between job evaluation and competency management (Köykkä, 2015).

Gaps

We did not find any research on how organizations deal with their strategic needs (in particular their demand for skilled employees) and how these needs can or cannot be translated into changes in job evaluation criteria or weights. Also, there was no recent research on how job evaluation systems fit with other HR practices. How can job evaluation systems contribute to organizational outcomes and strategy?

Equal Pay, Bias, and Gender Inequality

Within this theme, there were 29 studies. A number of publications focused on equal pay outcomes (Forth et al., 2023; Gilbert, 2005, 2012). Other studies examined the effects of different kinds of raters and evaluation methods on (gendered) bias; see, for example, Schwab and Grams (1985). Other publications focused on the political debate on comparable worth and equal pay (Chassonnery-Zaïgouche, 2023; Doherty & Harriman, 1981; Graham & Hyde, 1991; Tompkins et al., 1990); legislation and case law on equal pay (Doherty & Harriman, 1981; Weiner, 2002); or the question whether job evaluation methods themselves are biased (Figart, 2000, 2001; Halsema & Halsema, 2006; Koskinen-Sandberg, 2017; National Academy of Sciences - National Research Council et al., 1981; Salminen-Karlsson & Fogelberg Eriksson, 2022; Steinberg, 1990; Steinberg, 1992; Steinberg, 1999).

We highlight some articles to further illustrate this theme. Publications that focused on the concept of equal pay are about discriminatory pay differentials and the underlying causes, such as occupational segregation. In the 1980s, the emphasis was on comparable worth in the USA (Doherty & Harriman, 1981; Graham & Hyde, 1991; Tompkins et al., 1990). Doherty (1981) described the difference between equal pay for “equal work” and equal pay for “comparable worth” as follows: the problem of equal pay for equal work occurs when women are paid less for doing the same work as men; the problem of equal pay for comparable worth occurs when whole classes of jobs, those that are traditionally held by women, are relegated to low pay and low status (Doherty & Harriman, 1981). The upsides and downsides of using job evaluation in determining comparable worth were discussed; for example, Chassonnery-Zaïgouche (2023) discussed economic arguments in the comparable worth debate. The work of Treiman and Hartman (1981) is often cited in other publications within this category (National Academy of Sciences - National Research Council et al., 1981). They examined wage discrimination against women and minorities, compared to non-minority men, using statistics to assess pay differentials. The work of Ronnie Steinberg is also often cited in the field of job evaluation. Steinberg (1990) described several forms of bias in job evaluation methods (Steinberg, 1990). Steinberg argues that women’s work is invisible in job evaluation methods and in job descriptions. The vaguer a job description, the higher the risk of lower points. Even if characteristics of women’s work are included in the framework of job evaluation, that work is not valued equally to men’s work. Steinberg argues that systems chose factors and factor weights to best capture and reproduce the existing wage structure. That means that valuable job content was by design associated with traditionally high-paying (male) jobs. Consequently, factors such as authority, coordination, and responsibility are often double counted, especially in managerial functions. Another article by Steinberg (1999) offers instruments to measure components of emotional labor to be included in job evaluation methods (Steinberg, 1999). In 2013, the International Labour Organisation (ILO) published the report “Equal Pay: An Introductory Guide” (Oelz et al., 2013). It provides different national examples for inspiration, including the role of job evaluation as an instrument for achieving equal pay. More recently, a number of further studies appeared. Koskinen Sandberg (2018) studied the Finnish local government sector as a case for research on the undervaluation of women’s work (Koskinen-Sandberg, 2017). In 2022, Salminen-Karlsson et al. investigated processes of gender pay audits in five municipalities in Sweden. Wagner (2022) described the Icelandic Equal Pay Standard (IEPS), a novel and publicly praised gender equality policy that is based on a job evaluation tool. Williams and Werner (2022) examined the similarity of Production Designer (male-dominated) and Costume Designer (female-dominated) positions in the Hollywood film and television industry (Williams & Werner, 2022). They showed that traditional methods of job analysis and job evaluation can be applied to compensation practices in new industries, such as the film and television industry. They expect that job analysis and job evaluation can be meaningfully applied to address current pay equity concerns.

Gaps

There was no research reporting to what extent job evaluation systems have changed in response to this criticism, or to what extent new factors have been incorporated. Therefore, it is not clear to what extent job evaluation systems today are (still) biased. Another gap is that there was hardly any empirical research on the extent to which job evaluation methods produce equal pay outcomes (how and where, and where not) and on what interventions can be developed to increase equal pay outcomes through job evaluation systems.

Themes throughout the Years

We mapped the eight key themes throughout the years to gain insight into how research on the different topics has evolved. Figure 3 suggests that research and publications on job evaluation developed alongside the emergence, standardization and spread of job evaluation systems in different countries and organizations. Reflection and debates occurred when a new system emerged (for instance the Hay system), a new system was implemented or updated (for instance the Agenda for Change in the NHS), or legislation changed (for instance on pay transparency). Figure 3 also shows that in the 1940’s most articles concerned methodological issues, with specific interest in reliability and validity and in comparing different approaches to job evaluation. Then, from the 1970’s, the variety of topics increased, with emerging themes such as policy, law, labor agreements and the role of unions in relation to job evaluation. Some publications on HR strategy and organization strategy also appeared. In the 1970’s and 1980’s, most articles still focused on methodological issues, but guidelines, standard job descriptions and schemes also emerged. A tipping point in the number of publications was reached in the 1990’s, with the theme “guidelines, norms, standard job descriptions and schemes.” Publications within this theme were mostly from institutions that developed and maintained job evaluation methods in the USA, Canada or the UK. An example is the manual from the USA Federal Wage System and accompanying job grading standards (USA Office of Personnel Management, 1996). From the 2000’s onwards, the key theme in the literature was the adaptation and implementation of job evaluation methods to new contexts, such as new organizations, industries, or countries. Notable is the emergence of literature in the 1980’s on gender inequality, bias in job evaluation and equal pay. Also visible is a recent increase in studies on equal pay from the year 2000 onwards. At the same time, it is visible that the total number of studies on job evaluation has been declining since 2010.

Figure 3. Key themes in the academic literature on job evaluation throughout the years (n = 199).

Gaps

There was hardly any recent research on job evaluation.

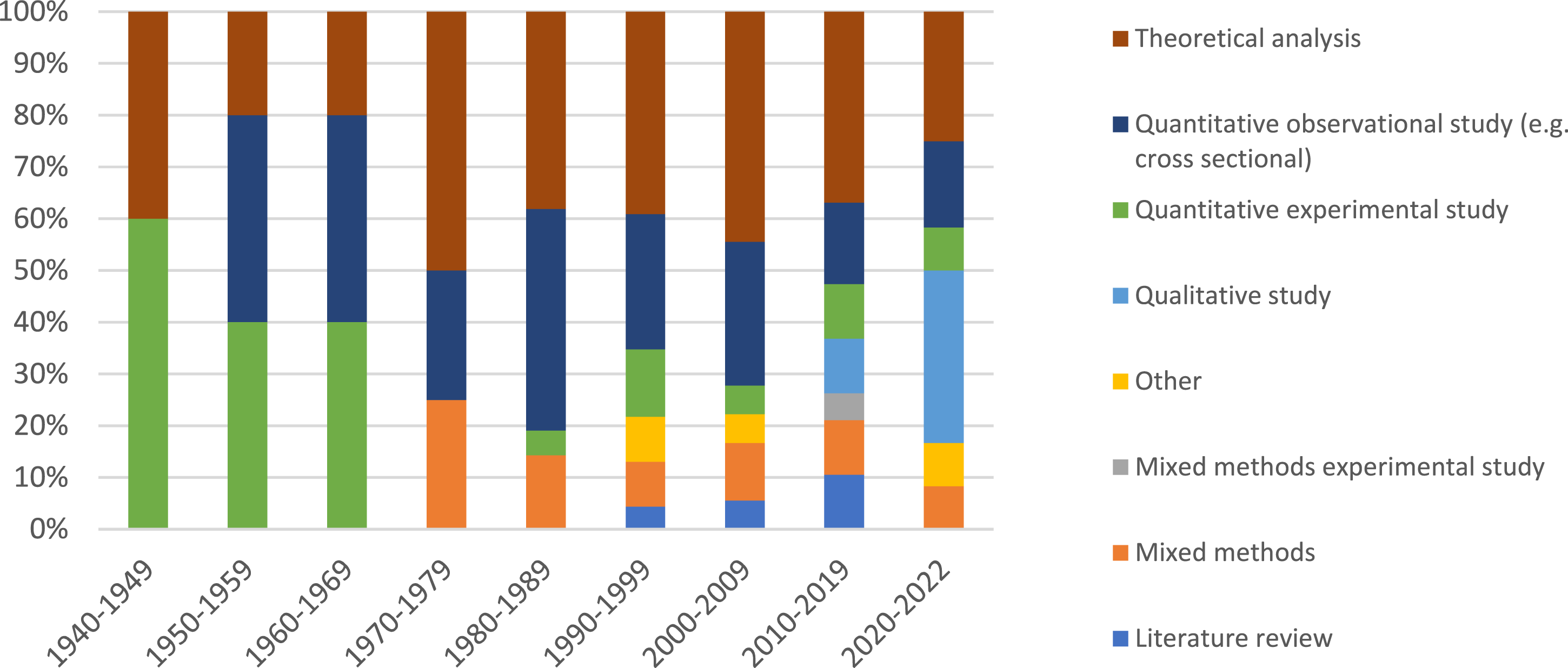

Distribution of Studies by Research Method

Of all included articles, 58% (n = 116) reported results of scientific research. Although we found that a wide range of methods was applied (Figure 4), a large number of studies were theoretical analyses (n = 41; 36%), quantitative observational studies (n = 31; 27%) or quantitative experimental studies (n = 15; 13%). Less often found were mixed methods studies (n = 13; 12%), literature studies (n = 4; 4%) and qualitative studies (n = 6; 5%). Figure 4 shows that research between the 1940’s and 1970’s used only quantitative methods and theoretical analyses. Purely qualitative research on job evaluation first appeared in an academic journal in the 2010’s.

Figure 4. Type of research method used throughout the years (n = 116).

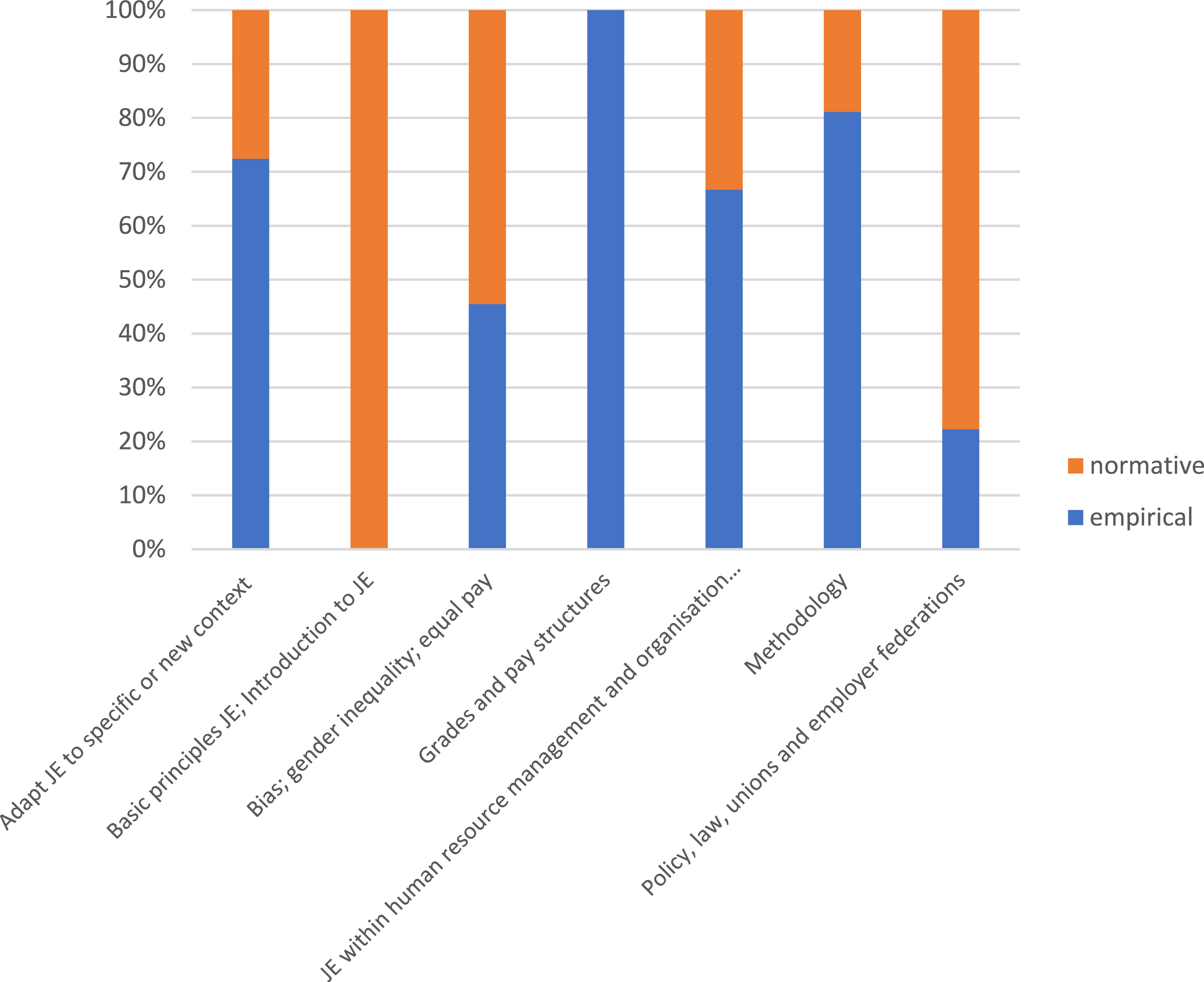

Figure 5 presents the studies categorized as having either an empirical or a normative and theoretical approach. In total, 66% of these 116 studies had an empirical approach (n = 76), with research questions and methods based on retrieving observable facts and measuring the current state of things. The other studies (34%, n = 40) had a more normative or theoretical approach, with research questions and methods focusing on how job evaluation ideally should work, on its norms and values, and on discussing the more subjective judgements. Studies were more often empirical when they focused on “methodology” and “adaptations to a new context.” Studies that focused on “bias, gender inequality and equal pay” and on “policy, law, collective agreements and labor unions” were more often normative and theoretical.

Figure 5. Empirical and normative research on job evaluation themes (n = 116).
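
As a quick arithmetic check on the split reported above, the following minimal Python sketch recomputes the two shares from the counts given in the text (76 empirical and 40 normative or theoretical studies out of 116). The function and variable names are illustrative assumptions and are not part of the review's own analysis.

```python
# Illustrative check of the reported shares; the counts are taken from the text.
# All names here are hypothetical and not part of the review's method.

def share(count: int, total: int) -> int:
    """Return count as a whole-number percentage of total."""
    return round(100 * count / total)

counts = {"empirical": 76, "normative_or_theoretical": 40}
total = sum(counts.values())  # studies reporting results of scientific research

shares = {name: share(n, total) for name, n in counts.items()}
print(total, shares)
```

Rounding to whole percentages reproduces the 66% and 34% stated in the text, and the two counts add up to the 116 studies that reported scientific research.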

Gaps

The themes that were more often researched in a normative and theoretical way would benefit from more empirical research to feed the debate. The topics that were mostly studied empirically need more reflection, debate, and theoretical analysis. Normative questions that are not yet explicitly asked in the literature include: are job evaluation methods used in the right way? Are the outcomes that current job evaluation methods produce ideal? What can be done better?

Discussion

This scoping review provides an overview of the existing academic literature on job evaluation and proposes an agenda for future research. Using deliberately broad search terms and inclusion criteria, we were able to generate a comprehensive overview of the academic literature on job evaluation (n = 199). The findings show that the focus of research can be categorized into eight main themes, with two themes being the largest in number: publications on guidelines, norms, and standard job descriptions, and research on the methodology of job evaluation. Throughout the years, the focus of research changed. Job evaluation emerged in the 1940’s. The following three decades (1940–1970) can be regarded as a start-up period, with the main focus of research being methodological issues, such as the validity and reliability of newly developed job evaluation methods. In the 1980’s and 1990’s, the broader dissemination and implementation of job evaluation methods took place, with research focusing on adapting existing methods for specific contexts, industries, or countries. The years between 1980 and 2010 saw the largest number of publications. In particular, these decades produced guidelines, norms, standard job descriptions, and schemes. The topic of gender inequality and pay equality emerged as a theme in the 1980’s, reflecting societal emancipatory movements (Figart, 2000, 2001). However, scientific attention for job evaluation seems to have come to a halt since 2010. A small revival in research has been noticeable since 2020, but solely on the topic of equal pay.

This scoping review points out several gaps in the literature, such as the lack of recent research on the validity and reliability of job evaluation methods, on the acceptability of outcomes of job evaluation, and on the selection and adaptation of criteria for evaluation. The review also showed a decline in the number of studies after 2010. This decline of academic research is problematic, in particular when looking at the grey literature. One explanation could be that organizations replaced the more formal methods with other, more informal methods. For instance, Brown et al. stated that job matching and job levelling are increasingly being used because of experienced problems with point-factor methods (Brown et al., 2016; Brown & Munday, 2016). However, there is no research available underpinning this with numbers. Another explanation could be that from 2010 onwards, job evaluation has increasingly been seen as an established administrative practice and not so much as a topic for scientific research. At the same time, institutions studying labor, work, and wages, as well as public sector employers, in particular HR professionals, are asking for innovation and for the repair of certain pitfalls in job evaluation practices (Dutch Federation of University Hospitals (NFU), 2023; Human Capital Group, 2021; International Labour Organisation, 2019; Kremer et al., 2021; Ministerie of Defensie, 2022; NEF, 2009; OECD, 2018). They argue for less bureaucratic time spent on job evaluation, and for more flexibility and adaptation of norms in order to better compensate high expertise, temporary project roles, temporary tasks of employees, self-management skills, and delegated or complex intertwined regional responsibilities. The HR literature has demonstrated that employee perceptions also influence attitudes and behavior (Nishii et al., 2008). Thus, it is important to study the link between job evaluation systems, other HR practices and organizational outcomes.
At the same time, employers need to guarantee and document objective wage-setting procedures, and this is becoming more important due to recent legislation. Organizations need to comply with equal pay legislation and pay transparency directives (Directive 2023/970, 2023; Harrington, 2023; The Council of the EU, 2023). This means that job evaluation methods and tools will be used more often than they are now. Yet this review shows a lack of recent research. For tools and methods to produce fair outcomes, it is crucial to study and understand the use, the processes, the values and the criteria of the methods themselves. Therefore, we think that research needs to empirically investigate current job evaluation practices, especially as they will be used more often.

The scoping review also made visible that job evaluation methods have received little reflection from researchers since the 2000’s. We were specifically interested in research on changes in the criteria used for determining the relative value of jobs, but found that this is a gap in the literature. This is an important finding, because from historic overviews on job evaluation we learn that it is important to investigate and critically question the more normative aspects of the tools and methods. The available historic overviews describe the start and rapid spread of job evaluation (Craig, 1977; Figart, 2000, 2001; Oettinger, 1964). These analyses are of great value for understanding the course of events, developments and normative ideologies behind job evaluation. We learn from these studies that job evaluation must also be viewed as a social construct. As Figart writes: “Wages are derived from a complex interaction of market forces, government regulation, and social and cultural assumptions. Wages, therefore, are also a social practice, that is, a habit or custom that can either reproduce or alter social relations and institutionalize norms. In particular, as the case of job evaluation reveals, wages reflect and influence gender and class relations” (Figart, 2001). As is always the case with tools and techniques, there are implicit assumptions in their construction. These assumptions often reflect the contemporary Zeitgeist, values, norms, and relations. By now, eight decades after its first development, job evaluation as a wage-setting technique is fully institutionalized as a social practice. This includes all its (assumed) benefits and ideas regarding equal pay for equal work. Research is now needed to give input to a normative reflection and debate.

The issue of maintenance and changes in job evaluation is touched upon by Armstrong, who published his handbook on job evaluation in 2018 (Armstrong, 2018). One of the chapters concerns the relevance of maintaining job evaluation by frequently reviewing benchmark roles and jobs. According to Armstrong, schemes and methods must be maintained to make sure the factors are still appropriate in the light of changes in roles and the way in which work is organized. This will prevent employees from becoming cynical about the scheme or even openly hostile to it. Equality and equity are two important principles in job evaluation, as these concepts relate to employee perceptions of fairness (Boxall, 2016). In HR theory, a distinction is often made between external equity and internal equity. Internal equity is related to the job evaluation system and the outcomes it produces within an organization. External equity describes the alignment between the job evaluation system and the external labor market (Romanoff, 1986). Thus, a job evaluation system ideally supports other HR practices, for instance the recruitment and retention of employees. A good alignment between the external labor market and the job evaluation system will help organizations to stay competitive and relevant for employees, as employees will perceive their pay structure as “fair” in comparison to trends in the labor market. One of the key questions to be asked within an organization is: does job evaluation still provide a satisfactory basis for maintaining an equitable grade and pay structure? From the scoping review, we learn that this question has not been asked recently. This can be considered an important gap in the literature.

This review featured both strengths and limitations. With respect to strengths, we used systematic and transparent scoping review methods. To our knowledge, this is the first systematic scoping review on job evaluation. To be able to find studies from all kinds of disciplines, we used broad search terms, searched in different kinds of academic databases and covered the whole meaningful period with respect to job evaluation. With respect to limitations, as with most reviews, our search strategy may not have identified all relevant studies. We could not include publications that were not freely available or that were written in languages other than English, and we might have missed some publications because of that. In addition, there are several relevant textbooks on compensation that also address job evaluation practices and methods (Belcher, 1955; Belcher & Atchison, 1987; Gerhart et al., 2023; Gerhart & Rynes, 2003; Martocchio, 2019). Our review did not include these, as textbooks are generally not considered academic research because they represent a (selective) overview of how the academic field has developed from a practical perspective, rather than making new contributions to the knowledge of job evaluation systems. Nevertheless, developments in the publication and content of textbooks can provide valuable insights into how practice has adopted scientific advancements. Another reflection on our methods and findings is that job evaluation is mainly a national practice. Perhaps it has not gained much scholarly attention because it is strongly tied to country, language, and systems. It could be that most publications appear nationally in their own language and could not be found in scientific databases. This makes it hard for countries to learn from each other, while the underlying issues and questions are similar. This type of research should therefore be replicated and complemented by including non-English language literature and the grey literature, perhaps studied by a consortium with participation from multiple countries.

This review has several implications for research and practice. It shows the dearth of recent research on job evaluation methods and outcomes, criteria, and values. Therefore, we recommend that scholars revive research on job evaluation and conduct more studies. This review can serve as a basis for knowledge development, and facilitate the formation of new ideas and directions for the field of job evaluation. Below, we propose the first steps of a research agenda to take the field further. We recommend that practitioners anticipate increasing pressure on their current job evaluation methods. Pressure may come from developments such as pay transparency and equal pay legislation, and increased labor market shortages. We recommend that organizations be open to innovation, evaluate their job evaluation practices, publish best practices, participate in scientific research, and learn from others. Although this review does not directly provide specific recommendations or actions for practice, it may serve as the grounds for future research and thereby contribute to innovation and help to address current problems.

Job Evaluation: A Research Agenda

Based on this scoping review, we propose the following research agenda for starting and extending research.

(a) Empirical research questions:

1. What is the exact use (dissemination and implementation) of the different job evaluation methods? Are there differences between countries, sectors and industries? To what extent are the methods used as designed?
2. What is the validity and reliability of current job evaluation methods and procedures? To what extent are the methods and procedures viewed as reliable by employers and employees? What is the effectiveness of matching and levelling (as different forms of job evaluation methods) compared to point-factor methods? What are the strengths and limitations of (different forms of) job evaluation?
3. What outcomes do job evaluation methods produce, and what are the effects of job evaluation methods on wage inequalities? Are there differences between countries and industries? What explains the variation in (equal pay) outcomes? To what extent are job evaluation and its outcomes accepted as fair and equitable by employees and society? How do citizens weigh the factors in job evaluation and how does that differ from the existing weighting of factors?
4. How have job evaluation methods and criteria evolved and changed over time? How are current job evaluation methods maintained and updated? What methods are used? Specifically, we recommend research comparing criteria across contexts, for instance comparing the development and use of evaluation criteria in different countries and industries. This will provide insights regarding the cultural sensitivity of job evaluation methods.
5. How do job evaluation systems fit with other HR practices? How can job evaluation systems contribute to organizational outcomes and strategy? How do organizations deal with tensions between internal equity and external competitiveness?

(b) Normative research questions:

1. Are job evaluation methods used in the right way?
2. Are the outcomes that job evaluation produces ideal? What can be done better?
3. Should job evaluation methods, criteria and their weighting be modified?

Conclusion

This scoping review of 199 articles found that the literature covered eight themes in the period 1940–2023. The most substantial and substantive research on job evaluation occurred between 1980 and 2010. The number of studies on job evaluation has declined considerably since 2010. This demonstrates that science is falling behind present developments and challenges. This is unexpected and problematic, because the content and organization of work and the labor market are changing. These changes affect job evaluation, but there is hardly any recent research available. At the same time, it is known from grey literature that institutions and governmental organizations are asking for innovation of job evaluation tools and methods. The ideal and benefits of securing equal pay through job evaluation and setting fair wages are still valued and needed. Therefore, we highlight the importance of expanding research on job evaluation and present a research agenda that consists of both empirical and normative research. An important research gap concerns validating current job evaluation methods and criteria against contemporary values and working conditions.

Supplemental Material

Supplemental Material - Mapping the Literature on Job Evaluation: A Scoping Review

Supplemental Material for Mapping the Literature on Job Evaluation: A Scoping Review by Irene van de Glind, Roos Mulder, Agnes Akkerman, Mieke van der Biezen, Jael Bootsma, Evelyn Finnema, Lex Heerma van Voss, Niek Mouter, Jos van Rooij, and Geertje van de Ven in Compensation & Benefits Review.

Footnotes

Author Contributions

IVDG, GVDV, MVDB, RM were involved in the design of the study. IVDG, GVDV, JVR and RM screened titles and abstracts. IVDG, GVDV, RM, JB and JVR extracted data. AA, EF, LHV and NM discussed the first analysis and results. IVDG wrote the first draft of the manuscript, all authors gave their feedback and approved the final version.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Data Availability Statement

The data that support the findings of this scoping review are available from the corresponding author upon reasonable request.

Supplemental Material

Supplemental material for this article is available online.
