Abstract

Young people around the world are increasingly impacted by technology-facilitated harms, yet research shows that teens often do not seek help from adults in their lives to deal with these harms. This article draws data from 25 focus groups with 146 young Canadians (aged 13–18) as they explain why they are reluctant to seek adult help when experiencing technology-facilitated harms. Young Canadians consistently said that adults speak to them in ways that are judgmental, emotionally reactive, and disempowering. To make them more likely to seek help from adults, young people want adults to avoid scare tactic approaches, listen to their perspectives and needs in the aftermath of harm, provide non-judgmental supports, and give them space to openly discuss all the “weird stuff” that they might encounter in digital spaces. These findings underscore the need for adult interventions in young people’s digital lives to shift from fear-driven, judgmental approaches toward balanced, non-judgmental, and youth-centered responses that empower young people’s agency—an imperative for fostering trust, encouraging help-seeking, and developing more effective support systems—and offer critical guidance for educators, community workers, legislators, and policy makers seeking to build useful and responsive structures for youth dealing with technology-facilitated harms.

Introduction

While digital spaces and tools can be used positively by young people to connect with friends, access entertainment, find support, and learn new things, young people can also encounter technology-facilitated harms in those spaces. Technology-facilitated harms can range from acts that are often labeled as “bullying,” such as name calling, rumor spreading, and making fun of someone’s appearance, to those that might rise to the level of violence, such as the nonconsensual distribution of or threats to distribute intimate images, harassment, impersonation, doxing, hate speech, or nonconsensual exposure of someone’s sexuality or gender identity (Bailey, 2016; Bailey et al., 2021; Dodge, 2024a; Dunn, 2021). When young people are impacted by these harms, research shows that many will not feel comfortable seeking help from adults in their lives, especially parents, due to fears of negative responses (Dodge & Lockhart, 2022; Jørgensen et al., 2018; Mishna et al., 2023; Pijlman et al., 2025).

Although there are many situations where young people may be able to navigate technology-facilitated harms well on their own or with peers (Dodge & Lockhart, 2022; Setty, 2019), it is necessary to understand why they might avoid help-seeking from adults even in those scenarios where adult support or guidance could be helpful. In this article, we share the voices of Canadian teens as they explain why they are reluctant to seek adult help when experiencing technology-facilitated harms. Most importantly, this paper discusses what young people said adults could do to create the conditions that make help-seeking more likely.

This article is based on our analysis of data from 25 focus groups with 146 young Canadians, aged 13 to 18, where they discussed harms experienced in digital spaces or using digital tools, ranging from the mundane to the severe. We also discussed where they go for support when things go wrong and what supports they wish were available. Young people across Canada consistently said the messages they hear from adults about digital safety and the responses they receive in the aftermath of technology-facilitated harms can be judgmental (e.g., implying young people deserve the harmful consequences for how they used technology or shared content), emotionally reactive (e.g., increasing levels of distress due to adults’ fears of technology), and disempowering (e.g., reporting incidents to police or other authorities regardless of the desires of the young person). To make help-seeking from adults more likely, young people shared that they wish adults would avoid scare tactic approaches, listen to their perspectives and needs in the aftermath of harm, provide non-judgmental supports, and give them space to openly discuss the “weird stuff” that they might encounter in digital spaces.

These findings will be beneficial for anyone who is working to support young people in their technology-facilitated social lives and should be considered by legislators and policy makers who are interested in creating institutionalized supports for young people. While our findings support existing research on help-seeking regarding technology-facilitated sexual violence (Jørgensen et al., 2018; Mishna et al., 2023; Pijlman et al., 2025), we expand on this work by illuminating young people’s feelings on help-seeking regarding a wider range of technology-facilitated harms. Whereas most existing qualitative research on such harms has been undertaken in the United Kingdom, the United States, and Australia, we build on the existing literature by providing rich qualitative evidence from the Canadian context.

Help-Seeking Behaviors of Those Impacted by Technology-Facilitated Harms

Technology-facilitated harms, like offline harms, can result in a range of impacts, including anxiety, depression, reputational harms, and social isolation (Beran et al., 2015; Henry et al., 2020). Despite this, many impacted people do not seek help or disclose harms, even in more severe cases (Douglass et al., 2020; Horeck et al., 2023; Pijlman et al., 2025; Ruvalcaba & Eaton, 2020). Among young people, disclosing technology-facilitated violence or bullying to an adult is often found to be the “least common coping strategy,” due to fears of having their digital access taken away or being blamed and shamed by adults (Horeck et al., 2023; Jørgensen et al., 2018; Mishna & Van Wert, 2015, p. 36; Mishna et al., 2023). These fears are well-founded, as adult responses to, and education about, these harms regularly engage in blaming and shaming, and digital access is often revoked in arbitrary or disempowering ways (Albury et al., 2017; Dodge, 2024a; Henry et al., 2017; Horeck et al., 2023; Mainwaring et al., 2025; Mishna et al., 2023). In cases of technology-facilitated sexual violence, young people may experience additional reporting barriers and may be especially reluctant to seek help from parents due to the stigmatization of young people’s sexuality (Dodge & Lockhart, 2022). Additionally, research on help-seeking in cases of image-based harassment and abuse among young people shows that young people sometimes do not report to anyone because they fear that adults, especially parents, will respond negatively (e.g., with victim blaming and shaming) or use criminal law in an unhelpful manner (Dodge & Lockhart, 2022; Horeck et al., 2023; Pijlman et al., 2025).

In terms of what facilitates help-seeking, McLoughlin et al. (2024) found that, for those impacted by nonconsensual intimate image distribution, “the absence of stigma informed positive help-seeking experiences and highlights the importance of expecting a non-judgmental reaction” (p. 8). Ray and Henry’s (2025) review of sextortion literature similarly found that victims viewed help-seeking as successful when the person they disclosed to “actively listened, encouraged them to continue sharing, and showed empathy without judgment or accusation” (n.p.). Young people may also be more likely to report if they believe they will be given some agency over how the incident is responded to (Dodge & Lockhart, 2022). These findings highlight that young people might be more willing to seek support if they knew they would be met with a non-judgmental response and receive support or resources without losing control over how the incident is responded to.

Method

This paper draws data from a mixed-methods research project on young people’s experiences of technology-facilitated harms, including the messages they receive about these harms and the supports they currently use and/or wish were available to them. In this article, we analyze data from our 25 focus groups with 146 young people aged 13 to 18 from across Canada. Focus groups were conducted in 2023 and 2024 in rural and urban locations in Alberta, Nova Scotia, Ontario, Quebec, and the Yukon.

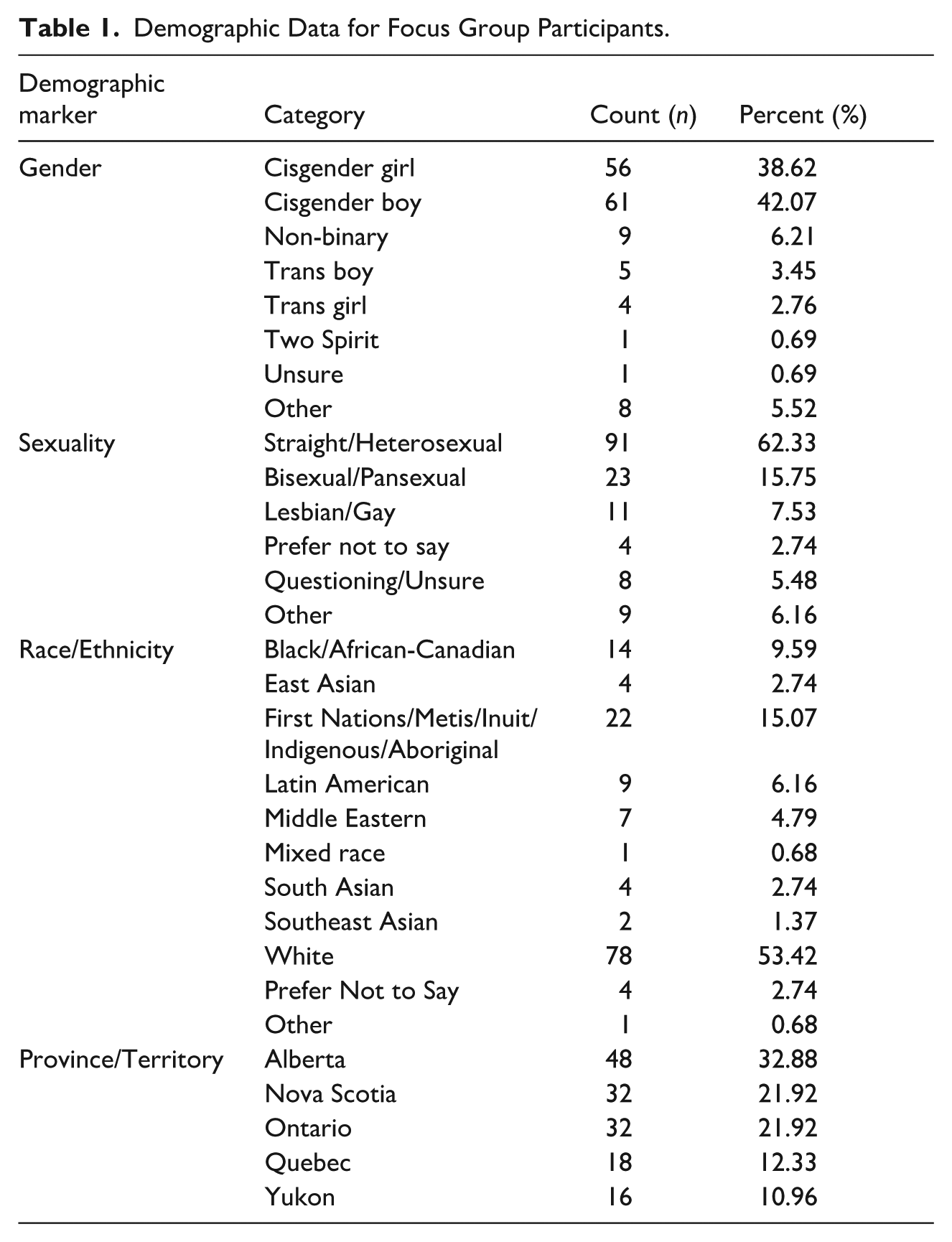

Our focus groups were primarily conducted in partnership with youth-serving organizations. To ensure a diversity of perspectives and experiences, we deliberately partnered with a wide variety of organizations. For example, we heard from rural youth involved in farming clubs, urban youth who visit an LGBTQ+ focused youth centre, boys’ sports teams, rural youth involved in cadets, and youth centres serving low-income and immigrant youth. Our strategy also resulted in hearing from youth with diverse identities. The table below provides information on the characteristics and location of our sample (Table 1).

Table 1. Demographic Data for Focus Group Participants.

The use of arts-based methods in focus groups is part of a growing move toward participatory research processes aligned with collaborative research (Dietzel et al., 2025), in which participants not only provide data for scholars, but produce data with them in meaningful ways (Koro-Ljungberg, 2015). We carried out two arts-based activities. The first used tokens with social media logos and emojis, asking youth to rank the apps according to most frequent use and then to convey their feelings when using those apps. Next, we drew on the methodology developed by Ringrose, Regehr, and Whitehead (2021), in which young people use social media and gaming templates to recreate (in drawing and writing) a common scenario they have witnessed involving digital technologies that caused harm, drama, or discomfort. The focus group guide was semi-structured and encouraged participants to build on the examples shared through their art to discuss their feelings about how harmful these examples are, how peers respond to these scenarios, how (un)helpful adults are when such issues occur, and what rules, policies, or laws they think apply. Focus groups lasted between 45 and 90 minutes, were conducted in English and French, and included between 1 and 11 youth. They were digitally recorded, professionally transcribed verbatim (and for the French focus groups, professionally translated into English), and analyzed using NVivo.

Data Analysis

Focus group data was coded using a grounded theory approach (Charmaz, 2006). A team of five research assistants initially completed open coding of the transcripts to document all emergent themes, resulting in an initial codebook of over 40 codes/themes. The five research assistants and four project leads then met to review and revise the codebook to ensure our shared understanding of the meaning of each code and to reorganize related codes into groups (using child/leaf codes) where necessary. This process resulted in a final codebook with 21 parent codes and 26 child/leaf codes. The research assistants then coded all transcripts, with two research assistants coding each transcript to ensure that no relevant content was missed. This article focuses on the codes titled “messages from adults about tech” and “supports and resources.”

Findings and Discussion, Part I: Negative Perceptions of Adult Responses

Young people told us about a range of technology-facilitated harms they experienced, including receiving unsolicited nude images, being pressured to send nude images, being catfished, witnessing violent and abusive content, being forced into physical fights that were filmed and distributed, being scammed, being the recipient of nasty or mean comments, being “outed,” and having their location digitally tracked which led to physical attacks. Regardless of the specific incident, participants regularly expressed their reluctance to seek help from adults when experiencing these harms. This reluctance was most commonly due to fears of, or experiences with, responses from adults that were judgmental (e.g., engaged in shaming and blaming of the impacted person); emotionally reactive (e.g., expressing strong negative emotions); and/or disempowering (e.g., bringing in authorities, such as police or school principals, without allowing young people to have a say in next steps). Young people felt that adult anxiety about digital spaces results in little opportunity to seek help without adults taking over and escalating emotions and stress, misunderstanding the issue, or presuming what is helpful. Following this discussion, we share what young people want from the adults in their lives to make them more comfortable seeking help.

Making It Worse: Judgmental and Emotionally Reactive Responses From Adults

Many young people discussed how the education they receive on “cyber safety” influences their understanding of the adult world as a judgmental, emotionally reactive and, therefore, unhelpful place for support. This seemed to be especially so regarding discussions of technology-facilitated

[In cyber safety assemblies, they say] “don’t send pictures” and it’s like. . .

They state the obvious. Yeah. Like, don’t send nudes.

But it’s like for the people who do send it, you’re scaring them, you know, and it’s like, “Don’t send nudes because it’s child pornography and you can get this, this and that [punishment].” Like, we know that . . . but is it going to stop people? No. You know? [. . .]

I went to an assembly, and my friend [sends nudes], so I was so scared for her during this time. [. . .] And then, these people, they’re like, “Oh, if you know people that are doing this, they can be charged and [. . .] you should call the police.”

Many educational presentations in Canada frame both consensual and nonconsensual intimate image sharing among minors as

[In sex ed] they talk about nudes.

Yeah? And what are they saying?

Don’t send them.

[. . .] Well, what do they say will happen to you if you send them?

[. . .] Well, they just say it’s going to be really embarrassing. [. . .]

Like, it could get out around school and then you could get bullied.

Yeah. And your reputation will definitely decrease if other people find out about it. That’s basically what they say.

Research has consistently found that scare tactic approaches that shame consensual image sharing are unsuccessful and even harmful, as they reaffirm the stigma toward consensual image sharing that fuels victim blaming and shaming beliefs (Albury et al., 2017; Angelides, 2013; Dobson & Ringrose, 2016; Naezer & van Oosterhout, 2020) and can make young people less likely to seek support if they experience nonconsensual intimate image distribution (Dodge & Lockhart, 2022; Jørgensen et al., 2018; Steeves et al., 2020). Despite the consistent academic critiques of this approach, young Canadians regularly described receiving these messages from schools and their parents. Alex, from an urban focus group, described the shaming message she received from her mom:

My mom’s the one who’s like, “Alex, if you ever send a nude, you are the dumbest person ever.” And I’m like, “Okay thanks.”

Rose and Sarah, in a rural location, reflected how these messages made them avoid seeking parental help when their nude images were shared without consent:

Who do you go to, to figure the situation out [when nude images of you are nonconsensually shared]?

My best friend.

Yeah, best friend.

I feel like Mom will just like, judge me.

Yeah.

My mom would be like, supportive of me. But the first like, five minutes she would be like, “Why are you sending nudes? I told you not to do it. Blah blah blah.” [. . .]

[. . .] I feel like adults, like, some adults can be judgmental and get mad at you for actually sending the picture.

That’s what I’m scared of, people getting mad at me for doing it.

Yeah, I’m always scared of that situation, so I just don’t tell anyone and just tell my friends.

These perspectives echo previous research on technology-facilitated sexual violence that consistently finds high levels of victim blaming and shaming experienced by targets (Mainwaring et al., 2025) and that young people may avoid help-seeking if they feel they will have to manage strong emotional responses or victim blaming from adults (Dodge & Lockhart, 2022; Mishna et al., 2023).

While the above examples focused on judgmental responses to technology-facilitated sexual violence specifically, young people also expressed feeling that parents quickly judged and strongly reacted to other challenging dynamics they encountered in digital spaces. Two groups from a rural setting spoke about these concerns:

[. . .] it’s the yelling and the shaming that gets me, especially from my own parents. Like, that’s what hurts most. [. . .]

They make you feel bad about it, and then it’s like, why would I even try to get help for that if you guys are just going to make me feel like shit?

Yeah, it’s a lecture, and like I know how I messed up, I just want to fix it. I just want that parent support, but I can’t get that because now you’re judging me for something that I did because I’m still a kid, learning things.

Young people expressed that strong emotional reactions from parents leave them unable to have open conversations to explain their perspective on the harm and the support they want. Therefore, adult responses were perceived as often aggravating young people’s distress, rather than helping them de-escalate the situation or put it in perspective:

[In cyber safety education at school] they’re just like, “If this happened, you’re in trouble and your entire life will be ruined. Your future employers will find you and find your old dirt and stuff and like, not hire you and your life will be ruined.” Internet safety talks, they really need to, like, approach it differently.

[The adult reaction can be stressful], if they take it too far and you’re still a child, you’ll think it’s way too big of a deal. [. . .] you’re already scared, and then there’s an adult freaking out, and it’s scaring them.

It is clear that work is needed on better educating and supporting adults to receive these disclosures, informing them about the available resources and supports. Indeed, young people reported that adults often exaggerate digital harms, amplifying fears and responding in emotionally reactive ways that increase young people’s distress. For example, teens told us how many adults tell them “once an image is out there, it’s out there forever,” which is not necessarily true. Large-scale web-crawling and takedown sites, such as Project Arachnid, run by the Canadian Centre for Child Protection, remove intimate images of children through hashing technology—something which most teens (and we suspect adults) were unaware of. This combination of limited knowledge about available resources and supports, along with perceptions that adults are unhelpful and exacerbate distress rather than de-escalating, comforting, or offering hope, leaves young people feeling unsupported.

Disempowering: Escalating to Authorities and Removing Technology

Young people also worried that adults would escalate the issue without their consent, including by bringing in authorities like the police. Young people explained that their fears of automatic escalation from adults are fueled in part by scare-tactic approaches to cyber safety education in their schools that frame these issues as

Like, we have assemblies sometimes, like cyber safety, or cyber bullying or whatever. But it feels so [. . .] scary. Like, “Oh, if you get bullied you need to tell the principal so we can call the police.”

[. . .] maybe [for the older generation] calling the police was probably the first thing that you would do, but now people usually take it into their own hands.

The adult assumption that a police response is needed, even when young people might prefer informal responses, demonstrates how adults are perceived as aggravating an already difficult situation and bringing more attention to incidents that young people may want to remain confidential. We recognize that, depending on the jurisdiction, there may be mandatory legal requirements for adults to report certain types of child abuse or risks of abuse to children. However, many forms of technology-facilitated harms do not reach the threshold for mandatory reporting and, even when reporting is required, adults could better inform young people about mandatory reporting requirements and prepare them for what that could look like in practice. The popularity of scare-tactic educational approaches to digital harms, which are often provided by police or public safety officers and focus on the most severe cases and consequences, can create an intimidating atmosphere that may make young people less likely to seek support (Dodge, 2024a; Oliver & Flicker, 2024).

Young people may also avoid help-seeking due to past experiences of perceived undue escalation from adults. Betty provided a humorous, but illustrative, example of how disempowering it can feel when adults quickly escalate issues without allowing young people to have some say in what level of response they feel is helpful:

[. . .when you confide in an adult] all of a sudden your guidance counselor is calling your parents, and all of a sudden your parents are calling the Mounties, and all of a sudden the Mounties are calling the Navy SEALs. All of a sudden, you’ve got the entire cavalry coming.

Betty depicted how out of control it can feel to have adults escalate issues without asking young people what feels helpful. This lack of control can be especially impactful for those already feeling disempowered. As has been found in research on nonconsensual intimate image distribution, depictions of digital harms as always requiring a strong, and often police-based, response can make young people less likely to seek adult support and more likely to conceal their troubling or challenging digital experiences (Dodge & Lockhart, 2022). Instead, adults could follow a survivor-centered approach that listens to the specific needs of the person impacted. Additionally, while it can be tempting to assume bringing in formal resources, including police, is the required next step, adults should question this mandate. Research shows those impacted by digital harms often prefer informal responses and may have negative experiences with formal systems (Flynn et al., 2023; McLoughlin et al., 2024; Woodlock et al., 2020).

Relatedly, a few young people also spoke about feeling disempowered by a lack of confidentiality from the adults they seek help from. While there may be legal requirements to report certain forms of severe technology-facilitated violence, young people felt they were not informed about the limits of confidentiality, who their experiences might need to be shared with, and why. Additionally, some young people felt that their experiences were passed to other adults through the “rumour mill” in ways that were not in their best interest. Avery, from an urban area, was blindsided and distraught after confiding in a guidance counselor who they believed would keep the information confidential:

[. . .] no one teaches you the rule that the guidance [counsellor] will immediately tell your parents. [. . .] he’s like, “Okay, we’re going to get the vice principal in here.” [. . .] And they called my mom, and my mom is screaming on the other end, the vice principal is like, “That’s too much.” [. . .] Then they’re like, “Okay, this is a gray area about whether or not we can call the cops,” [. . .] like it was too much. [. . .] They will tell everyone. They’ll tell the principal. They’ll tell the teachers. The teachers will tell each other. Okay, I’m letting you know right now because the teachers all knew, okay. And the next day it was crazy [. . .] and really terrible. And so, they call the cops. And then the cops had to come speak to me, my family, and that person’s family. And it was like a week-long thing. And it was a really terrible experience because I had so many tests, but they would keep pulling me out of classes [. . .]. So, everyone, don’t tell the guidance counselor anything.

The feelings of disempowerment are evident in the advice to other focus group participants to not “tell the guidance counselor anything,” demonstrating the need to create more clarity for young people about the availability and limits of confidentiality when disclosing to adults. It also suggests the need for adults to carefully consider who needs to be informed about a given case and how the young person might be appropriately involved in decision-making about next steps.

Finally, young people also said their reluctance to seek adult help, namely parents, was sometimes due to fears that their technology would be withdrawn in overly broad or otherwise inappropriate ways. The following quote from an urban focus group demonstrates this:

If I tell my parent how I get called racial slurs almost daily [in video games], they’re going to take away the games [. . .].

Young people were left feeling that they could not talk to their parents about challenging aspects of their digital social lives, as the response might be to simply blame the technology without engaging with the deeper issue that the young person is

[. . .] And like my dad, [. . .] he’d blame it on me. If I tell him, “Oh, there’s like a school hate page.” He’d be like, “Why are you on Instagram?”

[Parents just say,] “If you don’t want to see, get off it.”

Yeah.

Responses that overemphasize technology as “risky” without recognizing preexisting relational or discriminatory issues leave many young people feeling that adults will simply respond to complex techno-social problems by removing access to digital spaces without addressing the underlying issue. As explained by Dodge (2024a), an overemphasis on technology in technology-facilitated violence and bullying education can also “contribute to the blaming and shaming of targets, as cyber safety approaches often emphasize that the target of harm did not use technology responsibly rather than discussing the ways the perpetrator of harm mistreated someone and the ways our culture might excuse and normalize this behaviour” (p. 136). Likewise, Mishna et al.’s (2023) study of young people’s experiences with receiving unsolicited sexts and requests for sexts found that “some girls believed that out of concern, their parents would monitor their accounts ‘excessively’ or compel them to delete the apps” (p. 11). Therefore, overly focusing on technology’s role and removing it as a response when young people seek help can result in them being less likely to “seek support when they encounter issues online” (Mishna et al., 2023, p. 11; Steeves et al., 2020).

Young people in our study wanted adults to realize that, while some limits on technology use in response to harm may be appropriate, taking away access to technology in an overly broad way can also limit access to the positive aspects of technology, such as connection to friends and access to online supports. For example, Mia described how, after she confided in her mom about a man pressuring her to share nudes on Discord, her mom responded by taking away her phone. This made Mia feel disconnected from the support of her friends and unlikely to confide in her parent in the future:

I kind of wish that if you do one thing, that parents wouldn’t take away the device, cause I’ve had it happen to me. And my mom also sometimes threatens if I don’t, if I have one thing [go wrong], then she will take away my electronics, which is a way to vent my feelings out to others, like my close friends. [. . .] Now I’m afraid to tell her things because I’m afraid she’ll just do that.

It is necessary to trouble responses that simply blame technology and instead engage in critical thinking about both the technological and social dynamics that fuel digital harms (Dodge, 2024a; Ringrose et al., 2024). As Ringrose et al. (2024) explain, “young people are aware of the challenges with social media networks, platforms and apps [. . .], but their own solutions are not to ban phones or take draconian measures that would reduce the important fun and sociable elements of mobile media,” but rather to find better support strategies, more education for perpetrators of harm, and better responses from both schools and social media companies (p. 1072). We recognize this is a serious challenge for parents, particularly as most digital technologies were not designed with children’s best interests in mind, and that apps are removing—rather than investing in—Trust and Safety teams and content moderation.

It is understandable that adults who are trying to protect young people from harm may respond to disclosures by removing technology in broad ways, “calling in the cavalry” and/or becoming emotionally upset and expressing fears of worst-case scenarios. However, our findings, which echo and extend existing research, show that such reactions can discourage young people from seeking support, as they fear adults will worsen the situation through responses that feel (or are) disempowering or judgmental, or that escalate their distress. The following section will detail young people’s suggestions about what adults can do to create an environment where young people feel more comfortable seeking support when they need it.

Findings and Discussion, Part II: What Young People Want

Across our diverse focus groups, young people consistently expressed the desire for non-judgmental supports to help them navigate technology-facilitated harms. Young people shared that adults could encourage help-seeking by: (a) providing safety information that avoids scare tactics in favor of balanced and reassuring discussions of challenging dynamics in digital spaces; (b) empowering young people by listening to their perspective on instances of harm and on the supports they see as useful; (c) creating spaces to openly discuss the “weird stuff” they might encounter in digital spaces rather than providing vague “cyberbullying” education; and (d) both creating more non-judgmental supports provided by adults in their lives and providing confidential and anonymous supports that young people can access when they really need adult guidance but are worried about judgmental responses. The following subsections detail each of these recommendations from young people.

Provide Balanced and Reassuring Safety Messaging

Young people felt that scare tactics and abstinence-based approaches to digital safety send messages that are upsetting, unrealistic, and unhelpful. Instead, they want education that speaks to the realities that digital spaces can provide both connection

It’s like drinking alcohol. Kids are going to want to drink alcohol because they [. . .] know it’s fun and all their friends are doing it. And parents, their responsibility is to make sure they do it safely. You can’t stop your child from doing it [. . .]. I think it’s more important to be like, “Take a sip, try it, and I’m going to watch you, make sure you’re safe.” And that’s the same with social media, it’s like, “If you want to be on social media here’s what you need to do, here’s how you’re safe about it, if anything happens that you are unsure of, like if 30 bots follow you or 30 women named Jessica follow you and you don’t know what to do you come to me about it.” And [. . .] the stricter the parent is, the more sneaky the kid is going to be, so you need to educate the parents to be able to then educate their kids.

Other young people similarly explained that they want guidance from adults that avoids strong emotions and fears and is instead calm, reassuring, and open to their perspectives:

I need you to be able to be a safe person and not have you freaking out. [. . .]

[I want] parents not getting mad at the child for it. [Because if you get mad] then like, it’ll make it feel lonely [. . .]. So, I think that it’s a lot better [to] just be more supportive.

[. . .] I really think they should be like giving some resources to, like, tell you, “It’s okay” and like, “If it happens, like you’ll be okay.”

The messaging young people are asking for here aligns well with research on the most effective pedagogical practices. Critical digital literacy approaches avoid scare tactics and prescriptive rules and instead facilitate conversations with and between young people to help them think critically about the content they consume online, the impact of tech on their physical and mental health, and what useful codes of conduct would look like for the digital/physical realities they live in (Setty, 2024). For example, in their writing on educational approaches to intimate image sharing among young people, Oliver and Flicker (2024) argued that “pedagogical practice could shift to allow students to say what they know and to share their experiences of the cultures and communities they are navigating in their day-to-day lives,” as education can be more effective when students are “given opportunities and information to reach their own conclusions and develop their own [. . .] ethics in collaboration with their teachers, rather than being told what to believe and how to behave” (p. 13). It’s worth noting that these kinds of dynamic conversations are difficult to have in large groups and are better held in small group formats. Echoing Jørgensen et al.’s (2018) research on youth perspectives of education regarding sexting, several young people in our study spoke about how education often failed to engage them because it spoke

Young people expressed wanting education that provided information about tools they could use to prevent harm or to help respond when things go wrong online, rather than messages that try to make them afraid of the consequences of engaging online. For example, several young people expressed not liking how Snapchat automatically makes their location public to everyone, but did not know how to change that setting without adult guidance. Valentia explained that she does not recall getting information about available tools or supports, but only hearing about how terrible the impacts of digital harms can be:

So, I’ve heard kids that like, well, this is coming from my mom, because she’s all crazy about that stuff, where, like, kids kill themselves because they don’t know how to handle it.

Yeah. After they get exposed.

And they’ll be like, 14 years old and will kill themselves in their bedroom because they just can. I think one time my cooking teacher was talking about that, and she said this one girl in her class had to transfer schools because she was getting bullied.

Young people wanted help navigating harm reduction approaches, such as safety tools and privacy settings, rather than scare tactics that frame digital spaces as all bad and focus on digital harms that led to the most tragic outcomes. Therefore, young people want non-judgmental spaces to share the tools and resources that they know about and to ask for the information and support that they need. We recognize that for this to happen, adults and teachers will also need support and training. Unfortunately, research shows that teachers and parents struggle to address online harms and need more guidance (Horeck et al., 2023). For example, in a U.K. study, while most teachers reported receiving some training, nearly 40% felt it was unhelpful in preparing students to respond to online risks and harms (Horeck et al., 2023). In the same study, parents’ digital literacies varied starkly, with 60% to 90% unsure how to change privacy settings across a range of popular apps.

Empowerment Through Listening to Their Perspective on Situations and Responses

Young people want adults to treat them and their concerns seriously, and to listen openly and with genuine curiosity to how

[. . .] to the adult, it’s either nothing or it’s way too much. It’s either [they treat it like it’s] normal and it’s [. . .] just something that happens to everyone, something so little. [Or they treat it like] it is a big deal, and they take it way too far. [. . .] They either give you too little of a reaction and they might get angry at you for overdoing it, or [. . .] on the other side, they’ll get angry at you for taking it as such a little thing.

So, are there situations where you would trust an adult to talk to them about, like some of this kind of stuff that’s happening online? [. . .]

I think it really depends on the situation. Sometimes the other person [doesn’t] help because, say, you just want to talk, they are like, “Oh, we got to call the cops. Oh, we’ve got to do this. . .”

Yeah. So, they escalate it?

Yeah. Or they don’t do anything at all. And then it’s like, well, how the heck am I supposed to get out of the situation? And they’re like, “Well, nothing has happened in this school, so we can’t personally do anything.” It’s either like, “Oh, we have to talk to somebody right now and cause more problems” or “This problem is not for us to deal with because it’s not happening in the school right now.”

Young people wanted adults to go through the experience alongside them, rather than assuming what aspect of a situation is most impactful and what action needs to be taken as a result. While in many cases young people felt that adults overreacted to harms in unhelpful ways, such as the emotional reactivity and disempowering escalations described above, in other cases, young people felt adults underreacted to something that they experienced as very harmful because, from an adult perspective, it was seen as normal. For instance, a young person may think it is no big deal and easy to block a stranger who is messaging them for a sexual purpose, but they might be deeply impacted by a close friend posting a secret they shared in confidence. This demonstrates the importance of listening to young people’s perspectives on where the harm is located for them and why. Young people want adults to be open to their perspective on what responses are needed. For instance, Ruth described the importance of adults who don’t assume what action is needed to “fix” the problem and will listen to them when that is all they need:

I feel like sometimes, based on like the personality and relationship you have with adults, you can find ones, like if you just need to vent and you just need an outlet, there are teachers and people who will just listen, and not cause more problems. But really, you just have to talk to them and find out what kind of a person they are.

As Ruth explained, young people can sometimes stumble upon these adults by chance, but it is not always clear who will be open to hearing their perspectives.

Open Discussions About the “Weird Stuff”

Young people said that adult messaging often focuses on “cyberbullying,” a term that they find both vague and disconnected from many of the harms or, as they often say, “weird stuff” they are navigating in digital spaces. They want help grappling with the “weird stuff” by digging into the social issues (such as issues of identity, power, and sex) and relational dynamics that often underlie digital harms. For instance, in a rural focus group, most participants said they never had the chance to learn about nude image sharing or the other digital harms we discussed in the focus group, but rather learned about “cyberbullying,” which they see as detached from deeper social issues:

[. . .] cause one of the things we think about a lot [. . . is nude image sharing], so when they come in to talk to you about that [at school], what do they tell you?

They don’t talk to us about that.

They haven’t talked to you about it at all?

No, they talk about cyberbullying.

They don’t talk about like sexting or anything like that?

They don’t talk about that, they don’t talk about the weird stuff really. [. . .]

And then they teach us about cyberbullying and that’s all they teach us about every year. [. . .]

In our school, they don’t like do anything to help us, they just say cyberbullying is this, this and this.

That’s what happens, and they never help us, they don’t tell us what to do.

They just research everything for just us to look at.

Oh, okay.

Didn’t really learn anything.

When teachers and your schools are talking to you about cyberbullying, what do they mean about that? Do they mean the kinds of things you talked about here?

They mean bullying except online.

Another group likewise explained that they wanted space to acknowledge and openly discuss the “taboo stuff” that they may encounter in digital spaces because “there’s not going to be a way to block everything.” As this young person stated, adults are not going to be able to protect young people from exposure to all harms, whether it be online or off, so they need space for open conversations about how this content might make them feel and assurances that they can reach out for support if something is troubling them.

Non-Judgmental and Confidential Supports

Young people expressed wanting access to non-judgmental adults with whom they could share their digital worlds and openly talk through difficult situations. Although rare, there were some examples where young people felt they could go to a parent for support without being judged. Mariam felt comfortable seeking help from her mom due to reassurances from her mom that “whatever you do, I’m going to accept it. Like you did it, but people make mistakes.” Samara described feeling comfortable speaking with her mother because she is “open-minded” and attentive to her “point of view.” This trust enabled sustained, open dialogue, with Samara routinely sharing aspects of her digital life to gain her mother’s perspective on online content and behaviors. These examples demonstrate that young people may seek support from parents if they believe the response will be non-judgmental and open to their perspective. Likewise, some young people felt there were occasions where adults at their schools could be trusted. For example, Lucy felt that her guidance counselor and some of her teachers are “really helpful” because they will sit and listen for “however long you need” and will get you help and say, “I’ll support you.” As one recent Canadian study has shown, these adult responses are uncommon (Almanssori et al., 2025). Participants wanted to build trust with adults and have open conversations with fair responses based on the situation, rather than on generalized fears of technology and worst-case scenarios.

Finally, young people called for more confidential support options in situations where they wanted adult guidance but were uncomfortable speaking with someone who might tell their parents. This was especially true in cases involving technology-facilitated sexual violence, where the stigma around discussing sexual topics is strong. In one focus group, young people spoke about how shame, stigma, and discomfort made them reluctant to seek adult help when experiencing digital harms such as sextortion or being inappropriately contacted by adults in digital spaces:

I think most of the reason is because if you told anyone there’d either be like harsh consequences or like they’d have to tell someone. And when you’re in that situation, like you don’t really want to tell anyone, especially if someone’s like blackmailing you for your stuff [. . . or] they are your friend [. . . and] you don’t want this person to be in trouble. Or like, you just don’t want to be in trouble with your parents. Because there were times where I’d [. . .] want to tell an adult, but like [. . .] I know they’d be obligated to tell my parents, and like, you know, my parents aren’t very understanding when it comes to stuff like that, especially when I’m a kid. So, that was definitely the main fuel for not wanting to tell anyone.

I feel like for me [. . .even though I don’t think my parents would be mad] I was still scared of how my parents would view me as weak or gullible or just like, just it’s embarrassing [. . . and] it’s very vulnerable. [. . .] I just, I was so embarrassed, and I couldn’t tell anyone. [. . .].

I would [want to tell] a guidance counsellor who won’t immediately jump the gun and call my parents because that does not help because, they’ll just create a stigma for children who are sexually abused online and sexually exploited online, and children who end up accidentally clicking on porn. Or children who have been doxed. Like they will not tell someone, or they won’t seek help because there will be that fear that their parents will punish them. And there needs to be someone who will help them and won’t immediately tell their parents, or even immediately tell their teachers, because teachers almost always jump the gun and tell their parents. I am lucky that when a certain situation [happened to me that I don’t want to explain. . .] and I told the [. . .] social worker, [. . .] and basically that person went to the teacher. Luckily, the teacher didn’t immediately just jump the gun and tell my parents [. . .]. And I’m glad the teacher decided to [. . .] respect confidentiality. I still haven’t told my parents because I don’t want them to know and be like, [. . .] “I’m going to start restricting where you can go because I don’t want that to happen again.”

These comments demonstrate the importance of informing young people about confidential supports, such as the Canadian supports Need Help Now or Kids Help Phone. Especially in relation to technology-facilitated sexual violence, young people may perceive telling their parents as the most difficult hurdle (Jørgensen et al., 2018) and may not seek help from anyone if they are unaware of entirely confidential options for support.

Conclusion

When adults receive most of their information about young people’s digital lives from news coverage of the most tragic cases (such as those linked to suicide) and fictional portrayals of worst-case scenarios (such as the murder plot of Netflix’s Adolescence), it is unsurprising that they often express strong emotions and jump to scare tactic approaches to try to protect the young people in their lives. However, young people told us that these approaches are backfiring by making them feel that, in most cases, adults are not a safe place to turn for help. Adult responses can often feel judgmental, emotionally reactive, and disempowering. Young people consistently expressed that they are seeking, in some cases desperately, adults who will provide non-judgmental responses that do not assume what is helpful but, rather, let young people have some say in next steps.

To feel more open to help-seeking, young people shared that they are looking for adults who provide balanced portrayals of technology (e.g., demonstrating an understanding of young people’s realities of finding both connection and challenges in digital spaces), non-judgmental responses (e.g., avoiding victim blaming), empowering responses (e.g., allowing young people to have a say in when and how the response might escalate and informing them about when confidentiality may need to be breached), and open discussions about the “weird stuff” they see online (e.g., replacing vague presentations about “cyberbullying” with interactive discussions about troubling or harmful content, including sexual and discriminatory content, that young people are encountering in digital spaces). Young people want adults to listen to their perspective and then help them problem-solve the responses and supports in ways that feel most helpful to them. As adults continue to struggle with precisely how to regulate and support young people’s digitally entangled lives, it is imperative that we listen to young people as they tell us what feels like support to them.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The authors received financial support for this research from Canada’s Social Sciences and Humanities Research Council: Insight Grant #1154711.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the authorship and/or publication of this article.