Abstract

Publisher consolidation, open access, mega-journals, predatory publishing, and other innovations have changed the scholarly information landscape. This paper asks: what issues does the recent increase in the number and types of journals raise for planning? These trends have produced a complex publishing landscape with proliferating journals, new kinds of peer review, and predatory journals that use fraudulent practices but can be hard to identify. Examining the case of literature reviews for evidence-based practice, I argue that article quality has become more uneven, requiring additional assessment. Education can warn about pitfalls. A new-generation Council of Planning Librarians could provide leadership.

The Problem

In the past few decades, academic publications have exploded in numbers. The same period has brought calls for more systematic evidence-based practice (EBP) in urban planning. These two trends seem a perfect coincidence that will bring about positive change—more evidence to help practice.

However, the rapid growth in publishing has changed the nature of published work. Many new journals have prioritized speed of publication over quality control, reduced peer and editorial review, and placed the onus on the reader to assess quality. Some publishers have published hundreds of thousands of articles annually, primarily via a dispersed guest editor model or by reviewing only for methodological soundness rather than contribution (Sharp et al. 2023). Others, often termed predatory journals, have used deceptive practices (Grudniewicz et al. 2019). Many of these are open access (OA), available free on the open web rather than via subscription, and so are not subject to selection and curation by librarians.

This paper asks, what issues are raised for planning by the recent increase in numbers and types of journals? It does this in part by examining the case of those reviewing the literature for EBP. This paper is not about whether to publish in or review for particular types of journals, as important as those issues are. Instead, it poses questions about published work, including new concerns about which existing materials should become part of the evidence base for EBP. Of course, planners have been dealing with thinly reviewed literature, such as planning reports and professional magazines, for years, so one might wonder how this is different. However, recent changes have been substantial, and when faced with a specific article, it is often unclear which changes it represents.

Evidence-based, or evidence-informed, practice takes many forms. While potential evidence is narrowly defined in some more scientific fields, urban planning draws on academic research using a broad range of methods. EBP is part of a more comprehensive planning process that draws on other evidence as well, from local knowledge and technical data to “information generated through deliberative processes” (Davoudi 2006; Krizek, Forsyth, and Slotterback 2009, 467; Rydin et al. 2018; Tate 2020). Reliable and relevant academic research evidence is nonetheless important, and someone needs to synthesize it, often via a literature review. Academic experts may do this, but practitioners may also take it on, particularly now that they can easily access many of the new publications on the open web.

The paper is in three main parts. The first section examines how the landscape of publishers and journals has evolved in recent decades. Publishing has consolidated into fewer, larger publishers with economies of scale. OA funded by article processing charges, as most OA currently is, incentivizes some journals to publish more and reject less. New OA mega-journals use different kinds of peer review. Predatory journals use fraudulent practices but may appear legitimate. In this landscape, the move toward more EBP is complex, as the second section explains. Planners are generalists, meaning they are unlikely to be experts in every subfield relevant to practice. For academics, it is difficult enough to navigate this new landscape; for practitioners trying to read research themselves, OA publications are more accessible than those behind paywalls but raise concerns. Those accessing studies to undertake literature reviews face problems both in selecting the most relevant studies and in assessing their quality, issues that vary with different types of literature reviews. As the third section outlines, this rapidly changing landscape suggests the need for more education for researchers and practitioners about potential pitfalls. A revamped Council of Planning Librarians could take the lead.

Review Methods

This paper is a narrative review critically assessing the state of knowledge on what changes in the landscape of journal publishing mean for urban planning. As explained in a later section, a narrative review is a “scholarly report of a body of literature that includes interpretation and critique…. to provide authoritative argument based on published primary evidence that is convincing to readers” enhancing understanding and theory development (Furley and Goldschmied 2021, 2). As this is an area that has not had much writing in planning and urban studies, it is also exploratory and widely scoped (Spezi et al. 2017).

To undertake it, I searched Google Scholar, Web of Science, and Google, as well as the Society for Scholarly Publishing's blog, The Scholarly Kitchen. The Avery Index and Urban Studies Abstracts did not cover this material. I started by searching for terms related to predatory journals, narrative reviews, and systematic reviews but then expanded to other publishing innovations such as mega-journals, publisher consolidation, and soundness-only peer review. I searched forward and backward, looking at work cited by, and work citing, key articles. Sources included empirical studies of publishing changes; literature reviews in those areas with substantial work, for example, predatory journals; and reports, commentaries, and blog posts by expert commentators, including journal editors and publishers. I also relied on my decades-long experience as an author, editor, associate editor, editorial board member, and reviewer across several urban studies and planning subfields. While health and medicine dominated discussions of such phenomena as systematic reviews and predatory journals, as earlier work also found (Mertkan, Onurkan Aliusta, and Suphi 2021), there was growing interest in the social sciences.

As is typical with narrative reviews, I was not searching for every article in a narrow area where an article count would be relevant. Rather, I sought recent work about planning-relevant trends, a range of views, and pieces representing or reviewing substantial empirical studies or reflective practice. Many of the blind reviewers' questions probed the details of conducting literature reviews in EBP. These were important questions, though this paper uses EBP as one example of an area where new publishing trends affect planning rather than as a main focus.

Finally, this was a tricky review and commentary to write as it deals with shades of gray. While there are disreputable publishers, others are merely questionable, and their status may have changed over time. In this fast-evolving landscape, people, including my friends and colleagues, have made different decisions about editing, publishing in, and using particular publications. As I wrote the review, my thoughts also evolved as some issues became murkier to me and others clearer. I convey some of this complexity in the article.

Significant Changes in Journal Publishing

Since the 1990s, journals have proliferated and, in many cases, commercialized and become more expensive. This has resulted in increasing numbers of journals, consolidated ownership in traditional publishing, expanded fee-paying OA, the rise of mega-journals, and the predatory or questionable journal phenomenon. Several innovations, such as in peer review, have supported these changes. I address these issues below.

Proliferating Journals and Articles

There are tens of thousands of extant academic journals. Only some are in urban studies and planning, but research in those areas is often interdisciplinary. There is no definitive listing of journals, though several partial counts exist (Nishikawa-Pacher 2022). The Web of Science Core Collection, often seen as the most selective of the large databases, includes sciences, social sciences, arts and humanities, and emerging sources. As of 2024, it covered 21,800 journals, up from around 9,000 in 2005 (“Clarivate Reveals World's…” 2024; Jacso 2005). Elsevier's Scopus covered over 29,200 journals as of January 2024, up from about 15,000 in 2000 (Scopus Content | Elsevier n.d.; Thelwall and Sud 2022). These overlapping databases are dominated by English-language publications, which account for 96% and 90% of their documents, respectively (Visser, van Eck, and Waltman 2021).

Largely separate are the journals using the Open Journal Systems platform, started in 2002 from a university base (PKP Timeline, n.d.). By 2022, it had over 25,000 journals publishing at least five items per year, of which only “1.2% are indexed in the Web of Science and 5.7% in Scopus” though 88.3% were in Google Scholar, a benefit of that platform (Khanna et al. 2022, 912). Very few (around 1%) were listed in various predatory journal lists and the majority were free to submit and access. Researchers detected sixty languages (Khanna et al. 2022, 916, 918).

Articles have increased along with journals. A working group on the future of research publication, primarily composed of MIT affiliates, identified a “nearly fivefold increase in the number of articles produced annually from 1995 to 2022” (Sharp et al. 2023, 11). Geographically, a Japanese team found that U.S. scholars published just over 203,000 articles per year in 1998 to 2000 and just over 293,000 per year by 2018 to 2020; in the same period, articles published annually by scholars from China increased from just over 22,500 to over 407,000 (Kanda et al. n.d., 13; Sharp et al. 2023).
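The contrast between these two countries is easier to see as growth ratios. The following is a minimal Python sketch that uses only the rounded figures quoted above; the `growth_factor` helper and the dictionary name are hypothetical, introduced purely for illustration. Because the source describes most counts as "just over" these values, the ratios are approximate.

```python
# Rounded annual article counts by author country, as quoted in the text
# (Kanda et al. n.d.); the source describes most as "just over" these values.
ARTICLES_PER_YEAR = {
    "United States": (203_000, 293_000),  # 1998-2000 vs. 2018-2020
    "China": (22_500, 407_000),
}


def growth_factor(before: float, after: float) -> float:
    """Return how many times larger the later annual count is than the earlier one."""
    return after / before


for country, (before, after) in ARTICLES_PER_YEAR.items():
    print(f"{country}: {growth_factor(before, after):.1f}x")
```

Run as written, it prints about 1.4x for the United States and 18.1x for China: U.S. output grew by less than half, while output from China grew roughly eighteenfold.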

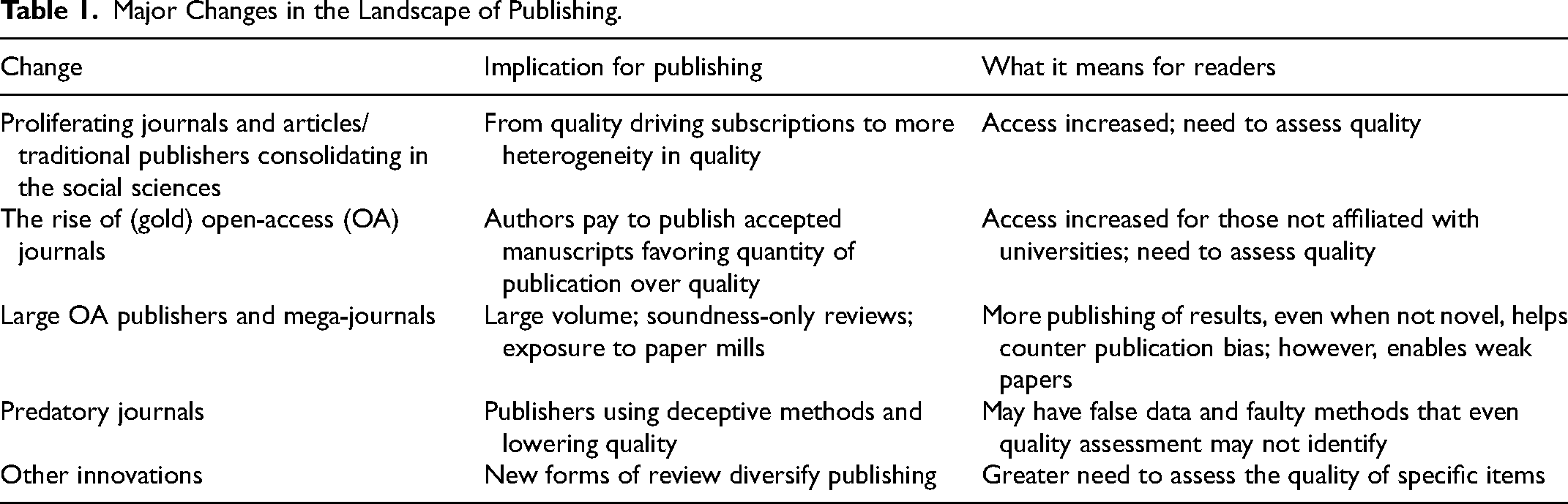

Increasing publication numbers make navigating the information landscape more complex in interdisciplinary fields such as urban planning (Table 1). While this growth provides new opportunities to access published research, it means that searches are likely to turn up pieces in unfamiliar journals. As I explain later, such pieces require additional review.

Major Changes in the Landscape of Publishing.

Traditional Publishers Consolidating in the Social Sciences While New OA Publishers Grow

Regarding publishers, competing trends include traditional publishers buying competitors and a proliferation of new publishers often spurred by the OA model. Both trends have implications for the consistency of journal quality.

There are many publishers. Using web scraping methods, Nishikawa-Pacher (2022) identified 414 publishers each publishing at least fifteen journals. The 100 largest publishers by journal numbers published over 28,000 serial titles and included traditional major publishers as well as seventeen “university-based presses headquartered in research institutions in the Global South,” mainly in Latin America and Indonesia. It also included thirty publishers classed as predatory, publishing 4,517 journals (Nishikawa-Pacher 2022, 455). Another database, Dimensions, includes OA journals in the Directory of OA Journals (DOAJ), PubMed Central, and elsewhere; by 2020, it had over 8,000 publishers (Shu and Larivière 2024).

Most academics, however, are familiar with traditional publishers, whether a scholarly association, a university press, or a legacy commercial publisher. Commercial publishing of academic journals took off after World War II. By the 1990s, commercial publishers produced about 40% of journal “output,” with professional societies and university presses producing about the same share (41%) (Larivière, Haustein, and Mongeon 2015, 2; Tenopir and King 1997).

Since the mid-1990s, the largest commercial publishers have bought smaller commercial publishers and taken over association journals, particularly in the social sciences (Larivière, Haustein, and Mongeon 2015; Sharp et al. 2023). For example, in urban planning, the Journal of the American Planning Association and Journal of Planning Education and Research, formerly independently produced, are now published by Taylor & Francis and Sage, respectively. Major commercial publishers benefit from economies of scale to provide critical infrastructure from peer-review management software to article layout, archive digitization, OA support, and subscription services. They can also bundle journals into discounted packages for libraries, called big deals (Sharp et al. 2023).

Larivière, Haustein, and Mongeon (2015) examined over forty-four million articles published from 1973 to 2013 in the Web of Science, identifying the five major traditional publishers in the social sciences—Taylor and Francis, Elsevier, Wiley, Springer Nature, and Sage. They found that for the social sciences, which includes planning, “while the top five publishers accounted for 15% of papers in 1995, this value reached 66% in 2013” (Larivière, Haustein, and Mongeon 2015, 7). Since that period, consolidation has continued (Crotty 2023).

Consolidation has implications for how much readers can rely on a traditional publisher as a sign of quality (Table 1). Formerly, academic quality was crucial to these publishers' business model and was protected by promoting selective, high-prestige titles. However, they have since acquired a wide range of titles that threaten this selectivity (Wiley's purchase of Hindawi is described below). Furthermore, big deals make it unviable for libraries to select titles for quality and fit.

Publisher consolidation has had mixed effects. It has professionalized journal publications such as those run by associations in planning. The rise of the big deal may have helped less well-known journals and journals in smaller fields, such as urban planning, achieve more readership (Clarke 2018). However, it has likely made quality less consistent, a concern for those writing literature reviews. Publishers also have been criticized for expensive subscriptions, fees, and excessive profits (Frank, Foster, and Pagliari 2023).

The Rise of OA Journals

Into this context came the phenomenon of OA. Emerging at the start of the century, OA means readers do not need to subscribe to journals but can read them online for free. It has involved traditional publishers but has also prompted the rise of new online-only publications.

OA is partly driven by key funders, including governments and foundations, that require OA publication (Frank, Foster, and Pagliari 2023; Sharp et al. 2023). As OA has evolved, a rainbow of options has developed. Green OA puts an accepted manuscript into a depository for free, though the final, copyedited article requires a subscription, and there is often an embargo period of a year or more on the free version. Gold OA involves paying a publication fee upon acceptance. BioMed Central charged the first gold OA article processing charges (APCs) in 2002 (Sharp et al. 2023, 18). Platinum or diamond OA is where a third party, often a university, foundation, or government, pays for the OA (Bates 2017). A study of nineteen million publications found that OA publications were cited by geographically, institutionally, and disciplinarily more diverse authors. However, green OA (free) did better than gold OA (paid), perhaps reflecting the prestige of traditional subscription journals (Huang et al. 2024).

OA journals are diverse. Many new journals are entirely gold OA; in contrast, traditional journals are often hybrid, where a subscription journal allows gold OA for a fee and authors can also use green OA. About 85% of OA fees paid to the big five publishers in the social sciences go to hybrid journals (Butler et al. 2023, 792). Some OA journals are smaller and in specialized areas, such as the Journal of Transport and Land Use (JTLU), supported by the University of Minnesota. Initially a platinum journal, it later adopted a gold model. The University of London-based Open Library of the Humanities has a non-profit model that allows diamond OA and publishes “500 articles across 30 journals each year,” including the journal Architectural Histories (OLH Model n.d.). Micro-publication journals publish concise results (Herman et al. 2020, 218). In planning, Findings is one such journal, with articles limited to 1,000 words plus references and up to three figures and tables (Findings n.d.). Transportation professor David Levinson, who established JTLU, also founded Findings.

An unintended consequence of the calls for OA was that the gold OA model—where authors pay a fee—generated a significant new commercial market for journals. Because commercial OA journals make money from publishing articles, they are incentivized to publish more articles and minimize editorial costs. This contrasts with subscription journals, at least before the big deal, where an individual journal's quality mattered in the market “to attract readers and increase the reputational value of the journal” (Sharp et al. 2023, 18–19).

Publication fees have been controversial (Butler et al. 2023). At the high end, in 2024, Nature (Springer Nature) listed an APC of £8,890/US$12,290/€10,290 for gold OA. A review examining publishing charges by big five publisher journals in the Web of Science found an average APC of nearly $2,000 for OA-only journals and almost $3,000 for hybrid journals in 2015–2018 (Butler et al. 2023, 793). Butler et al. cited $1,000 as a likely high estimate of publishers' actual cost per article. Two Web of Science-listed, double-blind peer-reviewed planning and urban studies journals operate at about this level: Urban Planning (Cogitatio Press, €1,050) and Buildings and Cities (Ubiquity Press, £1,240–1,360) (Editorial Policies | Urban Planning n.d.; Submission Guidelines n.d.). Other estimates of the fees needed are higher, however, particularly for smaller publishers and association journals with substantial remits to nurture emerging scholars (Johnson 2024).
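The gap between average fees and Butler et al.'s cost figure can be expressed as a simple ratio. The sketch below, in Python, uses only the rounded figures quoted above; the constant names and the `fee_to_cost_ratio` helper are hypothetical. Because the averages were "nearly" and "almost" these amounts, and $1,000 is described as a likely high level of cost, the ratios are rough indications rather than findings from the source.

```python
# Figures as reported in the text (Butler et al. 2023), in US dollars.
ESTIMATED_COST_PER_ARTICLE = 1_000  # cited as a likely high estimate of actual cost

AVERAGE_APC_2015_2018 = {
    "OA-only journals": 2_000,  # "nearly $2,000"
    "hybrid journals": 3_000,   # "almost $3,000"
}


def fee_to_cost_ratio(apc: float, cost: float = ESTIMATED_COST_PER_ARTICLE) -> float:
    """Return how many times the estimated per-article cost an average fee represents."""
    return apc / cost


for journal_type, apc in AVERAGE_APC_2015_2018.items():
    print(f"{journal_type}: {fee_to_cost_ratio(apc):.1f}x the estimated cost")
```

On these inputs, average fees run at roughly two to three times the estimated cost; if actual costs are lower than $1,000, the ratios would be higher.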

Fees raise complicated equity issues. Some universities have negotiated minimal or zero publication fees as part of major subscription deals or transformative agreements (different from the big deal) (Sharp et al. 2023). The University of California has many such agreements (OA Publishing Agreements and Discounts n.d.). However, many wealthier private universities do not and expect authors to use research grants (Frank, Foster, and Pagliari 2023). In some countries, such as Australia, most universities have such agreements. In all cases, someone pays for publication; the difference is who and when.

These trends have varied implications for the literature in planning and urban studies. Green OA is widely available, but such documents are often dispersed across university depositories and rely on authors to deposit them. Gold OA is more accessible on the open web but of more uneven quality. Given that most academics have access to libraries, OA likely makes more of a difference to public and professional access to original research. This is relevant for EBP in a professional field such as planning, as practitioners Googling for research articles will be put off by paywalls for subscription articles but may lack the skills to recognize problems with some OA journals, issues I explain below.

Large OA Publishers and Mega-Journals

A related set of changes involves the possibilities of scale that OA brings including mega-journals, often defined as journals publishing more than 2,000 articles per year, and new publishers publishing many articles per year across multiple journals. These have rapidly expanded (Ioannidis, Pezzullo, and Boccia 2023, 1253).

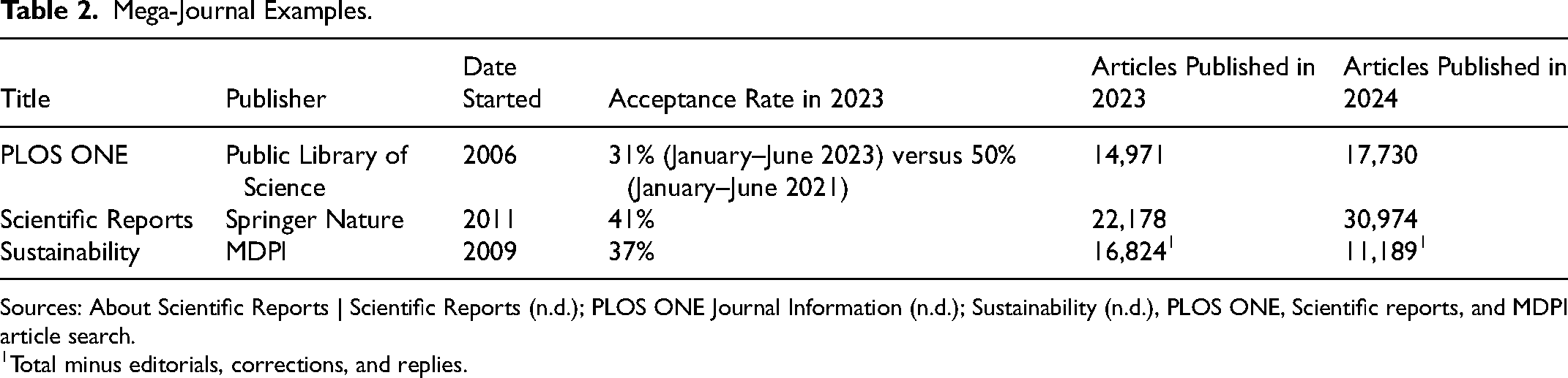

Several mega-journals publish more than 10,000 articles per year. Two of the largest are Scientific Reports and PLOS ONE (Table 2). Each has over 10,000 editorial board members (About Scientific Reports | Scientific Reports n.d.; Editorial Board | PLOS ONE n.d.). They have very wide remits, and keyword searches indicate that both publish hundreds of articles related to urban planning each year. Slightly more focused but still broad is Sustainability, which publishes in urban studies and planning among other areas and has the Urban Land Institute as one of three affiliated societies. In 2023, it published more articles than PLOS ONE, but, due to controversies outlined below, it has since been overtaken (Petrou 2020).

Mega-Journal Examples.

Sources: About Scientific Reports | Scientific Reports (n.d.); PLOS ONE Journal Information (n.d.); Sustainability (n.d.); PLOS ONE, Scientific Reports, and MDPI article searches.

1 Total minus editorials, corrections, and replies.

Many of these journals review articles for soundness only, that is, without assessing “novelty, significance or ... relevance to a notional readership” (Ioannidis, Pezzullo, and Boccia 2023; Journal Information | PLOS ONE n.d.; Sharp et al. 2023; Spezi et al. 2018, 138). As Scientific Reports explains, rather than asking how an article contributes to knowledge, reviewers examine “the technical soundness and scientific validity of your methods, analysis and interpretation, all of which must be appropriate, properly conducted, ethically robust and fully supported by the data” (Editorial Process | Scientific Reports n.d.).

Soundness-only review has benefits and costs. As Spezi et al. (2018) found in a study based on interviews with thirty-one publishers and editors, it is potentially democratizing and less prone to subjective gatekeeping around questions of contribution. It can also encourage the publication of papers that find no effect, reducing publication bias, in which studies that find effects are overrepresented. However, it leaves critical assessment to a post-publication phase in which the scientific community decides the importance of the work (Spezi et al. 2018), and it allows the publication of sound but trivial work. Notably, as of 2024, some mega-journal publishers were using additional criteria, including novelty, although with single-blind review (MDPI | Guidelines for Reviewers n.d.).

Overlapping with, but not the same as, mega-journals is a set of large gold OA publishers. These include MDPI (launched in 1996), Hindawi (founded in 1997, purchased by Wiley in 2021), BMC/BioMed Central (started in 1999, in the Springer Nature group since 2008), and Frontiers (founded in 2007) (About BMC n.d.; Frontiers | History n.d.; MDPI | History n.d.; Peters 2007). By 2022, MDPI was the third-largest scholarly publisher in the world in terms of articles (Sharp et al. 2023), publishing over 300,000 papers in 2022, up from 110,000 in 2019 (Petrou 2023). In 2023, it leveled off at 285,000 articles, with a median time from submission to publication of six weeks (MDPI 2023). It also used the guest editor model prominent among big OA-only publishers (Petrou 2023). For example, in 2023, the MDPI journal Sustainability was set to host 3,512 special issues, or more than nine per day (Grove 2023).
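The scale of these MDPI figures can be checked with simple arithmetic. The Python sketch below uses only the numbers quoted above; the variable names are hypothetical. Because 2022 output was "over" 300,000, the growth figure is a lower bound and the 2022 to 2023 change is approximate.

```python
# MDPI figures as quoted in the text (Petrou 2023; MDPI 2023; Grove 2023).
MDPI_ARTICLES = {2019: 110_000, 2022: 300_000, 2023: 285_000}
SUSTAINABILITY_SPECIAL_ISSUES_2023 = 3_512
DAYS_PER_YEAR = 365

growth_2019_to_2022 = MDPI_ARTICLES[2022] / MDPI_ARTICLES[2019]
change_2022_to_2023 = (MDPI_ARTICLES[2023] - MDPI_ARTICLES[2022]) / MDPI_ARTICLES[2022]
special_issues_per_day = SUSTAINABILITY_SPECIAL_ISSUES_2023 / DAYS_PER_YEAR

print(f"Growth 2019-2022: {growth_2019_to_2022:.1f}x")           # about 2.7x
print(f"Change 2022-2023: {change_2022_to_2023:+.0%}")            # about -5%
print(f"Special issues per day: {special_issues_per_day:.1f}")  # about 9.6
```

The output confirms the "more than nine per day" figure for Sustainability (about 9.6) and shows that the 2023 leveling off was a dip of about 5% after output nearly tripled in three years.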

MDPI and Hindawi have faced substantial controversy. In March 2023, Clarivate, which hosts Web of Science and the Journal Impact Factor, delisted fifty journals, including MDPI's highest-volume publication, the International Journal of Environmental Research and Public Health (IJERPH) (Irfanullah 2024). According to its website, IJERPH had published just under 17,000 articles in 2022, including hundreds related to urban planning topics (Ioannidis, Pezzullo, and Boccia 2023). While Scopus still lists IJERPH, the journal's website indicates that its articles fell dramatically to around 1,700 in 2024 (Petrou 2023). MDPI's Sustainability has shrunk less (Table 2), but it had other issues. In 2019, it had a self-citation rate of over 27%, high for a journal with a broad scope and potentially inflating citation metrics; in 2018 and 2019, close to half the citations in the journal were to other MDPI publications (Oviedo-García 2023).¹ At the same time, MDPI APCs remained high; in 2024, Sustainability charged 2,400 Swiss francs (about US$2,800) and the delisted IJERPH 2,500 Swiss francs.

Clarivate also delisted nineteen Hindawi journals in March 2023, involving titles that published about half its papers in 2022. Hindawi's guest editor model suffered from paper mill submissions, or articles for sale, often with fabricated results, leading to many thousands of retractions and a loss in value for the new owner of the publisher, Wiley (Flintoft et al. 2023; Petrou 2023). Wiley has stopped using the Hindawi brand, and the Wiley CEO stepped down (Kincaid 2023; Oransky 2024).

Frontiers and BMC have generally fared better. Frontiers has received criticism for having an overly automated approach to requesting reviews (Horbach, Ochsner, and Kaltenbrunner 2022). However, in recent years, it has increased its rejection rates and put in controls to avoid some of the more significant problems of paper mills (Petrou 2023).

Mega-journals and large OA publishers raise issues for literature reviews in planning. Peer review can be so fast (for example, a week) that it is hard to get high-quality reviewers. A fundamental problem has been guest editors manipulating peer review and opening journals to paper mills (Flintoft et al. 2023). Wiley, in a review of the Hindawi experience, noted that citation rings or cartels, where authors boost each other’s papers, are also a concern (Flintoft et al. 2023). In traditional journals with stronger editors, some of this may be addressed, though not always! Overall, the main implication is that those undertaking literature reviews for EBP need to do more work to assess quality.

Predatory Journals

Drawing on some of these same trends is the predatory journal phenomenon. First publicized by a librarian, Jeffrey Beall, in 2010, the term predatory has been controversial. Most commentators, however, see a problem related to unscrupulous publishers where checks on academic merit are absent or minimal (Mills and Inouye 2021; Ross-White et al. 2019; Swire-Thompson and Lazer 2022). To help conversations, a working group developed a much-cited definition involving journals that “prioritize self-interest at the expense of scholarship and are characterized by false or misleading information, deviation from best editorial and publication practices, a lack of transparency, and/or the use of aggressive and indiscriminate solicitation practices” (Grudniewicz et al. 2019, 211).

Such journals became possible as a business model due to gold OA, which provides payment on acceptance of the article and does not rely on gatekeeper-subscribers such as librarians. They also benefitted from the complexity of the evolving publications landscape. They typically have names that sound like legitimate journals. Most academics have received hundreds of emailed requests to submit to such journals and might unwittingly submit. A group of education researchers commented: “In a complex, rapidly expanding global science system and regional research landscape, it can be hard for both new and experienced researchers to know how to assess the reputation of scholarly journals” (Mills et al. 2021, 278). However, motivations can be complex as demonstrated by a review of studies of authors (Mills and Inouye 2021). For example, in some countries students need to publish to graduate and such journals provide an outlet.

A key issue is that predatory journals are disproportionately accessible to the public and to professionals, including planners. Predatory journals are OA, whereas many other journals require subscriptions that practitioners typically cannot access. For the same reason, predatory journals are disproportionately accessible to planning students who prefer Google to the library catalog, and students may use them unwittingly.

Lists to Determine More Reputable Journals

Several organizational databases attempt to list more reputable journals. The DOAJ, the Committee on Publication Ethics (COPE), the OA Scholarly Publishing Association, and the World Association of Medical Editors developed “principles of transparency and best practice for scholarly publications” and an associated list (Directory of Open Access Journals – DOAJ n.d.). However, a large team of researchers showed that some predatory journals had made it onto lists such as DOAJ (Grudniewicz et al. 2019). The website Think. Check. Submit., a collaboration of major publishing groups, guides authors about submission (thinkchecksubmit.org).

Commercial entities also provide lists. Many consider the Web of Science a high-quality list, though recent delistings of journals show its limits. Cabells publishes a list of predatory journals as part of an expensive suite of journal analytics (Cabells n.d.-a). Urban studies and planning are not among its disciplinary areas; however, it has a helpful set of criteria for predatory journals related to integrity, peer review, publication practices, indexing, fees, and copyright (Cabells n.d.-b).

Several countries have published warning or rating lists, including China, India, Finland, and Norway (Consortium for Academic and Research Ethics n.d.; Early Journal Warning List n.d.; JUFO Portal n.d.; Norwegian Register for Scientific Journals, Series and Publishers n.d.; Tao 2020). The Norwegian list is free, updated annually, and has relatively transparent criteria. It lists journals that are ranked (1, satisfies requirements; 2, highest level) as well as those that have been rejected (0) or are in doubt (X). The Finnish ranking (JUFO) is similar, though it adds a score of 3 (top level). For example, as of 2024, IJERPH was ranked 1 in the Norwegian Register, meaning it met the minimum criteria, but 0 in the Finnish equivalent. In comparison, in 2024, MDPI's Sustainability was rated 0 in both the Norwegian and Finnish lists, meaning it was considered but did not meet the minimum criteria (About | Norwegian Register n.d.; Early Journal Warning List n.d.).

Such lists can be helpful if imperfect. As can be seen above, the lists give different ratings to the same journals, and the ratings change over time. They can also be complicated to parse. For example, I have published one co-authored commentary, with senior academic co-authors, in a Frontiers journal in a planning-adjacent field. Before agreeing to this submission, I checked that the journal was in the Web of Science Core Collection and did well on key journal metrics. Its Norwegian Register scientific level in 2023 and 2024 (1) was the same as that of typical urban studies journals such as the Journal of Planning Literature. In the Finnish listing in the same two years, it was a 1, compared with a 2 for the Journal of Planning Literature. This is reasonable work to do as a submitting author; writing a literature review would involve scrutinizing the whole reference list in this way.
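Cross-checking a whole reference list against two lists that sometimes disagree is a mechanical first step that could be partly scripted. The following is a minimal Python sketch, assuming a reviewer has hand-copied ratings into a dictionary; the `RATINGS` data structure, the `screen` helper, and the flag wording are all hypothetical, neither register is queried, and no official API is implied. The ratings are only those quoted above and would need rechecking each year, since they change.

```python
# A snapshot of ratings quoted in the text (2024; the Journal of Planning
# Literature figures are for 2023 and 2024). Norwegian Register: 0 = rejected,
# 1 = satisfies requirements, 2 = highest level, "X" = in doubt.
# The Finnish JUFO is described as similar, adding 3 = top level.
RATINGS = {
    "International Journal of Environmental Research and Public Health": {"Norway": 1, "Finland": 0},
    "Sustainability": {"Norway": 0, "Finland": 0},
    "Journal of Planning Literature": {"Norway": 1, "Finland": 2},
}

NOT_MET = (0, "X")  # rejected or in doubt


def screen(journal: str) -> str:
    """Return a coarse flag for one cited journal: a prompt for closer reading, not a verdict."""
    scores = RATINGS.get(journal)
    if not scores:
        return "not listed: check manually"
    flagged = [score in NOT_MET for score in scores.values()]
    if all(flagged):
        return "rejected or in doubt in every list"
    if any(flagged):
        return "lists disagree: scrutinize"
    return "meets minimum criteria in every list"


reference_list = [
    "Sustainability",
    "Journal of Planning Literature",
    "International Journal of Environmental Research and Public Health",
]
for journal in reference_list:
    print(f"{journal}: {screen(journal)}")
```

A flag only marks where closer reading is needed; it says nothing about the quality of an individual article, which still has to be assessed on its own terms.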

Other Innovations in Peer Review

Changes in journal types and numbers are not the only innovations in scholarly publishing in recent decades. Other innovations have tried to keep OA revenue within the same journal family and to redefine peer review.

Cascade journals take articles rejected by other journals, along with their peer reviews, and consider them for publication. Cascading allows publishers to keep articles in-house (Sharp et al. 2023). Selective journals process many articles but accept few, so their revenue is low in an OA APC world. If rejected articles go to journals of the same publisher, the publisher can still retain the revenue, while authors get some prestige from being part of a suite (Spezi et al. 2017). A classic example is the cascade from Nature to Nature Communications to Scientific Reports.

Peer review has also undergone innovation beyond the soundness-only review mentioned earlier. Drawing on various studies, researchers and experienced editors have shown that double-blind peer review has repeatedly been found fairer than less anonymous forms (Forsyth 2021; Fox 2023; Smith et al. 2023). However, when not using soundness-only reviews, many mega-journals use single-blind reviews in which authors are named to reviewers; while this is a tradition in many fields, it raises questions about fairness. Further changes are also under way.

One example is open peer review, where reviews are published alongside the article. Open review may be combined with open identity, where both reviewer and author are named during the review process. As an extension, the overlay journal model uses post-publication peer review, that is, review following the online publication of a preprint. In this model, the article (preprint) is placed in a repository and then receives editorially managed comments and open peer review, with all versions individually citable (About F1000Research | How It Works | Beyond A Research Journal n.d.; Herman et al. 2020). For example, Open Research Europe is a platinum OA overlay journal funded by the European Commission for work from its Horizon programs, which include urban planning. Articles are submitted; checked for eligibility, ethics, and intelligibility; and then typeset, posted, and indexed in Google Scholar (How It Works | Open Research Europe n.d.). Editors then invite peer reviewers. Peer review is open, unblinded, and iterative. After peer review, authors revise the manuscript, and the revised version is indexed in such databases as Scopus (How It Works | Open Research Europe n.d.).

These innovative forms of peer review complicate matters for literature reviewers and readers. For example, open peer review with open identity may expose reviewers to critique and require them to polish their reports more, making it harder to recruit good reviewers (Fox 2021). Reviews in overlay journals may take time to arrive, delaying identification of conceptual or methodological problems. Furthermore, readers may be left to interpret mixed review outcomes, for example, two reviewers approving with reservations and two not approving.

Overall, these trends provide options for authors but make a more complicated landscape for those using the work in evidence-based planning practice. Of course, planners have drawn evidence from a wide variety of publications in the past, including reports. However, the sheer volume and variety of materials add to the literature reviewer's workload.

Literature for EBP

At the same time as these innovations in publication, there has been a move toward using research evidence more transparently in guidance for practice, often dubbed evidence-based or evidence-informed practice (Davoudi 2006; Krizek, Forsyth, and Slotterback 2009; Tate 2020). First used in the health field, the aim is to avoid applying ineffective practices by using “the best available, current, valid and relevant evidence” (Dawes et al. 2005, 4). Of course, planning is multidisciplinary and implementation-oriented, with significant differences among places. As well as using academic research evidence, planners also need to draw on “experience, general professional knowledge, new data collection, formal education, and interactions with various decision makers and community members” (Krizek, Forsyth, and Slotterback 2009, 459; Rydin et al. 2018; Tate 2020). That is, the evidence in EBP is varied. However, one change in recent years has been a move toward using academic research in addition to other forms of evidence, such as technical assessments and the results of community engagement.

Knowledge also may be contested in practice as proponents and opponents of plans and projects critique methods and sources, and their implications, as shown in a study of renewable energy projects (Rydin et al. 2018). This may include the academic research base.

There are many ways to translate quantitative and qualitative scholarly research evidence into guidance, but a typical step is some kind of literature review. An issue relevant to this paper is a gap between the need for literature reviews in a wide variety of areas and researcher capacity, meaning some interested practitioners may try to fill the space with OA articles. Even researchers will struggle, however, with quantity and quality issues. In the following sections, I examine how literature reviews have evolved under EBP and address how the changing information landscape complicates these trends. That is, I am using the example of EBP to indicate one way that changes in publishing intersect with the work of planners.

The Range of Literature Reviews

There are dozens of different terms for types of literature reviews used across various academic fields, including in planning (Grant and Booth 2009; Xiao and Watson 2019). While terminology varies, reviews fall on a spectrum. At one end are systematic reviews that follow a well-defined protocol to identify all studies in a narrow area, assess their quality, and synthesize results, sometimes using statistical methods to calculate the size of effects (Snyder 2019). At the other end of the spectrum are narrative, critical, or integrative reviews that involve more qualitative “interpretation and critique” (Furley and Goldschmied 2021, 2). They use “more creative” literature selections and “combine perspectives” to create taxonomies, theoretical models, and conceptual frameworks (Snyder 2019, 334). Between the two ends is a middle ground of methods, such as scoping reviews or state-of-the-art reviews, that relax some systematic review protocols while still aiming for more transparent methods. Their contribution typically identifies the state of knowledge, historical development, a research agenda, or a theoretical model (Snyder 2019, 334). All have been used in EBP to create research summaries and guidelines.

Some authors propose that any review with an explicit method is a systematic review, with all others being narrative reviews (vom Brocke et al. 2015). In planning, a form of content analysis of primary planning documents related to municipal interventions, not a review of academic research, has also been called a modified systematic review. The aim was to broaden the search for practice precedents, improve comparisons, and thus increase “rigor and transparency” (Agnello et al. 2023, 1).

Among the types of literature review, systematic reviews have attracted much recent attention. Emerging from the health fields in the 1990s to summarize clinical trials and similar studies, they have since expanded to other fields (Boell and Cezec-Kecmanovic 2011; Purssell and McCrae 2020). As the number of empirical articles has grown, so has the number of systematic reviews. Generally, they try to be comprehensive, transparent, and unbiased in selecting literature, diligent in assessing its quality, and logical in synthesizing it (Snyder 2019). Proponents argue this overcomes problems of clinical research, such as inconclusive and contradictory findings and small sample sizes. By rigorously assessing multiple studies, authors hope to understand the bigger picture and the balance of evidence (Boell and Cezec-Kecmanovic 2011). To do this, they work on very narrow questions, drawing on multiple studies that use similar methods and come from a single discipline or a very narrow disciplinary range.

In their strictest form, systematic reviews follow a somewhat formulaic protocol, though they are difficult to do well. An initial step is defining the review's narrow objective, which requires either experience or a prior review. Best practice includes reproducible searches of multiple databases; forward and backward citation searches (also called snowballing) from critical articles, including commentaries and reviews that are not themselves part of the review; asking experts; and searching for work by specific authors. While the method is often associated with database searching, a review of search strategies found that manual, snowball, or forward and backward searches worked as well as or better than database searches in relevance and in the number of final articles found (Wohlin et al. 2022). A systematic review is also meant to include reports and theses (Haddaway et al. 2020). It provides a numerical flow diagram of articles found, assessed for relevance, and included (e.g., Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA), introduced in 2009); uses multiple investigators to extract data; performs a quality assessment related to issues such as sample, methods, or author qualifications; and synthesizes results in narrative form or diagrams (Briscoe, Abbott, and Melendez-Torres 2022; Haddaway et al. 2020; Purssell and McCrae 2020; Uttley et al. 2023; Xiao and Watson 2019).
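To make the idea of a numerical flow diagram concrete, the following minimal sketch tallies the stage totals such a diagram reports: records identified, records screened after removing duplicates, full texts assessed, and studies included. The stage names and all counts are hypothetical assumptions for illustration; the sketch is not the official PRISMA template and does not capture every box that template contains.

```python
from dataclasses import dataclass


@dataclass
class FlowCounts:
    """Hypothetical counts for a PRISMA-style flow diagram (illustrative only)."""
    database_records: int       # records returned by database searches
    other_records: int          # records from snowballing, experts, author searches
    duplicates_removed: int     # duplicates dropped before screening
    excluded_at_screening: int  # excluded on title and abstract
    excluded_at_full_text: int  # excluded after reading the full text


def flow_stages(c: FlowCounts) -> dict:
    """Derive the stage totals a flow diagram reports, checking none goes negative."""
    identified = c.database_records + c.other_records
    screened = identified - c.duplicates_removed
    assessed = screened - c.excluded_at_screening
    included = assessed - c.excluded_at_full_text
    stages = {
        "identified": identified,
        "screened": screened,
        "full_text_assessed": assessed,
        "included": included,
    }
    for name, value in stages.items():
        if value < 0:
            raise ValueError(f"negative count at stage {name!r}: check the exclusions")
    return stages


# Hypothetical review: 840 database hits plus 60 records found by other means.
counts = FlowCounts(840, 60, 150, 690, 38)
for stage, n in flow_stages(counts).items():
    print(f"{stage:>20}: {n}")
```

Keeping the other-methods records (snowballing, experts) as a separate input reflects the point above that these searches can matter as much as database searches, so a report should show where included studies came from.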

Quality Assessment

If there is one issue that changes in publishing raise, it is variation in quality. How to assess research quality and relevance is key to literature reviews. Here systematic reviews offer some lessons about opportunities and challenges.

Systematic reviews require quality assessment. As a first step, many reviewers place studies on a version of a ladder of evidence. At the top are systematic reviews and meta-analyses of randomized controlled trials (RCTs), RCTs, and cohort studies; lower down are case-control studies, cross-sectional surveys, and case studies (Purssell and McCrae 2020, 10). This obviously favors a particular slice of the research world. In response, some propose a less hierarchical matrix, recognizing that different methods perform better at answering different questions, such as those about effectiveness, salience, and satisfaction (Petticrew and Roberts 2003).

Authors then use quality checklists or matrices that differ by study type (Tate 2020; Xiao and Watson 2019). Common criteria include potential for bias in sampling, data collection, analysis, and confounders (Ravensbergen and El-Geneidy 2023; PRISMA Statement n.d.). Such assessment is most appropriate for studies that are similar in research questions, spatial scale, and methods and that take a more narrowly scientific approach. Planning evidence is rarely so homogeneous. In a few planning specialties, however, primarily transportation and health, quality assessments have been possible (Ravensbergen and El-Geneidy 2023). Even in these areas, assessment faces questions about the weighting of criteria, data fabrication, and the like. Such assessment is also very time-consuming and is typically done only on the key empirical studies that are the focus of the review. Findings are then synthesized in terms of the certainty of evidence through various tools (Granholm, Alhazzani, and Møller 2019; Ravensbergen and El-Geneidy 2023).
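The question of how to weight criteria can be illustrated with a small sketch: the same checklist ratings can rank two studies differently depending on the weights chosen. The criteria names follow the bias domains listed above, but the 0 to 2 rating scale, the weights, and the study ratings are hypothetical assumptions, not a published assessment tool.

```python
# Hypothetical checklist ratings: 0 = high risk of bias, 2 = low risk of bias.
CRITERIA = ("sampling", "data_collection", "analysis", "confounders")

studies = {
    "study_a": {"sampling": 2, "data_collection": 2, "analysis": 1, "confounders": 0},
    "study_b": {"sampling": 1, "data_collection": 1, "analysis": 1, "confounders": 1},
}


def weighted_score(ratings, weights):
    """Weighted mean rating rescaled to 0-1, where 1 means lowest risk of bias."""
    total_weight = sum(weights[c] for c in CRITERIA)
    raw = sum(weights[c] * ratings[c] for c in CRITERIA)
    return raw / (2 * total_weight)  # 2 is the maximum rating per criterion


def rank(studies, weights):
    """Order study names from highest to lowest weighted score."""
    return sorted(studies, key=lambda s: weighted_score(studies[s], weights), reverse=True)


equal_weights = {c: 1 for c in CRITERIA}
confounder_heavy = {"sampling": 1, "data_collection": 1, "analysis": 1, "confounders": 3}

print(rank(studies, equal_weights))     # study_a scores 0.625, study_b 0.5
print(rank(studies, confounder_heavy))  # study_b scores 0.5, study_a about 0.417
```

With equal weights, study_a ranks first; weighting confounders more heavily reverses the order. This is one reason a review that scores quality should report its weighting choices alongside its results.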

Because such methods favor certain kinds of empirical studies, authors undertaking narrative reviews typically suggest a looser set of quality dimensions, such as key results, limitations, suitability of methods, and impact (Ferrari 2015). For example, one set of planning guidelines used three levels of certainty: whether a claim came directly from research, was informed by it, or reflected good practice (Forsyth, Salomon, and Smead 2017). Others use indicators related to publishers and journals. For example, in a narrative literature review published in 2019 providing guidance on systematic reviews in planning, the authors “deemed journal articles and books published by reputable publishers as high-quality research,” and excluded most “technical reports and on-line presentations … because of the lack of peer-review process” (Xiao and Watson 2019, 94). However, after several years of changes in publishing, these indicators are no longer as clear. Where would peer-reviewed publications by less reputable publishers fit?

Varied Kinds of Reviews

As is apparent by now, planning literature reviews are varied. While some see systematic reviews as the most rigorous, others have pushed back on the idea that systematic reviews are of higher quality. As Boell and Cezec-Kecmanovic (2011) point out, it may be possible to define a very narrow question in medicine, but “Generally in social sciences research questions are much wider with unclear boundaries” (p. 7). Systematic reviews are poorly suited to some areas, for example, questions related to the historical development of an idea or practice, or more speculative arguments (Ferrari 2015). As one early critic put it in the context of education, “Systematic reviewers often set out to map fairly small fields with secure fences, and do not expect to look over the hedge” (Boell and Cezec-Kecmanovic 2011; MacLure 2007, 52). Reflecting on a systematic review of community mobilization related to HIV/AIDS, Cornish observed that such methods often ignore context, which is key in areas such as planning and international development; they can also devalue professional insight and local wisdom (Cornish 2015; Rydin et al. 2018). Furthermore, rather than endlessly searching for more articles, a deep reading in a hermeneutic cycle can be better (Boell and Cezec-Kecmanovic 2011).

Also emerging are forms of automated analysis, used for some steps in a literature review. Text mining, or text analytics, helps extract information from text through approaches such as natural language processing, which draws on machine learning (IBM 2025). In planning, text mining has been used to classify article topics (Fang and Ewing 2020). An investigation of generative artificial intelligence, or large language models, in business research found they are currently useful mainly as a counterpoint to manual assessment, as they lack transparency and produce inconsistent results (Tingelhoff, Brugger, and Leimeister 2024). Although such tools are some way off from wide use in EBP, they too will need to address the changing information landscape.
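As a toy illustration of the simplest kind of text mining for classifying article topics, the sketch below tags abstracts by counting matches against a hand-built keyword dictionary. It is a hypothetical stand-in, not the method used by Fang and Ewing (2020) and not a machine-learning model; the topics, keywords, and abstracts are invented for illustration, and real tools would add stemming, phrase handling, or trained classifiers.

```python
import re
from collections import Counter

# Hypothetical topic dictionary; a real study would build and validate its own.
TOPIC_KEYWORDS = {
    "transportation": {"transit", "travel", "commute", "vehicle", "walking"},
    "housing": {"housing", "rent", "affordability", "tenure", "eviction"},
    "health": {"health", "physical", "obesity", "asthma", "wellbeing"},
}


def tokenize(text: str) -> list:
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())


def classify(abstract: str) -> str:
    """Return the topic with the most keyword hits, or 'unclassified' if none match.

    Ties go to whichever topic appears first in TOPIC_KEYWORDS.
    """
    counts = Counter(tokenize(abstract))
    scores = {topic: sum(counts[w] for w in words) for topic, words in TOPIC_KEYWORDS.items()}
    best_topic, best_score = max(scores.items(), key=lambda item: item[1])
    return best_topic if best_score > 0 else "unclassified"


for text in [
    "Transit access and commute times shaped travel behavior.",
    "Rent burdens and eviction risk in rental housing.",
    "A history of zoning ordinances.",
]:
    print(classify(text), "<-", text)
```

Exact word matching misses variants such as "rental" for "rent", which hints at why automated classification needs checking against manual assessment.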

Overall, publication changes pose challenges for the full range of literature reviews. Comprehensive searching in systematic reviews is likely to uncover articles with minimal review, and as these multiply, the burden of additional review will grow. Other review types need to add a quality assessment to their workflow. This may be easy enough for focused reviews using a narrow range of methods but challenging for more broadly scoped reviews, as many in planning are.

Literature Reviews Versus the Changing Landscape

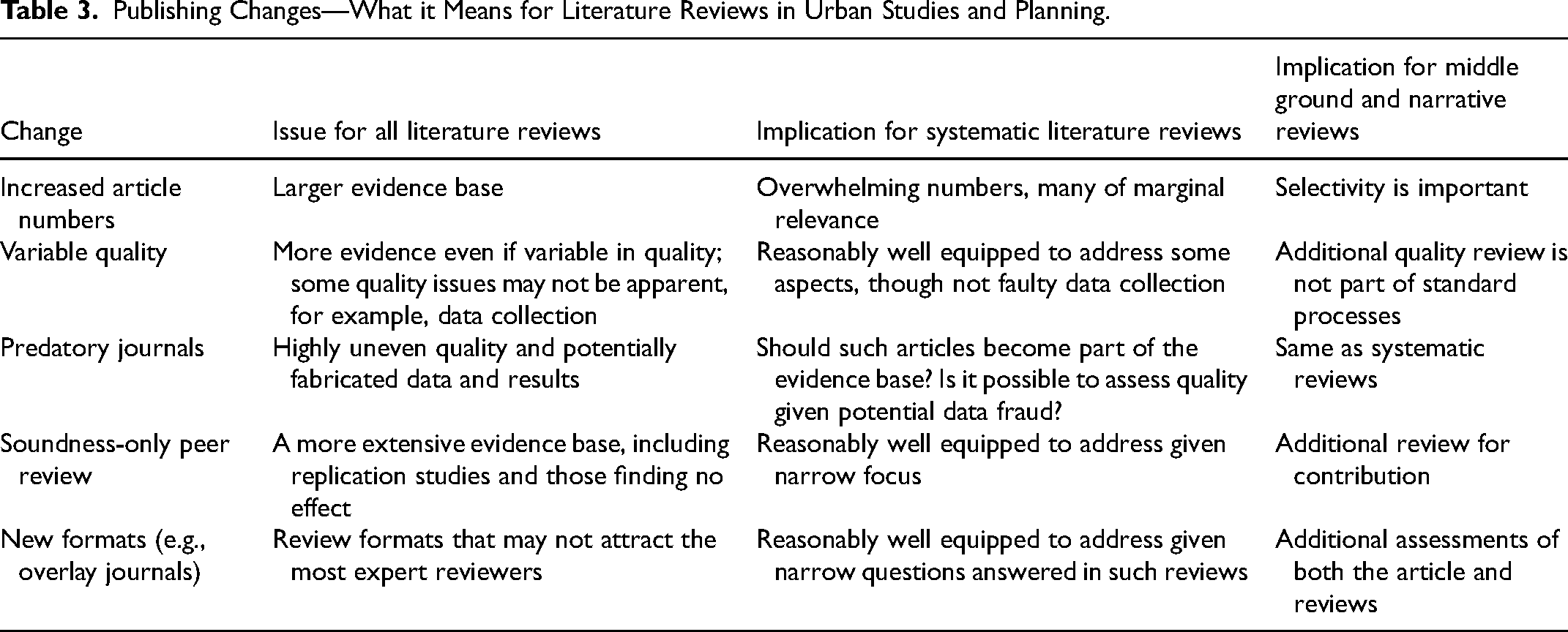

While authors of systematic reviews have received advice on handling predatory journals, these significant changes in publication raise many other questions. Table 3 outlines some of the planning-relevant concerns.

Publishing Changes—What it Means for Literature Reviews in Urban Studies and Planning.

Increased Article Numbers and Variable Quality

Several trends have increased article numbers and variation in quality, even among more traditional publishers. OA has incentivized publishing more. Big deals from consolidated publishers mean libraries no longer select the journals they most want. For literature reviews, this means a more extensive evidence base.

Having more articles to choose from has costs as well as benefits. Literature review methods requiring exhaustive searching, such as systematic reviews, increase the likelihood of finding work of less relevance (vom Brocke et al. 2015). Articles may also have had less peer review (e.g., soundness-only or extremely fast), and the literature review writer may be forced into what is essentially a retroactive peer review. Systematic reviews include quality assessment but are better suited to methodologically similar and generally quantitative studies. Their protocols are not well suited to assessing normative and ethical arguments, which are vital in practice (Mertz 2019).

None of this is totally new. In fields such as urban planning, scholars and practitioners have always drawn on a range of reports, white papers, and the like. Indeed, in this article, I cite editorials and blogs by experts, notably the Scholarly Kitchen, sponsored by the Society for Scholarly Publishing. Even in planning journals, formal peer review is a relatively recent phenomenon; for example, it only reached the Journal of the American Planning Association under Mel Webber's editorship (1958–1962) (Krueckeberg 1980). However, the scale of this issue and speed of change do pose a challenge.

Other factors reinforce these publisher-side changes, such as salami slicing, where authors split what might have been a single article into multiple smaller articles (Yadava 2021, 378). With so many journals, each slice can probably be accepted somewhere. This raises the question of how many of these salami slices to cite. Should authors be rewarded for this practice?

I have faced this challenge in a scoping review where including the range of evidence was a priority. The search process identified a relevant article in a journal that had been removed from Clarivate's listings and the Norwegian register but remained in Scopus. I judged that the article's quality could have been higher, but it had been published before the most severe controversies about the journal. The team had other legitimate publications, and I felt confident assessing its qualitative methods. I included a note about quality issues. However, this does raise complicated questions about inclusion.

Predatory Journals in the Literature Review

In terms of predatory journals, librarians and other experts have provided advice to literature reviewers (Barker et al. 2023; Munn et al. 2021; Rice, Skidmore, and Cobey 2021). Without a consensus, they offer several approaches.

- Authors could exclude articles from all predatory or questionable journals, for example, those ranked 0 on the Norwegian and Finnish lists. This avoids laundering their citations, though it may omit some reasonable studies misplaced in such journals.
- They might undertake additional review of articles from all questionable journals; this might remove low-quality articles, but it would not address data fabrication and processing problems.
- They can consider how sensitive the overall findings are to the results of such articles (Munn et al. 2021).
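For quantitative reviews, the first and third options can be combined in a simple leave-out comparison: pool effect estimates with and without studies from journals flagged as questionable, then compare the results. The sketch below assumes a fixed-effect inverse-variance pooled estimate and hypothetical study data. The `list_level` field is a hypothetical stand-in for a journal's level on the Norwegian or Finnish list, entered by hand rather than looked up from either register.

```python
import math
from dataclasses import dataclass


@dataclass
class Study:
    name: str
    effect: float     # effect estimate (e.g., a standardized mean difference)
    std_error: float  # standard error of that estimate
    list_level: int   # hypothetical: journal level entered by hand; 0 = not recognized


def pooled_effect(studies):
    """Fixed-effect inverse-variance pooled estimate and its standard error."""
    weights = [1 / s.std_error ** 2 for s in studies]
    total = sum(weights)
    estimate = sum(w * s.effect for w, s in zip(weights, studies)) / total
    return estimate, math.sqrt(1 / total)


def sensitivity(studies):
    """Return pooled results for all studies and for studies outside level-0 journals."""
    kept = [s for s in studies if s.list_level > 0]
    if not kept:
        raise ValueError("every study comes from a level-0 journal")
    return pooled_effect(studies), pooled_effect(kept)


studies = [
    Study("A", effect=0.20, std_error=0.10, list_level=1),
    Study("B", effect=0.25, std_error=0.10, list_level=2),
    Study("C", effect=0.90, std_error=0.05, list_level=0),  # precise but flagged
]
(all_est, all_se), (kept_est, kept_se) = sensitivity(studies)
print(f"all studies:      {all_est:.3f} (SE {all_se:.3f})")
print(f"excluding level 0: {kept_est:.3f} (SE {kept_se:.3f})")
```

With these hypothetical numbers, the pooled estimate falls from about 0.68 to about 0.23 when the single flagged study is dropped, because its small standard error gives it most of the weight. A large shift like this signals that conclusions hinge on work from a questionable venue; it cannot, however, detect fabricated data in studies that remain.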

A survey of those conducting systematic reviews found that only a small number thought predatory journal publications were always invalid due to the lack of peer review. However, even those who thought they could be included advocated for substantial and complicated additional assessment (Barker et al. 2023; Granholm, Alhazzani, and Møller 2019). There remains the potential for the underlying data to have errors or even be fabricated, meaning academics’ confidence in determining quality from the additional review might be misplaced (Barker et al. 2023).

Given the quality assessment in systematic reviews, the problem may be limited. One study examined 6,750 citations in Cochrane reviews in the health field and found only fifty-five citations of concern, under 1 percent (Boulos et al. 2022). Another study examined 459 journals from one predatory publisher and found their articles cited in 157 systematic reviews (Ross-White et al. 2019).

It could be more of a problem in narrative or middle-ground reviews or in the background sections of articles. As a reviewer, I have noticed authors citing predatory journals in background sections; I imagine they looked for a citation for a minor point outside their expertise and ended up with something from a predatory journal. As noted earlier, assessing journal quality thoroughly can be time-consuming even for academics, and many practitioners may lack the skills to do so.

Soundness-Only Peer Review and New Formats (e.g., Overlay Journals)

Other innovations, while sweeping through publishing, may be less well known. For example, a paper that passes soundness-only peer review may need further assessment of its novelty or contribution. While systematic reviews can address this, other review types will need additional review. New journal types, such as overlay journals, need more attention to understand the relationship between the article and its published reviews. Overall, these are areas where planning academics and practitioners need more awareness.

Conclusions

What issues are raised for planning by the recent increase in numbers and types of journals? Publisher consolidation, OA journals, mega-journals, large OA publishers, predatory journals, and new kinds of peer review such as the overlay journal and open peer review have changed the scholarly publications landscape. These have led to rapidly increasing numbers of articles, variability in quality even within one publisher, and new formats that may not be well understood (Table 3). Systematic reviews face a huge number of potential articles; other review types need to address quality issues. As the Sharp Committee explained: “Many in the research community remain unaware of the drivers of change in academic publishing and the potential consequences for the research enterprise” (Sharp et al. 2023, 5). Managing these changes involves extra work, such as checking journal listings and undertaking additional reviews of specific source articles.

These innovations may seem to have less to do with planning than with the sciences. However, the OA phenomenon has made some research very accessible on the open web and through Google Scholar. The issue is that OA material is, on average, not the highest-quality research, for reasons to do with publication business models. Much is of high quality, but there is a swath of OA publications at the bottom of cascade models, in more minimally reviewed mega-journals, and even in predatory journals. While many authors of subscription journal articles do publish green OA versions (on archiving sites), these are not always easily found in searches.

Undertaking this review also showed the need for additional research and debate. Planning could benefit from discussion about which kinds of publications to submit to and cite. Who should do the work of flagging problematic work—literature review authors, publishers, or someone else? Critiques of literature reviews could unpack the strengths and blind spots of systematic and other reviews. OA and literature review automation are both topics deserving more planning-oriented examination. After a couple of decades of implementation, it may be time to review forms of evidence in EBP.

For planning practice and research, one implication of the changes in publication is the need for better guidance about the new publication landscape. Both academics and practitioners interested in using research evidence need more accessible information. Until 2000, a Council of Planning Librarians provided leadership (Council of Planning Librarians Bibliographies n.d.). Perhaps it is time to revive the Council of Planning Librarians to address new concerns. Such a council could provide advice to both researchers and practitioners and help to bridge the evidence-practice gap.

Acknowledgments

I would like to thank Dr. Yingying Lyu, anonymous reviewers, and the Editor for comments on the manuscript.

Data Availability

Data are cited in the reference list.

Declaration of Conflicting Interests

The author declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: I am the former editor of the Journal of the American Planning Association, published by Taylor and Francis. I have been an editorial board member for many journals, in recent years largely published by Sage and Taylor and Francis, but in prior years by associations.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Ethical Approval

Ethical approval is not applicable.