Abstract

The applications of artificial intelligence (AI) in radiology are rapidly advancing, with AI algorithms being used in a wide range of disease pathologies and clinical settings. Acute thoracic pathologies including rib fractures, pneumothoraces, and acute pulmonary embolism (PE) are associated with significant morbidity and mortality, and their identification is crucial for prompt treatment. AI models that increase diagnostic accuracy, improve radiologist efficiency, and reduce time to diagnosis of acute abnormalities in the thorax have the potential to significantly improve patient outcomes. The purpose of this review is to summarize the current applications of AI in acute thoracic imaging, highlighting their strengths, limitations, and future research opportunities.

Introduction

The applications of artificial intelligence (AI) in radiology are rapidly advancing. AI algorithms have been developed for segmentation, diagnosis, localization, and report generation in a wide range of diseases. 1 Acute thoracic pathologies are associated with significant morbidity and mortality and their identification is crucial for prompt treatment. AI models which increase accuracy and reduce the time to diagnosis of acute abnormalities in the thorax have the potential to significantly improve patient outcomes. 2

Thoracic trauma is a common presentation to the emergency department, accounting for up to 15% of all trauma and conferring a mortality rate of up to 25%.3,4 Blunt thoracic injury is most commonly associated with rib fractures which in turn pose substantial morbidity and mortality, particularly as the number of rib fractures increases.5,6 Detection of rib fractures is therefore not only important to guide treatment decisions and avoid complications but also for indicating trauma severity. Chest X-ray (CXR) and computed tomography (CT) are the mainstay of diagnosing rib fractures with CXR typically employed as first line screening given its widespread availability and reduced radiation dose. However, up to 50% of rib fractures can be missed on CXR. 7 Although CT is more accurate for the detection of rib fractures, up to 20% of rib fractures are missed on CT. 8

Spontaneous or trauma-related pneumothorax is another common, potentially life threatening presentation to the emergency department. Erect CXR is typically employed as the first line investigation for patients with suspected pneumothorax although superimposed structures or patient rotation can limit interpretation and create opportunity for missed diagnosis. 9 CT is more sensitive for the diagnosis of pneumothorax but confers a higher radiation dose. The early recognition and treatment of pneumothorax is crucial to reduce complications and improve patient outcomes. 10

Acute pulmonary embolism (PE) poses substantial risk of morbidity and mortality; however, due to the non-specific presentation of chest pain and shortness of breath, only a minority of patients investigated are typically diagnosed with PE.11,12 CT pulmonary angiography (CTPA) is the mainstay of diagnosing PE, although it is vulnerable to cardiac or respiratory motion artefact and relies on adequate contrast opacification of the pulmonary arterial system. Acute PE is missed on CTPA in 14% of cases, with an over-diagnosis rate of 10%. 13 Both underdiagnosis and overdiagnosis confer risk to the patient via treatment delays or commitment to unnecessary anticoagulation for prolonged periods of time.

Other diagnostic applications for AI-models in acute thoracic imaging include screening for thoracic aortic dissection and parenchymal lung abnormalities such as acute respiratory distress syndrome (ARDS). A number of AI models have also been evaluated in the triage of patients with blunt chest trauma and for assessing disease severity or risk of complication. Beyond the chest, AI tools have also been developed for a range of cardiovascular imaging applications relevant across the patient journey. 14

AI models for detection of pneumothoraces, rib fractures, acute PE, and other acute disease processes on thoracic imaging have the potential to increase diagnostic test performance, improve radiologist efficiency, and reduce time to final diagnosis. The purpose of this review is to summarize the current applications of AI in acute thoracic imaging, highlighting their strengths, limitations, and future research opportunities.

Clinical Applications

Rib Fractures

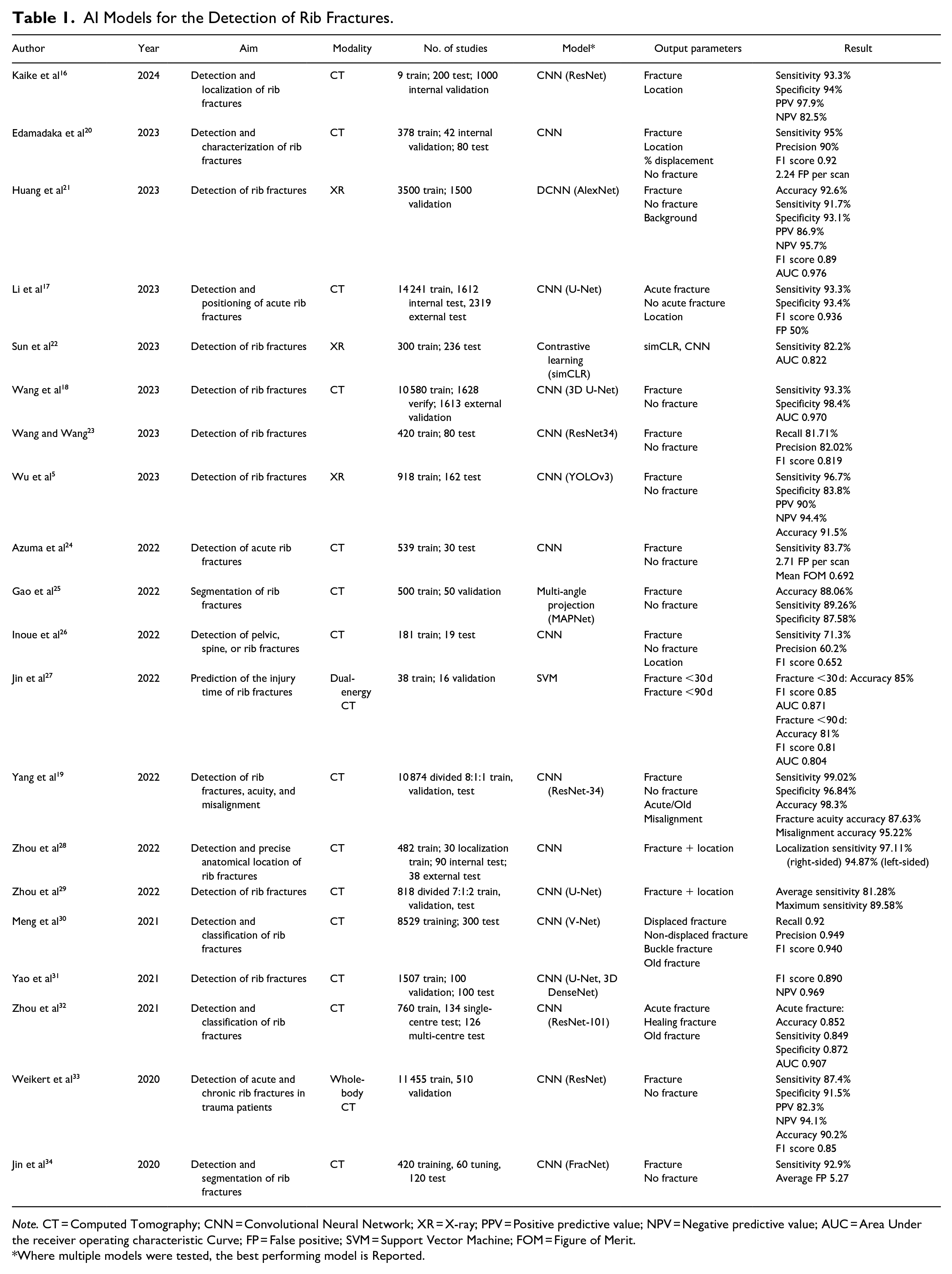

Multiple AI deep learning models, including convolutional neural networks (CNN), have been developed for the identification of rib fractures with reported improvements in rib fracture detection of up to 20%, 15 as outlined in Table 1. A number of these algorithms report sensitivities and specificities in excess of 90% with the models proposed by Kaike et al, Li et al, Wang et al, and Yang et al among the highest performers.16-19

AI Models for the Detection of Rib Fractures.

Note. CT = Computed Tomography; CNN = Convolutional Neural Network; XR = X-ray; PPV = Positive predictive value; NPV = Negative predictive value; AUC = Area Under the receiver operating characteristic Curve; FP = False positive; SVM = Support Vector Machine; FOM = Figure of Merit.

Where multiple models were tested, the best performing model is reported.

AI tools may be useful beyond identifying the presence of rib fractures. FasterRib, for example, specifies the laterality, rib involved, and percentage displacement with a sensitivity for rib fracture detection of 95%. 20 The ResNet-34 model evaluated by Yang et al also describes misalignment and additionally evaluates rib fracture acuity with accuracies of 95.22% and 87.63%, respectively. 19 The discrimination of rib fracture acuity was similarly evaluated by Jin et al using dual-energy gemstone spectral imaging with a sensitivity of 92.9% but an average false positive rate of 5.27%. 27 One study evaluated the detection of both rib and costal cartilage fractures using a commercially available CNN. However, the detection rate was only 58.2% for costal cartilage fracture with a large number of false positives. 35

The benefit of AI-assisted diagnosis of rib fractures has been evaluated at different reader experience levels with a significant improvement in sensitivity at both junior and senior levels. Tan et al report an increase in sensitivity for detection of rib fractures among junior readers with AI-assistance of 22.94% (P < .05). 36 They observed a similar result with experienced readers, in whom sensitivity was 86.47% without AI-assistance and 94.56% with AI-assistance (P < .05). 36 Radiologist and AI model collaboration has also been demonstrated to improve accuracy when compared with the AI model alone. For example, in the prediction of rib fracture injury time, Jin et al report an area under the receiver operating characteristic curve (AUC) of 0.912 for the human-model collaboration compared with an AUC of 0.871 and 0.690 for the AI and human readers respectively. 27

One major potential benefit of AI-models for the detection of rib fractures is reduced interpretation times. Jin et al report a reduction in clinical time dedicated to interpretation of 771 seconds for their junior reader and 718 seconds for their experienced reader when CT images were interpreted in conjunction with the FracNet deep learning algorithm. 34 Zhou et al report similar findings with a CNN model mean interpretation time of 18.66 ± 5.27 seconds compared with a radiologist mean interpretation time of 144.81 ± 47.77 seconds (P < .01). 32 Yao et al report less dramatic improvements with an average reduction in radiologist interpretation time of 65.3 seconds with the use of their proposed three-step CNN, possibly due to their high false positive rate. 31

The extent of pulmonary contusion has prognostic value in the setting of blunt chest trauma; however, manual assessment of the volume affected is time consuming. Choi et al propose a scalable deep learning algorithm to automate calculation of the pulmonary contusion percentage on chest CT in 332 patients with rib fractures. 37 A pretrained CNN (U-Net) model was used to segment lung volumes, subtract pulmonary blood vessels, and segment contused lung parenchyma using binary thresholding, achieving volume estimates within 1% of the manually labelled contusion on batch review. 37 Increased lung parenchyma contusion volumes were associated with higher odds of undergoing mechanical ventilation (odds ratio [OR] 1.5; 95% confidence interval [CI] 1.1-2.1) and prolonged hospitalization (OR 1.6; 95% CI 1.1-2.2) but not mortality (OR 1.1; 95% CI 0.6-2.0). 37 Automated pulmonary contusion calculation using deep learning algorithms has the potential to improve prognostication without the need for manual three-dimensional reconstruction and volumetric calculations, improving reproducibility and reducing interpretation times.
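Once segmentation masks exist, the volumetric step described above reduces to counting voxels. The following is a minimal sketch under stated assumptions, not the pipeline of Choi et al: it assumes the lung and vessel masks have already been produced by a segmentation model, treats the CT volume as a flat list of Hounsfield unit values, and uses a hypothetical attenuation threshold (`threshold_hu`) that is an illustrative placeholder and not a value taken from the study.

```python
def contusion_percentage(hu_values, lung_mask, vessel_mask, threshold_hu=-500.0):
    """Percentage of non-vessel lung voxels with attenuation above threshold_hu.

    hu_values   : flat sequence of voxel attenuations in Hounsfield units
    lung_mask   : flat sequence of booleans, True where the voxel is lung
    vessel_mask : flat sequence of booleans, True where the voxel is vessel
    threshold_hu: hypothetical binary threshold (assumption, not from the study)
    """
    if not (len(hu_values) == len(lung_mask) == len(vessel_mask)):
        raise ValueError("all inputs must have the same number of voxels")

    lung_voxels = 0
    contused_voxels = 0
    for hu, in_lung, in_vessel in zip(hu_values, lung_mask, vessel_mask):
        # Keep only lung parenchyma: inside the lung mask, outside vessels.
        if not in_lung or in_vessel:
            continue
        lung_voxels += 1
        # Binary thresholding: denser-than-threshold parenchyma is counted
        # as contused in this simplified sketch.
        if hu > threshold_hu:
            contused_voxels += 1

    if lung_voxels == 0:
        raise ValueError("no lung voxels remain after vessel subtraction")
    # Voxels share one size, so the voxel ratio equals the volume ratio.
    return 100.0 * contused_voxels / lung_voxels


# Toy example with six voxels (values are invented for illustration).
example_hu = [-850, -800, -300, -200, 40, -100]
example_lung = [True, True, True, True, True, False]
example_vessel = [False, False, False, False, True, False]
print(contusion_percentage(example_hu, example_lung, example_vessel))
```

Because all voxels in a CT volume have the same size, the ratio of voxel counts is equivalent to the ratio of volumes, which is why no voxel spacing is needed in this sketch.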

Typically, in training AI models, the reference standard or “ground truth” is set by one or more interpreting radiologists. Reliance on the radiologist’s sensitivity may generate selection bias whereby studies are incorrectly excluded from the training set. Zhou et al propose using follow-up CT to increase the sensitivity of detecting rib fractures. 8 Within 2 to 3 weeks, fracture healing is evident in the form of new bone formation, increased density of the adjacent bone marrow and fracture ends, thus providing an opportunity to identify subtle fractures that may have been missed on initial CT. 8 They report 127 rib fractures missed on initial CT that were evident on follow-up imaging 3 to 12 weeks after the injury and highlight the potential utility of follow-up CT results for training AI models in the future. 8

One study looked at the assessment of bone mineral density (BMD) on CT in patients with traumatic rib fractures using a deep-learning model. 38 A two-stage model was adopted to segment trabecular bone and predict complete volumetric BMD of the L1 vertebral body retrospectively in 2076 test patients and prospectively in 205 validation patients. Osteoporosis and rib fractures were significantly associated, with a sex- and age-adjusted odds ratio (OR) of 2.7 (95% confidence interval [CI] 1.1-6.3). Lower bone mineral density (osteopenia and osteoporosis) was also associated with an increased number of fractured ribs (P < .001) and a higher proportion of flail chest (P < .001), potentially providing prognostic information. However, when used to predict flail chest in the prospective cohort, BMD alone achieved an AUC value of 0.613 (95% CI 0.582-0.643). Opportunistic BMD assessment using a deep-learning model in patients with rib fractures may allow identification of patients warranting treatment for osteoporosis at an earlier stage; however, this requires further study and validation.

Pneumothorax

Multiple studies have proposed deep learning models for the detection of pneumothoraces on CXR. A meta-analysis of such studies by Katzman et al reports a pooled sensitivity of 87% (95% CI 81%-92%) and specificity of 95% (95% CI 92%-97%) from 23 studies comprising 34 011 patients which evaluated deep learning algorithms for the detection of a pneumothorax on CXR. 39 A similar meta-analysis by Sugibayashi et al pooled results from 68 studies, reporting a sensitivity of 84% (95% CI 79%-89%), specificity of 96% (95% CI 94%-98%), and AUC of 0.97 (95% CI 0.96-0.98) for deep-learning models. 40 The authors also pooled results from 21 studies reporting on physician metrics, resulting in a sensitivity of 85% (95% CI 73%-92%), specificity of 98% (95% CI 95%-99%), and AUC of 0.97 (95% CI 0.96-0.98). 40 Lind Plesner et al evaluated the performance of 4 commercially available AI tools on 78 CXRs with a diagnosis of pneumothorax, reporting a sensitivity of 63% to 90%, specificity of 98% to 100%, and AUC of 0.89 to 0.97. 41 The AI tools performed similarly to the human readers within these parameters; however, the false positive rate for the AI models ranged from 1.1% to 2.4% compared with 0.2% for the human reader (P < .01). 41 Monti et al retrospectively evaluate the performance of GLEAMER ChestView (v1.3), a commercially available AI tool which labels CXRs as positive, doubtful, or negative for pneumothorax. 9 The study cohort was heterogeneous, including patients imaged in the emergency department as well as inpatients and outpatients, and included all CXRs regardless of positioning. They report an overall accuracy of the AI model for detecting pneumothoraces comparable to human readers (74% vs 77%; P = .355) with higher specificity (93% vs 85%; P = .034) although lower sensitivity (66% vs 73%; P = .040). 9 Novak et al report similar performance parameters with a commercially available AI tool for the detection of pneumothorax on CXR; however, the difference in mean reporting time with and without the AI tool was not significant (30.2 seconds per image without AI, 26.9 seconds per image with AI; P > .05). 42 These studies demonstrate the potential for AI tools to triage CXRs for reporting or to act as a second reader to improve detection rates for pneumothoraces.39,40 However, future training should focus on reducing the number of false positives.

Chest radiography should be performed in the erect position to maximize the likelihood of detecting pneumothorax. 43 However, supine radiographs are often obtained in the emergency department or intensive care unit when patient mobility is limited, despite a reduction in sensitivity for the detection of a pneumothorax. 10 Kirkpatrick et al report that supine anteroposterior CXR has a sensitivity of 20.9% for the detection of pneumothorax in 225 trauma patients. 44 Wang et al propose a deep learning-based computer aided diagnosis (CAD) system to detect and localize pneumothorax on portable supine CXR. 10 The authors developed 2 separate CAD systems, one detection-based and one segmentation-based. The detection-based CAD system achieved a sensitivity of 68.7% (95% CI 59.8%-77.6%), specificity of 99.1% (95% CI 98.4%-99.6%), and AUC of 0.940 (95% CI 0.907-0.967), whereas the segmentation-based CAD system achieved a sensitivity of 92.6% (95% CI 87.4%-97.2%), specificity of 92.6% (95% CI 90.9%-94.2%), and AUC of 0.979 (95% CI 0.963-0.991). Performance was similar to that of the radiology reports: sensitivity 91.4% (95% CI 86.2%-96.1%), specificity 99.2% (95% CI 98.6%-99.7%), and AUC of 0.953 (95% CI 0.927-0.976). Smaller pneumothoraces were more difficult to detect; however, the segmentation-based CAD system maintained an AUC of 0.964 (95% CI 0.942-0.981) and a negative predictive value of 99.2% (95% CI 98.6%-99.8%).
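Predictive values such as the negative predictive value quoted above depend on disease prevalence as well as on sensitivity and specificity. As a worked illustration, the sketch below applies Bayes' rule to a given sensitivity and specificity. The prevalence used in the usage example is an assumed figure chosen for illustration only and is not taken from Wang et al; the function name is likewise hypothetical.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Return (PPV, NPV) for a test applied at a given disease prevalence.

    All arguments are proportions between 0 and 1.
    """
    for name, value in (("sensitivity", sensitivity), ("specificity", specificity)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must lie between 0 and 1")
    if not 0.0 < prevalence < 1.0:
        raise ValueError("prevalence must lie strictly between 0 and 1")

    # Expected proportions of the tested population in each cell
    # of the two-by-two table.
    true_pos = sensitivity * prevalence
    false_neg = (1.0 - sensitivity) * prevalence
    true_neg = specificity * (1.0 - prevalence)
    false_pos = (1.0 - specificity) * (1.0 - prevalence)

    test_pos = true_pos + false_pos
    test_neg = true_neg + false_neg
    ppv = true_pos / test_pos if test_pos > 0 else float("nan")
    npv = true_neg / test_neg if test_neg > 0 else float("nan")
    return ppv, npv


# Usage: sensitivity and specificity of 92.6% at an ASSUMED 10% prevalence.
ppv, npv = predictive_values(0.926, 0.926, prevalence=0.10)
print(f"PPV = {ppv:.3f}, NPV = {npv:.3f}")
```

The same sensitivity and specificity yield a lower positive predictive value as prevalence falls, which is one reason false positive rates matter when AI tools are used to triage low-prevalence populations.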

The training dataset used for the development of AI models is typically annotated either from clinical report data or manually by expert readers. Rueckel et al hypothesize that the noisy datasets with limited annotation derived from radiology reports, which are often used for algorithm training, are strongly influenced by confounding factors such as chest tubes and pneumothorax size. 45 They performed subgroup analysis of the performance of 2 AI algorithms (CheXNet and Algorithm 1.5) on a large, manually annotated dataset comprising 1476 cases of unilateral pneumothorax, 176 cases of bilateral pneumothorax, and 4782 control cases without pneumothorax. The presence of a chest tube was identified as a significant confounding factor with near total elimination of the CheXNet algorithm's discriminative power, despite published AUCs of up to 0.8887 with this model. 45 Chest tubes were present in more than 30% of the pneumothorax-negative group and their impact on algorithm performance is further evidenced by an AUC increase of approximately 0.05 with their exclusion. 45 However, pneumothorax-positive CXRs with chest tubes were significantly more likely to be detected than similar cases without chest tubes. 45 This evidence suggests AI models are not yet sufficiently trained to monitor pneumothorax resolution with chest tubes in situ and highlights the need for comprehensive annotation in future algorithm training datasets. Seah et al assessed the performance of a commercially available CNN for the detection of tension and simple pneumothoraces in a large dataset of 2557 CXRs. 46 Subgroup analysis was performed to determine the model’s performance in non-erect CXRs and those without subcutaneous emphysema, intercostal drains, or fractures. No statistically significant difference in performance was identified in any of the subgroups (AUC 0.979-0.998), demonstrating the ability of a comprehensively trained CNN algorithm to withstand hidden stratification. 46 Retraining AI models that were initially trained on open-source datasets using real-world data has been proposed as a method of improving their translational accuracy. Kitamura et al report an increase in AUC to 0.90 from 0.59 after retraining with 841 institutional CXRs; however, manual annotation of a large training dataset prior to implementation would slow the integration of AI models in clinical practice. 47 Yoon et al tested the impact of varying imaging contrast levels on algorithm performance and found reduced accuracy when contrast levels in the test dataset differed from those of the training dataset. 48 This suggests training with various contrast levels may be important in preparing AI models for real-world interpretation.

AI models for the detection of a pneumothorax on CT are much less numerous than those designed for use on CXR, likely due to the excellent visualization of pneumothoraces on CT and low false negative rates. Two groups, however, propose AI models to prioritize reporting for patients with suspected pneumothorax who undergo CT.49,50 Röhrich et al trained a deep residual UNet to identify and quantify pneumothorax on CT with a sensitivity of 95.8%, specificity of 99.4%, and AUC of 0.979. 49 Li et al developed an eight-layer CNN to identify and locate pneumothorax on CT, achieving a sensitivity of 100% and specificity of 82.5%. 50 These studies demonstrate AI model accuracy sufficient to be useful in rapid triage, potentially reducing the time to notification of this essential finding. An additional application for AI models in the diagnosis of pneumothorax on CT is automated volumetric assessment. Manual volumetric calculations are not feasible in most clinical settings and pneumothorax size is often estimated. Automated volumetry using AI has shown excellent performance when compared with manual volumetry, yielding correlation coefficients of .999, .996, and .996 by Cai et al, Do et al, and Röhrich et al respectively.49,51,52

Evaluation for pneumothoraces in the emergency department prior to CXR or CT is often performed using point-of-care lung ultrasound (LUS) with high negative predictive values potentially reducing the need for further radiological investigations. 53 However, its performance is highly user dependent and AI models for real-time detection of absent lung sliding have been proposed to improve diagnostic accuracy and reproducibility. A deep neural network binary classifier proposed by VanBerlo et al was trained on 2535 LUS clips from 614 patients and tested on 540 clips from 124 patients with a sensitivity of 93.5%, specificity of 87.3%, and an AUC of 0.973. 54 Further research from the same group prospectively assessed the performance of this AI assisted LUS model for real time recognition of absent lung sliding with a sensitivity of 92.1% (95% CI 79.2%-97.3%), specificity of 80.2% (95% CI 73.5%-85.6%), and AUC of 0.885 (95% CI 0.828-0.956). 53 Furthermore, Yang et al report diagnostic efficiency of intelligent LUS, which utilizes AI to identify the pleural line, comparable to that of portable CXR; intelligent LUS sensitivity 79.4% and specificity 85.4% versus portable CXR sensitivity 82.4% and specificity 80.5%. 55 Real-time AI-assisted LUS in the emergency department setting appears to be a feasible adjunct to clinical exam without exposure to radiation and may avoid CXR or CT particularly where the pretest probability is low.

Pulmonary Embolus

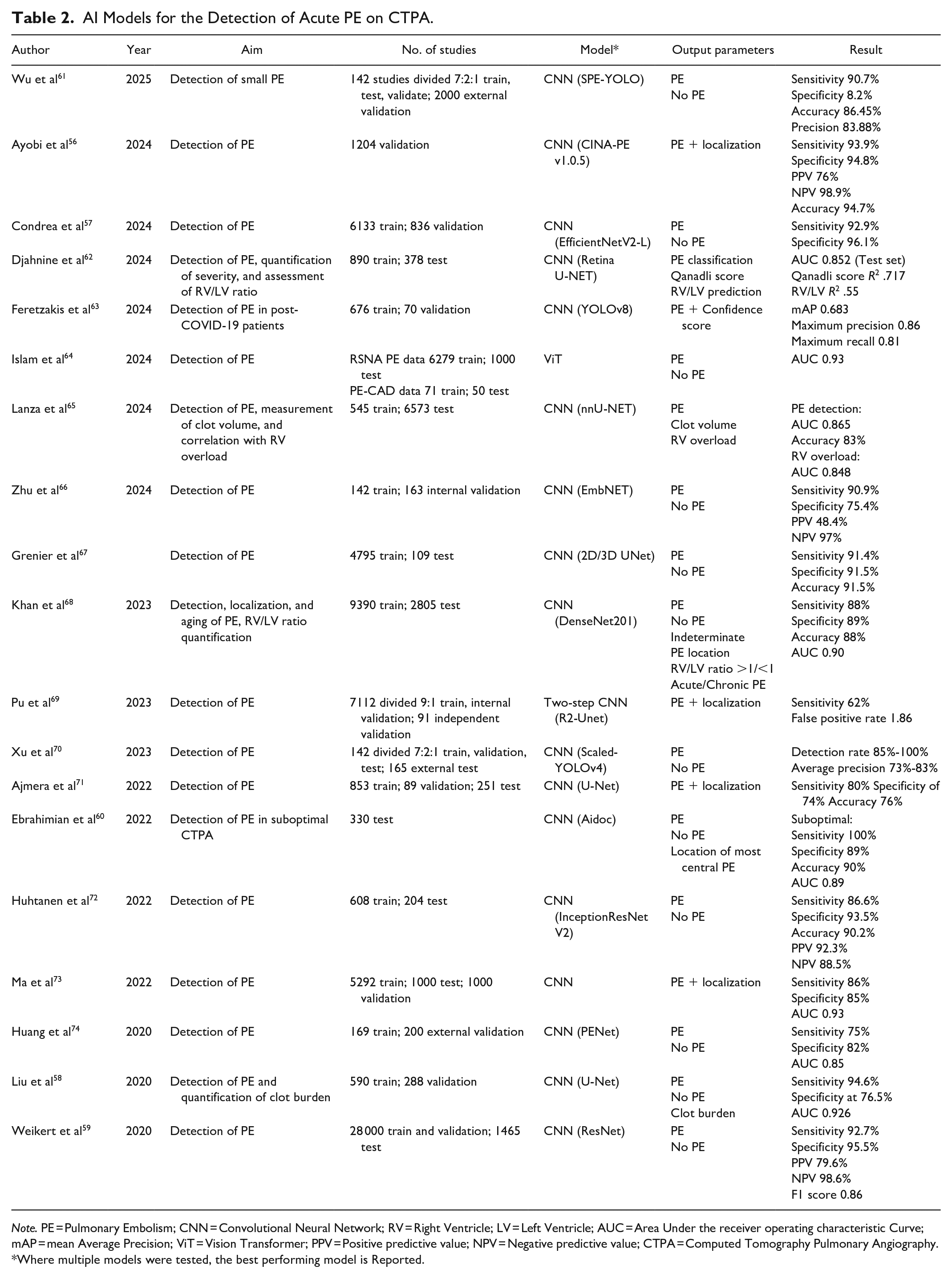

Multiple AI models have been developed for the detection of acute PE on dedicated CTPA as outlined in Table 2. AI models for the detection of incidental PE on non-dedicated CT are outside the scope of this review and excluded. Among the highest performing algorithms are those proposed by Ayobi et al, Condrea et al, Liu et al, and Weikert et al, each achieving a sensitivity of greater than 90% (range 92.7%-94.6%).56-59 Liu et al report a CNN with the highest sensitivity of 94.6%; however, specificity was 76.5%. The other 3 algorithms report higher specificities, exceeding 90% (range 94.8%-96.1%). Poor contrast opacification and streak or motion artefacts can limit the radiologist’s assessment for PE on CTPA. Ebrahimian et al report similar performance of their AI algorithm on suboptimal CTPA (sensitivity 100%; specificity 89%; AUC 0.89) and optimal CTPA (sensitivity 96%; specificity 92%; AUC 0.87), highlighting another area in which AI tools could improve diagnostic performance. 60

AI Models for the Detection of Acute PE on CTPA.

Note. PE = Pulmonary Embolism; CNN = Convolutional Neural Network; RV = Right Ventricle; LV = Left Ventricle; AUC = Area Under the receiver operating characteristic Curve; mAP = mean Average Precision; ViT = Vision Transformer; PPV = Positive predictive value; NPV = Negative predictive value; CTPA = Computed Tomography Pulmonary Angiography.

Where multiple models were tested, the best performing model is reported.

Vallée et al compare the diagnostic performance of radiology residents with and without a deep learning based AI tool for the detection of PE, identifying a significant increase in sensitivity (92.5% vs 81.7%; P < .001) and AUC (0.958 vs 0.897; P < .001). 75 Wittenberg et al report similar findings assessing the use of AI in an on call setting with high negative predictive values providing reassurance to less experienced readers; however, the false positive rate was 4.7 per scan. 76 Müller-Peltzer et al also assessed the translation of AI models in a real world setting, testing the performance of a commercially available computer-based algorithm for detecting PE in patients presenting to the emergency department. 77 The algorithm performed poorly in this setting with a sensitivity of 47% on the lobar level and an average of 2.25 false positive findings per study. 77 These results highlight the need for continued algorithm training to reduce false positive rates in real world datasets. Multiple studies have evaluated deep learning algorithms for the automatic detection of PE in order to triage the study for reporting. Batra et al evaluated the impact of an AI tool that reprioritised potentially positive CTPA examinations to the top of the radiologist’s worklist. 78 They identified a significant improvement in report turnaround time with the use of the AI tool, reporting a mean reduction of 12.3 minutes (95% CI 0.6-26.0 minutes). 78 Rothenberg et al also identified a reduction in mean wait times from 21.5 to 11.3 minutes (P < .001) with the use of an AI triage system; however, the AI tool had no significant impact on diagnostic accuracy. 79

Many of the AI models are designed solely to detect PE, while others have also evaluated localization and laterality.68,71 Others have additional output parameters including quantification of clot burden and identification of right ventricular strain. The Qanadli index (QI) is a scoring system used to determine the degree of vascular obstruction as a surrogate for PE severity.80,81 In most clinical settings, routine calculation of the QI is not feasible as it is time-consuming. Djahnine et al developed an AI model which estimates the QI from blood clot volume, reporting an R² value of .55 on the training set and .717 (95% CI 0.668-0.760) on the test set. 62 Liu et al highlight the practical utility of automatic clot volumetry for estimation of the QI, reporting a measuring time of 12.9 seconds for the AI model compared with 9.8 minutes for manual calculation, with a correlation coefficient (r) reaching .825. 58 Lanza et al also trained a CNN to determine blood clot volume, using this parameter as an indicator of PE presence or absence. 65 They report a median clot volume of 1 μL in PE negative cases and 345 μL in PE positive cases, identifying a statistically significant correlation between clot volume and both the presence of PE and the right ventricle (RV) to left ventricle (LV) ratio (P < .0001), 65 further highlighting the potential for automated clot volume assessment in both diagnosis and prognosis of acute PE.
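For context, the manual QI is commonly described as Σ(n × d)/40 × 100, where n is the number of segmental arteries arising distal to the most proximal thrombus (10 per lung, 20 in total) and d is the degree of obstruction (0 = none, 1 = partial, 2 = complete). The sketch below illustrates only that manual definition; it does not reproduce the clot volume-based estimation used by Djahnine et al or Liu et al, and the function and argument names are hypothetical.

```python
def qanadli_index(thrombi):
    """Manual Qanadli obstruction index as a percentage (0 to 100).

    thrombi: iterable of (n, d) pairs, one per scored thrombus, where
      n = number of segmental arteries supplied distal to the thrombus
      d = degree of obstruction (0 none, 1 partial, 2 complete)
    """
    total_segments = 0
    weighted_score = 0
    for n, d in thrombi:
        if n < 1 or d not in (0, 1, 2):
            raise ValueError("n must be >= 1 and d must be 0, 1, or 2")
        total_segments += n
        weighted_score += n * d
    # Both lungs together contain 20 segmental arteries in this scheme.
    if total_segments > 20:
        raise ValueError("scored segments exceed the 20 segmental arteries")
    # Maximum possible score is 20 segments x 2 (complete obstruction) = 40.
    return 100.0 * weighted_score / 40


# Invented example: one partially obstructed segmental artery and one
# completely obstructed artery supplying three segments.
print(qanadli_index([(1, 1), (3, 2)]))
```

Each vessel must be inspected and scored by hand, which is consistent with the manual calculation times reported above and helps explain the interest in volume-based surrogates.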

Right heart strain in the setting of acute PE is a poor prognostic indicator often assessed on CTPA by calculating the RV/LV ratio, with an RV/LV ratio >1 to 1.5 indicating RV dilation. 82 This is a reasonably quick and reproducible manual calculation. 82 Nevertheless, it has been incorporated in a number of AI algorithms. Foley et al report strong agreement between automatic and manual RV/LV ratio calculations in 88 PE positive CTPA studies (intraclass correlation coefficient .83) and identified an RV/LV ratio of 1.18 as an optimal cut-off for prediction of 30-day mortality with a sensitivity of 100% and specificity of 54%. 83 Other studies assessing right heart strain on CTPA include Djahnine et al, who report an R² of .55 for automatic versus manual RV/LV ratio calculations, and Lanza et al, who achieved an AUC of 0.848 for the prediction of RV overload. 65 Cahan et al propose a weakly supervised AI model (SANet) for assessment of right heart strain on CTPA with a sensitivity of 87%, specificity of 83.7%, AUC of 0.88, and NPV of 93.2%. 84 They also demonstrate the model’s generalizability, achieving similar performance parameters for flattening of the interventricular septum (sensitivity 82.7%, specificity 81%, AUC 0.83, NPV 83%) and reflux of contrast into the inferior vena cava (sensitivity 84%, specificity 74.7%, AUC 0.871, NPV 89.7%). 84 While this may not impact diagnostic accuracy, it does offer potential for earlier detection of right heart strain.

Acute Thoracic Aortic Dissection

Contrast-enhanced CT is highly accurate in the detection of acute thoracic aortic dissection with reliable identification of the dissection flap and false lumen. 85 However, patients may present with non-specific symptoms and may undergo CXR first on presentation to the emergency department. Suspicion for acute thoracic aortic dissection is raised in the presence of a widened mediastinum on CXR; however, the sensitivity of this sign is approximately 70%.86,87 Lee et al propose using a residual neural network (ResNet) to enhance the detection of acute thoracic aortic dissection on CXR with reported accuracy of 91.78%, precision of 77.75%, and an F1 score of 81.57%. 88 Rapid screening of patients presenting to the emergency department with chest pain using this model could raise the suspicion of acute thoracic aortic dissection earlier and result in faster diagnosis. The mortality rate of acute thoracic aortic dissection rises by 2% each hour and more rapid diagnosis is likely to translate to improved prognosis.88,89 Multiple studies have evaluated AI tools for the assessment of aortic dissection on CT, although these are outside the scope of this review. 90
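Because the F1 score is the harmonic mean of precision and recall, a reported precision and F1 score together imply a recall (sensitivity) value. The sketch below performs that rearrangement. It assumes the F1 score was computed from a single precision and recall pair and was not averaged across classes, which the source does not confirm, so the implied recall should be read as an illustration and not as a result reported by Lee et al.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (both as proportions)."""
    if precision + recall == 0:
        return 0.0
    return 2.0 * precision * recall / (precision + recall)


def recall_from_precision_and_f1(precision, f1):
    """Rearrange F1 = 2PR / (P + R) to solve for recall R.

    R = F1 * P / (2P - F1); only defined when 2P > F1.
    """
    denominator = 2.0 * precision - f1
    if denominator <= 0:
        raise ValueError("no valid recall exists for this precision and F1")
    recall = f1 * precision / denominator
    if recall > 1.0:
        raise ValueError("implied recall exceeds 1; inputs are inconsistent")
    return recall


# Usage with the precision and F1 score quoted in the text, under the
# single-pair assumption stated above.
implied_recall = recall_from_precision_and_f1(0.7775, 0.8157)
print(f"implied recall = {implied_recall:.4f}")
```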

Acute Respiratory Distress Syndrome/Parenchymal Disease

Sjoding et al trained a CNN with dense neural network architecture to detect the probability of ARDS on CXR, achieving an AUC of 0.92 (95% CI 0.89-0.94) on the internal validation dataset of 1560 CXRs from 455 patients. 91 On an external validation dataset of 958 CXRs from 431 patients, the model achieved an AUC of 0.88 (95% CI 0.85-0.91); however, this improved to 0.93 (95% CI 0.92-0.95) when CXRs which had been annotated as equivocal were excluded. 91 Röhrich et al propose a radiomics-based algorithm to predict ARDS on initial CT in patients presenting with polytrauma, achieving an AUC of 0.79 compared with AUCs of 0.66 and 0.68 for the injury severity score and abbreviated injury score of the thorax respectively. 92 Lind Plesner et al evaluated 4 commercially available AI tools for the detection of airspace disease on CXR, reporting an AUC ranging from 0.83 to 0.88 (95% CI 0.81-0.90). 41

AI models are not only useful in the diagnosis of acute thoracic pathology but have also been developed for the triage of patients to determine the need for further imaging and to identify patients at risk of deterioration. Shahverdi Kondori and Malek have developed a machine learning algorithm utilizing personal information, incident details, trauma mechanism, vital signs, level of consciousness, clinical examination, history, and imaging findings to determine the need for CT in patients who have experienced blunt chest trauma. 93 Data from 600 patients comprised the training dataset, 200 patients the validation dataset, and 200 patients the test dataset for each machine learning algorithm. A decision tree model was then adopted and 700 patients were used to find the optimal decision tree depth. Data from the remaining 300 patients comprised the test set, with the final model demonstrating an accuracy of 99.91%, sensitivity of 100%, and specificity of 99.33%. 93 Banerjee et al propose an AI model to generate a patient-specific risk score for acute PE, the Pulmonary Embolism Result Forecast Model, to optimize the use of CT. 94 The best performing model reached an AUC of 0.90 for predicting a positive PE study (95% CI 0.87-0.91), outperforming the Wells score’s AUC of 0.51. 94 If utilized in the emergency department for the triage of patients presenting following acute thoracic trauma or with symptoms of acute PE, these AI decision tools have the potential to optimize the selection of patients referred for CT scanning and have a positive impact on cumulative radiation exposure, cost, and time.

Machine learning algorithms have also been applied to risk-stratify patients presenting with thoracic trauma. Hefny et al analyzed 212 patients with a history of blunt thoracic trauma admitted to a single hospital over 3 years. 95 They looked at ambulance data, physical examination findings, laboratory findings, radiologic findings, and radiomics data on the initial chest CT to determine the optimal machine learning algorithm to predict intensive care unit (ICU) admission. When trained on the radiomics data, the classification algorithms predicted ICU admission with a sensitivity of 96.3%. 95 Staziaki et al report similar machine learning algorithms for severity prediction in thoracic trauma; however, they analyzed clinical data with and without raw CT data rather than radiomics, achieving an AUC of 0.87 with a support vector machine (SVM) classifier trained on both clinical and imaging findings. 96 Chen et al also evaluate machine learning algorithms for the prognostication of blunt chest trauma, specifically the prediction of prolonged (≥7 days) mechanical ventilation. 97 Importance analysis identified the volume of pulmonary contusion as the most important feature to predict duration of mechanical ventilation, followed by age, body mass index, C-reactive protein, and injury severity scores 48 hours after admission. The authors evaluated a number of machine learning and deep learning algorithms, reporting an accuracy of 87.5% and an AUC of 0.812 with a random forest classifier model. 97

Prognostic applications of AI are also evident in the setting of acute PE. Xu et al propose a machine-learning model to identify those at risk of haemodynamic deterioration among patients with intermediate risk PE admitted to the intensive care unit. 98 Their extreme gradient boosting machine learning model achieved the highest predictive performance, reporting an AUC of 0.866 (95% CI 0.800-0.925) which further improved to 0.885 (95% CI 0.822-0.935) following isotonic regression recalibration. 98 Gao et al propose an AI model which predicts deterioration at admission based on RV/LV ratio and the presence of pulmonary vein filling defects, achieving a sensitivity of 88.5% and specificity of 83.4%. 99 Accurate, easy to use AI models for risk stratification of patients with acute PE may lead to earlier recognition of those at highest risk; however, further validation is needed.
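Isotonic regression recalibration, as mentioned above, fits a non-decreasing step function that maps raw model scores to observed event rates. The following pure-Python sketch uses the pool-adjacent-violators algorithm on invented scores and outcomes. It is not the implementation of Xu et al, the function names are hypothetical, and it returns a plain step function without the interpolation that library implementations typically apply.

```python
from bisect import bisect_left


def fit_isotonic(scores, outcomes):
    """Fit a non-decreasing step function with pool-adjacent-violators.

    scores  : raw model outputs
    outcomes: observed binary outcomes (0 or 1), same length as scores
    Returns a list of (upper_score_bound, calibrated_probability) blocks.
    """
    if len(scores) != len(outcomes) or len(scores) == 0:
        raise ValueError("scores and outcomes must be non-empty and equal length")
    # Sort by score; within tied scores place events first so ties pool.
    pairs = sorted(zip(scores, outcomes), key=lambda p: (p[0], -p[1]))
    blocks = []  # each block: [sum_of_outcomes, count, upper_score_bound]
    for score, outcome in pairs:
        blocks.append([float(outcome), 1, score])
        # Pool backwards while the previous block's mean is not lower
        # than the mean of the newest block (a monotonicity violation).
        while len(blocks) > 1 and (
            blocks[-2][0] / blocks[-2][1] >= blocks[-1][0] / blocks[-1][1]
        ):
            total, count, upper = blocks.pop()
            blocks[-1][0] += total
            blocks[-1][1] += count
            blocks[-1][2] = upper
    return [(upper, total / count) for total, count, upper in blocks]


def recalibrate(blocks, score):
    """Map a raw score to the calibrated probability of its block."""
    bounds = [upper for upper, _ in blocks]
    # First block whose upper bound is >= score; clamp to the last block.
    index = min(bisect_left(bounds, score), len(blocks) - 1)
    return blocks[index][1]


# Invented calibration data for illustration only.
raw_scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6]
observed = [0, 1, 0, 0, 1, 1]
fitted = fit_isotonic(raw_scores, observed)
print(fitted)
print(recalibrate(fitted, 0.35))
```

Because the fitted mapping is monotonic, it preserves the ordering of patients by score wherever scores fall in different blocks, while ties created by pooling can shift rank-based metrics such as the AUC slightly.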

Conclusion

The applications of AI tools in acute thoracic imaging include detection of rib fractures, pneumothoraces, and PE. Many results are promising with respect to improved diagnostic test performance and reduced reporting times. However, further study is needed to ensure that clinical implementation of AI tools is optimal given the potential for AI deployment in radiology to contribute to symptoms of burnout. 100 Furthermore, training and inference of AI models are very energy intensive and strategies are needed to reduce their environmental impact.101,102 Future research and AI model development should focus on reduction of false positive rates with robust external validation and ongoing monitoring to evaluate for potential model drift.

Footnotes

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Dr. Patlas received royalties from Springer and Elsevier.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.