Abstract

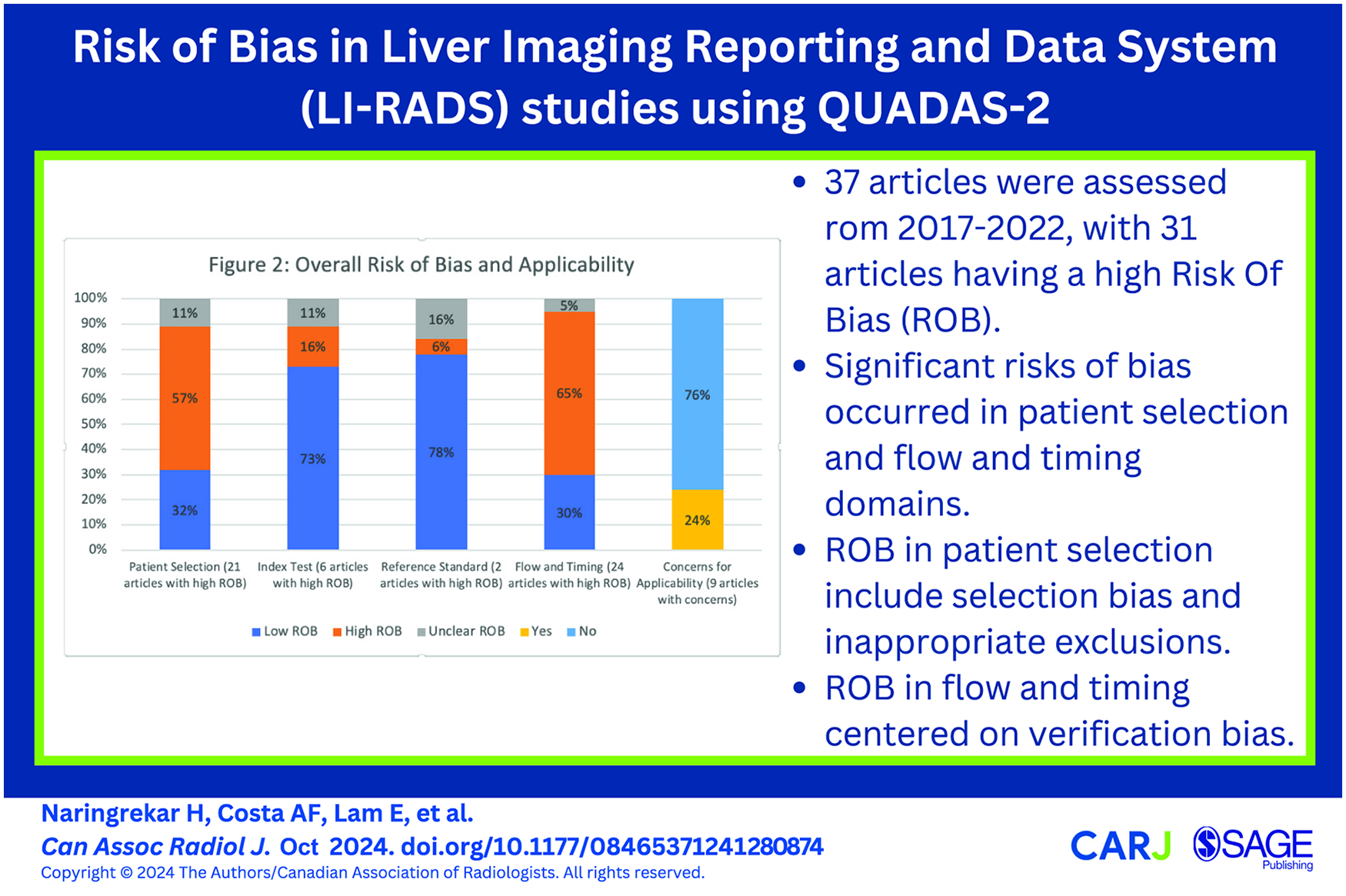

This is a visual representation of the abstract.

Introduction

The Liver Imaging Reporting and Data System (LI-RADS®) standardizes the nomenclature, technique, interpretation, data collection, and reporting of imaging examinations in patients at risk for developing hepatocellular carcinoma (HCC). 1 Since its inception in 2008, diagnostic LI-RADS algorithms have undergone many updates, with the most recent version released in 2018. Many peer-reviewed studies have evaluated various components of LI-RADS. However, there has been little research into the specific risks of bias in LI-RADS research studies. Risk of bias (ROB) is the chance that the design or conduct of a study will lead to misleading results; it arises when significant flaws or limitations in design or conduct alter the veracity of the results. 2 Discerning bias in LI-RADS research can be challenging given the complexity of LI-RADS, variability of study methodology, and variable completeness of reporting. 3 Several tools are available to evaluate studies for risk of bias, including the SIGN methodology (Scottish Intercollegiate Guidelines Network), AMSTAR (A Measurement Tool to Assess systematic Reviews), and CASP (Critical Appraisal Skills Programme). 4 The Quality Assessment of Diagnostic Accuracy Studies (QUADAS-2) tool is appropriate for most LI-RADS research because these studies often evaluate diagnostic accuracy. 5

The QUADAS-2 tool is used to evaluate risk of bias and concerns regarding applicability. Four domains are used to assess risk of bias: patient selection, index test, reference standard, and flow/timing. 2 Signalling questions are developed to help classify the level of risk of bias in each of these 4 domains as low, unclear, or high. Concerns regarding applicability of the study are also assessed in the patient selection, index test, and reference standard domains as low, high, or unclear. The LI-RADS Individual Participant Data (IPD) group conducts systematic reviews to investigate the diagnostic performance of LI-RADS for diagnosis of HCC. 6 As part of the group’s work, the QUADAS-2 tool has been optimized for assessing risk of bias and applicability in LI-RADS research. 7 The QUADAS-2 tool was initially tailored for evaluation of LI-RADS research using a consensus approach by a team of radiologists, data specialists, and a hepatologist. 3 Signalling questions were created and used to assess LI-RADS research studies for potential risks of bias and concerns regarding applicability.

The purpose of this study is to present results from our application of the tailored QUADAS-2 tool to LI-RADS research, and to identify common areas of sub-optimal methodology and reporting. This work may serve as a guide for readers to critically appraise LI-RADS diagnostic accuracy studies and for researchers to optimize the design and reporting of LI-RADS diagnostic accuracy studies.

Materials and Methods

Study Design

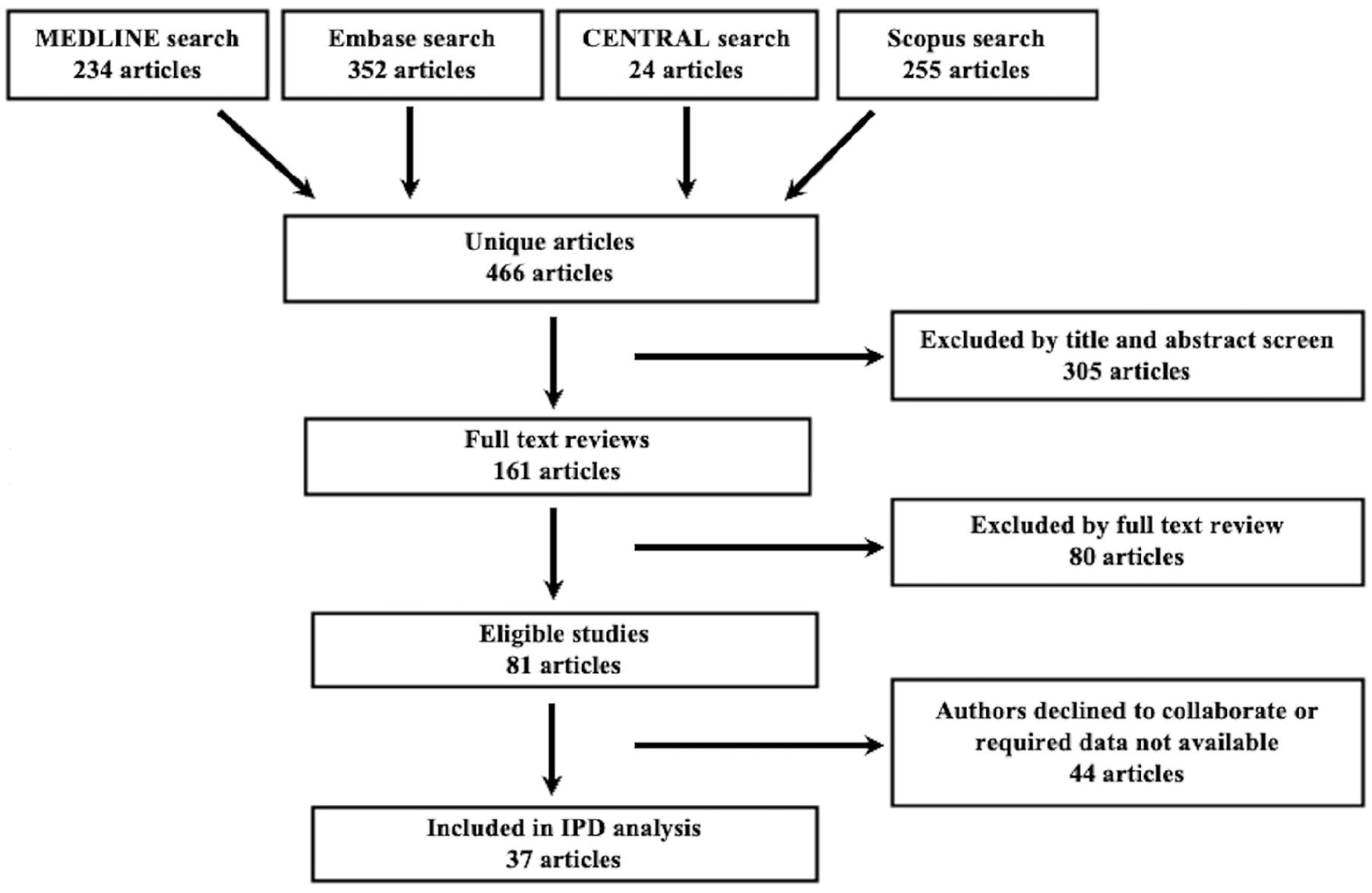

The IPD analysis and transfer of data were approved by the Research Ethics Board at The Ottawa Hospital Research Institute (Protocol ID#: 20190664-01H). Thirty-seven studies from our LI-RADS IPD database have been assessed with the QUADAS-2 tool in published IPD meta-analyses,3,8 ranging from 2017 to 2022. Only relevant LI-RADS studies whose authors consented to participate in the IPD database were included. With the assistance of experienced hospital librarians, the MEDLINE, Embase, Cochrane Central Register of Controlled Trials (CENTRAL), and Scopus databases were searched for studies evaluating the diagnostic accuracy of CT, MRI, or contrast-enhanced ultrasound (CEUS) for HCC using LI-RADS (CT/MRI v2014, v2017, or v2018; CEUS v2016 or v2017). Studies were excluded because of incomplete data or data redundant with other studies, unavailable data, patient-level rather than observation-level data, and issues with data formatting7,9-12 (Figure 1). No language or publication type restrictions were applied. The search covered 2014 to January 2022, with the start date based on the publication date of LI-RADS 2014. Grey literature was assessed via a search of abstracts presented from 2014 to January 2022 at the annual meetings of the Radiological Society of North America (RSNA), American Roentgen Ray Society (ARRS), European Society of Radiology (ESR), International Society for Magnetic Resonance in Medicine (ISMRM), Society of Abdominal Radiology (SAR), and European Society of Gastrointestinal and Abdominal Radiology (ESGAR).

Flow diagram demonstrating study inclusion and exclusion strategy.

Development and Application of the QUADAS-2 Tool

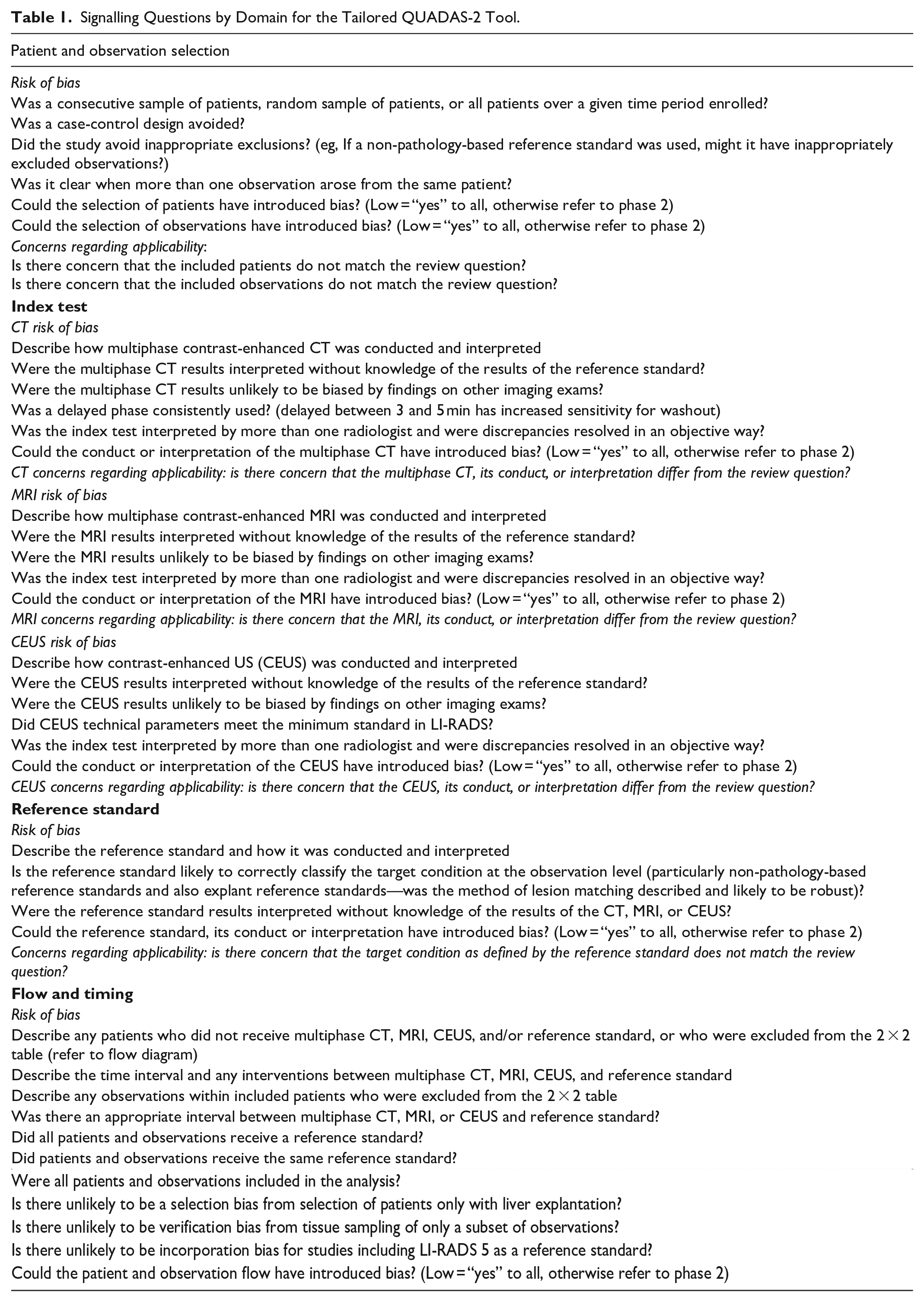

The tailored QUADAS-2 tool used by the LI-RADS IPD team is provided as Table 1. Using a consensus-based process, 4 radiologists with 5 to 10 years of experience using the QUADAS-2 tool developed the signalling questions; co-authors were also given the opportunity to contribute to the creation of these questions. The questions were piloted and refined over several rounds before their application in each of the QUADAS-2 domains (patient selection, index test, flow and timing, and reference standard). 2 Each article was then analyzed systematically using the signalling questions for potential sources of bias. If one or more signalling questions in a domain were answered “no,” the article was designated as “high risk” of bias for that domain.2,13 If all signalling questions in a domain were answered “yes,” risk of bias was assessed as low. When there were insufficient data to answer the signalling questions, the domain was assessed as unclear risk of bias. QUADAS-2 assessments were performed in duplicate and independently by 2 authors (JPS, CvdP) with experience using the QUADAS-2 tool. Disagreements were resolved with a third expert reviewer (MM). Consensus QUADAS-2 assessments were tabulated for each domain. Proportions of studies at low, unclear, and high risk of bias were calculated.
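The rating rule described above can be sketched in Python as follows. This is a minimal illustration and not the authors' tooling: the function names, the answer strings, and the example studies are hypothetical. It also assumes that a “no” answer takes precedence over an “unclear” answer within the same domain, which the text implies but does not state outright.

```python
from collections import Counter

def rate_domain(answers):
    """Rate one QUADAS-2 domain from its signalling-question answers.

    answers: list of "yes", "no", or "unclear" strings (hypothetical encoding).
    """
    if any(a == "no" for a in answers):
        return "high"      # one or more "no" answers -> high risk of bias
    if answers and all(a == "yes" for a in answers):
        return "low"       # every question answered "yes" -> low risk
    return "unclear"       # insufficient data for at least one question

def proportions(ratings):
    """Return the share of studies at each rating for a single domain."""
    counts = Counter(ratings)
    total = len(ratings)
    return {level: counts[level] / total for level in ("low", "unclear", "high")}

# Made-up answers for three hypothetical studies in one domain.
studies = {
    "study_a": ["yes", "yes", "yes"],
    "study_b": ["yes", "no", "unclear"],
    "study_c": ["yes", "unclear", "yes"],
}
ratings = {name: rate_domain(ans) for name, ans in studies.items()}
print(ratings)
print(proportions(list(ratings.values())))
```

In this sketch each domain is rated separately, so the same two functions would be applied once per domain before tabulating the consensus results.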

Signalling Questions by Domain for the Tailored QUADAS-2 Tool.

“Concerns for applicability” were assessed for each study and structured similarly to each bias section (patient selection, index test, reference standard) using questions which differed from the signalling questions (Table 1). If one or more questions in a domain were answered “high,” the article was deemed “concerning” for applicability. If all questions on concerns for applicability were answered “low,” the study was categorized as “low” concerns for applicability. If data were insufficient for classification, the study was categorized as “unclear” concerns for applicability. Analysis of the results was performed through descriptive measures and summarized in a standardized Excel sheet format. 7 Consensus QUADAS-2 assessments were tabulated for each domain.

Results

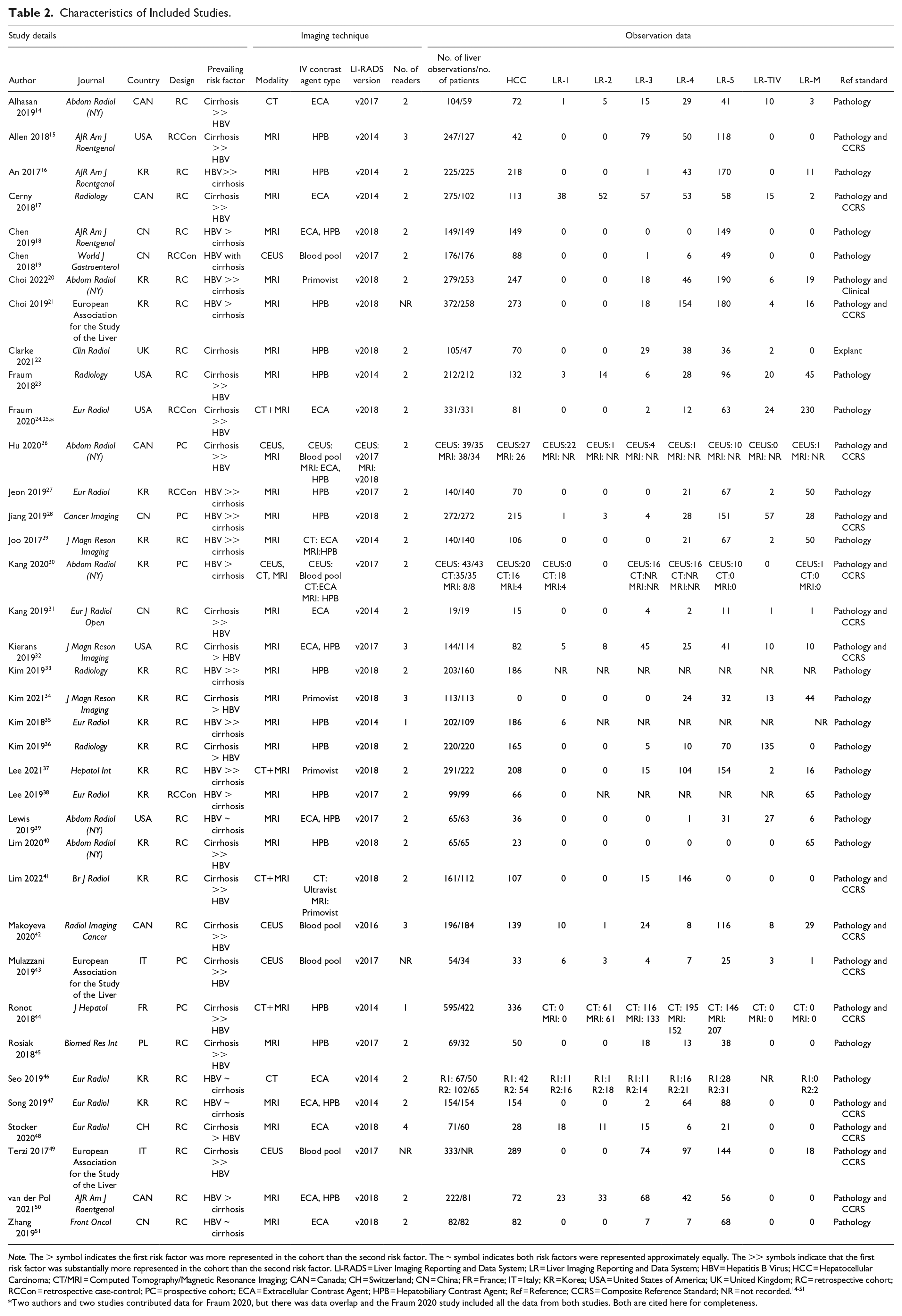

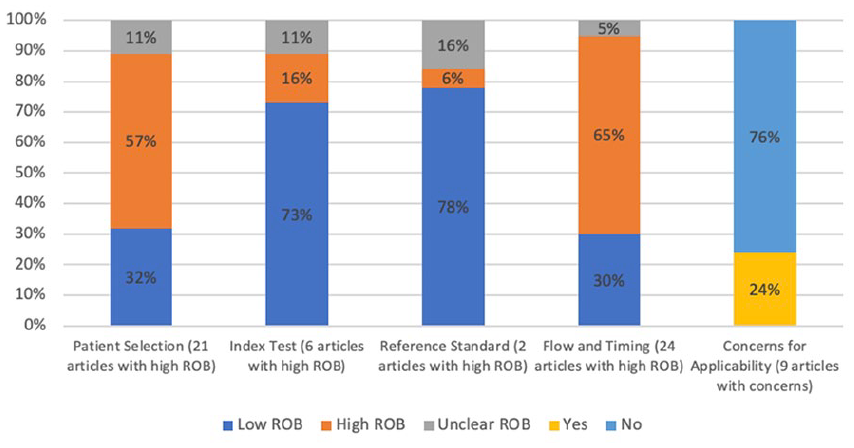

The cohort of original research studies and their characteristics are summarized in Table 2. Using the QUADAS-2 tool, 31 of the 37 included studies were assessed as high risk of bias, and 6 studies were at low risk of bias (meaning all domains were deemed to be at low risk of bias). 8 Nine of the 37 studies demonstrated concerns for applicability (Figures 2 and 3).

Characteristics of Included Studies.

Note. The > symbol indicates the first risk factor was more represented in the cohort than the second risk factor. The ~ symbol indicates both risk factors were represented approximately equally. The >> symbols indicate that the first risk factor was substantially more represented in the cohort than the second risk factor. LI-RADS = Liver Imaging Reporting and Data System; LR = Liver Imaging Reporting and Data System; HBV = Hepatitis B Virus; HCC = Hepatocellular Carcinoma; CT/MRI = Computed Tomography/Magnetic Resonance Imaging; CAN = Canada; CH = Switzerland; CN = China; FR = France; IT = Italy; KR = Korea; USA = United States of America; UK = United Kingdom; RC = retrospective cohort; RCCon = retrospective case-control; PC = prospective cohort; ECA = Extracellular Contrast Agent; HPB = Hepatobiliary Contrast Agent; Ref = Reference; CCRS = Composite Reference Standard; NR = not recorded.14-51

Two authors and two studies contributed data for Fraum 2020, but there was data overlap and the Fraum 2020 study included all the data from both studies. Both are cited here for completeness.

Summary graph highlighting percentages and number of articles at high risk of bias by domain and concerns for applicability.

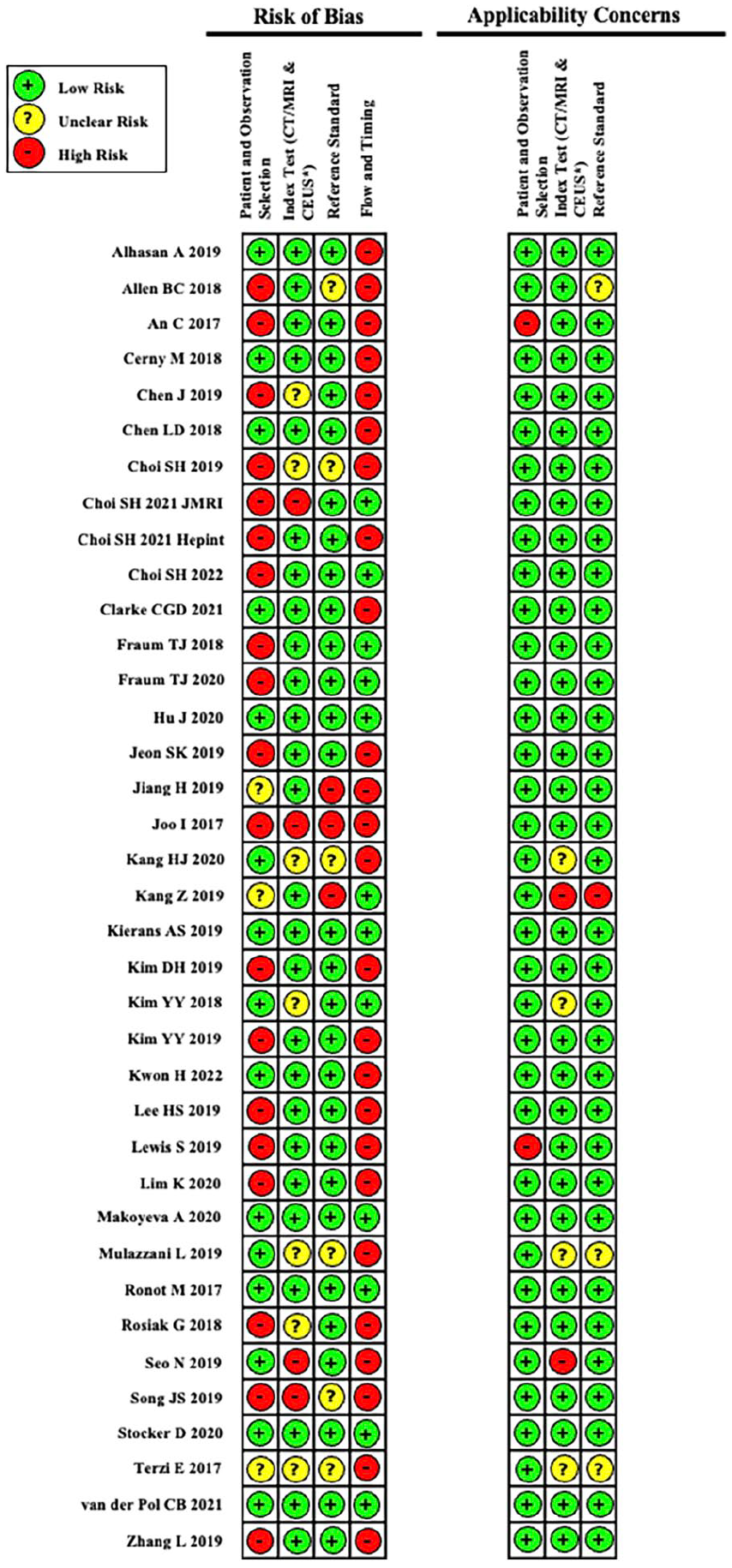

Risk of bias and applicability concerns for each article used in our QUADAS-2 tool assessment.

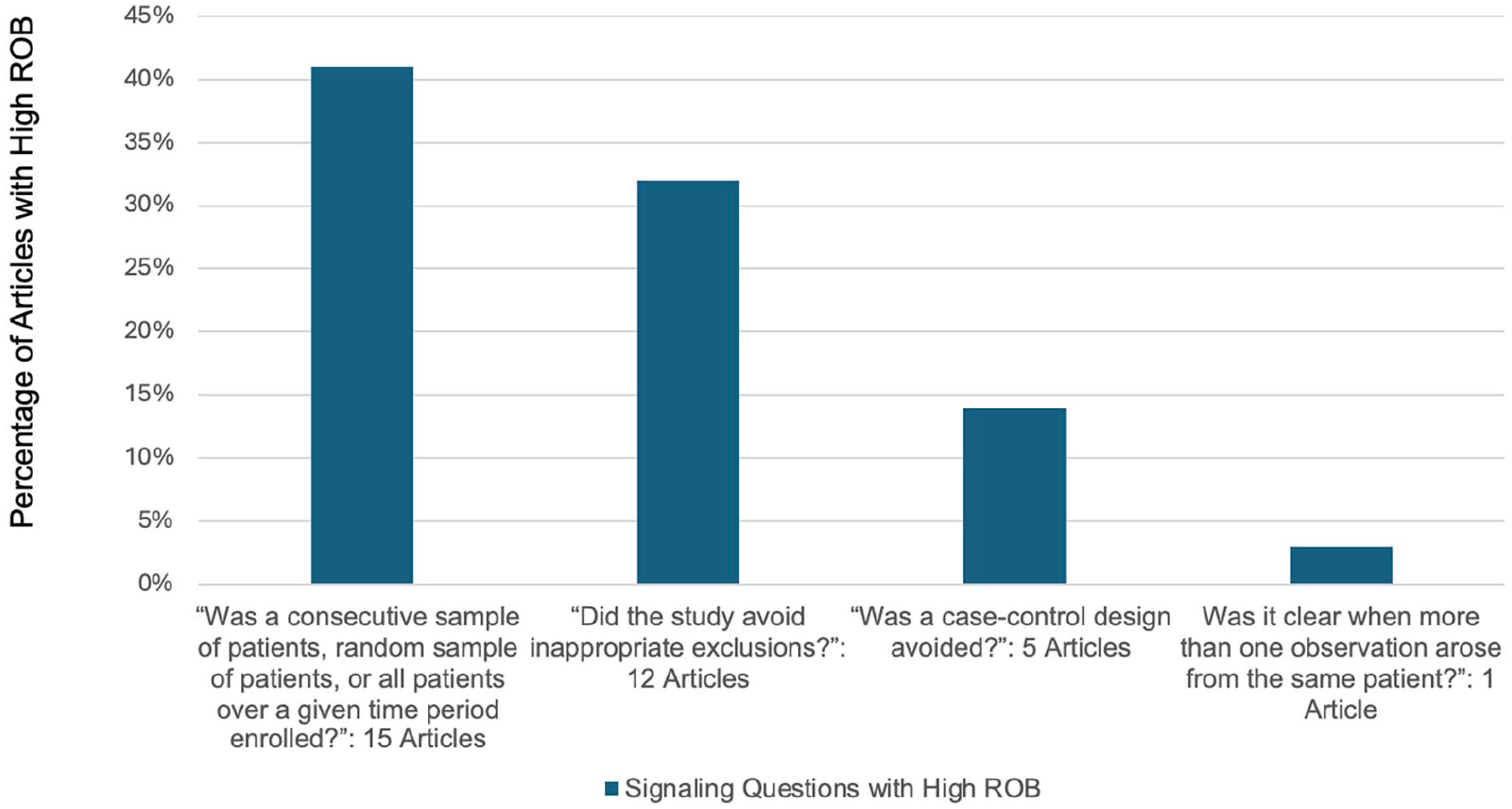

Patient Selection Domain

The

Percentage of articles by signalling question demonstrating high risk of bias in the Patient Selection Domain.

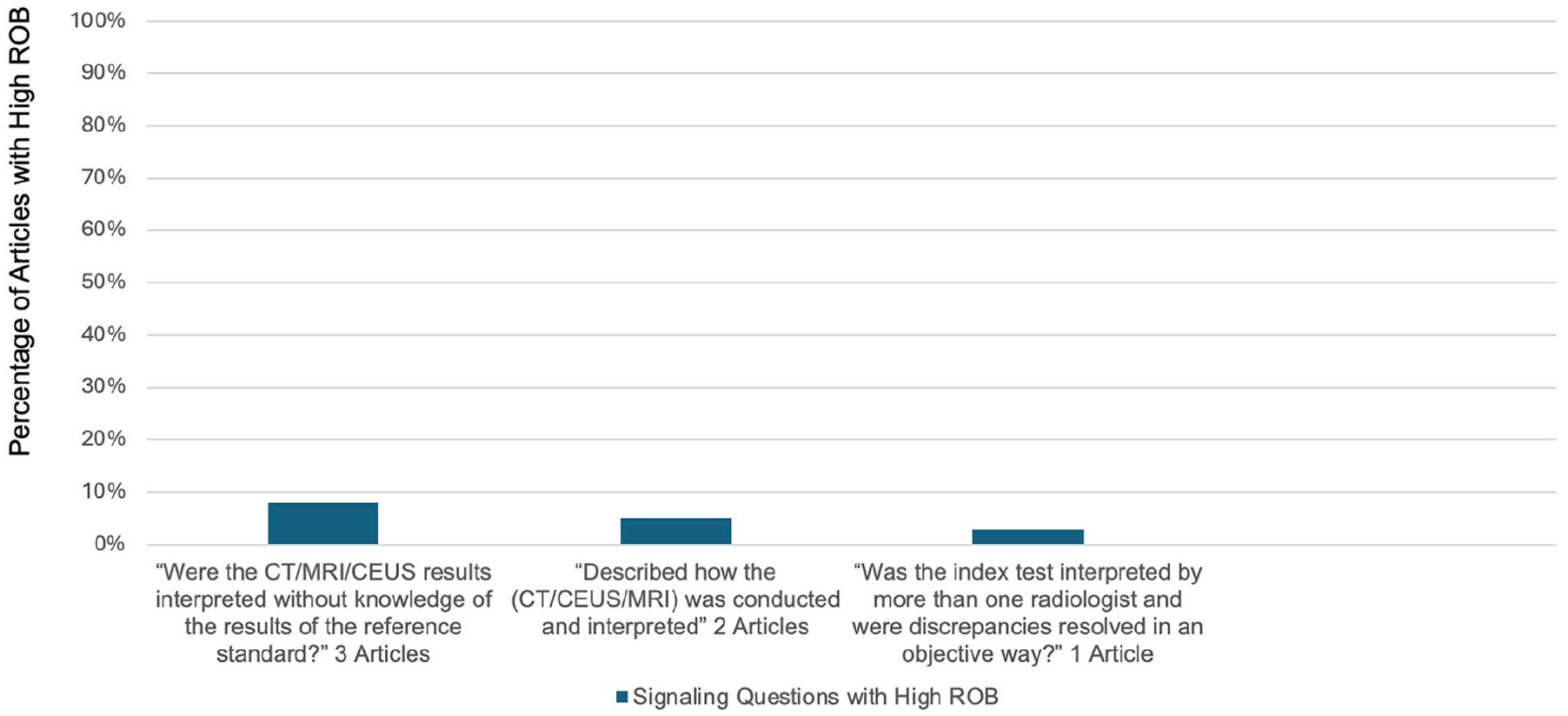

Index Test Domain

The

Percentage of articles by signalling question demonstrating high risk of bias in the Index Test Domain.

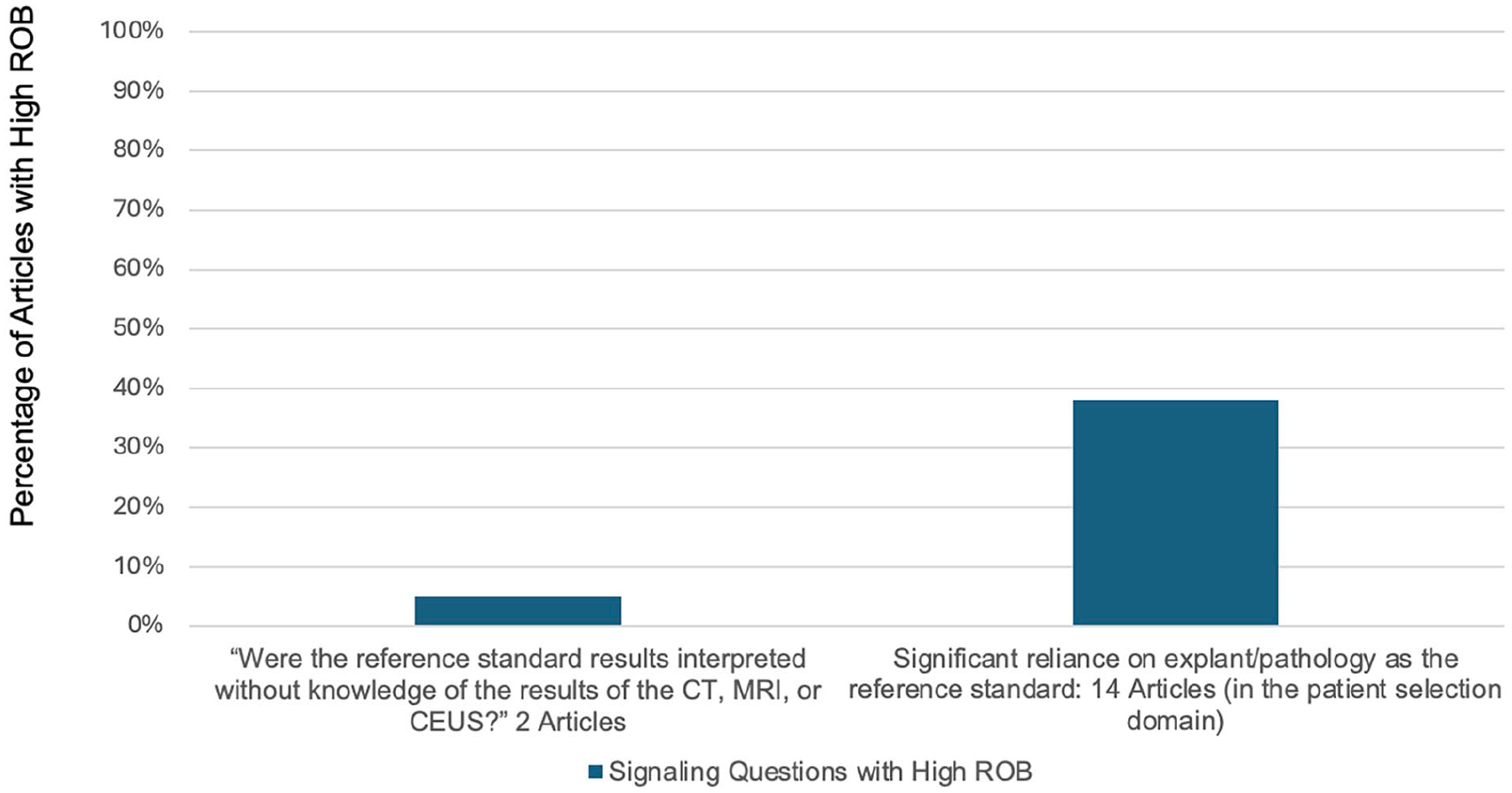

Reference Standard Domain

Bias in the

Percentage of articles by signalling question demonstrating high risk of bias in the Reference Standard Domain.

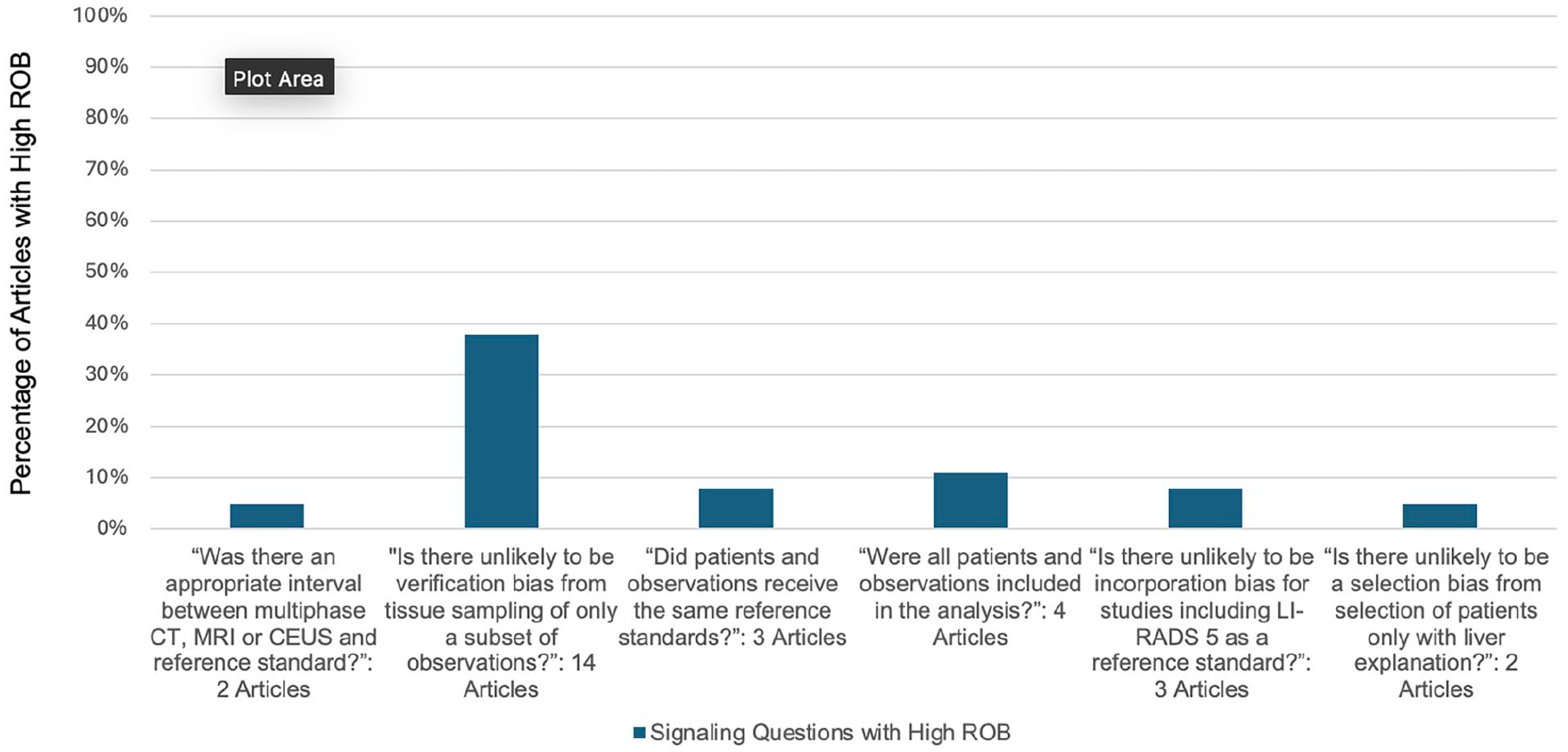

Flow and Timing Domain

Bias in the

Percentage of articles by signalling question demonstrating high risk of bias in the Flow and Timing Domain.

Discussion

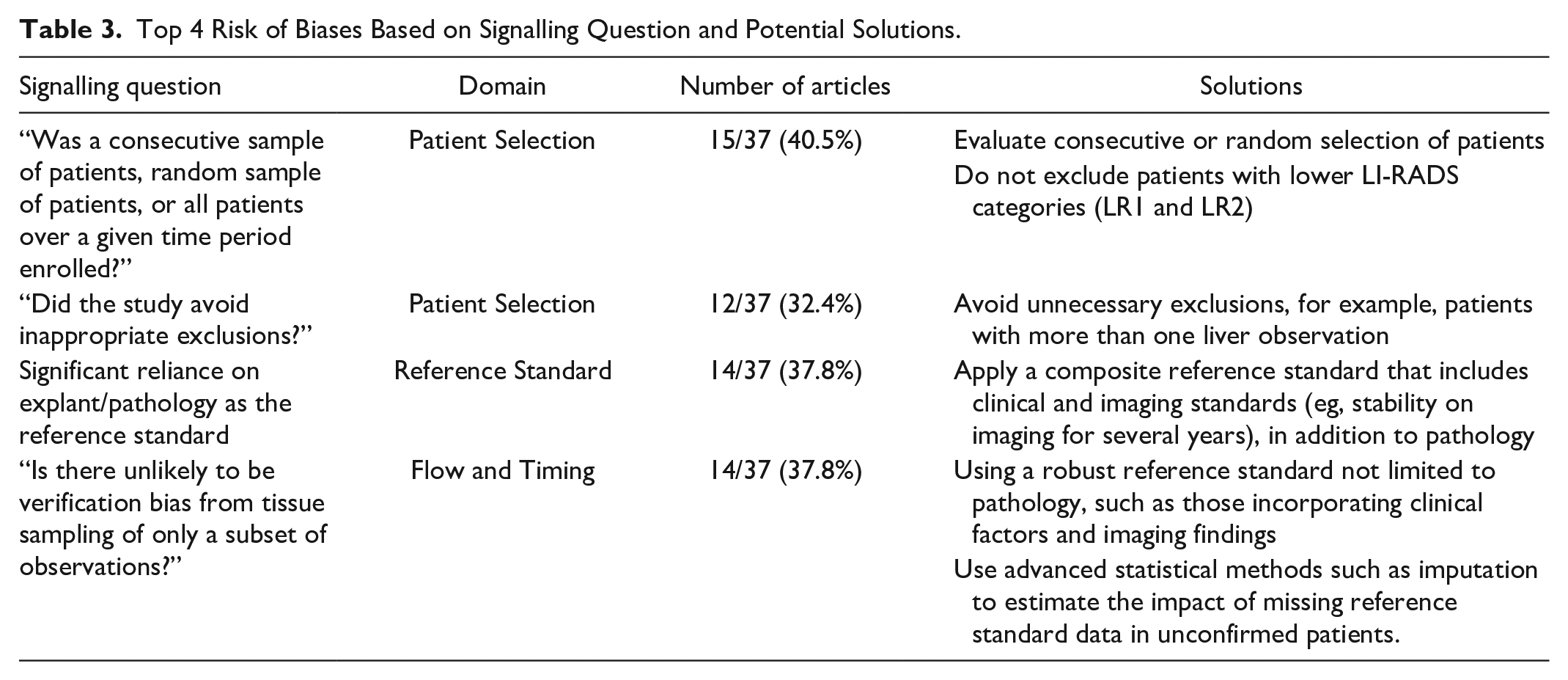

This in-depth QUADAS-2 review of 37 studies retrieved from a systematic review identified several areas of potential bias that readers and future researchers should be aware of. These results add to a previous review article and editorial discussing other aspects of LI-RADS diagnostic accuracy research and IPD meta-analysis, respectively.53,54 The QUADAS-2 tool helps evaluate biases by specific domain, using signalling questions in a systematic manner. We identified several common areas that result in high risk of bias within each of the 4 domains, which are discussed below. The biases with the highest risk and their potential solutions are summarized in Table 3. 55

Top 4 Risk of Biases Based on Signalling Question and Potential Solutions.

In the patient selection domain, major sources of bias in this cohort of studies were the selection of only patients with a higher LI-RADS category, of patients treated for suspected HCC, and of patients with known malignancy. These study designs are at high risk of selection bias, where subjects selected for inclusion in the study are likely not representative of the target population. A recent systematic review more fully explored this source of bias, which led to higher observed rates of HCC in LR-2 and LR-3 categories. 3 Additionally, selecting patients with only higher LI-RADS categories while excluding LR-1 and LR-2 patients can lead to spectrum bias (also known as sampling bias). This affects the diagnostic accuracy of these studies in terms of sensitivity and specificity. 56

Several studies inappropriately limited inclusion to only one liver observation per patient or selected only patients with a single observation. This is a significant issue as treatment decisions are based not only on the presence of HCC, but also on the size and number of HCCs. As such, analysis at the liver observation level is preferred. Finally, a case-control design was used in several studies. Case-control studies artificially set the prevalence of HCC in the study population, which directly impacts the percentage of HCC in each category and, in turn, the positive and negative predictive values. Additionally, due to the retrospective nature of case-control studies, only correlation between variables can be established, not causation. Furthermore, case-control studies tend to include fewer indeterminate or intermediate-category observations, affecting the distribution of outcomes in the study population. The control aspect of these studies needs to be reflective of the general population, which may be an issue in single-institution research. 57
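The dependence of predictive values on prevalence follows from Bayes' theorem, and a short sketch makes it concrete. The example below is illustrative only: it reduces the problem to a single binary test, and the sensitivity, specificity, and prevalence values are invented for the example rather than taken from any included study.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Return (PPV, NPV) for a binary test via Bayes' theorem."""
    tp = sensitivity * prevalence                # true-positive fraction
    fp = (1 - specificity) * (1 - prevalence)    # false-positive fraction
    fn = (1 - sensitivity) * prevalence          # false-negative fraction
    tn = specificity * (1 - prevalence)          # true-negative fraction
    return tp / (tp + fp), tn / (tn + fn)

# Same hypothetical test under two sampling designs (all numbers are made up).
for label, prev in [("cohort, 20% HCC", 0.20), ("case-control, 50% HCC", 0.50)]:
    ppv, npv = predictive_values(0.70, 0.90, prev)
    print(f"{label}: PPV={ppv:.2f}, NPV={npv:.2f}")
```

With these made-up inputs, raising the prevalence from 20% to 50% moves the positive predictive value from about 0.64 to about 0.88 and lowers the negative predictive value from about 0.92 to 0.75, even though the test itself is unchanged.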

In the index test domain, readers were not blinded to the results of the reference standard in 3 studies. This can lead to review bias, whereby knowledge of the gold standard can influence the interpretation of the index test. 58 Two studies did not adhere to the LI-RADS technical guidelines. Following the LI-RADS imaging parameters for each modality is paramount in evaluating the diagnostic performance of LI-RADS, so that the imaging tests assessed in research reflect the tests performed in practice; this also allows for consistent data collection for future studies. 52

The reference standard is the method used to determine the presence or absence of disease and against which the index test is compared, with the assumption that the reference standard is 100% accurate. In practice, reference standards are rarely able to achieve this, leading to bias. For the reference standard domain, biases stemmed mostly from a heavy reliance on pathology and explant as the reference standard. A recent meta-analysis assessing the impact of the reference standard in the diagnosis of HCC found that pathology-based reference standards were used 4 times more often than clinical reference standards. 8 Additionally, observations that were confirmed to be HCC were twice as likely to have used a pathology reference standard than a clinical reference standard. This can lead to selection bias secondary to focusing on a larger number of observations diagnosed as HCC. For example, LR-1 to LR-3 and even LR-5 observations are typically not pathologically proven. Misclassification bias can also occur when using pathology as the reference standard, as sampling error can lead to false negative results. 59 The emphasis on pathology in designing the reference standard also adversely affects other domains of bias, namely patient selection and flow and timing. Including reference standards such as follow-up imaging and imaging with other modalities in addition to pathology-based reference standards can help mitigate these biases. 8

It is also important to evaluate the reference standard without knowledge of the results of the index test. Several studies demonstrated high risk of bias in the reference standard domain secondary to evaluating the reference standard while knowing the LI-RADS category. This can lead to diagnostic review bias, where a positive index test result may drive researchers to scrutinize the reference standard more intensely.

When determining the choice for a reference standard, it is important to note that the appropriateness of the reference standard depends on the research question being asked. In the meta-analysis setting for example, a different study research question can lead to rejection of quality research papers secondary to the reference standard. This can occur despite the reference standard being appropriate to the original paper.

The domain in which the largest number of studies demonstrated high risk of bias was flow and timing. Many of the reviewed studies either were unclear about or did not have an appropriate interval between the imaging study and the reference standard. Too long a period between the index test (imaging study) and the reference standard can lead to misclassification bias, where the disease progresses or improves during the extended interval. Ideally, the interval between the index test and the reference standard should be as short as possible to avoid this type of bias. 59

Additionally, a significant number of studies showed high risk of verification bias through either sampling only a subset of liver observations based on the findings on the index test or using different reference standards for patients. There are several subtypes of verification bias such as partial verification bias and differential verification bias. Partial verification bias occurs if only a certain number of patients or subset of liver observations receive the reference standard (such as tissue sampling), resulting in a low number of false negatives and overestimation of the sensitivity of the test. Sometimes this is unavoidable if the reference standard is invasive, as it may be unethical to apply a particular reference standard to every patient in clinical practice. 60 Differential verification bias occurs when at least 2 different reference standards are used for the study, again leading to an overestimation of sensitivity. Verification bias can be corrected for via statistical methods, and these methods should be considered when either sampling a subset of observations or when having to use more than one reference standard is unavoidable.
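As one illustration of such a statistical correction, the sketch below applies the Begg and Greenes adjustment for partial verification. This method is not named in the text and is chosen here only as a commonly described example; it assumes that the decision to verify depends solely on the index test result. All counts are invented.

```python
def naive_accuracy(v):
    """Sensitivity and specificity from verified observations only."""
    sens = v["tp"] / (v["tp"] + v["fn"])
    spec = v["tn"] / (v["tn"] + v["fp"])
    return sens, spec

def begg_greenes(v, n_pos, n_neg):
    """Correct for partial verification (Begg and Greenes adjustment).

    v: counts among verified observations (tp, fp, fn, tn).
    n_pos, n_neg: all test-positive / test-negative observations,
                  verified or not.
    """
    # Disease probability given the test result, estimated from verified cases.
    p_d_pos = v["tp"] / (v["tp"] + v["fp"])
    p_d_neg = v["fn"] / (v["fn"] + v["tn"])
    # Project those probabilities onto the full tested population.
    d_pos, d_neg = n_pos * p_d_pos, n_neg * p_d_neg
    sens = d_pos / (d_pos + d_neg)
    spec = (n_neg - d_neg) / ((n_pos - d_pos) + (n_neg - d_neg))
    return sens, spec

# Hypothetical study: 100 test-positive and 200 test-negative observations;
# 80 positives but only 40 negatives receive the reference standard.
verified = {"tp": 64, "fp": 16, "fn": 4, "tn": 36}
print("naive:    ", naive_accuracy(verified))
print("corrected:", begg_greenes(verified, n_pos=100, n_neg=200))
```

In this invented example the verified-only sensitivity is about 0.94, while the adjusted estimate is 0.80, which matches the direction of overestimation described above. The adjustment is only as good as its assumption; if verification also depends on factors other than the index test result, other methods would be needed.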

Several studies had risk for incorporation bias by including LR-5 classification with the same modality as the index test as a reference standard (eg, using MR LI-RADS 5 status as all or part of the reference standard when evaluating MR as the index test). Incorporation bias occurs when the index test results are part of the adjudication process, leading to a falsely elevated sensitivity and specificity. Sensitivity analyses (excluding studies at high risk of bias) can help mitigate some of the risks of using the index test as the reference standard. 58

Applicability of a research study involves looking at the extent to which conclusions from the primary study apply to the review question. As stated previously, the applicability questions differ from the signalling questions used in the ROB assessment and, in the tailored QUADAS-2 tool, fall under the patient and observation, index test, and reference standard domains only. This helps address potential mismatches between the questions asked at the individual study level and at the systematic review level. Several studies demonstrated concerns for applicability because the included patients or observations did not match the review question. This occurred when only patients with malignancy (either HCC or non-HCC malignancies) were included. Only including patients with malignancy limits a study's generalizability to the population it is targeting. Several studies also raised concerns because the conduct or interpretation of the CT/MRI/CEUS differed from the review question. This happened when the study did not follow the technical parameters for LI-RADS, which is a significant limiting factor when the focus of the review question is analyzing the diagnostic accuracy of LI-RADS. Finally, one study demonstrated concern that the target condition as defined by the reference standard did not match the review question. This study used a reference standard which may not detect the target condition defined in the review question (HCC), which again limits the study's applicability to the target population. 61

Many of the described biases in the LI-RADS literature can be addressed by adhering to the STARD guidelines, a checklist of 30 recommendations for research study design to improve evaluation of diagnostic accuracy. 62 A problem in systematic reviews of LI-RADS has been suboptimal reporting of primary diagnostic accuracy studies. Non-reporting of this essential information leads to difficulty in identifying, critically appraising, and replicating studies for future research. While this challenge is prevalent in the imaging literature, the number of “unclear” domains indicates that there is opportunity for improvement. In our cohort of studies, 14 demonstrated unclear domains.63,64 Currently, many of the major radiology journals endorse and require adherence to the STARD guidelines.65,66

While the QUADAS-2 algorithm is an important starting point in determining the quality of research, there remain several limitations. First, while QUADAS-2 assesses for risk of bias, it is also based on assumptions to provide a balance between quality and practicality. Second, QUADAS-2 does not assess data integrity or directly assess the quality of statistical methods, both of which are important aspects in assessing research quality. Finally, the LI-RADS IPD database only included data from studies that met inclusion criteria and whose primary investigators were willing to share their data. As such, the QUADAS-2 assessments are not necessarily representative of all LI-RADS research being conducted worldwide.

Conclusion

The advent of LI-RADS in the diagnosis of HCC has led to a plethora of research over the past decade. In our analysis of selected LI-RADS literature, we have demonstrated several areas in study design that are at high risk for bias. Recognizing these sources of bias is important to help readers evaluate the validity and generalizability of results from these studies to the population. Using the QUADAS-2 tool, this systematic review has provided recommendations for designing future studies to avoid these biases. Adherence to the STARD checklist is also essential when designing research studies in LI-RADS, as this can also help mitigate bias and comply with major radiology journal requirements.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: CIHR Operating Grant, Joan Sealy trust.