Abstract

With Artificial Intelligence (AI) tools becoming increasingly commonplace, the use of AI-enabled tools in education has also grown. AI-enabled tools are machines with human-like capabilities, such as reasoning, interpretation, and problem-solving, that perform tasks requiring human intelligence. ChatGPT is one such tool; it uses a large language model (LLM), a type of AI that generates natural language, to give human-like answers to questions. This study investigated nursing students’ perspectives on AI-enabled tools, such as ChatGPT, aiming to identify (1) perceived benefits and challenges and (2) implications for the ethical and responsible use of AI within undergraduate nursing programs. Using interpretive description, we conducted focus group interviews with undergraduate nursing students. Sixteen students were recruited through convenience sampling. Our findings revealed four key themes: utilization as a support tool, utilization leading to a loss of competency in foundational skills, utilization risking credibility and academic integrity, and the need for further education and resources. Three key factors (evidence-based practice, ethical considerations, and the importance of critical thinking skills) influence nursing students’ perspectives toward AI tools. To ensure the safe and ethical use of AI in academia, robust institutional policies and training are needed. Promoting open dialogues and education can help students understand AI's advantages, potential harms, and risk mitigation strategies. Future research should build a comprehensive understanding of the perspectives of undergraduate and graduate nursing students, as well as educators, on AI usage in academia. Interventions that mitigate the risks of AI usage are also needed to improve its integration into education.

Introduction

Artificial intelligence (AI)-enabled tools have rapidly gained attention over recent years, with ChatGPT being a prime example (Wong et al., 2024b). AI-enabled tools are machines with human-like capabilities, such as reasoning, interpretation, and problem-solving, that perform tasks that would otherwise require human intelligence (Enholm et al., 2022; Wong et al., 2024a). ChatGPT is one such tool; it uses a large language model (LLM), a type of AI that generates natural language, to give human-like answers to questions (Liu et al., 2023; Lu et al., 2024; Mishra et al., 2024). Owing to their efficiency, versatility, and accessibility, the use of AI-enabled tools in education has grown immensely, as they present an abundance of opportunities to enhance learning (Adiguzel et al., 2023; Nazir & Wang, 2023). These tools can also assist students in academic work by generating written content, summarizing readings, suggesting research topics, and more (Adiguzel et al., 2023; De Gagne, 2023; Nazir & Wang, 2023).

Within the field of nursing education, AI tools provide an opportunity for students to learn and practice in a safe environment (De Gagne, 2023). Nursing students can practice applying their knowledge in a multitude of scenarios through AI-generated case studies and practice questions (Dorin & Atkinson, 2024; Lane et al., 2024). With the benefit of receiving feedback and recommendations in real-time, students can study efficiently, personalize their learning, and identify weaknesses in their understanding (Baidoo-Anu et al., 2024; Dorin & Atkinson, 2024; Lane et al., 2024). Clinical questions or uncertainties can also be clarified through AI-enabled tools, thereby allowing students to better grasp the rationale behind care decisions and foster clinical decision-making (De Gagne, 2023; Lane et al., 2024; Raymond et al., 2022).

However, concerns have been raised about the integration of AI-enabled tools into nursing education and their alignment with core nursing values (Lane et al., 2024; Sun & Hoelscher, 2023). As students are being trained to enter the nursing profession, the ability to meet professional standards and provide quality care holds great importance; thus, worries about over-reliance on AI and its impact on the development of competency and critical thinking are prevalent (Adiguzel et al., 2023; Dorin & Atkinson, 2024; Lane et al., 2024). There is also apprehension regarding the possible biases, cultural exclusivity, and misinformation that exist within AI-generated content (Adiguzel et al., 2023; Lane et al., 2024; Nazir & Wang, 2023). Furthermore, ethical considerations, such as privacy, confidentiality, and academic misconduct, have been highlighted across multiple sources (Adiguzel et al., 2023; Baek et al., 2024; De Gagne, 2023; Dorin & Atkinson, 2024; Lane et al., 2024; Nazir & Wang, 2023; Sun & Hoelscher, 2023).

Despite the potential of AI-enabled tools to enhance learning outcomes, educational institutions still lack adequate policies around AI usage, and there is limited research on the perspectives of nursing students on the use of these tools (Dorin & Atkinson, 2024; Labrague et al., 2023; Lane et al., 2024; Lukić et al., 2023; Montenegro-Rueda et al., 2023). Understanding the attitudes of nursing students toward tools like ChatGPT, as well as their perspectives on the potential risks and benefits of AI usage, is key to developing educational guidelines that align with student needs and values (Labrague et al., 2023).

This study aimed to (1) investigate student perspectives on an AI-enabled tool, ChatGPT, to explore perceived benefits, concerns, and challenges, and (2) identify implications for the ethical and responsible use of AI among nursing students. The findings of this research offer much-needed student-centered insights to inform future education, policy, and research.

Methods

Study Design

This study was conducted in British Columbia, Canada. Participants were enrolled in their second semester of a two-year accelerated baccalaureate nursing program, consisting of five terms in total, at a university. An interpretive description (ID) approach (Thorne, 2016) was employed, with focus group interviews as the method of data collection, to explore student perspectives on the AI-enabled tool ChatGPT. ID enables researchers in applied disciplines to employ methods that facilitate answering their research question(s); it allows for a nuanced exploration of individual experiences and also reveals patterns that reflect commonalities across participants. Furthermore, ID supports the development of findings with direct implications for educational practice and research, ensuring that insights are both meaningful and applicable in real-world educational contexts (Chiu et al., 2022). ID was therefore well-suited for studying nursing students’ perspectives on AI in academia, as it allowed for flexibility in the choice of data collection methods to better capture student experiences (Thorne, 2016). Ethical approval was obtained from the University of British Columbia Behavioural Research Ethics Board (H24-00064).

Participant Recruitment

Participants were recruited through convenience sampling based on availability and interest. Nursing students enrolled in an undergraduate research course, which had a total enrolment of 150 students, were invited to take part in the study. A research trainee presented the study to the students, and a recruitment poster was also used.

Data Collection

Focus group interviews were chosen to provide participants with the opportunity to elaborate on their thoughts and their rationale (Krueger & Casey, 2000). As Krueger (1998) suggests, focus group discussions foster rich, intersubjective insights through dynamic and interactive exchanges between participants, producing outcomes that individual interviews cannot achieve.

A researcher and two research trainees conducted the focus groups via Zoom. Two individuals facilitated each focus group, while one took notes. Before conducting focus groups themselves, the research trainees shadowed sessions facilitated by the researcher and were instructed on facilitation methods and structure. Four focus group sessions were held with a total of 16 students between February and March 2024. Each group consisted of two to six students and lasted 30 to 45 minutes. The focus group discussions were audio recorded and then transcribed verbatim. The focus group questions were developed based on a recent systematic review of ChatGPT in teaching and learning (Mai et al., 2024), as well as conversations among nursing faculty and students on the research team concerning the benefits, ethics, and potential challenges of using ChatGPT in nursing education. The questions centred on three main areas: (1) How do you view AI-enabled tools such as ChatGPT being utilized in academic schoolwork?; (2) What potential challenges or concerns do you have about utilizing ChatGPT in academic schoolwork?; and (3) What needs to be put in place to ensure safe and ethical use for AI-enabled tools such as ChatGPT? Additional clarification questions were asked as deemed appropriate by the facilitator, such as “Could you speak more on that?” or “Could you elaborate on your thoughts?”

Research Team

Our team was made up of interdisciplinary members from Nursing (LH, NA, AFA, ML, RH), Kinesiology (OD), and Social Work (KW). It included research and teaching faculty (LH, NA, AFA) as well as graduate (KW) and undergraduate students (ML, RH, OD). LH, NA and AFA designed the study. LH, ML and OD collected data. A team consisting of a researcher and research trainees (NA, ML, and RH) was involved in data analysis, as we took a collaborative team approach to data analysis to ensure that different perspectives were incorporated. All team members participated in manuscript writing and review.

Data Analysis

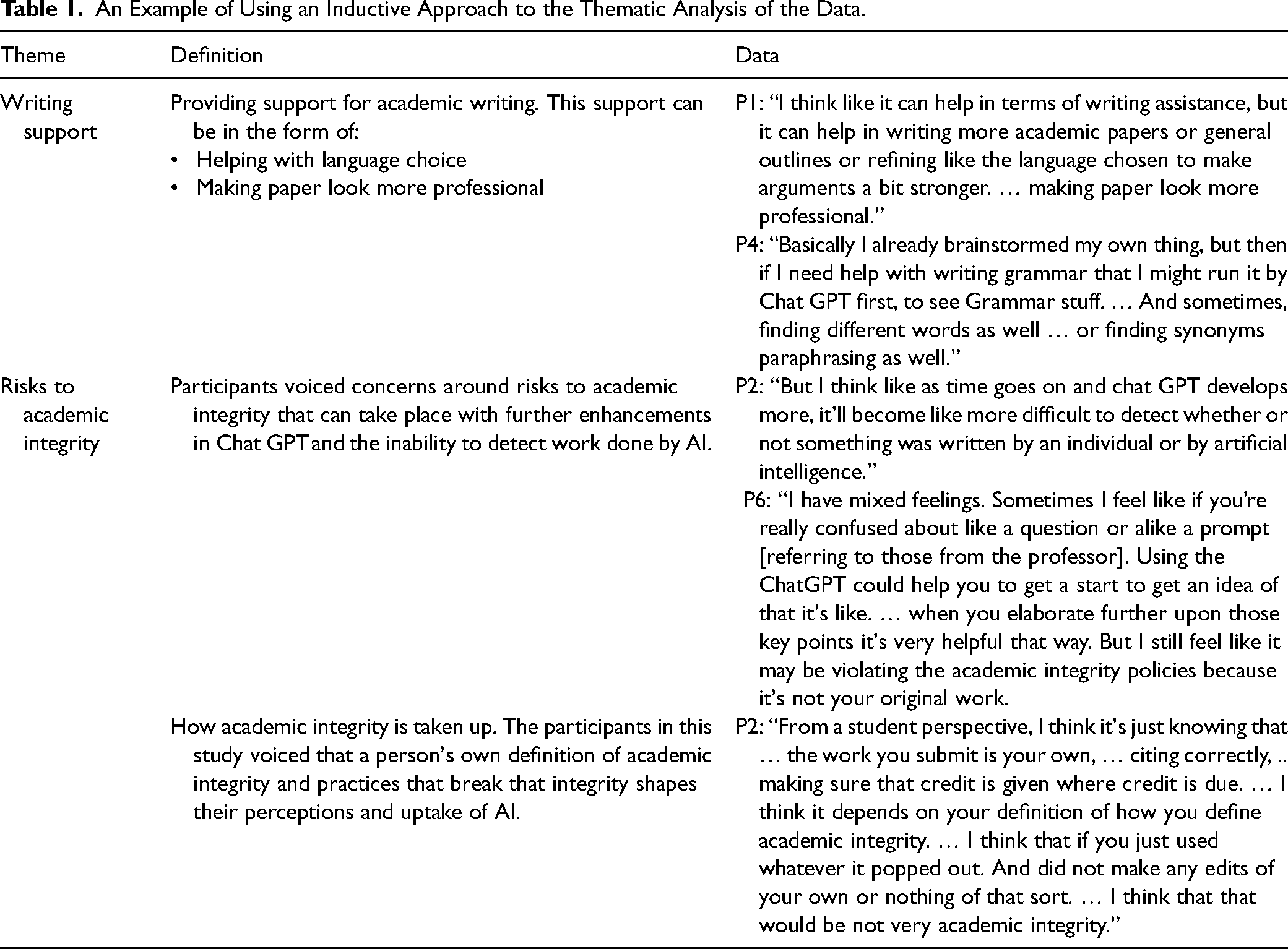

Data analysis involved a team consisting of a researcher and two research trainees. We drew on Braun and Clarke's (2006) framework for conducting a thematic analysis. Our analysis was inductive and iterative, and involved six phases. In phases one and two, the members of the analysis team independently familiarized themselves with the data by reading and re-reading the focus group transcripts, and then organized the data relevant to the research question in a meaningful and systematic way by generating initial codes. The analysis team then met to discuss the data and the codes that were generated. In phases three and four, the team members independently organized similar codes into categories and generated themes. In phases five and six, the analysis team met again to review and define the themes, and one researcher then wrote up the themes as the results section of this research paper; see Table 1. To enhance reliability and rigor in the findings, we adopted approaches to qualitative rigor as outlined by Braun and Clarke (2006): (1) confirming the accuracy of the transcripts by checking them against the focus group recordings; (2) ensuring congruence between the research objectives and the development of codes and themes at each step of the analysis process; and (3) allocating adequate time to each phase of the data analysis to allow for in-depth analysis.

Table 1. An Example of Using an Inductive Approach to the Thematic Analysis of the Data.

Results

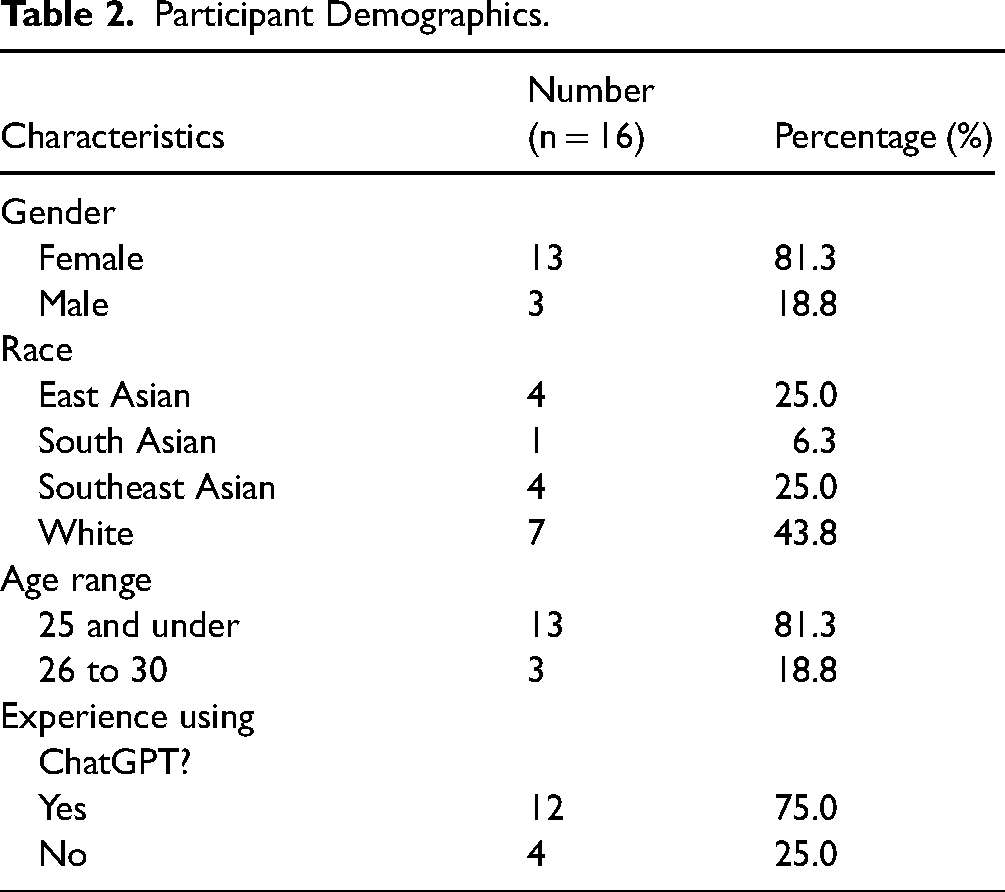

A total of 16 undergraduate nursing students participated in this research. The participants were largely 25 years of age or younger (n = 13); most identified as women (n = 13) and had previous experience using ChatGPT (n = 12), and seven identified as white; see Table 2. From our focus groups, four key themes were generated: (1) utilization as a support tool; (2) utilization leading to a loss of competency in foundational skills; (3) utilization risking credibility and academic integrity; and (4) need for further education and resources.

Table 2. Participant Demographics.

Utilization as a Support Tool

All participants shared the common experience that their uptake of AI tools, such as ChatGPT, has most commonly taken the form of obtaining support from these platforms, including writing and studying support. As a writing support tool, ChatGPT was used for help with creating outlines for academic papers and with language choice and grammar. “I think like it can help in terms of writing assistance, but it can help in writing more academic papers or general outlines or refining like the language chosen to make arguments a bit stronger. … making paper look more professional.” (Participant 1) “Basically I already brainstormed my own thing, but then if I need help with writing grammar that I might run it by ChatGPT first, to see Grammar stuff. … And sometimes, finding different words as well … or finding synonyms paraphrasing as well.” (Participant 4)

As the two excerpts above illustrate, participants believed that they themselves develop the foundations of their academic writing, including the formation of their ideas and arguments, and that ChatGPT is used only as an editing tool. Participants described the extent of refinements made by ChatGPT to their academic papers as minimal. For example, Participant 1 described creating their own content and feeding it into ChatGPT, asking it to refine the writing to make it more professional. From Participant 1's perspective, these refinements amounted to changes to one or two words rather than significant changes that redirect the writing entirely. “Like it's just changing one or two words or just making like changing some synonyms in that sense like it's not redirecting my entire paper that I wrote but. … it's like a second pair of eyes that are kind of looking over.” (Participant 1)

For the participants, it appeared to be important to emphasize that not only did the formation of ideas and arguments come from themselves, but that even when ChatGPT was used as an editing tool, it served as a support for refining clarity and coherence, with no changes to substantive arguments and overall structure. Two participants from this study highlighted their own challenges with academic and professional writing, where one struggles with English as a second language and the other struggles with the use of language that may be perceived as ‘rude’. Both participants described how the use of ChatGPT enables them to convey their ideas more effectively, fostering clearer and more respectful communication in academic and professional settings. “And maybe let's say my writing is very poor and it may I may come off as rude or and consider it when sending that email through wording. However, if you ask ChatGPT to I guess change your wording or change how you express your idea which typically it is truly how you feel, however you just don't know the correct way to.” (Participant 6) “Like English is my second language. I also. Struggle with that sometimes and sometimes it works and it benefits and it looks more professional and looks more put together.” (Participant 1)

Participants voiced the limitations of AI tools in producing new knowledge, as well as the risk that relying on them would result in multiple student papers that look similar. “I don't know if AI is capable of doing like original thinking. … In terms of creating the original idea, I think that still has to come from yourself because I don't know if ChatGPT has that like ability to combine all these things [referring to pulling knowledge from different sources] together and create something new out of it when that's not how it's learning.” (Participant 3) “Everyone's work will look relatively the same, no original ideas. … AI comes from many sources, but they would still be collectively, similar ideas throughout.” (Participant 6)

The process of using AI platforms as a study tool was in the form of summarizing and collating vast information that exists in the literature about phenomena of interest. “I think because ChatGPT draws information from all these sources, sometimes really a great way of sort of summarizing the present information and sort of looking at like overarching concepts within which a topic you're looking into.” (Participant 3) “I usually only use ChatGPT for like quick summarizations of topics.” (Participant 9)

Utilization Leading to Loss of Competency in Foundational Skills

Participants highlighted the purpose of postsecondary education, in which students ought to aim to obtain foundational knowledge and skills, including the ability to write academic papers. While participants agreed that AI tools such as ChatGPT can be helpful in assisting with writing, they also believed that their presence could create a state of overreliance, which in turn could cost students the opportunity to develop the foundations of writing and lead to the loss of existing writing skills. “It could create a reliance and that if you use it too much you can begin to lose your own ability to you know, form cohesive thoughts on your own or just trying to think and find the right word independently, you know. … an overreliance could cause. You know, just a regression your own ability to create and write ideas and just Yeah, stuff like that.” (Participant 2) “I think that the biggest challenge with ChatGPT is like people might become too reliant on it. … I see it most often being used in writing, so, I feel like people might just rely on it too much to create the structures of their paper, like how to write sentences, and I guess for me, in school, I feel like it makes more sense to learn how to do it yourself. Because that's why you’re there. I’m in nursing school and when we were learning how to take blood pressure before we learned how to do like using the Viols machine, which is an automated version, we learned the manual way first. So, I kind of view it as the same thing when it comes to like writing papers, like learning how to structure it yourself before just using technology, which might be faster.” (Participant 5)

Overreliance on ChatGPT was also perceived as a risk for ‘replacing’ users’ own critical thinking and judgement, skills that are fundamental for nursing practice. “The potential to replace critical thinking, … the whole point of academia and studying [is to] have a knowledge base and be able to, … use critical thinking and, … for nurses in here, … clinical judgement. … if you’re saying like, hey, think about this for me [referring to ChatGPT] because I don’t want to think about it, I’m too lazy, then you know, that's where it can kind of cross the line. … Such a big part of our education is …. Not only training to have the foundational knowledge base, but training and practicing to become critical thinkers … my worry is that if we resort to using Chat GT during our studies, to make those critical thinking decisions for us, and to think for us, then we don’t really get the training that we need. … Students before ChatGPT were forced to get [knowledge] on their own.” (Participant 7) “It might tell us that, hey, we don’t really need to think. So just take it easy, focus on your inputs to ChatGPT, because that's all that's going to matter in the end. But this is concerning.” (Participant 8)

Utilization Risking Credibility and Academic Integrity

Across the participants, there were concerns related to risks to the credibility of the knowledge produced by AI platforms and risks to academic integrity by the user of AI. The inability to obtain citations for content produced by ChatGPT was raised as a concern for most participants, as it impedes the ability of the user to evaluate the credibility of the content produced. Participants highlighted best practices of academic writing and knowledge synthesis, outlining the importance of critical evaluation of evidence supporting the content that they draw on and the need for drawing on multiple sources of evidence; practices that can be limited by the use of ChatGPT. “AI is not fully, like it's not transparent exactly … like where it's getting the information from or where is the source from or like how credible is this information? … It's really important … to cross-reference and just ensure like getting information from more than one source. So you know it's like real, there's multiple research behind it, you know it's a good resource to be using.” (Participant 1) “Like any other resources that we’ve found, whether academic research papers, website, or medical article, we need to have that critical thinking to evaluate things that was like their limitations.” (Participant 9)

Participant 1 emphasized that foundational knowledge of and experience with the phenomenon of interest, both of which come with postsecondary education, are important because they give users of AI platforms the ability to evaluate the credibility of the content produced. “It's really important to have your foundation going into it [referring to using AI]. … Then you know taking … what ChatGPT pushes out with a grain of salt or maybe like double checking that with a credible source for just to make sure that you're not just ‘oh yeah the computer said this and this is what I'm going to take and put in my paper … So like for me … not [being] a first year student, I know that ‘okay this might not be the most credible [source] but there's some piece of this that I agree with [and] I think I could use [in] my paper. In terms of having that for first year [students] I'm not exactly sure of how they would know that it isn't the most credible.’” (Participant 1)

With further enhancements in ChatGPT and other AI platforms, where the ability to detect work done by AI can be limited, participants voiced concerns around risks to academic integrity. “But I think like as time goes on and ChatGPT develops more, it'll become like more difficult to detect whether or not something was written by an individual or by artificial intelligence.” (Participant 2)

Participants differed in what they considered a violation of academic integrity. For Participant 6, using ChatGPT to get prompts or ideas for writing was perceived as a violation of academic integrity, as the writing is not considered to have originated with the individual. “Using the ChatGPT could help you to get a start, to get an idea, … when you elaborate further upon those key points it's very helpful that way. But I still feel like it may be violating the academic integrity policies because it's not your original work.” (Participant 6)

For other participants, violations of academic integrity were dependent on the extent to which AI content is paraphrased and the presence of citations to give credit to work by others. “From a student perspective, I think it's just knowing that … the work you submit is your own, … citing correctly, .. making sure that credit is given where credit is due. … I think it depends on your definition of how you define academic integrity. … I think that if you just used whatever it popped out. And did not make any edits of your own or nothing of that sort. … I think that that would be not very academic integrity.” (Participant 2)

Need for Further Education and Resources

To mitigate the challenges associated with the use of AI platforms and to further promote the advantages, participants discussed the need for further education and access to resources that would provide knowledge and skills around best practices. Education was perceived as a method that would enhance equity between students and would provide opportunity for users to make informed choices around utilization. “You're [referring to students] not coming from the same like equal footing. … I think like the use of ChatGPT is inevitable and eventually like universities should provide training … because it's only fair for all students … to be able to choose whether they want to use ChatGPT or not, but like all students should be educated, I think. … So what stopping them is not because of lack of access or lack of education to use it, but more like they just don't want to, you know.” (Participant 4)

The types of education and resources needed varied. Participants voiced a need for education on how to use AI platforms ethically, in ways that mitigate risks to academic integrity. “AI enabled tools are, … here now, they’re accessible now and they’re going to be accessible for the newer generations going throughout the school. … It seems like it would require just some education about… ethics, rules, boundaries, … when to use it, when not to use it, what's right, what's wrong.” (Participant 7) “We could put more reminders of academic integrity or you know make assignments on … cons of using ChatGPT or other like Artificial intelligence.” (Participant 2) “Maybe teaching about ChatGPT. Cause I feel like a lot of the time people use it because it's easy and it's accessible, but they may not like fully understand like the implications like the negative implications of using it.” (Participant 5)

Discussion

Our study aimed to (1) explore student perspectives on an AI-enabled tool, ChatGPT, examining perceived benefits, concerns, and challenges and (2) identify implications for ethical and responsible use of AI among nursing students. Our findings suggest that students believe AI-enabled tools, like ChatGPT, can be used as a support tool to aid their learning process, but that they continue to have reservations about adopting AI despite acknowledging its benefits and growing popularity. Participants described the convenience of using AI to understand concepts, support writing and grammar, and provide tailored learning assistance. Nonetheless, hesitance in embracing ChatGPT originated from unease surrounding credibility, potential violations of academic integrity, and the impact on self-competency. This aligns with findings in the current literature, which has examined the perceptions of students in general (Baek et al., 2024; Baidoo-Anu et al., 2024; Chan & Hu, 2023; Farhi et al., 2023). In contrast to our focus on nursing, the disciplines surveyed in the current literature include both STEM and non-STEM majors, in fields such as law, pharmaceutical sciences, medicine, dentistry, business, education, and arts (Baek et al., 2024; Baidoo-Anu et al., 2024; Chan & Hu, 2023; Farhi et al., 2023). As such, the responses from our sample of nursing students, which heavily emphasized concerns about credibility, ethical usage, and loss of individual skills, suggest that these students hold a unique perspective towards the use of AI tools that stems from their disciplinary values. Our results highlight three key factors (evidence-based practice, ethical considerations, and the importance of critical thinking skills) that influence the perspectives of nursing students towards AI tools.

Evidence-Based Practice

With knowledge-based practice being a central pillar of nursing professional standards in the provision of patient care, evidence-based care is a focal point in nursing education (British Columbia College of Nurses & Midwives [BCCNM], 2020). As nurses are tasked with providing safe patient care, fostering an ability to gauge the credibility and trustworthiness of information is important in nursing programs. Evidence-based interventions and decision-making processes are also critical to patient health outcomes, and play a role in guiding changes to policy and procedures to support improvements in patient care (Chhetri & Zacarias, 2021; Connor et al., 2023). This focus was demonstrated in our findings, where participants underscored the importance of using ChatGPT with caution and utilizing trusted references to determine the credibility of ChatGPT's output. As a result, participants also emphasized that their usage of ChatGPT is largely restricted to enhancing their learning and clarifying concepts. Given ChatGPT's inability to provide real and credible citations, there has been a shift towards AI-enabled tools that claim to incorporate reliable sources (Perplexity, n.d.; University of Alberta, 2024). However, these newer AI-enabled tools are still limited in the number and quality of references that they can provide and may still create false citations (University of Alberta, 2024).

Ethical Considerations

Nursing education places an emphasis on ethical practice, which includes aspects like patient confidentiality, professional responsibility, and professional conduct (BCCNM, 2020). With the expectation that nursing students engage in ethical practice and uphold these standards, nursing students have an increased aversion towards academic dishonesty in comparison to those from other disciplines (Bultas et al., 2017). Nursing students perceive dishonest academic behaviours as negatively impacting clinical practice and quality of patient care, and studies have demonstrated a positive correlation between nursing students’ attitudes towards academic integrity and professional integrity (Krueger, 2014; Lynch et al., 2022; Sheeba et al., 2019; Woith et al., 2012). As such, these ethical principles may have contributed to our participants’ reluctance to use ChatGPT in ways that may violate academic integrity. These findings accentuate the need for increased educational guidance surrounding AI usage to support AI integration in nursing education. As providing safe and ethical care is a key responsibility embedded within the nursing profession, it is critical that nursing students are immersed in a curriculum that reflects these values (Canadian Nurses Association, 2017). Educational institutions and educators must therefore carefully structure their curriculums to encourage the development of professional ethical integrity and minimize the potential for academic dishonesty with AI utilization.

Importance of Critical Thinking Skills

Within the nursing profession, critical thinking and decision-making skills are key to providing safe and quality care (BCCNM, 2020). As nursing education aims to develop these competencies in nursing students, study participants shared concerns that reliance on ChatGPT could hinder the development of their critical thinking skills. Participants reported that their usage of ChatGPT is generally restricted to idea generation and information summarization, which suggests that nursing students view AI as a supplemental instrument. Rather than trusting the suggestions of AI tools, nursing students may prioritize human judgment and decision-making due to their nursing training.

Implications

This study emphasized the lack of education and resources regarding AI usage and best practices within institutions. This lack of training and education on appropriate AI usage, as well as the importance of providing such training to enhance student comfort in using AI tools, has also been accentuated in past research (Baek et al., 2024; Baidoo-Anu et al., 2024). As AI-enabled tools grow increasingly common and are integrated into healthcare technologies, the adoption of such tools into nursing education curriculums may play an important role in fostering student competency and familiarity with AI technologies in nursing practice (Labrague et al., 2023; Nazir & Wang, 2023; Sun & Hoelscher, 2023).

Focus group participants highlighted the need for educational policies and resources to be developed to guide students on the appropriate usage of AI-enabled tools. This gap has been called to attention by several studies, which further supports the importance of establishing such guidelines to promote adequate competency building amid AI usage (Baek et al., 2024; Baidoo-Anu et al., 2024; Farhi et al., 2023). Open dialogues and education can foster responsible use and competency in navigating AI tools, as well as build capacity around discerning misinformation and biases within generated content. The acknowledgement and acceptance of AI tools in educational institutions is also a contributing factor to students’ transparency (Yu, 2023). It is only by embracing AI tools that institutions can encourage citations of AI usage, thereby promoting responsible and ethical use. Ultimately, clinical focus, ethical considerations, and critical thinking skills play a role in how nursing students choose to use AI-enabled tools. As students must balance the usage of AI tools with the maintenance of their foundational skills, developing curriculums and policies that allow nursing students to engage with AI in a way that corresponds with their practice standards is key in nursing education.

Strengths

We adopted rigorous data collection and analysis processes to enhance the credibility and transferability of our findings. Our study applied an interpretive description approach, and we acknowledge that our positionalities would affect our interpretation of the data. Because our team spanned a variety of disciplines and roles, diverse perspectives were incorporated throughout the conduct of our study, especially during team data analysis, which enriched our findings. Our analysis process was also designed to ensure that the analytic codes and themes were consistent with the objectives of the study and that the findings reflected what participants expressed. Furthermore, by providing the details of the study in this article, we hope to enhance transferability by helping readers ascertain whether our findings are applicable to nursing students in their context or to students in other disciplines.

Limitations

This study was limited by its small sample size and use of convenience sampling, as well as the students’ prior experience and exposure to AI-enabled tools. As a result, these findings may apply only to this specific group of nursing students. Future research examining student perspectives across several educational institutions may provide further context. In addition, our focus group questions were not based on any existing theoretical framework. While we consider this a limitation, research on the use of ChatGPT in education is still in its infancy and, to the best of our knowledge, no theoretical framework has been developed or validated. We hope this work will provide background knowledge for forming a sound theoretical understanding of ChatGPT in nursing education. Further exploration of these students’ experiences with AI-enabled tools, as well as offering nursing students the opportunity to use such tools in classroom settings, may allow for a deeper understanding of the concerns surrounding AI usage. This will also aid the development of educational policies around AI use that better address student concerns. Research into ethical concerns and the development of solutions to mitigate the potential risks associated with AI usage will provide valuable insights into the optimization of AI integration in educational settings. To understand the implications of AI usage across different educational settings, exploration of the perspectives of graduate nursing students may be beneficial. Examination of the views of nursing educators will likewise aid in providing a comprehensive picture of how AI usage may benefit or impair students in meeting foundational nursing competencies. Similarly, more research is needed to explore the public's attitudes towards adopting AI technology into nursing curriculums, as this will inform the approaches of nursing educators and institutions.

Conclusion

The perspectives of nursing students on AI tools such as ChatGPT are shaped by three critical factors: evidence-based practice, ethical considerations, and the importance of critical thinking skills. Nursing students, grounded in a knowledge-based practice that prioritizes patient safety and evidence-based care, approach AI tools cautiously, particularly concerning the credibility of information. They are vigilant about upholding professional standards, expressing concerns over AI's role in patient interactions, documentation, and academic integrity. Critical thinking is also a key competency in nursing, and students fear that over-reliance on AI tools may hinder the development of these essential skills. Overall, while AI tools have potential as supplemental aids in nursing education, they must be used in a way that aligns with the professional and ethical values of the nursing discipline. Future research should explore education support and AI competency frameworks within nursing education to ensure that future nurses can use AI responsibly, ethically, and effectively in healthcare environments.

Footnotes

Author Contributions

Study Design: LH designed the study; Data collection: LH, ML, OD; Data analysis: NA, ML, RH; Manuscript preparation: LH, NA, ML, KW. All authors reviewed and provided input for revisions.

Consent to Participate

All participants provided written informed consent prior to participating in this study.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Considerations

Ethical approval was obtained from the University of British Columbia Behavioural Ethics Research Board (H24-00064).

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by Dr Lillian Hung's Canada Research Chair.

Statements and Declarations

The first author of this paper is an undergraduate student.