Abstract

Recent advances in Artificial Intelligence (AI) offer promising applications aimed at solving complex healthcare challenges. These applications include optimizing operational efficiencies, supporting clinical administrative functions, and improving care outcomes. Numerous AI models are validated in research settings, but few make their way into useful applications due to challenges associated with implementation and adoption. In this article, we describe some of these challenges, along with the need for a facilitating entity to safely translate AI systems into practical use. We propose a new AI governance framework that gives healthcare organizations a mechanism to implement and adopt AI systems.

Introduction

Artificial Intelligence (AI) is believed to have the potential to solve complex healthcare challenges, with the promise of revolutionizing healthcare systems, operations, and care delivery. Recent research has documented an increase in the number of AI models being validated in various healthcare domains. 1 Popular clinical applications include decision support for the detection and diagnosis of disease, as well as the development of methods for determining prognosis in disease areas such as cancer, neurology, and cardiology, with the aim of enhancing treatment and preventing disease. 2 The availability of large datasets, advancing computing technologies, and sophisticated machine learning algorithms are extending these possibilities into additional healthcare domains, thereby creating opportunities to democratize AI. However, translation of AI into practice has been slower due to challenges related to trust, thereby creating an “AI chasm.”3,4 Given the complexity of AI, a traditional healthcare technology implementation and governance framework may not be sufficient. There is therefore a greater need to consider the broader set of entities involved in the implementation of safe and trustworthy AI systems. Adoption of AI largely relies on trust, which is affected by various elements.5-7 As a consequence, there is a need for a comprehensive and catalysing approach centred around trust to enable the successful translation of AI in healthcare and further enhance system, operational, and care processes. Crigger and colleagues define trustworthy AI as systems that “require systematically building an evidence-base using rigorous, standardized processes for design, validation, implementation, and monitoring grounded in ethics and equity.” 8 This article will also argue that organizations need to establish AI governance as a facilitating structure and translational catalyst to support the development, use, and sustainability of trustworthy AI systems.

Objectives

This article is written to accomplish the following: define AI and AI governance in healthcare; outline who should be involved in AI governance; describe the key aspects of AI governance that need to be reviewed; describe some of the issues and challenges that need to be considered when adopting AI; and propose an AI governance framework.

Background

Defining artificial intelligence in healthcare

AI, or “the science and engineering of making intelligent machines,” 9 is a term used to describe a sophisticated system designed to emulate human decision-making by self-learning from large datasets. Over time, AI algorithms have evolved from basic approaches such as supervised and unsupervised machine learning to deep learning algorithms that pass data through many processing layers, allowing for more sophisticated methods of identifying patterns to arrive at an output. 9 The development of AI applications for healthcare has focused on administrative and operational efficiency, predictive and prescriptive analytics, and diagnostic support via clinical decision-making tools. 1 Some of the most promising clinical applications of AI rely upon deep learning techniques applied to image-based diagnosis, which have primarily been studied in the fields of radiology, dermatology, ophthalmology, and pathology. 9

Challenges with artificial intelligence implementation and adoption in healthcare

The actual application of AI in healthcare has been limited compared to the number of research studies that have been published. 10 Fewer than 10% of healthcare organizations use AI, and most are in the early stages of AI implementation. 11

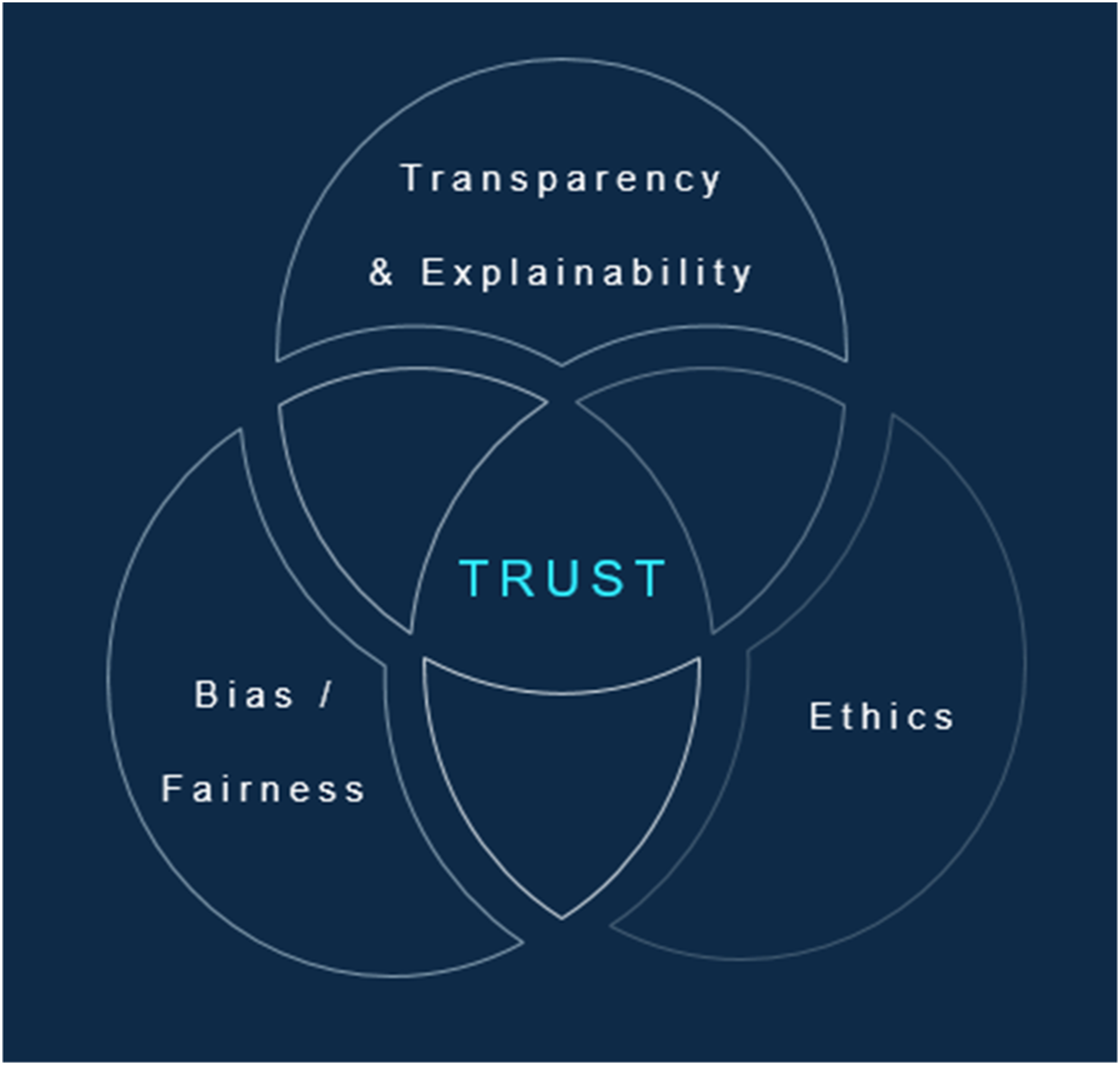

Around the world, several studies have reported on the challenges associated with implementing AI in healthcare. For example, Petersson and colleagues conducted qualitative interviews of healthcare executives in Sweden, identifying three primary areas of AI implementation challenges. These included conditions associated with the external environment, conventions around healthcare professional cognitive and physical work (e.g., reasoning, decision-making, and workflows arising from healthcare procedures and interventions), healthcare practices, the capacity for organizations to support change management, and the new digital organizational structures and processes that will be needed to support successful integration of AI. Their findings were further distilled into specific issues around the safety, liability, and legality of AI tools. These issues include supporting AI implementations, meeting AI educational requirements for healthcare professionals and staff, having a systematic approach to AI implementation, having sufficient organizational resources, including health professionals and staff in AI implementations, transforming current health professional roles to integrate AI competencies, and building trust around AI adoption. 12 Chomutare and colleagues, Radhakrishnan and colleagues, and Hassan and colleagues conducted literature reviews that consolidated the barriers to and facilitators of AI adoption. The researchers found that implementation and adoption challenges were present across healthcare systems (e.g., Canada, United States, and Europe) and settings (e.g., physician office and hospital). Core issues impacting trust were associated with transparency and explainability, bias, and ethics. Other barriers identified across studies included a lack of organizational strategy and roadmap for AI, of strategic alignment, and of support from executive leaders, as well as limitations in digital infrastructure, availability of funds, awareness and training, workflow integration, data quality, data availability, and regulatory maturity.5-7 Radhakrishnan and colleagues and Hassan and colleagues particularly noted the importance of governance as a facilitating factor for the implementation and adoption of AI.

The need for AI governance

As such, it is essential to have a governing body in place to guide the deployment of technologies such as AI and to manage the scale and speed of the transformation that will take place in healthcare organizations. An adoption-centred governance framework (see Figure 1: Role of trust in AI adoption in healthcare) that places trust at the centre of its paradigm is necessary to ensure that stakeholders are consulted at the right time, so that risk, ethics, fairness, transparency, accountability, and socio-technical factors are considered in the end-to-end process of AI implementation. In this way, a governance framework would ensure oversight over the barriers identified and provide a structured mechanism for implementing trustworthy AI. AI governance is still a developing area of research and practice, which disadvantages organizations that could benefit from the use of AI technologies. As a result, organizations may be reluctant to implement AI technologies that could present risks such as safety hazards and discriminatory outputs. 13

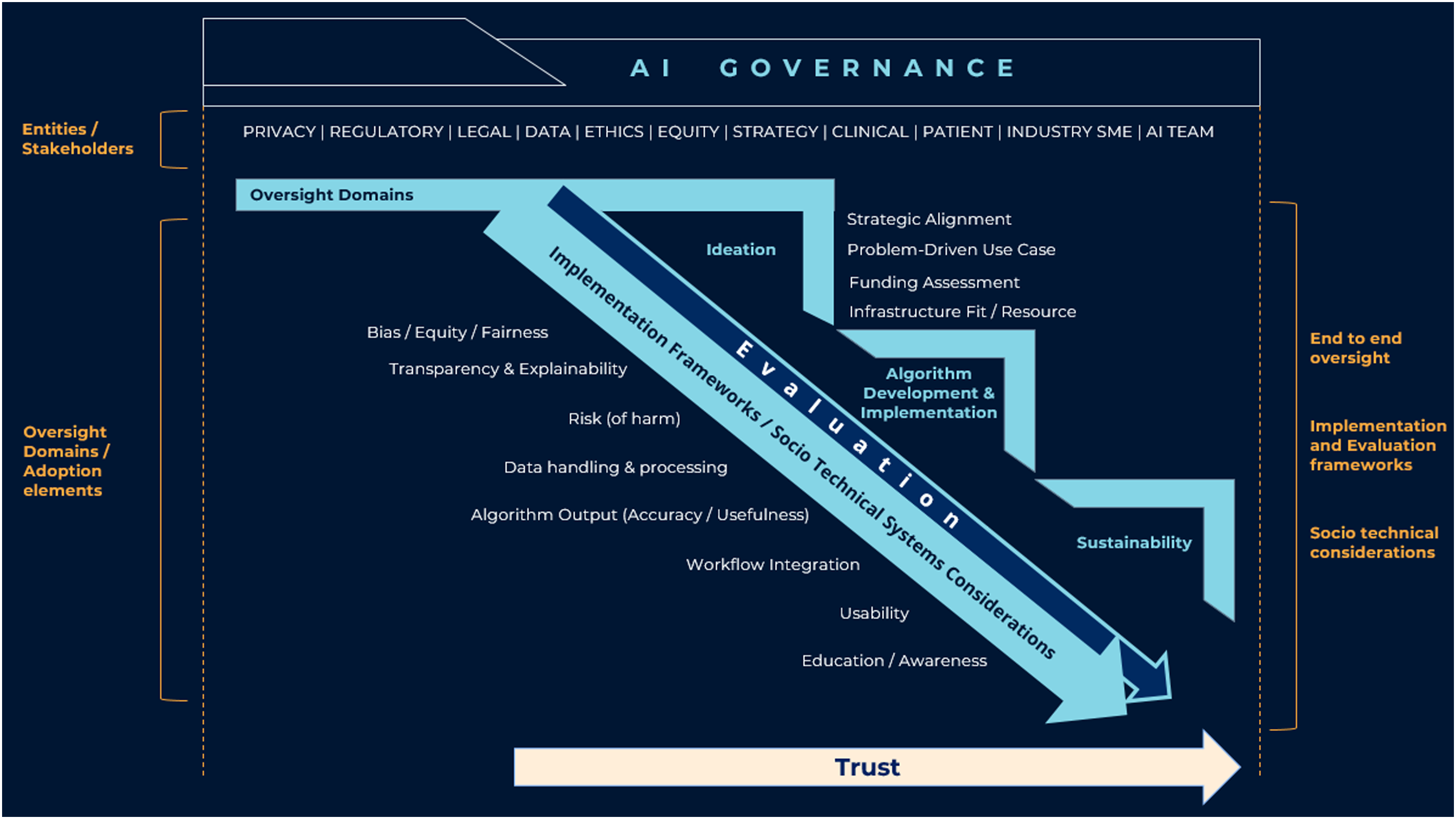

According to Hassan and colleagues, a number of barriers to adoption contribute to this lack of trust. The researchers recommend an adoption-centred governance framework as an essential structure to successfully implement AI systems in clinical settings (see Figure 2). With AI governance in place, an organization can ensure that all factors impacting trust are addressed with the right level of oversight, so that AI systems are implemented safely and in a trustworthy manner. In particular (see Figure 2), governance should at least include oversight of bias, equity, transparency, ethics (of AI), explainability, data handling, and safety. Considering the evolving nature of the field, organizations may add further governance domains, structures, and processes to provide appropriate oversight and accountability. By oversight, we mean a process for tracking a specific domain such as bias, ensuring accountability, and having gated mechanisms in place through the various stages of AI development (i.e., from concept, model development, and implementation all the way through to sustainability, evaluation, and long-term use).14-16

Figure 1: Role of trust in AI adoption in healthcare (Hassan, Kushniruk, and Borycki, 2023).

Figure 2: AI governance framework.

An adoption-centred governance framework

Given the domains identified as barriers to adoption, a governance framework may be structured to provide oversight over these domains and so ensure an adoption-centred and trustworthy approach to implementing AI solutions. With these in mind, we propose a new framework (see Figure 2: AI governance framework) that covers the entire life cycle of an AI system, from concept through to sustainability, while providing processes to assess each oversight domain (identified as a barrier to adoption). The framework was developed from the results and analysis of factors that emerged from the authors’ previous review of barriers and facilitators of AI in healthcare (Hassan et al.), with trust being a central construct. 7

This new governance framework allows organizations to consider the following when developing and implementing AI applications:

1. Provide oversight of the end-to-end process of AI, from ideation, model training and development, evaluation, and implementation through to sustainable, long-term continuous monitoring.
2. Identify all entities associated with each of the oversight domains, including impacted implementation stakeholders, who should be involved in overseeing the end-to-end development of AI for healthcare.
3. Based on the factors impacting adoption, identify subject matter experts who can deliberate on AI use cases based on their area of expertise, such as, but not limited to, bias or ethics.
4. Embed processes to develop assessment tools and repositories for collecting evidence that addresses adoption barriers such as bias, transparency, and explainability, along with other barriers identified as AI implementations evolve.
5. Apply one or more validated implementation, evaluation, and long-term use frameworks (e.g., Unified Theory of Acceptance and Use of Technology or an AI-specific implementation framework) to ensure all barriers to adoption are measurably considered via the framework.
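As a rough illustration of the gated, end-to-end oversight described above, the following Python sketch models a project that can only move to the next stage once every oversight domain has been signed off at the current stage. The stage names and domains are taken from the text; the class `AIProject`, its methods, and the rule that all domains must sign off at every stage are illustrative assumptions and not part of the proposed framework.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    # Stage names follow the text; the numbering is an assumption for ordering.
    IDEATION = 1
    MODEL_DEVELOPMENT = 2
    EVALUATION = 3
    IMPLEMENTATION = 4
    MONITORING = 5


# Oversight domains named in the article.
OVERSIGHT_DOMAINS = ("bias", "equity", "transparency", "ethics",
                     "explainability", "data handling", "safety")


@dataclass
class AIProject:
    """Hypothetical record of an AI system moving through governance gates."""
    name: str
    stage: Stage = Stage.IDEATION
    # Maps each stage to the set of domains signed off at that stage.
    signoffs: dict = field(default_factory=dict)

    def sign_off(self, domain: str) -> None:
        """Record that a domain reviewer approved the current stage."""
        if domain not in OVERSIGHT_DOMAINS:
            raise ValueError(f"unknown oversight domain: {domain}")
        self.signoffs.setdefault(self.stage, set()).add(domain)

    def missing_signoffs(self) -> list:
        """List the domains that have not yet approved the current stage."""
        done = self.signoffs.get(self.stage, set())
        return [d for d in OVERSIGHT_DOMAINS if d not in done]

    def advance(self) -> bool:
        """Move to the next stage only if the current gate is fully cleared."""
        if self.missing_signoffs() or self.stage is Stage.MONITORING:
            return False
        self.stage = Stage(self.stage.value + 1)
        return True
```

In this sketch, a project with only a bias sign-off stays at ideation when `advance()` is called; an organization adapting the idea could relax the gate so that only the domains relevant to a given stage are required.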

To elaborate further, the governance framework (see Figure 2) ensures that structures and processes are in place to provide guidance on regulatory, legal, data governance, bias, ethics, risk, and accountability matters, and to facilitate funding, so that AI systems can be successfully implemented and adopted. For example:

• From a regulatory perspective, a governance framework may provide guidance on changing regulations that are external to the organization but affect the development and maturation of AI systems.
• From a legal standpoint, any legal issues arising from AI outputs need to be discussed, and appropriate policies and procedures established.
• In terms of algorithm development, the governance framework should provide guidance on equity/fairness, algorithmic bias, transparency, explainability, usability, and cognitive-socio-technical considerations.
• In terms of data, the governance framework should include guidance on, and adherence to, privacy considerations, data storage practices, data security, training data sets, and traceability of data.
• A governance framework should outline a clear accountability structure.
• Finally, an ethics presence should be included to ensure that relevant ethics frameworks are applied in the context of AI.

Furthermore, for each of the adoption domains identified, such as bias, ethics, and explainability, assessment tools can be adapted or developed by each organization. These toolkits would contain critical questions or criteria against which the governance committee or subject matter expert would assess the AI solution, thereby gathering evidence along the way to ensure that safety and governance criteria are met and that adjustments are applied where they are not. Examples of tools that can be embedded in AI governance processes for bias include those developed by Landers and Behrend. 21 It is worth noting that there are other technical methods of assessing bias that should also be included in the AI development process, such as those reported in a systematic review by Kumar and colleagues. 22 Similarly, Chen and colleagues 23 and Rogers and colleagues 24 outline how to assess for ethical principles at each stage of an AI system's development lifecycle. For explainability, the field of explainable AI provides evaluation tools such as those reported by Markus and colleagues, 25 Preece and colleagues, 26 and Vilone and colleagues. 27 Embedding these types of assessment mechanisms in the governance process allows for a more thorough understanding of the AI system being implemented and, most importantly, one that is aligned with the organization's values. 28
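One way to picture such a toolkit is as a list of criteria, each tied to an adoption domain, with evidence recorded as the review proceeds. The Python sketch below is a minimal illustration under stated assumptions: the `Criterion` structure, the helper functions, and the three example questions are hypothetical and are not drawn from the tools cited above.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    """One assessment question for a single adoption domain (hypothetical)."""
    domain: str
    question: str
    met: bool = False
    evidence: str = ""


def record(criterion: Criterion, met: bool, evidence: str) -> None:
    """Store the reviewer's judgement together with the supporting evidence."""
    criterion.met = met
    criterion.evidence = evidence


def unmet_by_domain(criteria) -> dict:
    """Group the questions that are still unmet under their domain."""
    gaps = {}
    for c in criteria:
        if not c.met:
            gaps.setdefault(c.domain, []).append(c.question)
    return gaps


# Illustrative questions only; an organization would adapt validated tools.
toolkit = [
    Criterion("bias", "Was performance compared across patient subgroups?"),
    Criterion("explainability", "Can clinicians see why an output was produced?"),
    Criterion("ethics", "Has an ethics review of the use case been documented?"),
]

record(toolkit[0], True, "Subgroup report filed with the committee")
record(toolkit[1], False, "No explanation shown in the user interface")

# Domains with open criteria are where adjustments would be requested.
print(unmet_by_domain(toolkit))
```

Keeping the evidence alongside each criterion gives the governance committee a simple repository it can revisit when the system is re-evaluated during long-term use.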

Discussion and conclusion

While a number of papers have proposed governance frameworks for AI in healthcare, such as those by Taeihagh, Reddy et al., and Mäntymäki et al., the governance framework proposed in this paper considers the various stages of AI development while integrating the barriers and facilitators to AI adoption identified by Chomutare et al., Radhakrishnan et al., and Hassan et al. These barriers and facilitators have been woven into a holistic framework so that strategies and tools are used systematically and are driven by validated implementation, adoption, and evaluation frameworks, ensuring that an adoption-centred process is in place to conceptualize, develop, implement, and sustain AI applications in healthcare. In this way, an AI governance framework would be the facilitating catalyst to translate AI into practice. Because organizations differ culturally and structurally, the proposed governance framework has been developed with sufficient flexibility for organizations to adjust and apply it to their own context and environment.

Overall, the governance framework stresses the importance of considering trust and adoption from the outset, when an AI system is being developed, through to when it is implemented and sustained. Existing technology implementation and acceptance models may not encompass all adoption factors; therefore, additional frameworks around trust, explainability, and ethics will be necessary to anticipate the success of an AI innovation. Most importantly, the success of technology implementations is closely tied to cognitive-socio-technical considerations.17,18 Health technologies such as AI influence cognitive processes such as information seeking, reasoning, and decision-making.19,20 Theories from the cognitive and socio-technical literature can help implementers consider the individual’s social environment and its interacting factors, as well as the processes embedded in that environment that could influence the individual’s adoption process. Therefore, in order to adopt and implement AI effectively and safely, it would be essential to also weave cognitive-socio-technical aspects, such as the impact of AI on patients, clinicians, and workflow processes, into a governance framework.

In conclusion, we define AI governance as an entity comprising processes, guidelines, and specialized personnel or subject matter experts dedicated to guiding the ideation, development, deployment, and continuous monitoring of trustworthy and beneficial AI systems. It is a structure that integrates critical principles, including ethics, fairness, transparency, accountability, and safety, to ensure that AI systems are evaluated and implemented responsibly and align with organizational and societal standards. The intention of the proposed governance framework is to provide a template for healthcare organizations to adapt into an AI governance model that best fits their cultural and structural context. With the rapidly evolving landscape of technological advancements, organizations may find that entities and adoption barriers need to be added or removed as practical implementation of the framework informs the governance needs of the organization. As such, the governance framework has been intentionally made flexible so that it can adapt to organizational needs. Three questions will therefore drive the evolution of the framework: which entities need to be continuously involved, which trust-related and adoption-centric barriers need oversight, and which frameworks will be used to ensure a safe, trustworthy, and evidence-based implementation of AI in healthcare.

Footnotes

Acknowledgements

Elizabeth Borycki would like to acknowledge the support of Michael Smith Health Research BC.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethical approval

Institutional Review Board approval was not required.