Abstract

Promoting student self-determination is recognized as best practice in secondary transition planning. Few self-determination interventions have utilized technology to provide individualized learning opportunities. The Goal Setting Challenge (GSC) App was developed to provide a technology-based instructional approach to support developing self-determination. The purpose of this single-case study was to evaluate the impact of the GSC App on self-determination knowledge outcomes for students with disabilities and explore the feasibility of students’ use of the App in transition planning over an academic semester. The COVID-19 pandemic significantly affected the results; however, this created opportunities to explore feasibility during in-person instruction and the unprecedented shift to remote learning. Findings were mixed for student use and feasibility outcomes but suggest that the App may provide a student-friendly means for engaging in self-determination instruction during in-person and remote instruction. Limitations and implications for future research and practice are discussed.

Keywords

Promoting and enhancing student self-determination is recognized as best practice in secondary education and transition planning (Rowe et al., 2021). Higher levels of self-determination predict in-school and postschool outcomes for students with disabilities (Hagiwara et al., 2017; Mazzotti et al., 2021), and evidence-based practices for promoting self-determination have been developed in school contexts (Rowe et al., 2021), such as the Self-Determined Learning Model of Instruction (SDLMI; Shogren et al., 2018). However, few self-determination interventions have utilized technology to provide more personalized and individualized learning opportunities. Early research has suggested the promise of technology-mediated SDLMI instruction (Mazzotti et al., 2012, 2013). For example, Mazzotti et al. (2012, 2013) used computer-assisted SDLMI (CAI-SDLMI) to teach elementary students with high-incidence disabilities to set and attain goals. Both studies examined the effects of CAI-SDLMI on students’ knowledge of the SDLMI and disruptive behavior. The results of these two single-case studies showed a functional relation between the independent variable (CAI-SDLMI) and the dependent variables (i.e., knowledge of the SDLMI and disruptive behavior). These two early studies used Microsoft PowerPoint with embedded audio and visual components to deliver the CAI-SDLMI intervention and showed promise that delivering interventions like the SDLMI via technology may enhance student engagement as well as change the demands placed on teachers for delivering personalized interventions.

The SDLMI is an evidence-based model of instruction (Rowe et al., 2021; Shogren et al., 2018) designed to be implemented by trained facilitators (e.g., teachers and transition coordinators) to (a) change the focus of goal-setting and attainment instruction from teacher-directed to student-directed and (b) teach students the skills and abilities needed to engage in self-determined, goal-directed actions. The SDLMI is aligned with Causal Agency Theory (Shogren et al., 2015), which provides a framework for conceptualizing the development of self-determination and support needed to enable its expression by students with disabilities. The SDLMI has three core components: Student Questions, Teacher Objectives, and Educational Supports. When the SDLMI is implemented in classrooms with students, instruction is typically organized into mini-lessons delivered by teachers two to three times per week. The 12 SDLMI Student Questions are linked to Teacher Objectives and Educational Supports organized into three phases (Phase 1—Set a Goal; Phase 2—Take Action; and Phase 3—Evaluate Progress). During instruction in each phase, the student learns a series of steps based on the self-regulated, problem-solving literature that supports them to answer the Student Questions. For example, during Phase 1, the problem is, “What is my goal?” The Teacher Objectives provide the teacher or trained SDLMI facilitator with instructional objectives to target to help students to answer each question, and the Educational Supports are the instructional strategies that teachers can use to enable students to meet the Teacher Objectives and effectively answer the Student Question. The ultimate goal is that the student learns the series of questions and uses them in a self-directed manner to work through the goal-setting and attainment process on a repeated basis working toward more complex goals. 
Teachers can implement SDLMI instruction cyclically, supporting students to work through the Student Questions repeatedly over multiple semesters. Teachers can use the SDLMI in any content area (e.g., academics, transition planning, and self-management). The key focus of teacher implementation is delivering instruction that meets the Teacher Objectives and uses needed Educational Supports to enable students to respond to the Student Questions as they set and work toward goals in a curricular area. Teachers also learn to prompt skills associated with self-determination as they implement their curriculum, supporting students to generalize learning and make progress toward their goals.

The Goal Setting Challenge (GSC) App (Shogren & Mazzotti, 2020) was developed to build on the evidence-based SDLMI and explore the feasibility of technology-based instructional approaches to support the delivery of the SDLMI in secondary education and transition planning. The GSC App was designed to take the SDLMI format and “translate” it into a technology-based instructional format that enables the Teacher Objectives to be achieved through technology, with built-in and customizable Educational Supports, that enable students to respond to the Student Questions, based on learning needs and preferences. Emerging instructional technologies can promote more personalized and individualized learning experiences as well as reduce demands on teacher time and training needs (USDOE, Office of Education Technology, 2017).

The GSC App development is described more fully in Mazzotti et al. (2022). In the App, students answer the SDLMI Student Questions after navigating through lessons that integrate personalized learning activities that address the SDLMI Teacher Objectives and provide Educational Supports (e.g., completing an activity, with immediate feedback, to identify key parts of a transition goal). Teachers are able to track student progress and review responses to the Student Questions to support generalization of goals and skills learned in the App to other activities throughout the curriculum. The GSC App has 14 lessons: two introductory lessons followed by 12 lessons aligned with the Student Questions and the three phases of the SDLMI. The specific features embedded in the App (e.g., personalized instructional characters reflecting diverse cultural identities, multiple student response options) and delivery of instruction and Educational Supports (e.g., student-led instruction and examples, different activity formats to demonstrate knowledge) were developed using best practices in instructional technology and universal design (e.g., variety of instructional modes, voice-over audio dialog, speech recognition features, data visualization) as well as significant and sustained input from end-users (i.e., students with disabilities, teachers; Mazzotti et al., 2022). The process model of engagement framework (O’Brien & Cairns, 2016) and principles of instructional design, namely, the model-lead-test format, were adopted to guide development (Coyne et al., 2011) to provide an appropriate level of challenge and aesthetic/sensory appeal for end-users (O’Brien & Cairns, 2016).

We engaged student end-users and their teachers directly and actively in the development process (see Mazzotti et al., 2022), but there has not yet been systematic testing of the GSC App and the delivery of a technology-mediated version of the SDLMI in school contexts with secondary students with disabilities. This single-case study was designed with rigorous methodological standards (Kittelman et al., 2018; Kratochwill et al., 2013) to collect data on the impacts of the GSC App on self-determination knowledge outcomes for students with disabilities as well as explore the feasibility and fidelity of students’ use of the GSC App in transition planning over an academic semester. Our research questions were the following:

More information on the impacts of the COVID-19 pandemic on our design and key findings will be described throughout the paper, including what was learned during this unprecedented time.

Method

Procedure

This study was initiated in January 2020 prior to the onset of the COVID-19 pandemic. The rapid onset of the pandemic and its impact on student instruction and outcomes (Office for Civil Rights, 2021; Rowe et al., 2020; Toste et al., 2021), less than 2 months after initiation of this single-case study, had significant impacts on implementation and data collection (described subsequently). We attempted to maintain our rigorously planned single-case design to the degree possible, but the pandemic caused significant disruptions. We focused on continuing to work with participating teachers and students not only to address our research questions but also to address their new and emerging needs during the rapid transition to virtual instruction. This allowed us to gather unanticipated information on the impacts and feasibility of the GSC App during a global pandemic that can inform ongoing use of the GSC App during in-person and virtual instruction.

Prior to data collection, researchers obtained institutional review board (IRB) approval from the university and school district. Following IRB approval, written consent was obtained from parents/guardians and verbal/written assent from students. Students whose parents/guardians did not provide consent were not eligible to participate. The current study was planned for one academic semester, with students working through the GSC App once, completing the two introductory lessons (i.e., Intro A, Intro B) and 12 content lessons (described below). IRB modifications were not needed when we shifted to remote instruction.

The initial implementation site was an English II class serving 15 high school students with disabilities who received special education services and one student who did not receive special education services. This class was chosen based on existing relationships that the researchers had with a teacher in the school and teacher input that the students could benefit from goal-setting/self-determination instruction. Inclusion criteria were secondary students in high school (i.e., ninth through 12th grade) with or at risk for a disability (e.g., specific learning disability, emotional disturbance, and other health impairment), as the GSC App development work targeted this population of students. Of the 16 students, we obtained parent/guardian consent for 15 students. All 15 consented students began initial baseline data collection in late January and early February. We staggered the entry of participants into treatment conditions using best practices consistent with a multiple-probe across participants design (Gast et al., 2018; Kratochwill et al., 2013); however, this meant that there was not enough time to allow for all participants to enter intervention prior to the shift to fully remote instruction during the COVID-19 pandemic. Only six students entered intervention prior to schools closing because of the COVID-19 pandemic (i.e., Casey, Nico, Levi, Zander, Taryn, Andrew; pseudonyms used). The remaining nine students were still in the baseline phase as schools were closing and were expected to continue in baseline when remote instruction began.

After the shift to remote instruction, there was a 5-week pause in any implementation activities as teachers and schools navigated the rapid and unprecedented closure of schools and determined how they would handle remote instruction. During this break, we worked with teachers to strategize ways to continue to support student access to the GSC App as remote instruction protocols were established. After 5 weeks, we began to engage in remote class sessions to support the ongoing use of the GSC App and data collection for students who were still in baseline. Six of the nine students still in baseline data collection during in-person instruction never began intervention as they never participated in remote instruction offered by the school. Data for these students are not reported here as they did not access the intervention. The nine remaining students who returned and participated in remote instruction represent our sample. They included six students who began intervention with the GSC App during in-person instruction (i.e., Casey, Nico, Levi, Zander, Taryn, Andrew); and three students who were in baseline through in-person instruction and began using the GSC App during remote instruction (i.e., Hannah, Dahlia, Jo).

Participants

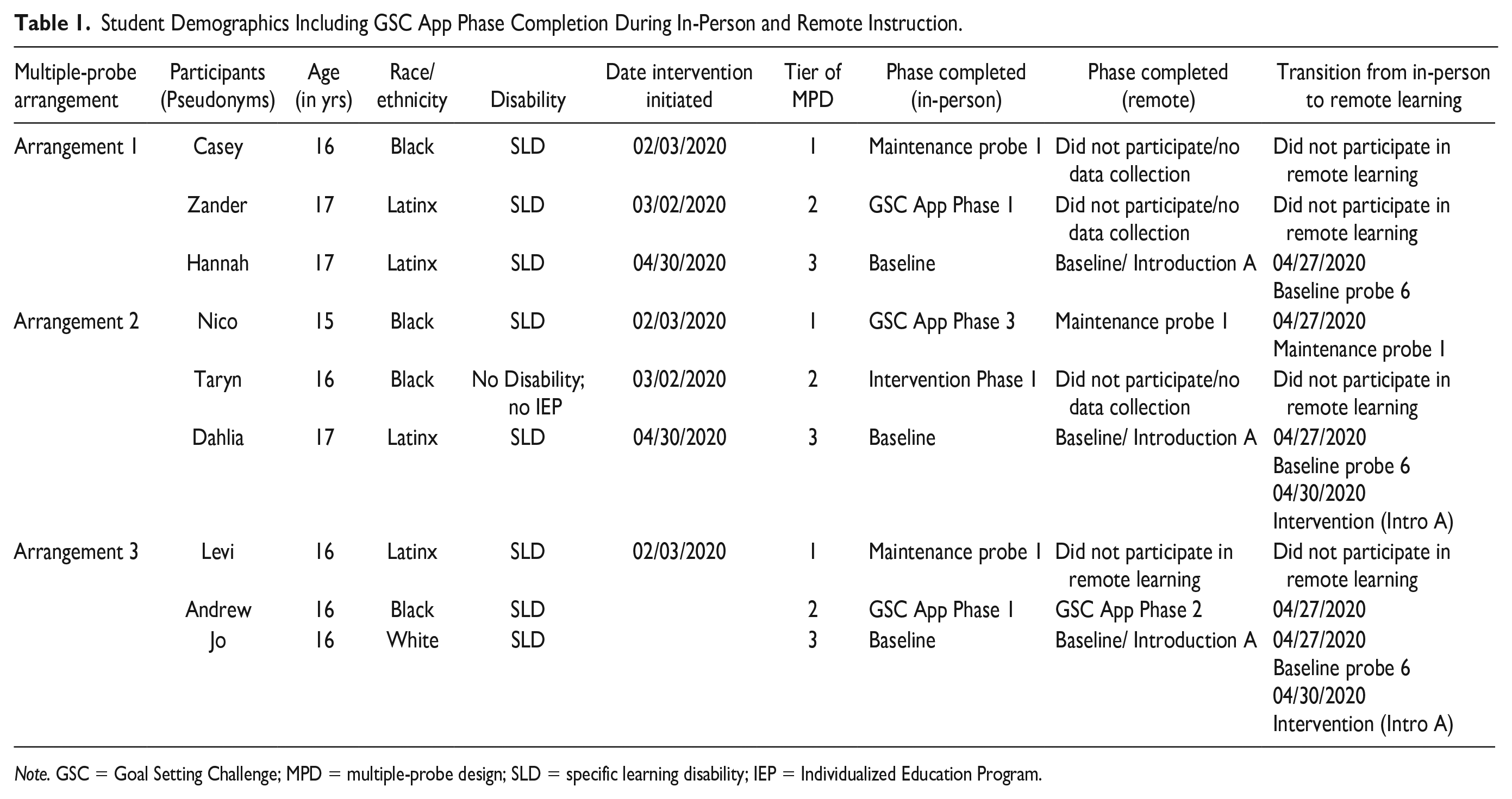

The nine students in our sample included five males and four females ranging in age from 15 to 17 years (M = 16.2). Eight participants had specific learning disabilities and received special education services; one participant, without a disability or special education services, was invited to participate because of her enrollment in the class and the appropriateness of the GSC App for any students in need of self-determination instruction. Four students identified as Latinx, four students identified as Black, and one student identified as White. Table 1 includes student demographics as well as GSC App lesson completion during in-person and remote instruction.

Table 1. Student Demographics Including GSC App Phase Completion During In-Person and Remote Instruction.

Note. GSC = Goal Setting Challenge; MPD = multiple-probe design; SLD = specific learning disability; IEP = Individualized Education Program.

Setting

In-person instruction

The first half of the study took place in person during an English II class at a large, urban public high school in the southeastern United States (see online Supplemental Table S1 for school district demographics). The class met for 52.0 min between 10:40 a.m. and 11:32 a.m. Baseline and intervention probes were collected online via Google Forms, and GSC App lessons were completed during the first 30.0 min of class using a Hewlett-Packard Chromebook at participants’ desks. Participants who entered intervention during in-person instruction (n = 6) completed two lessons per week. The teachers and research staff provided the following supports: (a) troubleshooting when the App became nonresponsive or students received login errors and (b) answering questions regarding navigating within the App or how students could input their responses into the App (e.g., speech-to-text functioning). Researchers and teachers did not answer questions or provide support regarding goal-setting instruction within the App. The school district transitioned to remote instruction in late March 2020.

Remote instruction

The second half of the study took place remotely due to the district-wide closing of school buildings during the COVID-19 pandemic. The class met remotely led by the general education and special education teachers two times per week for 45.0 min via Google Meet to complete key tasks related to English II coursework (i.e., novel discussion and activities). Teachers agreed to continue GSC App activities during this instructional time. Students in baseline or maintenance phases received a link to complete assessment probes. The teachers provided information to students on logging in to the GSC App and troubleshooting issues that may occur (e.g., screenshots to navigate login) through the Instructure Canvas Learning Management System. Students used the same login credentials for in-person instruction and GSC App usage at home. All activities were completed using a Hewlett-Packard Chromebook supplied by the school district, the same device students used during in-person instruction. Students in intervention continued to spend approximately 25.0 min in the App twice per week. However, as described in the results section, there was significant variability in the degree and consistency with which the nine participants engaged in remote instruction.

Interventionists

Two classroom teachers served as the primary interventionists and facilitators to support students in using the GSC App. Meredith (pseudonym), an inclusion specialist for the English II class, was a 32-year-old White female. She worked at the participating school for 6 years and had a master’s degree in special education. Prior to the study, Meredith had no exposure to the GSC App or self-determination curricula; however, she supported students with their Individualized Education Program transition-related goals. Meredith co-taught the English II class with Christina (pseudonym), a 55-year-old White female who was a general education English teacher. Christina worked at the participating school for 23 years and had a master’s degree in English literature; she reported no previous exposure to self-determination curricula.

Materials

As previously described, the GSC App includes 14 lessons designed to support students in learning the skills necessary to self-direct goal-setting and attainment. Two introductory lessons (i.e., Intro A, Intro B) focus on supporting students to learn about (a) what it means to be self-determined, (b) the three phases of the SDLMI, and (c) definitions and examples of short- and long-term goals. After the introductory lessons, students complete 12 lessons that mirror the SDLMI phases and embedded Student Questions: (a) Phase 1: Set a Goal (Lessons 1–4); (b) Phase 2: Take Action (Lessons 5–8); and (c) Phase 3: Adjust Your Goal or Plan (Lessons 9–12).

Each lesson follows a structured format and includes (a) an introduction, (b) the Student Question to be answered during the lesson, (c) Lesson Objectives, (d) review of previous lessons, (e) activities to support Lesson Objectives, and (f) closure with a review of Lesson Objectives to determine if students gained knowledge of lesson content. Students targeted setting a goal in one of three broad domains (i.e., transition, self-management, and academic). At the end of Lesson 12, students determine if they (a) met their goal and will start a new goal or (b) need to adjust their goal or plan. Students’ answers determine how they will engage with the App in the future. The App is designed for repeated use across subsequent semesters. Therefore, if students during Lesson 12 report they achieved their goal, the App returns students to Phase 1 to set a new goal. If students decide they need to adjust their goal or plan, they return to Phase 1 to adjust their goal or Phase 2 if they want to adjust their plan. This process provides students with ongoing support from the App to remember their previous responses and goals and to continue to move forward with a series of linked goals and action plans.

Within the GSC App, student and teacher characters provide explicit instruction on the content. For example, when users log in, a student character welcomes the learner to the GSC App and encourages the learner to begin the next lesson. After clicking on the lesson, the teacher character: (a) greets the learner; (b) explains the Student Question to the learner (e.g., “What do I know about [my goal] now?”); and (c) describes the Learning Objectives for the lesson. Next, the teacher character provides explicit instruction for the learner based on lesson content to support users in answering the Student Question related to their goal. Throughout each lesson, student characters provide examples and non-examples of implementing aspects of the goal-setting and attainment process. Interactive activities that use multiple methods of expression (e.g., matching and fill in the blank) provide students an opportunity to apply the content learned.

Data Collection

Data collection included a researcher-created GSC App content measure, the online Self-Determination Inventory: Student Report (SDI:SR; Shogren & Wehmeyer, 2017), the fidelity of implementation measure, and a feasibility/satisfaction questionnaire for students and teachers. All data collection was completed via Google Forms and the Self-Determination Inventory System.

Goal Setting Challenge App content measure

The primary dependent variable was the GSC App content measure. This measure was developed specifically for this study to assess student self-determination knowledge of lesson content taught through the App. This measure allowed us to examine growth or gradual change in student self-determination content knowledge across baseline and intervention conditions (i.e., Were students learning the content delivered in the App?). This measure also provided feasibility information about where content needed to be more explicit, added, and/or changed within the App. The measure consisted of 18 questions: six multiple-choice (e.g., Which of the following is a self-monitoring strategy?) and 12 open-ended responses related to skills associated with self-determination taught through the App (e.g., What does self-determination mean? What is a goal?). Student responses for the six multiple-choice questions were scored as correct (i.e., 2 points) or incorrect (i.e., 0 points), and the 12 open-ended questions were scored as correct (i.e., 2 points); partially correct (i.e., 1 point); or incorrect (i.e., 0 points) using a standardized scoring protocol for a total possible score of 36 points. Students completed the content measure in Google Forms, which allowed us to easily distribute the measure and track data during in-person and remote instruction. Google Forms automatically ordered questions randomly so that questions were not given in the same order. Students typically completed the measure within 5.0 min. Students completed the content measure during baseline, following Intro A and B, and after completing each GSC App lesson phase (i.e., Phase 1 [Lesson 4], Phase 2 [Lesson 8], and Phase 3 [Lesson 12]). Specifically, we probed a minimum of three times during baseline, five times during the GSC App intervention phase, and a minimum of one time during maintenance. 
This probe schedule ensured consistent measurement across all conditions, reducing the likelihood that confounding factors would affect outcomes on this measure (Cooper et al., 2020). We hypothesized that, as students moved through the lessons, we would see a change in level and trend related to their self-determination content knowledge.
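The scoring arithmetic described for the content measure can be illustrated with a minimal Python sketch. The function name and input format are hypothetical and are not part of the study's standardized scoring protocol; the sketch only reproduces the point values stated in the text (six multiple-choice items scored 0 or 2, and 12 open-ended items scored 0, 1, or 2, for a maximum of 36 points).

```python
from typing import Sequence

MC_ITEMS = 6        # multiple-choice items, each worth 0 or 2 points
OPEN_ITEMS = 12     # open-ended items, each worth 0, 1, or 2 points
MAX_SCORE = 2 * (MC_ITEMS + OPEN_ITEMS)  # 36 points


def score_content_measure(mc_correct: Sequence[bool],
                          open_ended: Sequence[int]) -> int:
    """Return the total score for one probe (hypothetical helper).

    mc_correct: one True/False per multiple-choice item.
    open_ended: one rater-assigned score (0, 1, or 2) per open-ended item.
    """
    if len(mc_correct) != MC_ITEMS:
        raise ValueError(f"expected {MC_ITEMS} multiple-choice responses")
    if len(open_ended) != OPEN_ITEMS:
        raise ValueError(f"expected {OPEN_ITEMS} open-ended scores")
    if any(score not in (0, 1, 2) for score in open_ended):
        raise ValueError("open-ended scores must be 0, 1, or 2")

    mc_points = sum(2 for correct in mc_correct if correct)
    return mc_points + sum(open_ended)
```

For instance, a student who answers four multiple-choice items correctly and earns six fully correct, three partially correct, and three incorrect open-ended responses would receive 8 + 12 + 3 + 0 = 23 of 36 points under this rule.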

Interrater reliability

Researchers collected interrater reliability (IRR) data on the scoring of the GSC App content measure for 33.0% of completed measures across participants and study phases. A second research assistant independently scored students’ responses; a disagreement was recorded if an item was not scored identically by both raters. Percentage agreement was calculated by dividing the number of agreements by the sum of agreements and disagreements and multiplying by 100. The IRR across participants and phases ranged from 83.3% to 100.0% with a mean of 96.9%. Supplemental Table S2 provides IRR for each participant across baseline and intervention phases.
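As an illustration of the percentage-agreement calculation, the following Python sketch compares two raters' item-level scores on a single probe. The function name and the example scores are hypothetical; only the formula (agreements divided by agreements plus disagreements, multiplied by 100) comes from the text.

```python
from typing import Sequence


def percent_agreement(rater_a: Sequence[int], rater_b: Sequence[int]) -> float:
    """Item-by-item percentage agreement between two raters (hypothetical helper)."""
    if len(rater_a) != len(rater_b) or len(rater_a) == 0:
        raise ValueError("both raters must score the same non-empty set of items")

    # An agreement is an item that both raters scored identically.
    agreements = sum(a == b for a, b in zip(rater_a, rater_b))
    disagreements = len(rater_a) - agreements
    return agreements / (agreements + disagreements) * 100


# Hypothetical example: 18 items, raters differ on the last three.
primary = [2, 2, 0, 2, 2, 0, 2, 1, 1, 2, 0, 2, 1, 2, 2, 1, 0, 2]
secondary = [2, 2, 0, 2, 2, 0, 2, 1, 1, 2, 0, 2, 1, 2, 2, 2, 1, 1]
print(round(percent_agreement(primary, secondary), 1))  # 15 of 18 agree -> 83.3
```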

Self-Determination Inventory: Student Report

The Self-Determination Inventory: Student Report (SDI:SR; Shogren & Wehmeyer, 2017) was administered prior to and following student engagement in the study. The SDI:SR is a 21-item self-report measure of self-determination. Based on the results of confirmatory factor analysis, the 21 SDI:SR items “showed strong measurement properties, including measurement invariance at the item level” and suggest strong reliability and validity of SDI:SR scores across ages and disability groups (Shogren et al., 2020, p. 110). The SDI:SR is administered in a customized online platform. Responses are made on a slider scale that the computer scores as discrete responses between 0 (Disagree) and 99 (Agree). The custom online system includes embedded accessibility features (e.g., in-text definitions and audio playback). The SDI:SR automatically calculates an overall self-determination score as well as scores for the three essential characteristics of self-determination (i.e., volitional action, agentic action, and action-control beliefs) defined by Causal Agency Theory. Students received these scores via a downloadable report; scores were saved in a secure data management system for analysis.

Fidelity of implementation

Procedural fidelity

Procedural fidelity data were obtained through a 12-item checklist completed in Google Forms by the special education teacher. The checklist reflected each step a student completed to ensure they had access to each of the 14 GSC App lessons and were able to independently navigate through the App. The checklist was completed for 100% of lessons during in-person instruction. The checklist could not be completed during remote instruction because eight out of 10 questions required in-person observation (e.g., researcher/teacher ensures proper functioning of the computer, GSC App lesson plays on the screen).

Usage statistics

Researchers monitored student usage of the GSC App through backend data collected through the App which was stored on a secure server. Two researchers completed a usage fidelity checklist documenting (a) student completion of each GSC App lesson, (b) time spent in each lesson, and (c) answers provided to activities within the App.

Feasibility and satisfaction

To explore student and teacher perceptions of the feasibility of the App and their satisfaction with using it, teachers and students were asked to complete a feasibility and satisfaction measure adapted from Foster and Price (1996) at the end of the study. The 20-question survey was sent via email to students, and an adapted 17-question survey was sent via email to teachers. Items on the feasibility measure were scored on a 4-point Likert-type rating scale (i.e., disagree = 0, somewhat agree = 1, agree = 2, strongly agree = 3). Questions focused on student and teacher perceptions of (a) the general design and content of the App (e.g., clarity of directions and the feasibility of lesson timeframes) and (b) the functionality of the App (e.g., the usefulness of student navigation and accessibility features). Students were also asked open-ended questions about whether they liked the App, its ease of use, and the knowledge they gained. We added three open-ended questions to the teacher survey to elicit feedback on perceptions of the feasibility of use and student engagement. Online Supplemental Figure S1 includes the GSC App Feasibility Survey for Teachers.

Data Analysis

We planned a rigorous, methodologically sound single-case, concurrent multiple-probe across participants design replicated three times to examine the effects of interaction with the GSC App lessons on students’ content knowledge of targeted self-determination skills (Kratochwill et al., 2013). This design was selected to allow for inter-subject effect replication and to ensure methodological rigor (Gast et al., 2018). Data were analyzed using visual analysis of graphed data representing the number correct on the GSC App content measure across all study conditions (Gast et al., 2018). Specifically, we used features of visual analysis, including level, trend, variability, and stability to determine if a functional relation occurred and the strength of the functional relation (Kratochwill et al., 2013).

During in-person baseline sessions, we collected at least three data points across all 15 participants (Kratochwill et al., 2013). Using random.org, we then randomly assigned three participants with the most stable patterns of responding during baseline sessions to one of three multiple-probe arrangements and introduced the GSC App intervention. Following the introduction of the intervention to the first three participants, we continued to collect baseline data on the remaining participants at two points in time (i.e., after Intro B, after the Phase 1 lessons) to determine if levels of performance remained stable. Based on scores from these probes during the continued baseline, the next three participants with the most stable baseline scores were randomly assigned to one of the three multiple-probe arrangements and entered the intervention phase. This pattern continued until all students were placed in one of the three single-case, multiple-probe arrangements. As mentioned previously, six students who participated in baseline data collection during in-person instruction never began intervention because these students did not engage in remote instruction after schools closed due to COVID-19. Therefore, these students were never randomly assigned to a multiple-probe arrangement, and their baseline data were not included in these analyses. The remaining nine students, who did participate to some degree during in-person and remote instruction, were kept in the design and included in data collection to the greatest extent possible. However, as mentioned subsequently, these students were inconsistently engaged during remote instruction.
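The staggered-entry procedure described above can be sketched in Python as follows. This is only an illustration under stated assumptions, not the study's procedure: stability is operationalized here as the smallest standard deviation of baseline scores (the text does not state a numeric criterion), a seeded pseudorandom generator stands in for random.org, and all participant labels, scores, and function names are hypothetical.

```python
import random
from statistics import pstdev
from typing import Dict, List


def pick_most_stable(baseline: Dict[str, List[int]], k: int = 3) -> List[str]:
    """Return the k participants with the least variable baseline scores.

    Assumption: lower population standard deviation means a more stable
    baseline. Ties are broken alphabetically by participant label.
    """
    ranked = sorted(baseline, key=lambda name: (pstdev(baseline[name]), name))
    return ranked[:k]


def assign_to_arrangements(participants: List[str],
                           rng: random.Random) -> Dict[str, str]:
    """Randomly assign one participant to each multiple-probe arrangement."""
    shuffled = list(participants)
    rng.shuffle(shuffled)
    return {f"arrangement_{i + 1}": name for i, name in enumerate(shuffled)}


# Hypothetical baseline probe scores (out of 36) for five participants.
baseline_scores = {
    "P1": [10, 10, 11],
    "P2": [4, 12, 8],
    "P3": [9, 9, 9],
    "P4": [7, 9, 7],
    "P5": [2, 14, 6],
}

tier = pick_most_stable(baseline_scores)           # ['P3', 'P1', 'P4']
assignment = assign_to_arrangements(tier, random.Random(2020))
```

Repeating these two steps on the participants who remain in baseline yields the next tier of each arrangement, mirroring the pattern described in the text.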

Pre-baseline

To ensure fidelity of implementation, we provided training to classroom teachers on accessing and facilitating their students’ use of the GSC App prior to baseline data collection. The training lasted approximately 1 hr and was conducted in the teachers’ classroom. The training involved (a) an overview of the GSC App, (b) options for downloading and accessing student and teacher data within the App, (c) strategies for addressing potential lesson content and technology issues students might encounter, (d) suggestions for potential modifications needed to ensure students could fully access the App content, (e) guidance for integrating content from the GSC App into other classroom activities and instruction, and (f) information related to data collection and implementation fidelity. Prior to baseline data collection, teachers explained to students the purpose of the GSC App and the reason for completing the assessments. Based on teacher feedback, students had not been provided any type of goal-setting or self-determination instruction prior to baseline. Students were also not provided GSC App content information, login information, or supports to access the GSC App until after (a) we collected baseline data on the content measure and SDI:SR and (b) students were randomly assigned to begin intervention.

Baseline

Prior to the intervention, we collected baseline data on the content measure and SDI:SR. First, students completed the SDI:SR at the start of the study to assess their perceived level of self-determination prior to intervention. Second, during in-person instruction, students completed the GSC App content measure a minimum of three times during baseline (Kratochwill et al., 2013) to assess and track baseline content knowledge related to skills associated with self-determination taught through the GSC App. Students received no instruction in goal-setting or self-determination during the baseline phase and did not access the GSC App.

Intervention

In-person instruction

Data collection during baseline and as participants were assigned to the intervention phase occurred during in-person instruction for 7 weeks. Consistent with our implementation plan, developed prior to the pandemic, teachers supported students during class time to access and complete the GSC App lessons. An implementation schedule was developed with teachers during the initial training that involved providing students with time during class to access GSC App lessons twice per week (i.e., Tuesday and Thursday). Specifically, at the beginning of the class period, the classroom teachers directed students, who were in the GSC App intervention phase of the single-case study, to complete one GSC App lesson. Students accessed the GSC App via a secure website, with a unique username and password provided at the start of the intervention phase. Students utilized headphones, a built-in microphone, the computer keyboard, and the computer mouse to listen and respond to GSC App content; other students could not hear the lesson. On average, students completed each lesson in 15.0 min. After finishing lessons, students either (a) logged out of the GSC App website and completed the GSC App content measure on Google Forms (i.e., after completing Intro A, Intro B, Lessons 4, 8, and 12) or (b) logged out of the GSC App website, and the teachers continued typical classroom activities and instruction.

Remote instruction

In early March, due to the COVID-19 pandemic, the participating school closed and began to plan for the transition to remote online learning; this happened 7 weeks into the in-person data collection. Because of the unprecedented demands on schools and the focus on establishing online instructional models and supports, the GSC App study was paused for 5 weeks. The participating teachers indicated a willingness and interest to continue to engage with students using the GSC App after they established remote instruction routines, as they needed additional support to engage students during this time and perceived the GSC App (based on experience during in-person instruction) as a useful tool. Because students could log in to the App via the web from any location, and all data collection measures (i.e., content knowledge probe, SDI:SR, and fidelity measures) were available online, students could engage with the App and assessments from home, with some support from their teachers to navigate to the website and log in. For students engaged in remote instruction, we planned with teachers for students to complete GSC App lessons and applicable probes twice per week during remote 45-min synchronous class sessions using Google Meet. During this time, we met once per week with teachers to discuss student progress and troubleshoot any issues. The research team also joined the online, synchronous class sessions to support teachers and students to ensure students could log in to the App, review progress, and complete assessments when needed. The procedures for remote instruction in the GSC App were consistent with in-person instruction: (a) students were instructed to complete the corresponding lessons and (b) students completed the content measure at assigned times. We were able to collect data for 3 additional weeks, after the 5-week pause, during remote instruction. As mentioned previously, not all students engaged in remote instruction. Six were not engaged at all and are not included in the present study. As described in the results, the remaining nine were inconsistently engaged during remote instruction.

Maintenance

The research team planned for all participants to complete two additional content measures during the maintenance phase at 2 weeks and 4 weeks following the completion of the intervention. Students in the first intervention group (i.e., Casey, Nico, Levi) finished intervention prior to the COVID-19 pandemic. Two weeks after completing the intervention, two students (i.e., Casey and Levi) completed one GSC App content measure maintenance probe during in-person instruction. Nico completed his first maintenance probe 8 weeks following the GSC App intervention, during remote instruction. No other maintenance data were collected due to inconsistent student engagement during remote instruction.

Results

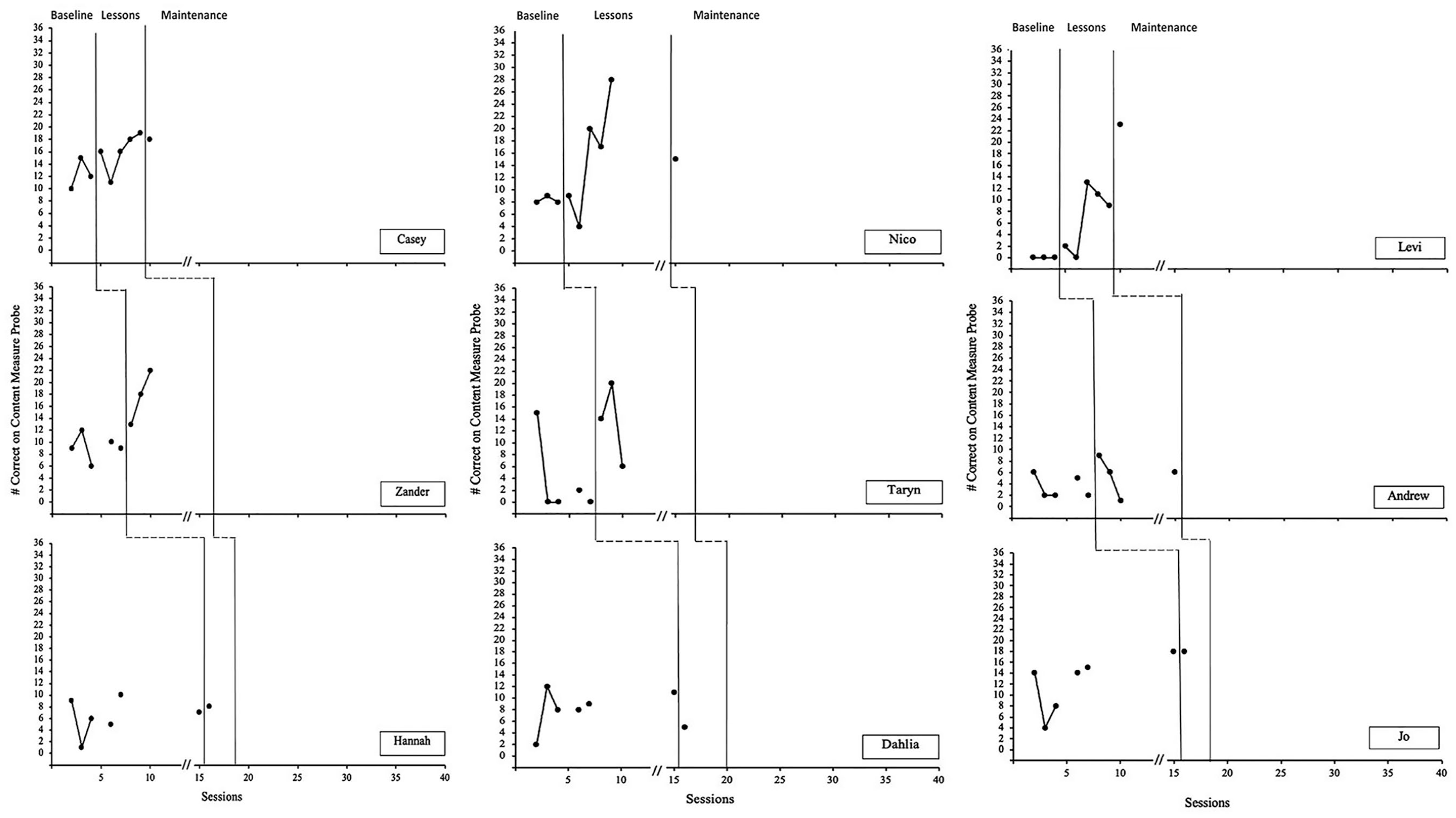

Our research questions and study design, planned prior to the onset of the pandemic, focused on (a) examining the effect of interaction with the App on self-determination content knowledge, (b) evaluating students’ level of self-determination pre- and post-intervention, and (c) evaluating the feasibility and fidelity of students using the GSC App during in-person instruction at schools. The COVID-19 pandemic significantly disrupted our design and ability to address these questions. While our study was designed with a high level of experimental control for single-case research (Kittelman et al., 2018; Kratochwill et al., 2013), we were only able to collect complete intervention and maintenance data for the first three participants in our multiple-probe arrangements (i.e., Casey, Nico, Levi; see Figure 1). Data collection was interrupted for the remaining participants due to the COVID-19 pandemic. We did, however, collect any data we could consistent with our design during remote instruction, five weeks after the pandemic began, as well as collect data on ongoing engagement, the fidelity of implementation, and feasibility/satisfaction during remote instruction. In the following sections, we share the results of our analyses using available data.

Figure 1. Number of points correct on the GSC App content measure for each multiple-probe arrangement.

Research Question 1: Content Knowledge Targeted in the GSC App

We examined data using visual analysis of graphed data for level, trend, and variability/stability to determine the presence of a functional relation (Kratochwill et al., 2013) between the use of the GSC App and self-determination content knowledge targeted in the App. Because of the COVID-19 disruption, there were not sufficient data to establish a functional relation for the three single-case, multiple-probe arrangements; therefore, we did not see an “anticipated change” (p. 73) across multiple series within each multiple-probe arrangement (Kratochwill et al., 2018). However, particularly given the COVID-19 disruption, the available data can still be examined to provide a preliminary interpretation of the potential impact of the GSC App and to guide future research during in-person and virtual instruction. Figure 1 presents data collected across baseline, the GSC App intervention, and maintenance phases for each arrangement. The dependent variable represents the total score (out of 36) for each participant on the content measure.
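Visual analysis is a judgment made from the graphed data, but the three features named above can also be summarized numerically as a supplement. The following is a minimal sketch only, not the procedure used in this study: the function name `summarize_phase` and the scores are hypothetical, and it assumes level is the phase mean, trend is the least-squares slope across sessions, and variability is the sample standard deviation.

```python
from statistics import mean, stdev


def summarize_phase(scores):
    """Return (level, trend, variability) for one phase of a single-case series.

    Assumptions (not taken from the study): level is the mean, trend is the
    ordinary least-squares slope of score on session index, and variability
    is the sample standard deviation.
    """
    if len(scores) < 2:
        raise ValueError("need at least two data points to estimate trend")
    sessions = range(len(scores))
    x_bar, y_bar = mean(sessions), mean(scores)
    # Least-squares slope: co-deviation of (session, score) over squared
    # deviation of session index.
    numerator = sum((x - x_bar) * (y - y_bar) for x, y in zip(sessions, scores))
    denominator = sum((x - x_bar) ** 2 for x in sessions)
    return y_bar, numerator / denominator, stdev(scores)


# Hypothetical scores out of 36; these are not participant data.
baseline = [10, 12, 15]
intervention = [11, 16, 18, 19]

for name, phase in (("baseline", baseline), ("intervention", intervention)):
    level, trend, variability = summarize_phase(phase)
    print(f"{name}: level={level:.1f}, trend={trend:+.2f}/session, SD={variability:.1f}")
```

Summaries like these would not replace visual analysis; with only a few probes per phase, a slope or standard deviation is highly sensitive to a single data point.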

Multiple-probe arrangement 1

The first multiple-probe arrangement included Casey, Zander, and Hannah. During baseline (as shown in Figure 1), all three participants displayed variable levels of responses on the GSC App content measure. Casey’s scores ranged from 10 to 15 points (M = 12.3; SD = 2.5). Zander’s scores ranged from 6 to 12 points (M = 9.2; SD = 2.2). Hannah’s scores ranged from 1 to 10 points (M = 6.3; SD = 3.2). During intervention, Casey completed all GSC App lessons. Casey’s scores were variable during intervention, showing an increasing trend, and ranged from 11 to 19 points (M = 16.0; SD = 3.1). Zander completed Intro A and B and Phase 1 lessons. Zander’s scores for these lessons showed an increasing trend and ranged from 13 to 22 points (M = 17.7; SD = 4.5). Hannah only completed the content measure for Intro A with a score of 8 points, which did not show an increase from baseline. Casey was the only participant in this group for whom we were able to obtain a maintenance score 2 weeks following the completion of the GSC App intervention. Casey’s maintenance score on the content measure was 18 points, which was above baseline and consistent with his intervention scores.

Multiple-probe arrangement 2

The second multiple-probe arrangement included Nico, Taryn, and Dahlia. During baseline (as shown in Figure 1), all three participants displayed low levels of responses on the GSC App content measure. Nico’s scores were stable and ranged from 8 to 9 points (M = 8.3; SD = 0.6). Taryn’s scores showed a decreasing, stable trend and ranged from 0 to 15 points (M = 3.4; SD = 6.5). Dahlia’s scores were variable and ranged from 2 to 12 points (M = 8.3; SD = 3.5). During intervention, Nico completed all GSC App lessons. Nico’s scores were variable, showed an increasing trend, and ranged from 4 to 28 points (M = 15.6; SD = 9.4). Taryn completed Intro A and B and the Phase 1 lessons. Taryn’s scores for these lessons showed a variable, decreasing trend and ranged from 6 to 20 points (M = 13.3; SD = 7.0). Dahlia only completed the content measure for Intro A with a score of 5 points, which showed a decrease from baseline. Nico was the only participant in this group for whom we were able to obtain maintenance data. Maintenance data for Nico were collected 8 weeks following the completion of the GSC App intervention. These were the only maintenance data collected for Nico during remote instruction due to inconsistent and ceased engagement with the App. Nico’s maintenance score on the content measure was 15 points, which remained above baseline and was consistent with his intervention scores; however, it was 3 points lower than his last intervention data point.

Multiple-probe arrangement 3

The third multiple-probe arrangement included Levi, Andrew, and Jo. During baseline (as shown in Figure 1), one participant (Levi) displayed low levels of responses on the GSC App content measure, while two participants showed variable, increasing trends. Levi’s scores were stable, and all three baseline data points were zero (M = 0.0; SD = 0.0). Andrew’s scores showed a variable trend and ranged from 2 to 6 points (M = 3.4; SD = 1.9). Jo’s scores showed a variable, increasing trend and ranged from 4 to 18 points (M = 12.1; SD = 5.2). During intervention, Levi completed all GSC App lessons. Levi’s scores during intervention were variable and ranged from 0 to 13 points (M = 7.0; SD = 5.7). Andrew completed Intro A and B and the Phase 1 lessons. Andrew’s scores during these lessons showed a decreasing trend and ranged from 1 to 9 points (M = 5.5; SD = 3.3). Jo only completed the content measure for Intro A with a score of 18 points, which did not increase from baseline. Levi was the only participant in this group for whom we were able to obtain maintenance data, which were collected 2 weeks after he completed the GSC App intervention. Levi’s maintenance score on the content measure was 23 points, which remained above baseline and was higher than his intervention scores.
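The M and SD values reported for each phase are ordinary descriptive statistics. As a minimal sketch, the snippet below reproduces Levi’s baseline summary from the three zero scores reported above, and then shows one hypothetical set of three probes (8, 8, 9) that would be consistent with the range, M, and SD reported for Nico’s baseline. The individual probe values for Nico are an assumption, as is the use of the sample (n − 1) standard deviation; the helper name `describe` is also hypothetical.

```python
from statistics import mean, stdev


def describe(scores):
    """Return (M, SD) rounded to one decimal, using the sample (n - 1) SD.

    Whether the authors used the sample or population SD is not stated;
    the sample SD is assumed here.
    """
    return round(mean(scores), 1), round(stdev(scores), 1)


# Reported in the text: all three of Levi's baseline probes were zero.
levi_baseline = [0, 0, 0]

# Hypothetical probe values: only the range (8 to 9), M, and SD are reported.
nico_baseline_assumed = [8, 8, 9]

print("Levi baseline (M, SD):", describe(levi_baseline))
print("Nico baseline, assumed probes (M, SD):", describe(nico_baseline_assumed))
```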

Research Question 2: Students’ Level of Self-Determination

We were only able to obtain pre- and post-test scores on the SDI:SR for participants who entered intervention first during in-person instruction (i.e., Casey, Nico, and Levi). SDI:SR scores are reported on a scale of 0 to 99 points. The direction and size of change in self-determination scores from before to after completion of the GSC App varied across participants. Casey had an overall score of 81 on the SDI:SR prior to baseline and 87 after completing the GSC App lessons. Nico had an overall score of 90 prior to baseline and 63 after completing the GSC App lessons. Last, Levi had an overall score of 63 on the SDI:SR both prior to baseline and after completing the GSC App lessons.
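The pre- and post-intervention scores above can be tabulated as raw differences. The sketch below uses only the scores reported in this section; the helper name `describe_change` is hypothetical, and the differences are descriptive only and imply nothing about reliability or statistical significance.

```python
# Overall SDI:SR scores (0 to 99 scale) as reported in the text: (pre, post).
sdi_sr_scores = {
    "Casey": (81, 87),
    "Nico": (90, 63),
    "Levi": (63, 63),
}


def describe_change(pre, post):
    """Return the raw pre-to-post difference and a direction label."""
    diff = post - pre
    if diff > 0:
        direction = "increase"
    elif diff < 0:
        direction = "decrease"
    else:
        direction = "no change"
    return diff, direction


for student, (pre, post) in sdi_sr_scores.items():
    diff, direction = describe_change(pre, post)
    print(f"{student}: {pre} -> {post} ({diff:+d}, {direction})")
```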

Research Questions 3 and 4: Feasibility and Satisfaction

Our plan was to examine the perceptions of students and teachers regarding feasibility and satisfaction at the end of the study. Although we emailed and sent reminders to students, we received no student data. There was an 80% decrease in student participation when the school shifted to remote instruction due to COVID-19, and even for those students who remained partially engaged, their participation dropped off as remote instruction continued. Therefore, we are unable to address this question from the student perspective. We did, however, receive the completed survey from the special education teacher who reported finding the GSC App to be practical and easy to implement during instruction. She strongly agreed the directions for the GSC App were clear, and the length of the lessons was adequate to meet the demands of her students and class. She agreed the App was engaging and strongly agreed her students enjoyed instruction provided in the App. She strongly agreed her students benefited from interaction with the App, and the content embedded in the App was important for students to learn to promote better postschool outcomes. She provided qualitative feedback on features of the App, including perceived strengths (e.g., “The App has a great flow in breaking down goals”), helpful features (e.g., “Loved that it was read aloud and text was on the screen”), and suggestions for improvement (e.g., “I would love to be able to give feedback in the App”).

Regarding student usage of the GSC App, the special education teacher offered several suggestions for expanding features to increase satisfaction and support implementation in the classroom. These included: (a) resources teachers could use in class to support and model goal-setting in addition to the App, (b) a pacing guide that outlines Student Questions and lesson objectives, (c) a discussion guide for facilitators to use with students, (d) guided notes for students to use, and (e) a list of supplemental assignments to support student learning.

Research Question 5: Fidelity of Implementation

Procedural fidelity

Procedural fidelity data were captured during in-person instruction and ranged from 72.0% to 100.0% (M = 94%) across sessions. The primary challenges with procedural fidelity resulted from students struggling to log in as well as student questions about the meaning of some of the terminology. We were unable to capture similar data during remote instruction given the inability to directly observe student use of the App, as we had not planned for virtual observations.

Usage statistics

Before the pandemic, three students (Casey, Nico, and Levi) completed the entire App during in-person instruction. Three students (Zander, Taryn, and Andrew) completed 43% (n = 6) of intervention sessions in person, with the remaining sessions occurring remotely. There was a high rate of completion of lessons during in-person instruction. Out of 108 logins to the App, students completed all activities in the lesson for 107 sessions. However, after the shift to remote instruction these numbers dropped substantially. While the school’s policies required participation in remote instruction, many students did not engage in remote instruction, or if they logged in for remote instruction, did not engage with content (e.g., logging into the App when directed by their teachers), which limited their exposure to the App. Of the students who participated in remote instruction and engaged with the App remotely, one student completed 29% (n = 4) of potential intervention sessions, and three students completed 7% (n = 1) of intervention sessions. Students completed a total of 12 logins out of 72 possible logins, suggesting highly reduced and variable patterns of engagement during remote instruction.
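The session percentages above are consistent with a denominator of 14 intervention sessions (Intro A, Intro B, and 12 lessons). That denominator is an inference from the reported figures rather than something stated in the text, so the sketch below labels it as an assumption. The helper name `percent` is hypothetical, and the last two percentages are derived here from the reported counts rather than reported by the authors.

```python
def percent(completed, possible, digits=0):
    """Return completed / possible as a percentage rounded to `digits` places."""
    if possible <= 0:
        raise ValueError("possible must be positive")
    return round(100 * completed / possible, digits)


# Assumed denominator: 14 sessions (Intro A, Intro B, and Lessons 1-12).
# This is inferred from the reported percentages, not stated in the text.
SESSIONS_ASSUMED = 14

# Session counts reported in the text (n = 6, n = 4, n = 1).
print("in-person sessions (n = 6):", percent(6, SESSIONS_ASSUMED))
print("remote sessions (n = 4):", percent(4, SESSIONS_ASSUMED))
print("remote sessions (n = 1):", percent(1, SESSIONS_ASSUMED))

# Derived from reported counts; these percentages are not in the text.
print("in-person completion per login:", percent(107, 108, digits=1))
print("remote logins of possible logins:", percent(12, 72, digits=1))
```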

Across in-person and remote instruction, time spent completing each lesson ranged from 7 min 10 s to 26 min 30 s with a mean of 15 min 3 s. For students who completed Phase 1 in the App (n = 6), four students set a transition goal (e.g., I would like to complete a goal to help out people in need and those less fortunate than me); one student set a self-management goal (i.e., Don't sit near friends); and one student set an academic goal (i.e., I need to either write it down or keep saying it in my head so I don’t forget).

Discussion

The purpose of this study was to examine the impacts of the GSC App on students’ self-determination knowledge and explore students’ level of self-determination pre- and post-intervention, including feasibility and fidelity of implementation of the GSC App during one academic semester. Due to the COVID-19 pandemic and the rapid transition to remote instruction, we were unable to complete planned data collection to fully address our research questions. While we recognize the negative (null) findings within our study, we also suggest these negative findings are informative and benefit our work and future research moving forward (Kratochwill et al., 2018; Therrien & Cook, 2018). We were able to collect data to (a) inform our research questions, (b) identify areas for future research, and (c) suggest future supports that might be needed during in-person and remote instruction to support student engagement with the App. Furthermore, we were able to document the engagement of students during remote instruction and provide added insight into potential learning loss for students with disabilities.

The Impact of COVID-19

When schools shifted to remote instruction in March 2020, neither we nor the schools had a clear understanding of how long stay-at-home orders and remote instruction would remain in place. We paused the study for 5 weeks to provide time to determine the likely course of remote instruction and ensure we were cognizant of the additional demands teachers and schools were facing as they were working to rapidly transition their curricula to remote delivery in unprecedented ways. This significantly impacted our research design but also provided a unique opportunity to gain insight into the engagement of students during remote learning. Almost half of our originally consented sample was not engaging at all in remote instruction 5 weeks after the pandemic began; this is why they are not included in the data reported here. Anecdotally, we learned that these students were not attending class because they had jobs for which they were considered essential workers (e.g., grocery store workers). Because their employers knew they were not required to attend school in person, employers scheduled students to work during the school day given high needs. Another factor that impacted student engagement during remote learning was the lack of reliable internet and technology at home; while all students were sent home with computers, many did not have internet access, making logging into remote instruction and benefiting from tools like the GSC App impossible.

Of the nine students who remained engaged in remote instruction after 5 weeks, their level of engagement waned as time passed. We re-engaged with students and teachers via Google Meet after 5 weeks, but by 8 weeks into the pandemic, none of the nine students logged into the GSC App or completed assessments, even with prompts during Google Meet instructional time. These findings suggest that when schools moved to remote instruction, even students in our sample who could log in and access instructional time had significant barriers to participating meaningfully in remote instruction. These barriers were likely related to a lack of previous experiences with navigating technology, engaging in virtual activities and learning, and other demands on time and attention at home during the pandemic (Rowe et al., 2020). Other research on the impact of COVID-19 on students with disabilities confirms these anecdotal findings (Neece et al., 2020). For example, school districts reported difficulty in locating secondary students with disabilities during the pandemic, and researchers are only starting to understand the impact COVID-19 has had on secondary education/transition instruction and resultant postschool outcomes (Rhim & Ekin, 2021; Rowe et al., 2020).

Our findings, after the transition to remote instruction, suggest the critical importance of ensuring students have resources and supports to meaningfully engage virtually, irrespective of instructional condition. For example, this may have implications for students’ use of the GSC App, even during in-person instruction. Importantly, teachers requested we re-engage with them and their students 5 weeks into the pandemic as they highlighted the critical need for supports and resources to engage and teach students during virtual learning as they did not have access to tools and resources. This highlights the need for better planning and resources during unanticipated circumstances, including public health crises, natural disasters, or other events that create significant disruptions, particularly as these disruptions may disproportionately impact marginalized students. While we hope there will not be another global pandemic that impacts instruction as rapidly as COVID-19, our findings suggest the critical importance of enabling students to have the resources and supports to meaningfully engage virtually irrespective of unexpected circumstances. They also suggest that, as we resume in-person instruction, consideration should be given to the lost instructional time for youth, particularly marginalized youth.

Key Findings and Implications for Future Research

Despite the impacts of COVID-19 on our study design, the data collected provide preliminary indications of the potential of the GSC App to impact the self-determination outcomes of secondary students with disabilities. More research is needed given the limitations to addressing our research questions during the COVID-19 disruption.

Content knowledge targeted in the GSC app

A key focus during the study, as originally designed, was to determine if there was a functional relation between students engaging with the GSC App and increases in the skills targeted in the App using single-case design. However, data collection was disrupted as were the opportunities for students to engage with the App. As such, visual analysis of graphed data did not establish a functional relation (i.e., null findings) within and across multiple-probe arrangements (Kratochwill et al., 2018). However, when examining the data from the students who did complete the App during in-person instruction, we see promising patterns that deserve ongoing research with additional single-case designs as well as other larger experimental designs (e.g., randomized controlled trials). While there was some variability in scores on the content measure, overall, in-person participants showed increases in level from baseline to intervention, and maintenance probes suggested content measure scores remained near average intervention scores after several weeks even with COVID-19 disruptions.

It is important to note the content measure was designed for this study and aligned with the skills taught in the App, and we did not have multiple raters for open-ended questions. Further research is needed on this tool; however, the preliminary findings suggest that transition-age students with disabilities did not have the self-determination skills measured or expressed them with variability prior to intervention, and they showed growth as they were exposed to the GSC App. Additional research is needed to investigate other outcomes more directly, such as the actual attainment of goals set in the App as well as longer-term outcomes (e.g., academic, transition outcomes). Furthermore, as the App was designed to be implemented over time, it will be useful to engage in longitudinal research to explore how skills continue to grow and whether skills generalize to other areas.

Students’ level of self-determination

We planned to collect data on students’ overall self-determination before and after engagement in the multiple-probe arrangements as a secondary outcome measure. The short-term nature of this study (one semester) limited the expectation that clear differences in self-determination levels would be found. Researchers suggest a change in self-determination often takes multiple years of engagement with self-determination interventions (Wehmeyer et al., 2013), and some students even show an initial decrease in self-reported self-determination after their first semester of self-determination instruction, perhaps as they are recalibrating their understanding of self-determination and their own use of self-determination abilities (Raley et al., 2020). The findings from the three students who did provide self-determination data at the end of the study are consistent with the variability found in previous work, with one student increasing their self-reported levels, one decreasing, and another staying the same. Ongoing work is needed to examine change in self-determination outcomes over time as students have repeated opportunities to engage with the App. Such research should also examine the relationship between changes in specific skills taught in the App, such as goal-setting and attainment, and changes in self-determination abilities. Finally, it would be useful to explore the relation between changes in student outcomes and student engagement in the App as well as the quality of their responses to the Student Questions. Other research with the SDLMI (e.g., Raley et al., 2020) suggested the nature and quality of student goals vary, which can provide insight into additional learning supports students may need and ways educators can support students to develop goal-setting and attainment skills. Examining the quality of responses made by students as they engage with the App will be useful for exploring engagement, as well as the feasibility of the App, to teach targeted skills. Furthermore, it may suggest additional supports that could be provided by teachers to scaffold learning in the App and embed targeted skills in other instructional activities. Work is also needed to explore sociodemographic factors that may impact engagement in the App and student outcomes. While the App was designed with consideration of reflecting multiple cultural identities and ensuring the integration of culturally responsive practices, specific work is needed to explore these issues, particularly as other researchers found self-determination interventions, as implemented in schools, may not fully address the needs of marginalized youth (Shogren et al., 2021).

Feasibility and satisfaction

No data were obtained from students or the general education teacher on the feasibility and satisfaction survey. The special education teacher indicated the GSC App addressed barriers she encountered related to teacher time required to embed and implement self-determination instruction, a challenge reported in previous research (e.g., Grigal et al., 2003). While Meredith felt students enjoyed the App and found it engaging, usage data clearly suggest the App was unable to engage students at home, although students could still access it through provided technology, presuming they had stable internet access. Students likely had a number of competing demands. Ongoing work is needed to (a) explore students’ perceptions of using the GSC App as students transition back to in-person instruction and (b) examine if there are other features that could be integrated in the App to support its use at home or during community-based instruction (e.g., directly linking meaningful activities in the App to support student engagement in home, employment, and community settings). This finding also highlights the substantial instructional time lost by students with disabilities during remote instruction, particularly during the spring of 2020.

Usage data did suggest high engagement and completion of all App content during in-person instruction. Out of 108 logins, students completed all activities in the lesson for 107 sessions. This suggests our design process and the feedback from students integrated throughout the development process led to an App aligned with the instructional needs of students during in-person instruction. Ongoing work, however, is needed to further explore if this can be sustained over time as more students engage with the App over a longer period, as well as the most effective teacher supports necessary during in-person or remote instruction to support student engagement. Further testing of the App with a larger and more diverse group of students will be necessary to further evaluate its feasibility and the potential for scaling-up to address student learning needs.

Implications for Practice and Policy

Promoting self-determination is considered a best practice in secondary transition (Rowe et al., 2021), and enhanced self-determination is a predictor of postschool success (Hagiwara et al., 2017; Mazzotti et al., 2021). Given this, practitioners need tools that can meaningfully engage young people in self-determination instruction. To date, there has been limited focus on the technology-based delivery of self-determination interventions. Although our results are preliminary and the impact of the GSC App on student outcomes must be further explored, findings suggest the GSC App may provide a practical, student- and teacher-friendly means for engaging students in self-determination instruction, addressing barriers teachers identify related to limited instructional time, resources, and training (Hagiwara et al., 2017).

In addition to the preliminary findings suggesting the promise of the GSC App, we also learned key lessons about navigating the delivery of instruction during a pandemic. The findings related to student engagement in remote learning suggest the critical need for states, districts, and schools to consider the procedures in place or those that should be in place to facilitate a successful transition to remote instruction for students (e.g., in an emergency) and support student engagement. Further considering implications related to technology-based instruction and how barriers during in-person instruction could be eliminated through technology (e.g., website accessibility, instructional resource differentiation; Smith & Basham, 2014) seems like an important area of need. Finally, exploring ways teachers can support students to generalize what is learned through technology into other class and transition planning activities is essential and was identified as a need by the special education teacher in this study. Providing students with access to learning opportunities and supports in multiple ways is essential to promoting transition outcomes and will become more important as steps are taken to address learning losses during the pandemic.

Conclusion

Overall, our findings highlight directions for future research on using the GSC App to support students with disabilities as they set goals and plan for the transition from school to adult roles and responsibilities. Ongoing work is needed to determine effective ways to support students during remote and virtual learning, as well as to support students with disabilities who, because of the COVID-19 pandemic, experienced significant disruptions in their access to learning opportunities and resources during the critical time when they are planning for their transition to adulthood. Research is specifically needed to explore ways to support self-determination in transition planning for marginalized youth and to integrate teacher classroom instruction with technology-mediated instruction.

Supplemental Material

Supplemental material, sj-docx-1-rse-10.1177_07419325221147698 for The Goal Setting Challenge App: Promoting Self-Determination Through Technology by Valerie L. Mazzotti, Karrie A. Shogren, Jared H. Stewart-Ginsburg, PhD, Stephen M. Kwiatek, PhD, Mayumi Hagiwara, Danielle C. Wysenski, PhD and Richard A. Chapman in Remedial and Special Education

Supplemental material, sj-docx-2-rse-10.1177_07419325221147698 for The Goal Setting Challenge App: Promoting Self-Determination Through Technology by Valerie L. Mazzotti, Karrie A. Shogren, Jared H. Stewart-Ginsburg, PhD, Stephen M. Kwiatek, PhD, Mayumi Hagiwara, Danielle C. Wysenski, PhD and Richard A. Chapman in Remedial and Special Education

Footnotes

Compliance with Ethical Standards

This article has not been previously published and is not pending review in any other peer-reviewed journal. Furthermore, this research was approved by the Institutional Review Board for the participating agency, and consent and assent were obtained appropriately. This article will not be submitted elsewhere pending the completion of the editorial decision.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The research reported here was supported by the Institute of Education Sciences, U.S. Department of Education, through Grant R324A180012 to The University of Kansas. The opinions expressed are those of the authors and do not represent views of the Institute or the U.S. Department of Education.

Supplemental Material

Supplemental material is available on the Remedial and Special Education website with the online version of this article.

References
