Abstract

We report on a longitudinal case study (n = 9) of popularization writing skills in interdisciplinary undergraduate students. Writing skills were determined by analyzing components of the cognitive process model of writing proposed by Hayes. Keystroke logging and video observation were used to analyze the text construction process (the process level) in third-year writing. Genre knowledge (the control level) was analyzed through text analysis and assessment of first-year and third-year texts. Results showed that writing was highly individualized at the process level, including switches between processes, timing, number of edits, and reliance on the source text. At the control level, popularization genre knowledge did not significantly change over time, and text quality remained low to average, suggesting a lack of genre knowledge. Individual choices in the writing process are, thus, not reflected in the quality of the writing product. These findings point to a need for explicit training in popularization discourse alongside academic discourse training.

Keywords

Introduction

Students receive extensive training in academic skills during higher education, with the aim of becoming (among other things) competent academic writers. Yet higher education training often overlooks an approach that fosters communicating academic findings to broad, nonexpert audiences in an understandable and engaging way. This type of communication is called popularization discourse.

Popularization is the act of transforming insights from academic research into communication for nonexpert audiences. These audiences are often, though not necessarily, broad. Fahnestock (1986), one of the first to approach popularization discourse as its own genre,¹ defined the process of popularization as a recontextualization of insights from academic research into the context of everyday life. In this process, the focus shifts from what she refers to as “the wonder,” that is, the existence of a phenomenon, to “the application,” that is, the significance of findings and their application in everyday life (Fahnestock, 1986, p. 275). Two processes are used to construct popularization discourse in this shift: recontextualization and reformulation. Through recontextualization, research results are reframed in a new context that is interesting for a nonexpert, usually one connected to everyday life or to societal issues. Through reformulation, jargon and other language that nonexperts might find difficult to comprehend are rephrased (Calsamiglia & Van Dijk, 2004; Hall et al., 1999, as cited in Ciapuscio, 2003; Gotti, 2014; Sterk & Van Goch, 2023). In practice, and in terms of style, constructing popularization discourse entails the use of narrative, storytelling, humor, anecdotes, analogies, comparisons, metaphors, imagery, and images or visual stories (Baram-Tsabari & Lewenstein, 2013; Bray et al., 2012; Kapon et al., 2010; Luzón, 2013; Mercer-Mapstone & Kuchel, 2015; Motta-Roth & dos Santos Lovato, 2009; Motta-Roth & Scherer, 2016).

There are competing views about what popularization discourse is, whether it is indeed its own genre, and how it should be approached. Misrepresentations of popularization cast it as second-rate academic discourse: “popularization is not viewed as part of the knowledge production and validation process but as something external to research which can be left to non-scientists, failed scientists, or ex-scientists as part of the general public relations efforts of the research enterprise” (Whitley, 1985, p. 3). This view assumes a “boundary between expert and lay participants and the linearity of the diffusion of knowledge” (Luzón, 2013, p. 432). Popularization discourse is better understood as multifaceted, serving different audiences: experts, students, industry, and practitioners (Hyland, 2010, p. 117). The constructions and forms of popularization discourse thus depend on the audience or audiences addressed.

Even though experts in popularization agree that training should be offered during higher education (Bray et al., 2012), it is not a standard part of undergraduate or graduate programs in either Europe (Davies et al., 2021) or the US (Bankston & McDowell, 2018; Brownell et al., 2013a). This means that most students in these regions receive no training in communicating insights from their research to audiences other than their own disciplinary community or the wider academic community. This is problematic because popularization skills are useful, even required, in both academic (Brownell et al., 2013a; Kuehne et al., 2014) and professional careers (McKinnon & Bryant, 2017; Yeoman et al., 2011).

Studies of the educational design of courses teaching undergraduate or graduate students how to popularize research have shown mainly positive effects of popularization training (Boynton, 2018; Brownell et al., 2013b; Crone et al., 2011; Harrington et al., 2021; Heath et al., 2014; Latimore et al., 2014; Mercer-Mapstone & Kuchel, 2016; Moni et al., 2007; O’Keeffe & Bain, 2018; Poronnik & Moni, 2006; Rakedzon & Baram-Tsabari, 2017a; Yeoman et al., 2011). Evaluations of entire long-running popularization graduate programs also show positive outcomes for career opportunities (McKinnon & Bryant, 2017; Mellor, 2013). Although Rubega et al. (2020) did not find that STEM graduates who took a semester-long science communication course improved their communication skills more than untrained controls did, those skills were assessed by an audience of undergraduate students whose scores varied greatly.

State of Research on Popularization Discourse

Despite growing research on popularization discourse and training, gaps in knowledge remain that hinder our understanding of how these skills can best be taught. First, research on popularization writing focuses on the writing product and on outcome measures: readability of language, correctness of content, presentation (such as framing), use of stylistic features, use of analogies and narrative, inclusion of dialogues, inclusion of a title, use of the active voice, use of the inverted pyramid structure, use of a journalistic text format, and use of explanations (Baram-Tsabari & Lewenstein, 2013; Rakedzon & Baram-Tsabari, 2017b). This focus on the writing product, however, leaves little insight into how popularization discourse is produced.

Second, improvement in popularization skills and knowledge has been tested in studies with a pretest-posttest design conducted over a short period of time, which limits knowledge about the development of these writing skills to a single course (see Latimore et al., 2014; Moni et al., 2007; Rakedzon & Baram-Tsabari, 2017a). Studies of the effectiveness of popularization training often determine writing skills solely on the basis of post-course text quality, without taking any (possible) development in writing skills into consideration (see Boynton, 2018; Brownell et al., 2013b; Harrington et al., 2021; Mercer-Mapstone & Kuchel, 2016; Poronnik & Moni, 2006). Apart from Sterk et al. (2022), there are no baseline assessments of popularization skills. Yet baseline studies could give insight into the skills and knowledge with which students enter a course or program, and longitudinal studies could show how these skills develop over the course of an academic program.

Third, multiple studies show that popularization skills are beneficial for developing academic skills (Bankston & McDowell, 2018) and scientific literacy (Parkinson & Adendorff, 2004; Pelger & Nilsson, 2016). They also help writers take the target audience into consideration, choose content elements, and move between epistemic levels (Pelger, 2018). As Baram-Tsabari and Lewenstein (2013) explain, becoming a scientist “inevitably involves learning to talk and write science,” a process of learning that includes “generalizing and abstracting rather than building on examples, stories, and anecdotes, and using accurate descriptions rather than analogical approaches” (p. 57). Becoming a scientist, therefore, involves learning writing practices that are in some ways the opposite of those needed to construct popularization discourse. Popularization skills are thus helpful for the development of academic skills, yet it remains unclear whether the reverse is also true. Because the two sets of practices partly conflict, training and writing solely in academic discourse during higher education could even have a negative impact on students’ popularization skills. In this study, we investigated to what extent this is the case.

Fourth, most academic literature that discusses popularization is written about the natural sciences. Thus, when popularization is explored as a discourse or as a genre, what is really being discussed is the subgenre of science popularization (cf. Calsamiglia & Van Dijk, 2004; Luzón, 2013; Nwogu, 1991). The same is true for the discussion of training in popularization: courses and programs frequently mentioned in the literature are taught within the natural sciences. Indeed, we have not been able to locate research about education in popularization for the humanities and social sciences.

We aim to take a first step toward diversifying studies of popularization writing by discussing the popularization writing skills of nine interdisciplinary students from diverse disciplinary backgrounds. As previous studies did not offer insight into popularization discourse production or into the development of writing skills, this longitudinal exploration approaches writing from a cognitive point of view that treats both the writing process and the writing product as indirect measures of these skills. This cognitive approach shows the diversity in these interdisciplinary students’ writing processes, yet also sheds light on their lack of progress in popularization knowledge over time. It thereby adds to our understanding of popularization discourse production in general and of students’ popularization writing skills specifically.

Theoretical Background of the Study

In this section, we first focus on the process of writing as a cognitive activity and then approach the writing product from the point of view of popularization as a discourse type.

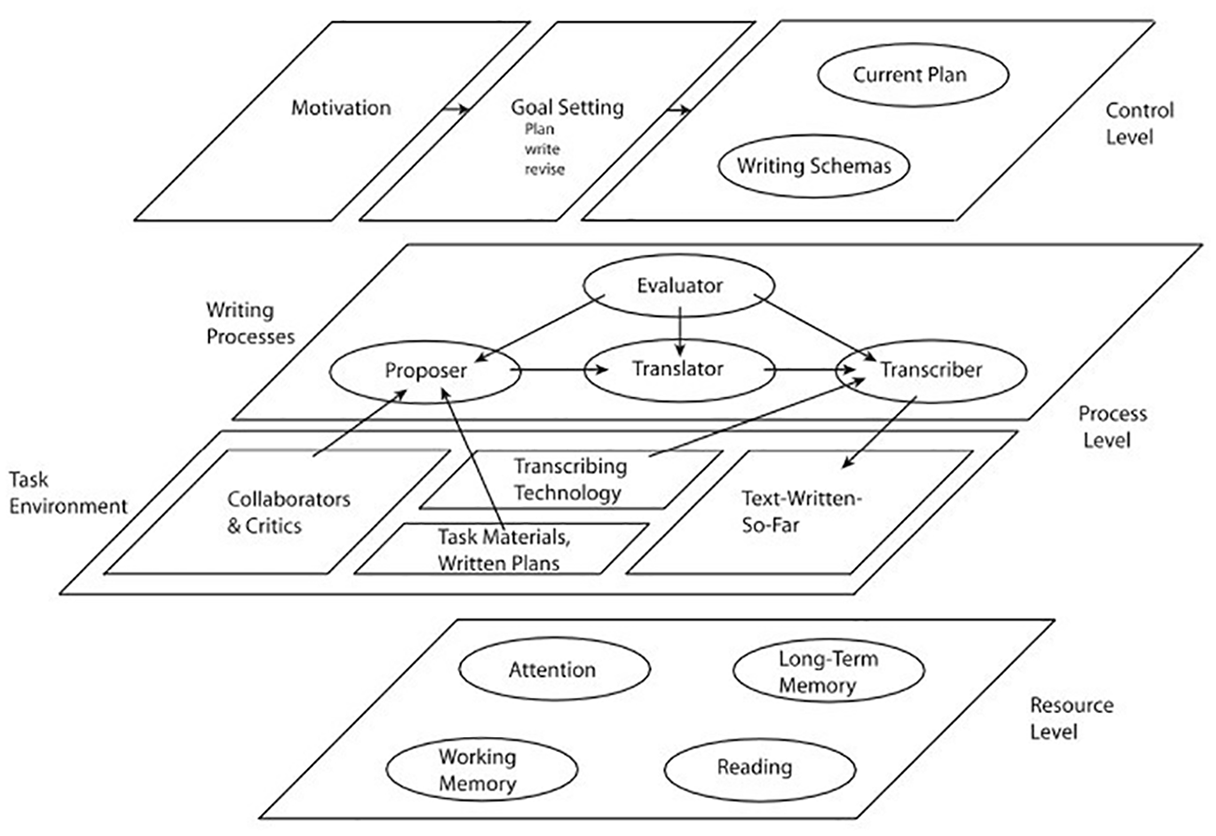

Cognitive Process Theory of Writing

We approach the writing process through a cognitive process lens. Cognitive models propose that writing consists of a number of hierarchically organized thinking processes and subprocesses that can occur in any order and are usually alternated and interrupted. How these processes alternate differs from writer to writer. Flower and Hayes’s first writing model, which contains a task environment, the writer’s long-term memory, and the writing process, remains the basis for many other models (Flower & Hayes, 1980, 1981; Hayes & Flower, 1980). Over time, revisions to this model have been suggested. Hayes’s (1996) model added motivation, gave working memory a larger role, introduced visual-spatial and linguistic representations, and reorganized the cognitive processes. Chenoweth and Hayes’s (2001) model contained a control level with a task schema, a process level with internal and external processes, and a resource level. We use Hayes’s (2012) model, which adapts and extends Flower and Hayes’s original model and directly builds on that of Chenoweth and Hayes (2001). Hayes’s (2012) model contains three levels: a control level, a process level, and a resource level. The resource level contains the skills and processes that underlie the writing on the process level and includes attention, long-term memory, working memory, and reading ability. The process level comprises the internal processes through which writing takes place and the external environment that guides that writing. The activity of writing involves a proposer that formulates ideas, a translator that turns ideas into language, a transcriber that transfers the language onto a medium, and an evaluator that revises both the proposed and the transcribed language. These internal processes interact with the social and technical environment in which writing takes place, which consists of the text written so far, the writing task, transcribing technology, and other people reading the text.
At the control level, motivation refers to writers’ willingness to write and its impact on their goal setting. The control level also contains a current plan, which is a representation of the text to be produced, and writing schemas, which contain plans for how to perform writing processes (Chenoweth & Hayes, 2001; Hayes, 2012). Hayes’s (2012) model is shown in Figure 1.

Hayes (2012) cognitive writing model.

For Flower and Hayes, composition is a goal-directed thinking process: writers create their own goals and change them according to what they learn while writing, and they have to adjust to multiple constraints and demands, primarily (a) long-term memory (i.e., knowledge about the topic, the audience, and stored writing plans) and (b) the task environment, in the form of the text already produced and the rhetorical problem. In other words, the text that the writer produces needs to fit the goal of the communication. These constraints influence not only what is written (the product) but also how the text is produced (the process) (Flower & Hayes, 1980, 1981; Hayes & Flower, 1980). Planning is a way to handle these constraints and to lower cognitive load; it relates to writing activities, to the content of the text, and to its composition (Flower & Hayes, 1980). Experienced writers pay more attention to planning: they use a “knowledge transforming strategy” to access their domain knowledge and consider it in relation to the task constraints, while novice writers use a “knowledge telling strategy” (Alamargot & Chanquoy, 2001, p. 186). Experienced writers can distinguish between the planning and translating stages, whereas novices often cannot. In the reviewing stage, novice writers may perform only low-level revisions, while experienced writers revise more often, at a conceptual level, and throughout the writing process (Alamargot & Chanquoy, 2001).

What all models have in common is that they show the different components of the writing process as well as the interaction between them. No model supposes a specific order of components: indeed, these models propose that writers move between different (sub-)processes, that the process of producing text is iterative, and that writing looks different for individual writers.

Popularization Discourse as a Genre

We approach the writing product through the lens of popularization as a discourse type. Popularization discourse, at its core, is a discourse type that contains all texts about academic research, from all academic fields, that are written for a nonexpert audience. “Nonexpert audience” is a broad term that may be characterized in different ways depending on the communicative setting. The audience of popularization has previously been characterized as laypersons (Brownell et al., 2013a, 2013b; Donohue et al., 2021; Fahnestock, 1986; Nwogu, 1991), or as nontechnical (Baram-Tsabari & Lewenstein, 2013), outside of academia (Druschke et al., 2022), noninitiated (Fahnestock, 1986), nonspecialist (Gotti, 2014; Motta-Roth & Scherer, 2016), wider (Hyland, 2010), or diverse (Luzón, 2013).

Writers construct popularization discourse through the processes of reformulation and recontextualization. In recontextualization, academic knowledge is presented in a new context suitable for nonexperts; it thus entails reframing on a conceptual level to reimagine research findings within an everyday setting (Bondi et al., 2013; Calsamiglia & Van Dijk, 2004; Ciapuscio, 2003; Gotti, 2014). Reformulation works at the word level to create language that engages the intended audience (Gotti, 2014). These two processes lead to textual elements that are characteristic of popularization discourse. Studies of these elements have examined strategies that create proximity to the reader (Hyland, 2010) or adapt the information to the target audience (Luzón, 2013), the rhetorical organization of moves and, thus, the order of textual components (Motta-Roth & dos Santos Lovato, 2009; Nwogu, 1991), and, more generally, the popularization strategies used (August et al., 2020; Giannoni, 2008).

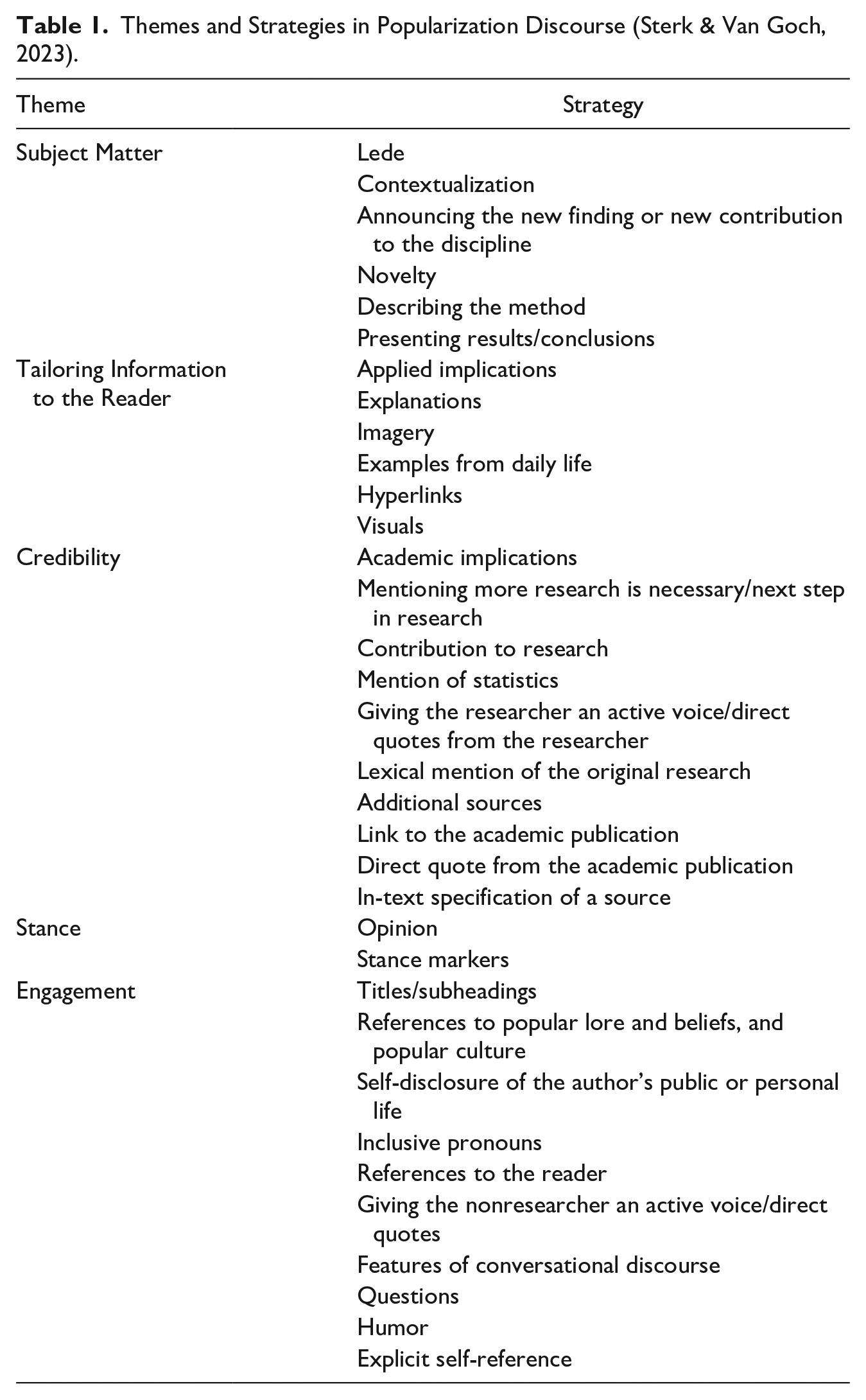

We focus on the framework for popularization strategies proposed by Sterk and Van Goch (2023); see Table 1. This framework is designed to be usable in all disciplinary fields as well as in multidisciplinary and interdisciplinary ones. That is why, for example, the terms research and researcher are used rather than science and scientist. The framework consists of 34 strategies grouped into five themes. It assumes that strategies can be used at any point in a popularization text; thus, it does not prescribe a specific order of strategies or moves. The theme Subject Matter contains strategies that give insight into the content of the academic research. Tailoring Information to the Reader refers to strategies that enable readers to position new academic findings within the framework of their own lives. Credibility contains strategies concerned with constructing the credibility of the research and the text. Stance encompasses strategies concerned with opinions. Lastly, Engagement includes strategies that increase the readability of the text (Sterk & Van Goch, 2023).

Themes and Strategies in Popularization Discourse (Sterk & Van Goch, 2023).

Research Question

Our aim is to gather data about the popularization writing skills of interdisciplinary students who have taken part in a 3-year undergraduate program that consistently includes explicit training in academic discourse yet offers little to no explicit instruction in popularization discourse. The overarching research question was:

What do popularization writing skills look like for interdisciplinary students at the start and end of a 3-year undergraduate university program?

This research question was answered through two subquestions:

1. How do interdisciplinary students construct popularization discourse at the end of their third year of undergraduate education?

2. What is the quality of the popularization discourse constructed by interdisciplinary students in their first year versus their third year of undergraduate education?

Methodology

Participants, Procedure, and Materials

Participants in this study were undergraduate students in an interdisciplinary program at a Dutch university. The program comprises at least 180 EC (24 courses). Every student completes an individualized program consisting of a disciplinary major of at least 67.5 EC (9 courses) and at most 105 EC (14 courses), combined with 30 EC (4 courses) of general education and 30 EC (4 courses) of interdisciplinary research training.
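The credit figures above can be checked with a little arithmetic. This is a sketch assuming that every course carries the same number of credits (the figures imply this, but the text does not state it) and taking the nominal 180 EC; how the remaining credits are filled is not specified here.

```latex
\begin{aligned}
\frac{180\ \text{EC}}{24\ \text{courses}} &= 7.5\ \text{EC per course}\\
180 - (67.5 + 30 + 30) &= 52.5\ \text{EC}\ (7\ \text{courses remaining with the smallest major})\\
180 - (105 + 30 + 30) &= 15\ \text{EC}\ (2\ \text{courses remaining with the largest major})
\end{aligned}
```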

Data were gathered on two occasions. In 2018, first-year students were asked to participate in a baseline assessment of popularization writing (for a full report of that study, see Sterk et al., 2022). In 2021, the same students, now at the end of their third year of training, were asked to participate in a second study. Of the 140 students who participated in the first study, 9 responded (all female; mean age = 22.46, SD = 1.13, range = 20–26). Their data from the first-year baseline assessment were included in this study, and additional data were gathered.

Before both data gatherings, participants were asked to read an academic study on the effects of night-time social media use on the mental health of teens (Woods & Scott, 2016). During data gathering, participants had one hour to complete a writing assignment: a newspaper article about the study. The article had to be no longer than 400 words, written in Dutch, and publishable in the science section of a quality Dutch newspaper. The intended target audience was readers interested in science who had not necessarily attended higher education. The goal was to write a text that would interest these readers and present the information in an understandable way. Participants could consult the source text and use the Internet while writing. The source text and the writing assignment were identical in both data gatherings to maximize longitudinal comparability.

No training in popularization discourse or journalistic writing had been provided to participants before the first data gathering, as they had just started their academic training. At the second data gathering, six participants reported having received some training in popularization, though never in a dedicated popularization course.

The first data gathering, in the fall of 2018, took place in class. Only the writing product was collected; no data were gathered about the writing process. Before writing, participants were asked to fill in a questionnaire with 5-point Likert scale items on their preparation for the writing assignment and their interest in the topic of the text. Participants were briefed on the goal of the research and the writing assignment by the teachers delivering their seminars. The second data gathering took place in the spring of 2021, while COVID-19 restrictions were in effect in the Netherlands. Because data could not be gathered on-site at the university’s laboratories, and the research could not be postponed because participants were about to graduate, the study was conducted entirely online.

A virtual private server in the cloud running Windows was used, with Windows Remote Desktop utilized to access the server remotely. Myrtille (2021) provided a web-based user interface so that participants could use a browser to access the virtual server, and a custom web page built on top of Myrtille further streamlined the login process. Participants could consult the original text in Acrobat Reader and look up information online through Google Chrome. Inputlog (2021), in combination with Microsoft Word, was used to track the writing process. Inputlog is keystroke logging software that records all keyboard and mouse input as well as program switches and can be used for a descriptive analysis of the writing process (Leijten & Van Waes, 2013). A screen recording was made using ActivePresenter. Lastly, TeamViewer was used to access participants’ screens remotely in case they needed technical help during data gathering.

The briefing was delivered by the first author through Microsoft Teams and included an explanation of the aim of the study, the writing task, the technical aspects of logging in, and the software available on the remote desktop. Participants were also sent a manual that explained how to use the software. Participants were first asked to go to Qualtrics and fill in a short questionnaire with items about the education they had received and their experiences completing writing assignments in both popularization discourse and academic discourse. Next, they logged in to the virtual machine and wrote their newspaper article. Immediately after data gathering, participants were debriefed in an online call through Microsoft Teams. This debrief included a short interview with questions about their experience during data gathering, their recall of the text and the assignment, and their memories of participating in the first data gathering.

Research data were collected in accordance with the guidelines of and after approval from the Faculty Ethics Assessment Committee Humanities (FEtC-H) at Utrecht University. In 2018, participants did not receive compensation for their participation; in 2021, they received a 20-euro voucher.

Analysis and Coding

We determined students’ writing skills by analyzing multiple components of Hayes’s (2012) writing model. Multiple observation methods (see Leijten & Van Waes, 2013) were used: synchronous indirect research methods to observe writing processes at the process level and asynchronous indirect research methods to observe the genre knowledge displayed at the control level. Thus, we treated both the writing process and the writing product as indirect measures of popularization writing skills.

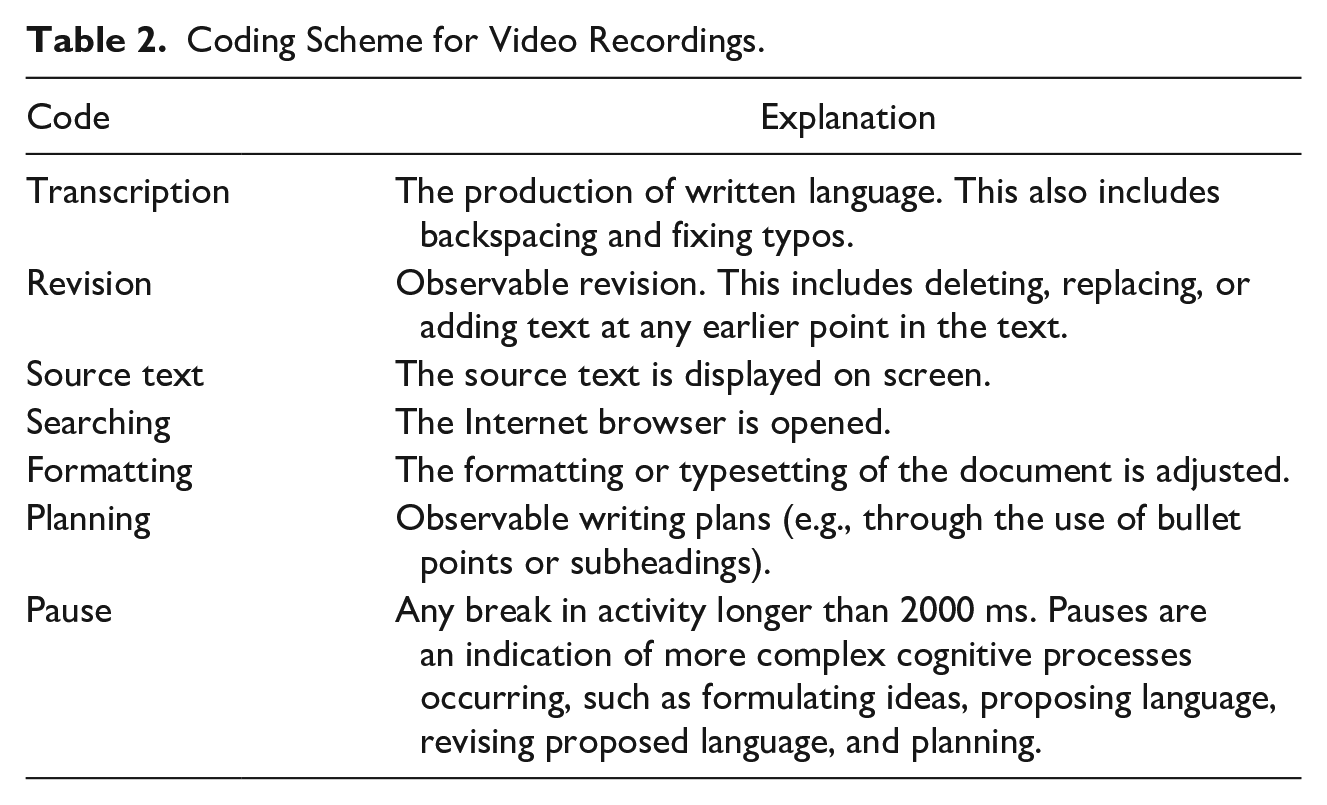

At the process level, Inputlog (Leijten & Van Waes, 2013) provided an overview of time spent per activity and created an XML file with timestamps for the entire writing process, including pauses. Screen recordings from ActivePresenter were coded manually using a coding scheme that combined Hayes’s (2012) conceptualization of the writing process with codes from Van Hout et al.’s (2011) study of news writing production. Table 2 shows the seven codes: transcription, revision, source text, searching, formatting, planning, and pauses. Transcription refers to the transcriber function in Hayes’s (2012) model, that is, the production of language onto a medium, while revision refers to the evaluator function that observes and evaluates transcribed language. The proposer that formulates ideas, the translator that forms language, and the part of the evaluator that revises proposed language are internal processes; these occur during pauses and, therefore, cannot be coded directly. The source text code connects to the task materials at the process level, while searching and formatting are connected to the transcribing technology. Although planning is not a process in Hayes’s (2012) model (but rather a specialized form of writing), we included it because planning practices are connected to goal setting and to the current plan and writing schemas at the control level. In our analysis, only written, and thus observable, plans were coded as planning.

Coding Scheme for Video Recordings.

In keystroke logging research, pause times are taken as indicative of cognitive effort, with longer pauses indicating higher-level cognitive processes (Leijten & Van Waes, 2013; Van Waes & Leijten, 2015; Wengelin, 2006). Analyses of writing processes typically use a fixed pause threshold, often 2 seconds (2000 ms) (Van Waes & Leijten, 2015). In this study, a pause threshold of 2000 ms was used to indicate the possible occurrence of more complex cognitive processes that are not directly observable through on-screen activity. These include the activities of the proposer, the translator, and the evaluator, as well as planning practices and reading the source text.
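To make the 2000 ms threshold concrete, the following minimal Python sketch flags every gap between consecutive logged events that lasts at least 2000 ms. It assumes the log has already been reduced to a sorted list of event onset times in milliseconds; the function name `find_pauses` and this input format are illustrative assumptions, not part of Inputlog, and real keystroke loggers may define pause boundaries differently (for example, from key release to the next key press).

```python
from typing import List, Tuple

PAUSE_THRESHOLD_MS = 2000  # threshold used in this study


def find_pauses(event_times_ms: List[int],
                threshold_ms: int = PAUSE_THRESHOLD_MS) -> List[Tuple[int, int]]:
    """Return (pause_start_ms, pause_length_ms) for every gap >= threshold_ms.

    `event_times_ms` is a sorted list of event onset times in milliseconds,
    a simplified, hypothetical stand-in for a keystroke log.
    """
    pauses = []
    # Compare each event with the next one (onset-to-onset intervals).
    for previous, current in zip(event_times_ms, event_times_ms[1:]):
        gap = current - previous
        if gap >= threshold_ms:
            pauses.append((previous, gap))
    return pauses


# Events at 0 s, 0.3 s, 2.8 s, and 3.0 s: only the 2.5 s gap counts as a pause.
print(find_pauses([0, 300, 2800, 3000]))  # [(300, 2500)]
```

Using an inclusive comparison (`>=`) matches the wording "2000 ms or longer"; a stricter `>` would drop gaps of exactly 2000 ms.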

Inputlog produces an XML log file for each participant in which every keystroke logging event is recorded, including the pause times between actions. From each participant’s file, all pauses of 2000 ms or longer were extracted together with their timestamps. Next, the videos were coded manually to log the start and end time of each observable activity. The timestamps from the XML file and from the manual coding were then combined in Excel to integrate information about activities with information about pauses, and a second round of video analysis was performed to check whether the coding was correct. All videos were coded by the first author. In Excel, a macro was used to visualize these data as a temporal bar chart. There were two exceptions to this procedure: for Participants 4 and 8, Inputlog failed during data gathering, and all data beyond 08:01 (Participant 4) and 44:32 (Participant 8) were missing. For these two participants, pauses after the point where the Inputlog file ended were coded solely from the screen recording.
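The Excel macro itself is not described here, so the following Python sketch only illustrates the general idea of combining manually coded activities with logged pauses into one sorted timeline and drawing it as a one-row text "temporal bar chart". The one-letter labels, the `Segment` type, the one-minute bins, and the rule that each bin shows the code covering most of it are all hypothetical display choices, not the authors' procedure.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical one-letter labels for the seven codes in Table 2.
CODE_LETTERS = {
    "transcription": "T",
    "revision": "R",
    "source text": "S",
    "searching": "I",
    "formatting": "F",
    "planning": "P",
    "pause": ".",
}


@dataclass(frozen=True)
class Segment:
    start_s: float  # seconds since the start of the session
    end_s: float
    code: str       # one of the keys in CODE_LETTERS


def merge_timeline(activities: List[Tuple[float, float, str]],
                   pauses: List[Tuple[float, float]]) -> List[Segment]:
    """Combine manually coded activities and logged pauses into one timeline."""
    segments = [Segment(start, end, code) for start, end, code in activities]
    segments += [Segment(start, end, "pause") for start, end in pauses]
    return sorted(segments, key=lambda seg: (seg.start_s, seg.end_s))


def render_bar(segments: List[Segment], total_s: float, bin_s: float = 60) -> str:
    """Render a one-row text timeline, one character per time bin.

    Each bin shows the code that covers most of it; ties go to the segment
    that comes first in `segments`, and uncovered bins are left blank.
    """
    n_bins = int(-(-total_s // bin_s))  # ceiling division
    row = []
    for b in range(n_bins):
        lo, hi = b * bin_s, min((b + 1) * bin_s, total_s)
        best_code, best_overlap = None, 0.0
        for seg in segments:
            overlap = min(hi, seg.end_s) - max(lo, seg.start_s)
            if overlap > best_overlap:
                best_code, best_overlap = seg.code, overlap
        row.append(CODE_LETTERS[best_code] if best_code else " ")
    return "".join(row)


timeline = merge_timeline(
    activities=[(0, 120, "source text"), (150, 300, "transcription")],
    pauses=[(120, 150)],
)
print(render_bar(timeline, total_s=300))  # SS.TT
```

For Participants 4 and 8, the same merge would simply receive pause intervals taken from the screen recording instead of from the log after the cut-off point.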

Text analysis and rubric application were used to analyze the writing product. The framework for analysis of popularization strategies (Sterk & Van Goch, 2023), shown in Table 1, was used to analyze the textual elements of the writing product. This analysis gave insight into participants’ genre knowledge of popularization discourse. In Hayes’s (2012) model, this relates to writing schema knowledge from the control level. All texts were coded by the first author.

Text quality was assessed with a rubric (Supplementary Materials 1) developed from five examples in the literature on popularization writing assessment (Moni et al., 2007; Poronnik & Moni, 2006; Rakedzon & Baram-Tsabari, 2017a, 2017b; Yuen & Sawatdeenarunat, 2020). The rubric was piloted twice before being used in this research. This assessment also gave insight into participants’ genre knowledge of popularization discourse, which relates to writing schema knowledge at the control level in Hayes’s (2012) model. All texts were graded by the first and second authors, and their scores were averaged. To assess interrater reliability, the intraclass correlation coefficient (ICC) was calculated using a two-way mixed effects model with average measures. The ICC was .58 (95% CI [–.87, .90]), indicating moderate reliability between raters, although the wide confidence interval shows that this estimate is imprecise.
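For readers unfamiliar with the statistic, the following plain-Python sketch computes an average-measures ICC from the standard two-way ANOVA mean squares. The text does not state whether the consistency or the absolute-agreement definition was used, so the sketch returns both; it does not compute the confidence interval, which requires the F distribution. The function name and input layout (rows are texts, columns are raters) are illustrative assumptions.

```python
from typing import List, Tuple


def icc_average_measures(scores: List[List[float]]) -> Tuple[float, float]:
    """Average-measures ICC from a two-way ANOVA decomposition.

    `scores[i][j]` is rater j's score for text i (n texts, k raters).
    Returns (consistency, absolute_agreement), i.e. ICC(C,k) and ICC(A,k).
    Assumes n >= 2, k >= 2, and that texts differ in mean score.
    """
    n, k = len(scores), len(scores[0])
    grand = sum(map(sum, scores)) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]

    ss_rows = k * sum((m - grand) ** 2 for m in row_means)  # between texts
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)  # between raters
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols                 # residual

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    consistency = (ms_rows - ms_error) / ms_rows
    agreement = (ms_rows - ms_error) / (ms_rows + (ms_cols - ms_error) / n)
    return consistency, agreement


# Rater 2 always scores one point higher: perfectly consistent, not identical.
print(icc_average_measures([[1, 2], [2, 3], [3, 4]]))  # (1.0, 0.8)
```

The example shows why the choice of definition matters: a systematic offset between raters leaves the consistency ICC at 1.0 but lowers the absolute-agreement ICC.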

Findings and Interpretation

Participant Characteristics

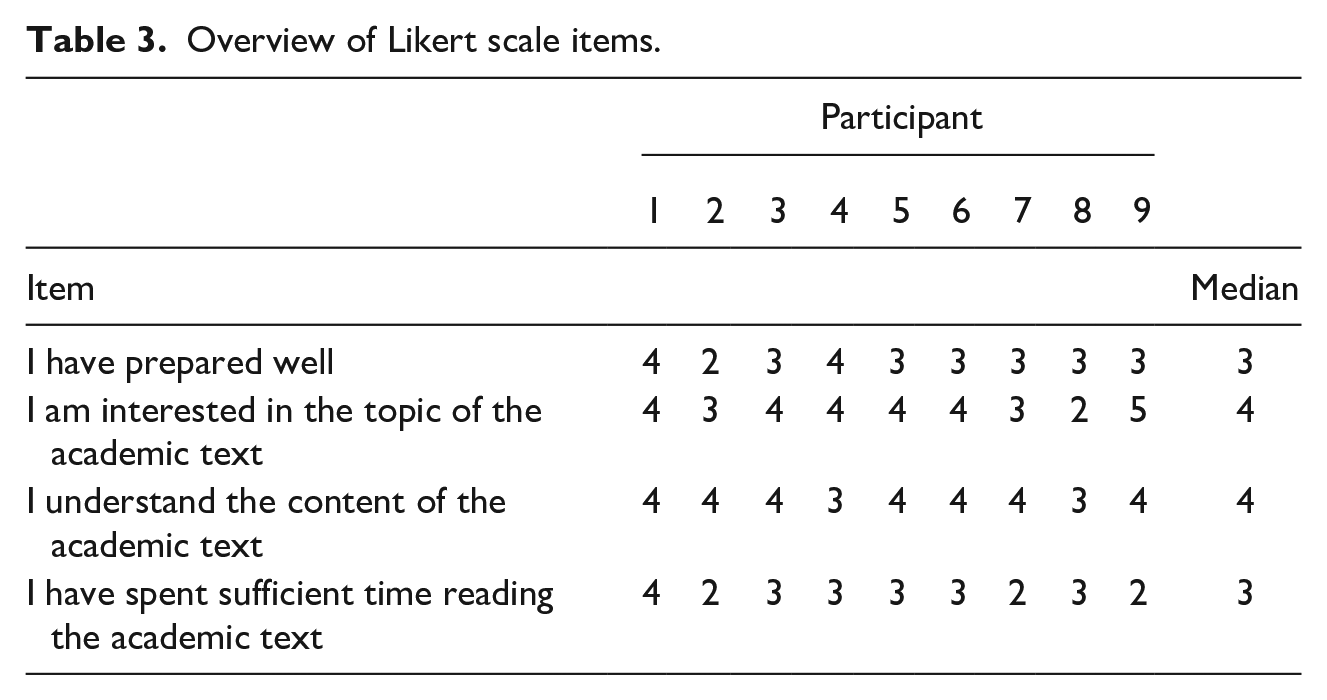

The results of the questionnaire from the first-year data gathering are presented in Table 3. Overall preparation for the writing task can be classified as average, and time spent reading the text was rated between below sufficient and sufficient. Participants reported above-average interest in the topic of the academic text (except for Participant 8) and reported that they understood the paper’s content.

Overview of Likert scale items.

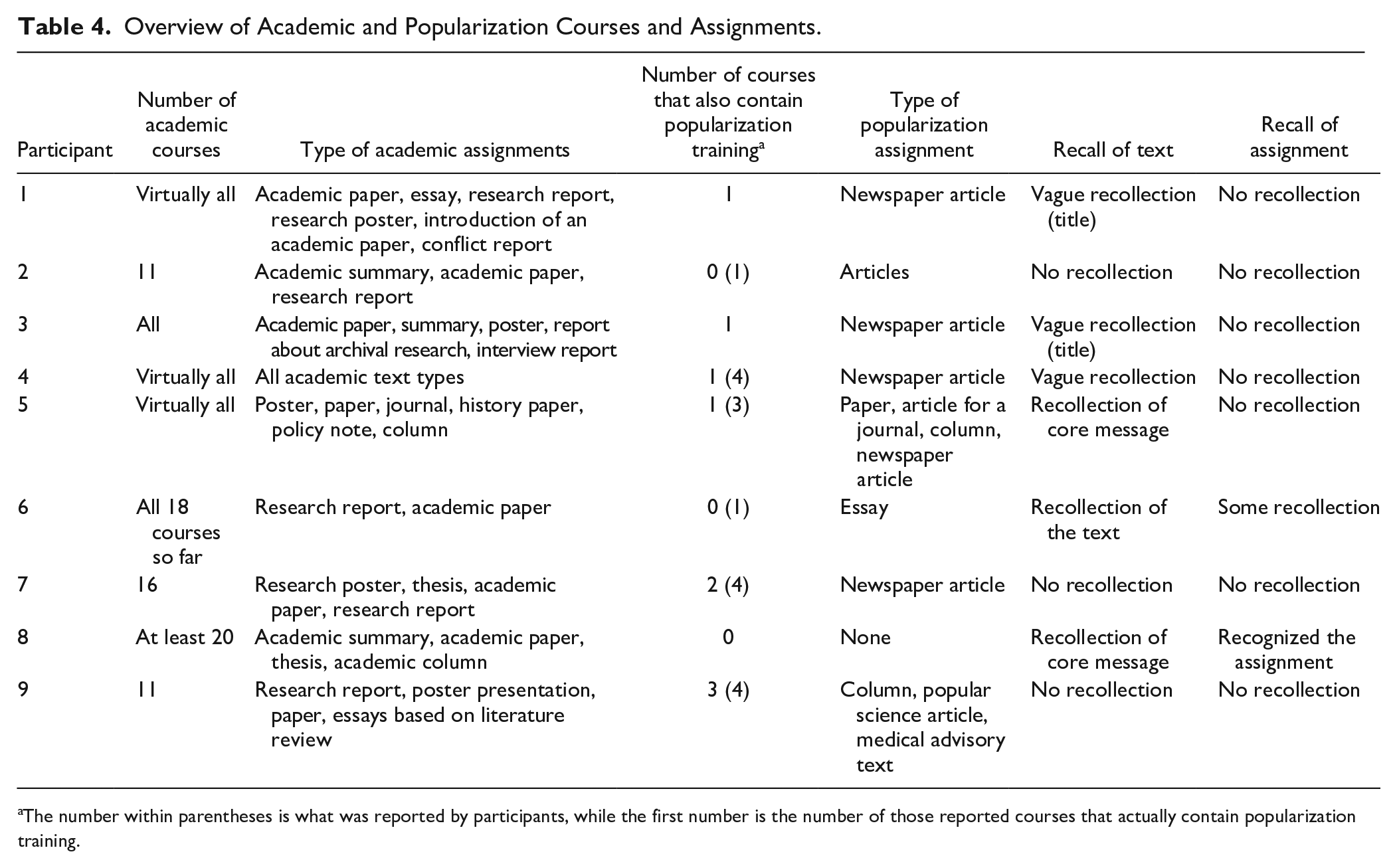

The results of the third-year questionnaire and debrief interview are presented in Table 4. In the questionnaire, third-year participants reported taking between zero and four courses that teach popularization writing in addition to academic writing. Participants had worked on between zero and three popularization writing assignments, most often newspaper articles, essays, and popularized articles. Four participants reported working on academic writing skills in every course of their undergraduate program; the other five named between 11 and 20 courses. Writing assignments ranged from papers, essays, posters, and summaries to research reports and theses. Although some participants received some training in popularization, it was always given in tandem with, and interspersed between, training in academic writing. As for the first data collection, participants had little recollection of the writing assignment and only minor recollection of the academic paper.

Overview of Academic and Popularization Courses and Assignments.

The number in parentheses is the number of courses reported by participants; the first number is how many of those reported courses actually contain popularization training.

Writing at the Process Level

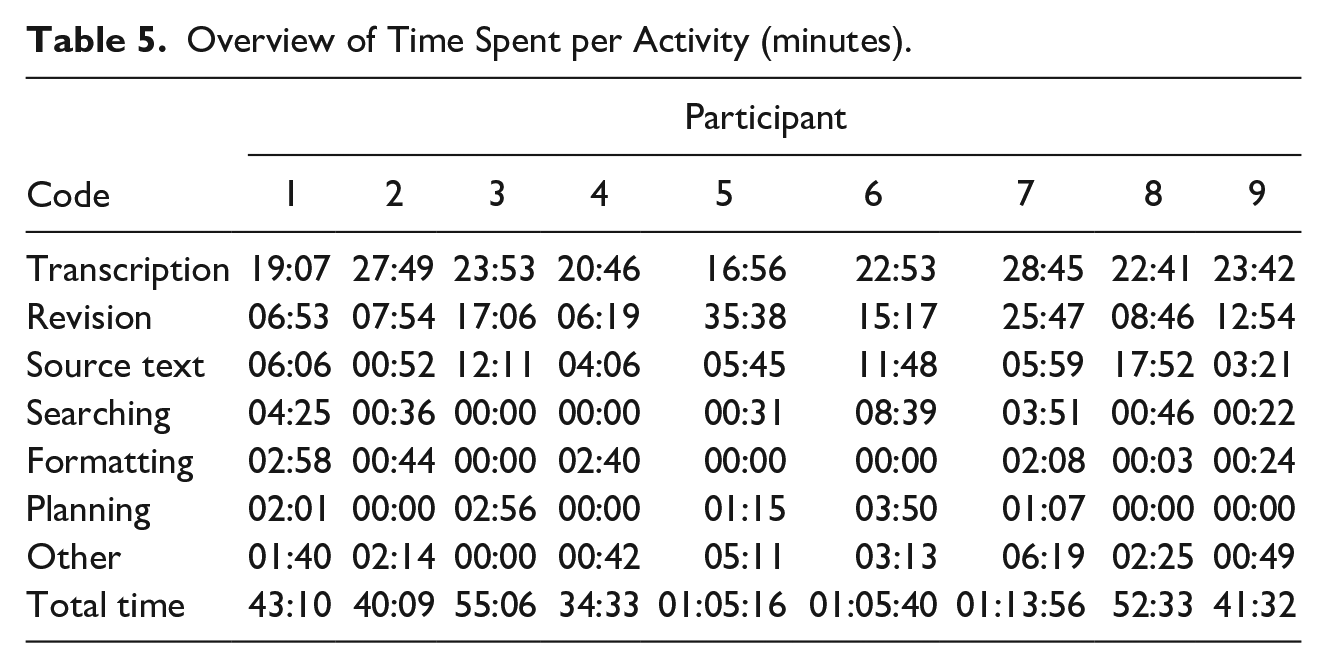

The first subquestion asked how students construct popularization discourse at the end of their third year of undergraduate education. An overview of time spent on each activity is shown in Table 5.

Overview of Time Spent per Activity (minutes).

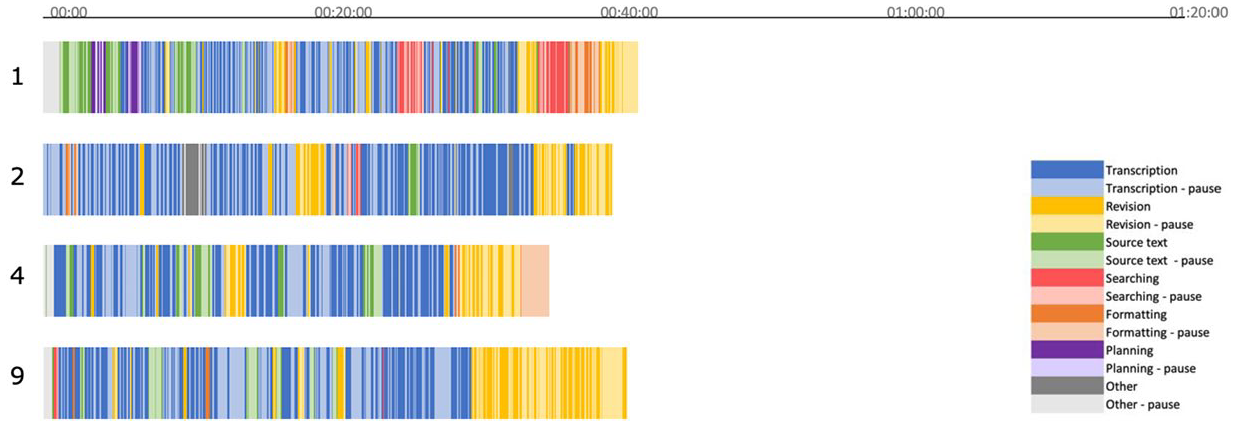

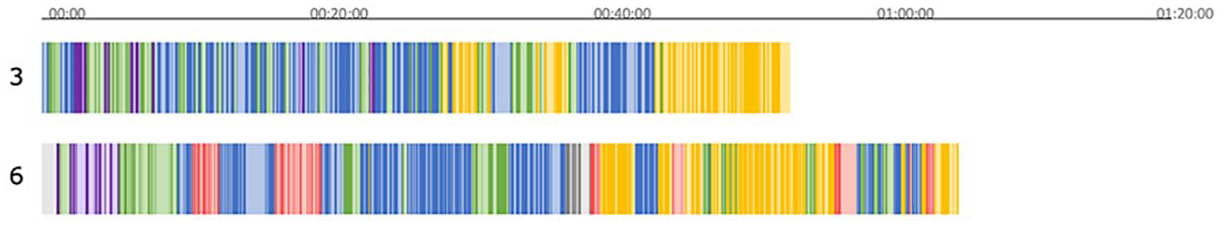

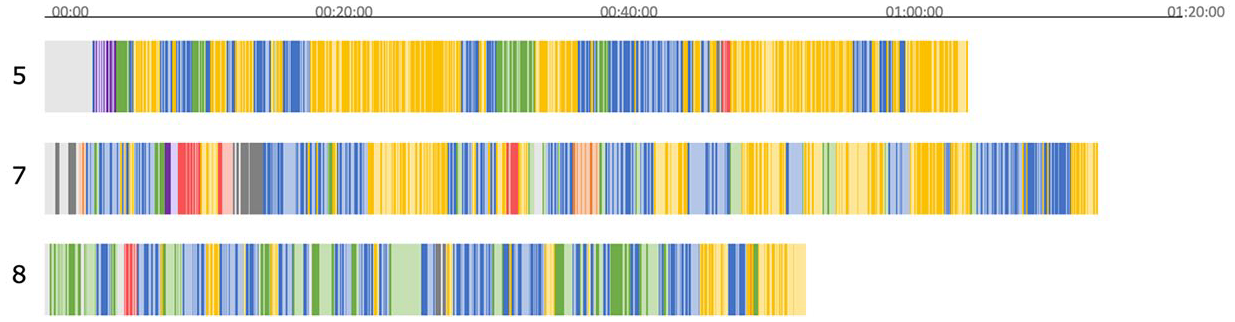

Figures 2, 3, and 4 show the writing process through temporal bar charts. For some participants, writing was a linear process (Figure 2). Figure 3 shows participants who revised in the second half of writing, and Figure 4 shows participants who edited throughout. Overall, some participants performed only low-level revisions, such as fixing typos (1, 2, 4, 6, 8, and 9), while others combined transcription and revision throughout the process (5 and 7) or performed high-level revisions at the end of the process (3). Only five participants (1, 3, 5, 6, and 7) exhibited any observable text planning, which always took the form of a rudimentary text structure. In some cases, this structure was indeed used to organize the text, while in others it was abandoned during writing. Seven participants (all except 3 and 4) used the Internet, some more extensively than others: to look up information about social media insecurity, to read a science journalism text from a Dutch broadcasting agency, or to search for a picture of a teen using a phone at night. Some consulted the source text often or for long periods (3, 6, and 8); others did so minimally (2) or only at the start of the writing process (1). Pauses were interspersed between activities, with some participants showing longer pausing periods (4, 8, and 9) or long pauses before they started writing (1, 4, 5, 6, and 9).

Temporal bar charts for participants with a linear writing process.

Temporal bar charts for participants with revisions in the second half of the writing process.

Temporal bar charts for participants with revisions throughout the writing process.

Genre Knowledge Displayed at the Control Level

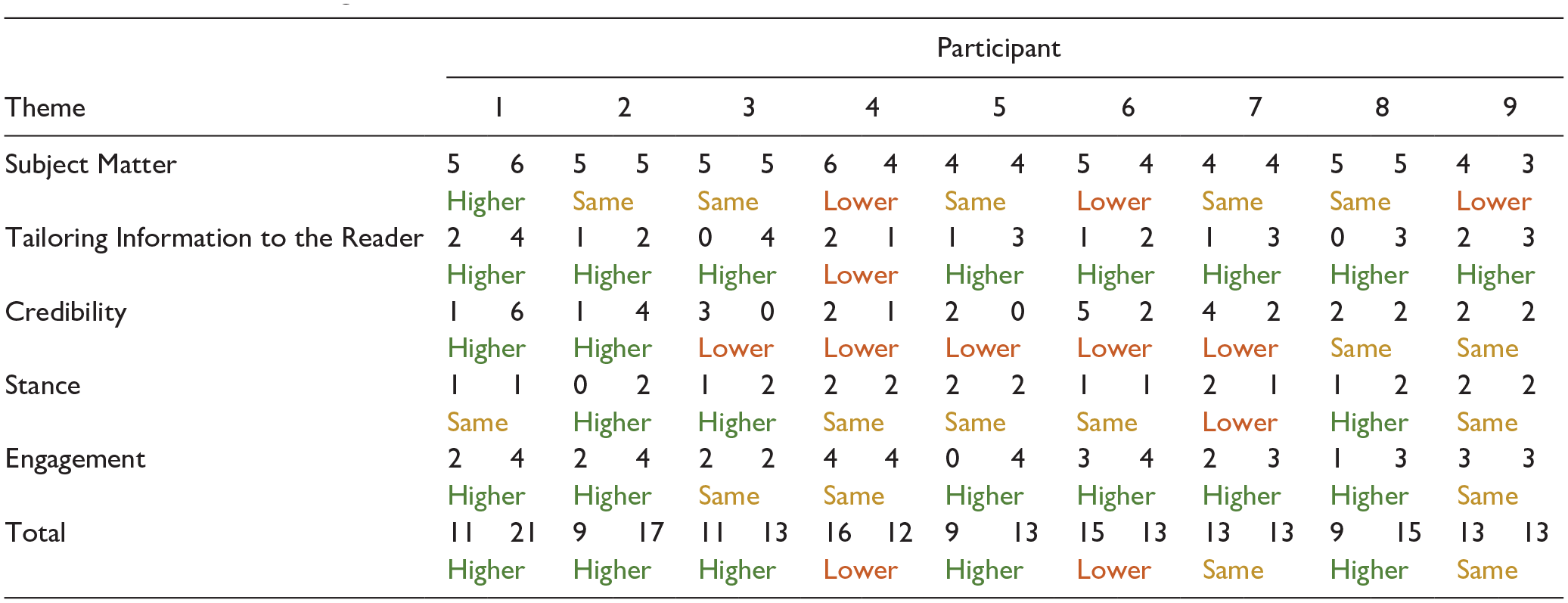

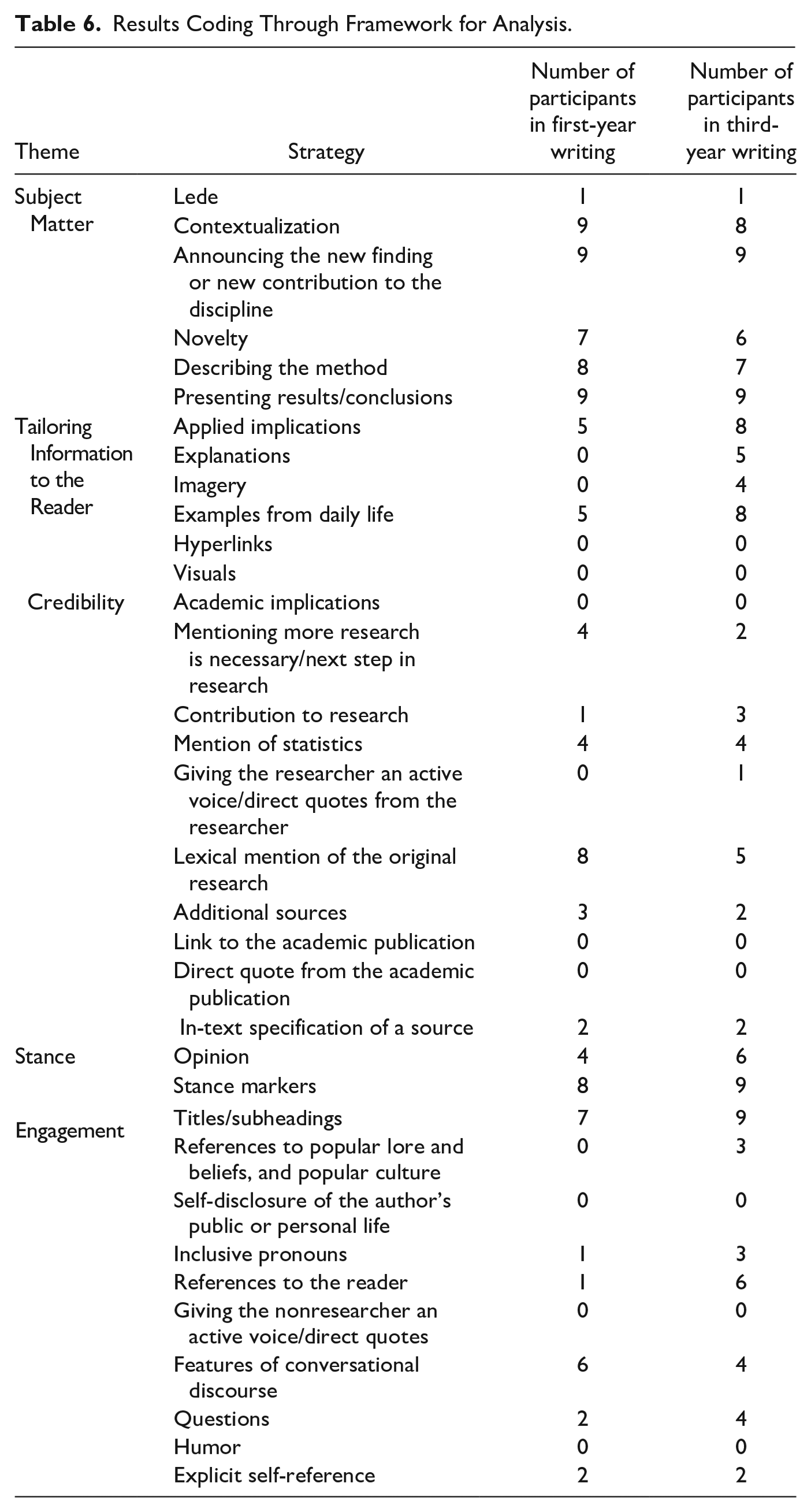

The second subquestion asked about the quality of the popularization discourse that students constructed in their first year versus their third year of undergraduate education. Table 6 compares the frequency counts of popularization discourse strategies that these students used in their first and third years.

Results Coding Through Framework for Analysis.

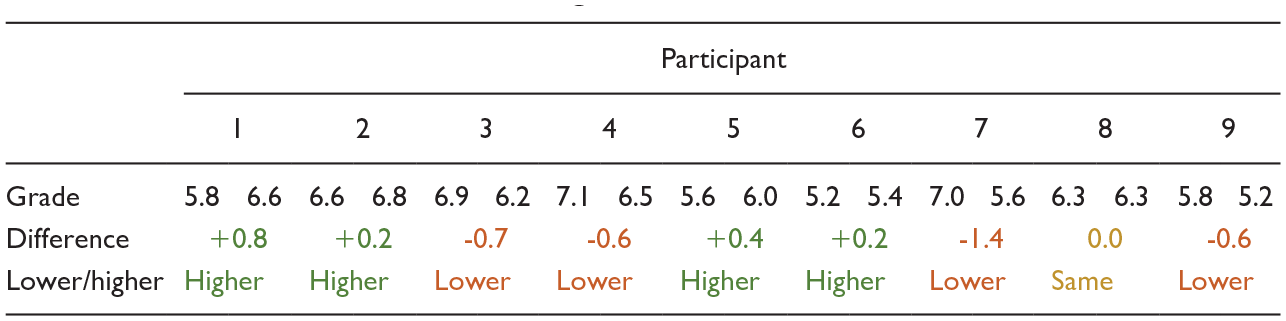

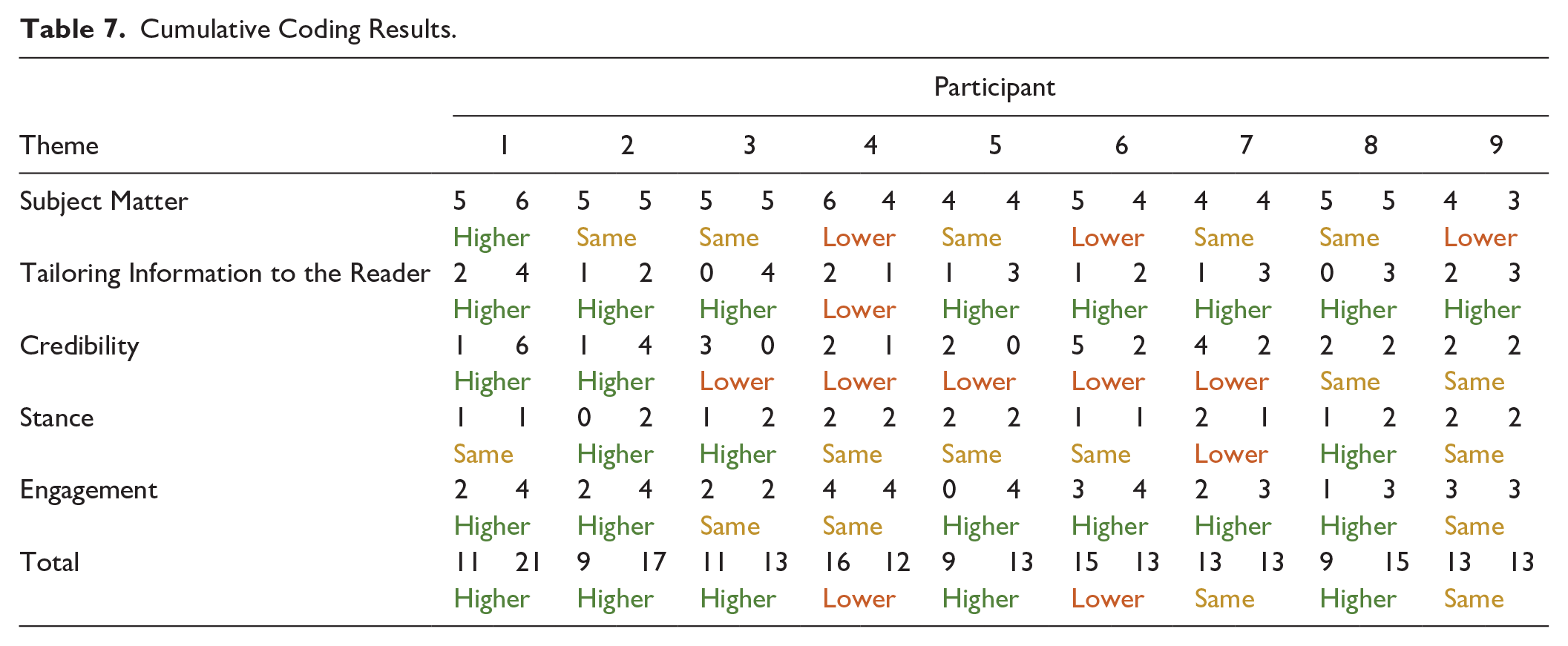

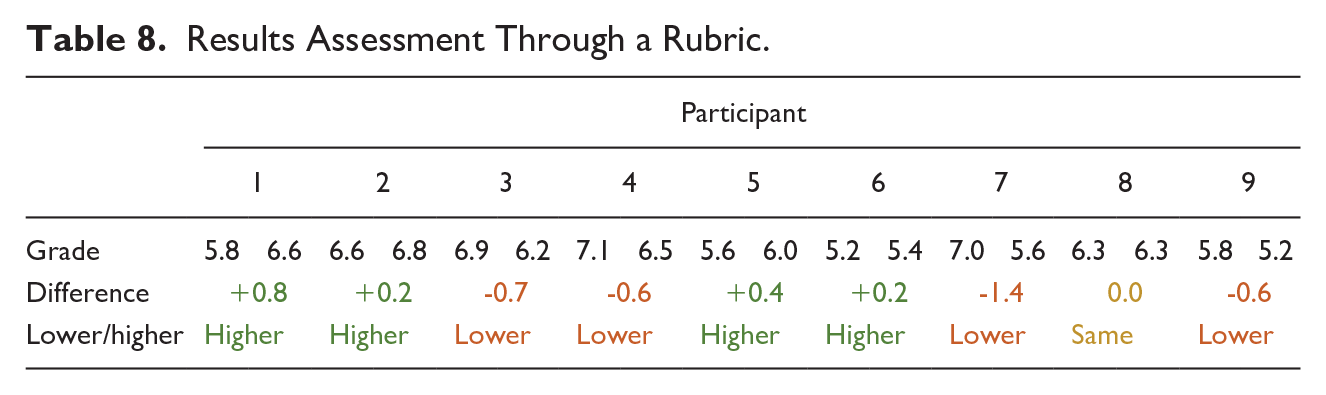

Table 7 shows the aggregated scores per theme, coded as higher, the same, or lower for third-year writing compared with first-year writing. Table 8 shows the results of the assessment of popularization texts using the rubric for popularization discourse.

Cumulative Coding Results.

Results Assessment Through a Rubric.

Most participants used an equal or higher number of strategies in their third-year writing, but these numbers were relatively low compared with the number of available strategies. Looking at the scores per theme, third-year scores were generally higher for Tailoring Information to the Reader and Engagement; Stance showed mixed scores; and Subject Matter and Credibility scores were mostly the same or lower. Although students seemed to have learned, or decided, to use more strategies to adapt information to the audience or boost reader engagement, they seemed to have unlearned, forgotten about, or decided to use fewer strategies related to the content of the academic text or to its credibility. Three years of academic training would likely have improved students' academic writing skills and their knowledge of the academic discourse genre. We would, thus, have expected participants to use more strategies closely associated with this genre, such as novelty, academic implications, mentioning that more research is necessary, contribution to research, or a direct quote from the academic publication, all of which belong to the themes Subject Matter and Credibility. By the same reasoning, strategies not often used in academic discourse, mostly those within Tailoring Information to the Reader and Engagement, would be used less. In other words, we expected to see the reverse of the current results. It should be noted, however, that although the use of Subject Matter strategies decreased, it remained high, and although the use of Engagement strategies increased, it remained low.

The rubric grades showed mixed results. Overall, grades were relatively low because the rubric was designed to assess high-quality popularization discourse. Changes in grades were minimal, mostly within one point. These grades suggest little change in writing quality, and four participants even appeared to have decreased in skill level.

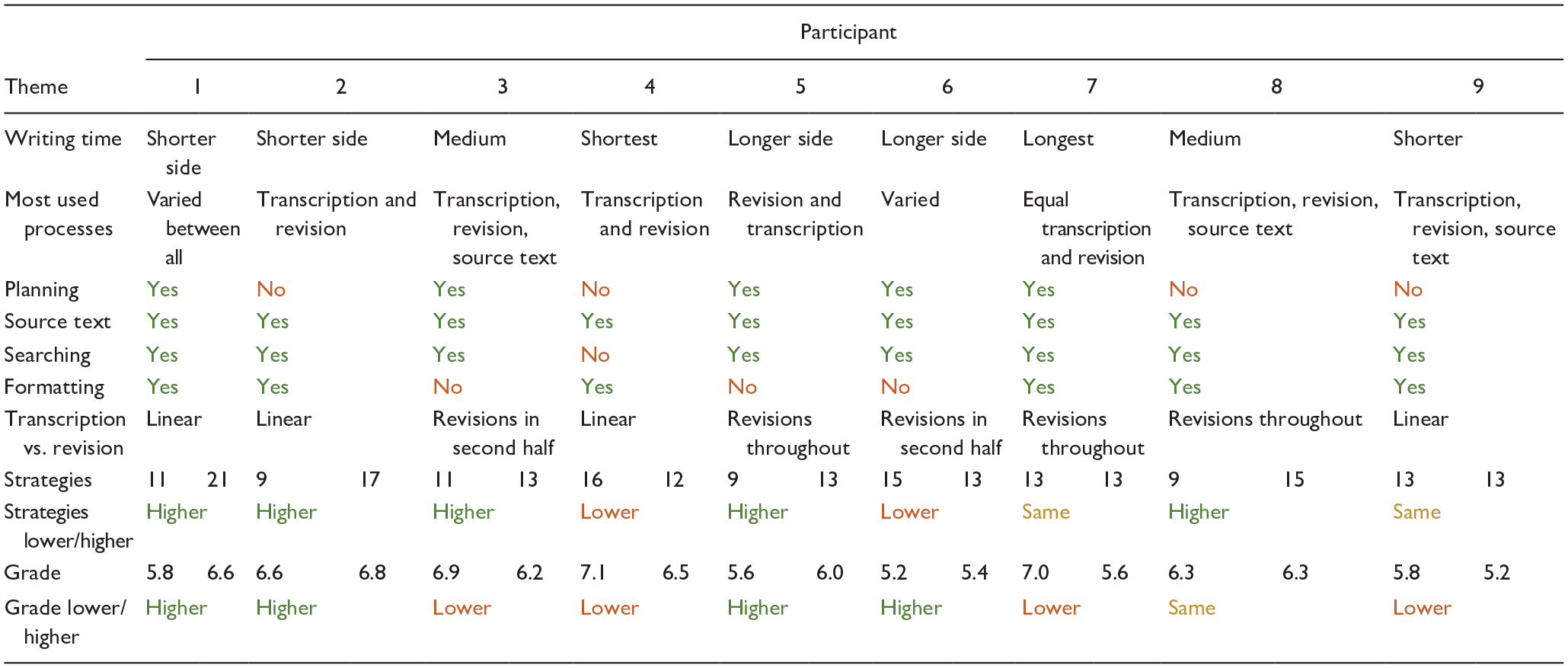

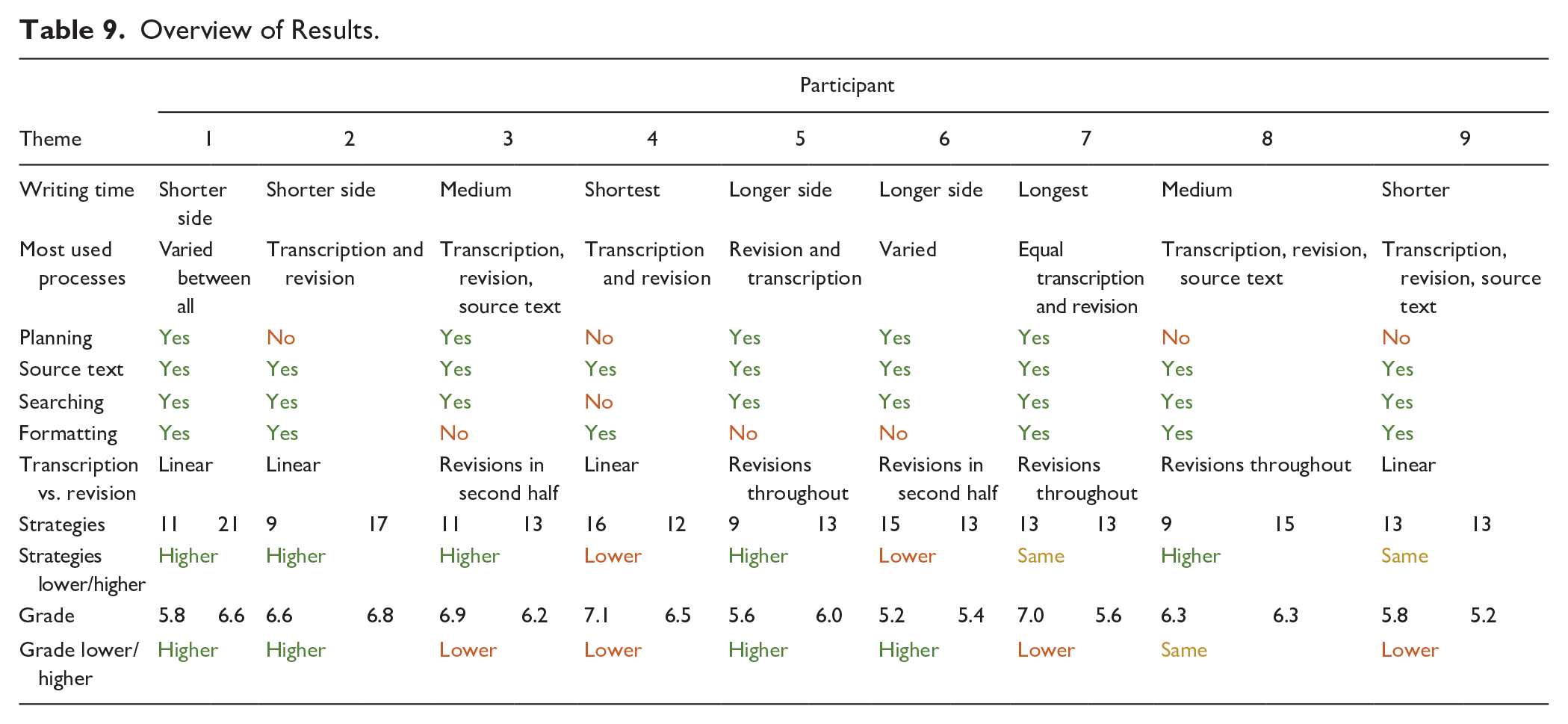

Text Production Versus Text Quality

Table 9 gives a general overview of the results per participant, combining the multiple data sources about the writing process and the writing product. We investigated multiple elements of the writing process as described in Hayes's (2012) model. Text transcription corresponds to the transcriber in the writing processes sub-level of the process level. Overall, participants spent most of their time transcribing text, except for Participant 5, who spent more time revising. The longer writing times of Participants 5, 6, and 7 coincided with the lowest grades (bar one). As all participants spent most of their time on the same processes of transcription and revision, we cannot determine a connection between the most-used processes and writing quality. In the writing model, revision is represented by the evaluator in the writing processes and by the text-written-so-far in the task environment. Whether the transcription-revision process was linear or nonlinear might have affected strategy use and grades: two of the four participants who wrote linearly (1 and 2) used the highest number of strategies and received the highest grades. For the other seven participants, no clear connection between linear versus nonlinear writing, strategies, and grades was found. In the writing model, planning practices are connected to goal setting and to the current plan and writing schemas at the control level. Five participants exhibited observable planning practices: they made an overview of subheadings or bullet points. In some cases, this plan was abandoned during writing, and time spent on planning was low, ranging from 01:07 to 02:56 min. Planning did not appear to affect strategy use or grades. The source text corresponds to the task materials at the process level of the writing model. All participants opened the source text, but some (3, 5, 6, 7, and 8) consulted it more frequently and for longer than others. Time spent on the source text did not appear to affect strategy numbers or grades.
In the model, searching online and formatting are connected to transcribing technology. One participant was not able to paste a picture; software limitations thus prevented the use of one strategy.

Overview of Results.

Writing schema knowledge from the control level was analyzed in the form of popularization strategies and the quality of the writing product. Higher strategy use did not consistently coincide with higher grades. The first-year texts of Participants 2, 5, and 9 each contained nine strategies and scored 6.6, 5.6, and 6.3 out of 10, respectively. Yet Participant 1's third-year text used 21 strategies and also scored 6.6 out of 10. Participant 9's third-year text scored the lowest (5.2 out of 10), despite using 13 strategies. For four participants (1, 2, 4, and 5), the direction of change in strategies and scores corresponded; for the other five participants (3, 6, 7, 8, and 9), it did not.

Combining self-reported information about the amount of popularization training with text quality did not yield a clear pattern. Both participants who took multiple popularization courses (7 and 9) used the same number of strategies in both years and received a lower grade for their third-year text. Of the four participants who received explicit popularization writing instruction in the fourth core course of their interdisciplinary program (1, 3, 4, and 7), only one scored higher in both strategies and grade (1); the others scored the same or lower on at least one of the two. Of the three participants who had some recollection of the first data collection, one scored higher in both grade and strategies (5), one scored lower in strategies but higher in grade (6), and one used more strategies but received the same grade (8).

Discussion

We reported on a longitudinal study into popularization discourse construction and text quality in undergraduate interdisciplinary students. We used the theoretical lenses of writing as a cognitive process and of popularization as a discourse constructed through textual strategies. We approached the writing process and the writing product as indirect measures of popularization writing skills.

Empirical Findings Versus the Literature

Cognitive theories of writing (see Flower & Hayes, 1980, 1981; Hayes & Flower, 1980) propose that different thinking (sub)processes are alternated during the writing process, and that each writer operates this alternation differently. This study supports those ideas; each participant switched among processes in an individualized way. Yet the process does not appear to be a predictor of quality: participants who switched between processes more often or who used more processes did not consistently score higher in the number of strategies or in grades.

Defining the rhetorical problem is a major component of the writing process, as writers only solve the problem that they define for themselves. An inaccurate representation of the rhetorical problem thus has major consequences (Flower & Hayes, 1981). Indeed, researchers who are experienced science communicators perform task analysis before they start writing, which includes planning practices like considering the purpose of the text and making a text structure (Negretti et al., 2023). Participants did not appear to define the rhetorical problem accurately, as indicated by the absence of the inverted pyramid structure, low strategy use in the themes Tailoring Information to the Reader and Engagement, a lack of observable planning (see also below), and a lack of improvement in writing quality over time. This points to a lack of writing schema knowledge and thus of genre knowledge of popularization discourse.

Experienced writers spend more time on text production than novice writers; they also pay more attention to planning and do so in the context of task constraints (Alamargot & Chanquoy, 2001). Planning is an important element in text construction, with Flower and Hayes (1980) describing it as a way to handle cognitive constraints. Observable planning was not a major factor in the writing process of any participant. Those who planned did so minimally and at the start of writing. Given the extensive attention to planning in participants' four core courses in interdisciplinary research skills (and, most likely, even more attention in disciplinary academic skills training), this is surprising. We were only able to code observable planning; thus, we cannot draw any conclusions about internal planning.

Skilled writers revise more often and throughout the writing process, and they revise at a conceptual level, while novice writers often only perform low-level revisions (Alamargot & Chanquoy, 2001). Most participants only performed minor revisions, often in the final minutes of writing. Yet the two participants who did perform higher-order revisions did not outperform the others. The amount, distribution, and depth of revision were not reflected in writing quality.

Multiple sources describe the strategies and moves that constitute popularization discourse (August et al., 2020; Giannoni, 2008; Hyland, 2010; Luzón, 2013; Motta-Roth & dos Santos Lovato, 2009; Nwogu, 1991; Sterk & Van Goch, 2023). Although these sources do not state that using more of these strategies leads to a better-scoring popularization text, one might expect higher strategy use to produce higher-quality popularization discourse. Yet this is not what we observed. Using more strategies, even strategies that are textual components characteristic of the genre, did not automatically increase text quality.

Limitations

This is a case study with nine participants; it offers insight into participants' writing processes and writing development, but the results cannot be generalized to the entire cohort of students. We believe that students' fatigue with computers and online activities during the COVID-19 pandemic might have contributed to the low turnout.

We used synchronous and asynchronous indirect research methods to observe student writing. We only measured the expression of the cognitive process in the form of typing and produced text. We were only able to investigate the transcriber and part of the evaluator at the process level of Hayes’s (2012) writing model. We were unable to analyze the internal processes that occur during pauses. We could have supplemented the setup with a think-aloud protocol (synchronous direct research method) or a retrospective think-aloud protocol (asynchronous direct research method) to gather information about choices made during the writing process (see Leijten & Van Waes, 2013).

During the very long pauses that were captured, participants might have performed writing-related tasks, but they might also have grabbed a coffee or scrolled through their phones. We cannot make assumptions about participants' behavior during pauses. Ideally, we would have conducted the study on-site and combined keystroke logging data with eye-tracking data (see, for example, de Smet et al., 2018) to get a better idea of the activities performed during pauses.

Students read the source text before the writing session; thus, not every participant may have prepared equally thoroughly, and the reading process was not fully captured. We used the same source text and writing assignment in both rounds of data collection. Although the two rounds took place three years apart and students reported little recollection of the first, this might still have influenced the data.

We cannot determine whether students would write this way in other settings. Factors that potentially influenced the data were participants' awareness of the screen recording and the monetary compensation. Students had to write their text in one sitting but might be used to writing in short bursts or producing text under deadline pressure. We also observed that some participants typed more accurately and quickly than others. Asking participants to first complete a copy task in Inputlog would have given us a measure of typing skills, allowing us to separate typing skills from text production skills (see Van Waes et al., 2021).

The framework for analysis of popularization strategies was used to characterize student writing in a purely analytical, not evaluative, way: we showed whether strategies were used but did not evaluate their quality or effectiveness. We only indicated whether a strategy was used, not how often. The findings from the framework did not offer insight into why participants used a specific strategy. We assumed that if a strategy was not used, this was due to a lack of genre knowledge or writing skill.

Each student who took part in the study followed an individualized study program, as is the nature of their interdisciplinary training, with the exception of four compulsory courses (30 EC) in interdisciplinary research methodology. No specific claims about the effect of training on displayed writing skills can be made; we can only approach the program in its entirety as an influencing factor.

Conclusion

In this longitudinal study, we researched interdisciplinary students' popularization writing skills both at the start of their first year and at the end of their third year of training in an undergraduate program. The writing process is highly individualized, with some participants switching between processes and others writing their text linearly. Participants generally show very little interaction with the text-written-so-far, as revisions are mainly low-level and limited to the end of the writing process. At the control level, participants in both their first and third years lack genre knowledge about popularization discourse, and their third-year writing shows a lack of writing schema knowledge or of writing schema execution. Thus, although the writing process is highly individualized, the writing products are similar in terms of quality and display of popularization discourse knowledge, and students did not improve their writing skills over time.

Impact on Practice

Our results indicate that popularization skills did not improve in an environment focusing primarily on academic discourse training. Multiple forms of educational material have been proposed in the literature to teach written popularization skills. Some are unique to the genre of popularization discourse, such as the use of expert perspectives (Brownell et al., 2013b), feedback from local journalists or experts (Heath et al., 2014; O'Keeffe & Bain, 2018), a lecture from a science writer (LaRocca et al., 2016), experiential learning opportunities (Crone et al., 2011), and speaking with community members (Petzold & Dunbar, 2018). Experts and journalists might, for example, share with students how they approach the writing process, which connects mainly to the process level of Hayes's (2012) model, while engaging with community members could help students find an angle for their story, which connects to collaborators in the task environment.

Other educational materials are compatible with academic discourse training, such as explicit teaching of assessment rubrics (Moni et al., 2007; Poronnik & Moni, 2006), formative teacher feedback (Boynton, 2018; Crone et al., 2011; LaRocca et al., 2016; Latimore et al., 2014; Mercer-Mapstone & Kuchel, 2016; Moni et al., 2007; Poronnik & Moni, 2006; Rakedzon & Baram-Tsabari, 2017a), in-class writing (Druschke et al., 2022), and peer review (Brownell et al., 2013b; Crone et al., 2011; Donohue et al., 2021; Druschke et al., 2022; Harrington et al., 2021; Heath et al., 2014; Latimore et al., 2014; Poronnik & Moni, 2006). Peer review and teacher feedback could help students improve their skills through rewriting, which connects to the evaluator at the process level as well as goal setting at the control level in Hayes’s (2012) model. Explicit teaching of rubrics could connect to discourse knowledge, which is part of the current plan at the control level.

Another option is comparison exercises (Boynton, 2018), which require a combination of academic and popularization discourse training and could focus attention on the similarities and differences between the two genres. What is more, Driscoll et al. (2020) showed that genre awareness, even if it is simplistic, has a positive effect on writing development. They promoted fostering metacognitive knowledge in the form of awareness of the writing process and of students' own skill level (Driscoll et al., 2020), which is a form of explicit reflection on both the process level and the resource level of Hayes's (2012) model. Thus, attention needs to be paid to explicit teaching of popularization as a genre, including its genre characteristics, which connects to the current plan at the control level. All in all, the results from our study, in combination with the options for educational materials from the literature, support the need for explicit teaching of popularization discourse.

Supplemental Material

sj-docx-1-wcx-10.1177_07410883251349204 – Supplemental material for Popularization Writing Skills Development: A Longitudinal Case Study of the Writing Process and Writing Outcomes in Nine Undergraduate Interdisciplinary Students

Supplemental material, sj-docx-1-wcx-10.1177_07410883251349204 for Popularization Writing Skills Development: A Longitudinal Case Study of the Writing Process and Writing Outcomes in Nine Undergraduate Interdisciplinary Students by Florentine Marnel Sterk, Merel van Goch, Michael Burke and Iris van der Tuin in Written Communication

Footnotes

Acknowledgements

We would like to thank Niels Ockeloen for his support in the technical setup and in the data visualization of the video analysis. It is thanks to his expertise that we were able to conduct this study entirely online during the COVID-19 pandemic. We would also like to thank the nine students who participated in this study.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.


Notes

Author Biographies

References
