Abstract

This article provides three major contributions to the literature: we provide granular information on the development of student argumentative writing across secondary school; we replicate the MacArthur et al. model of Natural Language Processing (NLP) writing features that predict quality with a younger group of students; and we are able to examine the differences for students across language status. In our study, we sought to find the average levels of text length, cohesion, connectives, syntactic complexity, and word-level complexity in this sample across Grades 7-12, by sex, by English learner status, and for essays scoring above and below the median holistic score. Mean levels of variables by grade suggest a developmental progression with respect to text length, with the text length increasing with grade level, but the other variables in the model were fairly stable. Sex did not seem to affect the model in meaningful ways beyond the increased fluency of women writers. We saw text length and word-level differences between initially designated and redesignated bilingual students compared to their English-only peers. Finally, we see that the model works better with our higher scoring essays and is less effective at explaining the lower scoring essays.

Introduction

Students’ difficulties in writing adversely affect their progression through school and ultimately impact their access to, persistence through, and performance in postsecondary education and careers (Brandt, 2014). Although secondary students struggle to write well, little has changed in the last decade or two in that little time is devoted to writing or teaching writing beyond early elementary school (Graham & Harris, 2019). Available evidence raising concerns about students’ ability to write well comes from a variety of sources, including nationwide test results from the National Assessment of Educational Progress (NAEP; unfortunately, the most recent available NAEP writing results are from 2011 due to measurement issues found in 2017, National Center for Education Statistics [NCES], n.d.-b). For example, performance on NAEP is particularly poor for English learners; the average score for eighth-grade English learners in 2011 was 108 compared to an average score of 152 for non-English learners (NCES, n.d.-a). The low scores for English learners fall below the cutoff score for the NAEP Writing Basic achievement level (120-172). The Basic achievement level reflects students who are able to address the tasks appropriately and mostly accomplish their communicative purposes, compared to the Proficient achievement level for students who clearly accomplish their communicative purposes. Beyond test scores, reports of significant learning delays in reading and math during the pandemic (Center for Research on Education Outcomes [CREDO], 2020; Dorn et al., 2020; Kogan & Lavertu, 2021; Kuhfeld et al., 2020) are likely to extend to writing (see, e.g., Skar et al., 2022, with young children in Norway), which is historically less of an instructional priority than reading and math.

Despite the weak performance of many students on large-scale tests of academic writing in the United States, teachers struggle to teach students academic writing (Harris & McKeown, 2022). Most teachers have insufficient training in writing pedagogy, in both pre-service courses and after they begin teaching (Graham et al., 2014). They struggle to find time in the curriculum; are burdened by low self-efficacy for teaching writing (Graham et al., 2001); and have limited research-based, efficient, and effective curricula to improve student writing in middle and high school. Indeed, teachers—and researchers—have trouble even defining the components of quality academic writing, let alone instruction on how to best teach academic writing.

The goal of this article is to generate insight into what one type of academic writing, argumentative writing, looks like and how it develops over middle and high school in order to potentially develop effective, research-based interventions for the teaching of writing. For this purpose, we closely examine linguistic features, namely cohesion, use of connectives, syntactic complexity, and word-level complexity, that are likely to predict the writing quality (in this case, defined as holistic scores given by human readers using a rubric defining the qualities of interest) of the argumentative essays of secondary school students. We chose these features based on current research literature and the model of MacArthur et al. (2019), which was successfully used in connection with basic-level undergraduate students. Our analysis was conducted on a corpus of source-based argument writing gathered prior to a well-established literacy intervention for secondary students, the Pathway to Academic Success Project (Olson et al., 2020). This article provides three major contributions to the literature: we provide granular information on the development of student argumentative writing across secondary school; we replicate the MacArthur et al. (2019) model of NLP writing features that predict quality with a younger group of students; and, because the student population at this level is more heterogeneous than college students, we are able to look at the differences for students across language status.

Background

Writing is not done in a vacuum but in conversation with the author’s purpose, audience, tools, and genre. Scholars subscribing to sociocultural theory focus on the social environment, or context, and its effects on learning (Wertsch, 1998). Literacy is seen as multiple situated, mediated sociocultural practices, as motivated and socially organized activity (Deane et al., 2012; Prior, 2006; Scribner & Cole, 1981). With this in mind, we explore differences in writing from several different angles: as students progress in their education (grade level), by sex because some research indicates that women tend to be more fluent at text-production than men, at least during the mandatory school years, and by language status because multilingual learners face particular challenges—and bring specific skills—when writing in a second language.

Effective written compositions convey the author’s intended message clearly and precisely for the given goal and audience. Therefore, language skills are important to the quality of written composition, and this is reflected in the classical and more recent theoretical models of writing (e.g., Berninger et al., 2002; Flower & Hayes, 1981; Y.-S. G. Kim & Graham, 2022). The contributions of linguistic knowledge to written compositions have been shown in studies where language skills such as vocabulary, grammatical knowledge (morphosyntactic and syntactic knowledge), and discourse oral language were examined as predictors of writing quality (Coker, 2006; Y.-S. G. Kim et al., 2015; Y.-S. G. Kim & Schatschneider, 2017). Another approach to examining the role of linguistic knowledge in written compositions is an examination of linguistic features in the compositions themselves. Studies in this line of work found that essays containing more infrequent words were considered to be of higher quality by expert raters (Crossley et al., 2011; McNamara et al., 2010). In addition, more advanced writers use fewer common, concrete words (Crossley et al., 2011), and fewer short words (Haswell, 2000). Ultimately, more sophisticated words are evidence of higher overall text quality (Crossley, 2020). Text cohesion is also related to writing quality. As early as kindergarten, children begin to use cohesive devices, including referential pronouns and connectives (Y.-S. Kim et al., 2011), and they continue to develop their proficiency with them until about the eighth grade (McCutchen, 1986; McCutchen & Perfetti, 1982), after which they reduce their reliance on explicit cohesive devices to create coherence (Crossley et al., 2011).
Furthermore, syntactic skills are related to writing quality: more advanced writers show more refined sentence generation skills than beginning writers (McCutchen et al., 1994), syntactic complexity develops from first grade through college (Haswell, 2000), and students produce fewer run-on sentences and sentence fragments as they develop as writers (Berninger et al., 2011). However, traditional measures such as mean T-unit length have yielded inconsistent results across studies, a problem that the Coh-Metrix measures are intended to reduce (Crossley, 2020).

Because of the multifaceted nature of writing, the evaluation of writing quality is challenging. As a result, many test companies use holistic scoring by human raters as a parsimonious and practical approach (e.g., like those used by classroom teachers) to evaluate writing quality. Although the use of rubrics has been found to improve the consistency and reliability of human holistic ratings, human raters may be influenced by irrelevant constructs such as the quality of student handwriting or variations in grammar, spelling, or dialect features (Spandel & Stiggins, 1980; Graham et al., 2011; Malouff & Thorsteinsson, 2016; but see Nesbitt, 2022, related to African American Vernacular English). Indeed, this journal has a long history of attending to issues of fairness in the assessment of writing, connecting the measurement and composition communities (Poe & Elliot, 2019). In that same spirit, we acknowledge the biases and constraints of human holistic scoring and urge continued efforts at improvement. We also recognize the widespread use of holistic human scoring (both by teachers and in wider-scale assessments) for secondary students in classrooms and believe that there is a contextual validity to the measure that makes it of interest and that scrutiny of such scoring may inform future practice.

Differences in writing quality by sex (Graham et al., 2017; Reilly et al., 2019; Steiss et al., 2022) and language proficiency (Kim et al., 2021; Graham & Perin, 2007; National Center for Education Statistics [NCES], 2012) are well documented, and we were motivated to better understand the underlying differences in students’ writing in order to inform instruction to close the proficiency gap. For example, only 1% of English learners at both Grades 8 and 12 scored proficient or above in the National Assessment of Educational Progress in writing (NCES, 2012).

Coh-Metrix (McNamara & Graesser, 2012) is a natural language processing tool that provides a wide range of indices of linguistic and discourse representations of texts. It provides 108 different indices categorized into 11 groups, ranging from descriptive information such as the number of words in the text, to complex indices, such as lexical diversity and syntactic complexity (McNamara et al., 2014).

Numerous studies have used Coh-Metrix to identify specific measures of writing that describe the difference between high-scoring and low-scoring writing. For example, McNamara et al. (2010) studied 120 argumentative essays by first-year college students at Mississippi State University to distinguish the difference between those rated by human scorers as high and as low based on the standardized rubric commonly used in assessing SAT essays. They found that the three most predictive indices of essay scores were syntactic complexity (measured by the number of words before the main verb), lexical diversity (measured by the Measure of Textual Lexical Diversity), and word frequency (measured by Celex). None of the 26 measures in Coh-Metrix of cohesion showed differences between ratings.

Coh-Metrix has also been used to explore writing development. In one example, Crossley et al. (2011) examined 9th-grade, 11th-grade, and first-year college students’ argumentative essays along the dimensions of lexical sophistication, syntactic complexity, text structure (a combination of word length, number of paragraphs, and number of sentences), and cohesion. Ultimately, they determined that students produce more sophisticated words and use more complex sentence structure as grade level increases but use fewer cohesive features. Not surprisingly, the strongest predictor of grade level was the number of words in a text. As noted by MacArthur et al. (2019), “research has found consistent correlations of quality with length and with lexical diversity and complexity, but variable correlations of quality with syntactic complexity and cohesion” (p. 1557). Length is consistently the strongest predictor of essay quality for secondary and college students (Crossley et al., 2011; MacArthur et al., 2019; McNamara et al., 2013; Powers, 2005). Syntactic complexity is more complicated, with indications at the college level sometimes showing positive correlations with quality and other times showing no significant relationship (Crossley & McNamara, 2014; Crossley et al., 2011; McNamara et al., 2010). The correlation between cohesion and quality has been even more mixed (see Crossley et al., 2011; McNamara et al., 2010, 2013; Perin & Lauterbach, 2016).

The Current Study

Despite the growing literature on the relations of linguistic features identified by natural language processing to writing quality for college and adult writers, there has been insufficient empirical study at the secondary level to shed light on the features of argumentative writing that has been highly scored by human raters. Therefore, we investigated linguistic features—cohesion, use of connectives, syntactic complexity, and word-level complexity—in the argumentative essays of secondary school students and their relations to writing quality as scored by human raters, using data from students in Grades 7 to 12. We sought to answer the following research questions:

What are the average levels of text length, cohesion, connectives, syntactic complexity, and word-level complexity in this sample across Grades 7-12, by sex, by English learner status, and for essays scoring above and below the median holistic score?

How do text length, cohesion, connectives, syntactic complexity, and word-level complexity predict writing quality (human, holistic score) for secondary school writers’ argumentative texts?

We hypothesized that the average text length and word-level complexity would increase as the grade level increased and in the higher scoring essays compared to the lower scoring essays. We had no clear expectations for syntactic complexity because we had seen in other work that developing writers frequently use run-on sentences that are complex but ineffective.

Method

Data Source

This study is a secondary data analysis of texts from a multisite randomized controlled trial designed to validate and scale up an existing successful professional development program: The Pathway to Academic Success Project (Olson et al., 2020). The Pathway to Academic Success Project trains teachers to improve the reading and writing abilities of English learners who have an intermediate level of English proficiency by incorporating cognitive strategies into reading and writing instruction. From this project, we collected the pretest argumentative essays of students in Grades 7 to 12 (n = 1076), including a subset randomly selected and holistically scored by the independent external evaluator (n = 174; see Supplemental Materials for additional details). The full analytic sample comprised the following demographics: 53% men; 67% Hispanic, 18% White, 2% Asian, 2% African American; and 58% English only, 6% English learners, 9% initially fluent English proficient, and 26% reclassified fluent English proficient. All data were accessed and used in accordance with the University of California, Irvine Institutional Review Board (2019-5085).

The prompts for these texts asked students to read a single text, a nonfiction newspaper article, and then write about the author’s message (i.e., present a theme statement), analyze the author’s use of figurative language, and discuss the author’s purpose (Appendix 1). This type of essay is an argument of interpretive analysis (Smith et al., 2012) or literary judgment (Hillocks, 2011). Pretests were used for both summative and formative purposes in the related intervention and were designed to focus on students’ ability to conduct high-level text interpretation and engage in increased revision to craft improved analytical texts. Such texts are simply a single genre, however, and cannot predict all writing development across genres.

The essays were scored by trained raters (recruited from current and former teachers affiliated with local National Writing Project sites not participating in the intervention) on a prompt-agnostic rubric for evaluation purposes, the Analytic Writing Continuum for Literary Analysis (AWC-LA) developed by the National Writing Project (Bang, 2013). Each handwritten paper was given a holistic rating as well as ratings on each of four attributes: content, structure, sentence fluency, and conventions for a total of five scores. Raters agreed within a single score point for 90% of papers on the holistic score (Olson et al., 2020). These five scores are used in our analyses as the quality of writing. An independent evaluator monitored the scoring to ensure that impartial processes were followed, and reliability of the scoring was assessed separately for each writing attribute measure through double scoring 10% of the papers (Olson et al., 2020).

For this study, the handwritten student texts were transcribed by a third-party service, which was asked to use a standard spelling correction tool and insert any omitted periods at sentence endings where appropriate to enable natural language processing of the texts. Correction of spelling errors at pretest in the MacArthur et al. (2019) sample was more aggressive than that employed with our sample, with researchers correcting homonyms, apostrophes, abbreviations, and capitalization, as well as general spelling errors.

Linguistic Indices

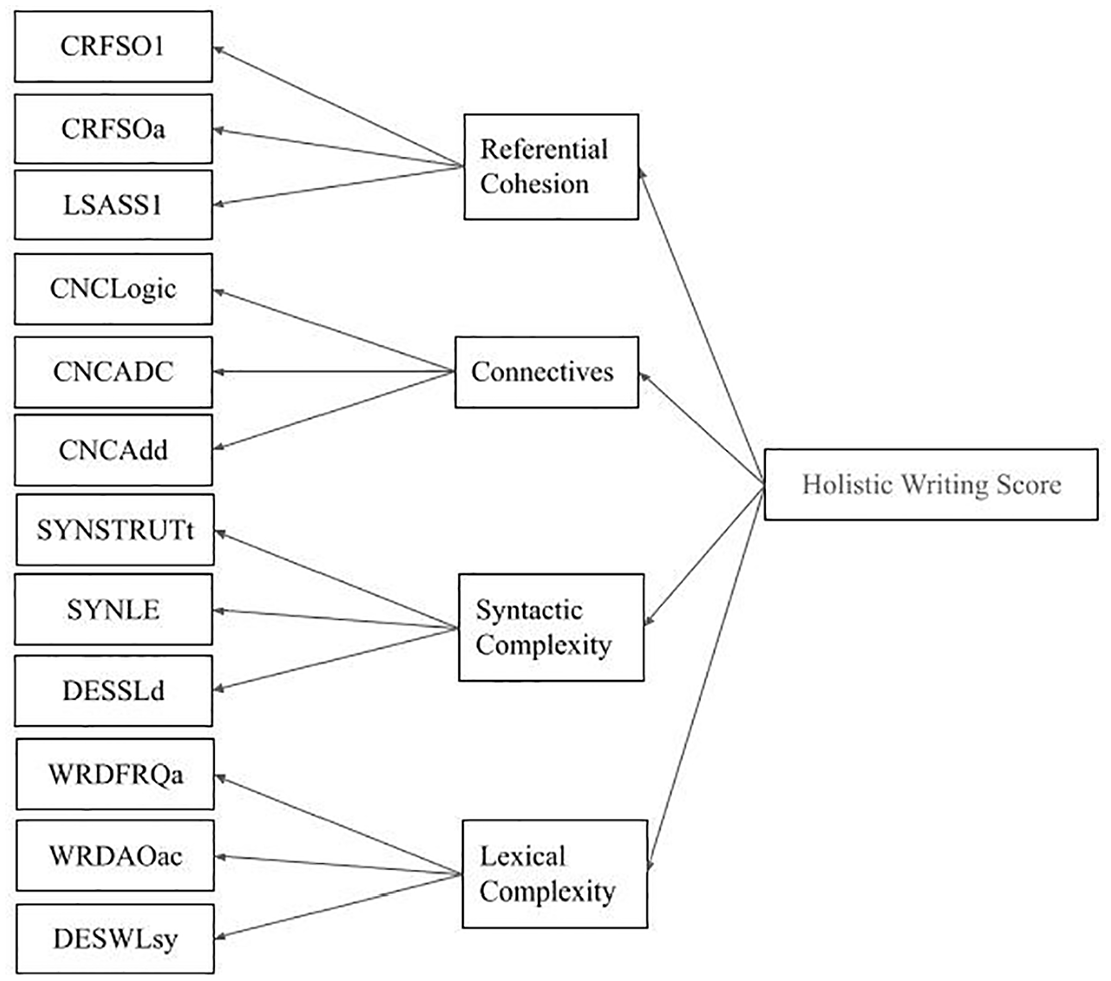

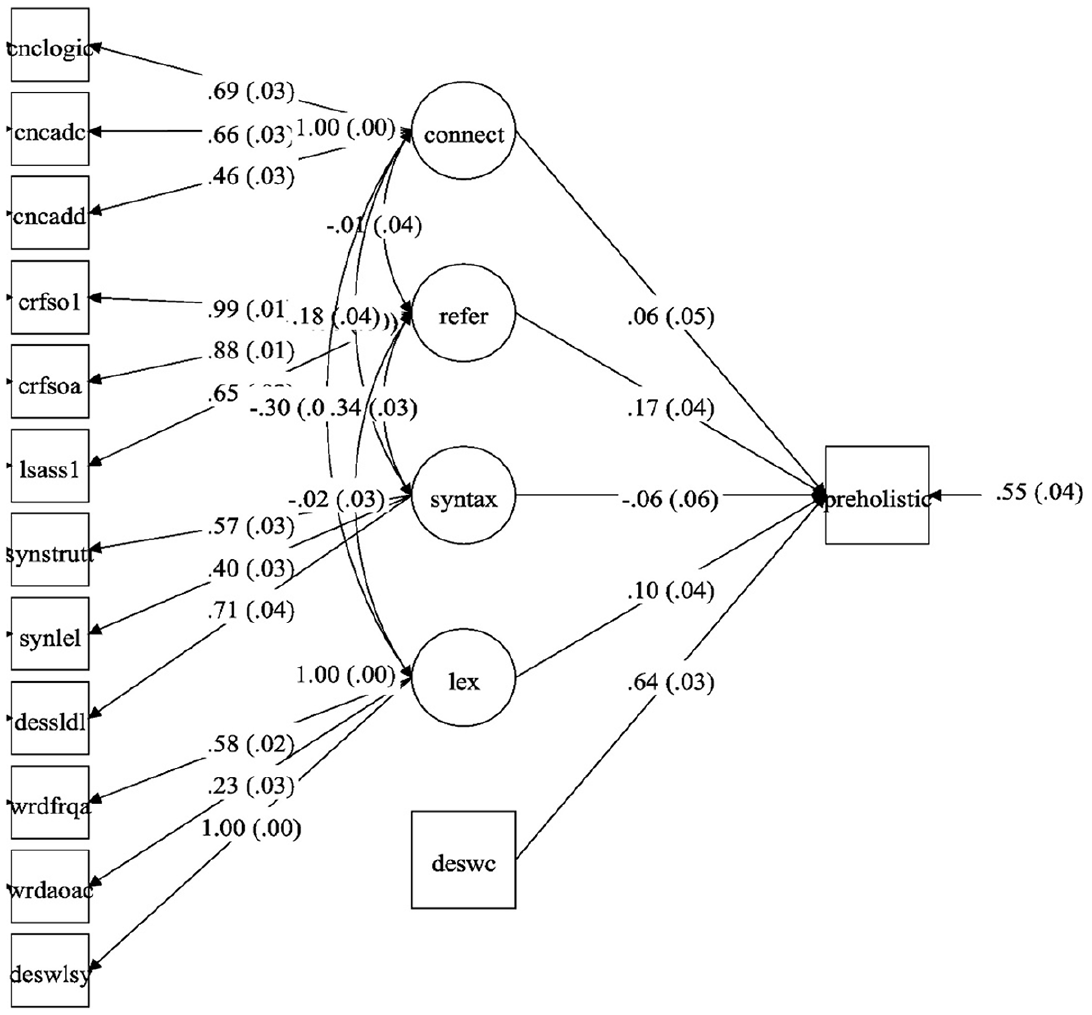

The transcribed written texts were processed by researchers using the Coh-Metrix (Graesser et al., 2004) natural language processing tool. Because studies show that length is highly predictive of quality, text length was controlled for in the model of lexical and syntactic complexity and cohesion. Indices were selected to represent four subconstructs based on theoretical considerations and prior research: word-level complexity, syntactic complexity, and two types of cohesion—referential cohesion and connectives (see Figure 1). Ultimately, we looked to MacArthur et al.’s (2019) study of basic college writers (n = 252) as the model for our work for three primary reasons: Basic college writers were close to our high school students’ developmental level; MacArthur et al. (2019) used a corpus of persuasive essays, a genre closely aligned with our corpus; and their analytical method was compelling because they eliminated indices that were highly correlated with length in order to avoid confounding interpretations.

Model of Coh-Metrix subconstructs, with the underlying variables predicting holistic writing score.

Word-level complexity measures from Coh-Metrix were selected from the categories of Lexical Diversity (the number of unique words compared to total words), Word Information (the frequency with which words are used and indices of age of acquisition of the words, concreteness, and imageability), and Descriptive measures related to words. Syntactic complexity indices were selected from the categories of Syntactic Complexity (e.g., length of nominal phrases and similarity of syntactic structure across sentences), Syntactic Pattern Density (relative incidence of types of phrases and word forms like noun and verb phrases), and Descriptives related to sentences. Referential cohesion came from the Coh-Metrix category of the same name, referring to links between words and across sentences that help with sense-making by readers, and from Latent Semantic Analysis, which considers semantically related words (“house” and “home”). Finally, connectives came from the category with the same name and describe words that make temporal (“then”), additive (“in addition”), contrastive (“on the other hand”), and other connections within a text. MacArthur et al. (2019) retained variables that had a correlation of less than r = 0.20 with essay length and a correlation with other indices in the same construct of less than r = 0.90 (to avoid collinearity) and at least 0.30 (so they were related to the same underlying construct).
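The screening rules described above can be illustrated with a minimal sketch. It is not the code used by MacArthur et al. (2019) or in this study; the function names, the use of absolute correlations, and the toy values are all assumptions made for illustration.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def passes_length_screen(index_values, essay_lengths, cutoff=0.20):
    """True if the index correlates with essay length below the cutoff.
    Assumption: the cutoff is applied to the absolute correlation."""
    return abs(pearson(index_values, essay_lengths)) < cutoff

def pair_in_band(index_a, index_b, low=0.30, high=0.90):
    """True if two indices in one construct correlate at least `low`
    (related) but less than `high` (not collinear).
    Assumption: the band is applied to the absolute correlation."""
    return low <= abs(pearson(index_a, index_b)) < high

# Hypothetical values for six essays; not data from the study.
lengths = [1, 2, 3, 4, 5, 6]
index_a = [1, -1, 0, 0, -1, 1]
index_b = [1, 0, -1, -1, 0, 1]
index_c = [1, 2, 3, 4, 5, 7]  # tracks length closely

print(passes_length_screen(index_a, lengths))  # True
print(passes_length_screen(index_c, lengths))  # False
print(pair_in_band(index_a, index_b))          # True
```

The text does not say whether an index must fall in the 0.30 to 0.90 band with every other index in its construct or only with some of them, so the sketch checks one pair at a time and leaves that decision to the caller.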

Data Analysis

Descriptive data on means and correlations were used to determine the average levels of text length, cohesion, connectives, syntactic complexity, and word-level complexity across Grades 7-12, by sex, by English learner status, and by holistic score using Stata (v.15). We addressed the first research question, whether there were systematic differences across the writing dimensions as a function of grade, sex, English proficiency, and writing performance, using a series of multivariate analyses of variance (MANOVAs). We used Pillai’s trace statistic as it gives robust results with unbalanced samples with nonnormal and heterogeneous variance (Ateş et al., 2019). We used the Bonferroni adjustment to protect against experimentwise error. To address the second research question, regarding the contributions of text length, cohesion, connectives, syntactic complexity, and word-level complexity to writing quality, we calculated structural equation models of the four latent subconstructs and their contributions to the essay quality (holistic score). MPlus version 8 (Muthén & Muthén, 2017) was used with maximum likelihood estimation. In order to match the MacArthur et al. (2019) analysis, we transformed the SYNSTRUTt and WRDFRQa variables into negative values. SYNLE and DESSLd were transformed because of their distributional properties by taking the log of each.
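The variable transformations and the Bonferroni adjustment mentioned above are simple enough to sketch. The helper names below are hypothetical, the natural log is an assumption (the text does not state the base or how zero values were handled), and the number of tests per family is illustrative because it is not stated in the text.

```python
import math

def negate(values):
    """Reverse the direction of an index, as described for SYNSTRUTt and WRDFRQa."""
    return [-v for v in values]

def log_transform(values):
    """Log-transform a skewed index, as described for SYNLE and DESSLd.
    Assumptions: natural log, and all values strictly positive."""
    return [math.log(v) for v in values]

def bonferroni_alpha(alpha, n_tests):
    """Per-test significance threshold under a Bonferroni adjustment."""
    return alpha / n_tests

# Hypothetical index values; not data from the study.
synstrut = [0.12, 0.08, 0.15]
synle = [2.5, 4.0, 1.0]

print(negate(synstrut))           # [-0.12, -0.08, -0.15]
print(log_transform(synle))
print(bonferroni_alpha(0.05, 3))  # threshold for an illustrative family of three tests
```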

To illuminate the difference in higher and lower quality essays, as part of our heterogeneity analysis for Research Question 1, we replicated the McNamara et al. (2010) process for analyzing higher quality essays by splitting the corpus at the median holistic score (in our case, essays of 3 and above on the 1-6 point scale).
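A minimal sketch of the split described above, assuming each essay is represented as an (identifier, holistic score) pair. The function name and the sample records are hypothetical; only the cutoff of 3 comes from the text.

```python
def split_by_holistic_score(essays, cutoff=3):
    """Split essays into higher (score >= cutoff) and lower (score < cutoff) groups.

    essays: list of (essay_id, holistic_score) tuples on the 1-6 scale.
    """
    higher = [essay for essay in essays if essay[1] >= cutoff]
    lower = [essay for essay in essays if essay[1] < cutoff]
    return higher, lower

# Hypothetical records; not data from the study.
sample = [("e1", 2), ("e2", 3), ("e3", 5), ("e4", 1)]
higher, lower = split_by_holistic_score(sample)
print(higher)  # [('e2', 3), ('e3', 5)]
print(lower)   # [('e1', 2), ('e4', 1)]
```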

Results

Research Question 1: Linguistic Characteristics

Our first research question sought to describe the length and linguistic characteristics of student writing across Grades 7 to 12, with particular attention to individual differences based on sex and English learner status. We also sought to determine how stronger and weaker argumentative essays (based on human, holistic scoring) differ along these dimensions systematically.

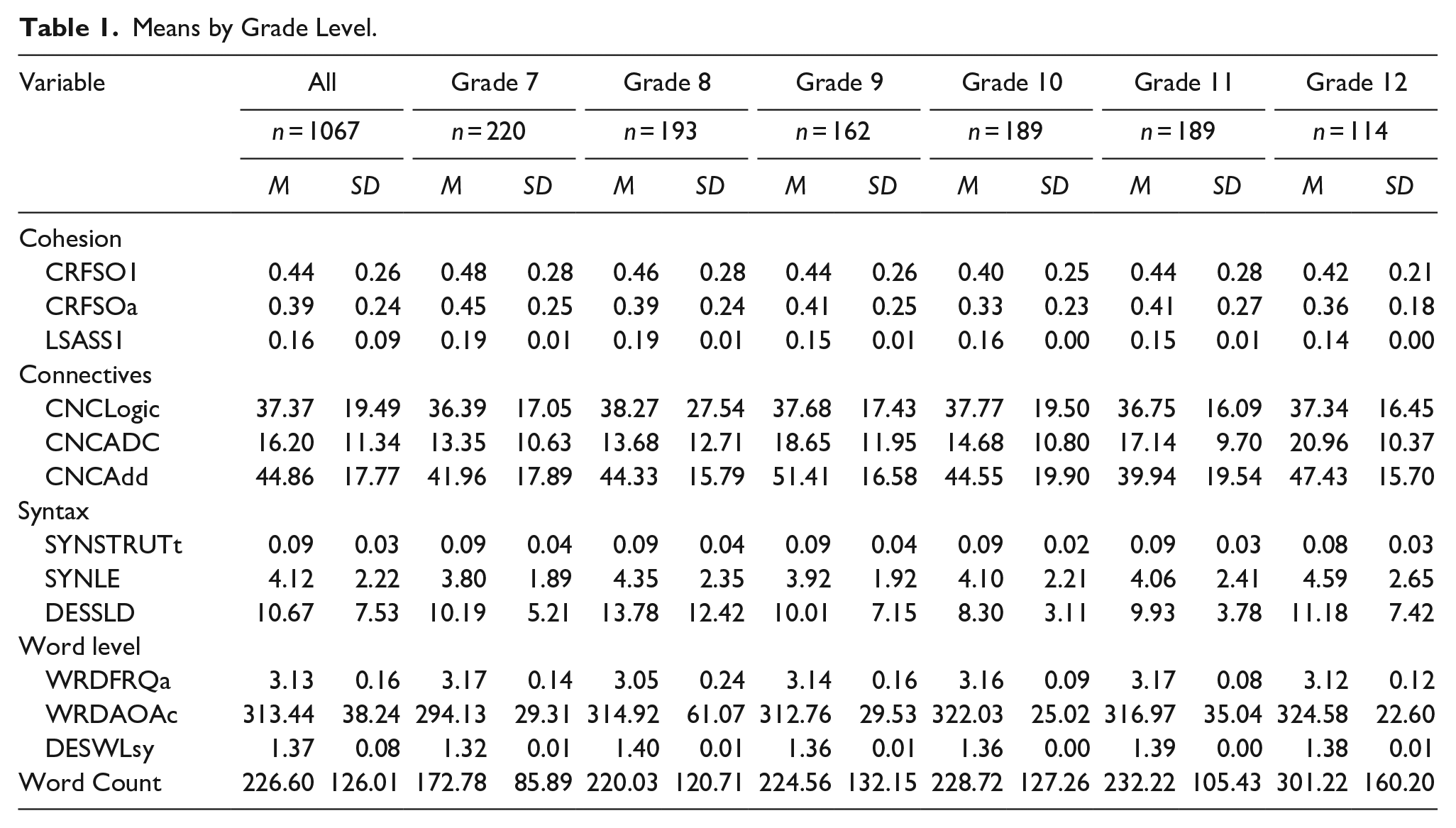

We first examined writing performance across the secondary school grades on the task in which students read a single text, a nonfiction newspaper article, and then wrote about the author’s message (i.e., presented a theme statement), analyzed the author’s use of figurative language, and discussed the author’s purpose. Table 1 summarizes the means and standard deviations of the linguistic indices and length of the essays, both in aggregate and by grade level. We found that the variability for most variables is rather high, indicating quite a bit of heterogeneity. We also note that English language status varied across the grade levels and may have confounded some of our grade-level findings. For example, English-only students were a low of 54% in Grade 11 but 64% in Grade 7. English learners ranged from 3% in Grades 11 and 12 to 12% in Grade 8.

Means by Grade Level.

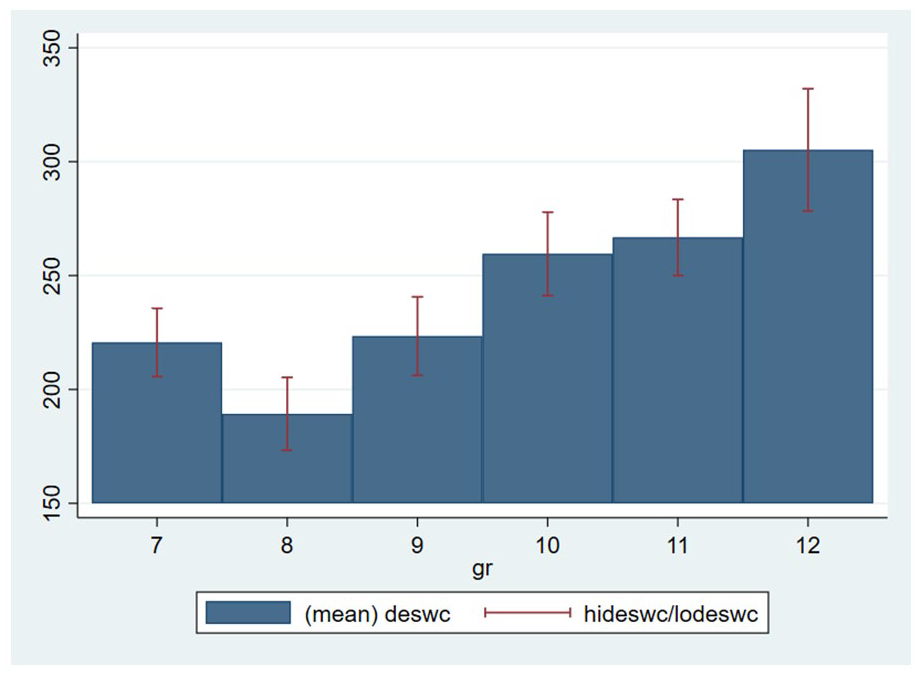

A pair of analyses of variance (ANOVAs) revealed significant grade-level differences in writing length, F(5, 1061) = 16.37, p < .0001. Bonferroni post hoc comparisons revealed differences in essay length, as students in 11th and 12th grades wrote significantly longer essays than students in 7th through 9th grades. Students in 12th grade wrote longer essays than 10th-grade students, but not those students in 11th grade.
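For readers less familiar with the F notation, the sketch below computes a one-way ANOVA F statistic and its degrees of freedom from grouped observations. With k groups and N observations, the degrees of freedom are (k - 1, N - k), so F(5, 1061) is what a standard one-way design with six grade levels and 1,067 essays would produce. The function name and the toy data are hypothetical, and this is not the Stata routine used in the study.

```python
def one_way_anova(groups):
    """Return (F, df_between, df_within) for a list of groups of observations."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    group_means = [sum(g) / len(g) for g in groups]
    # Variation of group means around the grand mean, weighted by group size.
    ss_between = sum(len(g) * (m - grand_mean) ** 2
                     for g, m in zip(groups, group_means))
    # Variation of observations around their own group mean.
    ss_within = sum((x - m) ** 2
                    for g, m in zip(groups, group_means) for x in g)
    df_between, df_within = k - 1, n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

# Hypothetical word counts for three small groups; not data from the study.
f_stat, df_b, df_w = one_way_anova([[1, 2, 3], [2, 3, 4], [5, 6, 7]])
print(df_b, df_w)  # 2 6
print(f_stat)
```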

We then examined grade-level patterns in the different linguistic features. We calculated a series of MANOVAs on the three measures of cohesion (CRFSO1, CRFSOa, and LSASS1), use of connectives (CNCLogic, CNCADC, and CNCAdd), syntax (SYNSTRUTt, SYNLE, and DESSLd), and word use (WRDFRQa, WRDAOAc, and DESWLsy). Looking across all grade levels, we found significant grade-level differences for cohesion, F(15, 3183) = 2.18, p = .005; the use of connectives, F(15, 3183) = 3.29, p < .0001; syntax, F(15, 3183) = 2.20, p = .005; and word use, F(15, 3183) = 7.44, p < .0001. When considering cohesion, a subsequent series of ANOVAs using the Bonferroni adjustment for multiple comparisons revealed significant grade-level differences only for LSASS1, F(5, 1061) = 4.63, p = .0004. Tukey’s post hoc tests showed that students in 7th and 8th grades had higher LSASS1 scores than students in 12th grade. When considering connectives, we found significant grade-level differences for CNCLogic, F(5, 1061) = 2.84, p = .015, and CNCAdd, F(5, 1061) = 3.78, p = .002, but not for CNCADC, F(5, 1051) = 1.50, p = .19, such that students in 7th grade used logical connectives (CNCLogic) to a greater degree than those in 11th and 12th grades. When considering syntax, we found significant grade-level differences for SYNSTRUTt, F(5, 1051) = 3.42, p = .004, and DESSLd, F(5, 1051) = 2.63, p = .02. Students in 12th grade used a wider range of syntactic structures than students in 7th and 8th grades, as indicated by lower SYNSTRUTt scores. Finally, we found significant grade-level differences for each of the word-level variables (WRDFRQa: F(5, 1061) = 4.88, p = .0002; WRDAOAc: F(5, 1061) = 11.05, p < .0001; DESWLsy: F(5, 1061) = 12.58, p < .0001). Students in 7th grade used higher frequency words (WRDFRQa) and words with younger age of acquisition scores (WRDAOAc) than students in Grades 8, 9, 10, and 12.
Bonferroni post hoc comparisons indicated that 8th-grade students used lower frequency words (WRDFRQa) than students in Grades 7, 10, and 11. Students in 7th grade used shorter words with younger age of acquisition scores (DESWLsy and WRDAOAc) than students in all other grade levels. No other grade-level effects were significant.

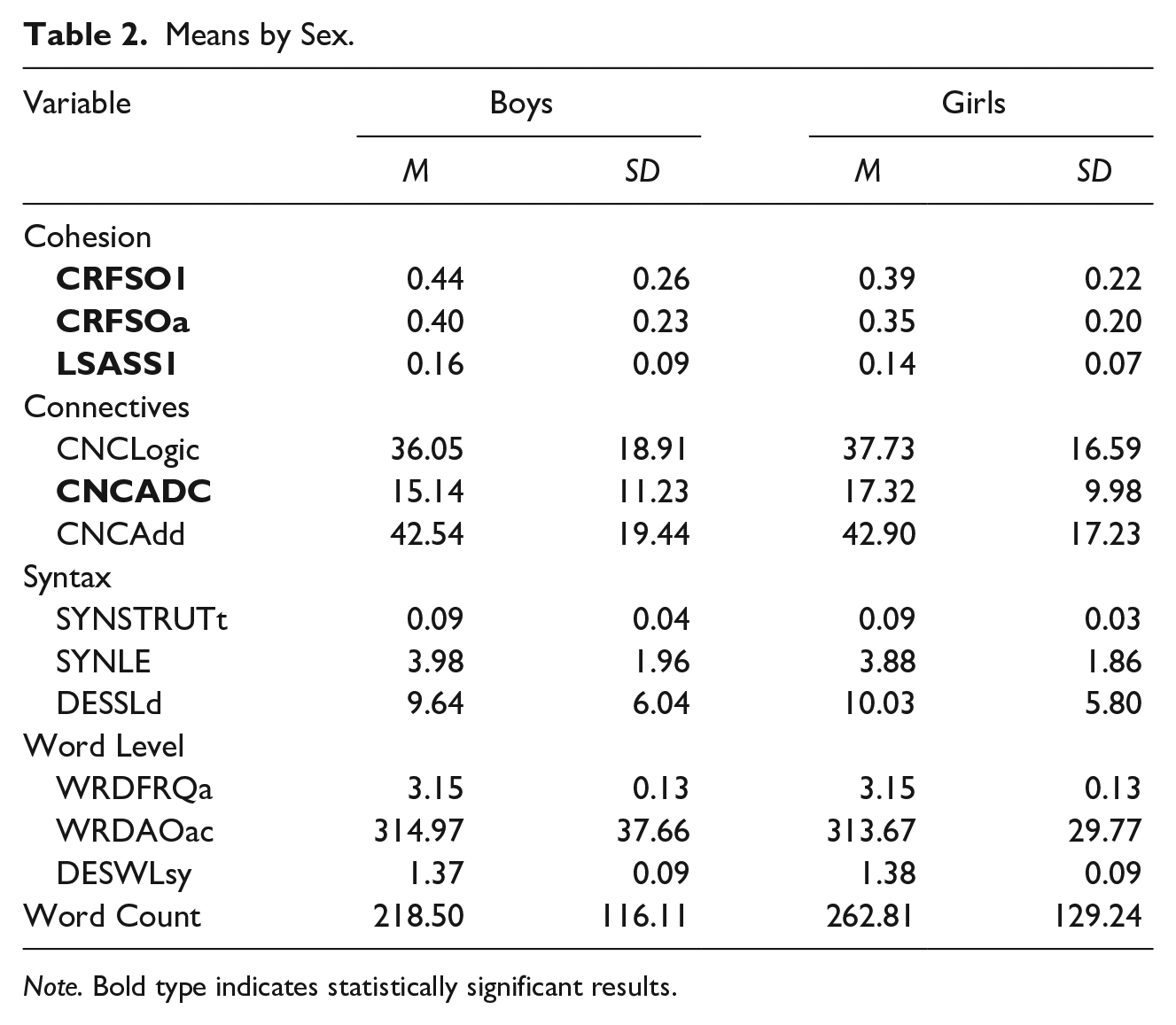

Student performance as a function of sex is summarized in Table 2. Girls wrote longer papers, F(1, 1065) = 34.80, p < .0001. We next calculated a series of MANOVAs to examine variations in the linguistic features as a function of sex. Boys’ writing showed higher cohesion scores, F(3, 1063) = 5.35, p = .001. A subsequent series of ANOVAs using the Bonferroni adjustment for multiple comparisons revealed that boys had higher cohesion scores for CRFSO1, F(1, 1065) = 10.17, p = .001; CRFSOa, F(1, 1065) = 13.94, p = .0002; and LSASS1, F(1, 1065) = 10.03, p = .002. While girls showed greater overall use of connectives, F(3, 1063) = 3.89, p = .009, these differences were limited to the use of adversative connectives (CNCADC), F(1, 1065) = 11.09, p = .0001. No other sex differences were significant. Calculating the coefficients of variation (standard deviation/mean), we see that boys’ variability is much higher than girls’ for most items.
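The variability comparison above rests on the ratio of standard deviation to mean. A minimal sketch follows, assuming the sample (n - 1) standard deviation, which the text does not specify; the function name and values are hypothetical.

```python
from statistics import mean, stdev

def coefficient_of_variation(values):
    """Standard deviation divided by the mean (sample SD assumed)."""
    return stdev(values) / mean(values)

# Hypothetical index values for two groups; not data from the study.
group_a = [10, 20, 30]
group_b = [18, 20, 22]

print(coefficient_of_variation(group_a))  # 0.5
print(coefficient_of_variation(group_b))  # 0.1
```

Because the ratio is unitless, it allows variability to be compared across indices measured on different scales, which is presumably why it was used here rather than the raw standard deviations.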

Means by Sex.

Note. Bold type indicates statistically significant results.

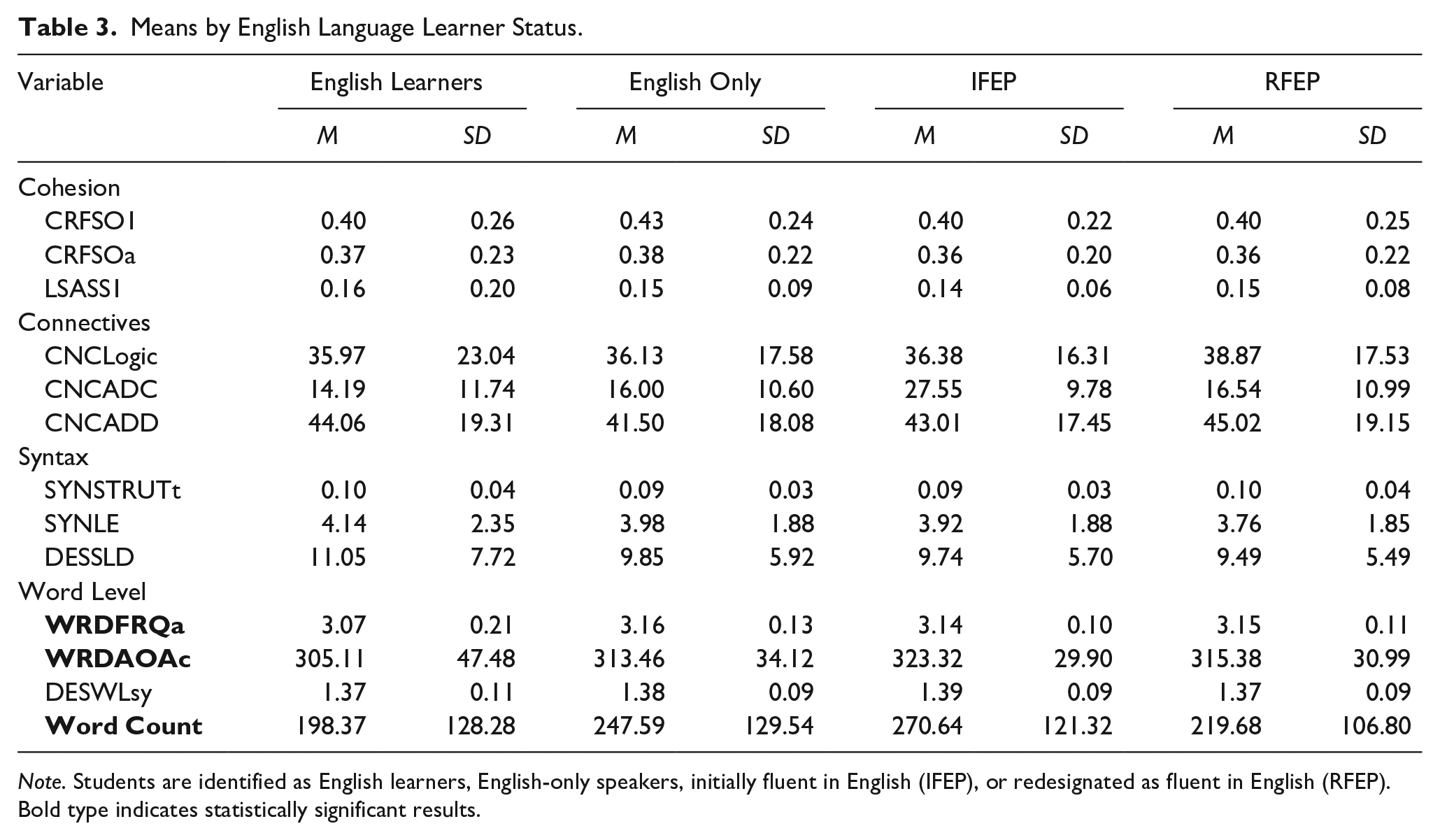

Table 3 presents the mean scores as a function of English language learner status. An ANOVA revealed significant differences in essay length, F(3, 1063) = 7.94, p < .0001. Bonferroni post hoc tests revealed that English Only (EO) and IFEP students wrote longer papers than RFEP and English Learner (EL) students. The sole MANOVA to reveal significant differences based on English proficiency was at the word level (WRDFRQa, WRDAOAc, and DESWLsy), F(9, 3189) = 7.57, p < .0001. A subsequent series of ANOVAs using the Bonferroni adjustment for multiple comparisons revealed significant differences for WRDFRQa, F(3, 1063) = 9.80, p = .0001, and WRDAOAc, F(3, 1063) = 4.25, p = .005. Bonferroni post hoc comparisons indicated that EL students used higher frequency words (WRDFRQa) than students who were proficient in English (EO, IFEP, and RFEP). IFEP students’ writing contained more advanced vocabulary (higher WRDAOAc scores) than both EL and EO students. With respect to variability, we see much more in the English Learner population compared to all the other populations. No other differences were found.

Means by English Language Learner Status.

Note. Students are identified as English learners, English-only speakers, initially fluent in English (IFEP), or redesignated as fluent in English (RFEP). Bold type indicates statistically significant results.

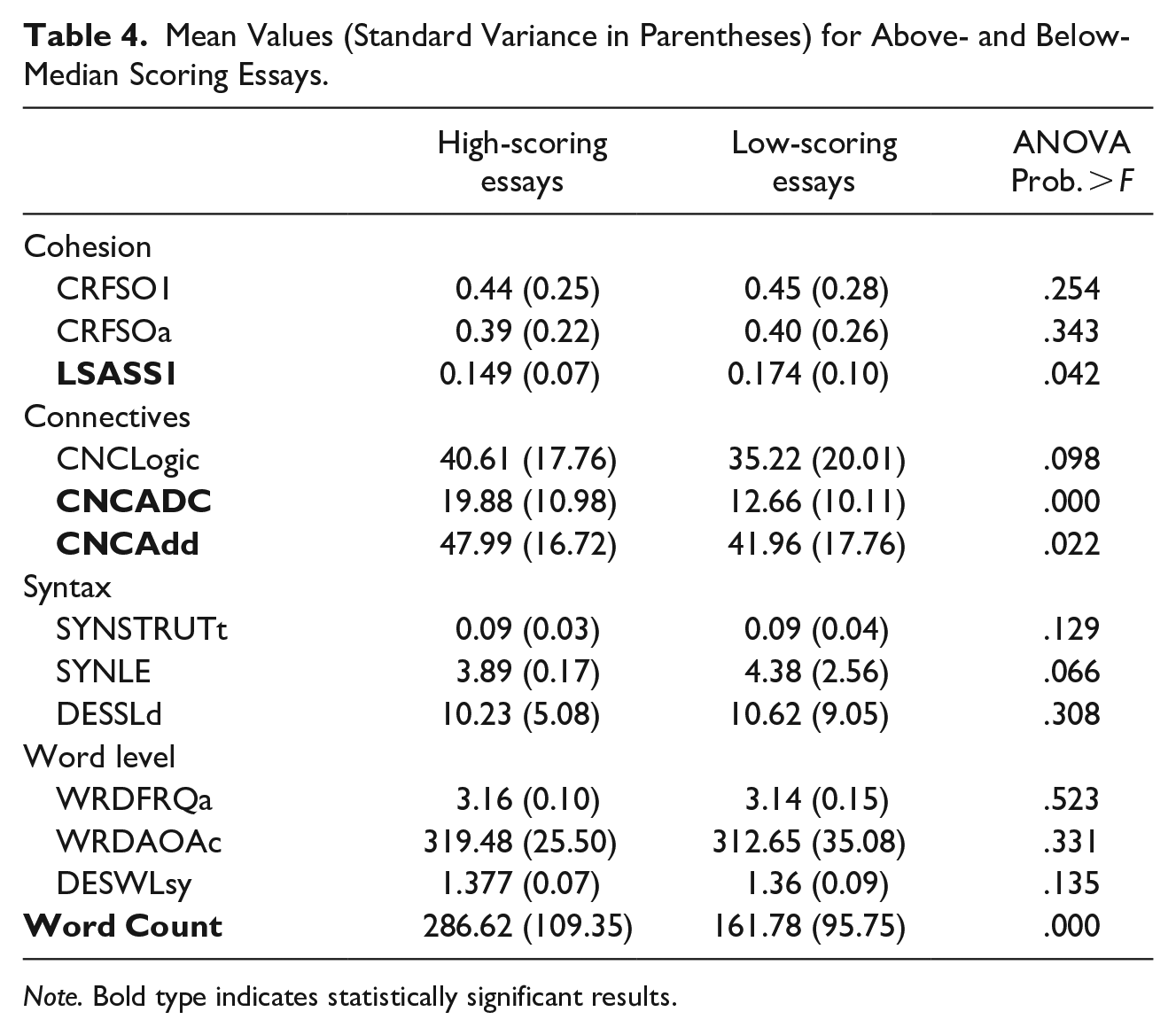

Finally, we considered how the linguistic features varied for papers scoring above and below the median holistic score (see Table 4 for descriptive statistics). High-scoring essays were longer, F(1, 172) = 64.36, p < .00001, than low-scoring essays. Similarly, MANOVA found that high-scoring essays showed greater use of cohesive devices, F(3, 170) = 7.14, p = .00015, with subsequent ANOVAs revealing that high-scoring essays had higher CNCADC, F(1, 172) = 20.38, p = .00001, and CNCAdd scores, F(1, 172) = 5.31, p = .022. Further, although the MANOVA testing word-level features was significant, F(3, 170) = 2.77, p = .043, high- and low-scoring essays did not differ significantly on any of the individual word-level variables (WRDFRQa, WRDAOAc, DESWLsy). No other effects were significant. Descriptively, we note that the percentage of high-scoring essays rose with grade level: 7th grade (26.5%), 8th grade (43.8%), 9th grade (41.9%), 10th grade (50%), 11th grade (70%), and 12th grade (71.4%).

Mean Values (Standard Deviations in Parentheses) for Above- and Below-Median Scoring Essays.

Note. Bold type indicates statistically significant results.

Research Question 2: Predictive Indices

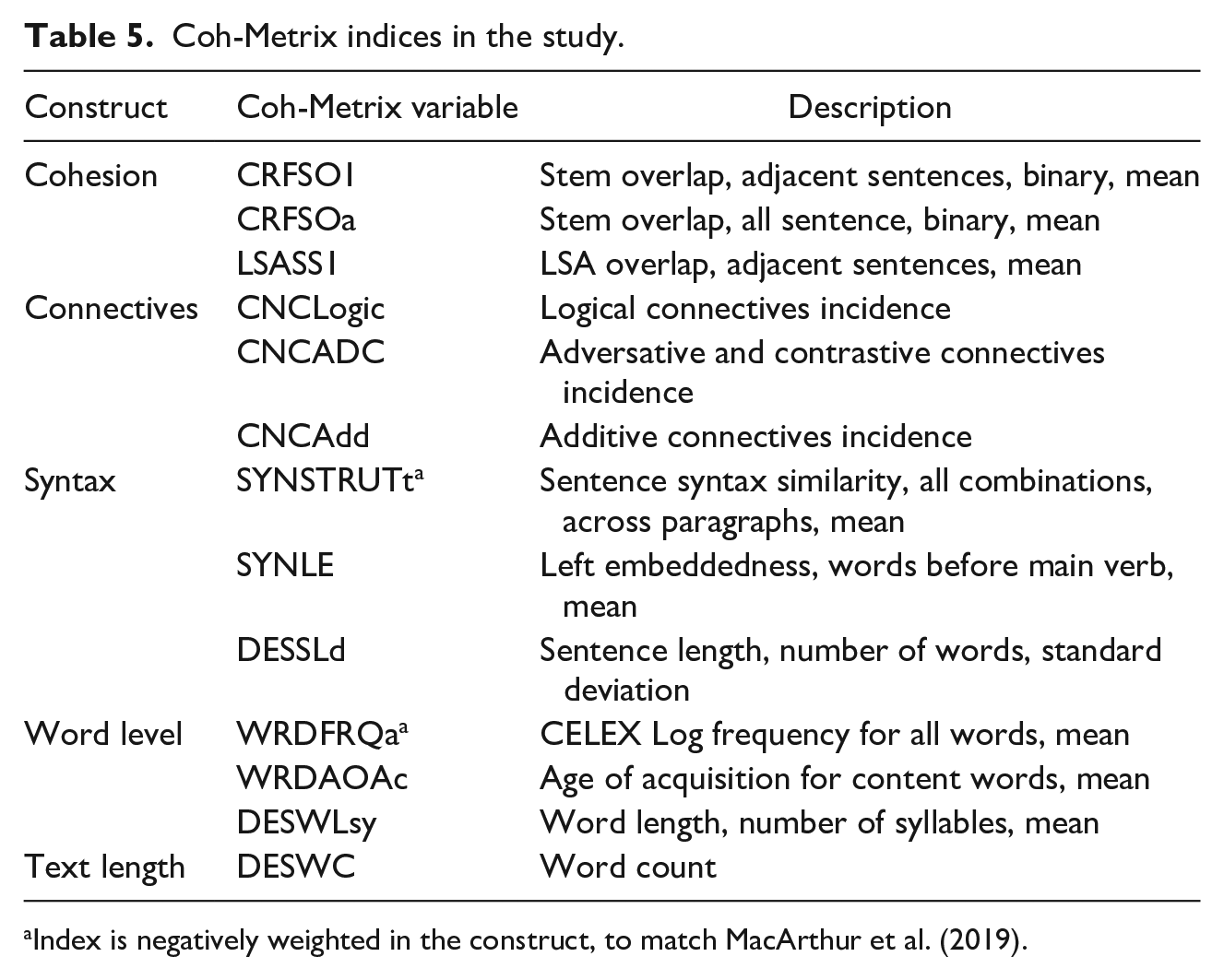

Our second research question used a subset of the sample to understand how text length, cohesion, connectives, syntactic complexity, and word-level complexity predicted writing quality (human, holistic score) for secondary school writers’ argumentative texts. The specific indices in the constructs for cohesion, connectives, syntactic complexity, and word-level complexity are described in Table 5, below, and the model is shown in Figure 2.

Coh-Metrix indices in the study.

Index is negatively weighted in the construct, to match MacArthur et al. (2019).

Diagram of structural equation model (SEM), with the four subconstructs predicting the essays’ human-scored holistic quality rating.

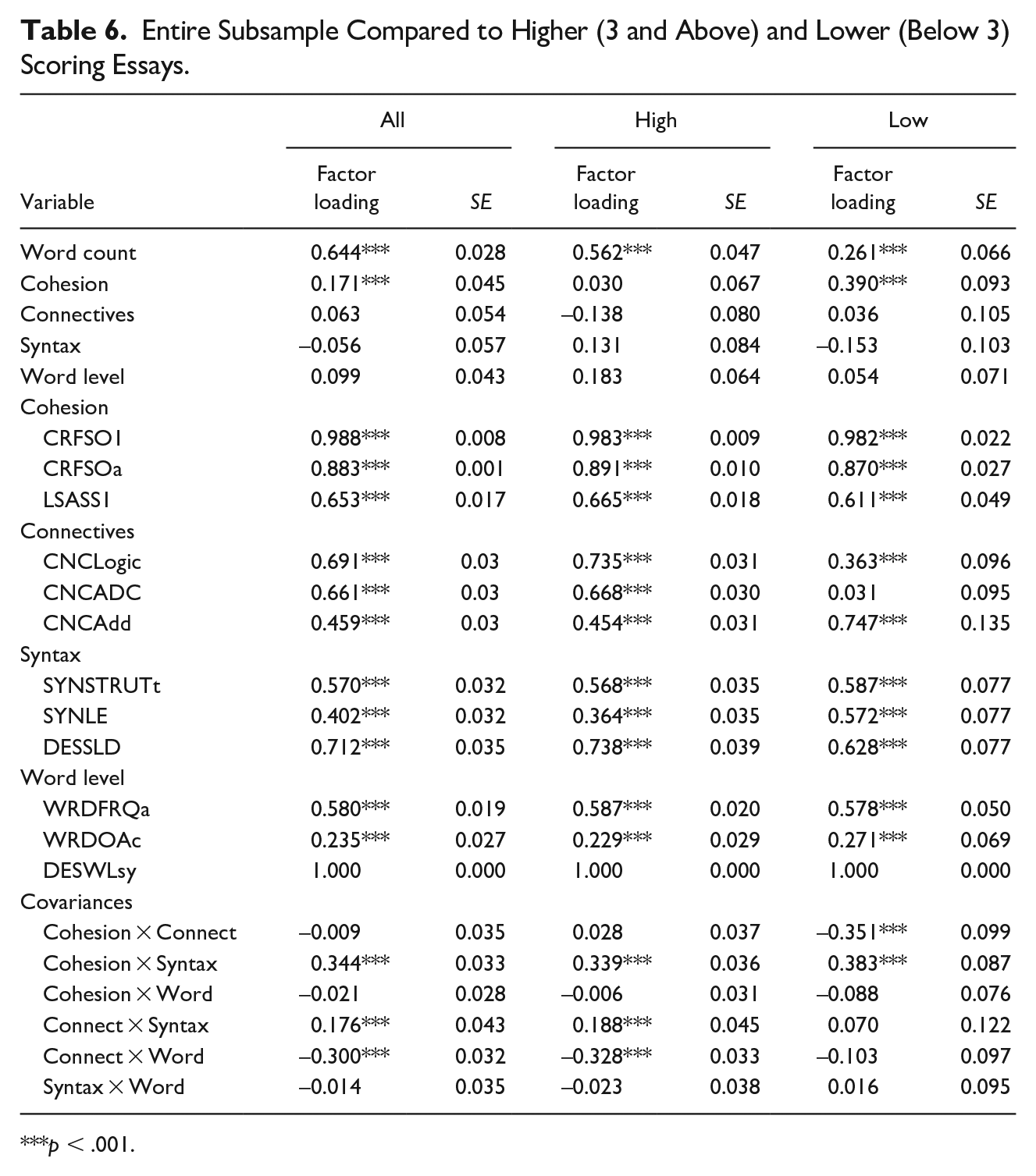

Our structural equation model with the four latent variables, word count, and holistic scoring of quality showed that connectives and syntactic complexity did not significantly predict human scores for quality writing, but word count, referential cohesion, and particularly the word-level variables did predict quality for our text set (Column 1, Table 6). Our model fit was acceptable and similar to that of the MacArthur et al. (2019) structural equation model. Our model had a chi-squared of 670.948 (df = 69), comparative fit index of 0.876, standardized root mean square residual of 0.073, root mean square error of approximation of 0.083, and Tucker-Lewis index of 0.837.

Entire Subsample Compared to Higher (3 and Above) and Lower (Below 3) Scoring Essays.

***p < .001.

We then separated the high- and low-scoring essays to determine whether the differences in quality are reflected in our model (see Columns 2 [high] and 3 [low], Table 6). We chose high/low scoring rather than grade-level groupings because prior research had suggested that it was the quality of the essays, not the essay writers’ ages or grade levels, that impacted the effectiveness of the model. We were unable to test invariance, however, as our model with groupings failed to converge.

Discussion

Mean levels of variables by grade suggest a developmental progression with respect to text length (word count; Figure 3) of students’ argumentative essays. We found significant grade-level differences, specifically between the high and low end of the grade levels (e.g., 7th and 12th grade). This finding is consistent with widespread findings of increased fluency, which is expected as students automate some of the processes of writing (see discussion in Graham, 2019). We also see large standard deviations in our variables other than the word-level ones, suggesting a great deal of variability in our population. The statistically significant differences in cohesion were isolated to LSASS1, with 7th and 8th graders having higher scores than 12th graders. LSASS1 measures how conceptually similar each sentence is to the next. Younger students are more likely to repeat themselves, while older students often have more background knowledge and reasoning ability, which would lead to more variety in the sentences. Grade-level differences in connectives were found for logical and additive connectives (CNCLogic, CNCAdd), with students in 7th grade using them more than older students, but not for adversative and contrastive connectives (CNCADC). Logical and additive connectives are less complex, and younger students rely on simple connectives (e.g., “and”) to link their thoughts. This finding is consistent with the research showing that use of contrastive connectives occurs later developmentally (Spenader, 2018). With respect to syntax, we saw that students in 12th grade used a wider range of syntactic structures than younger students, which is consistent with their increased familiarity with text and text structures. Finally, students in 7th grade used higher frequency words and words acquired earlier than older students, while older students were able to access more unique and complicated vocabulary.

Figure 3. Word count by grade level.

To analyze developmental differences, we compare our secondary school writers with the basic college writers in MacArthur et al. (2019), both in the context of argumentative writing. Fluency, or word count, is similar. Cohesion, the overlap in content words between sentences (McNamara et al., 2014), contributed more to college writing quality (0.29) than to secondary writing quality (0.17). This is likely due to the lower ability of younger students to maintain a clear and consistent underlying claim in their writing. Secondary students relied more on connectives (0.06) than college students (−0.03, posttest) to achieve quality. The use of connectives may have made writers’ thinking more explicit and easier to follow, both for themselves and for their readers. Our connectives latent variable included logical (“and”), adversative/contrastive (“although”), and additive (“moreover”) words to help create cohesive links between ideas and provide clues about text organization (McNamara et al., 2014).

Syntactic complexity had a negative relation to overall quality scores in our sample, just as it did in the basic college writers’ sample (−0.31 for basic college writers and −0.06 for secondary writers). The smaller negative relation for secondary writers may be attributable to the fact that syntactic complexity played a larger role in differentiating between higher and lower quality texts among the older writers (e.g., many of the secondary writers had run-on sentences, so it did not provide as much discrimination). For younger writers, the latent variable for syntactic complexity was less attributable to the mean number of words before the main verb (SYNLE, 0.50 and 0.60 for college writers pre- and posttest, respectively, and 0.40 for secondary writers). The uniformity and consistency of the syntactic constructions in the text across paragraphs was similar for younger writers, as was the standard deviation of the mean length of sentences (a larger number indicates more variation in the essay sentence length).

Finally, the word-level latent construct contributed less to text quality in our sample than in the basic college writers’ sample in the MacArthur et al. (2019) study (0.15 college posttest, 0.10 secondary). The composite measures underlying the latent construct also differed with respect to the indicator for the age when content words become part of a person’s vocabulary, with a higher score indicating words learned later in life. Our model had a loading of 0.24 compared to 0.67 for the older students.
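The path coefficients compared in the preceding paragraphs can be collected in one place. The following is a minimal Python sketch for illustration only: the dictionary layout and the names `coefficients` and `gap` are our own assumptions, and the values are simply the coefficients quoted in the text (the college connectives and word-level values are the posttest figures).

```python
# Standardized coefficients quoted in the text for each construct.
# "college" = basic college writers (MacArthur et al., 2019);
# "secondary" = the present sample. Layout and names are illustrative.
coefficients = {
    "cohesion": {"college": 0.29, "secondary": 0.17},
    "connectives": {"college": -0.03, "secondary": 0.06},  # college value is posttest
    "syntactic complexity": {"college": -0.31, "secondary": -0.06},
    "word level": {"college": 0.15, "secondary": 0.10},  # college value is posttest
}


def gap(entry):
    """Secondary coefficient minus college coefficient, rounded to 2 places."""
    return round(entry["secondary"] - entry["college"], 2)


gaps = {construct: gap(entry) for construct, entry in coefficients.items()}

for construct, entry in coefficients.items():
    print(
        f"{construct:22s} college={entry['college']:+.2f} "
        f"secondary={entry['secondary']:+.2f} gap={gaps[construct]:+.2f}"
    )
```

A positive gap means the construct was more positively (or less negatively) related to quality for secondary writers. The gaps are descriptive differences between two separately estimated models, not tests of significance.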

Comparison of means by sex found a large difference in text length (word count of 218.5 for boys and 262.81 for girls). This finding is consistent with findings in other studies (Graham et al., 2017; Reilly et al., 2019; Steiss et al., 2022). Smaller, but statistically significant, differences are found for the cohesion variables (with boys scoring higher on all three components). The higher cohesion score parallels our finding that younger students had higher cohesion scores (although only in one component). Girls showed greater use of connectives, but only the adversative and contrastive ones—again a parallel with our findings by grade level suggesting that these connectives are, in particular, indicative of more mature writers. No other sex differences were significant. Ultimately, in our model the difference in quality of essays was primarily due to the increased word count of women writers. This finding is consistent with the literature base that suggests women writers’ performance reflects increased fluency (Reilly et al., 2019) and provides additional information that the performance increase does not reflect increased cohesion, word or syntax-level complexity, or use of connectives. Instead, the increased writing fluency may reflect unmeasured constructs such as oral fluency (Y.-S. Kim et al., 2014), attitudes (Pajares & Valiante, 1999), or transcription skills (McCutchen, 1996).

We also looked at mean differences across four levels of English language status: English learners, English only, initially fluent in English (IFEP), and redesignated fluent in English (RFEP). Not surprisingly, students writing in a language that they had been fluent in from the beginning of their education (English only and IFEP) wrote longer texts than the English learners or RFEP students. English learners had a mean word count of 198 compared to English-only students of 248 and initially fluent students of 271. Redesignated students had a mean word count of 220, which was significantly different from their English-only and initially fluent peers. The only significant differences in the component scores were at the word level, with English learners using higher frequency (i.e., less sophisticated) words than their proficient peers and IFEP students’ essays containing more advanced vocabulary. While the former suggests still-developing English vocabulary, the latter might suggest a bilingual advantage consistent with Thomas and Collier’s (1997, 2002) work finding RFEP students outperforming English-only students starting in middle school. Mean values for our other variables, other than word-level ones, are relatively similar although more variable in the English learner population. Our results are slightly different from those on human analytic factors found in Steiss et al. (2022), in which language proficiency had a significant effect on performance in each of the evidence use, ideas/structure, and language use factors. Looking at the variables underlying their language use factors, we believe that many of the constructs in our model would be related to that factor. Thus, our model may be breaking down the components of that factor into even more discrete categories and discerning areas where language proficiency has the most impact.

Looking at the means for above and below median essays showed a statistically significant difference in word count, with high-scoring essays averaging 287 words and low-scoring essays averaging 162 words. High-scoring essays also made greater use of connectives, specifically both additive and adversative/contrastive connectives. Like McNamara et al. (2010), we did not find a difference between high- and low-scoring essays on the cohesion measures but, unlike them, we did find a difference in the use of connectives in our younger writers. One of the challenges of our NLP measure is that it does not differentiate between the different types of connectives; advanced writers use more sophisticated connectives, while beginning writers are likely to depend on simple ones such as “first,” “second,” and “next.”

Despite some of the differences discussed above, we see that, overall, the model performed well with our text sample. When looking at our correlations among quality, length, and linguistic indices, we find that the variables for this text set generally conform to the suggested parameters used by MacArthur et al. (2019). First, correlation with essay length was less than r = 0.20 for all of our variables, with the slight exception of DESSLd, our sentence-length measure, which had r = 0.21. Next, correlation with other indices in the same construct should fall between r = 0.30 and r = 0.90 (the upper bound avoids collinearity). Our cohesion and connectives indices met these criteria, but our syntactic measure was slightly below the desired r = 0.30 with respect to the correlation between SYNLE and SYNSTRUTt (r = 0.20) and between SYNLE and DESSLd (r = 0.27), as was our word-level variable, with the correlation of WRDAOAc and DESWLsy at r = 0.24 and of WRDFRQa and WRDAOAc at r = 0.13. The correlation between word count and quality was high as expected, r = 0.64, slightly higher than for the MacArthur sample (r = 0.56), which supports our understanding that text length is a more significant predictor in lower quality writing by less developed writers who often struggle with production. Given that the writing task was time-limited, it is not surprising that text length predicts 41% of the variance in quality score.
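The screening rules and the variance-explained figure in the preceding paragraph can be checked with a short script. This is a minimal Python sketch under stated assumptions: the function and variable names are ours, only the correlations quoted in the text are included (the full correlation matrix is not reproduced), and the 31% figure for the MacArthur sample is simple arithmetic on the reported r = 0.56 rather than a value taken from that study.

```python
# Screening rules described in the text (after MacArthur et al., 2019):
#   1. each index should correlate with essay length at |r| < 0.20
#   2. indices within one construct should correlate between 0.30 and 0.90
MAX_R_WITH_LENGTH = 0.20
WITHIN_MIN, WITHIN_MAX = 0.30, 0.90

# Only the correlations quoted in the text; names of containers are illustrative.
r_with_length = {"DESSLd": 0.21}
r_within_construct = {
    ("SYNLE", "SYNSTRUTt"): 0.20,
    ("SYNLE", "DESSLd"): 0.27,
    ("WRDAOAc", "DESWLsy"): 0.24,
    ("WRDFRQa", "WRDAOAc"): 0.13,
}


def length_violations(corrs, limit=MAX_R_WITH_LENGTH):
    """Indices whose correlation with essay length is not below the limit."""
    return sorted(name for name, r in corrs.items() if abs(r) >= limit)


def within_violations(corrs, low=WITHIN_MIN, high=WITHIN_MAX):
    """Index pairs whose within-construct correlation falls outside [low, high]."""
    return sorted(pair for pair, r in corrs.items() if not low <= abs(r) <= high)


def variance_explained(r):
    """Share of variance accounted for by a single predictor: r squared."""
    return r ** 2


flagged_length = length_violations(r_with_length)
flagged_pairs = within_violations(r_within_construct)
r2_secondary = variance_explained(0.64)  # word count vs. quality, this sample
r2_college = variance_explained(0.56)    # same correlation, MacArthur sample

print("Exceeds length criterion:", flagged_length)
print("Outside within-construct range:", flagged_pairs)
print(f"Variance explained by length: {r2_secondary:.0%} vs. {r2_college:.0%}")
```

Squaring r = 0.64 gives 0.4096, which is the source of the 41% figure; all four quoted within-construct pairs fall below the 0.30 floor rather than above the 0.90 ceiling.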

As seen in prior research, there continues to be a correlation of quality with length, which is even stronger in our writers than the MacArthur writers at posttest. Before writers can successfully employ the constructs in our model, they must first be fluent enough in the language of text production to produce sufficient text or their production must be scaffolded. The one area in which this was not true was in the use of connectives; we hypothesize that our less effective writers used these to support text organization and clarity. Syntactic complexity was a negative indicator for both sets of students, suggesting that both sets of writers struggled with ineffective complex sentence structure and run-on sentences.

We believe that these analyses provide several contributions: we use the MacArthur et al. (2019) model with younger students, and our larger sample allows us to consider variables such as grade level, sex, English language status, and higher versus lower scoring essays. Looking across grades we see that the text length of younger students increased as they aged, but the writing components were otherwise fairly stable. Sex did not seem to affect the components in meaningful ways beyond the increased fluency of women writers. We saw text length and word-level differences between initially designated and redesignated bilingual students compared to their English-only peers. Finally, we see that the model works better with our higher scoring essays and is less effective explaining the lower scoring essays.

These findings suggest that a continued analysis of the writing of emerging bilingual students, and of our assessment of such writing, would be valuable to ensure that the writing curriculum not only meets student needs but takes advantage of student strengths, particularly in the context of K-12 education. Researchers need to look more closely at possible issues of bias by human raters, even with training and the use of rubrics, when scoring the quality of English learners’ essays. We also think an important next step is to understand the impact different genres, prompts, and task attributes (e.g., timed vs. untimed) have on the model and underlying variables. As noted above, our texts were from a single genre and cannot predict writing development across genres. In addition, we are using the admittedly imperfect human scoring as our reference point; it is important to continue researching how to improve the quality and consistency of such scoring as well as to reduce biases and judgments unrelated to writing quality. We also note a concern with using Coh-Metrix variables in this way: the variables do not differentiate appropriate, high-quality use of connectives, syntactic complexity, and word-level variation from poor uses. They indicate only the frequency of such usage. While the failure to differentiate between appropriate and inappropriate uses of complex words, for example, or between complex sentences and run-on sentences may be less concerning when analyzing the writing of competent adult writers, it becomes more problematic when trying to understand the meaning of variables on writing quality for younger writers.

Supplemental Material

Supplemental material, sj-docx-1-wcx-10.1177_07410883241242093 for Linguistic Features of Secondary School Writing: Can Natural Language Processing Shine a Light on Differences by Sex, English Language Status, or Higher Scoring Essays? by Tamara P. Tate, Young-Suk Grace Kim, Penelope Collins, Mark Warschauer and Carol Booth Olson in Written Communication

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research reported here was supported by the Institute of Education Sciences, U.S. Department of Education, through Grant R305C190007 to the University of California, Irvine. The opinions expressed are those of the authors and do not represent the views of the Institute or the U.S. Department of Education.

Supplemental Material

Supplemental material for this article is available online.