Abstract

Immersive virtual reality (VR) technology is becoming widespread in education, yet research on VR technologies for students’ multimodal communication is only emerging in writing and literacies scholarship. Likewise, the significance of new ways of embodied meaning making in VR environments is undertheorized, a gap that requires attention given the potential for broadened use of the sensorium in multimodal language and literacy learning. This classroom research investigated multimodal composition using the virtual paint program Google Tilt Brush™ with 47 elementary school students (ages 10–11 years) using a head-mounted display and motion sensors. Multimodal analysis of video, screen capture, and think-aloud data attended to sensory-motor affordances and constraints for embodiment. Modal constraints were the immateriality of the virtual text, virtual disembodiment, and somatosensory mismatch between the virtual and physical worlds. Potentials for new forms of embodied multimodal representation in VR involved extensive bodily, haptic, and locomotive movement. The findings are significant given that research on embodied cognition points to sensorimotor action as the basis for language and communication.

Introduction

In the wake of the global pandemic, the shift toward, and reliance on, digital technologies for communication has accelerated (Lin & Johnson, 2021). Expanding repertoires of digital media practices are now required of users of all ages, youth and beyond (Sefton-Green & Erstad, 2017), and these repertoires now include meaning making in virtual reality (VR) contexts. VR technologies offer new potential for users to engage in immersive multimodal communication practices within and across virtual and material spaces, which remain underresearched (Henriksen et al., 2021) and which have unexplored implications for communication using novel forms of sensory-motor engagement (Mills & Brown, 2021). Multimodal refers to the combination of two or more semiotic modes or cultural means of representation, such as images, sounds, words, or body movements (Mills et al., 2018), and it is in the relationships between co-present modes that the “expressive power of multimodality resides” (Hull & Nelson, 2005). VR technology may have the potential for democratizing learning by increasing engagement of the body through embodied meaning-making and new three-dimensional, multimodal representational forms that involve the user’s vision, haptics, locomotion, and hearing, rather than privileging vision (Jang et al., 2017).

This article describes VR research conducted with three classes of upper elementary students (ages 10–11 years) who engaged with immersive VR technologies using a head-mounted display (HMD) and haptic sensors. The focus was to understand the affordances and constraints for the senses and embodiment in students’ digital painting, which is a new form of multimodal composition. Unlike virtual worlds and other online environments, the room-scale virtual environment permitted users to move around the virtual space and walk inside their artwork or text, while the real-life movements of participants were projected as interaction within the virtual environment.

What Is VR Technology and Why Is It Important?

VR technologies provide a synthetic or computer-simulated environment for user immersion, involving the use of an HMD for stereoscopic vision, or a multiprojected environment, and motion-tracking controls for haptic feedback (Pottle, 2019). VR environments are immersive, multisensory, artificial, three-dimensional virtual spaces involving computer-generated simulations that enable users to interact with virtual objects while the physical world is blocked from view (Jensen & Konradsen, 2018; Velev & Zlateva, 2017). Given that most VR systems allow the user to sensorially experience haptic feedback, to touch and manipulate objects in the virtual environment, and to use locomotion, they have a distinctively different scope for bodily engagement than other technologies for digital text production and for the multimodal representation of ideas (Mills et al., 2022).

VR of the kind researched in this article is distinguished from augmented and mixed reality, but these collectively can be referred to as extended reality technologies (XR). While augmented reality also may involve wearing a headset, the users also might view objects in the immediate physical environment through the camera of a digital device while interacting with virtual objects that overlay the view of that environment (Fernandez, 2017). Mixed reality combines virtual and real worlds, with a coexistence and interaction between real and virtual elements to produce new environments (Mills et al., 2022).

Existing research has pointed to some of the unique and powerful potentials of VR for supporting interactivity and rich immersive learning and presence (Jensen & Konradsen, 2018), creativity and problem-solving skills, and increased learner engagement (Velev & Zlateva, 2017). Well-designed VR technologies have been used to support individual creativity, including flow and attention (Yang et al., 2018). VR environments are three-dimensional simulations that can allow users to practice skills that are too dangerous to perform in the real world (Hussein & Nätterdal, 2015). VR can also be used to explore phenomena of interest in the universe, to view 360-degree films, or to interact with simulations of past or future times and events.

Multimodal Communication and VR Representation

Given the developing and inevitable shift from print-based textual learning environments to digital, information-based, and distributed communities, VR is one of the less understood dimensions of media-based learning environments and multimodal textual production. VR technologies offer different affordances for embodied writing and multimodal communication, distinguished from previous forms of virtual technology with the advent of HMDs that have become prominent since 2013 (Jensen & Konradsen, 2018). These affordances account for multimodal and sensorial ways of interacting with sophisticated, three-dimensional virtual environments and objects, orchestrating vision, sound, haptics (touch to interact with the environment), and head and body movements, in fully immersive environments—which differs from being seated at a computer screen as an observer of one’s avatar in a virtual world (Mills & Brown, 2021; Minogue & Jones, 2006).

VR interactions and texts can be used for multiple and changing social purposes, with different participation structures, degrees of online/offline interactivity, activities, interaction norms, and viewer positions (Mills et al., 2022). VR programs can involve narrative and conceptual depictions, or conventions and compositional meanings common to other textual formats. These typically combine written words, 2D and 3D moving and static images, color, and audio elements.

The grammars of visual and multimodal texts have been categorized extensively for forms such as two-dimensional images (Kress & van Leeuwen, 2021), picture books (Painter et al., 2013), social interactions (Norris, 2004), kineikonic or moving image texts (Burn, 2013), and other technology-mediated practices for learning (Jewitt, 2012). Our aim is to understand the role of embodiment in the VR painting mode, focusing primarily on the sensorial and bodily interactions (e.g., body movement, haptics, and locomotion) of the user or author in the process of designing a virtual painting.

VR technologies support cross-modal meaning making involving multiple modes, such as images, audio, and haptics (Mills & Exley, 2022). VR technologies have recently appeared as one of the fastest growing education and media markets, with rapid uptake of these technologies in classrooms (Fernandez, 2017). Yet the pedagogical use of new VR HMD technologies for multimodal composition is a new area of research, and these technologies offer different meaning potentials than the two-dimensional texts that have dominated digital screens in the past.

Why Are the Senses and the Body Important to Multimodal Communication?

Central to this research are recent theories of sensory literacies and embodiment in multimodal communication, following a much more expansive corporeal turn in writing and educational research. This turn includes, for example, work on the role of haptics, or touch used to interact with the external environment, in learning (Minogue & Jones, 2006); on embodied cognition (Corcoran, 2018); and on embodiment and writing technologies (Haas & McGrath, 2018). Together, this work has demonstrated the significance of sensory-motor aspects of cognition and technologies of communication (Sonesson, 2007).

Embodiment is defined here, following Gibbs (2005), as the role of an agent’s body and, in the context of embodied cognition, the role of the body in situated cognition. Theories of embodiment in sensory studies have addressed the neglect of the sensorium and the human body that is associated with ocularcentrism, or the prioritizing of vision, in hierarchical views of the senses (Porteous, 1990). Standard cognitive science has often emphasized perception, memory, attention, problem solving, language, and learning and is most centrally focused on understanding the abstract abilities of the mind (Shapiro, 2011). This has stemmed from the long-held Cartesian dualism that separates the “higher” mental functions from the “lesser” functions of physical bodies (Mills et al., 2018).

In contrast, embodiment theories have pointed to the important recognition that human cognition and knowing are deeply grounded in multisensory processes and in bodily experience of the world, of texts, and of technologies of multimodal inscription (Walsh & Simpson, 2013; Wilson, 2002). In fact, all human cognition, including abstract thought, has been shown to be body-based (Gibbs, 2005), a claim supported by recent research in the field of neuroscience and embodied cognition (Corcoran, 2018). The bodily basis of cognition has important implications for understanding the affordances of VR technologies for multimodal meaning making and representation, since meaning making becomes immersive, involving motion-sensing technologies and haptic feedback in three-dimensional, virtual environments.

Why Does Embodiment Matter to VR Learning?

Given that much of human cognition depends on sensorimotor processes, and given that abstract mental processes are fundamentally body-based (Wilson, 2002), the sensory potentials of VR technologies for immersive learning offer new possibilities for cognition and learning. VR technologies are responsive to haptics with high simulator fidelity, that is, replicating reality, while requiring other movements of the head and body (Jensen & Konradsen, 2018). Haptics is a perceptual or sensory system of the hands and arms, incorporating inputs from multiple sensory systems involving the skin, muscles, and joints (Klatzky & Lederman, 1988). The immersive responsiveness of VR to haptics and body movement holds different potentials for learning, because research on embodied cognition points to integration of cognition and sensorimotor visual systems, and of perception and action, in which these aspects are part of one neurological process. In other words, perception does not occur merely in the brain but, rather, involves the whole person, based on perceptually guided explorations of one’s environment (Gibbs, 2005).

It is not surprising then that immersive VR technologies have shown particular advantages for skill acquisition and factual learning (Rasheed et al., 2015); remembering and understanding spatial and visual information and knowledge (Jensen & Konradsen, 2018); visual scanning and observational skills (Ragan et al., 2015); psychomotor skills related to movements of the hands, head, and body (Sportillo et al., 2015); and affective skills related to managing emotional responses to situations (Pallavicini et al., 2016). However, existing studies have not attended to the role of the senses and the body in VR in classrooms, including for multimodal composition, and even less so at the elementary school level (Kavanagh et al., 2017).

Research Question

In the context of a VR HMD technological environment, the present research aimed to investigate the following question: What are the modal affordances and constraints of VR painting for supporting students’ embodied digital media production? This question is important given the very different sensory potentials of immersive, interactive virtual technologies for learning and textual design, and given that learning and abstract thought are now recognized to occur not only in the mind but also in close relation with the proprioceptive action of the body (Gibbs, 2005). The term digital media production describes the act of making digital texts (Beavis, 2014) and is used synonymously with other terms in the relevant literature on digital communication, such as digital composition (Mills, 2015) and multimodal designing (New London Group, 2000).

VR technologies allow a broadened range of modalities for learning in a simulated context (Radianti et al., 2020), with unexplored potentials for creative digital media production. In terms of representational modes, the principal mode of interest was virtual painting—a hybrid mode that uses a virtual brush or controller that emits visible, intangible ink into the air. The virtual brush can be used to produce marks that may follow cultural conventions, such as writing, drawing, painting, sculpting, and many other dynamic visual effects, such as flashing sparkles and drifting snowflakes, that do not have precisely equivalent forms in conventional modes.

Materials and Methods

This section summarizes the research methods applied in this study, including a description of the methodology, ethics, research question, site and participants, data collection, and data analysis.

Observational research combining the use of video recording, screencasts, and think-aloud interviews was conducted, followed by multimodal interaction analysis: a methodological framework that is situated in practice for analyzing the complexity of interactions across modes and materials (Norris, 2004). Multimodal interaction analysis is particularly well suited to analyzing video and interview data by attending to an array of communicative modes, such as proxemics, posture, head movement, haptics, gaze, speech, movement, and other modes in real-time interactions, and their interrelationships (Norris, 2004).

The research was conducted with three classes of upper elementary students (ages 10–11 years) using Google Tilt Brush™ software to produce creative, three-dimensional virtual paintings that were themed to convey a mood—an intuitive, open-ended task to reduce cognitive load in a complex three-dimensional multimedia environment (Mayer & Moreno, 2003). The user selects from a virtual color palette and an array of brush types, while movement with hand controls produces the representations that follow in the immersive environment. Teachers and researchers integrated the use of virtual technology within the primary school curriculum across English, Technology, and the Arts. Research of VR is relatively emergent in education, with a preponderance of applications used in STEM disciplines. In a review of original VR studies, only 27 of 167 VR applications had some relevance to language and communication (Kavanagh et al., 2017; Reisoğlu et al., 2017), despite the fact that representational skills are foundational for all learning.

Research Ethics

The student participants and their caregivers supplied voluntary, informed, understood, and signed consent. The research received ethical clearance from a human research ethics committee (ACU ethics approval: 2018–97H), following the national guidelines for implementing ethical research with minors (see Australian Research Council, 2018). Student participants and their caregivers gave written consent for video recording, and for sharing video imagery of students with faces concealed by the headset, and with pseudonyms. Students whose parents did not supply consent took part as a school activity, but no data were collected from them. The caregiver consent rate to participate in this study was greater than 90%.

Site and Participant Description

The research was conducted with three classes of students, ages 10–11 years, from an independent elementary school in Australia (n = 47). The VR activities were conducted at an off-site media arts center located on the outskirts of a metropolitan city. The VR activities were conducted for 21 hours over 5 weeks. On each full day of the program, students from each of the three classrooms participated in turn to use the VR gaming system, the HTC Vive, including wearing the headset and using hand controls with 24 sensors and high-definition haptic feedback (see Figure 1).

Figure 1. Student using the HTC Vive™ head-mounted display and hand controls.

The majority of students in the cohort spoke English as a first or only language, with only two bilingual students participating in the research. The majority were born in Australia, and those with families from countries outside Australia were from English-speaking countries (e.g., New Zealand, England, and Ireland). The students were from families that were lower in economic advantage compared to the national average. A smaller proportion of parents in the district (11.4%) had attained university-level degrees compared to the state (18.3%) and national averages (22%). In the school district, unemployment rates (8.2%) were higher than state (7.6%) and national unemployment rates (6.9%; Australian Bureau of Statistics, 2016).

Data Collection

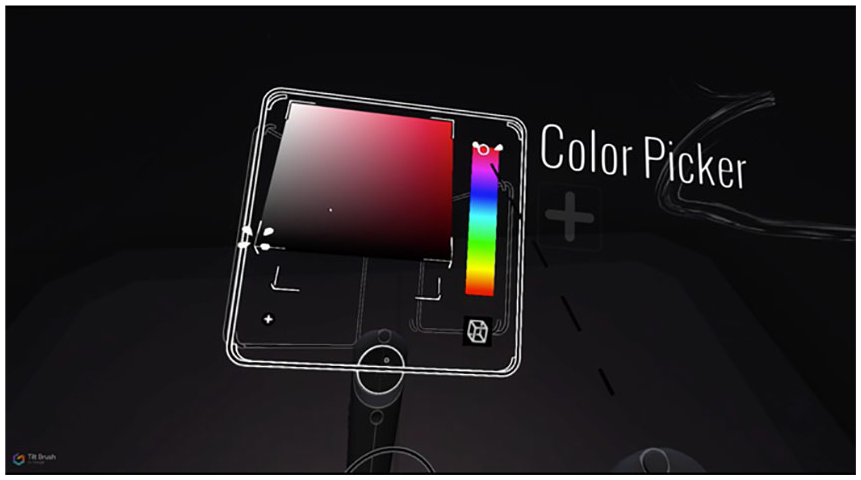

The qualitative research was observational, supported by multimodal analysis of video recordings, screencasts, students’ digital artefacts, and think-aloud interviews. Each of the 47 students practiced using the Google Tilt Brush™ tools (see Figure 2) and created and saved their three-dimensional, virtual designs. Most students had used VR technologies with HMD at least once in their out-of-school lives, including with friends or at video gaming centers. The research team ran the virtual painting activity in a community facility near the school.

Figure 2. Google Tilt Brush™ color picker tool.

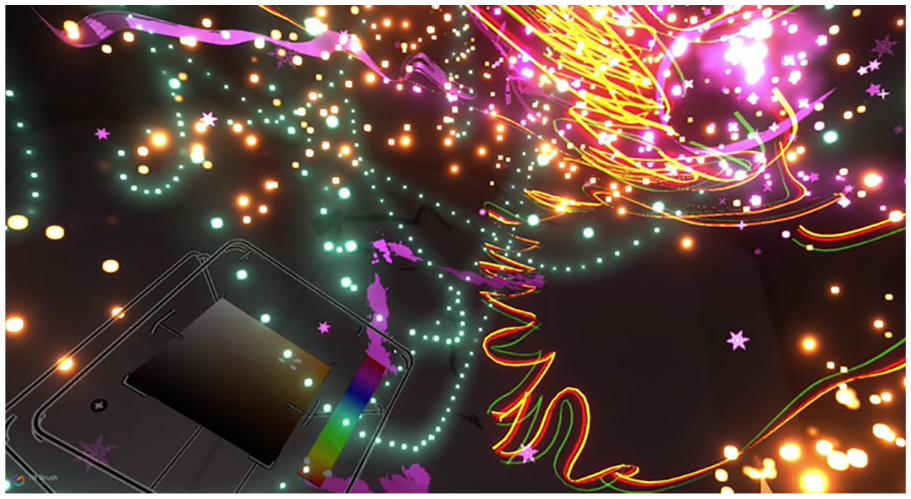

Each group of students was invited to watch their peers and interact as they used the HMD in a supportive environment. During the VR task, a semistructured think-aloud interview was conducted (see Hevey, 2020), and video was used to record how users engaged their senses and bodies to represent their ideas through three-dimensional and immersive VR painting (see, e.g., Figure 3).

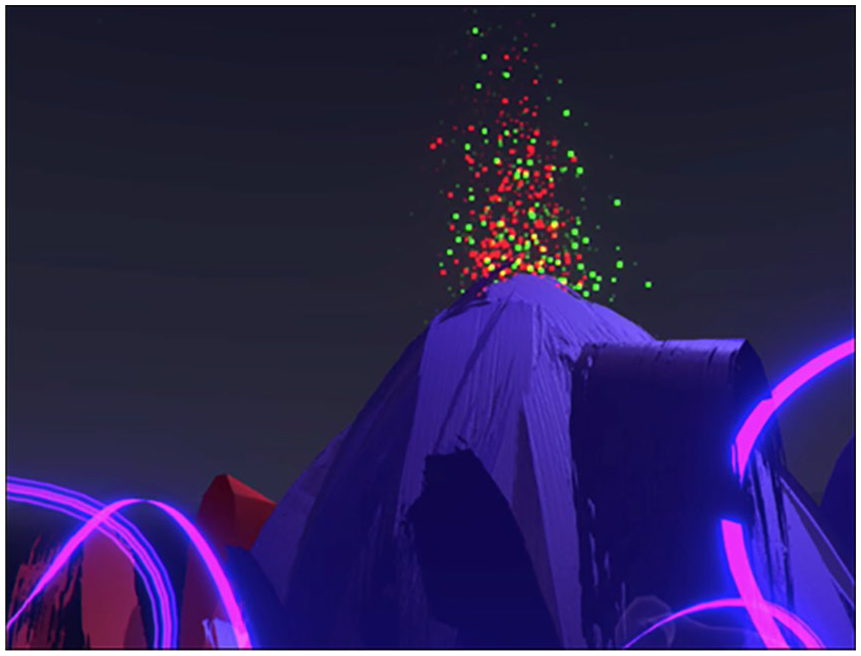

Figure 3. Virtual painting “Happy” by Austin.

Data collection involved three main multimodal and digital data sets gathered about the virtual learning experience for each of the 47 participants. The purpose was to examine the affordances and constraints of VR painting for supporting students’ embodied digital media production:

Continuous video observations of each student using the VR headset and sensors (Click for sample video: https://tinyurl.com/y3p275bj).

Continuous screencasts to enable replaying the process of virtual designing by each student, as well as digital recordings of the virtual paintings (Paste to view in browser: shorturl.at/eivZ4).

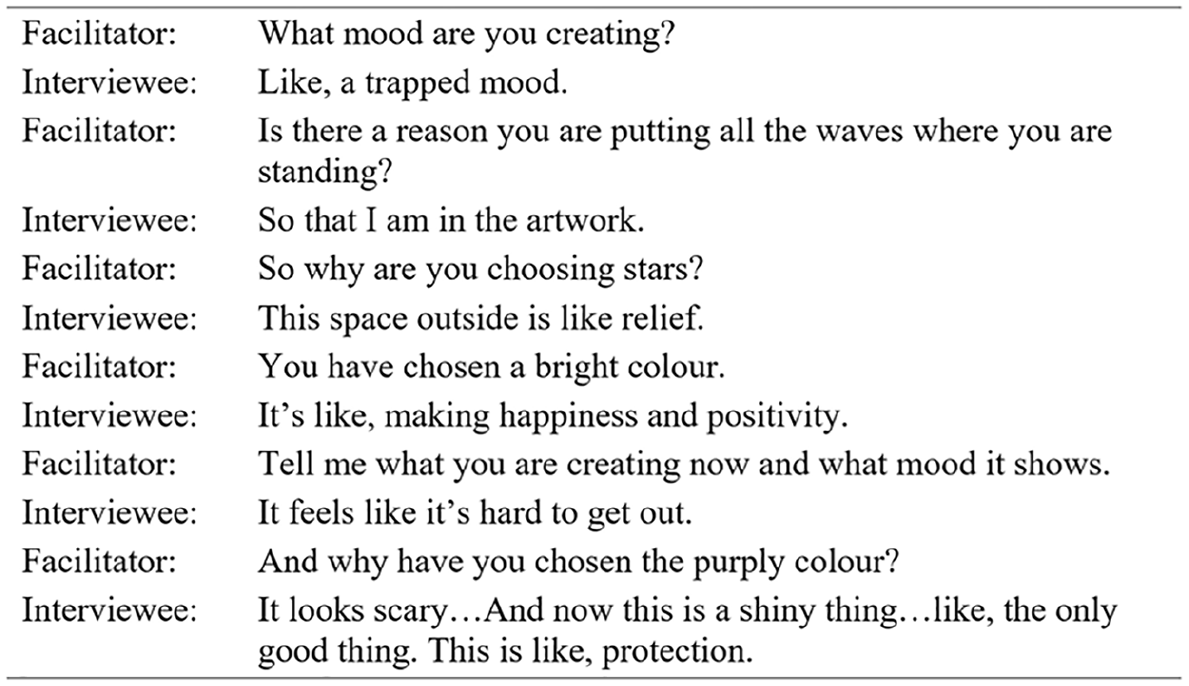

Transcripts of student think-aloud responses during activity (see Figure 4).

Figure 4. Sample think-aloud interview: “Trapped-relief-happiness.”

Think-aloud methods involved participants verbalizing their thoughts as they completed the given virtual task. The think-aloud questions included items such as “Tell me which mood you have chosen to convey? What are you making now? What are you thinking as you design? How easy or difficult is it to communicate your ideas?” The think-aloud dialogue was intentionally open-ended to capture the individuality of the students’ thinking about their virtual designs. While it is acknowledged that some aspects of a composer’s decision making may not always be conscious, and may also occur after composing, think-aloud research methods are particularly suited to investigating thought processes that occur during learning tasks, generating valuable new understandings about learning processes or other focal phenomena (Hevey, 2020). Interviewing the students during the learning experience also reduced the time burden of participation in research during a global pandemic and a crowded curriculum.

The use of video recording enabled the researchers to build up a layered, audio-visual record to analyze the students’ sensorial and embodied participation as they designed while wearing the headset, and this record was synchronized with the digital recordings of their virtual paintings for analysis. Video recording was a necessary method because the users’ haptic, head, body, and limb movements are too complex to capture sufficiently using field notes, or via unassisted observation as the action unfolds (Loizos, 2008). In addition, the audio-visual record could be replayed to attend to separate or joint modes that would go unnoticed without the use of video (Garcez et al., 2011). The think-aloud protocol allowed the researchers to analyze the students’ commentary about what they were designing, feeling, thinking, and sensing as they moved about the virtual space. In addition, these methods supported the analysis of the process and products of multimodal designing.

Data Analysis

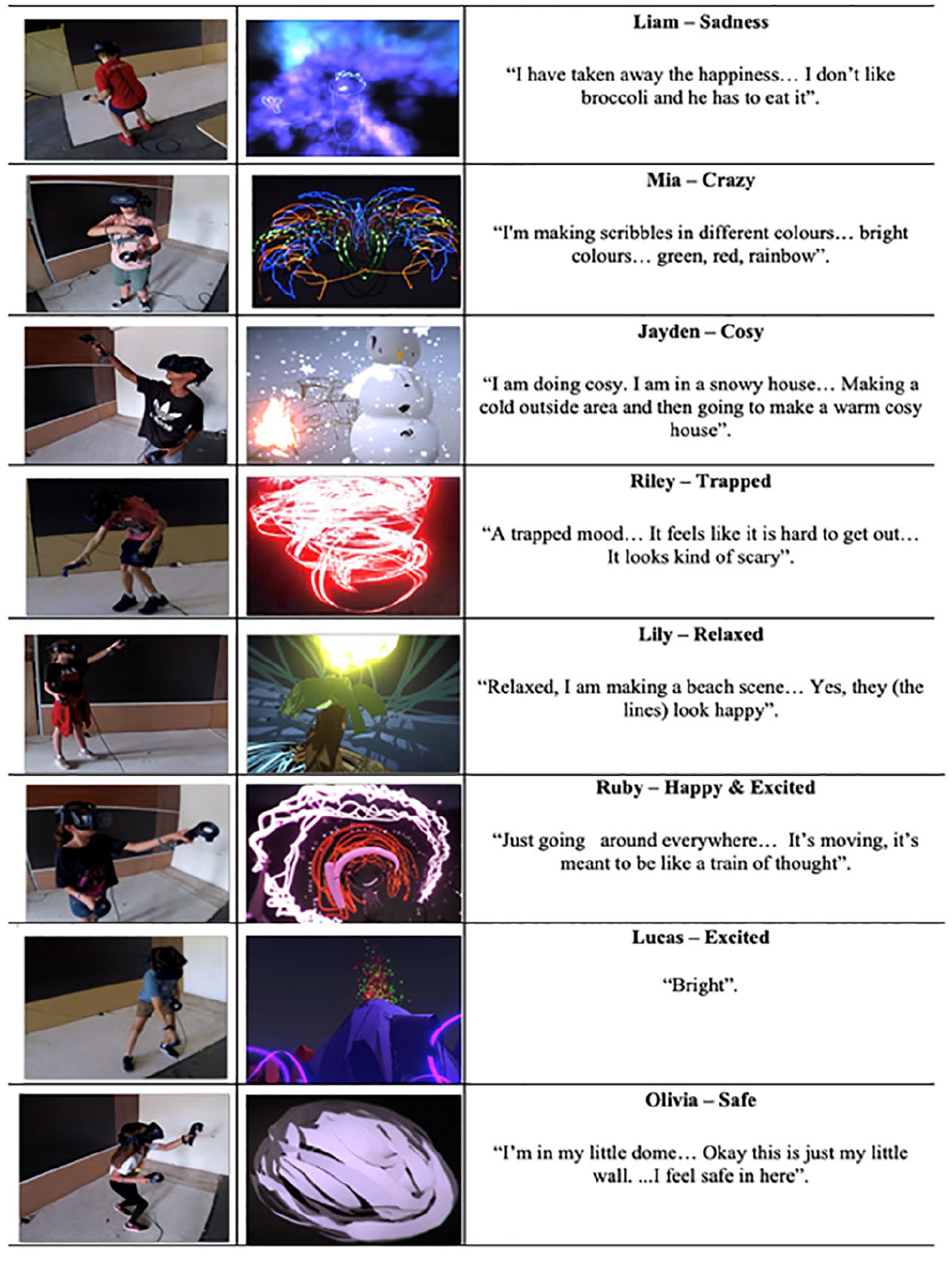

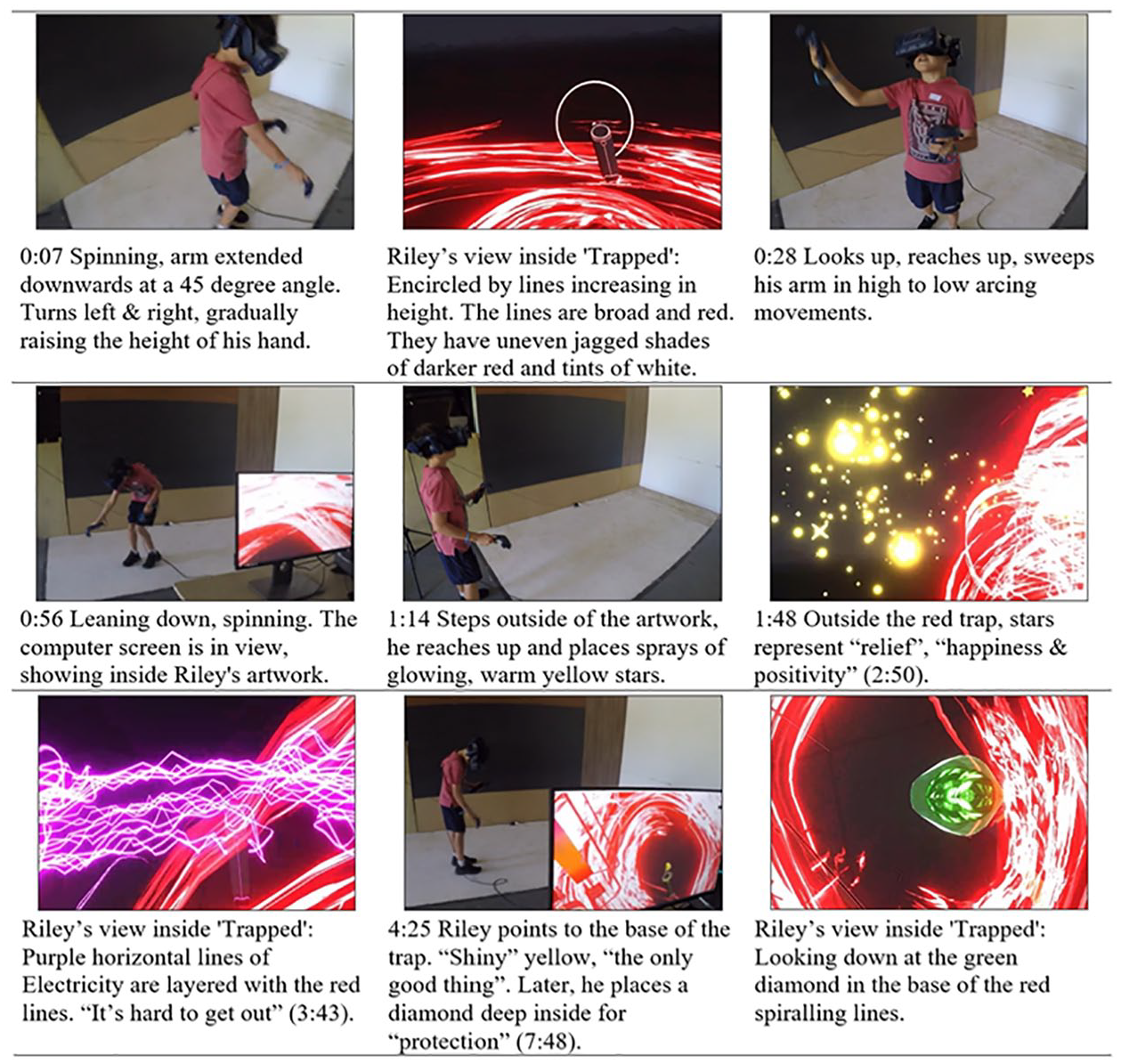

A multimodal analysis of the VR interactions was enacted first by combining screen shots of video recordings of the students’ physical motion with the transcript of their think-aloud speech, matched to the participants’ screencast recordings of their digital designs. Samples from the larger data set are shown here, including body movement, design outcomes, and think-aloud excerpts (Figure 5).

Figure 5. Time-matched video screenshot, designs, and transcript.
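For readers who want to prepare a comparable time-matched transcript, the alignment step can be sketched in code. The following is a minimal illustration only, not the procedure or software used in this study: it assumes that think-aloud utterances and coded movements have each been logged with a start time in seconds on a shared clock, and the names `Utterance`, `MovementCode`, and `align_transcript` are hypothetical. Each utterance is paired with the movement code that was most recently started when the utterance began.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass
class Utterance:
    """One think-aloud utterance (hypothetical record layout)."""
    start_s: float  # seconds from the start of the shared recording clock
    text: str


@dataclass
class MovementCode:
    """One coded movement segment from the video (hypothetical record layout)."""
    start_s: float  # seconds from the start of the shared recording clock
    category: str   # e.g., "head and torso", "haptics", "locomotion"


def align_transcript(utterances, movements):
    """Pair each utterance with the movement code active when it began.

    Returns (start_s, category_or_None, text) tuples ordered by time.
    The category is None when an utterance precedes every movement code.
    """
    ordered = sorted(movements, key=lambda m: m.start_s)
    starts = [m.start_s for m in ordered]
    rows = []
    for u in sorted(utterances, key=lambda u: u.start_s):
        # Index of the last movement whose start time is <= the utterance start.
        i = bisect_right(starts, u.start_s) - 1
        category = ordered[i].category if i >= 0 else None
        rows.append((u.start_s, category, u.text))
    return rows
```

A row of the resulting list corresponds to one line of a multimodal transcript, to which a screencast frame at the same time stamp could then be attached by hand or by a similar lookup.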

The multimodal analysis of the video recordings focused on the embodied resources—head, body/torso, haptic, and locomotive movement—of the participants in virtual painting. The researchers analyzed the connections between the sensorimotor actions and the replayed screencasts of the children’s virtual painting in Tilt Brush™ matched to the think-aloud transcripts (see Figure 6).

Figure 6. Riley’s multimodal transcript.

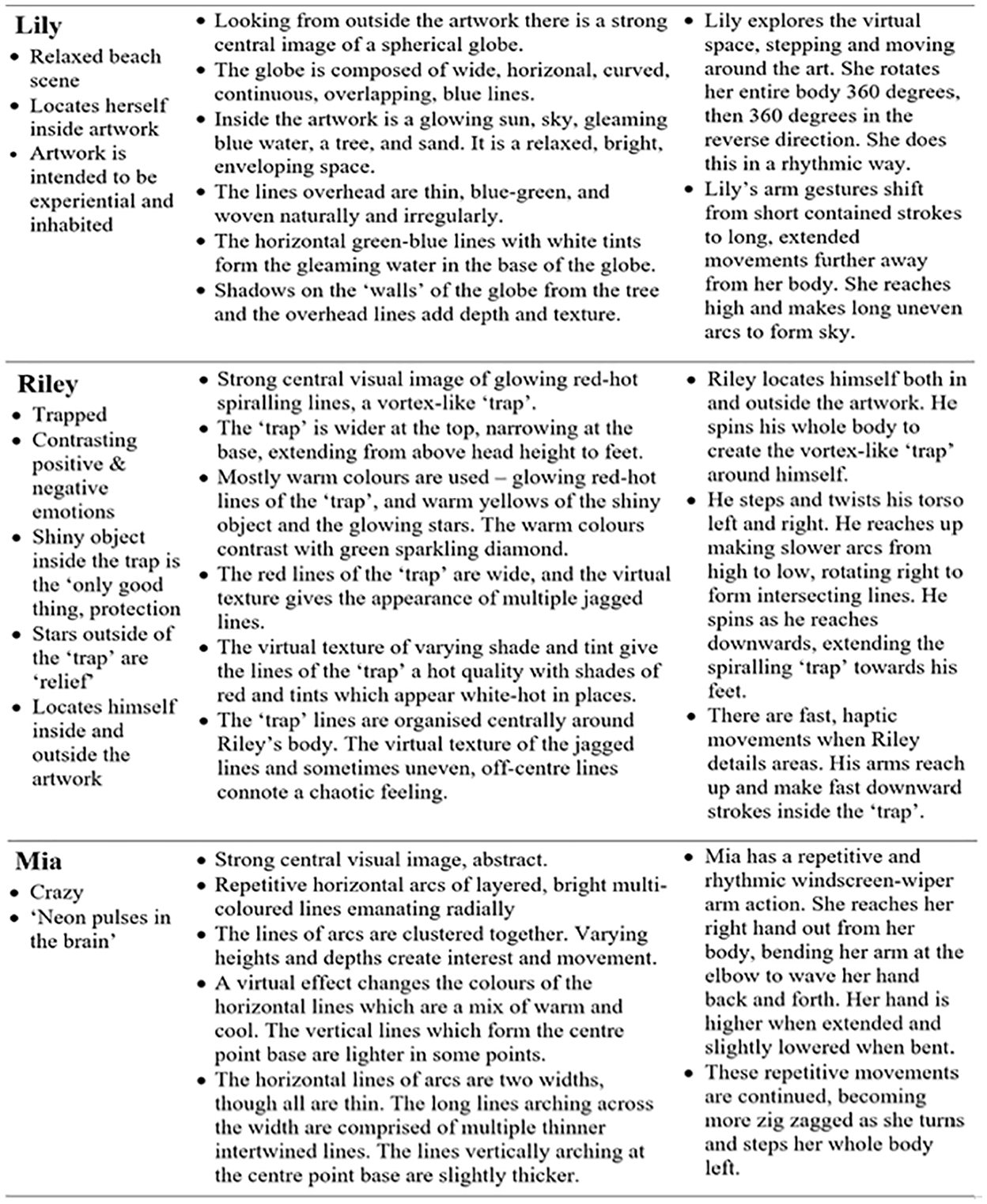

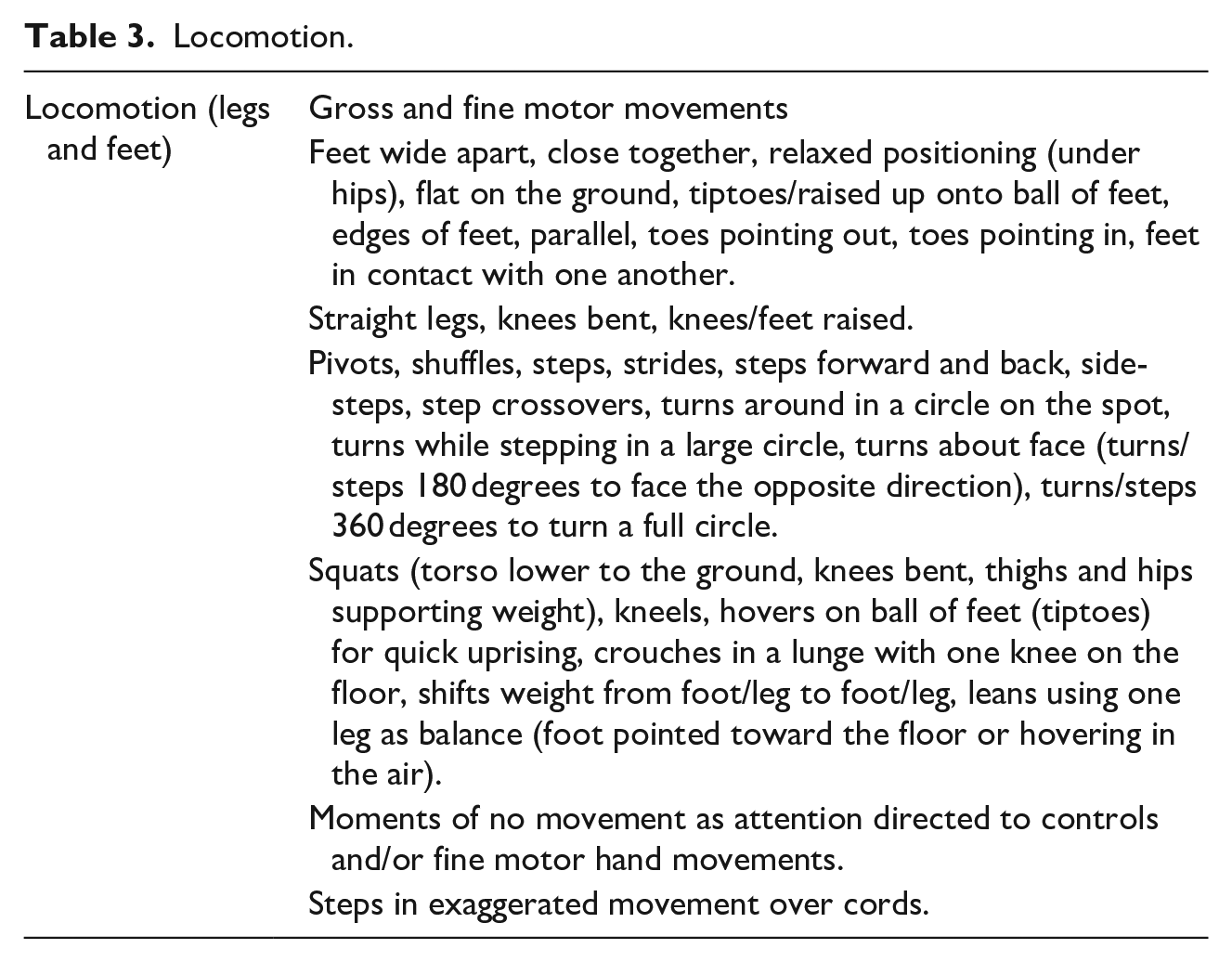

These methods supported the analysis of the process and product of virtual painting with a degree of real-time synchronicity. The think-aloud analysis gave insight into the children’s use of embodied action, and their articulated perceptions of the affordances and constraints of the mode during virtual painting, a multimodal form of interaction (Norris, 2004). Analysis of the video involved coding the students’ head movements; their haptics, that is, the tactile, spatial, and kinesthetic senses in the process of meaning making (Lee & Duncum, 2011); and their locomotion, that is, the body’s movement from place to place. We analyzed the modal constraints, coded by themes that emerged from the multimodal data: (a) immateriality, (b) disembodiment, and (c) somatosensory mismatches. The researchers also compiled a record of the students’ chosen emotive theme (e.g., crazy, sad, trapped), analysis of the paintings, and student body movements (see Figure 7).

Figure 7. Example students’ painting themes, visual elements, and body movements.

Aligned to the research question, the analytic tools we employed enabled the researchers to identify the repeated and significant modal affordances and constraints for embodied digital media production throughout the virtual painting process across the student cases that emerged from the data. These included (a) Modal affordances: whole body movement, haptics, and locomotion; and (b) Modal constraints: immateriality, disembodiment, and somatosensory mismatch.

Findings

The results are divided into two main sections that describe and theorize the findings about the modal affordances and constraints for embodied designing using virtual painting tools. The students’ embodied actions were instrumental in the application of virtual paint and were not addressed directly to a specific audience. Their movements involved tacit, implicit, and often taken-for-granted meanings associated with the practical use of the virtual brush or tool (see Bezemer & Kress, 2014, for a discussion on the implicit forms of touch). In this sense, the movements differ from the use of spoken words, where meanings are communicated with some regularity of signification that is understood by others in a culture. The first section describes and theorizes the modal affordances, and the second, the modal constraints, for multimodal design.

Affordances: Whole Body Movement, Haptics, and Locomotion

Whole body movement

To contextualize the findings, the principal mode of interest here is virtual painting: a hybrid mode of communication in which participants paint with virtual brushes, using a digital game controller that emits visible, intangible ink into the air. Regarding materiality (how the design is tangibly sensed and perceived), the three-dimensional marks have no corporeal substance in the real world, and the marks themselves cannot be felt with the body. Unlike conventional painting, drawing, and sculpting, virtual painting involves no surfaces upon which to paint and no mixing of paint. Virtual painting does not conform to the laws of physics, such as gravity. Thus, artists can create gravity-defying, three-dimensional representations through in-air haptics that typically appear to be suspended in the immersive world.

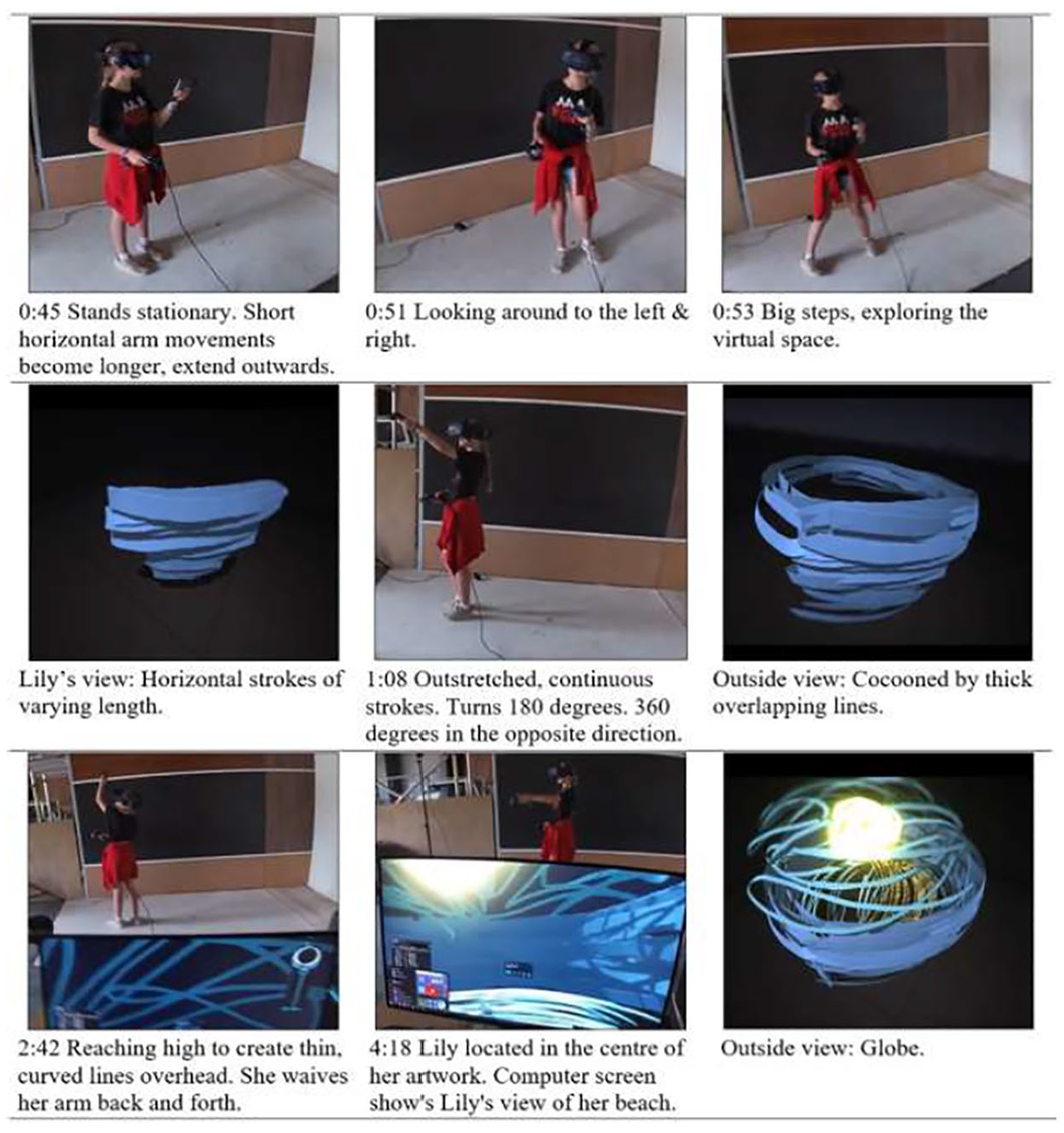

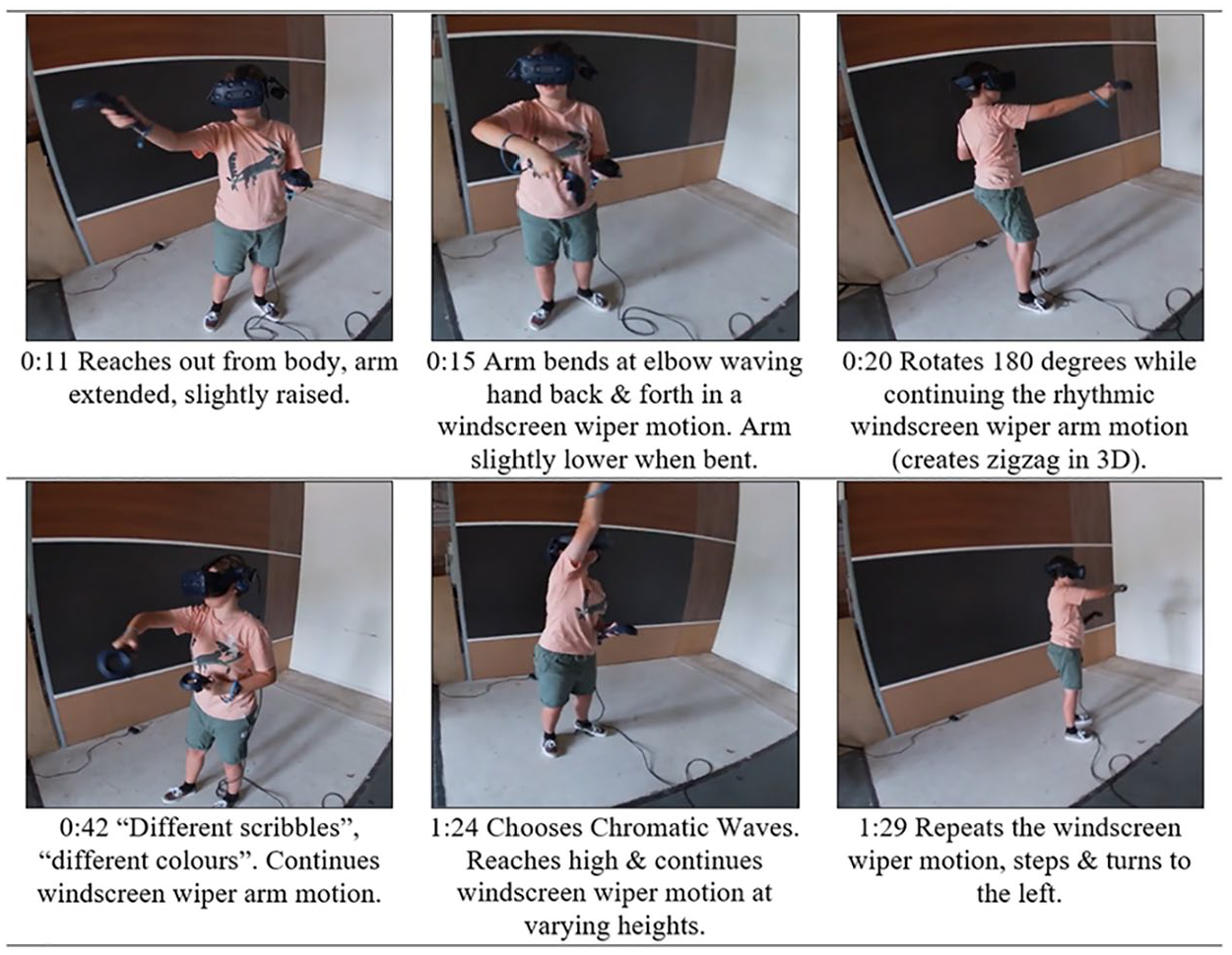

Essential to the students’ creative multimodal designing in the virtual mode were the patterns of whole body meaning-making through sensory-motor interactions. Virtual representation involves bodily experience that is simulated in the virtual environment, involving multiple aspects of body in action. The user’s body movements were simulated in the virtual space to generate the contours of the virtual designs. A sample of the broad variety of head, torso, haptic, and gross-motor movements of the limbs that were integral to meaning making are shown in Figure 8 below, which is a multimodal transcript combining screen shots from Lily’s video record, research observations, and virtual painting screen capture.

Figure 8. Lily’s head and body movement.

In Figure 8, Lily drew on a variety of sensory apparatus, such as vision, and movements of the head, body, hands, and feet. Lily used an array of haptic tools for designing, with sensors responding to both large and small arm movements, and controls supporting the selection of brush size. As shown in Figure 8, Lily used outstretched arm movements combined with 360-degree turns of the body to apply a thick, blue, papier-mâché effect depicting a spherical base of overlapping lines, then contrasted this pattern with the thinner “velvet ink” interwoven lines wrapped around herself from a perspective within the virtual display.

Using several large and important 360-degree rotations across this short sequence, Lily created a spherical pattern of lines to immerse herself virtually in a three-dimensional, tropical ball with palm trees and a bright sun: “a relaxed beach scene.” The curved surface was formed of brush strokes that extended in a fixed radius from her body. The potential of virtual painting for engaging large, gross-motor movement contrasts with many digital design and representation tasks that involve fine-motor movements using a keyboard, mouse, controller, or touchscreen (Walsh & Simpson, 2013). It similarly contrasts with the kinds of haptics used in many nondigital representational tasks in schooling, such as writing and drawing (Minogue & Jones, 2006). The gross-motor movement might appear to be comparable with nondigital art forms, such as painting large murals or sculptures, yet users of VR move while experiencing “presence,” the subjective experience of being in one place while physically situated in another; here, of being inside the three-dimensional painting (Kavanagh et al., 2017). The immersive virtual environment directly simulates the user’s gross-motor movement, while similarly requiring fine-motor dexterity with the controls.

Head movements were important in the immersive space, enabling participants to attend visually to one part of the design at a time and supporting gaze, co-ordinated with haptic action. For example, Lily moved her head to follow her hand movements, occasionally pausing her painting and turning her head to view the three-dimensional design from multiple angles (see Figure 8, frames 3 and 4). Nate similarly spent several moments rotating his head and body to observe his completed design from different angles in the virtual space. Such head movements were necessary because the entirety of the 360-degree, immersive environment could not be within full view of the participants, with objects sometimes being behind, beside, above, or below the child’s line of sight, requiring head movements to bring into view the actions of the hand.

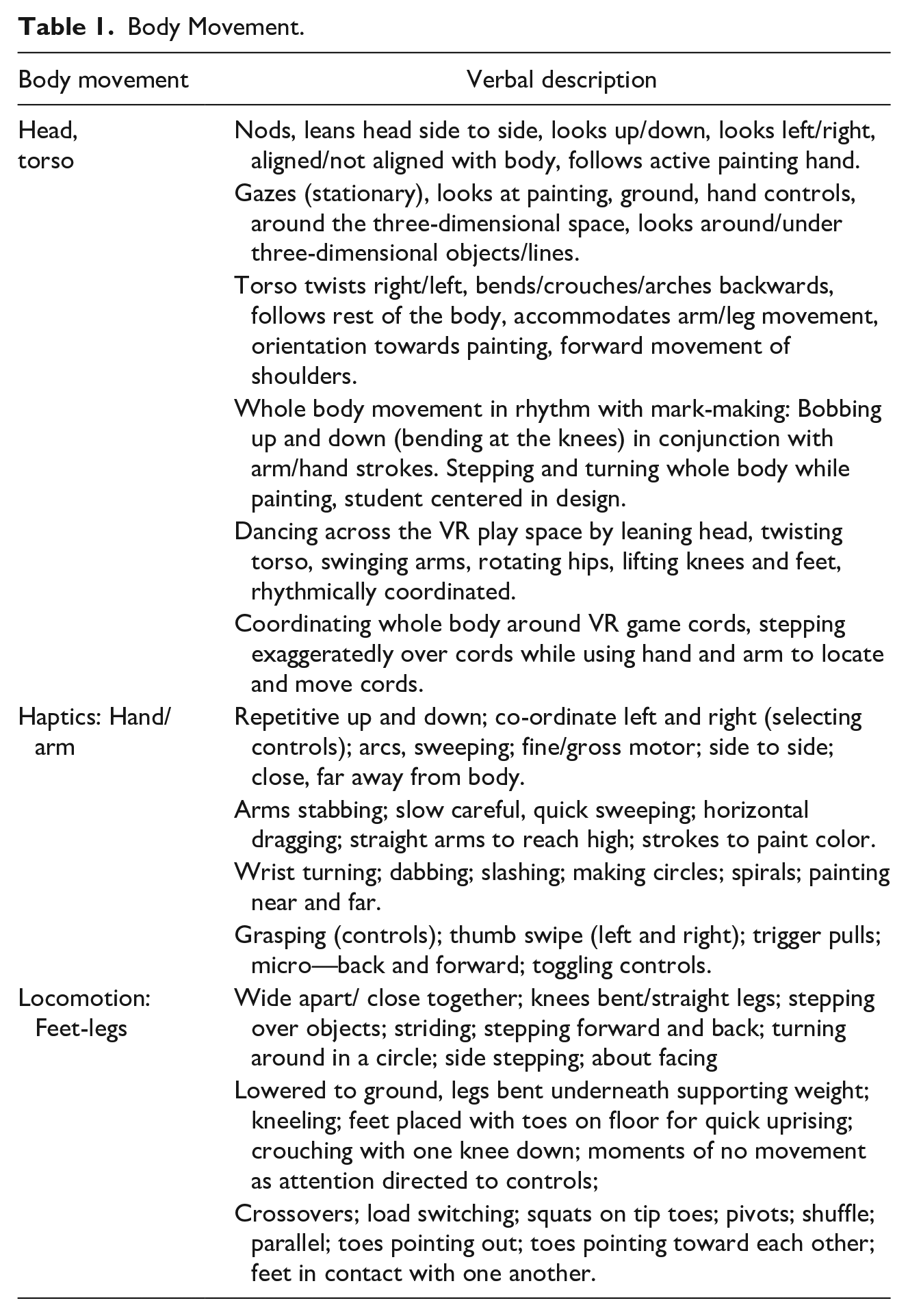

Table 1 collates the array of body movements grouped into three main categories—head and torso, haptics, locomotion—observed from the video analysis across student cases. While these are separated here for analysis, note that students would often combine embodied resources, such as haptics with head movements (see Table 1).

Table 1. Body Movement.

Having discussed the role of whole body movement in immersive representation, the next section focuses specifically on in-air haptics and movement of the legs (locomotion), because these emerged as the most salient and wide-ranging sensorimotor resources for virtual painting.

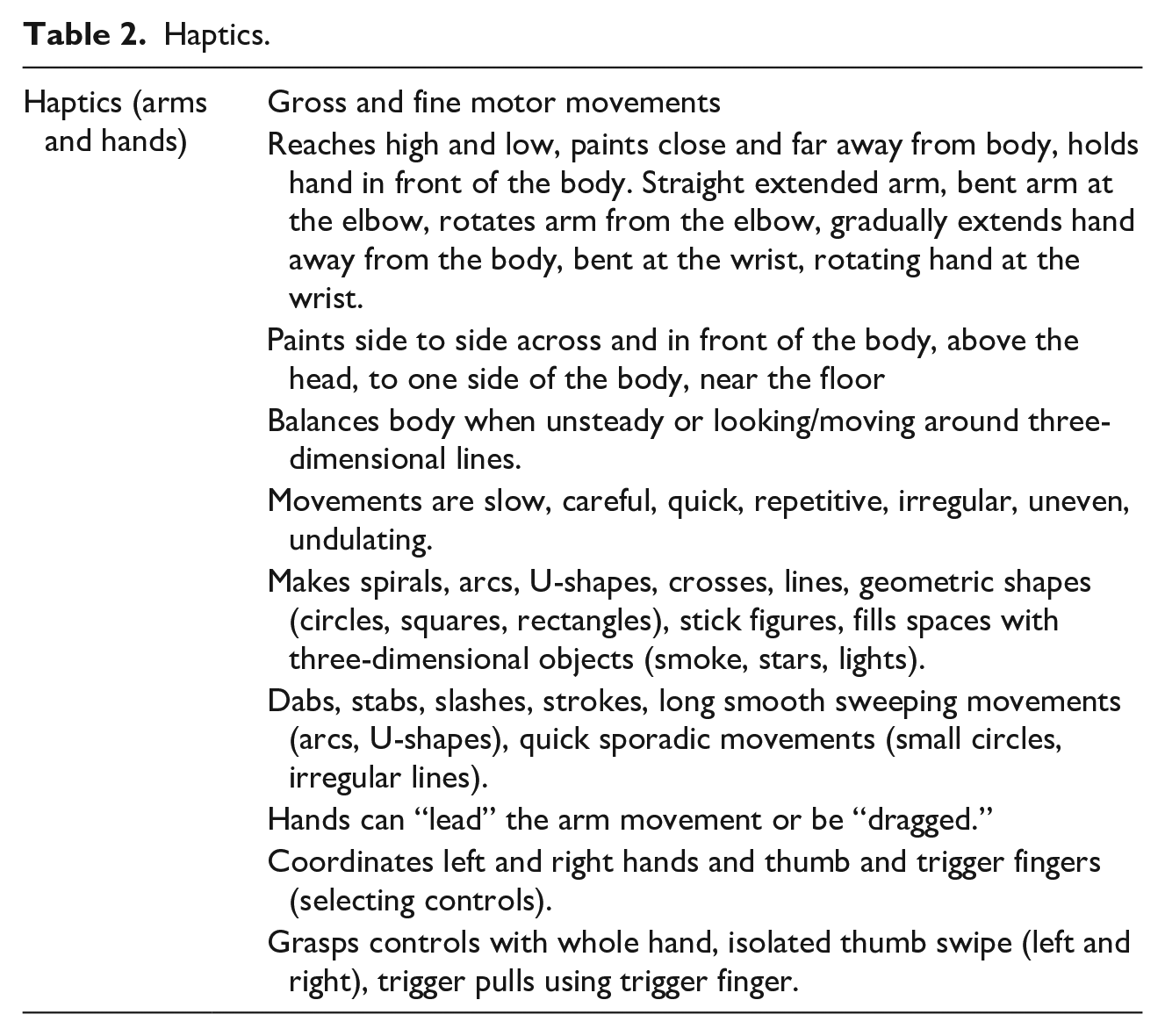

Haptic potentials

Haptics, that is, touch and movement of the hands (Minogue & Jones, 2006), was continual and fundamental to multimodal composition in VR. We can distinguish between types of touch or haptics, which in the case of virtual painting is seen as transformative touch, because hand and arm movements are instrumental in shaping semiotic material (Friend & Mills, 2021). Virtual painting also involves auxiliary touch or the manipulation of mediating tools (Friend & Mills, 2021). The hand controls or virtual brush selections constitute the mediating tools in a similar way to various conventional brushes.

Viewed within the virtual environment, the virtual hand controls appeared to levitate, disconnected from a visible or virtual body, but moving in response to the user’s physical action. Motoric and perceptual action of the arms and hands is the basis for representing ideas in the virtual world. A variety of dramatic, haptic sequences were central to students’ designing, as illustrated in an excerpt of Mia’s designing shown in Figure 9 below.

Figure 9. Mia’s haptics.

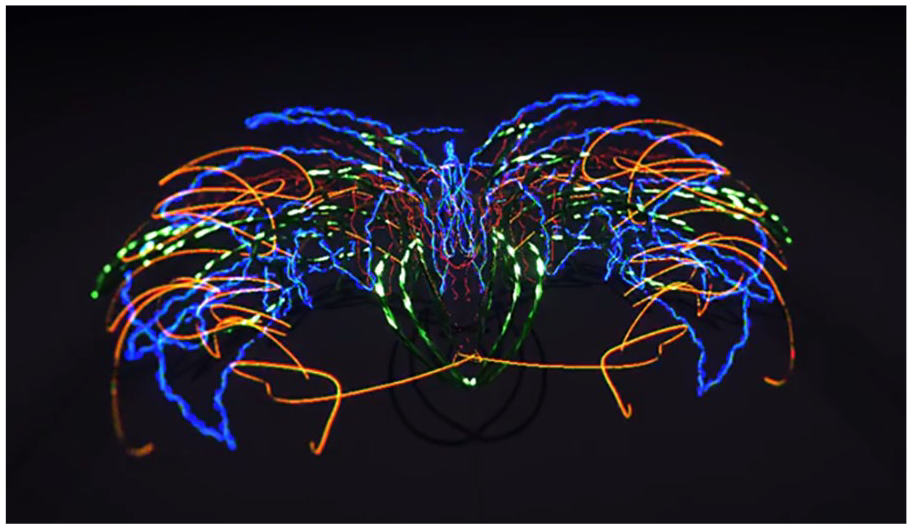

In the example above, Mia drew on a combination of visual and haptic senses to depict “crazy.” She used wide, rhythmical waving motions, extending her dominant painting arm outward and then bending her elbow to bring her arm back, to produce repeated, neon, radial, arced lines in a curved pattern that was consistently equidistant from her body. Her body was centered in her room-sized 3D design, represented below as a 2D screen shot (see Figure 10).

Figure 10. Virtual painting “Crazy” by Mia.

Mia continued these windscreen-wiper motions as she gradually turned while stepping to the left, her hand higher when fully extended and lowered to waist height when bent. This and similar turns, combined with the use of muscle memory—the ability to reproduce a movement without conscious thought—enabled her to mirror repeated lines that move outward from both sides of her body to create strong symmetry. Haptics is seen here to be central in producing large-scale virtual representations in a three-dimensional space, while haptics has been previously acknowledged for its role in apprehending spatial phenomena (Klatzky & Lederman, 1988).

Haptics was not used alone but was integral with vision, since the student’s head movements and gaze were focused in the same space (unimanual), as occurs when writing or drawing by hand (e.g., see Mia’s head movements in Figure 9), rather than divided across a visual space and a motor space. Bimanual tasks, such as typing on a computer keyboard, create a split that occurs because the eyes and hands are not focused on the same visual space. The eyes are mostly focused on the screen that is separated from the work of the fingers on the keyboard (Mangen & Velay, 2010). In contrast, new forms of haptic and visual activity of the body in VR were used in a unimanual and orchestrated way, integral to representation using VR painting. Unimanual composition, such as in handwriting, occurs where the eyes and hands work in the same space. Unimanual composition has been found to be superior for learning letter formation and recalling written information, compared to bimanually typing on a keyboard in a divided visual-motor space (Mangen & Velay, 2010).

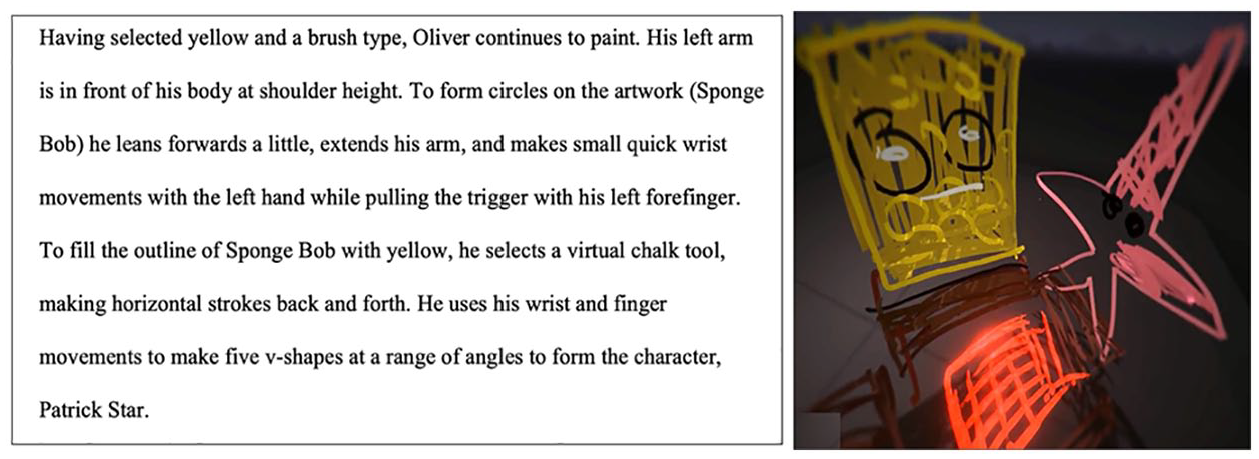

Haptic patterns were often subtle, quick, fine-motor movements, but unlike the use of the fingers when writing, or when using touch screen devices, such as phones and tablets, fine-motor tool-use to emit virtual ink was often combined with amplified action of the hands and arms to produce three-dimensional flowing lines and special effects throughout the 3 × 3-m² space. Others used a smaller range of movement focused in one area of the room. This is important because more movement, or larger body movement, is not better for meaning making in VR than less extensive, fine-motor movement; rather, the sensorimotor action, large or small, is always integral to the representational task. This is illustrated in a video observation of Oliver, whose design referenced Nickelodeon’s SpongeBob SquarePants (see Figures 11 and 12).

Oliver using haptics to create an immersive painting.

Sample description from Oliver’s video and virtual painting.

A segment of the video analysis corresponding to the event pictured in Figure 11 illustrates the importance and variety of Oliver’s fine-motor movements involving his arms, wrists, thumbs, and fingers to control the virtual brush.

In these and other examples, haptics in the virtual environment included grasping and supporting the weight of the controller, applying varied pressure with the thumb and fingers, making contours using the arms in the air, and swiping laterally with the thumbs. Small rotations of the wrist produced circles, in this case, to depict the texture of SpongeBob, and five V-shapes to create the points of a large outline of a star—Patrick Star. Across the student cases, the variety of haptic resources for representation was extensive, as indicated in Table 2.

Haptics.

In addition to the real-time dynamic sequence of haptic movement, users experienced sound effects in Tilt Brush™ played in time to the haptic movements of the artists. The participants described these audio elements as helpful, because the timing and shifts in this sonic feedback were coordinated with the rhythms of their haptic action and specific visual representations. For example, the use of “velvet ink” produced a different sound effect than the “oil paint” brush, and when the user stops moving and emitting ink the sound effect stops. This gave the visual design a degree of sonic substance and variety, contributing to the immersive feel of the virtual experience.

This combination of sonic, visual, and haptic sensation can be described as sensory orchestration (the use of the senses together, involving multisensory processing), which was integral to designing in the virtual world (Howes, 2014). Human attention is attracted to properties that are shared across multiple modalities, not to visual information alone. Consistent with existing research, this multimodal attention enriched the students’ representation of ideas because visual learning is often stronger when supported by task-integrated sound and haptics than when visual cues operate alone (Shams & Kim, 2012; Skulmowski & Rey, 2018).

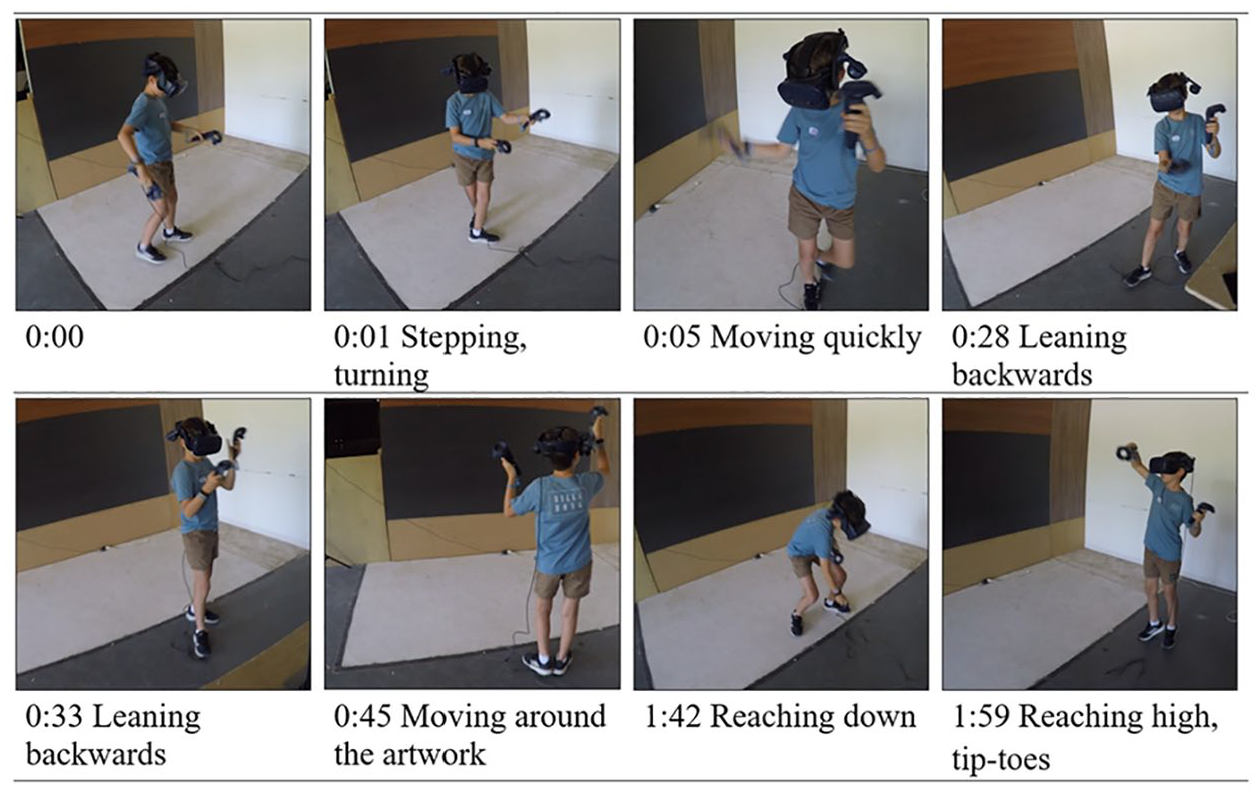

Locomotive potentials

Movements of the students’ feet and lower limbs were typically frequent, interrelated with other body movements. Locomotion guided the users’ design pathway, body positioning, and the length, breadth, and height of the design, such as when standing on toes to paint up high. The children would use their feet to anchor themselves strategically to create parts of the design before moving to a new area, bending at the knees to paint virtual forms that were below mid-body height. Interestingly, locomotion and the movement of feet have historically been relegated outside theories of meaning making (Mills et al., 2013). Virtual text creation was not enacted while seated, but was stepped along the ground. For example, Lily’s feet were observed to be close together, wide apart, parallel, or turned out, facing equally, or out of either side. Her footwork sequence supported the full 360-degree rotations to create body-encircling, three-dimensional forms.

The use of varied footwork was clear across the cases, such as Hannah, who created a spooky house. At times she stood on tip-toes and reached high above her head, left hand extended to create a taller design at the outer limits of her reach. At other times, both feet were planted firmly on the ground. Lucas, who named his design “bright” (Figure 13), moved confidently around the virtual space, optimizing the full 3 × 3-m² area (Figure 14).

Virtual painting “Bright” by Lucas.

Lucas’ footwork sequence.

Lucas stepped while turning (Figure 14, frame 2), moving quickly (frame 4), leaning backward (frame 5), then sideways (frame 6), and backwards (frame 7) to form the mountain peaks. He then planted his feet wide apart and lowered his body from the knees to create sweeping neon swirls, then reached up high on his tiptoes, feet closer together, to create sparks in the sky (see Figure 14 below).

Physically active and working fluidly with the controls, Lucas’s feet led purposefully through the design. It is along the ground that sign-making or representation was paced out, designs that were rhythmically resonant with the mood of each creative text. Lucas’s finished virtual text was a rocky mountainous landscape and a volcano erupting with sparks of vivid color. The creative, immersive text was made on the move, accompanied by multiple overlapping, curved, and flowing lines that followed Lucas’s extensive and sweeping hand and arm movements. The brightness and lightness of the sparks contrast with the darker, shaded landscape below, serving as a focal point in the artwork.

The students conveyed their creative ideas using digitally mediated, visual, and motoric resources to create rich, immersive, and three-dimensional representations of emotive themes, such as happy, creative, excited, crazy, calm, relaxed, sad, and secure. There were repeated occurrences in which body movements embodied the emotions that the participants aimed to represent in their virtual designs, such as when Isabella danced, spun, and bobbed up and down rhythmically, her design standing for a “crazy” mood. Similarly, Ryan chose “crazy,” and his overall body movement was dynamic, bobbing up and down from the knees in time with his arm movements.

Sophie’s mood was anger and frustration, and her rigid, tightly controlled, and repeated vertical arm movements from the elbow reflected this mood. The speed, force, and direction of arm movement have been associated with different primary emotions of joy, sadness, and anger in dance movements (Sawada et al., 2003). The representation of emotive themes in VR often involved the students’ patterns of emotive movement that were directly relevant to the emotion conveyed in their representations. For example, Sophie created art with deliberate inconsistencies and left sections of the coloring incomplete to denote a “source of frustration.”

Jackson chose the theme of happiness, which was embodied in his quickly changing, varied, open movements at various heights to create a luminous landscape. Georgia also painted happiness, using complete body turns, arm movement twists, and with arms waving excitedly above her to make glowing effects in the virtual space. She then created circular loops with her wrists, moving her body happily as she bobbed her wrists up and down, producing golden waves interspersed with zig-zagging red, orange, and green effects. The students’ dynamic, bodily actions were emotionally task-integrated—a variety of distinguishable emotive body movements were aligned with their self-chosen mood themes. The students’ creative representation of moods through virtual painting involved recurring kinesthetic patterns of bodily action or proprioception that contributed to a form of embodied communication of emotive themes through this hybrid, virtual mode.

In addition to the participants’ observable and immediate body movements, learners represented places, practices, and events in the VR environment that referenced previously experienced and internalized bodily experience in the real world. For example, to show sadness, Liam painted a boy in a monochromatic blue mist confronted with the taste of broccoli: “I don’t like broccoli and he has to eat it.” Olivia created and inhabited a spherical cubby house for “secure,” while Lyla depicted “scary” emotions with a haunted house of Halloween-themed purple coils and red eerie smoke. Jayden depicted a fire to warm himself in the cold, wintery environment beside his snowman. The students drew on bodily experiences and interpretations of the world, including memories of tastes (broccoli), recreational experiences (cubby), customs (Halloween), and inferred temperatures (cold snow/hot fire). Table 3 presents a collated list of locomotion observed across the cases.

Locomotion.

Constraints: Immateriality, Disembodiment, and Somatosensory Mismatch

Virtual designing in the three-dimensional world was not without constraints. Frequent themes that emerged from the data analysis, and which are explored in this section, were associated with the immateriality of the virtual mode, a sense of disembodiment within the virtual environment, and somatosensory mismatches between virtual and real worlds. Somatosensory refers to physical sensations perceived anywhere in the body. These constraints intersected in ruptured ways with the participants’ creative and representational flows—skilled positionings between the perceiver and the world (Cox, 2018). It is noted here that participants were initially given the opportunity to predict the creation process by watching others first, given research of embodied cognition showing that the mind can visualize or rehearse proprioceptive action mentally. Perception is closely tied to thought processes so that objects can be perceived by first creating mental images of how they could be tangibly manipulated (Gibbs, 2005).

An observed limitation of the immateriality of the virtual mode was the difficulty for most students to locate their actions precisely within an environment lacking physical corporeality, a baseline, a canvas, or surface of painted objects in the virtual world. This lack of material substance made it difficult for most users to seamlessly relocate their virtual brush to connect or continue lines. For example, Lachlan tried to fill the surface of a shape he had drawn with color, but the colored lines hovered in front of the shape, like a new layer, rather than filling the outlined shape. The children’s paintings of enclosures were often messy, multilayered, and overlapping by default, rather than neatly joined and sealed, because there was no material canvas or objects for the virtual brush to touch or press against. Similarly, Olivia was inexact when locating herself inside and outside her virtual dome, experiencing the related difficulty of positioning her body within the three-dimensional virtual enclosure without pressing against any physical structures to confirm her position.

Another key constraint was that the infidelity or incongruity between the virtual world and the tangible objects unseen from view in the real world created moments of discontinuity in the realism and immersive qualities of the virtual world, a somatosensory mismatch between virtual and real-world environments (Kavanagh et al., 2017). In the VR environment, the user sees virtual hand controls that appear to levitate in a disembodied way, since there is no virtual person depicted in this world, and users cannot see their hands.

A related issue of incongruity between worlds was that students would come into contact with unseen walls or occasionally swipe at the sensor tripods in the real world, since their view of the physical world was obstructed by the headset. Others, such as Jackson, Lucas, and Chloe would briefly turn, step, or pause to untangle the power cords of the headset that they could feel draped over their shoulders and legs at times. This entanglement occurred when students enveloped the virtual paintings around themselves, such as Chloe’s writing of her name in the air, both virtual letters and the tangible cords encircling her body.

The process of taking up virtual designing was directly responsive to feeling and action in the virtual environment. For example, when students independently executed manual explorations of the virtual world using the haptic tools, they began to show more advanced painting abilities, attending to different sensory properties of objects. A clear example is the participants’ varied take-up of the potential to paint three-dimensionality. Imagining or portraying solid objects from all angles proved difficult or inefficient for some students, who at times resorted to using two-dimensional, schematic drawings to convey concepts and human forms more clearly and quickly.

For example, Lachlan painted a two-dimensional square to represent a birthday cake, filled it with color, and added a series of straight lines protruding from the top of the cake for candles. Lachlan explained that he was using two-dimensional forms to save time. Ava painted a two-dimensional rectangle to represent a fridge using a luminous thin green line. Stepping forward to get closer to the fridge, she could view the drawing from an unfamiliar perspective, and then realized the three-dimensional potentials of the virtual painting mode. She quickly erased the rectangle and began to paint a fridge with a greater sense of depth, adding an open door angled to create perspective. Ava then applied several linguistic and drawing conventions for two-dimensional contexts, writing the words “no milk” inside one of the fridge sides, and drawing a stick person viewing the fridge with a frown. Across multiple cases, different students were seen to misunderstand, knowingly resist, or utilize the three-dimensionality of the virtual mode.

Disrupting the design flow, many students made erasures or deletions before moving to an alternative design choice. For example, Oliver began by experimenting with a single horizontal line of virtual ink, and then at once erased it. Maddison erased her virtual design after 2.15 minutes of painting and began again upon discovering a better design choice to meet her intentions. Others, such as Olivia, made significant or lengthy erasures, cocooning herself inside a large dome drawn using thick, circular, overlapping lines. Once Olivia realized that she could move in and out of the digital design, she decided to experiment with other lines and colors. After 3.5 minutes of virtual painting, she erased the entire artwork and began again. However, as students became more proficient through trial-and-error movements, their three-dimensional representation using the tools became more automatic.

Movement was necessary to interpret the sensations that were available to the participants, and to explore the limits of the tools. The students continuously predicted the sensory inputs from their bodies, the tools, and the environment around them. Like other learning contexts, discrepancy or mismatch between the sensory input and the predicted outcomes for the virtual design (i.e., prediction error) often led to participants modifying their intentions or changing their actions (Hald et al., 2016). The sign-makers, typically standing, were enabled or limited by their body, such as height, arm span, and mobility, which influenced the size of the virtual designs. For example, a student on crutches sat on a chair rather than standing, freeing her arms to use the sensors and maintain balance while wearing the HMD. Designers of VR technology presume that the user is sighted, having full use of their limbs, particularly the dextrous use of the arms, hands, fingers, and thumbs. Such variable relations of bodies and tools point to some of the ableist assumptions that are embedded in the VR technology development (Storey, 2007).

Discussion

It has been argued, “Without our bodies—our sensing abilities—we do not have a world” (Arola & Wysocki, 2012, p. 3), a principle that equally applies to embodied action in the VR learning environment. While some might argue that this bodily engagement is so apparent that it is unworthy of attention, mainstream cognitive science exclusively attends to mental processes (Shapiro, 2011). Likewise, Mangen and Velay (2010, para 54) have critiqued a lack of attention to sensorimotor processes in New Literacy Studies: “Currently dominant paradigms in (new) literacy studies (e.g., sociocultural theory) commonly fail to acknowledge the crucial ways in which different technologies and material interfaces afford, require, and structure sensorimotor processes, and how these in turn . . . shape, cognition.”

Research of VR technologies in education, while still in its infancy, has to date drawn more attention to the benefits for social, collaborative, memory, and skill development (see, e.g., Radianti et al., 2020), overlooking the significance of sensorial engagement of the body, and its connection to virtual text production and multimodal design.

The significance of this research pertains to the understanding that human cognition, creativity, and representation are deeply rooted in sensorimotor processes, inextricably based in bodily experience (Corcoran, 2018). We know that language and mental tasks are often performed better when accompanied by relevant bodily movements, such as the use of gestures to aid speech (Broaders et al., 2007). Yet research has not previously examined the affordances and constraints of VR technologies for embodied communication (Henriksen et al., 2021). This research has demonstrated the modal affordances of VR painting to communicate concepts dynamically using an expanded sensorium that draws on a much larger range of bodily movements than conventional writing and drawing.

In particular, the findings identified an extensive array of bodily, haptic, and locomotive resources for three-dimensional, multimodal designing and representation in immersive virtual environments at a time when VR technologies are predicted to be one of the fastest-growing technologies this decade (Fernandez, 2017). Importantly, the students’ digital media compositions were produced through varied domains of proprioceptive bodily action that was not arbitrary, but was directly task integrated (Skulmowski & Rey, 2018). This involved the use of a unimanual process with a shared visual-motor space, similar in this regard to conventional painting and drawing, which provides an optimal condition for letter recognition (Mangen & Velay, 2010).

However, dissimilar to forms of text creation on either paper, computers, or smartpad-based technologies, these bodily actions were not dependent solely upon fine-motor movements of the hands (e.g., Crescenzi et al., 2014), but involved both fine-motor tactility and extensive gross-motor movement in cross-modal interaction (haptics, locomotion, vision, hearing). Kinaesthetic action involved the use of the head, torso, arms, hands, and feet, most often co-ordinating multiple movements, which is noteworthy because previous studies have shown that even much smaller motor movements, such as those involved in handwriting, spark motor activation in the brain (Haas & McGrath, 2018).

Students’ thought and actions were never incidental to the creative work, while virtual designing depended upon varying types of muscular experience, feeling their way into an awareness of kinaesthetic, three-dimensional forms that were visible within the virtual experience, forms that possess no physical tangibility or material substance. Movement was the very expression of their creative ideas, mediated by VR technologies. Their multimodal designs flowed from an array of expressive body movements that often tacitly communicated their self-chosen emotive themes or moods that sparked motor activation.

At the same time there were constraints for virtual designing associated with the immateriality of the mode, some aspects of perceived disembodiment within the virtual environment, and somatosensory mismatches between virtual and real worlds. This contributed to difficulties for some students painting three-dimensionally, multiple erasures and false starts, and distracting sensory information on the skin from unintended contact with real world objects that were hidden from view in the virtual environment.

In sum, the increasing availability of VR technologies for the creative representation of ideas generates different possibilities for embodiment in multimodal communication, including large body movements and the engagement of multiple senses. VR technologies may not yet be accessible to all teachers, and more critically, any integration of technology can be used to simply perpetuate old pedagogies in a new guise, become time-fillers, or be used for the passive transmission or mechanistic rehearsal of existing knowledge (Haas & McGrath, 2018). However, the present study has demonstrated that students created virtual texts that involved a broadened range of cross-modal attention in VR environments with novel forms of creativity. The three-dimensional, immersive environments involved the students’ use of multiple senses in creative action through a currently underexplored embodiment in VR texts.

A key implication is that the future of technology will continue to open different possibilities for bringing together digitally mediated forms of perception and sensorimotor action using multiple senses in communication and creative media production (Lin & Johnson, 2021). This brings new challenges and opportunities for understanding, interpreting, and researching new forms of three-dimensional multimodal representation in virtual simulations, classrooms, and written communication contexts. Composing virtual texts moves beyond print in ways that develop new kinds of literate identities (Vasudevan et al., 2010), with new implications for understanding language and embodied cognition (Gibbs, 2005).

A further implication is that worlds of knowledge are now often multisensory environments, both digital and nondigital, that are more powerfully perceived and understood through multiple senses (Shams & Kim, 2012). The sensory and embodied nature of VR representation calls for a significant shift in the way text creation has conventionally been taught and conceptualized in communication studies and education, a move that holds significant promise for extending the boundaries of current research.

Footnotes

Acknowledgements

The authors acknowledge Mark Williamson (Big Picture Industries Inc.), Dr Lesley Friend (research assistance), and teachers at Silkwood Primary School who collaborated in the implementation of this research. We acknowledge Professors David Howes, Jim Gee, and Theo van Leeuwen for their contribution to theory development and dialogue in the founding of this project.

Author Note

The views expressed herein are those of the authors and are not necessarily those of the Australian Research Council.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by an Australian Research Council Future Fellowship awarded to Kathy A. Mills (FT180100009), funded by the Australian Government.