Abstract

This study examined whether answering focused explanative questions during pauses in an immersive virtual reality (IVR) lesson on pipetting procedures could enhance learning. The goal was to extend a generative learning activity that is known to be effective for declarative knowledge with conventional media to the context of procedural knowledge with immersive media. College students with limited pipetting experience were instructed on how to use pipettes in IVR, carried out a serial dilution task in IVR using virtual pipettes, then carried out an in-person serial dilution task using real pipettes, and finally took a test of their knowledge about pipetting. Students in the explanation group were asked to remove their head-mounted display and type responses to explanative questions during five pauses in the IVR lesson, whereas those in the no explanation group simply pressed a button to continue the lesson at each pause. The explanation and no explanation groups did not differ significantly in performance on the virtual or in-person serial dilution tasks or on the knowledge test. The results highlight the challenges of transferring generative learning strategies that are successful in teaching declarative knowledge to the understudied domain of procedural knowledge in immersive environments. The logistics of the generative learning activity may have been distracting.

Objective and Rationale

Learning procedural skills is essential in many STEM fields, yet mastering these skills through conventional media can be challenging because learners often lack opportunities to physically engage in the procedure. Immersive virtual reality (IVR) can address this problem by allowing learners to actively practice procedural tasks in a simulated environment with assistance from an instructor. However, IVR environments can introduce unique challenges, such as distractions and cognitive overload, that may hinder learning (Lawson & Mayer, 2024; Mayer et al., 2023; Parong, 2022). A possible solution is to incorporate a generative learning activity, an approach that has been shown to be effective mainly for learning declarative knowledge in non-IVR environments such as onscreen multimedia lessons or paper-based lessons (Fiorella & Mayer, 2015, 2016, 2022). A generative learning activity is a behavior that a student engages in during academic learning with the intent to improve the learning outcome (Fiorella & Mayer, 2015, 2016, 2022). Examples include taking notes, highlighting, outlining, writing a summary or explanation, answering practice questions, explaining to others, or acting out the lesson with one's body (Dunlosky et al., 2013; Fiorella & Mayer, 2015; Miyatsu et al., 2018). Although explanation has been shown to improve declarative learning outcomes in non-IVR environments, its potential for enhancing procedural learning in IVR remains underexplored. The present study addresses this gap by investigating whether asking learners to answer a series of focused explanative questions during pauses in an IVR lesson improves procedural learning of pipetting. This value-added approach extends prior research showing that explaining during pauses is effective for learning declarative knowledge with conventional media (e.g., Lawson & Mayer, 2021) by testing whether it can be successfully applied to learning procedural knowledge with immersive media.
We chose pipetting as the to-be-learned procedural knowledge because it represents a fundamental procedural task (i.e., a step-by-step sequence of manual operations) that is commonly used in some STEM fields and because we had access to the IVR source code, which has been used in previous research (Klingenberg et al., 2024; Petersen et al., 2022).

Background

The distinction between declarative knowledge and procedural knowledge is long-standing in the science of learning. Declarative knowledge (or knowing what) refers to knowledge of facts, concepts, and principles, such as knowing what a numerator and a denominator are, whereas procedural knowledge (or knowing how) refers to knowledge of procedures and strategies, such as knowing how to carry out long division (Anderson et al., 2001; Mayer, 2021). This distinction parallels the distinction between conceptual understanding and procedural fluency as two strands underlying mathematical proficiency (Kilpatrick et al., 2001) and between knowledge and skills in taxonomies for training (Clark & Mayer, 2024). The distinction between virtual and conventional media is more recent in the field of educational technology (Parong, 2022). Learning in IVR refers to learning while wearing a head-mounted display that creates a feeling of presence, that is, the experience of being in a place that is actually computer generated (Blascovich & Bailenson, 2011; Witmer & Singer, 1998). Learning with conventional media refers to learning by viewing a screen on a desktop computer, laptop computer, tablet, or smartphone, or even learning from paper-based materials such as a book (Clark & Mayer, 2024).

Immersive learning environments are increasingly used for skill training (Clark & Mayer, 2024; Parong, 2022) and procedural training (Jongbloed et al., 2024). IVR may be particularly well suited for procedural learning because it allows learners to repeatedly practice complex, hands-on tasks in a safe, controlled environment before applying them in real-world settings (Jongbloed et al., 2024). In IVR, learners can safely make errors, receive immediate feedback, and refine their skills in an interactive 3D environment. Research on procedural training in IVR is diverse and disjointed, with a dearth of value-added studies aimed at identifying how to improve learning in IVR (Jongbloed et al., 2024). Recent work on procedural training in IVR covers topics as varied as surgical procedures (Turso-Finnich et al., 2023), fire-fighting safety procedures (Calandra et al., 2023), car detailing (Tai et al., 2022), energy inspections (De Lorenzis et al., 2023), and golf skills (Markwell, 2023).

In a recent review, Jongbloed et al. (2024) found 16 studies that compared learning procedural skills in IVR versus with conventional media, yielding a medium positive effect size favoring IVR, especially for transfer tasks. Although the literature thus contains some media comparison studies involving procedural skills (i.e., studies comparing learning in IVR versus with conventional media), Jongbloed et al. (2024) found only one value-added study (i.e., a study comparing learning from a VR-based lesson versus from the same lesson with one feature added), and it did not involve procedural learning.

Generative learning strategies, such as explanative questioning, can be beneficial for procedural knowledge because they promote active cognitive engagement, which aligns with the ICAP framework's constructive mode of learning (Chi & Wylie, 2014). In procedural learning, asking learners to produce brief explanations of portions of a lesson can help them understand why each action is performed, anticipate future steps, and recognize errors. This deeper understanding of the procedure can improve their accuracy during the task and potentially their ability to apply the skill in novel settings. By prompting learners to pause, reflect on the procedure, and explain concepts they have just learned, explanative questioning may help mitigate the cognitive demands of distractors and perceptually rich stimuli that can hinder learning. This strategy may also promote deeper mental representations of the task, which could reduce errors in subsequent pipetting tasks and improve procedural knowledge test performance by helping learners stay focused and avoid feeling overwhelmed. The present study addresses this gap in the literature by testing whether adding a generative learning activity improves learning of procedural skill and knowledge about pipetting in IVR. Although generative learning activities have been shown to be effective with text-based learning (Fiorella & Mayer, 2015, 2016), there is a need to determine whether and how to implement them in IVR environments.

Literature Review on Aids to Text Comprehension

In the reading literature, inserting tailored, relevant prompts to explain into a text lesson has been shown to be effective in increasing text comprehension and learning (McCrudden & Schraw, 2007). Specific relevance instructions are instructions given to readers that highlight highly specific terms or information in order to improve text learning. There are two types of specific relevance instructions: targeted segment instructions and elaborative interrogation. Targeted segment instructions are "what" questions, typically presented before or during reading, that draw attention to specific text segments and guide the reader to important information in those parts of the text. Elaborative interrogation involves "why" questions, which encourage explanatory responses that draw on background knowledge or previously encountered text. The purpose of this style of question is to improve learning by prompting readers to relate the material to their own knowledge or to previously read text (McCrudden & Schraw, 2007).

Dunlosky and colleagues (2013) reviewed various learning strategies aimed at enhancing students' academic learning, one of which is elaborative interrogation. They suggest that elaborative interrogation questions may benefit learning by helping learners integrate new information with prior knowledge. Across the studies they reviewed, the technique generally had a positive effect on learning. However, they note that most of these studies assessed learning with cued recall, matching, and recognition tasks (Dunlosky et al., 2013).

Another learning strategy that has been demonstrated to help learners engage in meaningful learning is self-explanation. Self-explanation involves prompting learners to elaborate on the content of a lesson they are learning by explaining it to themselves, either in oral or written form (Fiorella & Mayer, 2015). In their review of previous studies, Fiorella and Mayer (2015) observed that in 44 out of 45 studies, students who were asked to self-explain the lesson content performed better on subsequent tests compared to those who did not engage in self-explanation, demonstrating the benefits of including self-explanation as a learning strategy.

For example, in a study by Lawson and Mayer (2021), a computer-based animated lesson on greenhouse gases was presented to students in four segments. After each segment, participants in the explanation group were instructed to generate an explanation of the material they had just viewed, whereas those in the more passive groups (e.g., reading an explanation or not engaging in an activity) were prompted to proceed to the next segment. Actively explaining the content after each lesson segment resulted in higher delayed posttest scores than either doing no activity or only reading an explanation. Similarly, writing a focused explanation, which required participants to explain specific aspects of the lesson they had learned, also resulted in higher delayed posttest scores than just reading an explanation. Although self-explanation and elaborative interrogation differ in their implementation and application, both can be seen as similar and effective learning strategies.

Theoretical Frameworks

Generative learning theory posits that meaningful learning occurs when learners engage in appropriate cognitive processing during learning (Mayer, 2021, 2022; Wittrock, 1974). These processes include attending to the relevant information in the lesson (i.e., selecting), mentally arranging the information into a coherent cognitive structure (i.e., organizing), and relating the information to relevant prior knowledge activated from long-term memory (i.e., integrating). Generative learning activities, such as answering questions during learning in this study, are intended to prime these processes and lead to better learning outcomes (Fiorella & Mayer, 2015, 2016, 2022).

Based on previous findings supporting the use of self-explanation prompts and elaborative interrogation questions, we propose that answering content-specific explanative questions framed as "what" and "why" questions will help learners select, organize, and integrate relevant information with their existing knowledge. These questions are expected to enable learners to apply this knowledge on a subsequent posttest and to commit fewer errors in pipetting tasks. In the present study, we compare the learning outcomes of students who learn to perform a technical procedure in IVR while being asked to answer questions during five pauses in the initial part of the lesson (explanation group) or not (no explanation group). Based on generative learning theory, we offer three predictions concerning learning outcomes:

Hypothesis 1: The explanation group will perform better than the no explanation group on pipetting performance in IVR (based on the number of correctly completed tasks and number of errors committed).

Hypothesis 2: The explanation group will perform better than the no explanation group on an in-person pipetting performance test (based on the number of correctly completed tasks and number of errors committed).

Hypothesis 3: The explanation group will perform better than the no explanation group on a pipetting knowledge test (based on proportion correct).

As an alternative, cognitive load theory (Paas & Sweller, 2022; Sweller et al., 2011) suggests that the logistics of having to take off the head-mounted display and write answers to many demanding questions at five points in an IVR lesson may be distracting and thereby offset any benefits of generative processing. In this case, we predict no difference between the groups on any of the three hypotheses.

Research Questions

As exploratory issues, we also examine whether the generative activity helps learners focus on the key material and feel more involved in the lesson, as reflected in the following research questions.

Research Question 1: Will the explanation group produce a lower mean rating of extraneous cognitive load than the no explanation group?

Research Question 2: Will the explanation group produce a higher mean rating of presence than the no explanation group?

Method

Participants and Design

We used the software program G*Power to conduct a power analysis. Our goal was to achieve power of .80 to detect a medium effect size of 0.6 at the standard alpha level of .05. The power analysis recommended a sample size of 90 participants, which we achieved, so we conclude that the obtained sample is sufficient to detect medium effects. The participants were 94 college students recruited from the Psychology Subject Pool at a large university in California, who received credit toward fulfilling a course requirement. Concerning gender, 32 students identified as male, 61 identified as female, and 1 identified as non-binary. Concerning ethnic/racial category, 39 students classified themselves as White, 23 as Latinx/Chicano/a, 6 as Black, 35 as Asian, and 2 as Middle Eastern or North African. The ages of the participants ranged from 18 to 28 years (M age = 19.32, SD age = 1.81). Thirty-six students reported being first-generation college students. We originally recruited 102 participants but excluded 8 because of technical difficulties (n = 5), missing data (n = 1), or being younger than 18 years (n = 2), yielding a final sample of 94 participants. The experiment used a between-subjects design with 47 participants in the explanation group and 47 in the no explanation group.
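The G*Power sample-size target can be cross-checked with a few lines of Python. This is a minimal sketch that assumes the effect size of 0.6 is Cohen's d for a two-tailed independent-samples t-test with equal group sizes, which the text does not state explicitly; the function names are illustrative and not part of any package. It searches for the smallest per-group n whose power, computed from SciPy's noncentral t distribution, reaches .80.

```python
import math

from scipy import stats


def two_sample_power(d: float, n_per_group: int, alpha: float = 0.05) -> float:
    """Power of a two-tailed independent-samples t-test with equal group sizes."""
    df = 2 * n_per_group - 2
    # Noncentrality parameter for two equal groups of size n: d * sqrt(n / 2)
    ncp = d * math.sqrt(n_per_group / 2)
    t_crit = stats.t.ppf(1 - alpha / 2, df)
    # Probability that the noncentral t statistic lands in either rejection region
    return stats.nct.sf(t_crit, df, ncp) + stats.nct.cdf(-t_crit, df, ncp)


def required_n_per_group(d: float, target_power: float = 0.80, alpha: float = 0.05) -> int:
    """Smallest per-group sample size whose power reaches the target."""
    n = 2
    while two_sample_power(d, n, alpha) < target_power:
        n += 1
    return n


if __name__ == "__main__":
    n = required_n_per_group(0.6)
    print(f"{n} per group, {2 * n} in total, power = {two_sample_power(0.6, n):.3f}")
```

Under these assumptions the search stops at 45 per group, or 90 in total, which agrees with the recommended sample size reported above.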

Materials and Equipment

Equipment

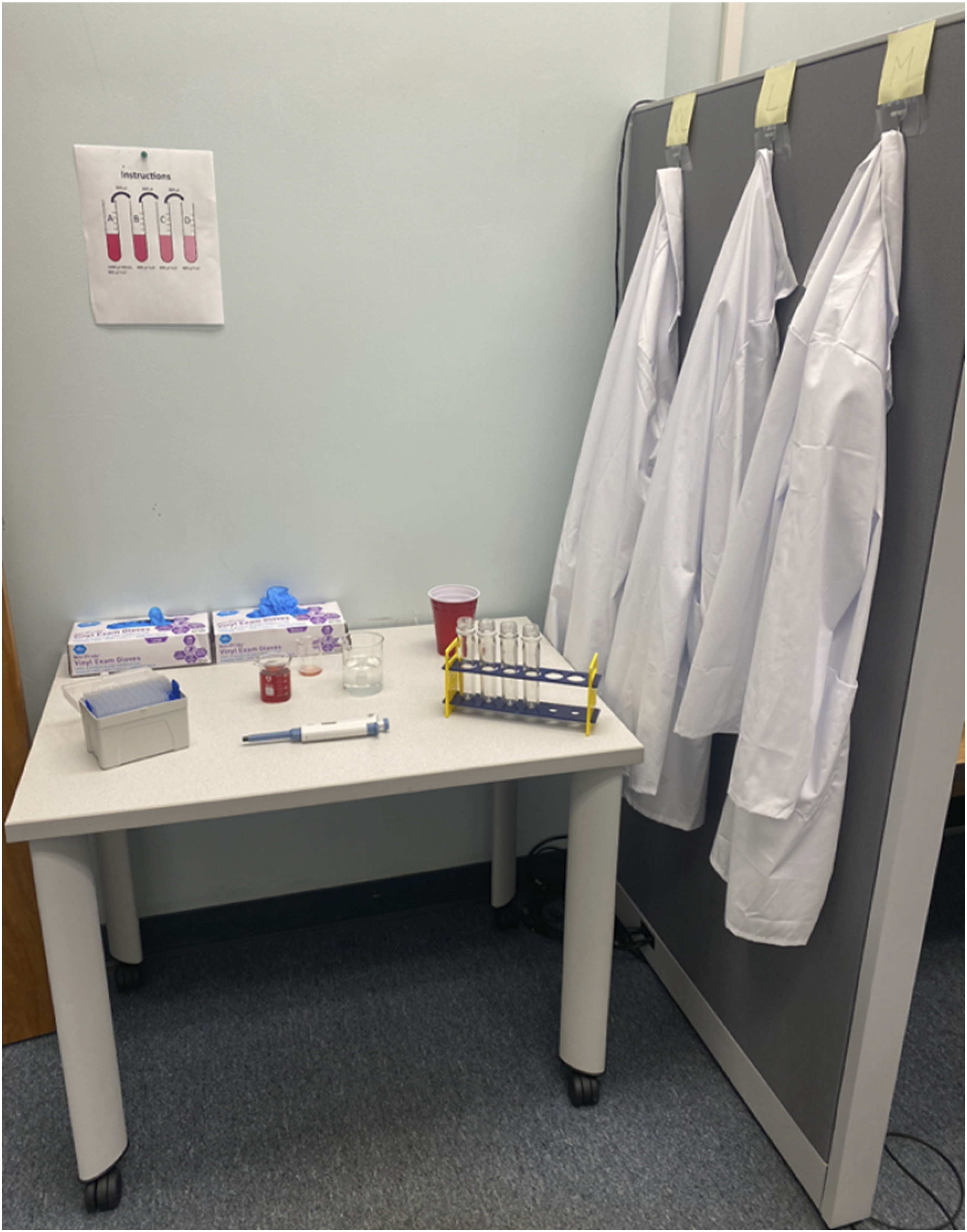

The equipment used in this experiment included a virtual reality system, a laptop computer, and pipetting equipment. The virtual reality system, used to present the training lesson, was an Oculus Quest headset from Meta (formerly known as Oculus). The laptop computer was a MacBook Pro used to collect survey responses through the web-based platform Qualtrics. The pipetting equipment was used for the in-person serial dilution task and consisted of an adjustable micropipette with a volume range of 100 µL to 1000 µL, a set of sterile pipette tips, a set of beakers, four plastic test tubes housed in a rack, a disposable cup for used pipette tips, cranberry juice and water for the solution, professional white lab coats, a box of nitrile-vinyl blend exam gloves, and a document displaying the pipetting instructions. Figure 1 shows the arrangement of the pipetting equipment and lab setup.

Figure 1. Photo of the lab setup for the in-person serial dilution task.

Prequestionnaire

The prequestionnaire consisted of seven items administered on a laptop computer via the web-based platform Qualtrics. The first five items asked about general interest and experience in science (Parong & Mayer, 2018). The remaining two items asked about prior knowledge of using a micropipette and of conducting a serial dilution.

Virtual Reality Lesson

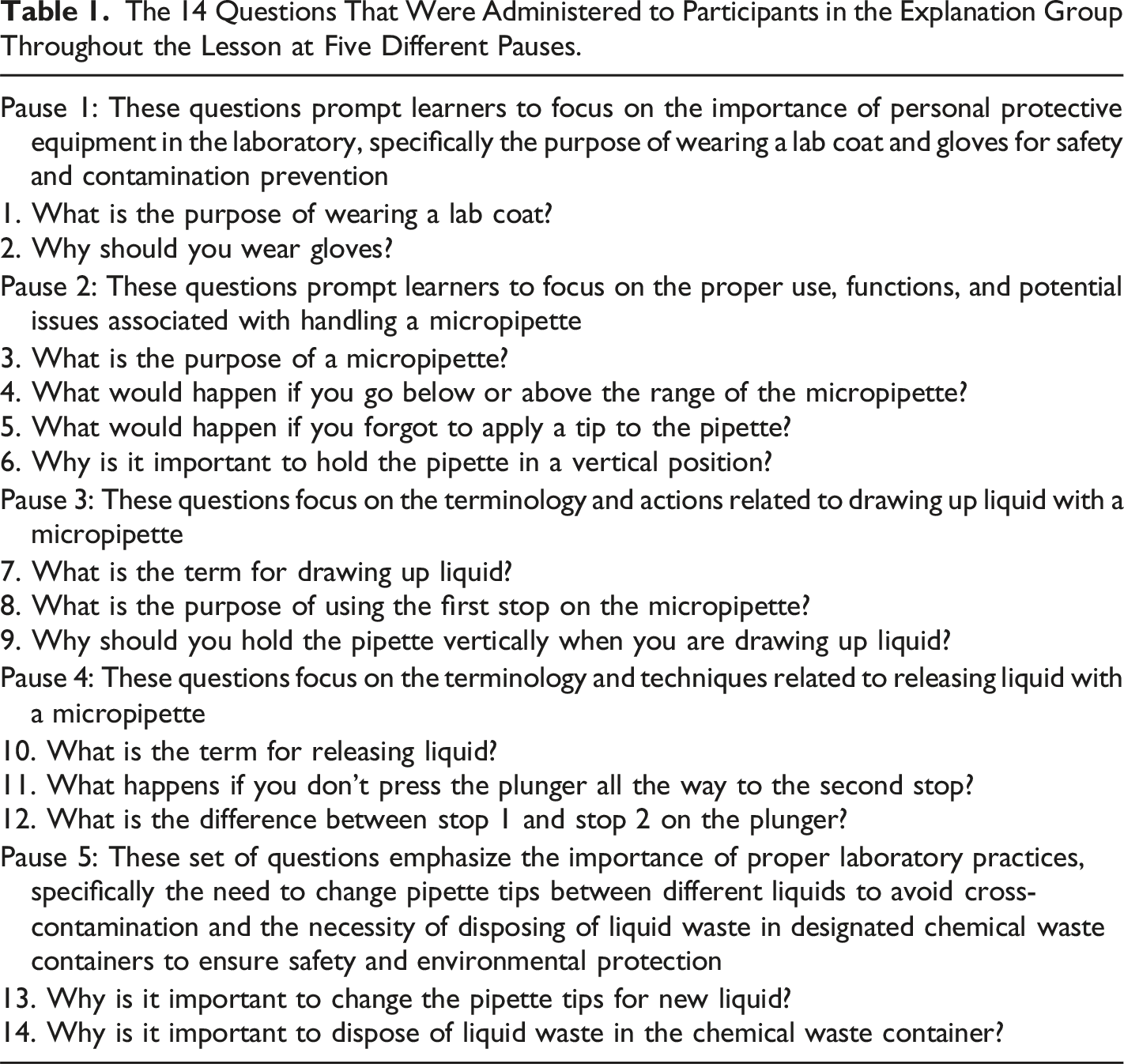

Table 1. The 14 Questions Administered to Participants in the Explanation Group at the Five Pauses in the Lesson

During each pause, the no explanation group was instructed to press a continue button on a controller to go on, whereas the explanation group was told to remove the head-mounted display, move to the laptop computer, and type answers to questions presented via Qualtrics before returning to the lesson. Table 1 lists the questions for each of the five pauses along with a brief description of the content of each segment. In total, 14 questions were administered to participants in the explanation group. The questions asked for a summary (i.e., "what") or an explanation (i.e., "why") of specific material in the preceding segment. After the experiment, researchers reviewed the quality of the responses and scored each answer on a scale from 0 points (no or minimal answer) to 2 points (appropriate answer), yielding a maximum possible score of 28. Responses that clearly demonstrated understanding of the explanatory prompt received 2 points, whereas minimal responses received 0 points.
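The scoring rule for the explanation answers is simple arithmetic: 14 questions, each scored from 0 to 2, give a maximum of 28. The helper below is hypothetical and is not the authors' scoring procedure; it assumes rater scores are stored as integers and accepts any value from 0 to 2, including an intermediate score of 1, which the text does not describe explicitly.

```python
from typing import Sequence

NUM_QUESTIONS = 14
MAX_POINTS_PER_QUESTION = 2
MAX_TOTAL = NUM_QUESTIONS * MAX_POINTS_PER_QUESTION  # 14 x 2 = 28


def total_explanation_score(item_scores: Sequence[int]) -> int:
    """Sum one participant's rater-assigned scores across the 14 questions."""
    if len(item_scores) != NUM_QUESTIONS:
        raise ValueError(f"expected {NUM_QUESTIONS} scores, got {len(item_scores)}")
    for score in item_scores:
        # Each answer is scored on the 0-2 scale described above
        if not 0 <= score <= MAX_POINTS_PER_QUESTION:
            raise ValueError(f"score {score} is outside 0-{MAX_POINTS_PER_QUESTION}")
    return sum(item_scores)
```

Validating the item count and range up front catches data-entry slips (a skipped question or a 3 typed for a 2) before they distort group means.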

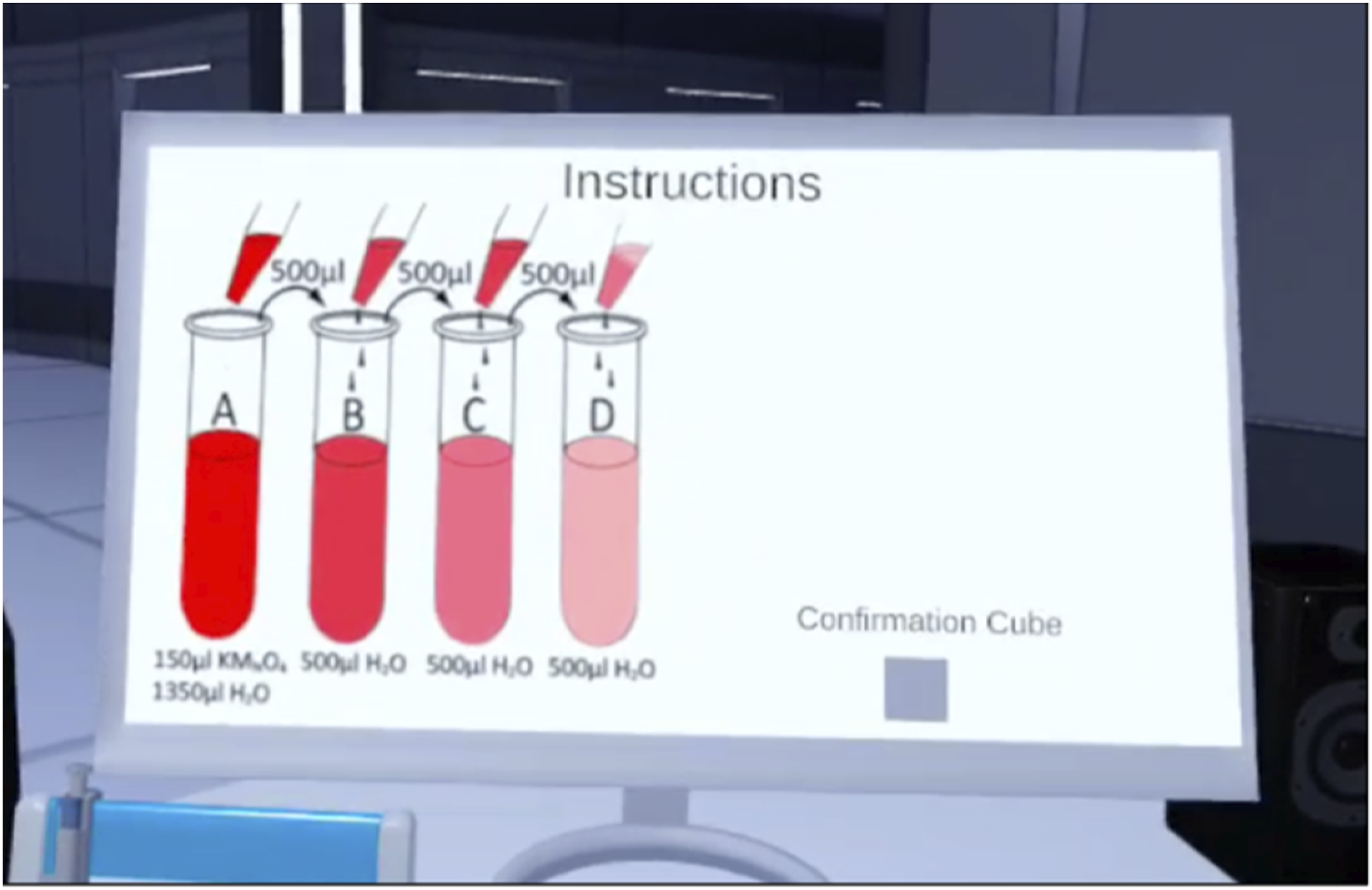

For all participants, the second section of the lesson served as the virtual pipetting test, in which they were asked to complete a four-step serial dilution by applying the skills they had learned in the first section of the training tutorial. In the virtual pipetting test, participants were shown an image of the instructions on a monitor and were told that the virtual agent would no longer be available to help them. Participants were given the same materials as in the first section of the lesson but had to complete the four-step serial dilution independently. When participants felt they had completed the serial dilution, they were instructed to press a button in the virtual lab to see feedback on their test. The feedback was displayed on the monitor and indicated whether they had put on gloves and a lab coat, the number of times they tilted the pipette, the number of times they did not use the first stop of the plunger, the number of times they did not use the second stop of the plunger, and the number of times they cross-contaminated the liquid in the beakers and tubes.

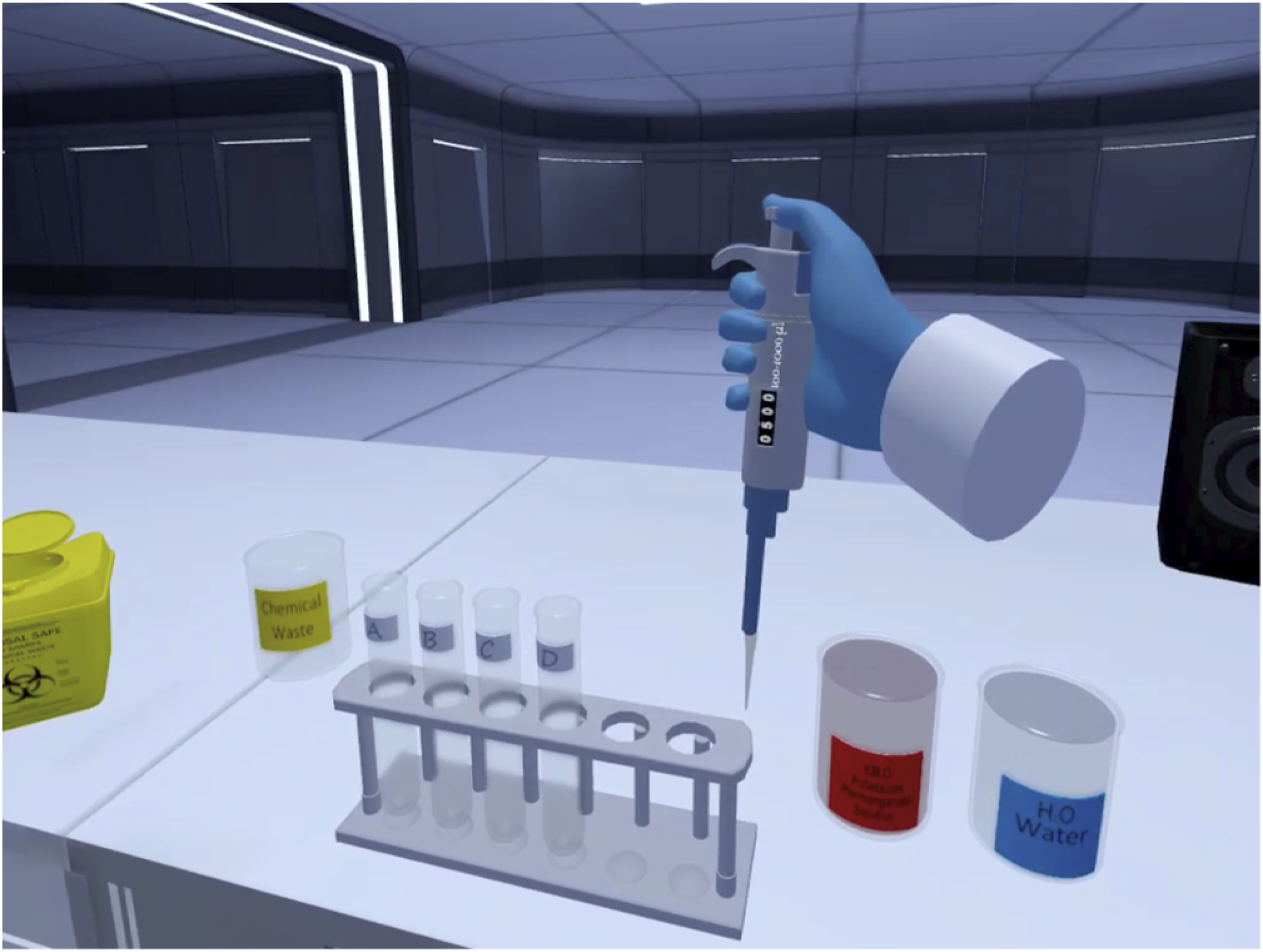

The feedback shown to the participant was also saved to a file on the virtual reality headset, from which the researcher transferred the participant data to a computer. Scoring was based on whether the participant accurately transferred the required solution into each of the four test tubes: the simulation marked each test tube as "True" (correct) if it contained the correct amount of liquid and "False" (incorrect) otherwise. The simulation also marked whether the participant put on a lab coat and gloves as "True" or "False". During the serial dilution, the simulation recorded the number of instances in which a participant committed each of the following errors: tilting the pipette tip, pressing the plunger after inserting the pipette in liquid, dispensing liquid without reaching the second stop, using a contaminated pipette tip, and improperly mixing the beaker liquid. Figure 2 shows a screenshot of the virtual pipetting simulation during the training phase. Figure 3 shows a screenshot of the virtual pipetting instructions presented in the second section of the simulation, in which participants carried out a serial dilution in IVR without guidance.

Figure 2. Screenshot of the virtual pipetting simulation during the training phase.

Figure 3. Screenshot of the instructions for the virtual serial dilution task.
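The feedback log can be reduced to the two virtual-test outcomes named in the first hypothesis: a number-correct score and a total error count. The structure below is an assumption, since the format of the file written by the headset is not described; the class, its field names, and the `parse_flag` helper are all hypothetical. It also assumes, by analogy with the in-person test, that lab coat and gloves are judged as a single True/False item, so the number-correct score ranges from 0 to 5.

```python
from __future__ import annotations

from dataclasses import dataclass


def parse_flag(text: str) -> bool:
    """Convert the simulation's "True"/"False" strings to booleans."""
    value = text.strip()
    if value == "True":
        return True
    if value == "False":
        return False
    raise ValueError(f"unexpected flag value: {text!r}")


@dataclass
class VirtualDilutionResult:
    """One participant's virtual test feedback (field names are hypothetical)."""

    coat_and_gloves: bool                          # put on lab coat and gloves
    tubes_correct: tuple[bool, bool, bool, bool]   # one flag per test tube
    tilted_tip: int
    plunger_in_liquid: int
    missed_second_stop: int
    contaminated_tip: int
    improper_mixing: int

    def number_correct(self) -> int:
        # True counts as 1 and False as 0, giving a range of 0-5
        return int(self.coat_and_gloves) + sum(self.tubes_correct)

    def total_errors(self) -> int:
        # Frequency counts summed across the five recorded error types
        return (self.tilted_tip + self.plunger_in_liquid + self.missed_second_stop
                + self.contaminated_tip + self.improper_mixing)
```

For example, `VirtualDilutionResult(parse_flag("True"), (True, True, False, True), 1, 0, 2, 0, 0)` yields 4 correct and 3 errors.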

In-Person Pipetting Test

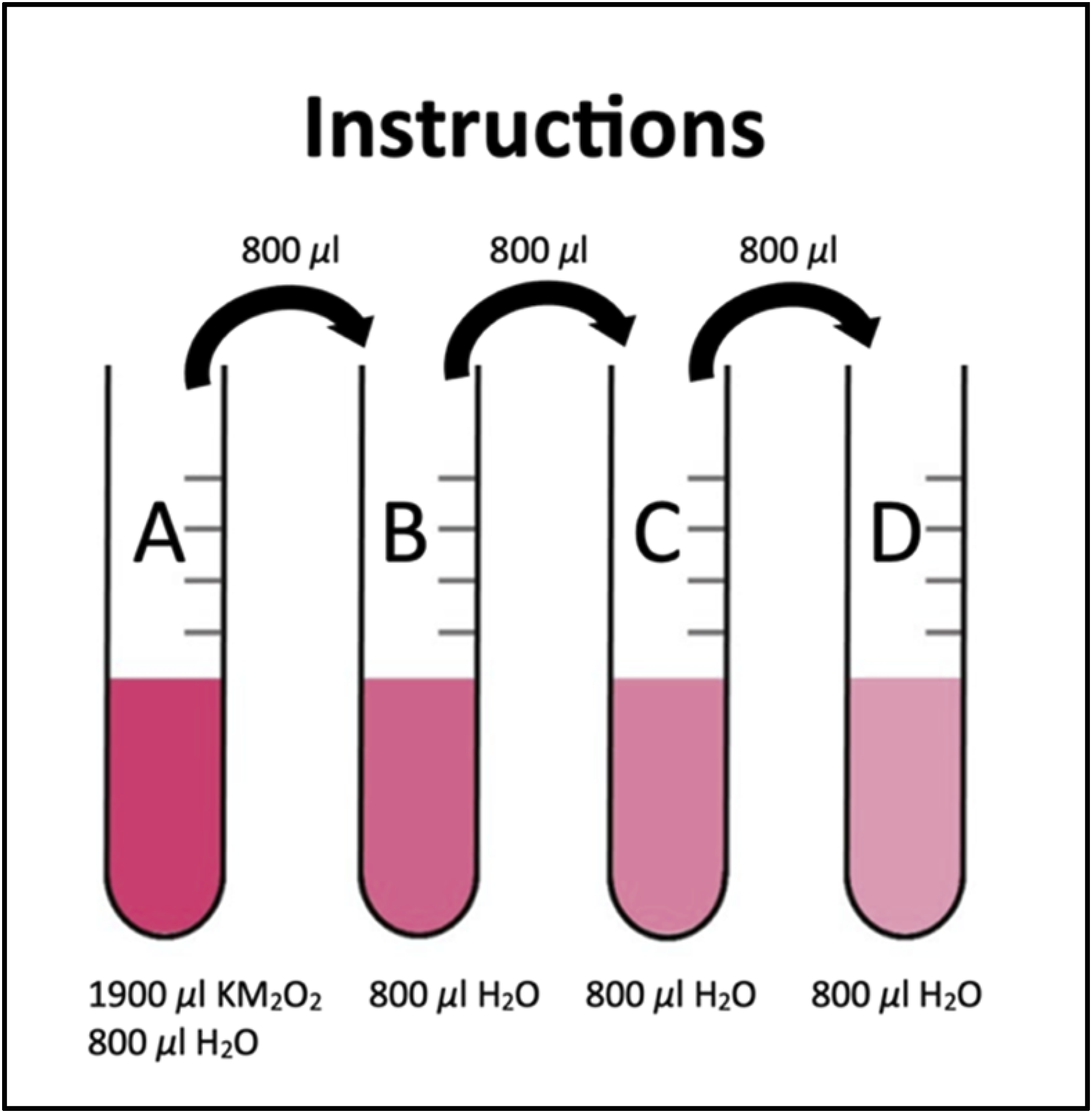

The in-person pipetting task was set up similarly to the virtual pipetting task but used physical objects, to determine whether participants could transfer the procedure learned in the simulation to the real world. To keep the materials consistent across tasks, we used physical materials that closely resembled those in the virtual simulation. After completing the virtual simulation task, participants were asked to remove the headset and were shown the real pipetting setup. The researchers showed participants the physical materials for the task along with the printed instructions, which were a modified version of the virtual four-step serial dilution instructions, as shown in Figure 4.

Figure 4. Instructions for the in-person serial dilution task.

A researcher observed and evaluated each participant's performance during the serial dilution using a printed assessment form. The assessment followed the same structure as the virtual assessment and consisted of two sections. The first section was scored as True or False, with True scored as 1 and False as 0: the researcher indicated whether the participant put on a lab coat and gloves and whether the participant correctly completed each of the four test tubes. Scores on this section could therefore range from 0 to 5.

The second section was scored as a frequency count, summed to produce the total number of pipetting errors committed in the in-person serial dilution test. Scores could range from 0 upward, with no fixed maximum. The researcher recorded the number of instances in which a participant committed each of the following errors: tilting the pipette tip, pressing the plunger after inserting the pipette in liquid, dispensing liquid without reaching the second stop, and cross-contaminating the pipette tip across test tubes and beakers.
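The two-section in-person scoring can be sketched the same way. In this hypothetical helper, the researcher's form is represented as one True/False judgment for lab coat and gloves, four True/False judgments for the test tubes, and a list of error events with one entry per observed error; the error labels are illustrative names for the four error types listed above, not terms from the assessment form.

```python
from __future__ import annotations

from collections import Counter
from typing import Sequence

# Hypothetical labels for the four error types the researcher tallied
ERROR_TYPES = frozenset(
    {"tilted_tip", "plunger_in_liquid", "missed_second_stop", "cross_contamination"}
)


def score_in_person(coat_and_gloves: bool, tubes_correct: Sequence[bool],
                    error_events: Sequence[str]) -> tuple[int, int, Counter]:
    """Return (section 1 score, section 2 error total, per-type error tally)."""
    if len(tubes_correct) != 4:
        raise ValueError("expected one True/False judgment per test tube")
    unknown = [event for event in error_events if event not in ERROR_TYPES]
    if unknown:
        raise ValueError(f"unrecognized error types: {unknown}")
    section1 = int(coat_and_gloves) + sum(bool(t) for t in tubes_correct)  # 0 to 5
    tally = Counter(error_events)
    section2 = sum(tally.values())  # 0 upward, no fixed maximum
    return section1, section2, tally
```

Keeping the per-type tally alongside the total makes it possible to check later which kinds of errors drive any group difference.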

Posttest

The posttest, administered in Qualtrics, was intended to assess participants' knowledge of the scientific procedure of pipetting and consisted of 15 questions (i.e., four multiple-choice questions, ten true-false questions, and one sorting question; α = −.161). The very low (indeed negative) internal consistency likely reflects the construction of the test, which combines three question formats with individual items that tap entirely different pieces of information. Each multiple-choice item had four options (e.g., "What happens during a serial dilution? (a) The concentration of a substance is reduced at each step. (b) Water is distilled at each step to increase the concentration of a substance. (c) Each step involves adding a new substance. (d) Each step reduces the acidity of a substance"). The 10 true-false items were statements to be judged (e.g., "Push the plunger to the second stop during aspiration. (a) True (b) False"). The sorting question consisted of eight statements displayed in random order, which participants had to drag and drop into the correct order of the procedure (e.g., "Please indicate which step each statement corresponds to from first to last step. (a) Put lab coat (b) Put on gloves (c) Pick up pipette (d) Adjust volume setting (e) Attach pipette tip (f) Aspirate liquid (g) Dispense liquid (h) Discard tip"). Correct answers and correctly sorted statements were coded as 1, and incorrect answers and missorted statements were coded as 0. A participant's posttest score was the number of correct responses, for a maximum of 22 points (4 multiple-choice, 10 true-false, and 8 sorting points).
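The posttest total can be computed as 4 multiple-choice points plus 10 true-false points plus 8 sorting points, for 22 in all. This sketch assumes two things the text implies but does not state outright: that the example statements (a) to (h) are listed in their correct order, and that each statement placed in its correct position earns one point. The function name is hypothetical, and the statement strings are copied from the item as quoted.

```python
from __future__ import annotations

from typing import Sequence

# Assumed correct order: the order in which the example statements are listed
CORRECT_ORDER = (
    "Put lab coat", "Put on gloves", "Pick up pipette", "Adjust volume setting",
    "Attach pipette tip", "Aspirate liquid", "Dispense liquid", "Discard tip",
)


def score_posttest(mc_correct: Sequence[bool], tf_correct: Sequence[bool],
                   sorted_statements: Sequence[str]) -> int:
    """Total posttest points: 4 multiple-choice + 10 true-false + 8 sorting = 22 max."""
    if len(mc_correct) != 4 or len(tf_correct) != 10:
        raise ValueError("expected 4 multiple-choice and 10 true-false responses")
    if sorted(sorted_statements) != sorted(CORRECT_ORDER):
        raise ValueError("the sorting response must use each statement exactly once")
    # One point per statement placed in its correct position
    sorting_points = sum(given == expected
                         for given, expected in zip(sorted_statements, CORRECT_ORDER))
    return sum(mc_correct) + sum(tf_correct) + sorting_points
```

Position-wise scoring means a single swap of two adjacent steps costs 2 of the 8 sorting points rather than the whole item.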

Postquestionnaire

The postquestionnaire, administered in Qualtrics, was intended to assess cognitive load and presence. We assessed participants' extraneous cognitive load (α = .86) with the Multidimensional Cognitive Load Scale for Virtual Environments (Andersen & Makransky, 2021), rated on a five-point Likert scale ranging from 1 ("strongly disagree") to 5 ("strongly agree"). To assess participants' sense of presence, we administered a modified seven-item version of the Presence Questionnaire (α = .77; Parong, 2019), rated on a seven-point Likert scale ranging from 1 ("strongly disagree") to 7 ("strongly agree"). Finally, participants provided demographic information on their age, gender category, ethnic/racial category, and first-generation college student status.
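The α values reported for these scales and for the posttest are presumably Cronbach's alpha, alpha = k/(k − 1) × (1 − sum of item variances / variance of total scores), where k is the number of items. Because the total-score variance equals the sum of the item variances plus all inter-item covariances, alpha falls below zero whenever those covariances sum to a negative value, which is how a heterogeneous test can yield a value such as −.161. The sketch below implements this standard formula with NumPy; it is illustrative and not the authors' analysis code.

```python
import numpy as np


def cronbach_alpha(scores) -> float:
    """Cronbach's alpha for a participants-by-items score matrix."""
    x = np.asarray(scores, dtype=float)
    if x.ndim != 2 or x.shape[1] < 2:
        raise ValueError("need a 2-D matrix with at least two items (columns)")
    k = x.shape[1]
    item_variances = x.var(axis=0, ddof=1)       # sample variance of each item
    total_variance = x.sum(axis=1).var(ddof=1)   # sample variance of total scores
    if total_variance == 0:
        raise ValueError("total scores have zero variance; alpha is undefined")
    return float(k / (k - 1) * (1 - item_variances.sum() / total_variance))
```

With two items that rise and fall together, alpha is 1; with two items that move in opposite directions, the total-score variance shrinks below the summed item variances and alpha turns negative.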

Procedure

Participants were tested individually and randomly assigned to either the explanation group or the no explanation group. Upon arrival, each participant reviewed a paper version of the consent form and, if they agreed to participate, signed and dated it. The participant then completed the prequestionnaire on a computer via Qualtrics. Next, all participants were instructed to stand on a designated marked spot and were shown how to use the hand controllers and the head-mounted display. The participant then put on the VR headset, was briefed on its safety features, and entered their participant ID number using the hand controllers. Participants in both conditions were instructed to complete the virtual simulation that taught them the technical scientific procedure of pipetting, and both were told that the lesson would pause five times. When the lesson paused, participants in the no explanation group were told to press a button to continue the lesson. Participants in the explanation group were asked to remove the headset, sit down at a computer, answer open-ended questions about the topic of the preceding lesson segment, and then put the head-mounted display back on and return to the lesson. Participants experienced the pauses only during the first section of the training, because this section served as a practical tutorial teaching lab safety procedures and the step-by-step actions for using a micropipette. The second section served as an assessment of the skills taught in the first section, in which learners were prompted to attempt a four-step serial dilution task.

After completing the second section, the participant removed the VR headset and was directed to complete an in-person four-step serial dilution task in a designated section of the lab. The instructions for the in-person task were based on the VR simulation instructions. While the participant completed the task, a researcher evaluated the accuracy of dispensing the correct amount of liquid into each tube (e.g., whether the participant accurately completed test tube A) and recorded the frequency of errors committed. The participant then returned to the original computer to complete the postquestionnaire via Qualtrics. Finally, the participant was thanked and informed that they would receive credit for their participation through the SONA participant pool system. We adhered to guidelines for the treatment of human subjects and obtained IRB approval.

Results

Do the Groups Differ on Basic Characteristics?

A preliminary issue is whether random assignment produced groups that differed on basic characteristics. A chi-square analysis showed that the distribution of gender categories did not differ significantly between the two groups, χ²(2, N = 94) = 1.141, p = .565. The two groups also did not differ significantly in mean age, t(92) = 0.057, p = .955, mean science-knowledge score, t(92) = 1.234, p = .220, prior knowledge of using a micropipette, t(92) = −0.073, p = .942, or prior knowledge of conducting a serial dilution, t(92) = 0.086, p = .932. We conclude that the two groups did not differ on basic characteristics.
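Both kinds of baseline checks can be reproduced with SciPy. This is a sketch rather than the authors' analysis code: it assumes Student's independent-samples t-test with equal variances, which is consistent with the reported df of 92 (47 + 47 − 2), and a 2 × 3 table of groups by gender categories, which is consistent with the chi-square having 2 degrees of freedom. The function names are hypothetical, and no study data are included.

```python
import numpy as np
from scipy import stats


def gender_by_group_test(counts):
    """Chi-square test of independence on a groups x gender-categories table."""
    chi2, p, dof, _expected = stats.chi2_contingency(np.asarray(counts))
    return chi2, p, dof


def compare_group_means(group_a, group_b):
    """Student's independent-samples t-test (equal variances assumed)."""
    result = stats.ttest_ind(group_a, group_b, equal_var=True)
    df = len(group_a) + len(group_b) - 2
    return result.statistic, result.pvalue, df
```

For tables larger than 2 × 2, `chi2_contingency` applies no continuity correction, so the statistic is the ordinary Pearson chi-square.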

Hypothesis 1: Does the Explanation Group Perform Better than the No Explanation Group on Pipetting Performance During Learning in Immersive Virtual Reality?

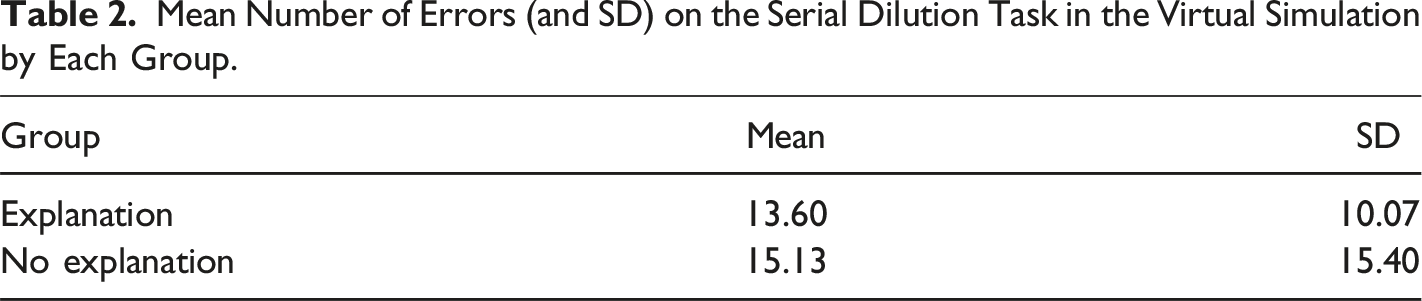

Mean Number of Errors (and SD) on the Serial Dilution Task in the Virtual Simulation by Each Group.

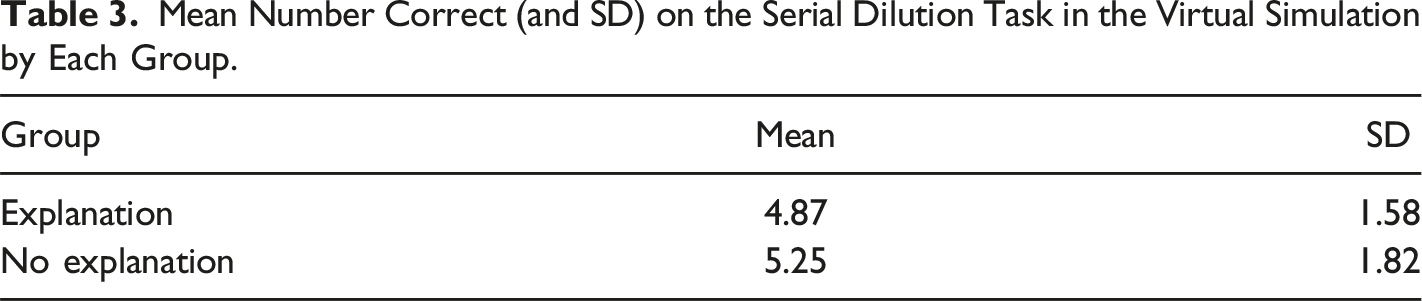

Mean Number Correct (and SD) on the Serial Dilution Task in the Virtual Simulation by Each Group.

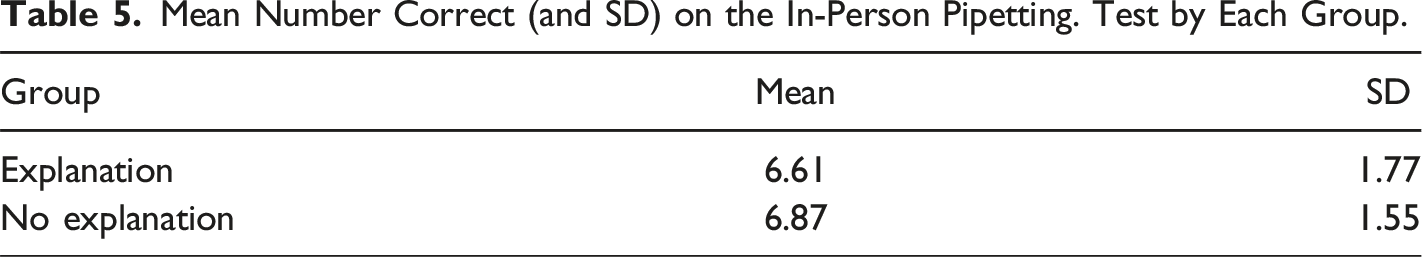

Hypothesis 2: Does the Explanation Group Perform Better than the No Explanation Group on Pipetting Performance in the In-Person Pipetting Test?

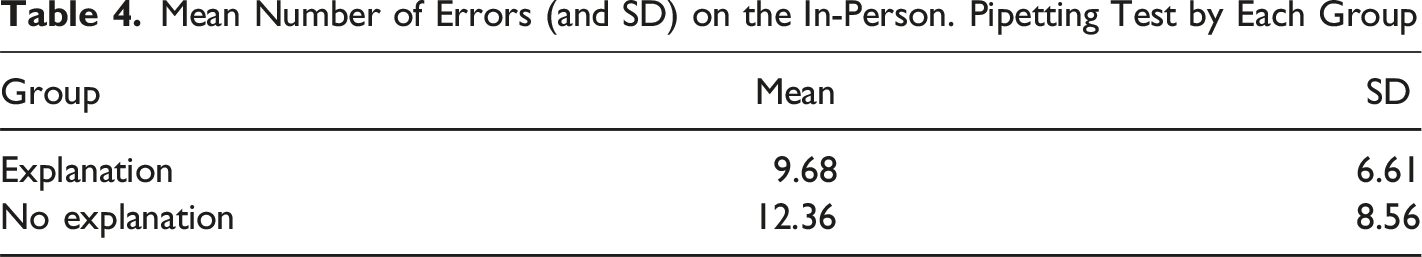

Mean Number of Errors (and SD) on the In-Person Pipetting Test by Each Group.

Mean Number Correct (and SD) on the In-Person Pipetting Test by Each Group.

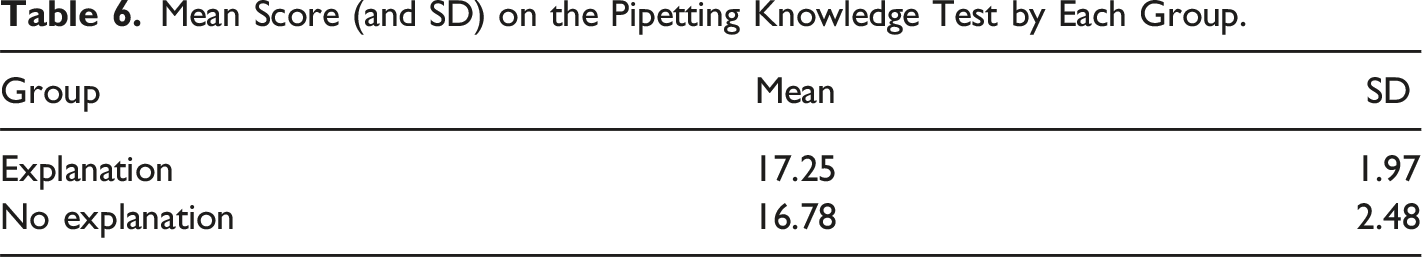

Hypothesis 3: Does the Explanation Group Perform Better than the No Explanation Group on the Knowledge Test?

Mean Score (and SD) on the Pipetting Knowledge Test by Each Group.

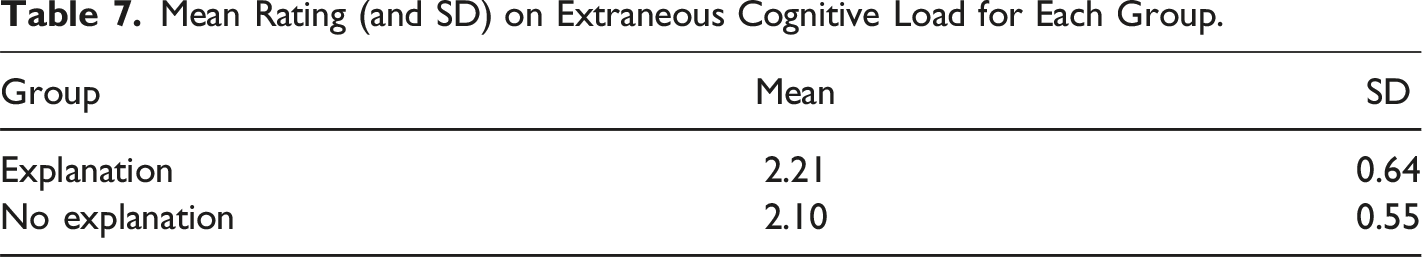

Research Question 1: Will the Explanation Group Produce a Lower Mean Rating of Extraneous Cognitive Load than the No Explanation Group?

Mean Rating (and SD) on Extraneous Cognitive Load for Each Group.

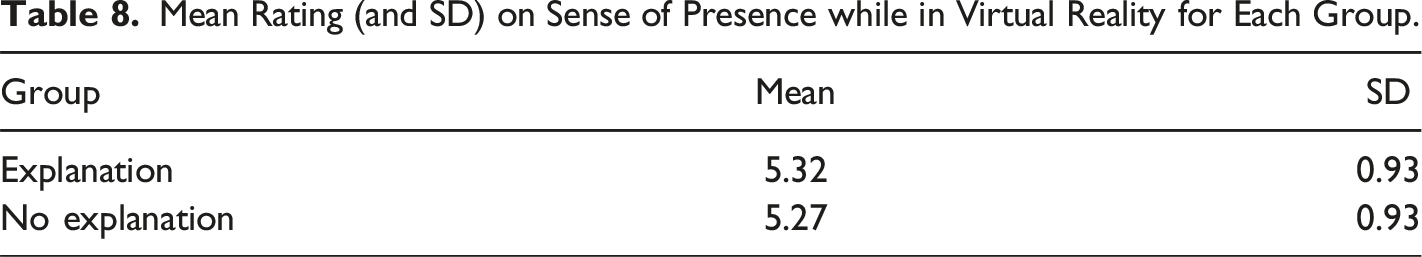

Research Question 2: Will the Explanation Group Produce a Higher Mean Rating of Presence than the No Explanation Group?

Mean Rating (and SD) on Sense of Presence while in Virtual Reality for Each Group.

Discussion

Empirical Contributions

The purpose of this experiment was to examine whether a generative learning activity inspired by research on learning declarative knowledge with conventional media (Dunlosky et al., 2013; Lawson & Mayer, 2021) could also be effective for learning procedural knowledge with immersive media. Contrary to our predictions, asking students to answer challenging questions during pauses in the IVR lesson did not result in better performance on the virtual pipetting task, the in-person pipetting task, or the knowledge posttest. To our knowledge, this is the first study to incorporate the generative learning activity of answering explanative questions into learning a technical procedural skill in immersive virtual reality. We speculate that any benefits of writing explanations during pauses in the lesson were offset by the distraction of repeatedly taking off and putting on the head-mounted display and of trying to come up with answers to challenging questions. These results suggest that techniques that work well for learning declarative knowledge with conventional media may need to be modified for learning procedural skills with immersive media.

Theoretical Contributions

According to generative learning theory (Fiorella & Mayer, 2015, 2016, 2022; Wittrock, 1974), engaging in generative learning activities causes learners to engage in generative cognitive processing during learning. Specifically, the proposed mechanism for explanation is that when learners explain the content to themselves, they actively select, organize, and integrate the information with their existing knowledge, leading to deeper understanding. In short, learners are prompted to construct meaning from the learning content in a way that makes sense to them. Contrary to the predictions of generative learning theory, however, learners who engaged in explanation did not show better learning outcomes than learners who did not.

In line with cognitive load theory (Paas & Sweller, 2022; Sweller et al., 2011), one theoretical explanation is that our intervention created a high level of extraneous cognitive processing, that is, cognitive processing that does not support the instructional objective but consumes the learner’s limited capacity, thereby leaving less capacity available for generative cognitive processing. Specifically, the need to repeatedly remove and put back on the head-mounted display may have been distracting. This disruption may also have interfered with learners’ sense of immersion and presence, further adding to extraneous cognitive load. As a result, these interruptions may have made it harder for learners to stay engaged in the lesson, which ultimately could have hindered performance. We note, however, that although learners in the explanation group reported higher extraneous cognitive load than the no explanation group, the difference did not reach statistical significance.

Another possible theoretical explanation is that procedural knowledge acquisition is qualitatively different from declarative knowledge acquisition. Specifically, theories about the stages of skill learning (Fitts & Posner, 1967; Singley & Anderson, 1989) are fundamentally different from theories of subject-matter learning (e.g., Mayer, 2021). Therefore, instructional interventions that work for learning declarative knowledge (such as generating explanations) may not apply directly to learning procedural knowledge.

Practical Contributions

Based on these results, we do not recommend prompting learners to answer explanative questions during pauses in IVR lessons that teach procedural knowledge. Our findings contrast with previous studies that have highlighted the benefits of elaborative interrogation with conventional media (Dunlosky et al., 2013) and the benefits of summarizing during pauses in an IVR lesson involving conceptual rather than procedural knowledge (Parong & Mayer, 2018). It is worth noting, however, that self-explanation during pauses in a multimedia lesson benefited learning outcomes on delayed posttests but not on immediate posttests (Lawson & Mayer, 2021). Together with our results, these findings suggest that explaining newly learned information may not yield benefits on an immediate test.

Limitations and Future Directions

As an early value-added study of how to improve learning of technical procedural skills in IVR, this work is subject to several limitations. Because this was a short-term lab study with only immediate tests, future work may benefit from including delayed posttests to examine whether focused explanation is an effective strategy for gaining procedural skills and knowledge. Previous research has shown that the positive effects of learner-generated explanations during pauses in a multimedia lesson emerged on delayed posttests but not on immediate posttests (Lawson & Mayer, 2021). Similarly, it would be worthwhile to examine in a delayed assessment whether procedural skills persist or dissipate over time.

Having learners respond to prompts outside of the virtual headset may have caused distraction or frustration during the learning process. Participants in the explanation group were asked to remove their headset when they reached a pause in the lesson and to respond to a set of questions on a laptop, a sequence that may have disrupted the flow of the lesson and learners’ engagement with it. To mitigate negative feelings during learning, we limited the number of pauses to five and placed them strategically after major sections of the lesson. Future studies might benefit from incorporating question prompts inside the virtual lesson rather than requiring learners to repeatedly take off and put on their head-mounted display. In addition, some of the questions we used may have been too demanding, reducing learners’ active participation. Future research should examine the effectiveness of less demanding prompts that still help learners reflect on the lesson content, such as selecting a response from a menu or rewording a summary statement.

This study involved a narrow learner population of young adults at a highly selective university and focused on a single learning topic: pipetting. Pipetting was chosen because it requires gross and fine motor actions, procedural accuracy, and stepwise coordination, which are key characteristics of technical procedural tasks. Pipetting is also a foundational skill in biology and chemistry labs, which increases its educational relevance and practical utility. These features make pipetting well suited for examining immersive learning interventions that aim to improve procedural skills and knowledge. Additionally, a recent review highlighted that procedural knowledge is the least evaluated learning outcome in IVR applications (Jongbloed et al., 2024). Future research should examine whether the generative learning activity of explanation is effective with other kinds of learners and with other procedural tasks that require stepwise coordination, precision, and procedural knowledge, such as surgical procedures in medicine, equipment assembly and disassembly in industrial operations and maintenance, or safety procedures in domains such as construction or fire safety (Jongbloed et al., 2024).

While the gross motor actions were designed to replicate real micropipette handling, the execution of fine motor actions was subject to some limitations. Students received visual guidance on micropipette use within the IVR lesson; however, the visual demonstrations did not always match the precise fine motor actions required to complete the task. Moreover, the task demanded more than replicating movements: students had to remember specific controller buttons and their corresponding actions, potentially imposing additional cognitive demands. For instance, although the tutorial demonstrated using the thumb to push the plunger to the first stop, students had to use their index finger to press the button halfway down to achieve the same result. Then, after completing the tutorial phase, students had to recall which actions corresponded to which buttons during the virtual serial dilution task. Research comparing classic VR controllers with ergonomic controllers, such as Valve’s Knuckles, presents encouraging results (Nobelcourt et al., 2021). After becoming familiar with the Knuckles in an initial study, participants completed a subsequent task more quickly and showed greater manipulation ability, suggesting potential advantages of more natural controllers. Although pipetting errors declined from the virtual reality assessment to the real-world assessment, future studies might benefit from aligning fine motor actions more closely with natural actions by using more sensitive controllers.

Another limitation is that researchers were aware of participants’ assigned conditions. Researchers were tasked with capturing real-time data as participants completed the task. Although they were instructed to observe and record participants’ actions during the in-person serial dilution task, some data may have been missed, or expectations about participants’ behavior based on their assigned conditions may have influenced observations. All researchers underwent extensive training with the lead researcher to standardize data recording and the identification of actions and errors. However, human error remains a possibility. In particular, our study relied on a single researcher for data collection during the in-person serial dilution task. Future studies could mitigate potential errors by employing two researchers for data collection and conducting inter-rater reliability checks to ensure consistency. Alternatively, having a separate researcher who is unaware of participants’ conditions assess the in-person serial dilution task could help minimize bias.

Future research is also warranted to test other kinds of generative learning activities, such as reflection prompts embedded within the IVR environment. For example, learners could respond to prompts to reflect on the lesson that appear as visual overlays within the virtual environment. It also would be useful to test the effectiveness of adaptive scaffolding approaches, such as providing feedback based on learners’ performance or providing gradual hints or guidance. To broaden the area of inquiry, future research should also examine ways to enhance learning with other types of procedural tasks in IVR and with serious games in IVR.

Although our sample size was sufficient to detect medium effects, smaller effects may have gone undetected. Given our sample’s characteristics, future research should also explore whether these findings generalize to broader populations or other learning content.

Conclusion

Overall, this study examined the effectiveness of asking learners to write answers to explanative questions during pauses in an IVR lesson, a learning strategy that has been shown to be effective for learning declarative knowledge with conventional media. Our findings indicate that embedding content-focused explanative questions did not enhance the acquisition of procedural knowledge and skills taught in an immersive virtual environment. Specifically, the explanation group did not outperform the no explanation group on the knowledge test, nor did they make fewer pipetting errors in the virtual or in-person pipetting tasks. Asking learners to write explanations during pauses therefore may not be an effective strategy for learning a procedural task in IVR. This study emphasizes the importance of evaluating a range of learning strategies for their effectiveness in teaching technical procedural skills such as pipetting in IVR.

Footnotes

Acknowledgements

We thank Guido Makransky and Gustav Petersen for graciously providing the virtual pipetting simulation.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was supported by Grant N000142112047 from the Office of Naval Research.

Ethical Statement

Data Availability Statement

Data are available upon request from the corresponding author.