Abstract

In recent years, the technical possibilities of educational technologies for online peer feedback have developed rapidly. However, the impact of online peer feedback activities compared with traditional offline variants has not specifically been meta-analyzed. The aim of the current meta-analysis is therefore to compare online and offline peer feedback approaches in depth. An earlier and broader meta-analysis of technology-facilitated peer feedback in general was used as a starting point. We synthesized 12 comparisons between online and offline peer feedback in higher education, drawn from 10 studies. In addition, we reviewed student perceptions of online peer feedback where the studies reported them. The results show that online peer feedback is more effective than offline peer feedback, with an effect size of g = 0.33. Online peer feedback is also more effective when the outcome measure is competence rather than self-efficacy for skills. Students are mostly positive towards online peer feedback but also list several downsides. Finally, we discuss implications for online peer feedback in teaching practice and identify directions for further research on this topic.

Introduction

Topping (1998) described peer feedback or peer assessment as “an arrangement in which individuals consider the amount, level, value, worth, quality, or success of the products or outcomes of learning of peers of similar status” (p. 250). Such peer feedback, Falchikov (2001) argued, is a form of peer learning that can increase intellectual skills or knowledge. Due to its effectiveness, peer feedback is increasingly being used and studied in academic settings (Topping, 2017). Several systematic reviews and meta-analyses concerning peer feedback have been published in the past few years. These reviews show that when peer feedback is used, learning outcomes tend to be better than without any feedback. Moreover, learning outcomes when using peer feedback are at least as good as or even better than when teacher feedback is used (Double et al., 2020; Huisman et al., 2019; Li et al., 2020). With the increasing use of technology in education, peer feedback is often offered online. The current paper examines the use of peer feedback in an online setting and its effects on learning.

Online Peer Feedback

We distinguish between online peer feedback, which is technology-facilitated without any synchronous interactions between students, and offline peer feedback, which is in-class and paper-based or face-to-face. Offering peer feedback online can have several benefits over paper-based peer feedback. Online peer feedback makes anonymity possible, which can make students feel safer giving each other negative feedback (Lu & Bol, 2007; Vanderhoven et al., 2015). Moreover, offering peer feedback online can save teachers time by speeding up the logistics surrounding peer feedback, especially when the tool in question allows automation (Ashenafi, 2017). It also allows teachers to monitor the feedback comments that students give each other. Such monitoring is more difficult with face-to-face peer feedback, where the teacher can often catch only small parts of what students discuss with each other (DiGiovanni & Nagaswami, 2001).

Research shows that online peer feedback can enhance student performance (Latifi et al., 2021; Noroozi et al., 2016), promote effective collaboration, and improve critical thinking (Su & Beaumont, 2010), although it remains unclear whether these effects are specific to online peer feedback or whether offline peer feedback has the same effects. However, online peer feedback also poses its own challenges. The feedback dialogue between students can be lost because students find it difficult to engage actively with each other in an online environment, whereas this dialogue can be very beneficial for understanding and engaging with the feedback (Guardado & Shi, 2007). In a feedback dialogue, students have the opportunity to receive feedback on the feedback they have given and to clarify or negotiate the meaning of the feedback they have received (Zhu & Carless, 2018). The question therefore remains whether online peer feedback is at least as effective as, or more effective than, traditional face-to-face or paper-based peer feedback.

Previous Meta-Analyses

Previous meta-analyses of peer feedback in general conclude that peer feedback has a better effect on learning outcomes than no feedback or teacher feedback (Double et al., 2020; Huisman et al., 2019; Li et al., 2020). A meta-analysis by Cui and Zheng (2018) focused specifically on peer feedback in blended learning environments. The authors found that providing anonymity and using writing essays or artefacts as task types were associated with improved learning outcomes. Cui and Zheng (2018) also found training students in giving peer feedback to be an important factor. The paper, however, does not specify what online peer feedback was compared with (e.g., online vs. offline peer feedback). Zheng et al. (2020) conducted a meta-analysis of technology-facilitated peer feedback, which yielded an effect size of 0.58 on learning outcomes. This result indicates that technology-facilitated peer feedback effectively improves student performance, and the authors also concluded that anonymous assessment is more effective. In that meta-analysis, technology-facilitated peer feedback was compared with a “traditional approach” without specifying what this entailed. On closer inspection, most of the studies included in that meta-analysis compared “online peer feedback” with “no feedback” or “teacher feedback”. Consequently, to our knowledge no meta-analysis to date has specifically compared the effects of online and offline peer feedback on learning outcomes in higher education. The current meta-analysis therefore addresses the question of whether online peer feedback is more effective than offline peer feedback.

Current Research

For the past decade, higher education institutions have been shifting to blended learning, in which education is offered both online and face-to-face (Albiladi & Alshareef, 2019). Peer feedback is an educational intervention that has been offered in technology-facilitated settings for many years (Mostert & Snowball, 2013), and the Covid-19 crisis has accelerated the adoption of online educational technologies for peer feedback in universities worldwide. Given these developments, and given that no previous meta-analysis has specifically compared the effects of online and offline peer feedback approaches on learning outcomes, we believe the current meta-analysis is important and timely.

The current study is a follow-up to the meta-analysis by Zheng et al. (2020) but focuses specifically on comparing online peer feedback with offline peer feedback strategies. We used those studies selected by Zheng et al. (2020) that included offline peer feedback as a control condition (only a few were eligible), supplemented with studies found in an additional literature search that extended the coverage to 2020. To include as many eligible studies as possible, we focus on courses in higher education at both the undergraduate and graduate levels.

In addition to the meta-analysis, we systematically reviewed student perceptions of online peer feedback, using data extracted from those included studies that elicited such perceptions. To the best of our knowledge, these perceptions have not been reviewed to date. The current study thus aims to answer the following two research questions:
1. What is the effect of online peer feedback on learning outcomes among higher education students compared with offline peer feedback?
2. What are the perceptions of students towards online peer feedback?

Methods

Selection Criteria

We used the recent meta-analysis by Zheng et al. (2020) as a starting point. Studies included in that meta-analysis were selected when they contained the relevant contrast, namely offline peer feedback. We also used the selection criteria of Zheng et al. (2020, p. 374) to find studies that appeared after they had performed their search. These criteria were slightly adapted to focus on online peer feedback rather than technology-facilitated peer feedback, resulting in the following set of criteria for the current meta-analysis (adaptations are underlined):
1. The study should be closely related to technology-facilitated peer feedback in …
2. The study should be quasi-experimental or experimental, with experimental and control groups. Studies with only one group, case studies, and conceptual studies are excluded.
3. Participants in the experimental group …
4. The study should contain a pre-test to establish the equivalence of learning achievement between the experimental and control groups. In addition, the study should report a post-test of learning achievement for both the experimental and control groups.
5. The study should present sufficient data to calculate the effect size (ES), including means, standard deviations, t or F values, and the number of participants in each group.

Of the 37 studies included by Zheng et al. (2020), five met the above-mentioned criteria. Most other studies did not compare online feedback with an offline approach for the control group and therefore did not meet the third criterion above.

Because the studies used in Zheng et al. (2020) covered the period 1998–2018, we performed an additional search for the years 2019 and 2020. For this search, we used the search string from Zheng et al. (2020): (‘peer assessment’ OR ‘peer feedback’ OR ‘peer review’ OR ‘peer evaluation’ OR ‘peer rating’ OR ‘peer scoring’ OR ‘peer grading’) AND (‘learning outcome’ OR ‘learning achievement’ OR ‘achievement’ OR ‘outcome’ OR ‘learning performance’ OR ‘academic achievement’ OR ‘academic performance’). To match the search by Zheng et al. (2020), the additional search was performed in the Web of Science and Scopus databases. Two researchers independently selected studies based on the criteria above.

Data Extraction

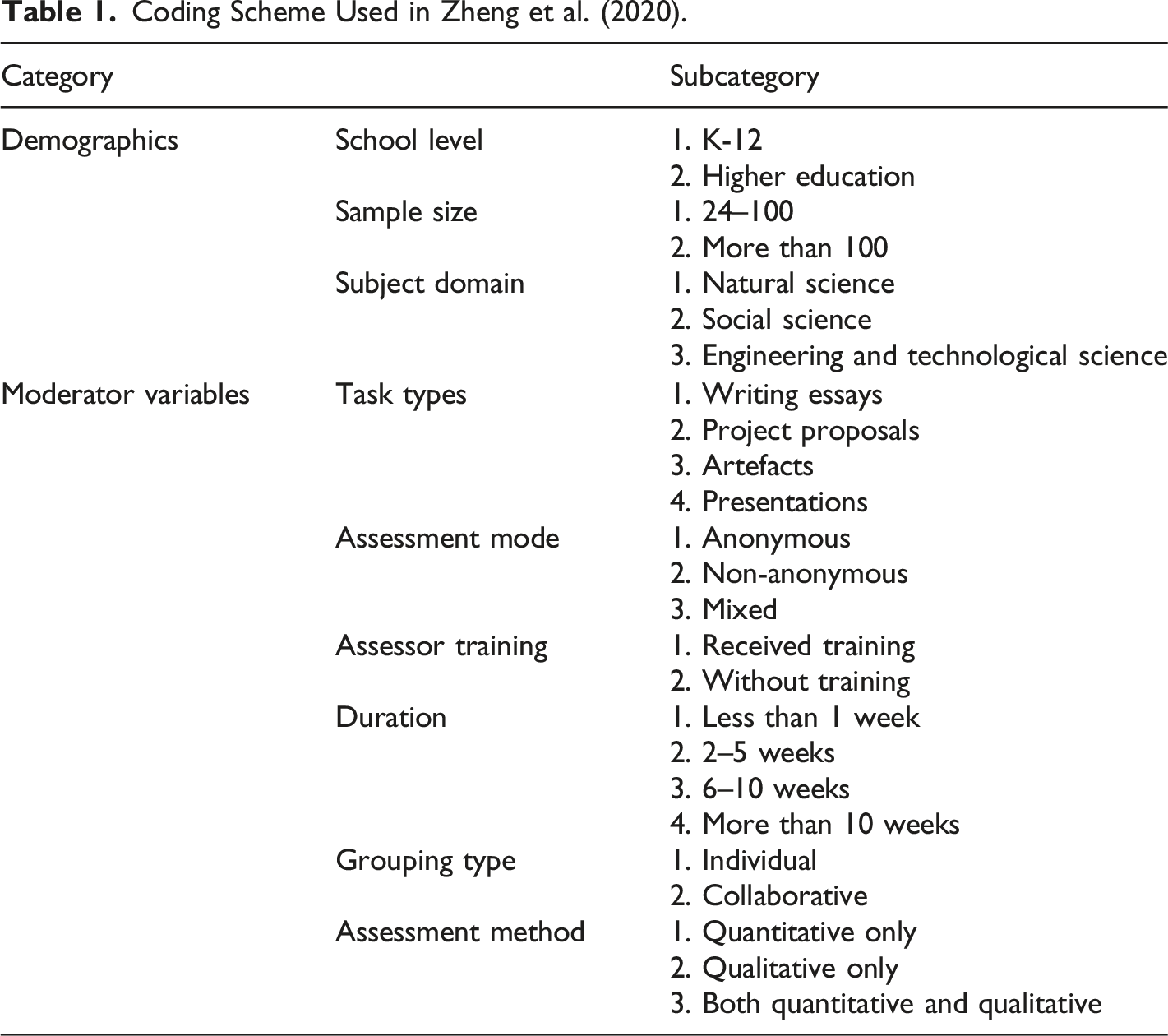

Coding Scheme Used in Zheng et al. (2020).

Quality Assessment

It is important to assess the studies included in a meta-analysis for risk of bias, because problems with the design or execution of individual studies can undermine the internal validity of the results (Higgins et al., 2022). In this meta-analysis, the studies were assessed for risk of bias using the Cochrane Risk of Bias tool for randomized controlled trials (Sterne et al., 2019). This tool was chosen because, in line with the inclusion criteria, it can be applied both to randomized experimental studies and to quasi-experimental studies. The Cochrane Risk of Bias tool provides a framework for systematically assessing risk of bias in five domains: the randomization process, deviations from intended interventions, missing outcome data, measurement of the outcome, and selection of the reported result. The first and second authors jointly assessed the included publications on all of these domains and determined the risk of bias using the tool. For each domain, the tool yields one of three judgements: low risk, some concerns, or high risk (Sterne et al., 2019). The judgements for each manuscript were discussed in detail until consensus was reached.
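To make the aggregation step concrete, the Python sketch below combines per-domain judgements into an overall judgement and lets some domains be marked as not applicable (for example, for non-randomized designs). It is a simplified, hypothetical helper, not the authors' procedure: RoB 2 also allows assessors to rate a study as high risk when several domains raise some concerns, and that judgement call is left out here.

```python
# Hypothetical helper (not the authors' procedure): a simplified version of the
# RoB 2 rule for turning domain judgements into an overall judgement.
LEVELS = ("low", "some concerns", "high")

def overall_risk(domains, skip=()):
    """Return the overall risk-of-bias judgement for one study.

    domains: dict mapping a domain label (e.g. "D1") to one of LEVELS.
    skip: domain labels treated as not applicable and ignored.
    """
    skipped = set(skip)
    used = [level for name, level in domains.items() if name not in skipped]
    for level in used:
        if level not in LEVELS:
            raise ValueError(f"unknown judgement: {level!r}")
    if "high" in used:            # any high-risk domain makes the study high risk
        return "high"
    if "some concerns" in used:   # otherwise any concern gives "some concerns"
        return "some concerns"
    return "low"

# Hypothetical study whose D1 rating reflects the lack of randomization.
study = {"D1": "high", "D2": "low", "D3": "some concerns", "D4": "low", "D5": "low"}
print(overall_risk(study))                     # all five domains considered
print(overall_risk(study, skip=("D1", "D5")))  # D1 and D5 treated as not applicable
```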

Analysis

Calculating Effect Sizes

The effect size for each study was calculated using the method recommended by Morris (2008) for pretest-posttest-control group designs. This method uses a modified Hedges’ g in which the difference between the pre-post changes of the experimental and control groups is divided by the pooled standard deviation of the pre-tests of both groups. According to Morris (2008), the pooled pre-test standard deviation most closely approximates the population standard deviation and is therefore recommended for pretest-posttest-control group designs. When a study contained multiple control conditions, only the offline peer feedback condition was included in this meta-analysis. Effect sizes were computed from means and standard deviations when available, and otherwise from the appropriate t- or F-values.

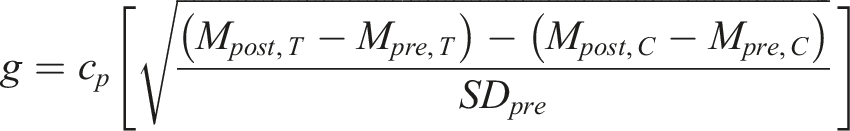

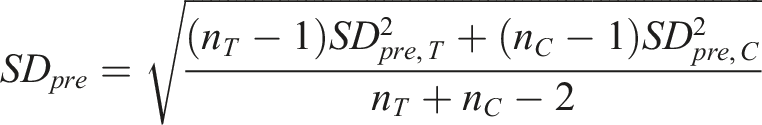

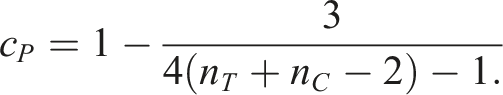

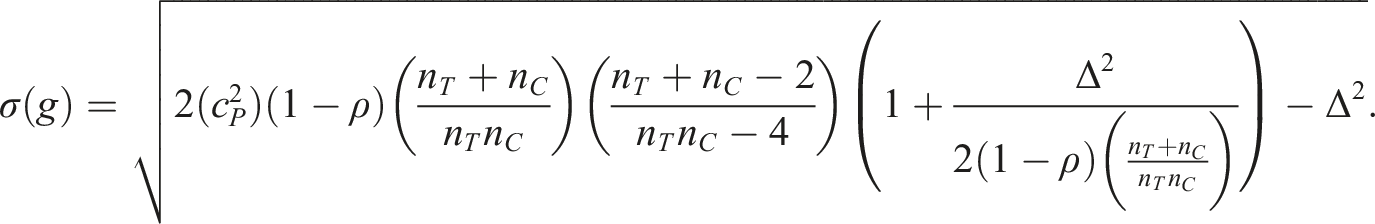

The following equation was used to estimate each effect size with the pooled standard deviation of the pre-tests and a bias adjustment (Morris, 2008).

The pooled standard deviation is defined as

The following equation was used to calculate the standard error of each effect size.
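For reference, the estimator that Morris (2008) recommends for this design is commonly written as below. The notation is ours and may differ from the original equations: T and C denote the experimental and control groups, M and SD are group means and standard deviations, n is group size, c_p is the small-sample bias correction, and ρ is the population correlation between pre-test and post-test scores. In the variance formula, the unknown population effect δ is replaced by the estimate g in practice.

```latex
\begin{aligned}
g &= c_p \, \frac{(M_{\mathrm{post},T} - M_{\mathrm{pre},T}) - (M_{\mathrm{post},C} - M_{\mathrm{pre},C})}{SD_{\mathrm{pre}}},
  \qquad c_p = 1 - \frac{3}{4(n_T + n_C - 2) - 1} \\
SD_{\mathrm{pre}} &= \sqrt{\frac{(n_T - 1)\, SD_{\mathrm{pre},T}^2 + (n_C - 1)\, SD_{\mathrm{pre},C}^2}{n_T + n_C - 2}} \\
\sigma^2(g) &= 2 c_p^2 (1 - \rho)\, \frac{n_T + n_C}{n_T n_C} \cdot \frac{n_T + n_C - 2}{n_T + n_C - 4}
  \left(1 + \frac{\delta^2}{2(1 - \rho)\, \frac{n_T + n_C}{n_T n_C}}\right) - \delta^2,
  \qquad SE(g) = \sqrt{\sigma^2(g)}
\end{aligned}
```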

We calculated the standard error three times, with ρ (the correlation between pre-test and post-test scores) set to 0, 0.45, and 0.9. For the meta-analysis, we chose ρ = 0.45. Forest plots with standard errors calculated using ρ = 0 (Figure S1) and ρ = 0.9 (Figure S2), which illustrate how heterogeneity changes with the assumed value of ρ, can be found in the supplementary material. The value for
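The Python sketch below implements the pretest-posttest-control effect size of Morris (2008) and its standard error, and shows how the standard error depends on the assumed pre-post correlation ρ for the three values used in this study. The function names and example numbers are hypothetical; the variance expression follows Morris (2008) with the estimate g substituted for the population effect, and it requires ρ < 1 and more than four participants in total.

```python
import math

def _bias_correction(n_t, n_c):
    # Small-sample correction c_p with df = n_t + n_c - 2.
    return 1 - 3 / (4 * (n_t + n_c - 2) - 1)

def morris_g(m_pre_t, m_post_t, sd_pre_t, n_t,
             m_pre_c, m_post_c, sd_pre_c, n_c):
    """Bias-corrected effect size for a pretest-posttest-control design."""
    # Pool the pre-test SDs of both groups (these are unaffected by the intervention).
    sd_pre = math.sqrt(((n_t - 1) * sd_pre_t ** 2 + (n_c - 1) * sd_pre_c ** 2)
                       / (n_t + n_c - 2))
    change_diff = (m_post_t - m_pre_t) - (m_post_c - m_pre_c)
    return _bias_correction(n_t, n_c) * change_diff / sd_pre

def morris_se(g, n_t, n_c, rho):
    """Standard error of g for an assumed pre-post correlation rho (rho < 1)."""
    c_p = _bias_correction(n_t, n_c)
    k = (n_t + n_c) / (n_t * n_c)
    var = (2 * c_p ** 2 * (1 - rho) * k * (n_t + n_c - 2) / (n_t + n_c - 4)
           * (1 + g ** 2 / (2 * (1 - rho) * k)) - g ** 2)
    return math.sqrt(var)

# Hypothetical study: 30 students per group, pre-test means 60, post-test means 70 vs 66.
g = morris_g(60, 70, 10, 30, 60, 66, 10, 30)
for rho in (0.0, 0.45, 0.9):
    print(f"rho={rho}: g={g:.3f}, SE={morris_se(g, 30, 30, rho):.3f}")
```

A higher assumed ρ yields a smaller standard error, and therefore more weight for the study, which is why the choice of ρ changes the heterogeneity of the pooled results.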

When the pre- and post-test scores were not available, the effect size was calculated from inferential statistics instead.
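When only test statistics are reported, a common conversion for two independent groups is d = t·√((n_T + n_C)/(n_T·n_C)), with |t| = √F for an F test with one numerator degree of freedom, followed by the same small-sample correction. The sketch below assumes exactly that case. The source does not state which conversions were used, statistics from ANCOVA or repeated-measures designs would require different formulas, and the function names are hypothetical.

```python
import math

def hedges_j(n_t, n_c):
    """Small-sample bias correction with df = n_t + n_c - 2."""
    return 1 - 3 / (4 * (n_t + n_c - 2) - 1)

def g_from_t(t, n_t, n_c):
    """Hedges' g from an independent-samples t statistic comparing two groups."""
    d = t * math.sqrt((n_t + n_c) / (n_t * n_c))
    return hedges_j(n_t, n_c) * d

def g_from_f(f, n_t, n_c, favours_experimental=True):
    """Hedges' g from a two-group F statistic (1 numerator df).

    F carries no sign, so the direction must be taken from the reported means.
    """
    t = math.sqrt(f)
    return g_from_t(t if favours_experimental else -t, n_t, n_c)

# Hypothetical report: t(38) = 2.0 with 20 students per group, or equivalently F(1, 38) = 4.0.
print(g_from_t(2.0, 20, 20), g_from_f(4.0, 20, 20))
```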

Heterogeneity and Publication Bias

To account for dependence among effect size estimates within studies that contributed more than one effect size, a correlated effects model with robust variance estimation and small-sample corrections was fitted in R (RStudio) using the package “robumeta” (Fisher & Tipton, 2015; Tipton & Pustejovsky, 2015). The
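The core of a correlated-effects model with robust variance estimation can be sketched as follows for the intercept-only case. This is a simplified illustration, not robumeta itself. The between-study variance τ² is passed in rather than estimated by the method-of-moments estimator that robumeta uses, and the small-sample corrections (Tipton & Pustejovsky, 2015) are omitted, so standard errors will be somewhat too small when there are few studies. The function name and inputs are hypothetical.

```python
import math
from collections import defaultdict

def ce_rve_intercept(effects, tau2=0.0):
    """Intercept-only correlated-effects meta-analysis with a robust standard error.

    effects: iterable of (study_id, g, v) tuples, where v is the sampling variance of g.
    tau2: between-study variance, taken as given (robumeta estimates it from the data).
    Returns (pooled estimate, robust SE without small-sample correction, number of studies).
    """
    by_study = defaultdict(list)
    for study, g, v in effects:
        by_study[study].append((g, v))

    # Correlated-effects weights: each of the k_j effects in study j gets
    # 1 / (k_j * (mean sampling variance of study j + tau2)), so a study's total
    # weight does not grow just because it reports more effect sizes.
    weighted = []
    for study, rows in by_study.items():
        k = len(rows)
        v_bar = sum(v for _, v in rows) / k
        w = 1.0 / (k * (v_bar + tau2))
        weighted.extend((study, g, w) for g, _ in rows)

    w_sum = sum(w for _, _, w in weighted)
    estimate = sum(w * g for _, g, w in weighted) / w_sum

    # Cluster-robust (sandwich) variance: weighted residuals are summed within each
    # study before squaring, which allows for correlated effects within a study.
    cluster_sums = defaultdict(float)
    for study, g, w in weighted:
        cluster_sums[study] += w * (g - estimate)
    variance = sum(s * s for s in cluster_sums.values()) / w_sum ** 2
    return estimate, math.sqrt(variance), len(by_study)

# Hypothetical data: study "A" reports two effect sizes, "B" and "C" one each.
data = [("A", 0.20, 0.04), ("A", 0.40, 0.04), ("B", 0.50, 0.04), ("C", 0.10, 0.05)]
print(ce_rve_intercept(data, tau2=0.01))
```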

We also conducted two sensitivity analyses to check the influence of particular studies on the overall result: one excluding the studies with a high risk of bias, and one excluding outliers that contributed disproportionately to heterogeneity (deletion technique; Aguinis et al., 2013). A funnel plot was examined to assess publication bias.
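A sensitivity analysis of this kind amounts to re-estimating the pooled effect with the flagged studies removed and comparing the two estimates. The sketch below uses a DerSimonian-Laird random-effects model as a simple stand-in for the robust variance model used in this study; the study labels and values are hypothetical.

```python
import math

def dl_pool(gs, vs):
    """DerSimonian-Laird random-effects pooled estimate and its standard error."""
    w = [1 / v for v in vs]
    sw = sum(w)
    fixed = sum(wi * g for wi, g in zip(w, gs)) / sw
    q = sum(wi * (g - fixed) ** 2 for wi, g in zip(w, gs))   # Cochran's Q
    c = sw - sum(wi * wi for wi in w) / sw
    tau2 = max(0.0, (q - (len(gs) - 1)) / c) if c > 0 else 0.0
    w_re = [1 / (v + tau2) for v in vs]
    estimate = sum(wi * g for wi, g in zip(w_re, gs)) / sum(w_re)
    return estimate, math.sqrt(1 / sum(w_re))

def sensitivity(studies, exclude):
    """Pooled (estimate, SE) with all studies and with the labels in `exclude` removed.

    studies: dict mapping a study label to (g, sampling variance).
    """
    excluded = set(exclude)
    kept = [gv for label, gv in studies.items() if label not in excluded]
    if not kept:
        raise ValueError("cannot exclude every study")
    full = dl_pool([g for g, _ in studies.values()], [v for _, v in studies.values()])
    reduced = dl_pool([g for g, _ in kept], [v for _, v in kept])
    return full, reduced

# Hypothetical data in which study "C" is an outlier.
studies = {"A": (0.30, 0.04), "B": (0.20, 0.05), "C": (1.20, 0.06)}
print(sensitivity(studies, exclude=["C"]))
```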

Systematic Review

From the studies that included student perception in their research, additional characteristics were extracted for the purpose of a qualitative review of this subject. These include the number of participants, the measure of student attitudes towards (online) peer feedback, and the outcome of these measures.

Results

Selection and Inclusion of Studies

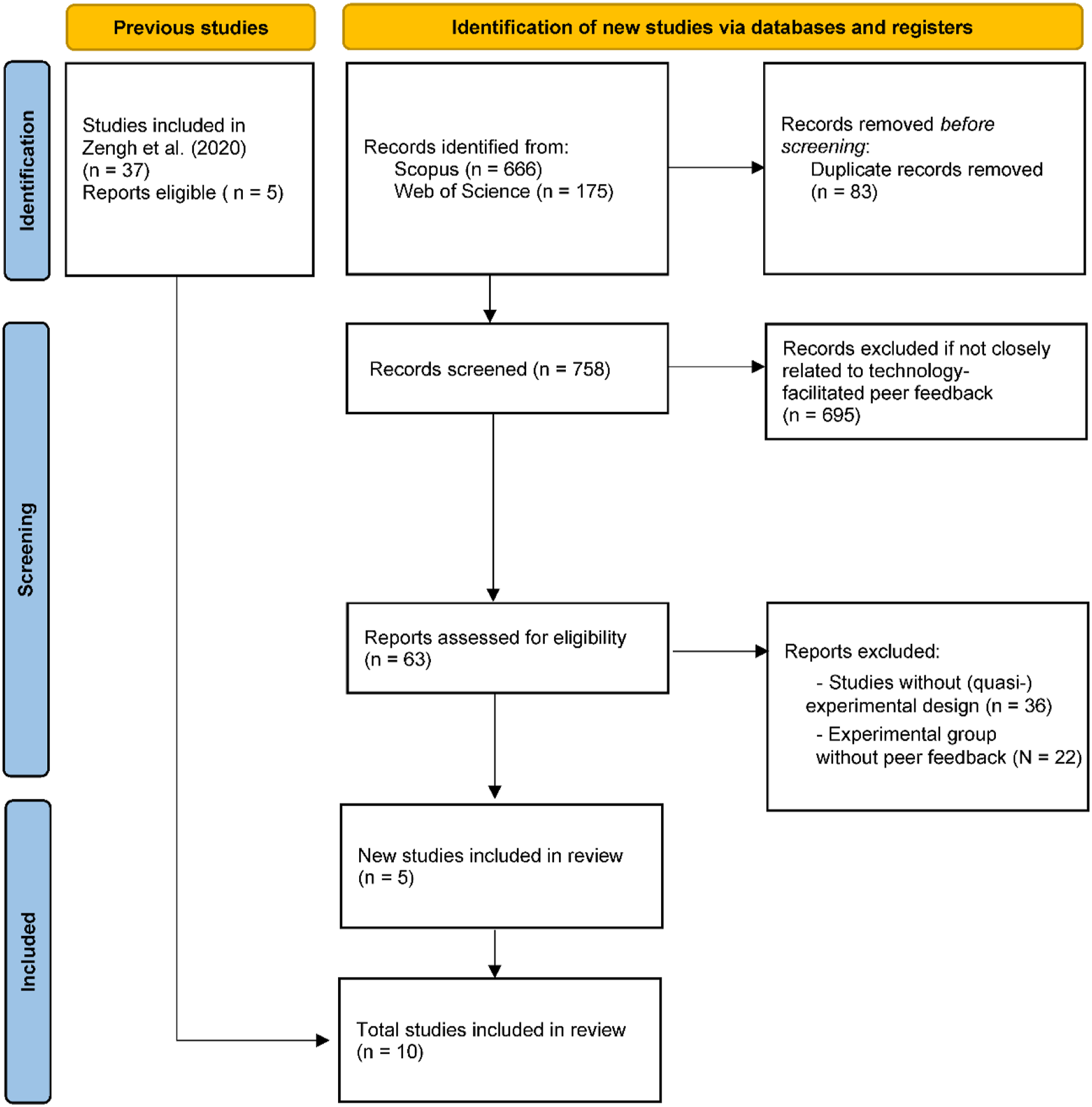

The first author performed the systematic literature search in the Scopus and Web of Science databases on February 5, 2021. This search yielded 758 unique records across databases. The titles and abstracts of these records were then screened manually against the inclusion criteria, and 63 studies were identified as closely related to technology-facilitated peer feedback in an educational context. The full texts of these 63 studies were retrieved and assessed against the inclusion criteria; after several rounds of screening, five articles were included in this meta-analysis. A second researcher independently assessed the 63 studies and selected the same five articles plus one additional article. Agreement between the two researchers was good (Cohen’s κ = .88). The additional study was discarded after discussion between the two researchers. Combined with the studies taken from Zheng et al. (2020), a total of 10 studies were included in the analysis. The PRISMA flowchart of the selection and inclusion of studies is presented in Figure 1 (Page et al., 2021).

Figure 1. PRISMA flowchart of the process leading to identification, screening, and inclusion of experimental studies for the current meta-analysis.
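Cohen’s kappa corrects the observed proportion of agreement between two screeners for the agreement expected by chance, κ = (p_o − p_e)/(1 − p_e). A minimal Python sketch, applied to hypothetical include/exclude decisions rather than to the screening data of this study:

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters' categorical decisions on the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two non-empty lists of equal length")
    n = len(rater_a)
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of the raters' marginal proportions, summed over categories.
    categories = counts_a.keys() | counts_b.keys()
    p_expected = sum(counts_a[c] * counts_b[c] for c in categories) / n ** 2
    if p_expected == 1:
        raise ValueError("kappa is undefined when both raters use one identical category")
    return (p_observed - p_expected) / (1 - p_expected)

# Hypothetical screening of 10 full texts: the raters disagree on one article only.
first = ["include"] * 3 + ["exclude"] * 7
second = ["include"] * 4 + ["exclude"] * 6
print(round(cohens_kappa(first, second), 3))
```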

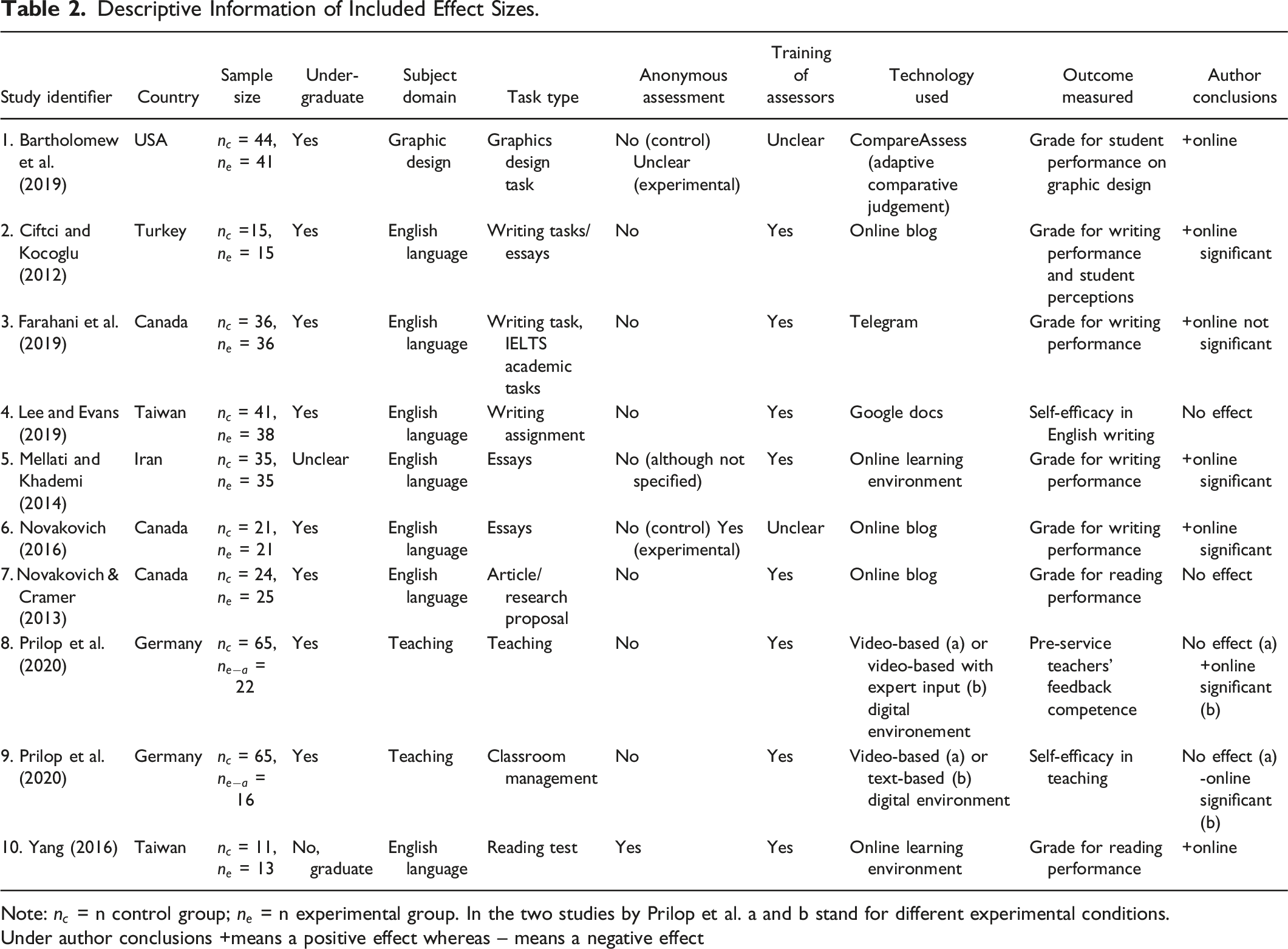

Study Characteristics

Descriptive Information of Included Effect Sizes.

Note: Under “author conclusions”, + denotes a positive effect and – denotes a negative effect.

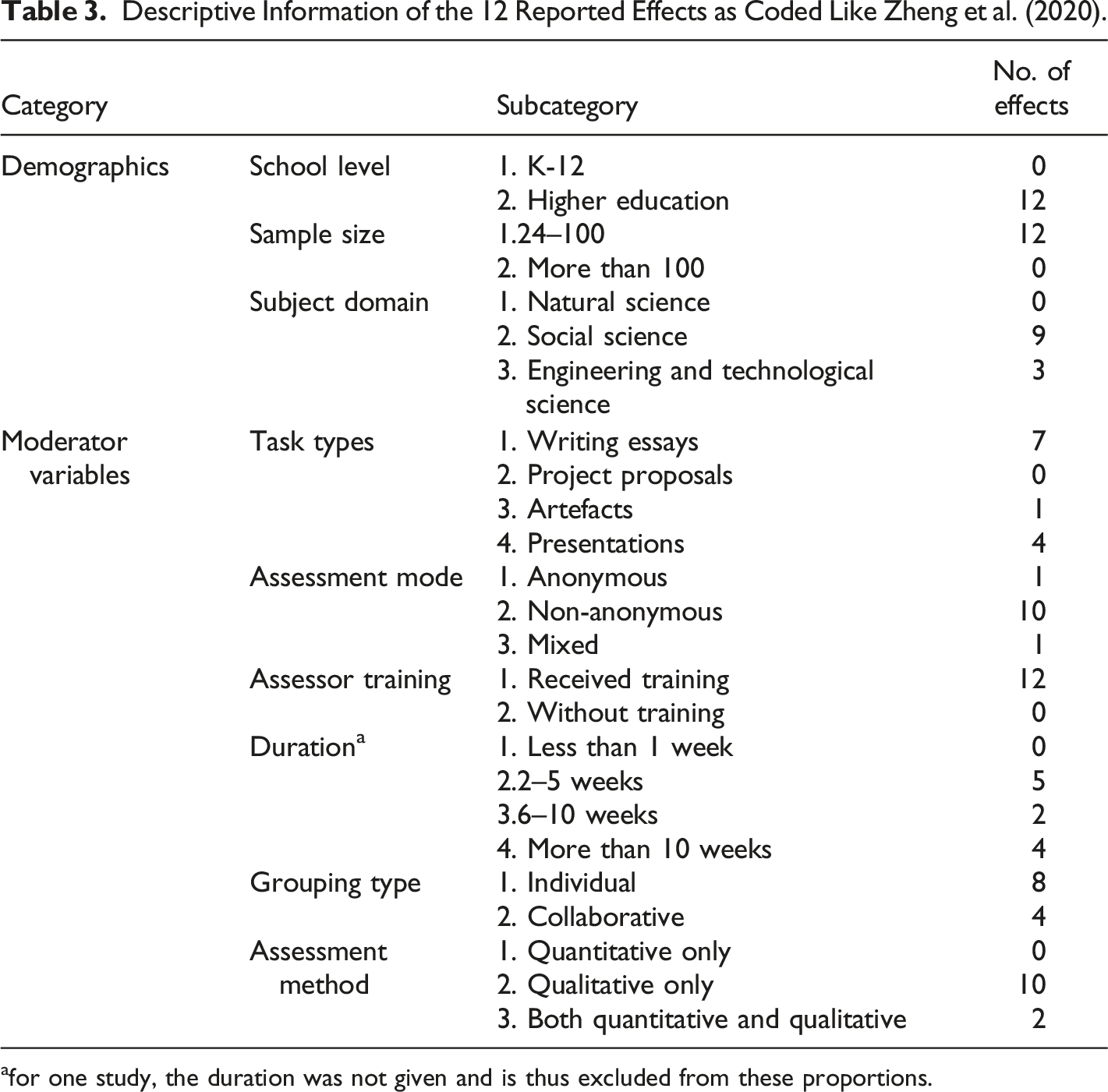

Descriptive Information of the 12 Reported Effects, Coded Following Zheng et al. (2020).

ᵃ For one study, the duration was not reported; that study is therefore excluded from these proportions.

Overall Effects of Online Peer Feedback

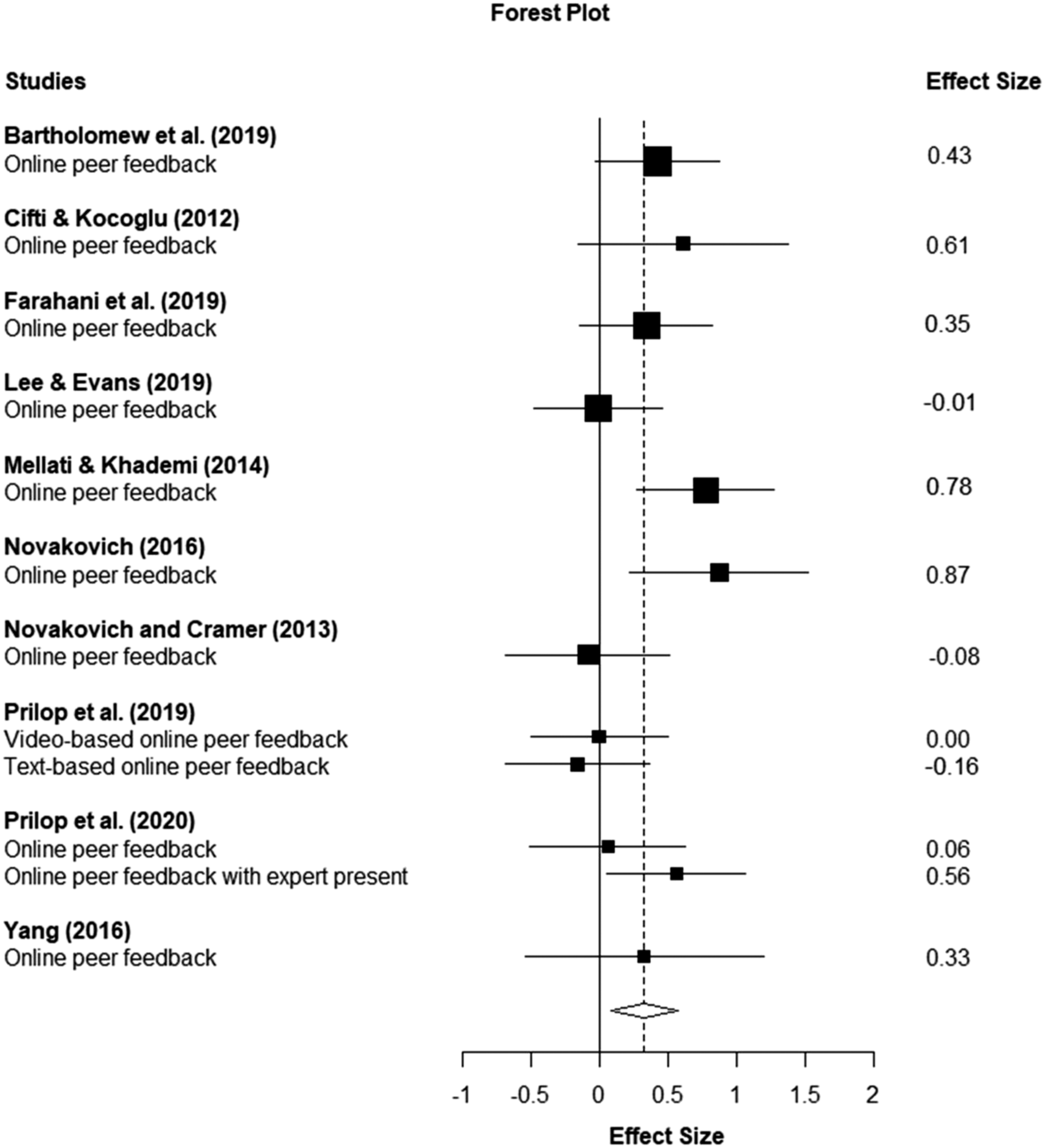

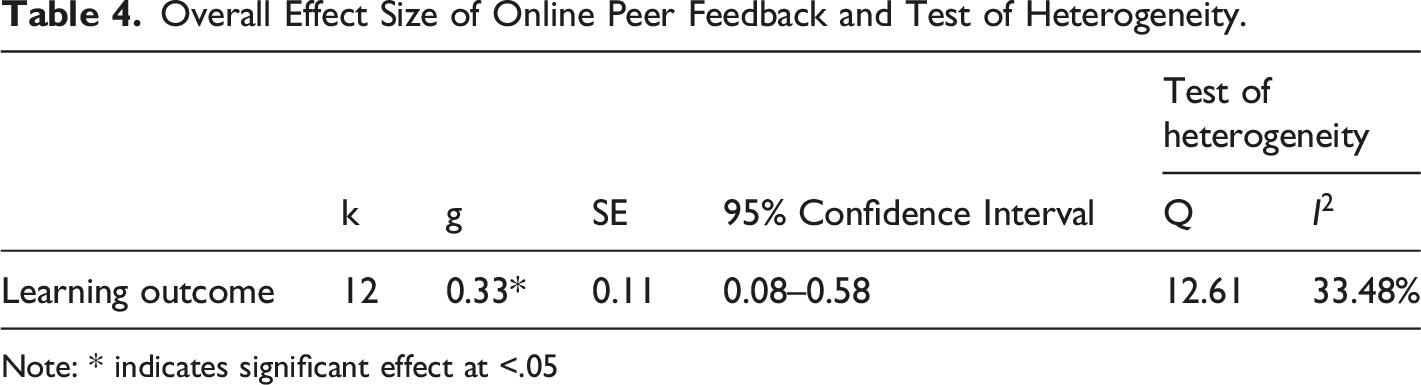

The meta-analysis indicated an overall effect size of g = 0.33 (p < .05) for online peer feedback, as shown in Table 4. Figure 2 shows a forest plot of the distribution of all effect sizes and the pooled effect size. Visual inspection of the forest plot revealed no distinct outliers.

Figure 2. Forest plot of estimated effect sizes, with the pooled effect size shown as the vertical dotted line and zero marked by the vertical continuous line. The diamond shows the pooled effect size and its 95% confidence interval. The filled black squares represent the effect sizes of the individual studies, and the horizontal line through each square shows its 95% confidence interval. The size of each square is proportional to the weight of that effect size in the pooled effect size.

Table 4. Overall Effect Size of Online Peer Feedback and Test of Heterogeneity. Note: * indicates a significant effect at p < .05.

Risk of Bias

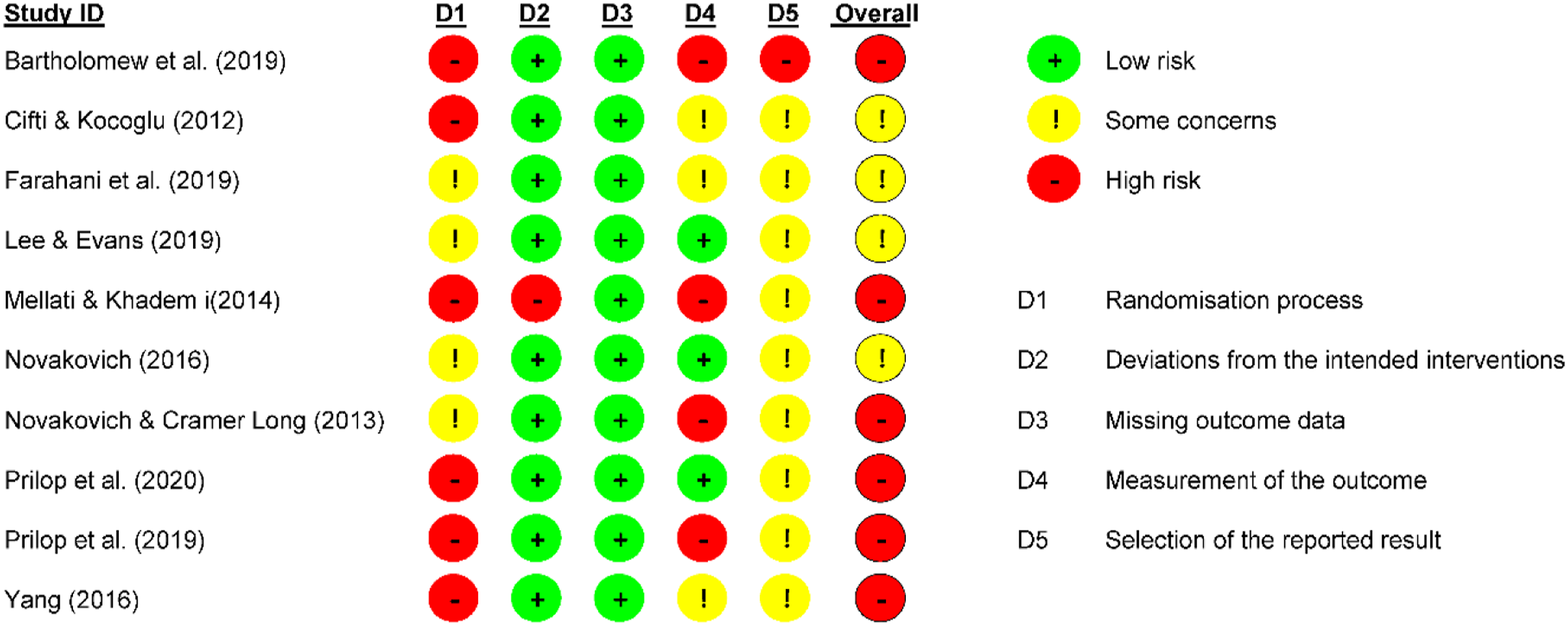

To determine whether the overall effect size might be biased by the design of individual studies, we conducted a risk-of-bias assessment using the Cochrane Risk of Bias tool for randomized controlled trials (Sterne et al., 2019). Because the only studies meeting our inclusion criteria were quasi-experimental studies without randomization, the domains “the randomization process” and “selection of the reported result” were deemed inapplicable in the overall risk-of-bias assessment. Only the remaining three domains, “deviations from intended interventions,” “missing outcome data,” and “measurement of the outcome,” were considered. Four studies were identified as having a high risk of bias. As the overview in Figure 3 shows, the studies by Bartholomew et al. (2019), Mellati and Khademi (2014), Novakovich and Cramer Long (2013), and Prilop et al. (2020) were at high risk of bias for these three domains. To examine the influence of these studies on the overall effect, we conducted a sensitivity analysis excluding these four studies (five effect sizes), which yielded a similar pooled effect size (g = 0.36, p < .05) to the overall effect (g = 0.33, p < .05). Possible biases in these four studies therefore do not seem to affect the overall conclusion that online peer feedback has a positive influence on measured learning outcomes.

Figure 3. Overview of risk of bias per domain (D1–D5) for each study included in the meta-analysis, as determined using the Cochrane Risk of Bias tool for randomized controlled trials (Sterne et al., 2019). Each column shows the judgement for one domain: the randomization process (D1), deviations from intended interventions (D2), missing outcome data (D3), measurement of the outcome (D4), and selection of the reported result (D5). The last column shows the overall risk of bias. Most studies are rated “high risk” overall; however, this is mostly due to the randomization process, which is not applicable to quasi-experimental studies.

Publication Bias and Heterogeneity

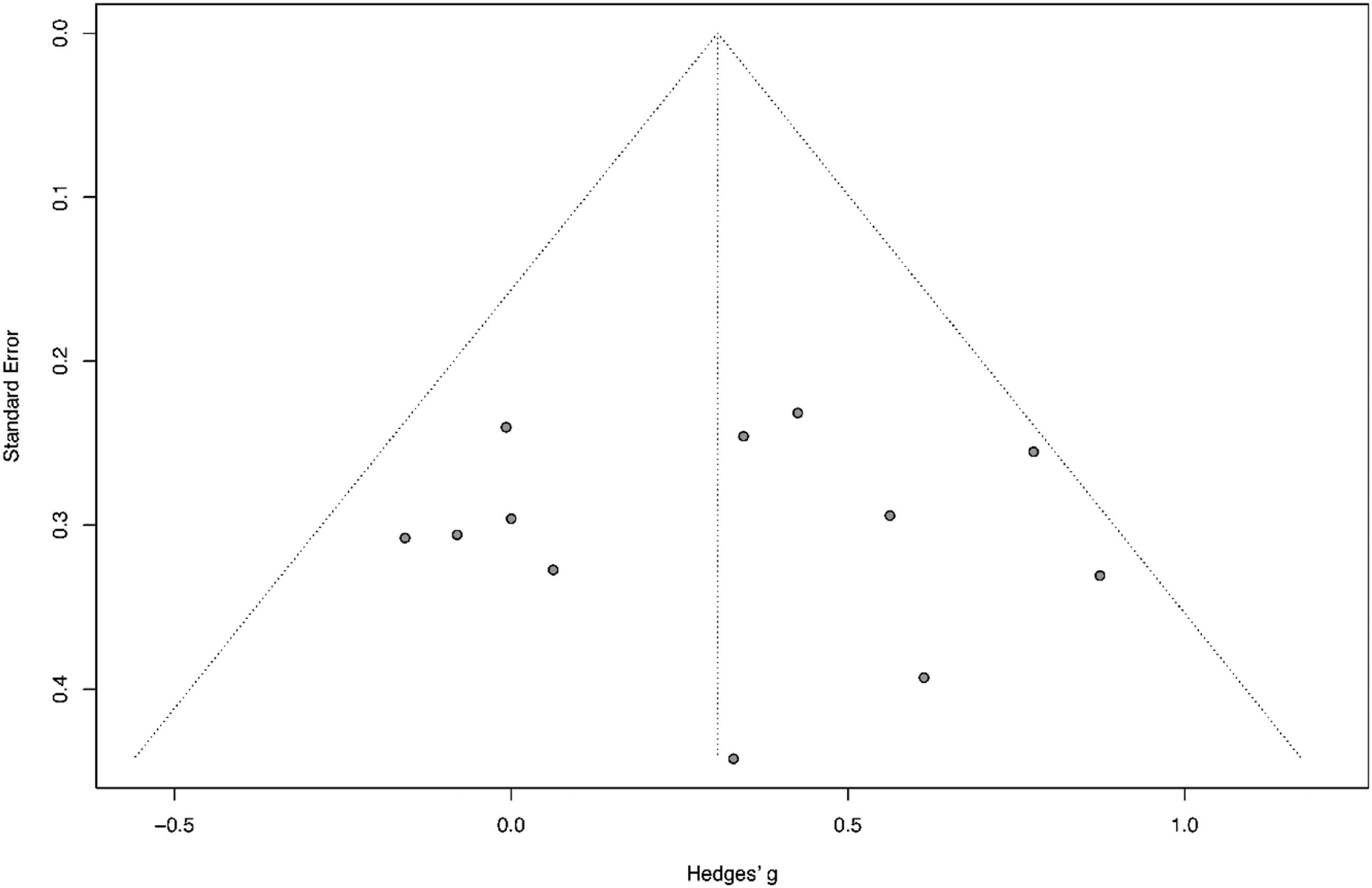

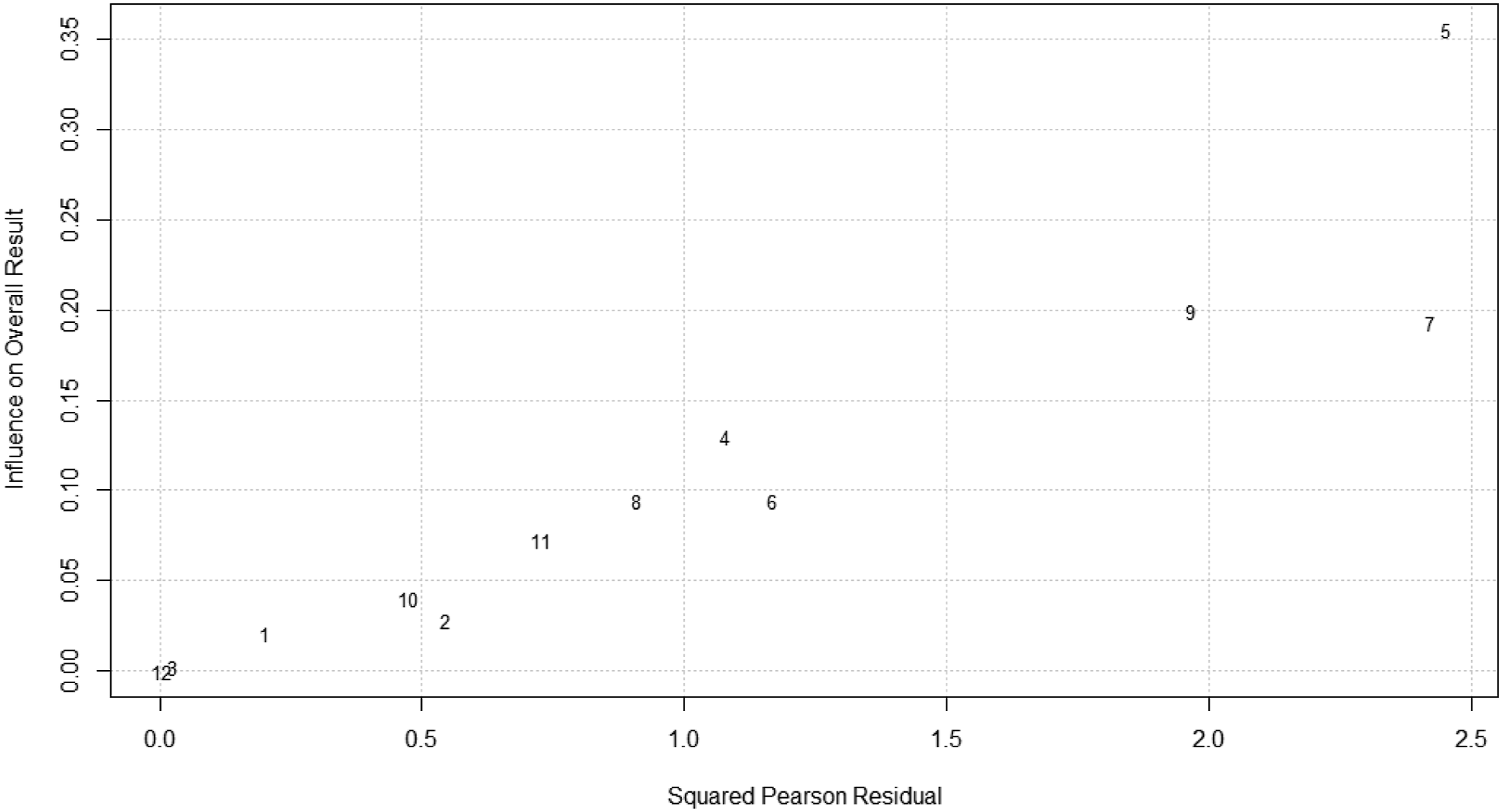

To evaluate bias in the studies included in this meta-analysis, we constructed a funnel plot and a Baujat plot in R. To check for publication bias, we visually inspected the funnel plot (see Figure 4). The funnel plot was mostly symmetrical and therefore showed no signs of publication bias. To test this statistically, we conducted Egger’s regression test of the intercept, which likewise provided no evidence of publication bias (Egger et al., 1997). To explore heterogeneity, we used a Baujat plot to identify specific studies that contributed to the heterogeneity (Baujat et al., 2002). The Baujat plot showed one distinct outlier (see Figure 5), indicating that this publication contributed substantially to the heterogeneity.

Figure 4. Funnel plot. The vertical line in the middle shows the pooled effect size, and the grey dots represent individual studies. Studies with small samples scatter along the bottom of the funnel, whereas studies with large samples, and therefore smaller standard errors, cluster near the top. Asymmetry, for example missing studies in the lower-left region of the funnel, can indicate that smaller studies without a significant treatment effect were not published, which would indicate publication bias (Egger et al., 1997). This funnel plot is mostly symmetrical, which suggests that publication bias is unlikely.

Figure 5. Baujat plot showing the outlier Mellati and Khademi (2014) (5). The numbers in this plot refer to the study identifiers listed in Table 2. The x-axis shows the squared Pearson residual of each study: the larger the residual, the further the study’s estimated treatment effect lies from the pooled effect size of the meta-analysis. The y-axis shows each study’s influence on the pooled effect size. Studies in the upper-right corner contribute strongly to the heterogeneity and strongly influence the pooled result (Parr et al., 2019).
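Egger’s test regresses each study’s standardized effect (g/SE) on its precision (1/SE); an intercept far from zero indicates funnel-plot asymmetry. The pure-Python sketch below returns the intercept, its standard error, the t statistic, and the degrees of freedom (k − 2), which would then be compared with a t distribution. The example data are hypothetical, and in practice a package routine such as metafor’s regtest() in R would normally be used instead.

```python
import math

def egger_test(gs, ses):
    """Egger's regression test for funnel-plot asymmetry.

    Regresses the standardized effect g/SE on precision 1/SE by ordinary least
    squares and returns (intercept, SE of intercept, t statistic, df).
    """
    if len(gs) != len(ses) or len(gs) < 3:
        raise ValueError("need at least three studies with matching SEs")
    y = [g / s for g, s in zip(gs, ses)]   # standardized effects
    x = [1 / s for s in ses]               # precisions
    n = len(y)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    slope = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y)) / sxx
    intercept = mean_y - slope * mean_x
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    sigma2 = sum(r * r for r in residuals) / (n - 2)   # residual variance
    se_intercept = math.sqrt(sigma2 * (1 / n + mean_x ** 2 / sxx))
    return intercept, se_intercept, intercept / se_intercept, n - 2

# Hypothetical effect sizes and standard errors.
gs = [0.10, 0.40, 0.30, 0.80, 0.60]
ses = [0.10, 0.20, 0.15, 0.30, 0.25]
print(egger_test(gs, ses))
```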

To evaluate the influence of the outlier identified in the Baujat plot, we conducted a sensitivity analysis excluding the publication by Mellati and Khademi (2014), which contributed substantially to the heterogeneity. This yielded a smaller effect of g = 0.27 (p = .03), meaning that the overall effect is partly driven by this one study, although it remains significant without it.
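The Baujat coordinates used to flag such an outlier can be computed under a fixed-effect model: the x-coordinate is a study’s contribution to Cochran’s Q, and the y-coordinate is the standardized squared change in the pooled estimate when that study is left out. To our understanding this mirrors how metafor’s baujat() defines the axes by default, but the helper below and its data are hypothetical.

```python
def baujat_points(gs, vs):
    """Baujat-plot coordinates (x, y) for each study under a fixed-effect model.

    x: contribution to Cochran's Q, (g_i - pooled)^2 / v_i
    y: influence, (pooled - pooled_without_i)^2 / Var(pooled_without_i)
    gs and vs must be lists of equal length with at least two studies.
    """
    def fixed_effect(g_list, v_list):
        weights = [1 / v for v in v_list]
        total = sum(weights)
        return sum(w * g for w, g in zip(weights, g_list)) / total, 1 / total

    pooled, _ = fixed_effect(gs, vs)
    points = []
    for i in range(len(gs)):
        # Refit without study i to measure its influence on the pooled estimate.
        pooled_i, var_i = fixed_effect(gs[:i] + gs[i + 1:], vs[:i] + vs[i + 1:])
        x = (gs[i] - pooled) ** 2 / vs[i]
        y = (pooled - pooled_i) ** 2 / var_i
        points.append((x, y))
    return points

# Hypothetical data in which the fourth study (index 3) is an outlier.
gs = [0.20, 0.25, 0.30, 1.50]
vs = [0.04, 0.05, 0.04, 0.05]
for label, (x, y) in enumerate(baujat_points(gs, vs), start=1):
    print(f"study {label}: Q contribution {x:.2f}, influence {y:.2f}")
```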

Subgroup Analysis

Two subgroup analyses were conducted to examine whether effect sizes differed across levels of two moderating variables. According to our coding scheme, the studies in this meta-analysis used three task types: writing essays (n = 7), artefacts (n = 1), and presentations (n = 4). Because a subgroup with n = 1 is not meaningful, we combined writing essays and artefacts into a single category, products. A subgroup analysis of products (n = 8) versus presentations (n = 4) yielded effect sizes of g = 0.39 [0.11; 0.68] for products and g = 0.12 [–0.38; 0.63] for presentations. This finding suggests that online peer feedback might be more effective when writing essays or artefacts are used as task types, although the difference was not significant (p = .17).

A second subgroup analysis distinguished outcome measures of competence (e.g., foreign language competence or feedback competence; effects 1–3, 5–7, and 10–12 in Table 1) from measures of self-efficacy in a learned skill (effects 4, 8, and 9 in Table 1). This analysis yielded an effect size of g = 0.44 [0.20; 0.68] for competences (n = 9) compared with g = −0.05 [–0.25; 0.16] for self-efficacy (n = 3), indicating that online peer feedback has a significantly larger effect on competences than on self-efficacy (p < .01).
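As a rough illustration of how two subgroup estimates can be compared, the sketch below recovers each subgroup’s standard error from a symmetric 95% confidence interval and applies a normal-approximation z-test. It assumes independent subgroups and ignores the dependence and small-sample corrections handled by the robust variance model, so it will not necessarily reproduce the p-values reported in this section. The function name and numbers are hypothetical.

```python
import math

def subgroup_difference(g1, ci1, g2, ci2, z_crit=1.96):
    """Approximate z-test for the difference between two independent subgroup estimates.

    Standard errors are recovered from symmetric 95% CIs as (upper - lower) / (2 * 1.96).
    Returns (z, two-sided p-value under the standard normal distribution).
    """
    se1 = (ci1[1] - ci1[0]) / (2 * z_crit)
    se2 = (ci2[1] - ci2[0]) / (2 * z_crit)
    z = (g1 - g2) / math.sqrt(se1 ** 2 + se2 ** 2)
    p = math.erfc(abs(z) / math.sqrt(2))   # equals 2 * (1 - Phi(|z|))
    return z, p

# Hypothetical subgroups, each with SE = 0.1.
print(subgroup_difference(0.50, (0.304, 0.696), 0.10, (-0.096, 0.296)))
```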

Review of Student Perceptions

Five out of the 10 studies included in the meta-analysis also reported student perceptions of peer feedback. By surveying the students, Bartholomew et al. (2019) found that students in the experimental group using Adaptive Comparative Judgment (a form of assessment using comparisons instead of criterion scoring) enjoyed the peer feedback process more, thought the process was easier to follow, and found the peer feedback more helpful than the students in the control group using paper-based peer feedback. Students in the experimental group stated they learned the most from receiving feedback, whereas students in the control group learned the most from direct communication with peers.

Ciftci and Kocoglu (2012) surveyed and interviewed the students in the experimental group, who used blogs to give each other peer feedback. The results show that students felt positive about using blogs but were unsure of their confidence in giving and receiving peer feedback. However, they also stated that they did not feel embarrassed when providing peers with feedback. Students found blogs easy to use and time-independent, and blogging contributed to their feeling of being a ‘real writer.’

By surveying the students and interviewing eight students from both conditions, Lee and Evans (2019) concluded that students felt that giving peer feedback was more helpful than receiving peer feedback, with no distinct difference between online and offline peer feedback. The interviewees in the experimental group were positive about using Google Docs for online peer feedback because its asynchronous format gave them more time to consult online resources before providing fellow students with feedback. However, they also stated that these asynchronous discussions made direct exchanges between students difficult because of delayed responses.

Mellati and Khademi (2014) interviewed one student from each condition but used only a few student quotes to support their main finding, namely that online peer feedback can enhance the learning experience when teachers and students have the skills required to use computer-mediated communication. One student mentioned that technology can act as an extrinsic motivator, whereas the other stated that unfamiliarity with technology could become an obstacle and lead to confusion. It remains unclear, however, which student said what.

Yang (2016) used a survey to elicit students’ perceptions of online peer feedback. The students stated that online peer feedback could reduce their writing anxiety. It also gave them more time to think about how to comment on their peers’ writing, whereas in on-site peer feedback students have only a limited amount of time.

Overall, students seem positive about the use of online peer feedback. Students regard the time-independence of online peer feedback as particularly convenient: it enables them to check resources and gives them more time to think about and phrase their comments before providing their peers with feedback. Online peer feedback thus appears to reduce anxiety around giving peer feedback (Ciftci & Kocoglu, 2012; Lee & Evans, 2019; Yang, 2016). Moreover, it makes it possible to review work from multiple peers (Bartholomew et al., 2019). A possible downside of the asynchronous nature of online peer feedback, however, is that participating in a feedback dialogue can become difficult (Lee & Evans, 2019), even though such dialogue could benefit learning (Bartholomew et al., 2019). Some students also stated that they preferred feedback from a teacher, indicating that students do not always trust the feedback from their peers or the feedback they give themselves (Bartholomew et al., 2019; Ciftci & Kocoglu, 2012).

Discussion

In this study, two research questions concerning the effect of online peer feedback were addressed. First, the effect of online peer feedback on learning outcomes among higher education students compared with offline peer feedback was meta-analyzed. Second, students’ perceptions of online peer feedback were reviewed, drawing on those studies in the meta-analysis that reported such perceptions.

Effects of Online Peer Feedback

Overall, a small-to-medium positive effect was found for online peer feedback compared with offline peer feedback, suggesting that online peer feedback can generate better learning outcomes. However, it should be noted that most of the analyzed studies reported no effect or a very small effect; possible reasons are discussed in the Limitations section. One possible explanation for the overall positive effect is the time-independence of online peer feedback, which enables students to take their time to assess their peers’ papers and write considered feedback comments. Moreover, an online system may be easier and faster to use for giving peer feedback than a paper-based peer feedback activity (Li et al., 2009). In some cases, however, where feedback is difficult to convey in short comments because it is sensitive or abstract, face-to-face peer feedback may be more efficient, because students can then engage in a feedback dialogue, which can lead to greater engagement in the peer feedback activity itself (Zhu & Carless, 2018). When online peer feedback is still preferred, dialogic feedback can be facilitated by combining feedback training with students’ rating of feedback comments. This combination can lead to more reflection on the feedback that is both given and received (Filius et al., 2018).

Moreover, previous research has shown that online peer feedback yields stronger effects for writing assignments and artefacts (Cui & Zheng, 2018; Zheng et al., 2020), which would suggest that peer feedback is more effective for products than for presentations. However, the subgroup analysis of task types in the current meta-analysis did not show a significant difference between products and presentations.

The second subgroup analysis suggests that online peer feedback is more effective for improving competencies themselves than for improving self-efficacy in those competencies. This difference could be explained by pitfalls in self-assessment: students often lack a clear view of the quality of their own work (Brown et al., 2015). Moreover, students could get a confidence boost when they give peer feedback on work of lower quality than their own, or become discouraged when they perceive a peer’s work to be better (Brown et al., 2015; Lee & Evans, 2019).

Student Perceptions of Online Peer Feedback

The review of student perceptions shows that students are predominantly positive about their experiences with online peer feedback. They describe the online peer feedback process as easy and useful for learning (Bartholomew et al., 2019; Ciftci & Kocoglu, 2012). This is a promising result, since these positive attitudes are consistent with the greater effectiveness of online peer feedback found in the meta-analysis.

According to students, the use of technology can act as an extrinsic motivator, leading to more engagement with the peer feedback activity (Mellati & Khademi, 2014). However, other research has pointed out that intrinsic motivation is more often the strongest contributor to engagement with technology-enhanced learning (Dunn & Kennedy, 2019; Seckman, 2019). The use of online technology may therefore only partly ensure higher engagement with the peer feedback activity.

Students value the time-independence of online peer feedback, which enables them to formulate their feedback comments carefully without the time constraints of an in-class peer feedback activity (Ciftci & Kocoglu, 2012; Lee & Evans, 2019; Yang, 2016). This finding is consistent with the explanation proposed above for the positive effect found in the current meta-analysis, namely that the time-independence of online peer feedback can be a considerable advantage over offline peer feedback. However, in some cases students found that the time-independence and asynchronous nature of online peer feedback hindered the feedback dialogue (Lee & Evans, 2019), while learning can also occur through direct communication with peers (Bartholomew et al., 2019). Whenever a feedback dialogue is desirable, for example when a discussion about the feedback is necessary, face-to-face peer feedback might be more suitable.

Students tend to be more critical when interpreting peer feedback than when interpreting teacher feedback, and they do not always readily accept the feedback a peer has given them (Bartholomew et al., 2019; Ciftci & Kocoglu, 2012). Such negative perceptions of peer feedback could be detrimental to the learning experience. However, other research suggests that students’ critical stance towards peer feedback can also lead to deep learning: Filius and colleagues (2018) reported that students tend to question peer feedback more than teacher feedback, which is more readily accepted. When students are aware of the benefits of (online) peer feedback, they may have fewer negative feelings towards the activity itself. It remains unclear whether acceptance of feedback differs between students receiving online peer feedback and students receiving offline peer feedback.

Online peer feedback has several benefits for both students and teachers. An online environment for peer feedback can be more collaborative, allowing students to receive feedback from more than one peer (Farahani et al., 2019). Moreover, teachers are able to monitor feedback comments more closely (DiGiovanni & Nagaswami, 2001), which in some cases can strengthen the relationship between teacher and students because teachers can provide assistance in real time (Novakovich & Cramer Long, 2013). By giving teachers real-time access to students' work, online peer feedback can save teachers time on logistics and, owing to its time-independence, free up class time for other activities. The time-independence of online peer feedback can also promote autonomy and self-study in students, because they can decide for themselves how and when they give feedback (Mellati & Khademi, 2014). According to Ryan and Deci's (2000) self-determination theory, autonomy is a key factor in increasing motivation. Motivation, in turn, leads to more engagement with the peer feedback activity and, therefore, to better learning (Dunn & Kennedy, 2019). Another way to motivate students is to create an authentic learning environment, such as an online blog on which essays are published. Giving peer feedback via online blogs can make students feel like actual writers, which encourages them to think about their audience while writing their essays and feedback comments (Ciftci & Kocoglu, 2012; Novakovich, 2016; Novakovich & Cramer Long, 2013). Online peer feedback also seems to invite more critical and directive comments than offline peer feedback, that is, comments that explain the effect of the work on its reader or state directly how the work can be improved. Such comments can substantially improve the quality of writing (Novakovich, 2016).

Limitations

Several limitations of this meta-analysis should be mentioned. First, because of the limited number of studies, there is substantial uncertainty about the true effect sizes, as reflected in the wide confidence intervals.

Second, the studies did not all use the same online platforms to facilitate peer feedback, which may have contributed to heterogeneity between the studies. Weblogs, Google Docs, Adaptive Comparative Judgment, and video-based peer feedback were among the platforms used. These methods of peer feedback differ from each other and may affect student motivation or the user-friendliness of the tool in question differently (van der Pol et al., 2008). Moreover, the split-attention principle (Ayres & Sweller, 2014) could play a role when students work on an online platform that separates the paper to be reviewed from the instructions on how to review it. This could lead to a higher extraneous cognitive load and, consequently, influence learning outcomes, a possibility that merits closer investigation. Nevertheless, despite these differences in peer feedback tools, the results of the meta-analysis still show that online peer feedback is, overall, more effective than offline peer feedback.

Third, it remains unclear to what extent the use of technology in online peer feedback is a motivator in its own right. In a review of educational technology, Yeung et al. (2021) concluded that technology is only beneficial to learning when it involves unique affordances, rather than when it merely presents information. This is in line with Higgins et al. (2019), who stated that technology-assisted instruction is more motivating than technology-based instruction. It is therefore important to consider why online peer feedback is implemented and how it could be more effective than offline peer feedback. Any advantage is probably not due to the mere fact that peer feedback is offered online, but rather to the affordances of online peer feedback, such as time-independence, and how these influence learning outcomes. However, we could not identify any studies that examined mediators or moderators of the advantage of online feedback provision.

Implications

Our findings have several implications for the use of online peer feedback in higher education and for further research. This section first discusses the implications for educational practice and then the implications for future research.

Implications for Educational Practice

First of all, online peer feedback can help students formulate critical feedback comments for their peers, which can lead to better learning outcomes. This would support the use of online peer feedback instead of offline variants. However, this may not hold for all types of assignments. Teachers should therefore consider carefully which peer feedback activity best complements the assignment that needs feedback. Writing assignments and artefacts yield the best results with online peer feedback, whereas subjective outcomes might benefit more from offline peer feedback that includes a feedback dialogue.

Negative emotions arising from a lack of interpersonal contact in an online peer feedback activity could be detrimental to learning (Kim et al., 2014). However, when online peer feedback is implemented as a supporting technological feature alongside in-class activities, rather than as technology-based instruction, students will most likely benefit from its advantages (Higgins et al., 2019). When online peer feedback is implemented in this supporting role, it would be best to provide sufficient direct interaction between students and teacher. Moreover, clearly communicating the relevance of a peer feedback activity to students might reduce negative perceptions of the activity and increase motivation (Keller, 2010).

Implications for Future Research

This meta-analysis has shown that more research is needed on the moderator variables that influence the effect of (online) peer feedback. Considerable research has demonstrated the importance of feedback training and the effect of anonymity. However, to our knowledge, no research has examined which affordances of online feedback are crucial, or compared different online peer feedback platforms. Experimental research comparing different features within platforms could give more insight into how online peer feedback can be most effective for certain academic outcomes. It would also be worthwhile to investigate how differences in the structure of the platforms affect students' cognitive load.

More research is needed to investigate why, and under which conditions, online peer feedback is more effective than offline peer feedback. Such research could show how an online peer feedback activity should be implemented to be most effective for students' learning.

Although students seem to be positive about their experiences with online peer feedback, it may be worth investigating whether students accept feedback from their peers more readily in an online setting than in other peer feedback modes, and how this affects learning outcomes. This could shed more light on student perceptions of (online) peer feedback and on how to improve these perceptions to optimise learning through peer feedback.

Conclusion

Online peer feedback is, on average, more effective than offline peer feedback. Online peer feedback has advantages such as time-independence, which enables students to engage in the activity in their own time, without the time constraints of an in-class activity. It also gives students the opportunity to consult other sources before they give feedback. This can result in more critical and elaborate feedback comments, which can in turn lead to better learning outcomes.

Acknowledgments

We would like to thank Robin Straaijer for his help in selecting the studies for this meta-analysis.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.