Abstract

Post-secondary students are exposed to a wide variety of stressors, and mental health-related concerns continue to increase among this population. The development and improvement of mental health services hinge on the use of valid and reliable measurement tools to assess student stress and evaluate the efficacy of interventions. To date, there has been no comprehensive overview of available instruments intended to evaluate post-secondary student stress. The purpose of this study was to conduct a rigorous scoping review of published works detailing the use of instruments to evaluate post-secondary student stress, and to evaluate the psychometric properties of these tools as well as their suitability for use among post-secondary populations. Four academic databases (HaPI, CINAHL, Medline, and PsycINFO) were searched for peer-reviewed records published in English between 2012 and 2024. Articles were independently screened for inclusion by title, abstract, and full-text review. A total of 62 records and 11 instruments were included in the review. The majority of instruments included in our review did not meet the criteria for suitability, nor did they have published, comprehensive psychometric evaluations. The Post-Secondary Student Stressors Index emerged as the most appropriate instrument for evaluating post-secondary student-specific stress owing to both its suitability and strong psychometric properties. The Perceived Stress Scale and Stress Overload Scale were deemed to be the most appropriate instruments for evaluating general stress levels.

Keywords

Introduction

Post-secondary students, many of whom are emerging adults between the ages of 18 and 24 years, face stressors related to academics, social interactions, personal factors, and finances (Linden et al., 2022; Wider et al., 2023). Emerging adulthood is a period of significant development and transition characterized by social, cognitive, and physical changes that impact wellbeing (Wider et al., 2023). As a result, post-secondary students are particularly vulnerable to excess stress, which may lead to mental health issues, while navigating this transition period (Linden et al., 2022; Linden & Stuart, 2020; Wider et al., 2023). Research has established a connection between excess stress and adverse outcomes among post-secondary students, including substance misuse, development of mental illnesses, declining academic performance, and suicidality (Duffy et al., 2020; Linden et al., 2022; Linden & Stuart, 2020; Moghimi et al., 2023). Upon entry to university, perceived stress is a risk factor for poor mental health outcomes, notably symptoms of depression and anxiety (Duffy et al., 2020; Moghimi et al., 2023). The COVID-19 pandemic exacerbated mental health concerns by introducing novel stressors (isolation and loneliness, financial uncertainty, disrupted extracurriculars, and the transition to remote learning) on top of existing ones (Linden et al., 2022). Existing literature has found that students without pre-existing mental health concerns exhibited worsening mental health due to elevated stress during the COVID-19 pandemic (Ewing et al., 2022; Hamza et al., 2021; Watkins-Martin et al., 2021).

The prevalence of mental health concerns also appears to be increasing among the post-secondary student population (Linden et al., 2021). Between the 2013 and 2019 releases of the National College Health Assessment (NCHA-II) survey, self-reported symptoms of mental illness and above-average stress levels increased significantly (Linden et al., 2021). Over the same period, reported stress among Canadian students rose from 38.6% to 41.9%, and reported anxiety rose from 28.4% to 34.6% (American College Health Association, 2013, 2019). The 2022 NCHA survey indicated high levels of self-reported stress and of help-seeking from on-campus mental health supports (American College Health Association, 2022). Notably, the 2022 NCHA used the NCHA-III rather than the NCHA-II, so the 2022 results should not be compared directly with earlier releases.

Alongside the increased prevalence of excess stress, greater demand has been placed on student mental health services (King et al., 2021; Linden et al., 2022). Many post-secondary institutions offer mental health services in the form of short-term therapy, counseling, peer support, mental health education, and outreach programs (Jaworska et al., 2016; Moghimi et al., 2023). In the wake of the increased demand, on-campus and online resources have expanded, but demand continues to exceed the available resources (King et al., 2021; Linden et al., 2022). Current literature suggests that Canadian post-secondary students feel that mental health promotion and outreach supports should be increased (Jaworska et al., 2016; Moghimi et al., 2023). Further, students face significant barriers to accessing downstream mental health services, including long wait times, financial factors, stigma, past negative experiences, limited knowledge of available supports, and cultural barriers (Jaworska et al., 2016; Moghimi et al., 2023; Peterson et al., 2024). While there is evidence that low stress levels (eustress) can positively impact performance, there is a threshold beyond which stress becomes negative (distress) (Frazier et al., 2019). Ongoing high levels of stress without adequate treatment increase the risk of psychological and emotional distress (Mofatteh, 2021). In turn, distress may lead to poor academic performance, substance abuse, and suicidality, and may strain relationships (Mofatteh, 2021).

Existing mental health supports can be improved through more precise targeting of stressors, which requires a more holistic understanding of the stressors students experience (Linden & Stuart, 2019). Furthermore, the development and testing of new interventions hinge on the use of accurate and comprehensive instruments designed to evaluate stress within the student context (Kokka et al., 2023; Linden & Stuart, 2019). Evidence-based interventions and supports that teach stress management skills and support mental wellbeing can significantly improve students' mental health, resiliency, and academic performance (Alborzkouh et al., 2015). Researchers must therefore have knowledge of and access to tools that provide a valid measurement of student stress. However, the current published literature lacks an overview of available instruments designed to evaluate stress and their respective suitability for use among the post-secondary population. Three relevant reviews were identified, though the tools assessed in two are not specific to the post-secondary student population and do not evaluate general daily stress (Alanazi et al., 2023; Kokka et al., 2023), while the third is outdated (published more than 30 years ago; Downs et al., 1990). The goal of the present study was to address this gap by conducting a thorough scoping review of peer-reviewed articles on existing instruments used to evaluate stress among post-secondary students, and by determining their suitability for use and the strength of their psychometric properties.

Methods

We conducted a two-phase study to evaluate existing instruments used to assess stress among post-secondary student populations. First, we conducted a scoping review of published literature in which studies detailed primary data collection including a measurement of post-secondary student stress. We used Arksey and O’Malley’s (2005) five-step methodological framework for scoping reviews: (1) identifying the research question, (2) identifying relevant studies, (3) selecting studies, (4) charting the data, and (5) collating, summarizing, and reporting the results. This framework was applied in conjunction with the Preferred Reporting Items for Systematic reviews and Meta-Analyses extension for Scoping Reviews (PRISMA-ScR) reporting guideline (Tricco et al., 2018). Next, we extracted the instruments identified in the articles included in the scoping review and conducted a quality assessment by evaluating validity and reliability evidence for each tool, as well as the tool’s suitability for use among post-secondary students.

Scoping Review of Literature Evaluating Student Stress

Identification of the Research Question

A primary research question was developed outlining the target population, research scope, and overall objective: “What instruments have been used in published studies to measure stress and sources of stress among post-secondary student samples?” The secondary research question was “What is the quality of these instruments, as determined by analyzing their evidence for validity and reliability, as well as their suitability for the target population?”

Identification of Relevant Studies

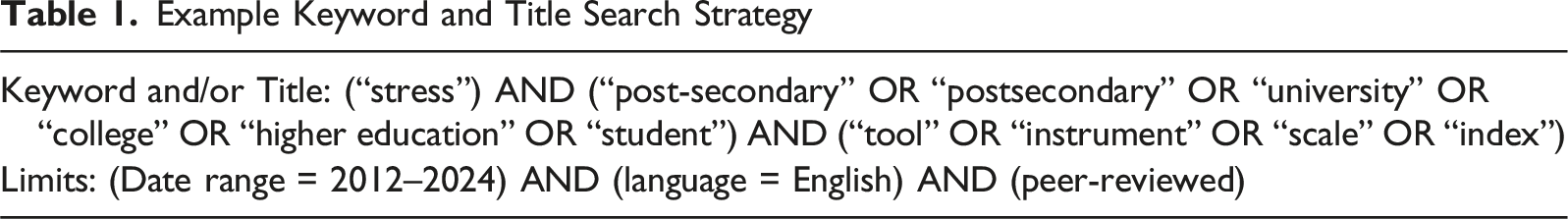

Example Keyword and Title Search Strategy

Study Selection

Records were screened into the review if the study reported the use of an instrument to collect primary data measuring stress, the sample consisted of post-secondary students, the study was within the specified publication range (2012–2024), was available in English, and was peer-reviewed. Records were excluded if full-text articles were not available, if the sample studied was not the target population (i.e., post-secondary students), if primary data were not collected, if no instrument was used to collect data specifically on student stress, or if the study and/or instrument used focused on a traumatic or critical life event (e.g., post-traumatic stress disorder).

Data Extraction and Content Analysis

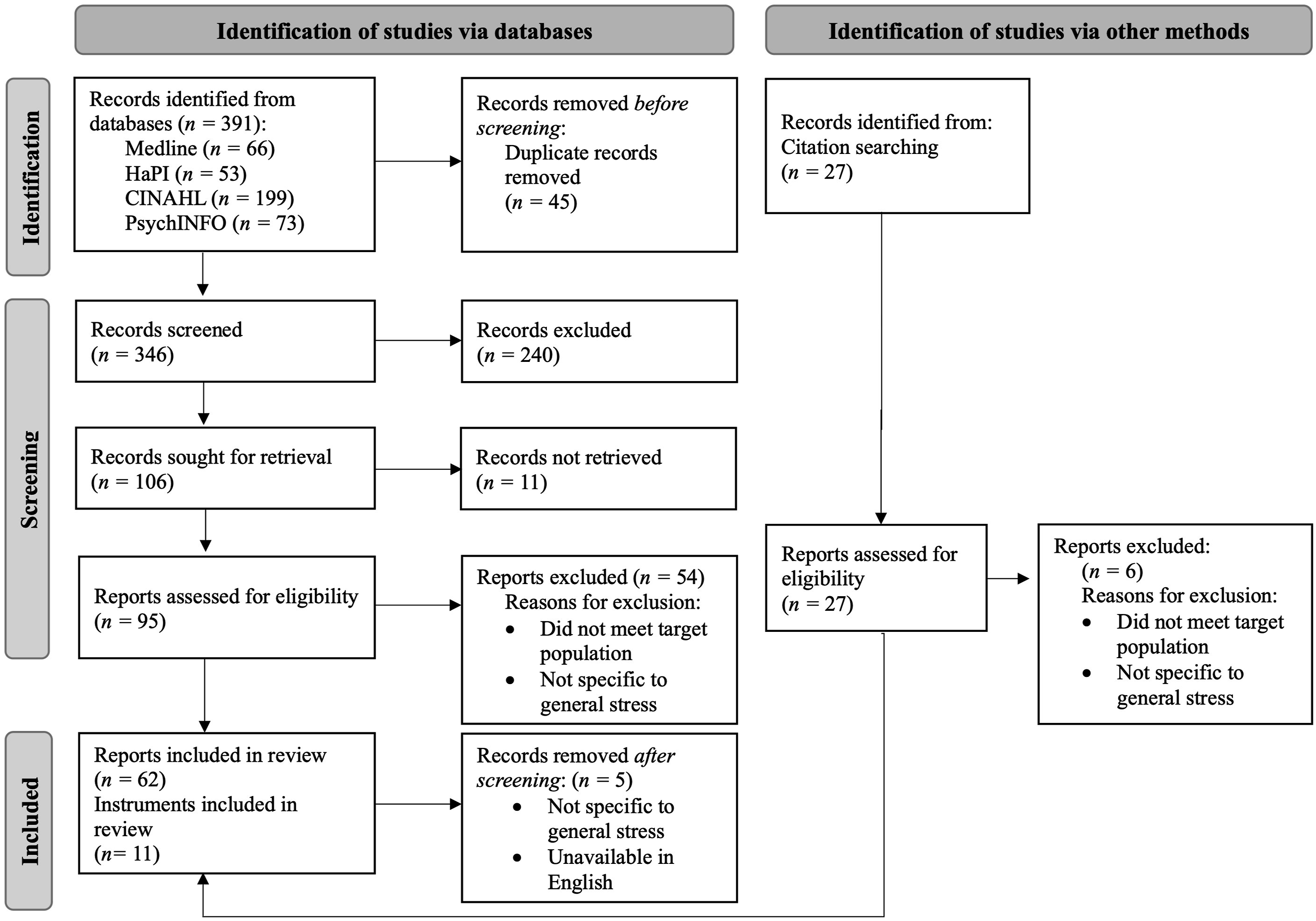

All identified records were imported into Zotero citation manager and duplicates were removed. Records were then imported into an excel tracking document. Two reviewers independently completed screening by title and abstract, followed by full-text review to remove articles that did not meet the inclusion criteria. After title/abstract screening and full-text screening, respectively, selected studies were compared and inconsistencies were resolved, with a third reviewer available to break ties. The following information was extracted from selected studies: (1) citation information [including author(s), title of article, journal, year of publication], (2) study population, (3) location of the research study, (4) name of the instrument used to assess stress. This systematic process is in line with the recommended team approach for study selection outlined by Levac and colleagues (Levac et al., 2010). Figure 1 displays a PRISMA flow diagram detailing this process. In total, 62 records and 11 instruments were included in the review. PRISMA Flow Diagram for Record Screening and Selection

Quality Assessment of Stress Instruments

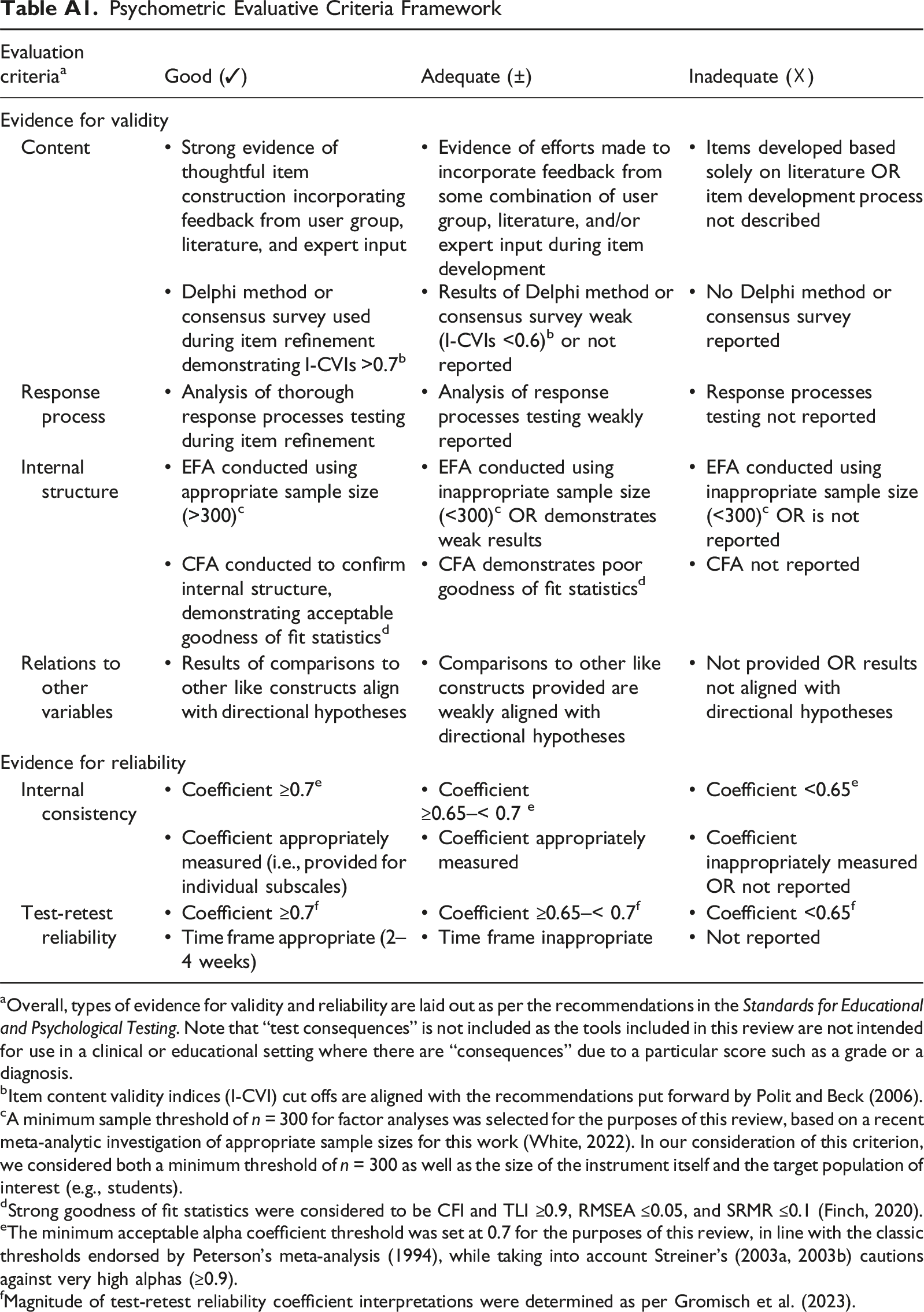

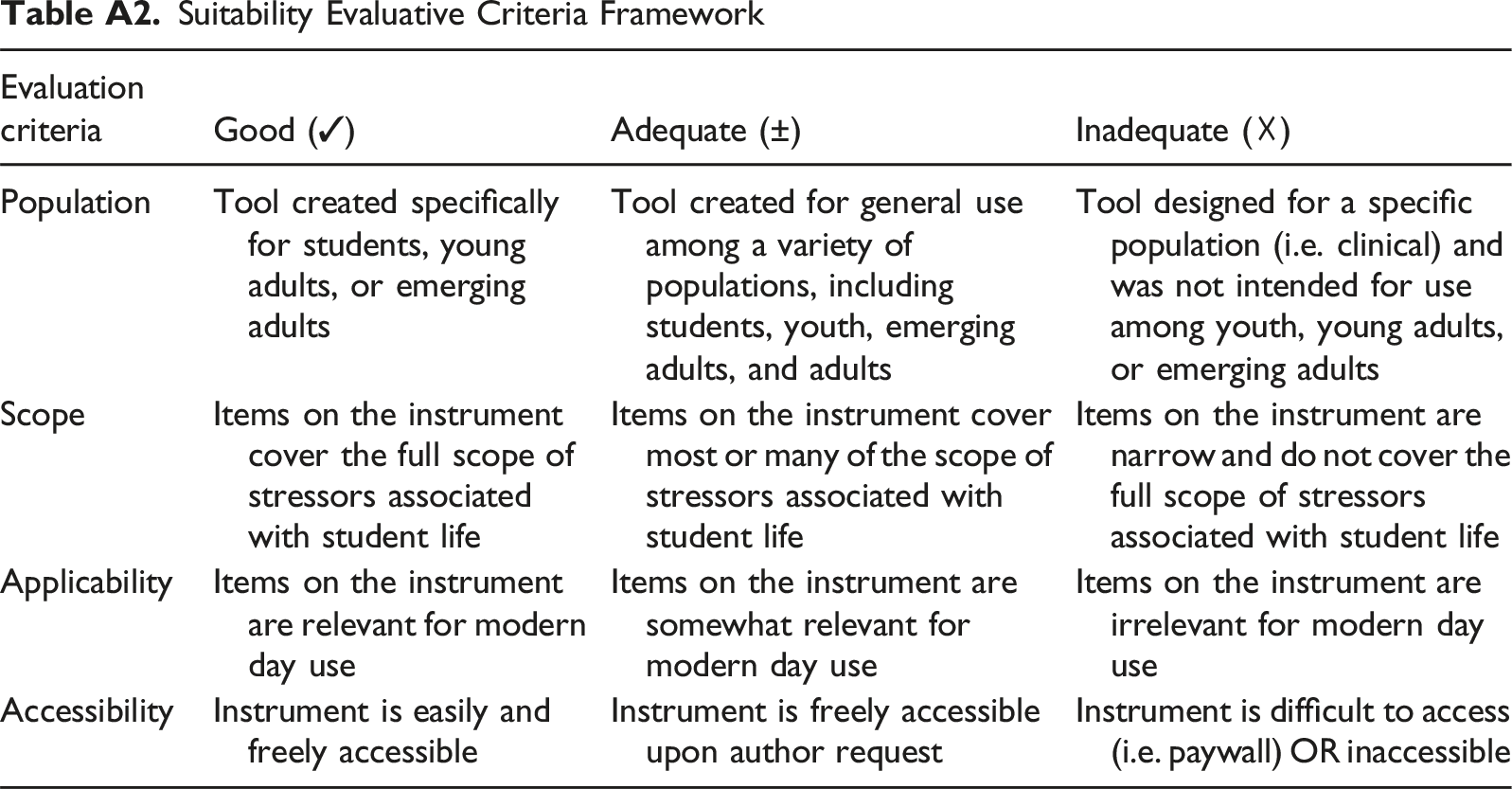

All instruments used in the articles screened into the scoping review were extracted into a separate tracking document. A subsequent search for published information on the psychometric properties of these instruments was conducted. We used an adapted version of Miles and colleagues’ (2018) psychometric evaluative criteria framework to systematically assess evidence for validity and reliability, rating each as good, adequate, or inadequate based on the criteria laid out in Appendix A, Table A1. In line with the Standards for Educational and Psychological Testing (“the Standards”) (American Educational Research Association, 2014), we assessed several types of validity evidence: content, response processes, internal structure, and relations to other variables. We also assessed reliability by searching for evidence of internal consistency (the degree to which items measure a common, underlying latent construct of interest) and test-retest reliability (the stability of scores over time). A second evaluative framework was created to assess the suitability of instruments for use in the post-secondary population, encompassing (1) population, (2) scope, (3) applicability, and (4) accessibility, as illustrated in Appendix A, Table A2.
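
Internal consistency is usually summarized with Cronbach's alpha, the α values reported for several instruments in this review. The sketch below shows how alpha is computed from a respondents-by-items score matrix. It is a minimal illustration with hypothetical toy data, not the procedure used in any particular study reviewed here, and it does not encode the rating thresholds in Appendix A, Table A1.

```python
from statistics import pvariance


def cronbach_alpha(responses: list[list[float]]) -> float:
    """Cronbach's alpha for a respondents-by-items matrix of item scores.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total score))
    Population variances are used throughout; the ratio is unchanged with
    sample variances because both carry the same n / (n - 1) factor.
    """
    if len(responses) < 2:
        raise ValueError("need at least two respondents")
    k = len(responses[0])  # number of items
    if k < 2 or any(len(row) != k for row in responses):
        raise ValueError("need a rectangular matrix with at least two items")
    # Variance of each item across respondents
    item_vars = [pvariance([row[j] for row in responses]) for j in range(k)]
    # Variance of each respondent's summed (composite) score
    total_var = pvariance([sum(row) for row in responses])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical toy data: 5 respondents x 4 items rated 0-4
toy = [
    [3, 4, 3, 4],
    [1, 1, 2, 1],
    [2, 2, 2, 3],
    [4, 3, 4, 4],
    [0, 1, 0, 1],
]
print(round(cronbach_alpha(toy), 2))
```

Alpha rises toward 1 as items covary strongly relative to their individual variances, which is why it is read as evidence that items tap a common underlying construct.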

Results

Summary of Records Included

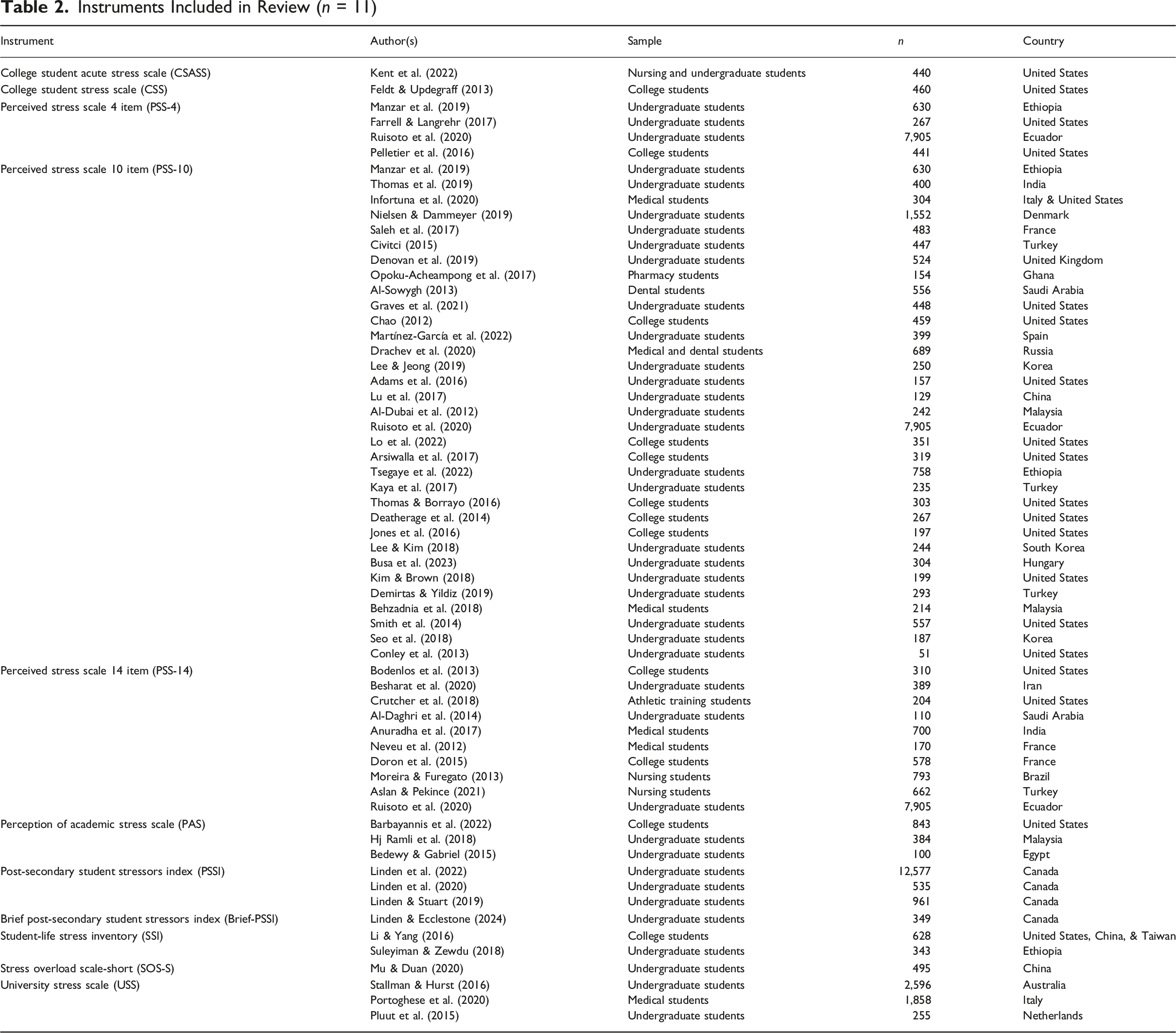

Instruments Included in Review (n = 11)

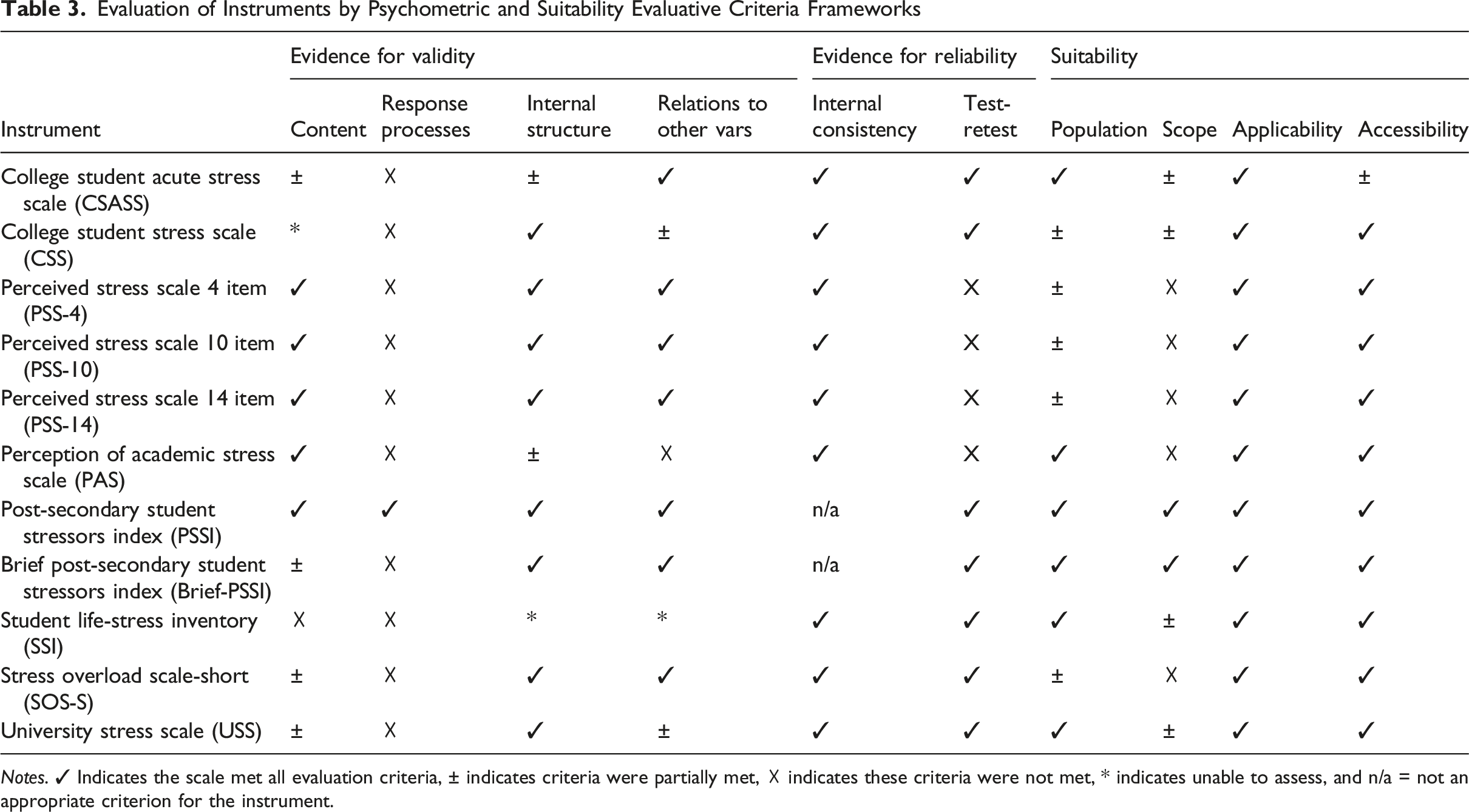

Evaluation of Instruments by Psychometric and Suitability Evaluative Criteria Frameworks

Notes. ✓ Indicates the scale met all evaluation criteria, ± indicates criteria were partially met, ☓ indicates these criteria were not met, * indicates unable to assess, and n/a = not an appropriate criterion for the instrument.

College Student Acute Stress Scale (CSASS)

The College Student Acute Stress Scale (CSASS) is a 13-item tool with responses ranging from 0 (no stress) to 4 (constant stress) (Kent et al., 2022). Items are summed for a composite score ranging from 0 to 52, where a higher score indicates higher stress levels (Kent et al., 2022).
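
The scoring rule above is a simple summed composite: 13 items rated 0 to 4 give a possible range of 13 × 0 = 0 to 13 × 4 = 52. A minimal sketch follows; the function and constant names are hypothetical, and because no missing-data rule is described here, the sketch simply rejects incomplete responses.

```python
CSASS_ITEMS = 13  # number of items described for the CSASS
MIN_RATING, MAX_RATING = 0, 4  # 0 = no stress ... 4 = constant stress


def csass_total(item_scores: list[int]) -> int:
    """Sum 13 item ratings into a composite score from 0 to 52 (higher = more stress)."""
    if len(item_scores) != CSASS_ITEMS:
        raise ValueError(f"expected {CSASS_ITEMS} ratings, got {len(item_scores)}")
    if any(not MIN_RATING <= s <= MAX_RATING for s in item_scores):
        raise ValueError("each rating must be between 0 and 4")
    return sum(item_scores)


# The possible range follows directly from the item count and rating scale
print(CSASS_ITEMS * MIN_RATING, CSASS_ITEMS * MAX_RATING)  # 0 52
print(csass_total([2] * 13))  # 26
```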

Developed in 2022, the CSASS was intended to improve understanding of short-term stressors experienced by post-secondary students (Kent et al., 2022). Initial items for the CSASS were identified through a literature search followed by discussions with experts (e.g., a nurse, neuropsychologist, and biostatistician) and two graduate students, with preliminary analyses conducted among undergraduate students (Kent et al., 2022). While engaging subject matter experts and students in developing the tool strengthens its content evidence for validity, further student engagement is warranted for an instrument intended to assess student-specific stress. Finally, although the authors indicate that they did not “formally” evaluate content validity, they also report releasing the instrument to a sample of test students who were invited to comment on the wording of items and suggest clarifications. However, neither the process nor the results of this stage are reported.

Kent and colleagues (2022) investigated the reliability of the tool and explored evidence of internal structure and relations to other variables among three small (all n ≤ 215), cross-sectional samples of undergraduate students at a single university in the USA. An exploratory factor analysis of internal structure revealed a two-factor model representing “social” and “non-social” items, with the first factor explaining the vast majority of the variance in responses. An additional model fit to the third sample of students produced a four-factor solution, though the authors reported too much cross-loading to consider this solution adequate. A forced two-factor solution in this third sample reproduced the “social” and “non-social” groupings observed previously. Both internal consistency and test-retest reliability coefficients were reported as adequate (≥ 0.7), while evidence of relations to other variables came from the relationships between scores on the CSASS, the Perceived Stress Scale, and the Inventory of College Students’ Recent Life Experiences (Kent et al., 2022).

The items in the CSASS cover a fair scope of stressors related to the student experience, including academics, finances, and interpersonal relationships, but fail to address several personal aspects such as those related to internalized pressures and self-care.

College Student Stress Scale (CSS)

Developed in 2008, the College Student Stress Scale (CSS) was originally an 11-item scale with response options ranging from 1 (never) to 5 (very often). Respondents are asked to indicate how often seven items have caused them distress or anxiety, while an additional four items ask how often they have felt able to attain goals and maintain control (Feldt, 2008). A seven-item version of the scale later became available. This version was used in the article screened into our review, as publications detailing the original fell outside our inclusion date range.

The 11-item CSS has been shown to have moderate temporal stability (test-retest correlation coefficients ranging from r = 0.62–0.86 for individual scale items) but poor construct validity (Feldt, 2008). Test-retest reliability was not assessed for the scale as a whole, although this may have been a stronger indicator of the tool’s temporal stability. Although a two-factor solution was initially recommended (Feldt & Koch, 2011), the second factor has consistently shown poor internal consistency reliability (α = 0.60) (Feldt, 2008; Feldt & Koch, 2011). Furthermore, the two-factor solution showed poor overall fit. A follow-up analysis examined the scale’s construct validity in more detail (Feldt & Koch, 2011). Results showed poor fit for a unidimensional solution and only adequate fit for a two-factor solution. Given the poor internal consistency of the second factor in the previous study, Raykov’s congeneric scale reliability analysis was used as an alternative; however, it produced nearly identical reliability values. Feldt and Koch (2011) therefore chose to exclude the second factor owing to its poor fit and low factor loadings. The resulting seven-item measure showed adequate fit, as well as a strong correlation with the Perceived Stress Scale (r = 0.79), providing some evidence of relations to other variables. Much weaker correlations were observed with scales more specific to the student experience, such as Test Anxiety (r = 0.37) and Self-efficacy for Learning (r = −0.38), suggesting that the CSS may be an appropriate (if weak) measure of general stress among students, but not of student-specific stress. Feldt and Updegraff (2013) reached the same conclusion. Notably, the authors did not disclose the item pool development process, making it difficult to evaluate the content evidence for validity.

Finally, all psychometric assessments of this scale to date have used samples of undergraduate psychology students at a single college in the USA, more than 90% of whom were Caucasian, significantly limiting the generalizability of these findings and our knowledge of how the scale may perform in more diverse student samples.

The goal of the CSS was to “create a brief index of perceived stress that emphasizes students’ subjective appraisal of stress concerning general classes of stressors and ability to maintain control” (Feldt, 2008, p. 855). Items include those related to personal relationships, family matters, financial matters, academic matters, housing matters, being away from home, and events not going as planned, demonstrating a fair scope related to the student experience.

Perceived Stress Scale (PSS)

The most frequently cited instrument in our review by a wide margin, the Perceived Stress Scale (PSS) is a measure intended to evaluate general stress across various populations. Three versions of this tool were found in our review: the 4-item (n = 4 articles), 10-item (n = 33 articles), and 14-item (n = 10 articles) versions. For all versions, response options range from 0 (never) to 4 (very often) on a five-point scale. After the positively worded items are reverse-scored, items are summed for a composite score ranging from 0 to 16, 0 to 40, or 0 to 56 for the 4-, 10-, and 14-item versions, respectively, where a higher score indicates higher stress levels. Items are intentionally general, designed to evaluate “the degree to which respondents find their lives unpredictable, uncontrollable, and overloading” and feel that these demands exceed their capacity to cope effectively (Cohen, 1986, p. 717).

Evidence for the validity and reliability of the PSS has been widely collected. Lee (2012) provides an excellent systematic review of studies that have explored the psychometric properties of the three versions of the tool. Ultimately, evidence of strong reliability (internal consistency) and validity (consistent internal structure and relations to other variables) was reported for PSS scores, though the temporal stability of the scale has not been assessed. Overall, the 10-item version demonstrated the strongest psychometric properties, while the 4-item version demonstrated the weakest (Lee, 2012). Notably, the majority of these psychometric studies were conducted using samples of young adults and/or post-secondary students. There has also been some debate in the literature regarding the internal structure of the PSS-10 in particular. While the original author of the scale has indicated that the PSS-10 should be treated as a single-factor, unidimensional scale, others have questioned this approach because many internal structure analyses have revealed two distinct factors: perceived helplessness and perceived self-efficacy (e.g., Liu et al., 2020; Taylor, 2015).

The PSS is a valuable tool: it is supported by rich evidence for validity and reliability and has been used in many studies investigating post-secondary student stress. However, it was not specifically designed to evaluate student-specific stress. As a result, the PSS is an excellent tool for evaluating general stress among post-secondary populations, but not necessarily for assessing student-specific stress.

Perception of Academic Stress Scale (PAS)

The Perception of Academic Stress Scale (PAS) is an 18-item scale with response options ranging from 1 (strongly disagree) to 5 (strongly agree). Responses are summed for a composite score ranging from 18 to 90, where a lower score indicates a more severe perception of stress (Bedewy & Gabriel, 2015).

Items for the PAS were initially developed based on the factors most frequently attributed to student stress in the existing literature. A modified Delphi survey was then conducted among 12 experts with over 15 years of experience in education and educational psychology to quantify content validity. In addition to collecting content evidence for validity, the authors distributed the instrument to 100 undergraduate and graduate educational psychology students at a single university to further assess its psychometric properties (Bedewy & Gabriel, 2015). An exploratory factor analysis revealed a four-factor solution accounting for about 40% of the variance in responses, though the sample size used is well below what is typically recommended for factor analysis. Evidence of relations to other variables was not reported. An acceptable internal consistency reliability coefficient of α = 0.7 was reported, while test-retest reliability was not evaluated.

The PAS was designed to measure academic stress specific to university students, and the majority of the items reflect this purpose (Bedewy & Gabriel, 2015). Importantly, some items are not appropriately conceptualized as sources of stress. For example, “I am confident that I will be a successful student” and “I am confident that I will be successful in my future career” reflect degrees of confidence, not severity of stress relating to these items. Similarly, “I think that my worry about examinations is weakness of character” is a personal reflection on oneself, not a stressor. In fact, the authors labeled one of the factors derived from the factor analysis as “self-perceptions” (Bedewy & Gabriel, 2015). Finally, the PAS does not address socio-cultural and environmental sources of stress (e.g., learning environment, discrimination, and campus culture).

Post-Secondary Student Stressors Index (PSSI)

The Post-Secondary Student Stressors Index (PSSI) is a 46-item index developed in 2019, designed to evaluate the severity and frequency with which students experience stressors specific to the post-secondary context. While most instruments included in this review focus only on the frequency or presence of stress, the PSSI asks respondents to rate both the severity and the frequency of stress experienced for each stressor on a four-point scale ranging from 1 (not stressful/rarely) to 4 (extremely stressful/almost always) (Linden & Stuart, 2019). An additional response option of 0 is available if a stressor is not applicable to the respondent or did not occur. Each stressor is treated as an individual variable (i.e., ratings are not summed into an “overall” score).
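
Because each PSSI stressor is analyzed as its own variable and 0 marks a stressor that does not apply, one reasonable way to summarize responses is per stressor, keeping the 0 responses out of the average. The sketch below assumes exactly that treatment of 0; this is an illustrative assumption, not a documented PSSI scoring rule, and the stressor names and ratings are hypothetical.

```python
from statistics import mean


def summarize_stressor(ratings: list[int]) -> dict:
    """Summarize one stressor's ratings across respondents.

    Ratings 1-4 are valid severity (or frequency) ratings. A rating of 0
    ("not applicable / did not occur") is excluded from the mean rather than
    counted as low stress; this is an illustrative assumption.
    """
    if any(r not in (0, 1, 2, 3, 4) for r in ratings):
        raise ValueError("ratings must be integers from 0 to 4")
    applicable = [r for r in ratings if r != 0]
    return {
        "n_total": len(ratings),
        "n_applicable": len(applicable),
        "mean_rating": mean(applicable) if applicable else None,
    }


# Hypothetical severity ratings for two stressors; each stays a separate
# variable, so there is deliberately no sum across stressors.
severity = {
    "exams": [4, 3, 0, 4, 2],
    "housing": [0, 0, 2, 1, 0],
}
for stressor, ratings in severity.items():
    print(stressor, summarize_stressor(ratings))
```

Counting 0 as "no stress" instead would pull the mean down for stressors that apply to only a few students, blurring how severe they are for the students who do experience them.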

Initial items for the PSSI were developed through an extensive, iterative process combining students’ responses to open-ended online survey questions and focus group interview prompts (Linden & Stuart, 2019). Following this, individual cognitive interviews were conducted in which students explained their interpretation of the items and the reasoning behind their responses (i.e., response processes evidence). A modified Delphi survey was then conducted among a new sample of students to quantify content validity. All items with acceptable item-level content validity indices were retained, resulting in the 46-item tool (Linden & Stuart, 2019). A pilot test among >500 students was conducted in 2019, providing strong internal structure evidence for validity through exploratory factor analysis, as well as evidence of relations to other variables through correlations between the PSSI and measures of similar constructs, such as stress and psychological distress (Linden & Stuart, 2019). Additionally, test-retest reliability coefficients supported strong temporal stability of the instrument (rₛ = 0.78), both in the overall sample and in sensitivity analyses of subsamples (Linden & Stuart, 2019). In 2021, the tool was launched at 15 post-secondary institutions across Canada, with a confirmatory factor analysis providing further validation evidence (Linden et al., 2022).
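
Delphi-style content validation is commonly quantified with an item-level content validity index (I-CVI): the proportion of panelists who rate an item as relevant. The sketch below assumes a 4-point relevance scale on which ratings of 3 or 4 count as relevant, and uses 0.78, a commonly cited cut-off, as an illustrative retention threshold. Neither the scale nor the threshold is taken from the PSSI or PAS studies, and the panel data are hypothetical.

```python
def item_cvi(expert_ratings: list[int]) -> float:
    """Item-level content validity index (I-CVI).

    Assumes a 4-point relevance scale (1 = not relevant ... 4 = highly
    relevant); the I-CVI is the share of panelists rating the item 3 or 4.
    """
    if not expert_ratings:
        raise ValueError("need at least one rating")
    relevant = sum(1 for r in expert_ratings if r >= 3)
    return relevant / len(expert_ratings)


def retained_items(panel: dict[str, list[int]], threshold: float = 0.78) -> list[str]:
    """Keep items whose I-CVI meets the threshold.

    0.78 is a commonly cited cut-off, used here only as an illustrative default.
    """
    return [item for item, ratings in panel.items() if item_cvi(ratings) >= threshold]


# Hypothetical panel of five raters scoring three candidate items
panel = {
    "item_a": [4, 4, 3, 4, 3],  # I-CVI = 5/5 = 1.00
    "item_b": [4, 3, 2, 4, 3],  # I-CVI = 4/5 = 0.80
    "item_c": [2, 3, 1, 2, 4],  # I-CVI = 2/5 = 0.40
}
print(retained_items(panel))  # ['item_a', 'item_b']
```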

The PSSI is available in both English and French, as the 46-item version or as the Brief-PSSI, a 14-item version. The Brief-PSSI was created in response to requests for an abbreviated version of the original PSSI. Evidence of internal structure and relations to other variables was assessed by specifying multiple-indicator, multiple-cause models, an extension of confirmatory factor analysis (Linden & Ecclestone, 2024). Test-retest reliability analyses revealed moderate to strong temporal stability across items (rₛ = 0.50–0.73) (Linden & Ecclestone, 2024). Overall, scores on the Brief-PSSI were shown to be both valid and reliable, though the original PSSI has stronger psychometric properties and provides a more in-depth analysis of the sources of student stress.
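
The test-retest coefficients reported for the PSSI and Brief-PSSI appear to be Spearman rank correlations, which suit ordinal item ratings: each student's rating of an item at the first administration is paired with the same student's rating at the second. The sketch below computes Spearman's rho as Pearson's r on average ranks, giving tied ratings the mean of their positions. The ratings are hypothetical, and how the original studies handled "not applicable" responses is not described here.

```python
import math


def average_ranks(values: list[float]) -> list[float]:
    """Rank values 1..n, giving tied values the mean of the positions they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to the end of the run of values tied with values[order[i]]
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        tied_rank = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = tied_rank
        i = j + 1
    return ranks


def spearman_rho(time1: list[float], time2: list[float]) -> float:
    """Spearman's rank correlation: Pearson's r computed on average ranks."""
    if len(time1) != len(time2) or len(time1) < 2:
        raise ValueError("need two equal-length lists with at least two pairs")
    r1, r2 = average_ranks(time1), average_ranks(time2)
    m1, m2 = sum(r1) / len(r1), sum(r2) / len(r2)
    cov = sum((a - m1) * (b - m2) for a, b in zip(r1, r2))
    s1 = math.sqrt(sum((a - m1) ** 2 for a in r1))
    s2 = math.sqrt(sum((b - m2) ** 2 for b in r2))
    if s1 == 0 or s2 == 0:
        raise ValueError("ratings are constant at one time point")
    return cov / (s1 * s2)


# Hypothetical ratings of one item by the same eight students at two time points
t1 = [1, 2, 2, 3, 4, 1, 3, 4]
t2 = [1, 2, 3, 3, 4, 2, 2, 4]
print(round(spearman_rho(t1, t2), 2))
```

With only four response options, ties are unavoidable, which is why the average-rank step matters here.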

Both versions of the PSSI cover the full scope of student stressors (e.g., academics, learning environment, campus culture, interpersonal, and personal stressors), though the longer version of the tool allows for a more in-depth, granular look at the sources of student stress. While French versions of both tools have been developed, associated validation evidence has not been collected to date.

Student-Life Stress Inventory (SSI)

The Student-Life Stress Inventory (SSI) was used in two studies screened into our review (Li & Yang, 2016; Suleyiman & Zewdu, 2018). Created in 1990, the SSI is a 51-item tool comprising two sections with sub-categories. The first section includes five categories of stressors (frustrations, changes, pressures, conflicts, and self-imposed), while the second includes four categories of reactions to stressors (physiological, emotional, behavioral, and cognitive) (Gadzella, 1994). Respondents first rate their overall stress as mild, moderate, or severe, and then rate each individual item on a frequency scale ranging from 1 (never) to 5 (most of the time). A composite score for the complete inventory is determined by summing all responses, though it is unclear what a higher score would represent given that the instrument has two distinct sections (Gadzella, 1994).

The authors of the instrument suggest that the reliability and validity of scores have been assessed (e.g., citing Gadzella et al., 1991; Gadzella & Guthrie, 1993), though the referenced works are almost exclusively papers presented at academic conferences that are neither peer-reviewed nor available to access. We located two published articles that addressed the psychometrics of the scale. The first is a brief, two-page report that largely consists of a single table comparing the means and standard deviations of SSI responses from three samples of students across five studies (Gadzella, 2004). The author notes that these consistent findings provide validity and reliability evidence in support of the tool. In reality, comparable descriptive statistics across samples are not indicative of psychometric reliability. In the second published article, the author again describes the tool as both valid and reliable but reports only on reliability (Gadzella, 1994). In this paper, both internal consistency and test-retest reliability are assessed, with coefficients of 0.76 and 0.73, respectively. Overall, we were unable to find any published evidence to support the validity of the SSI.

The SSI includes many stressors specific to post-secondary student experiences, including academics, finances, interpersonal factors, and some personal factors. It does not, however, assess environmental sources of stress (e.g., learning environment and campus culture), or those associated with self-care (e.g., cooking). Given the age of the tool, it is likely that the relevance of items designed to assess these factors would have been limited in the context of the current post-secondary setting. Notably, the second half of the tool (stress reactions) does not assess stressors, but rather an individual’s mental health outcomes resulting from exposure to stress.

Stress Overload Scale-Short (SOS-S)

Developed in 2012, the Stress Overload Scale (SOS) is a 30-item scale in which respondents rate the frequency with which they have experienced stressors over the previous week on a five-point scale ranging from 1 (not at all) to 5 (a lot) (Amirkhan, 2012; Amirkhan et al., 2015). A 10-item version, the Stress Overload Scale-Short (SOS-S), was developed in 2018. Only the 10-item version was included in the current review, as the publications identified for the original version involved only non-student samples. A composite score is derived by summing the responses to all 10 items, with scores ranging from 10 to 50, where higher scores indicate more frequent stress (Amirkhan, 2018).

Items for the original SOS were developed through an extensive process of consultation with subject matter experts and students and reference to the extant literature, with the item pool reduced through a two-stage consensus survey and exploratory factor analysis. The proposed two-factor internal structure was then validated through confirmatory factor analysis (Amirkhan, 2018). Although content evidence was not explicitly quantified for the SOS-S, it rests on that of the long-form instrument, which demonstrated strong psychometric properties. Items for the SOS-S were selected from the original instrument based on their factor loadings, reliability, and validity across eight previous psychometric studies (Amirkhan, 2018). Subsequent psychometric analyses provided strong evidence of internal structure and of relations to other variables, including the original SOS and the popular 10-item Perceived Stress Scale. Evidence of internal consistency (strong) and test-retest reliability (moderate) was also reported for both the subscales and the SOS-S in its entirety, with coefficients ranging from α = 0.87–0.91 and r = 0.71–0.75, respectively.

Overall, the SOS-S is a thoughtfully developed and well-validated tool for evaluating day-to-day stress. However, as the tool was developed to evaluate stress across a broad demographic spectrum, it does not evaluate student-specific stressors (Amirkhan, 2012).

University Stress Scale (USS)

Developed in 2008, the University Stress Scale (USS) is a 21-item tool where respondents are asked to indicate the degree to which each item had caused them stress in the previous month on a scale ranging from 1 (not at all) to 4 (constantly) (Stallman & Hurst, 2016). A composite score is derived from summing item ratings, with a higher score indicating a greater degree of stress (Stallman & Hurst, 2016). Items were developed based on topics identified in student focus groups, a review of self-help topics provided on Australian counseling service websites, and the authors’ clinical experience (Ryan et al., 2010; Stallman & Hurst, 2016).

Certain aspects of the authors’ validity assessment were problematic. Exploratory factor analysis yielded a six-factor solution, but low factor loadings led to the exclusion of four items from the confirmatory factor analysis, including “university environment” and “work,” both of which the literature identifies as important sources of stress for students. Some evidence of relations to other variables was provided: the USS correlated moderately with the Perceived Stress Scale (r = 0.47). However, it correlated to a similar degree with scales assessing physical illness, general wellbeing, and daily hassles and life events (r = 0.44–0.46), which suggests the USS may be a better measure of general stress than of stress specific to students. Further supporting this, the USS correlated only weakly with the University Connectedness Scale (r = 0.11). Internal consistency (α = 0.83) and test-retest reliability (ICC = 0.82, 95% CI 0.79–0.85) were also reported for the composite scale.
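An intraclass correlation differs from a Pearson r in that it can penalize systematic shifts between administrations. The sketch below computes one common form, ICC(2,1) (two-way random effects, absolute agreement, single measurement), from the usual two-way ANOVA mean squares. Which ICC form the USS authors used is not stated here, so this choice is an assumption, and the confidence interval is not computed.

```python
import numpy as np


def icc_2_1(scores: np.ndarray) -> float:
    """ICC(2,1): two-way random effects, absolute agreement, single measurement.

    scores has shape (n_subjects, k_occasions), e.g. test and retest totals.
    """
    x = np.asarray(scores, dtype=float)
    n, k = x.shape
    grand = x.mean()
    ss_rows = k * ((x.mean(axis=1) - grand) ** 2).sum()  # between subjects
    ss_cols = n * ((x.mean(axis=0) - grand) ** 2).sum()  # between occasions
    ss_error = ((x - grand) ** 2).sum() - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return float(
        (ms_rows - ms_error)
        / (ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n)
    )


# Every (hypothetical) respondent scores exactly 1 point higher at retest.
x1 = np.arange(1.0, 6.0)
shifted = np.column_stack([x1, x1 + 1.0])
pearson = float(np.corrcoef(shifted, rowvar=False)[0, 1])
print(round(pearson, 3), round(icc_2_1(shifted), 3))  # prints: 1.0 0.833
```

The rank ordering is perfectly preserved, so r = 1, but the uniform shift lowers absolute agreement to 5/6.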

The authors argue that creating a measure that assesses broad categories “enables the measure to capture anything within a specific category that a student might have perceived as stressful, rather than being limited to specific events or problems identified by the authors” (Stallman & Hurst, 2016, p. 129). While the USS may serve as an excellent general assessment of stress, it does not meet its intended purpose of assessing stress specific to students: of its 21 stressors, only eight reflect concerns related to student life (e.g., academic/coursework demands, university environment, and study/life balance). Finally, although the authors stated that they intended to measure the severity of student stress, the scale, anchored from “not at all” to “constantly,” actually assesses frequency.

The USS was developed specifically to evaluate stress experienced by university students (Stallman & Hurst, 2016). The scale covers many stressors experienced by post-secondary students, including interpersonal relationships, finances, academics, university environment, and some personal factors (e.g., study/life balance). However, the scale does not address stressors associated with faculty (e.g., interacting with the professor), or stressors associated with navigating self-care and personal expectations (e.g., cooking for oneself and internalized pressures).

Discussion

The purpose of this study was to provide a comprehensive overview of instruments used to assess stress among post-secondary populations, addressing a gap in the current literature. We aimed to identify instruments reported in the published literature to date and to evaluate their quality based on both their psychometric properties and their suitability for use among student populations. In total, our review captured 62 published records and 11 instruments. The majority of these instruments (n = 7) were developed specifically to measure stress among students, while the others were designed to evaluate stress more generally. While all instruments met the criteria for applicability and accessibility, most did not fully meet the criteria for suitability, largely owing to the scope of the items included. Importantly, students experience stress across multiple domains, including those more specific to the post-secondary environment (e.g., academic concerns, stressors related to the learning environment, and stress around tuition fees and student loans) as well as those relevant to individuals at any stage of life (e.g., concern for the future, self-care, and relationship management) (Linden & Stuart, 2020). Many instruments intended for use among students focus on the academic realm, and few incorporate stressors across these additional domains, resulting in a scope that is too narrow. Conversely, “general” or “global” stress differs conceptually from stress that is specific to students. While the two overlap to some degree, general stress tools cover a scope that is too broad to be suitable for measuring student stress.

Many of the instruments included in this review either lacked strong psychometric evidence or had limited published information on their psychometric properties. Content evidence was presented to some degree for nearly all instruments, whereas response processes evidence was provided for only one. Although nearly all authors described their instruments as content valid, items were most often developed by modifying items from pre-existing tools, drawing on the existing literature, or consulting experts in relevant fields (e.g., psychologists and clinicians); students themselves were rarely consulted during development. Formal quantification of content evidence, through methods such as Delphi surveys or similar consensus questionnaires, was rare.

Evidence for validity based on internal structure and relations to other variables, along with measures of reliability, was much more commonly reported. However, in many cases these types of evidence were assessed inappropriately. This largely stemmed from conceptualizing the majority of the instruments in our review as “scales,” when it would be more accurate to refer to a measure of stress as an “index.” A “scale” evaluates a single underlying latent construct that causes the items to emerge or behave in a particular way. For example, the Generalized Anxiety Disorder scale (GAD-7) includes seven items reflecting common symptoms of anxiety (e.g., excessive worry). These items are symptoms of the underlying construct; in other words, they are “effect indicators” of anxiety (Streiner, 2003a). With an index, the influence runs in the opposite direction: in an instrument designed to assess stress, the individual items influence the latent construct of interest, rather than the other way around. Stressors are not effects of experiencing stress; rather, they are “causal indicators” of the underlying construct (Streiner, 2003a). This conceptual distinction has important implications for psychometric analyses. In particular, the expectations for internal structure and reliability differ markedly for an index compared with a scale.
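A small simulation can make the scale/index distinction concrete. It is a sketch under stated assumptions, not an analysis from this review: the seven-item “scale” is generated from one latent trait (loosely analogous to the GAD-7 example), while the seven-item “index” is built from independent, hypothetical binary stressors that jointly determine a latent stress level. All parameter values are invented.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 5000


def mean_offdiag_corr(items: np.ndarray) -> float:
    """Average correlation between distinct items (columns)."""
    corr = np.corrcoef(items, rowvar=False)
    k = corr.shape[0]
    return float((corr.sum() - k) / (k * (k - 1)))


# Scale (effect indicators): one latent trait drives all seven items.
trait = rng.normal(size=n)
scale_items = np.column_stack(
    [0.8 * trait + rng.normal(scale=0.6, size=n) for _ in range(7)]
)

# Index (causal indicators): seven independent, hypothetical stressor exposures
# (each present for about 30% of respondents) jointly produce latent stress.
stressors = rng.binomial(1, 0.3, size=(n, 7)).astype(float)
stress = stressors.sum(axis=1) + rng.normal(scale=0.5, size=n)

print(f"scale: mean inter-item r = {mean_offdiag_corr(scale_items):.2f}")  # about 0.64
print(f"index: mean inter-item r = {mean_offdiag_corr(stressors):.2f}")    # about 0.00
r_total = np.corrcoef(stressors.sum(axis=1), stress)[0, 1]
print(f"index total vs latent stress: r = {r_total:.2f}")                 # about 0.92
```

Effect indicators end up strongly intercorrelated, whereas causal indicators need not correlate at all even though their total tracks the construct closely; low inter-item correlations alone are therefore not evidence against an index.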

For example, items in an index are not expected to correlate with one another, and unidimensionality is not the goal. One reason is that each respondent may experience a different combination of stressors and assign a different level of stress to each. This variability is likely to produce unstable results in common exploratory factor analyses, which, like measures that rely on tau equivalence, assume that items share a common underlying cause. While EFA can be useful for an initial assessment of an instrument’s structure and of the behavior of individual items in a particular sample, in scale development its goal is typically to determine which items can be dropped so that the latent construct is measured with as few items as possible. In contrast, the goal of index development is to cover the entire scope of a latent variable, which usually results in a larger number of items spanning a broad spectrum. Removing an index indicator may therefore omit part of the latent construct itself. As such, internal structure evidence for indices is more appropriately assessed through structural equation modeling methods such as multiple indicators, multiple causes (MIMIC) models, which estimate relationships between latent constructs and other variables and thereby yield evidence for both internal structure and relations to other variables.
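One way to see what a MIMIC model assumes is to simulate its data-generating process. In the hypothetical sketch below, four observed causes (think stressor exposures) determine a latent variable, which in turn drives three effect indicators; all path values are invented. Regressing each indicator on the causes gives a reduced-form coefficient matrix that the MIMIC structure constrains to be rank one (Π = γλᵀ). The sketch only checks that constraint with ordinary least squares and a singular value decomposition; it is not the maximum-likelihood MIMIC estimation that structural equation modeling software performs.

```python
import numpy as np

rng = np.random.default_rng(7)
n = 10_000

# Hypothetical MIMIC data-generating process (all values assumed):
#   causes x -> latent eta -> effect indicators y
gamma = np.array([0.5, 0.3, 0.8, 0.2])  # x -> eta paths
lam = np.array([1.0, 0.7, 0.9])         # eta -> y loadings; first fixed at 1 to set the scale
x = rng.normal(size=(n, gamma.size))
eta = x @ gamma + rng.normal(scale=0.5, size=n)                     # disturbance term
y = np.outer(eta, lam) + rng.normal(scale=0.5, size=(n, lam.size))  # measurement error

# Reduced form: regress every indicator on all causes (with an intercept).
design = np.column_stack([np.ones(n), x])
coef, *_ = np.linalg.lstsq(design, y, rcond=None)
pi_hat = coef[1:]  # (4 causes x 3 indicators), intercepts dropped

# MIMIC implies pi = outer(gamma, lam): one dominant singular value.
s = np.linalg.svd(pi_hat, compute_uv=False)
print("singular values:", np.round(s, 3))
# Because lam[0] = 1, the first column estimates gamma directly.
print("gamma estimate:", np.round(pi_hat[:, 0], 2))
```

A second singular value close to zero is consistent with a single latent variable mediating all cause-to-indicator paths.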

Relatedly, without an assumption of tau equivalence, evaluating an index’s reliability through internal consistency (e.g., Cronbach’s alpha coefficient) is not appropriate (Streiner, 2003a). Instead, the focus should be on the temporal stability of the instrument, assessed through test-retest reliability (Streiner, 2003a). Despite this, the alpha coefficient remains the most consistently reported measure in psychometric papers, regardless of whether its use is appropriate. While nearly all instruments in our review reported an alpha coefficient, fewer than half assessed temporal stability. Another issue we observed was the reporting of a single alpha coefficient for an entire instrument that had been conceptualized as a multidimensional scale. For “true” multidimensional scales, it is more appropriate to report a coefficient for each dimension or grouping of indicators (Streiner, 2003a, 2003b). Notably, coefficient alpha naturally increases with the number of items in an instrument, even when the average inter-item correlation stays the same. Indeed, we observed this phenomenon in the high coefficients (≥0.9) reported for the larger instruments in our review.
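Both points about alpha can be checked with the standardized form of the coefficient, α = k·r̄ / (1 + (k − 1)·r̄), where k is the number of items and r̄ the mean inter-item correlation. The sketch below is illustrative and uses invented correlation values rather than data from any reviewed instrument: it first holds r̄ at a modest 0.2 while lengthening the instrument, then builds a hypothetical 10-item instrument made of two unrelated 5-item subscales.

```python
import numpy as np


def standardized_alpha(k: int, mean_r: float) -> float:
    """Standardized alpha for k items with average inter-item correlation mean_r."""
    return k * mean_r / (1 + (k - 1) * mean_r)


def alpha_from_corr(corr: np.ndarray) -> float:
    """Standardized alpha computed from an item correlation matrix."""
    k = corr.shape[0]
    mean_r = (corr.sum() - k) / (k * (k - 1))  # mean of off-diagonal entries
    return float(standardized_alpha(k, mean_r))


# 1) Same modest average inter-item correlation (0.2), increasing length.
for k in (5, 10, 21, 46):
    print(k, round(standardized_alpha(k, 0.2), 2))  # 0.56, 0.71, 0.84, 0.92

# 2) Two unrelated 5-item subscales (r = 0.5 within, 0 between).
corr = np.zeros((10, 10))
corr[:5, :5] = 0.5
corr[5:, 5:] = 0.5
np.fill_diagonal(corr, 1.0)
print(round(alpha_from_corr(corr), 2))          # whole instrument: 0.74
print(round(alpha_from_corr(corr[:5, :5]), 2))  # each subscale: 0.83
```

The whole-instrument value of 0.74 would clear a 0.7 cut-off even though the two subscales share nothing, which is why a single alpha can mask multidimensionality.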

Overall, we observed a lack of thorough investigation into the psychometric properties of the instruments in our review. With one exception, no instrument had an associated comprehensive, published psychometric assessment that included all types of evidence for validity per the Standards (e.g., content, response processes, internal structure, and relations to other variables) (American Educational Research Association, 2014). Notably, many developers relied on the older conceptualization of “types” of validity (e.g., face validity, criterion validity, and concurrent/divergent validity), in contrast to the more modern Standards, which align with the sentiment that “all validity is validity” (Streiner et al., 2014). While most authors reported conducting preliminary psychometric analyses, often including EFA and correlational analyses, other types of evidence (e.g., content and response processes evidence for validity, and test-retest reliability) were rarely reported.

Based on our evaluative criteria, the PSSI emerged as the most appropriate instrument in terms of both suitability and psychometric properties. The PSSI is the most thoroughly validated instrument in our review, is available at no cost, and was co-developed with diverse samples of students. It also offers flexibility in length, as it is available as either a 46-item or a 14-item index. It is important to note that the original PSSI has stronger psychometric properties than the Brief-PSSI, and the brief version does not provide the same depth of information. The version selected should therefore align with the measurement goals: assessing student stressors more generally (Brief-PSSI) or conducting a more granular assessment of the sources and aspects of student stress (PSSI). The PSSI has been comprehensively evaluated among large samples of post-secondary students across various levels of study, fields of study, and regions in Canada, although its generalizability to student populations in other geographic locations remains untested. The PSSI has demonstrated strong evidence for validity based on content, response processes, internal structure, and relations to other variables, as well as strong test-retest reliability. It also assesses student stressors by both frequency and severity, a measurement approach unique among the instruments reviewed.

The USS is the next best option for assessing student stress. Freely accessible and designed to evaluate stress specifically among university students, the USS is relevant and applicable to student populations. Compared with the PSSI, its scope is more moderate: some aspects of the student experience are not addressed (e.g., interacting with faculty and navigating self-care), and others were omitted during analyses (balancing academics and work, and the university environment). Additionally, the USS has primarily been used among samples of university students in Australia, so its generalizability to student populations in other regions remains inconclusive. The USS has demonstrated adequate psychometric properties, though it lacks response processes evidence and formal, quantified content evidence for validity (e.g., a consensus survey).

The PSS and SOS-S also emerged as good options in terms of psychometric strength. The PSS was the most commonly used scale in our review, is freely accessible, and is available in three lengths (4-item, 10-item, and 14-item) and in multiple languages. The SOS-S is also freely accessible, and the instrument is available in both 30-item (original SOS) and 10-item (SOS-S) versions, though it was used by only one record included in our review; given that it was intended to evaluate general stress rather than stress specific to students, this is unsurprising. Because both the PSS and SOS-S address general stress, stressors unique to the student experience are not measured, limiting their suitability for use among this population. Nonetheless, if the goal is a general assessment of overall stress (rather than of student-specific stressors), both tools would be appropriate choices.

Strengths and Limitations

To our knowledge, this is the first thorough review of instruments used to measure stress specific to the post-secondary population. Beyond addressing this gap in the literature, our work is strengthened by the use of comprehensive guidelines for conducting a methodologically rigorous review and of formal evaluative criteria to assess both the psychometrics and the suitability of the instruments in question.

Despite these strengths, some limitations should be acknowledged. First, our inclusion criteria regarding publication date range and English language, together with the selection of particular databases, introduce the possibility that some articles (and thus instruments) were missed. Notably, the majority of the published studies in our review were conducted among samples of university students in North America (e.g., the United States and Canada) or other higher-income countries, which may be partially attributable to our requirement that articles be published in English. This resulted in less representation from non-Western and lower-income countries. In addition, the majority of the instruments reviewed were developed and validated among samples of university students, leaving some uncertainty as to whether the recommended instruments are equally suitable for students in other types of post-secondary settings (e.g., colleges and vocational institutes). Finally, inter-rater reliability was not established for the coding of quality indicators.

Conclusion

The majority of instruments identified in this review were designed specifically with the post-secondary population in mind. Despite this, students were infrequently involved in their development, limiting the content validity and suitability of these instruments. Where possible, the target population should be included in the development process to ensure effective and appropriate measurement. Relatedly, thorough validation is necessary to increase confidence in the quality and effectiveness of measurement approaches. This includes collecting all types of evidence for validity and reliability, many of which were missing for the instruments in our review (e.g., test-retest reliability, response processes evidence, and formal, quantified content evidence). Ideally, these psychometric properties should be peer-reviewed and published in the academic literature. In sum, the PSSI and USS emerged as the most appropriate available instruments for evaluating the sources of post-secondary student stress, while the PSS and SOS-S may be appropriate for student populations where the goal is to evaluate overall stress levels rather than student-specific stressors. In particular, using these instruments in campus student wellness contexts may support more targeted tailoring of student supports and resources and provide guidance for related needs assessments.

Footnotes

Acknowledgments

BL’s position is supported by the Bell Canada Mental Health and Anti-Stigma Research Chair.

Author Contributions

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: BL is the developer of the Post-Secondary Student Stressors Index, a non-financial conflict of interest. CDG has no conflicts of interest to disclose.

Appendix

Psychometric Evaluative Criteria Framework

ᵃ Overall, types of evidence for validity and reliability are laid out per the recommendations in the Standards for Educational and Psychological Testing. “Test consequences” is not included because the tools in this review are not intended for use in clinical or educational settings where a particular score carries “consequences,” such as a grade or a diagnosis.

ᵇ Item-level content validity index (I-CVI) cutoffs align with the recommendations put forward by Polit and Beck (2006).

ᶜ A minimum sample threshold of n = 300 for factor analyses was selected for the purposes of this review, based on a recent meta-analytic investigation of appropriate sample sizes for this work (White, 2022). In applying this criterion, we considered both the n = 300 threshold and the size of the instrument itself and the target population of interest (e.g., students).

ᵈ Strong goodness-of-fit statistics were defined as CFI and TLI ≥0.9, RMSEA ≤0.05, and SRMR ≤0.1 (Finch, 2020).

ᵉ The minimum acceptable alpha coefficient was set at 0.7 for the purposes of this review, in line with the classic thresholds endorsed by Peterson’s meta-analysis (1994), while taking into account Streiner’s (2003a, 2003b) cautions against very high alphas (≥0.9).

ᶠ Interpretations of test-retest reliability coefficient magnitudes follow Gromisch et al. (2023).

| Evaluation criteriaᵃ | Good (✓) | Adequate (±) | Inadequate (☓) |
|---|---|---|---|
| Evidence for validity | | | |
| Content | Strong evidence of thoughtful item construction incorporating feedback from user group, literature, and expert input | Evidence of efforts made to incorporate feedback from some combination of user group, literature, and/or expert input during item development | Items developed based solely on literature OR item development process not described |
| | Delphi method or consensus survey used during item refinement demonstrating I-CVIs >0.7ᵇ | Results of Delphi method or consensus survey weak (I-CVIs <0.6)ᵇ or not reported | No Delphi method or consensus survey reported |
| Response process | Analysis of thorough response processes testing during item refinement | Analysis of response processes testing weakly reported | Response processes testing not reported |
| Internal structure | EFA conducted using appropriate sample size (>300)ᶜ | EFA conducted using inappropriate sample size (<300)ᶜ OR demonstrates weak results | EFA conducted using inappropriate sample size (<300)ᶜ OR is not reported |
| | CFA conducted to confirm internal structure, demonstrating acceptable goodness of fit statisticsᵈ | CFA demonstrates poor goodness of fit statisticsᵈ | CFA not reported |
| Relations to other variables | Results of comparisons to other like constructs align with directional hypotheses | Comparisons to other like constructs provided are weakly aligned with directional hypotheses | Not provided OR results not aligned with directional hypotheses |
| Evidence for reliability | | | |
| Internal consistency | Coefficient ≥0.7ᵉ | Coefficient ≥0.65–<0.7ᵉ | Coefficient <0.65ᵉ |
| | Coefficient appropriately measured (i.e., provided for individual subscales) | Coefficient appropriately measured | Coefficient inappropriately measured OR not reported |
| Test-retest reliability | Coefficient ≥0.7ᶠ | Coefficient ≥0.65–<0.7ᶠ | Coefficient <0.65ᶠ |
| | Time frame appropriate (2–4 weeks) | Time frame inappropriate | Not reported |
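Several of the numeric cut-offs in this framework can be applied mechanically. The sketch below is a hypothetical helper set, not a tool used in this review: `i_cvi` computes the item-level content validity index as the proportion of experts rating an item 3 or 4 on a 4-point relevance scale, `rate_reliability` applies the ≥0.7 and ≥0.65 thresholds used for both alpha and test-retest coefficients, and `cfa_fit_is_strong` applies the fit thresholds in note d. Judgment-based criteria (e.g., the caution about alphas ≥0.9 and the 2–4 week retest window) are deliberately not encoded.

```python
from typing import Sequence


def i_cvi(relevance_ratings: Sequence[int]) -> float:
    """Item-level content validity index: proportion of experts rating the item
    3 or 4 on a 4-point relevance scale."""
    if not relevance_ratings:
        raise ValueError("need at least one expert rating")
    return sum(1 for r in relevance_ratings if r >= 3) / len(relevance_ratings)


def rate_reliability(coefficient: float) -> str:
    """Apply the framework's cut-offs for alpha or test-retest coefficients."""
    if coefficient >= 0.70:
        return "good (✓)"
    if coefficient >= 0.65:
        return "adequate (±)"
    return "inadequate (☓)"


def cfa_fit_is_strong(cfi: float, tli: float, rmsea: float, srmr: float) -> bool:
    """True when all four fit indices meet the thresholds in note d."""
    return cfi >= 0.90 and tli >= 0.90 and rmsea <= 0.05 and srmr <= 0.10


print(i_cvi([4, 4, 3, 2, 4]))  # 0.8 (four of five hypothetical experts rate 3 or 4)
print(rate_reliability(0.83), rate_reliability(0.67), rate_reliability(0.60))
print(cfa_fit_is_strong(cfi=0.95, tli=0.93, rmsea=0.04, srmr=0.06))  # True
```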

Suitability Evaluative Criteria Framework

| Evaluation criteria | Good (✓) | Adequate (±) | Inadequate (☓) |
|---|---|---|---|
| Population | Tool created specifically for students, young adults, or emerging adults | Tool created for general use among a variety of populations, including students, youth, emerging adults, and adults | Tool designed for a specific population (e.g., clinical) and not intended for use among youth, young adults, or emerging adults |
| Scope | Items on the instrument cover the full scope of stressors associated with student life | Items on the instrument cover most or much of the scope of stressors associated with student life | Items on the instrument are narrow and do not cover the full scope of stressors associated with student life |
| Applicability | Items on the instrument are relevant for modern day use | Items on the instrument are somewhat relevant for modern day use | Items on the instrument are irrelevant for modern day use |
| Accessibility | Instrument is easily and freely accessible | Instrument is freely accessible upon author request | Instrument is difficult to access (e.g., paywall) OR inaccessible |