Abstract

Dynamic testing, though underutilized, holds potential for assessing learning and instructional needs. However, its limited adoption is often attributed to the perceived time- and labor-intensive nature of its administration. This study explores the viability of a training-only dynamic test (ToDT) with a standardized procedure to identify instructional needs in school children. The study involved 66 fourth- and fifth-grade students (M age = 10.89 years, SD = 0.53) and analyzed the relations between ToDT scores (number and type of hints), teachers’ estimations of instructional needs, and standardized math and reading scores. Results revealed moderate to strong correlations between ToDT scores, teachers’ estimations, and standardized scores. Additionally, children with different classifications based on ToDT scores differed in their instructional needs and standardized test scores. This study underscores the potential of the ToDT for gauging instructional needs and shows that children classified differently on the basis of their ToDT scores have distinct instructional needs and academic outcomes.

Keywords

Introduction

In the case of suspected problems with learning in school, educational psychologists typically use intelligence tests to determine a child’s general cognitive level of performance. Intelligence tests use a static format, where the test is administered at a single point in time and test administration does not include any training or feedback. However, these tests have been criticized in the literature as they do not provide direct information about children’s learning potential or instructional needs, and tend to underestimate the abilities and potential of children from minority populations, children with different language backgrounds, and children with learning problems such as test anxiety or specific learning disabilities (e.g., Resing et al., 2020).

Dynamic testing has often been mentioned as an alternative, using a training procedure as an integral part of the test. The integrated training makes it more suitable to observe and measure learning in action, thus providing information about children’s learning potential and needs for instruction. However, the addition of a training procedure, often in combination with a pretest and posttest, makes administration of these tests considerably more time and labor intensive than traditional, static test administration. To make dynamic testing feasible for more children who need individualized instruction in education, creating a shorter version of dynamic tests may unlock new possibilities (e.g., Veerbeek et al., 2017). The current study investigates whether a training-only format, using a standardized training procedure, can be used to determine children’s instructional needs in school.

Dynamic Testing and Assessment

Dynamic testing and assessment refer to a diverse collection of tests, approaches, and assessment methods, all connected under the same umbrella term through the inclusion of some type of training or help within the test (Resing et al., 2020). A distinction can be made between dynamic assessment on the one hand, and dynamic testing on the other. Dynamic assessment is mostly intervention-focused and intended for use in clinical practice. In this method, it is decided on a case-by-case basis what type of feedback or mediation should be given, and how much of it. Help or mediation is offered whenever a child cannot solve an item independently. In contrast, dynamic testing represents an approach that is mostly used in research, and is structured around standardized forms of testing and providing feedback (Elliott et al., 2018; Resing et al., 2020). To measure the effects of training, three separate phases are usually used: a pretest, a training, and a posttest. No help is offered during the pretest and posttest.

The graduated prompts approach is a specific method for providing standardized help to the testee during a dynamic test, typically in a pretest-training-posttest format. Through providing help in a hierarchical, predetermined manner, the minimal amount of help can be found that children need to correctly solve the task (Campione & Brown, 1978; Resing & Elliott, 2011). A graduated prompting procedure starts if a child cannot independently provide the correct answer to the task. In that case, a general, metacognitive prompt is provided, and the child is asked to solve the task again. If, after having received general, metacognitive prompts, the child is not able to provide a correct answer, task-specific, cognitive prompts are given, and again the child is asked to provide an answer. If after these cognitive prompts the child is still unable to provide the correct answer, a scaffolding procedure is started in which the correct solving procedure is modelled to the child (Resing & Elliott, 2011). Previous research has found this method of training to lead to more improvement in task performance than regular feedback (Stevenson et al., 2013), and both number of prompts and posttest scores can be considered measures of learning potential and have been found to predict future school success (e.g., Caffrey et al., 2008). Previous research has found both the number of prompts and the posttest scores to be related to scores on standardized tests of mathematics and reading comprehension ability (Veerbeek et al., 2019), and found latent training scores predictive of math and reading achievement (Stevenson et al., 2013).

Dynamic tests often utilize inductive reasoning tasks. This type of reasoning requires the problem-solver to infer a rule or multiple rules about (the changes in) the elements of the tasks, and how these elements relate to each other (Klauer & Phye, 2008). Inductive reasoning encompasses the application of reasoning from a specific situation to a general situation (Sternberg, 1985) and is closely related to general cognitive ability and performance in school (Molnár et al., 2013), as well as to skills necessary for learning and transfer (Klauer et al., 2002). The current research uses tasks that require analogical reasoning, a subtype of inductive reasoning, which plays a central role in cognitive development (Klauer & Phye, 2008) and develops throughout childhood (Leech et al., 2008). Large individual differences are found in children’s analogical reasoning ability (Siegler & Svetina, 2002), making it suitable to distinguish children’s cognitive abilities.

Linking Dynamic Test Outcomes to Instruction in the Classroom

One of the proposed advantages of dynamic testing is the information it can offer to inform instructional interventions. In a dynamic testing context, instructional needs refer to the help or feedback children need in order to obtain knowledge, skills, and abilities necessary to solve a task. Instructional intervention, in this context, involves the targeted and adaptive support provided to learners based on real-time feedback from their performance. This can include scaffolding techniques, personalized feedback, and tailored exercises that address individual learning gaps and promote continuous improvement (Resing et al., 2020). Dynamic testing thus integrates assessment and instruction, creating a responsive educational environment that fosters development. This can be of particular use for students who have special educational needs, such as children with learning disabilities, or other children who do not appear to benefit optimally from general classroom instruction. With regard to the targets of instructional intervention, Campione (1989) described a distinction between two main areas that can cause problems in children’s learning: (1) faulty domain-specific knowledge or (2) difficulties in monitoring and regulation of learning, also known as metacognition. These are directly linked to the types of prompts that are offered in tests using the graduated prompts approach, where the first prompts focus on metacognitive components, such as regulating behaviors, and later prompts focus on task/domain-specific strategies and knowledge, to facilitate successful task solving behavior.

Some attempts have been made to directly link the outcomes of a graduated prompts training within a dynamic test of inductive reasoning to individual instruction in the classroom. Based on their research with children with mild to moderate intellectual disabilities, Bosma and Resing (2012) concluded that the graduated prompts approach made it possible to measure children’s needs for instruction, and that information about differences in the instructions that benefitted children could aid teachers’ practices and inform educational planning. Teachers reported that they considered the results from the dynamic test, especially the number and type of prompts that were given, as valuable information to inform classroom instructional intervention. Moreover, Bosma et al. (2017) used a graduated prompts procedure with multiple training protocols to identify groups of children who showed differences in their profiles of instructional needs. This made it possible to identify the points in the task solving process where children experienced difficulties and distinguish the instructional needs of individual children with severe arithmetic difficulties. They concluded that teachers could use the outcomes of the dynamic test to adapt instructions to their students’ needs but also remarked that the multiple protocols they used were quite impractical to apply and score (Bosma et al., 2017).

However, one of the factors that might hinder widespread implementation of dynamic tests that has been mentioned in research is the time and resource intensive nature of these tests (Resing et al., 2020). A previous study using a graduated prompts dynamic test of inductive reasoning found that removing the pretest from a dynamic test did not change the number of prompts children needed, the time it took children to complete the training phases or the test, nor did it influence outcomes on the posttest (Veerbeek et al., 2017). Therefore, the current research aims to go a step further, explore the possibility of using just a standardized graduated prompts training session as a test, and investigate the potential added value of such a testing procedure.

Current Study and Research Questions

The aim of the current study was to test whether a dynamic testing format consisting of only a single training session can be used to uncover information about children’s instructional needs. To this end, a single session graduated prompts training-only dynamic test of analogical reasoning was used, and the outcomes were compared to teachers’ estimations of students’ instructional needs, as well as students’ performance on standardized tests of math and reading comprehension ability.

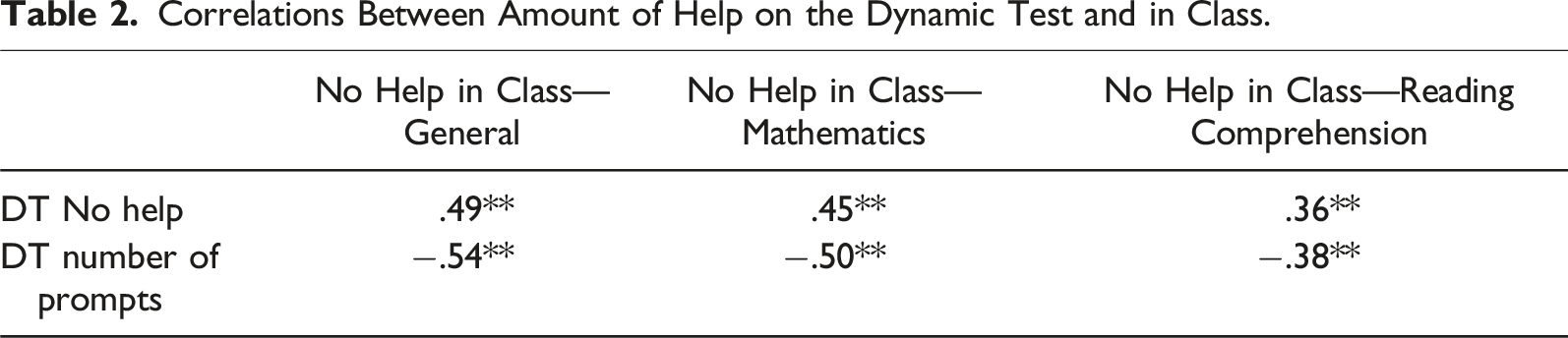

First, the measures obtained from the training-only dynamic test of analogical reasoning were compared to teachers’ estimation of the amount of help children needed in school. We expected a strong positive relationship between the number of items children could complete without help during the dynamic test, and the percentage of time teachers estimated that a child does not need help in class in general (hypothesis 1a). We also expected a positive relationship between the number of items children could complete without help during the dynamic test and the percentage of time teachers estimated that a child does not need help in class on math (hypothesis 1b) and on reading comprehension (hypothesis 1c). Similarly, we expected a strong negative relationship between the number of prompts given during the dynamic test and the percentage of time teachers estimated that a child does not need help in class in general (hypothesis 1d), the percentage of time teachers estimated that a child does not need help in class on math (hypothesis 1e) and on reading comprehension (hypothesis 1f).

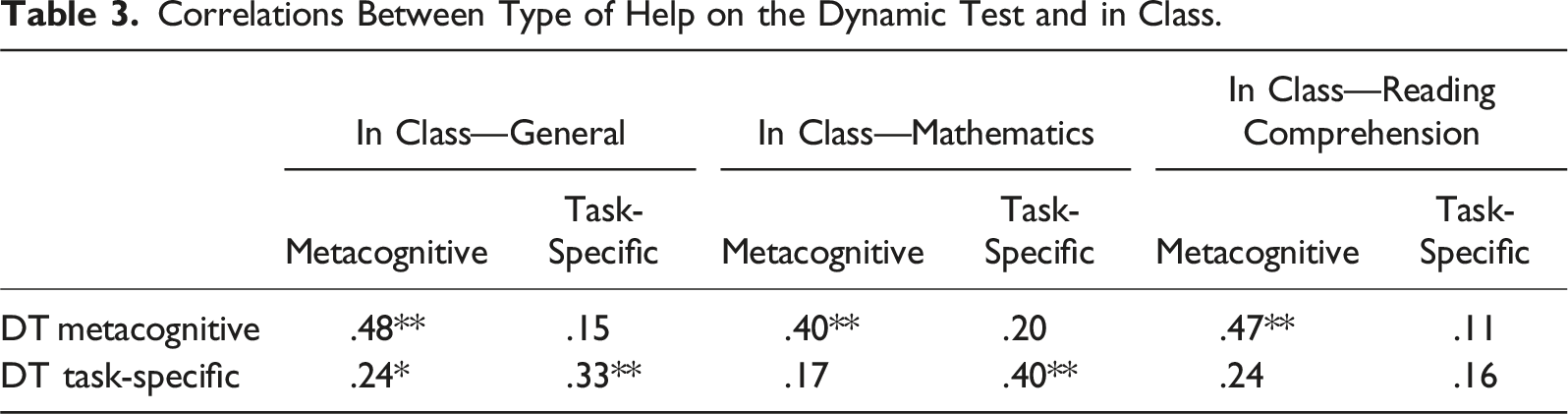

Next, the measures obtained from the training-only dynamic test of analogical reasoning were compared to teachers’ estimation of the type of help children needed in school. Regarding the types of help, we hypothesized (2a) that the number of metacognitive prompts on the dynamic test would be strongly related to the need for metacognitive help in class as estimated by the teacher. We also expected (hypothesis 2b) that task-specific prompts on the dynamic test would be strongly related to task-specific help in class, and (hypothesis 2c) that each type of help on the dynamic test would be more strongly related to the same type of help in class as estimated by the teacher than to the other type of help.

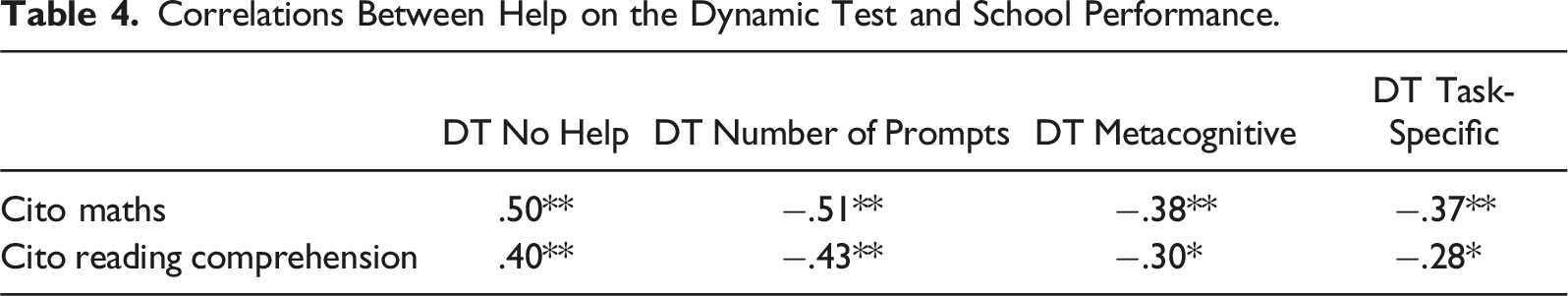

With regards to school performance, it was expected that there would be a positive relationship between scores on a standardized test of maths and the number of items on the dynamic test that children could solve without help (hypothesis 3a), as well as a negative relationship between scores on the standardized test of maths and the number of prompts that children needed during the dynamic test (3b), both for the total number of prompts given and for the number of metacognitive and task-specific prompts. Similarly, it was expected that there would be a positive relationship between scores on a standardized test of reading comprehension and the number of items on the dynamic test that children could solve without help (hypothesis 3c), and a negative relationship with the number of prompts that children needed during the dynamic test (3d), both for the total number of prompts given and for the number of metacognitive and task-specific prompts.

Lastly, based on the instructions children needed during the dynamic test, they were classified as either needing little instruction, needing metacognitive instruction, or needing task-specific instruction. It was hypothesized that these groups would differ in their (4a) performance in school subjects, and (4b) instructional needs in the classroom as observed by the teacher.

Method

Participants

Participants for the current study were N = 66 students (M age = 10.89 years, SD = 0.53), 27 boys and 39 girls, attending fourth and fifth grade education in a regular primary school in the Netherlands. A total of five classes from three schools participated in the study. All schools were in (lower) middle class areas in the Netherlands. The project was approved by the university’s ethics committee (ref. nr. ECPW2022/343). Informed consent was obtained from the parents of all participants prior to testing.

Design and Procedure

The study used a correlational design, with data obtained from a training-only dynamic test administered to primary school students, questionnaire data from their teachers, and standardized math and reading comprehension scores, which were obtained from schools with permission from parents. The dynamic test was individually administered to children by the lead examiner, and a second examiner was present to observe and score along. The dynamic testing session took approximately 15–20 minutes. The examiners who administered the tests were trained master’s students. Examiners had a laptop available with an automated Excel sheet to check the correctness of answers and to provide the script for the training procedure. All materials were presented to children on paper, with verbal instructions from the examiner. Teachers were asked to fill out a brief questionnaire for each participant, asking for information about their instructional needs and learning behavior.

Materials

Modified Dynamic Test of Analogical Reasoning

The dynamic test used in the current study was based on the graduated prompts training of a dynamic test of analogical reasoning used in previous research (e.g., Vogelaar et al., 2017, 2021; Vogelaar & Resing, 2018). The items consisted of geometric analogies, with an A:B::C:? format. To solve these, students were required to identify the relationship between A and B, and apply this to C to determine the correct answer. The analogies consisted of configurations involving multiple geometric figures, and could differ or transform on several dimensions: shape, size, rotation/mirror, location, and half/whole figures. In contrast to the original versions, the currently used dynamic test did not use a pre- or posttest, but consisted instead of a single training session of 12 items, administered with an increasing level of difficulty. The training was shown to be effective in improving children’s analogical reasoning abilities in previous research (Vogelaar et al., 2021; Vogelaar & Resing, 2018). Reliability of the items was on the border of acceptability, with Cronbach’s α = .68. To make it easier for the examiners to determine which answers were correct, a multiple-choice format with six answer options was used. Items were presented on paper, with participants being asked to provide the answer verbally or by pointing.

The graduated prompts training procedure entailed a pre-scripted response to incorrect answers provided by the participant. The lead examiner provided the prompts to the child by speaking out the pre-scripted responses. In the case of an incorrect response, the examiner provided the participant with a metacognitive prompt, after which the participant was asked to provide an answer to the item again. If this led to the correct answer, the procedure moved on to the next item. If, in contrast, it was not yet correct, the examiner provided another metacognitive prompt. If these metacognitive prompts did not lead to the participant providing the correct answer, the examiner provided more task-specific, cognitive prompts. If these also did not elicit a correct response from the participant, the examiner provided modeling of the solving processes that lead to the correct answer.
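The escalation logic described above can be sketched in a few lines of Python. This is an illustrative sketch only, not the script used in the study: the ladder of two metacognitive prompts, two task-specific cognitive prompts, and one modelling step is an assumption (the text specifies the order of prompt types and, in the Limitations, a maximum of five prompts, but not the exact split), and `PROMPT_LADDER`, `administer_item`, and `try_answer` are hypothetical names.

```python
from typing import Callable, List

# Assumed ladder: the order follows the text; the 2/2/1 split is hypothetical.
PROMPT_LADDER: List[str] = [
    "metacognitive", "metacognitive",
    "task_specific", "task_specific",
    "modelling",
]

def administer_item(try_answer: Callable[[int], bool]) -> dict:
    """Run one item of a graduated prompts training.

    try_answer(n) returns True if the child answers correctly after
    n prompts have been given (n = 0 is the unaided first attempt).
    """
    prompts_given: List[str] = []
    if try_answer(0):
        # Solved independently: no prompts needed.
        return {"prompts": prompts_given, "solved_after": None}
    for prompt in PROMPT_LADDER:
        prompts_given.append(prompt)
        if prompt == "modelling":
            break  # the solution is modelled; no further attempt is requested
        if try_answer(len(prompts_given)):
            break  # correct answer: move on to the next item
    return {"prompts": prompts_given, "solved_after": prompts_given[-1]}
```

For example, a simulated child who answers correctly only after three prompts, `administer_item(lambda n: n >= 3)`, receives two metacognitive prompts and one task-specific prompt under this assumed ladder.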

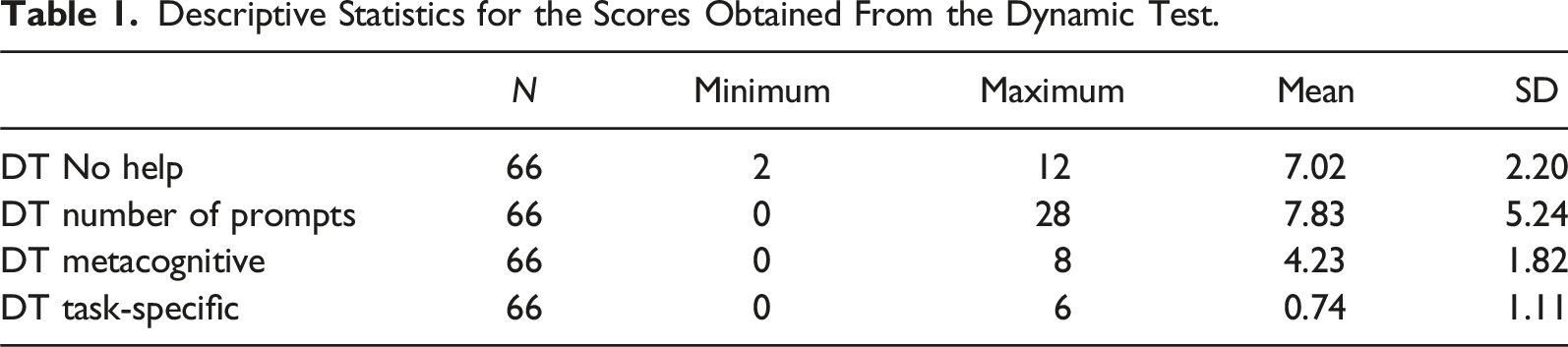

Based on participants’ performance on the dynamic test, four different scores were calculated: 1) DT No help, which represented the number of items children could solve without receiving prompts, 2) DT Number of prompts, which is a sum of the total number of prompts that were given to the participants during the dynamic test, 3) DT Metacognitive, the number of items that participants could solve after receiving general, metacognitive prompts, and 4) DT Task-specific, the number of items that participants could solve after receiving task-specific, cognitive prompts. DT Task-specific also included the provision of modelling procedures, as these were not given often enough on the test to warrant a separate category (four times in total).
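As a rough illustration of how these four scores could be derived from a session record, the following Python sketch tallies them per child. The data layout (one list of prompt types per item) and the function name `score_session` are assumptions made for this example, as is the rule that the last prompt given identifies the level at which the item was solved.

```python
from typing import Dict, List

def score_session(items: List[List[str]]) -> Dict[str, int]:
    """Compute the four dynamic test scores for one child.

    items[i] is the ordered list of prompt types given on item i;
    an empty list means the item was solved without help.
    """
    scores = {
        "dt_no_help": 0,
        "dt_number_of_prompts": 0,
        "dt_metacognitive": 0,
        "dt_task_specific": 0,
    }
    for prompts in items:
        scores["dt_number_of_prompts"] += len(prompts)
        if not prompts:
            scores["dt_no_help"] += 1
        elif prompts[-1] == "metacognitive":
            scores["dt_metacognitive"] += 1
        else:
            # Task-specific cognitive prompts and modelling are pooled,
            # as described in the text.
            scores["dt_task_specific"] += 1
    return scores

# Hypothetical four-item record (the actual test had 12 items).
example = [
    [],
    ["metacognitive"],
    ["metacognitive", "metacognitive", "task_specific"],
    [],
]
example_scores = score_session(example)
```

In this made-up record, two items are solved without help, one after a metacognitive prompt, and one after a task-specific prompt, with four prompts given in total.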

Additionally, participants were assigned to Instruction Groups, based on their instructional needs during the dynamic test. All participants were classed as either (1) Low instruction, which consisted of children needing fewer than 5 metacognitive prompts and fewer than 2 task-specific cognitive prompts, (2) Metacognitive instruction, which consisted of children needing 5 or more metacognitive prompts and fewer than 2 task-specific cognitive prompts, or (3) Task-specific instruction, which consisted of children needing 2 or more task-specific cognitive prompts, irrespective of their need for metacognitive prompts. The classifications were based on initial inspection of the data. The cut-off point of 5 metacognitive prompts was based on the mean and median (M = 4.23, Median = 4.00): scores above the mean were considered “high,” and scores below the mean “low.” For task-specific cognitive prompts, a low mean score was found (M = 0.74). It was decided to use the cut-off score of 2 task-specific cognitive prompts because a score of 1 would be too sensitive to error. These cut-offs also led to groups of acceptable sizes (Low instructional need (N = 31); Metacognitive instruction (N = 24); Task-specific instruction (N = 11)).
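The grouping rule can be written as a small decision function. The sketch below applies the cut-offs stated in the text (5 metacognitive prompts, 2 task-specific cognitive prompts); the function name and argument names are hypothetical.

```python
def classify_instruction_group(metacognitive: int, task_specific: int) -> str:
    """Assign a child to an Instruction Group from prompt counts."""
    if task_specific >= 2:
        # Task-specific need takes precedence, irrespective of
        # the number of metacognitive prompts.
        return "Task-specific instruction"
    if metacognitive >= 5:
        return "Metacognitive instruction"
    return "Low instruction"
```

Checking the task-specific cut-off first mirrors the rule that this group is formed irrespective of the need for metacognitive prompts, so a child with 7 metacognitive and 3 task-specific prompts falls in the Task-specific instruction group.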

Teacher Questionnaire Instructional Needs

Teachers were asked to complete a questionnaire for each child, to obtain information on the amount and type of instruction children needed in school as estimated by the teacher. The questionnaire used a matrix in which teachers were asked to provide a percentage of tasks in class where the student needed no help, general metacognitive instruction, task-specific cognitive help, or modelling of solving the task. These percentages were asked for general school behavior, mathematics, and reading. For the analyses, the cognitive and modeling categories were combined to match the structure of scores of the dynamic test of analogical reasoning.

Standardized School Achievement Tests

As indicators of children’s performance in school subjects, the results of the Cito 3.0 Mathematics (Hop & Engelen, 2017; Hop et al., 2017) and Cito 3.0 Reading Comprehension (Tomesen et al., 2017, 2018) tests were obtained from schools. The Cito 3.0 Mathematics and Reading Comprehension tests are standardized, norm-referenced tests that are used to gauge children’s performance levels. These tests can be administered twice per year to follow children’s development. In the current research, the skill level scores were used, which can be compared over different school grades. Cito Mathematics was found to be a valid and reliable measure of mathematical skills, M acc = .94–.96 (Hop & Engelen, 2017; Hop et al., 2017), as was Cito Reading Comprehension for reading comprehension ability, M acc = .88–.93 (Tomesen et al., 2017, 2018).

Results

Descriptive Statistics for the Scores Obtained From the Dynamic Test.

Quantity of Instruction

Correlations Between Amount of Help on the Dynamic Test and in Class.

Type of Instruction

Correlations Between Type of Help on the Dynamic Test and in Class.

Relation to Academic Performance

Correlations Between Help on the Dynamic Test and School Performance.

Distinguishing Groups Based on Instructional Needs

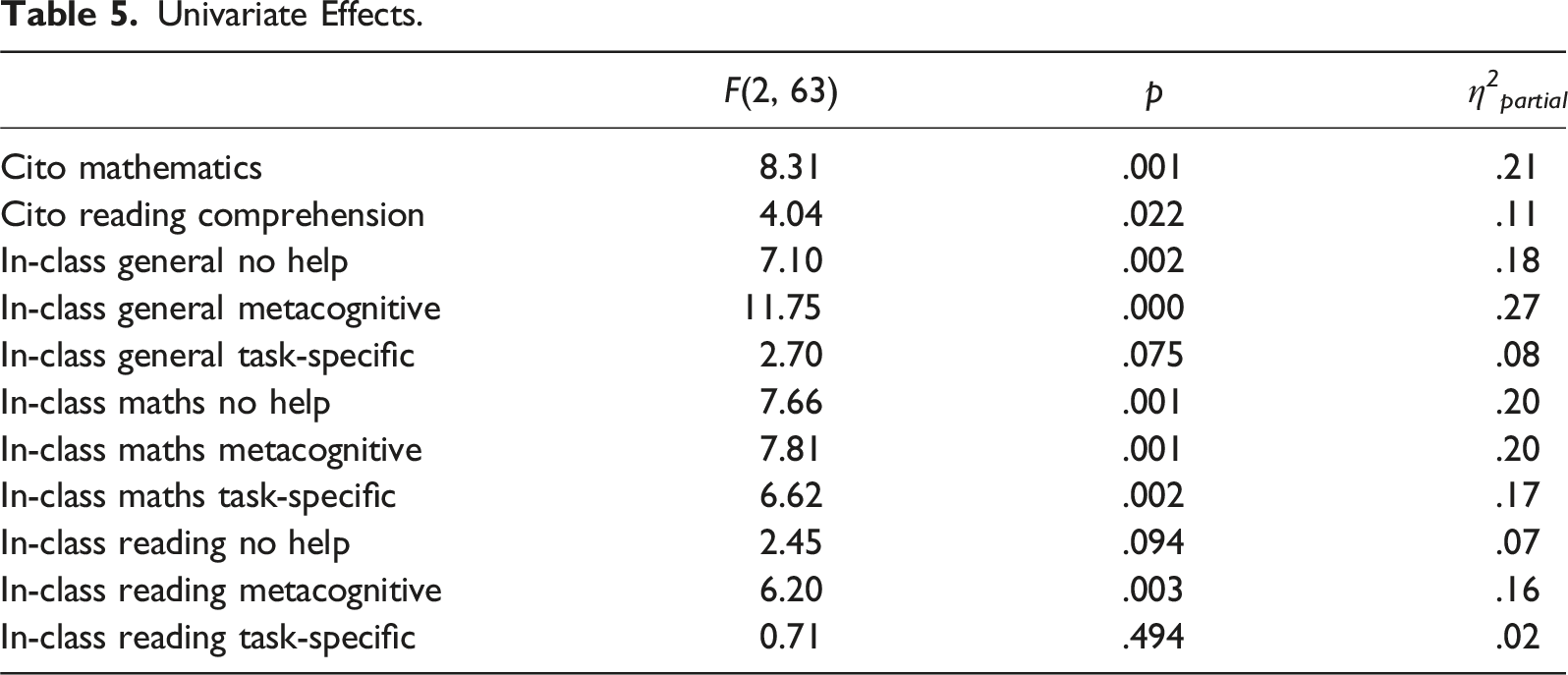

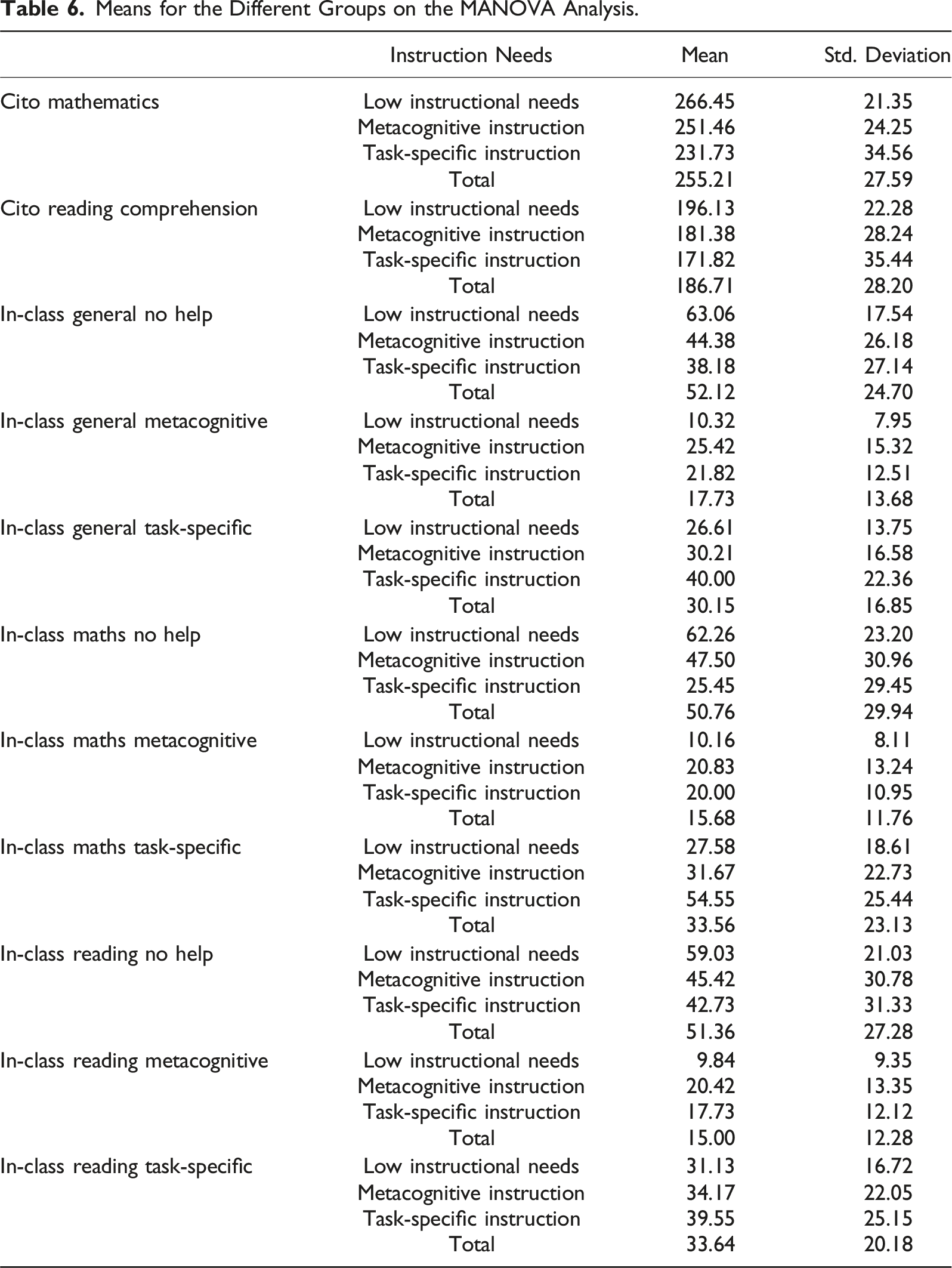

To investigate whether groups based on their instructional needs during the dynamic test differed in terms of their performance and instructional needs in school, a one-way Multivariate Analysis of Variance was used with the instructional needs classification (1: Low instructional need (N = 31); 2: Metacognitive instruction (N = 24); 3: Task-specific instruction (N = 11)) as the between-subjects factor. The dependent variables were the academic performance on the standardized tests of mathematics and reading comprehension, and the different types of instructional needs as estimated by the teacher. Multivariate tests indicated a significant effect for instruction class (Wilks’ λ = .47, F(16, 112) = 3.18, p < .001, partial η² = .31) with a large effect size.
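As a plausibility check on these multivariate statistics, the sketch below recomputes the effect size and F value from the reported Wilks’ λ. It assumes the common convention (used, for example, by SPSS) that partial η² = 1 − λ^(1/s), with s the smaller of the number of dependent variables and the hypothesis degrees of freedom; the article does not state which formula was used. The value of 8 dependent variables is inferred from the reported df of 16 with three groups and is likewise an assumption, and the function name is hypothetical.

```python
def wilks_effect(lam: float, n_dvs: int, df_hyp: int, df1: int, df2: int):
    """Return (partial eta squared, F) derived from Wilks' lambda.

    Uses s = min(n_dvs, df_hyp). The F conversion shown here is only
    valid in this simple form when df_hyp <= 2, as is the case with
    three groups.
    """
    s = min(n_dvs, df_hyp)
    root = lam ** (1.0 / s)
    eta_sq = 1.0 - root
    f_value = (1.0 - root) / root * (df2 / df1)
    return eta_sq, f_value

# Reported values; n_dvs = 8 is inferred from df1 = 16 = 8 * 2 (assumption).
eta_sq, f_value = wilks_effect(lam=0.47, n_dvs=8, df_hyp=2, df1=16, df2=112)
```

Under these assumptions the function returns a partial η² of about .31, matching the reported value, and an F of about 3.21, which is close to the reported 3.18; the small difference is consistent with λ having been rounded to two decimals.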

Univariate Effects.

Means for the Different Groups on the MANOVA Analysis.

Post-hoc tests using Tukey’s Honest Significant Difference showed significant differences between the Low instructional needs group and the Task-specific instruction group on Cito Mathematics (p < .001) and Cito Reading Comprehension (p = .033), with the Low instructional needs group obtaining higher scores on both measures than the Task-specific instruction group. On Cito Mathematics, the Metacognitive instruction group came close to differing significantly from both the Low instructional needs group (p = .077), who scored higher, and the Task-specific instruction group (p = .084), who scored lower. For Cito Reading Comprehension, the Metacognitive instruction group did not differ significantly from the Low instructional needs group (p = .118) or from the Task-specific instruction group (p = .596).

In terms of teachers’ estimations of children’s instructional needs in-class, a significant difference was found on General No help between the Low instructional needs and both Metacognitive instruction (p = .010) and Task-specific instruction (p = .007), but not between Metacognitive instruction and Task-specific instruction (p = .734). A similar pattern was found on General Metacognitive, where Low instructional needs differed significantly from both Metacognitive instruction (p < .001) and Task-specific instruction (p = .020), but not between Metacognitive instruction and Task-specific instruction (p = .684).

For teachers’ estimations of instructional needs in math, on No help, Low instructional needs did not differ significantly from Metacognitive instruction (p = .123), but did differ from Task-specific instruction (p = .001). The difference between Metacognitive instruction and Task-specific instruction fell short of significance (p = .076). On metacognitive instruction in math, significant differences were found between Low instructional needs and both Metacognitive instruction (p = .001) and Task-specific instruction (p = .029), but not between Metacognitive and Task-specific instruction (p = .975). On task-specific instruction in math, no significant difference was found between Low instructional needs and Metacognitive instruction (p = .762), but Task-specific instruction differed significantly from both Low instructional needs (p = .002) and Metacognitive instruction (p = .013).

Finally, for teachers’ estimations of instructional needs in reading comprehension, no significant effects were found on No help and on Task-specific instruction. For teachers’ estimations of the need for Metacognitive instruction in reading comprehension, Low instructional needs was significantly different from Metacognitive instruction (p = .003), but not from Task-specific instruction (p = .128), nor did Metacognitive instruction differ from Task-specific instruction (p = .794).

Discussion

The present study investigated whether an abbreviated version of a dynamic test, using only a single graduated prompts training session, can be used to estimate children’s instructional needs, thus providing valuable information for classroom instructional intervention. In general, the scores obtained from the training-only dynamic test appeared to be valid indicators of instructional needs. The number of items that could be solved without help and the number of prompts provided during the dynamic test were both moderately to strongly related to teachers’ estimations of independent work in class. Moreover, the type of help in the dynamic test was moderately related to the same type of help in class, whereas it was not significantly or only weakly related to the opposite type of help, providing tentative evidence for both convergent and divergent validity of these measures. Overall, the picture appears to be in line with prior research showing that dynamic testing using graduated prompts can provide insight into individual children’s instructional needs and can help teachers to address the needs of their students (e.g., Bosma et al., 2017; Bosma & Resing, 2012). The exception to this was the task-specific help in reading comprehension, which was not related to task-specific prompts on the dynamic test.

In terms of relations with standardized tests of mathematics and reading comprehension, moderate to strong relations were found with all measures from the dynamic test, in line with previous findings where the instructional needs during the dynamic test were shown to be related to mathematics and reading abilities (Stevenson et al., 2013; Veerbeek et al., 2019). The only exception here was again the relation between task-specific help and reading comprehension scores. Here, as well as for the previous findings surrounding in-class instruction, the transfer from task-specific cognitive instruction for inductive reasoning to reading comprehension may simply have been too far to make. These findings seem to indicate that the extent to which task-specific instruction is needed by a child does indeed depend on the task at hand. They can be seen as an extension of previous research in which children were found to show different growth curves on dynamic tests of different domains, leading to the conclusion that learning potential is task-specific and domain-specific (Tissink et al., 1993).

The classifications based on the instructions that led to children providing the correct answer seemed for the most part to differentiate between children on performance as well as on their needs for instruction. In contrast to Bosma et al. (2017), the currently used test had a single graduated prompts protocol and classified children based on the prompts that led them to provide the correct answer. Overall, the distinction between children based on low instructional needs, metacognitive instruction, or task-specific instruction seemed to help differentiate between children’s instructional needs. In general, again, reading comprehension seemed to be the furthest removed from the dynamic test.

Limitations

The first limitation of the current study centers around the materials used and the effects this had on how children interacted with the test material. For this research, a multiple-choice answering format was used, in contrast to the prior research with these items, which used a constructed response answering format (e.g., Vogelaar et al., 2017, 2021; Vogelaar & Resing, 2018). The combination of a multiple-choice format and the provision of a maximum of five prompts had two consequences. The first was that children could eliminate answer options and therefore may not have focused on the instructions to learn from them. Second, the option of elimination meant that very few children needed task-specific cognitive prompts and even fewer needed modelling; this may not have reflected their instructional needs, but may instead have been an indication of their use of an elimination strategy. In effect, this also meant that the test was limited in differentiating based on instructional needs, because it did not provide the possibility to distinguish children who needed modelling from those needing task-specific cognitive prompts. Future research using a constructed response answering format is needed to investigate whether that format would also be able to distinguish a need for modelling instruction. A computerized version of the task, such as was used by Vogelaar et al. (2021), could provide the possibility to immediately score children’s constructed responses and provide prompts if needed.

A second limitation is that children’s motivation and motivational strategies were outside the scope of the current study. Information on these aspects may well be needed to inform instructional intervention, and they may have been a source of variance not accounted for here (Tiekstra et al., 2016). Future research could combine a training-only dynamic test with a measure of children’s motivation, and focus on the various factors that influence children’s needs for instruction.

Implications

As was pointed out in Veerbeek et al. (2017), the decision whether to use a shorter version of a dynamic test should be determined based on the question that prompted the use of the dynamic test. The current research provided tentative evidence that a training-only version of a dynamic test can be used to provide information about the amount and type of instruction a child needs. However, when no pre- and posttest are used, no direct information can be obtained about a baseline performance, potential level of performance, or rate of learning as a result of training.

Using a quite simple graduated prompts format facilitates implementation of a dynamic test in the classroom, which can, in a relatively short timeframe, provide a first snapshot of a child’s instructional needs. This might make dynamic testing more readily applicable within daily educational practices. Further, the format of a graduated prompts training could be applied to other materials as well, making it possible to create dynamic tests in several different domains.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.