Abstract

Despite the widespread availability of evidence-based treatments for internalizing concerns, many youth do not demonstrate reliable or clinically meaningful improvement. Regular progress monitoring, consisting of measurement and feedback, offers the opportunity to improve outcomes in real time. The 21-item Depression Anxiety and Stress Scales (DASS-21; Lovibond & Lovibond, 1995) has potential as a progress-monitoring tool for internalizing concerns in youth; however, limited psychometric data are available to support this use. The purpose of this study was to investigate the dependability of data obtained from the DASS-21 when completed by adolescents in a clinical setting. This study also aimed to understand the feasibility and utility of using the DASS-21 as a progress-monitoring tool from youth and clinician perspectives. Generalizability and dependability analyses were conducted to determine the number of ratings needed to obtain a dependable estimate of youth functioning within 1 week. Whereas two daily ratings per week were needed to dependably estimate total distress, results for the depression, anxiety, and stress subscales indicated that two to five ratings would be needed over the course of the week. Finally, results support the usability of the DASS-21 from both youth and clinician perspectives when used in a progress-monitoring context.

Introduction

The task force of the American Psychological Association (APA) noted that evidence-based practice and clinical specialization include “monitoring of patient progress…that may suggest the need to adjust the treatment” (2006, p. 276). Known as routine outcome monitoring or progress monitoring, this assessment practice involves collecting self-report data from individuals in treatment so that clinicians can be alerted to positive or negative deviations from the expected course of treatment, including potential deterioration. In effect, it allows clinicians to ask, “Is this treatment working for this client?” Progress monitoring may be especially valuable where early response to treatment is particularly informative, such as in suicide risk management (Restifo et al., 2015). In these instances, a system that monitors change from day to day, rather than over weeks or months, may be essential for documenting early response to treatment. Meta-analytic reviews have found that clients make greater gains in treatment when clinicians use ongoing progress monitoring during psychotherapy (Lambert et al., 2018).

Despite the identified benefits of progress monitoring, research suggests that it is underutilized in practice. One study found that only 37% of clinicians used a form of routine outcome monitoring (Hatfield & Ogles, 2004), and in another study clinicians reported using progress monitoring in fewer than half of sessions (Persons et al., 2016). Furthermore, research suggests that even when clinicians do implement progress monitoring, many do not accurately assess progress and are particularly poor at identifying deterioration in treatment (Hatfield & Ogles, 2004). Although the literature identifies multiple potential explanations for the limited uptake of progress-monitoring tools (e.g., time and resources required, burden to the client), one factor worthy of consideration is knowledge (Ionita et al., 2020). That is, clinicians may not be aware of the measures available to them for repeatedly monitoring concerns in adolescents. For example, although many self-report measures exist to assess anxiety (e.g., Revised Children’s Manifest Anxiety Scale – Second Edition, Reynolds & Richmond, 1985; Multidimensional Anxiety Scale for Children – Second Edition, March et al., 1997; Beck Anxiety Inventory, Beck et al., 1988), these measures were designed to compare scores between two time points and/or for diagnostic purposes relative to normative benchmarks. Studies have yet to examine the repeated use of these measures on a daily or weekly basis over an extended period; further investigation is therefore needed into their utility as regular progress-monitoring tools.

Dart et al. (2019) recently completed a systematic review of progress-monitoring measures for internalizing symptoms in school-aged children and adolescents. They identified five self-report measures: the Positive and Negative Affect Schedule for Children (PANAS-C; Laurent et al., 1999), the Separation Anxiety Avoidance Inventory (SAAI; Eisen & Schaefer, 2007), the Liebowitz Social Anxiety Scale (LSAS; Heimberg et al., 1999), the Symptoms and Functioning Severity Scale (SFSS; Athay et al., 2012), and the Outcome Questionnaire (OQ; Vermeersch et al., 2000). Nearly all of these measures met the criterion of weekly administration, though the psychometric properties of other potential progress-monitoring intervals (e.g., daily) were not discussed. The exception was the PANAS-C, which was completed multiple times per day via telephone call. Furthermore, two of the five measures (i.e., SAAI and LSAS) assessed only one core internalizing domain, such as anxiety, rather than internalizing states across multiple domains (Dart et al., 2019). Of the five measures, only two are freely available (i.e., PANAS-C and SFSS).

Depression Anxiety and Stress Scales (DASS-21)

Limitations of the measures currently validated for monitoring internalizing symptoms in adolescents warrant consideration of other self-report measures. One tool with potential for use across internalizing concerns within a progress-monitoring context is the 21-item Depression Anxiety and Stress Scales (DASS-21; Lovibond & Lovibond, 1995). In addition to providing an overall measure of distress, the DASS-21 includes three seven-item subscales. The depression subscale measures dysphoria, hopelessness, low self-esteem, self-deprecation, lack of interest, anhedonia, and low positive affect. The anxiety subscale assesses autonomic arousal, musculoskeletal symptoms, situational anxiety, and subjective experiences of anxious arousal. Finally, the stress subscale measures difficulty relaxing, tension, agitation, irritability, and negative affect (Lovibond & Lovibond, 1995). Although the DASS-21 was originally developed for adults, it has been used successfully with many adolescent samples to date (e.g., Moore et al., 2017; Patrick et al., 2010; Silva et al., 2016; Szabó, 2010; Tully et al., 2009; Willemsen et al., 2011).

Research conducted over the past 15 years has found that the DASS-21 is a psychometrically sound tool for the assessment of general distress, depression, anxiety, and stress in adolescents, as each subscale demonstrated evidence of convergent validity and internal consistency reliability (Dwight et al., 2023). The fact that the DASS-21 has undergone substantial psychometric investigation, combined with the measure’s brevity and wide availability (i.e., freely available and online), appears to make it a strong candidate for use in progress monitoring. According to the publisher’s website (https://www2.psy.unsw.edu.au/dass), the DASS questionnaire may be administered according to various time frames depending on interest, though no recommended interval is provided. Dart et al. (2019) found that most progress-monitoring measures involving adolescent self-report of internalizing symptoms utilized a weekly rating; however, it is unknown whether such an assessment timeframe is empirically derived. Internalizing symptoms such as anxiety can fluctuate greatly from day to day (Walz et al., 2014), thus making a single measurement occasion potentially problematic and undependable. Given the potential utility of the DASS-21 to monitor response to treatment, additional psychometric research supporting the DASS-21 with youth for clinical progress-monitoring purposes is needed.

Purpose of Study

Addressing internalizing problems among adolescents requires valid, dependable, and time-efficient progress-monitoring measures for this population. Although the DASS-21 appears to have potential for progress monitoring in adolescent populations, psychometric investigation to date has focused on its use in a more summative context. As such, important indicators of psychometric adequacy and associated guidelines for using the DASS-21 as a progress-monitoring tool have not yet been established. Our primary research question (RQ1) was therefore: How many daily DASS-21 ratings are needed to obtain a dependable estimate of self-reported youth depression, anxiety, stress, and overall distress within a given week during treatment? Such information would help inform how frequently the DASS-21 should be administered when used to monitor treatment progress. Additionally, given limited exploration of the DASS-21 as a progress-monitoring tool, we sought to examine social validity by asking: (RQ2) To what extent do clinicians find progress monitoring with the DASS-21 feasible to use, meaningful to interpret, and useful for making treatment decisions? and (RQ3) What is the perceived usability of the DASS-21 as reported by youth?

Method

Participants and Setting

The study was conducted in two private, outpatient clinics located in two cities in the Northeastern United States. Primary study participants were 18 youth (M = 15.49 years old; SD = 1.97 years) seeking services at these clinics. Participants identified as female (n = 12), male (n = 5), and non-binary (n = 1). The majority (83.3%; n = 15) of participants in this subsample were from one clinic. These participants presented with a range of diagnoses, including Generalized Anxiety Disorder, Disruptive Mood Dysregulation Disorder, Major Depressive Disorder, Obsessive Compulsive Disorder, Post-traumatic Stress Disorder, Selective Mutism, and Social Anxiety Disorder. Additionally, data were collected from the 14 clinicians working with these youth, including psychologists, social workers, and mental health counselors (Lawrence et al., 2015). Clinicians reported being in practice for an average of 6.50 years (SD = 4.88). All clinicians reported that they used some form of youth self-report outcome assessment in their practice.

Measures

In addition to demographic questionnaires, youth participants completed the Depression Anxiety Stress Scales, Short Form (DASS-21) Questionnaire and the Adolescent Self-Report Questionnaire on the Usability of the DASS-21. Clinicians completed the Clinician Self-Report Questionnaire on the Usability of the DASS-21 with Youth and were asked several questions regarding their own training and clients’ participation in sessions.

Demographics

Adolescent participants were asked questions about their age and gender identity. Clinicians were asked demographic questions about (a) their degree and number of years in practice and (b) their clients’ diagnoses and current treatment.

DASS-21

The DASS-21 uses a four-point Likert-type response format ranging from 0 (“Did not apply to me”) to 3 (“Applied to me very much or most of the time”). Each subscale score is obtained by summing its seven items and ranges from 0 to 21. Higher scores indicate higher levels of depression (DASS-D), anxiety (DASS-A), or stress (DASS-S; Moore et al., 2017). The three subscale scores can be summed to provide a total score (DASS-T), or measure of overall distress, ranging from 0 to 63 (Evans et al., 2020). When multiplied by two, the DASS-21 total score ranges from 0 to 126, yielding scores equivalent to those of the full 42-item DASS. A recent literature review found strong internal consistency reliability coefficients for the DASS-21 scales in adolescent populations: DASS-D (α = .72–.99), DASS-A (α = .75–.99), DASS-S (α = .70–.94), and DASS-T (α from >.80 to .95; Dwight et al., 2023). This review also found strong support for the convergent validity of the DASS-D, DASS-A, and DASS-T scores, whereas weaker results were found for the DASS-S (Dwight et al., 2023).
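The scoring rules above can be expressed as a short sketch. It assumes the commonly published DASS-21 item-to-subscale key (depression items 3, 5, 10, 13, 16, 17, 21; anxiety items 2, 4, 7, 9, 15, 19, 20; stress items 1, 6, 8, 11, 12, 14, 18), which is not stated in this article and should be checked against the developers’ official scoring materials. The function name `score_dass21` and the dictionary keys are hypothetical and for illustration only.

```python
# ASSUMPTION: commonly published DASS-21 item key (1-based item numbers).
# Verify against the developers' official scoring materials before use.
DASS21_KEY = {
    "D": (3, 5, 10, 13, 16, 17, 21),  # depression
    "A": (2, 4, 7, 9, 15, 19, 20),    # anxiety
    "S": (1, 6, 8, 11, 12, 14, 18),   # stress
}


def score_dass21(responses):
    """Score one DASS-21 administration (hypothetical helper).

    responses: 21 integers (0-3) in item order 1..21.
    Returns DASS-D, DASS-A, DASS-S (0-21 each), DASS-T (0-63), and the
    total doubled to the 0-126 metric of the 42-item DASS.
    """
    if len(responses) != 21:
        raise ValueError("DASS-21 requires exactly 21 item responses")
    if any(r not in (0, 1, 2, 3) for r in responses):
        raise ValueError("each item must be scored 0, 1, 2, or 3")
    # Each subscale is the sum of its seven items.
    scores = {f"DASS-{scale}": sum(responses[i - 1] for i in items)
              for scale, items in DASS21_KEY.items()}
    # Total distress is the sum of the three subscale scores.
    scores["DASS-T"] = sum(scores.values())
    scores["DASS-T x2"] = 2 * scores["DASS-T"]
    return scores


print(score_dass21([1] * 21))
# {'DASS-D': 7, 'DASS-A': 7, 'DASS-S': 7, 'DASS-T': 21, 'DASS-T x2': 42}
```

Doubling is applied here only to the total, matching the description of DASS-T above.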

Usability measures

At the completion of the study, adolescent participants completed a usability questionnaire adapted from the Children’s Usage Rating Profile (CURP) Actual (Briesch & Chafouleas, 2009). They were asked 10 questions about completing the measure and rated each item on a 1–4 scale ranging from “totally disagree” to “totally agree.” Prior research on the original 23-item measure has suggested adequate internal consistency reliability for its three subscales: Personal Desirability (α = .92), Feasibility (α = .82), and Understanding (α = .75).

The Clinician Self-Report Questionnaire was developed for this study and used to assess clinicians’ perceptions of the feasibility, interpretability, and utility of the DASS-21 as a youth progress-monitoring tool. Additionally, this measure aimed to assess clinicians’ use of the DASS-21 in making treatment-informed decisions. Clinicians were asked two questions related to their own training in both use of outcome measurement and use of the DASS-21 specifically. Using survey questions from Hatfield and Ogles (2004) as a model, clinicians were then asked to rate six questions related to the importance of using outcome measurement and ten questions related to perceived barriers to using the DASS-21 on 0–5 scales ranging from “not at all” to “very much” and “not at all a barrier” to “very much a barrier,” respectively.

Procedures

In a clinic-wide staff meeting, clinicians were informed of the goals and procedures of the study. Clinicians were also trained to verbally introduce the study both to existing clients and to those visiting the clinic for an initial intake consultation. Inclusion criteria were that participants had to speak English as their primary language, be 12 to 18 years old, and be receiving evidence-based treatment (i.e., Acceptance and Commitment Therapy, Cognitive Behavioral Therapy, or Dialectical Behavior Therapy) at the outpatient clinic for internalizing concerns (e.g., anxiety and depression). After caregivers and youth expressed interest in the study, they received by email a letter detailing the purpose of the study and what participation entailed. This letter gave parents the opportunity to provide consent and allow their child to opt in to completing DASS-21 progress-monitoring measures. Adolescent written assent was also collected. Clinicians of participating youth were contacted by email to request their participation in the study. Ultimately, 31 youth and their families provided consent/assent, whereas 31 families (i.e., 20 caregivers and 11 youth) declined invitations to participate.

After completing the consent process, participants were asked to complete daily self-report DASS-21 ratings on a secure online platform and were sent daily email or text reminders, according to their preference. Ratings were sent to youth participants between 3 and 8 pm each day, at a time chosen by each youth. Each daily rating took about 2 min to complete. Ratings not completed before midnight could no longer be submitted. To be included in the study, adolescent participants had to have completed at least three daily ratings per week across 3 weeks while receiving treatment. As a result, DASS-21 ratings from 18 youth were used in the current study.

Once all the DASS-21 ratings were completed, participating clinicians were invited to complete an electronic questionnaire assessing the usability of the measure. At the same time, youth participants were asked to complete an electronic questionnaire assessing the usability of the DASS-21 and their experience completing the daily ratings.

Data Analysis

This study addressed the three research questions using quantitative and descriptive data. The first, quantitative phase of the study explored the psychometric properties (i.e., dependability) of the DASS-21 within a progress-monitoring context. The second, descriptive phase explored the perceived usability of progress monitoring with the DASS-21 among clinicians and youth.

Dependability of data

To determine the number of daily ratings needed to obtain a dependable estimate of behavior during treatment, a generalizability theory (GT; Cronbach et al., 1972) framework was used. GT offers two main advantages over a classical test theory approach. First, whereas traditional reliability coefficients capture only one source of score variance at a time (e.g., fluctuation across time in the case of test–retest reliability, or across raters in the case of interrater reliability), GT allows multiple sources of score variance to be examined simultaneously. It is therefore possible to assess the relative contribution of each source of variance and to identify proactively what changes to an assessment procedure may improve reliability. Such questions are answered by conducting a variance component analysis, or generalizability (G) study. If a measure were able to discriminate perfectly between individuals, the greatest percentage of variance would be attributable to differences between persons, and a negligible percentage would be attributable to residual (i.e., unexplained) error. The current study used a two-facet, fully crossed design in which every participant completed the DASS-21 on every occasion (i.e., a person × scale × occasion design). Occasions were treated as a random facet, given the intention to generalize results beyond the specific days sampled. Scale, however, was treated as a fixed facet, as we were interested only in the specific scales included within the DASS-21. Given mixed evidence regarding the factor structure of the DASS-21 and variance attributable to subscale (Dwight et al., 2023), analyses were conducted separately for DASS-T, DASS-D, DASS-A, and DASS-S scores. All variance component analyses were conducted in SPSS 26.0.
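To illustrate what a G study estimates, the following sketch computes variance components for a single score (e.g., DASS-T) arranged as a persons × occasions matrix. It is a simplification under stated assumptions: one random facet (occasions) rather than the study’s person × scale × occasion design, complete and balanced data (e.g., one imputed dataset), and the ANOVA expected-mean-squares estimator. It is not the authors’ SPSS 26.0 procedure, and the function name `g_study_p_x_o` is hypothetical.

```python
from statistics import fmean


def g_study_p_x_o(scores):
    """ANOVA (expected mean squares) variance components for a balanced
    persons x occasions design with one score per cell (hypothetical helper).

    scores: list of rows, one per person; each row holds that person's
    score on every occasion (no missing values).
    """
    n_p, n_o = len(scores), len(scores[0])
    if n_p < 2 or n_o < 2 or any(len(row) != n_o for row in scores):
        raise ValueError("need a complete matrix with >= 2 persons and occasions")
    grand = fmean(x for row in scores for x in row)
    person_means = [fmean(row) for row in scores]
    occasion_means = [fmean(row[o] for row in scores) for o in range(n_o)]

    # Sums of squares for persons, occasions, and the residual
    # (person x occasion interaction confounded with error).
    ss_p = n_o * sum((m - grand) ** 2 for m in person_means)
    ss_o = n_p * sum((m - grand) ** 2 for m in occasion_means)
    ss_tot = sum((x - grand) ** 2 for row in scores for x in row)
    ss_res = ss_tot - ss_p - ss_o

    ms_p = ss_p / (n_p - 1)
    ms_o = ss_o / (n_o - 1)
    ms_res = ss_res / ((n_p - 1) * (n_o - 1))

    # Solve the expected-mean-square equations; negative estimates are
    # truncated at zero, a common convention in G theory.
    var_res = max(ms_res, 0.0)
    var_p = max((ms_p - ms_res) / n_o, 0.0)
    var_o = max((ms_o - ms_res) / n_p, 0.0)
    total = var_p + var_o + var_res
    return {
        "p": var_p,
        "o": var_o,
        "po,e": var_res,
        "pct_p": 100 * var_p / total if total else 0.0,
    }
```

A large `pct_p` corresponds to the ideal described above, in which most variance reflects stable differences between persons rather than day-to-day fluctuation or residual error.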

Results of the G study were then used to inform subsequent decision (D) studies. Whereas traditional reliability coefficients only provide information to inform relative (i.e., normative) decision making, through D studies researchers can also compute coefficients for the purposes of absolute (i.e., dependability coefficient) decision making, as is relevant to progress monitoring (Briesch et al., 2014). The goal of the D studies was to explore whether dependable estimates of overall distress, depression, anxiety, and stress could be obtained with a single rating or if additional ratings were needed. Results indicate how well one data point from a single occasion can generalize to adolescents’ functioning across the course of 1 week when making intraindividual decisions. Separate dependability analyses were conducted for each of the 3 weeks.
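The D-study logic can be sketched in the same simplified persons × occasions layout. Under that assumption, the dependability coefficient for the mean of n′ daily ratings is Φ = σ²p / (σ²p + (σ²o + σ²po,e) / n′), where absolute error includes occasion variance; the relative coefficient Eρ² omits σ²o. The function names, the variance components in the usage lines, and the .70 criterion are hypothetical values chosen for illustration, not estimates or thresholds reported in this study.

```python
def phi(var_p, var_o, var_res, n_occasions):
    """Dependability (absolute) coefficient for the mean of n_occasions
    ratings in a persons x occasions design."""
    abs_error = (var_o + var_res) / n_occasions
    denom = var_p + abs_error
    return var_p / denom if denom > 0 else 0.0


def e_rho2(var_p, var_res, n_occasions):
    """Generalizability (relative) coefficient, shown for comparison."""
    denom = var_p + var_res / n_occasions
    return var_p / denom if denom > 0 else 0.0


def min_occasions(var_p, var_o, var_res, criterion, max_occasions=7):
    """Smallest number of ratings within a week whose mean reaches the
    criterion Phi, or None if even max_occasions ratings fall short."""
    for n in range(1, max_occasions + 1):
        if phi(var_p, var_o, var_res, n) >= criterion:
            return n
    return None


# Hypothetical variance components (NOT the study's estimates).
comps = dict(var_p=4.0, var_o=0.5, var_res=3.5)
for n in (1, 2, 3):
    print(n, round(phi(n_occasions=n, **comps), 3))
# 1 0.5
# 2 0.667
# 3 0.75
print(min_occasions(criterion=0.70, **comps))  # 3
```

Running such a function separately for each week’s variance components mirrors the study’s approach of conducting dependability analyses for each of the 3 weeks.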

Perceived usability

To answer the research questions regarding perceived usability of the DASS-21, the results of the clinician and youth self-report questionnaires were descriptively analyzed by calculating means and standard deviations for each item.

Results

Clinicians were asked to rate clients’ participation in sessions on 1–5 scales ranging from “completely disagree” to “completely agree.” Most clinicians reported strong client participation in sessions (M = 4.18, SD = .73). Additionally, clinicians reported that clients attended an average of 1.41 sessions per week (SD = .51) and an average of 7.12 total sessions (SD = 3.69) during each participant’s time enrolled in the study.

Dependability of Data

Because daily DASS-21 total and subscale ratings for 18 youth participants were collected across 3 weeks (7 days/week), the total possible number of daily DASS-21 data points was 1512 (18 youth × 21 days × 4 scores). A total of 484 data points (32%) were missing due to factors such as participants forgetting to complete the daily DASS-21 rating or being unable to access the internet on a particular day. Because the data were considered missing at random, multiple imputation was employed (Enders, 2001). The imputation model included all variables and interactions, and 10 imputed datasets were generated. Possible scores ranged from 0 to 63 for the DASS-T and from 0 to 21 for each subscale. Mean scores across time were 20.37 (SD = 13.75) for the DASS-T, 8.92 (SD = 7.00) for the DASS-D, 6.85 (SD = 6.69) for the DASS-A, and 8.12 (SD = 6.75) for the DASS-S. Mean scores across participants fluctuated only slightly across weeks (i.e., typically by no more than one to two points from one week to the next).

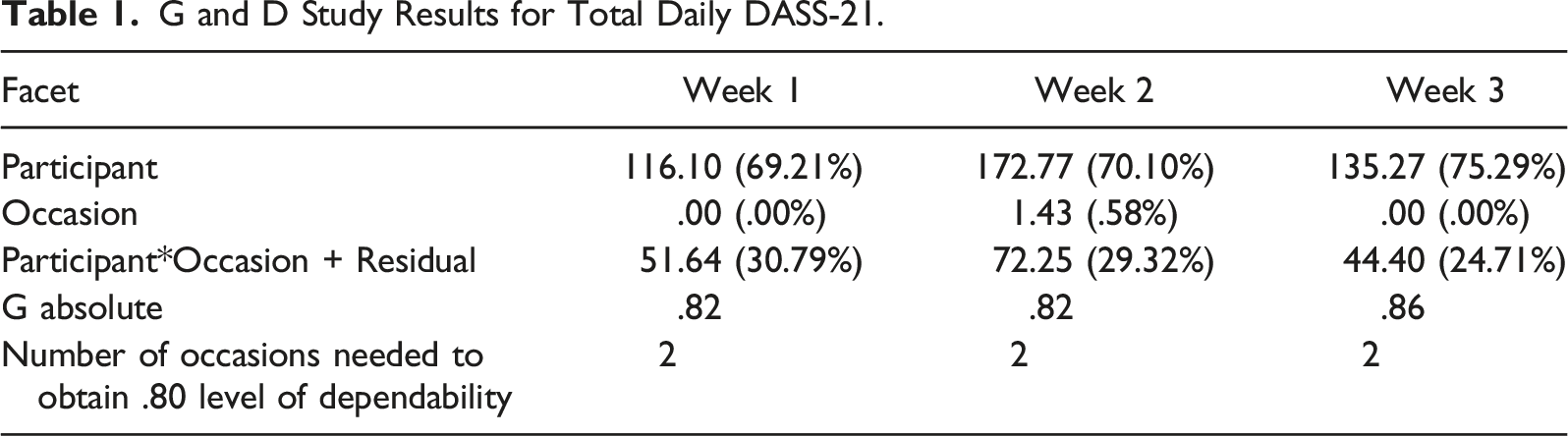

G and D Study Results for Total Daily DASS-21.

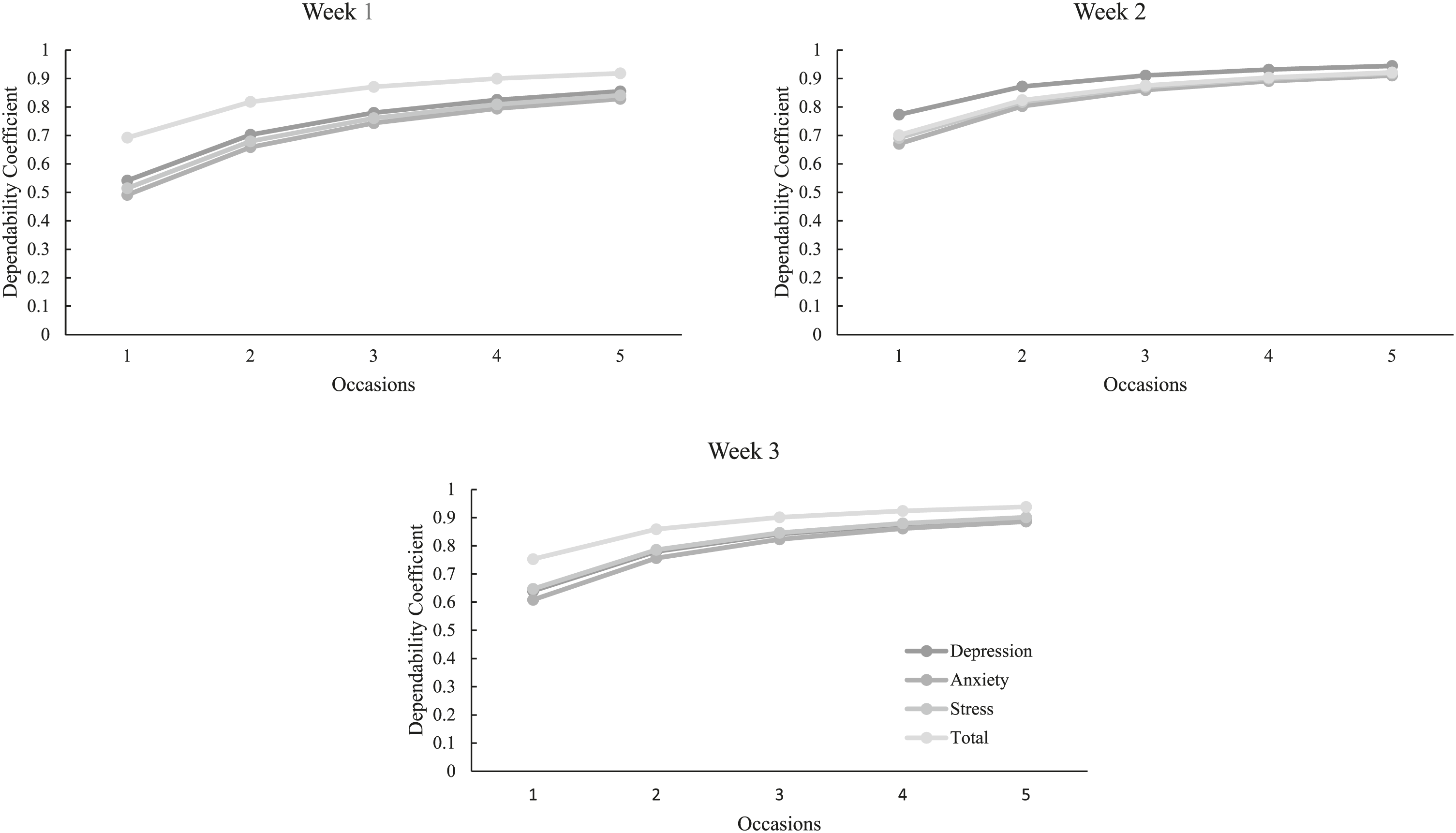

Dependability coefficients by scale and week.

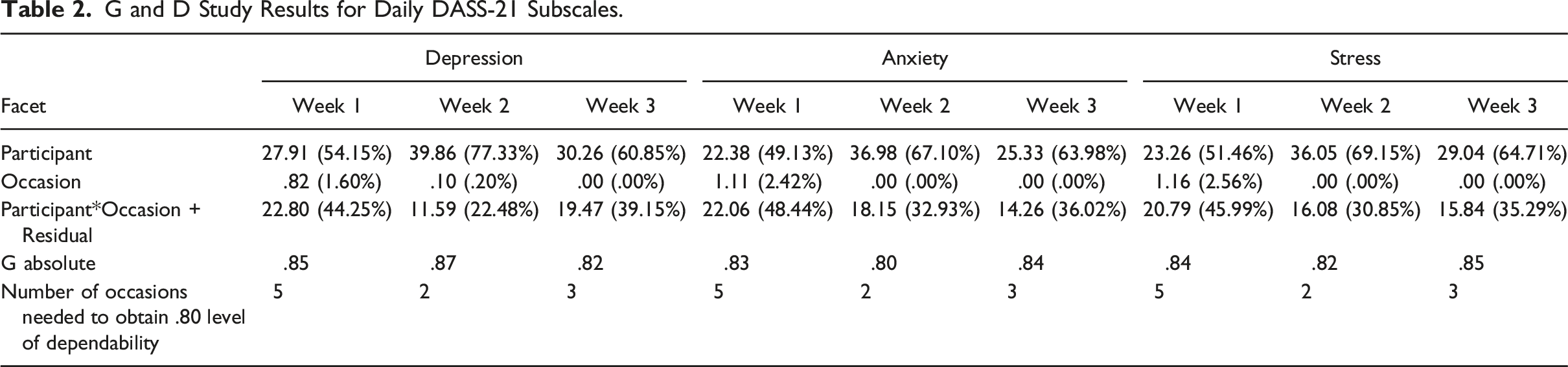

G and D Study Results for Daily DASS-21 Subscales.

Perceived Usability

Overall, youth reported that they perceived the DASS-21 to be usable. Most youth agreed that it was clear what they had to do (M = 3.78, SD = .43), that they understood why their clinician asked them to complete the rating scale (M = 3.44, SD = .71), that they were able to do every step of the ratings (M = 3.39, SD = .78), and that this was a good way to help people (M = 3.28, SD = .67). Generally, youth were not worried that their caregiver would see their answers (M = 1.94, SD = 1.06), nor did they feel that it was too much work for them (M = 1.89, SD = .83) or that the ratings took too long (M = 1.83, SD = .86). However, youth tended to disagree when asked whether they could see themselves using this method again (M = 2.44, SD = .86). In open-ended comments, one youth noted that the ratings became exhausting and that they ignored the reminder alerts, whereas others reported that it would be helpful for their therapist to see the data throughout the week and that they liked the daily ratings more than weekly ratings because daily ratings more accurately captured their symptoms and experiences.

On a scale from 0 (Not at all) to 5 (Very much), clinicians reported receiving moderate training in using self-report measures for progress monitoring (M = 3.54, SD = 1.27) but less training in using the DASS-21 specifically (M = 2.54, SD = 1.33). When asked to rate the reasons they used self-report measures in their practice, clinicians placed the greatest importance on tracking client progress (M = 4.15, SD = .80), the requirement of their work setting (M = 4.08, SD = .86), and determining whether treatment needed to be altered (M = 4.00, SD = .91). The most notable barriers clinicians identified to progress monitoring with the DASS-21 were the extra burden on clients (M = 2.77, SD = 1.64) and client refusal (M = 2.69, SD = 1.38). In contrast, clinicians expressed only slight concern that the measure took too much time to review (M = 1.54, SD = 1.61), was not helpful (M = 1.00, SD = 1.63), or required resources they did not have (M = 1.00, SD = 1.53). In open-ended responses, clinicians noted additional barriers, including insufficient training and experience, difficulty remembering to check on measure completion, and difficulty navigating the technological platform.

Discussion

Many adolescents receive evidence-based interventions for internalizing concerns; however, feasible and dependable progress-monitoring tools are needed to measure response to intervention. One tool with potential promise is the DASS-21, but limited psychometric evidence on the dependability of its data prompted the current study. Given the DASS-21’s potential to detect response to intervention, its brevity and ease of completion, and clinicians’ and youths’ generally positive regard for it, results of this study suggest that the DASS-21 may be a helpful tool to incorporate into adolescent treatment to obtain regular estimates of youth functioning.

One goal of this study was to determine the number of daily self-reported DASS-21 ratings needed to obtain a dependable estimate of depression, anxiety, stress, and total distress within 1 week. Results suggest that asking adolescents to complete the DASS-21 twice per week may be sufficient to obtain a dependable estimate of overall distress (i.e., DASS-T) over the course of a week. Subscale results were slightly more variable but generally suggested that only two to three ratings per week were needed. The exception was Week 1, for which four to five ratings were necessary to obtain a sufficiently dependable estimate. That more than one rating was needed to obtain a dependable estimate is not surprising, given that depression, anxiety, and stress may be closely linked to contextual factors, such as an adolescent feeling down or worried about a perceived negative social interaction or an upcoming stressful test or activity. Such contextual factors may increase day-to-day variation (i.e., reduce stability) in DASS-21 self-report ratings of depression, anxiety, and stress. The greater number of ratings needed during Week 1 may reflect youth adjusting to, and becoming more comfortable with, the rating process; however, this possibility should be explored further.

To date, limited research has examined the stability of adolescents’ self-reports of internalizing behaviors, and that research has focused on summative, longitudinal data rather than progress monitoring. For example, Reitz et al. (2005) found the stability of self-reported internalizing problem behaviors over a 1-year period to be high for both boys and girls in early adolescence. Similarly, in adolescent and young adult samples, Tram and Cole (2006) found the stability of depressive symptoms to be high when they assessed depressive symptoms twice a year from fifth to eighth grade. By contrast, the current study is the first to investigate the stability of youth self-report DASS-21 ratings on a more frequent basis over a shorter span of time.

This study also investigated youth and clinician perceptions of the feasibility, utility, and barriers to progress monitoring with the DASS-21. In general, many youth noted the ease of completing the DASS-21 given the brevity and clarity of the measure, though youth also noted barriers to daily completion, such as feeling that they were asked to complete it too often. Overall, clinicians endorsed the feasibility and utility of the DASS-21 but highlighted some barriers, such as the burden on youth and youth refusal to complete the ratings. Notably, perceptions of usability were based on asking youth to complete daily ratings, whereas results of this study indicate that the DASS-21 does not need to be completed every day to capture a dependable estimate of behavior. It is therefore important to examine whether similar barriers emerge under the administration schedule suggested by these results (i.e., two to three times per week).

Limitations and Future Directions

The results of this study should be viewed in light of several limitations. First, although prompts to complete the self-report DASS-21 were sent daily, data were consistently collected on only 3 to 5 days of the week for most participants, so multiple imputation was used to address missing data. Participants may have experienced meaningfully different or more variable emotional and behavioral states on days when ratings were not completed. It may therefore be important to collect data across all days of the week to determine whether dependability estimates change.

Second, the limited sample size in this study poses threats to generalizability. Although statistical power does not apply and definitive sample size requirements do not exist for GT (Briesch et al., 2014), variance component estimates become more stable with more participants (Smith, 1981). Relatedly, the current study was conducted within outpatient clinics, and many of the youth presented with multiple diagnoses. As a result, the degree to which the obtained dependability estimates would replicate in non-clinical settings and across varying levels of impairment should be explored. In addition, participants were in different phases of treatment: some had just begun treatment, whereas others had already been working with their therapist for many months. This heterogeneity may have influenced the results. For example, it is possible that dependability estimates become more stable, and fewer ratings per week are needed, the longer someone receives treatment. Due to the limited sample, we were unable to examine whether dependability differed by stage of treatment; however, this would be a worthwhile avenue for future research. Finally, dependability estimates in this study were based on youth actively receiving treatment and may differ under baseline conditions. Conducting additional dependability studies under baseline conditions would clarify whether guidelines for data collection vary across phases.

Implications for Future Research and Practice

Given the initial promise that this study shows for using the DASS-21 as a dependable progress-monitoring tool for youth, it is important to consider the implications of using the measure in both research and practice. First, findings of the current study suggest that asking youth to complete the DASS-21 on a single occasion may not provide a dependable estimate of their general levels of depression, anxiety, stress, or total distress throughout the week. Although results suggest that two to three DASS-21 ratings per week may provide a dependable estimate of self-reported behavior, future research should explore whether timing variables (e.g., time of day) influence the generalizability and dependability of ratings. For example, youth rating their distress in the afternoon (e.g., after a potentially tiring school day and with homework looming) may endorse more symptoms than they would in the evening, when they may feel more settled and have had the opportunity to process their emotions and stressors. This information would further support best-practice guidelines for using the DASS-21 self-report to monitor internalizing symptoms.

Second, although two to three ratings per week were typically sufficient to produce a dependable estimate of youth functioning, there may be advantages to collecting DASS-21 data more frequently. For example, DASS-21 data can inform intervention by offering insight into functioning over the previous days or week. This information can be used to initiate an unscheduled meeting (e.g., if responses indicate a concern or reduced functioning), to help structure the next scheduled meeting (e.g., prompting youth to share more about specific ratings), and/or to select specific intervention strategies (e.g., focusing on behavioral activation strategies if an adolescent endorses elevated levels of depression). Furthermore, some youth in the current study reported that daily ratings were helpful and preferred them over weekly ratings, noting the value of their therapist seeing daily data that accurately captured their changing symptoms and experiences. Clinicians may therefore want to have individualized conversations with clients about their preferences regarding the frequency of ratings. Clinicians identified client-related barriers, including client burden and refusal, as the most significant; these are also important topics to discuss with clients before frequent progress monitoring begins. Once data collection has begun, clinicians may also need to modify the progress-monitoring plan to best suit individual clients, treatment plans, and intervention specifications. For example, if a client began rating their symptoms daily but the clinician found that ratings were highly stable across days, the clinician may decide that once-weekly rather than daily ratings are warranted.

Conclusion

Although the developers of the DASS-21 noted that the measure could be administered according to various time frames depending on interest, they provided neither a recommended interval nor examples. Furthermore, the DASS-21 was developed for adult use, and although the developers and other researchers have indicated its utility for assessing youth symptoms (e.g., Moore et al., 2017; Patrick et al., 2010; Silva et al., 2016; Szabó, 2010; Tully et al., 2009; Willemsen et al., 2011), evidence supporting its applicability to adolescents has been mixed. This study therefore provides a unique contribution to the literature regarding the dependability of the DASS-21 and the necessary frequency of administration in an adolescent sample. Additionally, this study highlights the generally positive regard youth and clinicians have toward the use of the DASS-21 as a progress-monitoring measure. Overall, results of this study suggest that the DASS-21 has the potential to be a highly feasible, practical, and effective tool that clinical providers can use to inform treatment planning and monitor the progress of youth over time.

Footnotes

Authors’ Note

This research was completed to fulfill the dissertation requirement for the first author.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.