Abstract

The purpose of this study was to identify the current state of artificial intelligence (AI) policies in U.S. education and propose actionable recommendations through large language model–based topic modeling and Delphi surveys. Out of 12 policy documents released between 2015 and 2025, only two documents (National Center for Learning Disabilities, 2024; W.A. v. Clarksville/Montgomery County School System, 2024) specifically addressed learning disabilities. Policy documents addressing topics such as AI-driven risk assessment, data protection, legal risk management, and ethical guidelines covering other disabilities and general AI in education policy were provided as baselines that could be discussed and validated through the following Delphi surveys involving 17 experts from diverse stakeholder groups. A total of 36 policy items across five thematic categories of inclusive and personalized learning (11 items), ethics, equity, and inclusion (nine items), student empowerment and AI literacy (six items), assessment and research (six items), and educator preparation (four items) were proposed. Based on experts’ ranking of the top 10 policy items important for students, the most essential policy suggestions include student empowerment and AI literacy.

Keywords

Introduction

In today’s technology-rich classroom, students with specific learning disabilities (SLD) are supported through a range of assistive and instructional technologies. Students with SLD have historically used such technologies to support their access to the curriculum and to perform actions that would otherwise be impossible for them (Perelmutter et al., 2017). One policy that gives students access to these technologies is the Assistive Technology Act (2004), which is designed to provide full inclusion for people with disabilities by developing appropriate assistive technology resources. Together, the Assistive Technology Act and the Individuals with Disabilities Education Improvement Act (IDEA, 2004) provide the legal basis for supporting students with disabilities through evidence-based technology that enhances their ability to access the curriculum.

Research has consistently demonstrated that assistive and instructional technologies lead to positive outcomes for students with disabilities, supporting their academic achievement, communication, and independence (Perelmutter et al., 2017). Building on this foundation, recent advances in artificial intelligence (AI) offer new opportunities to enhance these outcomes for students with SLD. Since the public release of AI tools, education systems have rapidly adopted these technologies, resulting in significant shifts in teaching and learning. In today’s educational landscape, classrooms are evolving into a new phase where students can access information instantly, generate content, develop ideas, and receive real-time academic support (UNESCO, 2023).

AI in education involves the use of machine learning algorithms and computational models that simulate human-like decision-making to enhance instructional delivery, student engagement, and learning outcomes (Luckin et al., 2016). AI offers a wide range of benefits to students and teachers, including personalized learning, adaptive instruction, differentiated materials, formative assessment, and language support (Goyibova et al., 2025). For example, AI can adjust reading materials to lower Lexile levels, making content more accessible for students who require individualized support. By tailoring instruction to students’ cognitive and learning profiles, AI enables more targeted and practical teaching (Office of Educational Technology, 2023; UNESCO, 2023).

AI in Education for Students With SLD

As interest in AI grows, emerging trends in special education reveal how these tools are beginning to shape teaching practices and improve outcomes for students with SLD (Bressane et al., 2024; Carter et al., 2023). AI applications like generative AI (e.g., ChatGPT and Sora), adaptive learning systems (e.g., DreamBox and Knewton), and predictive analytics are being leveraged to personalize instruction, deliver real-time feedback, and identify students who may need early intervention (Holmes & Porayska-Pomsta, 2024; Kasneci et al., 2023). Auto-feedback tools embedded in learning platforms (e.g., Khan Academy and ASSISTments) deliver immediate corrective feedback that fosters conceptual understanding and positive student outcomes (Heffernan & Heffernan, 2014). These tools process and generate natural language and images, enabling dialogue, summarization, and customized explanations and feedback in writing tasks for students with disabilities (Marino et al., 2023).

Recent studies have also highlighted the expanding role of AI in supporting students with SLD through enhanced classroom instruction, improved study strategies, and the development of personalized assistive tools (Bressane et al., 2024). In particular, AI-driven tools are being used to streamline the assessment process, provide immediate feedback, reduce bias, and promote fairness (Trajkovski & Hayes, 2025). Furthermore, reducing cognitive overload, improving engagement, and addressing instructional gaps for struggling learners can lead to improvements in a student’s knowledge retention over time (Naseer & Khawaja, 2025). These innovations are particularly critical for students with SLD, who often benefit from tailored instruction, repetition, and consistent feedback, all of which are well supported by AI-driven technologies (Harkins-Brown et al., 2025). Recent advances in AI show great promise in expanding access and improving outcomes for learners with SLD.

While traditional assistive and instructional technologies have been integrated into practice, the rapid emergence of AI tools presents both opportunities and concerns (Akgun & Greenhow, 2022; Marino et al., 2023). These AI platforms are not yet evidence-based and often lack the research needed to validate their effectiveness. The recent influx of AI tools has also left educators, practitioners, students, and families without formal, evidence-based guidelines for implementation. This lack of clear evidence makes it difficult to determine the most effective way to allocate limited time and resources to support students with SLD (Panjwani-Charania & Zhai, 2024).

Ethical Considerations and Legal Risks Related to AI

Although the use of AI is inevitable in this technology-rich era and can potentially benefit students with SLD, the ethical implications and risks posed by AI systems should be carefully considered (Dwivedi et al., 2021). Several ethical concerns arise because existing power structures and biases against marginalized groups are used as input to machine learning models (Krutka et al., 2019), which exacerbates the existing biases against these groups (Tilmes, 2022).

The first ethical challenge surrounding the use of AI with students with SLD is to ensure students’ privacy (Regan & Jesse, 2019). Students with disabilities, including those with SLD, are more vulnerable to privacy and consent issues since their teachers need to handle privacy-sensitive personal information. Considering the recent surge in AI use in education and the limited professional development available, many teachers may lack the necessary skills and training needed to integrate AI ethically into their instructional practices (Alwaqdani, 2025).

The next concern is that AI requires advanced technology tools, which can be costly. Thus, the availability and affordability of technology can exacerbate disparities in AI adoption between wealthy and impoverished schools, creating further digital divides based on the socioeconomic status of students and schools (Katona & Gyonyoru, 2025). Although not specific to students with SLD, the literature so far highlights the need for careful ethical consideration in the use of AI in inclusive education. Gaps related to bias, privacy, teacher training, and the digital divide must be addressed to ensure that AI can benefit students with SLD (e.g., Alwaqdani, 2025; Regan & Jesse, 2019; Tilmes, 2022).

The last concern is overreliance of students on AI. Regardless of a student’s background, AI must be handled carefully to avoid AI doing the work for the student, whereby students graduate with limited skills to function independently (W.A. v. Clarksville/Montgomery County School System, 2024). These growing concerns and rapid shifts call not only for technological adaptation but also for strategic policy responses that harness AI’s potential while mitigating its risks and ensuring its ethical use.

Current Void and Need for AI-Specific Policy

While the use of AI holds great potential to support students with SLD, it is vital to develop AI-specific policies that inform educational planning processes in alignment with IDEA. At the heart of students’ educational planning is the requirement to provide students with evidence-based instruction to meet goals that are “appropriately ambitious in light of [their] circumstances. . .[with] goals [that] may differ, but every child should have the chance to meet challenging objectives” (Endrew F. v. Douglas County School District, 2017, p. 14). To date, while there is minimal case law concerning the use of AI in the PreK–12 setting, there is the notable case of a high school student who was granted access to AI technology yet graduated unable to read. AI was used as an accommodation; unfortunately, the court determined that, despite the use of technology, the student was not provided with a free appropriate public education (FAPE). Appropriate policies addressing the implementation of AI can support the needs of students with SLD while also addressing concerns regarding FAPE, security, and accessibility (Al-Zahrani, 2024; Selvam & Vallejo, 2025).

Developing AI policy guidelines is a challenging task because policy design does not follow a standardized process (Ewoldt et al., 2020). School personnel need to recognize that a critical part of the policy development process involves communicating with collaborators centered on their partnerships and understanding (Allen & Kendeou, 2023). Although relationships are a key component of creating sound policy, it behooves educators “to consider legal mandates as a critical component of successful policy implementation” (Ewoldt et al., 2020, p. 227). Within these volatile and dynamic relationships, establishing a sound policy for AI in education for students with SLD can facilitate ongoing communication among local members and school leaders (Ewoldt et al., 2020).

To fill this void in AI policy in education, there is an immediate need to gather voices from stakeholders and identify urgent concerns and needs related to the use of AI tools with students with SLD. The first step was to analyze existing policy and legal documents, which can provide insight into the current literature. To critically interpret the results of this analysis, it is essential to consider the perspectives of stakeholders, including parents, administrators, educators, students, and researchers. Seeking the perspectives of these experts and guidelines can help validate what is missing in the current policy (McNamara et al., 2025; Shin et al., 2025). The present study addresses this need by seeking expert consensus on key policy considerations, thereby providing a foundation for future decision-making by practitioners, educators, and students regarding the use of AI to enhance outcomes beyond school doors and prepare students for a successful transition into post-secondary education and careers.

Study Purpose and Research Questions

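The purpose of this study is to identify current AI policies in education for students with SLD and propose actionable recommendations on AI policy based on policy document analysis and Delphi surveys. We investigated two research questions, reported in the Results as RQ1 (the existing body of policy in U.S. policy documents related to AI in education) and RQ2 (actionable recommendations of AI policy in education for stakeholders).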

Method

The research team employed comprehensive topic modeling and developed actionable AI policy recommendations through two rounds of Delphi surveys (Beiderbeck et al., 2021; Shin et al., 2025). Prior to conducting a Delphi study, we analyzed the existing AI policy documents to determine the current status of policy topics related to students with SLD. This analysis also aimed to identify gaps between policies related to students with SLD and students with other disabilities, or toward AI in education in general.

This study was completed in five phases: (a) policy document analysis, (b) recruitment of expert panel, (c) developing and conducting Round 1 Delphi survey, (d) developing and conducting Round 2 Delphi survey, and (e) finalizing and disseminating the policy items. In each phase, the research team met virtually via Zoom to discuss the method, data collection, and data analysis, which incorporated both human and generative AI components. Figure S1 in the online supplemental materials depicts the flowchart of this method.

Data Collection and Procedures

Phase 1. Policy Document Analysis

We began by conceptualizing the research and developing preliminary AI policy action items through an analysis of existing policy documents. We employed two strategies to search for policy documents. First, we conducted an online database search through Westlaw, which provides legal research services and records, for publication years from January 2015 to March 2025. The key terms “artificial intelligence*” AND (educat* OR “special educat*” OR instruct* OR teach*) were used. Then, to broadly include any publicly available online policy documents, we conducted an additional search with the same expressions through Google and manually screened web pages and documents until we identified no further relevant data.

The inclusion criteria were as follows: (a) the policy is available to the public and set in the U.S. context; (b) the policy focuses on AI; (c) the document type is a case, regulation, law, act, or policy recommendation from federal or national disability-related organizations; and (d) the policy includes education as one policy consideration. We excluded policies that were based on global contexts, originated outside the United States, were not publicly available, or did not focus on AI in education. During an initial search conducted before the formal database search, we identified only a limited number of policy documents that addressed AI issues for and with individuals with disabilities, particularly those with SLD. Thus, while developing our preliminary survey items, we comprehensively included policy documents that met these inclusion criteria as our foundational policy dataset. Additionally, to generalize our policy recommendations to a broad range of stakeholders in the community, we covered policies inclusively, drawing on nationwide organizations, federal-level regulations, and relevant cases.

Initially, a total of 6,338 records were found via Westlaw. After reviewing the title and policy description in the database, we excluded policy documents that did not meet our criteria from 5,350 secondary sources, 503 proposed and enacted legislations, 118 briefs, 121 proposed and adopted regulations, 33 administrative decisions and guidance, 195 regulations, and 12 cases, and screened 6 records. Then, after the web search through Google, we manually screened 12 additional records. After thoroughly reviewing all 18 policy documents, we removed 6 records (33%) because they did not specifically target AI (n = 3), did not focus on education (n = 2), or did not include policy (n = 1). Thus, we included 12 policy documents in our in-depth text mining analysis to develop the preliminary AI policy items used in Round 1 of the Delphi survey. Inter-rater reliability, calculated as the number of agreed-upon documents divided by the total of 18 documents and multiplied by 100, was 100%.
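The record counts and the percent-agreement calculation described above can be checked with a short sketch. It assumes that the per-type counts are the records excluded at the title and description stage, a reading that reconciles with the reported totals but is not stated outright in the text. The `decisions` list is hypothetical illustration data, not the raters' actual codes.

```python
# Record counts reported for the Westlaw search (taken from the paragraph above).
# Assumption: each per-type count is the number of records excluded at screening.
excluded_by_type = {
    "secondary sources": 5350,
    "proposed and enacted legislation": 503,
    "briefs": 118,
    "proposed and adopted regulations": 121,
    "administrative decisions and guidance": 33,
    "regulations": 195,
    "cases": 12,
}
westlaw_total = 6338
westlaw_screened = westlaw_total - sum(excluded_by_type.values())

web_screened = 12
full_text_reviewed = westlaw_screened + web_screened
removed = {"not AI-specific": 3, "not education-focused": 2, "no policy content": 1}
included = full_text_reviewed - sum(removed.values())


def percent_agreement(rater_a, rater_b):
    """Share of documents on which two raters made the same decision, times 100."""
    if len(rater_a) != len(rater_b):
        raise ValueError("both raters must code the same documents")
    agreed = sum(a == b for a, b in zip(rater_a, rater_b))
    return 100 * agreed / len(rater_a)


# Hypothetical include/exclude decisions for the reviewed documents.
decisions = [True] * included + [False] * sum(removed.values())
print(westlaw_screened, full_text_reviewed, included)
print(percent_agreement(decisions, decisions))
```

Percent agreement is the simplest reliability index; it does not correct for chance agreement, which is worth keeping in mind when interpreting a value of 100%.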

We reviewed the 12 policy documents and manually extracted paragraphs that included “artificial intelligence,” “education,” “instruction,” “teaching,” “learning,” “student,” “children,” or “teacher” into a Google spreadsheet. Based on expert judgment, we also included any contextual paragraphs that supported the AI policy in education and content for individuals with SLD in the policy statements. We coded whether each policy document included terms related to “disability” and “SLD” as separate document-level variables. To assess which policy issues overlapped and which were distinct across documents and topics, the team computed semantic similarities by comparing their vector representations, derived from topic modeling of the corpus on SLD, other disabilities, and general AI in education documents. We extracted relevant policy features using natural language processing (NLP) techniques and topic modeling. In RStudio, the team used the reticulate R package (Ushey et al., 2025) to import Sentence-BERT (i.e., the all-MiniLM-L6-v2 model), which generates 384-dimensional embeddings (Reimers & Gurevych, 2019), and calculated the semantic similarity between policy documents and topics.
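As a minimal sketch of how a semantic similarity score can be derived from two embedding vectors, the example below computes cosine similarity in plain Python. It assumes cosine similarity as the metric, which is the common choice for Sentence-BERT embeddings but is not named in the text. The four-dimensional vectors and their labels are hypothetical stand-ins for the 384-dimensional embeddings.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


# Toy 4-dimensional stand-ins for document embeddings (hypothetical values).
embeddings = {
    "sld_doc": [0.2, 0.7, 0.1, 0.4],
    "other_disability_doc": [0.3, 0.6, 0.2, 0.3],
    "general_doc": [0.9, 0.1, 0.5, 0.0],
}

for name in ("other_disability_doc", "general_doc"):
    score = cosine_similarity(embeddings["sld_doc"], embeddings[name])
    print(f"sld_doc vs {name}: {score:.2f}")
```

Cosine similarity depends only on the direction of the vectors, so documents of very different lengths can still be compared on the same scale.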

Phase 2: Recruitment of Expert Panel

Expert panel participants were recruited through a purposive sampling approach. The recruitment process was guided by the goal of representing a range of perspectives from individuals with both experience and professional expertise in special education, learning disabilities, and AI in education. After receiving research approval from the Institutional Review Board, we invited education experts based on five qualifications: (1) parents or adults with disabilities who advocate for the rights of individuals with learning disabilities; (2) school-based administrators with demonstrated expertise in special education; (3) special education or general education teachers who actively integrate AI tools into their classroom instruction when working with students with learning disabilities; (4) university faculty members with academic expertise at the intersection of special education and AI as a teaching and learning tool for students with SLD; and (5) industry personnel or researchers who conduct research at the intersection of education, AI, and learning diversity.

Experts were identified through academic networks, professional associations, and relevant organizations to create a diverse and knowledgeable panel. To maintain methodological rigor while supporting meaningful consensus-building, we set a minimum target of ten expert participants for our Delphi panel. This decision was guided by prior methodological research on Delphi studies, which indicates that panels comprising at least ten experts can achieve reliable and stable results (Franc et al., 2023). A total of 23 experts were identified and invited via email based on the above criteria. After sending the invitation email to prospective participants, we obtained consent before the two rounds of surveys. In each round, participants had 4 weeks to respond voluntarily through the online Qualtrics system. All data were deidentified and exported as an Excel file from this online platform. Following the completion of Round 1, all 17 experts who responded were invited to participate in Round 2. A total of 14 experts responded and completed Round 2 of the Delphi process.

Phase 3: Developing and Conducting Round 1 Delphi Survey

A total of two rounds of Delphi surveys were conducted. In each round, we provided survey items to be evaluated through Qualtrics using an anonymous online link. Based on the analysis and review of the 12 policy documents in Phase 1, the team developed the Round 1 Delphi survey items, applying the same NLP and topic modeling. To identify the optimal number of topics, we evaluated goodness-of-fit via held-out likelihood (the log probability of topics assigned in the test set, validating topics in the training set), residuals (the difference between the predicted and expected topic predictions), semantic coherence (co-occurrence of words in a topic), and lower bound (lower bound of the marginal log-likelihood) through the searchK() function in the stm R package (Roberts et al., 2019; Rodriguez & Storer, 2020; Shin et al., 2023). Researchers manually reviewed and revised each of the machine-generated survey items, resulting in a total of 32 policy items in the Round 1 Delphi survey. The team then asked experts to rate their level of agreement with each of the 32 policy items and to select the 10 most important policy items. All Likert scale items were based on a 5-point scale (1 = strongly not relevant to 5 = strongly relevant), and one open-ended question asked for experts’ perspectives, concerns, and additional recommendations regarding the use of AI in the context of special education for students with SLD.

Phase 4: Developing and Conducting Round 2 Delphi Survey

In Round 2, items validated by education experts through Round 1 were included. Additionally, newly identified questions were added based on the analysis of open-ended responses in the previous round. Keeping the same quantitative and qualitative survey question formats, researchers included both Likert scale and open-ended written responses. Based on open-ended questions from the Round 1 survey, we applied the same procedures (machine-generated and human-manual validation). We created 14 new items to rate (on a 5-point scale) in Round 2 and included all items retained in both rounds to select the top 10 important items. Additionally, in the last question, we provided experts with an optional question to suggest refinements that would improve the clarity, wording, or specificity of any items.

Phase 5: Finalizing and Disseminating the Policy Items

The final agreed-upon actionable AI policy recommendations were disseminated to the community through a public website at https://mshin77.github.io/AI_policy_LD. The final policy items are downloadable in PDF format, meeting open (i.e., non-proprietary) standards. All R code used for data analysis is publicly available through the Open Science Framework, and all versions are maintained in the GitHub repository.

Data Analysis

Natural Language Processing and Topic Model for Text Data

During the policy document analysis in Phase 1 and the two rounds of Delphi surveys in Phases 3 and 4, we preprocessed the textual data in three steps: (1) constructing a corpus by combining extracted text columns in the spreadsheet; (2) constructing a token object by segmenting policy text into individual words; and (3) constructing a document–feature matrix that displays the tokenized words for each policy document. Special characters, stop words that carry little meaning, and other unnecessary words and phrases were removed. In addition, 10 commonly observed multi-word expressions (e.g., “artificial intelligence”) were retained as compound terms. The quanteda (Benoit et al., 2018) and TextAnalysisR (Shin, 2025) R packages were used in all these procedures.
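The three preprocessing steps can be illustrated with a small, dependency-free sketch. This is not the quanteda or TextAnalysisR pipeline used in the study. The stop-word list, the multi-word expressions, the two example sentences, and all function names are hypothetical and chosen only to show the shape of the data at each step.

```python
import re
from collections import Counter

# Hypothetical stop-word list and multi-word expressions, for illustration only.
STOP_WORDS = {"the", "of", "and", "to", "in", "for", "a", "with", "should", "be"}
MULTIWORD = ["artificial intelligence", "learning disabilities"]


def tokenize(text):
    """Lowercase, join multi-word expressions, drop special characters and stop words."""
    text = text.lower()
    for phrase in MULTIWORD:
        text = text.replace(phrase, phrase.replace(" ", "_"))
    tokens = re.findall(r"[a-z_]+", text)
    return [t for t in tokens if t not in STOP_WORDS]


def document_feature_matrix(corpus):
    """Return (features, rows), where rows[doc][j] counts feature j in that document."""
    counts = {doc_id: Counter(tokenize(text)) for doc_id, text in corpus.items()}
    features = sorted(set().union(*counts.values()))
    rows = {doc_id: [c[f] for f in features] for doc_id, c in counts.items()}
    return features, rows


# Step 1: a corpus keyed by document id (toy sentences, not the study data).
corpus = {
    "doc1": "Artificial intelligence should support students with learning disabilities.",
    "doc2": "Schools should protect the privacy of students in artificial intelligence systems.",
}
# Steps 2 and 3: tokenize each document and build the document-feature matrix.
features, rows = document_feature_matrix(corpus)
print(features)
print(rows)
```

Joining multi-word expressions before tokenizing keeps a phrase such as "artificial intelligence" as one feature instead of two unrelated words.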

We employed a topic modeling technique to derive an interpretable topic structure that informed the development of initial policy items. Topic modeling is a machine learning analysis technique that derives topics using latent Dirichlet allocation, a three-level (i.e., word, topic, and document) hierarchical Bayesian model (Blei et al., 2003). Incorporating structural topic modeling (Roberts et al., 2014), we uncovered the hidden patterns in AI policy documents. We considered whether the document covers the issue of people with disabilities as a document-level variable (Roberts et al., 2019). We reviewed the policy items with a high theta (θ) value, representing the probability of each policy question appearing in an individual topic, using the stm R package (Roberts et al., 2019). Finally, we used semi-automatic item label generation in three steps. The research team first developed system prompts (e.g., see Figure S2) that support the expected behavior of the OpenAI agent and incorporated these into an automated function in the data analysis code. Then, we applied this one-shot prompting to generate survey item questions via OpenAI’s API with the “gpt-3.5-turbo” and “gpt-4o” LLMs (OpenAI, 2025b), weighting the top 10 words extracted for each topic with high beta (β) values. Lastly, we manually reviewed and edited items until the team reached 100% consensus on each question.
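The way β and θ feed item generation can be sketched as follows: rank the vocabulary by β to find the top words for a topic, rank documents by θ to find representative text, and format the weighted words for a prompt. All function names, the toy probabilities, and the prompt wording are hypothetical. The sketch does not reproduce the system prompt in Figure S2 and makes no API call.

```python
def top_terms(beta_row, vocabulary, n=10):
    """Return the n highest-probability (term, beta) pairs for one topic."""
    ranked = sorted(zip(vocabulary, beta_row), key=lambda pair: pair[1], reverse=True)
    return ranked[:n]


def representative_docs(theta, topic, n=3):
    """Return ids of the n documents with the highest proportion for a topic."""
    ranked = sorted(theta, key=lambda doc_id: theta[doc_id][topic], reverse=True)
    return ranked[:n]


def build_user_prompt(terms):
    """Format weighted topic terms as text that could be passed to an LLM."""
    weighted = ", ".join(f"{term} ({beta:.2f})" for term, beta in terms)
    return f"Draft one policy survey item from these topic terms: {weighted}"


# Toy topic-word probabilities (beta) for one topic and document-topic
# proportions (theta) for three documents; all values are hypothetical.
vocabulary = ["privacy", "data", "student", "dyslexia", "teacher"]
beta_topic0 = [0.40, 0.30, 0.15, 0.05, 0.10]
theta = {"doc1": [0.8, 0.2], "doc2": [0.3, 0.7], "doc3": [0.6, 0.4]}

terms = top_terms(beta_topic0, vocabulary, n=3)
print(representative_docs(theta, topic=0, n=2))
print(build_user_prompt(terms))
```

Keeping the β weights in the prompt text lets the language model see which words define the topic most strongly; the generated wording would still need the manual review described above.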

Decision-Making Rule of Selecting Policy Items

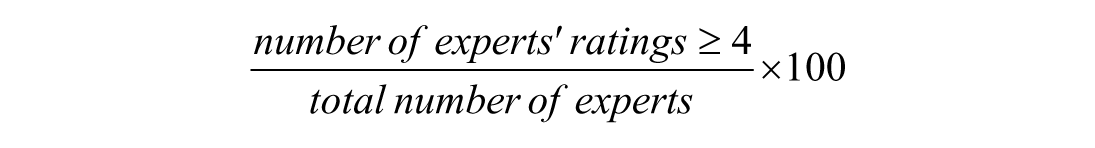

After collecting responses from Rounds 1 and 2 sequentially, researchers applied the following dual criteria each time to decide whether to retain, consider, or revise/remove each item: (a) retain when 75% or more of experts rated the item at least 4 points (out of 5) and the content validity ratio (CVR) was at least 0.60; (b) consider when 70% or more of experts rated the item at least 4 points and the CVR was at least 0.60; (c) consider when 75% or more of experts rated the item at least 4 points and the CVR was less than 0.60; and (d) revise or remove otherwise. For each policy item, expert agreement was calculated as the percentage of experts who rated the item at least 4 points (4 = agree or 5 = strongly agree) on the 5-point Likert scale using the following formula:

$$\text{Agreement (\%)} = \frac{\text{number of experts rating the item} \geq 4}{\text{total number of experts}} \times 100$$

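Additionally, CVR was calculated using the following formula (Lawshe, 1975):

$$\text{CVR} = \frac{n_e - N/2}{N/2}$$

where $n_e$ is the number of experts who rated the item as essential and $N$ is the total number of experts.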
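The dual criteria can be sketched in a few lines. Agreement is the percentage of ratings at or above 4, and Lawshe's CVR is the number of "essential" judgments minus half the panel size, divided by half the panel size. How an "essential" judgment was operationalized is not stated in the text, so `n_essential` is passed in separately as an assumption. The ratings below are hypothetical, and the function names are illustrative.

```python
def percent_agreement(ratings, cutoff=4):
    """Percentage of experts whose rating is at or above the cutoff."""
    return 100 * sum(r >= cutoff for r in ratings) / len(ratings)


def content_validity_ratio(n_essential, n_experts):
    """Lawshe's CVR: (n_e - N/2) / (N/2)."""
    half = n_experts / 2
    return (n_essential - half) / half


def decide(agreement, cvr):
    """Apply criteria (a) through (d) to one item."""
    if agreement >= 75 and cvr >= 0.60:
        return "retain"
    if agreement >= 70 and cvr >= 0.60:
        return "consider"
    if agreement >= 75 and cvr < 0.60:
        return "consider"
    return "revise or remove"


# Hypothetical ratings from a 17-member panel for a single item.
ratings = [5] * 9 + [4] * 5 + [3] * 2 + [2]
agreement = percent_agreement(ratings)
# Assumption: 14 panelists judged the item essential.
cvr = content_validity_ratio(n_essential=14, n_experts=len(ratings))
print(round(agreement, 1), round(cvr, 2), decide(agreement, cvr))
```

CVR ranges from -1 (no expert rates the item essential) to 1 (every expert does), with 0 meaning exactly half the panel.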

Theme Generation and Classification of Actionable Policy Items

Following the two rounds of Delphi surveys, we conducted an additional thematic analysis of the policy items that met the decision-making rules in two steps. We first considered the top 10 policy items rated most important by experts. Then, the team developed two sequenced prompts and used OpenAI’s ChatGPT (“gpt-4o”) for unsupervised zero-shot theme generation covering policy items (OpenAI, 2025a; Vaswani et al., 2017). We reviewed and validated all machine-recommended categories, identifying a total of five themes that could encompass the currently proposed policy items. Four authors reached consensus on these five themes. Then, we re-prompted ChatGPT to reclassify all policy items using these predefined policy themes.

Results

RQ1. Existing Body of Policy in U.S. Policy Documents Related to AI in Education

Table S1 in the online supplemental materials summarizes the policy document characteristics. Of the 12 included policy documents, 4 were federal policy reports, followed by 2 cases, 2 proposed acts, 2 policy reports from national disability-related organizations, 1 regulation, and 1 enacted law. Additionally, of the 12 documents, 7 targeted students with disabilities other than SLD (other disability policy hereafter), 2 specified considerations for students with SLD (SLD policy hereafter), and 3 addressed AI in education without specifying these target groups (general policy hereafter).

As shown in Figure S3, SLD policy documents were slightly more similar to other disability policy documents (M = 0.50, range = 0.29–0.74) than to general policy documents (M = 0.42, range = 0.22–0.54). Document-level similarity analysis identified one distinct SLD policy document (W.A. v. Clarksville/Montgomery County School System, 2024) with minimal semantic overlap (similarity range = 0.22–0.51) with documents in the other categories (other disabilities and general). Conversely, the other SLD policy document (National Center for Learning Disabilities, 2024) exhibited cross-policy content, as demonstrated by relatively higher similarity (similarity range = 0.47–0.74). Additionally, content from three other disability (National Artificial Intelligence Initiative Act of 2020, 2020; Office of Science and Technology Policy, 2022; U.S. Congress, 2019) and three general (Artificial Intelligence Research and Education, 2021; Harris v. Adams, 2024; NSF AI Education Act, 2024) policy documents showed less than 0.6 similarity with SLD policy documents, indicating potential gaps in SLD policy.

Table S2 in the online supplemental materials summarizes emerging policy topics and top term probabilities across three policy domains: SLD, other disabilities, and general AI in education. As shown in Figure S4, the semantic similarities of the policy topics were compared based on the numeric representations of a topic as a vector in a multidimensional space (Reimers & Gurevych, 2019). Three topics (technology in education for students with disabilities, writing and reading technology for students, and monitoring online student activities with AI) represented cross-policy topics, although they overlapped only with other disability policy topics, with moderate to high semantic similarity (maximum similarity = 0.60–0.64). Additionally, seven topics related to AI-based student activity monitoring, student-centered technology for dyslexia support, assistive software for read-aloud and writing support, and workplace accommodations and task assignments were identified as unique SLD policy topics, each displaying low similarity to topics in other domains (maximum similarity = 0.49–0.60). A total of 18 policy topics were identified as missing from SLD policies, despite being present in other disability (n = 8) and general (n = 10) policy domains. Specifically, other disability policy topics missing from the SLD policies included AI-driven risk assessment, adaptive learning systems, data protection and automated processing in AI systems, legal risk management, AI development in educational learning tools, and AI resources for student engagement and support (maximum similarity = 0.31–0.56). Furthermore, topics addressed in general AI in education policies but lacking in SLD policies included AI in higher education programs, AI guidelines for students in schools, AI use and ethical guidelines, and AI-driven student project implementation in schools (maximum similarity = 0.21–0.59).
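The "maximum similarity" values reported above can be read as follows: for each topic outside the SLD domain, take its highest similarity to any SLD topic, and treat a low maximum as a sign that SLD policy does not cover the topic. The sketch below illustrates that reading. The 0.60 cutoff mirrors the threshold mentioned for document-level gaps and is used here as an assumption. The similarity values and function names are hypothetical.

```python
def max_similarity(row):
    """Highest similarity between one topic and any topic in the comparison domain."""
    return max(row)


def flag_gaps(similarity_rows, threshold=0.60):
    """Return topics whose best match in the comparison domain is below the threshold."""
    return [
        topic
        for topic, row in similarity_rows.items()
        if max_similarity(row) < threshold
    ]


# Each row holds one topic's similarity to three SLD topics (hypothetical values).
similarity_to_sld_topics = {
    "AI-driven risk assessment": [0.31, 0.22, 0.28],
    "technology in education for students with disabilities": [0.64, 0.41, 0.37],
}
print(flag_gaps(similarity_to_sld_topics))
```

Using the maximum instead of the mean means a topic counts as covered if even one SLD topic is close to it, which makes the gap list conservative.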

RQ2. Actionable Recommendations of AI Policy in Education for Stakeholders

Table S3 depicts the demographics of expert participants in two rounds of Delphi surveys. The majority of experts were located in the Northeast (41.2%), followed by the South (23.5%), the Midwest (17.6%), and the West (17.6%). The panel consisted of 15 women (88.2%) and 2 men (11.8%). Experts held multiple positions, including university faculty (47.1%), followed by researchers (35.3%), special education teachers (35.3%), parents (29.4%), general education teachers (23.5%), adults with disabilities (11.8%), and industry professionals (11.8%). They engaged at a wide range of school levels, including college or university (52.9%), high school (35.3%), middle school (29.4%), elementary school (41.2%), kindergarten (29.4%), and early childhood or Pre-K (11.8%). Most (94.1%) did not identify as Spanish/Hispanic/Latino; only one expert did. More than half of the experts were White (64.7%), followed by Black/African American (17.6%), Asian (17.6%), and Native Hawaiian or Pacific Islander (5.9%).

In Round 1 of the Delphi study, five of the 32 policy items (Q10, Q17, Q22, Q23, and Q25) were removed based on our predefined decision rule, leaving 27 items retained. This rule incorporated both experts’ consensus levels and CVR thresholds to determine item retention. The removed items did not meet the minimum criteria for agreement (75%) or CVR (.60) and were therefore excluded from further consideration. Figures S5 and S6 depict the distribution of responses for each question. In Round 2, researchers identified 14 additional policy items based on open-ended responses from Round 1 and subsequently removed five of these (Q3, Q4, Q8, Q9, and Q14), leaving nine items retained from this second round. Through these two rounds of surveys, a total of 36 policy items were included in the final actionable recommendations of AI policy in education for students with SLD.

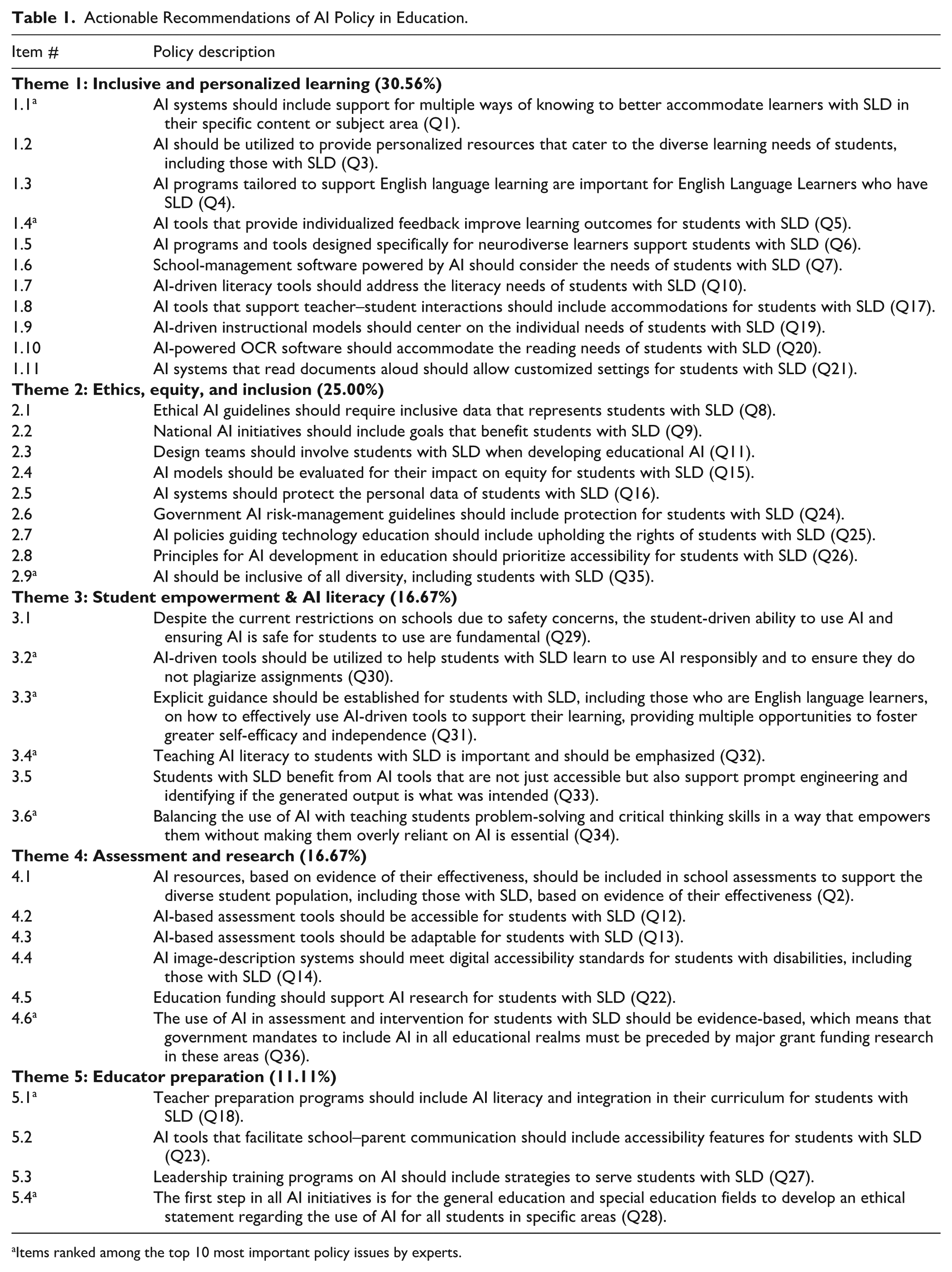

Based on the thematic analysis of the 10 highest-ranked policy items, five overarching themes emerged: (1) Inclusive and Personalized Learning, (2) Ethics, Equity, and Inclusion, (3) Student Empowerment and AI Literacy, (4) Assessment and Research, and (5) Educator Preparation. These themes were ranked by frequency of appearance among the top 10 items, providing a data-driven foundation for our thematic framework. See Table 1 for the categorization of each policy item under its respective themes.

Table 1. Actionable Recommendations of AI Policy in Education.

Items ranked among the top 10 most important policy issues by experts.

Theme 1. Inclusive and Personalized Learning

The most common theme identified by experts was Inclusive and Personalized Learning, which emphasizes the role of AI in tailoring education to accommodate the needs of students with SLD. Experts agreed that AI should be used to provide individualized support that personalizes the learning experience, ensuring students receive instruction tailored to their strengths and challenges (Q3, Q5, and Q21). This includes tools specifically designed for neurodiverse learners (Q6), as well as AI programs that support English learners with SLD by addressing both content comprehension and language development (Q4). Furthermore, AI systems that support multiple ways of knowing and learning (Q1) should be accompanied by tools that provide accessible formats, such as text-to-speech and OCR functions, to enhance reading access (Q10, Q20, and Q21). AI tools should also promote meaningful teacher–student interactions by offering support for real-time feedback and communication (Q17 and Q19), while ensuring students can customize and control how the AI engages with their learning preferences (Q7).

Theme 2. Ethics, Equity, and Inclusion

The second theme was Ethics, Equity, and Inclusion, reflecting expert concern for safeguarding the ethical use of AI and protecting the rights of students with SLD. Experts emphasized the need for clear, sector-wide ethical guidelines, for AI guidelines that require inclusive data representing students with SLD, and for national AI initiatives that explicitly include goals benefiting students with SLD (Q8, Q9, and Q35). Protecting students’ personal data was highlighted as a critical priority, with a call for privacy to be a central consideration in the design and implementation of AI systems (Q16). Experts also emphasized the importance of robust government oversight, advocating for risk management policies and inclusive AI regulations that safeguard the rights of students with SLD (Q24 and Q25). The inclusion of students with SLD in the development of educational AI was viewed as essential for building equitable AI systems that serve all learners (Q11). Experts also recognized the need to evaluate the broader equity impacts of AI for students with SLD (Q15).

Theme 3. Student Empowerment and AI Literacy

The third theme that emerged was Student Empowerment and AI Literacy, highlighting the importance of teaching students with SLD, including those who are English language learners, how to use AI tools effectively, responsibly, ethically, and independently. This includes offering clear guidance on how to engage with these tools in ways that support learning rather than replacing essential cognitive and academic processes (Q31). There was also concern about academic integrity, with calls to ensure that students understand how to avoid plagiarism and misuse of AI in support of their learning (Q30). Fostering self-efficacy, independence, and decision-making in AI usage was viewed as essential, particularly through opportunities that help students develop confidence and competence over time (Q31 and Q34). Incorporating AI literacy into instruction for students with SLD was recognized as critical (Q32), both in its own right and as a means of enabling students to evaluate the accuracy and appropriateness of AI outputs and to strengthen their prompt engineering skills (Q33). The theme also includes ensuring that AI tools used in education are safe, inclusive, and appropriate for student engagement (Q29).

Theme 4. Assessment and Research

The fourth theme, Assessment and Research, emphasizes the importance of utilizing AI tools in evidence-based, accessible, and unbiased ways for students with SLD. Experts noted that AI should be included in school assessments only when there is strong evidence of its effectiveness (Q2). Accessible and adaptable assessment tools are crucial for meeting the diverse needs of students with SLD (Q13 and Q14). There was also a clear call for government policies and mandates related to AI in education to be grounded in rigorous research and supported by major funding initiatives (Q22 and Q36). The expert panel emphasized the importance of assessing the impact of AI tools on equity to ensure equitable outcomes for all students (Q15).

Theme 5. Educator Preparation

The theme of Educator Preparation highlights the importance of ensuring that teachers and school leaders are equipped to effectively integrate AI in ways that support students with SLD. Although it was less frequently mentioned among the top-rated items, experts agreed that preparing educators is essential for the successful and ethical use of AI in schools. They emphasized that teacher preparation programs should include AI literacy and practical strategies for using AI tools to support diverse learners (Q18). Leaders should ensure that communication tools used to facilitate school–home connections are fully accessible (Q23). Additionally, leadership training should address how to implement AI systems that serve students with SLD (Q27), and communication tools powered by AI should include accessibility features to support collaboration with families (Q23).

Discussion

Existing Body of Policy in U.S. Policy Documents Related to AI in Education

Federal law mandates the use of evidence-based practices in interventions for students with disabilities, as well as an assistive technology evaluation, during the development of each student’s Individualized Education Program (IEP; IDEA, 2004). The field of special education provides guidelines for identifying evidence-based practices to guide these decisions (Heyman, 2018). Despite the growing presence of AI in education, a notable absence remains in policies specifically addressing the ethical, equitable, and effective use of these tools for students with SLD.

The identification of SLD-specific policy documents with low similarity scores highlights areas where SLD policies provide unique content, not covered by any other policy documents.

Out of 12 policy documents on AI in education, only two targeted students with SLD. Specifically, one legal case, W.A. v. Clarksville/Montgomery County School System (2024), addressed unique topics related to students with SLD that were not commonly addressed in other disability or general AI in education policy documents. Thus, topics such as student-centered technology for students with dyslexia and the use of AI as assistive technology for reading and writing instruction address specific needs of students with SLD that were previously missing from other AI education policies.

Additionally, topic modeling of the policy documents within each subset (SLD, other disabilities, and general AI in education) enables the identification of unique, missing, or overlapping policy topics, which can inform subsequent policy issues or recommendations for experts to validate in the Delphi surveys that follow. The findings showed that the SLD policies consistently lacked attention to AI-driven risk assessment, data protection, legal risk management, and ethical guidelines, which were primarily addressed in the other domains, namely other disability and general AI in education policy documents. These gaps indicate concerns that are currently missing from policies for students with SLD, and the other domains can provide supplemental guidelines for addressing them. Thus, addressing the ethical considerations and legal risks related to AI (Dwivedi et al., 2021; United Nations, 2021) is necessary in developing comprehensive policy recommendations for students with SLD.

Actionable Recommendations of AI Policy in Education for Stakeholders

The findings of this study highlight a pressing need for actionable AI policy recommendations in education for stakeholders. Based on experts’ ranking of the top 10 policy items that are important for students with SLD, the most essential policy suggestions include student empowerment and AI literacy, inclusive and personalized learning, and educator preparation, followed by ethics, equity, and inclusion, as well as assessment and research. This section presents key findings, discusses limitations, outlines future research, and explores practical implications.

First, student empowerment and AI literacy emerged as the most strongly endorsed theme among expert participants, reflecting a shared belief in the importance of equipping students with SLD to use AI tools effectively, ethically, and independently. The use of AI tools is associated with risks, such as overreliance on AI, which may lead to a decline in critical skills, increased standardization and misinformation, and a loss of human engagement, all of which could interfere with classroom dynamics (Alexopoulos, 2024). However, AI tools can also assist special education teachers in supporting students with SLD by providing resources that promote greater independence and inclusivity (August & Tsaima, 2021).

Given concerns about overreliance on AI and the possible loss of opportunities for skill development, critical thinking, and problem-solving (Alexopoulos, 2024), experts in this study prioritized the need to balance AI use with the development of critical thinking skills. Four of the 36 items were ranked among the top 10, emphasizing the importance of teaching AI literacy by providing explicit instruction and guided opportunities to build self-efficacy, and ensuring students understand responsible use, including how to avoid plagiarism. These priorities align with existing research advocating for the intentional integration of AI literacy into K–12 education to foster digital agency and independent learning (Holmes & Porayska-Pomsta, 2024; UNESCO, 2023).

Second, the expert panel agreed on the importance of AI’s role in supporting inclusive and personalized learning opportunities, ranking two of the 36 items among the top 10. These findings are consistent with previous researchers’ emphasis on this role of AI (Bressane et al., 2024; Carter et al., 2023; Office of Educational Technology, 2023). Furthermore, AI can help adjust task difficulty by providing adaptive testing and content creation that match students’ cognitive profiles (Bressane et al., 2024). Recent studies have also noted AI’s role in study strategies, classroom integration, and personalized assistive tools (Bressane et al., 2024; Panjwani-Charania & Zhai, 2024).

Third, experts in this study identified educator preparation as the third most crucial policy theme, emphasizing the need to train both teachers and administrators to understand the potential of AI in supporting students with SLD and to ensure its ethical and effective use in practice. Thoughtful integration of AI tools might help address challenges faced by special education teachers, such as IEP development and instructional planning aligned with IEP goals. However, no clear guidelines have been established to guide the application of AI in the context of special education, such as the IEP process. Preparing educators to understand these functionalities and effectively use AI is a critical step toward ensuring these tools have a positive and meaningful impact on students with disabilities, including those with SLD (Zhang et al., 2024).

Fourth, experts in this study highlighted that ethics, equity, and inclusion are the foundation for developing responsible AI policies that serve all learners, including students with SLD. They stressed the need for equity in data representation, calling for AI tools to reflect the experiences and needs of students with disabilities. Furthermore, experts emphasized that AI policies must be inclusive of all forms of diversity (e.g., disability, language, culture) and involve students with SLD in the co-design of educational AI tools, a critical step toward developing systems that are both equitable and effective. Clear policies are essential to prevent inequalities, protect data privacy, and mitigate algorithmic biases (UNESCO, 2021). They should enhance student outcomes, improve curriculum accessibility, and reduce educators’ administrative burdens. Responsible AI can customize instruction for diverse learners, including those with disabilities, and give teachers more time for meaningful instruction (UNESCO, 2023).

Last, experts in this study shared the view that the use of AI in assessment and intervention for students with SLD must be grounded in evidence-based practices. Although only one policy item from this theme ranked among the experts’ top 10, it highlights the importance of ensuring that AI-driven assessment tools are not only effective but also equitable and reliable (Trajkovski & Hayes, 2025). This involves validating tools prior to implementation, monitoring their impact on learning outcomes and equity, and ensuring they are accessible for students with SLD. The experts stressed that tools used to measure student progress or inform instruction must be supported by a strong evidence base and designed to address the diverse needs of students with SLD.

Limitations and Future Research

There were three limitations related to the research methodology, each with considerations for future research. First, we focused on students with SLD in educational settings during the two rounds of Delphi surveys and did not validate the AI policy items for other age groups or across the lifespan, including adult learners. Future researchers can validate each of the proposed actionable recommendations for individuals with SLD in other contexts and at different ages. Second, despite a thorough search of policy documents, our online database search was limited to Westlaw. The additional manual search through the Google search engine could also be biased toward popular, high-traffic documents, which may overshadow less prominent perspectives. Thus, future researchers can consider other legal platforms, such as Lexis+ and Bloomberg Law, to further explore U.S. federal and state law sources, as well as other types of databases, including ERIC, Web of Science, or Scopus, which provide access to peer-reviewed research on policy issues. Third, the team applied both AI-supported and manual human validation processes throughout the data collection and analysis procedures. Notably, when we applied one-shot or unsupervised zero-shot prompting for policy item or theme generation (OpenAI, 2025a, 2025b; Vaswani et al., 2017), the immediate machine-recommended output required several rounds of validation and checking by human researchers to correct the content and contextual meaning of the text. Future researchers can replicate the process using the open data analysis R code shared by the research team and continue to co-design human-centered AI research methodology.

Practical Implications

There are three practical implications. First, the current study emphasizes that educators should integrate AI literacy into their practices by explicitly teaching students with disabilities how to use AI tools responsibly and utilizing AI to support—not replace—their learning. This is critical to ensure equitable access to emerging technologies while enhancing students’ independence and avoiding overreliance on AI (Alexopoulos, 2024).

Second, the study highlighted the importance of utilizing AI to personalize instruction and assessment so that differentiated instruction meets the diverse needs of students with SLD, including those who are neurodiverse or multilingual. For instance, AI tools enable users to effectively manage task difficulty and language demands. Students can get immediate feedback, adjust the problem-solving process, and self-monitor their learning behaviors using technology, including AI systems (Naseer & Khawaja, 2025; Zhang et al., 2024). To do so, educators should be trained in how to use AI as both an instructional and a learning scaffold for students with SLD.

Third, in developing a specific policy proposal, we identified policy topics through topic modeling across the three policy domains: SLD, other disabilities, and the general area of AI in education. Both document- and topic-level analyses led to the identification of unique SLD policy issues, overlaps, and current gaps across these domains. Thus, this policy document analysis could serve as the foundation for the Delphi surveys. The interactive topic modeling and thematic analyses, followed by evaluations from the research team and experts, enhance the validation of the emerging themes. The methodological approach demonstrated in the present study emphasized the practice of human-in-the-loop decision-making (Office of Educational Technology, 2023).

Supplemental Material

Supplemental material, sj-docx-1-ldq-10.1177_07319487251412879 for Addressing the Void of AI Policies in Education for Students With Specific Learning Disabilities by Mikyung Shin, Fatmana Deniz, Latesha Watson, Cynthia Dieterich, Kathy B. Ewoldt, Friggita Johnson, Jennifer E. Kong, Sung Hee Lee and April Whitehurst in Learning Disability Quarterly

Footnotes

Acknowledgements

This study was a joint effort of the Information and Communication Technology Committee of the Council for Learning Disabilities. We thank the participating experts for contributing their expertise on the topics discussed.

Author contributions

MS, FD, and LW led the study conceptualization, secured IRB approval, conducted data collection and analysis, and drafted the results and discussion sections. The other authors, listed alphabetically, contributed to data collection, performed the literature review, and supported data analysis and manuscript development. All authors reviewed, validated, and approved the final manuscript.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.
