Abstract

Implicit bias reduction has become an increasingly popular feature of so-called ‘diversity training’ in both public and private organizations. It remains popular despite a lack of robust evidence that lasting changes to individual implicit bias can be achieved. In addition, previous research relies almost entirely on laboratory experiments, so almost nothing is known about the scope of these findings. The present study aims to engage theoretically and empirically in this debate. An implicit bias reduction intervention developed by psychologist Patricia Devine is tested and approached from a sociological perspective. The study is based on a field experiment with social workers at 13 Swedish social assistance units. Beyond testing the scope of this particular intervention, the study highlights some of the methodological problems that come with the unrealistic standards of exact replication within the social sciences. The results show that the intervention increased the participants’ awareness of prejudice and implicit bias, but it did not reduce the participants’ implicit biases. The scientific and social policy consequences of these findings are discussed.

Introduction

Implicit bias – automatic, nondeliberate, favorable, or unfavorable mental representations of social categories such as ethnicity, race, or gender (e.g. Greenwald and Banaji, 1995) – has been shown to predict discrimination in a wide range of settings (for a recent meta-analysis, see Kurdi et al., 2019).

From the perspective of policymakers and employers devoted to increased workplace diversity and equal opportunity, the prospect of implicit bias poses a different challenge than explicitly held attitudes since it is difficult to address biases that people have a limited awareness of. As key actors in the realization of equal opportunity and diversity in the labor market and working life, employers have tried to increase diversity and improve equal opportunity in their organizations in two ways: by improving organizational procedures and routines and by trying to align employees’ attitudes and behavior with those of the organization through antibias/diversity training (Dobbin and Kalev, 2017; Stephens et al., 2020).

Enrolling employees in diversity training signals, both to the outside world and to the employees themselves, that an organization is committed to diversity and equal opportunity. Diversity training has become employers’ favored tool for addressing these issues (Dobbin and Kalev, 2018; Noon, 2018), even though evidence of its positive effects is considered mixed (Bezrukova et al., 2016; Paluck et al., 2021; Paluck and Green, 2009) or even weak (Dobbin and Kalev, 2017, 2018; Stephens et al., 2020).

Diversity training has traditionally focused on explicitly held biases/attitudes, but within the US and British context, implicit bias training is increasingly being incorporated into commercial diversity training toolkits (Dobbin and Kalev, 2018; Noon, 2018; Paluck et al., 2021). A potential problem with this development is that although a wide range of cognitive exercises has been developed to reduce or control implicit bias (for a review, see Lai et al., 2013), it seems difficult to reduce implicit bias beyond the training session (Lai et al., 2016). In addition to this, these techniques have not been thoroughly tested in natural settings, that is, outside of the psychology laboratory (Greenwald et al., 2022b: 20). Thus, little is known about the scope of implicit bias reduction programs, a limitation that this field shares with other laboratory experiment-based social sciences (Jackson and Cox, 2013).

It seems fair to assume that the premature incorporation of implicit bias reduction into diversity training (for a similar view, see Paluck et al., 2021) is, at least in part, a consequence of the general tendency within the scientific community to treat findings from laboratory experiments as generalizable in the absence of results based on other types of data (Henrich et al., 2010; Jackson and Cox, 2013). This issue is not addressed by recent replicability projects (Aarts et al., 2015; Camerer et al., 2018), which merely try to establish whether research findings have occurred by chance (repeatability). From a sociological perspective, generalizability is primarily a question of scope: in what context, with what sample, and under what conditions does an experiment replicate (Freese and Peterson, 2017)? Addressing the question of scope is crucial for the incorporation of implicit bias reduction into diversity training, especially since both of these fields have recently been the subject of rather sharp criticism (e.g. Dobbin and Kalev, 2018; Noon, 2018).

This study aims to contribute to this endeavor by testing the scope of an implicit bias reduction program that has reduced implicit racial bias in the long term among university students: Patricia Devine and colleagues’ ‘Prejudice Habit-Breaking Intervention’ (Devine et al., 2012). The study tests the intervention with respect to implicit bias against Muslims (Rooth, 2010), with Swedish social workers as participants. Social work was chosen to introduce the intervention to participants who are likely to be willing to reduce their implicit biases; social workers are known to be moral actors (e.g. Zacka, 2017), dedicated to helping society’s most vulnerable members (Lipsky, 1980; Meeuwisse et al., 2011).

The study is outlined as follows. First, I provide a contextual background to diversity training and implicit bias reduction in relation to repeatability and scope. Thereafter, the choice to evaluate Devine et al.’s (2012) Prejudice Habit-Breaking Intervention and other design considerations are motivated. This section is followed by an account of data and methods, and a presentation of the results. The study findings are summarized and discussed in the concluding discussion.

Diversity training

Diversity, or antibias training (from here on jointly referred to with the broader term ‘diversity training’) involves educational programs aiming to facilitate positive intergroup relations and to reduce racism and discrimination within organizations. It thus encompasses a variety of different types of prejudice-reducing, equality, and diversity-promoting programs and interventions (for reviews, see Bezrukova et al., 2012, 2016; Paluck et al., 2021; Paluck and Green, 2009). Diversity training was first introduced in organizations on a large scale in the 1970s in the US context, to meet the goals of the antidiscrimination policies that were set to mitigate some of the pervasive racial injustices of American society. Today, it is a frequent component of organizations’ broader diversity management programs, and a thriving industry, in the US (Kelly and Dobbin, 1998), as well as, for instance, in the UK (Noon, 2018).

Despite its popularity among many employers, diversity training has in recent years been subject to criticism. It has been attacked by the political right, who question the value of investing in diversity. 1 However, diversity training has also been criticized by scholars committed to ethnoracial and gender equality. The critical diversity management literature has emphasized that some diversity initiatives, implemented by HR specialists belonging to advantaged social categories, tend to reinforce already existing social hierarchies (e.g. Fernando, 2021). Another line of critique has noted the comparatively scant evidence supporting its efficiency in terms of measurable organizational change or in changing organizational culture. Diversity training has, accordingly, been described as inefficient (Dobbin and Kalev, 2018), ‘pointless’ (Noon, 2018), ‘symbolic’ (Edelman, 1992), or even as ‘harmful’, in the cases where it backfires (Kidder et al., 2004). Drawing on longitudinal data from large private firms in the US, Kalev et al. (2006) show, for one, that enrolling managers in diversity training has not increased the share of female or minority managers.

However, positive effects of less widespread diversity programs may be drowned out in such data if more popular programs are inefficient or even have negative effects. As argued by Dobbin and Kalev (2017), no one, neither diversity proponents within organizations nor employers, really knows which diversity management strategies work, owing to a lack of scientific evaluation. As a result, diversity proponents have at times championed initiatives that have been inefficient, or even harmful. For instance, while mandatory training may intuitively seem like the way forward, since it rounds up the employees who need it the most, Dobbin et al. (2015) found that only voluntary diversity training increased management diversity in American firms.

Assessments of the efficacy of diversity training also vary by outcome measures, that is, by how efficiency is defined and operationalized. When diversity training is evaluated on attitudinal or behavioral measures, meta-reviews have drawn generally positive conclusions about efficiency (see Bezrukova et al., 2016: 1237, on diversity training, and Paluck et al., 2021, on prejudice reduction). The range of interventions and programs covered by such assessments also varies greatly depending on how diversity training is defined. Paluck et al. (2021) categorize only six out of 309 prejudice reduction studies as diversity training, using emic ascription to round up this specific category. Bezrukova et al. (2016: 1233), on the other hand, identified 236 diversity training studies in their review. Thus, it should come as no surprise that such reviews differ in their overall judgment.

While Paluck and colleagues are generally positive about the recent developments in the field of prejudice reduction, they identify a shortage of studies testing for long-term effects (see Bezrukova et al., 2012 for a similar conclusion). This is a significant gap in the literature, since the societal relevance of diversity training clearly hinges on the extent to which effects linger. As already mentioned, the current study specifically aims to contribute toward filling this gap.

Reducing implicit bias

As mentioned in the introduction, ‘implicit bias’ refers to an automatic, nondeliberate, favorable, or unfavorable mental representation of a social category such as ethnicity, race, or gender. 2 Explicit bias, on the other hand, refers to the opposite: a nonautomatic, deliberate, and cognitively effortful evaluation of a phenomenon or category, that is, an explicitly held attitude, stereotype, or belief (Devine, 1989; Fazio and Olson, 2003; Greenwald and Banaji, 1995). The division of cognition into implicit/automatic and explicit/deliberate cognition has predominantly been conceptualized through two distinct cognitive processes: one automatic and associative, the other deliberate and effortful (Gawronski and Bodenhausen, 2006; Greenwald and Banaji, 1995). Implicit evaluations guide behavior when there is no time for deliberation, i.e. during much of everyday life. However, they also affect deliberate behavior, which is predicted by both implicit and explicit evaluations (see, e.g. Kurdi et al., 2019).

Implicit ethnoracial bias is shaped by the larger societal context of individuals (i.e. through various socialization processes); higher exposure to positively connotated images, information, and social interactions with the in-group compared with out-groups results in more positive automatic evaluations of the in-group. Such imbalance may, in principle, be caused by ethnoracial segregation alone since segregation inevitably results in overexposure to the in-group for both advantaged majority groups and disadvantaged minority groups. Segregation – residential, school, and workplace – inevitably leads to a larger number of meaningful social interactions and relationships with in-group members (e.g. Blau, 1977; Mouw and Entwisle, 2006), contributing to a comparatively more nuanced perception of the in-group (e.g. Allport, 1979 [1954]). Exposure to negatively connotated images and information – cultural stereotypes about minorities – affects all members of society, minority individuals included. This is illustrated in the findings that some stigmatized minorities express weaker implicit in-group bias than majority groups (Nosek et al., 2007). Imbalance in exposure to positive and negative information about in- and out-groups, or majority and minority groups, influences concept accessibility by affecting the ease with which individuals automatically link these groups to positive and negative concepts (Brownstein et al., 2020; Payne et al., 2017). The production of such imbalance is a persistent feature of Western societies. Thus, implicit ethnoracial bias results from both socioeconomic structures and dominant culture (Hehman et al., 2018; Quillian, 2008). Concept accessibility may also vary across situational contexts (e.g. encountering a stigmatized minority individual in a dark alley or at a table in an upscale restaurant) (Wittenbrink et al., 2001) and by the recency of a negative exposure (Park et al., 2007), e.g. media focus on a sudden Islamic terror attack.

However, implicit bias cannot be reduced to a macrolevel phenomenon, since social context influences individuals differently. Personality, for instance, is the difference in how people react to situations; it is not a general, context-free individual difference (Brownstein et al., 2020). Thus, while societies vary in terms of the overall levels of implicit biases they ‘produce’, there is also a highly individual component to concept accessibility, as shown in the numerous studies documenting between-person differences in implicit bias, including correlations between implicit racial bias and various demographic characteristics (Nosek et al., 2007) and political sympathies (Greenwald et al., 2009). Attempts to reduce implicit bias are therefore meaningful at both the micro- and the macrolevel. To sum up, implicit out-group bias is a feature of societies, but its salience varies across situations, and it rubs off with varying magnitude at the individual level (Payne et al., 2017).

Implicit cognition has been a tacit component of theories of culture and social inequality in several disciplines of the social sciences (for anthropology, see e.g. D’Andrade, 1995; for sociology, see e.g. Bonilla-Silva, 2017 [2003]; Bourdieu, 1984; Giddens, 1984; Ridgeway, 2011). It is, however, within psychology that implicit bias has received the most attention, and this is where robust techniques for studying it empirically have been developed, such as the implicit association test, the ‘IAT’ (Greenwald et al., 1998). The IAT is a behavioral test in which implicit bias is measured as the differences in response latencies in the pairing of social categories (like photographs of White and Black people) with positively and negatively connotated words. 3 The IAT has received widespread attention for identifying a discrepancy between most test-takers’ implicit and explicit racial attitudes, revealing negative implicit out-group biases in people with explicit egalitarian ethnoracial attitudes across contexts (Nosek et al., 2007). It was not the first test developed to measure implicit bias, but it was with the introduction of the IAT – easy to use, interpret, and replicate – that the field exploded, and it remains the most widely employed measure (for a comparison of different tests, see Bar-Anan and Nosek, 2014; Miles et al., 2019).

Within the implicit bias field, a more activist subfield has emerged, aiming for social change. Expanding on the tradition of documenting and explaining implicit bias (cf. Gagnon et al., 2022), researchers have developed a wide range of cognitive training techniques that aim to enable individuals to take control over unintended thoughts and behavior. These techniques target the automatic cognitive processes that link in- and out-groups with positive and negative associations or propositions, either by retraining implicit evaluations, by shifting the context of evaluation, or by preventing biased responding (Lai et al., 2013).

Most implicit bias-reduction techniques have been designed as brief ‘one-shot interventions’ evaluated in randomized laboratory experiments where: i) all participants (treatment and control groups) take an IAT, ii) the participants under the treatment condition are exposed to the intervention, and iii) all participants take a new IAT. These elements i)–iii) are typically conducted in a single session. The effects of the interventions are assessed through a comparison of group change in implicit bias score, the ‘D-score’. Numerous studies have shown that these one-shot interventions efficiently reduce implicit bias in the short term; several studies have also identified lingering effects (Lai et al., 2013).
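The pre/post comparison described above can be made concrete with a short sketch. It assumes that each participant has one D-score before and one after the intervention, computes each group's mean change, and takes the treatment-minus-control difference. The standardized version divides that difference by the pooled standard deviation of the change scores; this Cohen's d-style convention is an assumption made for illustration, not necessarily how any of the cited studies computed their effect sizes. All function names are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def change_scores(pre: list[float], post: list[float]) -> list[float]:
    """Per-participant change in D-score (post minus pre)."""
    if len(pre) != len(post):
        raise ValueError("pre and post must cover the same participants")
    return [after - before for before, after in zip(pre, post)]


def raw_effect(treat_pre, treat_post, ctrl_pre, ctrl_post) -> float:
    """Treatment-minus-control difference in mean D-score change.

    Negative values mean that implicit bias fell more (or rose less)
    in the treatment group than in the control group.
    """
    treat_change = mean(change_scores(treat_pre, treat_post))
    ctrl_change = mean(change_scores(ctrl_pre, ctrl_post))
    return treat_change - ctrl_change


def standardized_effect(treat_pre, treat_post, ctrl_pre, ctrl_post) -> float:
    """Raw effect divided by the pooled SD of the change scores (assumed convention)."""
    t = change_scores(treat_pre, treat_post)
    c = change_scores(ctrl_pre, ctrl_post)
    pooled_var = ((len(t) - 1) * stdev(t) ** 2 + (len(c) - 1) * stdev(c) ** 2) / (
        len(t) + len(c) - 2
    )
    return (mean(t) - mean(c)) / sqrt(pooled_var)
```

With invented numbers (not data from this or any cited study), `raw_effect([0.5, 0.4], [0.3, 0.3], [0.5, 0.4], [0.5, 0.5])` returns approximately -0.2: the treatment group's mean D-score fell by 0.15 while the control group's rose by 0.05.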

Another type of intervention posits that for implicit bias reduction to have lingering effects, interventions or training must be more long-lasting and effortful. This is in line with previous claims that concept accessibility is not affected in the long term by single events (Forscher et al., 2019; Greenwald et al., 2022b; Lai et al., 2016). In a field experiment, Rudman et al. (2001) showed that participation in a seminar series on intergroup relations (reducing bias by persuasion) had reduced implicit bias among the participants at the end of the semester. In a natural experiment in which college students were randomly assigned White or Black roommates (reducing bias by increasing intergroup contact), Shook and Fazio (2008) showed that White college students who were assigned Black roommates had reduced levels of implicit biases at the end of the semester. Dasgupta and Asgari (2004) showed that undergraduate women who had more contact with female instructors (evaluative conditioning, i.e. reducing bias by changing evaluative associations) had weaker implicit associations between men and leadership, 1 year later. Finally, Devine et al.’s (2012) Prejudice Habit-Breaking Intervention program reduced students’ implicit racial bias through the participation in an implicit bias-reduction workshop and through training with the techniques learned during the workshop over several weeks. This study indicates that the techniques used in one-shot interventions may have lingering effects, if practiced repeatedly.

Repeatability and scope of implicit bias reduction

While a wide range of implicit bias reduction strategies have been developed, evidence of long-term effects is scarce. First, only 4% of the studies covered in the meta-review by Forscher et al. (2019) measure long-term implicit bias change. Second, in a study designed as a ‘research contest’, several research groups collaborated to compare the long-term efficiency of eight one-shot techniques that had previously been identified as efficient in the short term (Lai et al., 2014, 2016). The results were discouraging: none of the interventions reduced implicit bias beyond 24 hours.

From the perspective of common sense, it is not surprising that brief interventions do not have the power to permanently alter the effects of long-term socialization processes. To quote US Vice-President Kamala Harris: ‘there is no vaccine for racism’. 4 Just as a sudden terror attack can temporarily enhance the accessibility of the association ‘Islam – Bad’, as discussed earlier, single-occasion positive out-group exposure will also only affect concept accessibility momentarily. If it is at all possible to permanently reduce implicit bias, one should look to interventions that are longer lasting or that require more effort on the part of the participants. There have been almost no replication attempts of such interventions, with the exception of Devine et al.’s (2012) Prejudice Habit-Breaking Intervention (from here on abbreviated as PHBI). Both Forscher et al. (2017) and Carnes et al. (2015) failed to replicate the long-term implicit bias malleability findings of Devine et al. (2012), but both studies reported changes to explicit and/or behavioral measures (which are also part of the PHBI’s outcome measures). However, Lai and Lisnek (2023) did not find lasting effects on self-report measures in a diversity training program for police officers that was strongly inspired by the PHBI. Interestingly, a recent working paper from the Devine group claims to have also successfully replicated the implicit bias reduction component (Cox et al., 2022, preprint). Since this study is not yet published, these results are treated here as tentative evidence. In sum, given this rather mixed bag of evidence, both the repeatability and the scope of the PHBI when it comes to altering implicit measures are currently uncertain (for a critical discussion of implicit bias reduction, see Greenwald et al., 2022b).

Laboratory experiments are often criticized for their artificiality and for relying on homogeneous convenience samples, leaving the generalizability (e.g. Borgatta and Bohrnstedt, 1974; Henrich et al., 2010), or scope (Freese and Peterson, 2017), of these findings unaddressed. A major strength of the long-lasting interventions is that they are typically conducted in the field (albeit in educational settings), increasing the likelihood of a wider scope. Nonetheless, relying on student samples remains a severe limitation. In a meta-review comprising 7000 studies, Peterson (2001) found that US college students differ significantly from other populations on approximately 50% of the compared variables, attitude change included. Students may thus deviate from other samples in both their ability and their motivation to reduce implicit bias. Hence, before incorporating any intervention that has been successful within the educational setting into diversity training, or before basing social policy on successful attempts to reduce implicit bias, the scope of these interventions has to be addressed.

Design considerations

Choosing an intervention and a workplace context

Enrolling all employees in diversity training is expensive, requiring organizations to pause regular activities. Therefore, it is typically conducted only occasionally (e.g. Dobbin and Kalev, 2018), and the courses offered to organizations by diversity consultants are often ‘light touch interventions’ (Paluck et al., 2021): 2-hour lectures or half-day workshops. Against this backdrop, organizational constraints rule out most of the long-term interventions described above as unviable for implementation at workplaces, with the exception of Devine et al.’s (2012) PHBI. It has an on-the-job-training format by design, 5 and it is highlighted by Dobbin and Kalev (2018) as one of the most promising implicit bias reduction programs for implementation in organizations.

The PHBI, which targets both explicit and implicit attitudes, likens implicit bias to a deeply entrenched habit that has been developed through lifelong socialization processes, which is why overcoming implicit bias must be a long-term or effortful process. The key elements of the PHBI involve learning about the contexts that activate bias, how to replace biased responses with nonbiased ones, and how to discover biases and practice nonbiased responses. In other words, overcoming implicit bias is a matter of developing an ‘unbiased habit’.

In the Devine et al. (2012) study, all participants received feedback on their individual IAT scores to create awareness of their own implicit biases and to spur an incentive to reduce them. The treatment group thereafter participated in the PHBI program in which they were informed about what implicit bias is, and how it is related to behavior. Thereafter, the program described five established implicit bias-reducing strategies and how to use them in everyday life. The strategies were: i) stereotype replacement, ii) counter-stereotypic imaging, iii) individuating, iv) perspective-taking, and v) increasing contact. Thus, the program includes both strategies aiming at the retraining of implicit evaluations (i, ii, and v) and preventing biased responding (iii and iv). The participants were encouraged to work with the strategies as best they could and to report on their use of these strategies. The effect of the intervention was measured through a treatment – control group comparison of implicit (IAT) and explicit racial attitude change, comparing measures prior to participation with measures 4 and 8 weeks after the intervention. The experiment group successfully reduced their implicit racial biases compared with the control group with an effect size of −0.19. There were also improvements to explicit measures, such as increased personal awareness of one’s own implicit bias.

The PHBI hinges upon the participants being motivated to engage with implicit bias reduction training. There are several occupational contexts where such motivation can be assumed to be high; social work was chosen since it is a profession dedicated to helping society’s most vulnerable members. Social workers are, by education as well as experience, generally well aware that they are gatekeepers of society’s scarce resources, and that their evaluations impact their clients’ life chances (e.g. Lipsky, 1980; Meeuwisse et al., 2011; Zacka, 2017). Thus, social workers seemed like a group that would find implicit bias reduction important.

Implementation

Ideally, a study of scope should keep the experiment design identical to the design that it evaluates. However, this is virtually impossible in practice, since a study designed to fit one specific context rarely fits another context perfectly (Nosek and Errington, 2020; see also Fernando, 2021 for a critical discussion on perceiving diversity management programs as universally implementable). This study is no exception; several minor deviations from the original design were deemed necessary to fit the new context. First, since the primary objective of this article is to study the possibility of reducing implicit bias, I have focused on D-score change. Second, several of the explicit measures of the PHBI did not translate well into the Swedish language and context and have therefore been replaced by other measures. 6

Adapting the intervention to the Swedish context

Sweden is a country of rather recent immigration from a wide range of countries, resulting in an ethnically heterogeneous population. Thus, ethnoracial relations do not revolve around the White-Black distinction in the way that they do in the US, the context in which the IAT was initially developed. This study has identified Muslims originating from the larger Middle East as the main target of xenophobic attitudes in Sweden, which has also been the choice of previous research on implicit bias in the Swedish context (see Rooth, 2010). Thus, the racial White/Black IAT has been replaced by an ethnoreligious Swedish/Muslim IAT. Changing the in-group and out-group categories should have little bearing on the intervention’s potential to reduce implicit bias (Petty et al., 2007). However, in translating the intervention to concern bias against Muslims, one technique had to be replaced: counter-stereotypic imaging, which aims to strengthen the concept accessibility between the out-group and ‘Good’ by having participants think about either admired famous people or appreciated people from their own social network who are African American. There are very few, if any, widely admired Muslims in Sweden, which is in part a sign of the severity of the stigmatization of Muslims (Gardell, 2010) and in part due to Sweden’s short history as a multicultural society. Since it would be difficult for the participants to use this strategy, it was replaced with a similar technique, implementation intentions (Lai et al., 2016). This technique prevents biased responding by training an automatic positive response to out-groups, for example, ‘Each time I see or meet a person who is a Muslim, I shall think “Good”’.

Adapting the intervention to the workplace setting of social work

Receiving individual IAT feedback about having implicit out-group bias is an unpleasant experience that challenges the self-image of people with explicitly egalitarian attitudes, often resulting in defensive reactions (Schlachter and Rolf, 2017). In the context of social work, learning about individual implicit bias may also be perceived as a threat to social workers’ professionalism as public officers, further increasing the risk of defensive reactions. Efforts to ameliorate such defensive responses to the IAT were made in two ways. First, whereas the original PHBI provided the participants with individual feedback on their D-scores to enhance participant motivation, the participants in this study were instead informed of the average D-score of their work unit, motivating them to reduce implicit bias together. Providing feedback at the group level also seemed more ethical, since the first IAT was part of mandatory training (the storing of their data was, of course, voluntary).

A second concern with defensive reactions to the IAT is their potential impact on the quality of the data, both in terms of attrition and in terms of ‘noise’ in the outcome measure. Previous research has shown that ‘test anxiety’ (Schmidt and Rinolo, 1999) impacts test performance negatively, that is, participants’ true skills are obscured by stress at the moment of measurement. The study therefore tried to prevent test anxiety by informing the participants that most people acquire some level of implicit bias through exposure to cultural stereotypes, that is, that it is not their ‘fault’ if they have implicit bias.

Finally, to increase the chances that the managers accepted participation and to lower the risk of high attrition, the training period was reduced from 4 to 2 weeks.

Study design and data collection

The study is a controlled field experiment in which all participants (treatment and control groups) received a link by email to an online survey that included a Swedish – Muslim IAT and a questionnaire with demographic questions and other self-reported measures. One to five days after distribution of the survey, the research team visited the workplaces that had been assigned to the treatment condition. After an introduction to the research project, the participants completed the PHBI program individually on their computers. The program combines written text, audio, and short written assignments. When the participants had completed the intervention, the research team and the participants met for a Q&A. The participants were encouraged to practice the five strategies as much as possible for 2 weeks. A ‘diary’ was distributed in which they were asked to document their use of the techniques. They also had access to a website where they could take the program again and read summaries of the five strategies. The website also had online material with which they could practice some of the strategies, and a chat function through which they could communicate with the research team. When the 2 weeks had passed, the participants received an email with a link to Survey 2, which included a new, identical IAT. Some items from Survey 1 recurred to measure change, and some were new, including questions on participant activity and an anxious personality scale to capture the prevalence of test stress/stereotype threat. An open-ended questionnaire item, in which the participants could give feedback on their experiences of participating in the study, was used to validate the research findings. Participation in the control condition involved filling out Survey 1 and Survey 2 (minus the questions directly related to the intervention) within the same time frame as the treatment groups. After completing Survey 2, the control groups were given access to the project website.

Data were collected from the fall of 2017 until the fall of 2018. An email with information about the research project and an invitation to participate in the study was first sent to randomly selected social assistance offices in the two chosen metropolitan areas. Thereafter, the office managers were contacted by telephone. The original intent was to randomly assign social assistance offices to the experiment conditions. This procedure was mostly followed, but in a few cases, the office managers only agreed to participate if they were assigned the treatment condition because they wanted the employees to get the workshop. 7 Since recruitment to this rather time-consuming study was difficult, I accepted this. However, there is no within-organization selection: all participants in these municipalities were assigned the treatment condition, and the managers did not assign specific work units to different experiment conditions. Allocation to experimental conditions has thus followed a near-random assignment approach, that is, assignment has been arbitrary rather than random (Paluck and Green, 2009).
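For comparison, the originally intended procedure, random assignment of whole work units (clusters) to conditions, and the deviation from it can be sketched as follows. The sketch is hypothetical: the unit labels, the seed, and the `forced_treatment` parameter (standing in for offices whose managers only agreed to participate if assigned the treatment condition) are illustrative assumptions, and the actual number of such offices is not reproduced here. Units in `forced_treatment` bypass the lottery, which is precisely what makes the resulting allocation near-random rather than random.

```python
import random


def assign_units(units, seed, forced_treatment=()):
    """Assign whole work units to 'treatment' or 'control'.

    Units listed in forced_treatment are placed in the treatment condition
    without randomization. The remaining units are shuffled with a seeded
    RNG and split in half; with an odd number, the extra unit goes to control.
    """
    forced = set(forced_treatment)
    unknown = forced - set(units)
    if unknown:
        raise ValueError(f"unknown units: {sorted(unknown)}")
    rng = random.Random(seed)  # seeded so the lottery can be reproduced
    free = [u for u in units if u not in forced]
    rng.shuffle(free)
    half = len(free) // 2
    assignment = {u: "treatment" for u in units if u in forced}
    for i, u in enumerate(free):
        assignment[u] = "treatment" if i < half else "control"
    return assignment
```

Usage with hypothetical labels for 13 work units: `assign_units([f"unit_{i:02d}" for i in range(1, 14)], seed=1)` places 6 units in treatment and 7 in control; passing two units in `forced_treatment` yields 7 treatment units, only 5 of which were chosen by lottery.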

It was thus the managers who agreed to participation, not the individual social assistance officers. From the perspective of the managers in the treatment condition, attending the workshop was mandatory diversity training for their employees, but taking part as a research participant was, of course, voluntary. The participants actively chose whether to consent to the storage of their data when entering and when completing participation. In practice, participants could also opt out of the study by not filling out the second survey.

Thirteen work units in 8 municipalities (social assistance in Sweden is organized at the municipal level) located in metropolitan areas participated in the study. The work units varied in size, from around 10 to 40 employees. The aim was to focus on social work units that handle social assistance (i.e. financial support). The larger municipalities had work units focused on social assistance alone, whereas the smaller ones had work units that were not specialized (i.e. the same work unit dealt with social assistance and other social problems).

One hundred twenty-five social workers completed the entire study. The participant sample is heterogeneous in terms of demographic characteristics: it varies in age, 62% are female (a high share of males for social work), 75% report having at least a bachelor’s degree, and 13% are foreign-born. As in many longitudinal experiments, attrition was substantial: 224 participants filled out Survey 1, which corresponds to an attrition rate of 44%. The drop-out analysis reveals no major differences in demographic characteristics between those who dropped out and those who completed participation. For instance, the mean baseline D-score was similar for those who dropped out and those who completed participation (0.42 and 0.47, respectively; see Supplemental Material Appendix B for more details).
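The attrition figure follows directly from the two counts reported above: it is the share of Survey 1 respondents who did not go on to complete the study. A minimal Python check, using only those two counts (the function name is illustrative and not taken from the study materials):

```python
def attrition_rate(n_started: int, n_completed: int) -> float:
    """Share of participants who filled out Survey 1 but did not complete the study."""
    if n_started <= 0 or not 0 <= n_completed <= n_started:
        raise ValueError("need n_started > 0 and 0 <= n_completed <= n_started")
    return (n_started - n_completed) / n_started


# Counts reported in the text: 224 filled out Survey 1, 125 completed the study.
rate = attrition_rate(224, 125)
print(f"{224 - 125} dropped out; attrition = {rate:.1%}")  # 99 dropped out; attrition = 44.2%
```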

Constructing the IAT

The IAT was constructed following the recommendations of Greenwald et al. (2003) and thus included seven blocks. Four of these blocks, each with 40 trials, were used to calculate the participants’ D-scores. In the trials, the attributes (positive, negative) and the targets (in-group, out-group) were strictly alternated. In the IAT, Muslim and Swedish identity were signaled through names. The strength of implicit bias was computed with the algorithm recommended by Greenwald et al. (2003). A positive D-score implies faster response times when Swedish names co-occur with positively connotated words and Muslim names co-occur with negatively connotated words than in the reverse pairing (see Supplemental Material Appendix A for a more detailed account of the procedure and for the names and words used). To avoid unnecessary noise (the sample is rather small), the IATs were not counterbalanced. That is, the order in which the pairing tasks (either Muslim name + Good and Swedish name + Bad, or Muslim name + Bad and Swedish name + Good) occurred was not randomized (see Greenwald et al., 2003). All participants began with the ‘incompatible’ block (Swedish name + Bad, Muslim name + Good). Hence, the average D-score may be somewhat higher than it otherwise would have been. Since the study focuses on implicit bias change, the primary concern has been to reduce noise, not to identify the participants’ ‘true’ D-scores (which are also influenced by other features of the test; Bursell and Olsson, 2020).
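The core of the Greenwald et al. (2003) scoring algorithm can be sketched in a few lines. This is a simplified illustration, not the scoring code used in the study. It assumes latencies in milliseconds, assumes that error trials already include the time needed to correct the error (a built-in error penalty), and omits parts of the published algorithm, such as the variant that adds a fixed penalty to error latencies when correction is not required. Function and argument names are hypothetical. The sign follows the convention in the text: a positive D means faster responses in the Swedish + Good / Muslim + Bad pairing.

```python
import statistics
from typing import Optional, Sequence


def iat_d_score(
    compat_practice: Sequence[float],    # Swedish + Good / Muslim + Bad, practice block
    compat_test: Sequence[float],        # same pairing, test block
    incompat_practice: Sequence[float],  # Swedish + Bad / Muslim + Good, practice block
    incompat_test: Sequence[float],      # same pairing, test block
    max_latency_ms: float = 10_000,
    fast_ms: float = 300,
    max_fast_share: float = 0.10,
) -> Optional[float]:
    """Simplified D-score; returns None if the participant would be excluded."""
    # Drop individual trials slower than 10,000 ms.
    cp, ct, ip, it = (
        [t for t in block if t <= max_latency_ms]
        for block in (compat_practice, compat_test, incompat_practice, incompat_test)
    )
    all_trials = cp + ct + ip + it
    if not all_trials:
        return None
    # Exclude participants with more than 10% of trials faster than 300 ms.
    if sum(t < fast_ms for t in all_trials) / len(all_trials) > max_fast_share:
        return None

    def scaled_difference(compat, incompat):
        # Mean latency difference divided by the "inclusive" SD of all trials in both blocks.
        inclusive_sd = statistics.stdev(compat + incompat)
        return (statistics.mean(incompat) - statistics.mean(compat)) / inclusive_sd

    # Average the scaled differences for the practice and the test blocks.
    return (scaled_difference(cp, ip) + scaled_difference(ct, it)) / 2


compat = [600, 650, 700, 620]
incompat = [800, 850, 900, 820]
print(round(iat_d_score(compat, compat, incompat, incompat), 2))
```

Scaling by the inclusive standard deviation, rather than using raw millisecond differences, is what makes D-scores comparable across participants who differ in overall response speed.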

Variables and statistical analysis

The primary focus of the empirical analyses is the effect of participating in the treatment condition on the D-score. Analyses are also conducted on two explicit attitudinal outcome measures: awareness of prejudice at the participants’ workplace (APW) and awareness of individual prejudice (AIP). Both are indexes built from attitudinal items on the respective topic. APW was measured at both t1 and t2, so that change can be captured, whereas AIP was measured only at t2 (see Supplemental Material Appendix C). The explanatory variable is the experimental condition, labeled ‘Treatment’ in the analysis, with ‘Control’ as the reference category. The effect of participating in the treatment condition on post-test D-scores is assessed with ANCOVA, with baseline D-scores as the covariate, in line with the recommendations of Greenwald et al. (2022a).
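To make the ANCOVA adjustment concrete: with one covariate and a binary treatment indicator, the treatment coefficient in the model post = a + b·baseline + c·treatment is the raw post-test difference between the groups, corrected by the pooled within-group slope times the baseline difference. The plain-Python sketch below computes these two point estimates only (no standard errors or p-values); it assumes a common slope in both groups, uses hypothetical names, and is not the analysis code used in the study.

```python
from statistics import mean
from typing import Sequence, Tuple


def ancova_treatment_effect(
    baseline_control: Sequence[float], post_control: Sequence[float],
    baseline_treat: Sequence[float], post_treat: Sequence[float],
) -> Tuple[float, float]:
    """Return (pooled within-group slope, covariate-adjusted treatment effect).

    These equal the OLS coefficients of baseline and treatment in
    post = a + b * baseline + c * treatment + error (common slope, no interaction).
    """
    def centered_sums(x, y):
        mx, my = mean(x), mean(y)
        sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
        sxx = sum((xi - mx) ** 2 for xi in x)
        return sxy, sxx

    sxy_c, sxx_c = centered_sums(baseline_control, post_control)
    sxy_t, sxx_t = centered_sums(baseline_treat, post_treat)
    slope = (sxy_c + sxy_t) / (sxx_c + sxx_t)  # pooled within-group slope
    # Raw post-test difference, corrected for the baseline difference between groups.
    effect = (mean(post_treat) - mean(post_control)) - slope * (
        mean(baseline_treat) - mean(baseline_control)
    )
    return slope, effect
```

For example, if the treatment group starts 0.1 higher at baseline and the pooled slope is 0.5, then 0.05 of any raw post-test difference is attributed to the baseline imbalance rather than to the treatment.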

Results

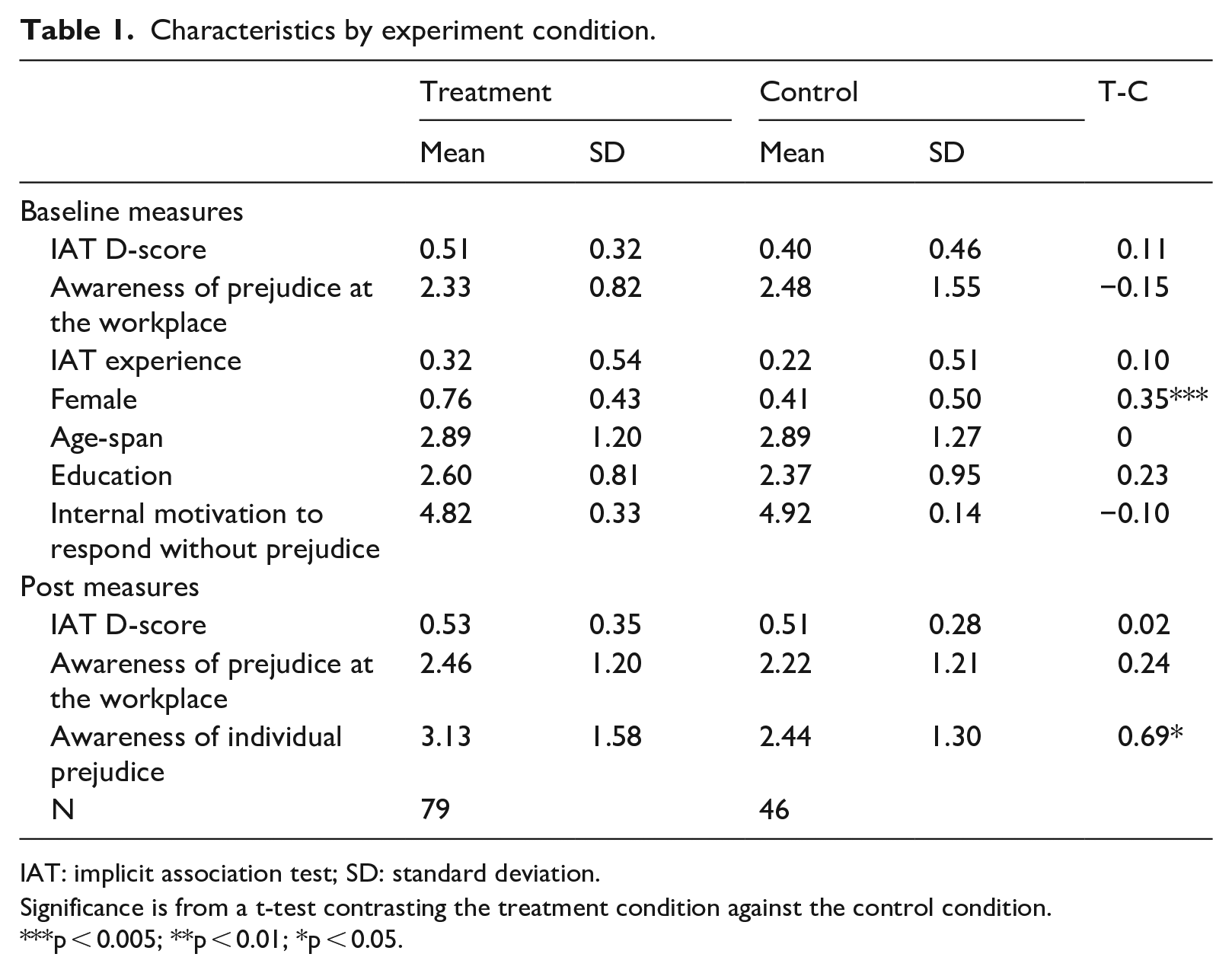

The descriptive characteristics of the treatment and control groups are presented in Table 1: the upper panel displays the baseline measures, and the lower panel displays the measures collected 2 weeks after the intervention. In non-random samples, there are often some baseline differences in characteristics between the experimental conditions. In this study, there is a statistically significant gender difference between the conditions: there are more women in the treatment group than in the control group. However, the pairwise correlations in Table 2 show that gender does not correlate significantly with any other study variable. Thus, it is unlikely that the gender difference between the groups affects the results in any substantial way. Table 1 also reveals a difference in baseline D-scores, but it is not statistically significant (not even at the 10% level).

Characteristics by experiment condition.

IAT: implicit association test; SD: standard deviation.

Significance is from a t-test contrasting the treatment condition against the control condition.

***p < 0.005; **p < 0.01; *p < 0.05.

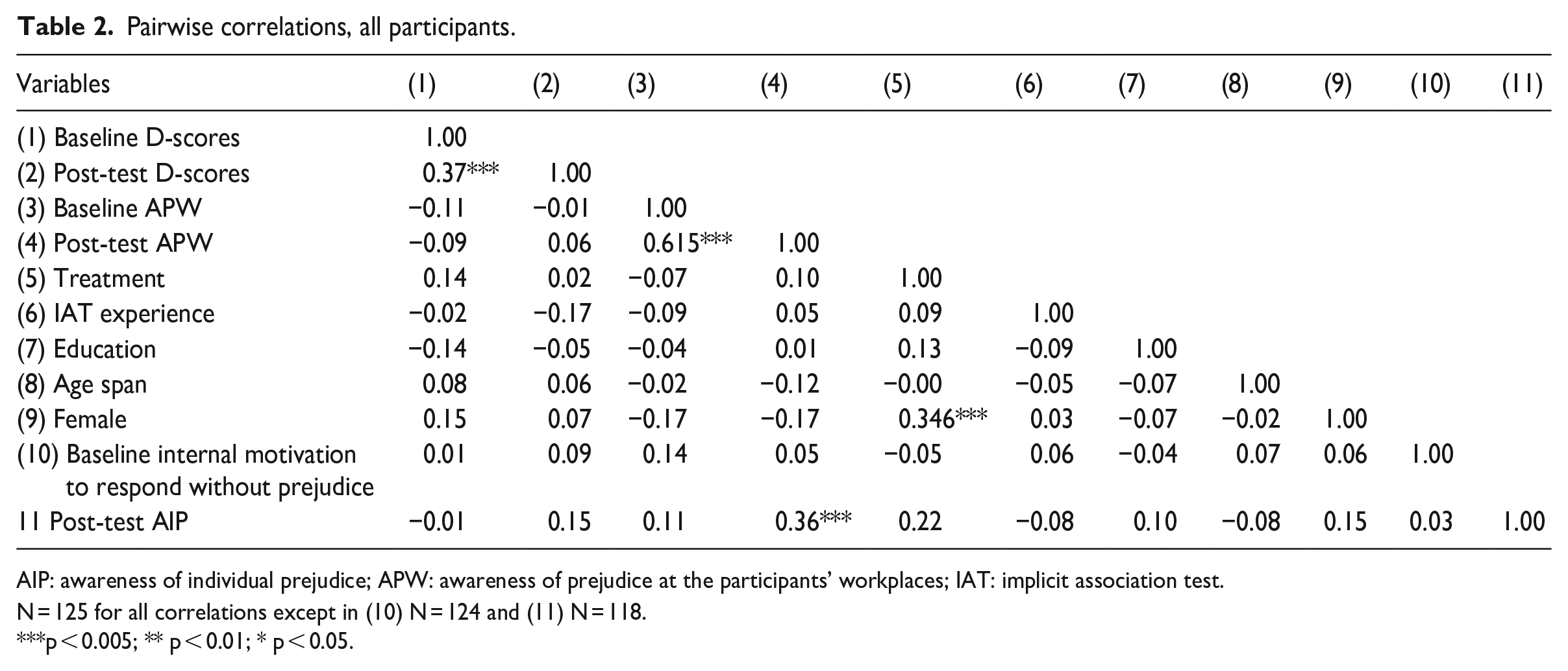

Pairwise correlations, all participants.

AIP: awareness of individual prejudice; APW: awareness of prejudice at the participants’ workplaces; IAT: implicit association test.

N = 125 for all correlations except in (10) N = 124 and (11) N = 118.

***p < 0.005; **p < 0.01; *p < 0.05.

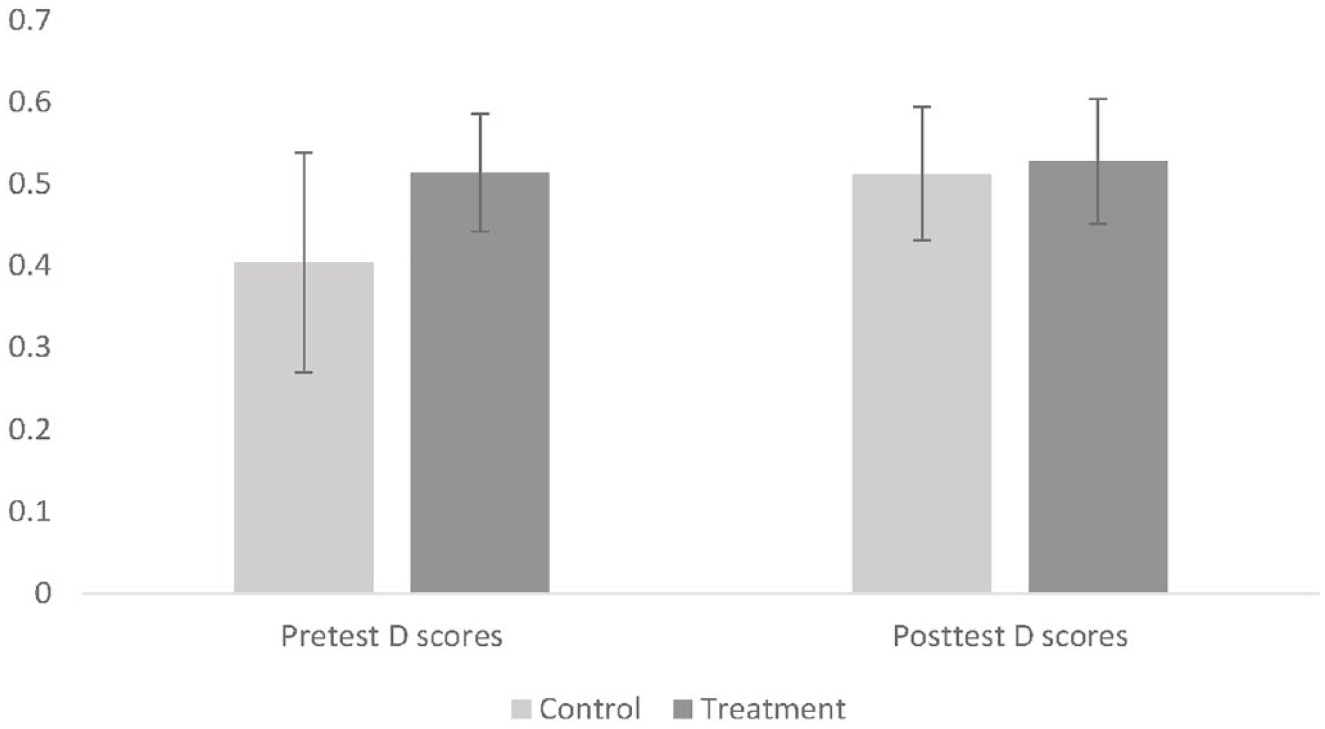

Implicit bias change

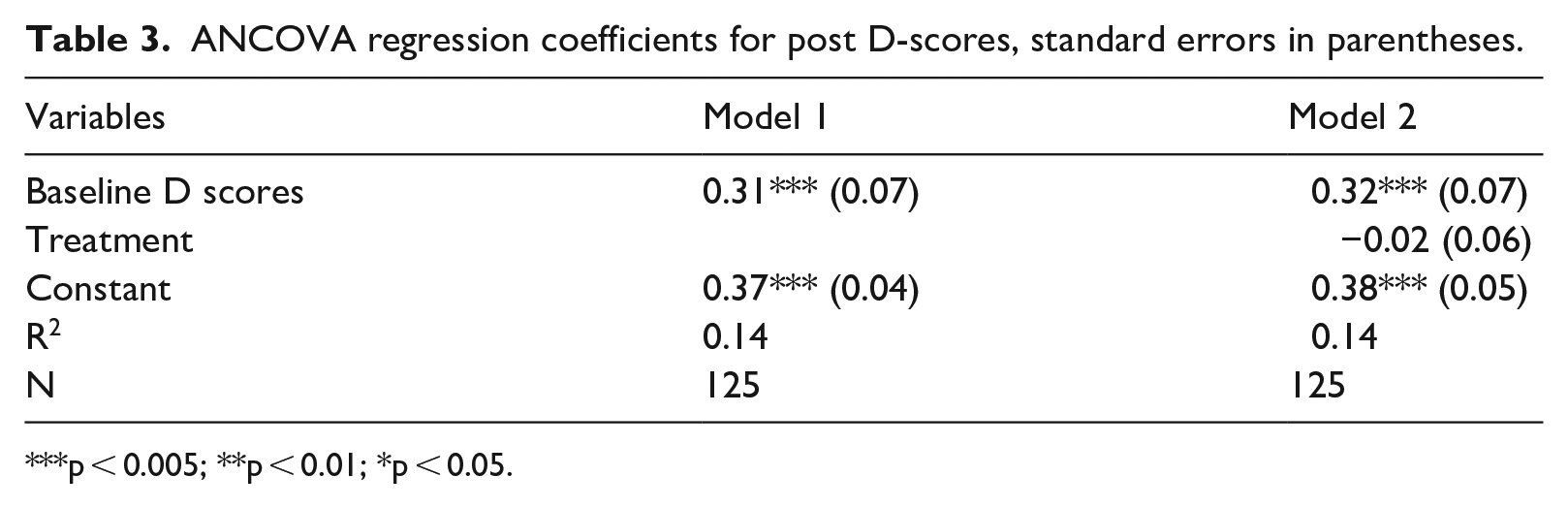

The question of primary interest in this study is implicit bias reduction, that is, whether the intervention reduces IAT D-scores. D-scores were measured at both baseline and post-test, enabling the identification of actual change as a result of the intervention. Figure 1 illustrates the mean D-score change reported in Table 1 by experimental condition. It shows little observable change in mean D-scores in the treatment group and a small increase in D-scores in the control group. The effect of participating in the treatment condition has also been analyzed with a hierarchical regression (ANCOVA), in which baseline D-scores were introduced in Model 1 and the Treatment variable in Model 2, to assess the effect of each independent variable. Baseline D-scores significantly predict post-test D-scores, F(1, 123) = 19.84, p < 0.005. The regression coefficient is 0.31 (p = 0.0002), and the model’s R² is 0.13 (see Table 3). As anticipated from Figure 1, treatment has no discernible effect on the participants’ D-scores, F(2, 122) = 0.12, p > 0.76, η² = 0.001. The regression coefficient for treatment presented in Table 3 is small, 0.02, and not statistically significant. Thus, participating in the intervention did not change mean D-scores in the treatment group.

Mean D-scores at pre-test and post-test by experimental condition with 95% confidence intervals.

ANCOVA regression coefficients for post D-scores, standard errors in parentheses.

***p < 0.005; **p < 0.01; *p < 0.05.

Explicit awareness change

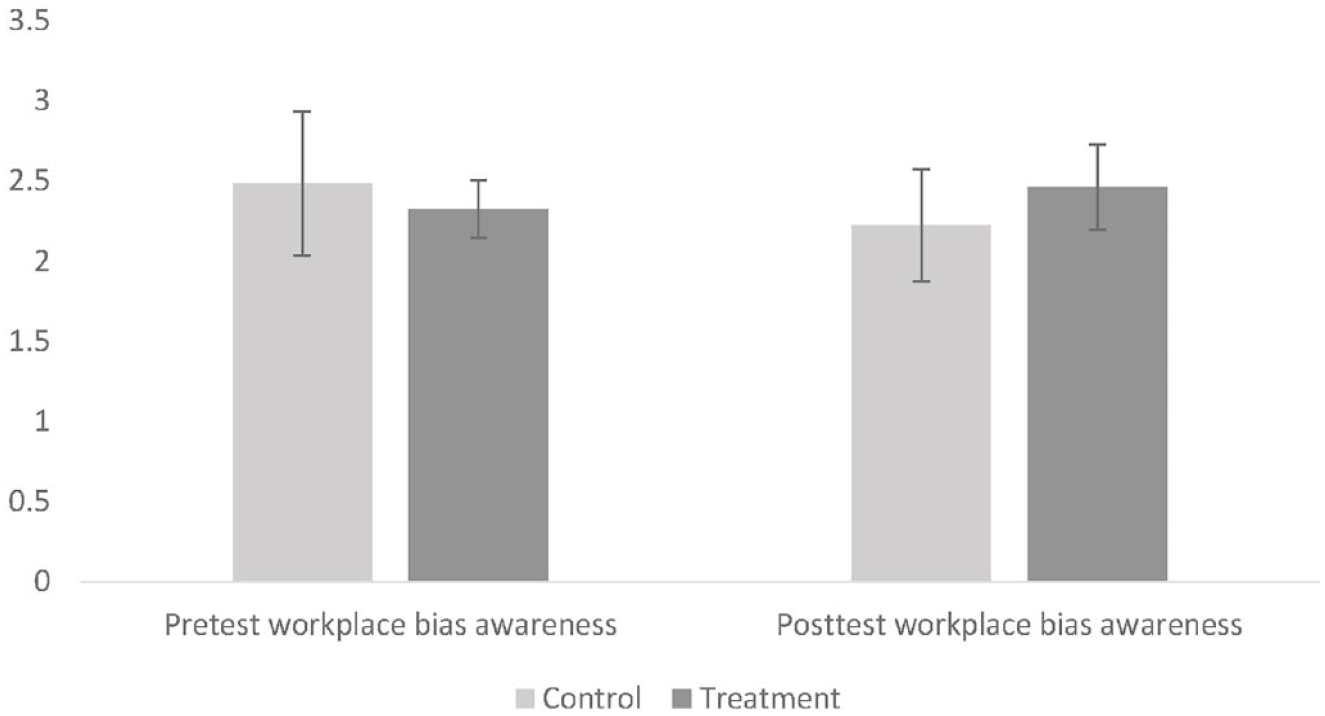

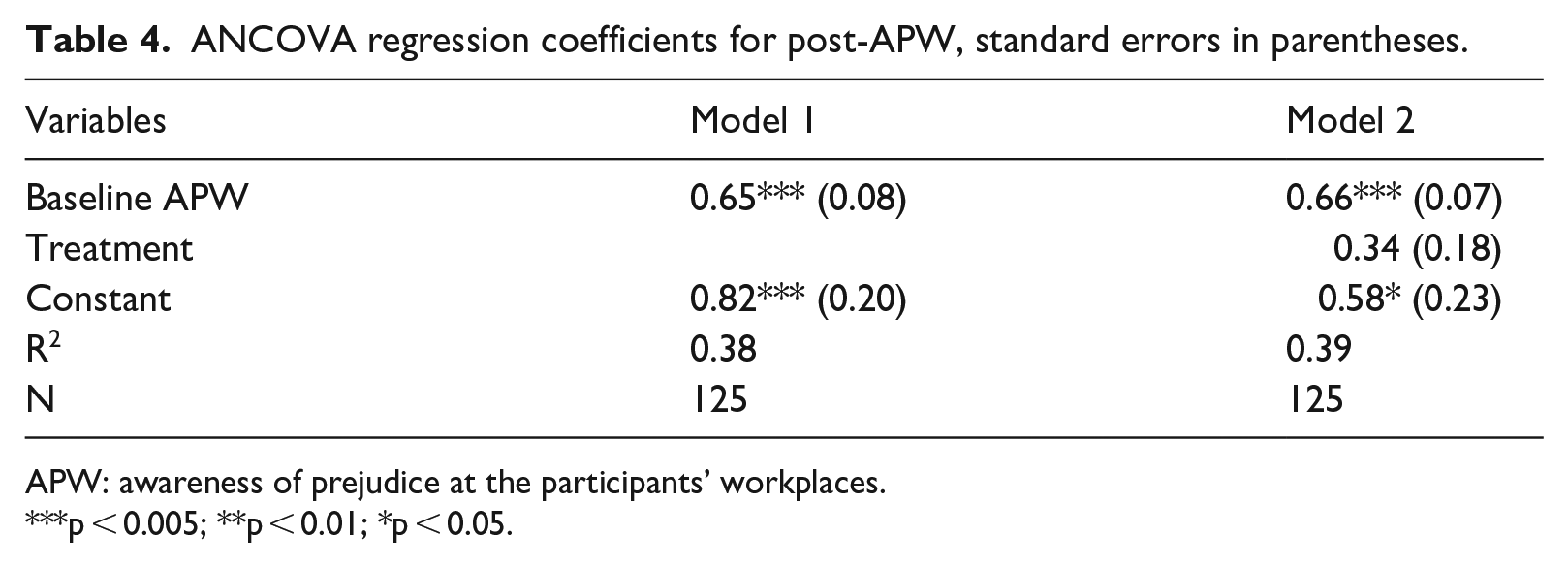

While implicit bias reduction is the question of primary interest in this study, evaluations of the PHBI have on several occasions found improved explicit attitudes or increased awareness of bias (Carnes et al., 2015; Devine et al., 2012; Forscher et al., 2017). As mentioned earlier, awareness of prejudice at the participants’ workplaces (APW) was measured in both the baseline and the post-test survey, enabling the identification of actual change as a result of the intervention. Figure 2 illustrates the change in mean APW scores over time, showing that the treatment group’s mean APW score increased while the control group’s mean score decreased. A hierarchical regression with post-test APW as the dependent variable, in which baseline APW and treatment are added one at a time, shows that baseline APW predicts post-test APW in Model 1, F(1, 123) = 74.93, p < 0.005. In Model 2, Treatment is at the border of statistical significance, F(1, 122) = 3.41, p < 0.052, η² = 0.03. The models’ regression coefficients are presented in Table 4. In Model 1, the baseline APW coefficient is 0.65 (p < 0.001), and R² is 0.37. In Model 2, the Treatment coefficient is 0.34 (p = 0.0526). Adding treatment in Model 2 increases the adjusted R² only slightly, to 0.38. Thus, we observe increased awareness of workplace bias in the treatment group relative to the control group, but as illustrated in Figure 2, this difference is driven both by an increase in the treatment group and by a decrease in the control group’s awareness of workplace bias.
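The F statistics and effect sizes reported for the hierarchical models are linked by standard formulas: the F test for the R² increase when predictors are added, and partial η² recovered from an F statistic and its degrees of freedom. A minimal Python sketch of both (function names are illustrative; this is not the study's analysis code). With n = 125 and two predictors in Model 2, the error degrees of freedom are 125 − 2 − 1 = 122, matching the F(1, 122) reported above.

```python
def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Partial eta squared recovered from a reported F statistic."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


def f_change(r2_reduced: float, r2_full: float, n: int, k_full: int, k_added: int = 1):
    """F test for the R-squared increase when k_added predictors are added.

    k_full counts the predictors in the full model, excluding the intercept.
    Returns (F, df_effect, df_error).
    """
    df_error = n - k_full - 1
    f_value = ((r2_full - r2_reduced) / k_added) / ((1 - r2_full) / df_error)
    return f_value, k_added, df_error


# Reported for Treatment in Model 2: F(1, 122) = 3.41
print(round(partial_eta_squared(3.41, 1, 122), 3))  # 0.027
```

Combining the two formulas shows that partial η² for the added predictor equals ΔR² / (1 − R² of the reduced model), which is why it can exceed the raw R² change.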

Mean awareness of prejudice at the workplace (APW) at pre-test and post-test by experimental condition with 95% confidence intervals.

ANCOVA regression coefficients for post-APW, standard errors in parentheses.

APW: awareness of prejudice at the participants’ workplaces.

***p < 0.005; **p < 0.01; *p < 0.05.

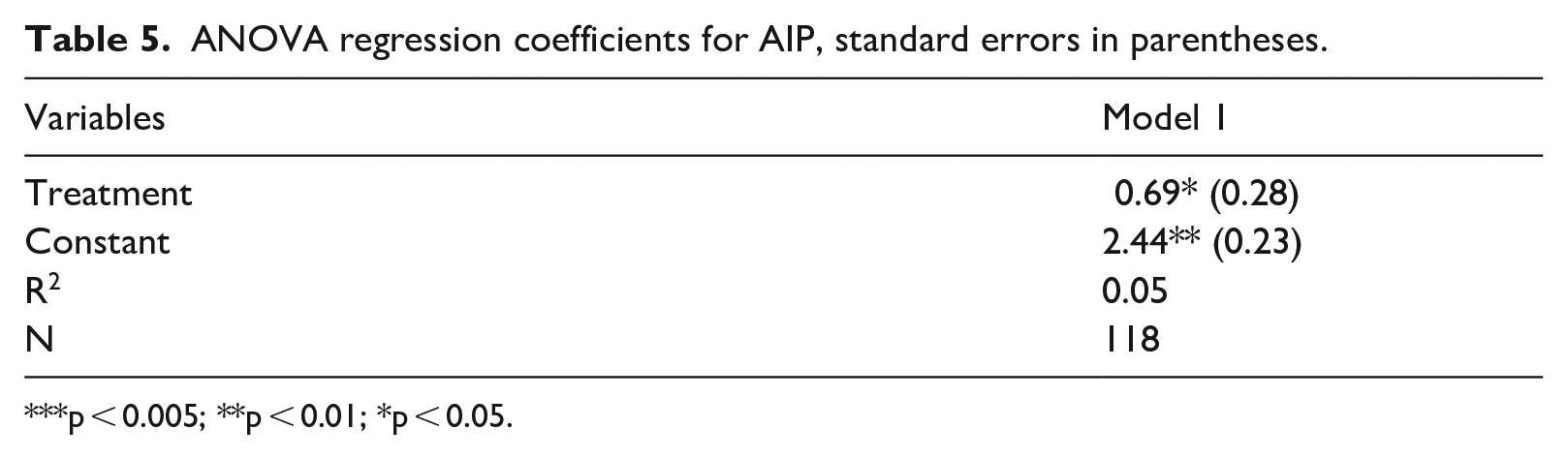

The second explicit measure, awareness of individual prejudice (AIP), was measured only in the second survey, that is, as a post-test. However, since no baseline variable other than gender differed significantly between the conditions, differences in post-test scores may be a result of the intervention. The results of an ANOVA show a statistically significant difference in AIP between the treatment and control groups, F(1, 116) = 3.81, p < 0.05, η² = 0.05. The Treatment coefficient for AIP reported in Table 5 is 0.69 (p = 0.0162). Again, treatment explains a rather modest share of the variation in the dependent variable (R² = 0.04).
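With only two groups, a one-way ANOVA is equivalent to regressing AIP on the Treatment dummy: F equals the squared t statistic of the Treatment coefficient, and η² (between-group sum of squares over total sum of squares) equals that regression’s R². The plain-Python sketch below computes F and η² from raw scores; names are hypothetical, and it is an illustration rather than the study’s analysis code.

```python
from statistics import mean
from typing import Sequence, Tuple


def one_way_anova(*groups: Sequence[float]) -> Tuple[float, int, int, float]:
    """Return (F, df_between, df_within, eta_squared) for a one-way ANOVA."""
    all_values = [v for g in groups for v in g]
    grand_mean = mean(all_values)
    group_means = [mean(g) for g in groups]
    # Variation of group means around the grand mean, weighted by group size.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means))
    # Variation of individual scores around their own group mean.
    ss_within = sum(sum((v - m) ** 2 for v in g) for g, m in zip(groups, group_means))
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_value = (ss_between / df_between) / (ss_within / df_within)
    eta_squared = ss_between / (ss_between + ss_within)
    return f_value, df_between, df_within, eta_squared


# Toy example with two groups of three scores each.
print(one_way_anova([1, 2, 3], [4, 5, 6]))
```

With 118 AIP respondents in two groups, df_within is 118 − 2 = 116, consistent with the F(1, 116) reported above.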

ANOVA regression coefficients for AIP, standard errors in parentheses.

***p < 0.005; **p < 0.01; *p < 0.05.

As a robustness check, I also assessed the influence of outliers; they do not change the results. Against this backdrop, I conclude that the PHBI did not reduce implicit bias against Muslims among the participating social workers, but that it did, albeit rather moderately, raise awareness of individual and workplace bias 2 weeks after the intervention.

Discussion

Employees who seek to improve diversity within organizations navigate organizational directives and constraints, organizational inertia, and, in recent years, sometimes also political backlash questioning the very value of diversity. At the same time, the lack of robust scientific evidence on which diversity initiatives are effective in practice leaves organizational practitioners fumbling in the dark for effective tools (Dobbin and Kalev, 2017). Research on diversity training, implicit bias reduction included, can help organizations by filling this gap, evaluating interventions not just in the laboratory but also in organizational settings. One of the aims of this study has been to contribute to this effort. Against the backdrop of increasing organizational interest in implicit bias training, this study set out to explore whether Devine et al.’s (2012) PHBI, originally evaluated in an educational setting, reduces ethnoreligious implicit biases in a workplace setting. This was done by inviting social workers in Swedish municipalities to take the program as diversity training. The intervention increased the treatment groups’ explicit awareness of prejudice at their own workplace and their awareness of their own implicit biases. In this sense, the intervention was successful. However, it did not accomplish its primary aim of reducing implicit bias against Muslims.

By testing the PHBI in a new setting, the study has tested the scope (Freese and Peterson, 2017) of the intervention, not its repeatability (Aarts et al., 2015; Camerer et al., 2018). In other words, it has shown that the implicit bias reduction component of the PHBI is context-dependent, a finding that has important implications for the implementation of implicit bias reduction in diversity training. Whether the PHBI can be successfully repeated, in the sense that it reduces racial implicit bias within the American educational setting (as the tentative evidence in Cox et al. (2022) suggests), is a separate matter.

It may be objected that the results of the current study are driven by the deviations from the original design. However, if the PHBI is not robust to deviations that are necessary for implementing the intervention in a work context, its scope is limited. In the encounter with occupations and workplaces that vary in participant workload, workplace culture, organizational constraints, and experimental design, trade-offs will have to be made. As noted earlier, the PHBI did not replicate the D-score reduction either when Devine and colleagues adjusted the original design (Forscher et al., 2017) or when the context changed (Carnes et al., 2015). Thus, for interventions to be of practical use outside the laboratory, their effects must be robust to minor modifications of the experimental design.

The results of the present study strengthen the concerns that have been expressed earlier concerning implicit bias training (Greenwald et al., 2022b; Noon, 2018). In the worst-case scenario, participants who reduce their implicit biases momentarily through brief one-shot interventions (e.g. Lai et al., 2014) may mistakenly believe that they no longer possess implicit biases. That said, in contrast with diversity training critics like Noon (2018), who describes diversity training as ‘pointless’ because it targets individual attitudes when the problem is structural, I would argue that implicit bias training does have the potential to contribute to improving organizational diversity and fairness. Structural critiques of diversity training seem to neglect the interplay between organizations and the individuals working within them. Individual employees do at times accomplish changes aimed at promoting diversity (Dobbin and Kalev, 2017); when these changes concern the organizations’ formal regulations or routines, they may even outlast these individuals’ own employment.

Diversity training may improve employee knowledge and awareness of different types of racism, prejudice, and discrimination (structural, individual, implicit, explicit, etc.), as exemplified by the PHBI studies (Carnes et al., 2015; Devine et al., 2012; Forscher et al., 2017) as well as by this study and many others (for a recent review, see Paluck et al., 2021). Integrated with the employees’ own practical knowledge about when and where in the organization racism, prejudice, or discrimination is most likely to occur, and where it is likely to do the most harm, knowledge acquired from diversity training may contribute to more informed organizational change in ways that are not measurable in IATs or self-report measures. For example, a diversity training course teaching participants about implicit bias, how it is related to behavior, and that it is very difficult to control may strengthen the arguments of those in an organization who argue for increased formalization or automation in the assessment of clients and jobseekers, in employee performance evaluations, and the like (for a related argument on discretion elimination, see Greenwald et al., 2022b: 27ff). Thus, until robust evidence, replicated in different contexts, shows how implicit bias can be reduced with effects that last long enough to be practically meaningful, implicit bias training may benefit from focusing on education rather than individual change.

Supplemental Material

sj-docx-1-ssi-10.1177_05390184241230397 – Supplemental material for The scope and limits of implicit bias training: An experimental study with Swedish social workers

Supplemental material, sj-docx-1-ssi-10.1177_05390184241230397 for The scope and limits of implicit bias training: An experimental study with Swedish social workers by Moa Bursell in Social Science Information

Footnotes

Acknowledgements

I would like to thank Anthony Greenwald and an anonymous reviewer for helpful comments on a previous version of the manuscript, and Filip Olsson for valuable assistance with the data collection.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The study has received financial support from the Swedish Research Council for Health, Working Life and Welfare, grant no. 2016-00173, and from the Royal Swedish Academy of Sciences.

Supplemental material

Supplemental material for this article is available online.


References
