Abstract

Our study investigates the impact of the British Research Assessment Exercise in 2008 and Research Excellence Framework in 2014 on the diversity and topic structure of UK sociology departments from the perspective of habitus-field theory. Empirically, we train a Latent Dirichlet allocation model on 819,673 abstracts stemming from the journals in which British sociologists submitted at least one paper in the Research Assessment Exercise 2008 or Research Excellence Framework 2014. We then apply the trained model to the 4822 papers submitted in the Research Assessment Exercise 2008 and Research Excellence Framework 2014. Finally, we apply multiple factor analysis to project the properties of the departments in the topic space. Our topic model uncovers generally low levels of research diversity. Topics with global reach related to political elites, demography, knowledge transfer, and climate change are on the rise, whereas locally constrained research topics on social problems and different dimensions of social inequality become less prevalent. Additionally, some of the declining topics are becoming more closely aligned with elite institutions and high ratings. Furthermore, we see that the associations between different funding bodies, topics covered, and specialties among sociology departments changed from 2008 to 2014. Nonetheless, topics aligned with different societal elites are found to be associated with high Research Assessment Exercise/Research Excellence Framework scores, while social engineering topics, postcolonial- and cultural-related, as well as more abstract topics are related to lower Research Assessment Exercise/Research Excellence Framework scores.

Introduction

The Research Excellence Framework (REF) and its predecessors, the Research Assessment Exercise (RAE) and Research Selectivity Exercise (RSE), have exerted a profound impact on research autonomy, distribution of funding and prestige, and working conditions of scholars in the United Kingdom for decades (McNay, 2022; Schäfer, 2018). They have introduced a focus on topics with easily measurable scientific, social, and economic impact, while open-ended, explorative research has been devalued and often left unfunded (Martin, 2011; Thorpe et al., 2018; Watermeyer and Chubb, 2019; Watermeyer and Hedgecoe, 2016). Although the REF, RAE, and RSE aimed to improve the competitiveness of the UK research system (Tight, 2019), previous studies found no substantial improvements. Instead, the REF and RAE have promoted strategies for gaming the system, which have led to close-mindedness, exaggeration of research findings and their importance to stakeholders (Kidd et al., 2021), rising inequality among universities (Münch and Schäfer, 2014), and a loss of research autonomy (Schäfer, 2018).

Despite the plethora of research on the impact of the REF and other research assessments on research funding, academic freedom, publication practices, and impact measurements (Hamann, 2016; Hazelkorn, 2011; Hazelkorn and Gibson, 2018; Kidd et al., 2021; McNay, 2007, 2022; Pinar and Unlu, 2020; Thorpe et al., 2018; Watermeyer and Chubb, 2019; Watermeyer and Hedgecoe, 2016; Weinstein et al., 2021), there is little research on their impact on research diversity and topics covered by participating institutions. If available, research is mostly restricted to management and business research (Lee et al., 2013; Stockhammer et al., 2021; Tourish and Willmott, 2015). Furthermore, critical studies on the impact of assessments on the academic field indicate a loss of research diversity (Hamann, 2016; Lee, 2007; McNay, 2022; Martin, 2011), yet these studies refrain from investigating the impact of research assessments on research diversity and topic structure empirically. We address this research gap by combining natural language processing (NLP) (topic modeling) with multiple factor analysis (MFA). By doing so, we bring the topics extracted from a large corpus of article abstracts and diversity measures applied on text as data into dialogue with department metadata such as the quality rating of submissions, different forms of research funding, and the number of research-active staff.

In the following, we focus on sociology as a case study, as sociology is a highly diverse, internally contested, multiparadigmatic, and divided discipline (Schwemmer and Wieczorek, 2020). As such, it is an example of the social sciences and humanities which are more vulnerable and more dependent on revenue streams distributed by the REF (Münch, 2014; Münch and Schäfer, 2014; Schäfer, 2018), but also yield multiple streams to generate and consolidate research innovation (Schwemmer and Wieczorek, 2020). As sociology is internally fragmented into different schools, which are also located at different elite and nonelite departments (Warczok and Beyer, 2021) and professional organizations (Schmitz et al., 2019), we expect to see a pronounced effect of the RAE/REF on the topics covered (e.g. via the alignment of less reputed departments toward elite departments), and thus a reduction in research diversity. Against this backdrop, we ask . . .

. . . is sociological research in the UK getting less diverse between the RAE 2008 and REF 2014?

. . . how are the topics (a) distributed among the sociology departments, and (b) how does the topic structure change between 2008 and 2014?

. . . which topics are getting consecrated by the RAE 2008 and REF 2014?

To answer these research questions, the remainder of the article is structured as follows: We provide a short history of the RAE/REF in section ‘A short history of the RAE and REF’. In section ‘The symbolic power and rule of research assessment bodies and its interaction with peer review practices as two-level supervision of research’, we introduce mechanisms stemming from habitus-field theory (HFT) to capture mechanisms associated with (a loss of) research diversity and the relation between topics, on the one hand, quality scores, funding streams, and the size of the sociology department, on the other. We also introduce the notion of two-level supervision to conceptualize how the RAE/REF imposes a supervision of research outputs on already existing peer review and quality assurance practices present in the academic field. Thus, the whole peer review and quality assurance process present in academia is under scrutiny of actors situated in the field of power. Departments have to signal accountability to different types of stakeholders (e.g. companies, media, the electorate). In section ‘Research strategy’, we explain the employed mixed-methods approach (Creswell and Plano Clark, 2011), in which we combine Latent Dirichlet allocation (LDA) with MFA. We then present the results of our analysis in section ‘Results’, and discuss the findings in section ‘Discussion’. The article closes with pointing out limitations and future directions of research in section ‘Conclusion’.

A short history of the RAE and REF

The roots of the REF and of its symbolic and material effects on the UK academic field can be traced back to the Jarratt and Lindop Reports of 1985. Both reports aimed at introducing a quality assurance and accountability system in higher education (Harvey, 2005). They were accompanied by a sentiment of distrust toward scholars who were perceived as tax money wasters and as being insensitive to societal, economic, and technological challenges. In this context, the University Grants Committee under the leadership of Peter Swinnerton-Dyer initiated the RSE to prevent the waste of tax money (Kogan and Henney, 2000; Shattock, 2012). It aimed at increasing the research efficiency of universities and at allocating funding to the most productive units with the best-quality research. New Public Management (NPM) was employed to ensure rising productivity by introducing managerial practices including project-based employment, benchmarking, or total quality management. Additionally, accountability measures, output orientation, and quasi-markets were introduced on a systemic level. These reforms led to the professionalization of university management and established mission agencies such as the Higher Education Funding Council for England (HEFCE) to control research efficiency and effectiveness (Whitley and Gläser, 2014).

The second RSE of 1989 implemented a peer review procedure mediated by subject-specific expert panels. Polytechnics were granted the status of full universities to raise competition among universities for funding and prestige. This increase in competition was expected to improve the efficacy of funding allocation through nudging scholars to meet highest quality standards and focus their research on relevant social, economic, political, and technological issues.

The RSE was followed by the RAE, which was conducted in 1992, 1996, 2001, and 2008. From 1992 to 2001, disciplines at participating universities were ranked categorically (the full list of definitions is provided in Appendix 1). In 2008, the rating system changed from assignment to a single quality category to a quality profile. Quality profiles were aggregated from the submissions of scholars from each subject. Scholars could send up to four submissions which were then reviewed and graded by discipline-specific expert panels. The quality profiles consisted of the share of submitted and evaluated research publications on a 1* to 4* scale with an additional unclassified category.

In 2014, the REF introduced qualitatively evaluated impact studies and added ‘impact’ and ‘environment’ as additional assessment criteria. These three assessment criteria were summed up, resulting in the overall REF quality profile. Impact is related to research output in terms of culture and media, economy and economic development, environmental issues, healthcare issues, professional services, policy, law, and public services (REF, 2012: 68–70). It is measured by citation of the research by non-academic bodies (e.g. non-governmental organizations (NGOs), public debates) or by being documented in standards, guidelines, training materials, and governmental statistics. Environment is related to the university management’s ability to achieve strategic goals. These goals are aligned to research impact (academic and social) and the development and monitoring of research profiles between 2008 and 2013. Likewise, the share of research staff that raised grants, was granted research fellowships, had fixed-term appointments, and was engaged in interdisciplinary research collaboration in addition to the share of students included in research endeavors was evaluated (REF, 2012: 75–77).

The symbolic power and rule of research assessment bodies and its interaction with peer review practices as two-level supervision of research

Theoretical foundations

From the perspective of HFT, the development outlined so far is a result of devaluation of basic research, the redefinition of research as a means to economic growth, and, accordingly, the identification of academic autonomy as a problem in the field of power. The latter is an arena comprising well-endowed and well-connected actors stemming from different fields (academic, economic, bureaucratic, political, among others) (Schmitz et al., 2017). These actors include political parties, funding agencies, ministries, philanthropy, even university administrators, experts, and scholars. They determine, for example, what the ‘economy’ needs, how to govern science properly, or what counts as valuable research eligible to get funded. As these are ambiguous concepts, actors situated in the field of power define the meaning of these concepts – in our case how to measure excellent research properly, or whose problems should be addressed in a certain manner (e.g. problems like social inequality which are addressed using regression techniques).

At the same time, these actors seek policy advice for informed decision-making to give their decisions additional weight. Nowadays, such policy advice is largely dominated by economists and economic models. Public choice theory and in its wake NPM have gained nearly unquestioned recognition worldwide. Among the social sciences, economics claims ‘superiority’ because it has adopted the basic methodological principles of the natural sciences (Fourcade et al., 2015). In this context, governing by numbers has become routine. Governmental bodies and administrations follow this agenda in distributing grants and nudging researchers to meet the established standards and/or demands of stakeholders through regular research assessment.

As these mechanisms establish a system of assessment categories and link the distribution of material and symbolic resources to it, research assessments exert symbolic power, rule, and violence on academia. Symbolic power is defined as the ability of actors to establish and change the rules of a game played within a field against the resistance of other actors, for example, what counts as good research, or how to meet demands of stakeholders properly. Symbolic rule is defined as the incorporation of an unquestioned belief as to how a field should be, how to behave adequately, and what aims to follow properly (doxa, see Bourdieu, 1985: 734, 1989). Symbolic violence is the internalization of negative valuations by dominated actors, for example, their ability to produce REFable research outputs (Schäfer, 2018).

Via the revenue structure, media attention, and acknowledgment inscribed into the quality profiles by the RAE/REF, the role and worth of scholarship, scientific knowledge, departments, and scholars alike are valuated and evaluated. In this way, economic, cultural, and academic capital (as form of symbolic capital) is allocated among departments. In our case, economic capital means funding; cultural capital, the (incorporated) academic and institutional knowledge generated by ‘REFable’ scholars; and academic capital, the quality score assigned to each department. In these bounds, scholars develop a taste for research which combines a preference for theories, methods, research questions, and audiences (Schwemmer and Wieczorek, 2020). It is part of the academic habitus which enables scholars to adapt (more or less) to the demands placed on researchers by the REF/RAE. Against this backdrop, we interpret the REF as an organizational form of symbolic power, rule, and violence which is imposed on scholars by diverse actors in the field of power.

Two-level supervision of research

Although regular assessment of research outcomes has become commonplace in the wake of the worldwide application of NPM (Martin-Sardesai et al., 2017; Münch, 2019), whether such practices really improve research quality is still a controversial issue. The United Kingdom and the REF therein are no exceptions. In fact, many authors highlight adverse effects on research quality and argue that the investment in increasingly complex research assessments outweighs their benefit (Hamann, 2016; McNay, 2022; Watermeyer and Chubb, 2019; Watermeyer and Hedgecoe, 2016). Independent of the real effect of these devices, NPM tools are implemented by governments and administrations to gain legitimacy (Meyer et al., 1997), as governments and university administrators are widely interested in such assessments in order to have data at their disposal for making rational decisions on allocating funds. They exert a form of symbolic power by determining valuable research according to criteria previously negotiated in the field of power.

In turn, this procedure pushes scholars to follow established lines of research that comply with quality assurance practices, which mostly means publishing in high-impact journals. Knowledge about this procedure, in turn, has an impact on the research and publication strategy of scholars (Rushforth and de Rijcke, 2015) which reinforces the symbolic dominance of the editors of leading journals in setting the parameters of ‘good research’. More often than not, these criteria mirror the definitions negotiated in the field of power. They are, in other terms, homologous. This is an atmosphere in which paradigm shifts (Kuhn, 1962) hardly occur. Progress in scientific knowledge needs a leeway for creative deviance (Mainemelis, 2010), and in the most radical sense for anarchy according to Paul Feyerabend’s (1993) plea for methodological pluralism. Such research can principally not be brought under administrative control, but must assert itself against such control. Therefore, strengthening this already strong control in first-level peer review through superimposing a regular governmentally organized second-level assessment increases the standardizing and normalizing effects on scientific research and hinders renewal and progress of scientific knowledge. We witness a similar tension between the ruling scientific elite in administrative positions at the heteronomous pole of the academic field and uprising rebellious innovators at the autonomous pole as described by Bourdieu (1988: 112–118) in Homo academicus with the debate between Raymond Picard representing the ruling hermeneutic position in literature studies and Roland Barthes representing the post-structuralist and deconstructionist revolt in the French academic field of the 1960s.

Thus, everyday scientific practice always takes place under the reign of standardization and normalization through peer review, which we may also perceive as practices of symbolic power and violence used to consecrate research and to distinguish ‘good’ from ‘bad’ research. What is new with regular research assessment according to NPM is the superimposition of organized peer review on the everyday practice of scientific peer review. In other words, it is a peer review of the peer review process combined with the adaptation of the struggles for knowledge in the academic field to problems (and thus struggles) outside academia. In this way, the already existing standardization and normalization of scientific research gain in power, and the expert panels gain additional governmental authority. 1

This crucial change is what we coin two-level supervision of research. Its institutionalization shifts the symbolic power to define the rules of academic practices further toward the pole of heteronomy of the academic field (Bourdieu, 1988), as scholars are urged to increasingly struggle for institutional power through the accumulation of institutional capital instead of gaining scientific capital. This form of capital is embodied in scholars’ need to occupy central positions in the administration of science. This includes editorships and board or committee memberships in universities, research centers, foundations, academies, and governments. Accumulating institutional capital comes along with the aging of reputed scholars with an interest in and feeling for governmental activities. Their acquisition of an administrative mind is the natural part of the habitus of making a career in the governance of scientific research. That means, there is a natural tendency toward maintaining an established order of scientific knowledge, that is, standardized and normalized science, in the execution of administrative and reviewer activities. The administrator of science needs standards in order to make basically justified decisions, for example, on publishing an article, awarding a grant, or allocating funds.

Possible adverse effects of the two-level supervision of research

The new two-level assessment of research dresses very old hierarchies of the academic field in new clothes. As demonstrated by Hodgson and Rothman (1999) for economics and by Young, Ioannidis, and Al-Ubaydli (2008) for the health and life sciences (Fleck, 2013; Münch, 2014: 67–125), this is true even more so as the scientific publication market has been increasingly subjected to the oligopoly of high-impact journals since the establishment of the journal impact factor as the ‘gold standard’ of high-quality research. This is in line with studies which identify a bias against novelty in the academic field (Van Raan, 2004; Wang et al., 2017). According to these findings, paradigm shifts only occur if novel ideas stick around for at least 15 years, are carried into mainstream journals by scholars based on the symbolic capital manifested in the reputation of their home institutions, and establish new schools of thought. Usually, this process takes a whole generation to be accomplished, and not the 4–7 years between the RAE/REF waves.

Against this backdrop, it is no surprise that previous studies uncover negative effects of assessments such as the RAE/REF on research autonomy and diversity, both major structural preconditions of innovation in science (Lee et al., 2013; McNay, 2007). Particularly, two reasons speak for this effect. First, the introduction of performance indicators needed for evaluating research is tied to career opportunities and the allocation of research grants more than ever before. In this vein, scholars choose their research strategies in line with Campbell’s law (Campbell, 1976). This law tells us that once an indicator to measure any kind of success is in place, the evaluated will align their behavior in accordance with this indicator. This, in turn, compromises the indicator and leads to unintended effects, such as the abandonment of research not covered by the REF research quality criteria (see Gruber, 2014; Hazelkorn, 2011). Second, research assessments introduce professionalized governance structures in universities and ministries to ensure the alignment of research with established quality criteria. These structures aim to increase the productivity of scholars and nudge them to conduct research meeting the established standards and/or expectations of stakeholders (Shattock, 2012; Tight, 2019). The interaction between these two effects of assessments narrows autonomy, diversity, and innovation of research (Hamann, 2016; Münch, 2014; Schäfer, 2018; Whitley et al., 2018).

Under this regime of two-level supervision, scholars must develop strategies such as exaggerating findings, selective reporting, tailoring research toward the needs of funding agencies, selective curiosity, and nimble knowledge production (Hoffman, 2020; Kidd et al., 2021). Furthermore, they must produce usable knowledge for stakeholders outside the academic field. This subjection of research to criteria established by ruling bodies is ensured by the REF expert panels which grade the submissions delivered by the participating departments. In this way, Campbell’s law is taking effect. As the panels are predominantly staffed by scholars affiliated with Russell Group universities (Hamann, 2016; McNay, 2022), the experts’ taste defines what is denoted as valuable research. This is even seen in the language of submissions to the REF. Thorpe et al. (2018) found that submissions by high-ranking universities underpin their world-leading role, public reputation, and institutional stability, whereas low-tier universities signal high levels of activity regarding institutional change as well as the aim to emulate the excellence of their top-tier competitors.

Implications for the empirical investigation

Taken together, the concept of two-level supervision implies that, first, those who share the taste for research of the panel members will be more favorably rated and thus get better funded. Second, this incentivizes others to emulate the research of those favored in order to gain reputation and funding. Third, scholars are urged to present their research in a way which might address different stakeholders outside of the academic field, while exaggerating their (social) impact and the certainty with which research results are to be expected. Fourth, scholars need to conduct ‘REFable’ research in order to get one or more papers, monographs, contributions to proceedings, and so on, to be submitted by the university administration to the REF expert panels (Watermeyer and Hedgecoe, 2016). This should lead to the submission of highly standardized research output that yields low levels of research diversity and which is aimed at three audiences at once: the university administration, the expert panel, and a (potential) wider audience situated in other social fields.

For these reasons, we expect to see a focus on a limited number of topics only present in the RAE/REF submissions that (a) comply with the rules of peer review in dominant high-impact journals, (b) show easily measurable impact, and (c) are aligned to the taste of the REF/RAE expert panels. Furthermore, these submissions must (d) demonstrate usefulness to external stakeholders and (e) yield benefits for the submitting departments. As these factors have an impact on the daily routines of scholars, and thus their opportunities to develop a taste for research, we expect negative effects on research diversity among the sociology departments under investigation. In combination with the character of sociology as discipline, which is divided among schools of thought and symbolic boundaries drawn by sociology departments, we also expect a sharp distinction between research foci present at elite and nonelite departments to emerge from our data.

Research strategy

Data and methods

We apply the following procedure to analyze possible associations between the two-level supervision of the RAE/REF, on the one hand, and topics, research diversity, and different forms of capital, on the other. First, we downloaded all information available on sociology departments for the RAE in 2008 and REF in 2014 from the RAE/REF webpages. The data downloaded contain information on the quality profile, headcount (total, research-active), volume and sources of funding, number of doctorates granted, and selected publication data (with a maximum of four publications per research-active staff). In a second step, we extracted the publication data from the RAE/REF data frames, resulting in a total of 4996 submitted research papers (2630 in 2014 and 2366 in 2008). Of these papers, we were able to retrieve 4822 abstracts either by matching the papers with Scopus publications or by hand via a web search.

We then compiled a journal list consisting of 1249 outlets from the research output submitted in 2008 and 2014. As these were the journals addressed by sociological research in the United Kingdom, we took these outlets as the starting point to construct our topic space. In a fourth step, we downloaded the abstract data of all listed journals available in the Scopus database. 2 Although we only analyzed the RAE/REF waves of 2008 and 2014, which allow for submission of articles issued between 2000 and 2013 only, we decided to include abstracts of articles published between 2015 and early 2022 for the following reasons. First, we assume that articles published in 2013 might spark further debates on the issues discussed in the following years, meaning that in 2013 or 2014 there might only be a few articles which constitute novel topics that may become salient afterward. If we excluded articles published between 2014 and 2020, we would falsely assign these articles different topics. Second, including additional text data significantly increases the chances of extracting meaningful topics from our corpus. This procedure resulted in a total of 819,673 abstracts of articles published between 2000 and 2022. In line with standard NLP terminology, we refer to the journal abstracts retrieved from Scopus as well as from our web search as the text corpus in the following.

As usual in the domain of NLP, we must pre-process and clean the corpus before we can extract topics from the abstracts. 3 We begin with tokenizing and lemmatizing the abstract data. Tokenization is understood as the process of separating the text into discrete entities, in our case words (see Schneijderberg et al., 2022: 383–391 for a detailed account of tokenization and other preprocessing steps). Lemmatization is defined as the reduction of tokens to their base form (e.g. running, ran, run→run). To improve the coherence of our topics, as demonstrated in earlier research (Huang, 2017; Martin and Johnson, 2015), stopwords 4 were removed and part-of-speech tagging was applied to filter out all word types except for nouns, verbs, and adjectives. Next, we concatenated bigrams which appeared more than 1000 times in the corpus. Bigrams are phrases of two tokens such as ‘negative_effect’ or ‘qualitative_interview’ (Blaheta and Johnson, 2001). We decided on such a large threshold due to the sheer size of our corpus. Finally, we converted the abstracts into a ‘bag-of-words’ format. A bag-of-words is a vectorized text representation in which an id is assigned to each token, which is then counted per text (e.g. ‘qualitative_methods’ is given id 1 and appears 4 times in text 1 and 6 times in text 2). Such an approach ignores grammar and text structure, and is thus not a realistic representation of the abstracts included in our analysis. Nevertheless, it has proven to be a reliable approach for topic extraction and topic interpretation in large corpora (DiMaggio et al., 2013; Wieczorek et al., 2021).
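The last two preprocessing steps, bigram concatenation and the bag-of-words conversion, can be illustrated with a minimal pure-Python sketch. It assumes that the abstracts are already tokenized, lemmatized, and filtered, and that bigrams are joined greedily from left to right based on a raw count threshold; the function names and the toy documents are hypothetical and do not reproduce the pipeline actually used for the corpus.

```python
from collections import Counter

def merge_frequent_bigrams(docs, min_count):
    """Join adjacent token pairs occurring more than `min_count` times corpus-wide."""
    pair_counts = Counter()
    for tokens in docs:
        pair_counts.update(zip(tokens, tokens[1:]))
    frequent = {pair for pair, n in pair_counts.items() if n > min_count}

    merged_docs = []
    for tokens in docs:
        merged, i = [], 0
        while i < len(tokens):
            # Greedy left-to-right merge (a simplifying assumption of this sketch).
            if i + 1 < len(tokens) and (tokens[i], tokens[i + 1]) in frequent:
                merged.append(tokens[i] + "_" + tokens[i + 1])
                i += 2
            else:
                merged.append(tokens[i])
                i += 1
        merged_docs.append(merged)
    return merged_docs

def to_bag_of_words(docs):
    """Assign an integer id to each token and count the ids per document."""
    token_ids = {}
    bows = []
    for tokens in docs:
        counts = Counter()
        for tok in tokens:
            idx = token_ids.setdefault(tok, len(token_ids))
            counts[idx] += 1
        bows.append(sorted(counts.items()))
    return token_ids, bows

# Toy abstracts, already lemmatized and filtered (hypothetical data).
docs = [
    ["qualitative", "interview", "show", "negative", "effect"],
    ["negative", "effect", "qualitative", "interview"],
    ["interview", "reveal", "effect"],
]
# The paper uses a threshold of 1000; 1 is used here only because the toy corpus is tiny.
merged = merge_frequent_bigrams(docs, min_count=1)
token_ids, bows = to_bag_of_words(merged)
```

In this toy example, ‘qualitative_interview’ and ‘negative_effect’ are merged because each pair occurs twice, while the third document keeps its single tokens.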

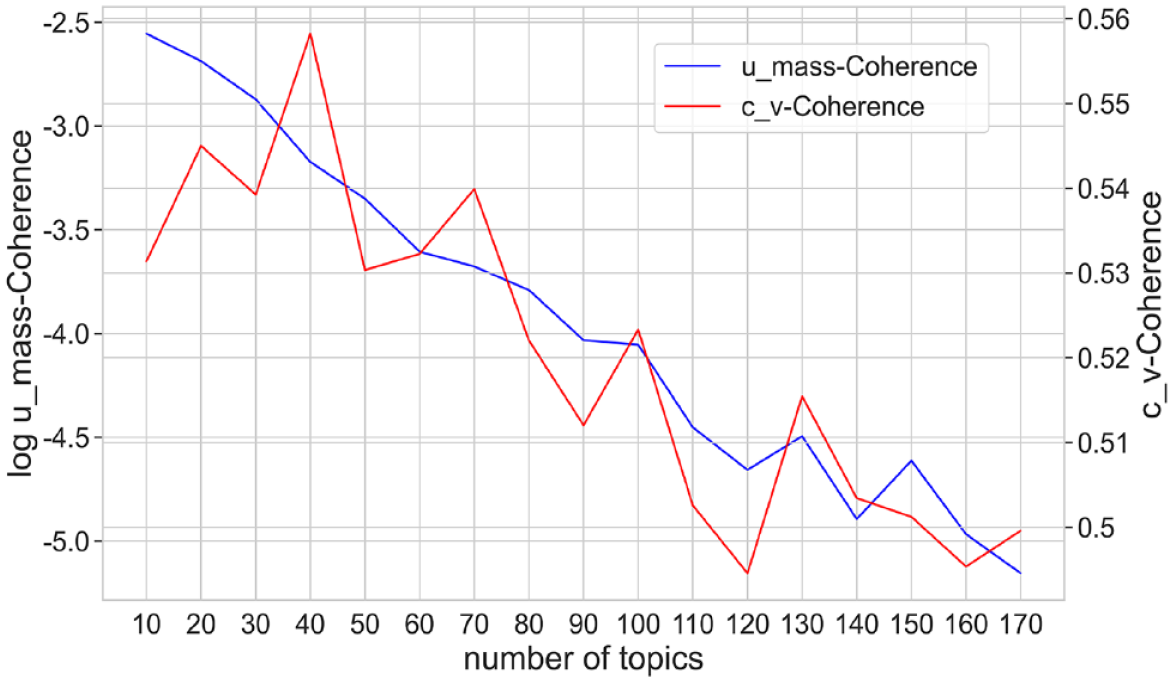

In the next step, we performed an LDA for topic extraction. LDA is a probabilistic topic modeling approach which models every text as a mixture of different topics (Blei et al., 2003). However, the underlying algorithm cannot detect an optimal number of topics (k) automatically. We, therefore, had to define the number of topics a priori and calculate multiple models. In our case, k ranged from 10 to 150 (calculated in steps of 10). To evaluate the topics and choose the optimal number, we first applied coherence and perplexity measures (Blei et al., 2003; Mimno et al., 2011). Figure 1 shows the c_v-coherence and log-u_mass-coherence values calculated from the text data using the Python gensim package (Řehůřek and Sojka, 2011). Second, we analyzed the most prevalent words and performed close reading of the abstracts with the highest propensity for each topic. 5

Figure 1. Coherence values per number of topics (k).

We evaluated different models (k = 40, k = 70, k = 100) where both coherence measures reached a plateau. We then conducted close reading of the most prevalent tokens of each topic and interpreted at least five abstracts with the highest topic loadings which resulted in a model containing k = 40 topics. 6 Finally, we excluded four topics as they did not have a single reference to sociology. The reason is that some sociologists published in journals such as PLOS One, Nature, or Science. Since these journals also contained articles stemming from other disciplines, this resulted in topics unrelated to sociology.
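To make the coherence-based comparison more tangible, the following sketch computes a u_mass-style coherence score in the formulation of Mimno et al. (2011) for a single topic. It is a pure-Python illustration under stated assumptions: the function name and the toy documents are hypothetical, the smoothing constant of 1 follows the published formula and may differ from the library implementation used for Figure 1, and every top word is assumed to occur in at least one document.

```python
import math

def umass_coherence(top_words, docs):
    """u_mass-style coherence after Mimno et al. (2011) for one topic.

    `top_words` are the topic's most probable words in descending order;
    `docs` are tokenized documents. Higher (less negative) is more coherent.
    """
    doc_sets = [set(d) for d in docs]

    def doc_freq(*words):
        # Number of documents that contain all of the given words.
        return sum(1 for s in doc_sets if all(w in s for w in words))

    score = 0.0
    for m in range(1, len(top_words)):
        for l in range(m):
            co_freq = doc_freq(top_words[m], top_words[l])
            score += math.log((co_freq + 1) / doc_freq(top_words[l]))
    return score

# Candidate numbers of topics as described in the text: 10, 20, ..., 150.
candidate_ks = list(range(10, 151, 10))

# Toy corpus (hypothetical data).
docs = [
    ["inequality", "class", "income"],
    ["inequality", "class"],
    ["climate", "policy"],
    ["climate", "policy", "income"],
]
coherent = umass_coherence(["inequality", "class"], docs)
incoherent = umass_coherence(["inequality", "climate"], docs)
```

Words that co-occur in the same documents yield a higher score than words that never appear together. In a model comparison, such scores would be averaged over all topics of each candidate model.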

Following this procedure, we applied the trained model to our reduced corpus of 4822 abstracts and assigned topic distributions. We then calculated the cosine similarity (Lahitani et al., 2016) between all submissions of the sociology departments as well as the mean Shannon entropy per article submitted to the RAE/REF (Shannon, 1948). The former measures the uniformity of the texts regarding their topic structure on the department level, whereas the latter is a measure of topic diversity. We then averaged topic prevalence and similarities on department level and fused them with departmental metadata.
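The two diversity measures and the department-level averaging can be sketched as follows. The sketch assumes that each submission is represented by its topic distribution (a list of probabilities summing to 1) and that entropy is measured in bits (base-2 logarithm); the logarithm base, the function names, and the toy values are assumptions made for illustration.

```python
import math
from itertools import combinations

def cosine_similarity(p, q):
    """Cosine of the angle between two topic distributions."""
    dot = sum(a * b for a, b in zip(p, q))
    norm_p = math.sqrt(sum(a * a for a in p))
    norm_q = math.sqrt(sum(b * b for b in q))
    return dot / (norm_p * norm_q)

def shannon_entropy(p):
    """Shannon entropy in bits; zero-probability topics contribute nothing."""
    return -sum(x * math.log2(x) for x in p if x > 0)

def mean_pairwise_cosine(dists):
    """Average similarity over all pairs of submissions of one department."""
    pairs = list(combinations(dists, 2))
    return sum(cosine_similarity(p, q) for p, q in pairs) / len(pairs)

def mean_topic_prevalence(dists):
    """Mean probability of each topic across a department's submissions."""
    n = len(dists)
    return [sum(col) / n for col in zip(*dists)]

# Topic distributions of three submissions from one department (hypothetical data).
department = [
    [0.70, 0.10, 0.10, 0.10],
    [0.60, 0.20, 0.10, 0.10],
    [0.25, 0.25, 0.25, 0.25],
]
similarity = mean_pairwise_cosine(department)
entropy = sum(shannon_entropy(p) for p in department) / len(department)
prevalence = mean_topic_prevalence(department)
```

A department whose submissions all load on the same topics approaches a mean cosine similarity of 1, while a submission spread evenly over all topics reaches the maximum entropy of log2(k).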

To investigate changes in the topic structure between 2008 and 2014, we report the 10 topics with the steepest increase and decrease in topic prevalence. To check whether research diversity overall declined, we performed a one-sided Wilcoxon rank-sum test on cosine similarity and Shannon entropy on department level. We restricted our test to departments that participated in the RAE 2008 and REF 2014 to avoid biases resulting from differing samples.
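The logic of the one-sided test can be sketched in pure Python. The sketch computes the rank-sum (Mann-Whitney U) statistic with average ranks for ties and a normal approximation without tie or continuity correction, which is crude for small samples; in practice, a statistics library routine such as `scipy.stats.mannwhitneyu` with a one-sided alternative would be preferable. The function names and the entropy values are hypothetical.

```python
import math

def average_ranks(values):
    """Ranks starting at 1; tied values receive the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def rank_sum_test_less(x, y):
    """One-sided rank-sum test of H1: values in x tend to be smaller than in y.

    Returns (U, z, p) using a normal approximation (an assumption of this sketch).
    """
    n1, n2 = len(x), len(y)
    ranks = average_ranks(list(x) + list(y))
    w = sum(ranks[:n1])                      # rank sum of the first sample
    u = w - n1 * (n1 + 1) / 2                # Mann-Whitney U for x
    mean_u = n1 * n2 / 2
    sd_u = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (u - mean_u) / sd_u
    p = 0.5 * (1 + math.erf(z / math.sqrt(2)))  # lower-tail probability
    return u, z, p

# Department-level Shannon entropy in both waves (hypothetical data).
entropy_2014 = [1.9, 2.0, 2.1, 2.2]
entropy_2008 = [2.3, 2.4, 2.5, 2.6]
u, z, p = rank_sum_test_less(entropy_2014, entropy_2008)
```

A small p-value would indicate that entropy in 2014 tends to be lower than in 2008, that is, a decline in topic diversity; for cosine similarity, the direction of the alternative would be reversed.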

Finally, we used the data on department level to perform a diachronic MFA of the combined topic space and indicators of economic, cultural, and academic capital provided by the RAE/REF. MFA is an extension of correspondence analysis and is used for dimensionality reduction of complex, multivariate datasets which contain metric as well as qualitative variables (Escofier and Pages, 1994). 7 This procedure enables us to combine topic prevalence, endowment with different forms of capital as well as sources of funding and to link them to the names of sociology departments. Following this procedure, we are also able to uncover topics, departments, and forms of capital related to low levels of diversity and whether these relations change between 2008 and 2014.
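The weighting step that distinguishes MFA from an ordinary principal component analysis can be written compactly. The notation below is introduced here for illustration and follows the standard formulation of the method, not the specific software settings of this analysis: $X_j$ denotes the (standardized) data table of variable group $j$, and $\lambda_1^{(j)}$ the first eigenvalue of the separate analysis of that group.

```latex
\tilde{X} \;=\; \left[\, \frac{1}{\sqrt{\lambda_1^{(1)}}}\, X_1 \;\Big|\; \frac{1}{\sqrt{\lambda_1^{(2)}}}\, X_2 \;\Big|\; \cdots \;\Big|\; \frac{1}{\sqrt{\lambda_1^{(J)}}}\, X_J \,\right]
```

A global principal component analysis is then performed on $\tilde{X}$. Because every group is rescaled so that its first eigenvalue equals 1, a group with many variables, such as the topic prevalences, cannot dominate the first dimension merely through its size.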

Variables included in the diachronic MFA

We include the following six types of variables as active variables in our diachronic MFA:

1. Topic prevalence: This set of variables measures the mean probability that texts per department are assigned to a given topic. The higher the topic prevalence per department, the more likely it is that all texts are related to the topic on average.

2. Academic capital – Ranking scores: These variables comprise the percentage of research assigned to the 1*, 2*, 3*, or 4* categories. In 2008, scores are measured unidimensionally. As the 2014 rankings are subdivided into environment, output, impact, and overall, we use the overall rankings to ensure comparability.

3. Diversity measures: These include averaged cosine similarity and Shannon entropy.

4. Cultural capital: We use total headcount and full-time equivalent (FTE) research-active staff (category A staff) as indicators of cultural capital. We do so because the selected staff are deemed worthy of serving as a token to gain a favorable RAE/REF outcome, to generate funding, and to conduct ‘world-class’ research.

5. Economic capital – United Kingdom: Here, we include funding acquired from research councils, industry, and different government bodies.

6. Economic capital – European Union (EU): We include funding acquired from EU institutions and various other EU sources. Although the REF 2014 provides a more detailed account (e.g. funding provided by industry located in the EU), we decided to merge the different sources for comparability with the RAE 2008 wave.

In addition to these active variables, we also projected department names as passive variables onto the spaces of 2008 and 2014. Descriptive statistics of all variables included in the models are provided in Appendix 4 and Supplemental Appendix D, the scree plots with the variance explained are presented in Appendix 5, and the position of each department on the dimensions extracted from the data is given in Appendix 6.
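The first variable set, topic prevalence, is described above as the mean probability with which a department's texts are assigned to a topic. A minimal sketch of this aggregation follows; it assumes that every submission carries a department label and a topic distribution of equal length, and the function name and toy data are hypothetical.

```python
from collections import defaultdict

def mean_topic_prevalence(doc_topics):
    """Average document-topic distributions per department.

    doc_topics: iterable of (department, topic_distribution) pairs.
    Returns a dict mapping each department to its mean topic vector.
    """
    sums = {}
    counts = defaultdict(int)
    for dept, dist in doc_topics:
        if dept not in sums:
            sums[dept] = [0.0] * len(dist)
        for t, p in enumerate(dist):
            sums[dept][t] += p
        counts[dept] += 1
    return {d: [s / counts[d] for s in sums[d]] for d in sums}

# Hypothetical submissions: two departments, two topics
toy_submissions = [
    ("Department A", [0.8, 0.2]),
    ("Department A", [0.4, 0.6]),
    ("Department B", [0.1, 0.9]),
]
prevalence = mean_topic_prevalence(toy_submissions)
```

The resulting department-by-topic matrix is the kind of table that could then enter an MFA as one group of metric variables alongside the capital indicators.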

Results

In the following, we report first the overall changes in topic structures and research diversity between the RAE 2008 and REF 2014 waves. Afterward, we report the findings of our diachronic MFA.

Changes in topic structure and research diversity 2008 and 2014

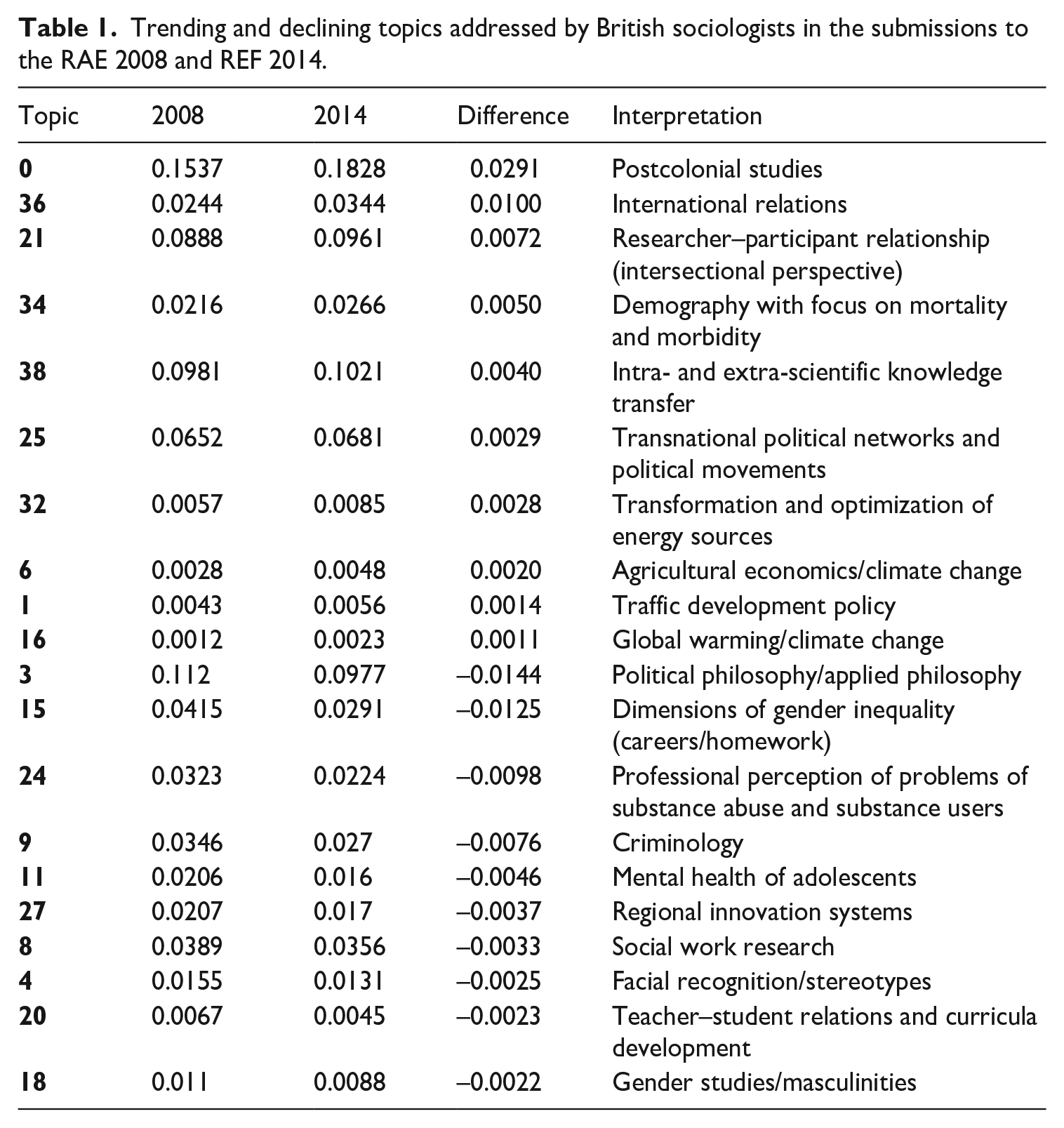

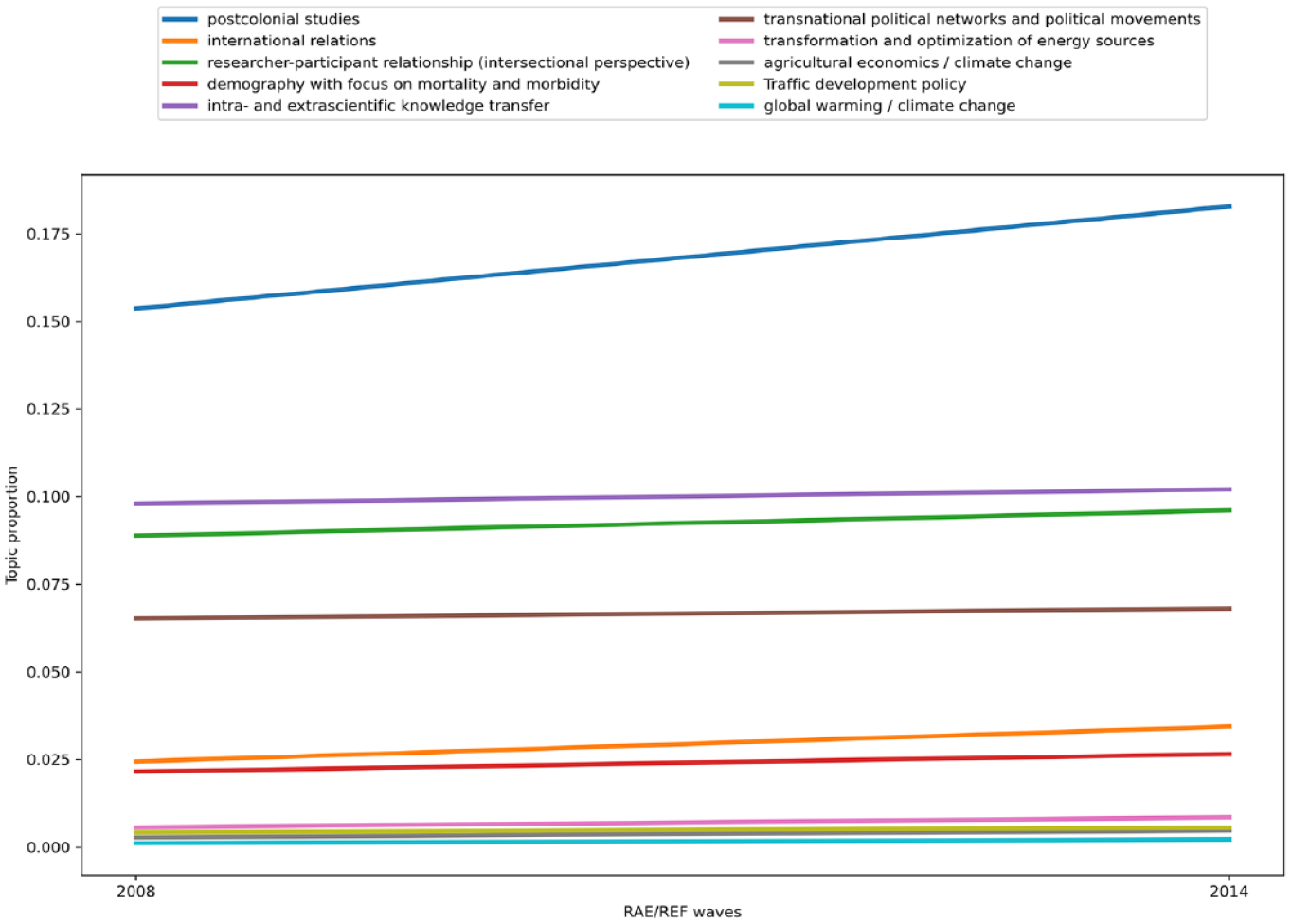

Beginning with the trending and declining topics, we witness a rise of topics (T hereafter) with focus on international politics, including postcolonial studies (T0), international relations (T36), and transnational political networks and political movements (T25). Research on climate change is also on the rise and includes transformation and optimization of energy sources (T32), agricultural economics/climate change (T6), and traffic development policy (T1). Additionally, we see that demography with focus on mortality and morbidity (T34) and the relation between researchers and their different audiences (comprises intra- and extra-scientific knowledge transfer, T38) gain in prevalence.

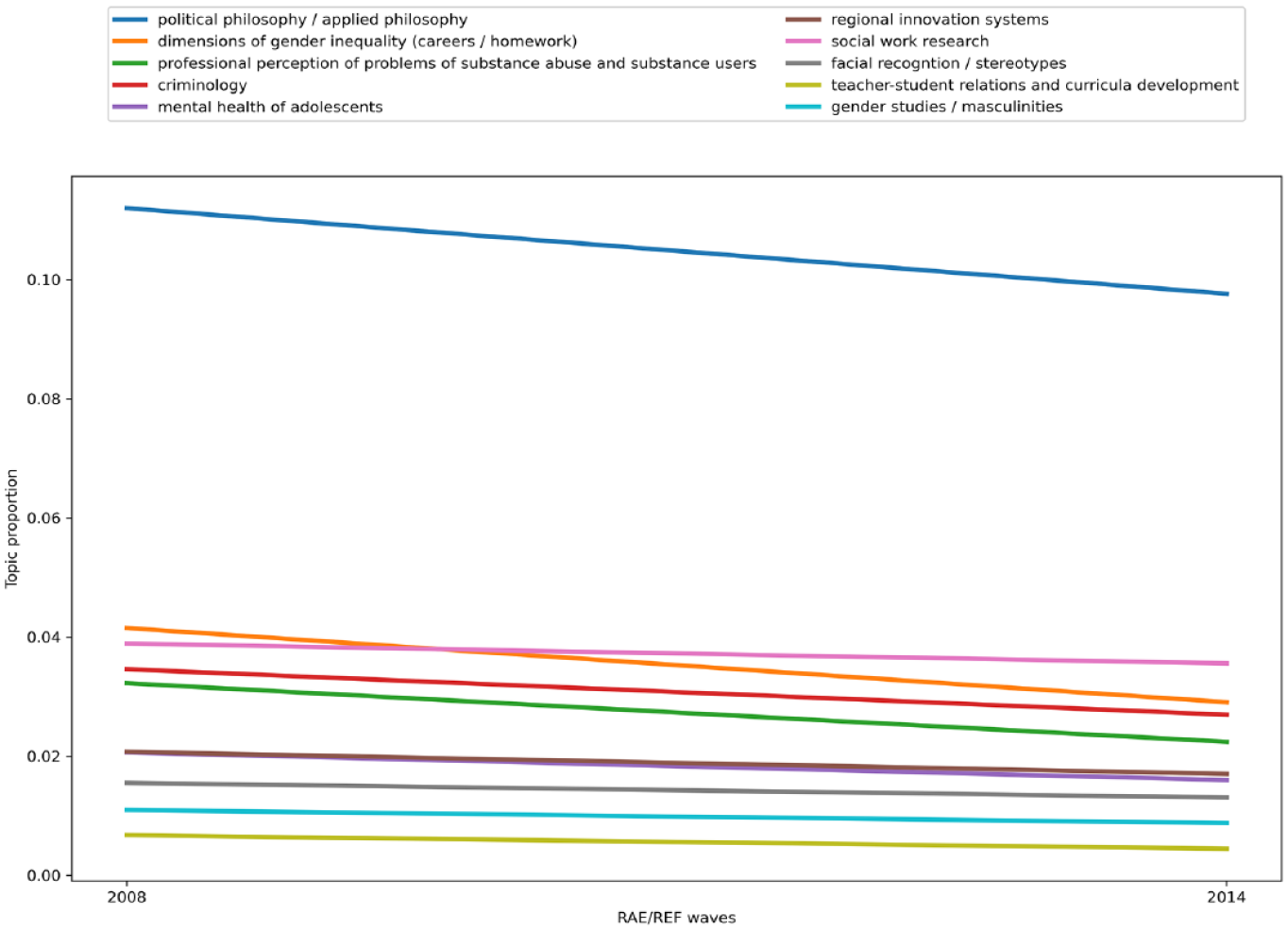

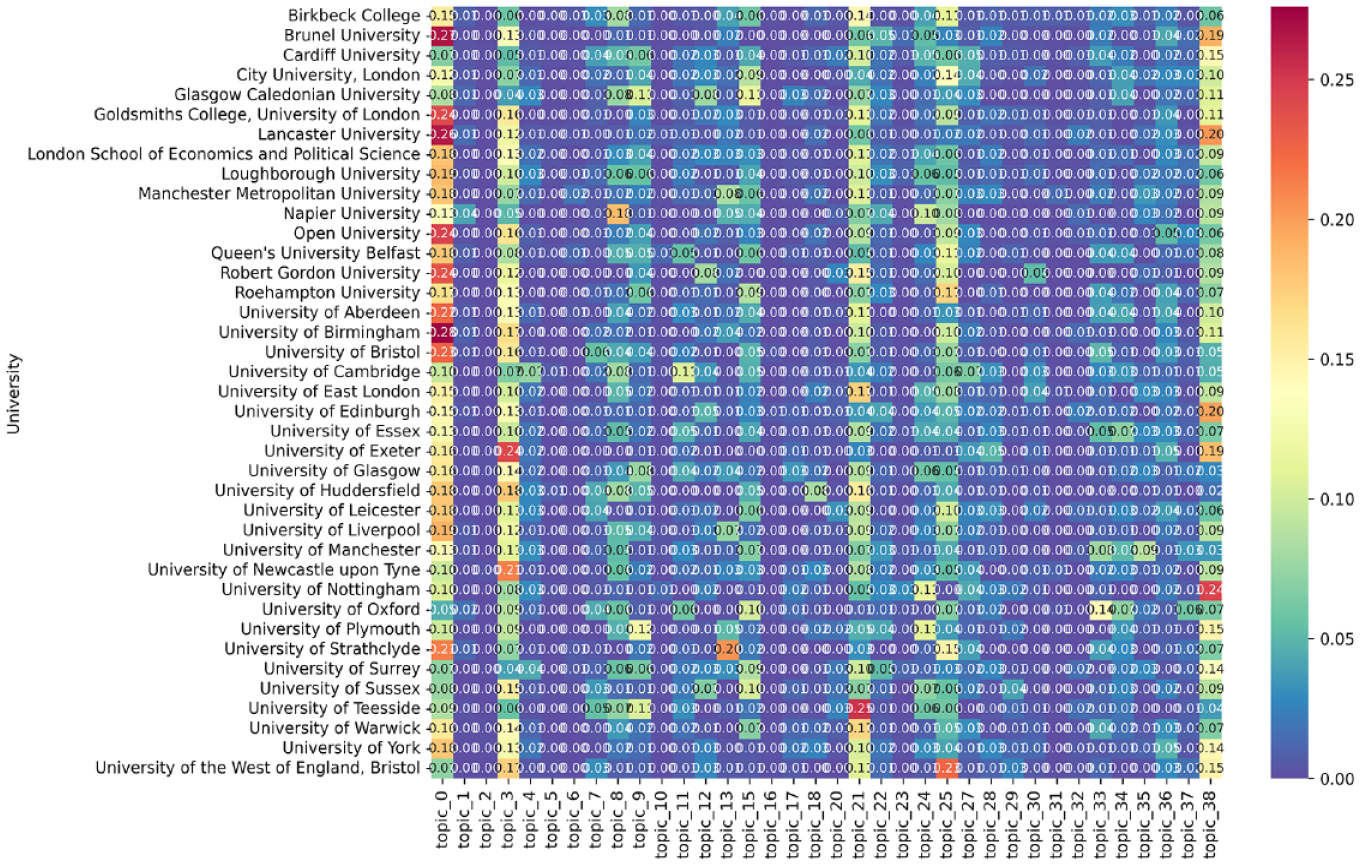

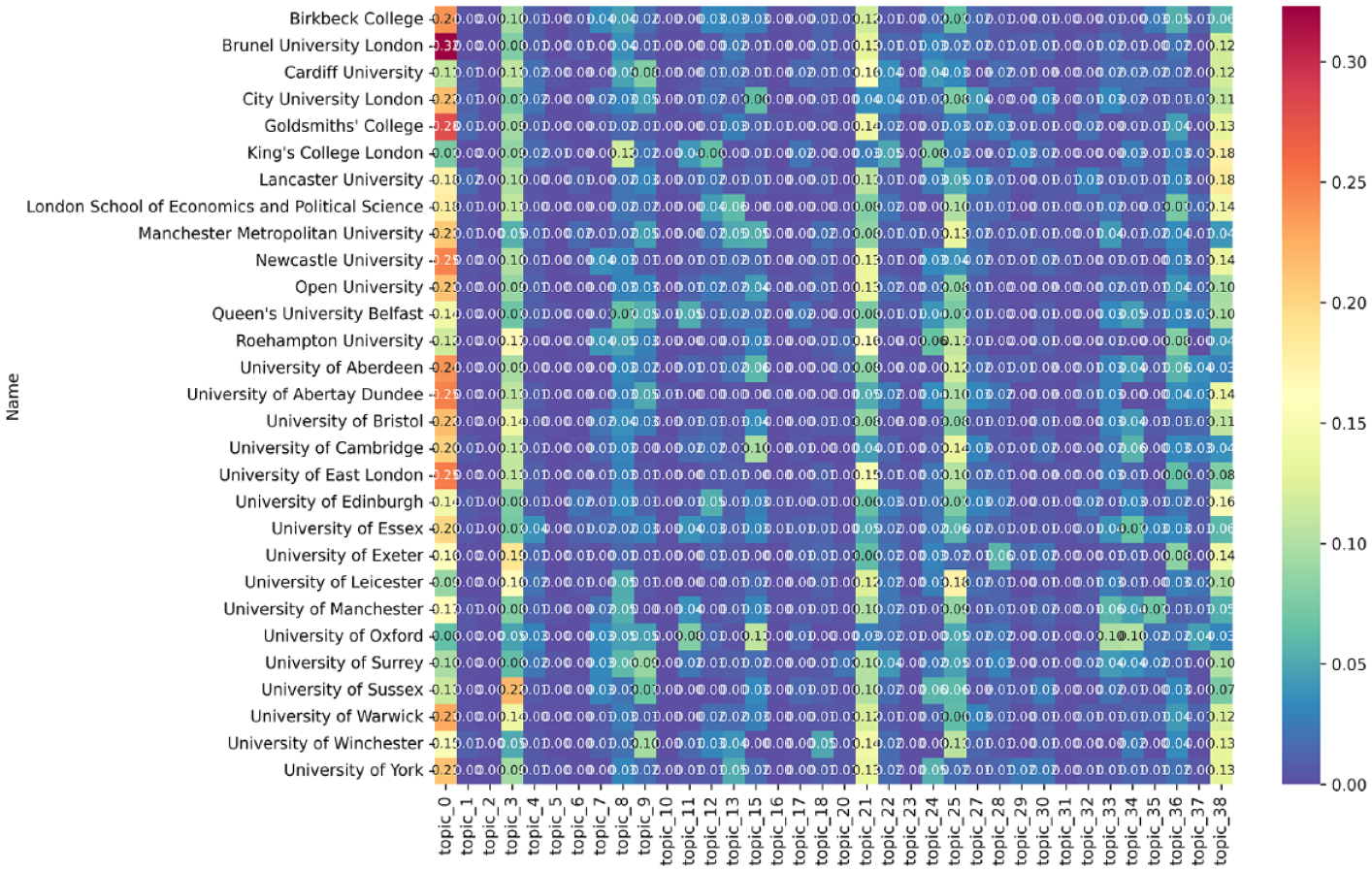

At the same time, topics related to social work research (T8) are in decline regarding the share of papers submitted to the REF in 2014 compared to the RAE in 2008. These include domestic social problems (criminology, T9; professional perception of problems of substance abuse and substance users, T24; mental health of adolescents, T11; teacher–student relations and curricula development, T20), and social inequality (dimensions of gender inequality (careers/homework), T15; gender studies/masculinities, T18). Furthermore, studies with a focus on single regions (regional innovation systems, T27), research on stereotypes (facial recognition/stereotypes, T4), and abstract topics such as political philosophy/applied philosophy (T3) are in decline. Table 1 and Figures 2 and 3 provide an overview of trending and declining topics.

Table 1. Trending and declining topics addressed by British sociologists in the submissions to the RAE 2008 and REF 2014.

Figure 2. Change in prevalence of trending topics.

Figure 3. Change in prevalence of declining topics.

Trending topics appear to be geared toward powerful elites (politics and ministries) and publics which must be reached to demonstrate that sociological research generates value for money and societal impact. Furthermore, rising topics are more ‘global’ in the sense that they are not restricted to regions, households, and some areas of social inequality which are linked to social work. In other words, trending topics aim to generate social impact on the elites, while declining topics are related to generating impact on socially disadvantaged people who face social problems such as crime and mental and physical health issues.

Turning to the distribution of topics among departments, we see that the two-level supervision of the RAE/REF led to a concentration of research submissions on postcolonial studies/cultural studies (T0), political philosophy/applied philosophy (T3), researcher–participant relationship (intersectional perspective) (T21), transnational political networks and political movements (T25), and intra- and extra-scientific knowledge transfer (T38). To a lesser extent, departments focus on topics like empirical educational research, social work research, criminology, public health/health risks, mental health of adolescents, financial markets, (sustainable) urban planning/relation between city and nature, dimensions of gender inequality (careers/homework) (T7–T15), professional perception of problems of substance abuse and substance users (T24), regional innovation systems (T27), and medical treatments of chronic diseases/survival analysis (T29), as well as organization studies, demography with focus on mortality and morbidity, sophisticated and novel multivariate models and their problems, international relations, treaties and conflicts, voting behavior/political participation (T33–T37).

The concentration on specific topics is slightly higher in 2014 compared to 2008. This is captured by the heatmaps (Figures 4 and 5). Here, brighter colors indicate higher prevalence of the topics per department. At the same time, we see outliers, for example, mental health of adolescents (T11) at Napier University, transnational political networks and political movements (T25) at the University of West England Bristol, (sustainable) urban planning/relation between city and nature (T13) at the University of Strathclyde in 2008, or organization studies (T33) at the University of Oxford. In 2014, we witness fewer outliers, for example, King’s College London on social work research (T8), the University of Oxford on organization studies (T33) and demography with focus on mortality and morbidity (T34), or the Universities of Cambridge and Oxford on dimensions of gender inequality (careers/homework) (T15). However, we see fluctuations in the topics which are covered to a lesser extent (T7–15, 24, 27, and 33–36). We therefore expect these fluctuations to make a difference in the association between quality profiles and topics covered by each department.

Figure 4. Heatmap of topic distribution between sociology departments, RAE 2008.

Figure 5. Heatmap of topic distribution between sociology departments, REF 2014.

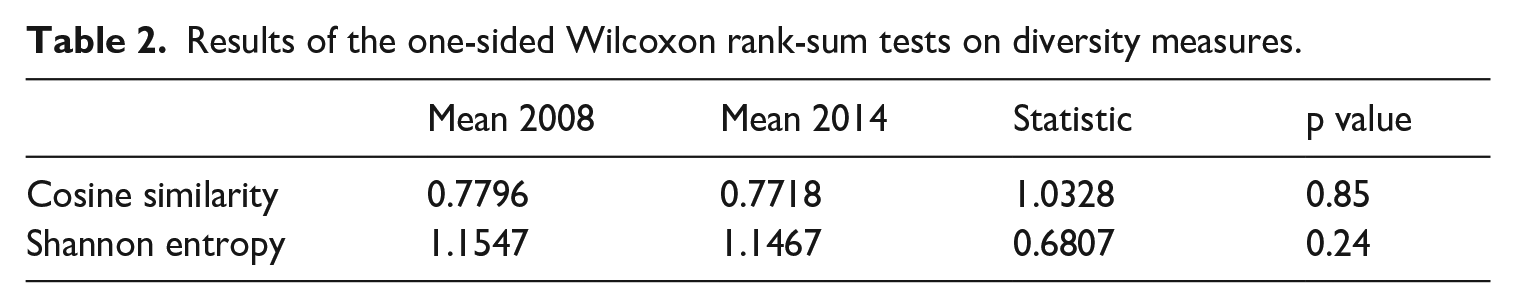

Let us now turn to the results of the Wilcoxon rank-sum tests on cosine similarity and Shannon entropy as measures of research diversity (see Table 2). Contrary to our expectations, there appears to be no decline of research diversity regarding the cosine similarity and Shannon entropy. The mean values of 2008 (0.7796) and 2014 (0.7718) show no significant decrease (p ≈ 0.85). However, the cosine similarity values are very high at both points in time, as cosine similarity can take values between 0 and 1. This indicates overall high levels of uniformity of research topics regarding the submissions of British sociology departments to the RAE 2008 and REF 2014. The Shannon entropy does not yield significant changes either. It drops slightly from 1.1547 to 1.1467 in the case of the departments which submitted output to the two-level supervision system of the RAE/REF. In general, lower values indicate higher concentrations of research topics within sociology departments. Nonetheless, we must consider that entropy was already very low regarding the submissions in 2008 since the maximum entropy is log2(k) = 5.3219 for k = 40 topics.
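The entropy ceiling quoted above can be verified with a short worked calculation. It assumes base-2 logarithms and a uniform distribution over all k = 40 topics; the normalized values are derived here from the reported means and are not reported in the study itself.

```latex
H(p) = -\sum_{t=1}^{k} p_t \log_2 p_t \;\le\; \log_2 k,
\qquad \text{with equality if and only if } p_t = \tfrac{1}{k} \text{ for all } t.

\text{For } k = 40:\quad
H_{\max} = \log_2 40 = 3 + \log_2 5 \approx 5.3219.

\frac{1.1547}{5.3219} \approx 0.217,
\qquad
\frac{1.1467}{5.3219} \approx 0.215.
```

Under these assumptions, the mean entropy in both waves amounts to roughly 22% of the theoretical maximum, which is consistent with the reading that entropy was already very low in 2008.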

Table 2. Results of the one-sided Wilcoxon rank-sum tests on diversity measures.

The structure of the field in 2008

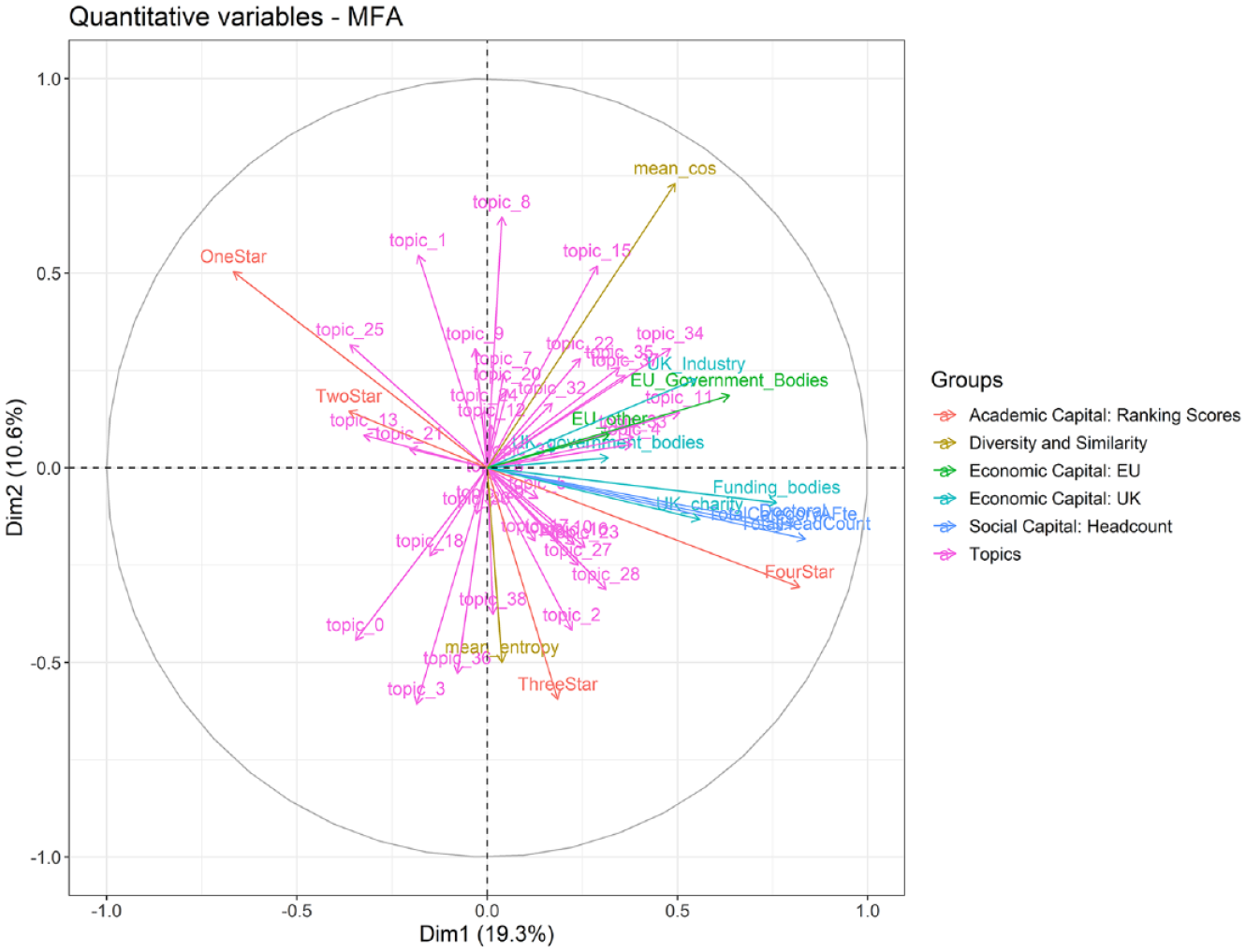

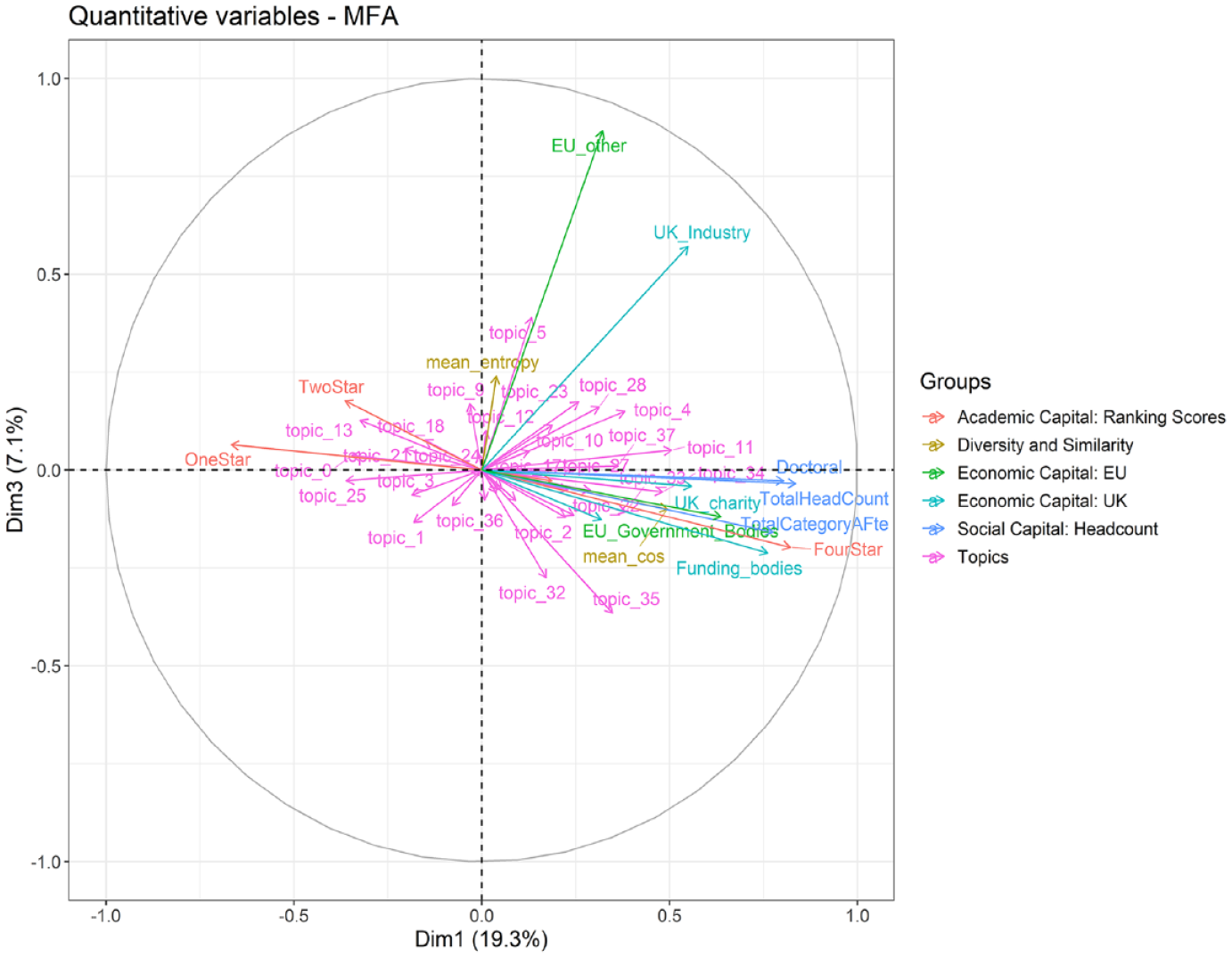

Let us now proceed with the results of the MFA conducted on the topic distribution and metadata of the RAE 2008 wave. The loadings and positions of variables included in the models are depicted for axes 1 and 2 in Figure 6, and for axes 1 and 3 in Figure 7.

Figure 6. MFA 2008, dimensions 1 and 2.

Figure 7. MFA 2008, dimensions 1 and 3.

Axis 1 explains 19.3% of the variance present in our dataset and indicates a difference between symbolically consecrated sociology departments with abundance of economic and cultural capital on the right, and departments with low volumes of capital and low-ranking scores on the left. Sociology departments on the right have many research-active scholars, graduates, as well as UK- and EU-based funding at their disposal. Furthermore, these departments are associated with 4* ratings for their submissions.

Regarding the topics covered, we see that departments with high levels of consecration and volumes of economic and cultural capital focus on demography addressing mortality and morbidity (T34), organization studies (T33), facial recognition/stereotypes (T4), sophisticated and novel multivariate models and their problems (T35), and, to a lesser extent, public health/health risks (T10). Interestingly, the mean cosine similarity is associated with this side of dimension 1, meaning that research submitted to the RAE 2008 by high-ranking departments tends to be more similar in topics compared to departments with lower-ranking scores. Furthermore, the topics covered show an influence of psychological research, business studies, economics, political science, and statistics on the research submitted.

On the left-hand side, we witness the highest shares of research associated with submissions scored 1* or 2* in the RAE 2008. Associated departments are deprived of economic, cultural, and academic capital. Scholars situated in these departments conduct research on (sustainable) urban planning/relation between city and nature (T13), transnational political networks and political movements (T25), postcolonial studies/cultural studies (T0), researcher–participant relationship (intersectional perspective) (T21), and traffic development policy (T1). In contrast to the topics of the well-endowed, high-ranking departments, we see a tendency to focus on regionally constrained qualitative research, which is why we interpret the first dimension as opposition between (relatively) uniform, symbolically dominant, elite-aligned quantitative research endowed with high volumes of capital and qualitative research with local focus conducted at dominated, capital-deprived departments.

Axis 2 explains 10.6% of the variance present in the 2008 RAE data. It spans an opposition between relatively uniform research submitted by rather lower-tier departments at the top of the graph and relatively diverse research submitted by 3* departments at the bottom. Topic-wise, social work research (T8), traffic development policy (T1), dimensions of gender inequality (careers/homework) (T15), and to a lesser extent criminology (T9) are associated with the rather uniform research at the top of the axis. At the bottom, we see research on political philosophy/applied philosophy (T3), international relations, treaties and conflicts (T36), postcolonial studies/cultural studies (T0), and agricultural sciences (T2). Overall, we interpret the opposition seen in dimension 2 as low-tier research on disadvantaged groups versus relatively diverse research with a focus on political problems stemming from the British colonial past.

Finally, axis 3 shows a link between industry-funded research (EU-other and UK-industry) and neuroscience-infused research (neurobiology, T5; health-related behavior/planned behavior, T23; cognitive sciences/information processing, T28), which is probably associated with social engineering and nudging. At the bottom, we observe research on statistical methods and their problems (T35), and transformation of energy sources (T32). This can be interpreted as quantitative research on energy problems and the incipient impact of climate change.

The structure of the field in 2014

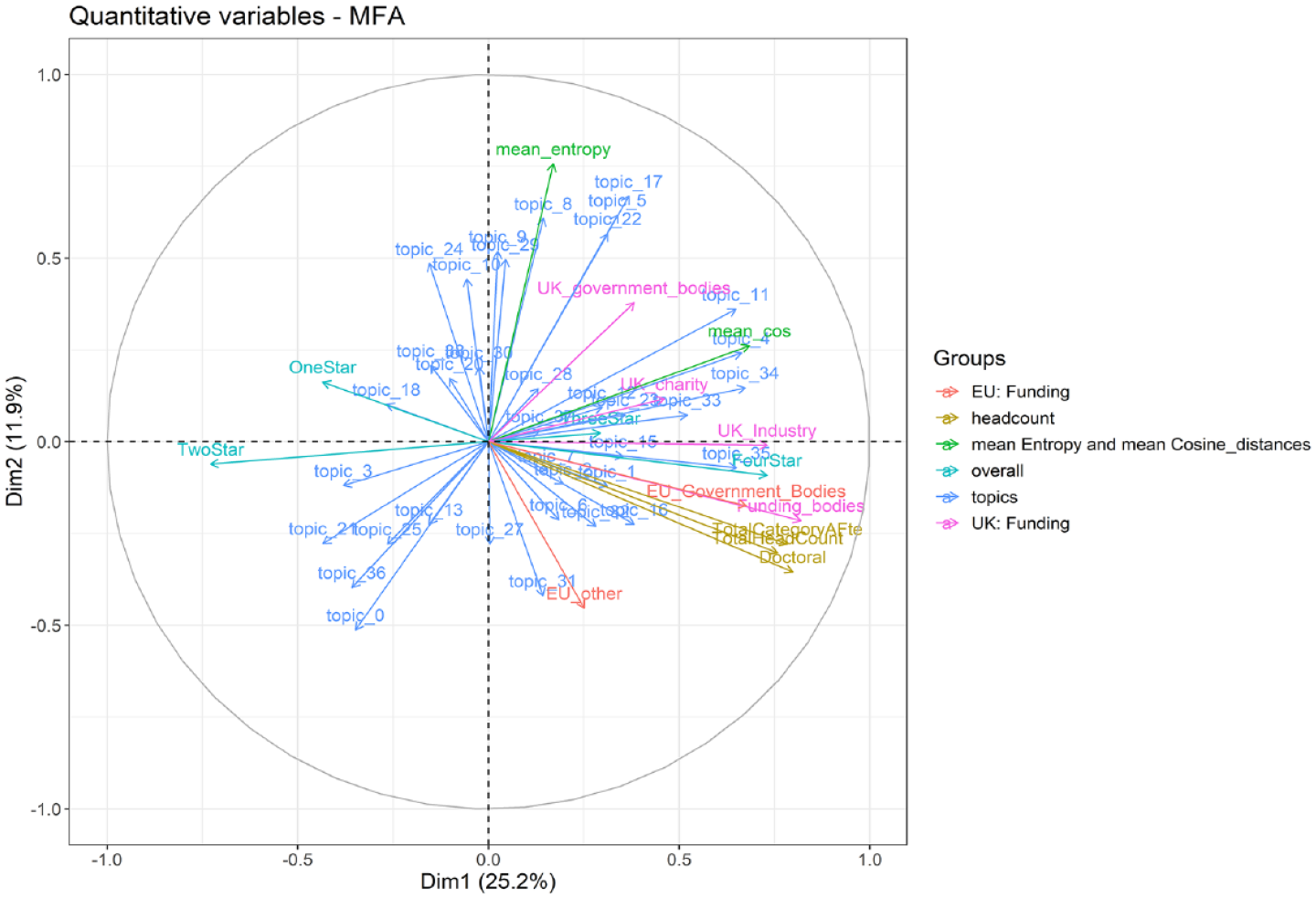

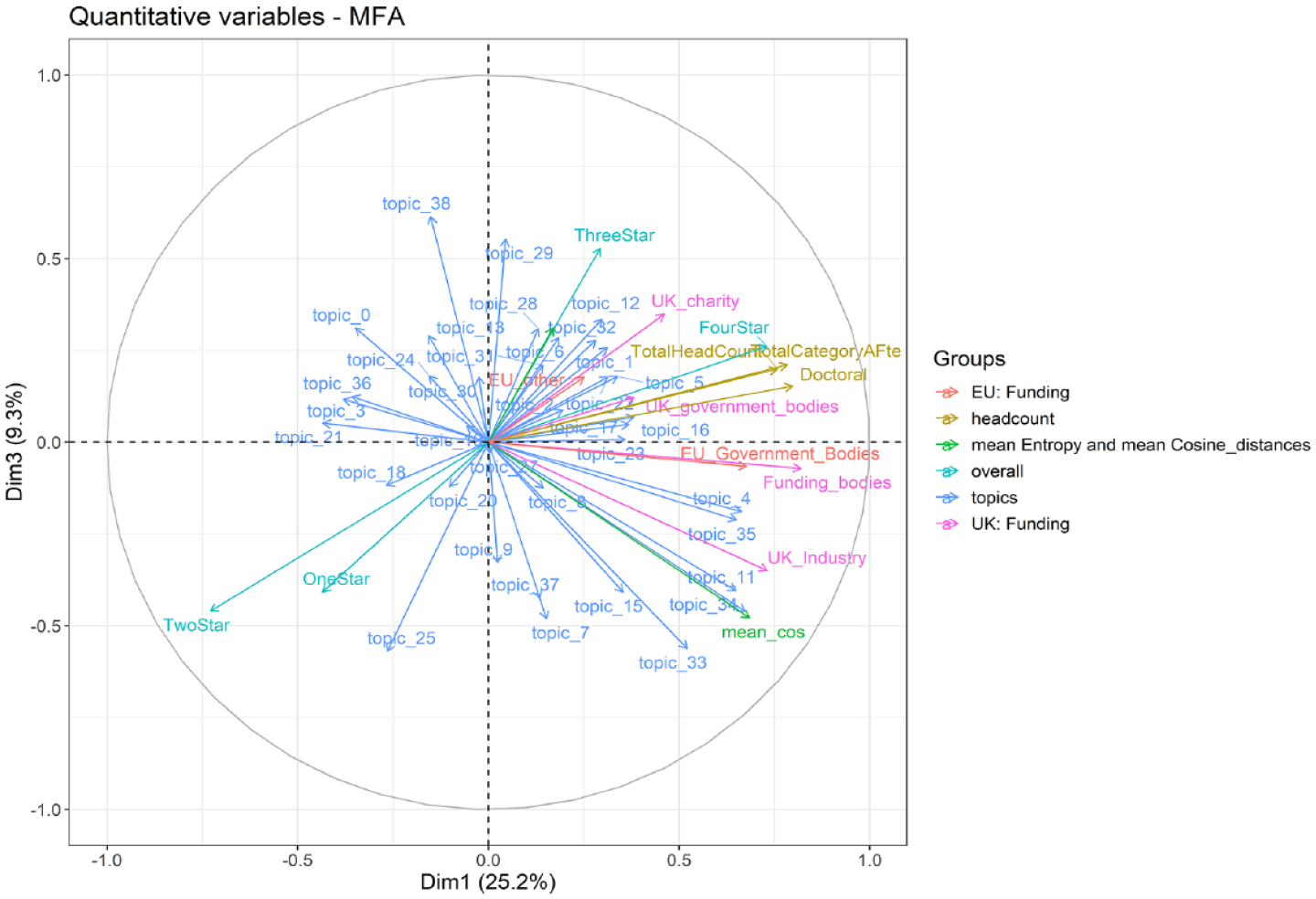

Now let us continue with the results of the 2014 REF wave. Figures 8 and 9 depict the position of variables included in our model for axes 1 and 2, and 1 and 3, respectively.

Figure 8. MFA 2014, dimensions 1 and 2.

Figure 9. MFA 2014, dimensions 1 and 3.

Dimension 1 now explains 25.2% of the variance instead of the 19.3% in 2008. Basically, we see the same distinction between symbolically dominant topics, and economically and academically well-endowed departments, on the one hand, and dominated departments and topics, on the other. Yet, there are nuanced differences regarding the type of economic capital associated with the first dimension as well as the topics covered by the respective departments.

On the right-hand side, we observe a stronger association of economic capital provided by UK industry compared to the RAE 2008 wave. This is interesting, insofar as attaining grants provided by actors situated in the economic field was a structuring principle of the third dimension in 2008. Topic-wise, the focus on research on facial recognition/stereotypes (T4), sophisticated and novel multivariate models and their problems (T35), demography with focus on mortality and morbidity (T34), and organization studies (T33) shows some continuity between the consecrated departments of the RAE 2008 and REF 2014. Yet, we see that research on mental health of adolescents (T11) replaced voting behavior/political participation (T37). Publications submitted to the REF 2014 by sociology departments located on this side of axis 1 are again characterized by high levels of cosine similarity.

Another continuity is that we find more submissions ranked as 1* or 2* on the left-hand side of axis 1. These submissions focus on postcolonial studies/cultural studies (T0), transnational political networks and political movements (T25), and researcher–participant relationships (intersectional perspective) (T21), which were also situated at this end of dimension 1 in 2008. We also recognize a larger fluctuation in topics compared to the opposite side of axis 1, as political philosophy/applied philosophy (T3), international relations, treaties, and conflicts (T36), and gender studies/masculinities (T18) are now much more strongly related to low levels of consecration. Overall, we characterize the opposition incorporated in axis 1 as symbolically dominant, materially and symbolically well-endowed research with quantitative focus on health, demography, and stereotypes versus symbolically dominated, qualitative research with a homology between disadvantaged scholars and disadvantaged social groups. It appears that, besides the volumes of capital, the main distinction is now the coverage of quantitative, health-related topics versus the philosophical debate on gender, international relations, and the colonial heritage of the United Kingdom.

Axis 2 explains 11.9% of the variance present in the REF 2014 dataset compared to 10.6% of the variance present in 2008, and it changed even more drastically than axis 1. At the top, we see research with high levels of diversity expressed by relatively high entropy values. Diverse research tends to be associated with UK governmental bodies (ministries or the HEFCE). Thematically, only social work research (T8) remained characteristic of this side of axis 2. At the same time, traffic development policy (T1) and dimensions of gender inequality (careers/homework) (T15) were replaced by neurobiology (T5), management/organizational performance (T22), professional perception of problems of substance abuse and substance users (T24), and public health/health risks (T10). Here, we observe that topics associated with social engineering in dimension 3 in the RAE 2008 are fused with studies on management, public health, and social problems. They are now one of the structuring principles of dimension 2.

At the bottom, we see research funded by diverse EU actors, including companies and philanthropy. Departments located on this side of axis 2 show high output of PhD graduates, which was more closely associated with FTE staff on dimension 1 in 2008. Research output submitted to the REF 2014 and located on this side of axis 2 still focuses on postcolonial studies/cultural studies (T0) and international relations, treaties and conflicts (T36), but is now fused with ecosystems/climate change (T31) and regional innovation systems (T27) instead of agricultural sciences (T2) and political philosophy/applied philosophy (T3). Overall, axis 2 unveils an opposition between social engineering in the United Kingdom with regard to crime, health, and economic issues and European-funded, globally oriented research on the impact of climate change on societies, international relations, and regional innovation systems.

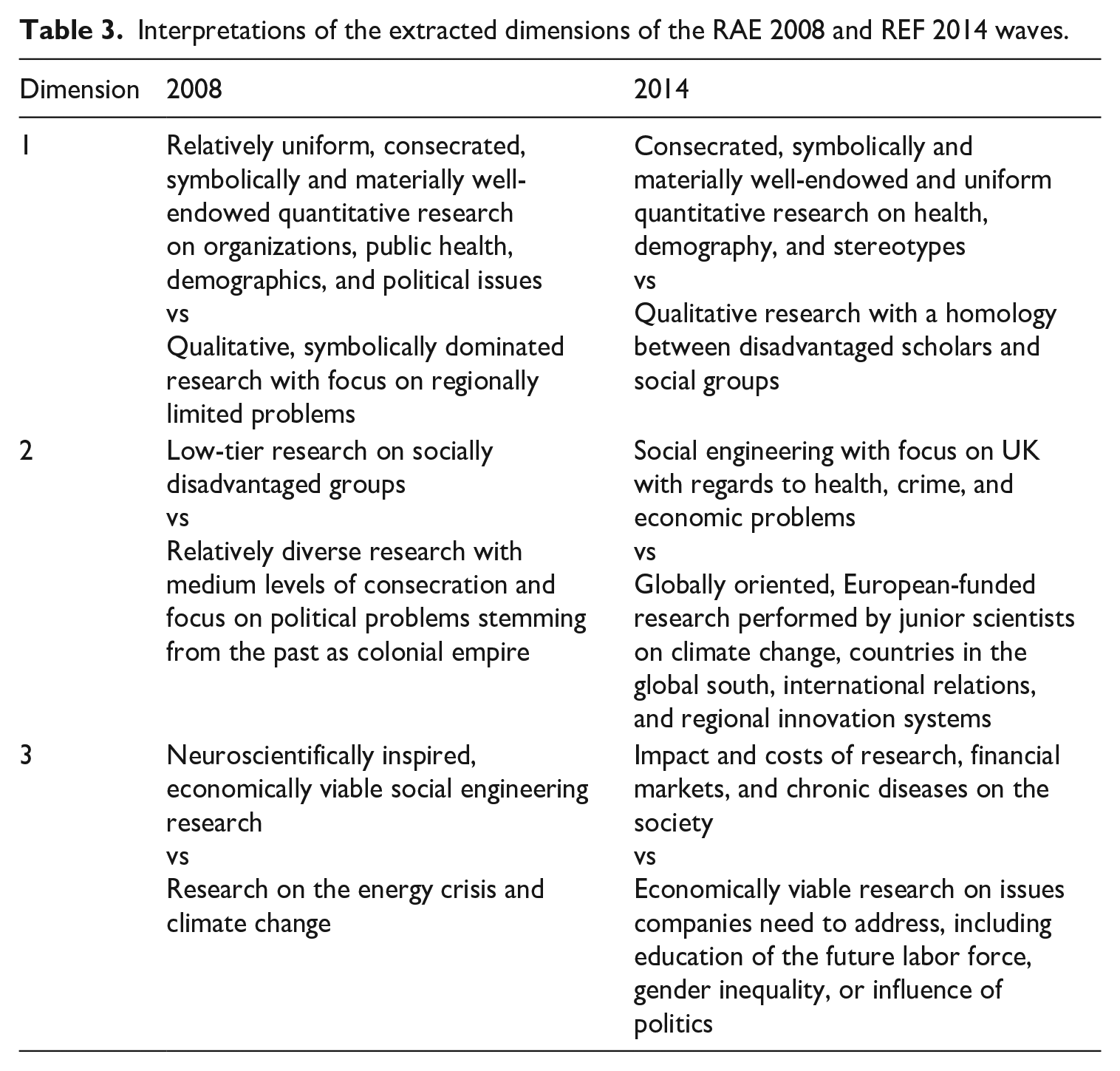

The third dimension covers 9.3% of the variance present in the REF 2014 dataset and depicts the difference between relatively highly consecrated research (3*) funded by charities and relatively uniform research with low levels of consecration (1* and 2*), endowed with funding provided by UK industry. None of the topics characteristic of the 2008 RAE wave loads strongly on axis 3 in the 2014 REF wave. We now witness an opposition between research on intra- and extra-scientific knowledge transfer (T38), medical treatments of chronic diseases/survival analysis (T29), and financial markets (T12) at the top of axis 3, whereas organization studies (T33), mental health of adolescents (T11), transnational political networks and political movements (T25), empirical educational research (T7), dimensions of gender inequality (careers/homework) (T15), and political philosophy/applied philosophy (T3) now load at the bottom. This makes sense, insofar as one pole describes research on the societal impact of research, chronic medical conditions, and financial markets, whereas the other pole entails economically viable research on issues companies might need to address. This includes education, gender inequality, and the relations to the political sphere, as well as ethical consequences of decisions made in the companies, as the presence of T3 suggests. Table 3 summarizes and contrasts the findings of the MFA conducted on the RAE 2008 and REF 2014 data.

Table 3. Interpretations of the extracted dimensions of the RAE 2008 and REF 2014 waves.

Discussion

Our findings reveal a methodological divide between quantitative and qualitative methods (Schwemmer and Wieczorek, 2020) which is linked to the levels of consecration incorporated in the quality profiles in 2008 and 2014. We see that quantitative research and associated topics, mainly provided by departments like Oxford, dominate the RAE 2008 and REF 2014 quality scores. At the same time, these departments exhibit the lowest levels of internal diversity, meaning they are highly specialized, and provide the role model for conducting ‘REFable’ research. Regarding Campbell’s law (Campbell, 1976) and its relation to the two-level supervision and power relations, we recognize that research conducted by these departments does not abandon topics not covered by those criteria (see Gruber, 2014; Hazelkorn, 2011), but actively defines those criteria. These, in turn, are known by scholars and heads of departments so that they can rely on their ‘outstanding’ research, while other departments must tailor their submissions, and the way they present their research, to the criteria of RAE/REF expert panels, in line with Kidd et al. (2021) and Thorpe et al. (2018). At the same time, symbolically dominated departments must probe what kind of research and research topics meet the taste of the RAE/REF expert panels and are thus funded by them. Without funding, scholars affiliated with these departments must rely on a strategy called nimble knowledge production (Hoffman, 2020), while simultaneously aligning themselves with the dominant taste of the editors of high-impact journals and of expert panels. This might yield the opportunity of creative deviance (Mainemelis, 2010), but, in fact, creative endeavors with high levels of novelty are snuffed out by a lack of economic capital and symbolic resources (especially regarding the possibility to set the standards of ‘good’ and useful research). This is in line with our argument on the two-level supervision and the subsequent expectations formulated in section ‘The symbolic power and rule of research assessment bodies and its interaction with peer review practices as two-level supervision of research’.

This finding is mirrored in the developments of the topic dimensions. In dimension 1, topics relating to the taste of the dominant actors in the field of power, global, quantitative approaches, and economically well-endowed research are consecrated, whereas locally embedded research is marginalized. This is associated with a shift from politics and studies on organization to health and demography, as well as psychologically infused research on stereotypes in topic dimension 1. At the same time, we see topics related to regionally located (social) problems moving from dimension 1 to dimension 2 where they fuse with social engineering. The local is now combined with high degrees of entropy and is contrasted by focusing on topics like Europe or global dimensions. This tells us that either the departments must probe into these topics or that topics focusing on social problems are increasingly outsourced from dominant to dominated departments.

These departments have low chances of acquiring funding from the HEFCE, so they possibly seek to secure grants provided by transnational philanthropy (e.g. the Bill & Melinda Gates Foundation), which also has a relatively narrow focus of funded topics. At the same time, departments with research on more local topics seek to aid communities and relatively dominated groups in the United Kingdom, which mirrors the topic division between departments as uncovered by Warczok and Beyer (2021) for US sociology and Schmitz et al. (2019) for German sociology. This could be interpreted as a division of labor within the academic field of the United Kingdom, but is, at the same time, a sign that many departments might not have the resources to conduct large-scale research like Oxford. Again, departments and scholars comply with demands to ‘serve’ the society incorporated in the REF and thus indicate that Campbell’s law is at work. Yet, dominated departments are prevented from effectively doing so by their lack of economic, cultural, scientific, and administrative capital.

Furthermore, the relevance of economic capital provided by companies highlights the need and opportunity to diversify funding sources of dominant sociology departments. For many scholars, the chances of getting funded by the REF could be too low, so they adjust to topics which are of interest to companies. In this respect, they exchange one situation in which they comply with the mechanism described by Campbell’s law (REF criteria) for another more suited to the economic field (economically viable output). As such heteronomous research gets increasingly consecrated, the two-level supervision of the RAE/REF provides a signal to dominated departments that it is worthwhile to adjust research output to business actors situated in the field of power. In this sense, the symbolic power of the RAE/REF leads to excessive adaptation of departments and submissions to the heteronomous pole of the academic field and to the autonomous pole with the editors and peer reviewers of renowned journals led by the academic elite.

Moreover, we witness the decoupling of departments with high levels of cultural capital embodied in the number of FTE staff and PhD output. This could be read as a strategy to produce REFable scholars. However, these are also possible knowledge workers who might be transferred to organizations in different fields and then become stakeholders of the experts placed in the expert panels. This might also be a strategy to circumvent the two-level supervision.

The panelists’ taste for causality and directly measurable impact (Watermeyer and Hedgecoe, 2016) is reflected in the association between quantitative and elite-related topics and the consecration of research in terms of 4*-RAE/REF ratings. These panels bring abstract research findings into a vertical order and, by doing so, reinforce the symbolic hierarchy and symbolic violence already in place. They also wield control over the intrusion of methods/topics related to different disciplines into sociology. Against this backdrop, we see that different departments (1*–3*) seek to emulate excellence provided by 4* departments.

Therefore, we suggest that 4* departments have a fourfold advantage: (a) They set the rules of the academic discourse, what is to be funded, and indirectly how departments should adapt to what is expected of them. These developments (b) enforce a bias against novelty, insofar as not only do topics related to high-impact journals get consecrated, but (c) these topics must also be consecrated by the experts responsible for establishing the quality profiles. In this sense, we expect (d) increasing pressure on researchers at mid- and lower-ranking universities.

Conclusion

The aim of our article was to investigate the impact of the two-level supervision imposed by the RAE/REF on the academic field using UK sociology as an example. We focused on sociology, as it is a deeply divided discipline with disputes between different schools of thought and departments, rendering it particularly vulnerable to external influence. We applied a mixed-methods framework and combined LDA with a diachronic MFA to analyze the interplay between the topic structure and the capital distribution among the sociology departments in the RAE 2008 and REF 2014. Our findings suggest that quantitative approaches geared toward the needs of actors in the field of power get consecrated by the highest quality scores (4*), which is in line with expectations a, c, and e in section ‘Implications for the empirical investigation’. These include organizations, voting behavior, public health, and demography in 2008, and health, demography, and research on stereotypes in 2014. Research aligned with qualitative approaches, the aftermath of the British Empire, or regionally limited studies yields low levels of consecration. Overall, research diversity according to our measures was very low at both points in time, but especially so at departments with the highest quality scores.

So, has sociological research become less diverse between the RAE 2008 and REF 2014? The answer clearly is no, but this is not surprising given the already low levels of research diversity in 2008. We rather find the need to adapt to changing stakeholder demands as outlined in expectation d in section ‘Implications for the empirical investigation’. If scholars are backed by well-endowed and well-staffed departments and use easily reproducible, quantitative methods, then it is most likely that their departments will get funded (gaining 3* or 4* scores), which is in line with expectations a, b, and e. Yet it also appears that being funded by the REF is getting riskier, as the diversification of funding sources in 2014, especially for dominant departments but also to a lesser degree for mid-tier departments, suggests. The other interpretation in line with HFT is that actors situated in the economic field have become more powerful in the field of power and were thus able to impose their needs on the second level of the two-level supervision, or that sociological research with the abovementioned focus has become more useful to these actors. This is associated with the devaluation of research on aspects of social inequality (e.g. related to social work or social problems). Here, a homology is seen between the departments deprived of social, economic, and symbolic capital and their research subjects. This is nothing less than a reinforcement of the class structure of the academic field in the United Kingdom and in society at large.

As always, our study is subject to a few limitations. First, it lacks comparability. We only investigated the impact of two-level supervision on sociology, using only two waves of the RAE/REF. As the results of the REF 2021 were published only recently and could not be included in the study at hand, future studies should include at least this wave and different research subjects, for example, psychology, economics, or physics. This research strategy could yield a foil against which the diversity measures could be compared. Second, we did not control for who was selected as REFable and thus might underestimate the real diversity of sociological research conducted at UK departments. However, this would only underscore how narrowly defined the eligibility for research under the two-level supervision in the United Kingdom is. Another reason for underestimation might be the use of LDA, which aims at maximizing the probability of a text being assigned to a limited number of topics. We therefore suggest that different topic modeling techniques such as Structural Topic Modeling or Latent Semantic Analysis should be used for comparison.

Nevertheless, our approach demonstrates that the combination of computational methods, methods provided by the canon of sociological research, and relational theory yields promising lines of research to focus on the discursive-symbolic structures, power structures, and social change at the same time. Additionally, we advise scholars to include qualitative methods, such as interviews with researchers, department heads, expert panelists, and personnel employed at funding bodies, in their research endeavors. These people could help to uncover different aspects of the habitus and practices which would substantiate the relation between the different levels of supervision, possibilities of adaptation, and ultimately the rationale for focusing on more or less novel lines of (more or less diverse) research.

Furthermore, future studies should account for the Journal Impact Factor of the submissions, as well as the subject areas covered by these journals. This research strategy would allow us to assess how interdisciplinary each department is, and if so, what kind of interdisciplinarity it pursues. For example, as in the German case (Schmitz et al., 2019), quantitative departments may establish interdisciplinarity through their methods with economics, political science, or computer science, while qualitative departments tend to cooperate with the humanities and linguistics. Finally, we suggest collecting all output by sociologists (or other disciplines), recalculating the diversity measures over all publications, and comparing them to the submissions in the RAE/REF. This procedure would allow us to check the difference between all research performed and the selected, thus visible, part of research submitted to the expert panels. Only by doing so will we be able to grasp the direct and indirect symbolic effects of performance measurements, self-optimization, and gaming the system strategies on the knowledge-producing and problem-solving capacities of academic disciplines, and the research diversity covered by different disciplines. Applying the framework of two-level supervision, we might also uncover possible negative effects on the next generation of scholars (Unger et al., 2022), who are key to upholding research diversity and to providing solutions to pressing technological and societal problems.

Supplemental Material

Supplemental material, sj-pdf-1-ssi-10.1177_05390184231158210 for All power to the reviewers: British sociology under two-level supervision of the Research Excellence Framework by Oliver Wieczorek, Richard Münch and Daniel Schubert in Social Science Information

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The German ministry of education and research under grant no. 01PY13010 (Non-intended effects of performance assessment in science).

Supplemental material

Supplemental material for this article is available online.
