Abstract

The advancement of driving automation systems is transforming surface transportation. Understanding how drivers develop trust in these systems is crucial for their effective implementation. This study investigated the influence of drivers’ initial expectations and the consistency of system errors on trust in a Level-3 automated driving system (ADS). Participants read descriptions that characterized system capabilities as either high or low, after which their initial levels of expectation were assessed. Error patterns (no, consistent, inconsistent errors) were manipulated across three simulated drives. Subjective trust ratings after each drive and reaction time to takeover requests (TORs) were measured. Results showed that initial expectations did not significantly impact overall trust or TOR performance; instead, trust was adjusted based on the system’s actual performance. These findings suggest a greater influence of direct experience than of drivers’ preconceived expectations. The perceived predictability of the system partially mediated the effect of error consistency on trust, and inconsistent errors worsened TOR performance. The study highlights the need for predictable ADS designs and driver-system interactions to enhance trust and road safety.

In recent years, driving automation systems have advanced significantly, promising to transform the landscape of surface transportation. These systems, which assist drivers by consistently managing various aspects of the dynamic driving task (

Trust in Driving Automation Systems

Trust in automation is “the attitude that an agent will help achieve an individual’s goals in a situation characterized by uncertainty and vulnerability” (

Trust is a dynamic concept. The initial level of the drivers’ trust can be quickly influenced by initial experiences with automation systems (

The Society of Automotive Engineers categorizes levels of automation in driving automation systems from 0 to 5: Level 0 is no automation, which requires the human to perform all driving tasks; Level 5 involves full automation without human intervention (

Driver Expectations

Drivers’ expectations originate from their mental models of the driving automation systems. A mental model of a system is a person’s internal representation of that system, including its purpose, structure, and functioning (

Mental models, and thus expectations, evolve with experience. A recent on-road study (

Setting drivers’ expectations of the driving automation systems may have an impact on trust. Previous research has shaped participants’ expectations through instructions on system capabilities before interaction with the driving systems, revealing mixed results on how expectations influenced trust in systems. Beggiato and Krems (

Because of the unclear relationship between mental models and trust, we grounded our approach in the expectation-confirmation theory (ECT). ECT, initially proposed to explain consumer satisfaction, posits that products meeting or surpassing prepurchase expectations lead to satisfaction, while unmet expectations result in dissatisfaction (

Automation Errors

Another influential factor in trust is automation errors. Studies showed that failures to perform actions or takeover requests (TORs) requiring manual intervention reduced trust (e.g.,

An important yet under-researched aspect of automation errors is whether they occur for consistent reasons. Muir (

Current Study

Building on earlier work from this research (

At the beginning of the experiment, participants’ expectations were set at either high or low through descriptions of the system’s capabilities. Error patterns were manipulated across three simulated driving scenarios: no error (baseline condition without errors), consistent errors (errors always occurred for the same reason), and inconsistent errors (errors varied across drives). The errors were prompted by TORs. Participants’ trust was measured through subjective reports after each drive, and their reaction time to the TORs (TOR time) was recorded in the two error conditions. The following hypotheses were formed based on prior literature:

Hypothesis 1.1

The low-expectation group would have higher subjective trust than the high-expectation group because the system’s actual performance would exceed their low initial expectations.

Hypothesis 1.2

The no-error group would have the highest subjective trust among the three error-pattern conditions; the inconsistent-error condition would have lower subjective trust than the consistent-error condition because of unpredictability.

Hypothesis 2.1

Participants in the low-expectation group would have a shorter overall TOR time than the high-expectation group because they would expect the system to fail more frequently and would therefore be prepared to regain control more swiftly.

Hypothesis 2.2

The inconsistent-error condition would result in a longer overall TOR time than the consistent-error condition because unpredictability would delay participants’ takeover responses.

Method

Participants

A power analysis using an alpha level of .05 and an effect size of .25 suggested a sample size of 158 for a power of .80. Considering the counterbalancing design and the feasibility of data collection, we adjusted the planned sample to 144. We recruited 150 undergraduate students from Rice University, offering one research credit for their participation. After excluding invalid data (see the section “Data Exclusion” for the criteria and process), the final sample retained 138 participants, which falls within the acceptable range reported in comparable studies (e.g.,

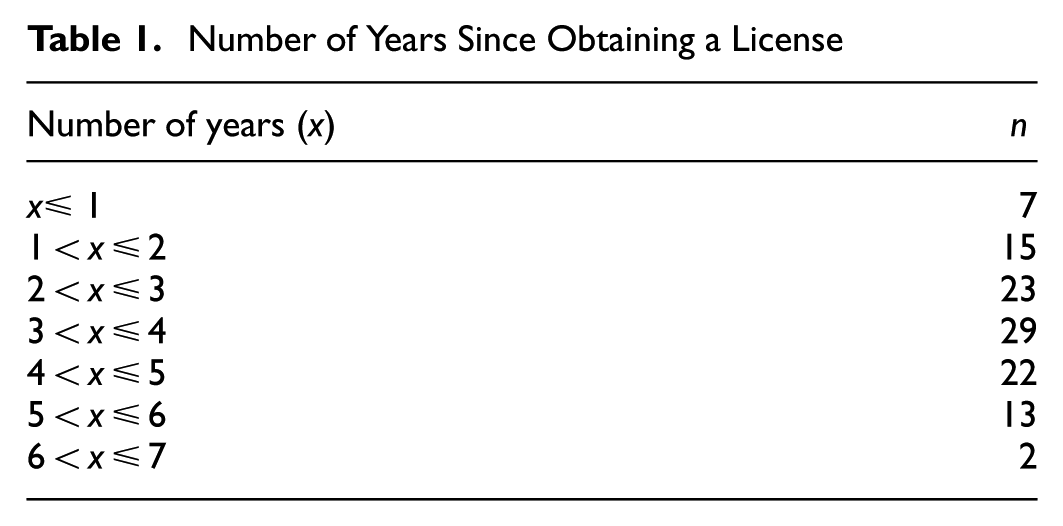

Driving experience data were collected from 111 participants (questions about driving experience were added to the Qualtrics survey after data collection had commenced): the number of years since obtaining a driver’s license ranged from no more than one year to seven years (Table 1). On average, they drove 4,761.38 mi (SD = 6,433.46) per year. Automated driving experience data were gathered from interviews with 108 participants (audio recordings for the other three participants were not captured): 17 of them had sat in an autonomous vehicle while it was on autopilot, 46 had used advanced driver-assistance technology (such as adaptive cruise control), and 45 had no experience with ADSs.

Table 1. Number of Years Since Obtaining a License

Apparatus

A STISIM Drive (Build 3.20.03) driving simulator was employed. The driving environment was projected onto three table-mounted Dell Ultrasharp 27 monitors, placed on a table covered by a black cloth (Figure 1). The experimenters used a fourth monitor, out of participants’ sight on another table, to administer the experiment. Participants interacted with the simulator using a Logitech G920 Racing Wheel and pedals.

Figure 1. Apparatus setup.

Stimuli

Because error consistency was a critical manipulation in this study, it was necessary for errors to be distinguishable. We adopted errors from three functional components in a proposed autonomous car architecture (

We designed driving events to reflect three functional errors (Figure 2). In the perception event, there were no lane markings or incoming vehicles for the ego vehicle to infer lane boundaries. In the localization event, the vehicle lacked access to a GPS signal. In the planning event, the vehicle faced a decision conflict when it stopped at a red light with an approaching ambulance such that it could not determine what to do. All three triggering events happened when the ego vehicle came to a stop at a red light or stop sign. Each event could potentially trigger an error caused by a problem in the corresponding function. An event counted as an error only if it triggered a TOR; if the vehicle handled the event on its own, no error occurred.

Figure 2. Triggering events for the three errors.

Three indicators on the right side of the dashboard reflected the status of the three vehicle functions. Normally, the indicators were lime green. During a triggering event, the corresponding indicator flashed vivid pink to inform participants of the detected abnormality. In addition, a TOR icon appeared on the left side of the dashboard when an error occurred, accompanied by three 1,000 Hz, 250 ms beeps (in accordance with

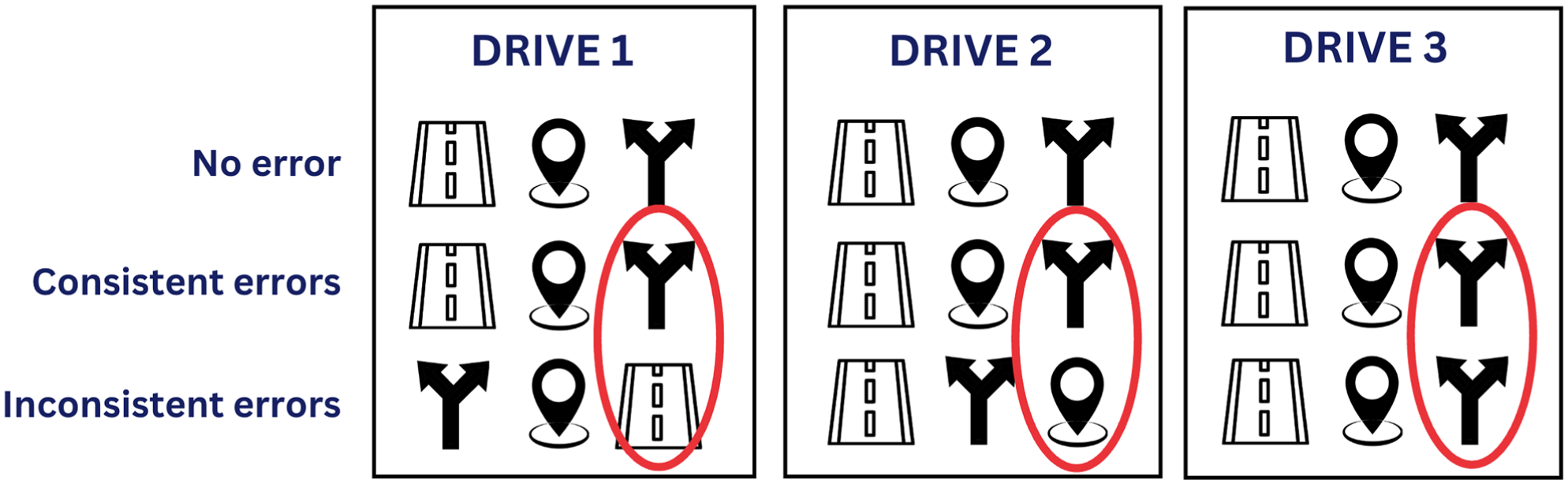

Participants experienced three drives in all conditions, each containing all three triggering events spaced out with varying intervals (Figure 3). TORs were prompted at the last event except in the no-error condition. The three drives, set up under different environments (rural, suburban, and urban), had varying durations (about 4, 5, and 7 min) to make TOR timing unpredictable. The drive order was fully counterbalanced.

Figure 3. An example arrangement of the three drives.

Experimental Design

The experiment used a 2 (initial expectations: high, low) × 3 (error patterns: no error, consistent errors, inconsistent errors) between-subjects design. Participants were randomly assigned to one of the six conditions.

At the beginning of the experiment, drivers read instructions in a Qualtrics survey, which described the system’s capabilities differently. The high-expectation group read, “the autonomous vehicle is capable and reliable in perceiving its surroundings, identifying the vehicle’s location, and planning an appropriate route given the road conditions,” while the low-expectation group read, “the autonomous vehicle may fail to perceive its surroundings, identify the vehicle’s location, or plan an appropriate route given the road conditions.”

Error patterns were manipulated across three simulated drives, with all three triggering events (missing lane marking, missing GPS signal, and decision conflict) present in each drive in counterbalanced orders. In the no-error condition, the system managed all events without issuing TORs. In the consistent-error condition, the same event caused errors across all drives. The specific event among the three triggering events that caused the errors was randomized for each participant. In the inconsistent-error condition, different triggering events led to errors across three drives. Each event triggered an error exactly once, such that participants experienced all three errors in a counterbalanced order.
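The assignment logic described above can be illustrated with a minimal sketch. The event labels, function names, and the use of random shuffling are assumptions made for illustration; the study counterbalanced orders across participants rather than drawing them at random, and the scenario files themselves were built in the simulator.

```python
import random

# Illustrative labels for the three triggering events (hypothetical names).
EVENTS = ("perception", "localization", "planning")

def error_schedule(pattern, rng):
    """Return, for each of the three drives, the event that ends in a TOR (None = no TOR)."""
    if pattern == "no_error":
        return [None, None, None]
    if pattern == "consistent":
        event = rng.choice(EVENTS)      # same event causes the error in every drive
        return [event] * 3
    if pattern == "inconsistent":
        order = list(EVENTS)
        rng.shuffle(order)              # each event causes an error exactly once
        return order
    raise ValueError(f"unknown error pattern: {pattern}")

def event_order(error_event, rng):
    """Order the three events within a drive so that the erroring event, if any, comes last."""
    events = [e for e in EVENTS if e != error_event]
    rng.shuffle(events)
    if error_event is not None:
        events.append(error_event)
    return events

rng = random.Random(0)
for pattern in ("no_error", "consistent", "inconsistent"):
    schedule = error_schedule(pattern, rng)
    print(pattern, [event_order(e, rng) for e in schedule])
```

Placing the erroring event last in each drive mirrors the description that TORs were prompted at the final event of a drive.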

The experiment included two dependent variables. First, TOR time was a measure of readiness to take over the vehicle, defined by the duration between the initiation of a TOR and participants’ earliest behavioral response (e.g., steering, pressing the gas or brake). Second, participants’ subjective trust in the system was measured using Jian and colleagues’ 12-item questionnaire (
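The TOR-time definition above can be expressed as a short sketch. The log format (timestamped control inputs) and the function name are hypothetical; in practice, a threshold on steering angle or pedal position would be needed to decide what counts as a response, and that threshold is not specified here.

```python
def tor_time(tor_onset_s, responses):
    """Seconds from TOR onset to the earliest response at or after onset.

    `responses` is a list of (timestamp_s, input_type) tuples; returns None
    when no response follows the TOR.
    """
    after_onset = [t for t, _ in responses if t >= tor_onset_s]
    if not after_onset:
        return None
    return min(after_onset) - tor_onset_s

# Made-up log: one input before the TOR, then braking and steering after it.
log = [(41.2, "steer"), (63.9, "brake"), (64.3, "steer")]
print(tor_time(62.5, log))
```

Only the earliest input after TOR onset matters, regardless of whether it is steering, braking, or accelerating.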

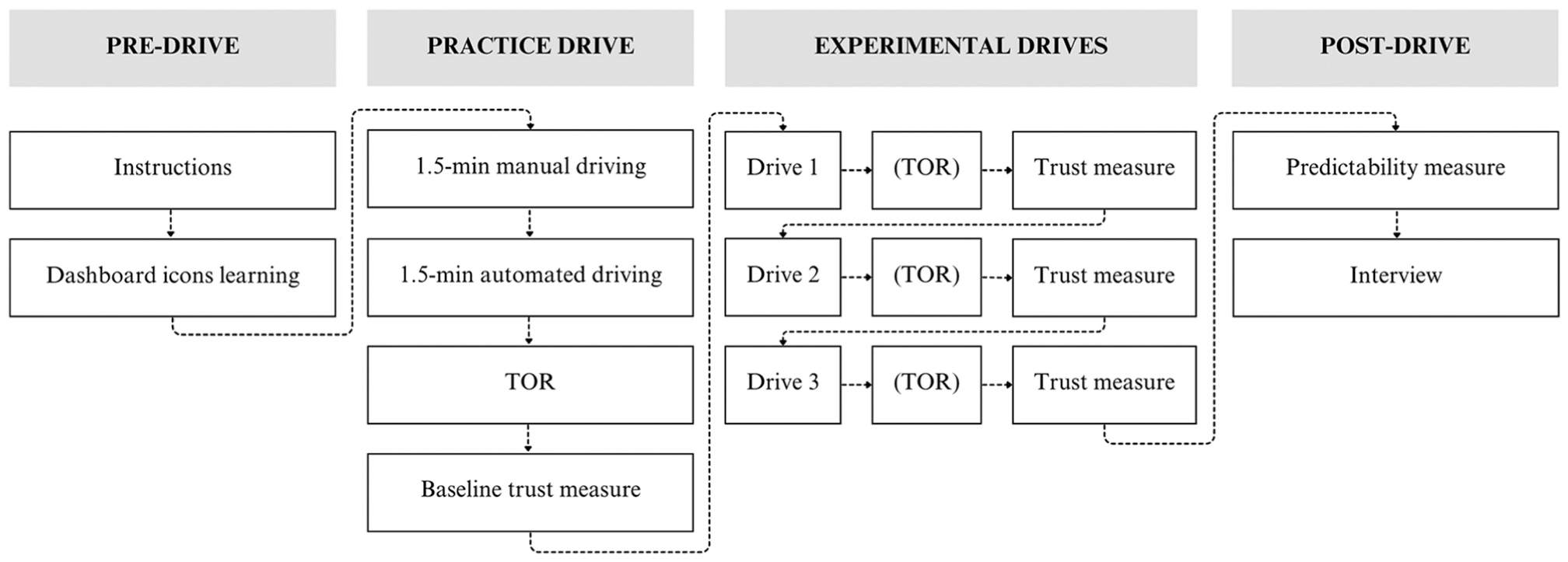

Procedure

Figure 4 presents a flowchart of the study procedure. On arriving at the laboratory, participants reviewed and signed the consent form, presented a valid U.S. driver’s license, and silenced their phones. The experimenter introduced the brake pedal, gas pedal, and turn signals, instructing participants to adjust a non-rolling chair for comfortable operation.

Figure 4. Primary study procedure.

Participants were presented with a Qualtrics survey. In the survey, participants read aloud the instructions that manipulated their initial expectations and reminded them to be ready for potential TORs. They answered three 7-point Likert-scale (1 = strongly disagree; 7 = strongly agree) questions that measured their initial level of expectation before any interaction with the driving simulator: “The autonomous vehicle can perform normally when the lane markings are missing,” “The autonomous vehicle can perform normally when the Global Positioning System is not working,” and “The autonomous vehicle knows what to do when it stops at a red traffic light while an ambulance is approaching from its back.” The survey explained the meaning of each dashboard icon by presenting the icon and its interpretation side-by-side, followed by a learning check. Incorrect answers led participants back to the explanations until they answered all questions correctly.

Next, participants performed a two-phase practice drive (identical regardless of condition) on the STISIM drive simulator. The first phase involved 1.5 min of manual driving to familiarize participants with simulator operations, without any dashboard indicators. The second phase involved 1.5 min of automated driving to demonstrate the system’s capabilities and dashboard icons related to autonomous functions. During the transition between the two phases, the experimenter announced the onset of automated driving and reminded participants to place their hands on the steering wheel and their feet on the pedals. The three indicators initially appeared in lime green. Later, one flashed in vivid pink without any critical event, preventing participants from anticipating the critical event in the experimental drives and ensuring unbiased TOR times. The experimenter explained the meaning of dashboard icons to ensure participants understood the appropriate actions to take in each state. At the end of the practice drive, a TOR was presented visually and auditorily to familiarize participants with the takeover action. On completing the practice drive, participants completed the initial trust questionnaire (

Participants experienced three experimental drives based on their assigned condition. Trust was measured once after each drive using the same questionnaire as the baseline. TOR times were measured following each TOR in the consistent- and inconsistent-error conditions only. After each drive, participants in the consistent- and inconsistent-error conditions verbally answered the questions “Please describe in your own words the reasons you believe led to the vehicle asking you to take over during the drive session” as a manipulation check for error patterns, and “What were you trying to do after the vehicle asked you to take over?” to understand drivers’ behaviors following the TOR.

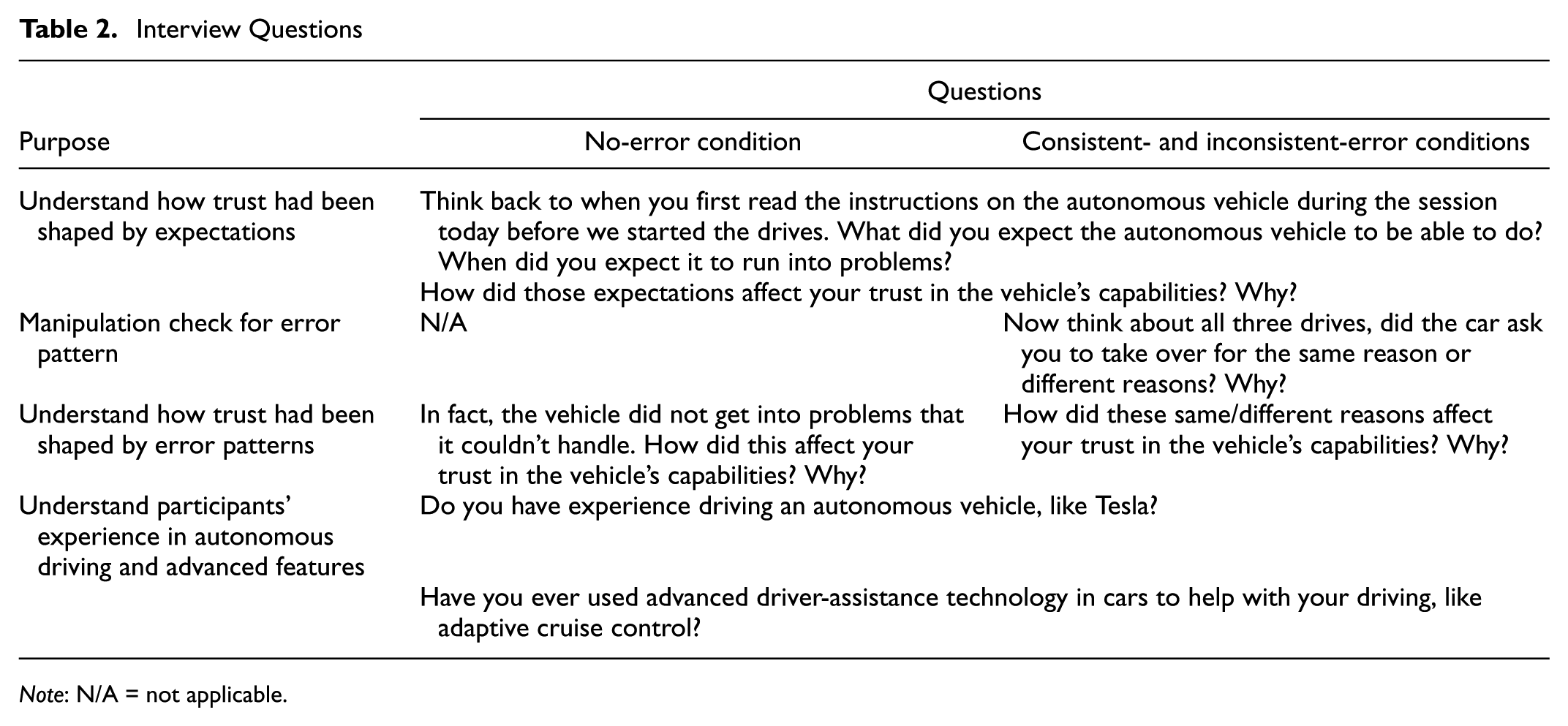

After the drives, participants rated the system’s predictability: “Based on your experience with the autonomous vehicle over the three drives, to what extent do you agree with the following statement? The behavior of this autonomous vehicle is predictable” (1 = strongly disagree; 7 = strongly agree). They also reported demographics and driving experience. Finally, a semistructured interview (Table 2) was conducted to explore how trust was shaped by initial expectations and error patterns, check the effectiveness of error-pattern manipulation, and gather information on participants’ experience with autonomous driving and advanced driver-assistance technology.

Table 2. Interview Questions

Results

The Brown-Forsythe test confirmed that the assumption of homogeneity of variances was met for all analyses of covariance (ANCOVA) and analyses of variance (ANOVA) conducted throughout the results section.

Data Exclusion

Of the 150 participants, eight were excluded because of missing data, procedural errors, and participant eligibility issues. Four participants provided incorrect reasoning for why the system issued TORs or were unsure why the system asked them to take over in at least one drive, and thus were removed from the analysis. Data from the remaining 138 participants were included in the subsequent analyses.

To evaluate participants’ initial level of expectations, we computed an expectation score by averaging responses to the three questions that measured participants’ initial expectations immediately following the instructions. As the questions were on a 7-point Likert scale and were positively worded, a score above four indicated positive expectations, and a score below four indicated negative expectations. An independent samples

Trust

In this section, we assessed participants’ trust in the ADS using three distinct trust measures. The

Based on a review of 149 peer-reviewed articles that applied Jian et al.’s trust scale to evaluate trust in automation, AI, or related systems conducted in June 2024 (

The initial level of expectations was assessed with three Likert-scale items targeting the vehicle’s ability in perception, localization, and planning. The internal consistency (Cronbach’s α = .56) did not fall in a satisfactory range (
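As a reference for the two quantities discussed here, the following sketch computes an expectation score (the mean of the three items) and Cronbach’s α from item-level ratings. The function names and the example ratings are made up for illustration and are not the study’s data or analysis code.

```python
from statistics import mean, variance

def expectation_score(items):
    """Mean of one participant's three 7-point expectation items."""
    return mean(items)

def cronbach_alpha(rows):
    """Cronbach's alpha for a list of per-participant item tuples.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores))
    """
    k = len(rows[0])
    item_vars = [variance(col) for col in zip(*rows)]
    total_var = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)

# Made-up ratings (perception, localization, planning) for five participants.
ratings = [(5, 3, 4), (6, 4, 4), (2, 3, 5), (4, 4, 3), (7, 5, 6)]
print([expectation_score(r) for r in ratings])
print(round(cronbach_alpha(ratings), 2))
```

With only three items that target different vehicle functions, a modest α is not surprising, since α increases with both the number of items and their intercorrelation.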

Baseline Trust Measure

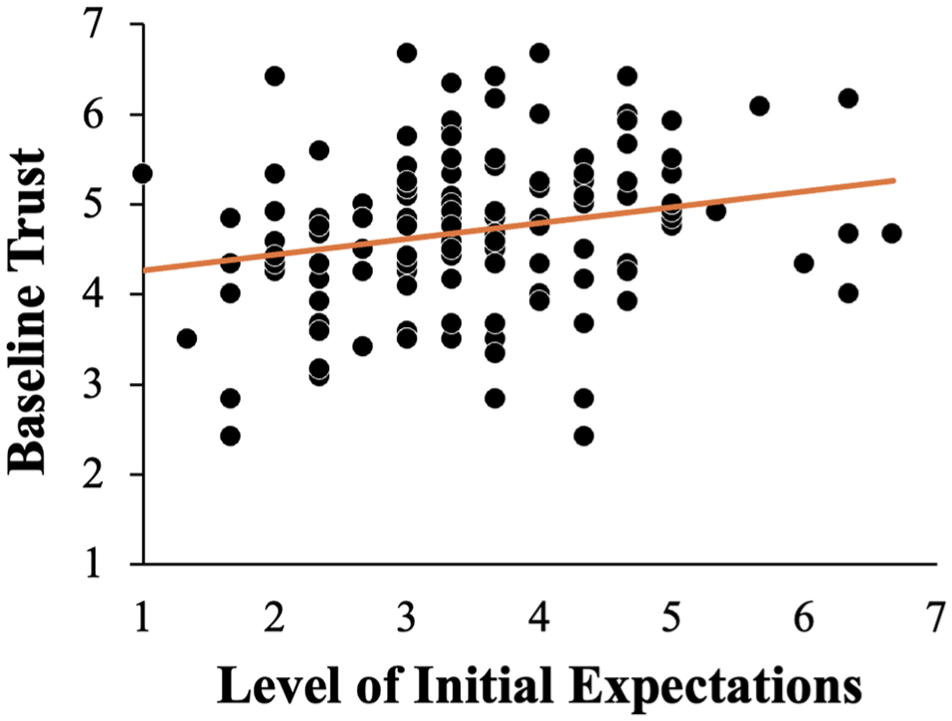

To examine whether experimental conditions were equivalent in their trust level before the experiment, we performed a one-way ANCOVA to examine the effects of error pattern on baseline trust while controlling for expectation (with Type III sums of squares). After controlling for expectation, the effect of the error pattern on baseline trust was not statistically significant,

Figure 5. Relationship between expectations and baseline trust.

Effects of Expectation and Error Pattern on Overall Trust

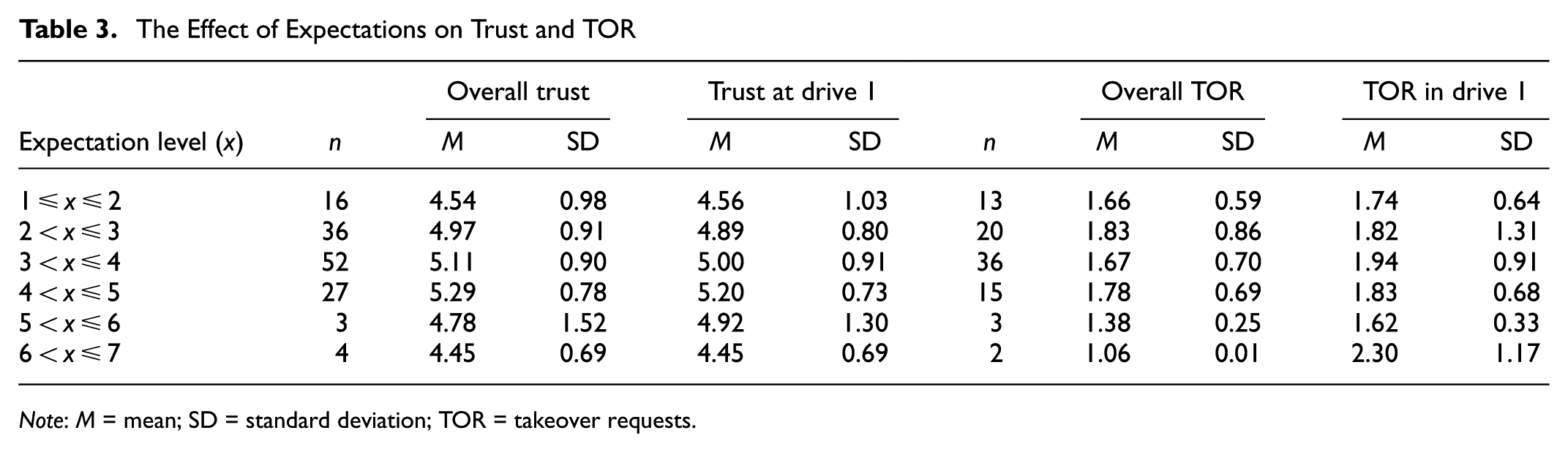

A one-way ANCOVA with Type III sum of squares was conducted to examine the effect of error pattern on overall trust, defined as the average of trust measured after each of the three drives, while controlling for expectation. After controlling for expectation, the effect of the error pattern on overall trust was not statistically significant,

The Effect of Expectations on Trust and TOR

Given that trust might have been adjusted over the course of the three drives, it was essential to also consider the influence that initial expectations might have on early trust formation. Therefore, in addition to examining the effect of expectation on overall trust, we conducted a similar ANCOVA on trust measured immediately after the first drive, before trust could evolve substantially. After controlling for expectation, the effect of the error pattern on trust was not statistically significant,

Mediation Analysis for the Effect of Error Pattern on Trust through Predictability

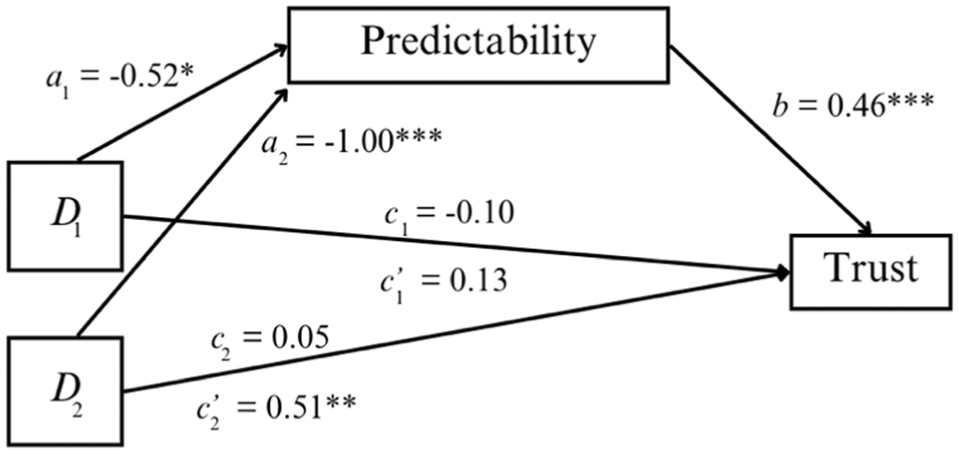

The ANCOVA results might not fully capture the dynamics underlying error pattern and trust because error consistency might have impacted the perceived predictability of the system, a key determinant in trust development (

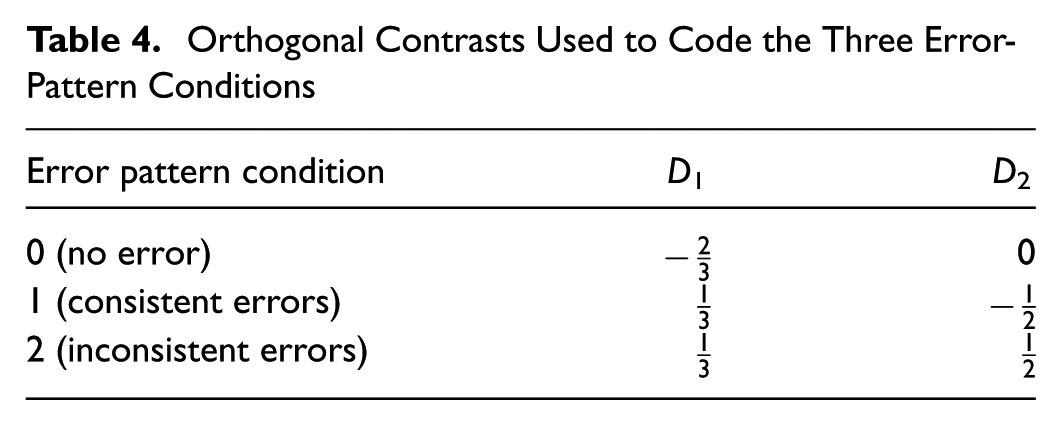

Using the approach recommended by Hayes (

Table 3. Orthogonal Contrasts Used to Code the Three Error-Pattern Conditions
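To illustrate what orthogonal contrast coding of a three-level factor looks like, the sketch below uses a common Helmert-style scheme: the first contrast compares the two error conditions with no error, and the second compares inconsistent with consistent errors. The specific weights and the sign of the second contrast are assumptions for illustration and are not necessarily the values used in this study.

```python
from fractions import Fraction as F

# Hypothetical Helmert-style weights: (D1: errors vs. no error, D2: inconsistent vs. consistent)
CONTRASTS = {
    "no_error":     (F(-2, 3), F(0)),
    "consistent":   (F(1, 3),  F(-1, 2)),
    "inconsistent": (F(1, 3),  F(1, 2)),
}

d1 = [weights[0] for weights in CONTRASTS.values()]
d2 = [weights[1] for weights in CONTRASTS.values()]

# Each contrast sums to zero, and the two contrasts are orthogonal to each other.
print(sum(d1), sum(d2), sum(a * b for a, b in zip(d1, d2)))
```

Under this coding, the coefficient for D1 is the difference between the mean of the two error conditions and the no-error condition, and the coefficient for D2 is the difference between the inconsistent- and consistent-error conditions.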

The relative indirect effect of the error pattern on overall trust through the perceived Predictability of the system performance was statistically significant. Specifically, the coefficient of the indirect effect of experiencing errors in the drives (regardless of the consistency) relative to no error was

Figure 6. The mediation effect of error pattern on trust through predictability.

The relative direct effect,

The relative total effect was the overall effect of error pattern on trust without considering the mediation effect. The coefficient of the total effect of experiencing errors on trust relative to no error was estimated to be

TOR Time

In this section, we examined two measures of reaction time to assess participants’ driving behavior in response to TORs. The first was the average TOR time, the mean reaction time to TORs across the three drives. The second was the first TOR time, measured in Drive 1.

Outlier Handling

We defined outliers as any values that were more than three interquartile ranges (IQRs) above the third quartile or below the first quartile (
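The three-IQR rule can be sketched as follows. The quartile method (`"inclusive"`) and the example values are assumptions; statistical packages differ in how they interpolate quartiles, so the fences may shift slightly depending on the software used.

```python
from statistics import quantiles

def extreme_outliers(values, k=3.0):
    """Return values more than k IQRs below the first or above the third quartile."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < lower or v > upper]

# Made-up TOR times in seconds; 9.0 lies far above the upper fence.
tor_times = [1.2, 1.5, 1.6, 1.7, 1.8, 1.9, 2.0, 9.0]
print(extreme_outliers(tor_times))
```

Using three IQRs rather than the more familiar 1.5 flags only extreme values, which limits how many observations are treated as outliers.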

Effects of Expectation and Error Pattern on TOR Time

A one-way ANCOVA with Type III sum of squares was conducted to examine the effect of error pattern on average TOR time, the mean of TOR time measured across the three drives, controlling for expectation. After controlling for expectation, participants who experienced inconsistent errors (

In addition, we investigated whether similar effects could be found on the TOR time measured during the first drive. This measure captured participants’ driving safety behavior early on before adjusting their expectations based on actual experiences with the system. We conducted another ANCOVA on TOR time in the first drive. Similarly, we found that the initial expectations did not significantly influence the TOR time in Drive 1,

Mediation Analysis for the Effect of Error Pattern on TOR through Predictability

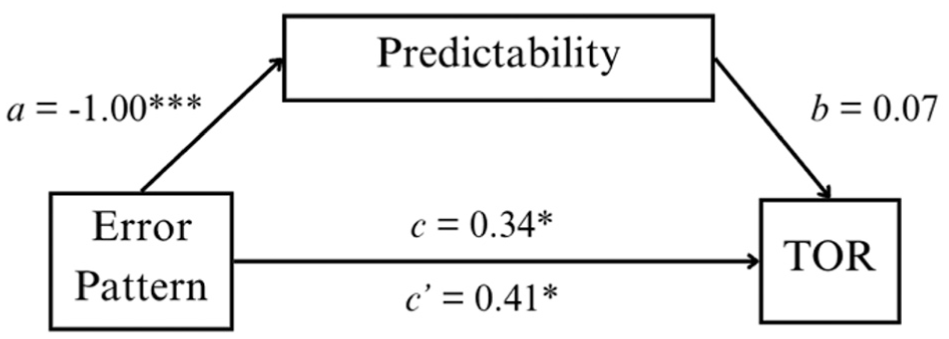

Similarly, we performed a mediation analysis using the PROCESS function in R (

Based on the analysis, there was a lack of evidence for the mediation effect (Figure 7). The indirect effect of the error pattern on overall TOR time through the perceived Predictability of the system performance was not statistically significant,

Figure 7. The mediation analysis of error pattern on TOR time through predictability.

Experience with ADSs

Given participants’ varied prior experience with ADSs (see section “Participants”), we also explored how these differences might have influenced their expectations of system capabilities and their trust in the system.

The Effect on Initial Expectation

A one-way ANOVA with Type III sum of squares was conducted to examine the effects of prior experience on initial expectation. No significant difference in the level of expectation was observed across participants who had experience with highly automated features such as autopilot (

The Effect on Overall Trust

A one-way ANOVA with Type III sum of squares was conducted to examine the effects of prior experience on overall trust. There was no significant difference in overall trust across participants who had experience with highly automated features such as autopilot (

Discussion

The goal of the present study was to investigate how initial expectations of system capabilities and error consistency might influence drivers’ trust in the system and driving safety. We evaluated the validity of the four proposed hypotheses based on our results.

There was a lack of evidence to support Hypothesis 1.1. Participants’ initial expectations did not affect their overall trust in the system. Since trust can be changed through experiences with the ADSs (

Hypothesis 1.2 was partially supported. Predictability fully mediated the relationship between the absence or presence of errors and overall trust: experiencing errors reduced trust by 0.24 points on a 7-point scale through predictability. This aligns with an array of previous research on trust reduction as a result of system failures (

We measured TOR time to understand how people’s responses to ADS errors might be influenced by initial expectations of the system’s capabilities and error consistency. The level of expectations did not affect the overall TOR time or the TOR time in Drive 1. Consequently, Hypothesis 2.1 was not supported. Inconsistent errors resulted in longer TOR time, validating Hypothesis 2.2. Though this relationship was not mediated by predictability, our analysis revealed a particularly strong link between error pattern and perceived predictability (Figure 7). Participants rated the system as substantially less predictable in the inconsistent-error condition compared with the consistent-error condition, highlighting that variability in failure modes strongly shaped drivers’ perceptions of the system. Participants remained vigilant to potential TORs regardless of initial expectations: the grand mean TOR time across conditions was 1.71 s (

Limitations and Future Research

Our study encountered limitations that future research could address to better understand how error patterns influence user trust in ADSs. First, the manipulation of the error pattern involved presenting three critical events in each drive, and only the last one triggered a TOR. This design aimed to highlight each error as distinct; however, it led to unexpected interpretations of inconsistent errors by some participants, who perceived the system as learning from previous events. Future studies might consider increasing the number of drives such that each error is repeated to demonstrate that the system does not learn from errors made in previous drives. Alternatively, one can present only one critical event per drive to clarify the system’s error-handling capabilities without suggesting inadvertent learning.

In addition, it appeared that the manipulation of initial expectations at the start of the experiment did not work as intended, resulting in similar levels of expectations across groups. Several reasons could explain this pattern. First, the written instructions might have been insufficient to effectively alter participants’ expectations. People’s perceptions of what the system can do might be unlikely to change without witnessing its capabilities firsthand. Second, as one participant noted, they may have perceived the instruction in the low-expectation condition (“the autonomous vehicle may fail to perceive its surroundings, identify the vehicle’s location, or plan an appropriate route given the road conditions”) as a common disclaimer that car manufacturers use, which did not necessarily imply that the system lacked these abilities. Third, participants in both conditions were informed that there could be a need for a TOR, requiring them to take back control. Although the high-expectation group was told the system would be capable, the mention of a TOR might have diminished their expectations, reducing the impact of the manipulation. Since these are only speculations, further research is warranted to better understand the factors that shape expectations and to identify more effective methods for manipulating expectations.

Another limitation was the study’s inability to separate the effects of error consistency from the number of distinct errors. In conditions with consistent errors, participants encountered only one type of error; whereas in the inconsistent-error condition, participants encountered three different errors. This design did not rule out the possibility that it was merely the number of distinct errors—rather than their inconsistency—that contributed to the observed effects on trust. Future studies should attempt to isolate the two factors to determine their specific impact on trust and TOR performance.

It is also essential to acknowledge the potential effects of error attribution on our results. While we designed three scenarios to represent failures in vehicle functionality, participants might have attributed these events to external environmental factors unrelated to the vehicle. For instance, a missing GPS signal might have been interpreted as the result of poor signal coverage in the geographical region rather than a vehicle error. Such external attribution likely attenuates the impact of errors on trust, as participants do not perceive the vehicle itself to be erroneous. To assess the extent to which error attribution may have influenced our findings, we analyzed participants’ verbal responses to the open-ended question, “Please describe in your own words the reasons you believe led to the vehicle asking you to take over during the drive session.” These responses were categorized as: (1) internal attribution to vehicle failures, (2) external attribution to environmental factors, or (3) neutral, defined by participants either restating the TOR message displayed (e.g., “the GPS signal was missing”) or providing ambiguous explanations that could not be clearly classified. Among the 89 participants in the consistent- and inconsistent-error conditions, 15 attributed the errors internally, five attributed them externally, and 69 provided neutral responses. Future studies on automation errors could benefit from making the source of errors more explicit to ensure participants attribute them correctly to the system and incorporating direct measures to assess participants’ error attributions.

Lastly, the participant pool consisted of college students, consistent with many prior studies on trust in automation (e.g.,

Conclusion

In conclusion, this study reinforces existing research on trust in ADSs by examining the dynamics among initial expectations, error consistency, and drivers’ trust. Contrary to our initial hypotheses, initial expectations did not affect trust or TOR performance; instead, trust was shaped by the system’s actual performance during real-time interactions, indicating that direct experience is more influential than preconceived expectations. Our findings also highlight how quickly trust can change, even with limited exposure to ADS behavior in the course of this experiment. The impact of inconsistent errors on trust was influenced by the perceived predictability and learning capabilities of the system, suggesting a need for further study in this area. These findings have practical implications for driver education and ADS engineering. Facilitating interactions between drivers and ADSs allows drivers to accurately assess the capabilities and limitations of ADSs, which is instrumental in achieving the appropriate level of trust. The study also indicates the importance of consistency and predictability in system engineering, thereby improving safety and reliability in automated transportation.

Footnotes

Acknowledgements

We thank the undergraduate research assistants for their efforts in data collection and data coding: Faith Zhang, Teon Golden, and Aisha Khemani, as well as Scott A. Mishler and Katherine R. Garcia for their help setting up the driving simulator. We also thank the reviewers for their feedback.

Author Contributions

The authors confirm contribution to the paper as follows: study conception and design: X. Cheng, J. Chen; data collection: X. Cheng; analysis and interpretation of results: X. Cheng, J. Chen; draft manuscript preparation: X. Cheng, J. Chen. All authors reviewed the results and approved the final version of the manuscript.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was partially supported by the National Science Foundation (Grant No. 2245055).