Abstract

Road infrastructure is a fundamental part of people’s lives, allowing them to access various destinations and activities. Accordingly, it should be kept in an appropriate condition. To obtain optimal pavement maintenance solutions, the maintenance plan should be optimized and pavement condition should be predicted accurately. Therefore, predicting pavement condition with high accuracy has been a major concern. This study aims to introduce a new approach to accurately predict the pavement international roughness index (IRI) over the long term. To this end, all the vital parameters, including initial IRI, pavement age, lane width, traffic loadings, structural characteristics, climatic features, and pavement distresses, are considered. With all these parameters, the prediction problem includes 58 variables, so applying a proper feature-selection technique is vital. A novel hybrid feature-selection method is therefore introduced that combines the arithmetic optimization algorithm with stochastic gradient descent regression (AOA-SGDR). The performance of the proposed method is compared with Lasso and with using all features. Five machine-learning algorithms, including random forest regression (RFR), support vector machine, multi-layer perceptron, decision-tree regression, and multiple linear regression, are employed for the prediction process. By employing AOA-SGDR, the average testing-data mean absolute error (MAE) is reduced by at least 7.92%. Meanwhile, RFR provides the highest accuracy, with an average testing-data MAE of 0.095 m/km. Moreover, analyzing the parameters indicates that initial IRI, pavement age, equivalent single axle load (ESAL), and structural number (SN) have the most significant relative influence on IRI.

Transportation infrastructures provide vast mobility and economic development to modern societies and form the economy’s foundation ( 1 ). One of the main components of the transportation infrastructure is pavement. Pavement condition plays a vital role in the safety and serviceability of roads. The paved roads in the United States are vast, reaching 5 million kilometers, and many passengers and large quantities of goods are transported via paved roads ( 2 ). Although pavements deteriorate over time as a result of aging, increasing traffic loadings, and increasing intensity and frequency of severe weather, pavements should be in an acceptable condition because of their high importance. Nevertheless, over 40% of all roads in the United States were in poor or mediocre condition in 2021 ( 3 ).

To maintain the pavements in the desired condition, maintenance and rehabilitation (M&R) treatments must be performed. To optimize M&R treatment planning, the future condition of the pavements must be predicted, which can be done through pavement condition indicators ( 4 ). The international roughness index (IRI) is the most extensively used indicator of overall pavement surface roughness and has been widely used in previous studies to plan M&R treatments ( 5 – 7 ). Because of the importance and widespread use of IRI, many studies have developed models to predict IRI increments ( 8 ). To this end, previous studies employed various features affecting pavement roughness to develop IRI models.

Al-Suleiman and Shiyab ( 9 ) developed two IRI-prediction models for slow and fast lanes using regression, with pavement age as the independent variable. The coefficients of determination (R2) of the models for the slow lane and fast lane were 0.80 and 0.61, respectively. Moreover, Tsunokawa and Schofer ( 10 ) developed an IRI-prediction model considering initial IRI and pavement age. However, using only initial condition and pavement age may not yield high accuracy.

In addition to pavement age, traffic and structural features have been extensively employed to develop IRI performance models. Equivalent single axle load (ESAL) and cumulative ESAL (CESAL) have been widely used as traffic loading features by previous researchers ( 11 – 17 ). In addition, structural number (SN), as an important structural feature, has been broadly employed in IRI-prediction models ( 11 – 13 , 15 , 16 ). Choi et al. ( 12 ) utilized CESAL, asphalt content, and SN to form an IRI-prediction model using multiple linear regression (MLR) and a backpropagation neural network. The correlation coefficients (R) of the models developed by MLR and the backpropagation neural network were 0.46 and 0.87, respectively, which leaves room for improvement, particularly for the MLR model.

Jaafar and Fahmi ( 15 ) employed initial IRI, pavement age, ESAL, construction number, and SN to develop two IRI performance models using 34 observations from the Long-Term Pavement Performance (LTPP) database. The MLR and artificial neural network (ANN) techniques were utilized to establish the models. The R2 of MLR and ANN models was 0.25 and 0.80, respectively.

Another set of features that has been widely used in IRI-prediction models is climatic characteristics. The climatic features most extensively employed in IRI model development are average annual temperature, the number of annual freeze–thaw cycles, annual freezing index, annual total snowfall, and annual precipitation ( 13 , 14 , 17 ). Albuquerque and Núñez ( 13 ) employed ESAL, SN, and average precipitation to develop two IRI-prediction functions using MLR. The mentioned model reached a coefficient of determination of 0.87. Khattak et al. ( 14 ) used initial IRI, CESAL, pavement thickness, and two climatic features (precipitation and temperature) to generate an IRI model for flexible pavements. The regression technique was applied to develop the model, which had an R2 of 0.47 based on 623 observations. However, the accuracy of these studies was not ideal, possibly because not all relevant parameters were considered.

Marcelino et al. ( 18 ) proposed an approach to predict IRI for up to 10 years. Climatic parameters, structural conditions, and traffic data were used as the model’s inputs. The IRI prediction was modeled using random forest regression (RFR), and the model attained an error of 6.95% ( 18 ). Marcelino et al. ( 19 ) developed a flexible pavement IRI-prediction model using AdaBoost, with pavement thickness, annual average daily traffic, SN, and climate conditions (i.e., annual average temperature and annual average precipitation) as the model’s features. The results revealed that AdaBoost was well suited to predicting pavement IRI, obtaining an R2 of 0.986.

Dalla Rosa et al. ( 20 ) used a regression model to predict IRI based on climate, treatment type, subgrade, traffic loading, and pavement type. The model attained a root mean square error (RMSE) of 0.21 m/km. Damirchilo et al. ( 21 ) used ESAL, pavement age, precipitation, number of freeze–thaw days, freeze index, and number of hot days as variables to predict pavement IRI. Extreme gradient boosting, RFR, and support vector machine (SVM) were employed for prediction purposes, and those techniques obtained R2 of 0.7, 0.66, and 0.44, respectively.

Many studies have shown that pavement distresses should be used as independent features in IRI model generation ( 17 , 22 – 25 ). Some studies used only pavement distresses to predict IRI ( 22 , 23 ). Owolabi et al. ( 23 ) established an IRI-prediction function by applying the MLR technique. The authors considered only three pavement distresses to develop the model: rut depth, number of patches, and longitudinal crack length.

Other studies employed additional features alongside pavement distresses to generate the IRI performance model. Abdelaziz et al. ( 25 ) used initial IRI, pavement age, and three pavement distresses (fatigue cracking, transverse cracks, and standard deviation of rut depth) to form two IRI performance models using the MLR and ANN techniques. The reported R2 values of the MLR and ANN models were 0.57 and 0.75, respectively.

As stated previously, many features were employed to develop the IRI-prediction models, but few studies used feature-selection methods to enhance the accuracy of the IRI prediction ( 17 , 25 ). Gong et al. ( 17 ) indicated that initial IRI was the most important feature in the IRI-prediction model using importance scores of the RFR technique. On the other hand, the results revealed that polishing, raveling, patching, and potholes had an insignificant correlation with IRI. In addition, Abdelaziz et al. ( 25 ) used the Pearson correlation coefficient to measure the significance of each feature. The authors stated that initial IRI, pavement age, the standard deviation of total rut depth, and transverse cracks had a high correlation with IRI. What is more, the site factor, longitudinal cracks, rut depth, patching, and block cracks had little effect on the IRI-prediction model based on the results of their study.

A more detailed look at the studies discussed reveals that earlier studies did not employ many parameters to generate the IRI-prediction models, because using many parameters increases the model’s complexity. In addition, the joint consideration of all categories of parameters, including initial IRI, pavement age, lane width, traffic loadings, structural characteristics, climatic features, and pavement distresses, has not received enough attention in IRI model generation. Meanwhile, it has been shown that applying robust feature-selection techniques can increase prediction accuracy significantly ( 26 ). However, powerful feature-selection techniques are rarely applied to IRI-prediction problems. Furthermore, powerful machine-learning techniques need to be used to develop IRI performance functions, yet several prediction techniques have not been compared comprehensively to obtain the highest possible accuracy.

Methods

This study attempts to develop a novel IRI performance model to accurately predict IRI for flexible pavements. This study does not consider the data collection process, and only focuses on the IRI-prediction modeling. To this end, effective parameters for IRI are determined by developing a new feature-selection technique. A hybrid feature-selection method that combines arithmetic optimization algorithm (AOA) and stochastic gradient descent regression (SGDR) is introduced to select optimal features to obtain the highest accuracy. Five machine-learning methods are used to generate the IRI performance model, including RFR, SVM, a deep-learning method called multi-layer perceptron (MLP), decision-tree regression (DTR), and MLR. The performance of these techniques is compared to select the most powerful method. Ultimately, the parameters are ranked based on their relative influence on IRI. The results of this study will help decision-makers, transportation agencies, and policy-makers to employ optimal features and the most powerful machine-learning algorithm in the IRI performance model and so to predict the pavement’s future IRI with the highest accuracy.

Data Preparation

The data required for IRI-prediction model development are extracted from the LTPP database, which holds condition data for 526 flexible pavement sections in 50 states of the United States. These sections are located on highways outside cities that connect different cities in the United States. One of the objectives of this study is to determine the optimal features for IRI model development over time. Initial condition, pavement age, lane width, traffic characteristics, structural parameters, climatic conditions, and pavement distresses are utilized to develop the model. Cumulative ESAL is used as the traffic characteristic and SN as the structural parameter.

Average annual temperature, annual freezing index, the number of annual freeze–thaw cycles, annual precipitation, and annual total snowfall are employed as climatic features. Further, the following distresses are considered as variables in the modeling:

The area of high, medium, and low severities of alligator cracking

The area of high, medium, and low severities of block cracking

The area of high, medium, and low severities of patches

The area of high, medium, and low severities of potholes

The area of bleeding

The area of shoving

The area of polished aggregate

The area of raveling

The length of high, medium, and low severities of wheel-path and non-wheel-path longitudinal cracks

The length of transverse cracks

The length of well-sealed transverse cracks

The length of medium and low severities of edge cracks

The length of wheel-path and non-wheel-path well-sealed longitudinal cracks

The length of pumping and bleeding

The length of wheel-path cracks

Transverse cracks length greater than 1.83 m

The number of high-, medium-, and low-severity patches

The number of high-, medium-, and low-severity potholes

The number of high-, medium-, and low-severity transverse cracks

The number of high-, medium-, and low-severity pumping lengths

The number of shoving instances

The number of pumping instances

The number of bleeding instances

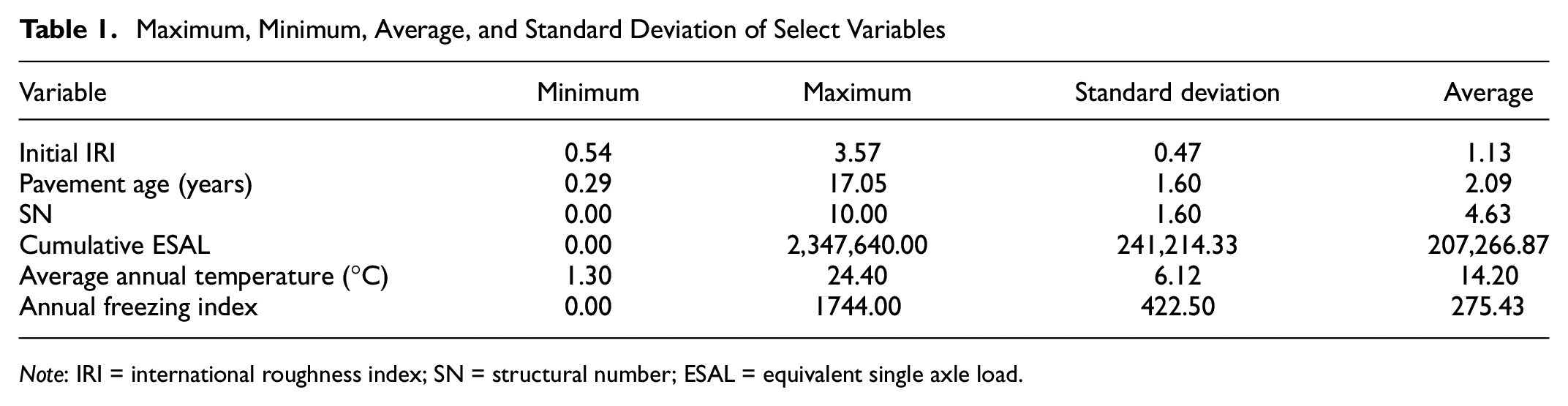

In total, 58 features are used to develop the model. Data are extracted only for pavement sections that have all 58 features and on which no M&R treatments were performed. The data extracted from the LTPP cover 106 days to more than 17 years of IRI information ( 27 ). The maximum, minimum, average, and standard deviation of select variables are shown in Table 1. In general, data are divided into three groups: training, validation, and testing data. The training data are used to train the model ( 28 ). Validation data are employed to tune hyperparameters, which are the parameters set by users when training machine-learning techniques (e.g., the number of iterations and learning rate in SGDR) ( 29 ). Finally, unseen data (data that have not been used in the training process) are applied to evaluate the prediction power of the model. Testing data are therefore used to assess the prediction accuracy of the prediction technique ( 30 ). In this study, the data are randomly divided into three groups: 60% as a training set, 15% as a validation set, and the remaining 25% as a testing set to assess the models’ prediction ability ( 31 ).
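The random 60/15/25 partition described above can be illustrated with a minimal sketch. The function name `split_indices`, the fixed seed, and the sample count of 1,000 are illustrative assumptions and are not taken from the study.

```python
import random


def split_indices(n_samples, train_frac=0.60, val_frac=0.15, seed=0):
    """Randomly partition sample indices into training, validation, and testing lists.

    The testing set receives whatever remains after the training and
    validation sets are filled (25% with the default fractions).
    """
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # fixed seed only for reproducibility
    n_train = round(train_frac * n_samples)
    n_val = round(val_frac * n_samples)
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]
    return train, val, test


# Hypothetical data set size, chosen only for illustration.
train_idx, val_idx, test_idx = split_indices(1000)
print(len(train_idx), len(val_idx), len(test_idx))
```

Because the three lists are slices of one shuffled index list, no observation can appear in more than one set.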

Table 1. Maximum, Minimum, Average, and Standard Deviation of Select Variables

Note: IRI = international roughness index; SN = structural number; ESAL = equivalent single axle load.

Feature Selection

Feature selection is essential for machine learning and data analysis because it identifies the optimal features. It decreases dimensionality by eliminating redundant or excessive data to fit the best model ( 32 ), and it is needed to enhance the computational efficiency and pattern recognition of machine-learning algorithms. Furthermore, by labeling features as effective or non-effective, feature-selection results give in-depth insights into identifying optimal features ( 33 ). Additionally, filtering out irrelevant features helps prevent overfitting ( 34 ). In this way, the model’s accuracy can be increased and its run time reduced ( 35 ). As previously mentioned, 58 features are employed to generate the IRI performance model. To select the optimal features, the widely used Lasso model and a newly developed hybrid method are applied. The new method (AOA-SGDR) combines AOA, a novel metaheuristic optimization algorithm, with SGDR to determine the features that lead to the minimum prediction error. Lasso and AOA-SGDR are described in the following paragraphs.

Lasso was introduced by Tibshirani ( 36 ) to perform feature selection and regularization. The Lasso technique applies a constraint on the sum of the absolute values of the model parameters: the sum must be lower than a predetermined value, called the upper bound. To do this, the technique uses a shrinkage (regularization) procedure that penalizes the regression coefficients, reducing some of them to zero. Features whose coefficients remain non-zero after the shrinking step are selected for model generation. This procedure aims to reduce the prediction error ( 37 ).
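The constraint described above corresponds to the standard constrained form of the Lasso, written here for reference. The symbols are notational assumptions rather than the study's own: $y_i$ is the observed response, $x_{ij}$ the value of feature $j$ for observation $i$, $\beta_j$ the regression coefficients, $t$ the upper bound, and $p$ the number of candidate features.

```latex
\hat{\beta} = \arg\min_{\beta_0,\,\beta}
\sum_{i=1}^{n} \Bigl( y_i - \beta_0 - \sum_{j=1}^{p} x_{ij}\,\beta_j \Bigr)^{2}
\quad \text{subject to} \quad \sum_{j=1}^{p} \lvert \beta_j \rvert \le t ,
\qquad
S = \{\, j : \hat{\beta}_j \neq 0 \,\}
```

Here $S$ denotes the set of selected features. A smaller $t$ forces more coefficients to exactly zero and therefore yields a smaller selected set.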

To develop the novel feature-selection technique, a machine-learning technique needs to be run in each iteration of the optimization process. Therefore, a fast machine-learning method is needed to reduce the computational cost (i.e., running time) ( 26 ). SGDR techniques generally converge quickly ( 38 ). Further, the selected machine-learning technique needs to be combined with other techniques to generate a hybrid model, and SGDR methods have been shown to combine well with other computational techniques ( 39 ). As a result, SGDR is the machine-learning technique applied to develop AOA-SGDR. SGDR is a well-known, inherently iterative algorithm that works with an approximation of the true gradient. It starts with an initial estimate, and each step depends on the parameters learned in the previous iteration ( 40 ). At each step, SGDR chooses a single example and updates the parameters by stepping against the gradient of the loss computed for that example. The technique is straightforward, with few hyperparameters. Because the gradient is estimated from the training samples, a good solution can be obtained in only a few iterations. Moreover, the empirical and theoretical convergence rates of SGDR are well understood ( 41 ).
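The single-example update described above can be sketched as follows for a linear model with squared-error loss. This is a minimal illustration and not the study's implementation: the function name, learning rate, number of epochs, and the synthetic noise-free data are all assumptions.

```python
import random


def sgd_linear_regression(X, y, learning_rate=0.01, n_epochs=200, seed=0):
    """Fit y ~ bias + w . x by stochastic gradient descent, one example per update."""
    rng = random.Random(seed)
    weights = [0.0] * len(X[0])  # initial estimate
    bias = 0.0
    order = list(range(len(X)))
    for _ in range(n_epochs):
        rng.shuffle(order)  # visit the examples in a new random order each epoch
        for i in order:
            prediction = bias + sum(w * x for w, x in zip(weights, X[i]))
            error = prediction - y[i]
            # Gradient of 0.5 * error**2 for this single example;
            # step against it, scaled by the learning rate.
            weights = [w - learning_rate * error * x for w, x in zip(weights, X[i])]
            bias -= learning_rate * error
    return weights, bias


# Synthetic data (hypothetical): y = 2*x1 - x2 + 0.5 with no noise.
rng = random.Random(1)
X_demo = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(50)]
y_demo = [2.0 * x1 - 1.0 * x2 + 0.5 for x1, x2 in X_demo]
weights, bias = sgd_linear_regression(X_demo, y_demo, learning_rate=0.05, n_epochs=200)
print(weights, bias)
```

The learning rate and number of epochs are the kind of hyperparameters that would be tuned on the validation set.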

To develop the new hybrid feature-selection algorithm, an optimization technique is required to find the optimal features. This technique needs to be accurate, to find the global optimal solution, and fast, to reduce the computational cost. In solving challenging optimization problems and reducing running time, AOA outperforms various optimization techniques, such as genetic algorithm, particle swarm optimization, biogeography-based optimization, grey wolf optimizer, flower pollination algorithm, bat algorithm, firefly algorithm, moth-flame optimization, cuckoo search algorithm, differential evolution, and gravitational search algorithm ( 42 ). Therefore, AOA is used as the optimization algorithm in the proposed feature-selection technique. AOA is a novel and powerful optimization algorithm motivated by the use of the arithmetic operators (division, multiplication, subtraction, and addition) in solving arithmetic problems ( 42 ). The optimization process of AOA consists of three phases. In the first phase, a set of search agents is created at random. Afterward, in each iteration, the best search agent found so far is taken as the optimal or near-optimal solution. In the second phase, the division and multiplication operators are used to find a stronger search agent by exploring the search area randomly. In the third phase, the subtraction and addition operators are employed to overcome the weakness of division and multiplication in converging on the optimal solution. Because of the low dispersion of subtraction and addition, the model does not get stuck in local optimum solutions and is able to find the global optimal solution.
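The three phases described above can be sketched as follows. The update rules are one commonly cited formulation of AOA and are not necessarily the authors' implementation. The function name `aoa_minimize`, the control constants (`alpha`, `mu`, `moa_min`, `moa_max`), the greedy replacement step, and the test objective are assumptions made for illustration.

```python
import random


def aoa_minimize(objective, dim, lower, upper, n_agents=20, n_iters=200,
                 alpha=5.0, mu=0.499, moa_min=0.2, moa_max=1.0, seed=0):
    """Minimize `objective` over [lower, upper]^dim with an AOA-style search."""
    rng = random.Random(seed)
    eps = 1e-12  # guards the division operator against a zero denominator
    # Phase 1: create the search agents at random.
    agents = [[rng.uniform(lower, upper) for _ in range(dim)] for _ in range(n_agents)]
    fitness = [objective(a) for a in agents]
    best_idx = min(range(n_agents), key=fitness.__getitem__)
    best, best_fit = agents[best_idx][:], fitness[best_idx]
    history = [best_fit]  # best-so-far fitness, starting with the initial population
    scale = (upper - lower) * mu + lower
    for t in range(1, n_iters + 1):
        moa = moa_min + t * (moa_max - moa_min) / n_iters  # grows with t
        mop = 1.0 - (t ** (1.0 / alpha)) / (n_iters ** (1.0 / alpha))  # shrinks with t
        for i in range(n_agents):
            candidate = []
            for j in range(dim):
                r1, r2, r3 = rng.random(), rng.random(), rng.random()
                if r1 > moa:
                    # Phase 2 (exploration): division or multiplication.
                    if r2 < 0.5:
                        value = best[j] / (mop + eps) * scale
                    else:
                        value = best[j] * mop * scale
                else:
                    # Phase 3 (exploitation): subtraction or addition.
                    if r3 < 0.5:
                        value = best[j] - mop * scale
                    else:
                        value = best[j] + mop * scale
                candidate.append(min(max(value, lower), upper))  # keep within bounds
            cand_fit = objective(candidate)
            if cand_fit < fitness[i]:  # greedy replacement (an assumption)
                agents[i], fitness[i] = candidate, cand_fit
                if cand_fit < best_fit:
                    best, best_fit = candidate[:], cand_fit
        history.append(best_fit)
    return best, best_fit, history


def shifted_sphere(x):
    """Hypothetical test objective with its minimum at x = (1, ..., 1)."""
    return sum((v - 1.0) ** 2 for v in x)


best, best_fit, history = aoa_minimize(shifted_sphere, dim=3, lower=-5.0, upper=5.0)
print(best_fit)
```

For feature selection, each continuous position would additionally have to be mapped to a binary include/exclude mask (for example, by thresholding), a step this sketch leaves out.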

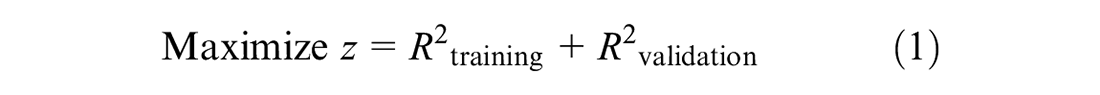

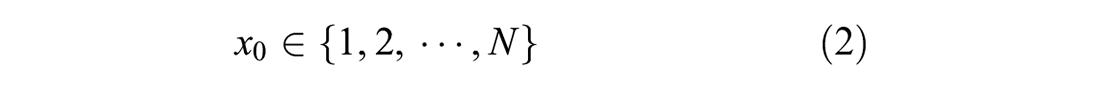

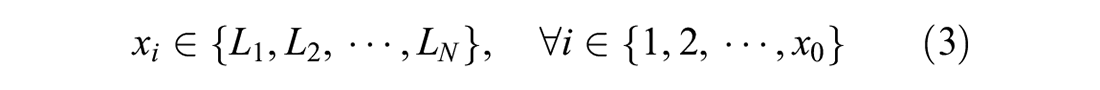

To develop AOA-SGDR, an optimization problem is formulated in which SGDR is run inside the optimization process and AOA is used to solve the problem. The objective function and constraints of the optimization model are defined to determine the optimal features for IRI-prediction model development. The model’s objective function is given in Equation 1. In each iteration, AOA selects several candidate combinations of features, and SGDR is trained on each combination. The R2 of the training and testing data is then calculated for each trained model, and the fitness value (Equation 1) is assessed. Afterward, the fitness values of the various feature combinations are compared. After a given number of iterations, the feature combination with the best fitness is selected by AOA to develop the model. In this way, feature selection is combined with optimization to select the optimal features.

subject to

where

and

Equation 1 maximizes the summation of the coefficient of determination of training and testing sets. Testing set R2 is considered in the objective function to select the features which increase the model’s prediction accuracy. Furthermore, using the coefficient of determination of unseen (testing) data in the objective function prevents the IRI-prediction model from overfitting. Equation 2 chooses the number of selected features from all features and assigns them to the model. This constraint ensures that the number of selected features has to be between 1 and
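One plausible reading of the objective and constraint described in this subsection is sketched below. The notation is an assumption, since the original equations are not reproduced here: $x_i \in \{0,1\}$ indicates whether feature $i$ is selected, $N$ is the total number of candidate features, and $R^2_{\text{train}}(x)$ and $R^2_{\text{test}}(x)$ are the coefficients of determination of the SGDR model trained on the selected features. The upper bound $N$ in the constraint is likewise assumed.

```latex
\max_{x \in \{0,1\}^{N}} \; Z(x) = R^{2}_{\text{train}}(x) + R^{2}_{\text{test}}(x)
\qquad \text{subject to} \qquad
1 \le \sum_{i=1}^{N} x_i \le N
```

Under this reading, AOA searches over the indicator vector $x$, and each evaluation of $Z(x)$ requires one SGDR training run on the selected features.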

As mentioned, three feature groups, that is, optimal features introduced by the hybrid AOA-SGDR, features selected by Lasso, and all features, are employed to be used in IRI-prediction model development. The average of the summation of coefficients of determination obtained from the developed IRI-prediction models by machine-learning algorithms for all three feature-selection methods is calculated. The method that achieves the highest average coefficients of determination has better performance and can select the optimal features.

Prediction Methods

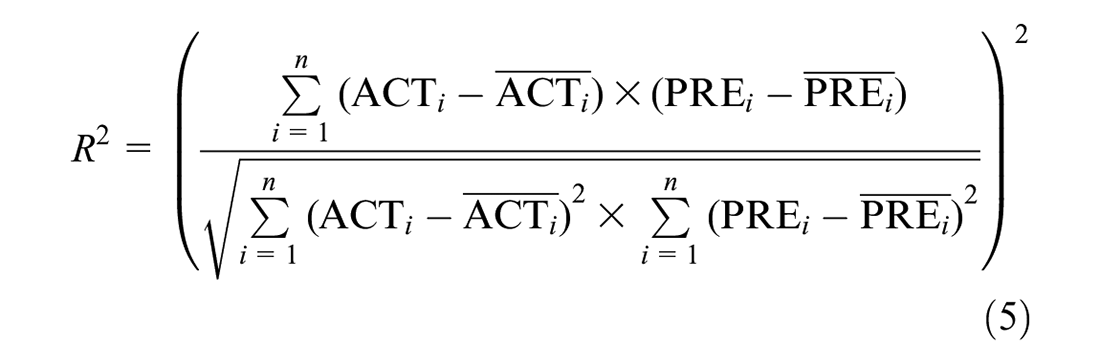

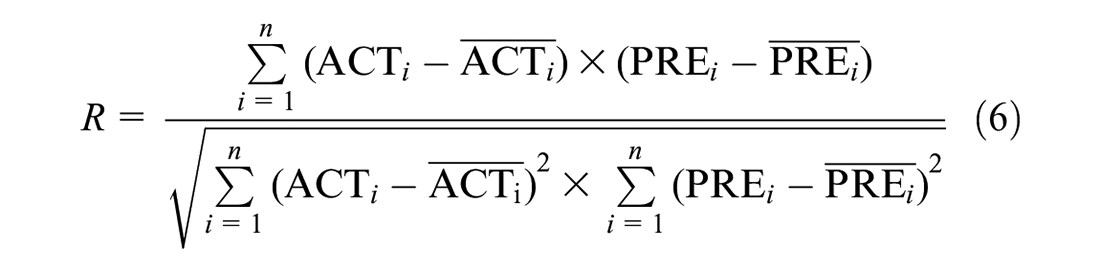

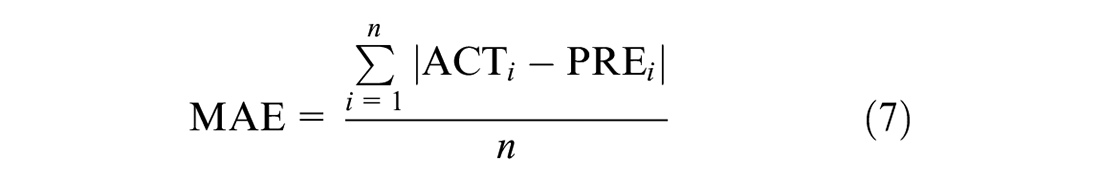

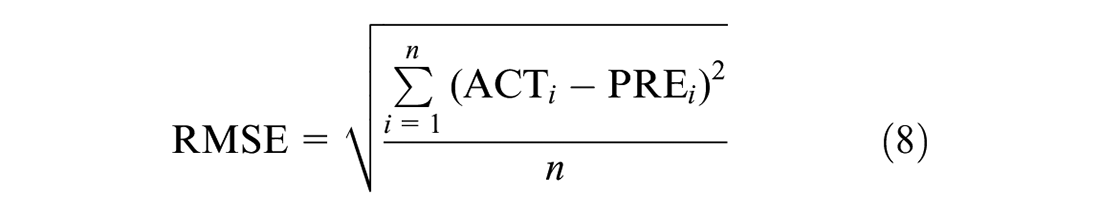

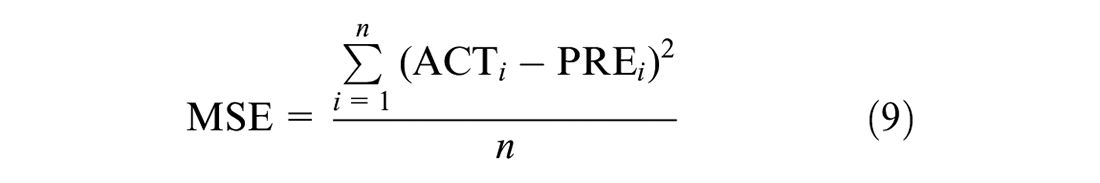

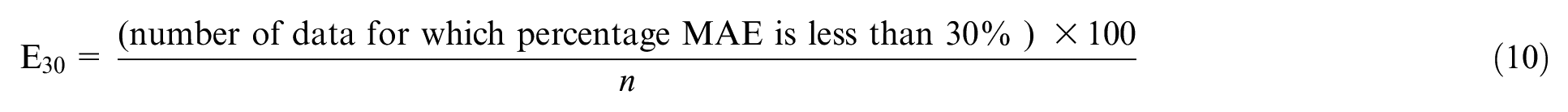

As mentioned, five extensively used and powerful machine-learning techniques are applied in IRI-prediction model development: RFR, SVM, MLP, DTR, and MLR. Six performance indicators are used to measure the accuracy of the machine-learning methods: coefficient of determination (R2), correlation coefficient (R), mean absolute error (MAE), RMSE, mean square error (MSE), and the percentage of data with an absolute percentage error of less than 30% (E30). The best feature-selection method and machine-learning algorithm are determined based on these indicators. The formulations of R2, R, MAE, RMSE, MSE, and E30 are as follows ( 43 ):

where
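The six indicators named above can be computed as in the following sketch, which uses their standard textbook definitions. The function name and the small example arrays are illustrative assumptions. E30 is taken here as the share of observations whose absolute percentage error is below 30%.

```python
import math


def regression_metrics(y_true, y_pred, threshold=30.0):
    """Return R2, R, MAE, RMSE, MSE, and E30 for observed and predicted values.

    Assumes non-zero observed values (needed for percentage errors) and
    non-constant observed and predicted values (needed for R2 and R).
    """
    n = len(y_true)
    mean_true = sum(y_true) / n
    mean_pred = sum(y_pred) / n
    errors = [t - p for t, p in zip(y_true, y_pred)]

    ss_res = sum(e * e for e in errors)                 # residual sum of squares
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    ss_pred = sum((p - mean_pred) ** 2 for p in y_pred)
    cov = sum((t - mean_true) * (p - mean_pred) for t, p in zip(y_true, y_pred))

    mse = ss_res / n
    # Absolute percentage error of each observation, in percent.
    ape = [100.0 * abs(e) / abs(t) for e, t in zip(errors, y_true)]
    return {
        "R2": 1.0 - ss_res / ss_tot,
        "R": cov / math.sqrt(ss_tot * ss_pred),  # Pearson correlation
        "MAE": sum(abs(e) for e in errors) / n,
        "RMSE": math.sqrt(mse),
        "MSE": mse,
        "E30": 100.0 * sum(1 for a in ape if a < threshold) / n,
    }


# Hypothetical IRI values in m/km, chosen only to exercise the function.
observed = [1.0, 2.0, 3.0, 4.0]
predicted = [1.1, 1.9, 3.2, 2.0]
metrics = regression_metrics(observed, predicted)
print(metrics)
```

Higher R2, R, and E30 and lower MAE, RMSE, and MSE indicate a more accurate model.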

Results and Discussion

In this section, the results of the five machine-learning algorithms using the three feature-selection groups are presented. Then, the performance of the five machine-learning algorithms is compared, and the optimal features are determined.

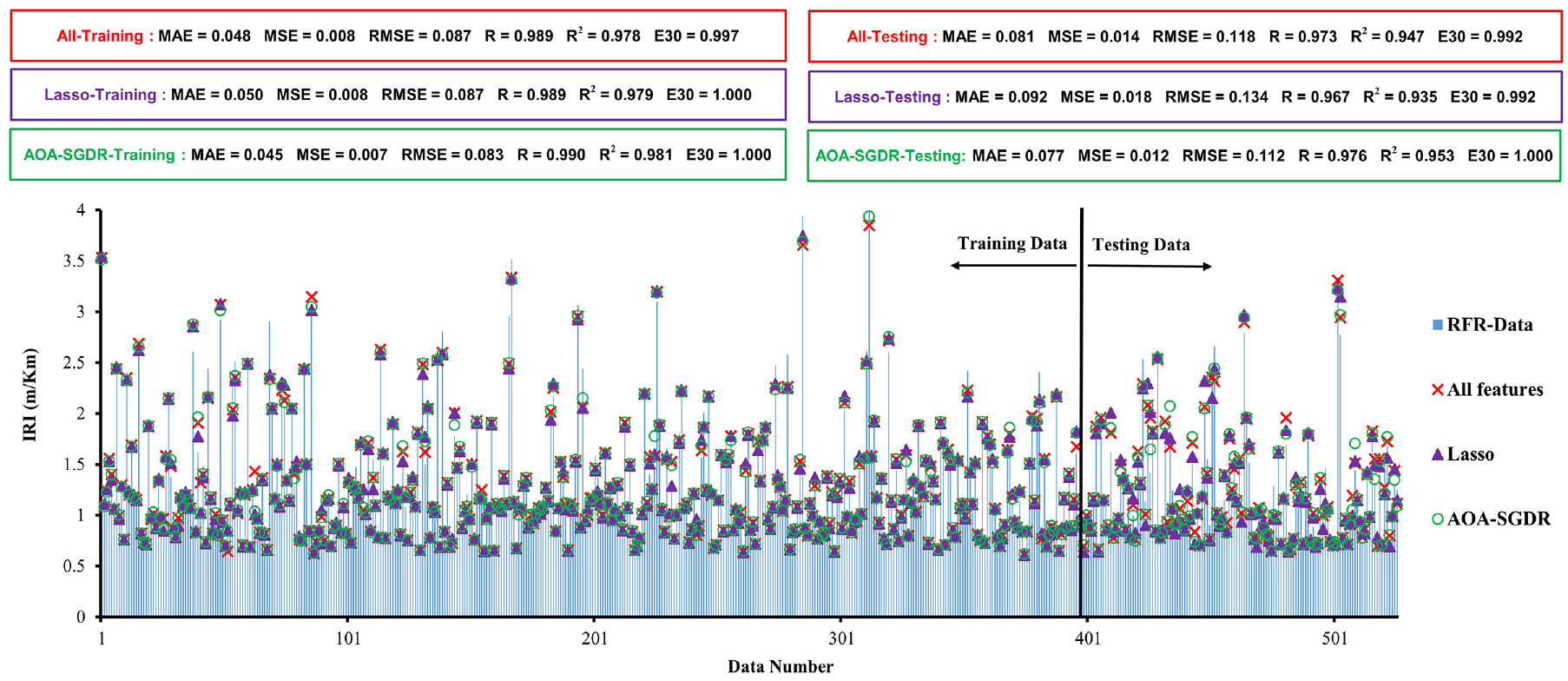

Random Forest Regression

The machine-learning performance indicators and error histograms of RFR for the three feature-selection groups are presented in Figure 1 for training and testing data. In the error histogram, the lines indicate the real value of the data (i.e., IRI). The other symbols show the values predicted by the model using different feature-selection techniques. Therefore, if a symbol is located at the end of the line, the error of the model for that observation equals zero; otherwise, the distance between the symbol and the end of the line is the prediction error for that observation. As shown in Figure 1, for the RFR technique, R2 of the testing data based on AOA-SGDR-selected features is 0.953. In addition, testing-data R2 results based on all features and Lasso are 0.947 and 0.935, respectively. Therefore, testing-data R2 of the novel hybrid method is 0.006 and 0.018 greater than for all features and Lasso, respectively. Similarly, testing-data MAE of AOA-SGDR-selected features for the RFR technique, which is equal to 0.077 m/km, is 11.96% and 16.30% lower than testing-data MAE of all features and Lasso features, respectively. Testing-data RMSEs of AOA-SGDR, all features, and Lasso in the RFR algorithm are 0.112, 0.118, and 0.134 m/km, respectively. Testing-data E30 using the AOA-SGDR method is equal to 100%, and testing-data E30 for both all features and Lasso is 99.2% using the RFR method. Therefore, the AOA-SGDR method in the RFR algorithm can predict all IRI testing data with an error of less than 30%, whereas the other methods cannot achieve this accuracy.

Figure 1. Machine-learning performance indicators and error histograms for RFR.

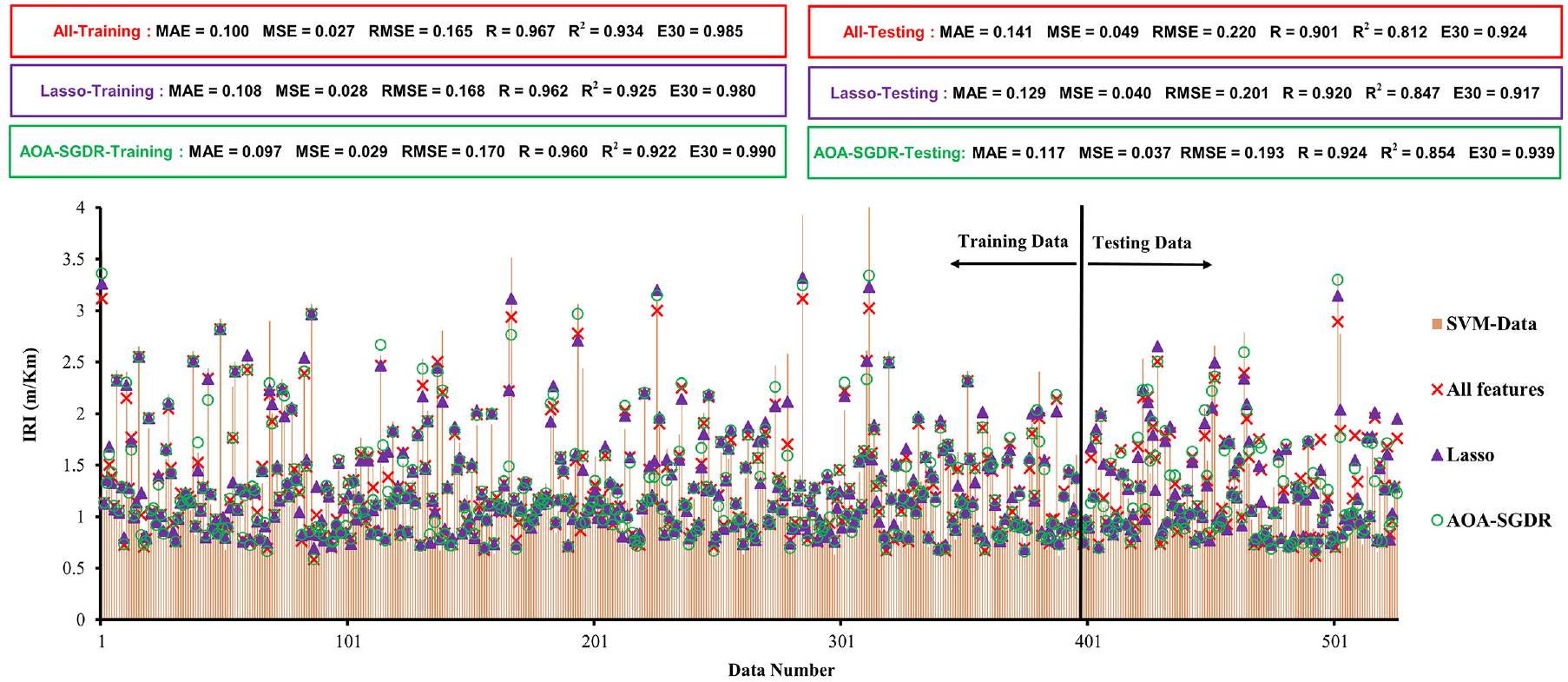

Support Vector Machine

The results of the SVM technique for AOA-SGDR, Lasso, and all features, in the form of error histograms and machine-learning performance indicators, are presented in Figure 2 for training and testing data. Testing-set R2 results of AOA-SGDR, Lasso, and all features in the SVM algorithm are 0.854, 0.847, and 0.812, respectively. Therefore, testing-data R2 of the novel hybrid method is 0.007 and 0.042 greater than for Lasso and all features, respectively. By applying the AOA-SGDR method instead of Lasso and all features, testing-data MAE for the SVM technique is reduced by 10.43% and 20.74%, respectively. Testing-data RMSEs of the novel hybrid, Lasso, and all features using the SVM algorithm are 0.193, 0.201, and 0.220 m/km, respectively. Testing-data E30 figures for AOA-SGDR, Lasso, and all features using the SVM algorithm are 93.9%, 91.7%, and 92.4%, respectively. Therefore, testing-data E30 of the novel feature-selection method is 2.2 and 1.5 percentage points greater than for Lasso and all features, respectively.

Figure 2. Machine-learning performance indicators and error histograms for SVM.

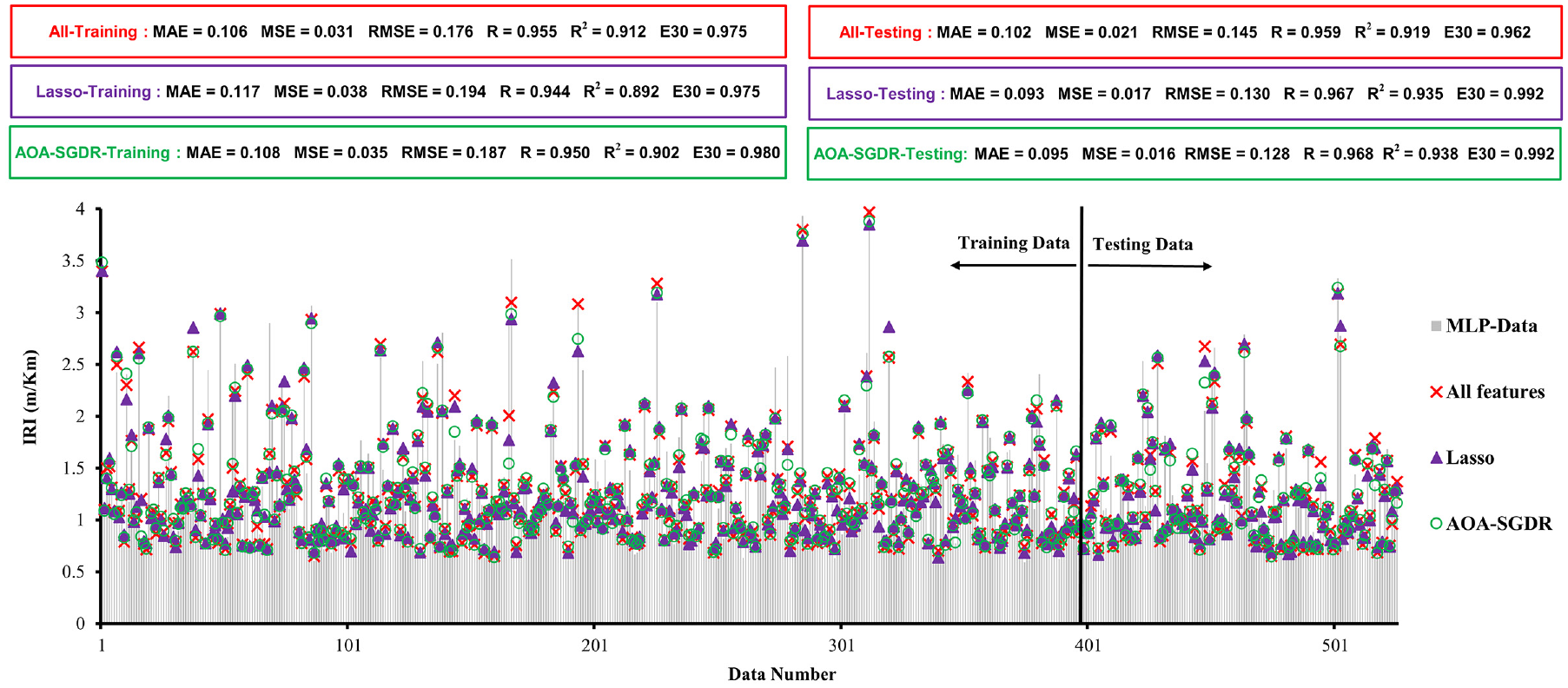

Multi-Layer Perceptron

Error histograms and values of machine-learning performance indicators of the MLP technique are presented in Figure 3. As can be seen, testing-data R2 of the novel hybrid method is 0.003 and 0.019 greater than for Lasso and all features, respectively. The MAEs of the introduced feature-selection method, Lasso, and all features are 0.095, 0.093, and 0.102 m/km, respectively. Moreover, the RMSE of the testing data using the AOA-SGDR method is 0.128 m/km. If the AOA-SGDR method is used instead of Lasso and all features, the testing-data RMSE of the model decreases by 2.01% and 13.85%, respectively. The E30 results of the testing set for the AOA-SGDR, Lasso, and all features methods are 99.2%, 99.2%, and 96.2%, respectively.

Figure 3. Machine-learning performance indicators and error histograms for MLP.

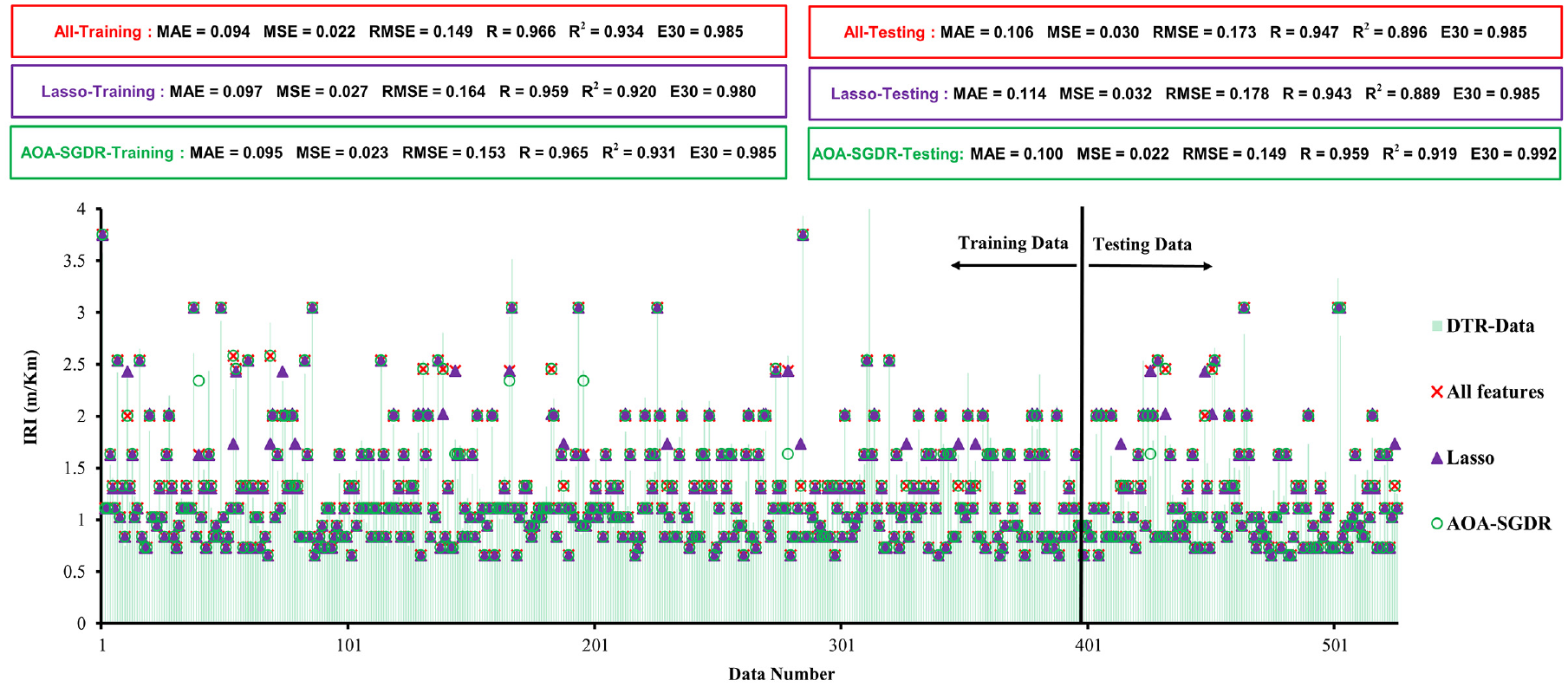

Decision-Tree Regression

The results of the DTR algorithm for the three feature-selection groups are shown in Figure 4 for both training and testing data. Testing-data R2 of the introduced hybrid feature-selection method is 0.023 and 0.030 greater than for the all features and Lasso methods, respectively. By employing the AOA-SGDR method instead of all features and Lasso, the testing-data MAE for the DTR technique decreases by 6% and 14%, respectively. Testing-set MSEs of the novel hybrid, all features, and Lasso methods are 0.022, 0.030, and 0.032 (m/km)2, respectively. Therefore, the MSE of testing data for the AOA-SGDR method is 0.008 and 0.010 (m/km)2 lower than for all features and Lasso, respectively. Testing-data E30 of the AOA-SGDR method is 99.2% using the DTR technique. In addition, testing-set E30 of both all features and Lasso is equal to 98.5%, which is lower than the AOA-SGDR E30.

Figure 4. Machine-learning performance indicators and error histograms for DTR.

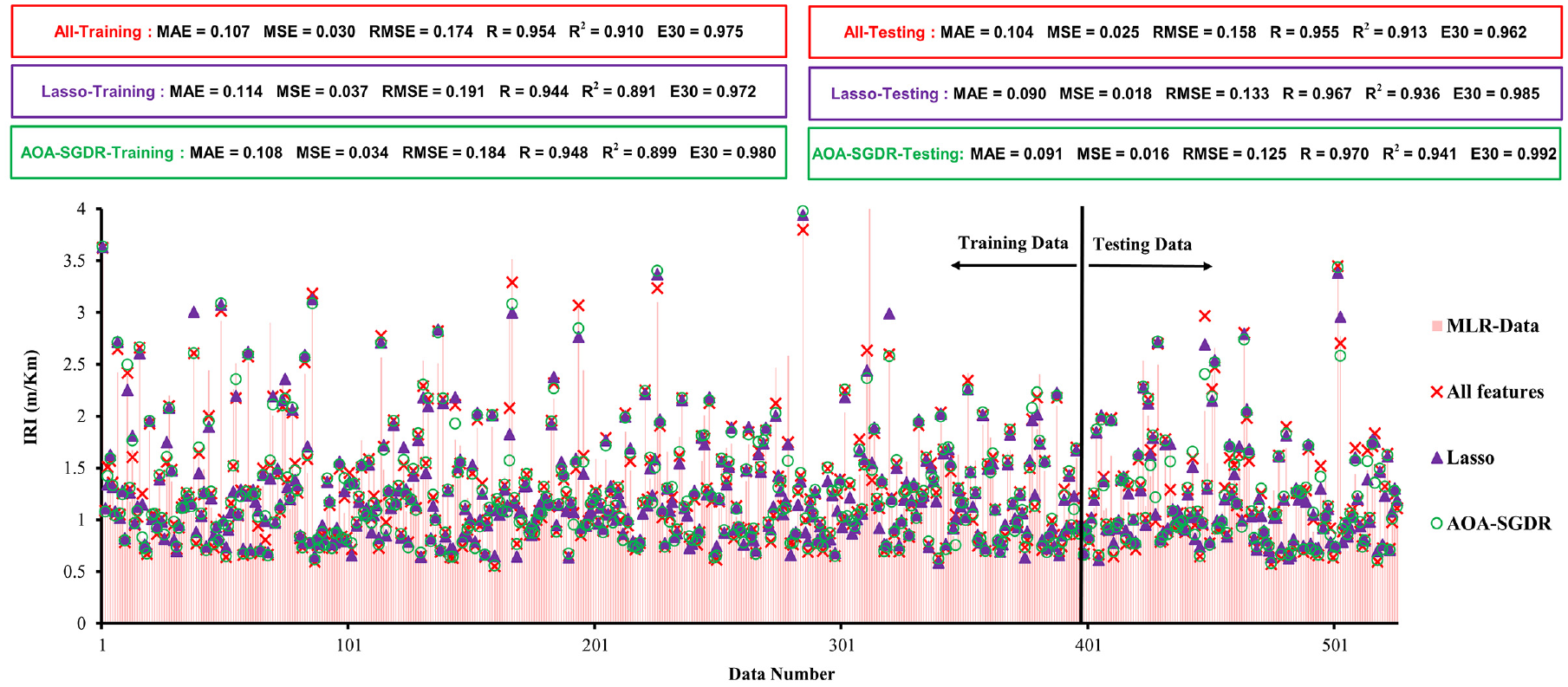

Multiple Linear Regression

The performance of the MLR algorithm, based on error histograms and machine-learning performance indicators, is presented in Figure 5. Testing-data R2 of the AOA-SGDR method using the MLR technique is 0.005 and 0.028 greater than for Lasso and all features, respectively. MAE of the testing data for the AOA-SGDR method is 0.091 m/km using the MLR algorithm. Moreover, testing-set MAEs of Lasso and all features are 0.090 and 0.104 m/km, respectively. Using the introduced feature-selection method (AOA-SGDR) instead of Lasso and all features, the model’s testing-data RMSE decreases by 7% and 26.82%, respectively. The testing-data MSE for AOA-SGDR is 0.002 and 0.009 (m/km)2 lower than for Lasso and all features, respectively. E30 of the introduced hybrid feature-selection method is 0.8 and 3.1 percentage points greater than for Lasso and all features, respectively.

Figure 5. Machine-learning performance indicators and error histograms for MLR.

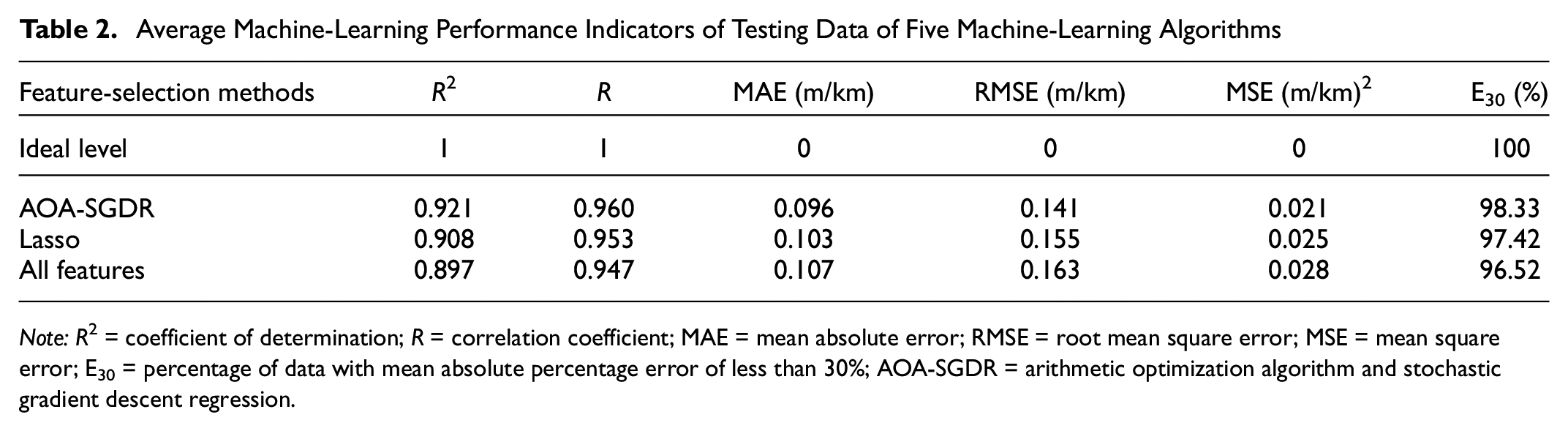

The Overall Performance of Feature-Selection Techniques

To compare the performance of the three feature-selection methods, the average testing-data performance indicators over the five machine-learning algorithms are calculated, as presented in Table 2. The average testing-data R2 of the AOA-SGDR method is 0.013 and 0.024 greater than for Lasso and all features, respectively. By applying AOA-SGDR instead of Lasso and all features, the average testing-data MAE of the five machine-learning algorithms decreases by 7.92% and 11.74%, respectively. On average, the testing-set RMSE using the AOA-SGDR method is 0.014 and 0.022 m/km lower than for Lasso and all features, respectively. The results indicate that average testing-data E30 using the AOA-SGDR method is 0.9 and 1.8 percentage points greater than for Lasso and all features, respectively. Therefore, according to the performance indicators discussed above, the AOA-SGDR feature-selection method introduced in this study outperforms both Lasso and the use of all features. In addition, an appropriate feature-selection method should be employed instead of using all features to increase the model’s accuracy. Thus, it can be postulated that AOA-SGDR is well suited to detecting the IRI-prediction model’s optimal features, and its selected features are recommended for predicting IRI with the highest accuracy.

Table 2. Average Machine-Learning Performance Indicators of Testing Data of Five Machine-Learning Algorithms

Note: R2 = coefficient of determination; R = correlation coefficient; MAE = mean absolute error; RMSE = root mean square error; MSE = mean square error; E30 = percentage of data with mean absolute percentage error of less than 30%; AOA-SGDR = arithmetic optimization algorithm and stochastic gradient descent regression.

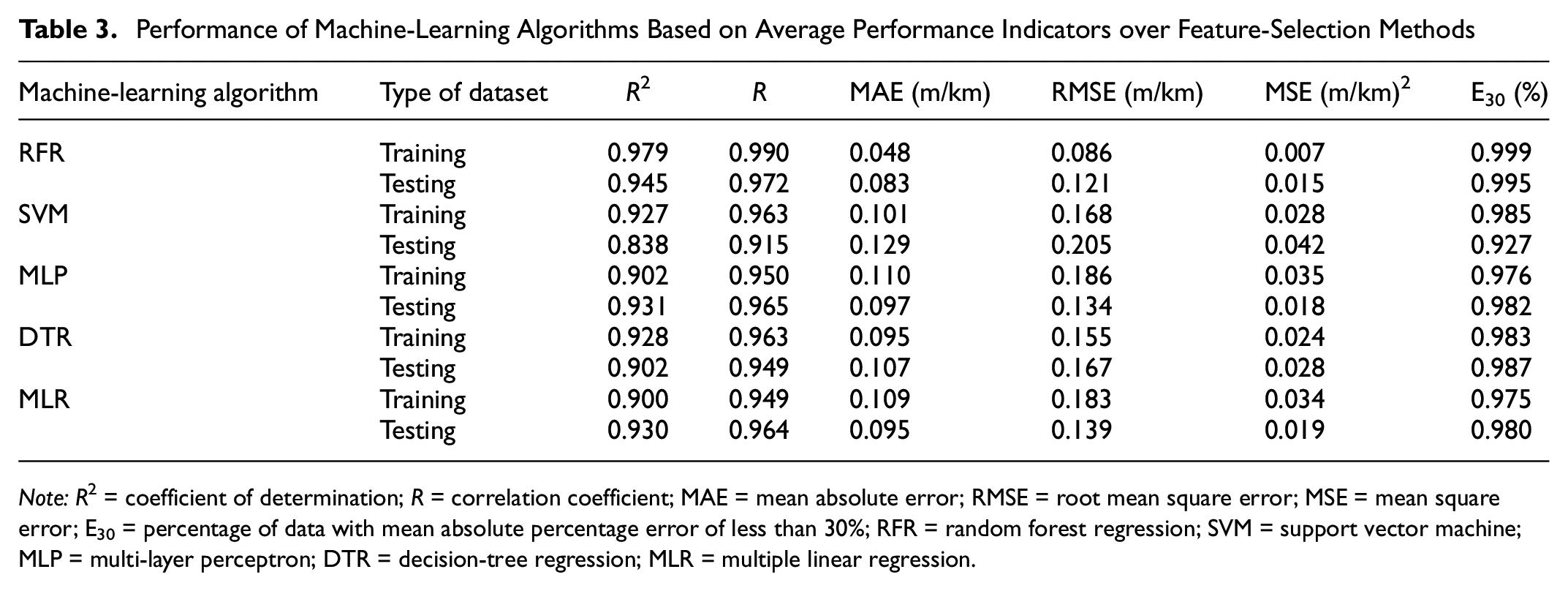

Comparing the Performance of Machine-Learning Techniques

The performance indicators of each machine-learning algorithm, averaged over the feature-selection techniques, are presented in Table 3. The testing-data R2 results indicate that the RFR technique performs better than the other techniques. By utilizing RFR, MLP, MLR, and DTR instead of SVM, the MAE decreases by 54.89%, 33.47%, 35.93%, and 20.46%, respectively. RMSE of the RFR technique is 0.084, 0.046, 0.017, and 0.013 m/km less than RMSE for SVM, DTR, MLR, and MLP, respectively. E30 for the RFR technique is 6.82%, 1.52%, 1.26%, and 0.76% greater than for SVM, MLR, MLP, and DTR, respectively. As can be seen from Table 3, RFR is the most powerful prediction technique because of its higher R2, R, and E30 and lower MAE, RMSE, and MSE. Therefore, where the highest accuracy is the goal, the use of the RFR method to develop the IRI performance function is strongly suggested to researchers and decision-makers.

Performance of Machine-Learning Algorithms Based on Average Performance Indicators over Feature-Selection Methods

Note: R2 = coefficient of determination; R = correlation coefficient; MAE = mean absolute error; RMSE = root mean square error; MSE = mean square error; E30 = percentage of data with mean absolute percentage error of less than 30%; RFR = random forest regression; SVM = support vector machine; MLP = multi-layer perceptron; DTR = decision-tree regression; MLR = multiple linear regression.

According to the results discussed, the novel hybrid feature-selection method achieves the greatest accuracy across the machine-learning techniques, based on the performance indicators mentioned above. It can be deduced that policy-makers and researchers can use the optimal features selected by AOA-SGDR to develop IRI performance models. Therefore, using the features selected by the introduced method and predicting IRI with the RFR technique leads to an accurate IRI performance model.

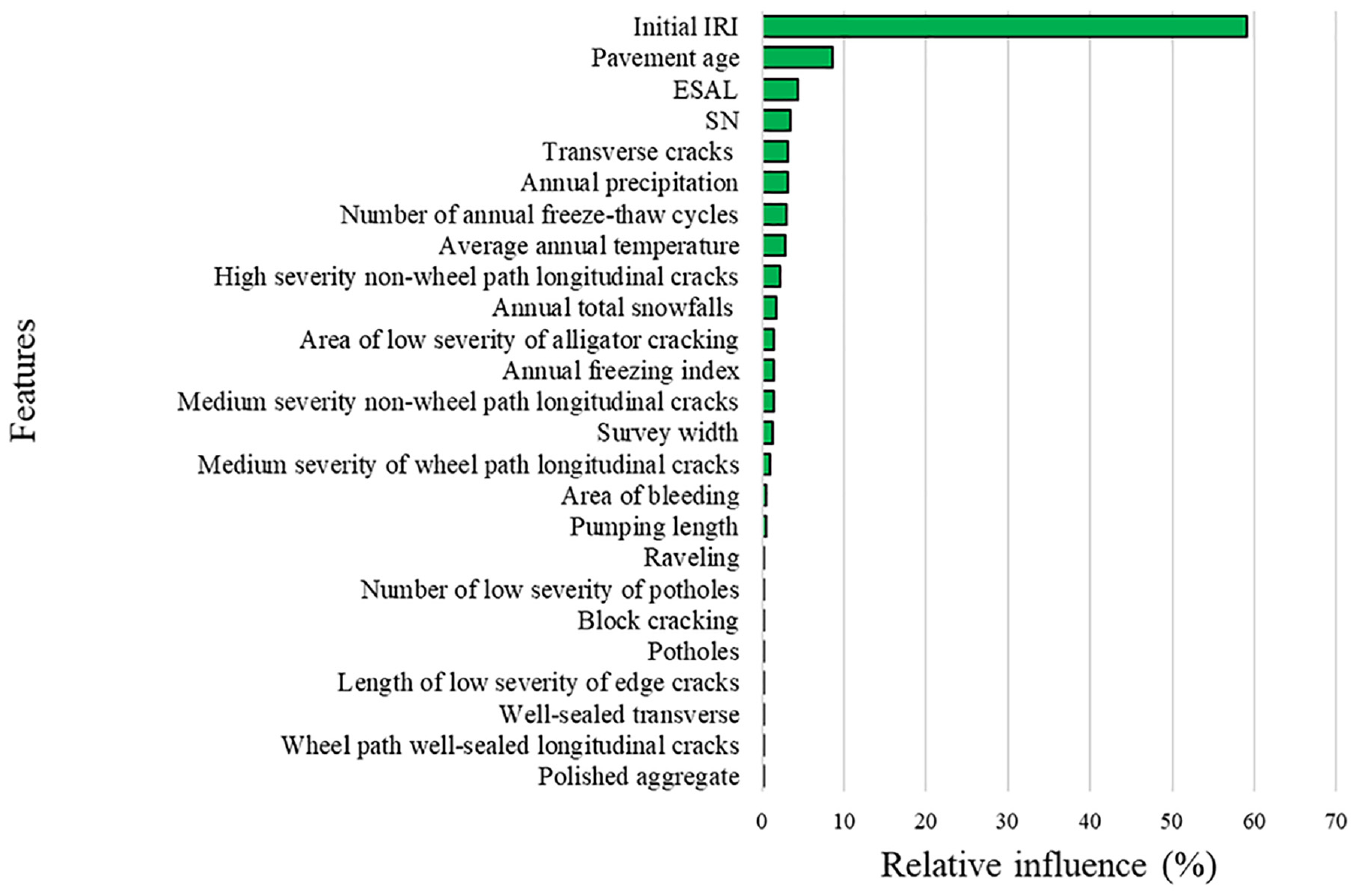

Prioritizing Optimal Features Based on Their Relative Influence on IRI

As described in the previous sections, the combination of AOA-SGDR and RFR leads to the maximum prediction accuracy. Accordingly, the relative influence of each optimal feature (selected by AOA-SGDR) on IRI is calculated using RFR. The features selected by the novel hybrid feature-selection method are initial IRI; pavement age; ESALs as a traffic parameter; SN as a structural feature; lane width; and average annual temperature, annual freezing index, number of annual freeze–thaw cycles, annual precipitation, and total annual snowfall as climatic features. The pavement distresses selected by the AOA-SGDR method are the areas of low-severity alligator cracking, block cracking, and potholes; the areas of bleeding, polished aggregate, and raveling; the lengths of low-severity edge cracks and wheel-path well-sealed longitudinal cracks, medium-severity wheel-path longitudinal cracks, high- and medium-severity non-wheel-path longitudinal cracks, and well-sealed transverse cracks; pumping length; and the number of low-severity potholes and transverse cracks.

The relative influence of these features on IRI is shown in Figure 6. These relative influences are obtained from the case study applied in this investigation, and they may differ slightly in other countries with different climate conditions. As the figure shows, initial IRI has the greatest impact on future IRI. This can be related to the exponential trend of pavement deterioration, in which IRI increases exponentially over time ( 44 ). Pavement age, ESAL, and SN are the next most influential parameters, which indicates the importance of traffic and structural conditions for pavement roughness. Among the selected distresses, transverse cracking has the highest relative influence on IRI. Pavements are prone to transverse cracking because of temperature variations and extremely cold winter temperatures, and transverse cracking can also appear in the form of reflective cracking ( 45 ); these may be the reasons why transverse cracks have a higher relative influence on IRI than other distresses. Moreover, precipitation is identified as the most important climatic condition for IRI prediction. These results can guide decision-makers to collect the parameters with the highest relative influence to predict IRI. Similarly, because the proposed feature-selection method can increase the accuracy of IRI prediction, it can be applied in pavement M&R optimization problems to obtain more accurate solutions.
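To make the notion of relative influence concrete, the following Python sketch ranks features by how much the prediction error grows when each feature is shuffled, then normalizes the results to percentages. This is permutation importance, a model-agnostic technique swapped in for illustration; the study derives relative influence from the random forest model, and the exact importance measure used there is not restated here. The functions `relative_influence` and `toy_model`, the three anonymous features, and the synthetic data are all hypothetical.

```python
import random


def mean_absolute_error(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def relative_influence(predict, X, y, seed=0):
    """Permutation importance of each feature, normalized to sum to 100%."""
    rng = random.Random(seed)
    baseline = mean_absolute_error(y, [predict(row) for row in X])
    increases = []
    for j in range(len(X[0])):
        # Shuffle column j only, breaking its link with the target.
        column = [row[j] for row in X]
        rng.shuffle(column)
        X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
        permuted = mean_absolute_error(y, [predict(row) for row in X_perm])
        increases.append(max(permuted - baseline, 0.0))
    total = sum(increases)
    return [100.0 * inc / total if total else 0.0 for inc in increases]


def toy_model(row):
    # Hypothetical stand-in for a trained regressor: the first feature
    # dominates, the second matters less, and the third is ignored.
    return 2.0 * row[0] + 0.5 * row[1]


# Synthetic data for illustration only; not data from the study.
data_rng = random.Random(42)
X = [[data_rng.random() for _ in range(3)] for _ in range(200)]
y = [toy_model(row) for row in X]
influence = relative_influence(toy_model, X, y)
```

In this synthetic setting, the first feature receives the largest share of influence and the unused third feature receives none, which mirrors how a ranking such as the one in Figure 6 is interpreted.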

Figure 6. Relative influence of parameters on IRI.

Practical Implications

Infrastructure is a fundamental tool for enhancing economic growth, and pavements are a vital part of transportation infrastructure. However, pavements deteriorate over time, and their condition worsens. Therefore, maintenance should be implemented to keep pavements in an appropriate condition ( 46 ). To this end, optimization techniques are generally applied to optimize pavement maintenance actions over a given planning horizon. These optimization models try to determine when, where, and which pavement maintenance actions should be implemented to maximize the condition of pavements ( 47 ). One of the essential parts of pavement maintenance planning is predicting the condition of the pavements in a network over time. In this regard, the accuracy of pavement condition prediction can significantly influence the reliability of maintenance plans ( 48 ). In this study, a novel technique is developed that increases IRI-prediction accuracy. As a result, using the proposed method, the condition of pavement sections during the planning horizon can be calculated more accurately, and the resulting pavement maintenance plan can be more trustworthy.

Conclusions

This study aims to develop an accurate IRI performance function. To this end, 58 features are selected based on the LTPP data. A novel feature-selection method, named AOA-SGDR, is developed to determine the significant features for the IRI model, and the Lasso method is used as a benchmark to evaluate the performance of the new hybrid method. Thus, two feature-selection methods are employed to select optimal features, and both are compared with the use of all features. Five machine-learning techniques, namely RFR, SVM, MLP, DTR, and MLR, are used to develop IRI-prediction models.

The novel hybrid feature-selection method (AOA-SGDR) increases R2, R, and E30 by 0.024, 0.013, and 1.81%, respectively, and decreases MAE, RMSE, and MSE by 0.011 m/km, 0.022 m/km, and 0.007 (m/km)2, respectively, compared with the method in which all features are considered. Compared with the Lasso feature-selection method, AOA-SGDR increases R2, R, and E30 by 0.013, 0.007, and 0.9%, respectively, and decreases MAE, RMSE, and MSE by 0.008 m/km, 0.014 m/km, and 0.004 (m/km)2, respectively. Therefore, the introduced hybrid feature-selection method outperforms the other methods, and using it leads to the highest accuracy in IRI feature selection.

The RFR technique has the best performance, followed by MLP, MLR, DTR, and SVM. Using the features selected by AOA-SGDR to develop the IRI prediction with RFR leads to the most accurate IRI-prediction model in this study. R2, R, MAE, RMSE, MSE, and E30 of this IRI model are 0.953, 0.976, 0.077 m/km, 0.112 m/km, 0.012 (m/km)2, and 100%, respectively. Therefore, where the highest accuracy is required, employing the RFR technique with the features selected by the AOA-SGDR method is strongly proposed to decision-makers, policy-makers, and researchers. Based on the results, initial IRI has the highest relative influence on IRI, followed by pavement age, ESAL, SN, transverse cracks, annual precipitation, number of annual freeze–thaw cycles, and average annual temperature. Accordingly, transverse cracking is the most influential of the selected distresses, and annual precipitation is the most influential climate-related parameter for IRI.

One of the limitations of this study is that only one metaheuristic algorithm (AOA) is applied to find the optimal features. Therefore, it is recommended that other powerful metaheuristic algorithms be employed to develop new feature-selection techniques and that their accuracy be compared with that of AOA-SGDR.

Footnotes

Author Contributions

The authors confirm contribution to the paper as follows: study conception and design: H. Naseri, M. Shokoohi, H. Jahanbakhsh, E.O.D. Waygood; data collection: H. Naseri, M. Shokoohi; analysis and interpretation of results: H. Naseri, M. Shokoohi, H. Jahanbakhsh; draft manuscript preparation: H. Naseri, M. Shokoohi, H. Jahanbakhsh, M. Karimi, E.O.D. Waygood. All authors reviewed the results and approved the final version of the manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.