Abstract

This article offers a critical and integrative review of how artificial intelligence (AI) is being incorporated into academic library systems, particularly in the context of scientific research production. Based on 29 studies, the review explores ethical practices, institutional boundaries, and epistemological challenges surrounding AI adoption. Findings reveal that AI is reshaping scholarly workflows, such as metadata creation, information retrieval, and literature review, while also introducing unresolved ethical concerns, including data privacy, algorithmic bias, academic integrity, and diminished human agency. The study identifies a misalignment between the rapid pace of AI implementation and the capacity of academic institutions to regulate its use responsibly. Librarians are situated at the intersection of innovation and ethical mediation, often without formal training or institutional support. The review concludes that AI should not be viewed merely as a functional tool but as a socio-technical agent requiring ethical governance, critical AI literacy, and structural accountability across academic ecosystems.

Introduction

The integration of artificial intelligence (AI) into scientific knowledge production has transformed academic research workflows, authorship practices, and publishing mechanisms. With the emergence of generative AI tools, particularly large language models (LLMs) such as ChatGPT, researchers now engage in writing, peer review, data interpretation, and even ideation in ways that were unimaginable just a few years ago (Carabantes et al., 2023; Scherbakov et al., 2025). While these technologies promise efficiency and accessibility, they have also prompted serious concerns about authorship legitimacy, scientific integrity, ethical accountability, and transparency in scholarly communication (Kousha and Thelwall, 2024; Mannheimer et al., 2024; Stockemer and Reidy, 2024).

Academic libraries have historically served as institutional anchors for research ethics, information literacy, and scholarly standards (Osdoski and Costa, 2024; Zondi et al., 2024). As research environments evolve under the influence of digital intelligence, academic libraries are increasingly positioned not only as service providers, but also as stewards of responsible AI integration in higher education. Library professionals now face the challenge of navigating and guiding ethical uses of AI in the scientific lifecycle, particularly in authoring, publishing, and reviewing processes (Aboelmaged et al., 2025; Giray et al., 2025).

However, the rapid diffusion of AI tools into research settings has outpaced the development of normative frameworks for responsible use (Heyder et al., 2023; Laine et al., 2024). Despite calls for increased AI literacy and ethical oversight, there remains a lack of coordinated institutional responses across academic libraries (Harisanty et al., 2025; Mannheimer et al., 2024). This is especially problematic given the rising use of generative AI by students and researchers, often in the absence of clear policies or training (Davy Tsz Kit Ng et al., 2024; Hossain et al., 2025).

While recent literature reviews have examined the implementation of AI in library services (Concha et al., 2024; Zondi et al., 2024), few studies have addressed the intersection between AI-assisted scientific production and the ethical responsibilities of academic libraries. Similarly, the debate on academic misconduct and the erosion of traditional authorship models has not sufficiently integrated the library perspective (Kankanhalli, 2024; Stockemer and Reidy, 2024). This reveals a significant gap in the literature: the need to explore how academic libraries can support ethical, transparent, and informed use of AI in the creation of scientific knowledge.

Against this background, this study is guided by the following research question: How can academic libraries promote ethical practices and institutional guidance in the use of AI tools for scientific research production?

This question arises from two converging realities: the exponential growth in the use of generative AI in academia, and the unclear role of libraries in mediating this transformation.

To address this question, the study adopts a systematic literature review methodology, grounded in a comprehensive exploration of peer-reviewed publications and institutional studies from 2022 to 2025 (plus early 2026). The scope includes literature on generative AI in academic writing, ethical dilemmas in authorship and peer review, library-based research support services, and AI literacy initiatives.

The central hypothesis of this study is that academic libraries are uniquely positioned to serve as ethical mediators in AI-integrated research environments, but that their current involvement is fragmented, under-researched, and unevenly institutionalized.

The general objective of this study is to analyze the ethical, institutional, and professional challenges of integrating AI tools into scientific knowledge production, with a specific focus on the role of academic libraries in guiding responsible use. The specific objectives are:

to identify how AI tools are currently being used in academic research writing, publishing, and peer review; to examine the extent to which academic libraries engage in AI literacy, ethical awareness, and responsible innovation; to explore the ethical tensions and risks associated with AI-supported research outputs, including authorship ambiguity and integrity breaches; to map institutional responses, professional behaviors, and policy recommendations emerging from the literature; and to propose strategic roles for academic libraries in promoting ethical AI integration in higher education research contexts.

Academic libraries stand at a critical junction: either they actively shape the norms for ethical AI use in research, or they risk being passive observers in a rapidly shifting scholarly landscape. Recognizing this inflection point is not merely a matter of innovation management; it is a question of institutional responsibility, epistemic trust, and the preservation of scholarly integrity in the digital era. This article responds to that challenge, offering a grounded, critical, and timely investigation into how libraries can rise, not react, to the age of artificial intelligence.

Methodology

A systematic literature review was conducted in July 2025 to explore the ethical practices, boundaries, and challenges surrounding the use of AI in scientific knowledge production, with particular emphasis on the role of academic libraries. Four bibliographic databases were selected for this study: Web of Science, Scopus, Dimensions.ai, and Library and Information Science Abstracts (LISA). A consistent and comprehensive search strategy was applied across all platforms, using the following expression:

“artificial intelligence” OR “AI tools” OR “generative AI” OR “large language models” OR “ChatGPT” AND “scientific publishing” OR “academic writing” OR “research production” OR “knowledge creation” AND “academic libraries” OR “university libraries” OR “higher education libraries” AND “ethics” OR “scientific integrity” OR “transparency” OR “authorship” OR “information literacy”

In Web of Science, the following refinements were applied:

Publication period – 2022–2026 (2026 was included to capture early access); Document type – review article; and Category – Information Science & Library Science, with results limited to open access. This yielded 118 results.

In Dimensions.ai, the following refinements were applied:

Publication period – 2022–2025; Document type – article; and Audience – students and researchers. This resulted in 59 records.

In LISA, the same refinements applied to Web of Science were replicated (including 2026), also resulting in 118 records.
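The search expression reported above combines four concept groups: AI terms, research-production terms, library terms, and ethics terms. The following minimal sketch shows one way such a string could be assembled so that each group is explicitly parenthesized. The grouping is an assumption, since the expression as printed shows no parentheses, and the names `CONCEPT_GROUPS` and `build_query` are hypothetical; each database's own query syntax may differ.

```python
# Hypothetical helper: assemble the reported search terms into a Boolean
# expression with explicit grouping. The parenthesization is an assumption;
# the printed expression in the text does not show how terms were grouped.
CONCEPT_GROUPS = [
    ["artificial intelligence", "AI tools", "generative AI",
     "large language models", "ChatGPT"],
    ["scientific publishing", "academic writing",
     "research production", "knowledge creation"],
    ["academic libraries", "university libraries",
     "higher education libraries"],
    ["ethics", "scientific integrity", "transparency",
     "authorship", "information literacy"],
]


def build_query(groups):
    """Join terms with OR inside each group and AND between groups."""
    blocks = []
    for terms in groups:
        quoted = " OR ".join(f'"{term}"' for term in terms)
        blocks.append(f"({quoted})")
    return " AND ".join(blocks)


query = build_query(CONCEPT_GROUPS)
print(query)
```

Making the grouping explicit matters because many search engines give AND higher precedence than OR, so an unparenthesized string can be interpreted differently from what was intended.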

All retrieved records (n = 150) were imported into the AI-assisted tool Rayyan, which was used to identify potential duplicates (none were found) and to support blinded screening of abstracts and titles. Screening followed well-defined inclusion and exclusion criteria:

Inclusion criteria:

explicit relation between AI and academic writing or scientific research production; reference to the role of academic libraries or library professionals in the use or explanation of AI tools; and emphasis on ethical or integrity-related perspectives.

Exclusion criteria:

articles outside the scope of AI-assisted research production or lacking relevance to the outlined inclusion points.
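The screening logic described by these criteria can be sketched in a few lines. This is an illustration only: the `Record` fields, the sample records, and the reading that all three inclusion criteria must hold together are assumptions, and the actual screening was carried out by human judgment in Rayyan rather than by code.

```python
from dataclasses import dataclass


@dataclass
class Record:
    """Hypothetical representation of one screened record."""
    title: str
    links_ai_to_research_production: bool   # inclusion criterion 1
    addresses_library_role: bool            # inclusion criterion 2
    has_ethics_or_integrity_focus: bool     # inclusion criterion 3


def meets_inclusion_criteria(record):
    # Assumption: a record is included only if all three criteria hold.
    return (record.links_ai_to_research_production
            and record.addresses_library_role
            and record.has_ethics_or_integrity_focus)


def screen(records):
    """Split records into (included, excluded) lists."""
    included = [r for r in records if meets_inclusion_criteria(r)]
    excluded = [r for r in records if not meets_inclusion_criteria(r)]
    return included, excluded


# Made-up records, for illustration only.
SAMPLE_RECORDS = [
    Record("Library guidance on generative AI in authorship", True, True, True),
    Record("Chatbot uptime benchmarks", False, True, False),
    Record("AI writing tools in engineering courses", True, False, True),
]

included, excluded = screen(SAMPLE_RECORDS)
print(len(included), "included;", len(excluded), "excluded")
```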

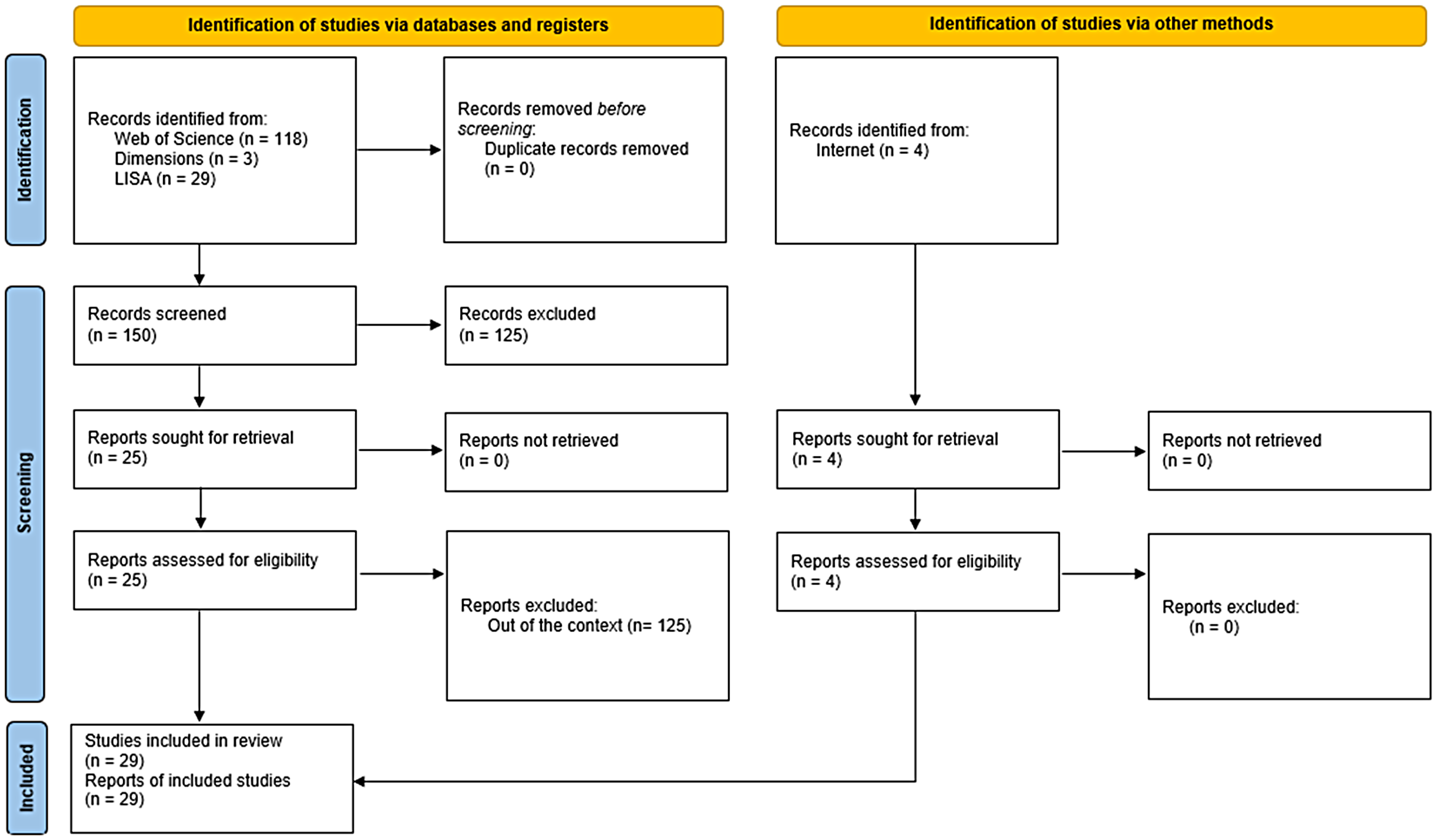

Following the initial screening, 125 records were excluded, resulting in a final sample of 25 articles for review. An additional two web-based documents were also included based on their high relevance and citation potential, bringing the total to 29 sources. This process is visually summarized in Figure 1, a PRISMA Flow Diagram that outlines the search, screening, and inclusion phases of the review.

Figure 1. PRISMA flow diagram.

The selected articles were analyzed based primarily on their abstracts and keywords, which were systematically examined to extract thematic content related to AI tools, ethical implications, library involvement, and scholarly communication practices. When necessary, full texts were consulted to clarify ambiguous cases or verify thematic relevance.

The analysis adopted a qualitative synthesis approach, allowing for the categorization of emerging themes and trends without applying formal coding schemes. This process supported the identification of knowledge gaps and informed the recommendations presented in later sections of the article. All steps followed the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) framework and were transparently documented to ensure methodological reproducibility and rigor.

To support the visual representation of key findings and conceptual frameworks discussed throughout this study, several figures were generated using ChatGPT's image-generation capabilities. The content of each figure was conceptually designed and validated by the author based on the reviewed literature, ensuring alignment with the study's analytical framework. The use of generative AI for figure creation was carried out transparently, and its application is disclosed here in accordance with principles of research integrity and responsible use of AI tools in academic contexts.

State of the art

AI's impact on scholarly publishing and peer review

Recent developments in scholarly communication highlight both opportunities and threats introduced by AI. On the one hand, AI tools are being leveraged to streamline the publishing process; for example, software can recommend journals for submission and perform initial quality control checks (e.g. plagiarism or statistical consistency) (Kousha and Thelwall, 2024). Kousha and Thelwall (2024) summarize that AI is useful for tasks like suggesting reviewers or flagging potential issues in manuscripts, but current systems cannot replace the nuanced judgment of human peer reviewers. In fact, they conclude that AI is not yet sufficient to support reviewing and should not replace human reviewers, underscoring the need for human–AI collaboration rather than full automation in peer review.

Concurrently, the scholarly community is grappling with new forms of academic misconduct that exploit the evolving publishing landscape. Stockemer and Reidy (2024) document cases of fake acceptance letters and financial fraud targeting researchers. In one scam, third parties impersonating journal editors sent fake acceptance notifications and then demanded article processing charges from unwitting authors. These frauds were enabled in part by the shift toward open access (with its APC model) and by the ease of generating official-looking communications.

The authors situate these issues against a backdrop of industry change, notably the rapid move to open access publishing and the advent of AI text generators like ChatGPT, which have the potential to prepare essays and other types of scholarly manuscripts automatically. While generative AI can assist in writing, its misuse raises concerns about plagiarism and falsified research, adding urgency to conversations about research integrity. Journals and publishers are now not only battling traditional problems (data fabrication, plagiarism) but also AI-augmented misconduct, requiring new detection strategies and awareness.

The peer review process itself is under pressure in the age of generative AI. Some researchers have provocatively asked whether tools like ChatGPT could serve as peer reviewers. Carabantes et al. (2023) tested ChatGPT as a reviewer and found clear limits to AI-driven reviews, noting that while ChatGPT can produce superficially structured reports, it lacks the expert insight and critical nuance of human reviewers. Early experiments with GPT-4 in a reviewer role show that AI can help summarize and evaluate manuscripts to an extent, but it cannot fully replicate human expertise in judgment, context awareness, and ethical oversight. In one study, GPT-4's reviews were accurate enough to alleviate the burden of reviewing, though not completely and not in all cases, and the authors highlighted risks such as AI's potential bias or value misalignment if used naively.

These findings align with Kankanhalli’s (2024) position that peer review will remain a human-centric practice, even as generative AI assists in routine tasks. Indeed, emerging guidelines argue that AI should be restricted to a supporting role (e.g. helping identify suitable reviewers or triaging submissions) and not be an autonomous arbiter of scientific quality. Ethical concerns have already been voiced: using AI to evaluate others’ work could breach confidentiality and introduce invisible biases.

In summary, the literature portrays a scholarly publishing ecosystem in flux: AI is streamlining workflows and augmenting decision-making, but strong consensus holds that human expertise and ethical standards must remain at the core of authorship and peer review.

Generative AI in academic writing: Opportunities and concerns

One of the most debated disruptions has been the rise of LLMs like ChatGPT in academic writing and research. Generative AI offers undeniable productivity benefits; for instance, it can rapidly produce well-structured text, translate or summarize literature, and serve as a conversational assistant during the writing process. Giray et al. (2025) observe that generative AI is already influencing academic writing and research, improving efficiency in tasks like literature reviews and manuscript drafting. Students and researchers are using tools like ChatGPT as writing tutors, for brainstorming, and even for generating early drafts of papers. These capabilities have prompted some educators to explore how AI might be constructively integrated into academic curricula.

Kell et al. (2025) describe experiences of students who used generative AI while writing their practice-based dissertations. The students found AI helpful for tasks such as refining research questions, generating example text, and proofreading, essentially as a transformative support throughout the dissertation journey. Crucially, however, Kell et al. (2025) emphasize a human-centric approach: the AI's contributions were mediated by faculty mentorship and the students’ own critical inquiry, ensuring that the resulting work remained authentically their own and met scholarly standards. This suggests that when guided by educators and ethical frameworks, AI can augment learning and writing without supplanting the student's intellectual development.

On the other hand, generative AI poses serious challenges for academic integrity and skill development. Giray et al. (2025) caution that along with efficiency gains come challenges related to academic development and integrity. If over-relied upon, AI tools may short-circuit the learning process, and students might bypass developing critical thinking, writing proficiency, or language skills by letting the AI do the heavy lifting. Hossain et al. (2025) surveyed English as Foreign Language students about AI usage in academic writing and found mixed levels of AI literacy and ethical awareness. Many students were intrigued by AI's assistance (e.g. for grammar or idea generation), yet they held uncertainties about plagiarism, proper attribution, and where to draw the line between assistance and cheating.

These findings echo a broader concern in academia: while AI can improve writing, institutions must educate users on the ethical use of AI (e.g. disclosing AI involvement, avoiding verbatim AI-generated content in submissions and critically evaluating AI-provided information). The need for AI literacy education is increasingly recognized. Davy Tsz Kit Ng et al. (2024) examined secondary school curricula for AI literacy and noted a push to teach students not only how AI works, but also how to use AI tools responsibly and judiciously. Such early interventions could help future university students approach generative AI with a more critical and ethical mindset.

Academic publishers and conferences have likewise responded. Some journals now explicitly forbid listing ChatGPT as a co-author and require authors to certify that AI did not distort or fabricate results. Peer reviewers are also developing a keen eye for AI-generated text; recent analyses even identified tell-tale stylistic markers (“buzzword” adjectives, overly polished phrasing) that may signal a review was written with AI assistance. This raises further ethical questions (e.g. is using AI to polish a peer review tantamount to undisclosed help?) and has prompted calls for transparency whenever AI is used in the research or review process.

In response to such concerns, several professional bodies and universities have begun formulating guidelines for AI in scholarly work, aiming to delineate acceptable use cases and ensure proper attribution. In sum, generative AI represents a double-edged sword in academia. It can democratize and accelerate the writing process, potentially leveling the field for non-native English writers or novice researchers, and it can free scholars to focus on higher-level analytic work (Figure 2).

Figure 2. Applications of artificial intelligence in scientific research.

While these applications reveal the substantial promise of generative AI to support scholarly communication and knowledge production, they also raise important questions regarding authorship, originality, and scholarly integrity. These concerns warrant careful examination, particularly in educational contexts where ethical literacy may still be developing.

Yet without proper oversight, generative AI risks undermining the very scientific tradition of originality and critical rigor. This tension underscores much of the current literature. As one review put it, generative AI's role must be balanced such that we amplify human intellect, not automate it away. Striking that balance will require ongoing dialogue, updated academic integrity policies, and above all, education in AI literacy for both students and faculty. The coming years will likely see a normalization of AI as one more tool in the scholar's repertoire, akin to a grammar checker or reference manager, but governed by new norms that ensure the human researcher remains accountable for the content and quality of their work.

AI implementation in academic library services

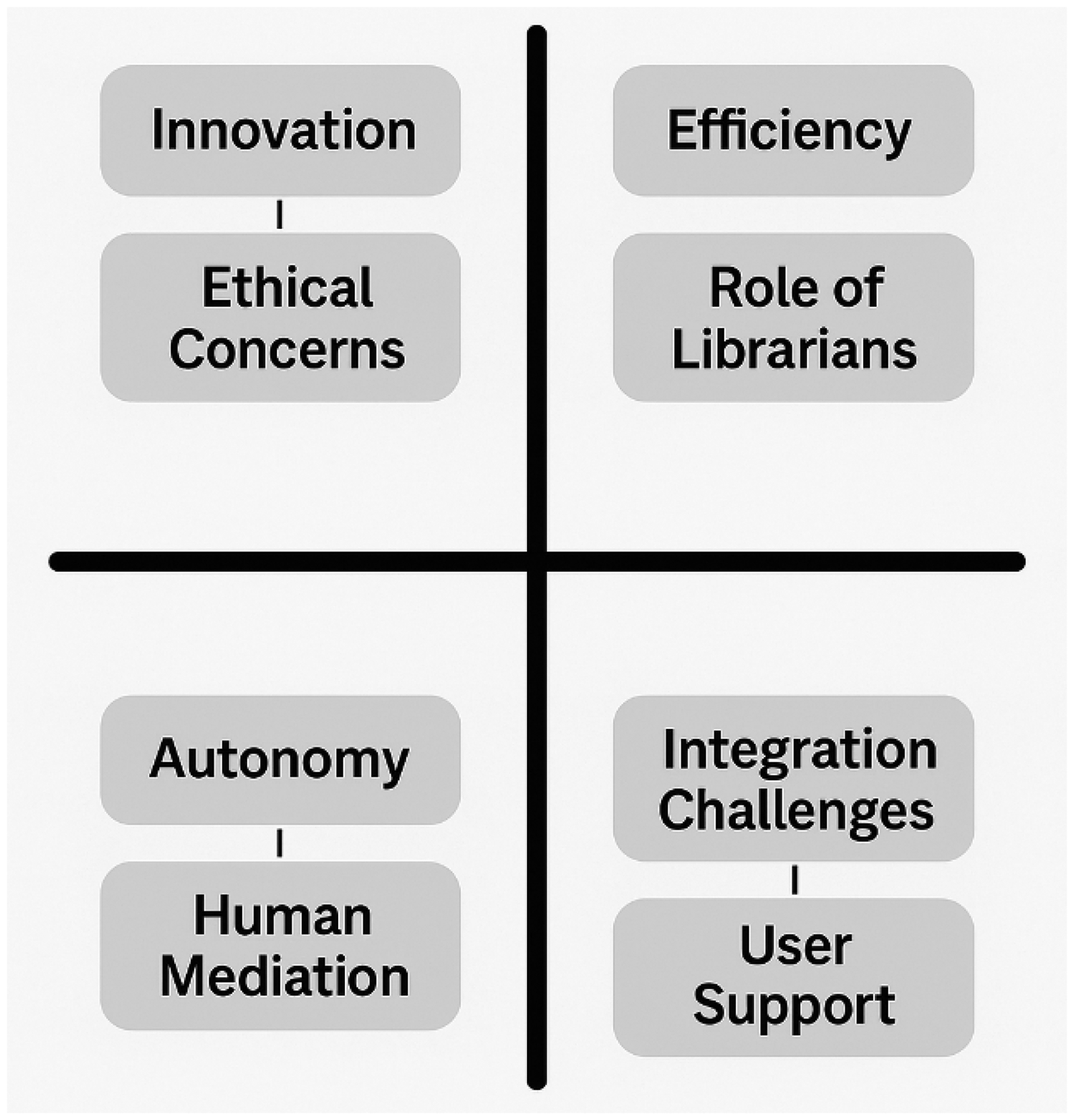

The implementation of AI across academic libraries can be conceptualized as a continuum, spanning from exploratory experimentation to fully institutionalized practices. As shown in Figures 3–5, this trajectory reflects varying degrees of maturity, preparedness, and strategic alignment, which merit deeper discussion.

Figure 3. Artificial intelligence integration continuum in academic libraries.

Figure 4. Ethical and practical challenges of AI in scientific research and academic libraries.

Figure 5. Artificial intelligence ethical and transformation tensions in academic libraries.

Academic libraries have emerged as key sites for AI innovation, often leading the way in practical deployments of AI to enhance information services. A surge of literature in the past three years examines how libraries are integrating AI into their operations, from chatbot reference assistants to intelligent search systems and beyond. In fact, the volume of research on AI in libraries itself has grown markedly. A systematic review by Concha et al. (2024) found a sharp rise in publications on library AI applications starting in 2022. This corresponds with many national governments releasing AI strategies or policies around that time, suggesting that broader technological trends have catalyzed interest in library-focused AI research.

Early implementations showcase AI-driven services designed to improve user experience and operational efficiency. For instance, libraries have adopted chatbots (conversational AI agents) to handle routine patron queries, assist with catalog searches, or provide 24/7 reference help. Aboelmaged et al. (2025) conducted an integrative review of library chatbots, noting that such systems are replacing some of the library services that humans conventionally perform and are rapidly evolving in sophistication. Their review found five dominant themes in the literature:

the technological evolution of chatbots in libraries; factors driving chatbot adoption; user experience and satisfaction; the surge in chatbot use during the COVID-19 pandemic (when remote services became vital); and ongoing challenges.

Users generally appreciate the immediate responses and after-hours help, although studies show mixed results on whether chatbots can fully resolve complex inquiries. Challenges include limitations in understanding nuanced questions, the need for constant updating of chatbot knowledge bases, and occasional user frustration. Nonetheless, the trajectory is clear: library chatbots are maturing from simple FAQ responders to more sophisticated virtual assistants.

Beyond chatbots, AI is being woven into diverse library functions. Zondi et al. (2024) present a critical review of AI implementations in academic libraries, highlighting use cases such as automated cataloging/classification, predictive analytics for collection management, and recommender systems. A prominent trend is the shift from traditional resource management models to intelligent, personalized, and proactive information service models, enabled by advances in machine learning and big data analytics. For example, AI-powered search platforms can understand natural-language queries and fetch results based on semantic relevance rather than exact keyword matches. Zhang et al. (2025) describe how LLMs like ChatGPT have fundamentally reshaped academic users' information retrieval methods, a point also reinforced by Hamam and Fatouth (2023), who offer a comprehensive analysis of ChatGPT's scientific research capabilities. Their case study of Tsinghua University Library illustrates libraries’ proactive approach: Tsinghua's AI + Workshop program now trains users in AI-based academic tools. Similarly, the Chinese Academy of Sciences launched ChatLibrary, an AI-assisted platform integrating Q&A, smart recommendations, and automated content analysis. These examples show libraries not only implementing AI behind the scenes but also openly offering AI capabilities to patrons. Zhang et al. (2025) note that while generative AI can handle many front-end queries, libraries remain irreplaceable in systematic knowledge management and discipline-specific services.

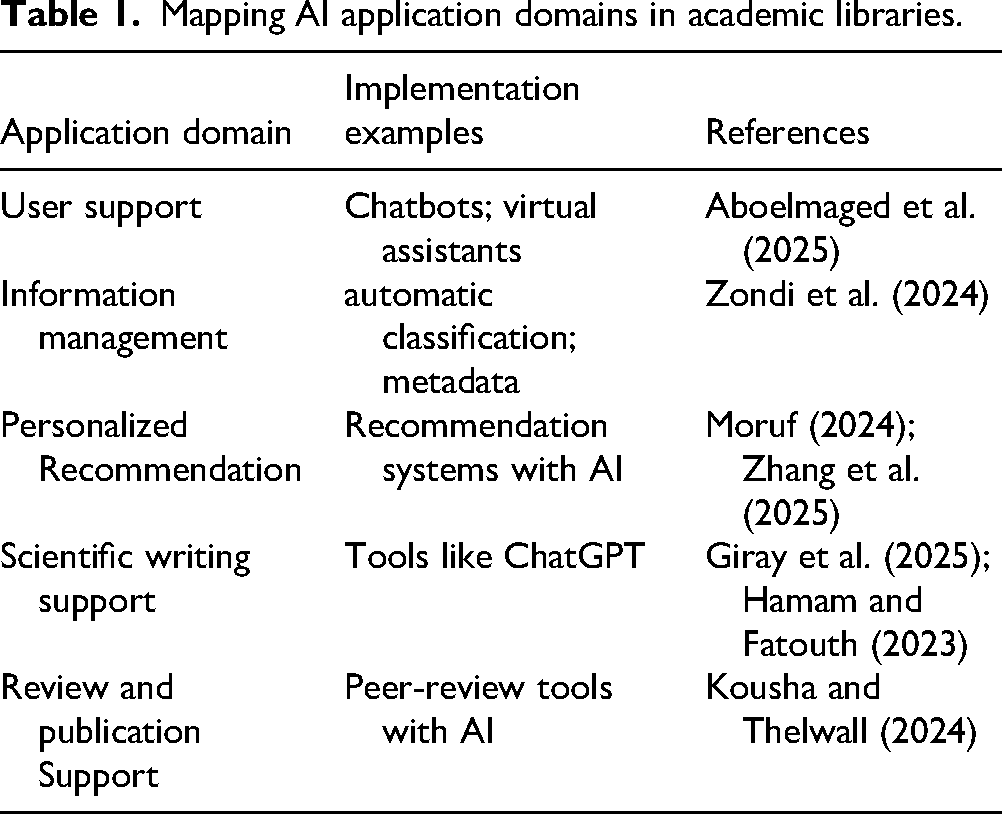

Despite enthusiasm for AI's benefits, the literature does not shy away from discussing barriers and challenges. Academic libraries, particularly in developing regions, face hurdles such as limited technical infrastructure, scarce funding, and staff shortages. Zondi et al. (2024) observe that the integration of AI faces hurdles like inadequate hardware, insufficient training for librarians, and even employment concerns. Successful AI projects in libraries often hinge on change management (Table 1).

Table 1. Mapping AI application domains in academic libraries.

The review by Zondi et al. (2024) concludes that AI heralds an era of enhanced service delivery for libraries, albeit accompanied by challenges. Moruf (2024) reviewed emerging AI tools for academic research support, listing tools such as Jenni AI and ChatPDF that libraries might incorporate. These tools promise to enhance research and writing but raise questions about reliability and ethics. A human-in-the-loop approach is recommended to maintain quality and accountability.

Evaluating the effectiveness of AI-based services has become another scholarly focus. Zhang et al. (2025) applied an Analytic Hierarchy Process methodology to assess discipline-specific information services in a digital-intelligence environment. Their evaluation framework included factors like information quality, usability, and user experience. The Analytic Hierarchy Process weighting revealed that tool application and information quality were the most influential factors for user satisfaction. While services were deemed satisfactory, the authors recommended precision-driven optimization and more feedback loops. This reflects a maturing field concerned not only with experimentation but with evidence-based refinement.
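To make the weighting step concrete, the sketch below shows how an Analytic Hierarchy Process derives criterion weights from a pairwise comparison matrix and checks the judgments for consistency. The criterion names come from the summary above, but the comparison values are invented for illustration and are not those of Zhang et al. (2025). The row geometric mean used here is a common approximation of the principal-eigenvector weights, not necessarily the method the authors applied.

```python
import math

# Criterion names taken from the text; all comparison values below are
# illustrative assumptions, not data from the reviewed study.
CRITERIA = ["tool application", "information quality",
            "usability", "user experience"]

# PAIRWISE[i][j] states how much more important criterion i is than j
# on Saaty's 1-9 scale; the matrix is reciprocal: a[j][i] = 1 / a[i][j].
PAIRWISE = [
    [1.0, 2.0, 3.0, 4.0],
    [1 / 2, 1.0, 2.0, 3.0],
    [1 / 3, 1 / 2, 1.0, 2.0],
    [1 / 4, 1 / 3, 1 / 2, 1.0],
]

# Saaty's random consistency index for small matrix sizes.
RANDOM_INDEX = {3: 0.58, 4: 0.90, 5: 1.12}


def ahp_weights(matrix):
    """Approximate priority weights by normalizing row geometric means."""
    geo_means = [math.prod(row) ** (1.0 / len(row)) for row in matrix]
    total = sum(geo_means)
    return [g / total for g in geo_means]


def consistency_ratio(matrix, weights):
    """Estimate lambda_max, then compute CI / RI; below 0.1 is the usual bar."""
    n = len(matrix)
    weighted_sums = [sum(a * w for a, w in zip(row, weights)) for row in matrix]
    lambda_max = sum(ws / w for ws, w in zip(weighted_sums, weights)) / n
    consistency_index = (lambda_max - n) / (n - 1)
    return consistency_index / RANDOM_INDEX[n]


weights = ahp_weights(PAIRWISE)
cr = consistency_ratio(PAIRWISE, weights)
for name, weight in zip(CRITERIA, weights):
    print(f"{name}: {weight:.3f}")
print(f"consistency ratio: {cr:.3f}")
```

With these invented judgments, the two criteria ranked highest are tool application and information quality, mirroring the ordering reported above. The numerical weights themselves carry no empirical meaning.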

In summary, academic libraries are embracing AI as a means to innovate and improve services, from intelligent search and recommender systems to interactive chatbots and automated tools. The literature portrays libraries as spaces of innovation, where human-centered values guide AI integration to ensure meaningful, ethical, and equitable services.

Librarians’ roles, skills, and AI literacy

Implementing AI in libraries inevitably transforms the roles and required competencies of library professionals. Far from rendering librarians obsolete, the literature suggests that AI can augment the librarian's expertise, but it does necessitate upskilling and role evolution. Several studies examine how librarians are adapting to and adopting AI, often through the theoretical lens of innovation diffusion. Harisanty et al. (2025) conducted an explorative study on librarian behavior toward AI, framed by Rogers’ Diffusion of Innovations theory. They observed that librarians fall into identifiable adopter categories: a few innovators and early adopters enthusiastically experiment with AI tools (for instance, tech-savvy librarians building their own mini-chatbots or training machine learning models for collections), while a larger segment cautiously observes, and some laggards remain skeptical or resistant. Perceived usefulness and ease of use (analogous to Technology Acceptance Model factors) strongly influenced adoption willingness, as did peer influence and organizational support. A key finding was that many librarians are curious about AI but feel they lack the technical knowledge to fully engage, highlighting a need for professional development in this area. Harisanty et al. (2025) conclude that targeted training and success stories can help move librarians through the adoption curve, turning more of the skeptics into informed users of AI in daily practice.

One area where librarians have long played a crucial role is in promoting tools for information management and research productivity. Bapte and Bejalwar (2022) point out that even prior to modern AI, librarians were champions of technologies like reference management software (e.g. EndNote, Zotero, Mendeley). Their review, “Promoting the use of reference management tools: An opportunity for librarians to promote scientific tradition,” is instructive in the present context. They found that although such tools greatly enhance citation management and writing efficiency, many researchers under-utilize them, leaving features like metadata retrieval, group collaboration, or manuscript formatting unexploited. Librarians, being information experts, were seen as key to bridging this gap. By training users in these tools and integrating them into library instruction, librarians reinforce the scientific tradition of proper citation and reduce the clerical burden on researchers.

The same logic now extends to AI tools. Just as librarians taught database searching or citation management, they are increasingly called to teach AI literacy: how to formulate good prompts for a research chatbot, how to verify AI-provided information, how to use AI-based translation or transcription services, etc. Mannheimer et al. (2024) underscore this in their review “Responsible AI practice in libraries and archives.” They observed that many libraries are developing workshops or guides on using AI ethically, for example, advising students on the dos and don’ts of ChatGPT in coursework, or guiding faculty on experimenting with AI for literature reviews while avoiding breaches of confidentiality or bias. This proactive stance positions librarians as AI consultants within their institutions, consistent with their broader mission of information literacy in whatever form it takes.

To fulfill these new responsibilities, librarians themselves must attain a level of AI fluency. Several professional organizations have initiated discussions on core AI competencies for information professionals. These include understanding the basics of how AI/machine learning algorithms work, awareness of data ethics and bias, ability to critically evaluate AI tools/vendors, and skills to configure or customize AI-based library systems. There are also calls for library schools to update curricula, so that new librarians enter the field with exposure to AI concepts and practical experience with relevant technologies (such as using Python for data analysis, or implementing a simple chatbot). Continuing education is equally emphasized for current practitioners. Phinney et al. (2024) note, in the context of health sciences librarians, that continuous upskilling (through workshops, online courses, etc.) is vital as the information landscape rapidly evolves. The diffusion of innovations study by Harisanty et al. (2025) also indicated that librarians who had participated in digital skills training were far more likely to experiment with AI, highlighting the payoff of investing in professional development.

Crucially, none of the literature suggests that librarians’ traditional ethos is made irrelevant by AI; rather, it is augmented. For example, while an AI discovery system might automatically generate a bibliography for a student's topic, librarians are needed to teach that student how to interpret and refine the results, how to distinguish authoritative sources, and how to integrate those sources into their research ethically. This resonates with Kaplan’s (2022) findings on information literacy self-efficacy: in contexts (like Iran) where students were not adequately prepared by school libraries, public libraries stepped in to cultivate those lifelong learning skills. Similarly, academic librarians now extend their literacy mandate to AI literacy, ensuring the academic community can use these powerful tools in a savvy and principled way.

Ethical and policy considerations for AI in libraries

As libraries integrate AI, they must navigate a complex landscape of ethical issues, from data privacy to algorithmic bias to transparency. Responsible AI use is a recurring theme in the literature, reflecting broader societal concerns but also some nuances specific to library and knowledge environments. Mannheimer et al. (2024) provide a comprehensive literature review on this topic, examining how libraries and archives are approaching responsible AI. They found that library discourse often aligns with core library values (privacy, intellectual freedom, and equity of access) when evaluating AI systems. For instance, if a library deploys a recommendation algorithm, is it transparent about how the algorithm works and does it protect user reading history? If a chatbot logs user queries, how is that data stored and who has access? These questions are driving libraries to formulate their own AI usage policies and ethical guidelines. In some cases, libraries have chosen not to implement certain AI features because they conflict with patron privacy (e.g. avoiding cloud-based AI services that might harvest user data).

Broader research on AI ethics offers frameworks that libraries can adapt. Laine et al. (2024) conducted a systematic review of ethics-based AI auditing across sectors, focusing on how ethical principles are conceptualized and operationalized. They identify key principles, such as transparency, justice/fairness, non-maleficence, responsibility, and privacy, and discuss how different stakeholders (developers, users, regulators) prioritize these. For libraries, these principles translate into practical steps: transparency might mean openly documenting where and how AI is used in library services; fairness might involve regularly checking an AI recommendation system for inadvertent bias against under-represented subjects or authors; responsibility could entail having humans review AI decisions, especially in sensitive contexts such as deciding whether to withdraw content. Libraries are well positioned to implement AI auditing given their experience with information governance and assessment. Some academic libraries have even formed ethics committees or task forces on AI to review proposed projects and ensure alignment with values and regulations.
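
The fairness check described above can be illustrated with a minimal sketch. It assumes a hypothetical audit in which each catalog item and each recommended item carries a group label (for example, language of publication), and it flags groups whose share of recommendations falls well below their share of the catalog. The function name, the labels, and the 0.5 tolerance are illustrative assumptions, not a method taken from Laine et al. (2024).

```python
from collections import Counter


def exposure_gaps(catalog_groups, recommended_groups, tolerance=0.5):
    """Flag groups whose recommendation share is below tolerance * catalog share.

    Both arguments are lists of group labels, one label per item.
    The tolerance value is an illustrative assumption, not an established standard.
    """
    catalog_counts = Counter(catalog_groups)
    rec_counts = Counter(recommended_groups)
    total_catalog = len(catalog_groups)
    total_rec = len(recommended_groups)
    flagged = {}
    for group, count in catalog_counts.items():
        catalog_share = count / total_catalog
        rec_share = rec_counts.get(group, 0) / total_rec if total_rec else 0.0
        if rec_share < tolerance * catalog_share:
            flagged[group] = (catalog_share, rec_share)
    return flagged


# Hypothetical data: 30% of the catalog but only 5% of recommendations.
catalog = ["english"] * 70 + ["indigenous_language"] * 30
recommended = ["english"] * 95 + ["indigenous_language"] * 5
flags = exposure_gaps(catalog, recommended)
```

A flagged group is only a prompt for human review; whether a gap reflects bias or legitimate demand is a judgment the audit committee would still need to make.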

A concrete ethical issue in libraries is AI-based user profiling. Modern knowledge management systems, especially in big libraries or consortia, can use AI to analyze user data (search queries, borrowing history, etc.) to personalize services. While personalization can improve user experience, it also raises flags about surveillance and autonomy. Njiru et al. (2025) critically reviewed such AI-driven user profiling and noted prominent challenges. These include the risk of reinforcing biases (e.g. if an algorithm only ever shows users items similar to what they used before, it can create an information bubble), the potential infringement on user privacy and anonymity, and lack of transparency if users don’t know profiling is happening. The authors discuss mitigation strategies like anonymizing data, allowing users to opt out of profiling, and ensuring algorithms are periodically evaluated for bias or error. They advocate that any knowledge organization implementing AI profiling should do so under a framework of ethical oversight, likely involving policy guidelines and possibly external audits.
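
As a rough illustration of two of these mitigation strategies, the sketch below honors opt-outs and replaces patron identifiers with keyed hashes before any profiling takes place. The field names (`patron_id`, `subject`) and the function names are hypothetical. Keyed hashing is strictly pseudonymization rather than full anonymization, since whoever holds the key can re-link tokens to patrons.

```python
import hashlib
import hmac


def pseudonymize(patron_id: str, secret_key: bytes) -> str:
    """Replace a patron identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(secret_key, patron_id.encode("utf-8"), hashlib.sha256).hexdigest()


def prepare_for_profiling(records, opted_out, secret_key):
    """Drop records of opted-out patrons and strip raw identifiers from the rest."""
    prepared = []
    for record in records:
        if record["patron_id"] in opted_out:
            continue  # respect the patron's opt-out: the record never reaches the profiler
        prepared.append({
            "patron_token": pseudonymize(record["patron_id"], secret_key),
            "subject": record["subject"],
        })
    return prepared


# Hypothetical loan records and opt-out list.
loans = [
    {"patron_id": "p001", "subject": "ethics"},
    {"patron_id": "p002", "subject": "chemistry"},
    {"patron_id": "p001", "subject": "law"},
]
safe = prepare_for_profiling(loans, opted_out={"p002"}, secret_key=b"example-key")
```

In practice the key would be stored separately from the profiling system and rotated according to the library's retention policy.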

Finally, national and international policies are beginning to influence library AI practices. Many countries have introduced AI laws or strategy documents, as noted in Concha et al.’s (2024) review. For example, the European Union's proposed AI Act will regulate high-risk AI systems, potentially including those used in education or public services, which could cover some library applications. Libraries, as institutions often publicly funded and mission-driven, may eventually be held to higher standards of algorithmic accountability. Professional bodies like the IFLA have preemptively issued statements and guidelines; the IFLA Statement on Libraries and Artificial Intelligence (2020) is cited by Concha et al. (2024) as outlining uses of AI in libraries and urging an approach consistent with human rights and library ethics.

We also see a push for open science principles to permeate AI development (e.g. using open training data and open-source algorithms), which aligns with library advocacy for openness. Guevara-Pezoa (2023), in a bibliometric review, links open science to innovation, suggesting that transparency and collaboration (hallmarks of open science) accelerate innovation. Libraries can exemplify this by favoring AI systems that are auditable and community-developed, rather than proprietary black boxes.

In conclusion, the literature portrays the adoption of AI in libraries as a microcosm of the larger AI ethics dialogue, but with the library's patron-centric perspective in focus. Ethical AI in libraries is not just about avoiding harm; it is about actively upholding the trust that users place in these public institutions. The state of the art includes not only technological advances and user studies, but also these normative discussions. As Heyder et al. (2023) argue, we need an ethical management of human–AI interaction that spans from theory to practice. For libraries, this means creating policies, educating staff and users, and choosing AI deployments carefully so that they enhance rather than compromise the library's mission. The very fact that so many library and information science researchers are publishing on AI, from technical evaluations to ethical reviews, shows a profession actively engaging with change. Libraries are leveraging AI to reinvent services and extend their reach, while simultaneously carving out a leadership role in advocating for responsible AI that serves the public good in education and research.

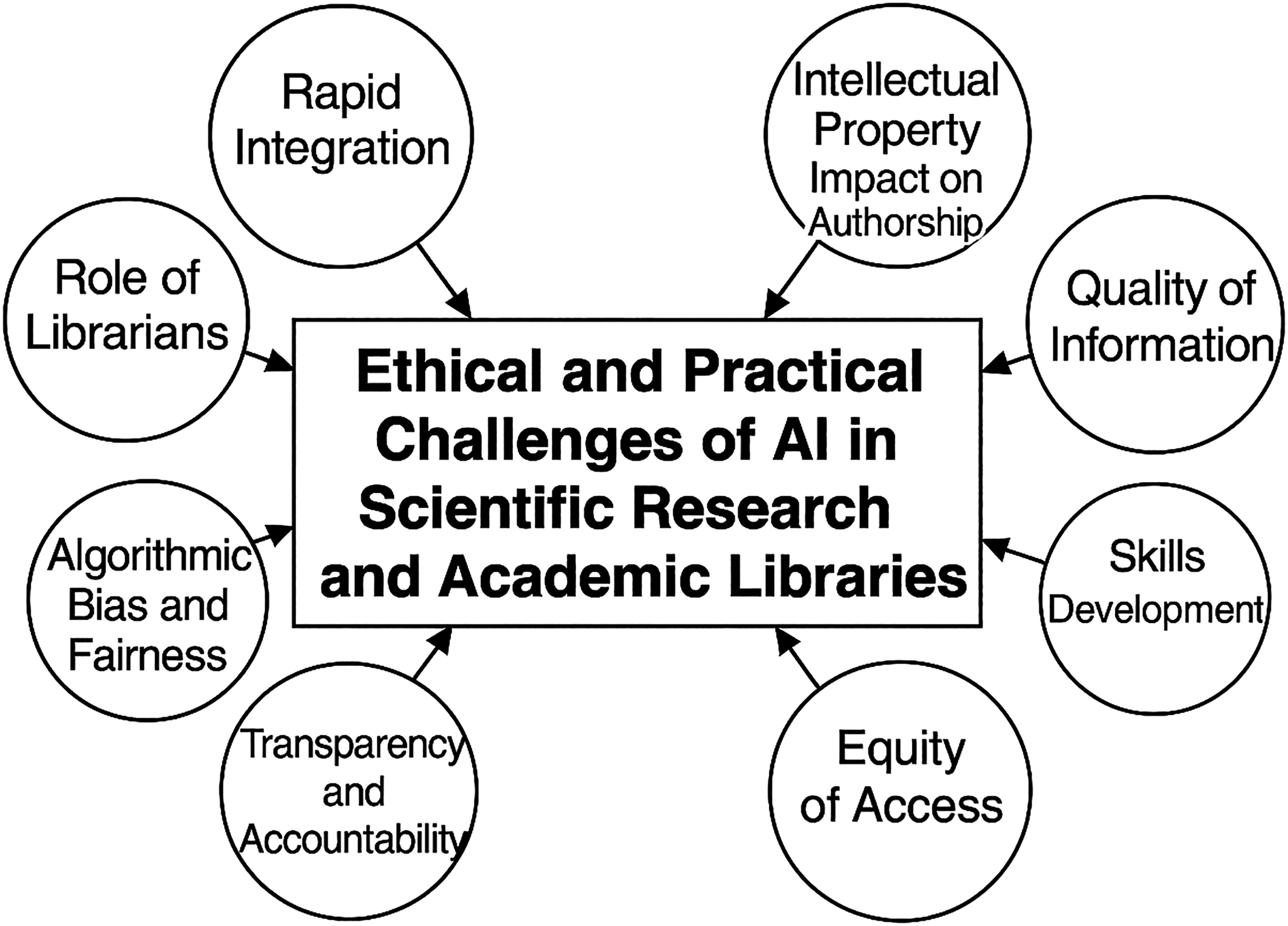

The ethical and operational risks associated with AI adoption in libraries can be both systemic and context-specific. Figure 3 offers a synthesized overview of these challenges, emphasizing the intersections between technological potential, regulatory uncertainty, and professional responsibility.

Results

The reviewed literature reveals a rapidly evolving intersection of AI with academic libraries, scholarly communication, and education. A clear trend is the growing implementation of AI technologies in library operations and services. Academic libraries are experimenting with AI-driven tools to enhance efficiency in information services and customer support. They are also no longer simply information providers but are being reimagined as dynamic spaces for digital pedagogy, research collaboration, and intellectual empowerment (O’Donnell and Anderson, 2022). For instance, Zondi et al. (2024) report that AI has been integrated into various academic library services to streamline operations and improve service quality. Their comprehensive review of library practice finds that AI can augment routine tasks and user interactions, heralding more efficient service delivery. However, they also identify significant challenges such as shortages of AI expertise, insufficient infrastructure, limited funding, and even staff anxieties about job security that complicate AI adoption (Zondi et al., 2024). Similarly, a systematic review by Concha et al. (2024) shows a surge in research on library AI applications since 2019, reflecting heightened global interest as institutions publish new AI strategies and policies. This bibliometric analysis notes that studies on AI in libraries have expanded across regions and languages in recent years, indicating that academic libraries worldwide are recognizing AI's potential and actively exploring its use (Concha et al., 2024). In developing contexts, the promise of AI is tempered by practical constraints: Zondi et al. (2024) observe that in countries like South Africa, success with library AI projects hinges on careful planning, strong management support, and ongoing promotion to overcome resource limitations.
Overall, the literature suggests that while AI offers academic libraries powerful new capabilities, its implementation is uneven and requires deliberate effort to navigate technical and organizational hurdles.

A prominent application of AI in libraries is the deployment of conversational agents or chatbots to assist users. Aboelmaged et al. (2025) provide an integrative review of library chatbots, showing that these systems are increasingly used to handle user queries, perform virtual reference interviews, and deliver information in a conversational manner. They found that library chatbots have been especially beneficial for providing uninterrupted remote support; for example, during the COVID-19 pandemic when physical access to libraries was limited, AI chatbots ensured that patrons could still obtain help and information at any time (Aboelmaged et al., 2025). The evolution of chatbot technology in libraries has been rapid: early systems were quite basic, but newer AI-driven chatbots (sometimes powered by an LLM such as ChatGPT) can understand natural language questions and supply relevant answers or guidance, effectively handling a portion of the reference workload that was once exclusively managed by librarians.

According to Aboelmaged et al. (2025), literature on library chatbots has concentrated on several themes, including the drivers for chatbot adoption, user experiences and satisfaction, increased usage during pandemic-related library closures, and various technical and ethical challenges. While this area of research is still in its infancy, it shows a clear trajectory of growth. Giray et al. (2025) further illuminate the role of generative AI in academic writing support and reference services. They argue that generative AI tools are already influencing how students and researchers approach writing tasks and library interactions, yielding efficiency gains in tasks like drafting or language translation.

At the same time, these authors caution that such tools raise concerns about academic skill development and integrity. The ease with which generative AI can produce fluent text might tempt users to bypass the deeper learning that comes from the writing process, and it poses new challenges for libraries in advising users on acceptable and ethical use (Giray et al., 2025). Overall, the introduction of AI chatbots and writing assistants in the library context has demonstrated clear benefits, such as 24/7 availability, personalized assistance, and faster service, but also highlights ongoing concerns around accuracy, potential AI hallucinations, and the need for user education to ensure these tools are used appropriately.

Beyond chatbots, libraries are leveraging AI for a range of specialized information services and decision support. Zhang et al. (2025) explore the use of advanced analytical techniques (specifically an Analytic Hierarchy Process model) to evaluate discipline-specific information services in academic libraries. Their work, situated in an era of digital intelligence, exemplifies how libraries can apply AI and quantitative decision methods to improve service planning and resource allocation. By incorporating AI-driven evaluation, libraries are able to better assess user needs and the performance of tailored services for different academic disciplines (Zhang et al., 2025). Other emerging applications include AI-powered recommender systems for literature or resources, automated indexing and cataloging systems using machine learning, and computer vision for managing digital collections.
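
To make the Analytic Hierarchy Process concrete, the following minimal sketch derives priority weights from a pairwise comparison matrix and checks the consistency of the judgments. The three criteria and the comparison values are hypothetical and are not taken from Zhang et al. (2025). The row geometric-mean method is used here as a common approximation of the principal eigenvector, and the random index values are Saaty's standard ones.

```python
import math

# Saaty's random consistency index for matrix sizes 1 to 5.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12}


def ahp_weights(matrix):
    """Approximate AHP priority weights with the row geometric-mean method."""
    n = len(matrix)
    geo_means = [math.prod(row) ** (1.0 / n) for row in matrix]
    total = sum(geo_means)
    return [g / total for g in geo_means]


def consistency_ratio(matrix, weights):
    """Return CR = CI / RI; values below about 0.1 are conventionally acceptable."""
    n = len(matrix)
    if n <= 2:
        return 0.0  # 1x1 and 2x2 reciprocal matrices are always consistent
    # Estimate lambda_max as the mean of (A w)_i / w_i.
    aw = [sum(matrix[i][j] * weights[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(aw[i] / weights[i] for i in range(n)) / n
    ci = (lambda_max - n) / (n - 1)
    return ci / RANDOM_INDEX[n]


# Hypothetical criteria for a discipline-specific service; entry [i][j] states
# how much more important criterion i is than criterion j (Saaty's 1 to 9 scale).
criteria = ["relevance", "timeliness", "usability"]
pairwise = [
    [1.0, 3.0, 5.0],
    [1 / 3, 1.0, 2.0],
    [1 / 5, 1 / 2, 1.0],
]
weights = ahp_weights(pairwise)
cr = consistency_ratio(pairwise, weights)
```

With these illustrative judgments, relevance receives the largest weight and the consistency ratio stays well under the conventional 0.1 threshold, so the comparisons would be considered coherent enough to use.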

Moruf (2024) provides a broad review of such emerging AI tools in libraries and their prospects for academic research. According to this author, modern libraries are beginning to embrace tools like natural language processing for searching and summarizing literature, machine learning algorithms for trend analysis in bibliometrics, and generative AI for tasks such as proofreading, translating, or even drafting sections of research papers. These technologies can significantly simplify and accelerate research processes, making it easier for students and scholars to find and use information. For example, AI tools now assist with tasks ranging from automatically checking grammar and formatting references to detecting plagiarism or suggesting relevant articles based on a manuscript draft (Moruf, 2024). However, with these opportunities come critical challenges. Many authors note that responsible integration of AI is essential to maintain academic quality and integrity.

Moruf (2024) emphasizes ethical considerations in using generative AI for research and writing, urging that libraries develop guidelines for fair use of AI and instruct users on the limitations of these tools. Indeed, a recurring theme across the literature is that libraries and information professionals must act as stewards of ethical AI usage, ensuring that technologies are leveraged to assist learning and research without undermining trust or scholarly standards.

A significant body of work also addresses the role of librarians and educators in the age of AI, especially concerning information literacy and AI literacy. As AI becomes embedded in information systems, both library staff and patrons require new skills to navigate this landscape. Harisanty et al. (2025) investigate librarian behavior toward AI and find a spectrum of attitudes and adoption levels among library professionals. Using Rogers’ diffusion of innovations theory, they note that some librarians emerge as early adopters and champions of AI tools, experimenting with innovations to improve services, while others are more cautious or even resistant, often owing to concerns about reliability, ethical issues, or lack of training. Key factors influencing librarians’ willingness to use AI include perceived usefulness of the technology, the ease of learning to use it, and the support provided by their institutions (Harisanty et al., 2025).

This suggests that successful implementation of AI in libraries is not just a technical matter but also a human one: professional development, peer mentoring, and clear communication about the benefits of AI can encourage more librarians to engage positively with these tools. In line with this, Bapte and Bejalwar (2022) illustrate how librarians are already leading the way in promoting digital tools to uphold scholarly best practices. They discuss the promotion of reference management software (e.g. Zotero, EndNote, Mendeley) as an opportunity for librarians to reinforce the scientific tradition of proper citation and research organization. By training and encouraging users to adopt such tools, librarians help researchers manage information more effectively and avoid plagiarism (Bapte and Bejalwar, 2022). Although reference managers are not AI per se, this example highlights librarians’ pedagogical role in bridging technology and scholarship, a role now extending to AI literacy as well.

Indeed, AI literacy has become a critical extension of information literacy in the educational sphere, and the literature documents rising efforts to integrate AI literacy into curricula. Ng et al. (2024) provide a review of AI literacy education initiatives at the secondary school level, emphasizing that today's students must acquire a foundational understanding of AI technologies, their capabilities, and their limitations. They define AI literacy as a set of competencies enabling individuals to critically evaluate AI outputs, communicate and collaborate with AI systems, and use AI tools effectively and responsibly. In their review, Ng et al. (2024) find that educators around the world, from Hong Kong to Finland to the United States, are beginning to introduce AI concepts in classrooms and to develop pedagogical strategies for teaching students how AI works and how it should (and should not) be used in learning.

However, integrating AI literacy into standard curricula remains challenging, as teachers themselves often need guidance and training to confidently handle AI topics. The use and misuse of AI by students (such as using ChatGPT to do homework) has left many schools scrambling to develop policies and learning modules that channel AI use in positive directions rather than banning it outright. Hossain et al. (2025) add insight from the perspective of higher education students, particularly those writing in a second language. They explore how English as a Foreign Language students perceive AI tools in academic writing. Many such students see AI-based writing assistants (for grammar checking, text generation or translation) as valuable aids to overcoming language barriers and improving their academic English.

Hossain et al. (2025) note that familiarity with AI writing tools is growing among students, but knowledge about the proper and ethical use of these tools is lagging. Notably, students express mixed feelings on the ethics: some view AI suggestions as just another form of help (akin to an advanced spellchecker), while others worry that relying on AI might constitute a form of cheating or could hinder their own skill development. This underscores a need for clear guidelines and education on AI use in academic work. In related contexts, public and academic libraries are seen as pivotal in continuing to foster information literacy and lifelong learning skills.

Kaplan (2022), for example, highlights how public library programs can boost individuals’ self-efficacy in information literacy, especially in environments where formal education falls short in preparing learners for the information demands of contemporary society. Although Kaplan’s (2022) commentary focuses on Iranian public libraries and traditional information literacy, the underlying message resonates in the AI era: libraries are essential centers for learning, where people can develop the critical thinking and evaluative skills needed to navigate increasingly AI-mediated information landscapes.

Artificial intelligence is also making significant inroads into scholarly communication and publishing, and the reviewed literature reflects both enthusiasm and caution in this domain. Kousha and Thelwall (2024) present a comprehensive overview of how AI is being used to support academic publishing and peer review processes. They find that a variety of software tools, often powered by AI, have been developed to automate or assist with tasks such as suggesting journals for manuscript submission, checking incoming submissions for plagiarism or compliance with formatting guidelines, selecting potential reviewers for a paper or grant proposal, and even generating initial review comments or scoring manuscripts.

According to their review, some of these applications have proven quite useful: for example, algorithmic matching of reviewers to papers is already employed by many journals to handle high submission volumes, and it tends to expedite the reviewer assignment process (Kousha and Thelwall, 2024). Artificial intelligence-driven quality checks (like automatic flagging of methodological flaws, missing sections, or language issues in a submitted paper) can help editors identify problematic manuscripts early. However, fully automated peer review remains out of reach.

Kousha and Thelwall (2024) stress that, despite rapid progress, AI tools have not yet demonstrated the nuanced judgment required for substantive content evaluation in peer review. Tasks like assessing the soundness of a study's conclusions or the significance of its contribution are complex and context-dependent, and current AI lacks the reliability and depth of understanding to replace human reviewers. Another study by Carabantes et al. (2023) provides empirical evidence on this point by testing ChatGPT's performance as a surrogate peer reviewer. They had generative AI models (GPT-3.5 and GPT-4, through interfaces like ChatGPT and Bing Chat) produce reviews for already-published journal articles and then compared these AI-generated reviews with the original human peer review reports. The AI was indeed able to produce detailed review texts, which on the surface could pass as plausible peer reviews. Yet, as Carabantes et al. (2023) document, these AI reviews often contained hallucinations (fabricated content or references) and missed context that the human reviewers had noted. One major limitation observed was the context window constraint of the models: they could only consider a certain amount of text at once, which made it difficult for the AI to reliably handle long and complex manuscripts or to recall details across an entire paper.

Thus, while the experiment showed some potential for AI assistance (especially in providing quick summaries or catching obvious issues), it ultimately underscored that current generative AI cannot be fully trusted to deliver expert-quality peer reviews without human oversight. In a related commentary, Kankanhalli (2024) reflects on how the peer review system might evolve in the age of generative AI. There is recognition that AI could help alleviate some burdens on reviewers (for instance, by automating checks of references, statistical errors, or writing clarity) and perhaps make the review process more efficient. However, Kankanhalli (2024) also warns of new challenges: journals and conferences may need to adapt their policies to clarify the acceptable use of AI for reviewers and authors, to ensure transparency and maintain fairness. For example, if an author uses AI to polish their writing, or a reviewer uses AI to draft portions of their review, those practices should be disclosed to uphold the integrity of the academic communication process.

Concerns about academic integrity, fraud, and ethical use of AI surface frequently in the literature, indicating that the rise of AI is intertwined with broader issues of research ethics. Stockemer and Reidy (2024) describe cases of blatant academic fraud that, while not caused by AI, form part of the challenging new publishing environment in which AI exists. They report on a disturbing phenomenon of fake acceptance letters and financial scams in the context of academic journal publishing. In one scheme, unscrupulous third parties generated counterfeit journal acceptance letters (for papers that were never actually submitted or peer-reviewed) and then tricked authors, often early-career researchers eager to publish, into paying bogus article processing charges. This kind of misconduct, as Stockemer and Reidy (2024) document, exploits the pressures of the open access publishing model and the complexities of online workflows. While AI is not directly implicated in these cases, the authors mention that the overall scholarly landscape is in flux owing to factors like the open access movement and the advent of AI-driven tools (Stockemer and Reidy, 2024). The implication is that the community must remain vigilant: just as we address traditional fraud, we also need strategies for new AI-related threats such as deepfake data, AI-generated plagiarism, or automated paper mills.

Mannheimer et al. (2024) directly tackle the notion of responsible AI practice in libraries and archives. In their literature review, they note that information organizations are drafting guidelines to ensure that any AI used aligns with core values of librarianship, such as intellectual freedom, equity of access, privacy, and trustworthiness of information services. This involves adopting ethical principles for AI: for example, being transparent when a patron is interacting with an AI system rather than a human, ensuring that AI algorithms used for search or recommendation do not unintentionally marginalize certain viewpoints or user groups, and protecting user data that AI systems might collect or analyze (Mannheimer et al., 2024).

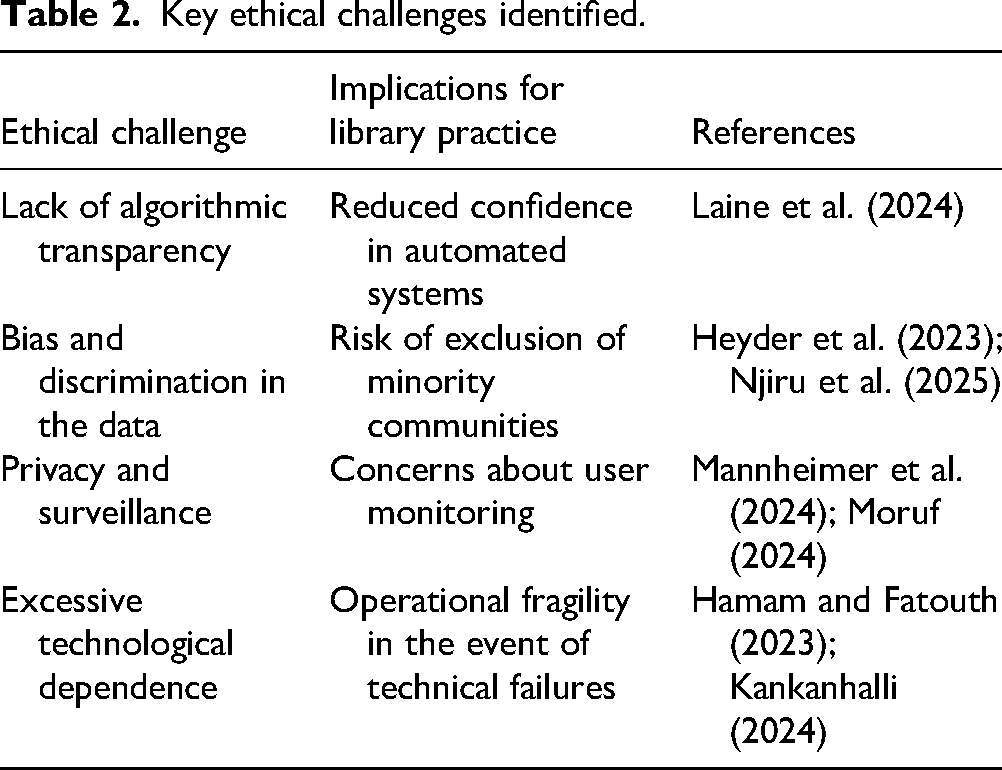

Expanding on these insights, Table 2 summarizes the main ethical challenges identified across recent literature. Each challenge is linked to its practical implications for library operations, as well as to key academic sources that explore these concerns in depth.

Key ethical challenges identified.

Broader frameworks for governing AI ethically are also emerging. Heyder et al. (2023) examine strategies for the ethical management of human–AI interaction from a sociotechnical perspective, proposing theoretical models to integrate ethical considerations into AI design and implementation. Likewise, Laine et al. (2024) focus on ethics-based AI auditing, compiling how organizations can systematically review and audit AI systems against ethical principles (like fairness, accountability, transparency) to identify and mitigate biases or risks. These works collectively suggest that as AI becomes more prevalent, formal mechanisms (audits, guidelines, and ethical frameworks) are needed to ensure that AI tools are deployed responsibly in academic settings. A specific concern in knowledge environments is AI-based user profiling, using AI to analyze user behavior and tailor services, which can raise privacy issues.

Njiru et al. (2025) critically review such practices, cautioning that while personalization can improve user experience in knowledge management systems, it must be balanced against risks of privacy invasion and discrimination. They advocate for strict ethical controls and user consent protocols whenever AI algorithms track or profile patrons (Njiru et al., 2025). These ethical perspectives underscore that the integration of AI in libraries, research, and education cannot be divorced from questions of policy, governance, and moral responsibility.

Finally, the literature consistently highlights the expanded role of academic libraries as partners in research and innovation in this AI era. Academic libraries have increasingly become hubs for research support beyond their traditional remit. Osdoski and Costa (2024) present a systematized review of library-based research support services, noting that academic libraries are actively engaging in areas like research data management, digital scholarship, and researcher training. They find that modern libraries often help scholars with data curation plans, open access publishing, bibliometric analyses, and even grant writing support, thereby embedding the library in the research lifecycle (Osdoski and Costa, 2024).

The advent of sophisticated AI tools in literature searching and analysis only amplifies this role. For example, in the health sciences domain, Rogers et al. (2024) describe how librarians began using AI tools to accelerate systematic reviews and evidence syntheses. Known as rapid reviews, these are streamlined literature reviews that leverage AI for tasks like scanning large volumes of citations, extracting key findings, or even drafting summaries of evidence.

Rogers et al. (2024) recount how applying AI in this workflow significantly reduced the time required to identify relevant studies and compile results, effectively allowing medical librarians and researchers to learn on the job by offloading some labor-intensive steps to machine assistance. Scherbakov et al. (2025) similarly explore LLMs as aids in literature reviews, even conducting a systematic review with the help of a language model to demonstrate its capabilities. Their findings illustrate that LLMs can quickly summarize articles and suggest connections between studies, acting as a knowledgeable assistant during the review process.

However, they also caution that quality control by human experts remains essential, as the AI may overlook nuances or propagate errors if not carefully checked (Scherbakov et al., 2025). In the context of academic writing and dissertations, AI's role is also being navigated. Kell et al. (2025) discuss the experiences of students incorporating AI tools into writing their practice-based dissertations. They find that AI can be a double-edged sword: on the one hand, generative AI provides helpful feedback and can overcome writer's block by suggesting ways to articulate ideas; on the other hand, students and faculty mentors alike must consider how to maintain a human-centric scholarly process, ensuring that the resulting work reflects original critical thinking and meets academic standards. The overall picture that emerges from these studies is one of academic libraries and researchers actively experimenting with AI to enhance productivity and innovation. Importantly, it also shows an acute awareness of the accompanying challenges, from maintaining ethical standards to preserving the integrity of learning and research processes.

Discussion

To frame the complex dynamics that underlie the integration of AI into academic libraries, Figure 4 outlines the ethical and transformational tensions shaping this process. These tensions represent critical inflection points that influence decision-making, professional identity, and service innovation.

The synthesis of these 29 sources portrays a scholarly landscape in transition. Academic libraries and the broader research community are enthusiastically exploring AI's potential while grappling with its pitfalls. A critical discussion of these findings highlights several overarching themes: the balance of efficiency vs. quality in AI adoption, the evolving roles and competencies required of librarians and researchers, the imperative of ethical governance, and the broader impact on scholarly integrity and innovation.

AI’s promise of efficiency vs. quality and trust

One prominent theme is the tension between the efficiency gains offered by AI and the need to maintain quality and trust in scholarly work. Numerous studies celebrate the productivity enhancements from AI, whether it is a chatbot handling routine queries so that librarians can focus on complex tasks, or an algorithm speeding up literature screening for a review. For instance, Rogers et al. (2024) and Aboelmaged et al. (2025) both showcase how AI systems can save time and extend service coverage. These efficiency gains are not trivial: they can mean faster turnaround for patron inquiries, more comprehensive literature searches, and even cost savings in operations. However, the results also expose clear limitations and risks that accompany reliance on AI. The work of Carabantes et al. (2023) on AI-generated peer reviews underscores a key point: automation can mimic but not yet fully replicate human judgment. While an AI might check formatting or summarize content in seconds, it may also produce false information (e.g. hallucinated references or incorrect interpretations) that a human expert would catch. Thus, there is a consensus across the literature that AI tools must be used as assistants, not autonomous decision-makers, in scholarly contexts.

The finding by Kousha and Thelwall (2024) that AI has not clearly demonstrated value in the core evaluative aspects of peer review is indicative of a broader caution: speed and convenience cannot come at the expense of rigor and accuracy. Trust is a cornerstone of libraries and academia: users trust librarians to provide reliable information, and readers trust that published research has been properly vetted. If AI systems introduce errors or biases, a concern raised by Scherbakov et al. (2025) and others, that trust can be undermined. Therefore, the challenge moving forward is to strike an appropriate balance. Libraries and research institutions should continue to leverage AI for what it does well (handling volume, automating rote tasks, and detecting surface-level patterns) while instituting robust oversight and quality control mechanisms. In practical terms, this might mean developing workflows where AI outputs are systematically reviewed by humans (e.g. an AI-generated literature summary that a librarian verifies and supplements) or setting thresholds beyond which human intervention is mandatory (such as any critical decision about collection development, user privacy, or publishing ethics being made by a person, not an algorithm). By consciously pairing human expertise with AI efficiency, the academic community can aim to harness the best of both, improving productivity without compromising standards.
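
The idea of mandatory human intervention beyond a threshold can be sketched as a simple routing rule. The category names, the confidence score, and the 0.9 cut-off below are all hypothetical; a real workflow would define them through institutional policy rather than code defaults.

```python
# Decision categories that, in this sketch, always require a person to decide.
CRITICAL_CATEGORIES = {"collection_development", "user_privacy", "publishing_ethics"}


def route(category: str, ai_confidence: float, min_confidence: float = 0.9) -> str:
    """Decide who handles an AI output: the AI alone is never the final authority."""
    if category in CRITICAL_CATEGORIES:
        return "human_decision"  # a person decides; the AI output is advisory only
    if ai_confidence < min_confidence:
        return "human_review"  # a librarian verifies and supplements the output
    return "auto_with_spot_check"  # released, but sampled for periodic quality checks


# Hypothetical examples of routing.
summary_route = route("literature_summary", ai_confidence=0.72)
privacy_route = route("user_privacy", ai_confidence=0.99)
faq_route = route("opening_hours_query", ai_confidence=0.97)
```

Note that the critical-category check comes first, so a high confidence score can never bypass human judgment on the decisions the paragraph above reserves for people.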

Evolution of professional roles and skills

A second major theme is the evolution of roles for librarians, educators, and researchers in response to AI. The literature makes it evident that AI is not simply a new tool but a catalyst forcing a re-examination of professional identity and skill sets in academic libraries. Librarians, traditionally experts in curating and disseminating information, now find themselves needing to be technology specialists, data ethicists, and AI educators. Harisanty et al. (2025) illustrate that within librarianship there is a range of readiness: some librarians are upskilling and becoming fluent in AI technologies, while others feel uncertainty or concern. Importantly, their study and others suggest that institutional support and training will be pivotal in enabling library staff to adapt. If libraries invest in continuous professional development, for example, workshops on understanding AI algorithms, forums to share experiences about new tools, and guidance on managing AI projects, they empower their staff to confidently integrate AI into services.

Conversely, without such support, there is a risk of a skills gap where only a few enthusiastic individuals drive AI initiatives, potentially leading to uneven implementation and burnout. The expanding role of the librarian is also seen in how they mediate between AI tools and users. Bapte and Bejalwar (2022) and Kaplan (2022) both reinforce the image of librarians as educators and facilitators of literacies. In the past, this meant information literacy and digital literacy; now it increasingly includes AI literacy.

Librarians are positioned to guide students and faculty in understanding when and how to use AI tools like ChatGPT, just as they guide them in using databases or citation software. This mentorship role is crucial to ensure that users do not just use AI, but use it well, aware of its fallibilities and ethical implications. In academic research, similarly, the role of the researcher is evolving. Scholars must now be conversant not only in their disciplinary methods but also in how to deploy AI for data analysis, literature reviews, or writing, as highlighted by Kell et al. (2025) in the context of doctoral work. The notion of a “human-centric” approach implies that researchers should treat AI as a collaborator that augments human creativity and inquiry rather than replacing them. This requires new skills in prompt design (how to ask questions of AI), critical evaluation of AI-generated outputs, and even a comfort with a trial-and-error approach to incorporating novel tools into one's workflow.

Educational programs, both for librarians in graduate library schools and for students across disciplines, will need to update curricula to include these competencies. The broader implication is that professions in the knowledge sphere are being redefined: future librarians and academics will be those who can seamlessly blend traditional critical thinking and domain expertise with adept use of AI and data-driven tools. Far from making these professions obsolete, AI is increasing the demand for high-level human skills, such as interpretation, ethical judgment, and empathic user engagement, that cannot be automated.

Ethical and responsible AI governance

The need for strong ethical governance of AI in academic contexts emerges as a unanimous concern. The results highlight scattered instances of AI-related ethical breaches (like potential AI plagiarism or biased profiling) and even unrelated fraud (Stockemer and Reidy, 2024) that together contribute to an atmosphere of caution. In the discussion, it becomes clear that establishing trust in AI systems is paramount if they are to be sustainably integrated into libraries and research. Laine et al. (2024) and Mannheimer et al. (2024) both underscore that responsible AI is not automatic; it must be actively cultivated through policies, audits, and a culture of ethics. One practical step many have pointed to is the development of explicit guidelines for AI use. Academic libraries, for example, might draft ethical use policies for AI chatbots, clarifying issues like data privacy (what user data it is acceptable for the chatbot to retain or learn from), transparency (whether the AI should identify itself as non-human when interacting with a patron), and bias mitigation (how the system's suggestions or answers will be evaluated for fairness and inclusivity).

Recent work extends these concerns to the domain of cybersecurity and systemic resilience. Radanliev (2025a) demonstrates that modern AI systems can amplify cyber risks in sensitive areas of research, where opaque decision-making and pervasive data collection threaten user autonomy and institutional trust. Complementing this, a related contribution emphasizes the integration of transparency, fairness, and privacy as foundational design principles in AI development, rather than as remedial safeguards (Radanliev, 2025b). Aligning the findings of this review with these perspectives suggests that academic libraries must not only ensure responsible oversight of AI-driven services but also anticipate cybersecurity vulnerabilities and embed ethical standards into the technological infrastructure itself.

The discussion of Njiru et al. (2025) about user profiling amplifies the privacy aspect: AI's strength lies in learning from large amounts of data, but in a library or educational setting, users have a right to know and control how their data, whether search queries, reading history, or personal information, are being used by AI systems. Ethical AI auditing, as described by Laine et al. (2024), offers a systematic way to enforce these values by periodically checking that AI tools in use conform to agreed principles (for instance, an audit might reveal if a recommendation algorithm consistently overlooks content about certain groups, indicating a bias that needs correction).
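A worked illustration may clarify what such an audit check could look like in practice. The sketch below is not the procedure of Laine et al. (2024); it is one simple assumption about how "consistently overlooks content about certain groups" might be measured: compare each group's share of recommendations with its share of the catalogue and flag groups that fall well short. The function name `exposure_audit`, the group labels, and the 0.8 cut-off are all hypothetical.

```python
from collections import Counter


def exposure_audit(catalogue_groups, recommended_groups, min_ratio=0.8):
    """Flag groups that are under-represented in recommendations.

    catalogue_groups:   one group label per item in the catalogue
    recommended_groups: one group label per item actually recommended
    min_ratio:          arbitrary illustrative cut-off (an assumption)
    Returns {group: ratio} for groups whose recommendation share divided
    by catalogue share is below min_ratio.
    """
    cat = Counter(catalogue_groups)
    rec = Counter(recommended_groups)
    n_cat = len(catalogue_groups)
    n_rec = len(recommended_groups)

    flagged = {}
    for group, count in cat.items():
        cat_share = count / n_cat
        rec_share = rec.get(group, 0) / n_rec if n_rec else 0.0
        ratio = rec_share / cat_share
        if ratio < min_ratio:
            flagged[group] = round(ratio, 2)
    return flagged


# Toy data: the catalogue is evenly split, but recommendations are not.
catalogue = ["A"] * 50 + ["B"] * 50
recommendations = ["A"] * 9 + ["B"] * 1
print(exposure_audit(catalogue, recommendations))  # {'B': 0.2}
```

A flagged group is a prompt for human investigation, not proof of bias; a low ratio may also reflect legitimate differences in user demand.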

Moreover, the ethical governance theme extends to the research process itself. The community is actively debating norms around AI-generated content in scholarship. A question faced by many universities and publishers in 2024–2025 is: Should AI contributions be acknowledged or limited in academic writing? Some journals have begun requiring authors to disclose if AI was used in producing a manuscript. This push for transparency aligns with the responsible conduct of research; readers and reviewers deserve to know what role AI played in generating results or text. Hamam and Fatouth (2023) argue that leveraging ChatGPT in research can be beneficial, but only if done with full awareness of its limitations and with honesty about its use. Weighing all these insights, one arrives at the notion that ethical stewardship of AI is now a collective responsibility. Librarians, as information custodians, probably need to take on advocacy roles, ensuring that the AI products adopted by their institutions are not just convenient or cost-effective, but also aligned with academic integrity and social values. The discussion here serves as a call to action: universities and libraries might consider forming AI ethics committees or task forces (if they have not already) that bring together librarians, IT staff, faculty, and students to continuously evaluate the impact of AI deployments on campus. In essence, human oversight and ethical vigilance must grow in parallel with AI usage to safeguard the scholarly ecosystem.

Impacts on academic integrity and learning

A closely related theme, deserving special attention, is the impact of AI on academic integrity and the nature of learning. Generative AI systems capable of producing text, code, or even entire essays have forced academia to confront new forms of plagiarism and misconduct. Giray et al. (2025) and Hossain et al. (2025) both touch on the dilemma that while AI can assist students in writing and learning, it also makes it easier to evade authentic intellectual effort. For example, a student could ask ChatGPT to write a literature review or solve an assignment, potentially undermining the learning process and violating honesty policies. The literature suggests that institutions are beginning to respond by revising academic integrity guidelines to include AI (some universities now explicitly ban or regulate the use of AI in coursework unless permitted). However, a purely punitive or prohibitive approach may not be sustainable; as AI becomes ubiquitous, detecting AI-generated content reliably is itself an arms race, and students need to learn how to work alongside AI in legitimate ways.

The widespread adoption of AI in research environments presents pressing challenges related to data privacy, algorithmic bias, transparency, and accountability. Figure 6 summarizes these critical dimensions, framing the debate on how AI may affect the foundational principles of academic inquiry and integrity.

Figure 6. Using AI in scientific research.

These risks highlight the urgent need for regulatory frameworks, institutional safeguards, and pedagogical strategies that promote responsible use of AI. The following discussion explores how academic integrity can be upheld in the face of rapidly evolving technological capabilities.

Here, libraries and instructors together must foster a culture where the use of AI is transparent and complements learning rather than replacing it. One promising angle discussed in the literature is educating students about the shortcomings of AI. When students realize, for instance, that ChatGPT can produce confidently wrong answers or cannot be cited as a credible source (because it provides no verifiable references for its claims), they may better appreciate the value of doing the intellectual heavy lifting themselves or at least cross-verifying AI outputs. Additionally, assignments can be redesigned to be AI-resistant or, better, AI-enhanced: for instance, tasks that require personal reflection, critical analysis of AI outputs, or iterative feedback with an instructor cannot be easily outsourced to a machine. The notion of open science brought up by Guevara-Pezoa (2023) also connects here: promoting openness in methods and data can act as an antidote to some negative uses of AI.

If students and researchers are steeped in a culture of sharing and transparency, the temptation to use AI in covert or deceptive ways might lessen, and the collaborative ethos of science can be reinforced (Guevara-Pezoa, 2023). In summary, the discussion recognizes that AI challenges us to reaffirm what academic integrity means. Rather than seeing technology and integrity in opposition, the way forward might be to integrate AI literacy into integrity education, teaching how to use AI appropriately (for example, for generating ideas, improving grammar, or as a study aid) while clearly delineating and prohibiting unethical uses (such as submitting AI-written work as one's own or using AI to fabricate data).

Broadening access and innovation

It is also worth discussing how AI, if harnessed responsibly, might serve as a catalyst for broader access to information and spur innovation in research practices. Many of the sources hint at positive outcomes: AI could democratize certain expertise, for instance, by helping non-native English speakers write in fluent academic English, a benefit noted by Hossain et al. (2025), or by guiding novice researchers through the initial stages of literature discovery with intelligent search tools.

Moreover, AI's ability to analyze massive datasets in seconds opens possibilities for new kinds of research questions and methodologies that were previously untenable. We see early evidence of this in how LLMs were used by Scherbakov et al. (2025) to map out a large body of literature swiftly, potentially enabling meta-analyses or interdisciplinary connections that a human might miss when overwhelmed by information. Open science, as reviewed by Guevara-Pezoa (2023), provides a synergistic backdrop to these developments: when research outputs (papers, data, code) are openly available, AI systems can be trained on them to create even more powerful tools that benefit everyone.

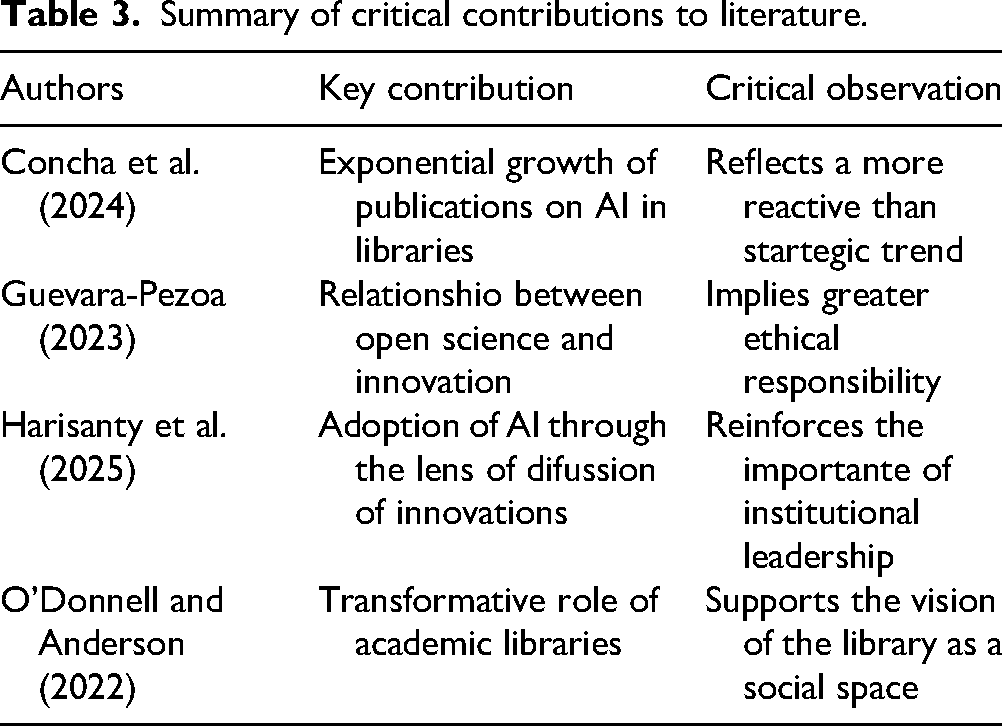

Before moving to the final reflections, Table 3 presents a synthesis of key scholarly contributions. These works offer diverse critical perspectives on the evolution of AI in academic library services and highlight recurring themes that shape the discourse.

Table 3. Summary of critical contributions to literature.

Conclusion

This study set out to systematically examine how academic libraries are responding to the integration of AI, particularly generative AI and large language models, into scholarly communication and research support. Drawing on 27 peer-reviewed articles published between 2022 and 2026, the findings reveal a field in flux, marked by innovation, experimentation, and critical introspection.

From the emergence of AI-driven chatbots and intelligent search tools to the ethical and pedagogical concerns surrounding AI literacy and academic integrity, the research reviewed underscores a central paradox: libraries are both early adopters of AI technologies and guardians of the values that those very technologies can challenge. Artificial intelligence promises new efficiencies, greater personalization, and expanded access to knowledge. However, it also introduces new risks of bias, opacity, misinformation, and the erosion of human expertise, especially in areas where nuance, context, and ethical judgment are paramount.

This review makes clear that academic libraries are not passively absorbing these technologies. Rather, they are actively shaping the conversation: developing training programs, engaging in ethical audits, piloting new services, and, critically, reasserting their role as mediators between knowledge and technology. The evidence points to a profession that is aware of the stakes and deeply invested in making AI work for, rather than against, scholarly values.

However, several challenges remain. There is a lack of longitudinal data on the real-world impacts of AI tools in library settings. Ethical frameworks exist in theory but are inconsistently applied in practice. And while students and researchers increasingly rely on AI for writing and research, many lack the literacy skills to use it responsibly. If libraries are to retain their relevance and authority in the age of generative AI, they must not only implement cutting-edge tools but also lead in articulating the human-centered, ethical use of those tools.

In this light, the present investigation contributes not only a map of the current landscape, but also a call to action. Academic libraries must position themselves not as reactive service providers, but as proactive agents in shaping a scholarly environment where AI augments rather than replaces human intelligence. This means investing in librarian training, developing institutional policies that balance innovation with accountability, and conducting rigorous, context-sensitive research on AI's pedagogical and operational consequences.

The impact of this investigation lies in its synthesis of emerging knowledge and its articulation of a critical juncture: academic libraries now stand at the intersection of tradition and transformation. What they choose to preserve, what they decide to change, and how they navigate the ethical tensions of AI will help define the future of knowledge production and dissemination in higher education.

Footnotes

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.