Abstract

We face the situation of radical change in work due to advances in AI and related digital technologies, with uncertainty about how this change will affect workers’ opportunities for meaningful work designs, as well as the flow-on effects for worker well-being, health, skills and productivity. A ‘technocentric fallacy’ assumes that technology itself is the primary driver of successful digital transformation. Yet we have learned from history that technological considerations alone are insufficient for human well-being and productivity. The long-established sociotechnical systems theory of work design advocates that the social aspects of work (e.g. leadership, culture, task allocations) and technical aspects of work (e.g. AI, robots) need to be jointly optimised to achieve quality work. In this article, we expand this theoretical approach to fit current challenges, and to enable its wider-scale application. Our team of social and technical scholars proposes a ‘co-evolving’ sociotechnical systems (CeSTS) approach to the design, implementation, and use of digital technology in work contexts. CeSTS expands thinking across time and across levels of analysis to create a more proactive, and ultimately more balanced, approach. Achieving CeSTS requires interdisciplinary collaboration, methods that can track dynamic and emergent change, and a multi-stakeholder approach that both informs research and shapes change in work. Altogether, the radical changes in technology demand an equally radical shift in how scholars investigate, and ultimately help to shape, future work.

1. Introduction

Imagine you have a crucial decision to make as part of your job, and you can approach colleagues for advice. Your colleague ‘Bluster’ tells you what he thinks you should do, at length, and then justifies why this is the case. Your other colleague, the equally smart and experienced ‘Prudence’, takes a different approach: she asks you what you think you should do, and then provides feedback, with evidence to support the decision and evidence against it. If this changes your mind, Prudence gives feedback on new options. Which colleague would you choose: Bluster or Prudence?

Your answer likely depends on several factors, such as the stakes of the decision and your available time, but for many purposes you are likely to prefer Prudence. Yet Tim Miller, the professor of artificial intelligence who developed this scenario (Miller, 2023), argues that the predominant design of contemporary AI is akin to Bluster: AI provides a recommendation and explains why the AI system made that decision, when in fact what might better support human decision-making is systems more like Prudence, which help decision-makers to find evidence to critique their ideas. It is not difficult to see the benefits of Bluster-like systems: speed, scale, and the possibility to mitigate human errors. However, it is also not difficult to see the benefits of Prudence-like systems: the human worker maintains control of the decision, leveraging their human expertise, and such a design generates clearer bounds of accountability, reduces over-reliance on AI systems, mitigates skills erosion, and develops decision-making skills for novices (Le et al., 2024). Conceptualising AI systems as more Prudence-like opens up AI system designs to be interactive tools driven by the human user, rather than relegating the human user to a role of oversight only. Such an approach would create, at the outset, technology that truly enables augmentation of human ability, rather than a tool that replaces human skills and relegates people to the role of monitoring technical systems.

This hypothetical example highlights a key point of view that we develop in this paper: that so far, a technocentric approach to technology dominates; one that fails to give sufficient attention to human and work issues and that threatens to undermine the quality of human work. Technological transformation, such as that involving the application of Artificial Intelligence (AI), sensors and robotics, is occurring more quickly and radically than any other technical change in the past 100 years. This change is being driven in part by drastic advances in computing, increasing access to data and unprecedented investments of up to around $1B per day globally. These technical breakthroughs have led to much speculation about the future benefits of AI and related digital technologies for businesses and work. However, exactly how these benefits can be realised has had far less attention. Moreover, the potential pitfalls of AI and associated technologies for work and workers have been glossed over, with a narrow focus on how many and which jobs will be eradicated or a broad concern with ethical issues (House of Representatives Standing Committee on Employment, Education, and Training, 2025). Technological transformation is a grand challenge for academic scholars globally (George et al., 2016) and generates a complex national challenge with crucial implications for local economic, legal, industrial, innovation, and other systems (Brammer et al., 2019).

There is a need to move beyond macro-level projections about future job numbers to consider how technological change affects the quality of work. Job projection forecasts have largely been derived from highly abstract analyses of general trends in the labour market, relying on standardised systems like O*Net that describe occupation-level trends (Bailey and Barley, 2020). Such an approach disregards the strong likelihood that it is tasks within a job that are likely to change due to automation, rather than the replacement of whole jobs (Paolillo et al., 2022). The change-in-task perspective raises important work design challenges in the machine-human interface, such as how machine-executed tasks should be coordinated with human-executed tasks. The job replacement perspective also disregards that work changes when technology is applied, with the nature of such changes being hugely dependent on choices made by individuals, organisational leaders, regulators, and multiple other stakeholders. In other words, instead of a deterministic perspective around technology replacing human work, it is important to understand how work can be actively designed so that people can use technology to augment their performance, while also ensuring safe, healthy and productive jobs.

In this article, our focus is on the diverse ways in which work changes because of digital technologies, and especially, how this change can be investigated and influenced. We use the term ‘intelligent technologies’ to refer to autonomous systems performing tasks that typically require human intelligence, but that instead utilise advanced algorithms and machine learning techniques to reason and problem-solve for task completion (Miller, 2024; Walsh, 2018). We focus on the implications of intelligent technologies for human work design, or the ‘content and organisation of one’s work tasks, activities, relationships, and responsibilities’ (Parker, 2014: 661). A work design lens is appropriate since a vast amount of evidence shows that the quality of people’s work design has profound implications for outcomes ranging from individual mental and physical health to organisational-level productivity and even extending beyond to national levels of people’s happiness (Parker et al., 2017a, 2021). This means that, if AI impairs the quality of work design, it is likely to reduce people’s job satisfaction, impair their mental health, and impinge on their work performance.

Broadly, three sorts of changes affect work design. First, because intelligent technologies can carry out cognitive tasks previously carried out only by humans, changes are occurring in ‘what’ tasks people do, and ‘how’ they execute those tasks. For example, AI can now carry out and/or support tasks such as creative idea generation, diagnosis, analysis of information, and even management. The agency of technology is also unprecedented. Increasingly AI-based systems can directly interact with the environment and ‘learn on their own’, raising questions such as who should make decisions: humans, AI, or both? Thus, intelligent technologies such as AI can significantly change ‘what’ tasks people do, and ‘how’ they execute those tasks. Of course, the degree of this change varies according to the type of technology and whether it is being used to automate or augment human work. For example, Makarius et al. (2020) identified more change for workers’ roles when AI is ‘context changing’ (more general and radical applications) rather than ‘content changing’ (affecting a narrow task only), and when the degree of novelty of application is high. In this framework, content change coupled with low novelty typically occurs in low skill situations in which machines substitute for human labour, such as assembly line robots, with humans remaining in control although other issues – such as job security – are raised. At the other extreme, combining high novelty with context change, would be fully autonomous and superintelligent machines, for which humans’ main role will be to ‘comprehend’ what is happening.

Second, technology is enabling radical changes in ‘where and when’ work tasks are performed. For example, work is increasingly carried out remotely, with data from computer sensors and other devices being used to feed into automated decision-making and to connect processes across complex networks in diverse industries such as health, emergency services, and education. Not only does this capability create new ways of working, such as remote operations and hybrid working, we have also witnessed the growth of new business models. An example is the platform economy which leverages technology to decentralise coordination of supply and demand, transforming logistic efficiency. Yet as we shall discuss, these new models do not always operate in the best interests of human workers, sometimes creating precarious and low autonomy work.

Third, these technologically-enabled shifts in the ‘what and how’ and ‘where and when’ of work give rise to implications for the ‘who’ of work, changing the skills and capabilities that workers require to be effective. In turn, the skills people have and develop influence what work designs are possible as well as how effectively technology can be used.

Altogether, our aim in this article is to develop and advocate a work design perspective for understanding and shaping the effects of digital change on work and workers. Specifically, we propose a co-evolving sociotechnical approach (CeSTS) as a crucial foundation for building quality human work in the future. In what follows, we first discuss the value of a work design perspective for understanding technological change. Next, we delve more deeply into the history and application of a specific work design approach: sociotechnical systems theory, which advocates that the social aspects of work (e.g. leadership, culture) and technical aspects of work (e.g. AI, robots) need to be jointly optimised to achieve quality work, rather than prioritising the technological over the social, as commonly occurs. Next, while existing sociotechnical systems theory provides an important foundation, we argue that this theory needs expanding to be fit for the purpose of understanding contemporary technological change. We unpack our CeSTS approach to the design, implementation, and use of digital technology in work contexts, suggesting that researchers need to expand thinking across time and across levels of analysis to create a more proactive and genuinely balanced approach to technical change. Finally, we discuss how a CeSTS might be realised, suggesting the need for an interdisciplinary collaborative approach, the application and development of new dynamic methods, and a multi-stakeholder approach that both informs research and shapes change in work practices and policy.

2. Work design as an important lens for understanding technological change

As noted above, work design is about the content and organisation of one’s work tasks, activities, relationships, and responsibilities. From a theoretical perspective, different sorts of task content and organisation, or work design, give rise to important ‘work characteristics’ that have psychological significance. For example, decision-making responsibilities can be allocated in such a way that workers have control over how they execute their tasks, generating a high level of job autonomy. Theoretically, job autonomy allows individuals to experience a sense of volition over their environment, which helps to fulfil a fundamental human need for control (Deci and Ryan, 2008) and thereby fosters a sense of meaning, authenticity, and psychological ownership (Hackman and Oldham, 1976). Job autonomy also gives workers latitude to manage the demands they experience in their work, which reduces the negative experience of psychological strain at work (Karasek, 1979). A lack of autonomy increases worker stress, impairs well-being, increases disengagement, and reduces the likelihood of innovation (Carter et al., 2019; Parker and Fisher, 2022).

Job autonomy is just one important component of work design. In fact, over the decades, over 30 distinct work characteristics have been theorised and shown to be psychologically important. To help synthesise and make sense of the large number of work characteristics that have been identified, scholars recently theorised and empirically demonstrated five higher-order categories of work characteristics that collectively capture the key attributes of work that matter for people (Parker and Knight, 2024). These higher-order categories include the extent to which work is stimulating (includes work characteristics such as skill variety, task variety, and problem-solving demands); provides opportunities for mastery (has work characteristics such as role clarity and feedback) and autonomy (has work characteristics such as timing autonomy, method autonomy, and decision-making autonomy); satisfies relational needs (has work characteristics such as positive social contact and social support); and ensures workers’ job demands are tolerable (has manageable levels of work characteristics such as emotional demands, work load, and time pressure). When work has these ‘smart’ work characteristics, it predicts multiple individual, team, and organisational outcomes, ranging from mental health and well-being to innovation and creativity to reduced absenteeism (Humphrey et al., 2007; Parker et al., 2017a).

This large evidence-base means that, by drawing on a work design perspective, we can better understand ways to optimise future benefits and minimise future risks to work quality when designing, procuring, and implementing digital technologies. On the one hand, there are examples in which the application of technologies improves work design (Parker and Grote, 2022a, 2022b). For example, digital technologies can make work more stimulating by automating ‘dull, dirty, and dangerous’ tasks and replacing them with tasks that require reasoning and problem-solving (Walsh and Strano, 2018). Likewise, the implementation of digital robots in furniture-making companies increased the cognitive complexity of work, creating expanded problem-solving for workers who had to ‘outsmart’ the robots to get them to work effectively (Goštautaitė et al., 2024). Another example is that the use of AI tools by nurses enabled them to make higher-level medical decisions that typically would have needed to be carried out by a doctor (Autor, 2024). Further, the use of AI-based loan systems resulted in lower-skilled employees at banks having greater decision-making autonomy when providing customer loans because of the knowledge this system gave them access to (Strich et al., 2021).

On the other hand, there is evidence that technological change can negatively affect work design, with flow-on negative effects on worker well-being and safety (Parker and Grote, 2022a, 2022b). There are many examples of automated systems that make work less stimulating for people by replacing tasks that require judgement and decision-making with tasks that require passive monitoring (e.g. Haslbeck and Hoermann, 2016). As further examples: the use of robotic technologies in surgery can limit trainees’ exposure to challenging tasks, impeding their skill variety and opportunities for learning (Dominiczak and Khansa, 2018); AI can replace basic tasks in professional jobs, reducing learning opportunities for entry-level graduates and inhibiting new professionals from acquiring important tacit knowledge (Hunter, 2020); and platform work enabled by technology is often characterised by so-called ‘microtasks’ that reduce skill utilisation (Arora and Garg, 2024).

The deployment of AI can also affect other aspects of smart work. In one case (Zhang et al., 2021), showing implications for both mastery and autonomy, the role of designers in a semiconductor firm changed significantly when algorithms were used to find design solutions, with designers experiencing reduced understanding of how their own actions led to a particular algorithm-generated result and less control over the solutions. Likewise, algorithmic management, in which task allocation and other management functions are carried out by algorithms rather than human managers, has been shown on average to intensify work and to reduce worker autonomy (Moore and Hayes, 2018; Parent-Rocheleau and Parker, 2022). Often algorithmic management involves the intensive use of technology to monitor and control workers’ behaviours. Algorithmic surveillance refers to monitoring tasks being conducted by algorithms, such as when food-delivery platforms capture all sorts of data (e.g. time taken to deliver a job), with the data then being used to allocate tasks, decide payment, and sometimes even fire the food deliverers. However, the data that monitoring is based on is collected automatically, and thus does not account for the unique situations people are faced with, such as poor road conditions when delivering food. There are few opportunities to challenge the surveillance data that is collected even if the worker perceives it as unfair. Consequently, algorithmic surveillance – common in gig work – is often an ‘intolerable demand’ that causes stress for workers, especially when coupled with precarious employment conditions (Newlands, 2021). As further examples of demands that can be increased by digital technologies, heavy reliance on videoconferencing when remote working causes ‘zoom fatigue’, affecting tolerable demands, and can increase isolation, impairing relational work design (for a summary see Parker and Grote, 2022a, 2022b). AI also introduces new demands on workers in terms of oversight (‘human in the loop’) requirements, yet workers may lack the technical skills, or even time, to interrogate the recommendations from opaque automated decision-making systems. This can expose workers to physical, regulatory and moral risks, such as where automated loan assessments contain hidden bias or error (Sargeant, 2023).

These examples of negative work design consequences from AI have flow-on impacts for worker and societal well-being, and for productivity. For example, the reduction of control and increased time pressure caused by algorithmic management results in impaired worker mental health (Parent-Rocheleau and Parker, 2022), and the increased isolation arising from remote work increases people’s loneliness (Bollestad et al., 2022). Worker safety too can be at risk, with a recognised possibility for disasters if technology is not properly considered alongside work design. An example comes from the tragic Boeing 737 MAX crashes in 2018 and 2019. Commercial pressures led to rushed development of new software, disregard of worker concerns and, most crucially, work designed in such a way that the pilots were ‘out of the loop’: uninformed about, and untrained in, the new software, and therefore unable to exercise any control over it or to override the autopilot (Johnston and Harris, 2019).

A different sort of technology-induced disaster is the case of Robodebt in Australia, an automated decision-making system that also removed workers’ judgement from the system, lowering their job autonomy and taking them out of the loop (Murray et al., 2023). The poorly designed, implemented, and governed system caused emotional and financial trauma for thousands of welfare recipients (Braithwaite, 2020) and has cost over AU$1.8 billion in a class action. Other examples show that AI applications can result in all sorts of ethical failures and biased outcomes because automatic decisions are made based on non-transparent, flawed, and inaccurate databases, yet workers lack the control over the system to prevent or address these problems (Paterson, 2021; Raji et al., 2022). Thus, when intelligent technologies are implemented without careful attention to work design, the flow-on effects include consequences traditionally focused on in the work design domain, such as people’s psychological well-being and mental health, but also extend to wider societal issues, such as ethical violations and systemic bias.

From the perspective of productivity, many implementations of AI fail to generate benefits (The Economist, 2020). Scholars have identified the ‘fallacy of AI functionality’ because of the large amount of evidence of failed applications (Raji et al., 2022). A classic case is that IBM Watson recorded an 80% failure rate in healthcare applications, with little or no positive impact on care and billions of dollars wasted, largely due to an inability to integrate the technology into the existing work systems and culture, including a failure to consider work design (Strickland, 2019). Dysfunctional AI has caused, among other problems, wrongful arrests, lost access to healthcare, and misdiagnosed medical images; events that not only harm individuals but that waste resources and are expensive to rectify (Raji et al., 2022).

3. Moving forward via sociotechnical systems theory

In 1986, Kranzberg (1986: 545) stated that ‘technology is not inherently good or bad; nor is it neutral’. Our analysis above confirms this idea: research shows that intelligent technologies can positively and negatively influence key work characteristics, affecting how much work is stimulating, has mastery and autonomy, is relational, and has tolerable demands. Since these aspects of work design, in turn, consistently affect critical individual and organisational outcomes, understanding the impact of technology on work design – and shaping this impact – will go a long way towards improving the consequences of new technologies for workers and society. However, while there is a recognised and strong imperative for designing, implementing, and using technology in ways that generate positive work designs, exactly how to achieve this outcome is much less well-understood. In other words, when there are technological applications at work, how do we avoid the bad and achieve the good? To address this question, it is necessary to move from a rather passive perspective focused on understanding the impact of technology on work design to a more proactive perspective focused on change.

Here, we propose the importance of a long-standing work design theory: sociotechnical systems theory. The foundation for this approach was developed in coal mines in the 1950s (Emery and Trist, 1965; Trist and Bamforth, 1951) yet, as we elaborate shortly, this perspective enables a deep understanding of human work that remains relevant today. Specifically, sociotechnical ideas arose from a powerful natural experiment of the implementation of mass production technologies in coal mines. Before the technology, small groups of two to eight self-managing colliers and their helpers contracted with management to work a small coal face. They selected their own team members, carried out the whole task, and used a range of skills. Their ‘responsible autonomy’ enabled them to adapt their work pace to changing conditions, as well as to the stamina of team members. However, bolstered by what was happening in manufacturing and elsewhere, to improve efficiency, conveyor belts and coal-cutting tools were introduced in what was referred to as the ‘longwall’ method of mining. Forty to fifty men worked a long coal seam, spread out over a large distance. Work was broken down into three shifts, each carrying out a separate process. Each worker performed one task repeatedly across the shift, such as boring holes in preparation for the next shift. The new approach meant managers and deputies were needed, so instead of a self-managing group, hierarchical models of management were implemented. The new work system was a failure. Unpredictable conditions underground conflicted with the rigid work sequencing, creating tensions in the interdependent system. Communication was restricted, meaning that workers were not able to relay fears and risks (Trist and Bamforth, 1951). There were poor social relations between managers and workers as overworked deputies tried to control the system. Conflict emerged among workers as competitiveness, mistrust, secrecy, conflict, passing the buck, absenteeism, and bribery became rife. Unsurprisingly, levels of productivity under the longwall system were lower than under the previous system, despite the new technologies.

The authors concluded that there had simply been too much focus on the technology, and not enough attention to how people worked together, or what these authors called the social aspects of work. Their observations led Trist and Bamforth (1951) to advocate the need for joint optimisation of social and technical elements, which became known as the ‘sociotechnical systems’ approach. In a later coal mine study, Trist et al. (1977; see also Rice, 1953) observed that the destructive effects of the mechanistic method of mining were alleviated when groups of miners found a way to complete whole tasks with autonomy.

Over time, based on the analysis of these and other cases, a series of sociotechnical principles emerged (Cherns, 1976, 1987; Clegg, 2000; Emery and Trist, 1965). A key principle is the need to ‘control variances at the source’. In work design terms, this means providing workers with sufficient job autonomy to manage everyday problems that occur in their work. Further important principles include involving workers in the design of the system, ensuring that the ways of working are minimally specified, ensuring that boundaries are not drawn so as to impede the flow of information, ensuring that information and resources flow to the people who need them, and ensuring that redesign is a continuous process. These sociotechnical principles have often been realised through the implementation of autonomous work groups (sometimes called self-managing teams) in which the group itself allocates tasks and makes day-to-day operational decisions, and have been applied in contexts such as operating safely in complex systems. For example, the concept of ‘human in the loop’ – lacking in the Robodebt and Boeing 737 examples discussed above – aligns with the STS principle of controlling variances at the source.

A sociotechnical approach continues to be highly relevant to the intelligent technologies currently being implemented (Guest et al., 2022; Makarius et al., 2020). Once again, we are witnessing a ‘technocentric fallacy’ – an assumption that technology itself is the primary driver of successful digital transformation (Kane, 2019). Indeed, of the approximately $1B spent on technology daily, most is directed at advancing the technology (https://www.cnbc.com/2025/02/08/tech-megacaps-to-spend-more-than-300-billion-in-2025-to-win-in-ai.html). The technocentric fallacy, and the consequent lopsided resource allocation by government and industry, must be challenged because AI or similar technologies alone neither improve productivity nor generate healthy work, as our examples above have shown. Indeed, the pace of technological change means integration of social and technical systems is more important than ever for a functioning society, with some scholars advocating for a sociotechnical perspective as ‘the cornerstone in discussions about smart working in Industry 4.0 and 5.0’ (Bednár and Welch, 2020: 291).

However, STS theories need to be expanded to make them fit for contemporary purposes. Let us return to the idea that technology is changing the ‘what and how’ of people’s work. One of the crucial emerging and unresolved issues is around human autonomy. AI systems lack transparency because they rely on patterns in huge volumes of data and, moreover, may have self-learning capability, creating continuous change over time; even the developers of the systems may not know how they work. Crucially, the opaqueness of these systems reduces the possibility for humans to actively control the technology (Castelvecchi, 2016), with autonomy by workers being a crucial principle of sociotechnical systems theory and integral to the idea of ‘human in the loop’. If workers do not understand how a technical system works, they cannot effectively manage the autonomy they have been given, nor, at least in normative terms, can they be held accountable (Boos et al., 2013; Grote et al., 2024). We do not yet understand how to address this issue of control versus accountability with new intelligent systems. Next, we turn to the ways that sociotechnical systems theory needs to develop to help achieve better quality work in a digital future.

4. Co-evolving sociotechnical systems: An integrating approach for the future

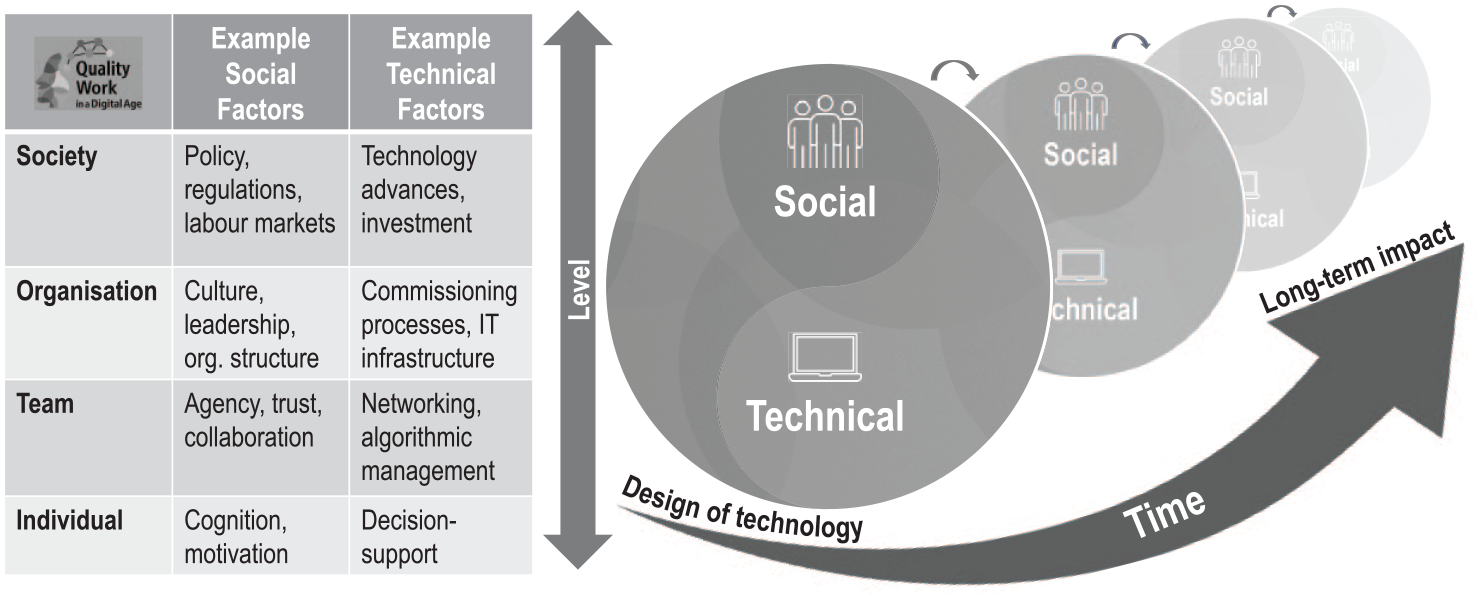

In this section, we draw on the past innovations in STS and the ongoing challenge of changing environments to propose a new perspective on STS. We introduce the concept of ‘co-evolving’ sociotechnical systems (CeSTS) as an integrating perspective for the future. Our co-evolving perspective highlights that both human and technical roles are undergoing continuous and interdependent change as they interact in a dynamic system. Our perspective extends the ‘human in the loop’ imperative in two ways. First, CeSTS incorporates a time perspective in which people and technology adapt together in a process of continuous learning. Second, CeSTS incorporates a multilevel perspective that considers the level of analysis where change unfolds. We describe these extensions next, summarised in Figure 1.

Figure 1. Co-evolving Sociotechnical Systems (CeSTS) perspective interlinking social and technical factors to jointly optimise outcomes across levels and over time.

4.1. Co-evolution over time

To date, attempts to elevate social priorities within STS tend to assume a stable technology that is already in place. The focus of sociotechnical experts, then, is on how social factors such as leadership, work roles and team structures fit into or around the existing technology. These efforts primarily aim to correct techno-centrism through a focus on the social aspects of work after technology is deployed. This approach can control some variances at the source when technologies are relatively stable. However, even in relatively fixed technology implementations, research shows the actual work frequently involves workers engaging in multiple workarounds, adaptations, and improvements over time, often because the technology design was not sufficiently suited to its purpose. In what follows, to develop our CeSTS approach, we first recommend adapting the initial technology design by applying a social lens to the design. Second, we advocate the need to consider technology design, development, and use as co-evolving and dynamically affecting each other. Third, we recommend that research reflect how people’s attitudes and skills change over time, and how they are mutually dependent on, and affected by, the wider work and technological system.

With respect to the first point, failures that have left humans ‘out of the loop’ can often be traced back to design flaws, such as technology inhibiting people from overriding incorrect decisions, as occurred in a case of algorithms incorrectly cutting health care support to particular groups (Raji et al., 2022). The design of technology for work systems often proceeds with minimal worker input. Instead, business effectiveness and efficiency dominate the decision-making processes involved in commissioning and designing technology. By temporally expanding sociotechnical systems thinking and applying it to the design phase of technology, new research questions are stimulated. For example, with respect to what tasks people do, and how they do them, we need to better understand the perspectives of different actors in the system. Designers and developers often have a job-replacement mind-set rather than an augmenting mind-set (Bailey and Barley, 2020), so understanding fully the attitudes and orientations of designers, as well as how to shift these mindsets, is needed. Such reasoning also applies to the ‘where and when’ of work. For instance, the design of online platforms in which workers carry out their tasks and are managed through algorithms can significantly reduce the degree of autonomy afforded to workers (Lehdonvirta, 2018). In essence, the technological design might unnecessarily constrain the work design options. Exactly what sorts of alternative design options are feasible, and what it takes to get them into place, needs much more investigation.

As an example, we can see the relevance of an expanded sociotechnical approach in the design of ‘algorithmic triage’ systems in which algorithms, such as AI models, have an in-built confidence estimator. Previous research optimised the AI model’s decision accuracy and then assumed that this would lead to overall better performance (Raghu et al., 2019). But recent research shows that using machine learning to determine which decisions to defer to humans (where the model has low confidence) and then optimising the remaining space (where the model has high confidence) produces higher joint-decision quality (Bansal et al., 2021). This example shows how social factors can be considered in the design and programming of digital technology.
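The deferral logic described above can be illustrated with a minimal sketch. It is not the method of the cited studies, which learn the deferral policy and train the model for the joint human-AI outcome. Here we simply assume a fixed confidence threshold. The names `Case`, `route` and `joint_accuracy`, and all the data values, are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Case:
    model_confidence: float  # model's own confidence estimate, in [0, 1]
    model_correct: bool      # whether the model's decision would be right
    human_correct: bool      # whether the human's decision would be right


def route(case: Case, threshold: float) -> str:
    """Defer to the human when model confidence falls below the threshold."""
    return "model" if case.model_confidence >= threshold else "human"


def joint_accuracy(cases: List[Case], threshold: float) -> float:
    """Share of correct decisions when each case goes to whoever it is routed to."""
    correct = 0
    for case in cases:
        if route(case, threshold) == "model":
            correct += case.model_correct
        else:
            correct += case.human_correct
    return correct / len(cases)


# Made-up cases (an assumption for illustration, not data from any study).
CASES = [
    Case(0.95, True, True),
    Case(0.90, True, False),
    Case(0.85, True, True),
    Case(0.60, False, True),
    Case(0.55, False, True),
    Case(0.40, True, False),
]

if __name__ == "__main__":
    for label, threshold in [("model only", 0.0), ("human only", 1.01), ("triage", 0.7)]:
        print(label, round(joint_accuracy(CASES, threshold), 3))
```

With these made-up cases, the model alone and the human alone each get four of the six decisions right, whereas routing low-confidence cases to the human at a threshold of 0.7 gets five of six right. The point of the sketch is only that joint decision quality depends on how cases are allocated between person and machine, not on model accuracy alone.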

More generally, there is much advocacy around the need for greater technological transparency as a solution to human and work challenges. But, showing the situation is more complex than this, one study highlighted that, even when the risks and uncertainty about AI-assisted decision systems are communicated, users tended to over-rely on and over-trust AI systems (Schömbs et al., 2024). This suggests that transparency alone is not sufficient, and that autonomous systems need to embed human actions to ensure human oversight (Holzinger et al., 2025), along with governance principles (Australian Voluntary AI Safety Standard, 2024). If human oversight is ultimately impossible, then other changes in the work system – such as new approaches to accountability – will need to be designed. For example, Grote et al. (2024) argue that, as machines become more autonomous, and as the line between AI development and use increasingly blurs, it becomes more and more difficult to identify who is in control of the system and, consequently, who is accountable. The authors argue that managing these uncertainties requires decentralised forms of stakeholder governance, with governance issues needing to be addressed across the whole AI life cycle.

The second point is that, increasingly, we need to consider technology design, development, and use as co-evolving and dynamically affecting each other. AI systems ‘learn’ over time, which means technologies will update more frequently with greater capacity to adapt and innovate. Consequently, the line between technology development and use will become increasingly blurred, challenging the traditional separation of research focused on creating AI systems from research focused on investigating the organisational consequences of implementing new systems. There is an increasing mutual dependence of technology and people that blurs the distinction between technology design, development and use in many AI applications.

To illustrate the co-evolution of sociotechnical systems over time, we draw an example from policing in which AI uses historical data to predict where and when certain crimes might be committed. The predictions are then used to shape the allocation of police resources, influencing how these decisions are made and who makes them. In addition, keeping the system up to date means that police officers need to increasingly act as data collectors, with the new data they collect needing to be input into the system. These changes to ‘what and how people work’, and ‘where and when they work’, have significant work design implications that need to be considered alongside technology development. For example, in one case, the algorithm used data to predict where and when crime might happen up to a week in advance, with police managers then using this data to decide where and when to schedule patrol officers, creating an apparent short-term successful outcome because crime was reduced in the areas to which officers were allocated (Waardenburg and Huysman, 2022). But then, a few weeks later, crime rates in those areas surged again, resulting in a re-assignment of police back to the original area. Why did this pendulum effect occur?

According to the in-depth analysis, crime fell after the AI-stimulated police response, and this new data shaped subsequent predictions, suggesting that the area was no longer a hotspot. Fewer officers were then assigned to the area, criminals took advantage, and the cycle began again with a crime spike, a police response, and a change in the data. The police officers were sent ‘from pillar to post’ because developers did not understand how police officers used the algorithmic data in practice, and in turn, the police officers did not understand how their work practices unintentionally influenced the algorithmic data. This is an example of how technology development and use can actively shape each other, affecting work practices and outcomes in unintended ways. Thus, socio-technical processes can only be fully understood if it is recognised that AI systems are co-created, with development and use dependent on each other.
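The pendulum effect can be reproduced in a deliberately simple toy simulation. This is a sketch under strong assumptions, not a model taken from the cited study. A single area is patrolled whenever last week's recorded crime exceeds a threshold, patrols push crime down to a low level, and their absence lets it rebound to a high level. The function name `simulate` and all parameter values are hypothetical.

```python
from typing import List, Tuple


def simulate(
    weeks: int,
    threshold: float = 5.0,  # assumed cut-off above which an area is flagged as a hotspot
    low: float = 2.0,        # assumed crime level in a week with patrols
    high: float = 10.0,      # assumed crime level in a week without patrols
    start: float = 10.0,     # assumed crime level before the system is switched on
) -> Tuple[List[float], List[bool]]:
    """Return the weekly crime levels and whether the area was patrolled each week."""
    crime = [start]
    patrolled: List[bool] = []
    for _ in range(weeks):
        # The prediction relies only on the most recent recorded crime level,
        # which was itself shaped by the previous week's patrol decision.
        hotspot = crime[-1] > threshold
        patrolled.append(hotspot)
        # Assumed dynamics: patrols suppress crime, their absence lets it rebound.
        crime.append(low if hotspot else high)
    return crime, patrolled


if __name__ == "__main__":
    crime, patrolled = simulate(weeks=6)
    for week, (level, patrol) in enumerate(zip(crime[1:], patrolled), start=1):
        print(f"week {week}: patrolled={patrol}, crime={level}")
```

Under these assumptions the patrol decision flips every week, because each prediction is trained on data that the previous deployment has already changed. A design that recorded patrol presence alongside the crime data, or that involved officers in interpreting the predictions, would be one way to dampen the loop, which is the kind of joint design question that CeSTS raises.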

A third temporal issue is that not only do technology and its use change over time, but people change too. CeSTS defines the continuous adaption of people and technology as a central feature of any sociotechnical system. While much attention is currently given to the way technical applications can learn, we argue that human learning and adaption have equal importance in the evolving system.

A time perspective is needed to understand and manage when and how adaptions and innovations are mutually determined by humans and technology. On the one hand, new skill sets are likely to be required when work changes as a result of technology, such as in the policing example which showed that, among other changes, police officers needed to develop an ability to understand the limitations and strengths of algorithm-generated data. Yet at the same time, the work practices and technology can interact in ways that stifle or inhibit the acquisition of these skills. As an example, recent advances in Human-Robot Interaction (HRI) have explored the teleoperation of robots, allowing human operators to remotely control robotic systems for tasks requiring dexterity, adaptability, and real-time decision-making (Pan et al., 2024). By integrating human cognitive strengths with robotic precision, remote manipulation and navigation have been enhanced, particularly in environments in which direct human intervention is impractical or hazardous (Pan et al., 2024). Beyond teleoperation, research has increasingly focused on improving robots as collaborative partners through models that support joint action and common ground between humans and robots (Zhang et al., 2025). Developments in intent recognition, adaptive behaviours, and shared task execution enable robots to anticipate and respond dynamically to human actions, fostering more fluid and cooperative interactions (Tabatabaei et al., 2025). These advancements contribute to the creation of more natural and autonomous robotic systems, ultimately paving the way for AI-driven agents that have more potential to seamlessly integrate into work domains. Again, however, worker engagement in the design and deployment of such systems will be essential. 
Some people, for instance, are unsettled by humanistic robots and, at the very least, care should be given to the way in which the artificial status of these agents is clearly signalled and managed.

In sum, CeSTS defines the continuous adaption of people and technology as a central feature of any sociotechnical system. We advocate a need to investigate how to extend sociotechnical systems principles earlier in the process, including: how to make them part of the initial commissioning and design phases; how to better understand technology design, development and use as mutually co-evolving; and how to prioritise and recognise not only machine learning but also human learning and adaption in the work system. In a rare study that explicitly focuses on this sort of co-evolution over time, Grønsund and Aanestad (2020) investigated how a ship brokering company introduced algorithmic support for analysing geospatial satellite data. As an intermediary between ship owners/charterers and cargo owners, the company required up-to-date analysis on maritime activities to predict ship behaviour and trade flows. An algorithmic system was used that drew on data from an Automatic Identification System that provided information to the sector about the ships’ identity, location, and cargo, as well as other data sets, to provide timely and accurate information beyond that available to customers and competitors. The authors described how, over time, new configurations of work designs emerged. To begin with, a data scientist mostly worked on auditing and improving the quality of the data being used in the system, adjusting the system in light of data quality issues. Over time, the focus shifted towards evaluating the output of the algorithmic system, which required discussions with multiple domain experts working as a team with the data scientist and researcher. After a while, the work design and skills evolved again, as the team started to compare the algorithmic outputs with those produced manually. 
Two years later, the algorithm reached a level of accuracy that was the same or better than that of the researcher, leading to efforts to expand the system to analyse additional aspects. Achieving success in these shifting work configurations, each with new tasks and requiring new skills, depended on a culture of openness and a willingness to experiment. The human in the loop principle remained core, although not so much for the worker to ‘control’ the technology, but because human-generated data was essential in providing a ‘ground truth’ against which to audit the algorithmic output, and because workers in the system had to frequently alter the algorithm in the light of this auditing. The ‘technical’ design of the new system was thus emergent, deeply entangled with use, and fully dependent on effective ‘social’ processes, such as creating an appropriate work design configuration as well as continual learning and adaptation over time, all within a culture that supported experimentation.

4.2. Co-evolution across levels

Traditionally, sociotechnical systems theory has focused on the work system or organisational level of analysis, investigating how aspects such as task allocation, leadership, and culture need to be considered alongside technology. We argue that the team and organisational level of analysis continues to be vital into the future. For example, there is strong evidence that, when organisations possess a strong ‘psychosocial safety climate’ in which senior management genuinely prioritises worker psychological health and provides support and commitment to stress prevention, there are flow-on consequences for quality work design (Dollard and Bakker, 2010). Since psychosocial safety climate taps into management mind sets and values, knowing about it lets us significantly predict how future work will be designed. For instance, research shows that psychosocial safety climate predicts future manageable job demands, high control and high learning possibilities (Dollard et al., 2019), which in turn flow through to productivity outcomes such as lowered absenteeism, presenteeism, and compensation costs (Dollard et al., 2024) as well as worker qualities required in a technology-rich context, such as creativity and engagement (Zadow et al., 2023). Understanding management mind sets with respect to human needs versus profit thinking in relation to future AI-integrated work conditions is likely to be fundamental to knowing how well the implementation of new technologies will fare in meeting a human-centred approach (Dollard and Bailey, 2021), as well as in helping to generate organisations’ innovation capacity and hence their readiness for Industry 5.0.

Other organisational-level factors may also play a role in effective sociotechnical processes, such as an organisation’s size. Smaller companies may especially benefit from the potential of AI, yet there is some evidence that they often lack financial resources and access to skilled personnel, and can feel overwhelmed by the perceived complexities of implementing the technologies (Dey et al., 2024). On the other hand, there may be advantages to being small in some cases, such as a greater ability to be agile, which has been identified as crucial for effective AI implementation (Tominc et al., 2023).

However, despite the criticality of addressing sociotechnical concerns at the team and organisational level, we further propose that consideration of lower and higher levels of analysis is also crucial, both from a research perspective and to bring about real change. Thus, in CeSTS, our second point is that we advocate for equal consideration of factors at multiple levels of analysis including: technology design as discussed above; the level of individual workers (such as how individuals’ cognition, physiology, personality, and background affect their responses to new technologies); work teams (such as how team members share knowledge and learn, the trust between workers, or the impact of algorithmic management on team processes); organisations (such as how psychosocial safety climate, finance, size, and other organisational or technical systems affect technology implementation); and societal systems (for example, the role of regulations, industry and occupational factors, union density, labour markets, and advances in technology).

To illustrate, let us re-visit the policing example above. On the individual side, we see key implications for individual front line police officers, tactical police leaders directing local operations, HR decision makers planning future workforce needs, as well as all those involved in technical procurement and implementation. All these groups of individuals will need to be exposed to new task requirements and will likely need new skills to be effective. At the other end of the spectrum, it is important to consider more macro levels such as government policies that shape the use of policing technology as well as broader education systems to ensure appropriate skills. One can go further to highlight the need to consider the larger societal issues that might arise from systems like predictive policing, such as the possibility for algorithms to perpetuate bias towards already vulnerable communities, or that predictive modes of policing might reinforce punitive rather than more community-building perspectives of policing (Asaro, 2019).

Sociotechnical processes will be shaped by other features of the macro-level context, such as the industry within which the organisation sits. While industry does not play a deterministic role, factors like occupations and skills vary by industry, with research showing that these factors shape how technology and work design intertwine. For example, we already know that occupation-level factors affect work design through influencing the sorts of tasks that people carry out, through the values typically held by people attracted to these occupations, and through occupational norms (Dierdorff and Morgeson, 2013; Parker et al., 2017b). As an example, in the health sector, medical professions often have rather strong demarcations around which tasks can be carried out by doctors versus nurses, which can significantly affect work design choices, and interact with technological impacts in various ways. Sutcliffe et al. (2012) showed that doctors’ tasks of supporting childbirth required more technological support relative to midwives because the latter were not allowed to engage in certain medical procedures. In this case, technology interacted with occupational demarcations to influence the work of the different professionals in distinct ways.

In a similar vein, scholars have theorised more positive effects of information technologies for workers in high-skill jobs versus low-skill jobs, and more positive effects for work that is more complex relative to more routine work (Autor et al., 2003). In the case of the former, referred to as ‘skill-biased technical change’, low-skill employees may be in less demand in the labour market, giving these workers less power to negotiate better quality work. In the latter case, ‘routine-biased technical change’, technology is more able to substitute for and replace routine tasks, reducing the demand for workers whose jobs involve mostly routine work, and thus reducing their power to protect work quality. In contrast, it is argued that technology tends to complement and augment more complex and high-skill work, both requiring better work designs to realise the benefits and increasing worker bargaining power to achieve better work designs. In fact, the evidence for skill-biased or routine-biased change is mixed and complex, with some studies showing negative effects of technology on work design even in high-skill jobs or for complex work (for a review, see Parker et al., 2017b). These sorts of theories have yet to be fully examined with respect to AI, but they do alert us to the importance of considering macro-forces beyond the team and organisation level, such as the dominant occupation or the sorts of skills required in a particular sector. We might predict, on average, more issues of poor work design arising from AI in contexts like call centres and production departments relative to, say, health and law in which skill levels tend to be higher and there is less routine work. There is a caveat to this prediction, however: organisational and individual factors could reverse these generic patterns.

One especially powerful reason for considering sociotechnical aspects at multiple levels of analysis is that, to date, sociotechnical approaches have not achieved hoped-for outcomes at scale. Scholars have recognised that, on average, existing approaches have failed to embed the ideas into wider power structures and knowledge systems, such as into educational practices, union and worker agendas, and legislation (Guest et al., 2022). A focus on macro-level issues that shape power dynamics is especially important in the field of technology because key corporate stakeholders, such as CEOs or IT department leads, tend to align with a technocentric agenda in which the emphasis is on efficiency and replacing human labour (Bailey and Barley, 2020). In other words, cost-cutting business interests tend to drive technology development and application among large corporates, with the impact of technologies on workers’ safety, mental health, and inclusion being of much less concern. The need to counter these macro trends is gradually being acknowledged. An illustrative example in Australia is the recent set of changes to law that have strengthened the legal requirements to eliminate and control psychosocial risks (mental health risks for workers), which include the psychosocial risks that might arise from new technologies, such as new forms of worker surveillance and control (Safe Work Australia, 2024). Hopefully, these laws will be utilised as a lever to centre corporate decisions around human needs. Legislation to protect workers is an especially relevant topic in contexts like gig work, in which precarious workers are subjected to algorithmic control and other negative work conditions. Indeed, currently, there are multiple legal battles around the world concerning whether gig workers are classified as ‘employees’ or ‘independent contractors’, with this decision directly shaping access to workers’ legal rights.

Nevertheless, and returning to our point that multiple levels of analysis must be investigated and considered, policy change alone has been shown to be insufficient to re-orient away from a technocentric perspective. For example, regulation to prevent and address work stress has been slow and reactive; it is also hard to audit and has required complementary changes led by organisations to be effective (Leka et al., 2015). In other words, while interventions to stimulate change at the macro policy level have an important role to play, effective change requires intervention at the organisational, team and individual levels as well.

Beyond the need to consider multiple levels of analysis, CeSTS draws attention to the way that levels interact across the lifespan of change, with different time frames and opportunities for action at each level. To develop models of co-evolution across levels it is important to identify key features at each level that might respond to or shape the evolving system. For example, AI may lead to skill degradation over time for individuals who are less engaged or proactive, but for those who demonstrate higher levels of these constructs, AI may stimulate curiosity, learning, and the acquisition of new skills. A recent study (Lee et al., 2025) showed that when people use generative AI systems to improve their efficiency, people with high confidence in the AI system engage less in critical thinking, while those with high confidence in their own abilities engage more in critical thinking. From this, we can hypothesise that more experienced workers will suffer less from the erosion of their own expertise, while newer workers may fail to develop such expertise, unless the design of these systems explicitly mitigates this and/or individuals are trained to use the tools appropriately. In another study, AI was used to improve productivity in a US lab, increasing material discoveries by 44% and patent filings by 39% (Toner-Rodgers, 2024: 1). However, the benefits were unevenly distributed. Top-performing scientists gained more while others experienced decreased job satisfaction due to the automation of creative aspects of their work. These studies show how the temporal impacts of AI on skills depend on individual factors, in interaction with the sorts of tasks that AI is being used to carry out.

Skill considerations, in turn, shape and are shaped by macro-level education systems; for example, university and vocational courses may need to be adjusted, in both content and style. There remains a significant disconnect between education and work, making transitions challenging. Strengthening connectivity between these contexts is essential to ensure that skills development aligns with evolving workplace needs (Kyndt et al., 2021). At the same time, at the team and organisational level, the work design practices of organisations will also matter. Well-designed, stimulating and autonomous work promotes learning and development, and might even enable the more flexible and innovative use of technology through fostering proactive worker behaviours such as job crafting (Parker et al., 2021).

From a design perspective, Learning from Demonstration (LfD) serves as an example of a method that enables workers to utilise robot technology in a flexible and intuitive way, potentially allowing them to retain control over automation while adapting it to their specific needs (Bilal et al., 2024). By enabling workers to teach robots tasks through demonstration rather than programming, LfD ensures that technology aligns with existing workflows rather than imposing rigid structures. This supports the sociotechnical principle that ways of working should be minimally specified, preserving worker autonomy in managing everyday challenges and empowering workers to master new tools such as AI and robotics without the cumbersome need to learn complex programming. Just as most office workers use computers daily without needing to understand operating systems or write code, there is a growing need to develop systems that enable end-users to creatively and effectively harness emerging technologies without requiring specialised technical knowledge. By designing tools that prioritise usability and adaptability, we can ensure that automation serves to enhance, rather than constrain, human work.

Third, we need to understand how these levels of influence evolve across space and place. With accelerating change, driven by digital technologies and externalities like climate change, CeSTS processes must adapt and transform at a more rapid pace than ever before. The issue with adaption and transformation is that they tend to have fewer negative repercussions for the elite core, while those on the periphery tend to be left behind. This can be seen, for example, in rural Indigenous communities where digital, and indeed even basic, literacy has been falling behind at a rapid rate (Featherstone et al., 2024). This has important implications for diversity, closing the gap and the evolution of our society as we become increasingly digital. As new digital technologies evolve, they might be more likely to benefit those in cities, destroy jobs that would once have been filled by lower-skilled staff, or lock certain groups out of participation. We know that regions can differ significantly in their technical and commercial efficiency and that spatial agglomeration effects, dynamism and crowding out from digital innovations impact regions, but what is less clear is how these factors impact jobs and the workforce (Pham et al., 2024). By better understanding the interactions among the various levels as they unfold over time and across space, we will be in the best position to achieve genuine sociotechnical system change.

In sum, CeSTS involves the need to recognise multiple levels of influence on sociotechnical processes, and to consider how these influences dynamically interact and shift over time and space. Again, while there is overall little published research that focuses in depth on such processes, some studies provide examples. For instance, an analysis of the implementation of an AI-based system to analyse documents in the Danish Business Authority identified how macro factors shaped technical and social factors at other levels. As the authors noted (Asatiani et al., 2021: 344), at the same time as facing pressure to be efficient, because they were a government body, the authority had to remain a ‘transparent and trustworthy actor in the eyes of the public’. Consequently, transparency in decision-making was essential to be sure that systematic biases or unfair decisions were not being made, even if this meant ultimately lower efficiency. A process of ‘envelopment’ was therefore implemented in which clear boundaries were set around AI inputs and outputs. These boundaries were sometimes technical, such as avoiding AI models that learn on the fly, and sometimes ‘social’, such as allowing workers to overrule the model’s recommendations if needed. Thus, the macro-level element of being in the public sector created the need to manage, via sociotechnical processes, the trade-off between low explainability and high performance caused by using inscrutable methods.

5. Expanded methods and approaches

Above we have advocated expanding the sociotechnical approach over both time and levels of analysis in an approach we call CeSTS. To pursue this agenda, we argue that management research will benefit from interdisciplinary collaboration that engages multiple stakeholders and adopts innovative research methods that illuminate multilevel and longitudinal processes.

5.1. Interdisciplinary collaboration

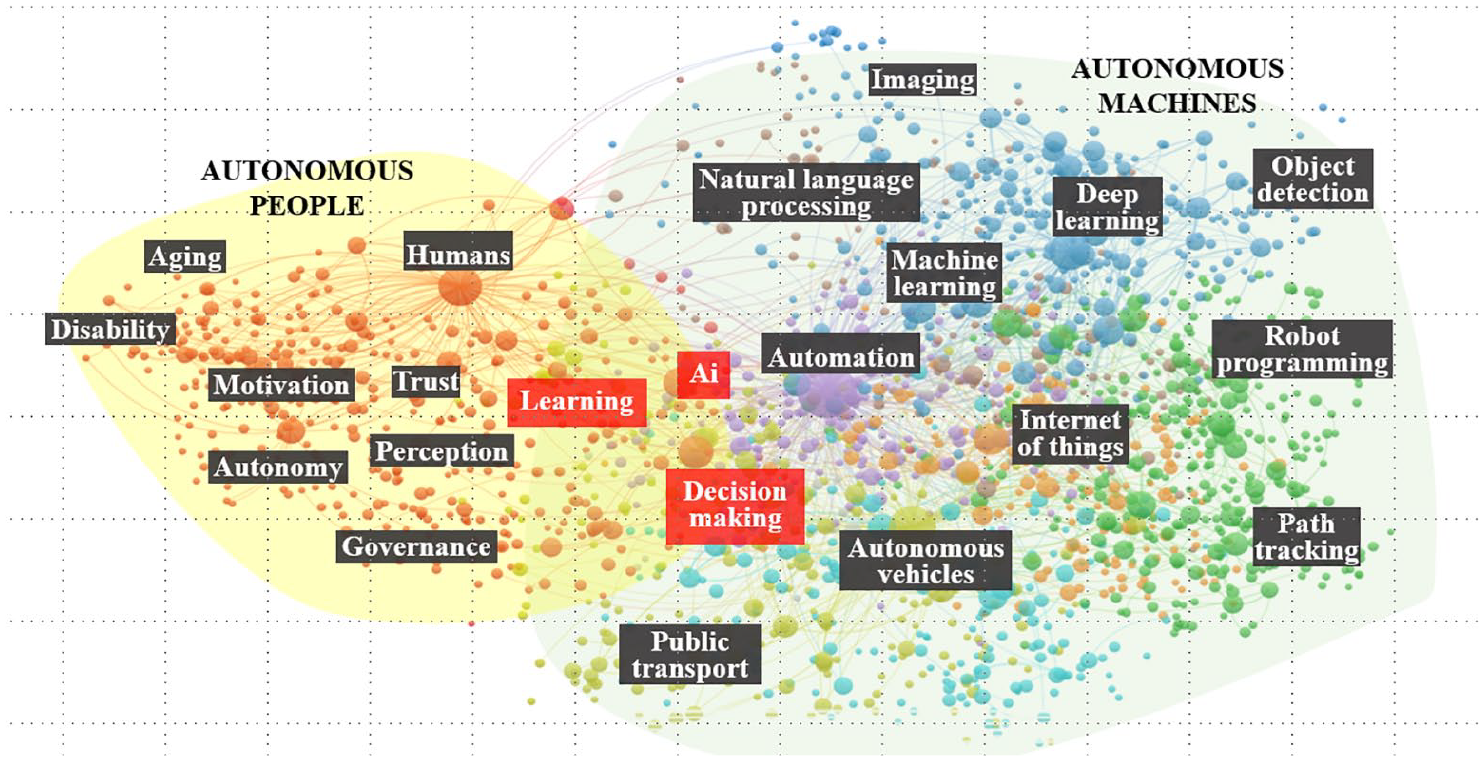

Achieving quality human work in a digital world can be seen as a ‘meta-problem’ that transcends disciplinary boundaries (Dalton et al., 2021). However, to date, research on ‘technical’ topics by scholars in information science, engineering, computer science, and related disciplines mostly proceeds in isolation from research on ‘social’ topics by scholars in disciplines such as psychology, law, design thinking, labour economics, education, and human factors. As an illustration, Figure 2 shows a scientific map of research on human autonomy and research on machine autonomy. As this figure depicts, these fields of research rarely intersect, yet by definition these elements should be considered simultaneously: if a machine is fully autonomous, it constrains the level of human autonomy, and vice versa.

Figure 2. Scientific map of strength of association among key words in published abstracts based on VOSviewer Analysis (2019-2024).

For the progression of sociotechnical knowledge, we argue that an interdisciplinary research approach is needed in which researchers from social and technical disciplines work together ‘communally, on a pre-defined challenge’, integrating the diverse perspectives into a research outcome (Alvargonzalez, 2011). We nevertheless acknowledge that achieving such interdisciplinary collaboration is not easy because the resulting research teams would ‘span the current division of labor in most universities’ (Bailey and Barley, 2020). Moreover, as has been discussed elsewhere (e.g. Bates et al., 2023), individuals from different disciplines come with diverse languages, norms, goals, and perspectives, such as different views about what constitutes ‘research’, so new sorts of collaborative structures are likely to be needed to foster genuine interdisciplinary working. Crucial to success will be teams in which researchers have an overarching collaborative purpose; we argue this is addressing the meta-problem of how to create healthy, inclusive and productive human work in a digital age. And this purpose also needs to be balanced against sufficient local autonomy to pursue innovative agendas (Kaufmann et al., 2020). Researchers have identified other drivers of interdisciplinary collaboration that need to be embedded into the design and running of interdisciplinary research teams, such as the importance of prioritising time to develop shared understanding and trust, team-centric leadership (e.g. Kozlowski et al., 2016), and activities to highlight a shared goal (Specht and Crowston, 2022). Intriguingly, since technologies like computer-supported collaborative software can help complex systems to coordinate effectively, the research team can itself be seen as a sociotechnical work system.

5.2. Innovative methods that are dynamic and able to capture sociotechnical complexity

At the individual level, there are innovative tools that can be used to gain a deeper understanding of human behaviour in the workplace. In particular, there are a range of different methods for capturing neuro-physiological signals and audio/visual data that can provide an indication of emotional state or cognitive load. The DEAP data set (Koelstra et al., 2011) provides an example of how electroencephalogram (EEG) and physiological signals (heart rate, galvanic skin response) can be used to reliably measure emotions in response to visual stimuli. In this case, the data was recorded in a laboratory setting, but other researchers have recorded physical cues in a more natural setting such as in conversations between pairs of people (Saffaryazdi et al., 2022). More recently, researchers have begun to explore how to measure valence, arousal and cognitive load in a remote work setting (Sarkar et al., 2023), although just using visual and audio cues.

As an example, remote work provides an ideal scenario for using AI to identify the emotional state and cognitive load of meeting participants. The audio and visual cues from the video conferencing link can be captured and processed by machine learning techniques to measure emotional state. Emotional state data can be combined with richer physiological signals from body-worn devices such as smart watches or EEG headbands. This can be done in an unobtrusive manner and used to provide enhanced awareness of the state of people in the meeting. AR and VR displays with a wide range of embedded physiological sensors are also now becoming available (e.g. OpenBCI Galea display, https://galea.co/), making it easy to capture data. In this case, sensors are embedded in the faceplate of the displays to reliably capture data from skin contact. Today, AI tools are commonly used to provide transcriptions of video conferencing sessions. In the future, subject to appropriate treatment of privacy issues, AI tools will be able to identify emotional and cognitive states, which may ultimately even play a role in providing AI-facilitated group meetings.

Research on AI and other digital technology in the workplace is booming. But even so, there remain relatively few in-depth studies of what happens to work roles and worker experiences over time. At the work system level, there is a particular need for in-depth applied field studies that closely observe and examine longitudinally the complex interdependencies among work design, technology, individuals, leaders, and external regulatory factors; it will also be critical to track how these aspects shift dynamically over time. An example of one such study is that by Maiers (2017), who showed, through in-depth interviews and observations over time, how medics expected to use algorithms to detect infants’ infections in a neonatal intensive care unit were often sceptical about the output of the algorithms, and ultimately used the outputs in highly contextualised ways, sometimes discounting the information altogether. These rich, often qualitative, analyses provide important insights into how digital changes are experienced by workers over time, and into the multi-level forces that shape these impacts.

Another methodological strategy, also at the level of the work system, involves creating sophisticated analytical tools that faithfully represent how complex work systems play out over time. For example, computational models can be developed that operationalise specific assumptions about the dynamic process by which these factors interact and coevolve over time (Ballard et al., 2021). These models can be used to develop more rigorous theory that can forecast the future impact of new technologies. Another theoretical and methodological tool to represent complex work systems is social network analysis. This tool measures multiple stakeholder engagement across organisations (Lomi et al., 2014), including components of work design such as knowledge transfer (Brennecke et al., 2025). Social network analysis maps relationships as a network of connections, allowing them to be visualised as the interplay of formal relations (i.e. reporting lines) and informal relations (i.e. networks) (Lomi et al., 2014), and it also offers frameworks for intervening in networks (Robins et al., 2023). For example, a new technical intervention may alter social relationships, and the resulting visual map can reveal potential threats to knowledge flows. Social network analysis specifically accounts for the interdependencies of relationships and is extremely well-placed to examine the sociotechnical complexity required for innovation.
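To make the idea of a technical intervention threatening knowledge flows more concrete, the following is a minimal sketch in Python rather than a method drawn from the cited studies. It assumes a small, entirely hypothetical set of formal and informal ties, and it flags any informal tie whose loss would cut people off from colleagues they could previously reach. The role names, the ties, and the function names are illustrative assumptions.

```python
from collections import deque

# Hypothetical ties between staff; roles and ties are illustrative only.
FORMAL = {("manager", "nurse_a"), ("manager", "nurse_b"), ("it_lead", "analyst")}
INFORMAL = {("nurse_a", "analyst"), ("nurse_a", "nurse_b")}


def build_graph(*tie_sets):
    """Combine undirected tie sets into an adjacency map."""
    graph = {}
    for ties in tie_sets:
        for a, b in ties:
            graph.setdefault(a, set()).add(b)
            graph.setdefault(b, set()).add(a)
    return graph


def reachable(graph, start):
    """Return everyone reachable from `start` through any chain of ties."""
    seen = {start}
    queue = deque([start])
    while queue:
        person = queue.popleft()
        for neighbour in graph.get(person, ()):
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append(neighbour)
    return seen


def bridging_ties(formal, informal):
    """List informal ties whose removal shrinks the reach of one endpoint."""
    baseline = build_graph(formal, informal)
    bridges = []
    for tie in sorted(informal):
        reduced = build_graph(formal, informal - {tie})
        if len(reachable(reduced, tie[0])) < len(reachable(baseline, tie[0])):
            bridges.append(tie)
    return bridges


print(bridging_ties(FORMAL, INFORMAL))
```

In this toy network, the only link between the clinical and technical staff is an informal one, so an intervention that disrupts it (for instance, by routing requests through a ticketing system) would be flagged as a risk to knowledge flow. Real analyses would use richer data and statistical network models rather than simple reachability.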

We also need better and more accessible panel data that track workers on multiple measures across time and space. Many countries are starting to move towards linked employer-employee microdata, but these valuable data assets need to be made more widely available, with fewer restrictions, so that researchers can pose difficult questions and develop novel theories. Such panel data sets lend themselves to natural experiments, in which policy-level interventions, and even organisational-level interventions, can be tested causally. In addition to thinking about causality, investigation of the longer-term outcomes of work redesign is needed, using sophisticated models such as growth modelling (Collins et al., 2016). For example, do work redesign interventions turn negative outcomes into positive ones? And are the benefits sustained over time? (Collins and Gibson, in press). Furthermore, a move towards more open access frameworks for linked employer-employee data sets, in which the data sets can be extended with novel sources such as scraped job description data, social media posts or government strategies, would offer original insights and a valuable resource for future research and policy guidance.
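As a rough illustration of the question of whether benefits are sustained over time, the sketch below estimates a separate linear trajectory for each worker and then compares average slopes between groups. This is a deliberately simplified two-stage approximation, not the growth modelling used in the cited work, which would typically rely on multilevel or latent growth models. All scores, worker identifiers, and function names are hypothetical.

```python
from statistics import mean

# Hypothetical well-being scores at four survey waves (wave 0 = baseline).
PANEL = {
    "redesign": {"w1": [2.0, 2.5, 3.0, 3.5], "w2": [3.0, 3.0, 3.5, 4.0]},
    "comparison": {"w3": [3.0, 3.0, 3.0, 3.0], "w4": [2.5, 2.5, 3.0, 2.5]},
}


def ols_slope(scores):
    """Least-squares slope of one worker's scores against wave number."""
    waves = range(len(scores))
    wave_mean, score_mean = mean(waves), mean(scores)
    numerator = sum((w - wave_mean) * (s - score_mean) for w, s in zip(waves, scores))
    denominator = sum((w - wave_mean) ** 2 for w in waves)
    return numerator / denominator


def mean_group_slope(workers):
    """Average per-worker slope within one group."""
    return mean(ols_slope(scores) for scores in workers.values())


def slope_gap(panel):
    """Difference in average change per wave: redesign minus comparison."""
    return mean_group_slope(panel["redesign"]) - mean_group_slope(panel["comparison"])


print(round(slope_gap(PANEL), 3))
```

A positive gap would suggest that workers in redesigned roles improve faster per wave than the comparison group. Judging whether the benefit is sustained would additionally require more waves, non-linear terms, and proper uncertainty estimates.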

Altogether, there are opportunities to use new methods, and existing methods in new ways, to investigate sociotechnical systems processes. It is important to note that, with some of these methods, new ethical concerns may arise that need to be addressed. For instance, when scraping data from social media, or when measuring EEG and other such physiological data, it remains important to consider privacy issues and to incorporate the voices of workers rather than treating them purely as subjects or objects of surveillance. Certainly, workers have expressed concerns about the surveillance potential of these kinds of technologies, which appear to peer into their innermost thoughts (Future of Work Report, 2024). ‘Privacy by design’ should remain a guiding principle of any deployment of AI, even that which may purport to promote safety or well-being, or generate the data to investigate these issues. Worker engagement in the operation, scope and safeguards of these kinds of ‘emotional AI’ is critical to social licence and, in some contexts, to legal compliance.

5.3. A multi-stakeholder approach

Matching the above conceptual and methodological advances, there is a need for research to be conducted in close collaboration with designers, managers, educators, policymakers, regulators and other important work stakeholders.

First, such collaboration is often essential for research to be appropriately adapted to different contexts. Collaboration among diverse stakeholders enables a thorough exploration of the problem space, allowing for a co-evolutionary process to support desirable future work scenarios (Bailey and Barley, 2020; Criado-Perez et al., 2022). International thinktanks, such as the United Nations’ AI for Good, aim to address grand challenges; these are of course critical. Yet breaking these grand challenges into actionable sub-challenges at national and sectorial levels facilitates localised, context-specific problem-solving (Brammer et al., 2019). Focusing on the local level, such as how national challenges are tackled within a specific work system, enables researchers to ‘be as close to the challenges as possible’ (Boon and Edler, 2018: 445). Engaging with system-level constraints and enablers – such as economic and legal factors – ensures that solutions are not only theoretically, but also practically, implementable.

Second, engagement of stakeholders can enable new modes of research and learning, which speeds up application. For example, a key method for bridging the gap between academic research and industry practice is the Research Innovation Sprint, a structured, high-intensity collaboration designed to solve complex problems in just 30 days (Tate et al., 2018; Kowalkiewicz et al., 2023). Unlike traditional research models that can take years to yield actionable insights, research sprints bring together multidisciplinary academics, industry partners, policymakers, and end-users in a compressed timeframe. This approach fosters rapid experimentation, co-creation, and the immediate application of research findings to real-world challenges. By embedding researchers directly into industry settings, sprints help to ensure that technological advancements align with human-centred work design, rather than being imposed without consideration of social and organisational contexts. This structured yet agile approach exemplifies how academia can work collaboratively with stakeholders to shape not only the research agenda but also its execution and long-term impact.

Third, beyond generating immediate outcomes, engaging multiple stakeholders through research sprints and other participatory methods helps disseminate findings, drive policy changes, and establish frameworks for innovation that has industry impact. One reason sociotechnical approaches have often struggled to create lasting change is their tendency to remain within single organisations without influencing broader policy and governance structures. By embedding research within an iterative, real-world problem-solving context, we can accelerate the adoption of new work design principles, while fostering the conditions for long-term, system-wide transformation. One example of an approach that successfully integrates multiple stakeholders to drive systemic change is the Thrive at Work framework, which emphasises the co-evolution of work design, capability development, and policy to enable long-term, sustainable improvements in work quality (Parker et al., 2021). This framework translates sociotechnical principles into practice by embedding evidence-based work design strategies into organisational policy, ensuring that work design initiatives align with broader workforce and policy objectives.

To embed sociotechnical principles at multiple levels, Thrive at Work employs structured methodologies that support both within-organisation alignment and cross-sector implementation (Parker and Jorritsma, 2021). These include capability-building interventions, such as leadership development programmes, multi-stakeholder collaboration tools, and organisational benchmarking, which provide structured mechanisms for embedding work design improvements. In addition, participatory frameworks, including industry-wide consultations, policy development partnerships, and knowledge-sharing consortia, enable diverse stakeholders – workforce representatives, regulators, industry groups, and researchers – to coalesce around shared objectives and align work design strategies across the system. Through these mechanisms, Thrive at Work ensures that sociotechnical principles are not only embedded within individual workplaces but also systematically integrated into broader workforce and policy ecosystems, fostering scalable and sustainable change.

As another example, it is important to think about capability development, such as by increasing knowledge about work design among technical experts, among professionals in areas such as law and health and safety, and among managers and leaders. Currently, work design is neglected as a topic in much training and development of these professionals. Training typically focuses on technical skills required to execute tasks or resolve imminent problems. Managers, management consultants, information systems graduates, engineers, operations managers, and workers typically receive little education on this topic, or indeed, the wider topic of human-centred approaches. However, as workplace learning becomes increasingly important alongside formal training to keep up with changing skills requirements, understanding work design in relation to learning and technology will become crucial. As the challenges mount around how to design work in ways that allow AI to augment human performance, these stakeholders will benefit from a deeper understanding of the topic.

By embedding sociotechnical principles into structured, multi-stakeholder interventions and capability-building efforts, research can move beyond isolated case studies and drive systemic, sustainable change in policy, education, and practice.

6. Conclusion

We contend that we are at a critical juncture for intervening to create quality human work. Industry and government are rushing to implement new technology without the evidence, care or safeguards needed to protect workers, or even to achieve the promised productivity and efficiency gains from the various technologies. There is a need to ‘redouble efforts to drive more humane and stimulating workplaces that benefit society more broadly’ (Collins et al., 2020). We hope that our Co-evolving Sociotechnical Systems perspective, and our recommendations for an approach to realise this perspective, help scholars to move forward on this crucial agenda.



Footnotes

Acknowledgements

We thank the Australian Research Council for its investment in Laureates, Future Fellowships, Linkages, and Discovery grants held by the authors over the past decade, as well as related Industry Training Transformation Centres (e.g., IC220100030).

Final transcript accepted on 15 March 2025 by Miles Yang (Deputy Editor).

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.