Abstract

The pupillary light response is a sensitive biomarker commonly used for neuromonitoring in intensive care units worldwide. Automated pupillometers have demonstrated increased inter-rater reliability compared with manual assessments by clinicians, allowing for objective repeated assessment. The purpose of this study was to compare directly the raw values and reproducibility of results from a novel automated pupillometer smartphone application, MindMirror, against an existing validated pupillometer, the Neuroptics NPi-200. This prospective cohort study compared pupillary light response values from three consecutive readings from the MindMirror application and the Neuroptics NPi-200 pupillometer in 66 well subjects. Initial diameter values for NPi-200 and MindMirror did not demonstrate a clinically significant mean error (−0.37 mm ± 0.51 mm) and showed comparable inter-sample repeatability. Differences in other parameters, suggestive of reduced constriction amplitude and velocity, were likely to be related to the clinical conditions of collection. The data suggested that the novel MindMirror smartphone application provided accurate results that were predictable and comparable to the gold standard device for automated pupillometry, and may therefore have utility as a portable accessible device for the rapid assessment of pupillary light response, particularly outside the intensive care unit.

Keywords

Introduction

The pupillary light response (PLR) refers to the stereotyped pupillary constriction and reflex dilation in response to a sudden increase in perceived light, a reflex mediated by autonomic signals.1 The PLR is an accessible and reproducible clinical examination sign, which has made it a crucial part of bedside neurological assessments.2–4 Demonstration of an abnormal PLR is an independent predictor of adverse neurological outcomes in patients with a variety of common neurological insults, such as traumatic brain injury,4–6 cardiac arrest,4,7 hemispheric cerebral infarction,8 and subarachnoid haemorrhage.4,5 In intensive care units (ICUs), where consistent objective assessment of these responses across time, and between clinicians, is paramount for reliable and expedient decision making, there is an increasing reliance on automated pupillometer devices, because of their demonstrable superiority in reproducibility and inter-observer agreement compared with manual torch assessments by clinicians.9–11

The Neuroptics NPi-200 automated pupillometer is a handheld optical scanner that uses an infrared camera to assess the PLR after exposure to an LED flash.9 The three generations in clinical practice (NPi-100, NPi-200 and NPi-300) have demonstrated improved measurement accuracy compared with manual assessments for both well subjects and ICU patients, with high levels of measurement agreement between different devices and models.12–15 Scanning each pupil rapidly generates seven measured variables to assess the PLR: maximum diameter (MaxD), minimum diameter (MinD), percentage diameter change (%Change), latency (Lat), mean constriction velocity (MeanV), maximum constriction velocity (MaxV), and dilation velocity (DilatV).9 The device compiles these values using a proprietary formula to generate the neurological pupil index (NPi), which gauges pupil reactivity through a dimensionless value between 0 and 5 (where >3 is considered normal) that controls for common confounding factors such as initial pupil diameter, conscious state, and opiate effect.9,16–19 A 2019 systematic review identified six studies which reported higher inter-user agreement and measurement precision for the NPi-200 pupillometer compared with clinician assessment, although all studies were non-randomised, did not control for the light stimulus between clinician operators, and were deemed ‘low’ quality evidence by the assessors.3 While accurate, the NPi-200 devices pose several barriers to use, such as a high purchasing cost and the requirement for single-patient-use ‘Smartguard’ attachment consumables,19 which limit the capacity to scale up their use in high turnover environments, such as the emergency department and the operating theatre recovery room. Furthermore, the proximity and intense light stimulus can be stimulating and poorly tolerated by clinical patients who, if they have altered mental status, may not comprehend the instructions necessary to participate consistently in assessment.4,9,20

The MindMirror smartphone application is a novel automated pupillometer that adapts a standard smartphone camera to generate pupillary measurements. Previous groups have attempted to use smartphone applications to improve accessibility to automated pupillometry in clinical and outpatient settings. However, these have been subject to significant practical limitations, such as poor reproducibility,21,22 a requirement for additional equipment to operate which limits ease of applicability,23–25 high rates of failed readings,21,26 and reduced measurement accuracy when held away from the face, making for a bright and uncomfortable stimulus.22–24 The MindMirror application builds on the technology of one such iteration, Pupilscreen, 23 with several pertinent enhancements that improve clinical relevance and ease of use. The application employs an artificial intelligence (AI) facial recognition programme to identify pupils from within the facial mesh on a portrait-orientation camera screen, which allows measurements to be obtained from a distance further from the face compared with similar pupillometers, and records binocular responses in a 10-second video recording with a single camera flash at 1 s. The application also has options to assess subjects with two repeated flashes, which may increase the sensitivity to detect abnormal pupillary responses through inducing fatigue, although the results obtained in this mode are not assessed in this study. The operator ensures that measured pupils occupy sufficient camera pixels for an accurate sample, by fitting the subject’s face into a grid imposed on the camera screen, which makes setting the appropriate distance for measurements easy. 
Another unique aspect of the MindMirror application is that collected videos are uploaded to a secure cloud, where an AI algorithm interprets the identified pixels of the pupil as pupillary diameter measurements over time, accounting for important confounders such as distance, yaw, blinking and tremor, and thus calculates clinically relevant PLR measurements from the recordings. The AI algorithm has also been used to apply a multivariate analysis that interprets PLR videos using adaptive machine learning on a novel pupillary score which can designate normal versus abnormal PLR responses; this is unique compared with previous mobile application iterations. The application of the algorithm to interpret PLR curves was not assessed in this study, which instead directly compares the measurement of PLR variables against a gold standard pupillometer before future studies can assess interpretation in a clinical setting. The use of AI interpretation on the secure cloud platform means that samples can be collected by clinicians or laypeople on multiple devices, and facilitates contemporaneous result sharing and rapid interpretation with a single metric, without a requirement for specialised equipment or patient-specific consumables. This application could increase the applicability of automated pupillometry in high turnover clinical environments, such as the operating theatre room or emergency department; however, improving the utility of this novel technology in a clinical context requires validation against an established gold standard for automated pupillometry. This study aimed to assess the degree of inter-device agreement in PLR parameters measured by the MindMirror smartphone application, compared with the Neuroptics NPi-200 pupillometer, in a cohort of well volunteers over the age of 18 years.

Methods

This study received ethics approval from the North Coast NSW Human Research Ethics Committee (approval number 2023/PID01484). Sixty-six subjects were recruited prospectively from hospital staff and medical students in the ICU and operating theatres at a single hospital in Tweed Heads, Australia. Subjects were eligible if aged over 18 years, and free from ocular conditions affecting light perception at the retina in either eye, such as cataracts or retinal infarcts. Subjects consented to storage of their videos for processing, and for the use of images as well controls in future studies.

Consented subjects were assessed in one of four locations within the ICU and operating theatres with minimal ambient sunlight or distractions, chosen arbitrarily for each subject based on availability at the time of assessment. Subjects wearing glasses removed them for assessment, but contact lenses were allowed to remain. The principal investigator collected three MindMirror videos on each subject, and then three paired samples using a Neuroptics NPi-200 pupillometer. All MindMirror samples were collected with the same smartphone (Samsung S21), held in front of the subject at a distance guided by a marker template imposed on the camera screen by the MindMirror application. Neuroptics NPi-200 samples were collected with the Smartguard attachment positioned flush against the subject’s face, as per manufacturer recommendations.19 For all recorded samples the subject’s gaze was fixed on a predetermined marker behind the investigator, avoiding direct focus on the recording apparatus, to reduce blinking and to mitigate any effect of the differing device distances on the subject’s focal point. Focal points were at different distances based on location, but the same point was employed for all tests collected at a given location. The recording device was offset slightly to allow focus on the distant point (mean yaw 9°).

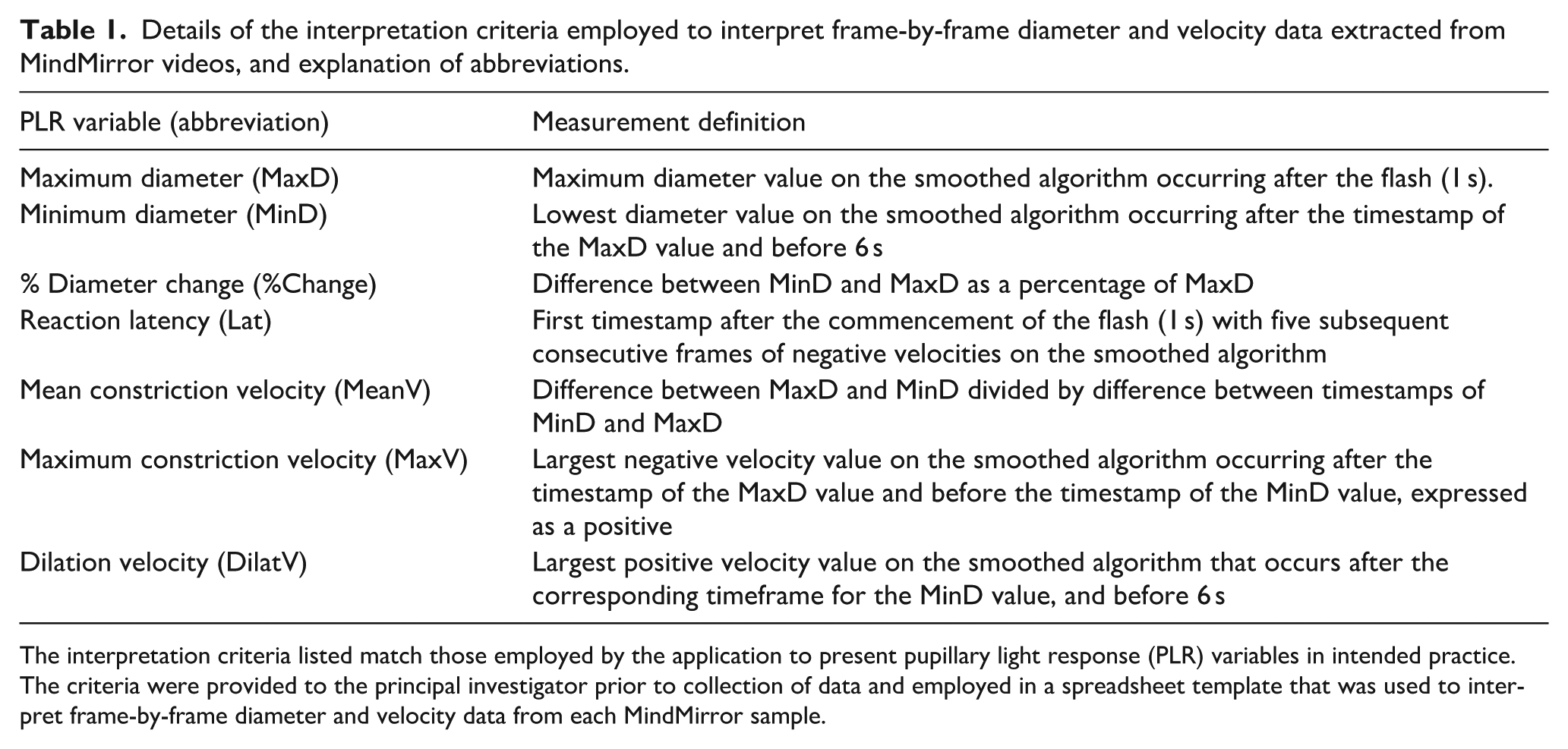

NPi-200 test values were documented directly from the results screen, recorded with a deidentified unique study ID number. MindMirror videos were uploaded to the cloud, assigned to the unique study ID number, and the dataset of calculated diameters and velocities for each frame was returned to the principal investigator to be processed manually, using interpretation criteria established by the MindMirror algorithm. Operators processing MindMirror data were blinded to NPi-200 results prior to returning the raw data. Each subject’s videos were assessed for MaxD, MinD, %Change, MeanV, MaxV, DilatV and Lat, akin to the values generated by NPi-200 pupillometers (measurement criteria for each parameter can be found in Table 1). NPi values could not be obtained for MindMirror videos as the Neuroptics proprietary algorithm was not accessible for calculations. When NPi-200 tests failed to capture appropriate data, samples were repeated contemporaneously until three successful paired tests were completed. Because external processing delayed the detection of inadequate MindMirror videos, subjects with corrupted MindMirror samples were contacted again to repeat the tests for both modalities, and the repeat tests replaced the original data. Subjects who could not be re-tested were excluded from analysis.

Table 1. Details of the interpretation criteria employed to interpret frame-by-frame diameter and velocity data extracted from MindMirror videos, and explanation of abbreviations.

The interpretation criteria listed match those employed by the application to present pupillary light response (PLR) variables in intended practice.

The criteria were provided to the principal investigator prior to collection of data and employed in a spreadsheet template that was used to interpret frame-by-frame diameter and velocity data from each MindMirror sample.
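To illustrate how amplitude parameters might be derived from a frame-by-frame diameter trace, the following is a minimal Python sketch. It does not reproduce the MindMirror interpretation criteria, which are those given in Table 1. It assumes, for illustration only, that MaxD is the mean diameter before the flash, that MinD is the smallest diameter at or after the flash, and that %Change is (MaxD − MinD)/MaxD × 100. The function name and the sample trace are hypothetical; the flash time of 1 s follows the recording protocol described in the Introduction.

```python
def amplitude_parameters(times_s, diameters_mm, flash_time_s=1.0):
    """Return (MaxD, MinD, %Change) from a per-frame diameter trace.

    Assumed definitions (illustrative only, not the Table 1 criteria):
      MaxD    = mean diameter over frames recorded before the flash
      MinD    = smallest diameter recorded at or after the flash
      %Change = (MaxD - MinD) / MaxD * 100
    """
    before = [d for t, d in zip(times_s, diameters_mm) if t < flash_time_s]
    after = [d for t, d in zip(times_s, diameters_mm) if t >= flash_time_s]
    if not before or not after:
        raise ValueError("trace must contain frames before and after the flash")
    max_d = sum(before) / len(before)
    min_d = min(after)
    pct_change = (max_d - min_d) / max_d * 100.0
    return max_d, min_d, pct_change


# Hypothetical trace sampled every 0.25 s, with the flash at 1 s
times = [0.0, 0.25, 0.5, 0.75, 1.0, 1.25, 1.5, 1.75, 2.0]
diams = [5.0, 5.0, 5.0, 5.0, 5.0, 4.2, 3.5, 3.4, 3.6]
max_d, min_d, pct = amplitude_parameters(times, diams)
print(f"MaxD={max_d:.2f} mm, MinD={min_d:.2f} mm, %Change={pct:.1f}%")
```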

Each subject’s samples for both modalities were analysed as a mean of three readings. Sample means for each modality were assessed for differences between subgroups of gender (male/female), eye colour (brown, green/hazel, blue), time of day (12–6 pm, after 6 pm), location (1–4) and test number (1st, 2nd, 3rd sample) using Student’s t tests or analysis of variance (ANOVA) for multicategory variables, with statistical significance thresholds set at 0.05 adjusted by Bonferroni correction for each analysis. Results for each modality were compared with Bland–Altman plots of limits of agreement for all seven PLR variables, with the inter-modality difference calculated as NPi-200 minus MindMirror. Influential outliers were defined as values with a subject difference greater than 4 standard deviations (SDs) away from the mean difference.
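The limits-of-agreement calculation described above can be sketched as follows. This is a minimal Python illustration, not the study's analysis code. It assumes the conventional 95% limits (mean difference ± 1.96 SD of the differences), which the text does not state explicitly, and the function name and sample values are hypothetical. The difference is taken as NPi-200 minus MindMirror, and influential outliers are flagged at more than 4 SDs from the mean difference, as defined in the text.

```python
from statistics import mean, stdev


def bland_altman(npi_values, mindmirror_values, outlier_sd=4.0):
    """Return (bias, lower limit, upper limit, outlier indices).

    Differences are NPi-200 minus MindMirror. The 1.96 multiplier is the
    conventional choice for 95% limits of agreement (an assumption here).
    """
    if len(npi_values) != len(mindmirror_values):
        raise ValueError("paired inputs must have equal length")
    diffs = [a - b for a, b in zip(npi_values, mindmirror_values)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample SD (n - 1 denominator)
    lower = bias - 1.96 * sd
    upper = bias + 1.96 * sd
    # Flag subjects whose difference lies more than outlier_sd SDs from the bias
    outliers = [i for i, d in enumerate(diffs) if abs(d - bias) > outlier_sd * sd]
    return bias, lower, upper, outliers


# Hypothetical subject-mean MaxD values (mm) for three subjects
bias, lower, upper, outliers = bland_altman([4.0, 5.0, 6.0], [4.5, 5.0, 6.5])
print(f"bias={bias:.2f} mm, limits {lower:.2f} to {upper:.2f} mm, outliers={outliers}")
```

Under this convention, the MaxD mean difference of −0.37 mm and SD of 0.51 mm reported in the Abstract give limits of about −1.37 to 0.63 mm, close to the reported limits of −1.36 to 0.62 mm.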

The inter-reading reliability of each modality was compared by analysing each subject’s data as two inter-sample comparisons per variable (1st vs. 2nd reading and 2nd vs. 3rd reading). Agreement between adjacent samples was analysed with the mean and confidence interval of absolute and relative error, mean coefficient of variation, and the frequency with which inter-sample differences exceeded clinical significance thresholds. The margins used for clinical significance were adapted from previous studies,9,11,13 with differences considered clinically insignificant when less than 0.5 mm for diameter, less than 10% for percentage change, less than 0.1 s for latency, and less than 0.8 mm/s for velocities. Trends of inter-reading reliability were analysed with a Bland–Altman plot for the inter-sample difference for each parameter and each modality.
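The adjacent-reading comparison described above could be computed along the following lines. This Python sketch is illustrative rather than the authors' method. It assumes that relative error is the absolute difference divided by the mean of the pair, and that the coefficient of variation is the SD divided by the mean of each subject's three readings, since neither formula is specified in the text. The function name and readings are hypothetical.

```python
from statistics import mean, stdev


def repeatability(readings_per_subject, threshold):
    """Summarise agreement between adjacent readings (1st vs 2nd, 2nd vs 3rd).

    readings_per_subject: list of (first, second, third) readings.
    threshold: smallest absolute difference treated as clinically significant.
    """
    abs_errors, rel_errors, cvs = [], [], []
    for readings in readings_per_subject:
        for a, b in zip(readings, readings[1:]):
            diff = abs(a - b)
            abs_errors.append(diff)
            # Assumption: relative error is the difference over the pair mean
            rel_errors.append(diff / mean((a, b)) * 100.0)
        # Assumption: coefficient of variation is SD / mean of the three readings
        cvs.append(stdev(readings) / mean(readings))
    exceeding = sum(1 for e in abs_errors if e >= threshold)
    return {
        "mean_abs_error": mean(abs_errors),
        "mean_rel_error_pct": mean(rel_errors),
        "mean_cv": mean(cvs),
        "pct_clinically_significant": exceeding / len(abs_errors) * 100.0,
    }


# Hypothetical MaxD readings (mm) for two subjects, using the 0.5 mm threshold
summary = repeatability([(5.0, 5.2, 4.6), (4.0, 4.0, 4.0)], threshold=0.5)
print(summary)
```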

Values from MindMirror PLR assessments were further analysed by plotting MinD, MeanV, and MaxV against initial pupil diameter, with which these parameters are known to be closely correlated in normal PLR measurements from NPi-200 pupillometers,9,20 and the relationships were assessed with a Pearson correlation coefficient.
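For reference, the Pearson correlation coefficient used in this secondary analysis can be computed as in the short Python sketch below. It uses only the standard definition (covariance divided by the product of the standard deviations); the function name and the paired values are hypothetical and are not study data.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length, at least 2 values")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / sqrt(sxx * syy)


# Hypothetical initial diameters (mm) and mean constriction velocities (mm/s)
max_d = [3.5, 4.0, 4.5, 5.0, 5.5]
mean_v = [1.4, 1.7, 1.9, 2.3, 2.4]
r = pearson_r(max_d, mean_v)
print(f"r = {r:.3f}, R2 = {r * r:.3f}")
```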

Results

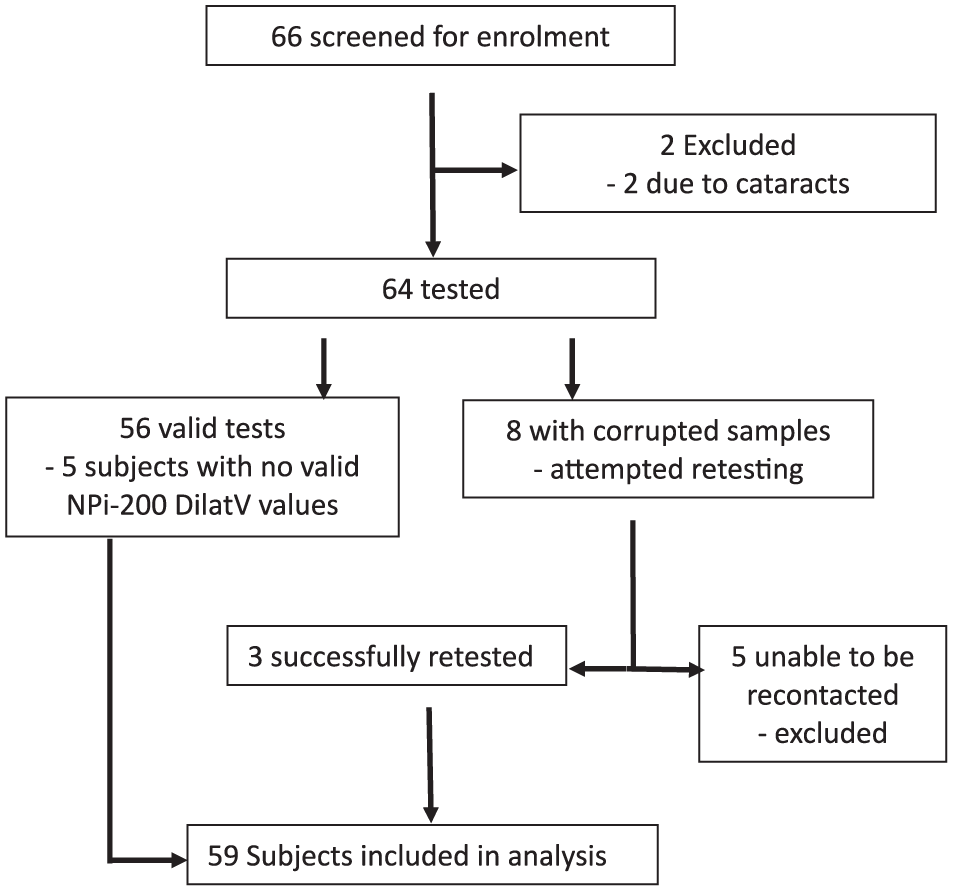

Sixty-six subjects consented to the study. Of these, two were excluded, both due to known cataracts. Of the sixty-four remaining subjects, eight required repeat collection due to missing or corrupted MindMirror videos. Three of these subjects were successfully retested. Five subjects could not be retested and were excluded from analysis. In total, fifty-nine subjects (354 readings, 118 eyes) were considered for analysis (Figure 1). For five subjects the NPi-200 pupillometer returned no valid DilatV readings in any of the three measurements. All subjects recorded normal NPi values for each test (mean 4.36 ± 0.27).

Figure 1. CONSORT flow diagram showing study recruitment. Seven subjects were excluded: two for medical reasons and five because they could not be retested to provide a complete dataset.

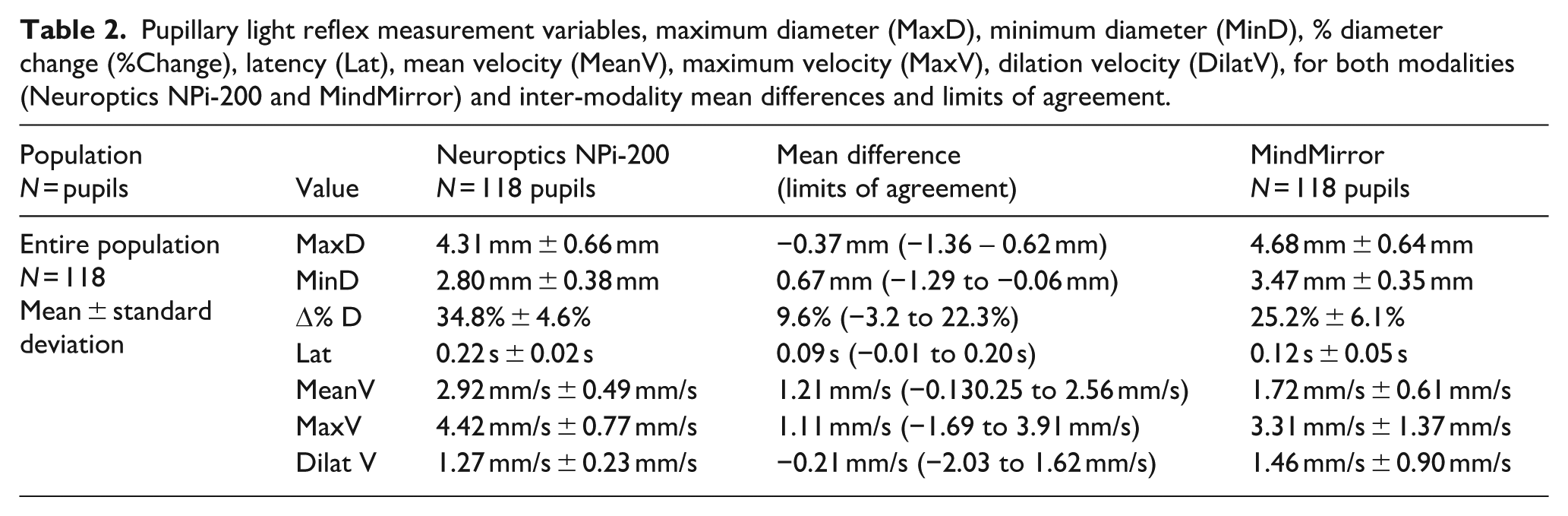

There were no statistically significant differences in PLR values for the left versus the right eye, gender, eye colour, or collection time subgroups in either modality (see Table 2, Supplementary material). Samples from location two demonstrated smaller MaxD in MindMirror and MinD and smaller MeanV and MaxV compared with other locations, which met the threshold for statistical significance in both modalities (P = 0.005). There were no statistically significant differences for any PLR parameter between the first, second and third tests of MindMirror or NPi-200 readings.

Table 2. Pupillary light reflex measurement variables, maximum diameter (MaxD), minimum diameter (MinD), % diameter change (%Change), latency (Lat), mean velocity (MeanV), maximum velocity (MaxV), dilation velocity (DilatV), for both modalities (Neuroptics NPi-200 and MindMirror) and inter-modality mean differences and limits of agreement.

Cross-modality (MindMirror and NPi-200) agreement and repeatability

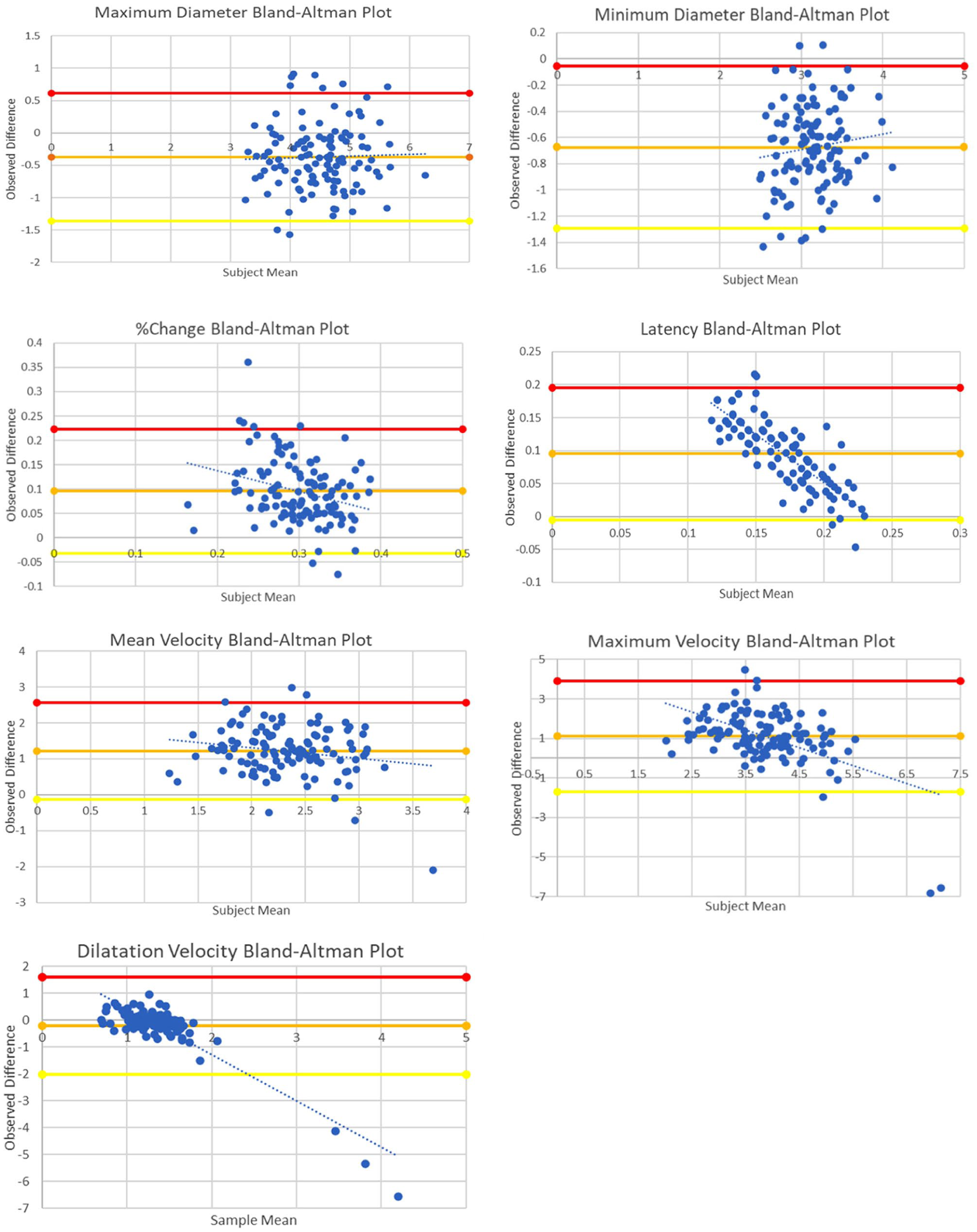

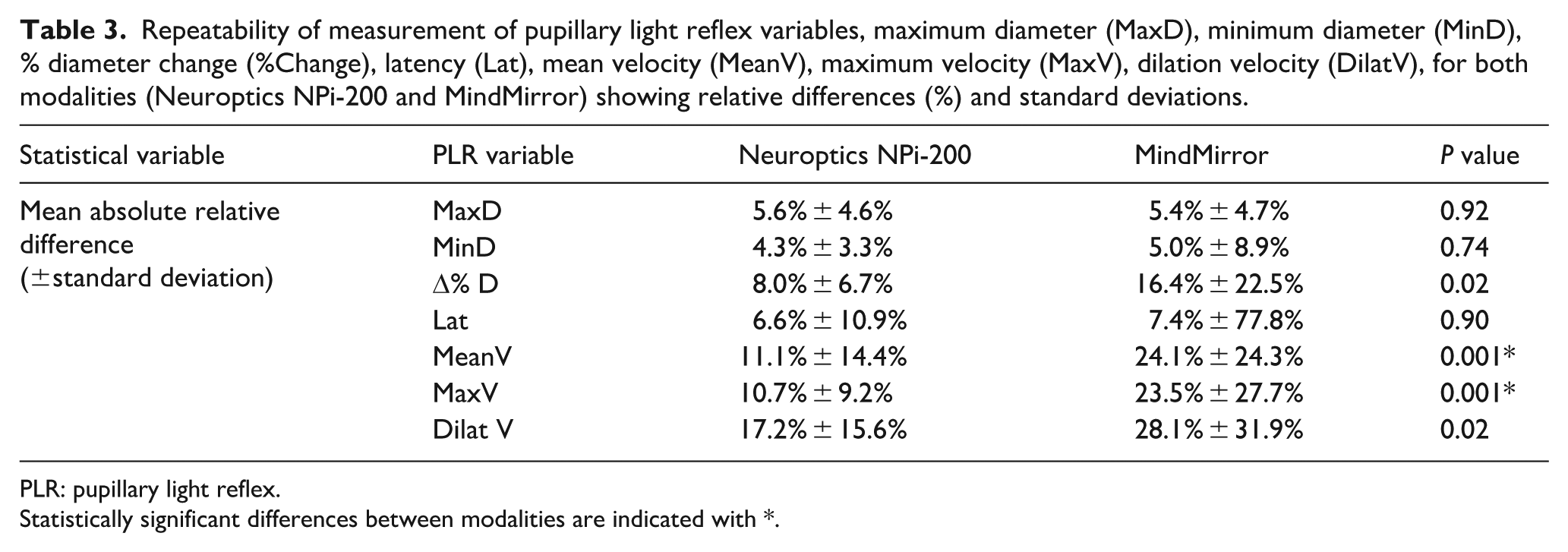

Results and Bland–Altman plots for cross-modality comparisons in each parameter are seen in Figure 2 and Table 2 (with further details listed in Supplementary Table 2, see Supplementary material). Results and Bland–Altman plots for repeatability analysis in each modality can be seen in Table 3 (with further details listed in Supplementary Table 3 and Figure 4, see Supplementary material). Equations and P values for all plots are available in Appendix 1 (Supplementary material).

Figure 2. Bland–Altman plots comparing the MindMirror smartphone application with the Neuroptics NPi-200 pupillometer for key variables, maximum diameter (MaxD), minimum diameter (MinD), % diameter change (%Change), latency (Lat), mean velocity (MeanV), maximum velocity (MaxV), dilation velocity (DilatV), with linear regression lines indicated. Thick horizontal lines represent the mean difference, and the upper and lower limits of agreement. Significant trends were found for Lat, MaxV and DilatV graphs, with MeanV, MaxV and DilatV datasets impacted by influential outliers. See Table 1 for explanation of abbreviations.

Table 3. Repeatability of measurement of pupillary light reflex variables, maximum diameter (MaxD), minimum diameter (MinD), % diameter change (%Change), latency (Lat), mean velocity (MeanV), maximum velocity (MaxV), dilation velocity (DilatV), for both modalities (Neuroptics NPi-200 and MindMirror) showing relative differences (%) and standard deviations.

PLR: pupillary light reflex.

Statistically significant differences between modalities are indicated with *.

Maximum diameter (MaxD) inter-modality mean difference was −0.37 mm (limits −1.36 to 0.62 mm). The inter-sample Bland–Altman plot (Figure 2) did not demonstrate systematic bias. There was no statistically significant difference for MaxD between modalities in mean absolute error (0.24 mm vs. 0.25 mm, P = 0.85), mean relative difference (5.6% vs. 5.4%, P = 0.92), coefficient of variation (0.039 vs. 0.036), or frequency of clinically significant sample differences (11.86% vs. 11.86%).

Minimum diameter (MinD) inter-modality mean difference was −0.67 mm (limits −1.29 to −0.06 mm). The Bland–Altman plot (Figure 2) did not show a statistically significant gradient, although the intercept of the line of best fit was statistically significant, suggesting a persistently negative mean difference. There were no statistically significant differences identified in repeatability parameters between modalities.

The %Change inter-modality mean difference was +9.5% (limits −3.2 to 22.3%). The Bland–Altman plot demonstrated a statistically significant intercept, suggesting a consistently positive mean difference, and a gradient which met the threshold for statistical significance but explained little of the variability (R2 = 0.08). Although the coefficient of variation and percentage of clinically significant differences were increased for MindMirror compared with NPi-200, the mean absolute error and absolute relative difference did not show statistically significant differences between modalities.

The latency (Lat) inter-modality mean difference was +0.09 s (limits −0.01 to 0.20 s). The Bland–Altman plot (Figure 2) was suggestive of a systematic bias in results, with statistically significant intercept and gradient, and high correlation to the line of best fit (R2 = 0.64). Repeatability parameters were suggestive of increased variability for MindMirror compared with NPi-200, although the differences in mean absolute error and absolute relative difference did not meet the threshold for statistical significance.

Mean velocity (MeanV) inter-modality mean difference was +1.21 mm/s (limits −0.13 to 2.56 mm/s). The Bland–Altman plot (Figure 2) showed low correlation to the line of best fit, although the intercept was statistically significant. Although the mean absolute error and percentage clinically relevant error were comparable between modalities, the absolute relative difference and coefficient of variation repeatability measures were increased for MindMirror compared with NPi-200.

The maximum velocity (MaxV) inter-modality mean difference was +1.11 mm/s (limits −1.69 to 3.91 mm/s). The Bland–Altman plot (Figure 2) demonstrated a statistically significant trend of decreasing mean difference with increasing sample mean, impacted by influential outliers (R2 = 0.28, P < 10−9). MindMirror repeatability variables were increased compared with NPi-200, and this difference was statistically significant.

The dilation velocity (DilatV) inter-modality mean difference was −0.21 mm/s (limits −2.03 to 1.62 mm/s). The Bland–Altman plot (Figure 2) showed a significant trend of decreasing mean difference with increasing sample mean, impacted by influential outliers (R2 = 0.78, P < 10−38). The mean absolute error and coefficient of variation were increased for MindMirror compared with NPi-200, and these differences were statistically significant.

The inter-modality mean values for MinD, %Change, MeanV, MaxV and DilatV were all closely correlated with the corresponding MindMirror MaxD values, and these correlations were statistically significant (Supplementary Figure 3). The inter-modality mean difference for MinD, %Change, MaxV and DilatV had little or no correlation with the corresponding MindMirror MaxD value. The MeanV inter-modality mean difference did demonstrate a statistically significant correlation with MindMirror MaxD, but the effect size was small (R2 = 0.14, P < 10−4).

Three MindMirror samples from three subjects contained values meeting the criteria for designation as influential outliers in either the inter-modality Bland–Altman plot (DilatV, MaxV, MeanV) or the inter-sample variation Bland–Altman plot (MeanV, MaxV, DilatV, MaxD, MinD, %Change). One subject had outlier values for MinD, %Change, MeanV, MaxV and DilatV, one subject had outlier values for MaxD and MaxV, and the third subject had outlier values for MaxV and DilatV.

Twelve subjects from the MindMirror dataset and seven subjects from the NPi-200 dataset demonstrated anisocoria (unequal pupils); of these, one subject recorded anisocoria in both modalities.

Discussion

Population mean values for each PLR parameter in both modalities were comparable to normative datasets of well subjects from previous studies.27–29 Results of NPi-200 measurements and inter-sample repeatability in this study were consistent with results produced in previous studies.12,13,21,24 MaxD results demonstrated high rates of inter-modality agreement, with a mean inter-modality difference that was not clinically significant, a comparable degree of inter-sample repeatability, and no systematic bias identified on the Bland–Altman plot. Constriction amplitude was significantly different between modalities, with a larger MinD and smaller %Change for MindMirror samples compared with paired NPi-200 samples, although repeatability parameters were comparable between groups, and no significant trends were identified on the Bland–Altman plot. Constriction velocities were also meaningfully different between modalities, with inter-modality agreement limits for MeanV and MaxV values beyond zero, increased absolute and relative inter-sample variation for all velocity values, and a systematic trend in the inter-modality Bland–Altman plot for MeanV, MaxV and DilatV, noting the influence of influential outliers in the MindMirror dataset for all three parameters. Lat values demonstrated significant differences between modalities, with a significant mean difference between MindMirror and NPi-200 datasets, greater inter-sample variability for MindMirror compared with NPi-200, and a systematic trend on the Bland–Altman plot, noting a biphasic pattern of inter-sample difference with increasing sample mean.

MaxD readings for MindMirror samples were shown to have high agreement with NPi-200 MaxD, with a mean difference of −0.37 mm, no statistically significant differences in inter-sample repeatability metrics, and no systematic bias identified on inter-modality or inter-sample Bland–Altman plots. Compared with smartphone pupillometers examined in previous studies, the MindMirror application demonstrated favourable21–23 or comparable24–26 levels of inter-modality agreement with the NPi-200 pupillometer, and favourable21–23 or comparable24,25 inter-sample repeatability for MaxD measurements. The results of this study were achieved without methodological enhancements to the technology that have been employed in other studies, such as light shields23 or camera stands,24,25 validating the intended point-and-shoot method of MindMirror recordings as being able to produce accurate results while also facilitating easy operation. The results of subgroup analysis for MaxD in each modality further validate that important methodological differences of the MindMirror application compared with NPi-200 did not impact the results. That left and right eye results were consistent for both modalities suggests that MindMirror videos using simultaneous binocular measurements are comparable to the consecutive measurements of the NPi-200 pupillometer, and that yaw from offsetting the camera angle did not impact the results. Although iris colour has not been demonstrated to impact PLR parameters in previous studies,17,30 that iris colour subgroups were comparable for both modalities suggests that MindMirror colour recordings do not impact results compared with the NPi-200 infrared camera, which negates the effect of iris colour.19,20,30 Location two recorded smaller mean MaxD in both modalities, which was statistically significant in the MindMirror dataset and approached the threshold for statistical significance in the NPi-200 dataset. The replication of these differences across modalities suggests a true result due to brighter ambient light in that location, which reduces the relative impact of the light stimulus on the PLR.17,27 That each modality produced similar results in response to changing ambient light between locations supports the view that ambient light did not distort the initial MaxD measured in MindMirror recordings.

MinD and %Change results were consistent with a reduced constriction amplitude for MindMirror samples compared with NPi-200 readings, with a negative MinD mean difference and positive %Change mean difference where the limits of agreement approached zero, and with statistically significant intercepts on inter-sample Bland–Altman plots for both parameters. This is despite the fact that MaxD readings were comparable between modalities, absolute and relative inter-sample error was comparable between modalities for MinD and %Change, and no systematic trends were identified in inter-modality or inter-sample Bland–Altman plots for either parameter. This suggests the observed discrepancy in constriction amplitude is a true result as opposed to measurement error, and that the NPi-200 pupillometer delivers a more potent stimulus, causing a greater constriction amplitude and brisker PLR compared with the MindMirror samples. The amplitude of a pupillary response to light stimulus is impacted by baseline influences from ambient light and physiological status prior to the stimulus, as well as the intensity of the new stimulus relative to this baseline.17,20,29,31 Previous studies have established that a greater intensity light stimulus causes increased constriction amplitude, shorter latency times, and higher constriction and dilation velocities.17,20,29,31 Product information for the NPi-200 pupillometer does not quantify the light intensity of the flash stimulus,19 but previous studies have listed the intensity as 1000 lux.7 Such a potent stimulus is consistent with the intended use of the NPi-200 pupillometer, employing an LED flash removed from the face only by the Smartguard attachment, with the pupillometer obstructing the field of view.19 This obstruction of ambient light amplifies the response to the flash stimulus compared with MindMirror samples, in which the phone is held further away from the pupil. The authors were unable to confirm the exact lux expected to be produced by the Samsung S21 camera flash to compare with the NPi-200 pupillometer. A difference in stimulus potency is supported by the observed constriction velocity results, in which NPi-200 MeanV and MaxV were increased compared with paired MindMirror samples. The shorter latency values observed for MindMirror compared with NPi-200 samples are evidence against this, although, as will be discussed, this appears more likely to be related to systematic bias in recording than to be a true result.

The MindMirror dataset showed reduced MeanV and MaxV values and increased DilatV compared with corresponding NPi-200 values. Repeatability measurements also suggested increased absolute and relative variability for MindMirror values in these parameters compared with NPi-200 tests, particularly for the MeanV and DilatV measurements. There are likely to be multiple contributory elements to these observed discrepancies. Reduced constriction velocity is consistent with a reduced intensity stimulus in the MindMirror tests, but increased dilation velocity is not. It is likely that the influential outliers for constriction and dilation velocity parameters observed in the MindMirror datasets contribute to the greater intra-subject variability observed in this study. Accounting for these outliers would reduce the discrepancy in inter-sample variability but accentuate the observed inter-modality mean difference for these values. To differentiate the effects of physiological PLR variations from measurement error in explaining observed discrepancies, a secondary analysis of MindMirror data was completed, analysing the inter-parametric relationships that would be expected of a normal PLR. Constriction amplitude and velocity have been observed to vary proportionally to MaxD in subjects with normal pupillary responses,31 and analysing inter-parametric relationships has been used to identify measurement errors in previous studies.22,31 Although the NPi algorithm is not publicly available, it is known that the NPi-200 pupillometer corrects for the sample’s initial pupil diameter when calculating NPi.3,4 MindMirror MaxD was correlated closely with MindMirror MinD, MeanV, MaxV, DilatV and %Change, all with statistically significant relationships, which would be expected of a normal PLR. In contrast, the inter-modality error for those same parameters was not closely correlated with the MindMirror MaxD. These relationships suggest that the systematic differences in constriction velocity and amplitude are better explained by variations of normal PLR in altered collection conditions, as opposed to measurement errors of identical responses. Although reduced constriction amplitude and velocity in the range observed in the MindMirror tests can sometimes suggest an abnormal PLR,31,32 these results are also compatible with normal pupillary responses. Previous studies have assessed the impact of MeanV on NPi and concluded that a low MeanV can still be compatible with a normal NPi, in a manner which is not explained by the sample MaxD.32 Only one subject in this study recorded a MindMirror MeanV value which met the criteria for an ‘abnormal’ MeanV value in previous studies (defined as <0.8 mm/s, or >2 SDs below the population mean),13,32 noting that those studies included hospital inpatients and well controls. It is therefore reasonable to conclude that although constriction speed and amplitude were reduced in MindMirror readings compared with NPi-200 paired samples, this does not suggest differences in assessment of normal versus abnormal PLR.

Lat values in MindMirror samples were markedly lower compared with NPi-200 values, with a positive mean difference, and statistically significant inter-sample variability on repeatability parameters. The inter-modality Bland–Altman plot for Lat values suggested a decreasing mean difference with increasing sample mean, with statistically significant gradient and intercept values, which suggests systematic differences in the calculation of latency values. NPi-200 Lat values are determined by the timestamp of the frame in which constriction is first detected. The threshold diameter change at which constriction is designated as having begun is not disclosed in the NPi-200 product manual, but as the device is calibrated to an accuracy of 0.03 mm,19 it is likely to be greater than this error range. By contrast, the latency period in MindMirror samples was calculated as the first timepoint after the flash with five subsequent frames of negative velocities, without specifying a minimum constriction amplitude. It is likely that in MindMirror measurements the first recorded negative value occurs at a lower amplitude than the NPi-200 threshold, and constriction is therefore detected at an earlier frame. Although the measured mean difference (0.09 s) approaches a clinically significant amplitude,12,13 means for both modalities are within the range expected of a normal PLR and are consistent with normal NPi values,12,31,32 and it is therefore unlikely that this observed difference would impact the clinical assessment of PLR. However, a constriction amplitude threshold may improve consistency in the timeframes where MindMirror deems constriction has started and reduce the high population variability demonstrated in the dataset for this modality.
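The MindMirror latency rule described above can be illustrated with a short Python sketch. This is one possible reading of the rule, not the application's implementation: it takes the onset to be the first frame after the flash that starts a run of five consecutive negative velocities, and it returns the time from the flash to that frame. The function name, the 1 s default flash time taken from the recording protocol, and the sample trace are assumptions for illustration.

```python
def constriction_latency(times_s, velocities_mm_s, flash_time_s=1.0, run_length=5):
    """Return latency (s) from flash to constriction onset, or None if not found.

    Onset is taken as the first frame after the flash that begins a run of
    `run_length` consecutive negative velocities (an interpretation of the
    rule in the text; no minimum constriction amplitude is applied).
    """
    n = len(times_s)
    for i in range(n - run_length + 1):
        if times_s[i] <= flash_time_s:
            continue  # only consider frames after the flash
        window = velocities_mm_s[i:i + run_length]
        if all(v < 0 for v in window):
            return times_s[i] - flash_time_s
    return None


# Hypothetical trace at 10 frames per second with the flash at 1.0 s
times = [0.8, 0.9, 1.0, 1.1, 1.2, 1.3, 1.4, 1.5, 1.6, 1.7, 1.8]
velocities = [0.0, 0.1, 0.0, -0.1, 0.2, -0.5, -1.0, -2.0, -1.5, -0.8, -0.2]
latency = constriction_latency(times, velocities)
print(f"latency = {latency:.2f} s")
```

In this hypothetical trace the isolated negative velocity at 1.1 s is ignored because the following frame is positive, so onset is placed at 1.3 s. Because the rule has no amplitude threshold, any sustained run of small negative velocities would qualify, which is consistent with the earlier detection discussed above.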

Three samples in the MindMirror dataset appeared to contain influential outliers, with substantial differences from adjacent MindMirror readings and paired NPi-200 results, at amplitudes not consistent with normal physiological function. Manual comparison of these videos with the corresponding pupil curves suggests that the outliers coincide with subjects blinking, and that the affected values were not excluded from integration into the smoothed curve. MindMirror data processing assigned a confidence score ranging from 0 to 100 to diameter measurements in each frame, with the manufacturer’s algorithm excluding all measurements with a confidence score below 60. Applying this metric effectively excluded outlier diameter values in one of the outlier samples; of the two others, MaxD in one sample and MinD and %Change in the other were likely not excluded because the outlier was within the normal value range and was only detected as an outlier in repeatability analysis. However, the confidence score was less effective at excluding velocity values, which are calculated by incorporating adjacent frames that may be assigned normal confidence values. As the obtained outliers were not excluded by the MindMirror interpretation algorithm, outlier samples were not excluded from analysis, which obscures a true reflection of the underlying distribution. It is likely that including these values impacts the systematic bias identified on MaxV and DilatV Bland–Altman plots, the discrepancy in repeatability parameters for MeanV, MaxV and DilatV values, and the observed difference in MeanV and MaxV between modalities. In clinical practice, contemporaneous review of the results would allow clinicians to identify tests in which PLR measurements have been impacted by blinking and reattempt immediately, as occurs with NPi-200 samples where retest is advised.19 This was not possible in this experiment as data collection was separated from analysis to blind collectors to the results. The occurrence of outlier values in three of 177 MindMirror samples is rare for clinical measurements, but rectifying the influence of this artifact on data interpretation would improve the clinical utility of MindMirror samples. Combined with the rate of corrupted samples, the rate of failed tests was 18 out of 210 (8.5%), which compares favourably to failure rates reported for other smartphone pupillometers.21,26
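The way in which a per-frame confidence cut-off can remove an outlier diameter yet leave an outlier velocity in place can be sketched as follows. This is an illustrative sketch only, not the manufacturer’s algorithm: the cut-off of 60 is taken from the description above, but the function names, the finite-difference velocity, the neighbour-padding rule (`pad`) and the example frames are hypothetical.

```python
import numpy as np

CONF_THRESHOLD = 60  # cut-off described in the text

def mask_low_confidence(diameters, confidence, threshold=CONF_THRESHOLD):
    """Replace diameters from frames scoring below the threshold with NaN."""
    d = np.asarray(diameters, dtype=float).copy()
    d[np.asarray(confidence) < threshold] = np.nan
    return d

def velocities(times, diameters):
    """Frame-to-frame velocity in mm/s; NaN whenever either frame is masked."""
    return np.diff(diameters) / np.diff(np.asarray(times, dtype=float))

def velocities_padded(times, diameters, confidence, threshold=CONF_THRESHOLD, pad=1):
    """Hypothetical stricter rule: also mask `pad` frames either side of a low-confidence frame."""
    low = np.asarray(confidence) < threshold
    widened = low.copy()
    for shift in range(1, pad + 1):
        widened[shift:] |= low[:-shift]   # frames after a low-confidence frame
        widened[:-shift] |= low[shift:]   # frames before a low-confidence frame
    d = np.asarray(diameters, dtype=float).copy()
    d[widened] = np.nan
    return velocities(times, d)

# Invented example: a blink centred on frame 3, with partially occluded neighbours
# (frames 2 and 4) that still score above the cut-off.
t = np.arange(7) * 0.1
diam = [5.0, 5.0, 4.2, 2.0, 4.4, 5.0, 5.0]
conf = [95, 95, 70, 20, 70, 95, 95]

basic = velocities(t, mask_low_confidence(diam, conf))
padded = velocities_padded(t, diam, conf)
print(np.nanmin(basic), np.nanmin(padded))
```

In this invented example the frame-level cut-off removes the blink frame itself, but the velocity between frames 1 and 2 remains steeply negative because frame 2 passes the cut-off. Widening the mask by one frame removes it, at the cost of discarding more data; whether such a rule would suit real recordings was not assessed in this study.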

The prevalence of observed physiological anisocoria in this study is consistent with the frequency observed in previous studies of well volunteers.27,31,33 Notably, the observed frequency of agreement in anisocoria was very low, despite the high agreement in MaxD readings. It is known that the presence of physiological anisocoria is variable for a given patient over time;33 however, no study has tested the variation in pupillary diameter difference in repeated contemporaneous samples. It is possible that the observed differences for these samples reflect measurement variation between modalities, although it was hoped that the use of three averaged readings would reduce the impact of measurement variability. Given that none of the subjects observed to have anisocoria demonstrated a mean pupillary diameter difference greater than 1 mm, a threshold value for severe abnormality,9,15,33 it is unlikely that any of the observed differences would have an impact on clinical decision making.

A pertinent limitation of this study is that the primary measured outcome focussed on raw pupillary variables, as opposed to the overall assessment of normal or abnormal reflex, which is the intended use of pupillometer measurements. Individual PLR variables can demonstrate wide ranges of values as part of a normal reflex, in response to variations in collection conditions, with no ‘true’ value to estimate on a Bland–Altman plot. Although the study attempted to control for environmental influences that might increase PLR variability such as consistent ambient light and controlled point of focus, it is not possible to control or exclude external influences entirely without making the study conditions incompatible with conditions found in expected clinical practice. Furthermore, differences in raw PLR values do not directly translate to differences in assessment of the overall PLR. Detecting normal versus abnormal pupillary responses is the most clinically relevant goal of the measurement of pupillary reflex parameters, which the NPi-200 achieves by calculating the multi-parametric NPi value.3,9 It was not possible to calculate NPi values from MindMirror data for comparison as the algorithm is not publicly available. Furthermore, comparing accuracy in composite markers is fraught with error, as an equal result could arise from similar values, or from multiple measurement errors with opposing impacts on the combined result. An alternative comparison mechanism might be to analyse the diameter/time curve displayed by the pupillometer, which encompasses multiple dynamic PLR values. This was not possible in this study as frame-by-frame data for the NPi-200 pupillometer are not available, but this could be used as a method of analysis in future studies. 
The MindMirror application AI includes an interpretation algorithm that assesses the normalcy of PLR curves; however, the discriminatory value of this algorithm compared with the NPi was not assessed in this study, as the pre-test probability of abnormal results was likely to be low in a cohort of well volunteers. The use of well volunteers also limited the ability to assess the impacts of common demographic variables that affect PLR, such as age,9,34 physical fitness,35 smoking,27 medical comorbidities9,27 such as Parkinson’s disease, diabetes, schizophrenia, migraines or multiple sclerosis, or medications such as tricyclic antidepressants,27 angiotensin-converting enzyme (ACE) inhibitors36 or opiates.37 The sample size was not sufficient in this study to evaluate systematic differences meaningfully in these subgroups, as medical comorbidities are not highly represented in a healthcare worker population.

Another limitation of this study is the inability to control for the impact of pupillary fatigue from repeated PLR tests. Previous inter-device agreement studies have conducted four or fewer PLR measurements,12,13,21,24 whereas subjects in this study completed nine consecutive PLR measurements: three binocular MindMirror measurements and three NPi-200 samples per eye. Although the PLR is completed in a five to six second period,28 the study protocol ensured that all samples were collected at least ten seconds apart, meaning that each PLR had normalised before the next test. However, the fatigue effect from multiple repeat responses is not well quantified. There was not a statistically significant difference in MaxD, constriction amplitude or constriction velocity between first, second and third samples in either modality; however, the examined cohort is underpowered to detect small differences in PLR variables with fatigue, given that well subjects would manifest fatigue with low-amplitude changes initially. Pooling results of both modalities was not appropriate, despite high agreement for MaxD, because samples were collected consecutively by modality rather than alternating, which would dilute the impact of the sample order. The MindMirror application includes a setting where subjects are exposed to two flashes two seconds apart on the same recording, which may induce pupillary fatigue and increase the sensitivity to detect abnormal results in patients with subtle PLR abnormalities manifesting as augmented fatigue. This hypothesis can be a focus of future studies.

Conclusions

The results of this study indicate that the MindMirror pupillometer demonstrates high agreement with the NPi-200 pupillometer for pupillary diameter measurements, with comparable measurement repeatability. However, the results for each modality are not interchangeable, because of consistent and reproducible differences in the PLR constriction amplitude and constriction velocity, most likely due to differences in the collection conditions, and in the reaction latency, likely due to systematic differences in calculation methods. The MindMirror application produces clinically significant outlier values that are not excluded by the AI algorithm and may impact the interpretation of PLR results; although these occur at a low frequency, practitioners may need to consider the veracity of obtained results before interpreting them clinically. The agreement of raw values cannot be extrapolated to imply agreement in the detection of abnormal pupillary responses, and further studies comparing the MindMirror AI PLR interpretation against accepted pupillary interpretation metrics like the NPi will be necessary to ensure applicability in clinical practice.

Supplemental Material

sj-docx-1-aic-10.1177_0310057X251381378 to sj-docx-5-aic-10.1177_0310057X251381378 – Supplemental material for A novel pupillometer smartphone application demonstrates accurate results compared with a validated clinical pupillometer: The Pupillary Light Response Validation (PLR-V) Study

Supplemental material, sj-docx-1-aic-10.1177_0310057X251381378 to sj-docx-5-aic-10.1177_0310057X251381378, for A novel pupillometer smartphone application demonstrates accurate results compared with a validated clinical pupillometer: The Pupillary Light Response Validation (PLR-V) Study by Jake C Schmidt, Paul M Middleton and William Davies in Anaesthesia and Intensive Care

Footnotes

Author contributions

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: This study was commissioned by the MindMirror research department. MindMirror staff were involved in extracting raw data from videos to be analysed by Dr Schmidt but did not contribute to the statistical analysis of provided data. To complete the study, Dr Schmidt was provided access to the beta MindMirror application, which was revoked at the conclusion of the study. Dr Schmidt has not received financial compensation from MindMirror for involvement in the study and has not received editorial input from MindMirror in the statistical analysis or study commentary. Professor Middleton and Dr Davies act as unpaid medical advisors with MindMirror. They have not received any financial compensation, research grants, or board appointments from MindMirror.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplementary material

Supplementary material for this article is available online.

References
