Abstract

It returns multiple hits from a wide variety of pornography websites of these famous women allegedly involved in a variety of kinky and shocking sex acts.

But not one of these videos is genuine. They are all fakes of varying degrees of sophistication, created with free – and freely-available – software used to superimpose their faces onto the bodies of actual porn actresses.

Welcome to the world of deep fakes – a portmanteau of “deep learning” and “fake” – which uses machine learning and artificial intelligence to create videos portraying people saying or doing things they never said or did.

Raymond Joseph teaches Ethiopian journalists how to spot fake photos

CREDIT: Sarah Bushman/IREX

It is not the first time that the multi-billion-dollar porn industry has taken the lead in creating or mainstreaming new tech, including e-commerce, webcams and streaming video. Deep-fake pornography first surfaced on the internet in 2017 when videos were posted on Reddit by a user under the pseudonym “Deepfakes”.

The trickle of deep-fake hardcore porn videos soon turned into a deluge with the release of free software that made them relatively easy for anyone with a basic understanding of artificial intelligence to create. The problem became so serious that several platforms, including Reddit and Twitter, banned them. Late last year, Google also cracked down, adding “involuntary synthetic pornographic imagery” to its ban list and allowing anyone falsely depicted as “nude or in a sexually explicit situation” to request that search results linking to the content be blocked.

So common has celebrity deep-fake porn become that actress Scarlett Johansson told the Washington Post: “Clearly this doesn’t affect me as much because people assume it’s not actually me in a porno, however demeaning it is. I think it’s a useless pursuit legally, mostly because the internet is a vast wormhole of darkness that eats itself. The fact is that trying to protect yourself from the internet and its depravity is basically a lost cause, for the most part.”

But dismissing online deep fakes as just something in the world of porn ignores the very real potential for them to be deployed on the mainstream web and in politics, taking online misinformation and disinformation to a new level.

You need only think of the damaging and divisive role played by social media in the US and other elections, and Brexit, to realise the potential damage well-crafted deep fakes could cause. In fragile democracies divided by strongman politics and cultural and tribal divides, the potential for using them to stir up hate and violence is very real.

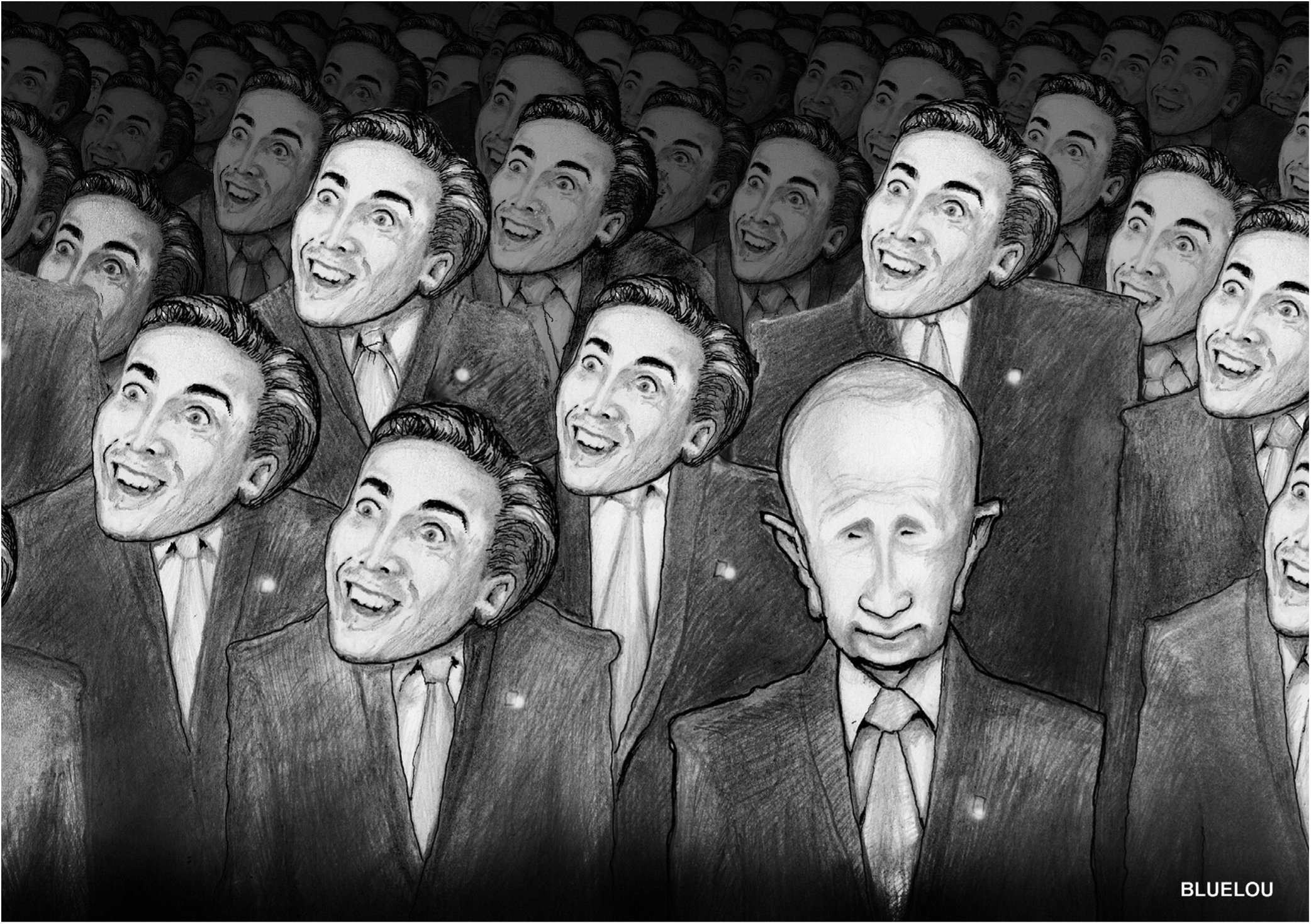

Deep fakes are yet to be widely deployed “in the wild” beyond the world of porn, although there is a trend, which began as a meme on social media and went viral, of superimposing the face of the US actor Nicolas Cage onto the heads of other actors in popular movies. And another recent viral video created by actor-director Jordan Peele of former US President Barack Obama saying things he never said – such as that current President Donald Trump is a “dipshit” – highlighted the potential of deep fakes being used to spread disinformation … and worse.

But fakes don’t need to be deep to be dangerous. Last year the White House shared a video after a confrontation between CNN’s Jim Acosta and Trump that tried to alter what actually happened. The poorly doctored video attempted to make it appear that the veteran reporter had attacked an intern trying to wrest a microphone from him.

Concerned that deep fakes may be a threat to national security, several Congressmen have asked US intelligence authorities to investigate and report on their possible impact.

For journalists, let alone ordinary users, already having to navigate the proliferation of misinformation, disinformation, hoaxes and assorted fakery swilling around online, debunking deep fakes raises the ante to a frightening new level. Leading experts say that although deep fakes are not yet a big problem, they could become one in the future.

And they agree that even though powerful tools will emerge to help identify deep fakes, the traditional fact-checking and verification done by journalists remain key weapons in combating them.

Researchers and developers around the world are working furiously to build tools that will help to identify deep fakes.

The US military has already developed a tool to identify them, although it is not commercially available. And Carnegie Mellon University researcher Satya Venneti has had some success with a tool that analyses the pulses and blood flows of people in videos to help identify fakes.

Claire Wardle, the head of research at First Draft News and a leading expert on verification, says that while deep fakes need to be watched, she is not disproportionately worried by them.

“I’m more worried about all the news coverage of it, much of which is fearmongering. It’s potentially going to lead to people not trusting anything they see,” she said.

“We do have pretty sophisticated tools to verify images and videos that have been honed over the last decade of doing things like verifying YouTube videos of chemical weapons attacks in Syria. That is also really hard. There are also many very smart academics working on tools to detect deep fakes so by the time they are a real problem I feel confident we will have tools to fight back.”

CREDIT: Lou McKeevor

Journalists will have to use all the same skills they’ve always used, she says.

“Is there supporting evidence to suggest this event took place? Does the location make sense? What does the transcript of the speech say? What did witnesses say?”

Ben Nimmo, a senior fellow for information defence at the Atlantic Council’s Digital Forensic Research Lab, was at the forefront of unmasking Russian bots that interfered in the US 2016 elections.

“At the moment, we haven’t seen [deep fakes] used,” he said. “The Russian government has run plenty of shallow fakes (such as manipulated images) which have been caught out. Deep fakes would be yet another escalation in the information warfare. It’s probably only a matter of time.”

Deep fakes, he says, are a risk because they could lead to journalists making mistakes. They must be aware of the problem and always look for corroborating sources.

“Ultimately, though, they’ll need to develop a stronger relationship with the tech platforms, who have the best technical expertise and who have a big stake in not letting their platforms be taken over by fakes.”

He also has a stark warning: “If you wait for the lies to come, you’ll be too late. The media need to educate themselves, and the public, in how to predict all sorts of fakes and disinformation. For example, the Russian government always uses the same four techniques: dismiss, distort, distract and dismay. Teaching people to spot those are the best way of helping them to protect themselves.”

Peter Cunliffe-Jones, executive director of fact-checking organisation Africa Check, believes that deep fakes present a serious challenge to journalists.

“They need – first of all – to be aware of the problem,” he said. “[In] the run-up to the elections in Nigeria and Senegal, Africa Check saw many media simply relaying content that was false or faked. The first thing for any editor to do with shocking or surprising content is to ask a series of questions about its source and origin – and that goes for video, too.

“Fortunately, deep fakes appear still to be quite rare at present. But that does not mean that they can be discounted. And in fragile societies, deep-fake videos hitting a nerve on sensitive topics have the potential to sow real violence or undermine due process.”

Analyst Kyle Findlay played a key role in identifying the so-called Guptabots deployed as part of a campaign by British PR agency Bell Pottinger. The Guptabots were social media accounts run to discredit critics of the then South African president Jacob Zuma and his friends. They were named after the Gupta brothers who were accused of having a corrupt relationship with Zuma. The bots helped sow racial tensions in the country by accusing critical journalists of spreading a “white monopoly capital” narrative.

“For now, deep fakes have statistical patterns present in them that make them identifiable by machines,” said Findlay. “Over time, these might be smoothed over by the makers. For now, though, in some cases there are ways of identifying them algorithmically.”

He says the war against deep fakes “will turn into an evolutionary arms race. Tools for detection will arise and be circumvented”.

He added: “We might need to supply journalists with automated ‘image provenance’ tools, like the plug-ins that you use for reverse image search to automatically trace back the ‘share’ trail of all media to their sources.”

But his advice is ultimately non-technical and encourages the use of good old-fashioned journalism.

“Treat everything with suspicion. Focus on names you trust and insist on visible trails linking the media that you are viewing back to those trusted sources.”

Tips to Help You Identify Deep Fake Videos

There are ways, for now, to help you identify a deep fake.

Here are some giveaway signs to look for in a manipulated deep-fake video (based on tips by artist and coder Kyle McDonald). Check out his blog at www.medium.com/@kcimc for example photos and additional tips and details.