Abstract

Medical journals are no longer fit for purpose and bestow credibility on research that often does not deserve it, argues

Medical journals, the main way in which medical research reaches both doctors and the public, are a corrupted form of communication. That’s the melancholic conclusion I reached after 25 years as an editor on the BMJ (formerly the British Medical Journal) and two months in a 15th-century palazzo in Venice in 2003 writing a book. The Trouble with Medical Journals was published in 2006. So, five years later, are things better or worse?

The premise for my book was that medical journals were over-influenced by the pharmaceutical industry, too fond of the mass media, and yet neglectful of patients. The research they contained was hard to interpret and prone to bias, while peer review, the process at the heart of journals and all of science, was deeply flawed. Many of the studies journals contained were fraudulent, and yet the scientific community had not responded adequately to the problem of fraud. Editors themselves also misbehaved. The authors of the studies in journals often had little to do with the work they were reporting and many had conflicts of interest that were not declared. And the whole business of medical journals was corrupt because owners were making money from restricting access to important research, most of it funded by public money. All this matters to everybody because medical journals have a strong influence on their healthcare and lives.

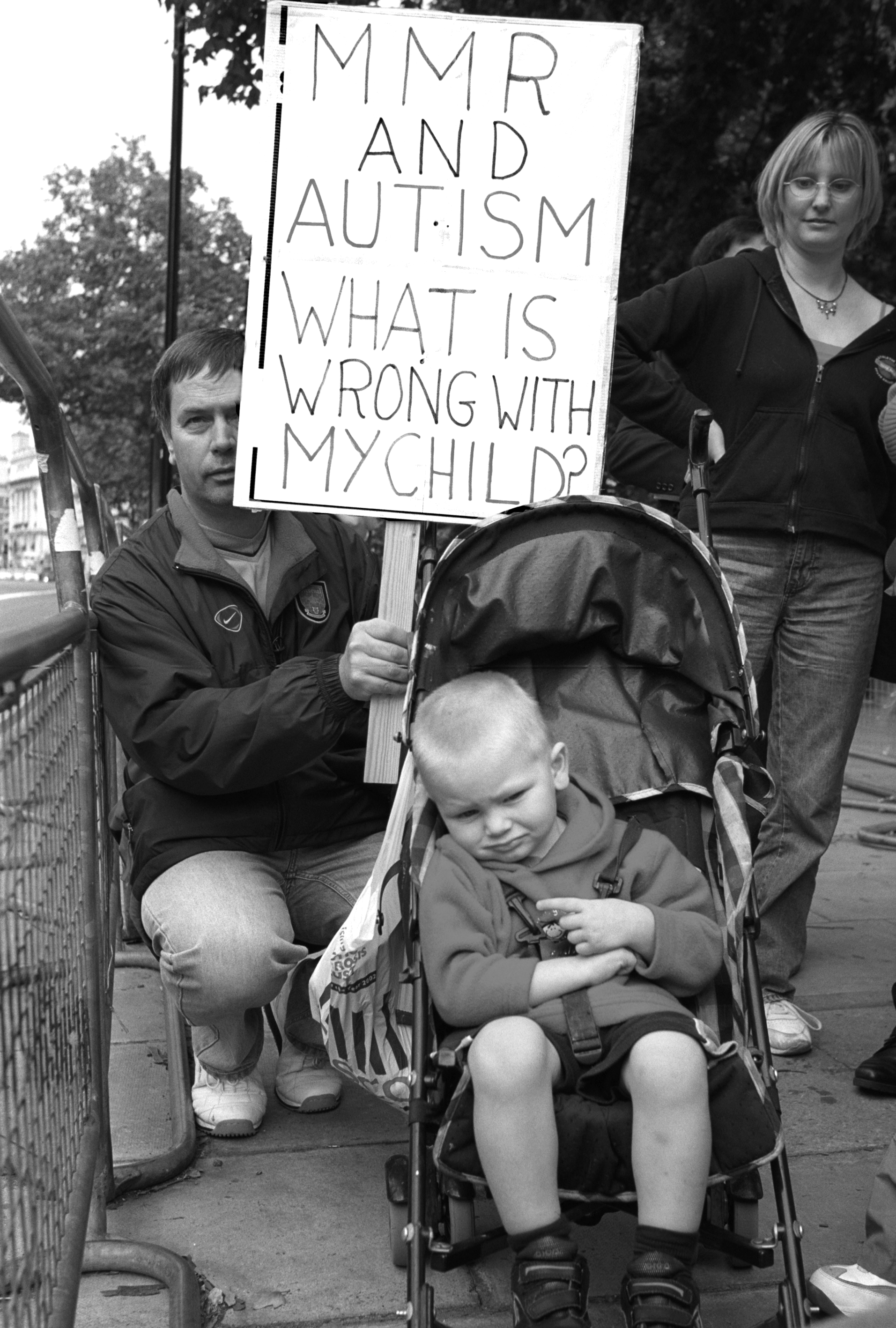

Families concerned about the reported link between autism and the MMR triple vaccine, Downing Street, UK, 2002

Credit: Janine Wiedel Photolibrary/Alamy

For 13 of my years at the BMJ I was both its editor and chief executive of the BMJ Publishing Group, which publishes a stable of journals and does much else. Since I left, in 2004, I have continued to write for journals, blog weekly for the BMJ, and serve on the board of the Public Library of Science (PLoS), a not-for-profit organisation that aims to make all scientific research ‘open access’, meaning that it can be both accessed by anybody for free and reused without having to ask permission. In the longer term, PLoS wants to reinvent the publishing of science, believing that discoveries should be disseminated more quickly and effectively. It already publishes several journals, including PLoS Medicine, which began in 2004, and the revolutionary PLoS One, which began in 2006, of which more later. So, despite my disillusionment with medical journals, I continue to be heavily involved with them. This is not entirely hypocritical because they are, for now, the best place for doctors, scientists and others to debate medicine and science.

Yet it is common for articles in journals to be scientifically poor, while some have been profoundly flawed and caused great harm. By far and away the best example of this is the notorious article by Andrew Wakefield and others published in the Lancet in 1998 that linked the MMR (measles, mumps, rubella) vaccine to autism. The idea took hold, and many parents decided not to have their children vaccinated, leading to outbreaks of measles.

The original Lancet paper described a series of 12 cases where there seemed to be a link between children developing a bowel disorder and autism and having been given the MMR vaccine. The study was scientifically very weak and was strongly criticised. A series of subsequent papers by other scientists did not find any link. In 2004, following an investigation by the journalist Brian Deer, it emerged that Wakefield had been paid by lawyers to establish whether there was a link between the vaccine and autism. He had not declared this conflict of interest and Richard Horton, the editor of the Lancet, said that he would not have published the study if he had known about it. Subsequently, ten of the 13 authors of the study retracted its interpretation that there was any link between the vaccine and autism. Wakefield has denied any wrongdoing.

The story may well have further to go. After Deer’s investigation, Wakefield and a colleague appeared before the General Medical Council (GMC), which regulates British doctors, in one of its longest-running cases. In 2010, both doctors were found guilty of serious professional misconduct and struck off the medical register. Wakefield was found guilty of dishonesty and causing children to be subjected to invasive procedures that were clinically unjustified. Both doctors have appealed the judgment to the High Court.

Deer continued his investigations, and this year published three important articles in the BMJ. The first argues that the original study was not simply unethical, the main finding of the GMC, but fraudulent, with many dishonest claims being made about the cases. The second article reveals Wakefield’s plans for a potentially lucrative commercial deal for his research, and the third article, in many ways the most troubling, argues that the Lancet conducted a rapid and inadequate investigation when serious accusations about the original paper were brought to it by Deer in 2004. Fiona Godlee, the editor of the BMJ, wrote about Deer’s allegation: ‘It is hard to escape the conclusion that this represents institutional and editorial misconduct.’

The world of full-time medical editors is small, and Godlee and Horton have known each other for years. Godlee thought long and hard before publishing the article about the Lancet and concluded that a journal ought to be willing to criticise anybody, including its friends, when necessary. Interestingly, the Lancet has never responded publicly to the BMJ article and, as far as I know, the Lancet’s owners are not conducting an inquiry. Is this an example of editorial misconduct?

What this sorry episode illustrates is that you cannot automatically trust the material that appears in medical journals – it may be scientifically weak or even fraudulent. Since my book was published there have been many examples of fraud. Indeed, in September, the Economist told the story of a group from Duke University in North Carolina who published a study in the New England Journal of Medicine, the world’s leading medical journal, describing how they could predict the course of a patient’s lung cancer using genetic techniques. Soon after, they published another study, in Nature Medicine, on using genetic techniques to predict which cancers in individuals would respond to chemotherapy. These were important developments, and other groups tried to replicate the work. They couldn’t, and they discovered many errors in the original studies. Duke University was asked to investigate but found no problems. Then it emerged that one of the Duke researchers had lied about his qualifications, including that he had been a Rhodes scholar in Australia. (It was odd that nobody picked up on this earlier as Rhodes scholars all go to Oxford.) At this point everything unravelled, and the studies have since been retracted – meaning that they should be ignored.

Unfortunately this sort of story is very familiar. Universities naturally find it very uncomfortable to think that some of their best researchers may have misbehaved and tend to be slow to investigate and too quick to find ‘no problem’. That inevitable conflict of interest was compounded in this case by the university having ties with companies that the researchers were involved with. The journals were also reluctant to publish criticisms of the studies, and those who did criticise the work had to publish their findings in less high-profile journals.

The Economist concludes its piece with the observation that ‘the episode does serve as a timely reminder of one thing that is sometimes forgotten. Scientists are human, too’. I’ve long argued that we may have such difficulty managing misconduct in science because we cling to the idea that science is an objective activity and somehow not prey to the human failings that are seen in every other walk of life. It is, of course, a human activity carried out by human beings, and so there will be misconduct. Indeed, because the stakes can be so high and the controls so poor there may be more misconduct in science than in many other spheres.

In 2009 Daniele Fanelli, from Edinburgh University, systematically reviewed 21 studies that asked scientists about misconduct and found that 2 per cent admitted having fabricated, falsified, or modified data at least once and 14 per cent said that they knew of colleagues doing so. These are serious offences. The review also looked at ‘questionable research practices’ (things such as intentional non-publication of results, biased methodology and misleading reporting) and found that 34 per cent of researchers admitted to these and 72 per cent thought that their colleagues had been guilty of them.

The review did not consider plagiarism and professional misconduct (things such as guest authorship and failing to declare conflicts of interest), but the results are staggering, even terrifying. If they are to be believed – and they come, remember, from a systematic review of many studies – then there may be profound corruption of the scientific record. Many have argued that it is the common minor distortions, the ‘questionable research practices’, that cause more harm than the high-profile cases of fabrication and plagiarism.

Credit: John McPherson/www.CartoonStock.com

The response of the scientific community to research misconduct has been weak. Many countries have no system at all for preventing and responding to research misconduct, and the British story is one of procrastination and obfuscation. For a long time scientific leaders in Britain argued that the problem was small (without any evidence at all), little harm was done and science was in any case self-correcting. But at a meeting of leaders in medical research in Edinburgh in 1999 it was agreed that something had to be done. Nothing happened until a few people who were deeply concerned managed to set up the UK Panel for Research Integrity in Health and Biomedical Sciences in 2006, now known as the UK Research Integrity Office (UKRIO).

I am a member of UKRIO and, as our legally qualified chairman told us, we have the legal status of a ‘cricket club’ – in other words, we are a group of people who have come together concerned about an issue but with no legal powers to investigate or enforce anything. We can advise and support but little more. This has caused many critics to describe UKRIO as ‘toothless’ and hence ineffective. The organisation has certainly had a shaky history. It began slowly, and last year the UK Research Councils and Universities UK decided that they would no longer support it financially and would eventually – after an interregnum – start their own organisation. UKRIO was not against a new organisation but was highly sceptical that any new body would actually appear. It feared that the image of UK science would be damaged by having taken two decades to set up any kind of body and then disbanding it after a few years. So UKRIO has continued, seeking funding from individual universities.

Although it is ‘toothless’, it is probably the best kind of institution for Britain at the moment. Universities, where most research is done, are very keen to preserve their independence and resist strongly a body that would have statutory powers to investigate and, if necessary, punish them. They are, however, open to support and advice, and it may be that if most universities do join UKRIO then it will be embarrassing not to belong – and that the power of peer pressure to be serious about research misconduct may be more effective than new laws.

While national scientific authorities have been slow to respond to misconduct, journals have done much better. I was a founder member of the Committee on Publication Ethics (Cope) in 1997, and we began as a small self-help group for editors, helping each other respond to the many ethical problems editors faced once they chose to recognise them. At the BMJ we underwent a transformation from thinking that ethical and misconduct issues in papers submitted to the journal but not accepted by us (about 90 per cent of those submitted) were not our problem, to recognising that we had a duty to act. The result was that we went from dealing with one or two cases a year to dealing with perhaps 20.

Cope now has some 7,000 members from across the world, including many non-medical journals. It has full-time staff, a code of conduct and guidelines on ethical issues; it also holds seminars around the world and funds research. It has dealt with over 400 cases of misconduct and has a database of them all.

There is still, however, a considerable mismatch between the scale of the problem of misconduct, as shown by the systematic review, and the response. We might expect universities to be dealing with dozens of cases, not just a handful, and hundreds of ‘retractions’ of articles. Retraction of a study means that it can no longer be trusted. Retraction of an article is signalled on databases such as PubMed although, ironically, retracted articles tend to be cited just as often as those that are not retracted, showing how sloppy people are in their ‘scholarship’.

Retractions of articles have increased sharply since 1980, but only about 0.02 per cent of articles are retracted – and only a third of those for misconduct. We have to worry that there are many more studies that should be retracted, but retraction is embarrassing for authors, editors, journals, publishers and funders. The temptation to turn a blind eye is huge.

Interestingly, higher profile journals have higher rates of retraction. Why? Nobody knows for sure, but it might well be that those journals have more staff and more resources to work through the complex process of organising a retraction. But it might also be that those journals are attracted to the new, exciting and sexy, the very characteristics that fraudsters are trying to achieve.

One of the biggest insights I have gained into journals since I wrote my book came from a paper published in PLoS Medicine that described the ‘winner’s curse’. This is an economic concept that says that in a bidding process the person who wins may well have overbid, promising too much or offering too low a price. Those who regularly make bids recognise the problem and may reduce the attractiveness of their bid, and those who select bids may ignore the ones that seem most attractive. John Ioannidis, a brilliant researcher who has done more than anybody to identify serious problems with the publishing of science, was one of the authors of the paper, and the implication is that the top journals, which fight hard for the most important papers, may well be filled with papers suffering from the winner’s curse. This might explain the higher rate of retraction.

Ioannidis and his colleagues have some evidence to support their hypothesis. A study from the Journal of the American Medical Association (JAMA) showed that of the 49 most highly cited papers on medical interventions published in high-profile journals between 1990 and 2004, a quarter of the randomised trials and five of six non-randomised studies had been contradicted or found to be exaggerated by 2005. A second study looked at original studies of biomarkers with 400 citations or more from 24 highly cited journals. These studies were compared with subsequent meta-analyses that evaluated the same biomarkers, and of the 35 highly cited original studies, 29 showed an effect size larger than that in the meta-analyses. What this means is that if people are reading only top journals – Nature, Science, Cell, New England Journal of Medicine, Lancet, JAMA, BMJ – they are getting a distorted view of science. Treatments will seem more effective and diagnostic tests more accurate than they actually are.
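The selection effect behind the winner’s curse can be illustrated with a toy simulation. This is only a sketch under invented assumptions, not the method used in any of the studies mentioned: every study measures the same true effect with random noise, and a hypothetical ‘top journal’ publishes only the studies reporting the largest effects. The function name, the true effect of 0.2, the noise level and the 5 per cent cut-off are all made up for illustration.

```python
import random
import statistics

def simulate_winners_curse(n_studies=1000, true_effect=0.2,
                           noise_sd=0.3, top_fraction=0.05, seed=0):
    """Return (mean of all estimates, mean of the selected 'top' estimates).

    All parameters are hypothetical; they are not taken from the article.
    """
    rng = random.Random(seed)
    # Each study observes the same true effect plus independent random noise.
    estimates = [true_effect + rng.gauss(0, noise_sd) for _ in range(n_studies)]
    # The imagined top journal keeps only the most striking results.
    n_top = max(1, int(n_studies * top_fraction))
    selected = sorted(estimates, reverse=True)[:n_top]
    return statistics.mean(estimates), statistics.mean(selected)

TRUE_EFFECT = 0.2
mean_all, mean_selected = simulate_winners_curse(true_effect=TRUE_EFFECT)
print(f"true effect:               {TRUE_EFFECT:.2f}")
print(f"mean of all studies:       {mean_all:.2f}")
print(f"mean of 'top journal' set: {mean_selected:.2f}")
```

Under these assumptions the full set of studies averages close to the true effect, while the selected set overstates it, simply because selection favours the studies whose noise happened to push the estimate upwards. No individual study needs to be dishonest for the published picture to be distorted.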

This is a profound observation because it undermines the main reason that scientific journals exist. Every year hundreds of thousands of scientific studies are published. These could all be simply put on databases (as is starting to happen), but instead there is an elaborate, expensive and time-consuming process for sorting these papers with the idea that the most important appear in the high-profile journals. In other words, the main value of journals is that they sort the hundreds of thousands of studies – they are, in effect, a device for coping with ‘information overload’. The work of Ioannidis and others suggests that far from sorting the information, they are introducing a bias into the system.

The other main function of scientific journals is quality assurance. They do this through peer review, and the implicit promise is that what appears in journals is scientifically sound and can be trusted. In my book, I described the substantial evidence that much of what appears in journals is scientifically weak and expressed my scepticism about peer review. Since then I have reached the conclusion that little would be lost and much gained if we were to abandon what is now called ‘pre-publication peer review’.

In the 1980s, people began to study peer review. Those studies have failed to show any benefit but have revealed many problems: peer review is slow, expensive ($1.7bn a year), inefficient, wasteful of academic time, largely a lottery, ineffective at spotting error, anti-innovatory and unable to detect fraud. A recent example I have encountered concerns an innovatory study that was reviewed by 24 people on behalf of four journals over two years and was eventually published without any important changes.

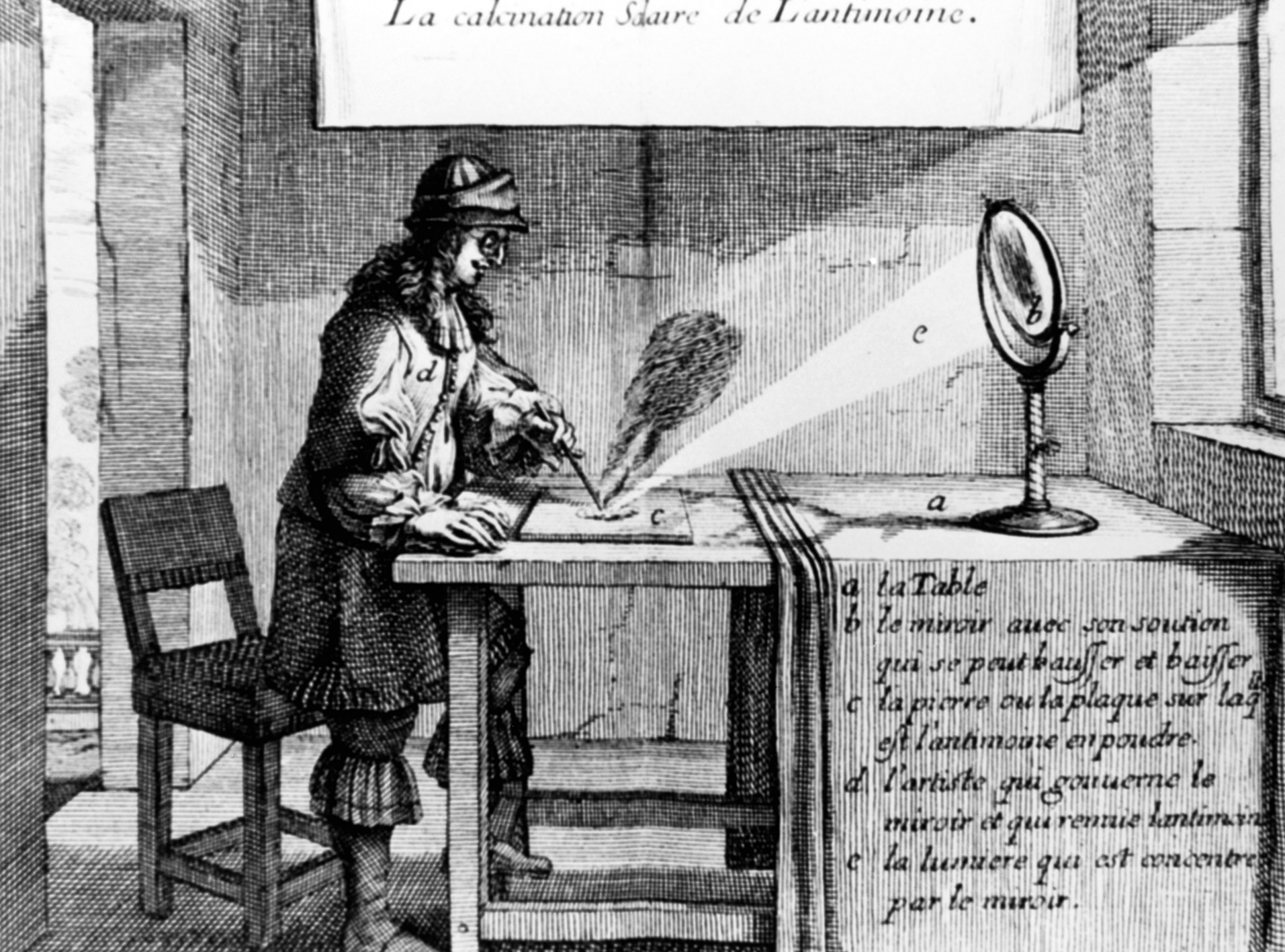

The heyday of scientific research in the 17th century when peer review took place through open debate

Credit: Science Photo Library

Distressingly, few scientists and editors are aware of the evidence on peer review, yet many continue to believe in it passionately. Ironically, pre-publication peer review is a faith-based rather than an evidence-based process. What I and others who are sceptical of pre-publication peer review argue is that post-publication peer review has always been the real peer review, in that it decides, ultimately, the importance of a study. By post-publication peer review I don’t mean the comments attached to published papers, which are exceedingly sparse, but rather the market of ideas, whereby readers, commentators, journal clubs, systematic reviewers and others digest a study. I fear that the main reason that people stick so enthusiastically to pre-publication peer review is summed up by Upton Sinclair’s observation: ‘It is difficult to get a man to understand something, when his salary depends upon his not understanding it.’

Were people to accept that pre-publication peer review is worthless and that the sorting of studies by publishing the most important ones in the top journals is actually introducing bias, then the two main pillars of scientific journals collapse. Huge vested interests are opposed to these ideas because journal publishing is a business generating billions of dollars and employing tens of thousands of people.

The newspaper business generates even more income (although perhaps lower profits) and provides more jobs, but it is dying. The Economist recently argued that newspapers may prove to be an ‘historical aberration’ and that news will return to its roots of being spread by word of mouth in markets and taverns – only now it will be spread through the internet. I believe that scientific journals may also prove to be an historical aberration and that the dissemination of science could return to the 17th century when scientists went to meetings and presented their studies. The assembled scientists would then discuss and critique the studies. We can imagine the intensity, energy, and passion of those meetings. This was the original peer review: immediate and open. The same could happen now on a global scale, again through the internet.

One development since I published my book may prove to be a game-changer – that is, the publication of thousands of articles on open access databases after peer review that doesn’t ask whether a study is ‘important and original’ but simply whether the conclusions are supported by the methods and data. PLoS One was the first of these, but it has spawned almost a dozen imitators. PLoS One has grown dramatically and is now publishing almost a thousand papers a month, making it by far the largest scientific journal in the world (if it can be called a journal).

The reason that so many publishers have copied the idea is not, I fear, because they see it as important for science, but rather that it can be highly profitable. If their journals attract as many papers as PLoS One and if the scheme continues to grow at the same rate then it might be that traditional journals begin to disappear. So far this hasn’t happened, and some of them continue to be extremely profitable. The profit margins of scientific publishers such as Elsevier and Springer still run at above 30 per cent. The basis of their success is that their most valuable asset – the science – comes for free. Others have paid for it, usually with public money. Interestingly, both the Guardian and the New York Times have recently run articles critical of the high prices and profits of scientific publishers.

Pressure for open access to research is steadily mounting from funders and universities. Many universities have created open access repositories for studies from their staff (known as ‘green open access’ in contrast to ‘gold open access’, which means published in journals), and some traditional journals will allow authors to place their articles in the repositories, which could become alternatives to journals.

There are now nearly 5,000 open access journals and 200,000 open access articles, but in 2009 only 7.7 per cent of articles were gold open access. So roughly 90 per cent of research articles are still locked away behind access controls, with the fee for access to a single article usually around $30. I was recently looking for the first piece I ever published in a medical journal – a short letter in the Lancet in 1974. It would have cost me $31.50 to access the letter, something like 25 cents for every word.

I have been arguing since the mid-90s that eventually all studies would be open access because the drivers, particularly the financial drivers, are so strong. There are arguments over the economics, but it seems almost certain that all research could be open access through an author-pays (or more correctly institution-pays) model for less than is currently spent on journals. The age of austerity leads to increasing pressure for reform, but so does the drive for economic growth, because the more people who have access to the research the more likely that new products, services and businesses can develop.

The main factor that holds back universal open access is academic credit, which is tied to where people publish. In order to flourish as a scientist you must appear in prestigious journals such as Nature, Science, Cell, New England Journal of Medicine and the Lancet, none of which are open access journals. Most of the ‘second division’ of journals are also closed access. PLoS tried to end this stranglehold by creating prestigious open access journals, but it has not yet been able to make the breakthrough.

Now, however, three major research funders, including the Wellcome Trust, are planning to launch an open access journal in 2012 that they hope will change the game. The journal, which will compete head on with Nature, Science and Cell, will be edited by scientists rather than ‘failed postdocs’, as it disparagingly sees professional editors. Peer review and publication will be rapid, reviewers will be paid and everything will be open access. The funders probably have enough money and clout to succeed.

Ironically, attributing credit to academics based on where they publish is unscientific – because the impact factor of a journal (the number of citations divided by the number of citable articles) is driven by a few articles that are highly cited. There is little correlation between the citations of an individual article in a journal and the journal’s impact factor.
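A small worked example shows how an average of this kind can be dominated by a handful of papers. The citation counts below are invented for a hypothetical journal of 100 articles, and the calculation uses the simplified definition given above (citations divided by citable articles) rather than any official formula.

```python
import statistics

def impact_factor(citations):
    """Simplified impact factor: total citations / number of citable articles."""
    return sum(citations) / len(citations)

# Hypothetical journal: three highly cited articles and 97 cited twice each.
citations = [300, 200, 100] + [2] * 97

jif = impact_factor(citations)                 # mean citations per article
median = statistics.median(citations)          # the 'typical' article
share_below = sum(c < jif for c in citations) / len(citations)

print(f"impact factor (mean): {jif:.2f}")
print(f"median citations:     {median}")
print(f"share of articles below the impact factor: {share_below:.0%}")
```

In this made-up case the journal scores nearly 8, yet 97 of its 100 articles were cited only twice. The figure says a good deal about the three outliers and very little about any other article that carries the journal’s name.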

It makes much more sense to judge an individual article on its own merits rather than use the journal in which it is published as a surrogate measure of quality. This is now possible because PLoS has introduced something called article level metrics. If you click on any article published in a PLoS journal you can see the number of views and downloads, a graph of views over time (showing whether the paper is still ‘alive’), citations in several databases and other measures of impact. These data are almost in real time and they are a much better way to judge the importance of an academic’s paper. These metrics are also a potential game-changer, but most journals still don’t have them – even though the software that PLoS uses is open source.

In general, I think that most of the problems surrounding medical journals that I first identified in my book persist today. Perhaps if I take another snapshot in five years’ time there will be more improvements, or perhaps journals will have begun to disappear, proving after all to have been nothing more than an ‘historical aberration’.