Abstract

Gran Sasso National Laboratory, Assergi, Italy, where an international team of scientists said they had recorded neutrinos travelling faster than light, 26 September 2011

Science is the ultimate totalitarian regime, says

If he worked in any other sphere, marine biologist Donald Williamson might get a bit of sympathy – maybe even kindness. In 1990, he slipped and fell while collecting samples on a beach. Ever since, he has been confined to a wheelchair. The accident didn’t stop him contributing to science, even in his late 80s. But to his scientist colleagues, this human tragedy means nothing: his ideas are still ‘the most stupid thing that has ever been proposed’.

In August 2009, Williamson published a paper on the origin of butterflies. Once upon a time, he suggested, a winged insect inadvertently fertilised its eggs with sperm from a velvet worm. It’s easy to see what Williamson was thinking. Male velvet worms place their sperm on the female’s skin, rather than anywhere out of sight, so an insect could pick it up. What’s more, these worms do look a bit like the larvae of Micropterix, a relative of the butterflies. If the hybridisation did happen, the Earth might have seen the birth of an organism girded with two separate body growth programmes in its DNA: one larval, one for a winged insect. Hey presto: the mysterious double-life cycle of the butterfly.

The idea passed enough muster to be published in the Proceedings of the US National Academy of Sciences. And then the knives came out. While lay observers might consider it an interesting and worthwhile contribution, most scientists working in insect development were ruthless. One said the paper would be more suited to the ‘National Enquirer than the National Academy’. Another was less emotive but similarly dismissive: ‘The paper is hypothetical and speculative and not a single bit of evidence supports the idea.’

Williamson, who will be 90 in January, has said he is ‘on a straight-line course for posthumous recognition’. His peers disagree: there will be no recognition, posthumous or otherwise, because his contention is ‘bizarre and unsupported’. Examination of the genomes of the creatures involved shows no evidence in the idea’s favour, and Williamson’s ‘execrable piece of work’ has been torn to shreds.

Science is brutal. The physicist Carl Sagan once wrote that its pursuit creates ‘a roiling sea of jealousies, ambition, backbiting, suppression of dissent, and absurd conceits’. It is a totalitarian regime living among us. But this, it can be argued, is a strength, not a weakness.

Though it seems harsh and heartless at times, science owes its success to the hard time that new ideas receive. They must pass the test of experiment, then verification of that experiment, and even then a scientist’s peers and colleagues will call for extraordinary evidence if the results fly in the face of accepted wisdom. When, in September this year, Italian researchers suggested they had seen neutrinos flying at faster-than-light speeds, excited news stories abounded, but scientists showed nothing but intense scepticism. Why? According to Einstein’s special theory of relativity, nothing can travel faster than light. Reports of a break in the laws of physics might provoke journalists to dizzy speculation, but scientists are much harder to move: extraordinary claims, as the adage goes, require extraordinary evidence.

Even when extraordinary evidence is in hand, recognition is not guaranteed; some ideas wait in the wings for decades before achieving the status of orthodoxy. Those who came up with the idea might be long dead by then. Alfred Wegener, who first proposed that the continents might have drifted across the surface of the earth, had been dead 34 years before his notion was accepted.

Science, then, lives on the horns of a dilemma. In order to progress, it must destroy its most cherished truths. And it does that only when it absolutely must.

Nature abhors a vacuum, but not as much as scientists do

The philosopher of science Thomas Kuhn addressed this issue in his 1962 book The Structure of Scientific Revolutions. Citing example after example, Kuhn showed that our understanding of the universe progresses through a gradual process of evidence piling up against an orthodoxy until it can no longer be ignored. This is the moment Kuhn called ‘crisis’. The trouble is, it is not enough for an orthodoxy to fail all experimental tests; there must be a replacement that can be slotted into the failing paradigm’s place. Nature abhors a vacuum, but not as much as scientists do.

That is why Ptolemy’s theory describing the way the planets orbited Earth had to be tweaked every time problematic new data came in. Until Copernicus arrived with the extraordinary and heretical (to scientists as well as the Church) notion that Earth and the other planets orbit the sun, there was no alternative but to add new ‘epicycles’ to the Ptolemaic system.

A contemporary example is the scientific explanation for how we distinguish between scents. We know that the nose contains variously-shaped pockets, known as receptors, into which odiferous molecules lodge. Some molecules ‘turn on’ the receptors, causing them to send an electrical signal into the brain.

The standard view is that it is a ‘lock and key’ mechanism: only if the molecule has the right shape to unlock the receptor will the signal be sent. It sounds plausible, but we can recognise around 100,000 smells, and have only 400 differently-shaped smell receptors. More problematic still, some chemicals smell similar but look very different, while others have the same shape but smell different.

There is a competing theory that is a much better fit – it has to do with the way the molecules vibrate. However, it has almost no traction within the smell-researching community because, for now, shape simply remains the dominant paradigm, and crisis point has not yet been reached.

Trace this desire to stick with the orthodoxy down to its source and we find that the problem is human beings. The astronomer Donald Fernie once made a wry observation about the tribal instincts of those working in his field: ‘There are times when we resemble nothing so much as a herd of antelope, heads down in tight formation, thundering with firm determination in a particular direction across the plain’, he said. ‘At a given signal from the leader we whirl about, and, with equally firm determination, thunder off in a quite different direction, still in tight parallel formation.’

Fernie’s invocation of the leader’s role is telling. Science is meant to be about ideas, laws and principles, rather than the inclinations of a senior figure. But science is a human endeavour, and humans have always followed leaders. Here’s what biologist Carl Lindegren had to say on the subject:

One likes to think of science as divorced from personalities because one seeks the guidance of a principle rather than a person. Thus, the individual scientist experiences a feeling of freedom since he has the impression he lives in a community in which the law and not the man is the ultimate arbiter. This truly democratic practice has led to the fallaciously democratic practice of determining the validity of a scientific view by finding out how many other scientists agree with it. Voting in this context is so much influenced by past training and indoctrination that it tends to reject the new and to reaffirm the old.

This has repercussions in funding, of course: the money is distributed on the recommendations of senior figures, most of whom have a vested interest in ensuring future research validates the orthodoxy they helped establish.

If further proof were required that science runs along totalitarian lines, it is the fact that acts of sedition and anarchy are the only way to make progress.

Faced with overwhelming scepticism from the medical establishment, Australian doctor Barry Marshall resorted to self-experiment in order to prove that bacteria are the most common cause of stomach ulcers. In an experiment carried out without ethical approval, and with the risk of serious danger to his own health, Marshall drank a cupful of the bacteria he believed caused ulcers. The ensuing illness and the results of biopsies of Marshall’s stomach (carried out by colleagues who took a ‘don’t ask, don’t tell’ attitude) forced a complete turnaround in the medical establishment’s advice on stomach ulcers within just a few years. What had once been blamed on stress, alcohol, smoking and poor diet was now, thanks to an underhand strike against the establishment, attributed to a pathogen.

There are many such stories in the history of medicine, but self-experiment without ethical approval is only one kind of anarchy. We learned the structure of DNA through similarly subversive means. Crick and Watson had been instructed to stop their research by bosses at Cambridge; they ignored the orders and carried on using methods that included what Crick referred to as ‘burglary’ of data from collaborators working at King’s College London. No one knew how to copy DNA quickly until Kary Mullis established a different way of thinking about the problem by using psychoactive drugs, including LSD, to retrain his brain. He won a Nobel Prize for his troubles.

In the absence of strong supporting evidence, Stanley Prusiner shattered the paradigm of disease transmission by a programme of vigorous PR work on behalf of his ‘prion hypothesis’. This was the idea that the transmission of diseases such as BSE and CJD involved a particle that Prusiner named a ‘prion’ – even though he couldn’t define what a prion actually was. Colleagues howled (and some resigned) at his subversion of the normal scientific way of working: ‘There’s no point creating a name for something that we don’t even know exists yet’, one colleague complained. Nonetheless, Prusiner was rewarded with a Nobel Prize and a position of pre-eminence in the field – and he broke a long-standing deadlock in the pursuit of an understanding of these diseases.

Having been denied the chance to publish through normal channels, Lynn Margulis resorted to publishing a book on her hypothesis about how complex life emerged in evolutionary history. She ‘didn’t follow the rules and pissed a lot of people off’, is how one expert put it.

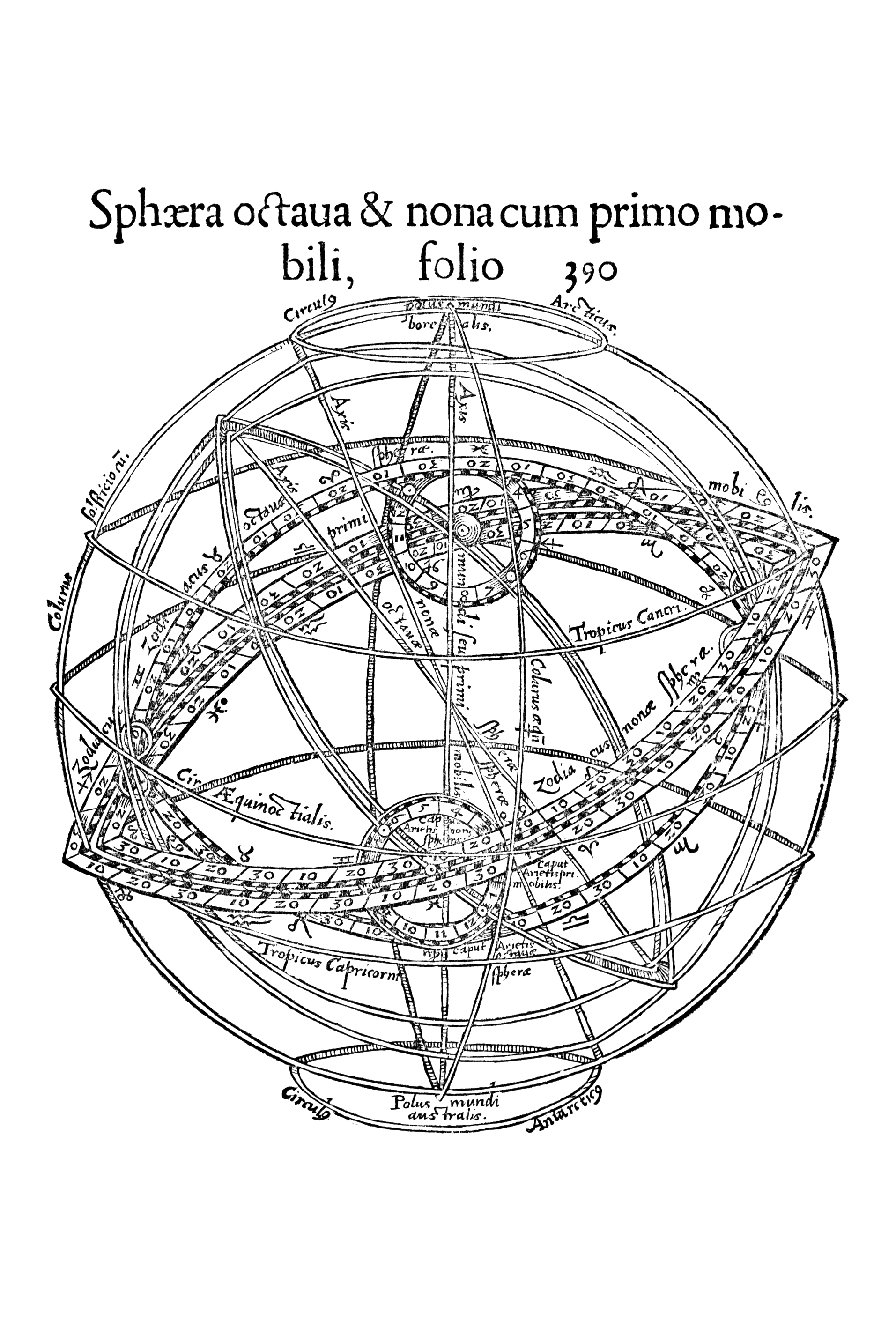

Peuerbach planetary model by Erasmus Oswald Schreckenfuchs, an attempt to reconcile theories of Aristotle and Ptolemy, 1556

Credit: Royal Astronomical Society/Science Photo Library

In the light of such stories of rule-breaking in the pursuit of discovery, it is tempting to declare we should accelerate scientific progress by making it easier to challenge the orthodoxy. Perhaps, for instance, we should lower the standards required for a theory to be taken seriously, or for experimental proof to be accepted?

Though tempting, it is a fool’s errand because nature is the only arbiter of science. As Galileo put it, ‘Nature is inexorable and immutable; she never transgresses the laws imposed upon her, or cares a whit whether her abstruse reasons and methods of operation are understandable to men.’ That is why scientists place such great store by the experiment. You can make up ideas about the way things are, but ask a question of nature, and she will soon set any delusions straight.

Modern science was founded with this attitude: the Royal Society’s motto is nullius in verba (take nobody’s word for it). Scientific truth is established only through experiments carried out in the presence of, or communicated to, many witnesses who all agree on what has been observed and what it means.

That explains – partially, at least – why science progresses so slowly. Nature does not relinquish her secrets easily: experiments are difficult, and successful experiments are rare. Even rarer is the profound result whose implications are universally accepted. ‘Nearly all scientific research leads nowhere,’ Nobel laureate Sir Peter Medawar once said.

The difficulty of getting answers from nature is illustrated by the fact that scientists are already stretching the rules to breaking point.

In 2005, the journal Nature published a study entitled ‘Scientists Behaving Badly’. It was based on a survey in which one-third of the scientists polled admitted to indulging in some kind of research misconduct in the previous three years. That might be anything from cherry-picking data that supported a favourite hypothesis to carrying out medical research without ethical approval.

Usually, the motivation is that, without bending the rules, generating reliable, reproducible results whose implications can be clearly drawn is just too hard. Another survey, published in 2006, said such rule-bending is ‘normal misbehaviour’ and plays ‘a useful and irreplaceable role’ in science.

To argue that scientists simply do experiments and accumulate a heap of indisputable facts is to miss the subtleties of the endeavour. ‘Facts’ are interpretations of the results of experiments. Those interpretations are not always correct, as Francis Crick and James Watson learned – almost to their cost. As they were racing towards a structure for DNA, the shadow of Linus Pauling loomed large. Across the Atlantic, Pauling, an eminent chemist whose reputation eclipsed that of Crick and Watson, was also closing in on the answer. In order to get there first, Crick and Watson ditched an orthodoxy, in the form of the accepted value for the angle formed by a chemical bond. A colleague looking over their shoulder told them the textbook value was a guess that had been repeated so often it had gained the status of fact.

James Watson and Francis Crick with their model of part of a DNA molecule, Cambridge, UK, 1953

Credit: A Barrington Brown/Science Photo Library

Crick said afterwards he learned ‘not to place too much reliance on any single piece of experimental evidence’. Watson’s view was similar: ‘Some data was bound to be misleading if not plain wrong’, he said.

Einstein was similarly unwilling to be a slave to experimental results. His theory of special relativity did not fit with data on the way electric and magnetic fields deflected beams of charged particles. A rival theory was far more ‘accurate’, but Einstein shrugged; he knew, for other reasons, that his theory was right and the others wrong. In the end, his scepticism about the accuracy of the data was proved right.

We can’t, then, lower the bar on the practice of science; it has already been lowered as far as is commensurate with the reliability required of experiments.

There is another option, however. We can’t make it easier to overthrow a regime, but we can shift the balance of power by increasing the number of revolutionaries on the attack, and reducing the numbers working in defence of the orthodoxy.

Scientists rely on a subsection of their population being possessed of the ego and tenacity of the adventurer seeking new mountains to climb and new territories to claim. These subversive, anarchic individuals are willing to question the orthodoxy and propose alternatives. The size of, and attitude towards, this minority group determines whether a particular field will thrive or stall.

At the moment, science does not attract these types in large numbers. The few studies that exist in this area suggest that students with extrovert characters drop mathematics and science subjects at the first opportunity. The most explicit study was done on Dutch schoolchildren, and published in 2010. Amongst the nearly 4,000 children studied, the researchers ‘observed that students who took advanced mathematics, chemistry, and physics were less extroverted and more conscientious than students who chose a less science-oriented set of subjects’.

Clearly, students’ subject choices are related to their personality. If science wants to help itself battle orthodoxy, and thus make better progress, it has to address its lack of appeal to risk-taking, adventurous types.

There is just one problem with this: the rewards are extremely hard to quantify. Scientists rarely get rich. Even fewer of them become famous. The French physiologist Claude Bernard called the joy of discovery ‘the liveliest that the mind of man can ever feel’, but it’s hard to sell that joy to a generation that has never experienced it. Then there is the appalling treatment meted out to many dissenters, the likelihood that the dissent is misguided anyway, and the possibility of limited or no recognition within your lifetime even if you are right.

Battling the totalitarianism of science is not for the faint-hearted or the thin-skinned, so who would choose the role of the scientific revolutionary? The answer comes from the man who won a Nobel Prize for his discovery of vitamin C. ‘Research uses real egotists who seek their own pleasure and satisfaction, but find it in solving the puzzles of nature’, said Albert Szent-Györgyi.

Great science is done by those driven so hard by a selfish desire to pry open nature’s secrets that they are willing to risk the oppression and condemnation of their colleagues. Such characters are few and far between; rather than worry about suppression of dissent, we should perhaps be grateful that 400 years of science has produced enough of these people to get us where we are today.