Abstract

This study investigates what Artificial Intelligence (AI) and ChatGPT, a chatbot built on generative pre-trained transformer (GPT) models, offer to engineering education. First, our literature review identifies key challenges and considerations linked to infrastructure and resource requirements, digital inequality, data privacy, copyright, and some complex ethical issues that come with this pedagogical transformation. The article then contextualizes AI and ChatGPT within foundational learning theories such as Constructivist Learning Theory, Personalized Learning Theory, Cognitive Load Theory, and Vygotsky's Zone of Proximal Development, showing how AI and ChatGPT can shift educational practices towards more individualized, dynamic, and active learning experiences. By bringing together the practical considerations that demand attention when introducing AI and ChatGPT to engineering courses and the learning theories these digital tools promise to enact, this study contributes to the ongoing discourse on AI and ChatGPT in engineering education. Different stakeholders, including educators, students, institutions, and industry partners contemplating the introduction of AI and ChatGPT, are likely to be weighing up options. Readers considering whether the pedagogical benefits are worth the considerable care needed to introduce these tools in their own engineering faculty should find this article helpful.

Introduction

The complicated nature of engineering concepts and the constant pace of technological innovation affect engineering education, leading to various pedagogical challenges. 1 One possible solution to alleviate these challenges is the integration of artificial intelligence (AI) and the generative pre-trained transformer (GPT). While the underlying model is GPT (a type of large language model), this paper focuses specifically on ChatGPT, the publicly accessible chatbot developed by OpenAI based on GPT-3.5 and GPT-4. ChatGPT differs from other GPT-based tools (e.g., BioGPT, EinsteinGPT) in its general-purpose design and conversational interface that allows for interactive educational use across disciplines. Through AI's visualization capabilities and ChatGPT's ability to provide personalized explanations, complex engineering ideas can become more comprehensible.2–4 For clarity, this study primarily references the GPT-3.5 and GPT-4 versions of ChatGPT, which were the most widely available and extensively documented versions at the time of writing. These versions offer well-established examples of the capabilities, pedagogical applications, and limitations relevant to engineering education. We specifically focus on ChatGPT due to its user-friendly conversational interface, extensive accessibility to educators and students, wide adoption across educational contexts, and substantial prominence in current academic and public discussions. These characteristics make ChatGPT a particularly representative example for exploring AI integration into engineering education. Delivering individualized attention in large-scale instruction can be challenging, 5 but AI can offer individualized learning paths based on performance data analysis. There is potential to bridge the gap between theoretical knowledge and practical application by combining AI-based virtual laboratories and simulations with ChatGPT's real-time feedback. These digital tools’ capacity to stay updated with the latest breakthroughs can assist educators in keeping the curriculum current with rapid technological advancements. 6 Innovative AI evaluation methods can complement traditional assessment approaches, while ChatGPT's real-time feedback fosters continuous development for engaged learners. Student engagement is likely to increase given AI's gamified learning environments and ChatGPT's interactive features. Additionally, ChatGPT can serve as a valuable tool for developing students’ written communication skills,7–10 which are often overlooked in engineering programs.

Traditional pedagogies usually cannot cater to the diversity of each student's learning preferences, pace, and intellectual capacity. 11 Particularly in a challenging field like engineering, this lack of personalization can result in reduced interest and understanding. 12 Furthermore, logistical constraints and the inability to simulate real-world engineering scenarios within a classroom often inhibit the immersive, hands-on experiences crucial for developing practical skills. 13 Additionally, the regular update cycles of traditional curricula may struggle to keep pace with the rapid technological advancements in the engineering industry, leading to a gap between training and the industry practices that graduates face. With their inherent flexibility, interactive nature, and continuous learning capabilities, AI and ChatGPT offer practical solutions to these challenges. For instance, ChatGPT can offer personalized explanations and immediate feedback, helping students grasp complex concepts interactively. However, it is important to acknowledge ChatGPT's limitations: its responses can become predictable after extended use, which may reduce its effectiveness in promoting deep critical thinking. While ChatGPT can support personalized education at scale and facilitate experiential learning through guided problem-solving and scenario-based prompts, its ability to enable immersive simulations varies across engineering disciplines. In areas such as software engineering or design-focused fields, ChatGPT may effectively support exploratory learning. However, in branches requiring precise numerical computation or domain-specific modeling—such as aerospace or chemical engineering—it may serve better as a supplemental tool rather than a standalone simulation platform. This highlights the need for discipline-specific integration strategies when adopting ChatGPT in engineering education.14–16

Recent research into how to successfully incorporate AI and ChatGPT into engineering education to enhance instructional strategies and learning outcomes considers educational, technological, socio-economic, and ethical aspects.6,10 On the pedagogical front, the optimal combination of AI and human instruction, the creation of AI-compatible curricula and learning modules, and effective implementation methodologies have been investigated. 17 In terms of technology, educators must consider the infrastructure requirements for widespread AI integration, the limitations of current AI technologies in the learning environment, and potential future paths for their development. 18 From a socio-economic perspective, addressing the digital divide amongst learners, ensuring access to resources, and assessing the financial implications of AI implementation are crucial considerations. 10 Finally, careful evaluation of ethical issues, including algorithmic bias, data privacy, copyright, and the responsible use of AI technology, is important. 19 Our article contributes to the discourse on incorporating ChatGPT and AI into engineering education in ways that leverage their advantages while minimizing potential risks. These risks and challenges include technical limitations, ethical concerns, pedagogical complexities, and infrastructural barriers, all of which are explored in detail in later sections.

Literature review

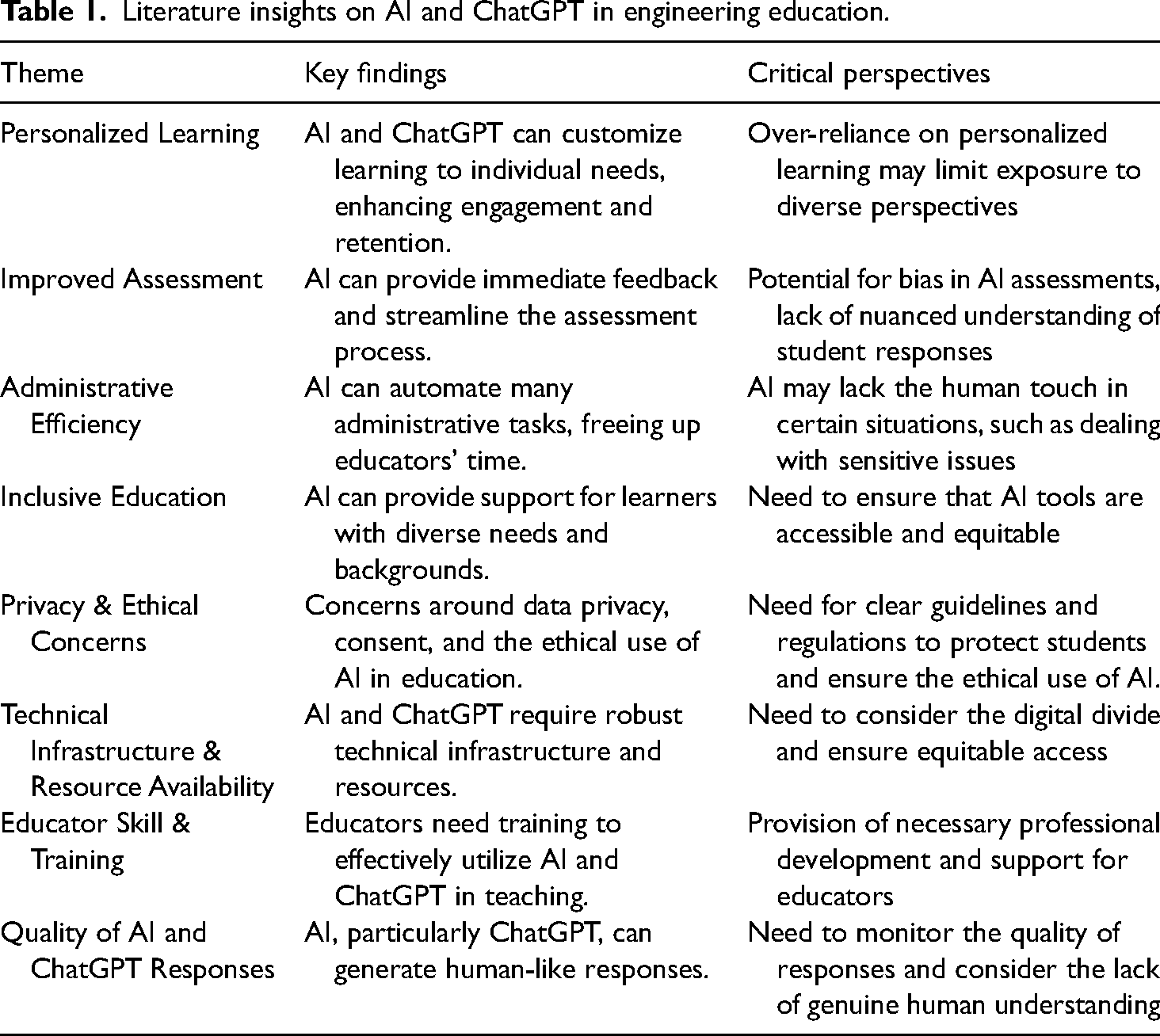

As the literature on AI and ChatGPT in engineering education continues to expand, several key findings and critical perspectives emerge. Table 1 summarizes them; following the table, we present and discuss each theme.

Table 1. Literature insights on AI and ChatGPT in engineering education.

Key findings and observations

Personalized learning

One of the significant findings from the literature review is that AI, including ChatGPT, can adapt to the learning pace, style, and level of each student.4,20–22 Personalized learning, as discussed by Xie et al., 23 represents a promising frontier in the application of AI in education. AI systems, especially models like ChatGPT, offer adaptive learning pathways, personalized content, and immediate, targeted feedback. In the case of ChatGPT, this adaptability is user-driven—meaning the student must actively ask questions, seek clarification, or iterate on prompts for the tool to adjust responses to their learning needs. Therefore, for effective personalized use, educators play a key role in guiding students on how to engage with ChatGPT intentionally. This includes designing inquiry-based assignments, modeling effective prompt engineering, and encouraging reflective questioning practices. In this way, ChatGPT becomes a tool that adapts to pace and style based on student engagement, supported by thoughtful instructional design. Adiguzel et al. 24 emphasized how AI models could evaluate individual learning styles and needs, subsequently customizing the educational content. They depicted how ChatGPT could engender dynamic, interactive learning settings attuned to the learner's real-time feedback. They also illustrated how these tools could assist educators by offering valuable insights into student performance and progress, aiding them in refining their teaching approaches and interventions. Furthermore, AI and ChatGPT's real-time and personalized feedback allowed students to understand their learning gaps and progress effectively. 25

However, Akgun and Greenhow 26 express concerns about the potential oversimplification of the learning process by AI, particularly when such systems overly prioritize quantifiable learning metrics at the expense of broader educational goals and deep-level learning. Furthermore, they address the risks of privacy invasion and data misuse when algorithms handle sensitive student data. 26 In-depth empirical data regarding the specific pedagogical impact of these cutting-edge technologies are rarely provided in the literature. 24 Numerous studies cover the possible benefits from a theoretical perspective,6,24 but very few go into detail on how these technologies change teaching approaches, shape learning processes, or influence academic results. Recent studies by Selwyn 27 and Akgun and Greenhow 26 highlight another important consideration: the digital divide between learners who have good access to the internet and those who do not. Socio-economic disparities can affect access to these technologies, potentially widening educational inequalities.

Improved assessment

AI and ChatGPT can alleviate the burden of manual grading for educators, enabling them to focus on pedagogical strategies and student engagement.6,28,29 The software can help develop adaptive assessment systems that modify the difficulty of problems based on the learner's performance, thus providing a tailored developmental evaluation of learning progress. 25 This could be particularly useful in engineering education, where problem-solving and application of concepts are critical. However, the literature also emphasizes potential challenges, such as AI's current ability to evaluate only quantitative responses accurately, which limits its effectiveness for qualitative or creative tasks. Plagiarism is also a growing concern in the context of AI-assisted education. As students may use tools like ChatGPT to generate content with minimal effort, distinguishing between original and AI-generated work becomes increasingly difficult, raising academic integrity issues. 30 In addition, there are concerns regarding the transparency of AI grading algorithms, as it might not be clear how the AI arrived at a specific grade or piece of feedback. This could lead to distrust or misunderstanding among students about their evaluations.15,16,25,31
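To make the idea of adaptive assessment more concrete, the following minimal Python sketch shows one way a system might adjust problem difficulty from a learner's recent answers. It is an illustration only and is not drawn from the reviewed studies: the class name `AdaptiveQuiz`, the five difficulty levels, and the three-answer window are all hypothetical choices, and a deployed system would likely rely on a more principled learner model.

```python
from dataclasses import dataclass, field


@dataclass
class AdaptiveQuiz:
    """Hypothetical staircase rule: move difficulty up or down from recent answers."""
    min_level: int = 1   # easiest difficulty level (assumed scale)
    max_level: int = 5   # hardest difficulty level (assumed scale)
    level: int = 3       # starting difficulty
    window: int = 3      # number of recent answers considered
    history: list = field(default_factory=list)

    def record(self, correct: bool) -> int:
        """Store one answer and return the difficulty level for the next problem."""
        self.history.append(correct)
        recent = self.history[-self.window:]
        if len(recent) == self.window:
            if all(recent):
                # A full window of correct answers: raise difficulty (capped).
                self.level = min(self.max_level, self.level + 1)
                self.history.clear()
            elif not any(recent):
                # A full window of incorrect answers: lower difficulty (floored).
                self.level = max(self.min_level, self.level - 1)
                self.history.clear()
        return self.level


quiz = AdaptiveQuiz()
for answer in (True, True, True):
    next_level = quiz.record(answer)
print(next_level)  # 4: three consecutive correct answers raise the level by one
```

A rule this simple is also easy to explain to students, which bears on the transparency concerns noted above; the trade-off is that it ignores partial credit and the kind of error made.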

Administrative efficiency

AI and ChatGPT could be used for enrollment and admission processes, automating the assessment of thousands of applications swiftly and fairly.15,16,32,33 AI has proven efficient in automating administrative tasks such as scheduling, grading, and maintaining records, resulting in saved time and reduced human error.34,35 AI-powered chatbots like ChatGPT can help answer repetitive queries from students about course content, deadlines, and more, reducing the administrative burden on educators. 36 Furthermore, AI can provide predictive analytics for student success rates, dropout rates, and other key performance indicators, enabling institutional management to proactively plan and implement measures that enhance efficiency, improve student outcomes, and resolve potential issues before they become major concerns.

Yet, technical infrastructure and resource availability are key considerations in integrating AI and ChatGPT into engineering education, as underscored in the literature. Successful deployment depends on access to robust computing systems, high-speed internet, and secure data storage. However, there is a notable lack of comprehensive studies detailing the specific technical and economic requirements needed to implement and sustain these technologies—particularly in resource-constrained institutions. This lack of guidance may hinder equitable adoption and widen the global digital divide.22,37,38

Inclusive education

AI systems, including ChatGPT, can significantly enhance accessibility in education for students with disabilities, as AI can be harnessed to provide accommodations, such as speech recognition and text-to-speech functionalities, enabling them to interact with educational content in meaningful ways. Similarly, AI's ability to offer personalized learning experiences can help address the diverse needs of learners, perhaps using visual aids or real-world applications, making it a potent tool for inclusivity.24,39 However, issues of algorithmic bias surface prominently in the literature, potentially leading to discriminatory learning experiences. 10 For example, an AI model that has been trained predominantly on engineering textbooks and resources from the West may unintentionally favor teaching methods and content relevant to that region that are less applicable, or entirely discordant, in other regions such as Africa or Asia. Varied student and staff input should be included in the development and deployment of AI systems to make sure that these systems are responsive to the various needs, experiences, and views of all students. Furthermore, while AI can make education more accessible for some learners, the digital divide could exacerbate educational disparities for students in low-resource environments. 14 Therefore, it is imperative to design AI applications like ChatGPT with a holistic understanding of inclusivity, considering socio-economic, disability-related, and learning preference factors.40,41 Explaining benefits and potential problems explicitly to students and listening to their views will encourage student buy-in.

Educator skill and training

Educators may struggle with understanding the technical complexities of AI due to limited training, which can result in underutilization or misuse of tools like ChatGPT. 42 This challenge is further highlighted by a lack of practical guidance on how to integrate such tools effectively into teaching and learning environments. 7 To address this, professional development should be comprehensive, ongoing, and responsive to educators’ varying levels of digital proficiency. 43 In addition to technical training, there is a growing emphasis on ethics education to help educators critically engage with issues such as algorithmic bias, fairness, and data privacy.24,44 For instance, the efficiency and fairness of AI systems depend heavily on the quality and diversity of the data they are trained on, and educators must understand how data limitations can lead to biased or flawed outputs.14,45 Given that many AI tools, including ChatGPT, are developed by private companies that may not fully disclose their decision-making mechanisms or data handling practices, it becomes even more essential for educators to be trained to question, adapt, and establish their own safeguards. Training programs should empower educators not only to use these tools but also to understand their limitations and communicate these clearly to students. Institutions can support this by encouraging collaborative policy development, providing ethical-use guidelines, and fostering a culture of critical inquiry in technology use. As AI tools become more integrated into educational practice, educators must transition into new roles as facilitators of human-AI collaboration. This involves understanding both the capabilities and limitations of AI while preserving the irreplaceable human element in teaching.22,31,38

Privacy and ethical concerns

Our examination of the literature reveals that there are very few specific ethical standards for using AI in the educational setting, despite the sensitive nature of student data and the considerable responsibility placed upon algorithms in determining the learning experience. 46 The absence of well-accepted ethical norms for how AI and ChatGPT handle data and make decisions in educational contexts represents a substantial research gap, one that puts limitations on practice. Although AI developers are responsible for model transparency and ethical design, they often do not fully disclose how data is processed or how decisions are made. This lack of transparency makes it essential for educators and institutions to adopt protective strategies (such as internal AI-use guidelines, student data minimization practices, and awareness-building efforts) to safeguard both students and staff, even when tools remain partially opaque. AI's effectiveness hinges on its ability to learn from data, including from student learning habits and performance. While this enables individualized learning, it raises privacy issues. Prominent among the wider ethical concerns is algorithmic bias, where skewed training data may result in unfair outcomes. For example, an AI tutor may unintentionally favor certain groups if trained on biased data. 47 Another ethical concern is the potential misuse of AI and ChatGPT's advanced capabilities for dishonest intellectual practices that may be hard for lecturers to detect.40,41

Moreover, questions of consent and data ownership were identified as crucial ethical challenges. Students may not fully understand the implications of their data being used, which could undermine informed consent principles.48–50 However, the development of robust data privacy policies, ethical guidelines for AI use in education, and the implementation of bias-mitigation strategies are steps that can help manage these concerns. These efforts also offer an opportunity to discuss ethical considerations openly with students, reinforcing the importance of digital responsibility and ethical professionalism. Additionally, compliance with pertinent regulations is mandatory, including the General Data Protection Regulation (GDPR) in Europe and any similar local data protection requirements. Compliance involves both administrative controls, such as stringent privacy regulations, limited access to data, and frequent audits, and technical precautions, such as data encryption and secure databases.
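As a hedged illustration of what data minimization and a basic technical precaution could look like in practice, the sketch below strips a student record down to the fields needed for analysis and replaces the student identifier with a keyed pseudonym. It uses only Python's standard `hmac` and `hashlib` modules; the field names, the 16-character pseudonym length, and the function names `pseudonymize` and `minimize` are assumptions made for this example rather than practices reported in the literature. Pseudonymized data is generally still treated as personal data under the GDPR, so a step like this complements, and does not replace, the administrative controls described above.

```python
import hashlib
import hmac


def pseudonymize(student_id: str, secret_key: bytes) -> str:
    """Return a stable pseudonym for a student ID using keyed hashing (HMAC-SHA256)."""
    digest = hmac.new(secret_key, student_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]  # truncated for readability (assumed length)


def minimize(record: dict, allowed_fields: set, secret_key: bytes) -> dict:
    """Keep only the fields needed for analysis and replace the ID with a pseudonym."""
    reduced = {k: v for k, v in record.items() if k in allowed_fields}
    reduced["pseudonym"] = pseudonymize(record["student_id"], secret_key)
    return reduced


# Hypothetical record; in practice the key would be stored securely, not in code.
record = {
    "student_id": "s123",
    "name": "Example Student",
    "email": "student@example.edu",
    "topic": "statics",
    "quiz_score": 0.8,
}
shared = minimize(record, {"topic", "quiz_score"}, secret_key=b"demo-key-only")
print(sorted(shared))  # ['pseudonym', 'quiz_score', 'topic']
```

Using a keyed hash rather than a plain hash means that someone without the key cannot recompute pseudonyms from a list of known student IDs, while the institution can still link a student's records over time.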

Quality of AI and ChatGPT responses

The

Variation in AI effectiveness across engineering disciplines

It is important to recognize that the effectiveness of ChatGPT varies significantly across different engineering disciplines. For instance, ChatGPT may offer substantial benefits in areas such as engineering design, communication skills development, and conceptual explanation, where nuanced and descriptive interaction is beneficial. However, in disciplines heavily reliant on precise numerical methods and exact reasoning—such as mathematics-intensive engineering topics, control systems, or computational analysis—the current versions of ChatGPT (3.5 and 4) show clear limitations. These AI models may sometimes generate responses lacking rigorous mathematical accuracy or precision, highlighting a critical area for ongoing development and cautious pedagogical integration.2–4,44

Theoretical framework

A constellation of educational theories that underscore the importance of personalized, active learning counterweighs the lack of empirical data to date about AI and ChatGPT. Theoretical inspiration drives our interest, and is likely to motivate other educational practitioners. The four key theories forming the theoretical bedrock for these educational technologies are Constructivist Learning Theory, 53 Personalized Learning Theory, 54 Cognitive Load Theory, 55 and the Zone of Proximal Development. 56 Table 2 provides a succinct overview of the theoretical frameworks as they apply to the use of AI and ChatGPT in engineering education. These theories underscore the shift from traditional, one-size-fits-all education to more individualized, dynamic, and active learning, a shift that AI and ChatGPT can facilitate.

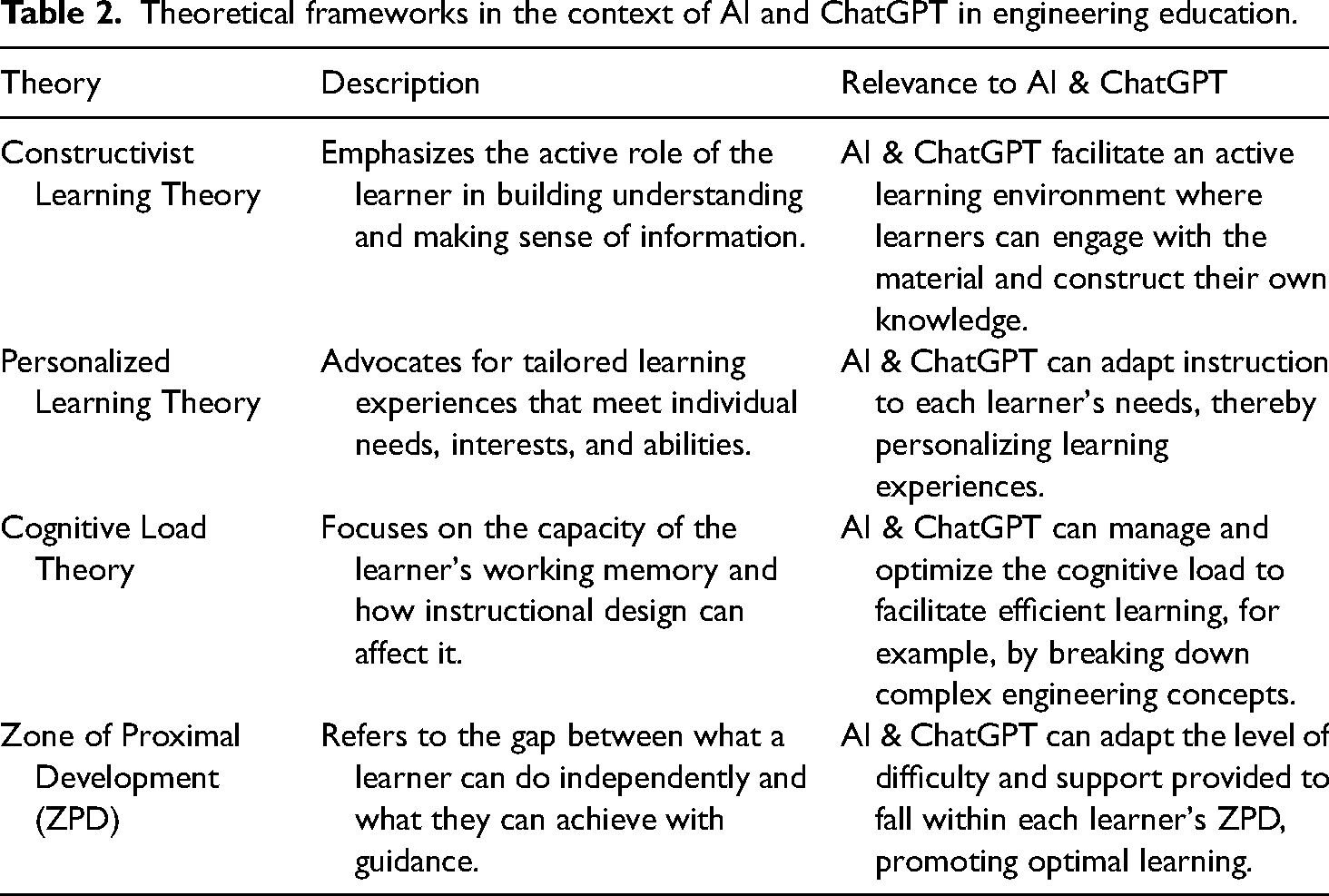

Table 2. Theoretical frameworks in the context of AI and ChatGPT in engineering education.

Constructivist learning theory in the context of AI and ChatGPT

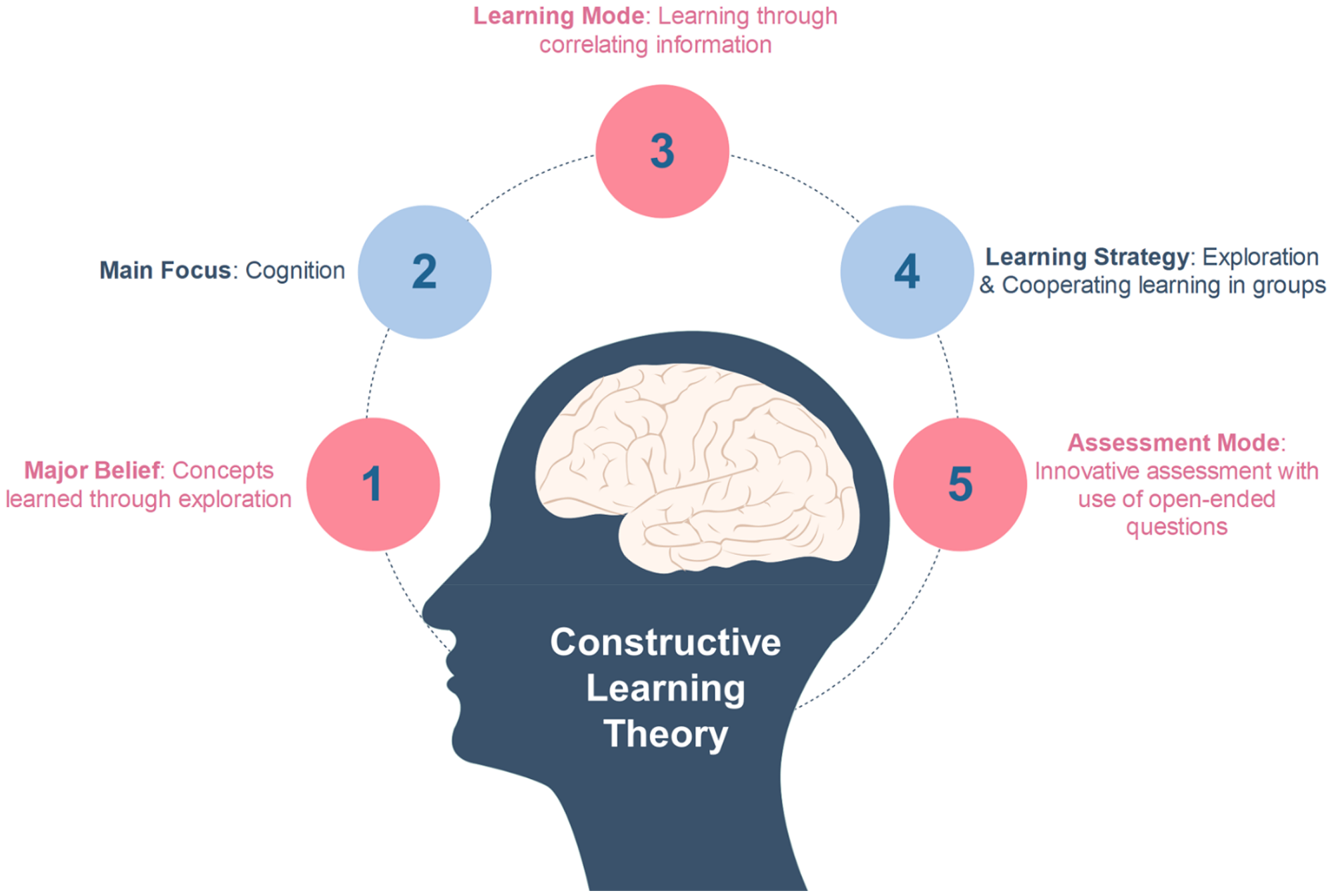

The Constructivist Learning Theory, grounded in epistemological philosophy, emphasizes that learners actively construct new knowledge by connecting it to their existing understanding.57,58 Constructivist Learning Theory is characterized by five key components (Figure 1). Learners should acquire knowledge through active exploration, first understanding, then correlating and integrating information, and enhancing understanding through associations. To facilitate this, the instructional strategy fosters exploration and cooperative learning within groups. 5 Finally, the assessment mode uses open-ended questions to evaluate learners, thereby encouraging creative and critical thinking.

Figure 1. Visual representation of the Constructivist Learning Theory components. 53

Aligned with this theory, AI and ChatGPT may promote a constructivist learning environment by providing engaging and customized educational experiences, fostering active involvement, and supporting each learner's knowledge construction. ChatGPT encourages inquiry-based, participatory learning. Students may interact with ChatGPT through live conversations, asking questions and exploring ideas to proactively engage with new material. However, this individual engagement is not meant to replace collaborative learning. Instead, ChatGPT can support students’ individual understanding before they engage in group discussions, helping them contribute more meaningfully. In this way, AI becomes a scaffold for human-centered learning, enhancing peer interaction rather than isolating learners. Engineering students frequently have to put theoretical concepts into practice. AI can assist in this process by offering tools and simulations that enable students to interact with engineering concepts and deepen their comprehension.

Personalized learning theory in the context of AI and ChatGPT

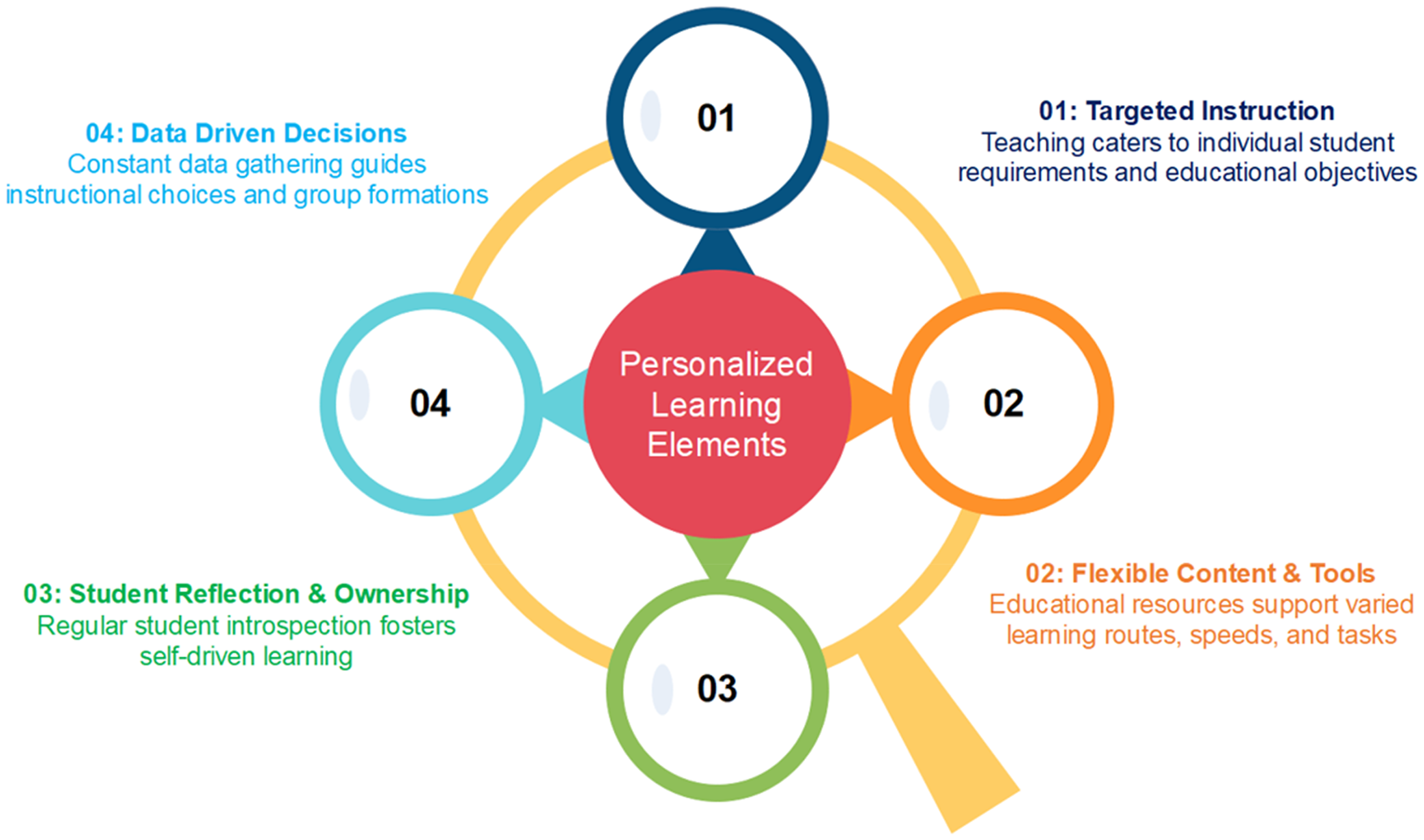

According to Personalized Learning Theory, a curriculum should have enough flexibility that each student's unique demands and learning preferences can usually be met. The theory involves several crucial elements (Figure 2). First, individualized instruction tailors teaching strategies to meet individual student needs and educational goals. For example, the use of AI and technologies like ChatGPT can assist in providing personalized feedback and guidance in two complementary ways: educators can use these tools to support instructional decision-making and offer scalable, targeted support, while students may also use ChatGPT independently to ask questions, explore concepts, and receive immediate, tailored feedback. Additionally, course design strategies can include diverse activities, assignments, and assessment methods to cater to different learning preferences and speeds. In this context, individualized instruction means creating an inclusive learning environment where the course materials and activities are diverse and flexible enough to cater to each student's different needs. Secondly, the theory calls for adaptable resources, where learning materials and tools can be adjusted to accommodate different learning paths, speeds, and tasks. Another critical aspect is promoting student self-reflection and autonomy, which encourages students to regularly reflect on their learning journey and take ownership of it. Lastly, data-informed decisions form an integral part of this theory. Here, continuous data collection is used to inform instructional decisions and establish suitable learning groups. Data sources might include student performance on assessments, participation in online discussions, project completion times, and direct student feedback. AI and other digital tools can also track how students interact with online learning materials, such as the time spent on specific tasks. This collected data can then be analyzed to identify trends, strengths, weaknesses, and opportunities for targeted instruction.
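The data-informed element can be illustrated with a short Python sketch that aggregates assessment scores per student and topic and flags topics where a student's average falls below a chosen threshold. This is a simplified example under stated assumptions: the `(student, topic, score)` record layout, the 0.6 threshold, and the function name `weak_topics` are hypothetical, and real learning analytics would draw on richer data such as the participation and time-on-task measures mentioned above.

```python
from collections import defaultdict
from statistics import mean


def weak_topics(attempts, threshold=0.6):
    """Map each student to the topics whose mean score falls below `threshold`."""
    scores = defaultdict(lambda: defaultdict(list))
    for student, topic, score in attempts:
        scores[student][topic].append(score)
    return {
        student: sorted(t for t, s in topics.items() if mean(s) < threshold)
        for student, topics in scores.items()
    }


# Hypothetical assessment records: (student, topic, score between 0 and 1).
attempts = [
    ("s1", "statics", 0.9),
    ("s1", "thermodynamics", 0.4),
    ("s1", "thermodynamics", 0.5),
    ("s2", "statics", 0.5),
]
print(weak_topics(attempts))  # {'s1': ['thermodynamics'], 's2': ['statics']}
```

An educator could use output of this kind to form learning groups or to decide where targeted instruction is most needed, while keeping in mind that a single threshold is a coarse signal and should be read alongside direct student feedback.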

Figure 2. Key elements of Personalized Learning Theory. 54

ChatGPT can support learners by acting as a conversational aid, offering guidance through complex engineering concepts and problem-solving approaches. It provides quick explanations that may help clarify difficult material, especially in early learning stages. However, there is a risk that students might misinterpret these simplified responses as comprehensive understanding. In engineering education—where abstract theories and applied problem-solving require depth and precision—over-reliance on AI-generated summaries may lead to superficial learning. It is therefore essential to position ChatGPT as a supportive tool rather than a substitute for rigorous study, and to help students develop critical awareness of the limitations of natural language models.

Cognitive load theory in the context of AI and ChatGPT

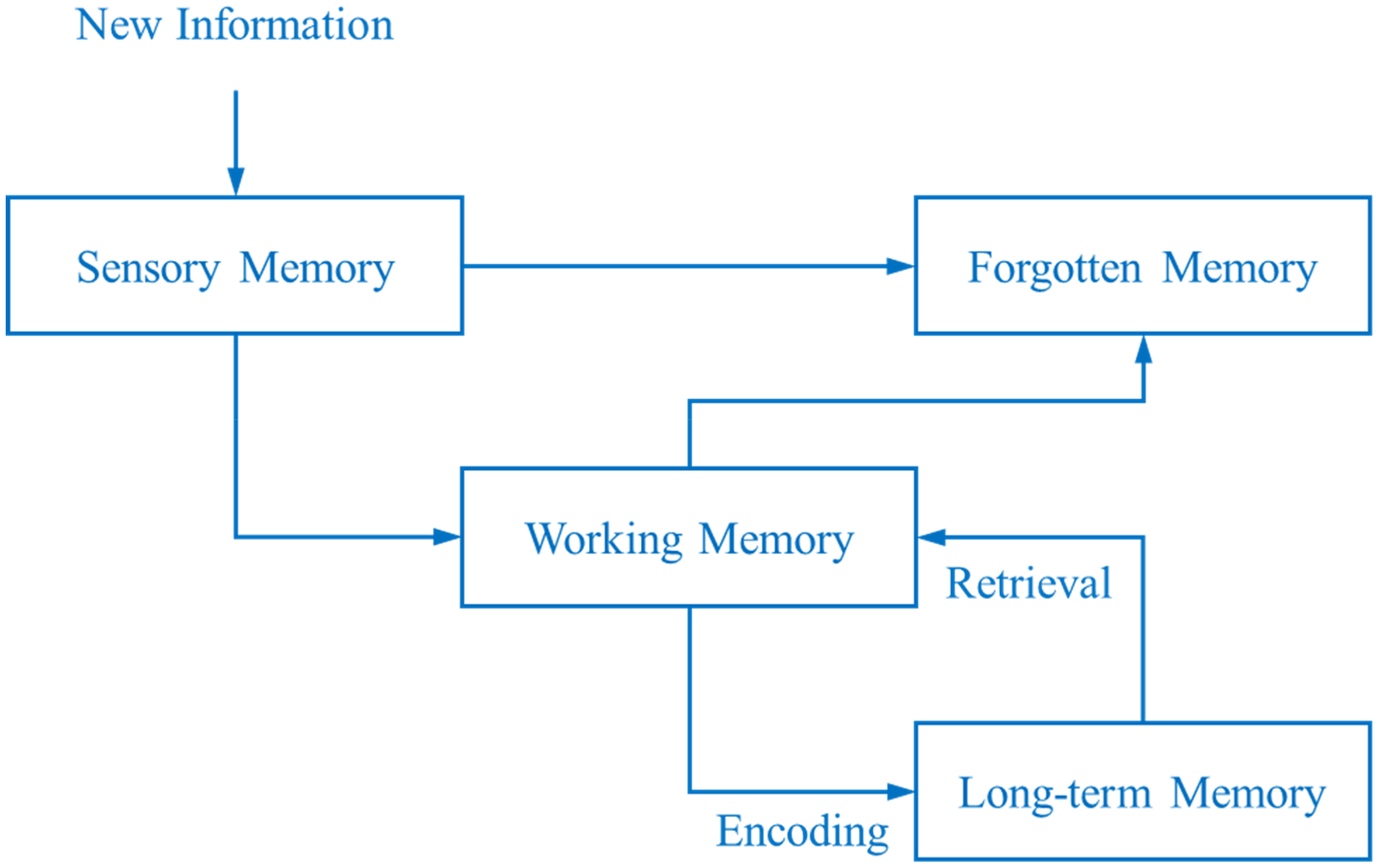

According to Cognitive Load Theory (CLT), learners do well to manage the demands placed on their limited working memory capacity, known as “cognitive load”. The theory draws on an analysis of how memory functions to facilitate learning (Figure 3): Sensory Memory, the initial stage where new information stimuli are briefly held and processed; Working Memory, a limited capacity system that manipulates short-term information; Long-Term Memory, an enduring store of knowledge, skills, and experiences, where data from working memory is stored permanently; and Forgotten Memory, denoting previously stored but now inaccessible or irretrievable information from our long-term memory. These components are helpful to understand and manage for effective learning experiences, particularly given the complexity of engineering.

Figure 3. Key components of Cognitive Load Theory. 55

We argue that, particularly in complicated areas like engineering, AI is well suited to managing cognitive load. It breaks complex subjects into small chunks, making the intrinsic load more manageable and making it more likely that these small blocks of knowledge can move through the processing system to long-term memory. A step-by-step approach makes it easier to digest information and comprehend concepts. This approach is supported by ChatGPT's clear explanations in a conversational setting. By demystifying technical concepts and offering examples from real-world situations, the digital tools reduce extraneous (unnecessary) cognitive load. However, this benefit depends heavily on how these tools are introduced and used. Without proper guidance, students may be tempted to rely on simplified AI-generated explanations without fully grasping the underlying principles, particularly in complex topics that require precise understanding for future application. Therefore, educators must provide explicit instruction on how to critically evaluate AI outputs and integrate them into deeper learning. Structured activities, reflective questions, and collaborative tasks can help ensure that ChatGPT is used as a supplement to, and not a substitute for, rigorous conceptual mastery. The interactive features of ChatGPT also support germane (relevant) cognitive load, encouraging participation and comprehension. Through good cognitive load management, AI and ChatGPT may reduce cognitive overload and promote more efficient learning. By lowering extraneous load, these technologies help students to concentrate more on intrinsic and germane loads, which improves their comprehension of engineering principles and fosters the development of critical thinking abilities.

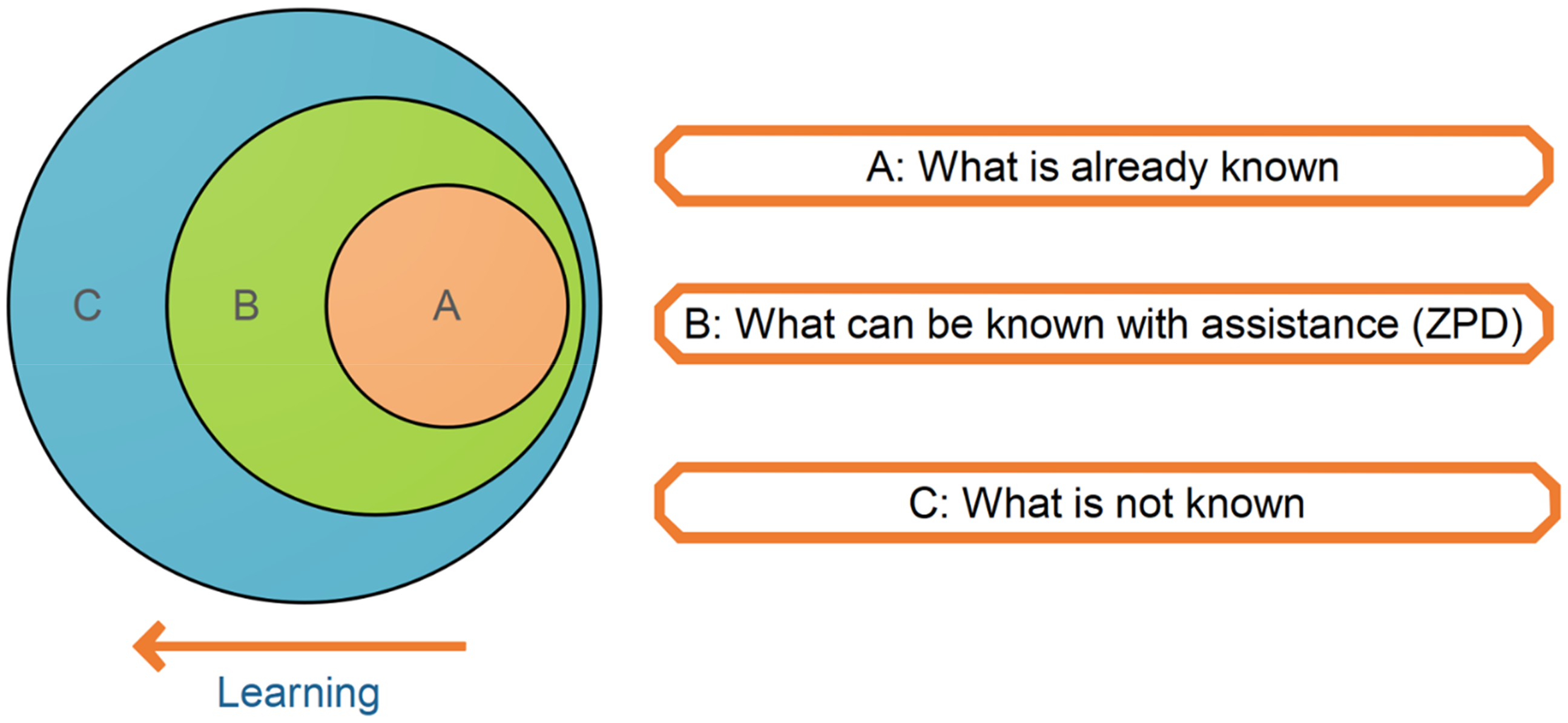

Zone of proximal development (ZPD) theory in the context of AI and ChatGPT

Lev Vygotsky, a psychologist, created the concept of the Zone of Proximal Development (ZPD), which distinguishes between what students are capable of doing on their own and what they may be able to achieve with assistance (Figure 4).

Figure 4. Visual representation of the Zone of Proximal Development (ZPD). 56

The ZPD serves as the ideal learning environment, where teaching materials and support encourage development without overburdening students. By evaluating a learner's prior knowledge and adapting the difficulty of the content and the amount of help accordingly, AI and ChatGPT can help ensure that individual learning occurs within this optimal zone. For instance, by analyzing learner performance, AI may provide specialized resources and assignments that are challenging but do not surpass the student's capabilities. Similarly, ChatGPT can provide individualized instruction by responding to specific questions and supporting content understanding. However, this should not be seen as a substitute for the social interactions at the heart of Vygotsky's theory. Rather, AI can help learners enter social learning environments with better-prepared questions, clearer understanding, and increased confidence. When used intentionally, this support aligns with the interactive nature of the ZPD, serving as a tool to amplify human mentoring, not replace it. In this way, ChatGPT can complement the educator's role in facilitating peer-based discussion and collaborative problem-solving, ensuring that learning remains both individualized and socially grounded.

Thus far, we have given attention to literature on AI and ChatGPT, including what educationalists found advantageous and what was limiting or potentially problematic about these tools. We have presented four teaching and learning theories intended to serve as a foundation for integrating software tools into instructional design and to offer a conceptual framework for educators reflecting on their pedagogical practices. Assuming that readers are considering whether or not to use AI and ChatGPT in their engineering teaching, we next summarize what should be considered.

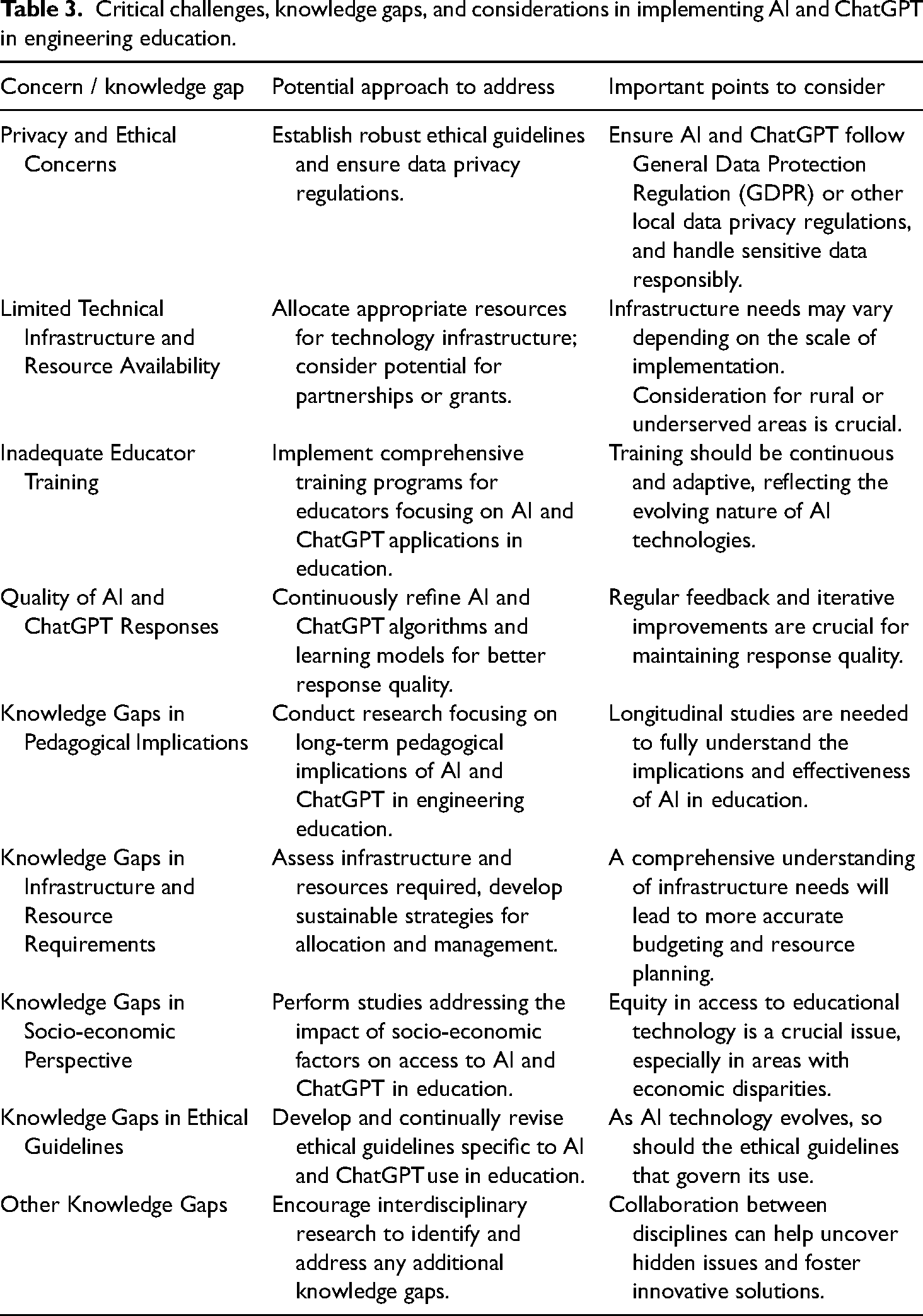

Critical perspectives and knowledge gaps in the use of AI and ChatGPT

While learning theories highlight the potential of digital tools for engineering teaching, there are practical considerations that need to be addressed. In the context of this study, challenges refer to a range of barriers that may hinder the effective implementation of AI and ChatGPT in engineering education. These include technical limitations (e.g., infrastructure and access), ethical concerns (e.g., data privacy and bias), pedagogical complexities (e.g., curriculum integration and instructional design), and institutional or resource-related constraints. Table 3 presents a summary of key challenges, knowledge gaps, and considerations in implementing AI and ChatGPT in engineering education. It underscores the multifaceted nature of the issues at hand and the need for a diverse set of solutions to address them effectively. The strategies presented below vary in scope: some can be implemented by individual educators within their classrooms, while others require institutional support, infrastructure, or policy development.

Table 3. Critical challenges, knowledge gaps, and considerations in implementing AI and ChatGPT in engineering education.

Addressing critical perspectives

Addressing privacy and ethical concerns

Rather than reiterating the specific challenges already discussed, this section emphasizes the importance of translating those concerns into concrete implementation strategies. Ensuring ethical and effective use of AI and ChatGPT in engineering education requires both individual and institutional action. At the educator level, this includes thoughtful instructional design, critical use of AI outputs, and guiding students to engage responsibly with AI tools. At the institutional level, sustained investment in infrastructure, inclusive policy frameworks, interdisciplinary collaboration, and ethical oversight are essential.

Furthermore, as AI technologies evolve rapidly, institutions should adopt flexible strategies for AI governance, regularly revisiting training needs, updating data protection policies, and promoting inclusive development practices. Future research should focus on evaluating the long-term pedagogical and ethical impacts of these tools, ensuring that innovation in engineering education is not only effective but equitable and responsible.

Addressing technical infrastructure and resource availability issues

Options for providing equitable access to AI and ChatGPT include establishing partnerships with technology companies, securing government or private funding for infrastructural upgrades, and leveraging scalable, cloud-based AI solutions that require less local computational power. Because these solutions are hosted on cloud servers and accessed via the internet, they reduce the pressure on local resources. Such cloud-based platforms could offer a viable and cost-effective alternative for institutions struggling with resource limitations.

Addressing inadequate educator training

Regular training courses and seminars that focus primarily on the comprehension and use of AI technologies in the educational setting must be made available to educators. Library staff, academic developers, or colleagues with expertise could be encouraged to provide this service. These courses ought to emphasize not just how to use AI technologies technically but also how to incorporate them successfully into instructional methods. Additionally, continuous technical assistance must be accessible to help instructors troubleshoot and make the most of these technologies. Encouraging a collaborative culture also has its advantages: providing educators with a platform where they can discuss problems, trade methods, and share experiences related to using AI and ChatGPT can facilitate peer learning and create a supportive community.

Addressing quality assurance for AI and ChatGPT outputs

Ensuring the reliability and educational value of AI and ChatGPT responses requires a structured quality assurance process. This includes evaluating outputs across a range of engineering tasks to identify errors, oversimplifications, or contextual misalignments. Regular updates to AI models are essential to reflect evolving content, improve accuracy, and address known issues. User feedback from students and instructors is central to this process. Institutions and developers should implement clear channels for reporting and reviewing AI performance, and use these insights to refine model responses. It takes a collaborative, multi-stakeholder strategy to effectively manage quality control for AI in education. 59 Educators must also be trained to interpret AI outputs critically and teach students how to engage with them responsibly. Institutions should support this with transparent guidelines, regular training, and protocols for error detection and ethical use. Together, these efforts can help ensure that AI tools support, rather than compromise, educational standards in engineering contexts.

Stakeholder impacts: a critical examination in the context of AI and ChatGPT-infused engineering education

The integration of AI and ChatGPT into engineering education is transforming the responsibilities and expectations of key stakeholders, including educators, students, institutions, and industry partners. Rather than repeating challenges already discussed, this section explores how these roles are shifting and what is needed to support this transition.

Educators

The growing presence of AI tools such as ChatGPT enables educators to shift their focus from routine instruction to higher-order pedagogical tasks, such as facilitating critical thinking, managing collaborative learning, and designing inquiry-driven curricula. However, to fully harness these tools, educators must acquire both technical fluency and a strong ethical foundation. This includes understanding AI limitations, being able to evaluate its outputs, and designing learning activities that balance automation with human interaction. Institutions must support this shift through sustained professional development and by fostering a culture of responsible AI use in teaching practice.

Students

AI promises personalized, responsive, and interactive learning environments, empowering students to engage with complex concepts at their own pace. Yet, this also demands increased student responsibility and critical digital literacy. Students must learn how to evaluate AI-generated information, ask meaningful questions, and integrate machine responses into deeper understanding. As AI tools become ubiquitous in the workforce, developing proficiency in using these systems becomes an essential graduate attribute. At the same time, institutional support is needed to address ongoing concerns related to the digital divide and data privacy, ensuring all students have equitable access and understand how their data is used.

Teaching institutions and industry partners

Universities must take the lead in establishing ethical, inclusive, and technically robust frameworks for AI adoption. This includes investing in infrastructure, creating interdisciplinary support teams, and promoting policy coherence. While many engineering institutions already operate with advanced computing capabilities, deploying tools like ChatGPT at scale requires more than hardware capacity. Institutions must ensure stable internet connectivity, manage cloud-service integration, and provide equitable access across student populations. They must also commit resources to educator training and ensure that the implementation of AI tools aligns with ethical standards and pedagogical goals. Industry partners also play a role in shaping educational AI by offering domain-specific models, co-developing curriculum content, and identifying workforce-aligned skills. A shared understanding among stakeholders is essential to ensure that AI supports, rather than replaces, the collaborative and humanistic core of engineering education.

Limitations and future research

The prospective uses and effects of AI in education will change as the technology evolves. Additionally, this study has generally focused on measurable gains in student learning outcomes. There is still much to learn about the subjective perceptions of students using AI technologies and how these tools affect factors like motivation, self-efficacy, and engagement in the learning process.

The integration of ChatGPT into engineering education raises several long-term implications worth careful consideration. Over-reliance on AI-driven explanations could potentially reduce students’ development of independent critical thinking and problem-solving skills. Dependence on readily available AI responses might discourage deep learning and self-directed inquiry. Conversely, evolving pedagogical methods could embrace these AI tools constructively, reshaping educators’ roles from knowledge transmitters to facilitators of active, collaborative, and inquiry-driven learning experiences. Hence, future research should continuously assess how AI affects not only student skills and reliance but also the transformation of instructional strategies within engineering education contexts.

Given these acknowledged limitations, several exciting directions for future research emerge. Longitudinal studies tracking the evolution of AI applications in education and their sustained impact on teaching and learning would be of significant value. Furthermore, research could explore dimensions such as student attitudes towards AI, motivations driving its acceptance, and perceptions of its role in learning. Finally, future research could extend beyond the classroom to examine broader societal implications of AI in education. This could include investigations into its effect on job markets, the evolving skills gap, and critical issues surrounding equity and access in education.

Conclusions

The integration of ChatGPT in engineering education is not simply a technological shift. It represents a deeper pedagogical transformation that redefines how students learn, how educators teach, and how institutions support the learning process. To harness this potential responsibly, stakeholders must invest in ethical, inclusive frameworks, robust training, and continuous quality assurance. These efforts are essential to ensure that AI tools enhance rather than diminish the human-centered values at the core of engineering education.

This paper makes two key contributions. The first is to explain how ChatGPT can help translate established learning theories into practice within engineering courses, which are often large and centered on complex topics. These tools offer new opportunities to support constructivist, personalized, cognitive load-aware, and ZPD-informed learning experiences. This framing may empower enthusiastic educators to safely explore AI integration and provide those already experimenting with a stronger theoretical grounding. We have shown that AI and ChatGPT can directly support pressing needs in engineering education, such as applying abstract principles, scaling feedback in large classes, and encouraging lifelong learning in an era of rapid technological change.

The second contribution is to clearly outline the substantial challenges of introducing digital teaching tools. These include technical and infrastructural demands, resource inequalities, and the need for balanced human-digital interaction. Ethical issues, especially around data privacy and algorithmic bias, are critical and must not be overlooked. We have summarized how these challenges and benefits affect all stakeholders involved in engineering education.

It is essential to acknowledge that the global inequality of institutional resources may be further exacerbated by the rise of AI and ChatGPT. Without intentional planning and inclusive research, such tools risk deepening rather than narrowing educational gaps. Addressing this risk is urgent. The research community must actively engage with questions of access, ethics, and equity to ensure that AI truly contributes to the advancement of quality, inclusive engineering education for all. This is not only possible but necessary to prepare future engineers for a world increasingly shaped by intelligent systems.

Footnotes

Consent to participate

Not applicable, as this study did not involve human subjects.

Consent to publish

All authors give their consent for the publication of this manuscript.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Ethical approval

This study did not involve human participants or animals; therefore, ethical approval was not required.

Data availability statement

The datasets used and/or analyzed during this study are available from the corresponding author on reasonable request. All sources are referenced in the manuscript's bibliography. The analysis presented in this study is a synthesis of data previously published in the academic literature. Inquiries about the data sources and their accessibility should be directed to the corresponding author.